Abstract

The literature suggests that plan quality should be distinguished as a type of plan evaluation not only for its focus on content but also for its communicative ends. This distinction is important for expanding the scope of plan quality evaluation, but it also highlights ambiguity about what we mean by “quality.” As a way to address this ambiguity we propose three dimensions of plan quality: documentation (comprehensiveness), policy focus (strength), and discourse (persuasiveness). We also propose a set of four principles for evaluating the strength of policy focus: maximize stability, minimize uncertainty, integrate public priorities across jurisdictions, and accommodate flexibility.

Introduction

What constitutes a good plan is the focus of plan quality evaluation. However, when one examines the meaning of “quality” in plan quality evaluation, one cannot find a clear sense of this defining concept. Most frequently, “quality” is used synonymously with “good” and “better.” For example, Berke and Godschalk (2009) searched for the “good plan”; Lyles and Stevens (2014) associate plan quality with a “better plan.” Presumably, a good or better plan is one that attains a higher score on a scale using prescribed criteria, thereby being of higher quality. However, to say that good or better is a measure of quality is not sufficient because “good” and “better” are used without specific regard for the purpose of a plan or scope of plan evaluation. What, then, does “quality” mean, and what does “good” mean?

In this commentary, our aim is to critically examine the meaning of “quality” in plan quality evaluation. To frame our discussion, we first discuss where and how plan quality, as a distinct type of evaluation, fits within the broader field of plan evaluation. We then examine the meaning of quality by exploring its different dimensions. As a final step, we direct our attention to the matter of principles that define each dimension of plan quality.

Through our examination, we aim to clarify and thereby strengthen the field of plan quality evaluation by refining what it means to evaluate the quality of a plan. In particular, our examination extends current discussions in the field of plan quality prompted by Norton (2008), among others, and responds to the “challenge before plan quality researchers . . . to refine plan quality principles to facilitate application across plan types” (Lyles and Stevens 2014, 438).

Scope of Plan Evaluation

We begin our examination by situating plan quality within the broader field of plan evaluation. Evaluation is widely recognized as the systematic acquisition and assessment of information to provide useful feedback about the significance, worth, or condition of some object or intervention. Accordingly, the field of plan evaluation can be viewed as a systematic evaluation that takes plans, the planning process, planning outputs, or outcomes as the object or intervention.

Whereas the field of plan evaluation can be extended to public policy and related fields, in the following brief account of the field of plan evaluation we look inward in order to focus our discussion of plan quality as a subfield of plan evaluation. We will discuss how the diversity of the field of plan evaluation presents a challenge for understanding where plan quality fits. This diversity is due to different ways of viewing a plan, different approaches used to evaluate plans, and different stages in the planning process when evaluation takes place.

First, plans are viewed as different types of documents that serve different purposes. By way of contrast, plans are often seen as either strict blueprints that articulate clear goals and policies or as frameworks that guide decisions (e.g., Guyadeen and Seasons 2016; Laurian et al. 2004). Plans can also be viewed as a decision that should be made in light of other decisions, whereby a plan can work as an agenda, policy, vision, design, and strategy (Hopkins 2001). In broader terms, Baer (1997) identifies plans as a vision, blueprint, land use guide, remedy, administrative requirement, pragmatic action, and responses to senior government mandates.

That plans can be viewed as different things is not at issue; rather, it is important to recognize that one’s view of a plan is bound fundamentally to how it is evaluated. Correspondingly, as noted throughout the plan evaluation literature (e.g., Baer 1997; Norton 2008; Oliveira and Pinho 2010; Talen 1996), one cannot separate plan evaluation from one’s notion of success and its criteria. By necessity, this relationship is derived from and embedded in one’s view of a plan. An example helps to illustrate the relationship. Hopkins (2001) notes that the success of plans can be viewed with a focus on understanding how plans are used in decision-making situations, for which he identifies four criteria: interdependence (the value of the results of a decision depends on other decisions), indivisibility (the decision cannot be made in infinitesimally small steps), irreversibility (the decision cannot be reversed without cost), and imperfect foresight (we lack complete knowledge of the future). This view of plan success is derived from Hopkins’s central focus on planning decisions as a series of actions, whereby, “in simplest terms, plans provide information about interdependent decisions” (

As a way of organizing the field of plan evaluation, one can also focus on different approaches. Laurian et al. (2010) describe three general approaches: a rational approach examines linkages between plans (as blueprints) and actual developments; a communicative approach examines the performance and use of plans (as guides) as well as the planning process; an integrative approach makes use of both rational and communicative approaches depending on the context and use of a plan (e.g., blueprint or guide). In a similar way, Alexander (2000) presents four complementary views of planning: rational planning, communicative practice, coordinative planning, and frame setting. All of these views involve different kinds of actors who are doing different kinds of planning.

One can also consider plan evaluation in relation to stages of the planning process. Three phases of the planning process during which evaluation takes place are commonly identified: during plan preparation (ex ante or a priori); during plan implementation (ongoing); and after the plan is implemented (ex post) (Alexander 2006a; Laurian et al. 2010; Oliveira and Pinho 2010). Baer (1997) identifies five stages in plan-making during which evaluation can take place: (1) plan assessment, (2) plan testing and evaluation, (3) plan critique, (4) comparative research and professional evaluations, and (5) post hoc evaluation of plan outcomes. Regardless of how the stages may be defined, the timing of the evaluation is strongly associated with the object of study, whereby one might focus on comparing possible alternatives during plan preparation, on results and use of resources during plan implementation, or on whether and to what degree plan policies are carried out (conformance) or their role in effecting change when the plan is implemented (performance).

Collectively, the different perspectives of and within plan evaluation have evolved considerably over the past fifty years during what is often described as a shift from a positivist to constructivist paradigm (Alexander 2002; Oliveira and Pinho 2010). Communicative characteristics of plans are premised on social interaction as a legitimate basis for reason, such that knowledge is not based solely on other individualistic and logical-empirical forms of rationality (Alexander 2006b). Therefore, when a plan is viewed as a communicative policy act in the context of uncertainty and pluralistic interests, it is viewed as a series of assertions that is part of an ongoing, deliberative decision-making process (Norton 2008). Thus, a plan can serve several purposes: advise local officials, explain decisions to local citizens, and justify decisions to the courts (Norton 2008).

Given the shifting perspectives within plan evaluation, what constitutes a “good” plan is “intrinsically relative and historical, and combines problematic issues of capacities, intentions and outcomes” (Alexander 2002, 191). This process of shifting perspectives continues, contributing to several contemporary issues identified by Oliveira and Pinho (2010, 343), as follows:

the need for evaluation and its integration in the planning process;

the timing of the evaluation exercise;

the different conceptions of success in plan implementation;

the necessary adjustments between the evaluation methodology and the specific plan concept;

the evaluation questions, the criteria, and the indicators; and

the presentation of the evaluation results and their use by decision makers.

While these issues highlight important elements of plan evaluation, the breadth of these issues also suggests a field of inquiry and practice still under development.

In the context of the diversity of plan evaluation and its ongoing development, several issues obscure the specific contribution of plan quality to plan evaluation. First, the term quality is used throughout the literature, and often in general terms. A more obfuscating factor relates to where plan quality fits among the stages of the planning process. As noted above, most discussions about types of evaluation are aligned with stages of the planning process, yet evaluating a plan as an object does not align well with the commonly used stages. General approaches to evaluation research, at a minimum, distinguish between evaluating plan development and plan implementation. However, evaluating a plan as an object of study does not align precisely with either plan development or implementation; it is something between the two. A plan is an outcome of a development process and the object used afterward in planning decisions. This problem of alignment arises regardless of whether types of evaluation are characterized as three stages (ex ante, ongoing, and ex post) (e.g., Alexander 2006a; Laurian et al. 2010; Oliveira and Pinho 2010; Talen 1996) or five stages (e.g., Baer 1997). Thus, although aligning types of evaluation with stages in the planning process helps to frame the scope of plan evaluation, this way of organizing approaches to plan evaluation contributes to ambiguity when it comes to understanding where plan quality fits.

One step to resolving the confusion about where plan quality fits in the field of plan evaluation is to more clearly articulate how plan quality evaluation can be seen as a distinct method. Working within the above definition of plan evaluation, we agree with the definition of plan quality evaluation presented by Lyles and Stevens (2014, 434): “the process by which plan content analysis data is linked to normative criteria of what constitutes a better plan.” This definition of plan quality has two features that separate it from other types of plan evaluation. One feature is that it takes place “after they [plans] have been developed.” The other feature is the use of content analysis. To underscore these two features of plan quality evaluation, we emphasize that plan quality evaluation has a primary focus on a plan as it is written as the object of evaluation (hereafter, for our purposes, shortened to “plan-as-object”).

To build upon an understanding of plan-as-object, we introduce efficacy as an additional feature unique to plan quality evaluation. Efficacy is “the power to produce a desired result or effect” (Merriam-Webster n.d.). By definition, the words efficacy and effective are very similar. To emphasize the difference, effective is about producing an effect; efficacy is about the power to produce an effect. When a plan is taken as the object of evaluation, it is the efficacy of a plan as it is written that is evaluated against the desired result (based on preestablished, normative criteria). That is, the power of a plan to produce an effect is embodied in its text, not in its application. It is this difference that sets efficacy apart from the other terms and serves our purpose to draw a clearer distinction between plan quality evaluation and other types of plan evaluation. As distinct from a focus on a plan’s development (efficiency of process) or implementation (effectiveness of application), an evaluation of a plan’s efficacy serves to focus attention on the plan itself. Thus, based on Alexander’s (2006a) means of organizing types of evaluation, we believe that plan quality can be defined clearly as a distinct type of plan evaluation with regard for both the object and timing of evaluation.

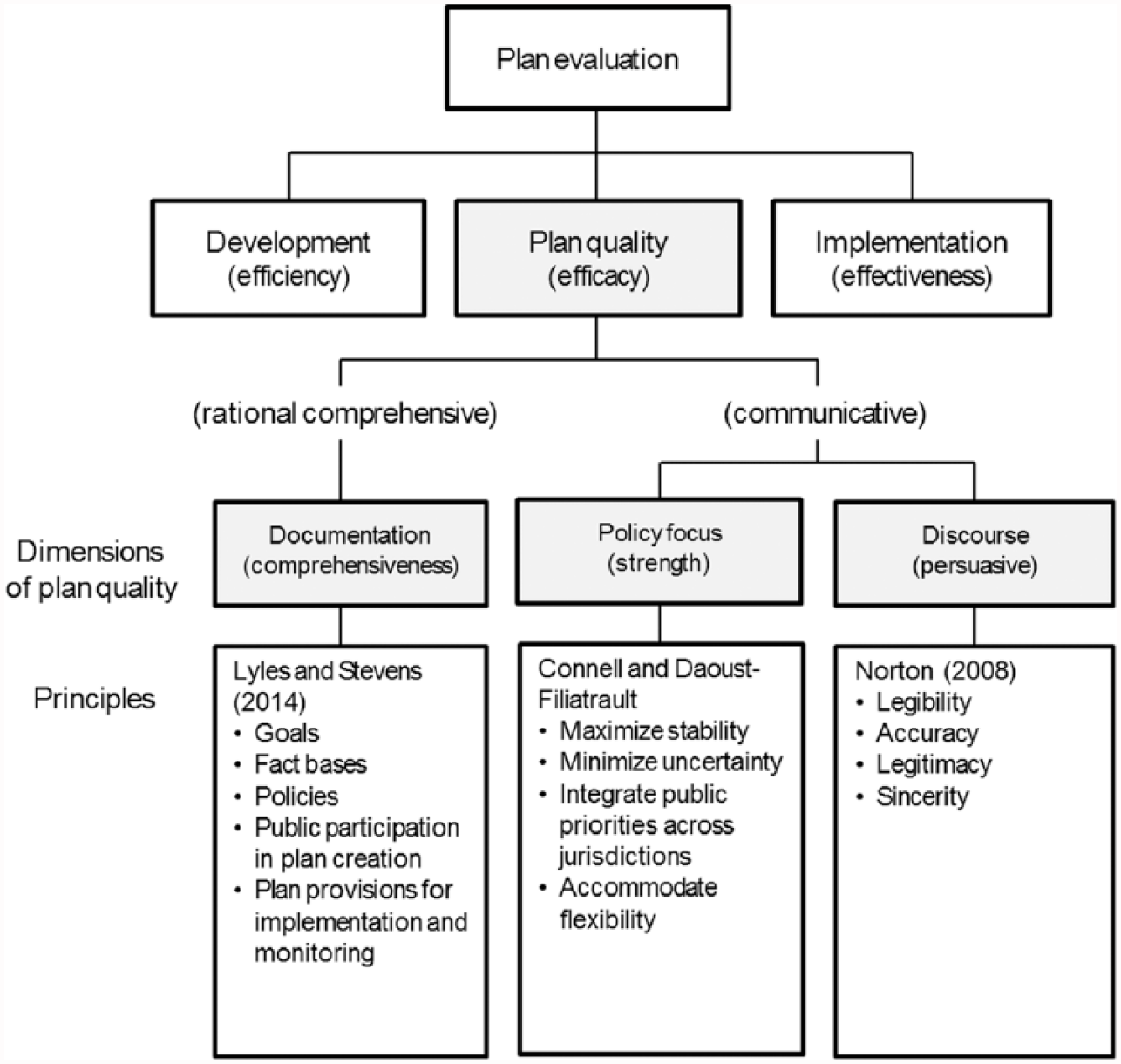

With this understanding of plan quality as a distinct type of evaluation, we divide the field of plan evaluation into three areas, as shown at the top of the chart in Figure 1: plan development, plan quality, and plan implementation. In light of the many approaches and types of plan evaluation mentioned above, this division is primarily heuristic; it is an attempt to simplify a diverse field of study as a means to focus on plan quality evaluation as distinct within the field of plan evaluation research.

Figure 1. The scope of plan evaluation and dimensions of plan quality.

Dimensions of Plan Quality Evaluation

Having framed our focus on the evaluation of plan-as-object as a distinct type of evaluation, we now direct our attention to the dimensions of plan quality. The bulk of plan quality evaluation efforts, as summarized by Berke and Godschalk (2009) and Lyles and Stevens (2014), is synonymous with “plan quality.” However, as we noted at the outset, “quality” is most often used synonymously with “good” and “better.” As Berke and Godschalk (2009, 228 [emphasis added]) state, “Only systematic evaluation enables us to identify their specific strengths and weaknesses, to judge whether their overall quality is good, and to provide a basis for ensuring that they reach a desirable standard.” However, an undifferentiated concept of “quality” fails to reflect important differences identified within plan quality evaluation, which is problematic when it comes to refining the scope and dimensions of quality. That is, we need to move beyond this ambiguous relationship between quality and good/better.

We posit that different dimensions of “quality” can be identified within the current scope of plan quality evaluation. The “core principles” identified by Lyles and Stevens (2014) provide a starting point. These principles include goals, fact bases, policies, public participation in plan creation, and plan provisions for implementation and monitoring. The consensus on this set of principles is the outcome of a measured process of adding and refining elements of what constitutes a good plan. For example, early work focused on three general characteristics of quality: a strong factual basis, clearly articulated goals, and appropriately directed policies (Kaiser, Godschalk, and Chapin 1995). These three categories were soon expanded by Baer (1997), who developed an extensive set of sixty criteria that were organized under eight categories. Berke and Godschalk (2009) identified ten internal and external characteristics. However, as Lyles and Stevens (2014) note, the consensus that emerged from these ongoing developments is “largely focused on the rational comprehensive view of planning and prioritize criteria that conceive of a plan as a blueprint or agenda” (2014, 438). We agree with this association between these principles and a rational comprehensive approach. Correspondingly, we identify this area of plan quality evaluation as one dimension of quality. Consistent with a rational comprehensive approach, and as reflected in the range of criteria associated with this approach, we propose that this dimension of plan quality centers on the quality of documentation as measured by comprehensiveness. (The use of the term “documentation” is consistent with Norton’s [2008] characterization of analytical quality, as discussed below.)

To explore additional dimensions of plan quality evaluation, we now shift our attention to the communicative characteristics of plan quality evaluation. Specifically, Lyles and Stevens (2014) point to critiques by Norton (2008) and Bunnell and Jepson (2011). Both critiques reflect a discursive approach to planning, whereby plans are viewed as guides rather than blueprints, as frameworks for consensus building rather than means of control (Guyadeen and Seasons 2016). Norton’s (2008) critique centers on a concern that the criteria to measure overall plan quality do not account for conceptually distinct dimensions of plans associated with its different functions. As Norton (2008, 433) argues, “the resulting need [is] to separate analytically the policy message of the plan from the conveyance of that message.” Thereby, Norton focused on “policy focus” as a distinct dimension that was previously obscured by existing measures of and focus on “plan quality.” The latter refers to the analysis of a plan’s documentation, or “analytical quality,” which Norton operationalizes using criteria that are similar to the components used in other studies of plan quality.

For our purposes, it is important to emphasize that we agree with Norton that policy focus is a distinct dimension of evaluation. However, whereas Norton argues that policy focus is something different from and should not be conflated with “plan quality,” we take a different view. We see policy focus not as distinct from but as one dimension of plan quality. That is, in our view, Norton’s distinction between “plan quality” (analytical quality) and policy focus points to two dimensions of plan quality, which helps to resolve what Norton characterized as paradoxical implications of trying to separate policy focus from plan quality. In effect, we have realigned Norton’s terms without changing the foundation of his argument.

Isolating policy focus as a dimension of quality is a critical step to separate the means from the ends, but policy focus does not encompass other aspects of a communicative approach to plan quality evaluation. To fill this gap we suggest a third dimension of quality, which we identify as “discourse.” Adding this third dimension captures discursive elements associated with guides rather than blueprints, with consensus building rather than means of control, which are elements that reflect interactive and normative characteristics of a plan. In this regard, both Norton (2008) and Bunnell and Jepson (2011) directly associate this discursive dimension of plan quality with persuasion. Norton states that a plan, as a communicative policy act that makes assertions, is “especially useful for contemplating the extent to which the plan represents persuasive and undistorted communication” (2008, 443 [emphasis added]). Similarly, Bunnell and Jepson (2011, 338 [emphasis added]) argue, “the extent to which plans persuasively connect with readers, and elicit their positive participation, should constitute the core notion of what constitutes a good plan.” Persuasion—the ability to engage and motivate—is necessary, they argue, in order to garner the attention of policy makers and the public.

Thus, we distinguish three dimensions of plan quality, as follows: quality of documentation (comprehensiveness), quality of policy focus (strength), and quality of discourse (persuasion) (as shown above in Figure 1).

Three Sets of Core Principles

To consolidate our ideas, we propose sets of principles to more clearly distinguish each of the three dimensions of plan quality. Consistent with Lyles and Stevens (2014), principles refer to the characteristics that are considered to be widely applicable to each dimension of plan quality. Each set of principles frames the scope of analysis for each dimension of plan quality and can be used to guide the development of specific criteria according to the needs of analysis.

The first set of principles was discussed above, the core principles identified by Lyles and Stevens (2014). Consistent with Lyles and Stevens, we view this set of principles in relation to a rational comprehensive approach to plan quality evaluation.

With regard for a set of principles that inform the “discourse” quality of plans we turn to Norton’s (2008) characterization of a plan as a communicative policy act and his criteria for evaluating communicative action. As Norton explains, there are four key characteristics derived from Habermas’s notion of the ideal speech act. These characteristics are comprehensibility (can the assertion be comprehended or understood?), truth or accuracy (is the statement demonstrably correct?), legitimacy (does the person making the statement have a legitimate basis upon which to do so?), and sincerity (is the person making the statement sincere or is he or she attempting to be manipulative?). In Habermas’s ideal speech, these four factors are the basis for testing the acceptance of an assertion. In relation to plan quality, “the written assertions made by a plan can be ‘tested’ using criteria that parallel those used with the speech act” (Norton 2008, 443). These four principles, as operationalized by Norton, can be stated as follows:

Legibility: relates most directly to how clearly and completely the plan conveys information, policies, and associated implementation responsibilities

Accuracy: relates to the factual and analytical bases of policy assertions, including the fact base, infrastructure, and land suitability analyses

Legitimacy: relates to the various plan-making tasks, roles, and authors that indicate the extent to which plan policies should be accepted as authoritative and representative of public interests

Sincerity: relates to plan consistency, such as policy assertions that are based on real-world conditions and mutually supportive of one another

With our attention directed at the plan-as-object, we present these four characteristics as the set of principles for the discursive dimension of plan quality.

To complete our examination of the dimensions of plan quality, we are left with one item to address: a set of principles that reflect the strength of policy focus. Although Norton (2008) prepared criteria to measure a policy focus on neotraditional landscapes, he does not offer a generic set of principles that guide measures of the strength of any policy focus. To fill this gap, we propose the following set of four principles: maximize stability, minimize uncertainty, integrate public priorities across jurisdictions, and accommodate flexibility.

The proposed set of principles for quality of policy focus is premised on a view of planning (Connell 2009) that is based on a general theory of society (Luhmann 2012, 2013). Our interest is in the function of planning for society as a basis to identify a set of generic principles that is independent of any particular policy focus and plan content. From a broad perspective, planning enables communication about a future that can never be fully visible to society. Correspondingly, planners seek stability by maximizing what we can know about the future and seek security by minimizing what we do not know, thereby establishing a domain of understanding within which to make the best possible decisions in the present. This theoretical perspective led us to two principles that can be analyzed separately: maximize stability; minimize uncertainty. The other two principles that we propose come from different sources. The principle of “integration of public priorities across jurisdictions” is evident among existing plan quality criteria, for example, interorganizational coordination (Berke and Godschalk 2009) and vertical consistency (Norton 2008). As well, Smith (1998) places significant emphasis on this principle for agricultural land use planning in British Columbia, Canada, which has a strong policy focus to protect farmland. The fourth principle of accommodating flexibility is a more pragmatic response to the goal of achieving a balance among competing interests. In more detail, each of the four principles can be used as a basis for evaluating the strength of a plan’s policy focus as follows.

Maximize Stability

Something that is stable is difficult to topple; it stands strong and cannot be moved easily. Likewise, a stable policy focus is one that is well entrenched in statutory plans that are based on clear, concise language and can hold up to court challenge; it provides a clear statement of public interest that is not easily changed at the whim of shifting political interests; it is something that people can count on to know what the rules are. To maximize the stability of a policy focus, a direct statement should be included in a statutory plan or regulation rather than in an aspirational policy document, because an enforceable statement is more stable than an aspirational statement. The statement should be prominent, ideally as part of a vision or goal or, of less importance, an objective or policy statement.

Integrate Public Priorities across Jurisdictions

Integrating policies and priorities across jurisdictions is a foundation for building cohesion across senior-level (state, province, region) and local governments. This principle of integration can be viewed as a “policy thread” that weaves together traditional areas of responsibility (Smith 1998). One can also think of integration as a formal “linkage” between plans that provides consistency among them. Such formal linkages can come in the form of a senior-level government policy that requires a lower-level plan “to be consistent with” senior-level government policy statements. The aim of such vertical mechanisms is to ensure that lower-level policies are set within the context of broader public priorities. In turn, local governments can integrate public priorities by including sufficient details about the relevant legislative context that guides and constrains local government plans and strategies.

Minimize Uncertainty

People want to know they can rely on the rules and regulations to be applied consistently and to know how a plan will be applied under different circumstances. While we must accept that we cannot eliminate all uncertainty, governments can minimize uncertainty by eliminating loopholes, ambiguous language, and open-ended conditions. Maintaining internal consistency of a plan, one of the criteria identified in the plan quality literature (e.g., Norton 2008), is another means to reduce uncertainty. A government can ensure that the stated goals in one element of its statutory plan are consistent with goals in its other elements. Clear statements about the domains of responsibility and lines of authority also reduce uncertainty.

Accommodate Flexibility

Creating an effective plan is a balancing act: a plan should be neither so stable that it cannot be changed when needed nor so strict that it cannot be applied in a range of circumstances. Thus, flexibility is necessary in order to moderate the restrictive effects of maximizing stability and minimizing uncertainty. The principle is to enable decision makers to accommodate a controlled level of flexibility without compromising the primary functions of the plan and its policy focus to provide stability and reduce uncertainty. One means to accommodate flexibility is to identify possible exceptions to the general rules and regulations, with corresponding criteria to guide decisions, that reflect local priorities and interests. At the same time, too much flexibility may be damaging to a plan’s overall efficacy and stability. Hodge and Gordon (2008, 213) state, “although the plan should be amendable, it should not be subject to trivial challenges that would threaten its role as a continuous statement of policy.” Consequently, too much flexibility may also weaken a plan’s policy focus by introducing uncertainty in the planning process.

There is a logical relationship that brings the four principles together as a set and implies an order among them. First, one should seek to maximize the stability of the policy focus. Then, as a means to entrench stability, and as applicable, one should consider how public priorities can be integrated across jurisdictions. One can then look to minimize uncertainty by eliminating loopholes and ambiguous language that undermine stability. Only after these three principles are addressed to satisfy the attendant needs of the policy focus should one consider how to accommodate flexibility. Thus, the four principles are not mutually exclusive, but play off each other in very important ways.

Application

To help illustrate how the four principles can be applied, we provide a brief example of a policy focus on farmland protection in the city of Delta, British Columbia, Canada. This example is based on a situation where the province, as a senior level of government, has adopted enforceable policies to protect farmland. To keep the example brief, we do not discuss specific criteria in detail.

Overall, the city’s policy focus for protecting farmland within Delta is very strong. The strongest aspect is its stability, which is based on clear references to agricultural land use planning and farmland protection in its statutory plan (Official Community Plan [OCP]). At the outset, the OCP states its overall goals, one of which is to protect agricultural lands. This goal is supported by a strategy to protect the supply of agricultural land and promote agricultural viability with an emphasis on food production, and by 31 comprehensive policies that use strong statements to promote and protect existing farmland. Among these policies, urban containment boundaries are important tools, along with commitments to discourage the fragmentation of agricultural land by maintaining parcel sizes. The stability of the policy focus on farmland protection in the OCP is supported by subarea plans and the zoning bylaw.

With regard for the second principle, integrating public priorities across jurisdictions, Delta’s OCP is integrated extensively with both regional and provincial policies. Delta adopted the Metro Vancouver Regional Growth Strategy, and the OCP includes three strategies and eleven detailed regional context statements to meet the region’s agricultural and farmland protection goals. Delta’s OCP also outlines how its policies are consistent with the provincial legislative framework (e.g., Agricultural Land Commission Act, Farm Practices Protection Act, and Local Government Act). Overall, Delta’s statutory plan is well integrated both vertically and horizontally across jurisdictions.

To minimize uncertainty about Delta’s policy focus on farmland protection, the OCP defines terms, minimizes open-ended conditions, and emphasizes planning for agriculture and farmland. This clarity is reinforced through internal consistency between the overarching goals, subarea plans, and zoning bylaw, which collectively provide a uniform means of applying regulations. A future land use plan, which sets out a general vision and pattern of land use, also helps to minimize uncertainty by articulating the general direction of future development.

With regard for the fourth principle, the OCP incorporates elements designed specifically to accommodate flexibility. Most notably, Delta has an Agricultural Advisory Committee (AAC), which is a very important governance mechanism that provides advice to council on matters relating to agriculture in the city. The AAC reviews plans, bylaws, policies, and strategies that affect agriculture. Some policies within the OCP are also designed to accommodate flexibility, such as edge-planning policies to minimize conflicts and manage the agricultural–urban interface. Where nonagricultural uses are approved, setback regulations provide buffers within identified edge areas.

As our example shows, the strength of a specific policy focus is based on the contents of a plan. Whether the evaluator examines the strength of the plan as a whole or only specific policies within a plan depends on the scope of the plan. In the example above, we discussed the strength of farmland protection as a single policy focus by analyzing specific elements of a comprehensive land use plan that covers the whole municipality. In a different situation, one might examine the strength of policy focus of a subarea plan that has a stated purpose of protecting farmland and applies only to farmland. In this second example, one would be evaluating the strength of policy focus of the whole plan. An evaluation of the strength of a policy focus can also be much broader. Such an evaluation could examine the elements of a legislative framework that includes a local government statutory plan as well as the related regulations, policies, and strategies that frame the plan, and extend both vertically to other levels of government and horizontally to neighboring jurisdictions. For the city of Delta, a broader evaluation of farmland protection as a policy focus would include provincial legislation (e.g., Agricultural Land Commission Act), the provincial regulations for subdivision and nonfarm uses, the city’s zoning bylaw, and the regional growth plan.

With regard to the three dimensions of plan quality, a researcher may choose to focus on only one dimension or to combine their interests in the quality of documentation (comprehensiveness), policy focus (strength), and discourse (persuasiveness). Norton (2008), for example, with a specific interest in neotraditional landscapes as a policy focus, incorporated all three dimensions of plan quality in his study. Our view is that no one dimension is more important than the others. What is most important, as discussed above and throughout the literature, is to ensure that the scope of inquiry and methods used, including the types of plan evaluation and dimensions of plan quality, align clearly and directly with the purpose of the evaluation. Although a comprehensive evaluation is always desired, a lack of resources may require a researcher to limit the scope.

Conclusion

While many authors have reflected on understanding and measuring the “good” plan, there has been far less discussion about the meaning of “quality.” Echoing concerns raised by Norton (2008), we believe that important differences are obscured by conceptual problems that define the field of plan quality evaluation. To address this concern, we questioned the presumption that quality is synonymous with “good” or “better,” which led us to three important outcomes. First, plan quality can be understood as one type of plan evaluation with a unique focus on efficacy, that is, evaluating a plan, as it is written, against its desired result. Second, the field of plan quality evaluation can be viewed as having three dimensions of quality: comprehensiveness as a measure of documentation quality, strength as a measure of policy focus, and persuasiveness as a measure of discourse quality. Third, building on these two outcomes, we recognized a gap in the literature: the absence of a set of principles to guide the measure of policy focus. To fill this gap, we proposed a set of four principles: maximize stability, minimize uncertainty, integrate public priorities across jurisdictions, and accommodate flexibility. The overall result, as summarized in Figure 1, is a picture of the scope of plan quality evaluation and its three dimensions of quality, each with a corresponding set of principles.

Whereas a successful plan quality evaluation links content analysis methods with theoretical arguments and measures of plan characteristics (Lyles and Stevens 2014), a successful evaluation also requires a clear understanding of what constitutes the “quality” of a good plan. By articulating three sets of principles that correspond with three dimensions of quality, we help to resolve the paradoxical implications identified by Norton (2008) of conflating different ends of a plan by using a single measure of quality. By the same means, we also help address the challenge to refine plan quality principles to facilitate application across plan types.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (435-2013-1726).