Abstract

In classrooms with both a lead teacher and an assistant teacher, both teachers are involved in daily activities and therefore have the requisite knowledge of children’s social-emotional/behavioral status to provide behavioral ratings of young children. A dyadic multigroup invariance test was conducted to examine concordance across teacher groups while accounting for interdependency within the data. Universal screening was conducted with the Behavioral and Emotional Screening System, Teacher Form-Preschool (BESS Teacher Form-Preschool), yielding 255 pairs of lead and assistant teacher ratings. Ratings were largely consistent across the three domains measured by the BESS Teacher Form-Preschool, with over 80% concordance. Dyadic multigroup invariance held through scalar invariance, showing that the two groups interpreted the scale similarly. Latent mean testing showed equivalency for Externalizing Risk and Internalizing Risk; however, assistant teachers rated preschool children as being at a lower risk level for Adaptive Skills problems than lead teachers did. Assistant and lead teacher ratings are largely equivalent for rating social-emotional/behavioral risk, with lead teachers flagging slightly more students as at-risk. Thus, slightly more preschoolers may be referred for Tier 2 MTSS-B intervention if lead teacher ratings are used.

Introduction

The Multi-Tiered Systems of Support (MTSS) framework for providing proactive assistance to children struggling with academic and/or social-emotional/behavioral (SEB) risk is widespread in education. The framework typically consists of a continuum of three tiers of prevention: primary, secondary, and tertiary (Barrett et al., 2013; Harlacher et al., 2013). A review of state department of education policy documents nationwide highlights the importance of intervention and assistance for both SEB risk and academic risk to support the success of the whole student (Briesch et al., 2019). MTSS involving behavior and/or social-emotional learning is implemented nationwide, covering all K-12 grade levels across the 50 US states (I-MTSS Research Network, 2024; Zhang et al., 2023). While policy statements and research activities examining MTSS for behavioral assistance (MTSS-B) have focused on K-12 grades, there is increasing support for conducting SEB activities with children in preschool settings. Frameworks such as the Pyramid Model (Hemmeter et al., 2006), Positive Behavioral Interventions and Supports (Center on PBIS, 2024), and Conscious Discipline (Bailey, 2011) are often used in preschool to support social-emotional development.

MTSS-B primary activities (i.e., Tier 1) are used to identify students who may need additional targeted (Tier 2) or intensive (Tier 3) behavioral interventions. While various risk identification methods exist (e.g., teacher nomination and office discipline referrals), universal SEB screening is recommended for preschoolers as well as K-12 students (Missall et al., 2021). Universal screening is cost-effective (Kuo et al., 2009), evaluates all children systematically and with the same criteria, facilitates early intervention (Arango et al., 2018; Tanner et al., 2018), and is more effective in identifying internalizing problems than teacher referrals or discipline records (Dowdy et al., 2013; Naser et al., 2018).

For children in early grades, universal screening measures are completed by the classroom teacher; however, preschool classrooms are typically structured to have at least one assistant teacher (depending on factors such as the number of children enrolled and/or special needs status). Assistant teachers support the lead teacher by helping with classroom management, interacting with students, and enforcing classroom rules, among other duties. Given that the classroom environment is shared, assistant teachers could be asked to provide universal screening ratings of preschoolers’ behavior for MTSS-B to assist the lead teacher. However, if teachers differ in their opinions of the severity or frequency of student behavior, inconsistent ratings may be obtained (De Los Reyes, 2011). Therefore, prior to considering the groups as equivalent, an investigation of similarity and differences in lead and assistant teachers’ behavioral ratings is warranted.

Characteristics of the Preschool Classroom

Recommended practices suggest one adult for every 10 children, with class sizes of no more than 20 preschoolers (NAEYC, 2018). While there are typically one to two assistant teachers for every lead teacher in a preschool classroom (Clifford et al., 2005), there are variations based on setting and age of children. A meta-analysis found that child–teacher ratios in early childhood education and care ranged from 5:1 to 15:1, with a mean close to 9:1 (Bowne et al., 2017).

Preschool classrooms with assistant teachers were noted as higher quality compared to single-teacher classrooms (Shim et al., 2004). Further, even with a lower education level than lead teachers and a lower role in the hierarchical structure, assistant teachers demonstrated similar teaching behaviors in terms of amount, quality, and appropriateness (Shim et al., 2004). While lead and assistant teachers collaborate to provide emotional support, class organization, and instructional support in the same classroom (Curby et al., 2012), they may perceive children’s behavior differently. Wolcott and Williford (2015) found assistant and lead teacher ratings of externalizing problem behavior to be positively correlated, but with values at roughly a moderate level (i.e., r = .50). Thus, even though teaching behaviors are similar among lead and assistant teachers, ratings of individual children’s behavioral patterns may differ.

Behavioral Ratings From Lead and Assistant Teachers

Previous studies have examined differences between ratings of lead and assistant teachers within the same classroom (e.g., Hunter et al., 2018; Mowrey & Farran, 2022). However, these studies have shown mixed findings regarding the consistency of lead and assistant teacher ratings. Some studies found that inter-rater reliability between lead and assistant teachers ranged from low to moderate (Greer et al., 2015; Gresham et al., 2010; Hunter et al., 2018). Other studies suggested that lead and assistant teachers reported higher agreement for some SEB dimensions (e.g., very high or very low levels of externalizing behaviors) (Wolcott & Williford, 2015), but lower agreement in other dimensions (e.g., internalizing behaviors) (Arnold & Dobbs-Oates, 2013; Wolcott & Williford, 2015). Factors affecting rating discrepancy between lead and assistant teachers included students’ socioeconomic status (Arnold & Dobbs-Oates, 2013), developmental variation in preschoolers (Loughran, 2003), dimensions of behavior assessed (Bulotsky-Shearer & Fantuzzo, 2004), and assessment techniques (Gresham et al., 2010).

In addition, lead and assistant teachers with different educational levels may interpret children’s specific behaviors differently (Anthony et al., 2005). The distinct roles that lead teachers and assistant teachers play may also affect rating agreement. Lead teachers primarily observe children’s behaviors during whole-group activities, while assistant teachers interact with children more in individualized settings (Rubie-Davies et al., 2010). In contexts of high demands, children are more likely to demonstrate behavioral problems; however, if behaviors are not extreme, rating discrepancies are more likely to occur (Wolcott & Williford, 2015).

Limitations of Previous Studies

When examining scoring differences between lead and assistant teachers, previous studies largely computed summary statistics (e.g., correlations, reliability estimates, and mean values) across groups. This approach assumes that the instrument is interpreted in the same way by both lead and assistant teachers. However, measurement invariance (MI) is a prerequisite for comparing constructs across groups or conditions (Davidov, 2011; Putnick & Bornstein, 2016). MI examines whether scores from the operationalization of a construct have the same meaning across conditions, such as administration method, time points, and population groups (Meade & Lautenschlager, 2004). Assuming MI without verification may lead to biased conclusions about the difference in mean scores of the construct across groups.

Another limitation of previous studies is that they used individuals rather than dyads as the unit of analysis, ignoring the interdependence of lead and assistant teachers. When two individuals demonstrate voluntary linkage (i.e., the bond that develops over time) or belong to the same group, scores from the two members are non-independent (Kenny et al., 2020). As assistant teachers work alongside lead teachers, ratings from the two groups are expected to be non-independent. Ignoring non-independence may lead to inaccurate standard errors, a loss of power, and failure to capture whether the measure works the same way across dyad members (Claxton et al., 2015), and could lead to incorrect inferences (Cook & Kenny, 2005).

The current study examined universal screening ratings from both lead and assistant teachers with a popular screening instrument, the Behavioral and Emotional Screening System, Teacher Form-Preschool (BESS Teacher Form-Preschool; Kamphaus & Reynolds, 2015). Using scores of the same child, we examined if ratings across teacher types were invariant, while also accounting for the non-independent nature of the data using a dyadic analysis. If lead and assistant teacher ratings were found to be invariant, latent mean differences were examined across behavioral domains.

Methods

Participants

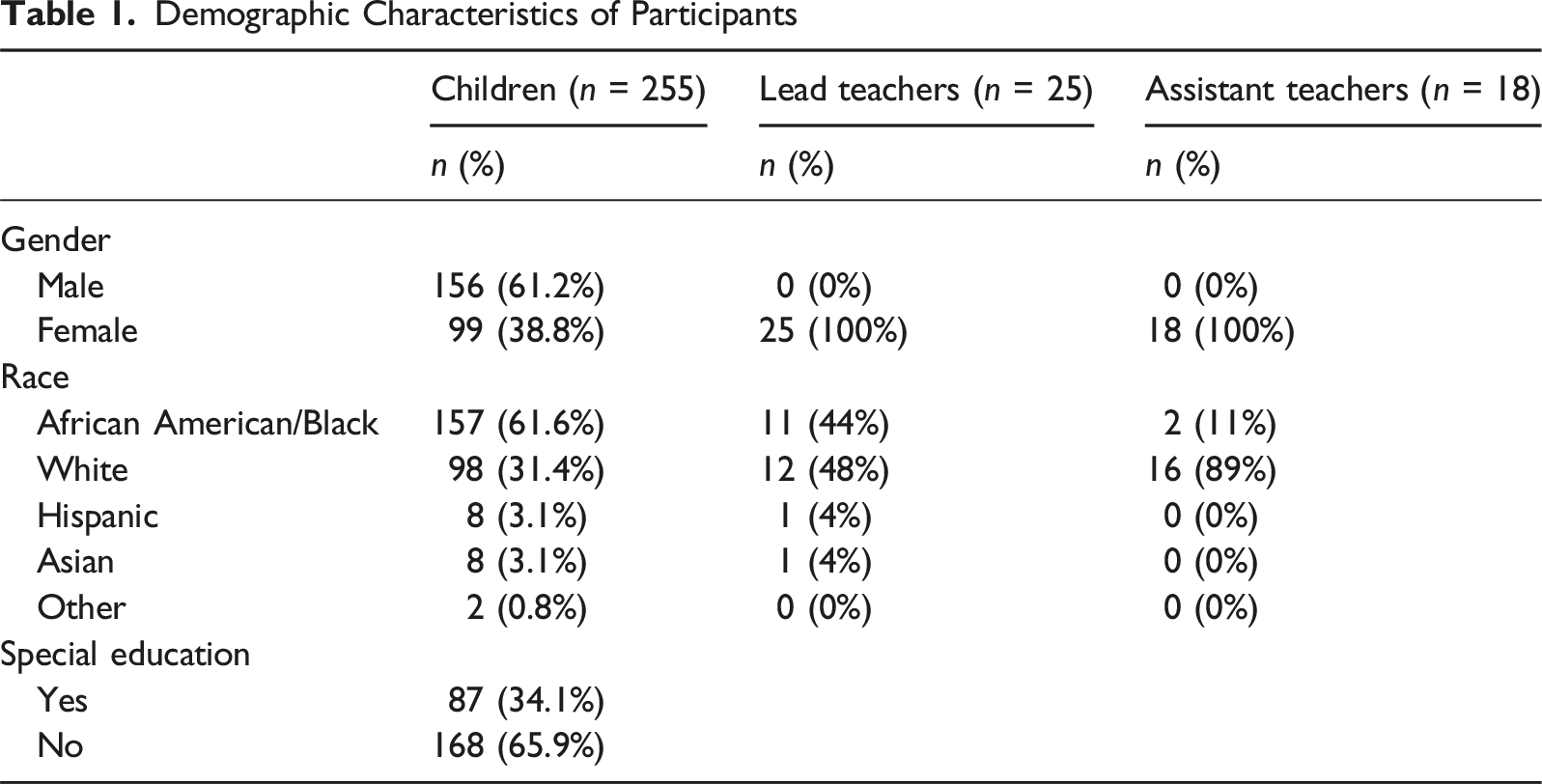

As part of a larger grant investigating universal screening with early school children, 43 teachers (18 lead teachers and 25 assistant teachers) provided BESS Teacher Form-Preschool ratings for 255 young children. This sample size is adequate, as 100 to 150 dyads are sufficient for dyadic CFA models, and larger sample sizes are needed only for more complicated models (Ledermann & Kenny, 2012).

Demographic Characteristics of Participants

Instrument

The BESS Teacher Form-Preschool (Kamphaus & Reynolds, 2015) was used for universal MTSS-B screening in the fall of 2023. The screener is composed of 20 items, with six items ascribed to each of the three domains of emotional and behavioral functioning in preschool-age children. These domains include Internalizing Risk (i.e., assessing anxiety, depression, and somatization; e.g., “Is easily stressed”), Externalizing Risk (i.e., externalizing behaviors, such as hyperactivity, aggression, and conduct problems; e.g., “Annoys others on purpose”), and Adaptive Skills (i.e., measuring adaptability, social skills, and activities of daily living important for functioning at home and school, and in the community; e.g., “Communicates clearly”). A higher score on Externalizing Risk and Internalizing Risk means more problems, whereas a higher score on Adaptive Skills means fewer problems. According to the BESS Teacher Form-Preschool manual, the remaining two items, which measure Attention Problems, do not assess any of the three dimensions at the preschool level. These two items were not included in the analysis.

For the screener, ratings for each item were provided by teachers using a 4-point Likert scale (“Never” = 0, “Sometimes” = 1, “Often” = 2, and “Almost Always” = 3). BESS Teacher Form-Preschool item raw scores are summed to provide an index of a child’s behavioral adjustment within each of the three subscales. Internalizing subscale scores >6 and Externalizing subscale scores >7 indicate elevated risk. For Adaptive Skills, scores <5 (age 3) or <6 (age 4–5) suggest risk in adaptive functioning. Within MTSS-B, preschoolers exhibiting risk in one or more areas may be flagged for secondary assistance. In addition, an overall score of SEB risk, termed the Behavioral and Emotional Risk Index (BERI), provides an evaluation of summative risk. BERI scores are provided on a T-metric (M = 50, SD = 10), with values over 60 indicating a level of overall risk (Kamphaus & Reynolds, 2015).
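The subscale cutoffs described above can be sketched in code. The following is a minimal illustration only, not the publisher's scoring software: the names `SubscaleScores`, `flag_risk`, and `needs_tier2_review` are hypothetical, and the function assumes raw subscale sums on the original 0–3 item scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubscaleScores:
    """Raw subscale sums on the original 0-3 item scoring (hypothetical container)."""
    internalizing: int
    externalizing: int
    adaptive: int


def flag_risk(scores: SubscaleScores, age_years: int) -> dict[str, bool]:
    """Apply the cutoffs stated in the text to one child's raw subscale scores."""
    if age_years == 3:
        adaptive_cutoff = 5
    elif age_years in (4, 5):
        adaptive_cutoff = 6
    else:
        raise ValueError("the cutoffs quoted in the text cover ages 3 to 5 only")
    return {
        "internalizing": scores.internalizing > 6,
        "externalizing": scores.externalizing > 7,
        # Adaptive Skills runs in the opposite direction: low scores indicate risk.
        "adaptive": scores.adaptive < adaptive_cutoff,
    }


def needs_tier2_review(flags: dict[str, bool]) -> bool:
    """Risk in one or more areas may lead to a flag for secondary assistance."""
    return any(flags.values())
```

For example, a hypothetical 4-year-old with raw scores of 7, 7, and 5 would be flagged on Internalizing Risk and Adaptive Skills but not on Externalizing Risk, because 7 does not exceed the Externalizing cutoff of 7.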

To evaluate the quality of ratings on the BESS Teacher Form-Preschool, three validity indexes were examined. All cases fell within the acceptable range for the F Index (0–1), Consistency Index (0–6), and the Response Pattern index (0–2) for both assistant and lead teacher ratings. The findings indicate that all ratings were of adequate quality, supporting the reliability of the data for use in subsequent analysis.

Item-level data were exported from Review360, which rescaled BESS Teacher Form-Preschool items from the original 0–3 scoring to a 1–4 scale. All the analyses were conducted using these 1–4 values. This rescaling preserved the relative distance between response options and is mathematically equivalent to the original scoring system.
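To make the rescaling concrete, the sketch below maps exported 1–4 codes back to the original 0–3 scoring. The function name and the example item values are hypothetical. The constant shift leaves the ordering and spacing of the response categories unchanged, but a raw sum over k items shifts by k, so raw-score cutoffs defined on the 0–3 scoring would need to be applied after undoing the shift.

```python
def to_original_scoring(exported_items: list[int]) -> list[int]:
    """Map exported 1-4 item codes back to the original 0-3 scoring."""
    if any(value not in (1, 2, 3, 4) for value in exported_items):
        raise ValueError("exported item codes must be 1, 2, 3, or 4")
    return [value - 1 for value in exported_items]


# Hypothetical responses to one six-item subscale, as exported on the 1-4 scale.
exported = [1, 3, 2, 4, 2, 1]
original = to_original_scoring(exported)

# The subscale sum shifts by exactly the number of items (here, six).
assert sum(exported) - len(exported) == sum(original)
```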

Data Analysis

Statistical analyses were conducted using Mplus 8.4 software (Muthén & Muthén, 2019). Given that the BESS Teacher Form-Preschool uses a 4-point Likert scale, we used the weighted least squares with mean and variance correction (WLSMV) estimator to accommodate the categorical nature of the response scale (Finney & DiStefano, 2013). No missing data were identified, as the online system for entering the BESS requires complete responses to all items. To address the non-independent nature of the data, each unit of analysis comprised a pair of lead and assistant teacher ratings for a given child. Additionally, considering the hierarchical structure, with children nested within classrooms, we accounted for clustering design effects to provide more accurate parameter estimates (Raykov & DiStefano, 2021).

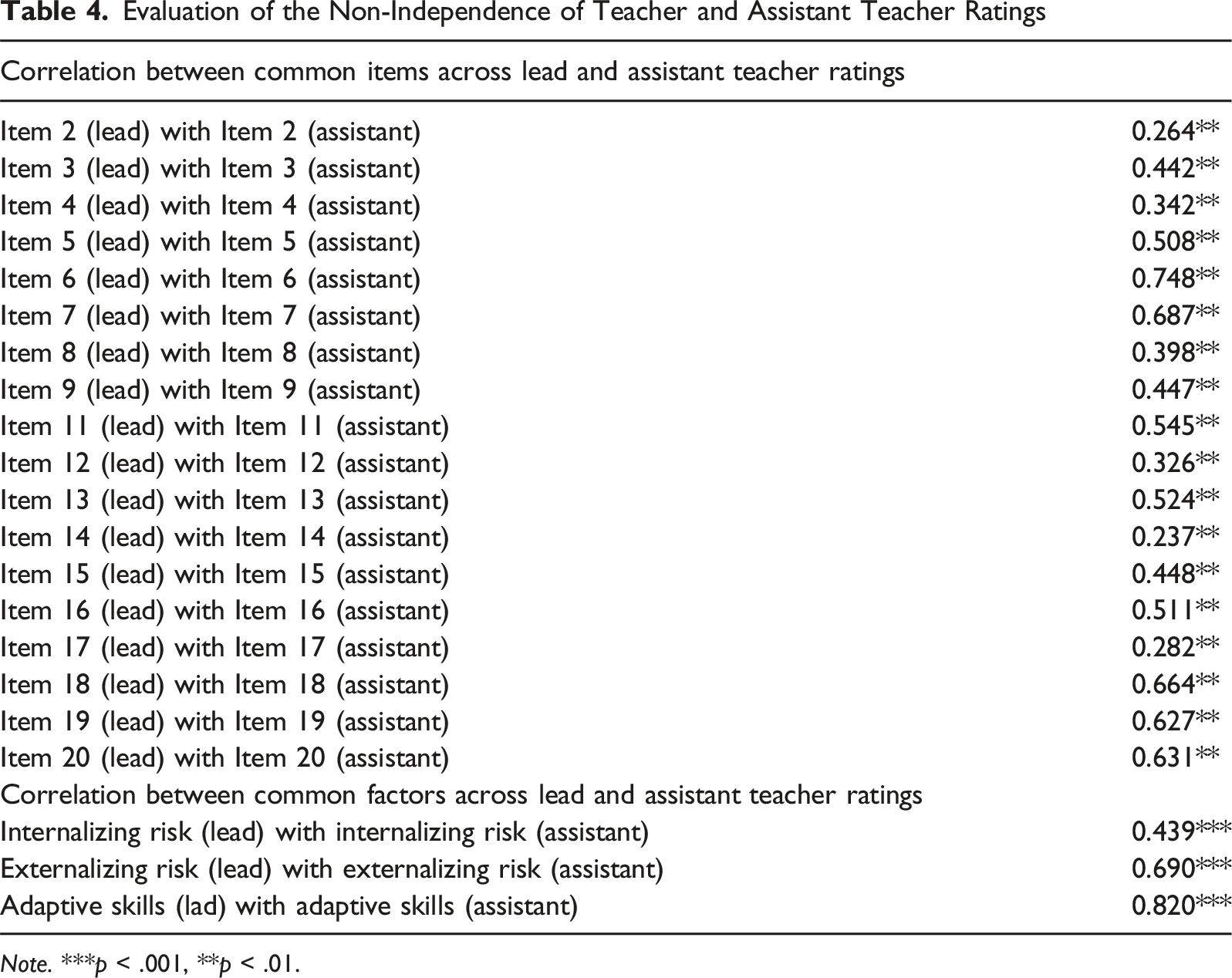

Measurement of Non-Independence

To account for non-independence, we performed all analyses in a dyadic framework with lead and assistant teacher pairs as the unit of analysis. A dyadic design requires an initial analysis of the degree of non-independence in the dependent variable, at both the indicator and latent variable levels (Kenny et al., 2020). At the indicator level, we evaluated the non-independence of the data by calculating the Spearman correlation between lead teacher and assistant teacher ratings for each item. At the latent variable level, the non-independence of the data was assessed by examining the standardized correlation between lead and assistant teachers’ ratings on the same latent factor. Cohen’s effect size (i.e., .1 small, .3 medium, .5 large) was used for evaluating the correlation for the non-independence test (Kenny et al., 2020).
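The indicator-level check can be illustrated with a small, dependency-free sketch of the Spearman correlation, computed as the Pearson correlation of tie-averaged ranks. This is an illustration under stated assumptions rather than the software used in the study: all function names are hypothetical, and the "negligible" label for values below .1 is an addition for completeness, not part of the benchmarks cited above.

```python
from math import sqrt


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one rater gives constant ratings")
    return sxy / sqrt(sxx * syy)


def spearman(lead: list[float], assistant: list[float]) -> float:
    """Spearman correlation between paired lead and assistant ratings of one item."""
    if len(lead) != len(assistant):
        raise ValueError("ratings must be paired by child")
    return pearson(average_ranks(lead), average_ranks(assistant))


def cohen_label(r: float) -> str:
    """Label a correlation using the .1 / .3 / .5 benchmarks quoted in the text."""
    size = abs(r)
    if size >= 0.5:
        return "large"
    if size >= 0.3:
        return "medium"
    if size >= 0.1:
        return "small"
    return "negligible"  # label added here; not part of the cited benchmarks
```

Each item would be passed through `spearman` with the two raters' scores aligned by child, so that position i in both lists refers to the same preschooler.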

Factor Structure

We conducted dyadic confirmatory factor analysis (CFA) to examine whether the three-factor structure underlying the BESS Teacher Form-Preschool could be confirmed for both lead and assistant teacher ratings. To account for the non-independence of the data within the dyadic CFA framework, covariance terms between common factors and correlated error terms between lead and assistant teachers’ ratings were modeled (Kenny et al., 2020).

CFA fit was assessed using both global and local model fit indices. Global fit indices included the chi-square (χ2) fit statistic, comparative fit index (CFI), root mean square error of approximation (RMSEA), and standardized root mean square residual (SRMR). Acceptable model fit was indicated by CFI ≥ .90, RMSEA ≤ .08, and SRMR ≤ .10, while good fit was indicated by CFI ≥ .95, RMSEA ≤ .05, and SRMR ≤ .08 (Hu & Bentler, 1999). As global model fit might be affected by the number of items and the magnitude of the item factor loadings (McNeish et al., 2018), local model fit indices, such as factor loadings and covariance residual values, were also examined. Factor loadings were expected to exceed .30 (Costello & Osborne, 2005), and standardized residuals with absolute values greater than 3.0 suggested possible local model misfit (Raykov & Marcoulides, 2012). Model modification indices were examined to identify misspecified parameters in the case of model misfit.
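The global fit cutoffs listed above can be expressed as a simple lookup. The sketch below is illustrative only: the function name and the label "outside cutoffs" are hypothetical, and the cutoffs are those quoted in this section.

```python
def rate_global_fit(cfi: float, rmsea: float, srmr: float) -> dict[str, str]:
    """Rate each global fit index against the cutoffs quoted in the text."""

    def rate(value: float, good: float, acceptable: float, higher_is_better: bool) -> str:
        if higher_is_better:
            if value >= good:
                return "good"
            if value >= acceptable:
                return "acceptable"
        else:
            if value <= good:
                return "good"
            if value <= acceptable:
                return "acceptable"
        return "outside cutoffs"

    return {
        "CFI": rate(cfi, good=0.95, acceptable=0.90, higher_is_better=True),
        "RMSEA": rate(rmsea, good=0.05, acceptable=0.08, higher_is_better=False),
        "SRMR": rate(srmr, good=0.08, acceptable=0.10, higher_is_better=False),
    }
```

Rating each index separately, rather than returning a single verdict, keeps visible the case in which one index falls outside its cutoff while the others are acceptable.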

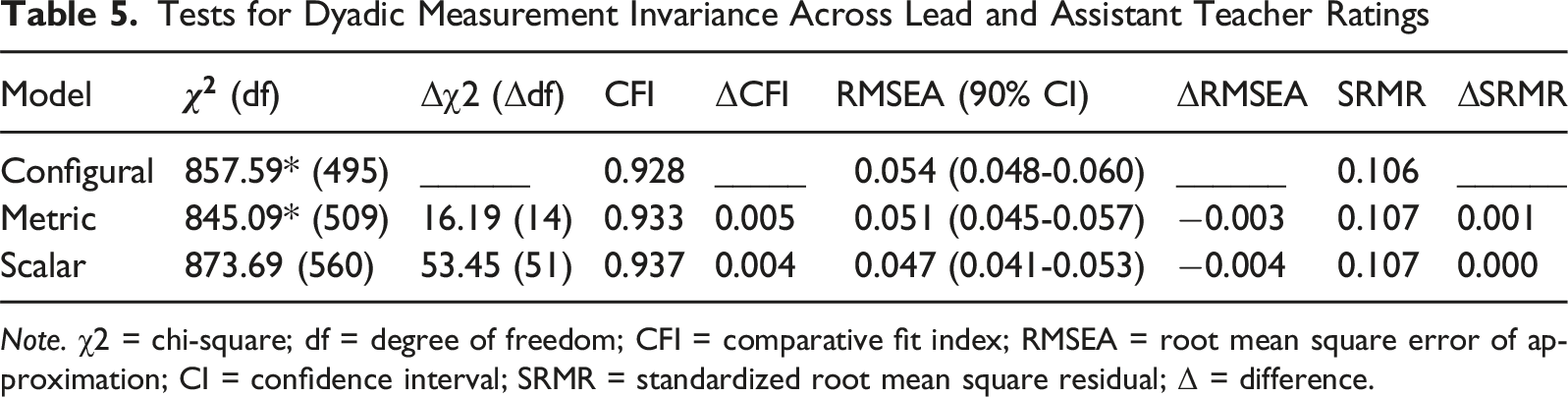

Measurement Invariance

Multiple-group confirmatory factor analysis was used to examine the MI of BESS Teacher Form-Preschool between lead and assistant teacher ratings. Three levels of measurement invariance were tested sequentially: configural, metric, and scalar invariance. Configural invariance examines whether group members interpret the items on the same theoretical groundings. Metric invariance evaluates the equivalence of the association between items and underlying factors across groups (Cheung & Rensvold, 2002; Schmitt & Kuljanin, 2008). Scalar invariance investigates whether participants from separate groups endorse items to the same extent (Vandenberg & Lance, 2000).

The dyadic MI analysis accounted for the non-independence between lead and assistant teacher ratings, with data structured at the dyad level. In the configural invariance model, we allowed the same indicators to load on the common factors for both lead and assistant teacher ratings, while allowing covariances between common factors and correlated error terms between lead and assistant teachers’ ratings. This model served as the baseline for comparison. Metric invariance was tested by constraining the unstandardized factor loadings on the common factors to be equal for lead and assistant teacher ratings. The metric invariance model was then compared with the configural invariance model. If metric invariance held, scalar invariance was tested by constraining both the factor loadings and the item thresholds to be equal for lead and assistant teacher ratings. A comparison between the metric and scalar invariance models was then made. If full invariance was not supported at any step, partial invariance was tested by freeing the threshold of one item at a time based on modification indices (Van de Schoot et al., 2012). For model scaling purposes, the latent variable variances were constrained to 1 and the latent variable means to 0.

We used the following criteria to compare nested models: the differences between models in chi-square values (Δχ2), RMSEA values (ΔRMSEA), CFI values (ΔCFI), and SRMR values (ΔSRMR). The Mplus DIFFTEST procedure was used to accurately compare differences between WLSMV-adjusted χ2 values, where a significant Δχ2 indicates non-invariance. Metric invariance holds if ΔCFI ≤ .01, ΔRMSEA ≤ .015, and ΔSRMR ≤ .03. Scalar invariance is established if ΔCFI ≤ .01, ΔRMSEA ≤ .015, and ΔSRMR ≤ .01 (Chen, 2007).
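The change-in-fit criteria above can be sketched as a small decision rule. The code is a hedged illustration: the names `FitIndices` and `invariance_supported` are hypothetical, the differences are assumed to be signed so that a worsening of fit is positive (a drop in CFI, a rise in RMSEA or SRMR), and the WLSMV-adjusted Δχ2 test from the Mplus DIFFTEST procedure is not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FitIndices:
    """Global fit indices for one fitted model (hypothetical container)."""
    cfi: float
    rmsea: float
    srmr: float


# The SRMR tolerance differs by step; the CFI and RMSEA tolerances do not.
SRMR_LIMIT = {"metric": 0.03, "scalar": 0.01}


def invariance_supported(step: str, less_constrained: FitIndices,
                         more_constrained: FitIndices) -> bool:
    """Compare two nested models using the change-in-fit criteria from the text."""
    if step not in SRMR_LIMIT:
        raise ValueError("step must be 'metric' or 'scalar'")
    cfi_drop = less_constrained.cfi - more_constrained.cfi
    rmsea_rise = more_constrained.rmsea - less_constrained.rmsea
    srmr_rise = more_constrained.srmr - less_constrained.srmr
    return (cfi_drop <= 0.01
            and rmsea_rise <= 0.015
            and srmr_rise <= SRMR_LIMIT[step])
```

In the sequential procedure, the metric model would be compared with the configural model using `step="metric"`, and the scalar model with the metric model using `step="scalar"`.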

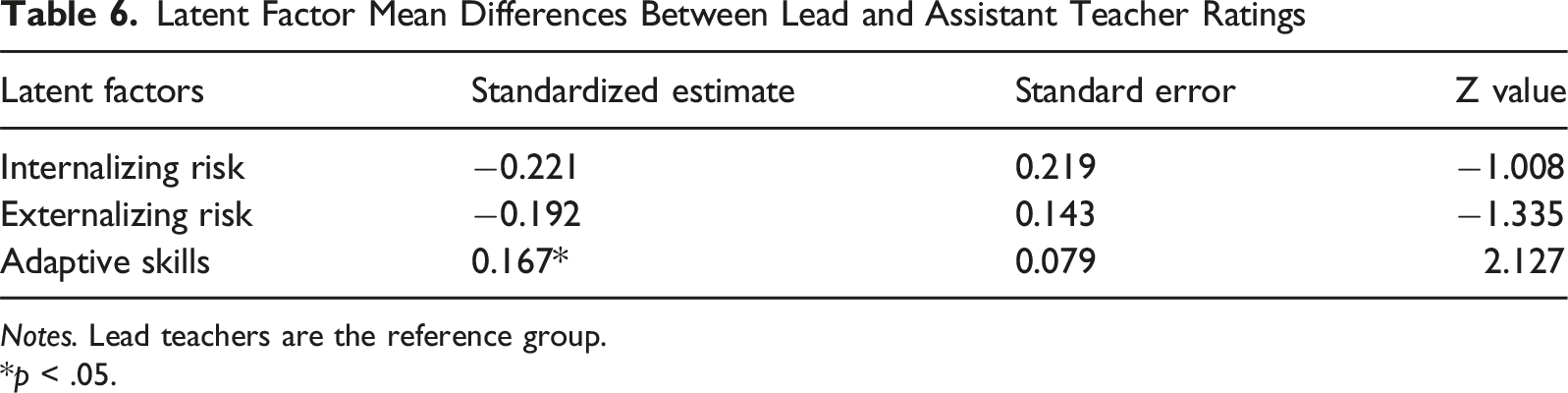

Scalar invariance is sufficient for comparing latent means across conditions (Meredith, 1993). Once scalar invariance was established, mean differences between lead and assistant teachers in their ratings of the dimensions of emotional and behavioral risk measured by the BESS Teacher Form-Preschool (i.e., Internalizing Risk, Externalizing Risk, and Adaptive Skills) could be compared. Latent mean testing constrained the factor means in the lead teacher rating group to 0, while those in the assistant teacher rating group were freely estimated. The size of the standardized latent mean differences was estimated using Cohen’s d.
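As an illustration of the effect size computation, the sketch below standardizes a latent mean difference by a pooled latent standard deviation. The exact formula used in the study is not stated, so the equal-weight pooling of the two latent variances is an assumption (reasonable here because both raters rated the same children), and the function name and example values are hypothetical.

```python
from math import sqrt


def latent_cohens_d(mean_reference: float, var_reference: float,
                    mean_comparison: float, var_comparison: float) -> float:
    """Standardized latent mean difference (comparison minus reference).

    Assumes equal-weight pooling of the two latent variances.
    """
    pooled_variance = (var_reference + var_comparison) / 2
    if pooled_variance <= 0:
        raise ValueError("latent variances must be positive")
    return (mean_comparison - mean_reference) / sqrt(pooled_variance)


# Hypothetical values: reference (lead teacher) mean fixed to 0, both variances 1.
d = latent_cohens_d(mean_reference=0.0, var_reference=1.0,
                    mean_comparison=-0.3, var_comparison=1.0)
```

With the reference mean fixed to 0 and both variances equal to 1, the standardized difference equals the freely estimated mean of the comparison group.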

Results

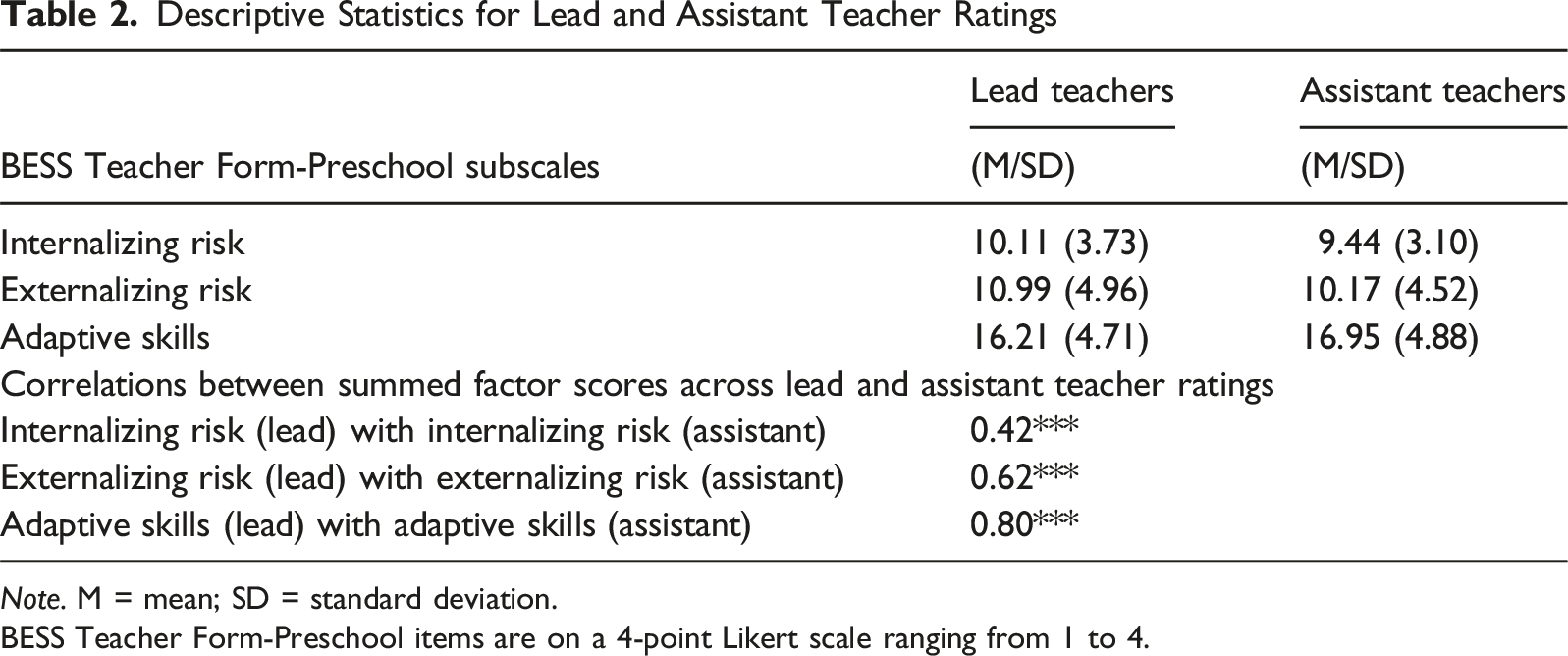

Descriptive Statistics

Descriptive Statistics for Lead and Assistant Teacher Ratings

Note. M = mean; SD = standard deviation.

BESS Teacher Form-Preschool items are on a 4-point Likert scale ranging from 1 to 4.

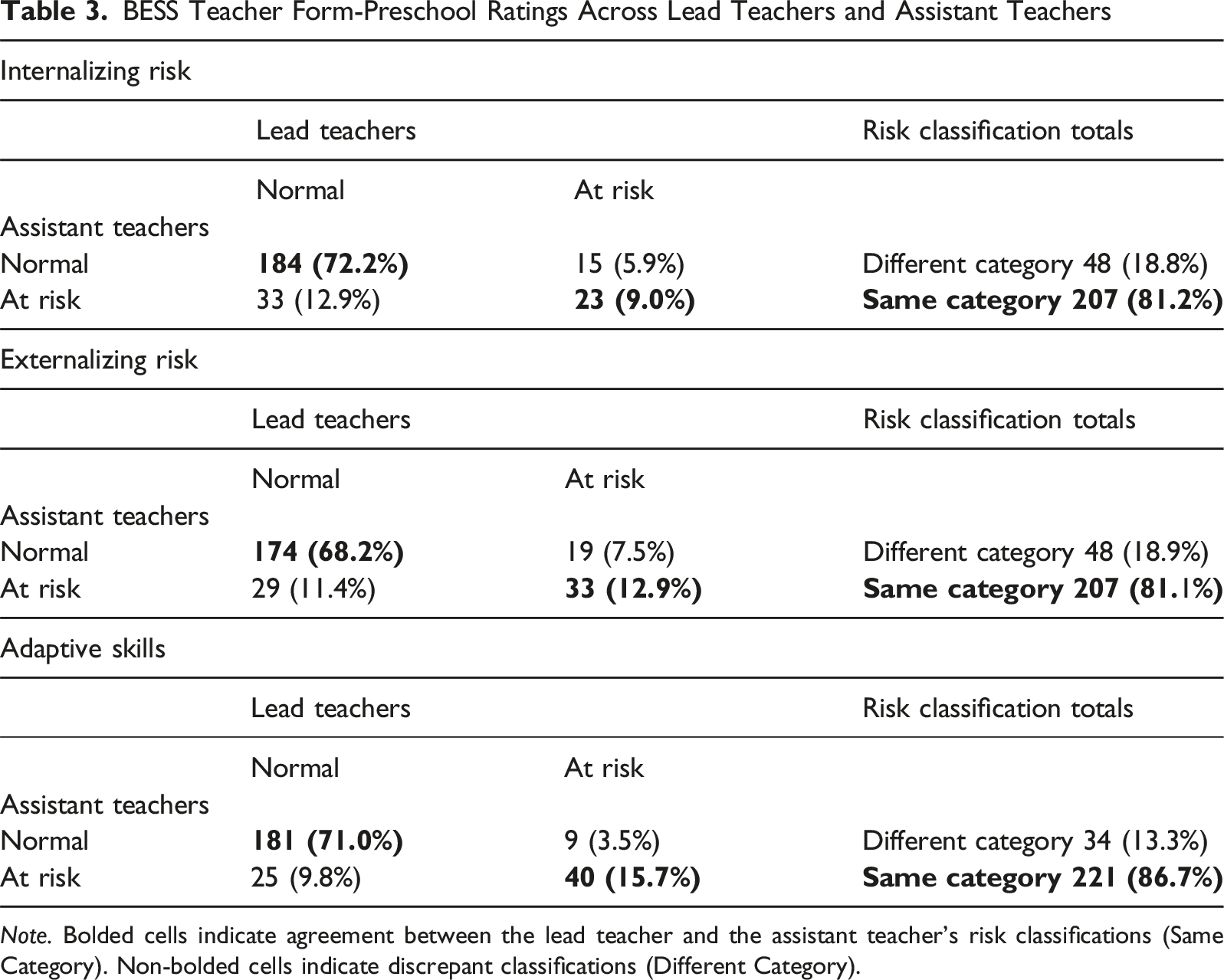

BESS Teacher Form-Preschool Ratings Across Lead Teachers and Assistant Teachers

Note. Bolded cells indicate agreement between the lead teacher and the assistant teacher's risk classifications (Same Category). Non-bolded cells indicate discrepant classifications (Different Category).
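The agreement summarized in this table can be computed as simple percent agreement between paired classifications. The sketch below is illustrative only: the function names and the example flags are hypothetical and do not reproduce the study data.

```python
def percent_agreement(lead_flags: list[bool], assistant_flags: list[bool]) -> float:
    """Percentage of children placed in the same risk category by both raters."""
    if len(lead_flags) != len(assistant_flags) or not lead_flags:
        raise ValueError("flags must be non-empty and paired by child")
    same = sum(lead == assistant for lead, assistant in zip(lead_flags, assistant_flags))
    return 100.0 * same / len(lead_flags)


def discrepancy_counts(lead_flags: list[bool], assistant_flags: list[bool]) -> dict[str, int]:
    """Count discrepant pairs by which rater classified the child as at-risk."""
    pairs = list(zip(lead_flags, assistant_flags))
    return {
        "lead_only": sum(lead and not assistant for lead, assistant in pairs),
        "assistant_only": sum(assistant and not lead for lead, assistant in pairs),
    }


# Hypothetical at-risk flags for five children on one subscale.
lead = [True, False, False, True, False]
assistant = [True, False, False, False, False]
agreement = percent_agreement(lead, assistant)
counts = discrepancy_counts(lead, assistant)
```

Splitting the discrepant pairs by direction shows whether disagreements come mainly from one rater classifying more children as at-risk than the other.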

Evaluation of Non-Independence

Evaluation of the Non-Independence of Teacher and Assistant Teacher Ratings

Note. ***p < .001, **p < .01.

Factor Structure of the BESS Teacher Form-Preschool

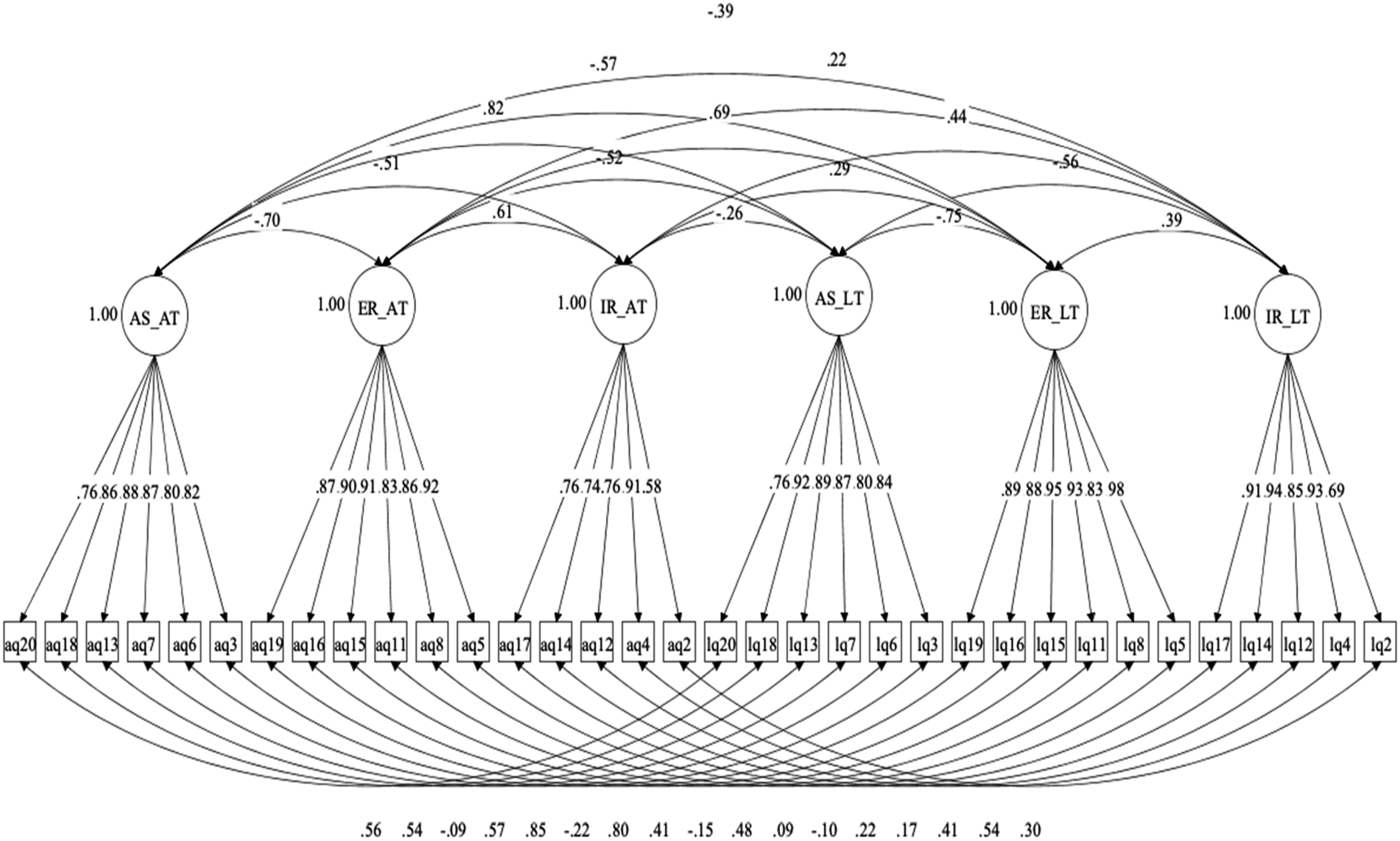

The three-factor structure of the BESS Teacher Form-Preschool was examined using dyadic CFA to establish the measurement model. Two models were tested. In Model 1, we included covariances between common factors and correlations between the errors of corresponding items for lead and assistant teacher ratings, but the model did not converge. Examination of the model modification indices revealed that the residual correlation between the lead teacher and assistant teacher ratings of Item 9 (“Is easily frustrated”) indicated over-dependency. Per recommendations for dyadic analysis, Item 9 was removed (Kenny et al., 2020). The model without Item 9 (Model 2) exhibited an acceptable fit, with fit indices within the recommended boundaries except for SRMR, which slightly exceeded the .10 cutoff (χ2 (df) = 857.59 (495), CFI = .928, TLI = .918, RMSEA [90% confidence interval] = .054 [.048–.060], SRMR = .106). The local fit of Model 2 was also good. Standardized factor loadings showed a significant association between items and latent variables. The factor loadings ranged from .69 to .98 for the lead teacher group and .58 to .92 for the assistant teacher group. Therefore, the three-factor structure without Item 9 was used as the baseline model for the sequential test of dyadic MI. Figure 1 illustrates the dyadic three-factor structure of the BESS Teacher Form-Preschool.

BESS Teacher Form-Preschool three-factor dyadic structure

Measurement Invariance Analysis

Tests for Dyadic Measurement Invariance Across Lead and Assistant Teacher Ratings

Note. χ2 = chi-square; df = degree of freedom; CFI = comparative fit index; RMSEA = root mean square error of approximation; CI = confidence interval; SRMR = standardized root mean square residual; Δ = difference.

Latent Mean Comparison

Latent Factor Mean Differences Between Lead and Assistant Teacher Ratings

Note. Lead teachers are the reference group.

*p < .05.

Discussion

As MTSS-B for providing preventative assistance to children has increased, this study investigated the comparability of lead teacher and assistant teacher behavioral ratings in the preschool environment. This study examined behavioral ratings for the BESS Teacher Form-Preschool among 255 preschool children by both lead and assistant teachers. Lead and assistant teachers have been found to be similar in emotional support and classroom organization (Curby et al., 2012), yet prior work has largely considered the groups to be independent. As the preschool classroom environment is shared, descriptive analyses of ratings and classifications were conducted to examine similarities across ratings for the same child. Also, dyadic measurement invariance was used to recognize the dependency between teacher pairs. This analysis framework has not been used in prior comparisons of lead teachers and assistant teachers within the same class; however, treating the two groups as independent may lead to biased parameter estimates and standard errors (e.g., Kenny et al., 2020).

The MTSS framework includes an assessment of child risk as an initial step, in which young children at risk are identified, with universal ratings recommended as an optimal method to use with preschoolers (Missall et al., 2021; Shepley & Grisham-Brown, 2019). As most preschool classrooms have more than one adult providing instruction, school administrators may have a choice of raters to complete screening forms. Multiple informants have been found to be useful, especially when children are younger, to provide different views of child behavior. Here, the classification of risk level and the correlations between lead and assistant teachers were in line with previous findings for pairs of adults in similar roles (e.g., pairs of teachers, pairs of parents) observing a child in the same setting (Achenbach et al., 1987). A previous meta-analysis conducted by Achenbach and colleagues (1987) identified a mean correlation between ratings of approximately r = .60. With the BESS Teacher Form-Preschool, correlations between lead and assistant teacher ratings exceeded this level for scores of Adaptive Skills and Externalizing Risk. However, the correlations between Internalizing Risk scores were lower. As internalizing problems are not as outwardly apparent, teachers have had a challenging time identifying these problems in the classroom setting (DiStefano et al., 2014). Latent mean scores for this dimension yielded the largest standard error, showing more fluctuation in latent scores, even after accounting for dependence among the groups. Given the developmental stage (and behavioral fluctuations) common with preschool children, it may be helpful to obtain ratings from an informant in a different setting and to identify a student for Tier 2 assistance if any of the ratings are elevated.

Even informants within the same environment may provide discrepant ratings (Achenbach et al., 1987). Here, 80% of ratings provided the same classification of risk for a student. For the 20% of ratings that were discrepant, examination of classification status showed that lead teachers more often noted a child to be at-risk when the assistant teacher’s ratings would classify behavior as normal development. This was more prevalent for the Adaptive Skills scale. Further, examination of mean scores for groups showed that assistant teachers rated preschool children at a lower risk level of Adaptive Skills than lead teachers. Thus, the more observable the behaviors, the more similar the ratings.

A novel aspect of this study was the use of dyadic multigroup invariance testing for lead teacher and assistant teacher ratings. The non-independence indices supported use of this as a framework; thus, dyadic analyses are suggested for other studies involving two teachers (whether co-teachers or lead/assistant pairs) in a preschool classroom. In the invariance test, full scalar invariance held, showing that the three-factor structure, loading values, and item thresholds were the same across lead and assistant teachers. Results suggested that the teachers were using the BESS Teacher Form-Preschool similarly, showing that the scale was interpreted in the same manner and that differences in latent means may be attributed to group differences rather than artifacts of measurement (Cieciuch et al., 2019).

Concerning the latent variable scores, assistant teachers’ ratings yielded lower risk levels for all scales, with the difference statistically significant for Adaptive Skills; however, latent scores for Internalizing Risk and Externalizing Risk had higher standard errors, meaning that there was more variability in scores for these two subscales. Assistant teachers interpreted preschoolers’ behaviors more positively across all three subscales. As lead teachers may have more experience with student maladaptive behaviors, using solely assistant teacher ratings might provide a more positive view of behavior. This may result in some children who need assistance not being recommended for further assistance and intervention.

Overall, ratings across lead teachers and assistant teachers are similar, but still show discrepancies across raters. If assistant teacher ratings were used in place of lead teacher ratings for screening, it is encouraging that most ratings would provide the same assessment of child risk. Assistant teacher ratings may be used in place of lead teacher ratings for universal screening, with the caveat that fewer children may be identified as at-risk. Universal screening takes approximately an hour to evaluate the entire preschool class (Greer et al., 2012). Further, preschool teachers did not find the duties of screening to be overly burdensome or time-consuming (Greer et al., 2012). Thus, a more conservative approach may be for schools to have lead teachers complete screening and assistant teachers contribute more to secondary interventions for flagged students. This approach would flag more students for risk and involve assistant teachers in providing the more time-consuming interventions at Tier 2.

Limitations and Future Research Directions

As with any study, this study has limitations. First, we recognize that this study was conducted in one region of one state and used only one screening instrument (i.e., the BESS Teacher Form-Preschool). While we do not expect the selected schools to differ from other preschool environments in terms of the students served or classroom setup, we understand that the results could be specific to this study and/or screening instrument. Future studies may examine rating concordance and dyadic analyses across lead teacher and assistant teacher pairs in other states and with other rating scales to determine if training or discussions prior to independent ratings may improve concordance.

Second, this study investigated universal screening procedures as part of MTSS-B. Given that SEB activities are becoming more commonplace in preschool environments, the study of these activities is warranted. However, we recognize that our study was conducted using the standard protocol for universal screening with the BESS Teacher Form-Preschool. Thus, no additional training or discussions were conducted with teachers. Previous studies have found that incorporating training prior to screening can help teachers provide ratings that recognize cultural and/or sex-specific behaviors (Kilgus & Eklund, 2016). Future studies may examine how training may improve the concordance of behavioral ratings between teachers within the same classroom and reduce the amount of variability attributed to individual differences in responding.

Third, information regarding the lead teacher and assistant teachers’ educational level and years of experience in the classroom was limited. Education and training for both groups were largely unavailable. Approximately half of the lead teachers and assistant teachers reported their years of experience. Of these reports, lead teachers generally had more years of experience than assistant teachers (15% of lead teachers had under 5 years of experience, and 35% of assistants had under 5 years of experience). Greater information on both groups may have suggested further interpretations of the lead teacher and assistant teachers’ differences as informants.

Finally, we note that the use of dyadic analysis was a novel approach in the current study, as previous research has treated lead and assistant teacher groups as independent, even though they share the same work environment. These procedures are readily available within the structural equation modeling framework and may be beneficial to early childhood researchers to consider. While our findings did not show great differences among lead and assistant pairs, controlling for dependence in the data may help researchers more clearly examine differences and similarities between teachers in the preschool environment.

Given that MTSS-B activities are widespread in education, preschool classrooms may become more involved in such undertakings for early identification and intervention of social-emotional/behavioral risk. This study showed similarities and differences among lead and assistant teachers for use of a popular rating scale often associated with SEB assessment. Further, this study illustrated how use of a dyadic analysis framework can help early childhood researchers more accurately estimate parameters and standard errors when dealing with dependent data. We hope the investigation may help practitioners weigh the pros and cons of using lead or assistant teacher ratings.

Footnotes

Acknowledgements

This research was supported by the Institute of Education Sciences, U.S. Department of Education, Grant R324A190066 to the University of South Carolina. The opinions expressed are those of the authors and do not represent views of the Institute or the U.S. Department of Education.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Institute of Education Sciences, U.S. Department of Education, Grant R324A190066 to the University of South Carolina.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.