Abstract

Keywords

Since 1905, intelligence testing has been a common practice in schools, sparking ongoing debates among researchers and school psychologists regarding its usefulness as a tool for understanding learning disabilities (Alfonso & Flanagan, 2018). Theories of intelligence can broadly be categorized into two groups: (1) a traditional quantitative approach relying on psychometric models, such as the Cattell–Horn–Carroll model (CHC), and (2) a second-generation group based on theory, for example, neurocognitive theories such as the Planning, Attention, Simultaneous, Successive (PASS) theory of intelligence (Naglieri, 2014). However, establishing a solid connection between traditional intelligence assessments and academic achievement has proven challenging, with only weak-to-moderate correlations between test results and academic achievement (Canivez & Youngstrom, 2019; Dombrowski et al., 2022). Additionally, numerous studies have pointed out that general intelligence (the psychometric g-factor) should be the primary level for interpreting test results based on the Cattell–Horn–Carroll theory (CHC theory) and that interpretation of secondary (factor) levels has a low probability of identifying specific learning disabilities (Canivez et al., 2020; Dombrowski et al., 2018; Zaboski et al., 2018). Thus, cognitive assessments must be grounded in robust theoretical frameworks to further understand cognitive functions and effective interventions.

One such framework is the Cognitive Assessment System (CAS), which is based on the PASS theory (Naglieri & Das, 1995). This model posits a unique approach to understanding cognitive processes, diverging from traditional psychometric frameworks that typically emphasize a unitary general intelligence factor. The second edition, CAS-2, operationalizes PASS theory in a test battery that includes 12 subtests, with three subtests for each of the four indexes. The PASS theory was developed by Das and Naglieri in 1991 and is based on Luria’s neuropsychological theory of three functional units (Luria, 1970). According to the PASS theory, there are four neurocognitive processes: (1) Planning involves cognitive control, the use of knowledge, intentionality, and selection of strategies, (2) Attention provides focused, selective cognitive activity over time and resistance to distraction, (3) Simultaneous processing integrates stimuli into groups, and (4) Successive processing arranges stimuli in a specific serial order. The latter two processes work together to code and store incoming stimuli and to retrieve knowledge from long-term memory (Das, 2018; Das et al., 1994; McCrea, 2009).

To assess the validity and applicability of the CAS, multiple studies have examined the relationships between cognitive test results from the CAS and academic performance, revealing significant correlations between PASS processes and reading and mathematics (Georgiou et al., 2020; Naglieri & Rojahn, 2004). Higher scores have also been shown to be associated with learning and academic achievement, both for the full scale (Naglieri & Otero, 2024; Naglieri & Rojahn, 2004) and for the four specific processes, as the CAS scale accounted for unique variance in explaining academic achievement (Sergiou et al., 2023) compared to other cognitive test batteries, such as the WISC-IV. Regarding diagnostic specificity and specific learning disabilities, children diagnosed with ADHD have been found to have deficits in Planning and Attention factors (Canivez & Gaboury, 2016; Van Luit et al., 2005). Furthermore, dyslexia has been associated with deficits in the Successive factor (Das et al., 1994). Several studies have examined the effectiveness of cognitive strategy instruction based on CAS results and PASS theory. These studies suggest that cognitive intervention programs lead to academic improvements in mathematics (Naglieri, 2003) and reading difficulties (Hayward et al., 2007). Cognitive strategy instruction based on the PASS theory has been shown to improve math fluency, numerical operations (Iseman & Naglieri, 2011), and reading skills (Papadopoulos et al., 2004).

Supporting the validation of CAS-2, previous studies have investigated the factor structure and demonstrated consistency between the PASS theory and CAS (Canivez, 2011; Deng et al., 2011; Naglieri et al., 2006; Naglieri & Otero, 2024). CAS has been standardized in various languages, including Italian, Greek, Dutch, and Japanese (Naglieri et al., 2013; Nakayama et al., 2012; Papadopoulos et al., 2008). These studies, which examined the construct validity of the CAS in different languages and cultures, showed that the proposed four-factor model (PASS) demonstrated strong reliability and validity in relation to cognitive abilities. However, independent researchers have criticized the CAS and suggested alternative explanations for the factor structure. Specifically, according to Kranzler and Keith (1999), the Planning and Attention scales exhibited high intercorrelation, which was found in a Portuguese sample (Rosario et al., 2015). Additionally, these studies suggest that the CAS is consistent with the CHC theory of intelligence, and therefore with general intelligence (Keith et al., 2001; Kranzler & Keith, 1999). Canivez (2011) also found that the global factor (g-factor) accounted for a large part of the common variance in the CAS. However, compared to the WISC-IV and other well-established test batteries, the CAS exhibited a greater amount of variance at the subtest level, supporting a four-factor model for the PASS theory. Canivez (2011) recommended future analyses to increase the understanding of the interdependence of PASS factors and enhance the interpretive framework. Considering this criticism, Naglieri and Das (1995) argued against the proposed revision of the PASS model (merging Planning and Attention factors) to a (PA)SS model, as this disregards the support for the original model found in neuropsychological and cognitive psychology that Planning and Attention are distinct, separate factors. 
Based on Luria’s (Luria, 1970) theory of the working brain, the original PASS model emphasizes the distinct constructs of Planning and Attention factors, as they differ in both anatomy and cognitive function (Das, 2018; McCrea, 2009). Additionally, Planning and Attention can be better understood within the framework of executive functions (Best et al., 2011; Korzeniowski et al., 2021), as they are intertwined with various cognitive processes, including problem-solving and executing complex tasks. From a developmental perspective, the differentiation and maturation of executive functions separate basal executive functions, such as focusing and selection (attention), from more complex executive functions, including development, execution, and monitoring of problem-solving (Planning) (Best et al., 2011). Best et al. (2011) showed moderate to large correlations between executive function and academic achievement. Differences in the strength of the correlations between different types of executive functions and academic achievement were also found. Furthermore, several studies have demonstrated the validity of Planning processes in explaining mathematics achievement (Kroesbergen et al., 2004; Partanen et al., 2020).

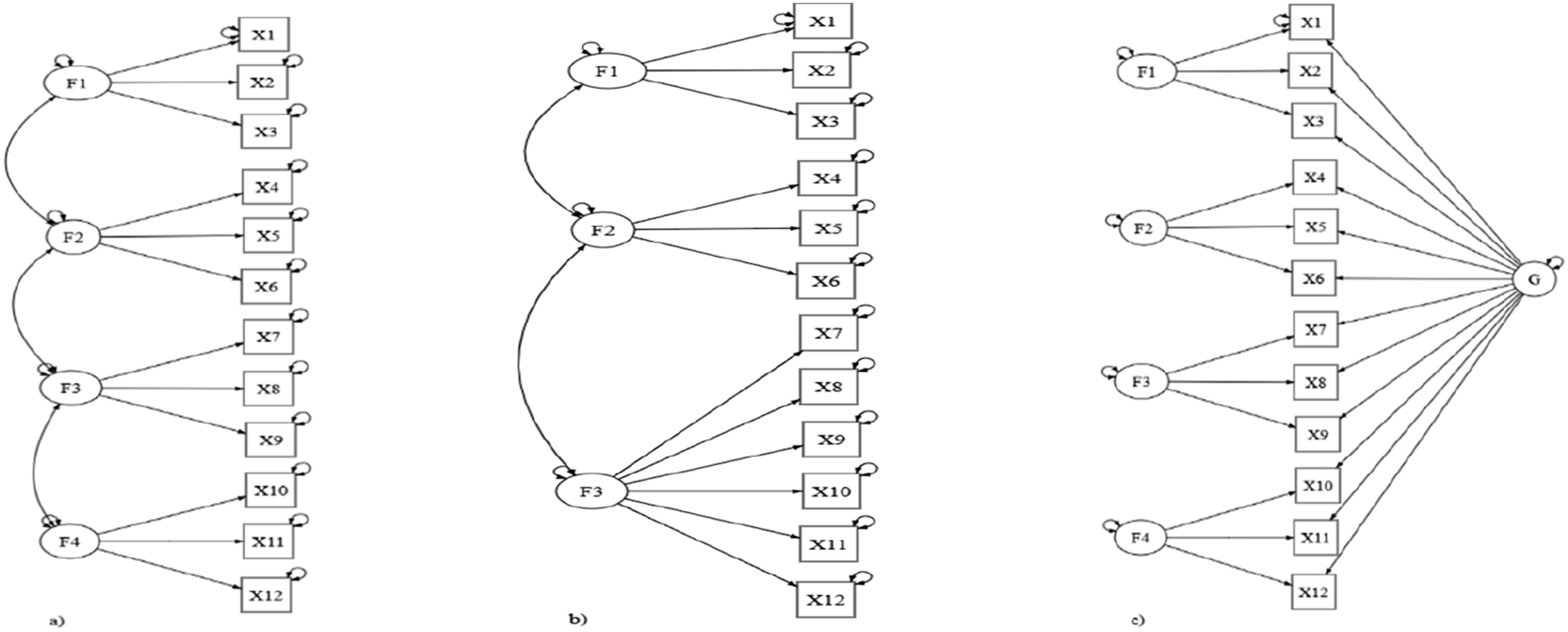

In this study, confirmatory factor analysis (CFA) was employed to examine the factor structure of the CAS-2 Swedish and Norwegian samples by testing three different models: a bifactor model; a three-factor model ((PA)SS), which merges Planning and Attention into one factor while keeping Simultaneous and Successive factors separate; and the original four correlated first-order factor model proposed by Das et al. (1994), which includes Planning, Attention, Simultaneous, and Successive processes. Comparing these three different models, we aimed to establish whether the Swedish and Norwegian versions of the CAS-2 exhibit the same four-factor structure as the original version or whether merging into a three-factor model is a better fit for this population. In addition, it was important to investigate whether an underlying general ability is present (the bifactor model). Previous research on the CAS has examined multiple structural models of intelligence, including three-factor, four-factor, and bifactor solutions. The four-factor correlated model is most consistent with the theoretical framework of PASS, whereas three-factor models have been considered because of the high correlation between Planning and Attention. At the same time, it is important to evaluate models that include a general factor, such as higher-order or bifactor models, as these allow researchers to assess how much variance in CAS scores is attributable to broad cognitive ability versus specific processes. Examining such models provides a critical benchmark for understanding the interplay between general and domain-specific influences and informs the broader debate on whether intelligence is best conceptualized as a single general factor or as multiple interdependent processes (Papadopoulos et al., 2025).

The outcome of this study will contribute to understanding how to interpret the results from the CAS-2 and apply them to recommendations related to learning disabilities in the Scandinavian population.

Method

Participants

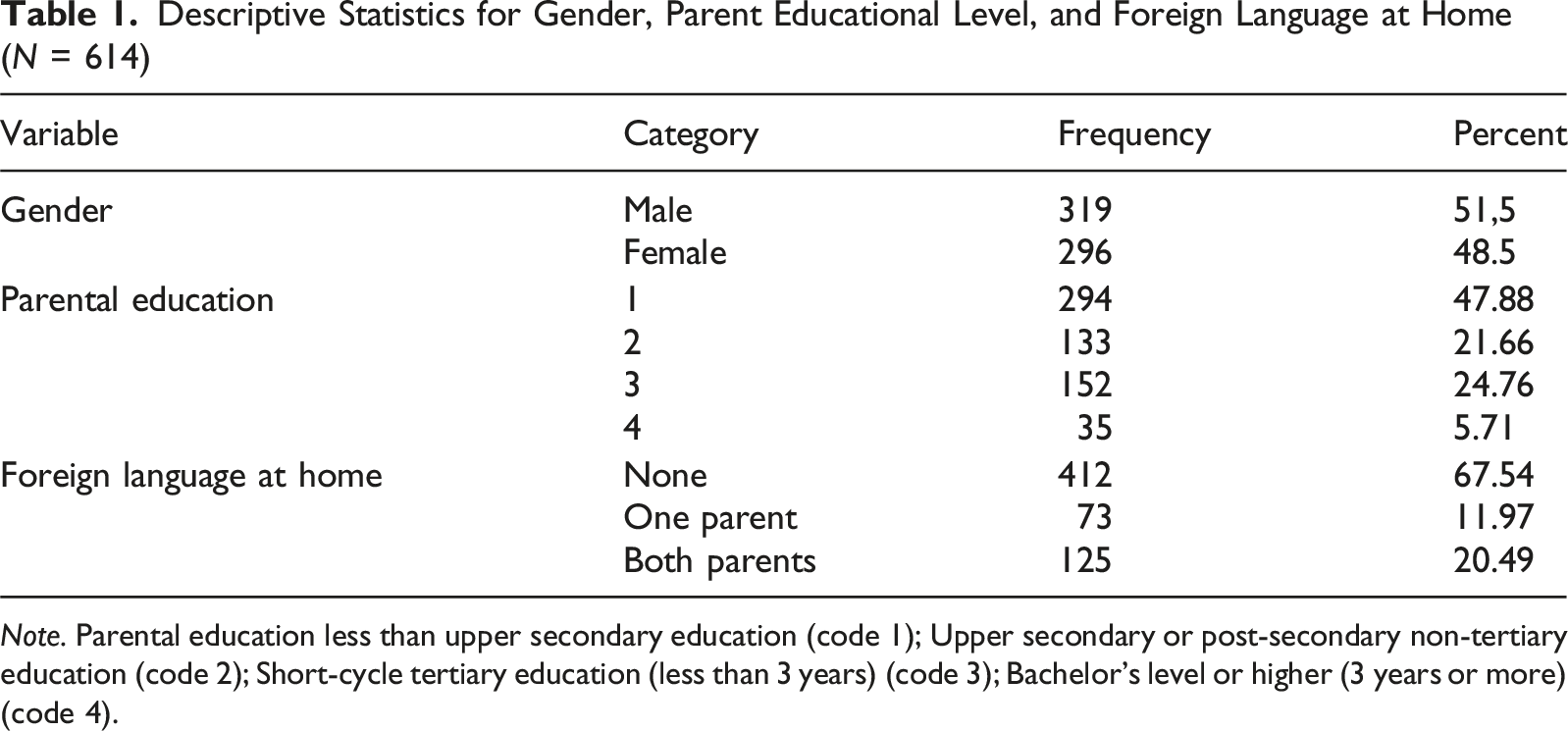

Descriptive Statistics for Gender, Parent Educational Level, and Foreign Language at Home (N = 614)

Note. Parental education less than upper secondary education (code 1); Upper secondary or post-secondary non-tertiary education (code 2); Short-cycle tertiary education (less than 3 years) (code 3); Bachelor’s level or higher (3 years or more) (code 4).

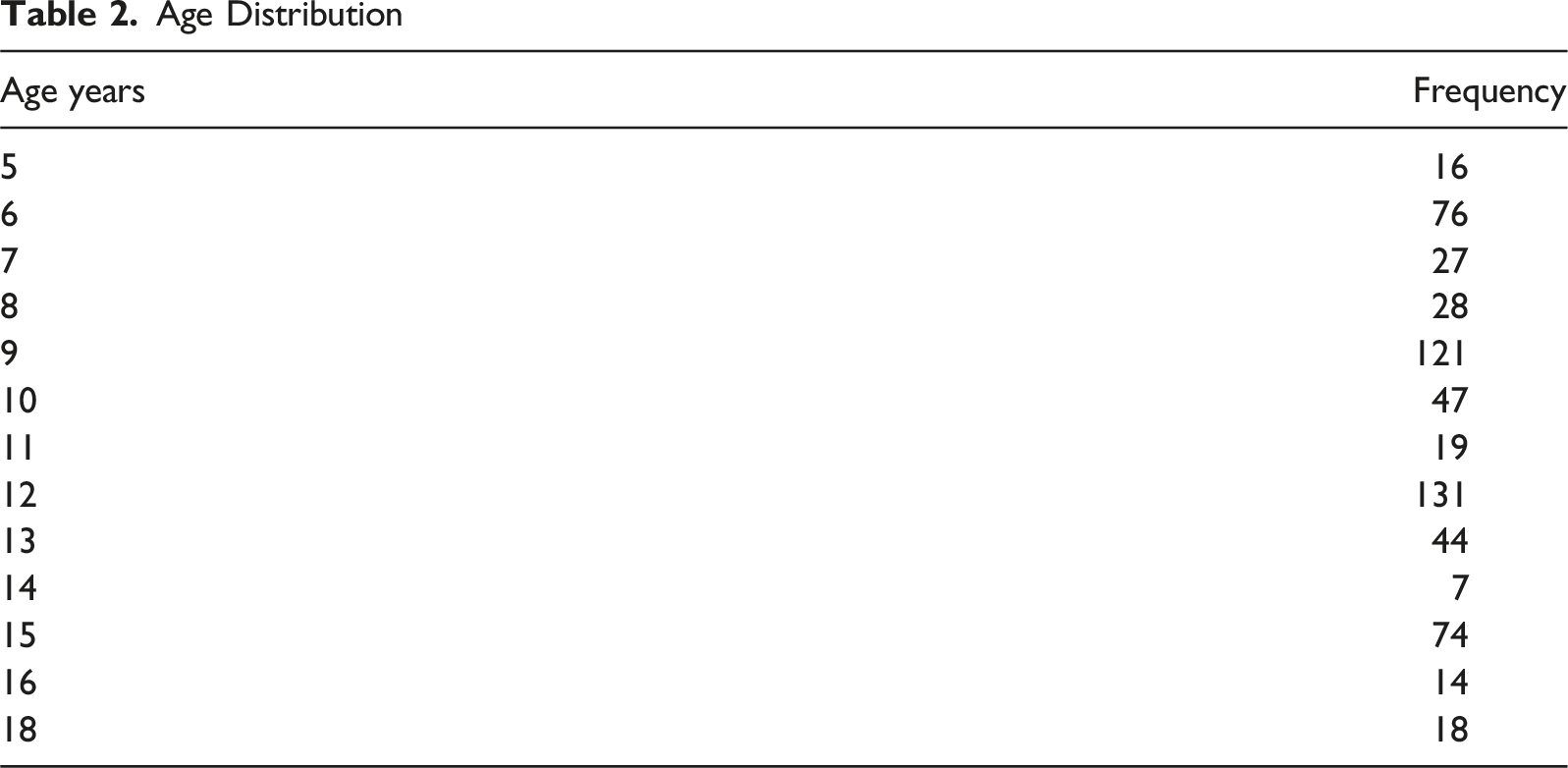

Age Distribution

Nine protocols were removed due to skewed numbers of parents with higher education compared to the national mean based on Swedish and Norwegian census data. Our sample size (N = 614) and model degrees of freedom (df = 48) provided substantial statistical power to detect even modest degrees of misfit. Power analyses indicated that, under these conditions, the study was highly sensitive to even small deviations from the specified model, particularly for root mean square error of approximation (RMSEA) values exceeding common thresholds, such as 0.05 or 0.08, depending on the context and model type. Specifically, when considering a null RMSEA closer to 0.00 (indicating perfect fit) and an alternative hypothesis suggesting values around 0.05, the model would be highly sensitive to even slight misfits (approximately 100% power). Alternatively, when the null RMSEA is closer to 0.05 (a more typical cut-off for good fit) and the alternative hypothesis suggests values closer to 0.08, the power remains very high for detecting misfit at these thresholds (approximately 100% power).

Measures

CAS-2 comprises 12 subtests, with 3 subtests producing an index for each PASS factor. These cognitive processes are critical for solving problems and activities. Planning enables cognitive control, knowledge use, intentionality, and self-regulation. It involves self-monitoring, impulse control, and generating, evaluating, and executing problem-solving strategies. Attention provides focused, selective cognitive activity over time and resistance to distraction. It allows selective focus on a stimulus while inhibiting responses to competing stimuli. Focused attention involves concentration toward a specific activity, and selective attention inhibits responses to distracting stimuli. Simultaneous processing integrates stimuli into groups. This process involves conceptualizing interrelated elements into a whole, often evaluated using visual-spatial tasks. Successive processing is used when stimuli are arranged in a specific serial order to form a chain-like progression. This process is required when information must follow a defined order, where each element relates only to those preceding it. The subtest items and scores are described elsewhere (Naglieri et al., 2014). The indices (the four PASS factors) consist of three subtests each and produce a scaled score at the subtest level (M = 10, SD = 3) and standard scores at the index and full-scale level (M = 100, SD = 15). The indices and subtests are presented below.

Planning Subtests

(1) Planned codes. A set of codes is arranged in rows and columns, and the children are asked to use their strategy to fill in the empty boxes with an appointed set of codes. (2) Planned connections. The children are required to connect a series of numbers in numerical sequence or to connect numbers and letters in either numerical or alphabetical sequence. (3) Planned number matching. The children are required to underline two identical numbers in each row as quickly as possible.

Simultaneous Subtests

(4) Nonverbal matrices. The children are required to decode a spatial or logical relation from all the given figures on pages displaying different shapes and geometric designs and then select the best answer from six alternatives. (5) Verbal-spatial relations. The children are required to choose one picture among six pictures that demonstrate the spatial relationship raised by a verbal question. (6) Figure memory. The children are exposed to a two- or three-dimensional geometric figure for 5 seconds and then asked to draw the former figure on a template page presenting a more complex geometric design containing the former figure nested.

Attention Subtests

(7) Expressive attention. The children are required to read the color words on the first page, name the colors on the second page, and read the color words written in different colors on the third page. (8) Number detection. The children are instructed to find and underline target numbers among distracters. (9) Receptive attention. The children are asked to find and underline the target letters. The targets are physically identical on the first page but share only the same letter name on the second page.

Successive Subtests

(10) Word series. The children are asked to listen carefully to a series of words and then repeat them in the same order, with an increasing number of words. (11) Sentence questions (8–17 version). Well-structured, nonsensical sentences of increasing length containing color words are read aloud one by one. The children are required to answer a question by repeating the successive order of the color words correctly in their answers. (12) Sentence repetition (5–7 version). The sentences are the same as in the Sentence questions, but instead of answering the question, the children are asked to repeat the colors exactly as they are presented. The number of accurately repeated sentences is recorded. (13) Visual digit span. The children are shown signs with numbers of increasing lengths between trials for 5 seconds. After each item, they are asked to repeat the numbers in the correct successive order orally.

CAS subtest scores are scaled scores with a mean score of 10 and a standard deviation of 3. The PASS index scores and full-scale scores are presented as index scores (mean 100 and standard deviation 15).

Procedure

School psychologists collected the CAS-2 standardization data with permission from principals and municipal student support center managers. All participating test leaders were licensed psychologists with two full days of administrative training in the CAS-2. The procedure took approximately 90 minutes, and the psychologist administered and scored the data following the standard procedure from the CAS-2 manual. Two independent experts checked all protocols, and they were excluded if the procedures were not followed.

Written parental consent was obtained prior to testing. School psychologists were instructed to follow the national professional ethical procedures during testing. Children and parents were informed that testing was voluntary and that they had the right to stop participating at any point. All data was anonymized so that the standardization sample contained no sensitive information, making identification or backward tracing of individuals, schools, or geographic areas impossible. No sensitive data on medical diagnoses or health status was gathered. The regional ethical committee found that the study did not need ethical approval, as the data did not include any sensitive information and could not be used to identify a specific individual.

Data Analytic Procedures

Confirmatory factor analyses were used to examine the factor structure of the CAS-2 in three different models (see Figure 1). The first was the four correlated first-order factor model initially proposed by Das et al. (1994), including Planning, Attention, Simultaneous, and Successive processes. The second was the correlated first-order factor model (PA)SS, which merges the indicators of Planning and Attention into one factor but keeps the Simultaneous and Successive factors separate. The third was a bifactor model with a general factor that loaded directly onto all indicators in the model while retaining the loadings on the specific factors (which are uncorrelated). The maximum likelihood estimation method was used because the data was normally distributed and continuous.

Illustration of Three Alternative Factor Structure Models for the CAS-2 (From Left to Right): (a) Four-Factor (PASS) Model, (b) Three-Factor ((PA)SS) Model, (c) Bifactor (Unidimensional) Model

Apart from reporting chi-square, model fit was evaluated using the RMSEA, standardized root mean square residual (SRMR), comparative fit index (CFI), and relative chi-square statistics. The cut-offs recommended by Schermelleh-Engel et al. (2003) for assessing model fit were applied. RMSEA values equal to or below .05 were considered a good fit, and values between .05 and .08 an acceptable fit. SRMR values equal to or below .05 were considered a good fit, and values equal to or below .08 an acceptable fit. For CFI, values equal to or greater than .97 were considered a good fit, and values equal to or greater than .95 an acceptable fit (Schermelleh-Engel et al., 2003). Akaike’s Information Criterion (AIC) and the Bayesian Information Criterion (BIC) were used to identify the best-fitting model, with the lowest value indicating the best-fitting model (Schermelleh-Engel et al., 2003).
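The cut-offs quoted above can be expressed as a small classification rule. The following is a minimal sketch rather than the procedure used in JASP; the function name `classify_fit` and the example values are hypothetical, and the SRMR bound for acceptable fit is read as .08.

```python
def classify_fit(rmsea: float, srmr: float, cfi: float) -> dict:
    """Label each index as 'good', 'acceptable', or 'poor' using the quoted cut-offs."""

    def lower_is_better(value: float, good: float, acceptable: float) -> str:
        if value <= good:
            return "good"
        if value <= acceptable:
            return "acceptable"
        return "poor"

    def higher_is_better(value: float, good: float, acceptable: float) -> str:
        if value >= good:
            return "good"
        if value >= acceptable:
            return "acceptable"
        return "poor"

    return {
        "RMSEA": lower_is_better(rmsea, 0.05, 0.08),
        "SRMR": lower_is_better(srmr, 0.05, 0.08),
        "CFI": higher_is_better(cfi, 0.97, 0.95),
    }


# Hypothetical fit values, for illustration only (not results from this study).
print(classify_fit(rmsea=0.06, srmr=0.04, cfi=0.96))
```

Classifying each index separately keeps disagreements between indices visible instead of collapsing them into a single verdict.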

The factor structure was also tested for measurement invariance using multi-group models to compare invariance for gender, age, and country (Sweden vs. Norway). Invariance was assessed in three sequential steps: configural, metric, and scalar (Putnick & Bornstein, 2016). First, a configural (baseline) model was fitted in which all parameters were freely estimated across groups to establish equivalence in factor structure; this model was evaluated based on CFI, RMSEA, and SRMR cut-off values. Second, a metric model was fitted in which the factor loadings were constrained to be equal, and the fit of this model was compared to that of the configural model. Third, a scalar model was fitted in which factor loadings and item intercepts were constrained to be equal, and this model was compared with the metric model. We report the chi-square test statistics comparing competing nested models. Although a scaled chi-square difference test for nested models can be used to index invariance between models, it suffers from the same dependency on sample size as the minimum fit function statistic; thus, changes in model fit according to CFI, RMSEA, and SRMR were used. According to the criteria suggested by Chen et al. (2008), for sample sizes larger than 300, a decrease in CFI of more than .015, in addition to an increase in RMSEA of more than .015 and in SRMR of more than .030, indicates a decrement in fit between models. Measurement invariance would imply equivalence in factor structure, equal contribution from items to latent factors, equally captured shared variance of items by the latent factors, and comparable item and error variance (Putnick & Bornstein, 2016) across groups.
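The change-in-fit criteria described above can be sketched as a comparison between two nested models. This is an illustrative sketch only: the `Fit` container, the function name, and all fit values are hypothetical, and each index is flagged separately because how the three flags are combined into a final decision is left to the analyst.

```python
from dataclasses import dataclass


@dataclass
class Fit:
    cfi: float
    rmsea: float
    srmr: float


def fit_change_flags(less_constrained: Fit, more_constrained: Fit,
                     cfi_drop: float = 0.015,
                     rmsea_rise: float = 0.015,
                     srmr_rise: float = 0.030) -> dict:
    """Flag each index whose change exceeds the threshold quoted in the text."""
    return {
        "CFI": (less_constrained.cfi - more_constrained.cfi) > cfi_drop,
        "RMSEA": (more_constrained.rmsea - less_constrained.rmsea) > rmsea_rise,
        "SRMR": (more_constrained.srmr - less_constrained.srmr) > srmr_rise,
    }


# Hypothetical fit values for the three invariance steps (not study results).
configural = Fit(cfi=0.960, rmsea=0.050, srmr=0.040)
metric = Fit(cfi=0.955, rmsea=0.052, srmr=0.045)
scalar = Fit(cfi=0.930, rmsea=0.070, srmr=0.050)

print("metric vs configural:", fit_change_flags(configural, metric))
print("scalar vs metric:", fit_change_flags(metric, scalar))
```

In this made-up example the metric step raises no flags, whereas the scalar step exceeds the CFI and RMSEA thresholds, which is the kind of pattern that would prompt testing partial scalar models.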

Finally, composite reliability (CR) was calculated as a measure of the internal consistency of the factors, with values greater than .70 indicating good reliability. CR was computed from the squared sum of the factor loadings and the sum of the error variance terms for the latent variable. Two measures of validity were calculated: (1) discriminant validity was achieved when the average variance extracted (AVE) was greater than the maximum shared squared variance (MSV) and (2) convergent validity was achieved when AVE was equal to or greater than .50 and lower than CR. AVE is calculated as the mean percentage of variation explained among the items of a construct (Hair et al., 2014). All statistical analyses were performed using JASP version 0.19.3 (JASP team, 2023).
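As an illustration of these definitions, the sketch below computes CR, AVE, and MSV for a single factor from standardized loadings, assuming error variances of 1 − λ². The loadings and factor correlations are hypothetical values chosen for illustration, not estimates from this study.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)  # standardized solution assumed
    return squared_sum / (squared_sum + error_variance)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def max_shared_variance(correlations_with_other_factors):
    """MSV = largest squared correlation between this factor and any other factor."""
    return max(r ** 2 for r in correlations_with_other_factors)


# Hypothetical three-indicator factor and its correlations with two other factors.
loadings = [0.6, 0.7, 0.8]
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)
msv = max_shared_variance([0.5, 0.6])

print(f"CR = {cr:.3f}, AVE = {ave:.3f}, MSV = {msv:.3f}")
print("discriminant validity:", ave > msv)
print("convergent validity:", ave >= 0.50 and ave < cr)
```

With these made-up loadings, CR exceeds .70 while AVE falls just under .50, so a factor can be reliable by the CR criterion and still miss the convergent validity criterion.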

Results

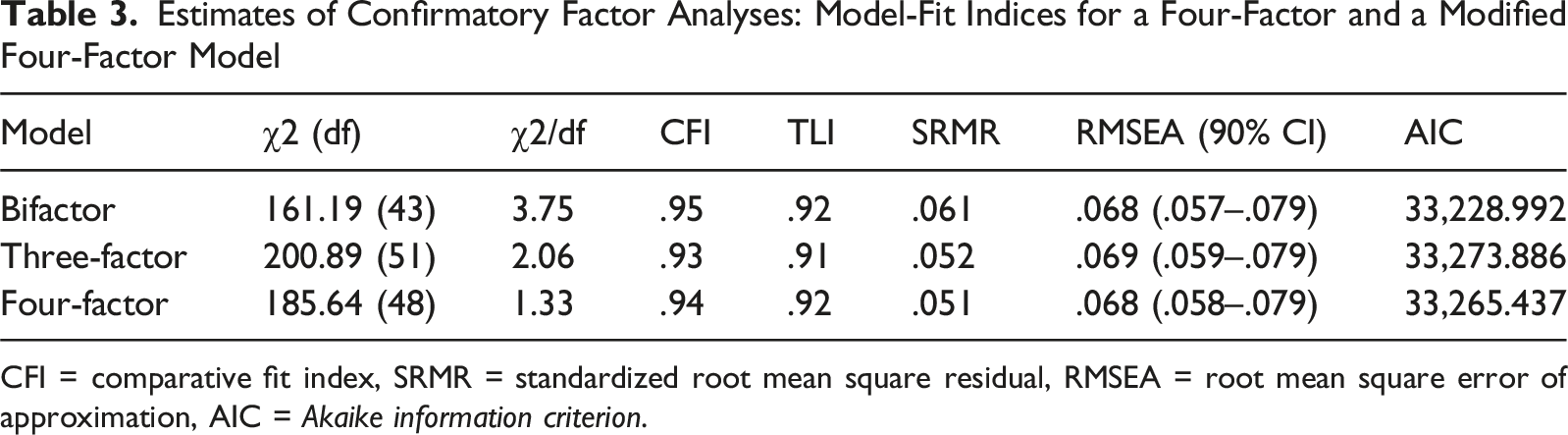

Estimates of Confirmatory Factor Analyses: Model-Fit Indices for a Four-Factor and a Modified Four-Factor Model

CFI = comparative fit index, SRMR = standardized root mean square residual, RMSEA = root mean square error of approximation, AIC = Akaike information criterion.

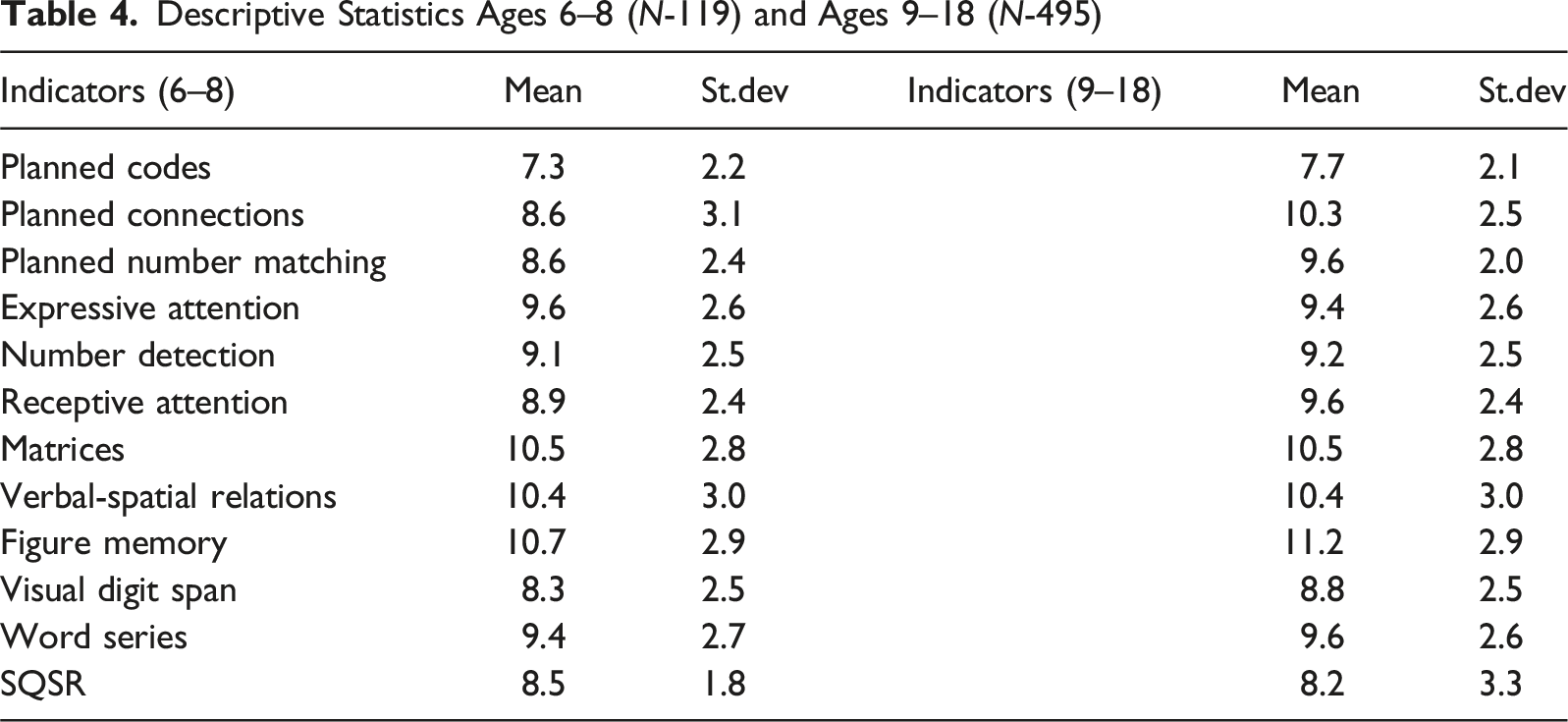

Descriptive Statistics Ages 6–8 (N = 119) and Ages 9–18 (N = 495)

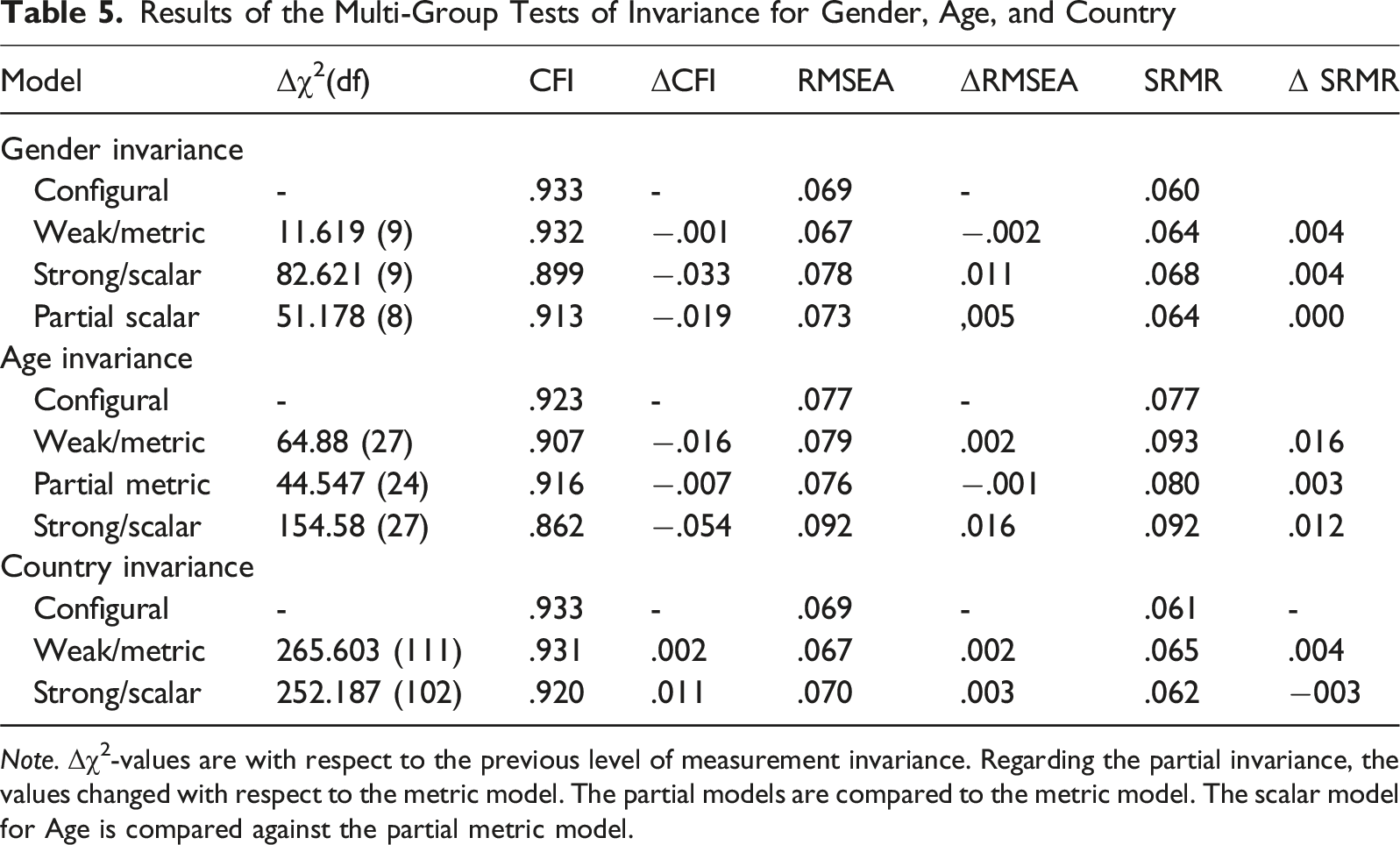

Results of the Multi-Group Tests of Invariance for Gender, Age, and Country

Note. Δχ² values are with respect to the previous level of measurement invariance. The partial models are compared to the metric model. The scalar model for Age is compared against the partial metric model.

When comparing model fit using information criteria, the AIC slightly favored the four-factor PASS model, whereas the BIC favored the more parsimonious three-factor (PA)SS model over the four-factor model. AIC and BIC apply different penalties for model complexity: AIC tends to be more forgiving of additional parameters, focusing on predictive accuracy, whereas BIC imposes a stronger penalty for complexity, prioritizing model parsimony and generalizability to new samples (Burnham & Anderson, 2004).
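The different penalties can be made concrete with a short calculation. The sketch below uses the standard definitions AIC = −2 ln L + 2k and BIC = −2 ln L + k ln N. The log-likelihoods are hypothetical, and the parameter counts (30 and 27) are an assumption based on 12 loadings, 12 residual variances, and six versus three factor correlations.

```python
import math


def aic(log_likelihood: float, n_params: int) -> float:
    return -2 * log_likelihood + 2 * n_params


def bic(log_likelihood: float, n_params: int, n_obs: int) -> float:
    return -2 * log_likelihood + n_params * math.log(n_obs)


N = 614  # sample size reported in the study

# Hypothetical log-likelihoods; the four-factor model fits slightly better.
ll_four, k_four = -1000.0, 30    # assumed parameter count for the PASS model
ll_three, k_three = -1005.0, 27  # assumed parameter count for the (PA)SS model

print("penalty per parameter: AIC = 2, BIC =", round(math.log(N), 2))
print("AIC four vs three:", aic(ll_four, k_four), aic(ll_three, k_three))
print("BIC four vs three:", round(bic(ll_four, k_four, N), 1),
      round(bic(ll_three, k_three, N), 1))
```

Under these assumptions, each extra parameter costs 2 points in AIC but about 6.4 points in BIC, so a modest improvement in likelihood can make AIC prefer the four-factor model while BIC prefers the three-factor model.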

The BifactorIndicesCalculator (Dueber, 2017) was used to evaluate the bifactor model. Omega hierarchical (ωH) estimates the proportion of reliable systematic variance attributable to the general factor, and omega hierarchical subscale estimates this proportion for the specific factors (after partialling out variability stemming from the general factor). A value of ωH > .80 for the general factor is indicative of unidimensionality. In this model, ωH was .76 for the general factor, and some notable variance was attributed to two specific factors (Simultaneous ω = .49 and Successive ω = .45), but not to the other two (ω < .12).
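To show how these indices are built, the sketch below computes omega hierarchical and omega hierarchical subscale from standardized bifactor loadings, assuming uncorrelated factors and residual variances of 1 − λ_g² − λ_s². It is not the BifactorIndicesCalculator implementation, and the loadings (two specific factors, A and B, with three indicators each) are hypothetical.

```python
def omega_hierarchical(general, specific_by_factor):
    """general: {item: loading}; specific_by_factor: {factor: {item: loading}}."""
    specific_of = {item: l for items in specific_by_factor.values()
                   for item, l in items.items()}
    general_part = sum(general.values()) ** 2
    specific_part = sum(sum(items.values()) ** 2
                        for items in specific_by_factor.values())
    residual = sum(1 - g ** 2 - specific_of.get(item, 0.0) ** 2
                   for item, g in general.items())
    return general_part / (general_part + specific_part + residual)


def omega_hierarchical_subscale(general, specific_items):
    """Same ratio restricted to one subscale, with the specific factor in the numerator."""
    general_part = sum(general[item] for item in specific_items) ** 2
    specific_part = sum(specific_items.values()) ** 2
    residual = sum(1 - general[item] ** 2 - l ** 2
                   for item, l in specific_items.items())
    return specific_part / (general_part + specific_part + residual)


# Hypothetical standardized loadings, for illustration only.
general = {f"x{i}": 0.6 for i in range(1, 7)}
specific = {
    "A": {"x1": 0.4, "x2": 0.4, "x3": 0.4},
    "B": {"x4": 0.3, "x5": 0.3, "x6": 0.3},
}

omega_h = omega_hierarchical(general, specific)
omega_hs_a = omega_hierarchical_subscale(general, specific["A"])
print(f"omega_H = {omega_h:.3f}, omega_HS(A) = {omega_hs_a:.3f}")
```

A low subscale value, as for the hypothetical factor A here, means that most of the reliable variance in that subscale's composite score is carried by the general factor rather than by the specific factor.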

The percentage of uncontaminated correlations (PUC) corresponds to the percentage of covariance terms that only reflect variance from the general dimension, or put differently, measurement that is “uncontaminated” by the multidimensionality introduced by the subscales. Along with explained common variance (ECV), the PUC influences the parameter bias of the unidimensional solution. As a guideline, “when ECV is >.70 and PUC >.70, relative bias will be slight, and the common variance can be regarded as essentially unidimensional.” In this model, the ECV indicated that 60.5% of the common variance was attributed to the general factor, and the residual 39.5% was attributed to domain-specific factors. The PUC value for the scale was .82, indicating that a large proportion of the correlations in the scale were attributed to the general factor. Altogether, as PUC was the only one of these psychometric indices that supported unidimensionality, and there was only weak evidence for two of the specific factors, we suggest that a multidimensional factor model is more suitable than a bifactor model for the CAS-2.
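The PUC depends only on how the indicators are grouped, so it can be checked by hand. The sketch below assumes four specific factors with three subtests each, as in the CAS-2 structure described in the Measures section. The ECV function uses the usual ratio of squared general loadings to all squared loadings, and the loadings passed to it are hypothetical.

```python
def puc(group_sizes):
    """Share of indicator pairs that do not belong to the same specific factor."""
    n_items = sum(group_sizes)
    total_pairs = n_items * (n_items - 1) // 2
    within_pairs = sum(k * (k - 1) // 2 for k in group_sizes)
    return (total_pairs - within_pairs) / total_pairs


def ecv(general_loadings, specific_loadings):
    """Explained common variance attributable to the general factor."""
    general = sum(l ** 2 for l in general_loadings)
    specific = sum(l ** 2 for l in specific_loadings)
    return general / (general + specific)


# 12 subtests in four groups of three: 66 pairs in total, 12 within groups.
print("PUC =", round(puc([3, 3, 3, 3]), 2))

# Hypothetical loadings, for illustration only (not the study's estimates).
print("ECV =", round(ecv([0.6] * 12, [0.4] * 12), 3))
```

With 54 of the 66 pairs falling between different specific factors, the PUC is 54/66, or about .82, which agrees with the value reported above.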

The measurement invariance tests for the three-factor model are presented in Table 2. The results showed support for configural invariance regarding CFI, SRMR, and RMSEA for all group variables (suggesting a similar factor structure across age groups and gender). For gender, there was no substantial decrease in model fit in the metric model (indicating equivalent factor loadings across gender), but there was some tendency for non-invariance in the scalar model. When partial scalar models were tested by freeing each intercept individually, PCd tended to be the major source of non-invariance, and once this intercept was freed, the remaining item intercepts were equivalent across gender. For age, there was some decrease in model fit in the metric model due to different loadings for PNM, and freeing this loading resulted in no substantial decrease in model fit. However, at the scalar level, there was some tendency for non-invariance. Testing each intercept individually did not identify any single non-invariant intercept (likely because small deviations in item intercepts across many indicators accumulate, causing some non-invariance), and partial scalar invariance was not fully supported. With respect to Country, invariance was achieved across all levels.

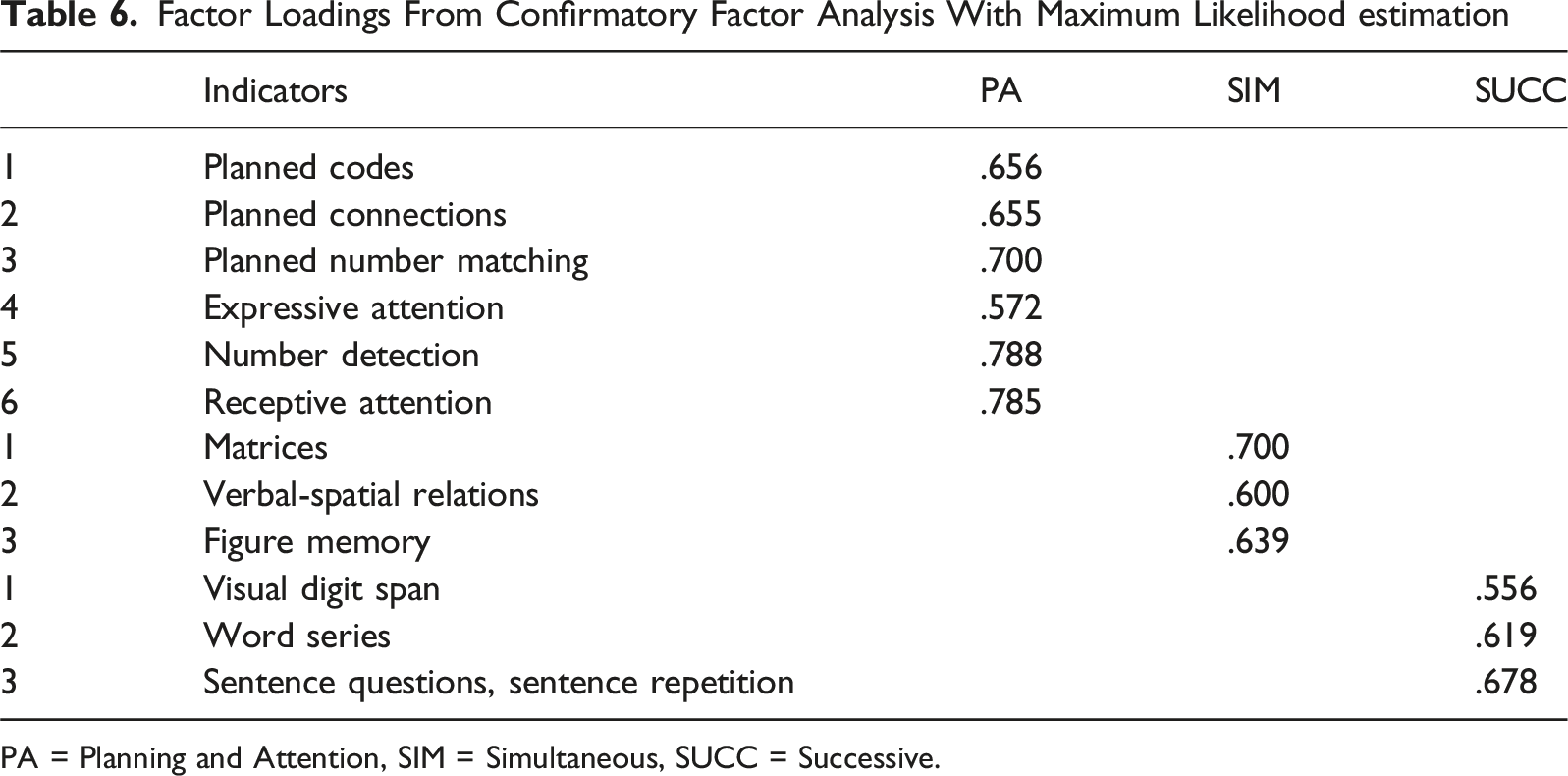

Factor Loadings From Confirmatory Factor Analysis With Maximum Likelihood Estimation

PA = Planning and Attention, SIM = Simultaneous, SUCC = Successive.

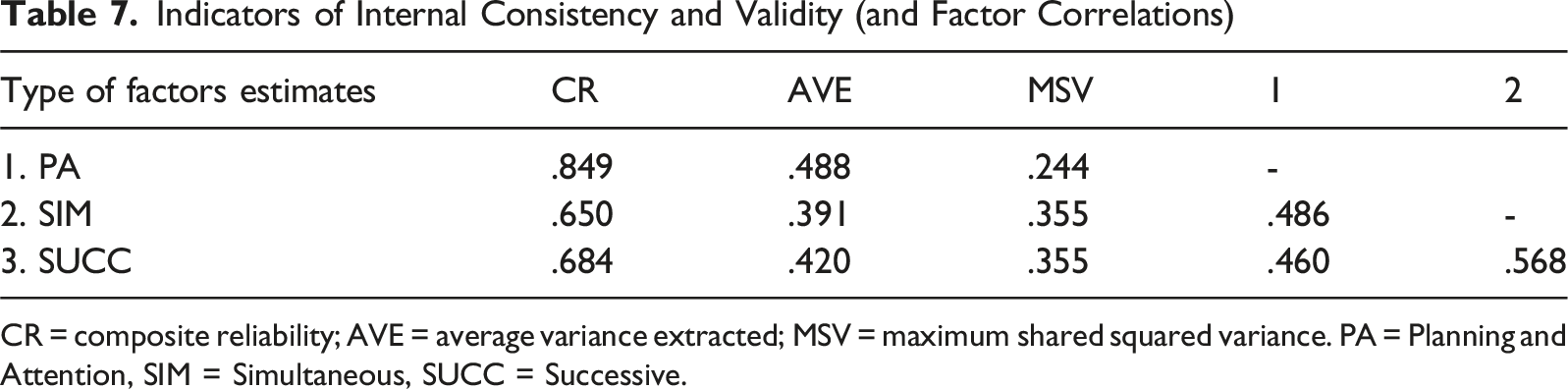

Indicators of Internal Consistency and Validity (and Factor Correlations)

CR = composite reliability; AVE = average variance extracted; MSV = maximum shared squared variance. PA = Planning and Attention, SIM = Simultaneous, SUCC = Successive.

Discussion

This study provides further insight into the psychometric properties of the Scandinavian version of the Cognitive Assessment System version 2 (CAS-2; Naglieri & Das, 1995). All three models tested (the PASS model, the (PA)SS model, and the bifactor model) showed acceptable fit indices, each with unique challenges, and the differences in fit indices between models were too small to be considered meaningful. The CFA results are, to some extent, consistent with the theoretical framework of PASS theory, reinforcing the foundational principles that guided the original development of the CAS (Das et al., 1994). This study provides preliminary support for the construct validity of the CAS-2 and PASS theory, corroborating findings from diverse cultures and linguistic versions (Papadopoulos et al., 2008; Van Luit et al., 2005), although improvements are needed to fully support the four-factor model, as the CFA showed a very strong correlation (.92) between the Planning and Attention factors. This correlation was expected, as previous research identified the same pattern (Keith et al., 2001; Kranzler & Keith, 1999), but the strong correlation in the Scandinavian version needs to be explored further, as it could be due to inherent variations within the population stemming from cultural or educational differences, or to greater item similarity within the Planning and Attention factors due to language or translation issues. Differences in the stability of the factor structure across versions and test contexts are of particular interest, as one of the CAS’s proposed strengths is its ability to minimize the impact of culture and prior knowledge while accurately assessing neurocognitive processes (Das et al., 1994). While invariance was achieved for Gender, there was some tendency for scalar non-invariance with respect to Age.
As no single freed intercept led to an improvement in model fit, the cumulative effect of minor non-invariance in several indicators’ intercepts appears to be the source of model misfit, and no invariant scalar model was found across age groups. This pattern suggests that misfit was not dependent on one particular indicator. Therefore, mean-level comparisons of the latent constructs across age groups should be interpreted with caution, as differences may partly reflect age-related responses rather than true differences in underlying cognitive processes. The invariance analysis between the Norwegian and Swedish samples indicated invariance at all levels tested, supporting meaningful latent mean comparisons between countries while recognizing potential minor differences in the item intercepts. From a theoretical standpoint, this supports the cross-cultural applicability of the underlying cognitive constructs, suggesting that the Scandinavian version of CAS-2 captures comparable dimensions of cognitive processing across the two national contexts.

The competing three-factor model, which merges Planning and Attention into a single factor (the (PA)SS model), also demonstrated an acceptable fit, comparable to the four-factor model. The marginal differences between the AIC and BIC values suggest that both models provide comparable explanatory adequacy and that the preference indicated by either criterion is weak rather than decisive. Consequently, we interpret these results as supporting the coexistence of multiple plausible structural representations of CAS-2 in the Scandinavian context rather than providing definitive evidence for one model over another. This interpretation aligns with our overall neutral analytical approach and reinforces the need to consider theoretical coherence and clinical interpretability, alongside statistical indices, when selecting a preferred model.

As previous research has criticized the original CAS, suggesting that the Planning and Attention scales reflect a unified function (Kranzler & Keith, 1999), one could argue that a simpler model is preferred. A more parsimonious model that accurately captures the underlying structure with fewer factors is generally preferred because it aligns with the principles of simplicity and theoretical clarity. On the other hand, a model should not be overly simplified but should also value theoretical and practical perspectives. The authors of the original CAS (Naglieri & Das, 1995) emphasized the necessity of aligning the PASS model with Luria’s theoretical framework, which has been supported by multiple studies (McCrea, 2009; Okuhata et al., 2007). Planning is anatomically associated with frontal lobe activity, while attention is linked to basal neural structures. These processes are highly interconnected within frontostriatal and frontolimbic circuits (McCrea, 2009). It is worth noting that the CAS was developed to support clinical and educational assessments for various subgroups of students with special educational needs and disabilities. The validity of the CAS and the original PASS model, which includes the distinction between Planning and Attention, has been confirmed in several studies demonstrating that the cognitive profiles of students with ADHD and ASD vary in their Planning and Attention processes (Canivez & Gaboury, 2016; Van Luit et al., 2005). Furthermore, several studies have demonstrated the validity of planning processes in explaining mathematics achievement (Partanen et al., 2020). Further support for separating Planning and Attention factors comes from studies of executive functions, particularly from a developmental perspective (Best et al., 2011). 
The original PASS model clarifies conceptual distinctions between neurocognitive functions, and interpreting CAS results with the original four-factor model, based on Luria’s theory of the working brain, enhances their pragmatic validity (Das, 2018). However, the strong correlation between the Planning and Attention factors in the Scandinavian version did not support the separation of the factors suggested in the original theory. As mentioned above, improving future versions of the test is one way forward (Keith et al., 2001). In addition, results on clinical and diagnostic group differences could help support either a three- or four-factor model.

In evaluating the bifactor model, our analyses provided only partial support for a general factor underlying the CAS-2. Although the model demonstrated acceptable global fit indices, closer inspection of the bifactor indices indicates important limitations, with the combined pattern of indices failing to reach the criteria generally recommended for supporting a primarily unidimensional interpretation. Taken together, the results suggest that the bifactor model, although informative for partitioning variance, does not provide strong psychometric evidence for collapsing the CAS-2 into a single general factor. In particular, the Planning and Attention subtests showed weak residual loadings after accounting for the general factor (ω < .12), indicating limited incremental contribution of these specific domains within a bifactor structure. By contrast, Simultaneous and Successive processes retained more substantial specific variance (ω = .49 and .45, respectively). This pattern is consistent with prior findings (e.g., Canivez, 2011) that the CAS captures both a meaningful general dimension and distinct cognitive processes at the subtest level.

Therefore, the bifactor model was not favored in this sample. The AVE values for Simultaneous (.39) and Successive (.42) fell below the recommended .50 threshold, suggesting that, on average, these factors explained less than half of the variance in their indicators. Although these values are relatively close to the criterion and consistent with findings in some previous CAS studies, they point to a potential limitation in convergent validity. Interpretations based on these factors should therefore be made with caution. Future research could explore whether these lower values reflect intrinsic properties of the constructs, translation or cultural adaptation effects, or item-specific issues, and consider modifications to strengthen the measurement of these domains. Canivez (2011) found that the global factor (g-factor) accounted for most of the common variance in the CAS. However, compared to the WISC-IV and other widely used test batteries, the CAS had a greater amount of variance at the subtest level, supporting a correlated first-order factor model.

Regarding invariance, the model was invariant across gender in factor structure, item contributions, variances, and error variances, confirming the invariance found in the original CAS-2. Across age groups, invariance was confirmed in factor structure and variance, but not in error variance, a result that differs somewhat from that of the original version of the CAS-2 (Naglieri et al., 2014).
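
As an illustration of the AVE criterion discussed above, the sketch below computes the average variance extracted as the mean of the squared standardized loadings of a factor's indicators and compares it with the .50 threshold. This is a minimal sketch, not the procedure used in this study; the function names and the example loadings are hypothetical.

```python
def average_variance_extracted(loadings):
    """AVE for one factor: the mean of the squared standardized loadings."""
    if not loadings:
        raise ValueError("At least one loading is required.")
    return sum(value ** 2 for value in loadings) / len(loadings)


def meets_ave_threshold(loadings, threshold=0.50):
    """True if the factor's AVE reaches the conventional .50 criterion."""
    return average_variance_extracted(loadings) >= threshold


# Hypothetical standardized loadings for three subtests (not from the study).
hypothetical_loadings = [0.60, 0.65, 0.62]
ave = average_variance_extracted(hypothetical_loadings)
print(round(ave, 2), meets_ave_threshold(hypothetical_loadings))
```

In this hypothetical case, loadings of roughly .60 to .65 give an AVE of about .39, which shows that a factor can have moderately strong loadings and still fall short of the .50 criterion.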

This study had some limitations. Test-retest and inter-rater reliability were not assessed due to the study design. While investigating construct and structural validity is critical, such analyses cannot fully address questions related to the reliability and utility of test scores (Canivez et al., 2009; Carroll, 1997). Additionally, merging the two CAS versions (5–7 and 8–17) for the Successive factor required the assumption that the subtests measure the same latent factor, an assumption supported by the original CAS-2 version (Naglieri et al., 2014).

Future studies should include test-retest and inter-rater reliability to further validate the Scandinavian CAS-2. Future revisions should examine items that are sensitive to translation issues. Additionally, including new items that capture the theoretical differences between Planning and Attention could guide improvements in future versions of the CAS-2. Future research should also include participants with learning disabilities and various diagnoses, such as ADHD and ASD, to deepen the understanding of the cognitive profiles in various diagnostic groups. The potential of CAS-2 results to inform interventions for learning disabilities, especially with regard to academic achievement, warrants further investigation. Our study found that all three models tended to be equivalent in fit, each with unique challenges that underscore the need to further explore linguistic and cultural differences. Future improvements in the test instrument and clinical studies can help determine whether there is support for the original four-factor model or whether the three-factor model is sufficient. As in most intelligence tests, a general factor is present, and future studies can show whether full-scale scores or scores based on the first-order factors are more informative in predicting academic achievement and suggesting interventions for learning difficulties. Our results show that the Scandinavian version of the CAS-2 can be used in Sweden and Norway, contributing to the view that intelligence is a multifaceted concept, a view that also aligns with neuropsychological theory and neuroanatomical data. However, other supporting data are recommended when interpreting differences between the Planning and Attention indexes.

Footnotes

Ethical Considerations

The test publisher gave the authors permission to use the data. The data collection was ethically approved by the Swedish Ethical Review Authority (Dnr 2025-01169-01), and no sensitive information was recorded.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.