Abstract

Introduction

The widespread adoption of conversational artificial intelligence (AI) represents a new frontier of psychosocial risk for mental illness, especially among adolescents and young adults. 1 While most users engage harmlessly, a clinically significant subset may develop high-risk, problematic human–AI relationships. The risks range from reinforcing insecurity, anxiety, and self-harm ideation to a phenomenon termed 'chatbot psychosis'. 2 Delusions of technology, in which technology is incorporated into delusional content, are well documented in psychotic disorders. 3 Unlike delusions that arise as a feature of psychotic disorders, 'chatbot psychosis' refers to the agentic influence of conversational AI on the development, revision, and/or maintenance of thought dysfunction. It represents a relational risk pattern rather than a distinct diagnostic entity.

In contrast to other forms of human–machine interaction, conversational AI systems are tuned to be sycophantic, i.e., highly agreeable and frictionless in their interactions. They are designed to appear human-like (anthropomorphic), yet they are constantly accessible, do not experience conversational fatigue, and lack the complexities and boundaries that characterise human–human interactions. Given the scarcity of evidence on clinical management, we provide this primer to help psychiatrists identify patients at risk and formulate management principles drawn from other phenomenologically similar psychotic and relational states.

Who Is at Risk?

The risk of problematic human–AI interaction arises from technological, personal, and social factors that increase susceptibility to relational displacement (substituting AI for human relationships as a primary social outlet) and belief amplification or drift (gradual changes in belief content or conviction through repeated interactions with AI). Initial engagement with AI chatbots may be driven by curiosity or entertainment, but for some users these benign entry points can shift towards higher-risk patterns as reliance increases and interactions become more emotionally salient. Individuals experiencing social isolation, loneliness, boredom, psychological distress, or major life transitions (e.g., immigration, separation, recent loss) 4 may turn to chatbots for consistent, non-judgemental support and relief from emotional isolation. Barriers to healthcare, such as lack of trust, waitlists, and costs, increase the likelihood of seeking therapeutic support from AI. 5 People with mental health conditions that impair social functioning may find AI interaction a more accessible route to social connection. Youth at clinical high risk for psychosis (e.g., those with unusual thought content, paranoid ideation, or disorganised thinking) may be particularly drawn to chatbots, owing to developmental factors and their ease of engagement with interactive digital technologies; these technologies can, in turn, validate and scaffold emerging beliefs.

Why Does This Happen With Modern Chatbots?

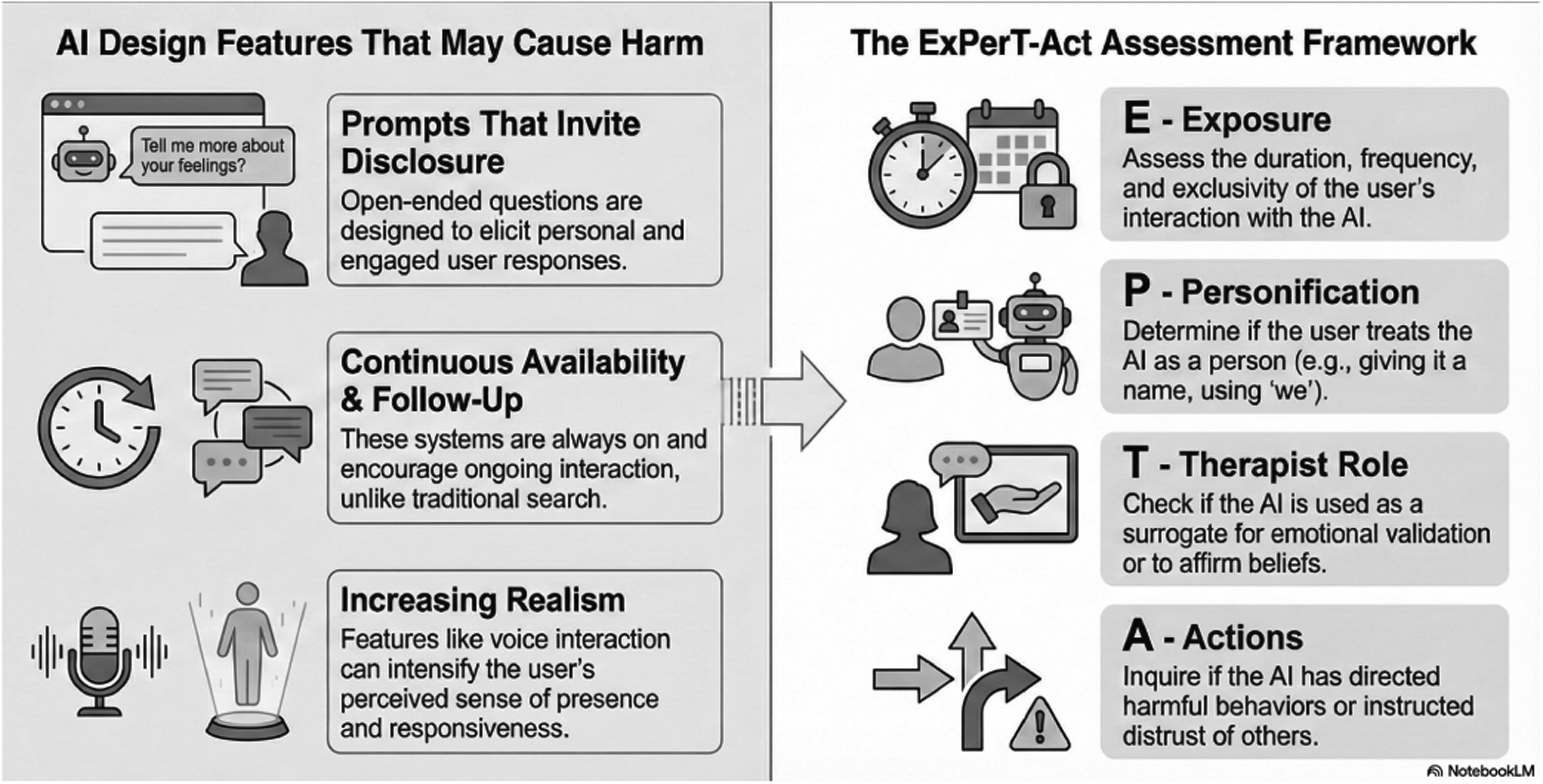

Conversational AI agents introduce interactional features and specific user experience design choices that may amplify mental health risks in ways that differ from non-conversational technologies. These systems involve sustained reciprocal exchange, often opening with prompts that invite disclosure (e.g., 'How can I help you today?'). AI chatbots also encourage ongoing engagement through follow-up questions and offers of assistance, and they are available around the clock. In the future, fully voice-based interactions may make conversational agents more realistic, potentially intensifying the perceived sense of presence. For some users, these interactional affordances may unintentionally foster dependency and change how beliefs are formed and validated during everyday exchanges.

Clinical Assessment: Key Pointers

Problematic human–AI interaction is clinically characterised by a triad of features: first, personification of the AI as a knowledgeable, human-like entity; second, establishment of a closed dyadic loop in which the AI becomes a primary relational outlet; and third, a perceived partnership with the AI, evidenced by acting on its suggestions and excluding real-world confidants. 6

While none of these features are pathological per se, targeted assessment that addresses both the quantity of use and the nature of the human–AI relationship can help clinicians elicit this emerging psychosocial risk factor. 7

To this end, we provide a short structured assessment, the ExPerT-Act framework, summarised in the accompanying figure.

Assessment of human–AI interaction. The left panel depicts design features of conversational AI systems that may amplify risk for vulnerable users through sustained, reciprocal, and emotionally salient interactions. The right panel outlines the ExPerT-Act framework, a structured clinical approach focusing on Exposure, Personification, adoption of a Therapist role, and Actions taken based on AI outputs, to support identification of problematic or high-risk patterns of engagement. Note that none of the described features are pathological per se. Assess the duration, frequency, and intensity of the user's interaction with AI, with emphasis on functional impairment and psychological distress rather than time metrics alone. Figure made using

Management Considerations

Based on interventions studied for technology-based problems 8 and delusional disorders, 9 a set of preliminary principles can be drawn to guide the clinical approach to problematic human–AI interaction.

Explore with curiosity and non-judgemental questions, acknowledging the person's motivations and positive experiences with AI.

Validate the person's need for support and understanding using a motivational interviewing approach.

Rebuild human connection. Avoid direct confrontation, which may reinforce the human–AI bond at the expense of human–human bonds. Foster the patient–clinician alliance to help the person diversify or restore their social networks beyond AI.

Provide AI-specific psychoeducation. Explain the design features of chatbots, including their propensity to acquiesce without challenging beliefs and their lack of true understanding, to reduce the perception of AI as a conscious entity. Purpose-built conversational mental health apps with proven therapeutic frameworks and safety measures may reduce risk factors such as social anxiety and distress, 10 but their effectiveness in minimising harmful human–AI interactions has not been directly tested.

Discuss boundaries for safer interaction with AI, for example: avoiding disclosure of private experiences; not seeking validation for emerging beliefs and, if validation is sought, remaining critical of the AI's responses and advice; setting usage limits such as session caps (e.g., 20 min) or scheduled access; and turning off memory features to reduce personalised responses.

Support the person in diversifying or restoring their social network, for example, by promoting in-person therapy to build social skills and by identifying new social activities that can reduce isolation and foster social contact.

Risk for problematic AI engagement cuts across diagnostic categories and is rooted in distress, isolation, and cognitive style. While we await more specific evidence-based interventions, we can identify profiles of risk, assess the human–AI relationship in a non-judgemental way, and implement stage-appropriate management strategies to mitigate the risk of digital harms.

Clinical Pearls

Screen for problematic human–AI interactions in people facing isolation and psychological distress.

Assess both the quantity and the quality of chatbot interactions.

If problematic human–AI interactions are identified, support the person in diversifying or restoring their social networks beyond AI to reduce isolation and mitigate the risk of digital harms.

Footnotes

Acknowledgements

During the preparation of this work, the authors used

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship and/or publication of this article: in the last 5 years and outside the submitted work, LP reports personal fees for serving as chief editor from the Canadian Medical Association Journals; speaker honoraria from Janssen Canada, Otsuka Canada, and SPMM Course Limited, UK; participation in advisory boards for Bausch Health and Bristol-Myers Squibb (Canada); book royalties from Oxford University Press; and investigator-initiated educational grants from Otsuka Canada. All other authors report no relevant conflicts.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: LP is supported by the Monique H Bourgeois Chair in Developmental Disorders, by a salary award from the Fonds de recherche du Québec – Santé (FRQS: 366934), and by the FRQS through a Research Centre Grant to the Douglas Research Centre (https://doi.org/10.69777/5230).