Abstract

Objective:

Suicide is a growing public health concern, accounting for approximately 800,000 deaths worldwide per year. The current process of evaluating suicide risk is highly subjective, which can limit the efficacy and accuracy of prediction efforts. Consequently, suicide detection strategies are shifting toward artificial intelligence platforms that identify patterns within ‘big data’ to generate risk algorithms. These algorithms can determine the effects of risk (and protective) factors on suicide outcomes, predict suicide outbreaks and identify at-risk individuals or populations. In this review, we summarize the role of artificial intelligence in optimizing suicide risk prediction and behavior management.

Methods:

This paper provides a general review of the literature. A literature search was conducted in OVID Medline, EMBASE and PsycINFO databases with coverage from January 1990 to June 2019. Results were restricted to peer-reviewed, English-language articles. Conference and dissertation proceedings, case reports, protocol papers and opinion pieces were excluded. Reference lists were also examined for additional articles of relevance.

Results:

At the individual level, prediction analytics help to identify individuals in crisis so that they can be offered emotional support, crisis and psychoeducational resources, and alerts for emergency assistance. At the population level, algorithms can identify at-risk groups or suicide hotspots, which help inform resource mobilization, policy reform and advocacy efforts. Artificial intelligence has also been used to support the clinical management of suicide across diagnostics and evaluation, medication management and behavioral therapy delivery. Incorporating artificial intelligence into suicide care may offer several advantages, including a time- and resource-effective alternative to clinician-based strategies, adaptability to various settings and demographics, and suitability for use in remote locations with limited access to mental healthcare supports.

Conclusion:

Based on the observed benefits to date, artificial intelligence has demonstrated utility within suicide prediction and clinical management efforts and will continue to advance mental healthcare.

Introduction

Suicide is a growing public health concern and accounts for approximately 800,000 deaths worldwide per year (World Health Organization, 2018). However, suicide etiology is highly complex with no single cause, which makes prediction efforts more challenging. In fact, suicide is invariably influenced by an interplay of risk factors across psychosocial, biological, environmental, economic and/or cultural domains (Sinyor et al., 2017; Turecki, 2014). At the individual level, neurochemical, neuroendocrine, inflammatory and genetic/epigenetic alterations have all been implicated in suicide (Turecki, 2014). Early life trauma (Zatti et al., 2017) and the presence of mental and substance use disorders also increase risk (Ferrari et al., 2014), with a previous suicide attempt being among the most potent predictors (Bostwick et al., 2016). In fact, more than 90% of individuals who die by suicide have an associated psychiatric diagnosis, most frequently major depressive disorder (Cavanagh et al., 2003). Publicizing suicide can also increase rates, as demonstrated by a 19% rise in online suicide queries following the release of the suicide-themed Netflix series ‘13 Reasons Why’ (Ayers et al., 2017). Media coverage of celebrity deaths, such as Robin Williams, Anthony Bourdain and Kate Spade, can further influence those at risk of suicide and ultimately increase suicide rates by 10–12% (Etehad, 2018). Across domains, risk factors often cluster with a greater frequency among priority populations—prisoners; Indigenous groups; immigrants and refugees; the lesbian, gay, bisexual, transgender, intersex (LGBTI) community—resulting in disproportionately high suicide rates for these groups (World Health Organization, 2018).

Despite identifying potential suicide risk factors, data have yet to be integrated into a reliable prediction model. Instead, the current process of evaluating suicide risk is still highly subjective, with risk factors being considered as part of an overall framework that heavily relies on the clinical acumen of healthcare practitioners. Moreover, traditional statistical methods of analysis, which are limited in analyzing complex data, have failed to predict suicide behaviors above chance levels (Franklin et al., 2017). In light of these current limitations, statistically advanced machine learning (ML) approaches, as a sub-field of artificial intelligence (AI), are being developed with increasing frequency to improve suicide care.

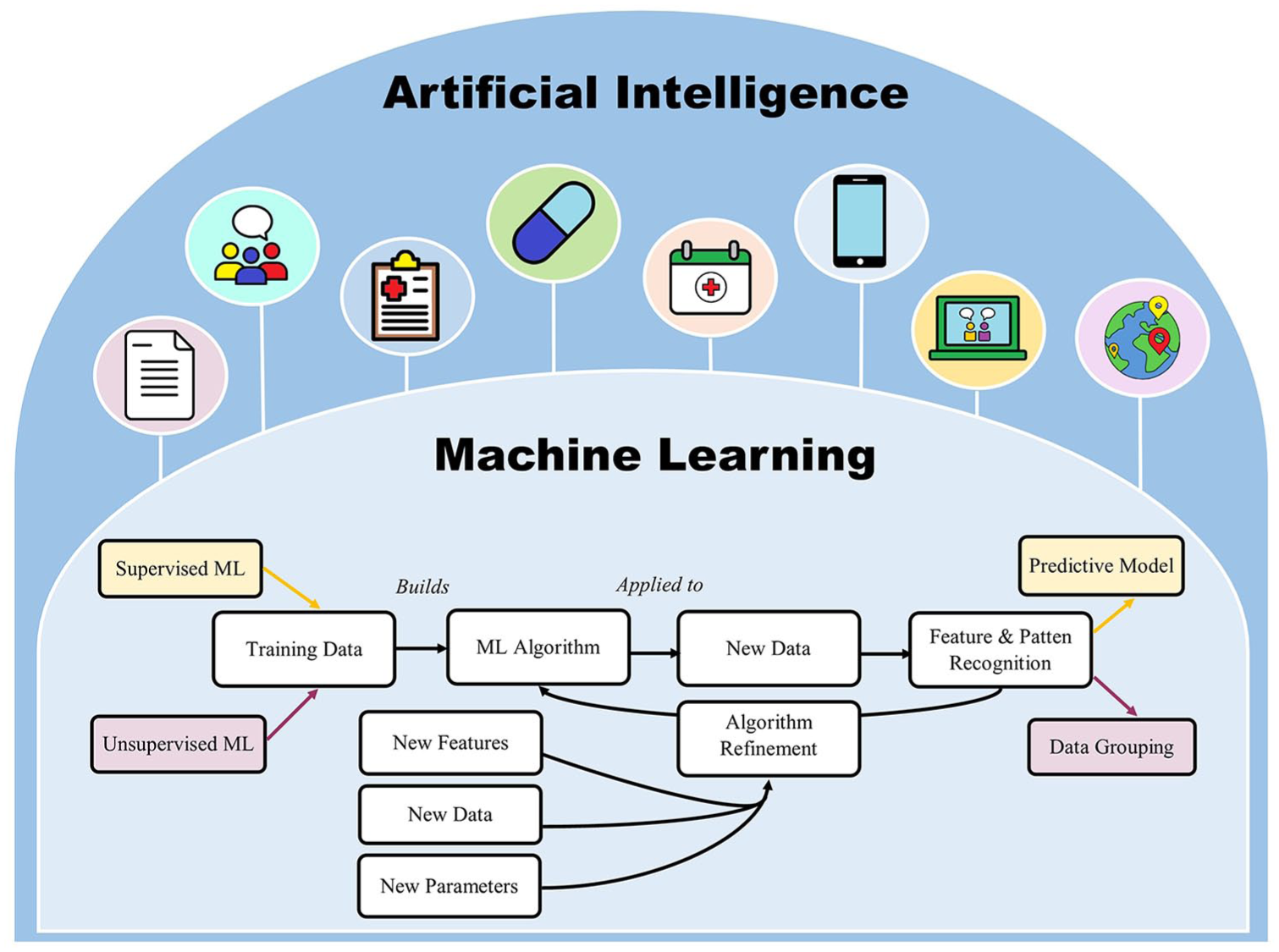

The goal of AI is to develop modalities that simulate aspects of human intelligence such as planning, reasoning, pattern recognition and problem-solving. This process involves machines (i.e. non-human entities) ‘learning’ to recognize patterns and features by rapidly and iteratively processing large datasets using mathematical models or algorithms (Miller and Brown, 2018). The current capacity of AI is still considered ‘narrow or weak’ in that it requires explicit programming to perform a specific task (Bostrom and Yudkowsky, 2014). The evolution of AI technologies is heavily dependent on ML.

The two main branches of ML are (1) supervised learning, which supports prediction analytics and (2) unsupervised learning, which categorizes data using clustering and component analysis techniques. Supervised ML involves work with datasets where the outputs are already known (training sets) to identify patterns that can be subsequently applied to predict outputs in new datasets. Unsupervised learning, in contrast, uses unlabeled training data. ML programs also continuously adapt to new data to optimize algorithm parameters and increase the predictive accuracy of the model (Miller and Brown, 2018) (refer to Figure 1). There are advantages of using ML over traditional statistical analyses: ML identifies the most effective model for a given dataset compared to traditional analyses which require a priori selection, and ML is more suited to process complex data combinations in regard to the number and type of variables (Walsh et al., 2017). However, ML strategies require comparatively larger datasets than traditional analyses.
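As a minimal illustration of the distinction drawn above, the following Python sketch contrasts the two branches on a small synthetic one-dimensional dataset. The data, the function names (`fit_centroids`, `predict`, `two_means`) and the choice of a nearest-centroid classifier and a two-cluster k-means are assumptions made for illustration only; they are not methods taken from the studies reviewed here.

```python
from statistics import mean

# Synthetic, purely illustrative data: one numeric feature per record.
train_x = [1.0, 1.2, 0.8, 4.0, 4.3, 3.9]
train_y = [0, 0, 0, 1, 1, 1]  # known outputs (labels) used by supervised learning


def fit_centroids(xs, ys):
    """Supervised step: learn one mean feature value per known label."""
    return {label: mean(x for x, y in zip(xs, ys) if y == label)
            for label in set(ys)}


def predict(centroids, x):
    """Assign a new record to the label whose centroid is closest."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def two_means(xs, iterations=10):
    """Unsupervised step: split unlabeled values into two clusters (k-means, k=2)."""
    low, high = min(xs), max(xs)  # initial cluster centers
    for _ in range(iterations):
        near_low = [x for x in xs if abs(x - low) <= abs(x - high)]
        near_high = [x for x in xs if abs(x - low) > abs(x - high)]
        low, high = mean(near_low), mean(near_high)
    return low, high


# Supervised: patterns learned from labeled data are applied to new records.
centroids = fit_centroids(train_x, train_y)
new_labels = [predict(centroids, x) for x in [0.9, 4.1]]

# Unsupervised: the same feature values are grouped without using any labels.
cluster_centers = two_means(train_x)
```

In this toy setting the supervised model needs `train_y` to learn its decision rule, whereas `two_means` recovers the two groups from the feature values alone.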

Figure 1. The relationship between machine learning and artificial intelligence. Machine learning has helped develop artificially intelligent technologies that can analyze both written and audio medical information and generate responses in similar formats. This has been used to automate suicide support in areas of diagnostic assessment, treatment management, intervention delivery and visit scheduling. It has also aided in the development of suicide intervention-based mobile applications and therapeutic conversational agents. Health information collected across electronic medical records, mobile health technologies and social media can be integrated to develop predictive models of suicide risk.

One emerging framework that is used to capture ‘big data’ for ML is the ‘Internet of Things’ (IoT). The IoT refers to connecting devices to the Internet (and to other connected devices) to form a network that facilitates communication between physical and virtual domains to permit the exchange of user and context data. The IoT has become increasingly relevant within medical research and healthcare, most notably with the rapid growth of mobile applications and wearable patient devices (Dimitrov, 2016). Moreover, the continuous collection of passive data through the IoT provides a highly advantageous platform for prediction analytics.

Together, ML and AI have been used to generate predictive algorithms that can determine the effects of risk (and protective) factors on suicide outcomes, predict suicide outbreaks and identify at-risk populations. Beyond prediction initiatives, ML algorithms may also support the development of ‘smart’ technologies that, by simulating human intelligence, can monitor and respond to suicide behaviors in real time. These AI devices may be accessed directly by individuals in crisis or applied at the clinical level to support suicide risk assessment, behavior management or intervention delivery. In this review, we summarize data from studies examining the role of AI in optimizing suicide risk prediction and behavior management.

Role of AI in suicide risk prediction

Many AI efforts within mental healthcare have been directed toward suicide. At the most basic level, ML algorithms can identify suicide risk factors, not just in isolation, but also while integrating complex interactions across variables. ML techniques applied to well-characterized datasets have identified associations between suicide risk and clinical factors such as current depression, past psychiatric diagnosis, substance use and treatment history (Jenkins et al., 2014; Passos et al., 2016), with additional analytics highlighting environmental effects (Bonner and Rich, 1990; Fernandez-Arteaga et al., 2016). Multi-level modeling of Internet search patterns has also been used to identify risk factors of interest (Song et al., 2016), with Google data analytics providing a better estimate of suicide risk than traditional self-report scales in some cases (Ma-Kellams et al., 2016).

Once identified through exploratory analyses, risk factors can be used to inform prediction models. Prediction analytics have utility in identifying at-risk individuals and their likelihood of a future suicide attempt by integrating multiple factors into complex matrices that can model non-linear relationships. Large clinical databases have often been used to develop, validate and refine suicide prediction algorithms (Choi et al., 2018; Ryu et al., 2018). Two predominant suicide risk domains identified from exploratory analyses are (1) clinical risk, which encompasses diagnostic and treatment factors such as sudden mood change, recent history of low mood, self-injury, psychiatric comorbidity and previous hospitalizations (Liu et al., 2016; Passos et al., 2016), and (2) cognitive risk, which pertains to thoughts around life satisfaction, purpose, hopelessness, self-esteem and self-perceived competency (Barros et al., 2017; Jordan et al., 2018).

Beyond patients with low mood, prediction models have successfully classified suicide risk in patients with other psychiatric (Gradus et al., 2017; Hettige et al., 2017) and medical (Kalinin and Polyanskiy, 2005) diagnoses, and even among seemingly healthy demographics such as students (Mortier et al., 2018), prisoners (Bonner and Rich, 1990) and soldiers (Kessler et al., 2015, 2017). Soldiers, in particular, have been considered a high-risk group with an identified need for targeted prediction models. The Study to Assess Risk and Resilience in Service Members (STARRS) utilized data from >50,000 hospitalized, active duty soldiers to develop a model that predicts suicide risk within 12 months of hospital discharge. The authors identified male sex, age of enlistment, criminal offenses, and history of psychiatric illness, suicidality and treatment as the strongest predictors of suicide attempt among soldiers (Kessler et al., 2015). Algorithmic assessment has also demonstrated utility toward identifying suicide risk among soldiers who otherwise deny a history of ideation (Bernecker et al., 2018). Due to the relationship between post-traumatic stress disorder (PTSD) and increased risk of suicide, large datasets like the STARRS are integral to furthering PTSD-related suicide risk prediction. Similar models have already been applied in clinical practice, such as with the US Department of Veterans Affairs’ REACH-VET (Recovery Engagement and Coordination for Health – Veterans Enhanced Treatment) program, which uses medical records to identify and respond to veterans at high risk of suicide or hospitalization (Eagan and Matarazzo, 2018).

ML has been further extended to natural language processing (NLP), which is a subfield of AI that allows devices to manipulate, interpret and respond to natural human language in written or spoken formats. NLP of clinical notes from electronic medical records (EMRs) provides an opportunity to predict suicidal ideation, with relatively high predictive values (Cook et al., 2016; Poulin et al., 2014) that outperform diagnostic codes from EMRs alone (Haerian et al., 2012). Beyond content analysis, word valence further indicates risk, with positively valenced words associated with a 30% reduced likelihood of suicide (McCoy et al., 2016). Moreover, the application of ML algorithms to patient writing patterns has been shown to predict a suicide attempt up to 2 months in advance, with an 80% accuracy rate (de Avila Berni et al., 2018); this may enable early identification of suicidality if applied to data sources that can be captured in real time such as social media and other personal text-based communications. NLP has also been applied to acoustic analysis of audio conversations to discriminate suicidal and non-suicidal individuals based on spoken language differences (Pestian et al., 2016). In fact, integrating text- and audio-based algorithms into one model can classify 85% of subjects according to suicide risk (Pestian et al., 2017), which is higher than classification based on clinical scale data alone (O’Connor et al., 2012; Passos et al., 2016). NLP can further distinguish between genuine and simulated suicide notes at a higher rate than mental healthcare practitioners (Pestian et al., 2008, 2010). Algorithms have also been designed to automate emotion detection in suicide notes via affective computing (Cherry et al., 2012; Wicentowski and Sydes, 2012) and assist with classifying content into specific themes (Spasic et al., 2012).

More recently, researchers from Vanderbilt University designed an algorithm to predict suicide risk using demographic and personal health data extracted from EMRs. This model predicted suicide risk with an accuracy rate of 84–92% within 1 week, and 80–86% within 2 years (Walsh et al., 2017). Comparable outcomes were obtained when ML was applied to predicting adolescent suicidality after combining more than 600 risk factors extracted from EMRs, with improved accuracy as suicide attempts increased in imminence (Walsh et al., 2018). Similarly, researchers at the University of Vermont developed a social media-based algorithm to identify signs of depression through linguistic content analysis of user accounts, with a specific focus on word count, language use, speech patterns and activity level (Holley, 2017). The development and validation of suicide prediction models hold considerable promise in improving the accuracy and rapid detection of high-risk individuals and may aid in generating hypotheses on the biological basis of suicide. However, the quality and translational potential of these algorithms are highly contingent on the datasets used to build them. Traditionally, EMRs and clinical databases have been harnessed for this purpose, but with the rise of social media and the IoT, multiple longitudinal quantitative and qualitative data points can now be captured in real time from a variety of different sources. Together, these sources provide the data infrastructure to support the ‘ecological momentary assessment’ (EMA) of suicidality, or rather, the evaluation of user experiences, behaviors, cognitions and moods to assess suicide risk in real time and in a natural setting (Burke et al., 2017).

Role of AI in the clinical management of suicide

AI technology has been integrated into suicide management to improve patient care in areas of evaluation, diagnostics, treatment and follow-up. Screening is an area of high importance to ensure those at elevated risk of suicide are identified. Desjardins et al. (2016) developed an assessment tool that predicts imminent suicide risk and makes treatment recommendations comparable to those of a clinician, with high levels of patient satisfaction. Computerized classification can identify suicidal behaviors with high accuracy in the short term (Delgado-Gomez et al., 2016; Mann et al., 2008) and longitudinally (Batterham and Christensen, 2012), but these tools have yet to be clinically validated. Some decision support systems can even assess the likelihood of a suicide attempt by determining whether a negative endorsement of suicide intent is reliable or not (Zaher and Buckingham, 2017). However, a key question remains as to what variables are required for accurate prediction and how algorithms can be updated dynamically as risk factors change over time.

Conversational agents

A new AI approach to ‘therapy’ employs conversational agents, which are NLP-based computer programs that directly engage with users in simulated conversation by generating human-like responses using a text- or voice-based interface (Dahiya, 2017). Conversational technology has been increasingly integrated into the delivery of psychological interventions such as social therapy (D’Alfonso et al., 2017), cognitive behavioral therapy (Fitzpatrick et al., 2017) and trauma therapy (Tielman et al., 2017). Since the program responds to the presented dialogue, intervention delivery can be tailored to a patient’s emotional state and clinical needs (D’Alfonso et al., 2017). Similar technologies, although preliminary, are being incorporated into smartphones to allow voice assistants to recognize and respond to user mental health concerns (Miner et al., 2016).

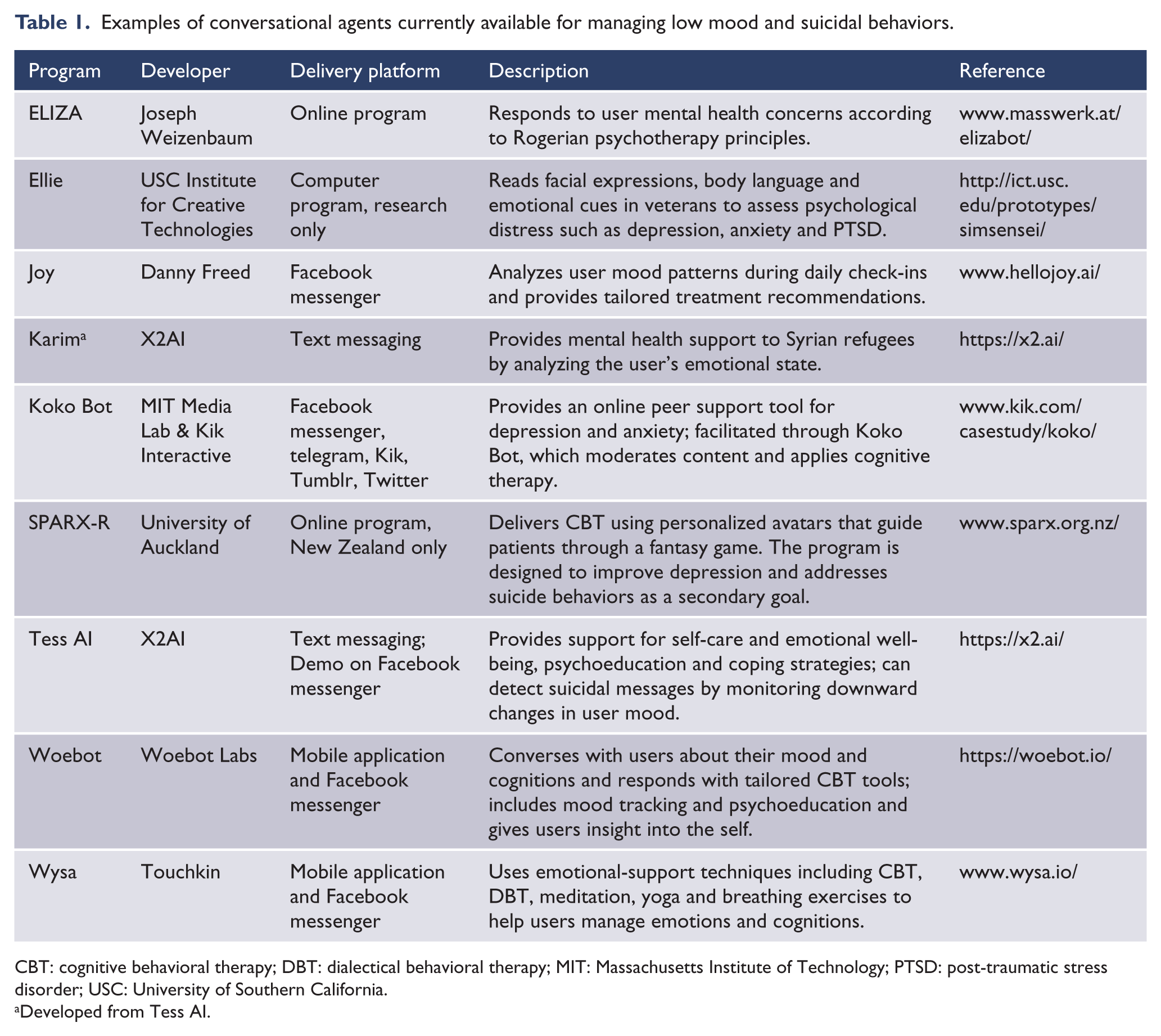

Conversational agents are also being integrated into suicide care, both as virtual counselors and as simulated patients for clinical training. Within direct patient care, conversational agents can be programmed to complete several tasks, which include collecting information on the patient’s clinical state, providing psychoeducation, making recommendations for supports and delivering patient-tailored psychotherapies (Martinez-Miranda, 2017). Several conversational agents have been developed for suicide management, with integration into mobile and web-based platforms (refer to Table 1).

Table 1. Examples of conversational agents currently available for managing low mood and suicidal behaviors.

CBT: cognitive behavioral therapy; DBT: dialectical behavioral therapy; MIT: Massachusetts Institute of Technology; PTSD: post-traumatic stress disorder; USC: University of Southern California.

Developed from Tess AI.

Facebook, in particular, has become a hotspot for conversational agents, which piggyback off the site’s instant messaging interface to converse with users about their mood and cognitions, provide insights into user behavioral patterns and suggest personalized recommendations for evidence-based tools (Woebot Labs Inc, 2018). These programs are accessible at any time of day and may include extra features such as mood tracking, daily check-ins and psychoeducation (Woebot Labs Inc, 2018). Based on ML, the agents ‘learn’ about the user with each conversation, and some can even study user language patterns, facial and body language, eye movements and emotional cues to assess distress levels (Technologies, 2018). If a suicide threat is detected, some agents can signal for human assistance (AI Business, 2016). AI conversational programs have high usability scores and are well regarded for their user-adapted content and emotional responses (Bresó et al., 2016). Use of these ‘virtual assistants’ also significantly improves depression as compared to psychoeducational materials (Fitzpatrick et al., 2017).

Conversational agents have also been incorporated into hospital discharge protocols to help patients understand post-discharge recommendations. One study found that severely depressed patients were highly satisfied with an AI interface at discharge and preferred using this system over consultation with their clinician (Bickmore et al., 2010). AI-based psychiatric interviews also provide a personalized approach that is well accepted by patients (Erdman et al., 1985). In fact, conversational agents can improve reporting outcomes by increasing a patient’s willingness to disclose personal health information (PHI) (Lucas et al., 2014).

In training settings, conversational agents can simulate suicidal behaviors to educate clinical trainees on screening and assessment practices (Martinez-Miranda, 2017). Use of online role-play simulations with emotionally responsive avatars within suicide prevention programs leads to greater indices of preparedness, self-efficacy and likelihood to utilize the information among trainees (Bartgis and Albright, 2016). Moreover, virtual patient training outperforms standard video modules for teaching clinical students about suicide risk assessment (Foster et al., 2015). Giving patients and clinicians round-the-clock access to supportive conversational agents may have the ability to transform the delivery of mental health supports and training.

AI and social media

In 2017, more than 35% of the world’s population accessed social media, with Facebook accounting for over 1.96 billion users (Statista, 2018). Given this volume of users and the vast amount of information that is passively collected, social media represents a promising avenue for developing suicide prediction and prevention strategies. Successful applications of social media in public health include surveillance, user engagement, study recruitment and intervention delivery (Sinnenberg et al., 2017). Moreover, 85% of users with mental illness express interest in social media-delivered mental health programs (Naslund et al., 2017).

Big data from social media accounts provide first-person information on user behaviors, emotional well-being and cognitions that can be used to inform suicide prediction algorithms. Once combined with surveillance, these data can also identify at-risk individuals in real time and monitor system trends to predict suicide outbreaks. Twitter, a microblogging service which allows users to share brief statuses or ‘tweets’, is one of the main social networks to have harnessed these capabilities for public health. Within mental health, linguistic signals from Twitter have been used to differentiate users with mental illness from healthy controls (McManus et al., 2015), map emotional signatures (Larsen et al., 2015), generate illness phenotypes (Lachmar et al., 2017) and detect suicidality (O’Dea et al., 2017) and contagion effects (Fahey et al., 2018). ML can identify high-risk suicide content on Twitter with 80% accuracy, with incremental gains in algorithm precision over time (O’Dea et al., 2015). In particular, deep learning has demonstrated superiority over traditional ML methods at automating the detection of psychiatric stressors for increased suicide risk from Twitter data, especially when incorporating transfer learning strategies that include model training from clinical text datasets (Du et al., 2018). Twitter was a pioneer in testing automated suicide response strategies in 2014. This involved alerting users when their followers posted distressing content that suggested suicidal ideation. However, this strategy was eventually suspended due to privacy concerns (Orme, 2014). Since then, other social media sites have added suicide surveillance and crisis response platforms that provide support to users without sharing PHI with their social network.

In 2017, Facebook automated the detection of suicide content. This was an upgrade from a simplified platform that required users to manually upload screenshots (2011) or flag (2015) suicide-based content for urgent review. If suicide risk is detected, crisis response protocols are initiated, which may include providing supportive resources and crisis line information to users, or alerting local emergency responders (Kleinman, 2015; Novet, 2018). Facebook is currently expanding automation to monitor and remove sensitive video posts to prevent live streaming of suicides (Snow, 2018). Other photo and video sharing sites, such as Instagram, Snapchat and YouTube, are also monitored to mitigate the circulation of images that reference self-injury and suicide (Chhabra and Bryant, 2016; Lewis et al., 2011; Miguel et al., 2017). Similarly, social blogging and discussion forums, such as Reddit and Tumblr, have been investigated for suicide-related themes and patterns of suicidal behaviors (Cavazos-Rehg et al., 2017; Kavuluru et al., 2016). The incorporation of NLP supports automation efforts to identify emotional distress and suicide risk among users (Cheng et al., 2017; Ren et al., 2016). Logistic regression classification algorithms have also shown promise in detecting suicidal content within online posts with an 80–92% accuracy rate (Aladağ et al., 2018). This is essential to optimize suicide prevention efforts by minimizing subjective interpretation of risk, as content mistakenly perceived as low risk may be less likely to be reported (Corbitt-Hall et al., 2016).
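To make the idea of logistic regression classification of posts more concrete, the Python sketch below trains a small bag-of-words logistic regression model by stochastic gradient descent. It is a toy illustration under stated assumptions: the six example posts, their labels, the function names (`featurize`, `probability`, `train`) and the training settings are all hypothetical, and the code does not reproduce the features, data or pipeline of any study cited in this review.

```python
import math

# Hypothetical toy posts with hand-assigned labels (1 = flag for human review).
posts = [
    ("i feel hopeless and alone", 1),
    ("nothing matters anymore i feel hopeless", 1),
    ("so alone tonight nothing helps", 1),
    ("great day at the park", 0),
    ("happy to see friends tonight", 0),
    ("the park was great today", 0),
]

vocabulary = sorted({word for text, _ in posts for word in text.split()})


def featurize(text):
    """Bag-of-words vector: 1.0 if the vocabulary word occurs in the text."""
    words = set(text.split())
    return [1.0 if word in words else 0.0 for word in vocabulary]


def probability(weights, bias, features):
    """Logistic (sigmoid) function applied to a weighted sum of the features."""
    score = bias + sum(w * f for w, f in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-score))


def train(examples, epochs=200, learning_rate=0.5):
    """Fit weights by stochastic gradient descent on the log-loss."""
    weights, bias = [0.0] * len(vocabulary), 0.0
    for _ in range(epochs):
        for text, label in examples:
            features = featurize(text)
            error = probability(weights, bias, features) - label
            weights = [w - learning_rate * error * f
                       for w, f in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(posts)
p_flag = probability(weights, bias, featurize("feel so hopeless"))
p_neutral = probability(weights, bias, featurize("great park"))
```

The model outputs a probability rather than a verdict, so in practice a threshold would have to be chosen and validated, and flagged content would still be routed to human review, as in the platform protocols described above.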

Beyond immediate crisis response, data collected from social media accounts can also be reviewed to identify system-level trends in suicide patterns. Early in 2018, the Public Health Agency of Canada partnered with an AI company, Advanced Symbolics, to launch a pilot program to research regional suicide patterns. The company mines publicly available, anonymized data from Canadian social media accounts to examine suicide hotspots and forecast suicide spikes (Public Health Agency of Canada, 2018). Since the ultimate objective is to inform resource mobilization strategies, the model analyzes suicidal behavior without identifying or intervening in individual cases (Ruskin, 2018). Taken together, these applications have growing potential to be effective tools for suicide prediction and early detection initiatives, at least among those actively using social media.

Critical appraisal of the uptake of AI

Before AI can be successfully integrated into prediction frameworks and clinical practice, several barriers need to be addressed. The paramount concern is privacy, specifically the secure collection, storage and transfer of PHI. The use of web- and social media-based platforms presents inherent security risks that are not yet regulated under privacy legislation. In the first instance, due to built-in syncing capabilities, there is a risk that platforms may inadvertently share user data across applications or with third parties. Second, lack of consensus on what constitutes private versus public content blurs boundaries as to what data can be accessed. This can especially become an issue when anonymized PHI is linked with identifiable data. In the case of Twitter, their suicide surveillance application was suspended on the grounds that notifying a user’s social network about their mental distress violated privacy rights (Orme, 2014). Google was similarly criticized after violating Canadian privacy laws by targeting users with online advertising based on PHI (Hartmann, 2014). Since privacy violations can provoke distrust in the mental healthcare system and make patients less likely to seek supports, protective legislation for PHI will need to expand to include the risks of AI.

A second area of concern is algorithmic accuracy in correctly determining suicide intent, such as in cases of system biases or errors, before labeling a person as high (vs low) risk. There are several examples where AI pattern recognition algorithms have made incorrect classifications. For instance, the London Metropolitan Police attempted to use AI detection software to flag incriminating images of child abuse on electronic devices, but the program routinely misclassified photos of deserts as nudity by mistaking sand for human skin (Collins, 2017). Google also had issues with the precision of their image recognition algorithm (Gray, 2015). This highlights the necessity of algorithmic training to correct mistakes and build model confidence, or predictive accuracy, while not compromising processing speed. Moreover, algorithms will also need to be validated across several contexts before being made available for public use, and even then, predictive performance may vary from one setting to another.
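One reason that validation across contexts matters is that the share of flagged individuals who are truly at risk depends on how common the outcome is in the screened population, not only on the algorithm itself. The short Python sketch below works through this arithmetic using Bayes' rule. The sensitivity, specificity and prevalence values are hypothetical inputs chosen for illustration, and the function name `positive_predictive_value` is our own; none of these figures are drawn from the studies cited in this review.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Return the fraction of positive classifications that are true positives."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# The same hypothetical classifier applied in two hypothetical settings.
ppv_high_prevalence = positive_predictive_value(0.80, 0.80, 0.20)
ppv_low_prevalence = positive_predictive_value(0.80, 0.80, 0.01)
```

Under these assumed inputs, the same classifier yields a positive predictive value of 0.50 when 20% of the screened group has the outcome, but roughly 0.04 when only 1% does, so most flags in the second setting would be false positives.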

A third area of high importance is patient safety. In particular, it is essential to ensure AI programs can appropriately respond to suicidal users and not worsen their emotional state or accidentally facilitate suicide planning. For example, the responses of smartphone voice assistants to mental health queries have been inconsistent and sometimes inappropriate (Miner et al., 2016). If not carefully managed, poorly designed AI programs could negatively impact imminent safety concerns and even deter users from seeking help or disclosing personal problems in the future.

A fourth key issue pertains to legal responsibility. If a new tool is incorporated into clinical practice, protocols would be needed to determine which patients are offered the tool, who is responsible for monitoring outcomes and responding to high-risk cases, and who bears the costs associated with the tool’s development and dissemination. Response protocols on how to safely handle high-risk cases, false positives (or negatives), and conflicting risk assessments and/or recommendations between the overseeing physician and AI program are also required. Parameters around liability would be needed in the event of a death by suicide.

Since the integration of AI into mental healthcare is relatively new, it will take considerable time and access to volumes of patient data to train an AI model to outperform clinicians. Consequently, optimal use of AI technology at the present time should occur under the supervision of a clinician who can guide application according to professional expertise and personal context. Using a combined AI–human interface will allow clinical practice and prediction efforts to capitalize on the benefits of both systems while minimizing potential errors. However, given that many algorithms are ‘black boxes’, more education on AI interfaces is required, along with transparency about potential benefits, risks and limitations.

Conclusion and future directions

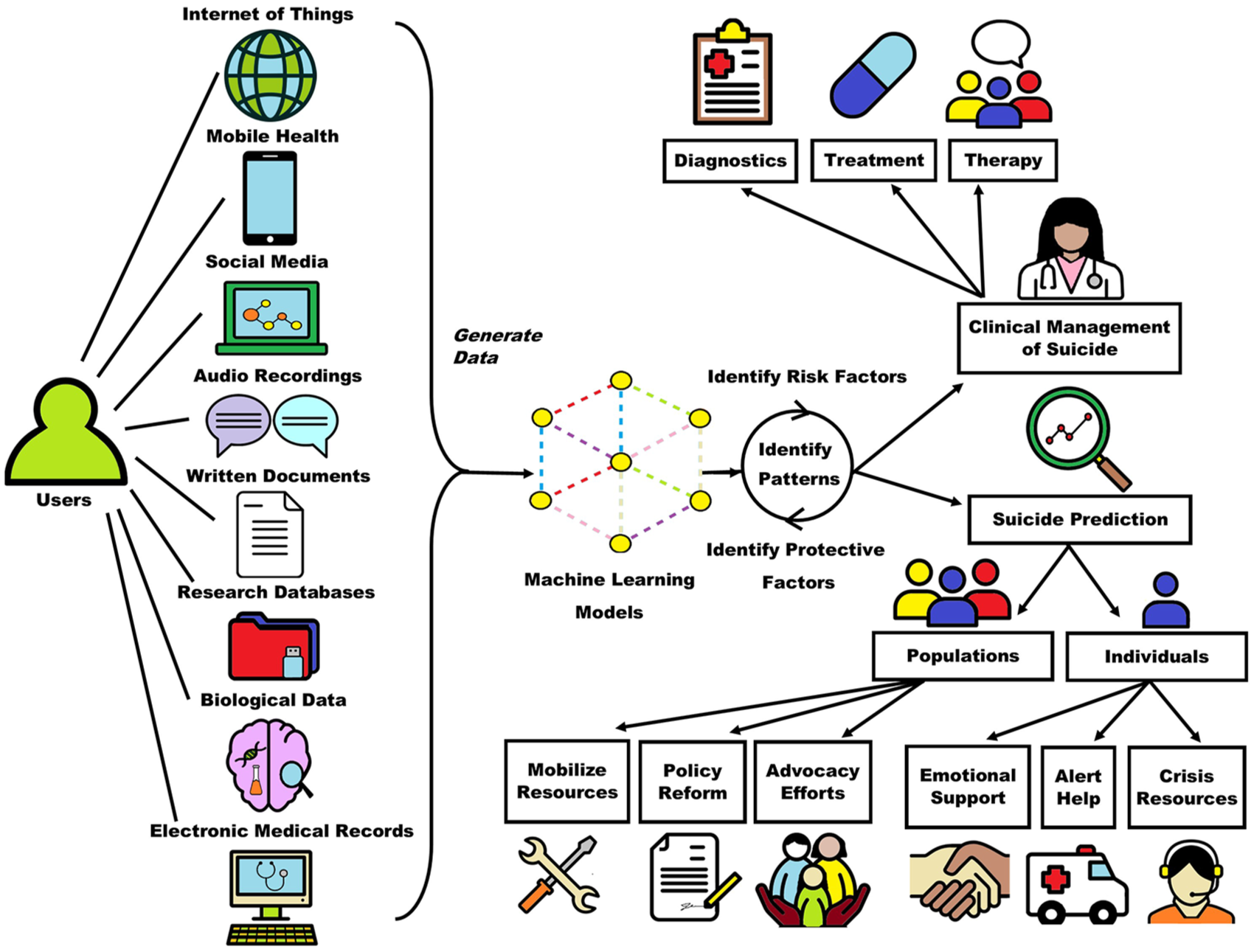

The integration of AI into mental healthcare holds considerable promise for improving suicide prevention. Patient data can be collected from multiple sources including devices connected to the IoT, mobile and other smart technologies, social media, audio recordings, personal and clinician-written documents, research databases, biological data and EMRs. These data can be used to develop ML models where patterns of suicide and suicidal behaviors, including risk factors and protective factors, can be identified and used to inform clinical management strategies and prediction analytics at the individual and population level. The outputs of ML are pivotal in advancing the field of AI toward developing technologies that can analyze both written and audio medical information and generate responses in similar formats. This has been used within the clinical management of suicide to build patient care systems that provide real-time, integrative support across algorithm-informed diagnostics, follow-up evaluations, medication management and behavioral therapy delivery (refer to Figure 2). However, a key area for the future would be to integrate AI with EMRs to offer automated alerts when elevated suicide risk is detected among patients presenting in emergency departments or clinics. Since ML has also aided in the development of social media-based suicide interventions and therapeutic conversational agents, there is likely to be an enhanced uptake of AI-delivered suicide care among youth. In fact, data from a child and adolescent inpatient unit showed that 66% of discharged patients used a smartphone, and the majority were interested in smartphone-delivered safety planning (Gregory et al., 2017). 
Extending suicide prevention strategies to mobile applications should enhance uptake by improving accessibility. However, because some applications may contain inaccurate or potentially harmful content, clinicians should become familiar with an intervention before recommending it to patients. As mobile- and social media-based interventions grow in popularity, more evaluations of their safety and effectiveness are also needed.

Figure 2. The application of machine learning to the clinical management and prediction of suicide. Patient data can be collected from multiple sources and used to develop machine learning models where patterns of suicide and suicidal behaviors can be identified and used to inform clinical management strategies and prediction analytics.

Patient data can additionally be used to support AI prediction efforts, which can separately target individual users and whole populations. At the individual level, suicide prediction can help to identify individuals in crisis in order to offer emotional support, crisis and psychoeducational resources, and alerts for emergency assistance. At the population level, although real-time intervention is not feasible, algorithms can identify at-risk groups or suicide hotspots, which helps inform resource mobilization, policy reform and advocacy efforts. AI-facilitated risk prediction is at a relatively early stage of uptake, and its capabilities will likely continue to expand. Incorporating biological multi-omics (data collected across the proteome, genome/epigenome, microbiome and transcriptome) into prediction algorithms has the potential to create a precision medicine approach to suicide risk assessment. Multi-omics would also generate large datasets that can be explored to identify biomarkers of suicide. ML has already demonstrated utility in processing functional neurobiological data to discriminate suicide ideators and attempters with relatively high accuracy (85–94%) (Just et al., 2017).

AI technology provides a time- and resource-effective alternative to clinician-based screening. Since the majority of individuals who die by suicide seek clinical support (Luoma et al., 2002), readily accessible AI interfaces may have a considerable impact on mitigating risk. This potential is strengthened by the versatility of AI technologies, which can be adapted to various clinical settings and patient demographics and can be easily employed in remote locations where access to local mental healthcare support is limited. As more AI-based programs are developed for managing suicide, design processes should continue to involve persons with lived experience to ensure applications are suitable and clinically impactful for end users. It is also important to consider that different interventions may be needed along the suicide continuum. Moreover, the study of suicide patterns should extend beyond risk factors to investigate indices of resilience, which may be important in predicting suicide and may also aid in the development of mental wellness interventions. Despite the many benefits of AI within suicide care, its application will yield the best patient outcomes when used in conjunction with clinician expertise, not in isolation.

Footnotes

Contributors

All authors had full access to the data presented in this review and take responsibility for the integrity of the data and the accuracy of the data interpretation. T.M.F., V.B. and S.H.K. contributed to the study concept and design. T.M.F. contributed to the acquisition of data. T.M.F., V.B. and S.H.K. contributed to the interpretation of data. T.M.F. contributed to drafting the manuscript. T.M.F., V.B. and S.H.K. contributed to the critical revision of the manuscript for important intellectual content. S.H.K. contributed to study supervision. All authors have given approval for the final version of the article to be published.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship and/or publication of this article: S.H.K. has received research funding or honoraria from the following sources: Abbott, Allergan, AstraZeneca, Boehringer-Ingelheim, BMS, Brain Canada, CIHR, Eli Lilly, Janssen, Lundbeck, Lundbeck Institute, Ontario Brain Institute, Otsuka, Pfizer, Servier, St. Jude Medical, Sunovion and Xian-Janssen. T.M.F. and V.B. have no conflicts to disclose.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was conducted as part of the Canadian Biomarker Integration Network in Depression (CAN-BIND) program. CAN-BIND is an Integrated Discovery Program carried out in partnership with, and financial support from, the Ontario Brain Institute (OBI), an independent non-profit corporation, funded partially by the Ontario government. The opinions, results and conclusions are those of the authors and no endorsement by the OBI is intended or should be inferred.