Abstract

Purpose of the Review:

Psychotherapy is crucial for addressing mental health issues but is often limited by accessibility and quality. Artificial intelligence (AI) offers innovative solutions, such as automated systems for increased availability and personalized treatments to improve psychotherapy. Nonetheless, ethical concerns about AI integration in mental health care remain. This narrative review explores the literature on AI applications in psychotherapy, focusing on their mechanisms, effectiveness, and ethical implications, particularly for depressive and anxiety disorders.

Collection and Analysis of Data:

A review was conducted, spanning studies from January 2009 to December 2023 and focusing on empirical evidence of AI’s impact on psychotherapy. Following PRISMA guidelines, the authors independently screened and selected relevant articles. The analysis of 28 studies provided a comprehensive understanding of AI’s role in the field. The results suggest that AI can enhance psychotherapy interventions for people with anxiety and depression, especially through chatbots and internet-based cognitive-behavioral therapy. However, to achieve optimal outcomes, the ethical integration of AI requires resolving concerns about privacy, trust, and interaction between humans and AI.

Conclusion:

This review emphasizes the potential of AI-powered cognitive-behavioral therapy and conversational chatbots to address symptoms of anxiety and depression effectively. It also highlights the importance of cautiously integrating AI into mental health services, considering privacy, trust, and the relationship between humans and AI. This integration should prioritize patient well-being and assist mental health professionals while weighing ethical considerations against the prospective benefits of AI.

Key Messages:

AI-assisted interventions for depression and anxiety led to moderate to strong symptom improvement and engagement. Key concerns included data privacy, loss of therapeutic trust, and limited emotional reciprocity. Ethical safeguards, transparency, and clinical oversight are essential for responsible AI integration in mental health care.

Psychotherapy is a psychological intervention aimed at helping individuals overcome mental health problems. It can be delivered through different methods and techniques, such as cognitive-behavioral and psychodynamic therapy. However, barriers such as stigma and limited accessibility often restrict its reach. Artificial intelligence (AI) offers opportunities to enhance psychotherapy through personalized interventions, using tools such as chatbots and precision therapeutic techniques. While prior research has primarily focused on AI’s role in diagnosing and classifying mental health disorders, our study extends the application of AI into the realm of treatment, evaluating the effectiveness of AI-based psychotherapy interventions in improving mental health outcomes. This review examines existing research on the integration of AI into psychotherapy. It considers AI’s potential to enhance diagnosis and treatment, particularly for depression and anxiety, its effectiveness in treating mental health disorders, and the ethical implications and operational challenges involved. While AI’s use in medicine is well established, its application in mental health is still evolving, offering cost-effective, stigma-reducing solutions accessible via smartphones. 1 AI interventions such as chatbots personalize care and support symptom management but face challenges in ensuring data privacy and maintaining human empathy. The future of AI in psychotherapy promises greater accessibility but requires ongoing ethical and clinical research to optimize its implementation. The findings suggest that AI interventions can improve psychotherapy outcomes, particularly in treating depression and anxiety disorders. Further research is needed to explore AI’s efficacy and ethical considerations in psychotherapy, highlighting the evolving relationship between technology and mental health care.

Methods

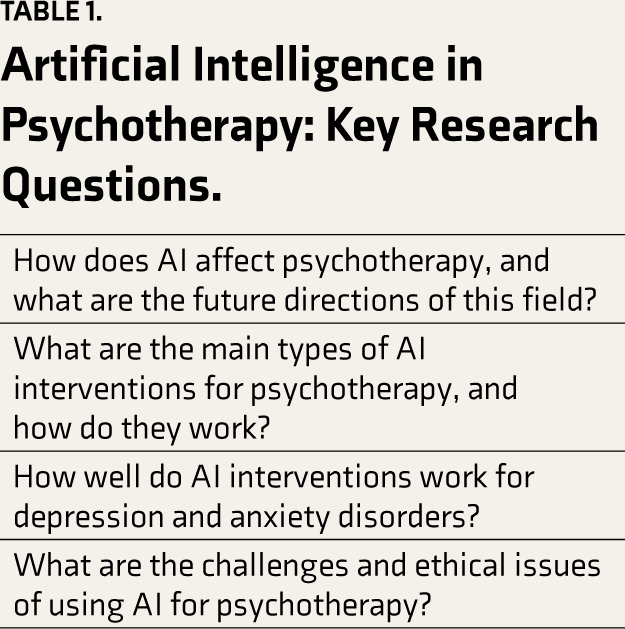

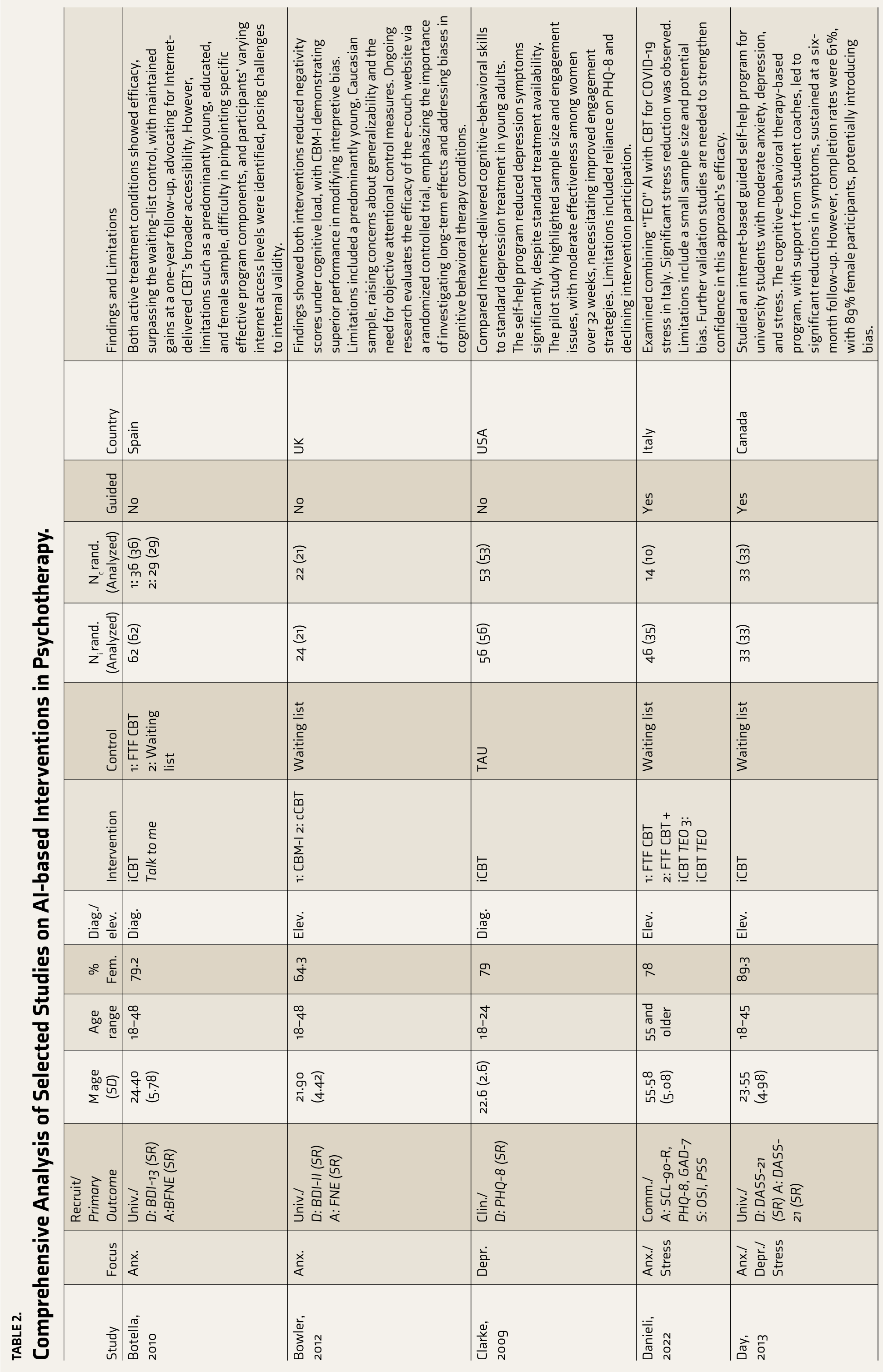

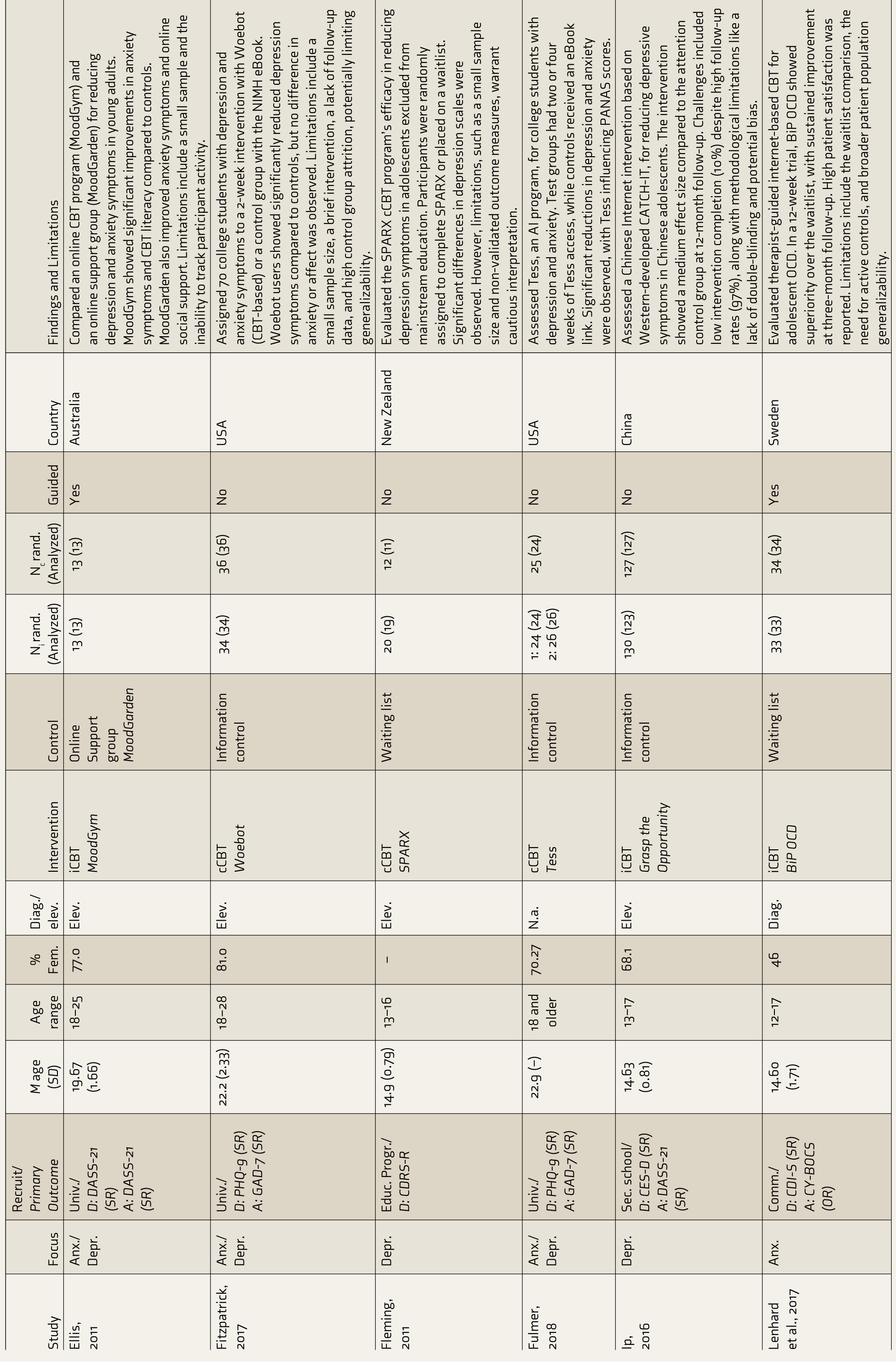

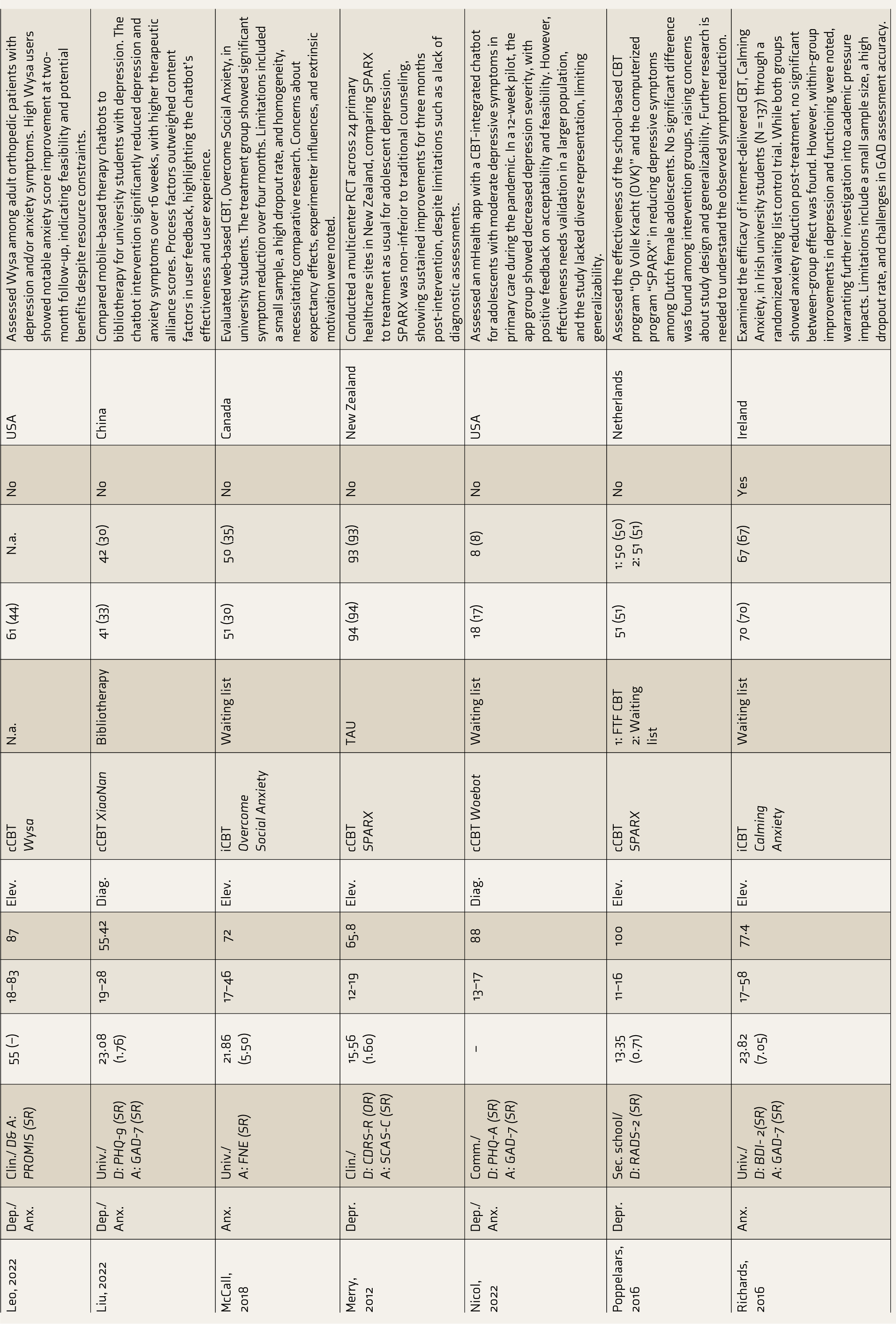

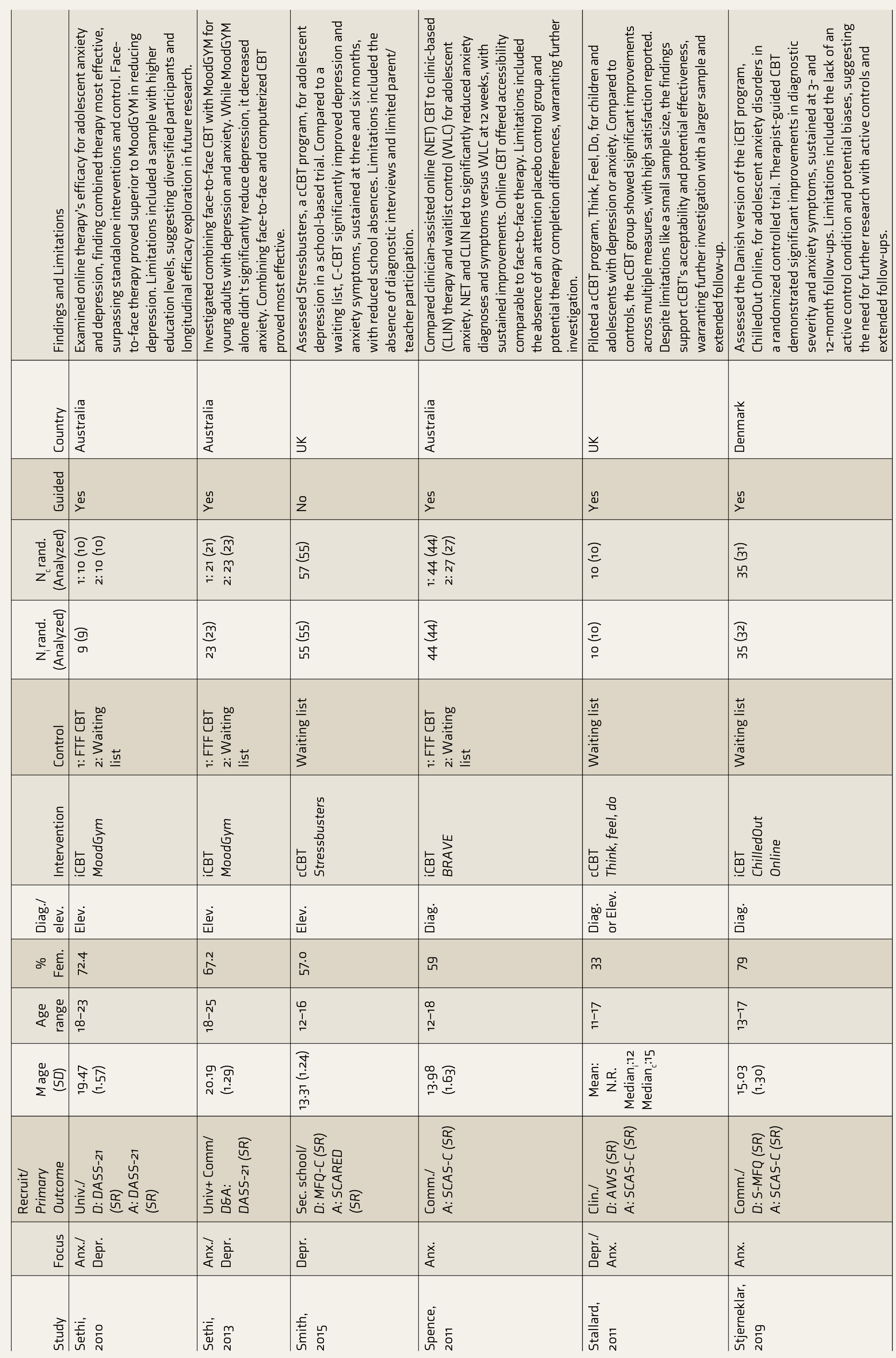

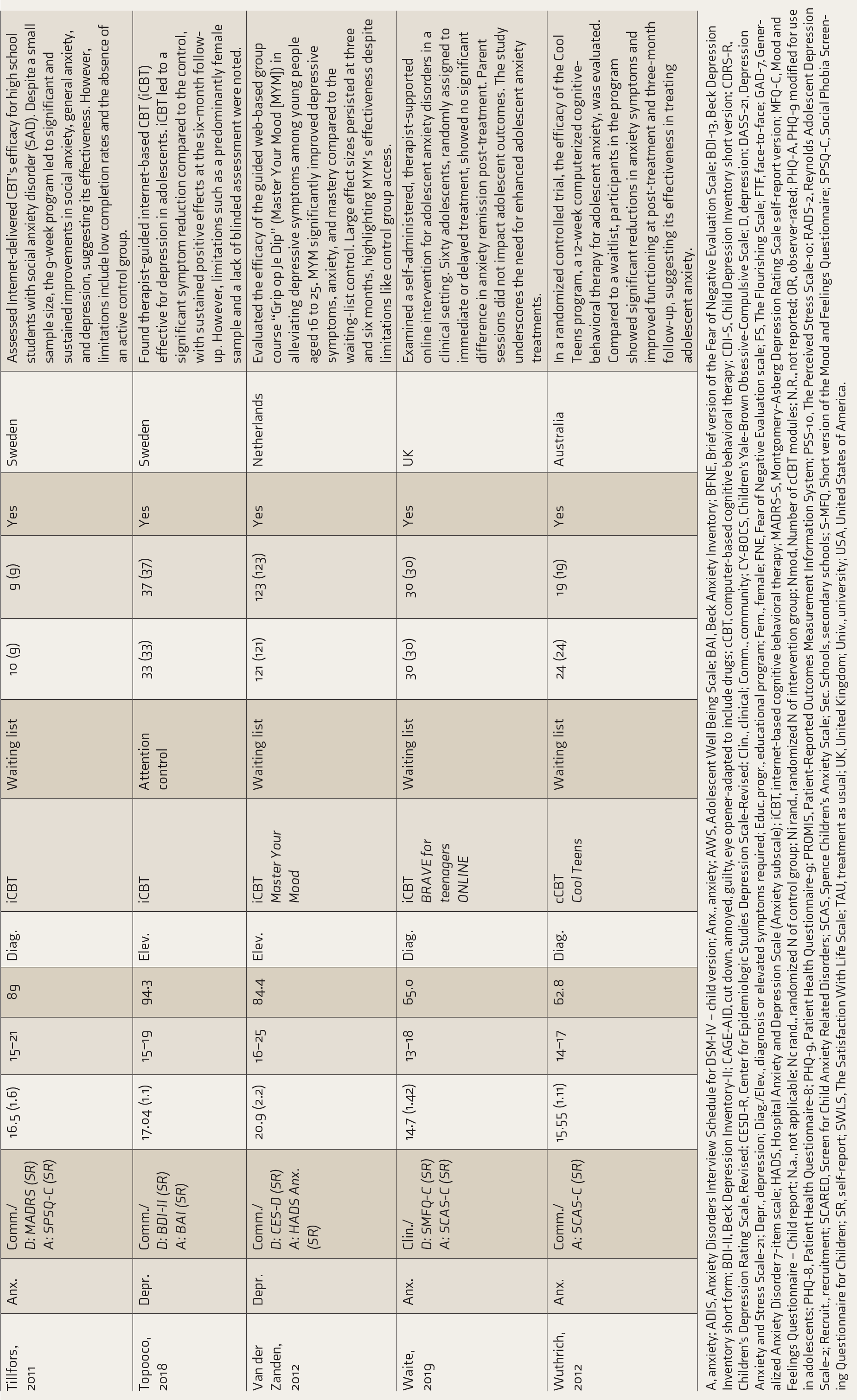

We reviewed the available literature between December 2023 and February 2024, searching several online databases: Scopus, PsycINFO, PubMed, and Google Scholar. The search query was as follows: (“artificial intelligence” OR “machine learning” OR “deep learning”) AND (“mental health” OR “mental disorders” OR “psychological interventions” OR “psychotherapy”) AND (“chatbot” OR “conversational agent” OR “Woebot” OR “Joy” OR “Wysa”). We used the Boolean operators AND, OR, and NOT to combine and refine our search terms, and we restricted the search to English-language papers published between January 2009 and December 2023. The terms were used in various permutations and combinations to target the research questions in Table 1. To guide the selection of relevant papers, we defined the following inclusion and exclusion criteria. Inclusion: (a) articles on AI in psychotherapy as a treatment or tool; (b) studies showing AI’s efficacy or acceptance in psychotherapy; (c) focus on depressive/anxiety disorders; and (d) use of randomized trials or observational studies. Exclusion: (a) articles on AI outside of psychotherapy; (b) non-empirical discussions on AI in psychotherapy; (c) general mental health topics; and (d) non-empirical designs like opinions or editorials. The study adhered to PRISMA guidelines: three authors independently searched the literature using the set terms and criteria and consulted a fourth author to resolve discrepancies. Publications were first screened by title and abstract, and full texts were then assessed. Additional articles were identified through reference lists. Data were extracted using a template. The study synthesized themes, patterns, trends, gaps, and issues in AI’s application to psychotherapy, providing a foundation for future research (Table 2).
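The structure of the reported search string (three groups of synonyms, joined by OR within a group and by AND between groups) can be sketched programmatically. The following is a minimal illustration only: the helper names `or_group` and `build_query` are hypothetical, straight quotation marks are assumed, and individual databases differ in field tags and syntax, so the resulting string would need adapting for each one.

```python
# Term groups copied from the search strategy reported in the Methods.
AI_TERMS = ["artificial intelligence", "machine learning", "deep learning"]
DOMAIN_TERMS = ["mental health", "mental disorders",
                "psychological interventions", "psychotherapy"]
TOOL_TERMS = ["chatbot", "conversational agent", "Woebot", "Joy", "Wysa"]


def or_group(terms):
    """Quote each term and join the group with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*groups):
    """Join the OR-groups with AND (hypothetical helper, for illustration)."""
    return " AND ".join(or_group(group) for group in groups)


query = build_query(AI_TERMS, DOMAIN_TERMS, TOOL_TERMS)
print(query)
```

Keeping the term groups as separate lists makes it straightforward to document exactly which synonyms were searched and to regenerate the string if a term is added.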

Table 1. Artificial Intelligence in Psychotherapy: Key Research Questions.

Table 2. Comprehensive Analysis of Selected Studies on AI-based Interventions in Psychotherapy.

A, anxiety; ADIS, Anxiety Disorders Interview Schedule for DSM-IV – child version; Anx., anxiety; AWS, Adolescent Well Being Scale; BAI, Beck Anxiety Inventory; BFNE, Brief version of the Fear of Negative Evaluation Scale; BDI-13, Beck Depression Inventory short form; BDI-II, Beck Depression Inventory-II; CAGE-AID, cut down, annoyed, guilty, eye opener-adapted to include drugs; cCBT, computer-based cognitive behavioral therapy; CDI-S, Child Depression Inventory short version; CDRS-R, Children’s Depression Rating Scale, Revised; CESD-R, Center for Epidemiologic Studies Depression Scale-Revised; Clin., clinical; Comm., community; CY-BOCS, Children’s Yale-Brown Obsessive-Compulsive Scale; D, depression; DASS-21, Depression Anxiety and Stress Scale-21; Depr., depression; Diag./Elev., diagnosis or elevated symptoms required; Educ. progr., educational program; Fem., female; FNE, Fear of Negative Evaluation scale; FS, The Flourishing Scale; FTF, face-to-face; GAD-7, Generalized Anxiety Disorder 7-item scale; HADS, Hospital Anxiety and Depression Scale (Anxiety subscale); iCBT, internet-based cognitive behavioral therapy; MADRS-S, Montgomery-Asberg Depression Rating Scale self-report version; MFQ-C, Mood and Feelings Questionnaire – Child report; N.a., not applicable; Nc rand., randomized N of control group; Ni rand., randomized N of intervention group; Nmod, Number of cCBT modules; N.R., not reported; OR, observer-rated; PHQ-A, PHQ-9 modified for use in adolescents; PHQ-8, Patient Health Questionnaire-8; PHQ-9, Patient Health Questionnaire-9; PROMIS, Patient-Reported Outcomes Measurement Information System; PSS-10, The Perceived Stress Scale-10; RADS-2, Reynolds Adolescent Depression Scale-2; Recruit., recruitment; SCARED, Screen for Child Anxiety Related Disorders; SCAS, Spence Children’s Anxiety Scale; Sec. Schools, secondary schools; S-MFQ, Short version of the Mood and Feelings Questionnaire; SPSQ-C, Social Phobia Screening Questionnaire for Children; SR, self-report; SWLS, The Satisfaction With Life Scale; TAU, treatment as usual; UK, United Kingdom; Univ., university; USA, United States of America.

What Is AI?

The term AI, coined by John McCarthy, refers to machine-based intelligence that operates within and affects its environment. The conditions for machine intelligence set forth by Alan Turing in his influential 1950 article have played a fundamental role in the development of AI. AI plays a crucial role in the digital revolution, enhancing sectors including health care and psychotherapy with new treatment methods. In India, AI’s role in mental health care is gaining momentum, offering new diagnostic and treatment avenues for conditions such as schizophrenia, depression, Alzheimer’s disease, and temporal lobe epilepsy (TLE). AI’s predictive capabilities extend to postpartum depression and burnout among healthcare workers. Despite its potential, AI’s integration into mental health services remains nascent. 2

Types of Artificial Intelligence

Machine learning (ML): an approach that uses data and algorithms to create models that autonomously predict and classify, focusing on data-driven hypothesis generation.

Natural language processing (NLP): a field dedicated to the computational handling and analysis of human language, particularly unstructured text.

Deep learning: advanced algorithms that discern intricate patterns in data, crucial for tasks such as depression detection and treatment response prediction.

Transfer learning: a technique where knowledge from one problem is applied to a related problem.

Expert knowledge systems: AI programs that solve complex issues using deductive reasoning and inference based on user queries.

Neural networks (NN): artificial neuron networks that model complex input–output relationships and detect data patterns.

Predictive analytics: techniques that forecast future outcomes using historical data, statistical analysis, and ML.

ML and NLP are pivotal for leveraging untapped mental health data. Before their full integration, it is critical to resolve ethical issues, including privacy, consent, and bias. 3
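As a rough illustration of what the "computational handling" of unstructured text involves at its simplest, the sketch below turns a free-text sentence into word counts (a bag-of-words representation), which is a common first step before any ML model is applied. It is a toy example under stated assumptions: the sentence is invented rather than patient data, the stopword list is arbitrary, and the function names `tokenize` and `term_counts` are hypothetical. The systems discussed in this review rely on far richer language models.

```python
import re
from collections import Counter

# Arbitrary, illustrative stopword list (an assumption, not a standard one).
STOPWORDS = frozenset({"i", "the", "and", "a", "to", "so"})


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def term_counts(text, stopwords=STOPWORDS):
    """Count non-stopword tokens: a bag-of-words view of unstructured text."""
    return Counter(tok for tok in tokenize(text) if tok not in stopwords)


# Invented example sentence, not real patient data.
note = "I feel tired and I feel so anxious about the exam"
counts = term_counts(note)
print(counts.most_common(3))
```

Even this simple representation shows why privacy and consent matter: the counts are derived directly from what a person wrote.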

AI is gaining traction in psychotherapy, especially in regions with robust tech infrastructures. Although national mental health programs have not explicitly endorsed AI in psychotherapy, its potential is widely recognized, and there is a demand for comprehensive and secure assessments of its applications. 4 Globally, there is a call for regulatory bodies to monitor AI in health care, with organizations like WHO setting principles for AI regulation and governance. 5 The Indian Council of Medical Research (ICMR) has established guidelines for AI use in biomedical research and health care. 6 Comparative evaluations indicate that while AI may improve accessibility and flexibility, it has not yet been able to recreate the profound therapeutic bond and empathy inherent in face-to-face encounters. Even so, AI-based therapies have shown potential for decreasing psychological distress, although their effects on life satisfaction vary. To protect patient well-being and provide high-quality treatment, the use of AI in mental health care requires a careful balance between technical progress and ethical principles. The expansion of AI psychotherapy offers a significant opportunity to enhance self-management abilities and health outcomes, especially in disadvantaged areas. 7 This technology can transform the field despite the challenges and constraints that it entails. Incorporating AI in psychotherapy presents ethical dilemmas about privacy, trust, and human–AI interaction. Future challenges include addressing confidentiality, data security, and AI’s ability to manage the many emotional and cultural aspects inherent in psychotherapy. 8

Chatbots for Mental Health

AI-driven chatbots are increasingly utilized in mental health for therapy and support. Research indicates their potential to enhance interventions, with studies like Ly et al. showing the Shim app’s positive effects on well-being. 9 Aggarwal et al. note chatbots’ role in encouraging healthy behaviors. 10 Haque and Rubya call for more user safety and privacy research. 11 Vaidyam et al. report benefits in psychoeducation and adherence but caution against premature integration into therapy due to limitations in crisis management and information delivery. 12 Chatbots offer accessibility, affordability, and scalability over traditional methods, yet ethical, legal, and technical considerations, alongside the need for human oversight, warrant a careful evaluation before full adoption in clinical settings.

AI-assisted Language Analysis and Intervention Optimization in Psychotherapy

Recent AI advancements have transformed mental health therapy, enabling nuanced interpretations of psychotherapy dialogue and personalized digital treatments. Studies by Fleming et al. 13 and Blackwell et al. 14,15 used NLP and deep learning to analyze and enhance therapist–patient communication, highlighting AI’s potential in improving mental health interventions, especially for anxiety disorders. Miner et al. 16 and Ryu et al. 17 further demonstrated AI’s ability to dissect and optimize therapeutic language and alliances. While AI promises to refine therapy delivery and outcomes, it also requires careful navigation of ethical considerations, including bias and privacy, so that it augments rather than replaces the human element in psychotherapy.

Digital Phenotyping

Digital phenotyping, a personal sensing technique, leverages smartphones to identify environmental and behavioral traits, forecast psychological outcomes, and detect mental health issues. It integrates with precision medicine, tailoring treatments based on individual characteristics. Together, they enhance health care by leveraging data from devices and genetic information, transforming health care into a proactive model and innovating medical knowledge production. 18

BiAffect and Mobile Typing Kinematics in Mental Health Well-being

BiAffect, developed by the University of Illinois, Chicago, leverages an iPhone app to assess users’ typing patterns, mood, and cognitive function. Using the mobile typing kinematics approach, it accurately detects manic or depressive episodes in individuals diagnosed with bipolar disorder or severe depressive illness. This technology holds promise for enhancing healthcare accessibility, empowering patients, and improving system efficiency. However, ethical concerns arise regarding data collection and privacy protection. 19
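To make the idea of typing kinematics more concrete, the sketch below computes a few timing features from a list of keystroke events. It is an illustration under stated assumptions, not BiAffect’s actual pipeline: the event format, the choice of features (inter-key interval statistics and backspace rate), and the function name `typing_features` are all hypothetical, and the sample session is invented.

```python
from statistics import mean, stdev


def typing_features(events):
    """Summarize a session of (timestamp_seconds, key) keystroke events.

    Returns the mean and standard deviation of the inter-key intervals
    and the fraction of keystrokes that are backspaces.
    """
    if len(events) < 3:
        raise ValueError("need at least three keystrokes")
    times = [t for t, _ in events]
    # Time elapsed between each pair of consecutive keystrokes.
    intervals = [later - earlier for earlier, later in zip(times, times[1:])]
    backspaces = sum(1 for _, key in events if key == "BACKSPACE")
    return {
        "mean_interval": mean(intervals),
        "sd_interval": stdev(intervals),
        "backspace_rate": backspaces / len(events),
    }


# Invented session: five keystrokes, one of them a correction.
session = [(0.0, "h"), (0.5, "e"), (1.0, "l"), (2.0, "BACKSPACE"), (2.5, "l")]
features = typing_features(session)
print(features)
```

Note that features of this kind use only timing and key category, not the content of what is typed, although the ethical concerns about data collection raised above still apply.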

Telepsychiatry and AI Therapy

Telepsychiatry has expanded to include AI-driven assessments, transitioning to asynchronous systems. Using ML and NLP, AI therapy enhances mental health interventions by analyzing data patterns, supporting therapists, and serving underserved regions. Pham et al. investigated AI’s response to COVID-19’s mental health challenges using chatbots and avatars and acknowledged AI’s limits in emotional depth and clinical application. 20 Balcombe stressed the importance of in-depth, long-term research to maximize AI chatbots’ effectiveness in mental health care. 21

Automation

AI is transforming psychotherapy by automating tasks for efficiency gains. Chatbots extend therapy’s accessibility and affordability, and AI-enabled virtual and robotic therapies could deepen emotional, cognitive, and social engagement. Given the growing complexity of AI–human interactions, it is essential to appreciate the nuanced nature of this dynamic, which goes beyond simple data exchange. Such initiatives could mitigate mental health stigma and address the deficit of mental health professionals in India, where the psychiatrist-to-population ratio is below the recommended 3 per 100,000 and smartphone and internet penetration is high. AI-driven chatbots can diagnose, offer evidence-based treatments, and monitor patient well-being, acting as digital counselors. They streamline service initiation, enhance personalized care, and maintain user confidentiality. By automating administrative tasks and supporting human therapists, AI improves care accessibility, affordability, and efficiency, potentially addressing the mental healthcare shortage and supporting practitioner well-being. 22

AI-based Psychotherapy for Depression

Extensive research has been conducted on the efficacy of digital therapeutics in mitigating the symptoms of depression across various demographic cohorts. A study conducted by Clarke et al. discovered that a Web-based cognitive-behavioral skill program had a substantial impact on reducing depressive symptoms, particularly among female participants. Nevertheless, the study’s findings were constrained by the small number of participants and their extended involvement duration. 23 Day et al. found comparable outcomes among college students using an internet-based guided self-help program. Nevertheless, the study’s conclusions may be biased and imprecise due to the absence of confidence intervals and the high proportion of participants dropping out. 24 Ellis et al. evaluated the effectiveness of online cognitive-behavioral therapy programs, highlighting their efficacy despite certain limitations. 25 In their study, Fitzpatrick et al. investigated the effectiveness of Woebot, a conversational agent that uses text-based communication, in lowering symptoms of depression among college students. Although there were several limitations, the study included comprehensive documentation and accurate statistical evaluations. 26

Fleming et al.’s study found that the SPARX cCBT program significantly improved CDRS-R scores compared to a control group. 27 Fulmer et al. evaluated the efficacy of Tess, an AI program, in assisting college students experiencing symptoms of depression and anxiety. The study revealed substantial decreases in symptoms as measured by the PHQ-9, indicating Tess’s efficacy even allowing for possible biases. 28 Ip et al. assessed the “Grasping the Opportunity” program in Chinese adolescents, employing randomization procedures and thorough documentation. 29 A study by Leo et al. found that Wysa effectively manages depression and anxiety in adult orthopedic patients, with 84% continuing treatment and 72% actively participating. This supports integrating digital mental health therapy with orthopedic care, even in the face of cost constraints. 30 Merry et al. found that SPARX computerized cognitive-behavioral treatment effectively reduced depressive symptoms in teenagers despite the lack of blinding, highlighting the potential benefits of digital mental health therapy in various settings. 31
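Because several of the studies above report change on the PHQ-9, a brief scoring sketch may help readers interpret such results. The PHQ-9 has nine items, each scored 0 to 3, giving a total of 0 to 27; the severity bands used below are the commonly cited cut-points (5, 10, 15, and 20). The item scores are invented for illustration and are not taken from any study in this review, and the function names are hypothetical.

```python
# Commonly cited PHQ-9 severity bands: (upper bound of total score, label).
PHQ9_BANDS = [
    (4, "minimal"),
    (9, "mild"),
    (14, "moderate"),
    (19, "moderately severe"),
    (27, "severe"),
]


def phq9_total(item_scores):
    """Sum the nine item scores after checking that each is 0, 1, 2, or 3."""
    if len(item_scores) != 9:
        raise ValueError("PHQ-9 has exactly nine items")
    if any(score not in (0, 1, 2, 3) for score in item_scores):
        raise ValueError("each item must be scored 0-3")
    return sum(item_scores)


def phq9_severity(total):
    """Map a total score (0-27) to its severity band."""
    if not 0 <= total <= 27:
        raise ValueError("total must be between 0 and 27")
    for upper, label in PHQ9_BANDS:
        if total <= upper:
            return label


# Invented scores for one hypothetical participant.
baseline = phq9_total([2, 2, 1, 2, 1, 2, 1, 1, 0])
followup = phq9_total([1, 1, 1, 1, 0, 1, 1, 0, 0])
print(baseline, phq9_severity(baseline))
print(followup, phq9_severity(followup))
print("change:", baseline - followup)
```

In this invented case, the participant moves from the moderate band at baseline to the mild band at follow-up, which is the kind of shift a reported "decrease in PHQ-9 symptoms" can represent.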

Nicol et al. found that a mobile health application and chatbot delivering cognitive-behavioral therapy to teenagers with moderate depression led to a substantial decrease in depression severity. 32 Poppelaars et al. found that both school-based and computerized cognitive-behavioral therapy programs reduced symptoms of depression among adolescent girls in the Netherlands. Nevertheless, the study design had flaws and reporting was insufficient, so the findings require further examination. 33 A study conducted by Sethi et al. demonstrated the efficacy of combining online and face-to-face cognitive-behavioral therapy for treating anxiety and depression in teenagers. The combination approach yielded more favorable outcomes, resulting in significant symptom reductions, supported by thorough reporting and confidence intervals. 34 A further report by Sethi et al. also demonstrated the combined approach’s effectiveness, with comprehensive reporting and confidence intervals aiding interpretation of the results. 35

In 2015, Smith’s study demonstrated that the Stressbusters program effectively treated depression in adolescents, with a completion rate of 86%; it led to improved school attendance and alleviated depressive and anxiety symptoms in participants with mild to moderate depression. 36 In 2011, Stallard and colleagues conducted a pilot study on “Think, Feel, Do,” a computer-based cognitive-behavioral therapy program for teenagers with anxiety. The study showed improvements in seven assessment areas but faced challenges such as high dropout rates and insufficient record-keeping, necessitating further investigation. 37

Topooco et al. conducted a comprehensive analysis of internet-based cognitive-behavioral treatment for depression in teenagers, enhancing the precision of the results by incorporating confidence intervals. 38 Liu et al. compared mobile therapy chatbots to bibliotherapy for depressed university students, facing attrition and lacking detailed methodological explanations. 39 According to Van der Zanden et al., the “Grip op Je Dip” guided Web-based group training was successful in decreasing depression symptoms in young individuals. Nevertheless, they recognized constraints such as inadequate reporting and possible biases. 40

AI-powered psychotherapy interventions show favorable prospects for enhancing depression therapies. Further investigation is required to tackle methodological obstacles and enhance their efficacy among various populations. Rigorous study designs and improved engagement strategies are needed to address methodological limitations such as sample biases and attrition. AI technologies can make these interventions more personalized and adaptable to the unique needs of individuals suffering from depression, thus improving mental health outcomes.

AI Applications in Psychotherapy for Anxiety

Botella et al.’s study found that self-help tools such as the “Talk to Me” internet-based telepsychology program achieved better outcomes than a control group. However, the study also recognized certain shortcomings in its methodology. 41 Bowler et al.’s study found that computerized cognitive-behavioral therapy and cognitive bias modification of interpretations (CBM-I) effectively decreased negative interpretative bias. However, CBM-I was more beneficial in mental load–related situations, and the trial did not show a conclusive advantage in overall treatment effectiveness. 42 In 2017, a study by Lenhard et al. found that internet-based cognitive-behavioral therapy (ICBT) effectively reduced symptoms in adolescents with obsessive-compulsive disorder (OCD). The study used the CY-BOCS and randomization to evaluate efficacy. However, it recommended further research to strengthen the empirical foundation of ICBT’s effectiveness, including comparisons with other active therapeutic approaches. 43

McCall et al.’s study found that an internet-based CBT program significantly reduced social anxiety scores in college students, as evidenced by significant improvements in the Social Interaction Anxiety Scale and Fear of Negative Evaluation Scale. The program, despite high dropout rates and inadequate reporting, showed improvements in depression symptoms, occupational performance, and social functioning. 44 Richards et al. discovered that ICBT significantly reduced anxiety symptoms in college students. 45 Similarly, Spence et al. observed that both online and clinic-based cognitive-behavioral therapy helped reduce anxiety diagnoses and symptoms in adolescents with anxiety. 46

A 2019 study conducted by Stjerneklar et al. assessed the effectiveness of a guided ICBT program in treating anxiety among teenagers. The study determined that the program was efficacious. However, it did identify potential biases and poor reporting. 47 Waite’s study found no significant difference in remission of primary anxiety disorder between immediate treatment and waitlist groups, emphasizing the need for further research to develop more effective treatments for adolescents. 48

Tillfors et al. found that ICBT is effective in treating social anxiety disorder in high school students. 49 A randomized controlled trial by Wuthrich et al. confirmed the Cool Teens program’s effectiveness, showing significant reductions in anxiety disorders, symptom improvements, and reduced life interference compared to the waitlist group. The program’s effectiveness was confirmed despite limitations such as a small sample size and short follow-up. 50

Danieli et al.’s study found that integrating conversational AI with cognitive-behavioral therapy reduced stress and alleviated mental symptoms during the COVID-19 pandemic, although its small sample size limits the conclusions that can be drawn. 51 These findings emphasize the significance of managing anxiety in adolescents and suggest that AI-enhanced CBT could serve as a beneficial instrument in mental health therapy.

Complexities of AI Integration in Clinical Environments

The integration of AI in health care faces challenges such as automation bias, blind spots, and limited generalizability. Healthcare providers may become overly reliant on AI, leading to potential biases or errors. AI models that prioritize speed may compromise accuracy for some patients. 52 AI’s restricted generalizability hinders its application across diverse populations or settings. The effectiveness of visual explanations in AI for identifying biases and aiding decision-making is still being determined. Clinicians’ overdependence on AI predictions without proper evaluation can affect decision-making. Understanding the nuances of human–AI interaction is crucial for effective decision-making. Additionally, enhancing trust and knowledge about AI among medical professionals and patients is essential for its acceptance in health care. 52

Discussion

AI-based mental health research underscores technology’s transformative potential in care delivery. AI could alleviate therapy access and cost issues. Of the 28 studies reviewed, most were from 2013-2023, with eight from before 2012, reflecting a recent research surge. Seventeen papers examined AI for depressive disorders. Clarke et al. found an online cognitive-behavioral program promising for youth depression despite sample size and engagement issues. Day et al. reported an online self-help program’s success in reducing student distress, though with suboptimal completion rates. Fitzpatrick et al. and Fulmer et al. highlighted AI’s role in lessening depression and anxiety symptoms, cautioning that small samples, biases, and brief interventions necessitate cautious interpretation.

The review of 11 studies underscores the success of ICBT in treating anxiety across demographics. Notable research by Botella et al., Bowler et al., and others showed marked symptom improvement. However, concerns over sample consistency and the absence of active control groups were noted. The generalizability of results is limited by small sample sizes and brief study durations, necessitating cautious application to wider populations.

The narrative review emphasizes the need for extensive research in AI-driven psychotherapy, requiring culturally diverse samples, improved participant involvement, comparative studies, long-term effect evaluation, clinical integration, and stringent data confidentiality measures to fully understand its potential impacts. AI’s incorporation into mental health care must balance innovation with ethical considerations. It should employ inclusive data models, align with health systems, and be subject to continuous therapeutic evaluation. 53 AI should help reduce diagnostic and treatment biases, possibly evidenced by case studies. Ethical strategies are needed for informed consent and to avoid perpetuating biases. 54 AI should enhance, not replace, human care, ensuring therapeutic integrity. Diverse data sets are vital for representative AI models, and clinician training is key to working effectively with AI and maintaining unbiased judgment. Improved explanation tools are also necessary for transparency in AI decision-making. Integrating AI in mental health care necessitates a balance between technological advancement and ethical integrity, with clear protocols to safeguard patient welfare, confidentiality, autonomy, care quality, and transparent discourse to enhance public understanding of AI’s clinical applications. 55

Our study focuses on a specific area of AI in psychotherapy and does not evaluate the quality of the included studies because of differences in their research methods. It emphasizes the need for more investigation into AI’s ethical and practical consequences for mental health. Our focus was primarily on evaluating the effectiveness of AI in reducing symptoms. However, we also recognize the need to expand this evaluation to include health-related quality of life (HRQoL) and the therapeutic relationship. Replicating the therapeutic bond, which is essential for achieving favorable results in psychotherapy, remains a complex challenge for AI. Integrated treatment techniques emphasize the importance of human relationships, an aspect that the scope of our study may not have fully captured. Therefore, future studies should investigate the broader influence of AI on HRQoL and the therapeutic relationship.

Conclusion

An analysis of 28 studies highlights AI’s potential to enhance mental health treatments. AI-based cognitive-behavioral therapy and chatbots have reduced symptoms and improved patient outcomes. Despite limitations such as small sample sizes, biases, short interventions, high dropout rates, and limited generalizability, AI offers advantages such as better accessibility, cost-effectiveness, efficiency, personalized care, and diagnostic accuracy. However, ethical concerns such as algorithmic bias, privacy, transparency, accountability, and data misuse must be addressed. While AI has transformative potential in mental health care, its adoption must be carefully considered and evaluated.

Footnotes

Declaration of Conflicting Interests

None declared.

Declaration Regarding the Use of Generative AI

The authors affirm that the manuscript was crafted without AI assistance in data gathering, analysis, image creation, or writing, ensuring full accountability for its content.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.