Abstract

The Test of Understanding in College Economics (TUCE) is a standardized test of economics knowledge administered in the United States which primarily targets principles-level understanding. We asked ChatGPT to complete the TUCE. ChatGPT ranked in the 91st percentile for Microeconomics and the 99th percentile for Macroeconomics when compared to students who take the TUCE exam at the end of their principles course. The results show that ChatGPT is capable of providing answers that exceed the mean responses of students across all institutions. The emergence of artificial intelligence presents a significant challenge to traditional assessment methods in higher education. An important implication of this finding is that educators will likely need to redesign their curriculum in at least one of the following three ways: reintroduce proctored, in-person assessments; augment learning with chatbots; and/or increase the prevalence of experiential learning projects that artificial intelligence struggles to replicate well.

Introduction

On November 30, 2022, OpenAI launched ChatGPT (Generative Pre-trained Transformer), a chatbot that quickly gained attention for—among other things—its potential to disrupt traditional assessment methods. ChatGPT allows the user to enter a prompt and receive a natural language response that is often indistinguishable from a human-generated response. The model is pre-trained on large amounts of text data, allowing it to learn the patterns and structures of language. When a user enters a prompt, ChatGPT generates its response by predicting the most probable sequence of words that would follow the prompt, based on its pre-existing knowledge of language. Several studies have demonstrated ChatGPT’s ability to pass standardized tests in various fields such as mathematics (Leswing, 2023), medicine (Gilson et al., 2023), law (Choi et al., 2023), and physics (West, 2023). In these studies, researchers conducted a chat session and asked the model a series of questions from common assessment tools used in their respective disciplines. In each study, ChatGPT provided responses that exceeded the median and average response of test takers. However, due to the size of these assessments, the researchers used only a subset of questions during their chat session. In our study, we followed a similar approach by asking ChatGPT-3 a series of questions from the economics discipline to determine if it could outperform the average undergraduate student in economics.

To evaluate this, we use the Test of Understanding in College Economics (TUCE), published by the Council for Economic Education (formerly the National Council on Economic Education, or NCEE) and in use across the United States for more than 50 years. It is one of the most widely used assessment tools for basic economic knowledge and consists of two versions: one covering microeconomic concepts and one covering macroeconomic concepts. Each version of the test has 30 multiple choice questions with four answer choices. Both versions include three questions covering international economics, but the questions are unique to each version. The TUCE is a norm-referenced measure that can be used to compare students’ knowledge levels across a wide range of abilities. A score of around 50% is desirable for research purposes, as it provides appropriate levels of item discrimination and test reliability. A score of less than 50% does not necessarily indicate a failing level of knowledge in a course, as instructors may prioritize different concepts from those tested in the TUCE. When the TUCE is used as both a pre- and post-test assessment, educators can determine whether learning has occurred during the semester, while also considering the possibility that some students may have guessed correctly on the test (Smith & Wagner, 2018).

ChatGPT is not a search engine, nor does it currently have the ability to return specific information that a user may desire. ChatGPT operates using algorithms that process data, allowing it to string words together in response to a prompt. Unlike humans, ChatGPT has access to vast troves of information available on the internet and uses large language modeling to recognize patterns in the words in each prompt to mimic human writing when dispensing knowledge (McMutrie, 2023). While ChatGPT is a powerful tool, its abilities are limited to the pool of information it has been trained on. ChatGPT uses a transformer-based neural network architecture, trained on this data, to generate contextually appropriate and coherent responses to user prompts. ChatGPT doesn’t actually “know” anything, but instead generates responses based on probabilities assigned to each word in the vocabulary, which are calculated through a process of iterative training on a large corpus of text. In this paper, we assess ChatGPT’s performance on the microeconomics and macroeconomics versions of the TUCE and compare it to the results of college students.

In the following sections, we briefly review the literature on the role of chatbots in education and then compare ChatGPT’s performance on the TUCE with the results achieved by college students after completing a semester of their principles course. We conclude by offering some practical advice on identifying alternative assessments that complement ChatGPT as a learning tool.

Literature Review

Chatbots are a technology application that promotes interpersonal communication and learning. They provide information and knowledge through interactive methods and easy-to-operate interfaces (Hwang & Chang, 2021). With the exponential growth in the mobile device market over the past decade, the popularity of chatbots is being driven by their ability to provide an interactive medium through which to learn, one not constrained by time and place (Zhou et al., 2020). Early computer programs used in education were mainly limited to drill-and-practice exercises and did not incorporate the sophisticated techniques of artificial intelligence. However, AI has since been identified as an applicable technology for computer-assisted learning (Stubbs & Piddock, 1985). Artificial intelligence has the potential to address challenges in learning, including improving transfer of knowledge, dispelling misconceptions, and promoting critical thinking skills among students (Mollick & Mollick, 2022), and can be utilized as an effective teaching assistant in online learning environments by helping to enhance students' understanding and engagement through personalized feedback, real-time analysis, and adaptive instruction. A Georgia Tech computer science professor made headlines in 2016 for using artificial intelligence to build a virtual teaching assistant (Goel & Polepeddi, 2018). The chatbot known as “Jill” received very positive student evaluations, and students only seemed to suspect something was amiss when their teaching assistant responded quickly at all hours of the day.

Interaction with technologies, whether by written natural language or speech, is possible because users become more accustomed to interacting with digital entities as technology develops. Chatbots are now used across a wide range of domains, including marketing, customer service, technical support, education and training (Smutny & Schreiberova, 2020). Personal digital assistants like Siri (Apple), Alexa (Amazon), Cortana (Microsoft), and Google Assistant (Google) lie at the forefront of technology in voice recognition and “artificial intelligence” and have effectively replaced many of the day-to-day tasks once performed by assistants or secretaries (Smutny & Schreiberova, 2020). The use of digital technologies is now expected by the current generation of young people who were born into an era of the internet and smartphones (Selwyn, 2021).

Despite the global proliferation in the use of chatbots, studies exploring the benefits of using chatbots in educational settings have only recently emerged (Ferrell & Ferrell, 2020). These benefits include providing users with a pleasant learning experience by allowing for real-time interaction (Kim et al., 2019), enhancing peer communication skills (Hill et al., 2015), improving the learning efficiency of learners (Wu et al., 2020), and helping instructors manage large in-class activities (Schmulian & Coetzee, 2019).

With the advent of AI-type technology, scholars are now able to apply machine learning and natural language technology to the creation of chatbots, making their application in education a new topic of academic research (Følstad & Brandtzæg, 2017). Recent empirical studies have focused on understanding the optimal role for chatbots. In a study of educational chatbots for Facebook Messenger to support learning, Smutny and Schreiberova (2020) highlight the possibility for chatbots to become smart teaching assistants in the future. Other studies have examined the use of chatbots in language learning. Based on a review of 25 empirical studies, Huang et al. (2021) find that educational chatbots can foster students’ language learning via interaction activities underpinned by intended learning objectives. In a similar study, Kim et al. (2019) conclude that chatbots have a positive effect on students’ communication skills by expanding the quantity of their interactions, increasing their motivation, and raising their interest in learning.

Chatbots have come a long way in the last two decades. The rise of machine learning, together with access to powerful computers able to train models on large datasets, forms the backbone of these systems. Coupled with “natural language processing,” this has paved the way for chatbots to be introduced into the field of education via digital transformation. Because of their scalability and adaptability, chatbots offer unique possibilities as a communication and information tool for digital learning (Wollny et al., 2021). While it’s not exactly clear how this field will evolve in the future, as these machine learning–driven systems become more advanced and capable of replicating a broader range of human-like traits, there will be greater acceptance of their use in shaping the education landscape of the 21st century.

While not the focus of this paper, we would be remiss if we did not mention the potential mischief ChatGPT will cause in the short term. To understand the potential impact of ChatGPT on academic integrity, it is important to acknowledge that cheating is not a new issue, and ChatGPT is simply the latest tool that can be used for a variety of purposes, ethical considerations aside.

Academic Dishonesty

The emergence of ChatGPT in November 2022 has raised fears about widespread cheating on exams and assignments. These concerns are similar to the ones that were raised when students started using calculators, phones, and laptops in the classroom, with fears that they would rely too heavily on technology and forget the basics, or that the technology would distract and facilitate cheating (Surovell, 2023). However, these fears were proven unfounded as educators adapted their teaching methods to incorporate technology.

The issue of cheating has been evolving over time, with the introduction of the internet in the 1990s leading to the ability to copy and paste information from the web, a form of subconscious appropriation (cryptomnesia) of work (Sisti, 2007; Cojocariu & Mareş, 2022). More subtle forms of plagiarism, such as rearranging phrases from a source without proper citation, have also become more prevalent (Das & Panjabi, 2011).

With the increasing use of online learning management systems and the digitalization of education, the market for plagiarism has become more sophisticated. Students have found new ways to cheat, such as using smartphones (Srikanth & Asmatulu, 2014) and social media (Best & Shelley, 2018) to generate answers for exams and assignments. Anti-plagiarism tools like Turnitin and SafeAssign have been developed to counter these threats and have shown some success in reducing plagiarism (Batane, 2010). However, students have resorted to contract cheating, that is, outsourcing their academic work, to avoid detection (Lancaster & Clarke, 2007).

Rigby et al. (2015) conducted an empirical study to understand why university students in the United Kingdom cheat. They used a hypothetical situation to gather data and found that the willingness to engage in contract cheating varied among students. About half of the students surveyed were willing to pay for an essay. The likelihood of cheating increased among students who had a higher risk tolerance or spoke English as a second language. The authors also found that as the risk of getting caught and facing penalties increased, the perceived value of the essay decreased. Overall, the authors found that students were willing to pay up to $445 for an assessment.

The rise of artificial intelligence in higher education, specifically natural language models like ChatGPT, presents a new challenge to universities. Anti-plagiarism tools work by comparing a student’s work with existing sources, but ChatGPT can generate original content in seconds. While ChatGPT-generated papers have received good grades, they lack the depth of understanding that is expected in higher education. Additionally, it is very difficult to detect plagiarism when students use ChatGPT.

Tools like ChatGPT are likely to become a common part of the writing process, just as calculators and computers have become essential tools for learning mathematics and science. The challenge for universities is to adapt their curriculum to this new reality.

Methods and Findings

The National Council on Economic Education (NCEE) created the “Test of Understanding in College Economics” (TUCE) and an accompanying examiner’s manual to allow instructors to compare their students’ results with those of post-secondary students from across the country (Walstad et al., 2006; Walstad & Rebeck, 2008). To make these comparisons possible, the test’s authors normed the scores of thousands of students from various institutions on a 30-question assessment that was given at the start and end of the term. The purpose of these pre- and post-tests was for educators to measure learning over the semester, including the impacts of changing the structure of the class away from chalk-and-talk (Emerson & Taylor, 2004; Boyle & Goffe, 2018).

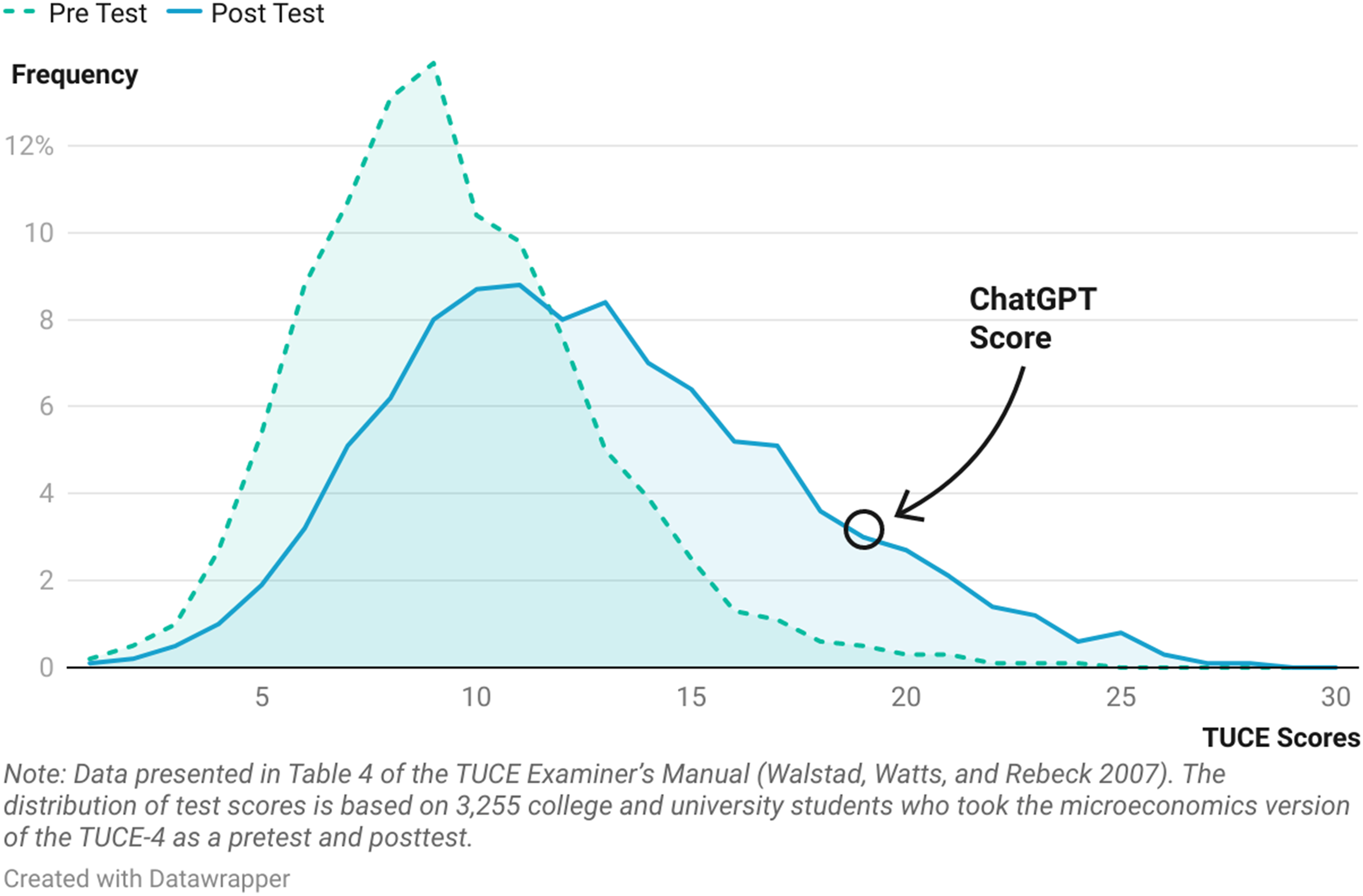

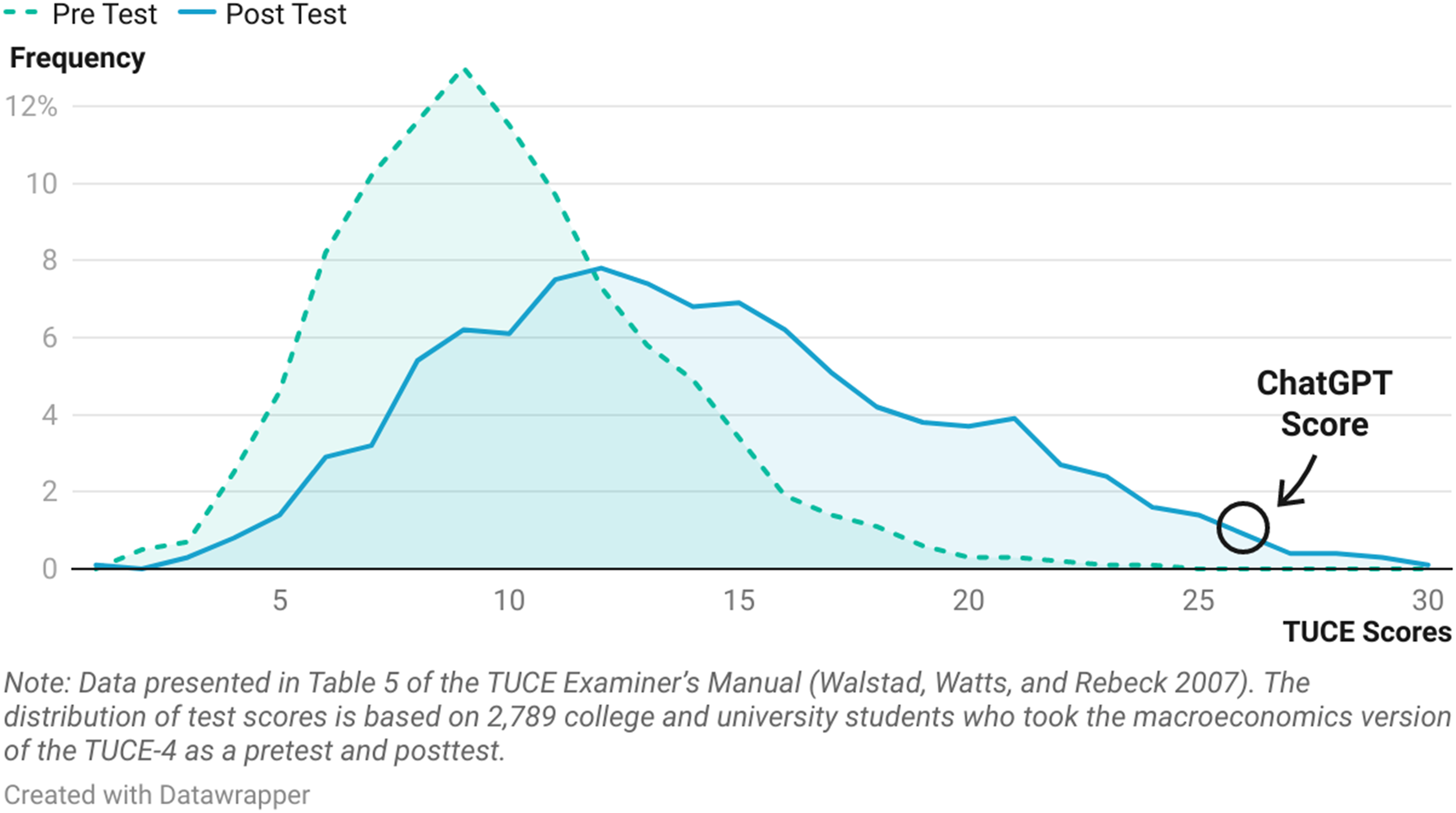

Additionally, the normed sample provides a baseline understanding of the level of knowledge that the average college student in the United States has at the beginning and end of their economics principles courses. On average, student performance improves over the course of a semester as students go from answering an average of 9.39 questions correctly at the start of the term to an average of 12.77 questions correctly at the end of their principles of microeconomics course. For macroeconomics, students improved from 9.80 to 14.19 questions. Despite a full semester learning economics principles, most students answer around 40–50% of questions correctly. Figures 1 and 2 show the distribution of pre- and post-test scores for both the microeconomics and macroeconomics versions of the exam. Given these distributions, where would a large language model like ChatGPT place if it were administered the TUCE?

Figure 1. Distribution of pre- and post-test scores on microeconomics TUCE-4: Matched sample.

Figure 2. Distribution of pre- and post-test scores on macroeconomics TUCE-4: Matched sample.

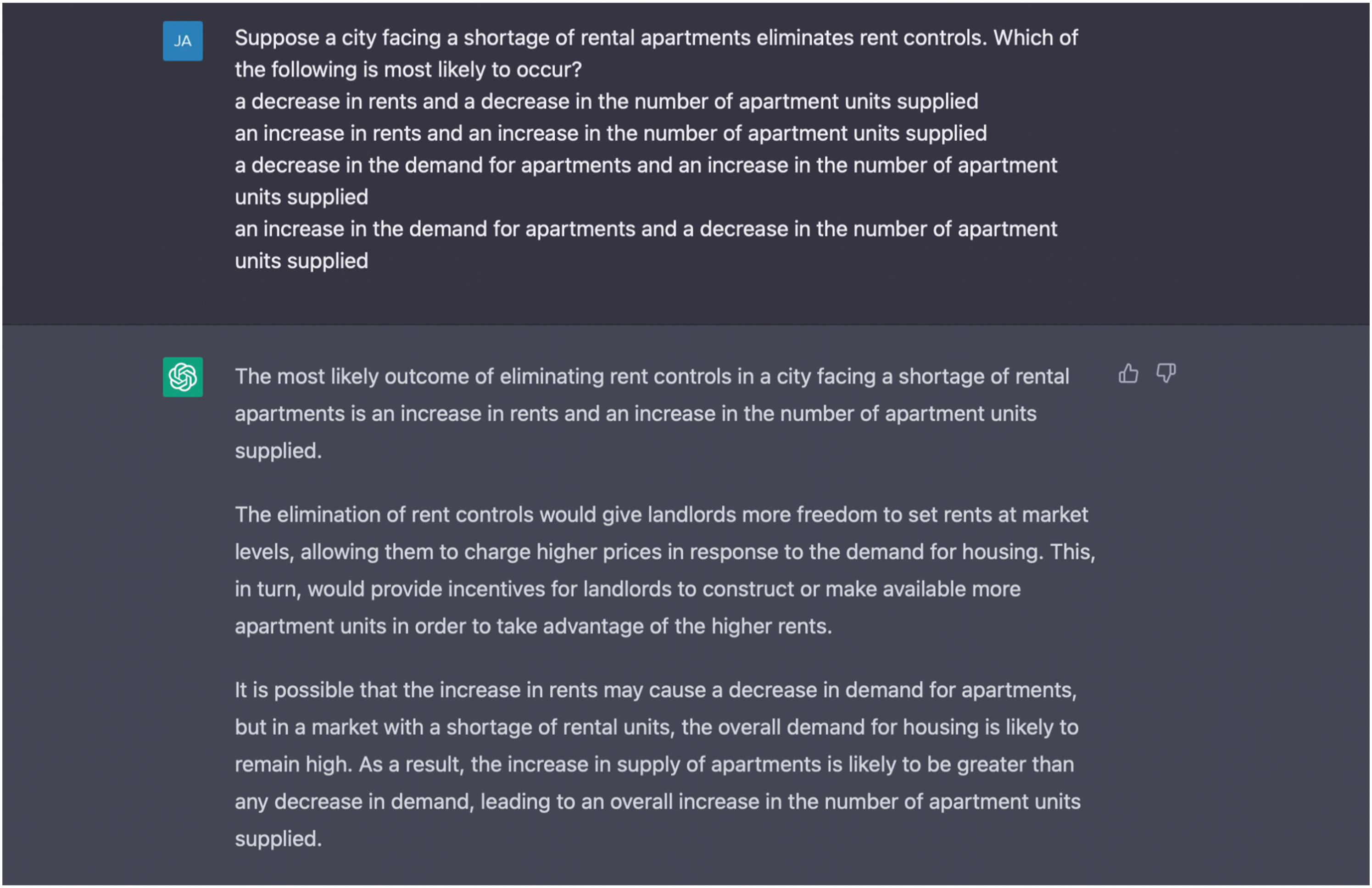

We conducted a new chat session with ChatGPT on February 8, 2023, using the GPT-3 version of the language model. We entered the questions from each of the two versions of the TUCE one at a time, each along with its answer choices. ChatGPT returned an answer, which was recorded as correct if it matched the TUCE answer key, and incorrect if it was wrong or if multiple answers were provided. We didn’t assign any partial credit for ChatGPT’s responses since the TUCE is administered as a multiple choice test to students in a proctored environment. It’s also worth noting that we did not provide any feedback, such as thumbs up or down, to ChatGPT while it was generating responses during the chat session. Figure 3 illustrates the text input and the results for Question 2 on the microeconomics exam.

Figure 3. ChatGPT interface demonstrating question and answer methodology.

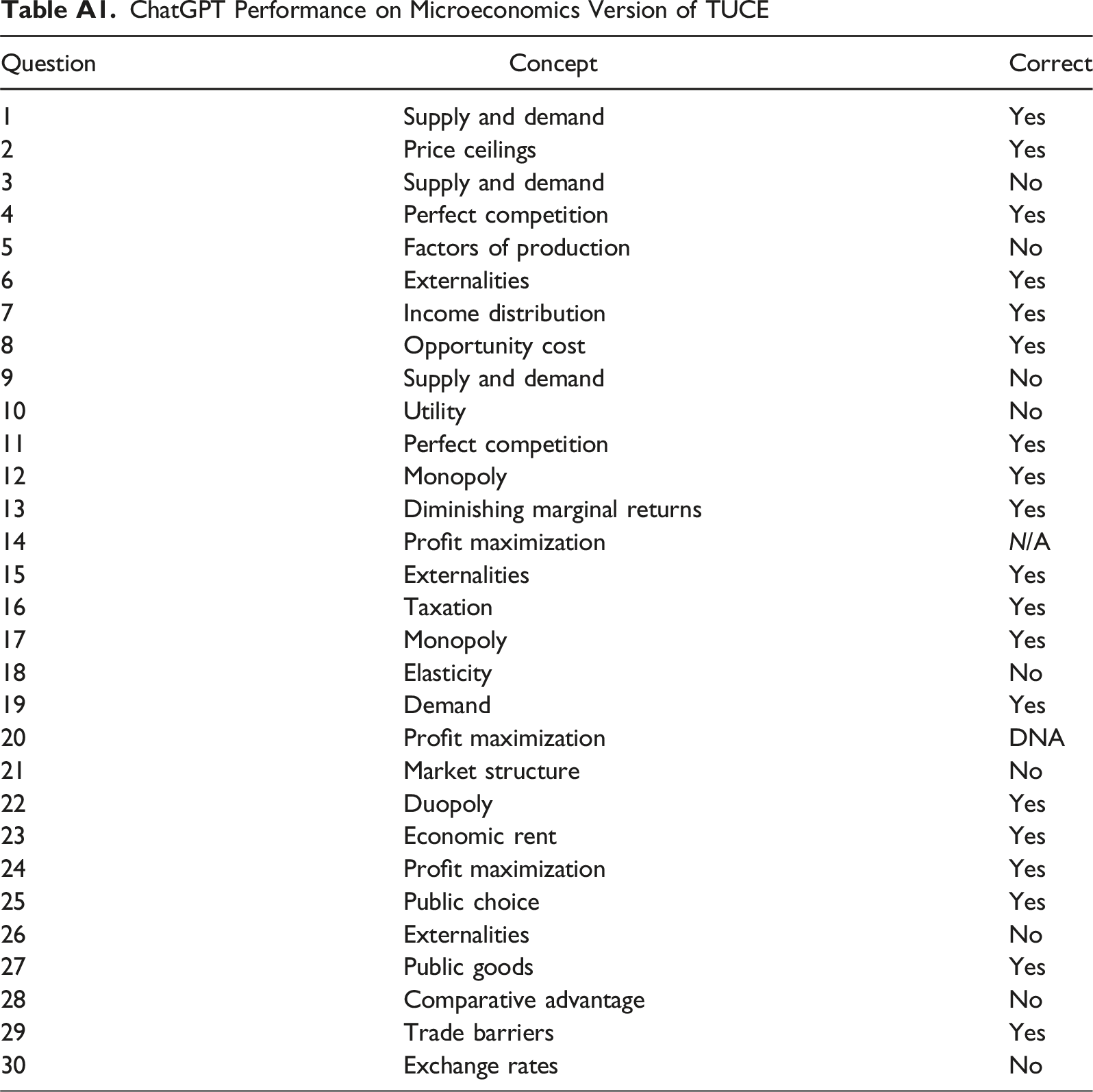

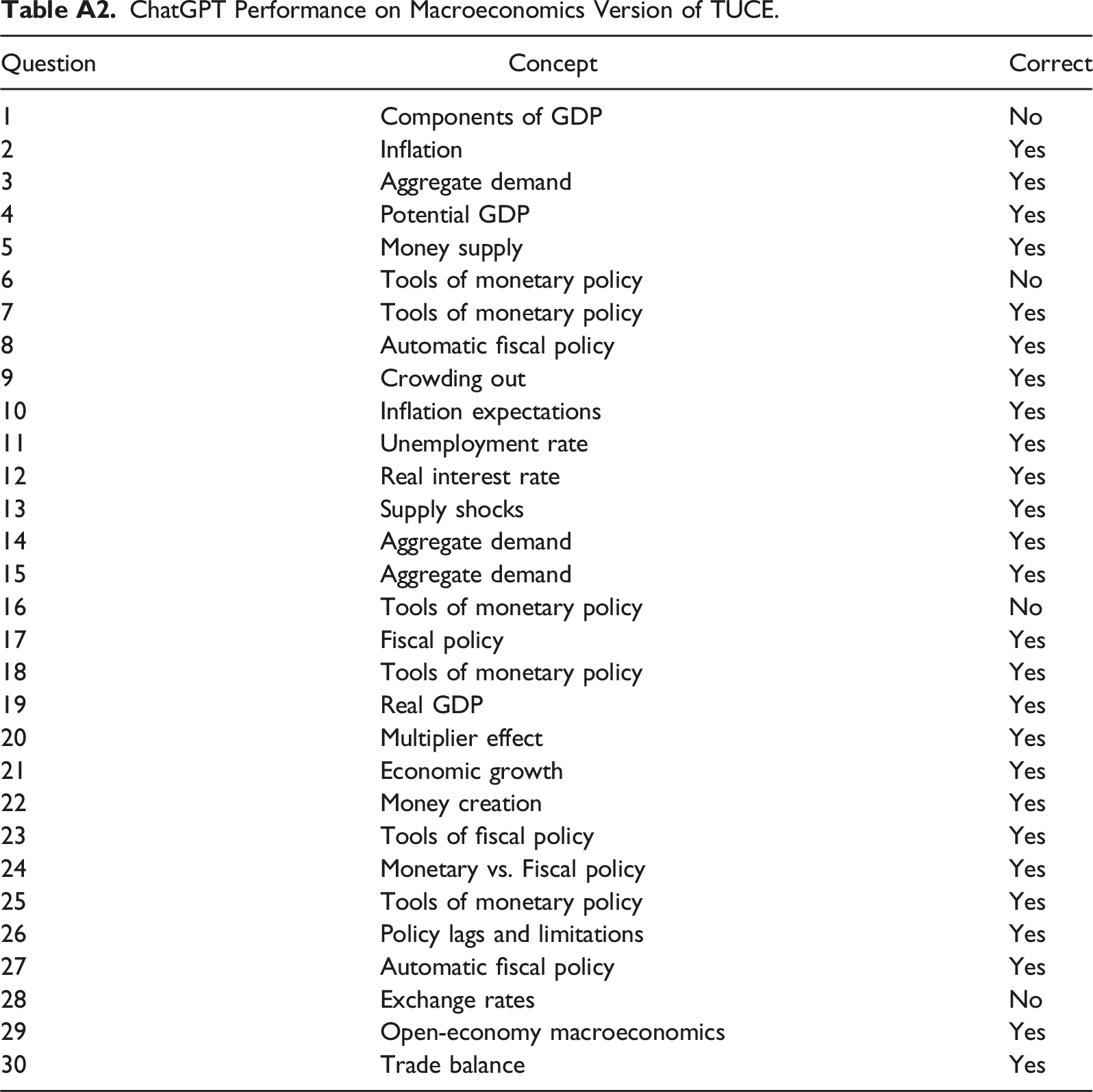
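The all-or-nothing scoring rule described above can be written down in a few lines. The following Python sketch is an illustration only and was not part of the study’s procedure: the function names, the representation of a response as a set of selected option letters, the A to D labels, and the three-item example are all hypothetical.

```python
# Illustrative sketch of the no-partial-credit scoring rule.
# Assumption: the four answer choices are labeled A-D and each ChatGPT
# response has already been reduced by hand to the set of options it selected.
VALID_OPTIONS = {"A", "B", "C", "D"}


def score_item(selected, key):
    """Return 1 only if exactly one valid option was chosen and it matches the key."""
    if len(selected) != 1:
        return 0  # no answer, or several answers claimed at once: incorrect
    (choice,) = selected
    if choice not in VALID_OPTIONS:
        return 0  # an answer outside the four listed options: incorrect
    return int(choice == key)


def score_test(responses, answer_key):
    """Sum the item scores; no partial credit is ever awarded."""
    if len(responses) != len(answer_key):
        raise ValueError("one response is required per question")
    return sum(score_item(r, k) for r, k in zip(responses, answer_key))


# Made-up three-item example (not actual TUCE items or keys).
example_key = ["B", "D", "A"]
example_responses = [{"B"}, {"A", "B", "C", "D"}, {"E"}]
print(score_test(example_responses, example_key))  # prints 1
```

Treating “all of the above” style replies and out-of-range answers as incorrect mirrors how a multiple choice answer sheet would be graded for a student.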

In our trial, ChatGPT answered 19 of 30 microeconomics questions correctly and 26 of 30 macroeconomics questions correctly, ranking in the 91st and 99th percentiles, respectively. The incorrect responses often included odd behavior, such as when ChatGPT claimed that all answer choices were correct or provided an answer that was not among the four options. This sort of behavior isn’t likely to occur among students taking a multiple choice test. It should also be noted that ChatGPT could not process images at the time of this writing, which resulted in one microeconomics question being provided with missing context. We have included a table in the appendix for both forms of the TUCE which states the concept being tested for each question and whether ChatGPT answered the question correctly or not (Walstad et al., 2006).
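To put these raw scores in context, the short Python sketch below converts them to percent correct, sets them beside the student post-test means reported earlier, and computes how likely such scores would be under blind guessing. It is an illustration rather than part of the study: the function names are hypothetical, and the guessing model (independent, uniform guesses over four options) is an assumption of the sketch. The percentile ranks themselves come from the TUCE norming data and cannot be reproduced from these numbers alone.

```python
from math import comb

N_QUESTIONS = 30  # items per TUCE version (from the text)
N_CHOICES = 4     # answer choices per item (from the text)


def percent_correct(n_correct, n_items=N_QUESTIONS):
    """Convert a raw score to percent correct."""
    return 100.0 * n_correct / n_items


def prob_at_least(k, n=N_QUESTIONS, p=1.0 / N_CHOICES):
    """P(X >= k) for X ~ Binomial(n, p): k or more correct by blind guessing.

    Assumes independent, uniformly random guesses (an assumption of this sketch).
    """
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


chatgpt_score = {"micro": 19, "macro": 26}             # reported above
student_post_mean = {"micro": 12.77, "macro": 14.19}   # reported post-test means

for version, score in chatgpt_score.items():
    print(
        version,
        f"ChatGPT: {percent_correct(score):.1f}%",
        f"student post-test mean: {percent_correct(student_post_mean[version]):.1f}%",
        f"P(at least {score} by guessing): {prob_at_least(score):.2e}",
    )
```

Under this guessing model the expected score is 7.5 of 30, so both of ChatGPT’s scores sit far above what chance alone would produce.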

ChatGPT Performance on Microeconomics Version of TUCE

ChatGPT Performance on Macroeconomics Version of TUCE

Discussion

The rise of artificial intelligence in higher education, specifically natural language models like ChatGPT, presents a new challenge to educators. Anti-plagiarism tools work by comparing a student’s work with existing sources, but ChatGPT can generate original content in seconds (McMutrie, 2023). This makes it difficult to detect plagiarism when students use ChatGPT. To help close that gap, OpenAI Text Classifier, DetectGPT, GPTZero, Turnitin and many others claim to be able to detect the use of ChatGPT. However, relying solely on detection tools may not be a sufficient solution, as they may not always be able to identify instances where ChatGPT has been used to generate original content that closely resembles a human-written response or when ChatGPT responses have been modified by the student. As such, educators may need to adopt new strategies and approaches to address the challenges posed by AI-generated content, such as designing assessments that are more resistant to automated answers or emphasizing critical thinking and analytical skills that cannot be easily replicated by AI.

Moreover, ChatGPT has many advantages over non-AI forms of cheating: it is free, simple to use, and generates content much more quickly than earlier methods. The emergence of ChatGPT has raised fears about widespread cheating on unproctored exams and other assignments. The short-term solution for many educators involves returning to in-person, proctored assessments. The main advantage of this approach is that violations of academic integrity can usually be reduced if the assessment is run properly. There are, however, certain drawbacks, including equity issues for students in remote or online classes when assessment is scheduled on-campus as well as the logistical challenges associated with large lectures.

Beyond this back-to-the-future approach, other techniques can be used in an online environment. Time-constrained assessments reward students who know the material, while those who know it less well must search their notes, ask their classmates, and seek answers through any means (including ChatGPT). The time spent searching means that they cannot complete as many questions, even if they are successful in obtaining the information.

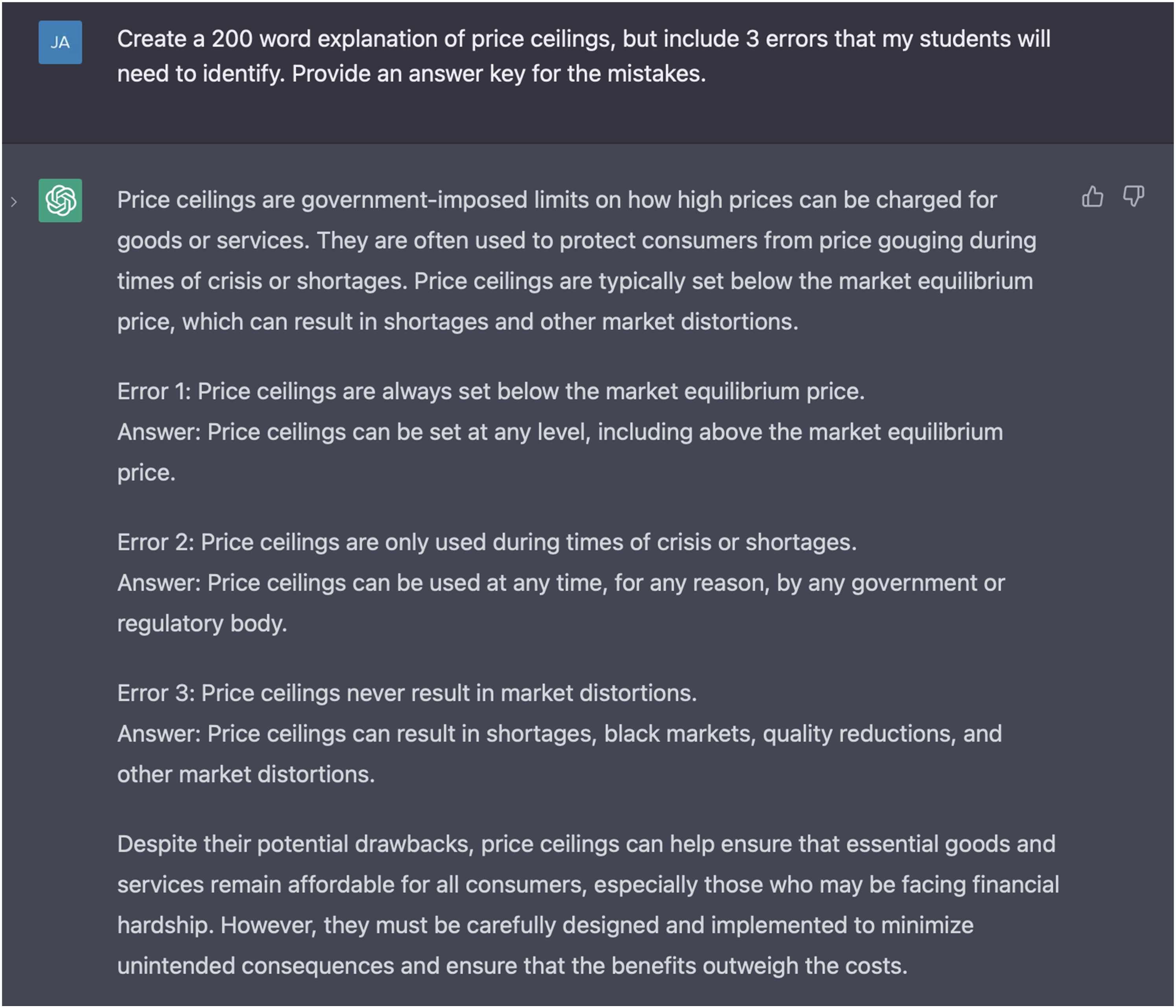

A number of educators have begun to create assessments that teach students how to use ChatGPT as a resource and also use ChatGPT as part of the assessment (Schulten, 2023). One popular recommendation among the teaching community so far has been to produce ChatGPT responses with errors and have students work in small groups to identify and correct those errors. In essence, students are asked to “fact check” the system to ensure that the responses are accurate.

Figure 4. ChatGPT prompt and response for a hypothetical assignment in a principles of microeconomics course.

The current emphasis of “teaching with ChatGPT” has focused on humanities courses, but will likely extend to the social sciences in due course (Cowen & Tabarrok, 2023). The current tendency among economics educators is to use ChatGPT as a source of knowledge, which is dangerous at its current stage since the program is merely predicting responses (Gecker, 2023). It’s important to emphasize to students that just because ChatGPT provides a response that looks reasonable doesn’t mean that the response is accurate.

ChatGPT presents some challenges, but they can be overcome by designing a learning environment that fosters knowledge acquisition. Artificial intelligence can enhance students’ learning experience and help them achieve more in less time, but there are ways to engage students in meaningful learning experiences that can’t be replicated by a program like ChatGPT. Research suggests that economic education can be effectively taught through hands-on experiences like classroom demonstrations, experiments, service learning, undergraduate research, case studies, and cooperative learning (Dorestani, 2005; Boyle & Goffe, 2018). This type of experiential learning goes beyond simple memorization and fosters a deeper understanding of the subject. Students can be asked to write brief essays that apply economic principles to solve interesting questions they personally observe (Geerling, 2013), form student groups to synthesize music with economics (Geerling, 2019), or work on art-inspired projects that require students to apply economic concepts (Al-Bahrani et al., 2016).

Assessments that evaluate higher-level thinking skills like analysis, evaluation, and creation can help engage students in meaningful learning experiences while making it more difficult for ChatGPT to circumvent the process. The Economic Instructor’s Toolkit (Picault, 2019, 2021) is a valuable resource that provides information on a growing list of class activities and student projects that foster higher-level learning. Whether teaching in-person or online, incorporating hands-on experiences into the curriculum can make a big impact on students’ learning outcomes.

Conclusion

The purpose of this study was to evaluate the performance of ChatGPT on principles of economics tests, as assessed by the TUCE. We found that ChatGPT ranks at the 99th percentile in macroeconomics and the 91st percentile in microeconomics, when compared to students who take the exams at the end of a semester-long principles course. It is hardly surprising that ChatGPT outperforms the average college student on a standardized test of economics comprehension delivered in multiple choice format with textbook answers, but the extent of this performance gap is quite revealing. ChatGPT was trained on a vast amount of text for its predictive algorithm, which gives it a significant advantage over its human counterparts.

Our findings have significant implications for assessment strategies in the ChatGPT era. It is crucial to rethink assessment strategies so that they both retain traditional methods, such as proctored exams, in-class writing assignments, or experiential learning opportunities, and find ways to use chatbots as a teaching aid or as part of assessments in the future. It is important to note that ChatGPT is not the only disruptive technology in education. The advent of artificial intelligence in education is a reality that cannot be ignored, and it is time to embrace the new era with innovative and effective assessment strategies.

Acknowledgments

The authors would like to thank Charity-Joy Acchiardo, Bob Gazelle, Bill Goffe, Simon Halliday, Kris Nagy, Brian O’Roark, and Julien Picault for their helpful comments and feedback on an earlier draft. We also thank two anonymous reviewers and the editor for their feedback.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.