Abstract

Background

Food environments are rapidly changing in low- and middle-income countries (LMICs), leading to dietary shifts. Many gaps remain in the measurement of food environments in LMICs, making it difficult to characterize the linkages between food environments and diets.

Objective

The objective of this study was to examine the feasibility of implementing USAID Advancing Nutrition's Market Food Environment Assessment (MFEA), a suite of 7 food environment assessments that are not resource-intensive.

Methods

We implemented the MFEA package in 4 countries (Liberia, Honduras, Nigeria, and Timor-Leste) and assessed its feasibility through a descriptive analysis of qualitative and quantitative data on enumerators’ feedback, collected through training evaluations, feedback forms, detailed notes from meetings, and final reports from in-country partners.

Results

Overall, the MFEA was feasible to implement, although some assessments were easier to implement and more practical than others. Several key themes related to MFEA implementation were identified across the countries, including: the potential for vendors to be hesitant to engage in assessments; the importance of securing buy-in from local officials; the need to shift toward electronic, rather than paper-based, data collection; difficulties in selecting markets; the time constraints of conducting some of the assessments; and the need for better alignment between the instructions, data collection sheets, and data analysis sheets.

Conclusions

The package of food environment assessments, with minimal additional refinement, can be used to characterize market food environments in LMIC settings to inform context-specific interventions.

Plain language title

Testing the feasibility of implementing a package of 7 assessments to measure factors influencing food access in low-resource settings

Plain language summary

Introduction

The food that people have access to is shifting in low- and middle-income countries (LMICs), with implications for diets, nutrition, and the global burden of disease.1 While the entire food system affects what people eat, the food environment (the immediate space in which we interact with food) is a key intervention point within food systems for improving diets and reducing the burden of malnutrition (see Box 1 for the food environment definition, domains, and dimensions).

Defining food environments, their domains, and dimensions

The food environment represents the place within the food system where consumers make decisions about the foods to acquire, purchase, and consume.6–9 It is influenced by the socio-cultural and political environment and the ecosystem within which it is embedded. There are 2 overarching types of food environments: the natural food environment, which refers to wild and cultivated environments where people access food, and the built food environment, which includes both informal and formal markets.6,7 The attributes of the food environment, and how individual factors interact with them, can be categorized into the external and personal food environment domains.7 The external domain includes the dimensions of food availability, price, vendor and product properties, and marketing and regulation.7 The personal domain includes the dimensions of food accessibility, affordability, convenience, and desirability.7

Food systems drivers, including globalization, urbanization, and migration, influence food environments and can change how people acquire and purchase food around the world.1 In LMICs in particular, the growing burden of overweight, obesity, and diet-related non-communicable diseases (NCDs) has been attributed to food environment changes, including increased reliance on the built food environment.2–4 These changes have outpaced efforts to understand them, leaving a critical data gap on food availability, accessibility, affordability, and acceptability in people's food environments. Methods, tools, and metrics are needed to fill this gap and support healthy diets.5

The dynamic changes in food environments in LMICs, and in how consumers interact with them, call for multifaceted and nuanced approaches to assessing food environments in LMICs.7 Most existing methods, metrics, and tools (collectively referred to as assessments) for food environments were designed for high-income country contexts.5–7,9 Researchers and practitioners have recognized these shortcomings and begun developing new assessments and adapting existing ones.5,10,11 In particular, the U.S. Agency for International Development (USAID), through its partner USAID Advancing Nutrition (USAID-AN), developed a suite of standardized, easy-to-implement market food environment assessments to capture data on multiple food environment dimensions in diverse contexts and to inform program design, implementation, and monitoring and evaluation. The instruction manual for the finalized assessments, their data collection sheets (ie, annexes), and the data analysis instructions and templates are available online in an open-access format (https://www.advancingnutrition.org/resources/food-environment-monitoring-and-evaluation-guidance-and-tools). To develop the suite of tools, a landscape assessment, a ranking exercise, and a survey with key food environment experts were conducted. Using this evidence-based approach, 7 unique assessments were prioritized as most suitable for assessing market food environments in diverse LMIC contexts.5,11

These 7 priority assessments form the basis of the Market Food Environment Assessment (MFEA) pilot study (see Box 2), which was implemented in a total of 42 markets in 4 LMICs: Liberia, Honduras, Nigeria, and Timor-Leste. This paper examines the feasibility of, and lessons learned from, the implementation of the MFEA pilot.

Overview of the assessments included in the Market Food Environment Assessment (MFEA) pilot project

The MFEA pilot study included 7 assessments identified as most suitable (with modifications) for LMICs based on a landscape assessment, a ranking exercise, and a survey with key food environment experts: (1) Market Mapping,12,13 (2) Seasonal Calendar of Availability,14 (3) Market Food Diversity Index (MFDI),15 (4) Healthy Eating Index of Food Supply (HEI),16 (5) Cost of a Healthy Diet (CoHD),15 (6) Environmental Profile of a Community's Health (EPOCH),12 and (7) Produce Desirability Tool (ProDes).17 Before the MFEA was implemented, the study team modified these 7 assessments from their original versions to make them suitable for diverse LMIC contexts.

Methods

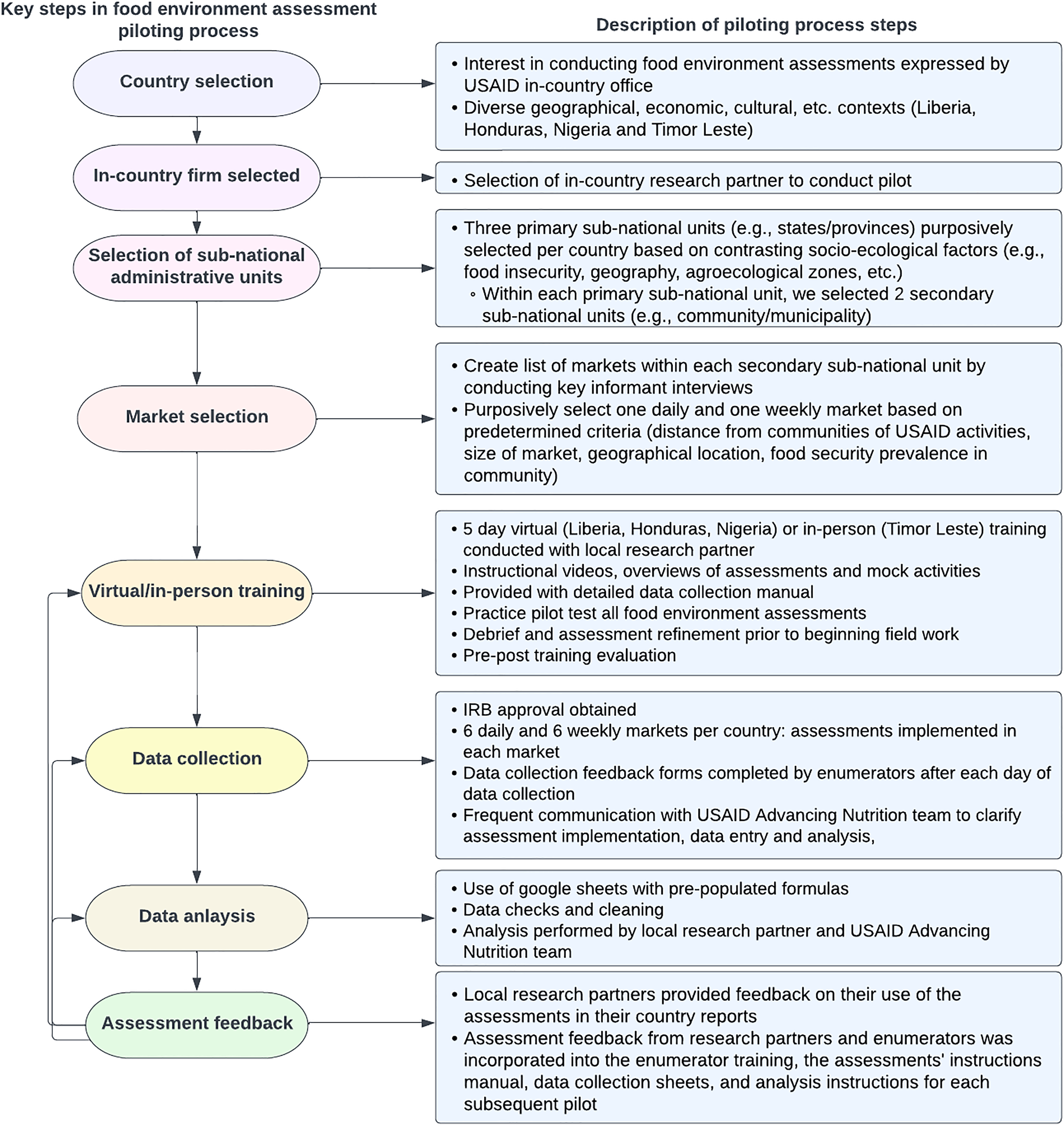

This study was led by a team working on market food environments at USAID-AN. Through a multi-phase iterative process, we implemented the MFEA and evaluated its performance to understand the feasibility of implementing the suite of methods, tools, and metrics (collectively, assessments) to measure key dimensions of market food environments in diverse LMIC contexts. The MFEA was implemented between 2021 and 2023: in Liberia in 2021, in Honduras and Nigeria in 2022, and in Timor-Leste in 2023. The regions where the MFEA was implemented in each country were selected based on pre-defined criteria (see Supplemental Figure S1), and Supplemental Table S1 describes the pilot settings in each country. In each country, the MFEA package was implemented in its entirety using paper data collection forms, and each team had a scale to weigh foods for the assessments that required it. After the data for each assessment were collected, they were entered into Google Sheets to enable automated data analysis, which was conducted according to the guidelines for data analysis (https://www.advancingnutrition.org/sites/default/files/2024-01/Assessment_Data_Analysis_Instructions.pdf). Figure 1 describes the steps taken to pilot the food environment assessments. Supplementary File A provides an example, for each assessment, of how data may be visualized using the pilot data from Nigeria, and Box 3 describes high-level results from each of the pilot countries.

A detailed overview of the steps taken to pilot the food environment assessments.

High-level results by pilot country

Assessing Feasibility of MFEA

The feasibility of the MFEA was assessed based on enumerators’ feedback on the implementation of the assessments, collected through training evaluations, feedback forms, detailed notes from meetings, and instant messaging communication during implementation, as well as on the final reports from in-country partners. We used a framework adapted from Bowen et al18 to guide our feasibility assessment, which focused on the acceptability, implementation/practicality, and adaptation of the MFEA (see Box 4).

An overview of the areas of focus of the MFEA feasibility assessment

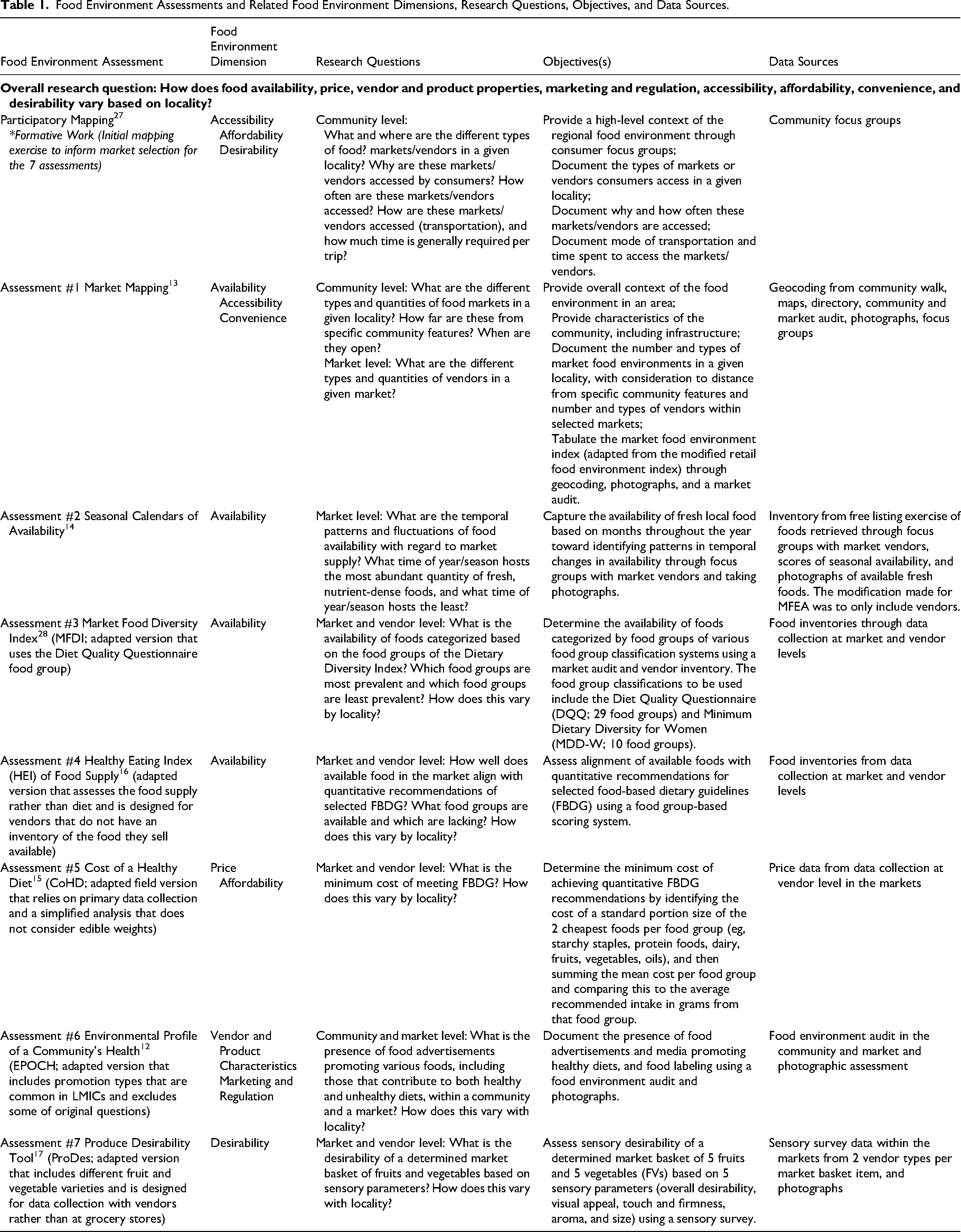

Market-Based Food Environment Assessment and Objectives

The 7 assessments of the MFEA measure key dimensions of both the external and personal food environment domains: food availability, food price, vendor and product properties, marketing and regulation, access to markets, affordability of food, and desirability. Table 1 provides an overview of each of the modified assessments, including the food environment dimension(s) measured, the associated research questions and objectives the assessment seeks to address, and the data sources. Modifications made before implementation included harmonizing food groups across assessments and adapting the assessments to increase their applicability to LMIC contexts. In addition to the 7 assessments, and based on experiences in the first 2 pilots in Liberia and Honduras, we added a formative assessment, participatory mapping, to help inform the implementation of the MFEA.

Food Environment Assessments and Related Food Environment Dimensions, Research Questions, Objectives, and Data Sources.

Training Protocol for Country Implementation Teams

A detailed instructions manual was created for the MFEA in English to standardize the implementation of the pilot across study sites. The manual provided an in-depth overview of the theory and application of the 7 food environment assessments, including step-by-step instructions and templates for data collection and data analysis. The in-country research partners translated the manual and associated materials into their local languages. Translated materials were then reviewed for accuracy; where a perfect translation was not possible, USAID-AN worked with research partners to agree on acceptable language.

In addition to the instructions manual and associated data collection and data analysis templates, each country's research team received 16–20 h of training from the USAID-AN team leading this pilot. The training included detailed instructional videos, overviews of the assessments, and mock activities for practicing their implementation. Research teams in 3 countries (Liberia, Honduras, and Nigeria) received virtual training because of COVID-19 travel restrictions, while the team in Timor-Leste received in-person training. Training was conducted in English in Liberia and Nigeria, in Spanish in Honduras (with real-time translation over Zoom), and in Tetun in Timor-Leste (with live translation). The enumerators in each of the pilot countries were employed by in-country research partners. Supplemental Table S2 provides an overview of the enumerators in each country, their sociodemographic characteristics, and the number of enumeration teams. In general, the enumerators did not have prior experience conducting food environment assessments. Before starting the official MFEA data collection, the enumerators conducted an unofficial pilot test of each assessment in local markets that were not part of the official data collection sample. This pilot test helped them identify questions about the assessments’ implementation and seek guidance from the USAID-AN research team where the instructions were unclear.

Implementation of MFEA

Following implementation in the field, each assessment was evaluated by the research team, research partners and enumerators based on: (a) acceptability (ie, perceived appropriateness of the assessment), (b) implementation (ie, ease of implementation), and (c) adaptation (ie, the extent to which it can be implemented in a standardized way across different country contexts).

Data Sources for Assessing Feasibility of Implementing MFEA

To assess the feasibility of implementing the MFEA, we used the following data sources: country research reports, running notes from meetings with research partners as well as instant message communications, enumerator feedback forms, and the enumerator evaluations of the MFEA training. While all 4 countries had research reports that were used in the data analysis, only Liberia, Honduras, and Nigeria submitted enumerator feedback forms.

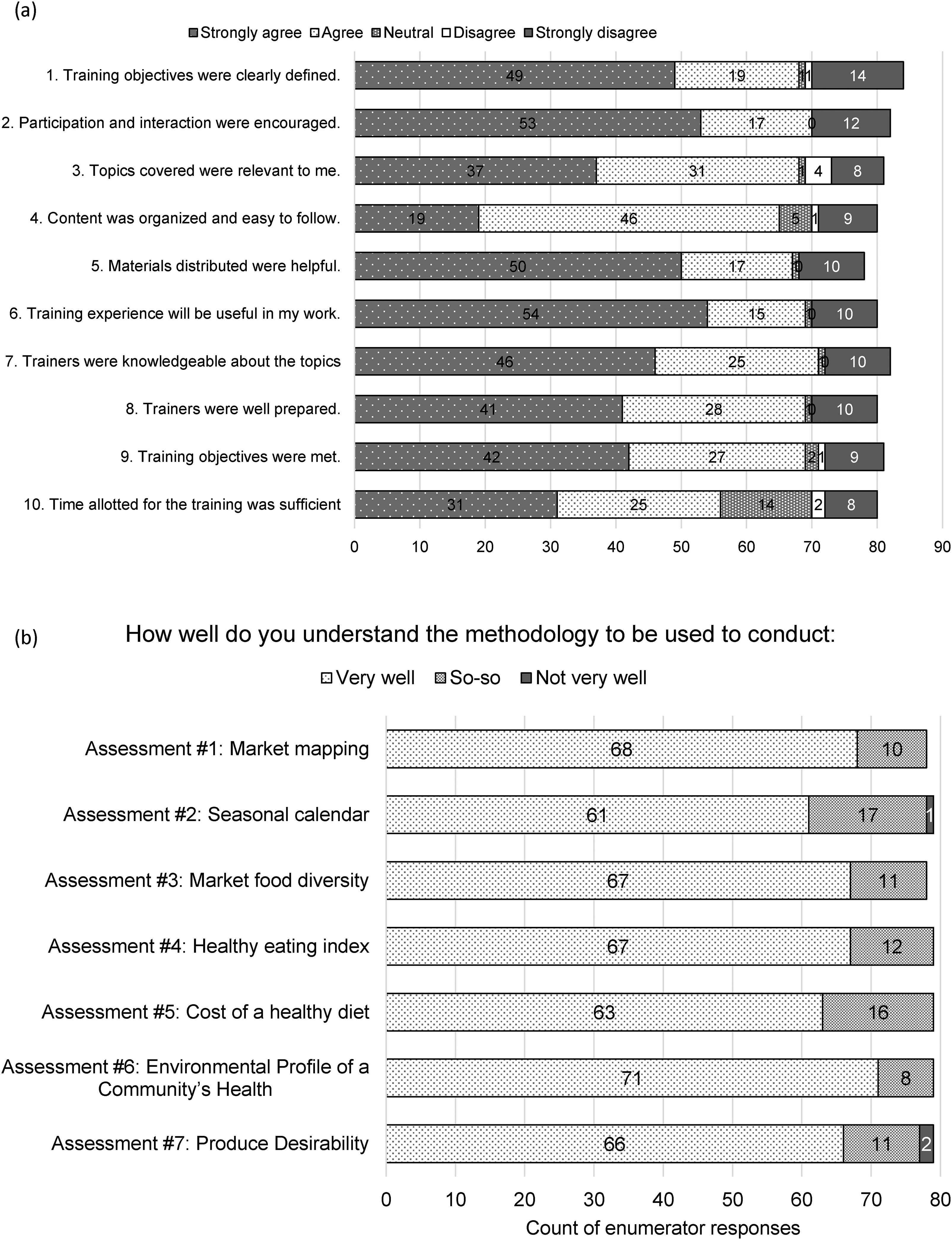

Training Evaluations

Following the training, an evaluation was circulated to understand areas of improvement for future training delivery. Enumerators were asked to answer 10 questions on a 5-point Likert scale ranging from strongly disagree to strongly agree about their views on the training. In addition, they were asked about each assessment and how well they understood the methodology (very well, so-so, or not very well). Lastly, they were asked 2 open-ended questions about what they liked most about the training and what could be improved.

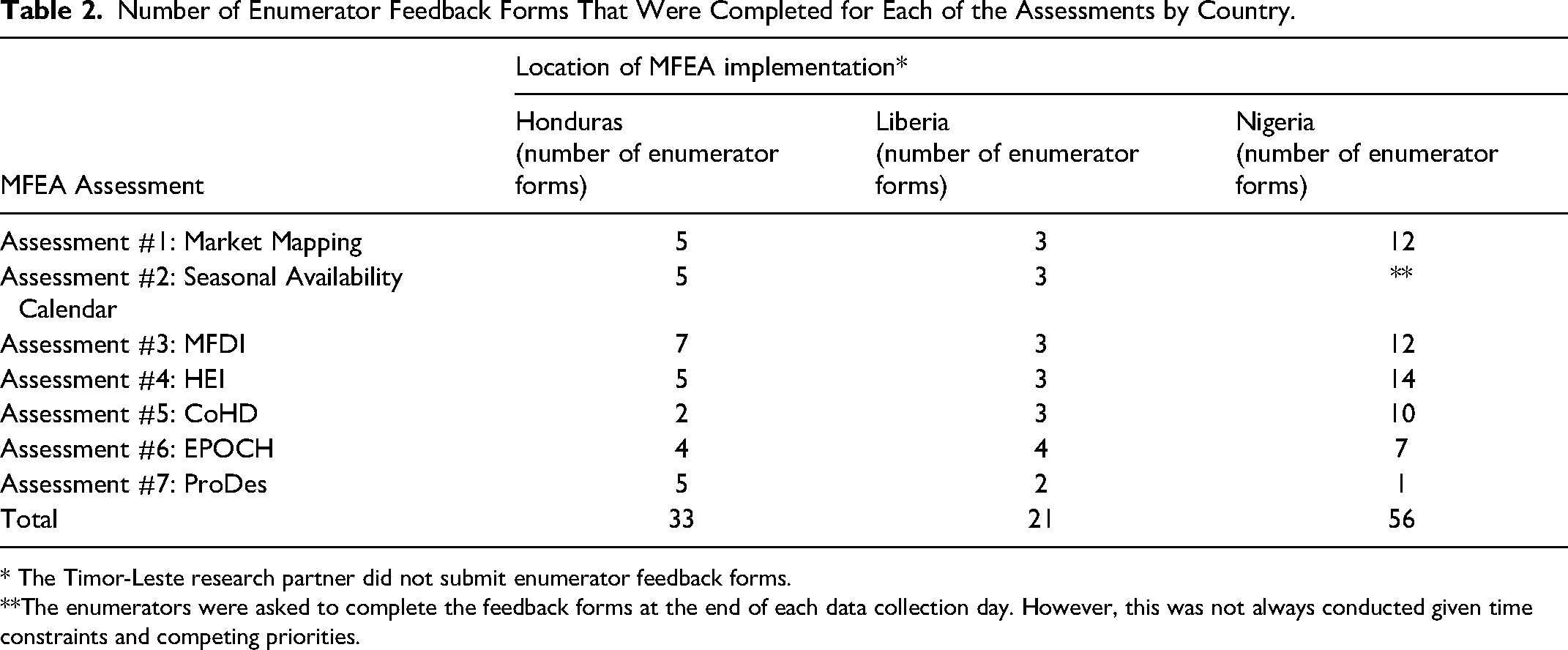

Enumerator Feedback Forms

Individual enumerator feedback forms were completed throughout the data collection process. The objective of the feedback forms was two-fold: to better understand the feasibility of data collection for each food environment assessment and to learn about the challenges experienced in collecting data for each one. The enumerators were asked to complete the feedback forms at the end of each data collection day; however, this was not always done because of time constraints and competing priorities. In Timor-Leste, the forms were completed in Tetun, but the team felt that the debrief conducted to inform the country report better reflected their feedback on the assessments, and thus did not provide the forms to USAID-AN. Table 2 shows the number of enumerator feedback forms completed for each assessment in each pilot country.

Number of Enumerator Feedback Forms That Were Completed for Each of the Assessments by Country.

* The Timor-Leste research partner did not submit enumerator feedback forms.

**The enumerators were asked to complete the feedback forms at the end of each data collection day. However, this was not always conducted given time constraints and competing priorities.

The feedback forms included the following rating-scale and open-ended questions: (1) How would you rate your overall experience with this assessment?; (2) How much time did it take you to fully complete the assessment?; (3) Were the instructions provided sufficient for you to complete the assessment?; (4) In your opinion, how did vendors (or others participating in this assessment) react?; (5) Please summarize any challenges you faced with this assessment; and (6) Do you have any suggestions for improving the data collection for this assessment?

Detailed Notes from Meetings During Implementation and Instant Messaging Communication

During and after data collection, the USAID-AN team met with research partners weekly to document lessons from study implementation and the clarifications required to ensure accurate data collection and entry. In addition, throughout data collection, instant messaging was used to respond quickly to queries, allowing real-time clarification of specific assessment questions. Partner feedback was collated into summative notes and used to refine the training, the instructions manual, and the data collection and analysis sheets.

Country Research Partner Reports

Each research partner prepared a report (30 to 54 pages long) summarizing their experience implementing the MFEA. In each country, the research partners held debriefs with the enumerators to inform the feedback provided in the reports. The reports described the partners’ perceived acceptability of the package as a whole (acceptability), the challenges they faced and lessons learned (implementation), and their reflections on the suitability of the package to the local context (adaptation). They also reflected on the acceptability, implementation, and adaptation of each assessment individually.

Data Analysis

The structured responses from the training evaluations and the enumerator feedback forms were entered into Microsoft Excel, and descriptive statistics were used to analyze response frequencies. Responses to open-ended questions were combined with the country reports and open-coded in NVivo (version 1.7.1, 2022). We organized the reflections from the open-ended responses and the country reports by assessment. We then combined these analyses with the quantitative data from the training evaluations and enumerator feedback forms, and with information from instant message communications, shared documents, and routine debriefs, to describe key themes related to the implementation of the MFEA. Ethics approval was obtained from the John Snow Inc (JSI) Institutional Review Board and the equivalent authorities in each country to ensure that the study followed ethical principles and local procedures for the participation of human subjects: in Liberia, the National Research Ethics Board; in Honduras, the Comite de Etica en Investigacion Biomedica (CEIB); in Nigeria, the National Health Research Ethics Committee; and in Timor-Leste, the Instituto Nacional de Saude.
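The descriptive analysis of the structured responses amounts to tabulating how often each rating was chosen and collapsing adjacent categories (eg, “very good” to “good”) into the percentages reported in the Results. The Python sketch below illustrates that step under stated assumptions: the record layout, the function name `summarize`, and the middle scale label “neutral” are hypothetical, and the example ratings are made up rather than taken from the pilot data (which were analyzed in Microsoft Excel).

```python
from collections import Counter, defaultdict

# Ordered 5-point scale for the "overall experience" item. The endpoints follow
# the feedback forms; the middle label "neutral" is a hypothetical placeholder.
SCALE = ["very good", "good", "neutral", "difficult", "very difficult"]


def summarize(records, top=("very good", "good")):
    """Return {assessment: (n_responses, percent_in_top_categories, Counter)}.

    records: iterable of (assessment_name, rating) pairs (hypothetical layout).
    top: the adjacent categories collapsed into one reported percentage.
    """
    by_assessment = defaultdict(Counter)
    for assessment, rating in records:
        if rating not in SCALE:
            raise ValueError(f"unexpected rating: {rating!r}")
        by_assessment[assessment][rating] += 1

    summary = {}
    for assessment, counts in by_assessment.items():
        n = sum(counts.values())
        pct_top = 100 * sum(counts[r] for r in top) / n
        summary[assessment] = (n, round(pct_top), counts)
    return summary


# Illustrative (made-up) responses, not data from the pilot
records = [
    ("Market Mapping", "very good"),
    ("Market Mapping", "difficult"),
    ("Market Mapping", "good"),
    ("Market Mapping", "neutral"),
    ("MFDI", "good"),
    ("MFDI", "very good"),
]

for name, (n, pct, counts) in summarize(records).items():
    print(f"{name}: n={n}, {pct}% very good/good")
# Market Mapping: n=4, 50% very good/good
# MFDI: n=2, 100% very good/good
```

Keeping the full `Counter` alongside the collapsed percentage makes it easy to report other groupings (eg, “difficult” to “very difficult”) from the same tabulation.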

Results

Overall, the package of assessments was found to be acceptable to implement and adaptable to different country contexts. We begin by describing the overarching key themes related to implementing the package as a whole and then describe the themes related to the training and each individual assessment.

Overarching Themes Related to the Feasibility of Implementing the MFEA

Several overarching themes related to the MFEA implementation cut across the country pilots: (1) the potential for vendors to be hesitant to engage in assessments, (2) the importance of securing buy-in from local officials, (3) the need to shift toward electronic, rather than paper-based, data collection, (4) difficulties in selecting markets, (5) time constraints in conducting some (but not all) of the assessments, and (6) the need for better alignment between the instructions, data collection sheets, and data analysis sheets. After each pilot, the USAID-AN team updated the instructions, data collection sheets, and analysis sheets in response to the research partners’ feedback (Supplemental Table S3 summarizes the changes made), and each subsequent pilot identified additional areas where clarity could be improved.

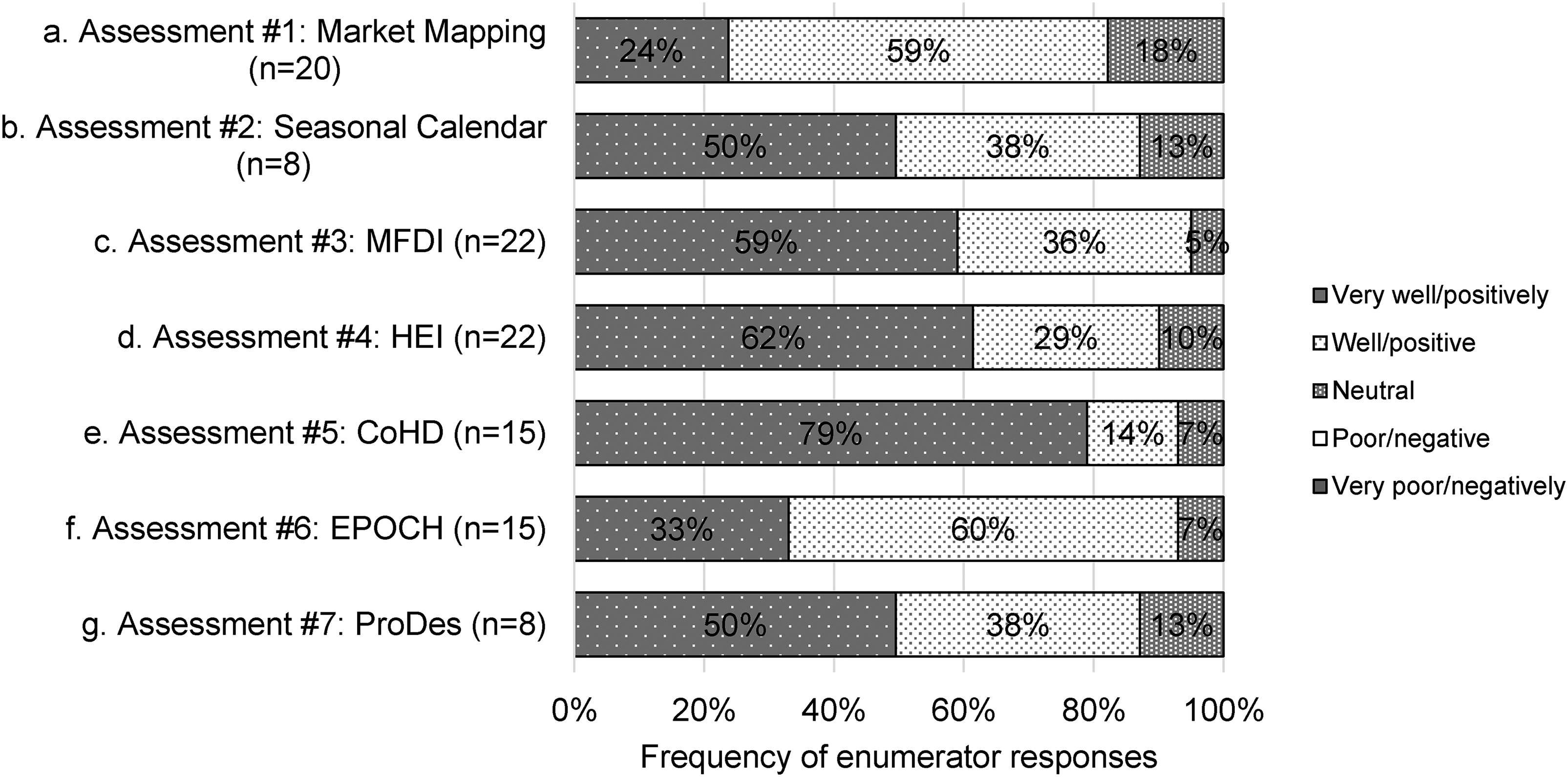

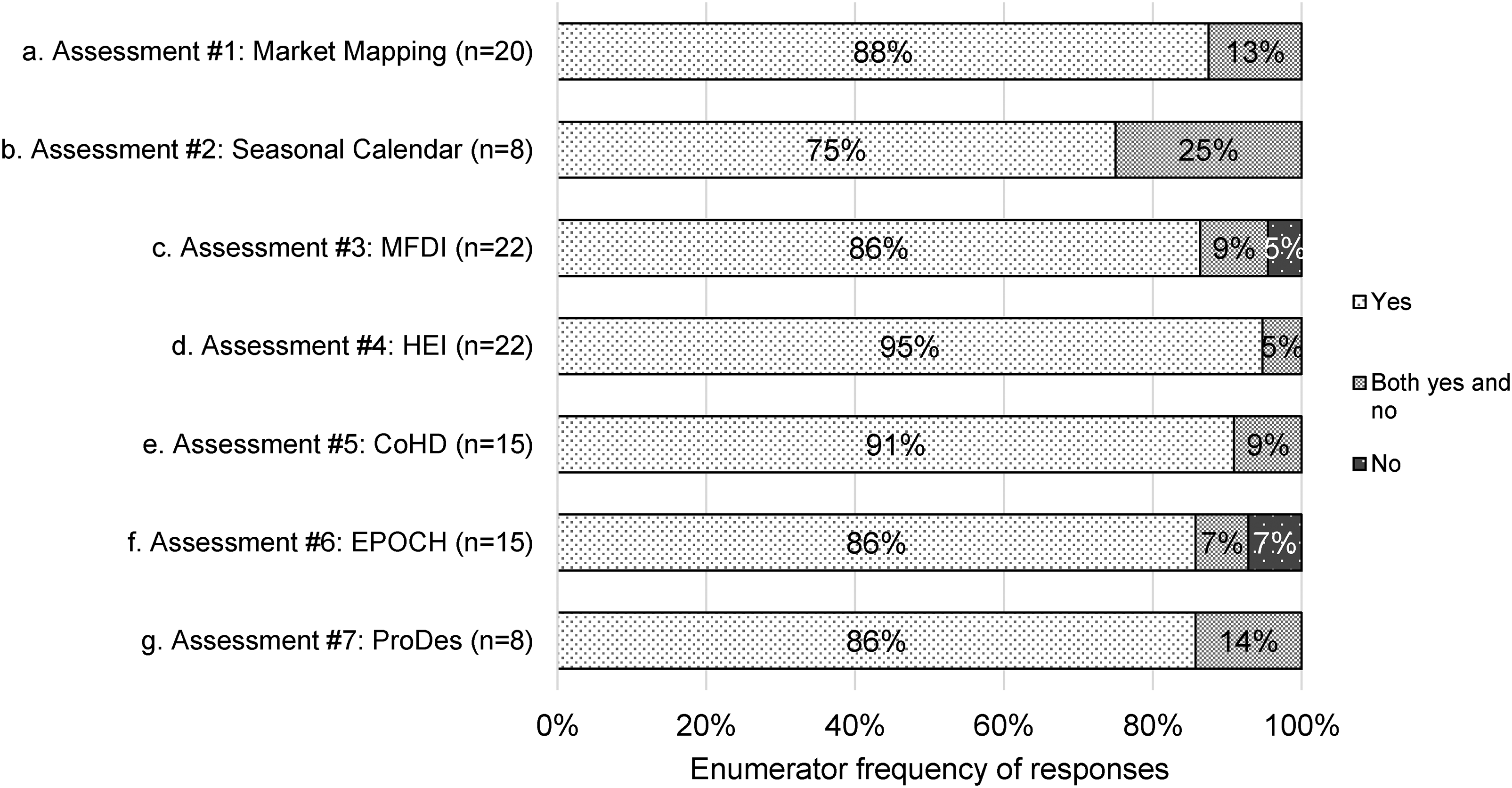

The importance of establishing trust with vendors was identified in every country report except Nigeria’s. While none of the enumerators perceived vendors as reacting negatively to the assessments in the feedback forms (Figure 2), the qualitative responses captured some nuances. In particular, the research partners described vendors as hesitant to allow photos, provide information about hours of operation, and sign consent forms. They also reported that vendors were generally positive about the assessments, and enumerators identified ways to increase vendors’ trust: working with local authorities, including market management; wearing identification badges and/or vests; describing the purpose of the data collection; and showing gratitude and using positive body language toward vendors. They also highlighted that purchasing foods from vendors helped increase vendor cooperation, especially given that some vendors complained about the time-consuming nature of the assessments.

Enumerator perceptions of how vendors reacted to the assessments across the 3 countries that submitted feedback forms (Liberia, Honduras, and Nigeria), rated on a 5-point Likert scale from “very well/positively” to “very poor/negatively” for each of the 7 market food environment assessments.

The research partners expressed a desire to move toward electronic data collection platforms to reduce the data entry burden; make it easier to switch between languages, currencies, and units; and enable real-time data quality oversight.

One of the challenges highlighted by both the Honduras and Timor-Leste research partners related to selecting both a daily and a weekly market in which to conduct the MFEA. In Honduras, the team described a shift away from reliance on open-air markets toward convenience stores and other food outlet types. In Timor-Leste, a daily and a weekly market were not always both operational in the study sites included in our sample. The team had to rely on informal markets, which required local knowledge to identify, and in some cases these markets had changed location, leading to last-minute changes to data collection plans.

There were also concerns about the time needed to conduct the assessments, particularly in the weekly markets, where several assessments had to be implemented in one day. Some assessments were reported as more time-consuming than others. Assessments #1 (Market Mapping) and #7 (Produce Desirability Tool) required the most time to complete (8.2 and 7.0 hours on average, respectively), followed by Assessments #3 (Market Food Diversity Index) and #6 (EPOCH) (6.0 and 5.6 hours on average). The remaining assessments took between 4 and 5 hours to complete. However, some assessments were evaluated together as a group rather than individually, and some times were reported qualitatively (eg, “several hours” or a couple of hours over “several days”), making direct comparisons across assessments difficult.
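Averaging reported completion times requires a rule for entries that are not numeric, such as “several hours.” A minimal Python sketch, assuming times are recorded as free text on each feedback form, keeps only entries that parse as a number of hours and reports how many were excluded. The parsing rule and the function name `average_hours` are assumptions for illustration, not the procedure used in the study.

```python
import re
from statistics import mean

# Accepts entries such as "8", "7.5", "6 h", "5 hrs", "9 hours".
# Anything else (eg, "several hours") is treated as non-numeric.
# This parsing rule is a hypothetical choice for illustration.
NUMERIC_HOURS = re.compile(
    r"^\s*(\d+(?:\.\d+)?)\s*(?:h|hrs?|hours?)?\s*$", re.IGNORECASE
)


def average_hours(entries):
    """Return (mean_hours or None, n_used, n_excluded) for free-text time entries."""
    values, excluded = [], 0
    for entry in entries:
        match = NUMERIC_HOURS.match(entry)
        if match:
            values.append(float(match.group(1)))
        else:
            excluded += 1  # qualitative answer; cannot be averaged
    avg = round(mean(values), 1) if values else None
    return avg, len(values), excluded


print(average_hours(["8", "9 hours", "7.5 h", "several hours"]))
# (8.2, 3, 1)
```

Reporting the excluded count next to the mean makes explicit how much of the feedback could not enter the comparison.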

In addition to these overarching themes, the research partners identified contextual challenges to implementing the MFEA. The rainy season made data collection more difficult because market and vendor hours were less predictable, the assessments were hard to conduct in the rain, and road access to remote areas was limited. Other factors influencing implementation included weak internet connectivity, which made uploading data difficult, and linguistic challenges (eg, some words not translating well into local languages). Moreover, in Timor-Leste, the MFEA’s omission of the natural food environment, of whether foods were imported or locally produced, and of the kin and community food environment (ie, gifts or exchanges of food across social networks), through which many people in this setting access their food, was described as a limitation.

Finally, a common theme related to the adaptability of the package to diverse contexts (eg, where vendors sell foods in different ways) was the need to better align the vendor and food outlet types in the instructions with the local context.

Training

Overall, the country partners and enumerators viewed the training positively (Figure 3, panel a), and enumerators felt relatively prepared to implement the assessments (Figure 3, panel b). Most enumerators (68%) felt that the training would be useful to their own work. The country partners viewed the unofficial piloting of the MFEA in local markets, conducted as part of the training, as critical, especially for highlighting areas where additional clarity was needed before the formal data collection period began. Suggested improvements to the training included extending the time spent reviewing the tools and adapting them to the country context, allowing sufficient time to translate all documents before the training, and ensuring real-time language translation.

Overview of training evaluations from all 4 pilot countries. Panel (a) shows enumerators’ views on aspects of the training, rated on a 5-point Likert scale from strongly disagree to strongly agree. Panel (b) shows enumerators’ perceptions of how well they understood the methodology for each individual assessment, rated on a 3-point scale: “very well,” “so-so,” or “not very well.” (Values less than 5% are not labeled.)

Formative Assessment: Participatory Mapping

To address challenges raised after the first 2 pilots (Liberia and Honduras) related to market selection, we added a formative participatory mapping assessment, which was subsequently implemented in Nigeria and Timor-Leste. The formative assessment was viewed relatively favorably; however, one country report highlighted the need to supplement participatory mapping with additional formative research to inform the sampling approach and vendor categories and to collect information on the natural and kin and community food environments.

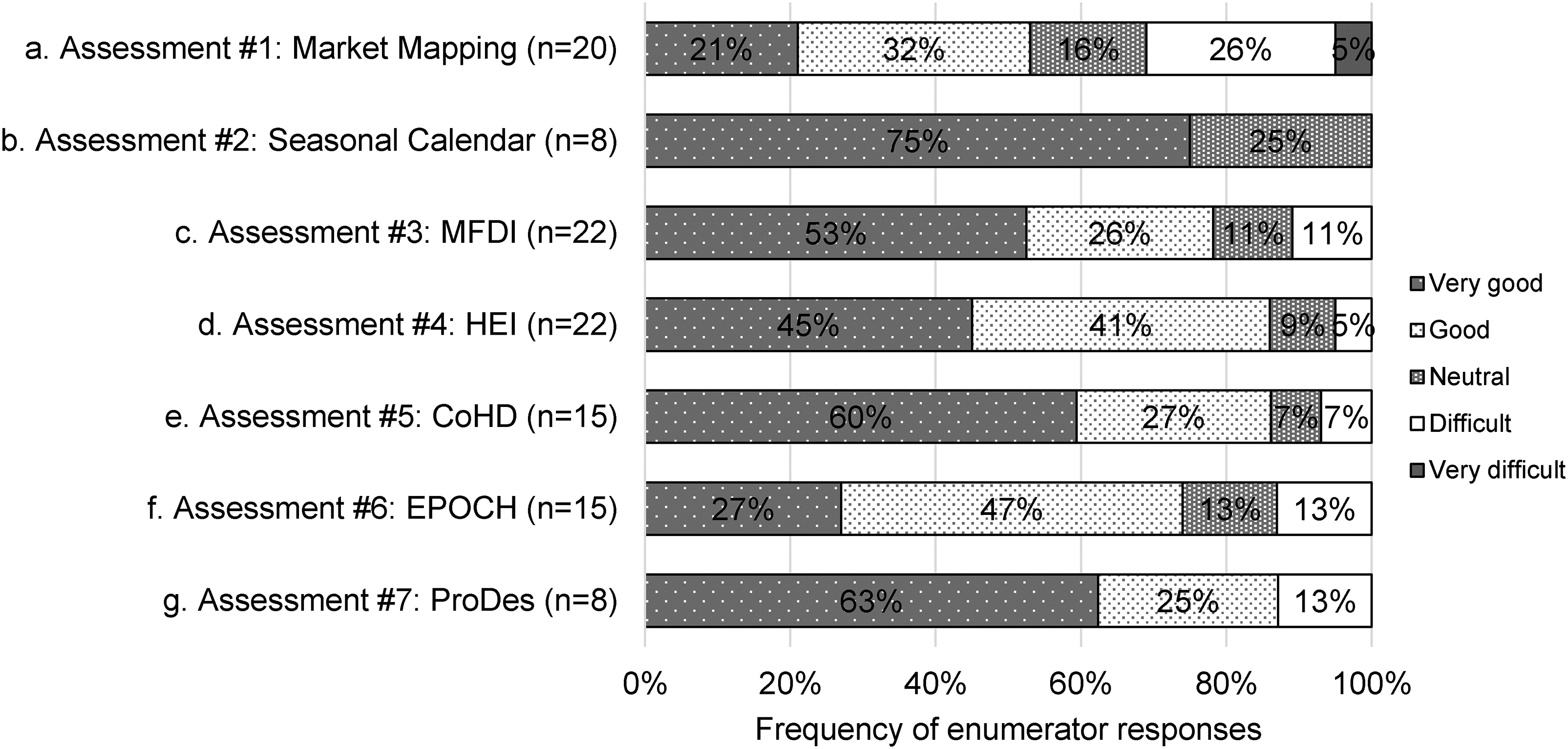

Assessment #1: Market Mapping

About half of the enumerators (52%) rated their overall experience with the market mapping assessment as “very good” to “good” (Figure 4a), and market mapping received the highest share of “difficult” to “very difficult” ratings (31%) of any assessment. Despite this, most enumerators (88%) perceived the instructions as sufficient to complete the assessment (Figure 5a). In addition, most enumerators (82%) perceived vendors’ reactions to this assessment favorably, with ratings mostly ranging from “very well/positive” to “well/positive” (Figure 2a), and many described vendors as curious, excited, and welcoming toward the enumerators and the assessment. The research partners’ reports noted some implementation challenges. Specifically, it was sometimes difficult to use existing data for the community mapping, given the limitations of Google Maps in some rural settings and the fact that informal vendors were not captured, which led some countries to shift toward primary data collection to inform the assessment.

Enumerators’ ratings of their overall experience with each of the 7 market food environment assessments across the 3 countries that submitted feedback forms, rated on a 5-point Likert scale from “very good” to “very difficult.”

Enumerators’ perceptions of whether the instructions were sufficient to complete each assessment. Ratings included “yes,” “both yes and no,” and “no.”

Three of the 4 country reports highlighted challenges in identifying the boundaries of communities and/or markets for the assessment, as well as difficulty classifying some vendors as healthy or unhealthy, since many vendors sell a wide variety of foods. Additional challenges noted in the research partner reports included foods not being well displayed (eg, stored underneath a table), which made vendors difficult to classify; the lack of a system for accounting for the quality of the food being sold (eg, spoiled food); and the difficulty of capturing mobile vendors. One country team also recommended adding a formative step before data collection to adapt the vendor types to the local context. Lastly, only one of the research partners described the assessment as taking longer to implement than envisioned.

Assessment #2: Seasonal Food Availability Calendar

Three-quarters of enumerators (75%) rated their experience with implementing the seasonal calendar as “very good” (Figure 4b); it was the only assessment in the MFEA package that none of the enumerators rated as “difficult” to “very difficult.” Some enumerators highlighted time constraints, vendor suspicion, and literacy as challenges in open-ended responses. The majority (75%) of enumerators perceived the instructions as sufficient to complete the assessment (Figure 5b). In addition, most enumerators (88%) perceived vendors’ reactions to this assessment favorably, with responses ranging from “very well/positive” to “well/positive” (Figure 2b). Open-ended responses highlighted that once vendors were able to conceptualize the activity (ie, free listing for seasonal availability), the focus group discussion went smoothly.

Key challenges identified in the research partner reports included the long time needed to complete the assessment, particularly in the weekly markets; participants’ low literacy levels, which made the free listing difficult; and, in one pilot country, the need for a translator for a specific local dialect. The country reports also noted challenges related to vendor participation, including difficulty getting vendors to participate when they had traveled long distances to reach the market (Honduras and Timor-Leste) and, in Nigeria, cultural norms that led to only male vendors participating in the focus group discussion, so that dairy (typically sold by female vendors) was not adequately captured in the assessment.

Assessment #3: Market Food Diversity Index (MFDI)

Most of the enumerators (79%) rated their overall experience with implementing the MFDI as “very good” to “good” (Figure 4c). In the open-ended questions, some enumerators described difficulty in finding some categories of foods, vendors only selling one type of food, and time constraints. Most enumerators (86%) perceived the instructions as sufficient to complete the assessment (Figure 5c). Almost all enumerators (95%) perceived vendors’ reactions to this assessment favorably with responses ranging from “very well/positive” to “well/positive” (Figure 2c).

The main challenge to implementing the MFDI highlighted in the country reports was the difficulty of classifying foods into the different Diet Quality Questionnaire (DQQ) food groups prior to data collection. It was suggested that this challenge could be overcome by providing enumerators with a comprehensive list of foods within each food group before field work. Feedback from one pilot country indicated that partners with nutrition expertise would need to be engaged to develop such a list.

Another challenge faced by implementing teams was vendor sampling. The sampling approach for this assessment involved selecting 10 different pre-specified vendor types and conducting an inventory of all the foods each sold to provide insight into diversity. However, in some cases it was difficult to identify all 10 vendor types in the market. Moreover, in Nigeria, vendors often sold only one type of food (eg, sweet potatoes), which limited the usefulness of the predetermined sampling approach.
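The sampling issues described above (missing vendor types and single-food vendors) can be flagged automatically once inventories are entered. The Python sketch below is a simplified illustration, not the MFDI formula: it counts the distinct food groups observed across the sampled inventories, lists which required vendor types were not found, and flags vendors selling a single food. The data layout, the function name `inventory_summary`, the vendor types, and the food-group labels are all hypothetical.

```python
def inventory_summary(inventories, required_vendor_types):
    """Summarize vendor inventories collected in one market (illustrative only).

    inventories: dict mapping vendor type -> list of (food, food_group) tuples.
    required_vendor_types: vendor types the sampling protocol asks for.
    """
    # Distinct food groups seen anywhere in the sampled inventories
    groups_seen = {group for items in inventories.values() for _, group in items}
    # Required vendor types for which no inventory was recorded
    missing = [v for v in required_vendor_types if v not in inventories]
    # Vendors selling a single food contribute at most one food group each
    single_item = [
        v for v, items in inventories.items() if len({food for food, _ in items}) == 1
    ]
    return {
        "n_food_groups": len(groups_seen),
        "missing_vendor_types": missing,
        "single_item_vendors": single_item,
    }


# Hypothetical vendor types and food-group labels (not the MFEA's 10 types)
REQUIRED = ["grocery shop", "vegetable stall", "fruit stall", "meat stall"]
inventories = {
    "grocery shop": [("rice", "grains"), ("beans", "pulses"), ("biscuits", "sweet baked goods")],
    "vegetable stall": [("cabbage", "other vegetables")],
    "fruit stall": [("mango", "vitamin A-rich fruits"), ("banana", "other fruits")],
}
print(inventory_summary(inventories, REQUIRED))
# {'n_food_groups': 6, 'missing_vendor_types': ['meat stall'], 'single_item_vendors': ['vegetable stall']}
```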

Assessment #4: Healthy Eating Index (HEI) of Food Supply and Assessment #5: Cost of a Healthy Diet (CoHD)

Most enumerators rated their overall experience with implementing the HEI (86%) and CoHD (87%) assessments as “very good” to “good” (Figure 4d and 4e, respectively). In open-ended responses, enumerators’ descriptions of the challenges they faced ranged from “none,” “no challenges,” and “not stressful” to constraints related to time and recruiting vendors. Almost all enumerators (96%) perceived the instructions as sufficient to complete the HEI assessment (Figure 5d), and most (91%) perceived them as sufficient to complete the CoHD assessment (Figure 5e). In addition, most enumerators perceived vendors’ reactions to these assessments favorably, ranging from “very well/positive” to “well/positive” for HEI (91%) and CoHD (93%) (Figure 2d and 2e). Enumerators described food purchases as an incentive for vendors, with some vendors actively engaging in the assessment and conveying their enthusiasm to fellow vendors.

In contrast to the relatively positive responses in the enumerator feedback forms, the country reports described the implementation of the HEI and CoHD as “lengthy,” “not easy to administer,” and “tedious,” although the reports also suggested how the assessments could be modified to become less burdensome. Because enumerators indicated that vendors did not keep records of their stock (“bookkeeping”), this assessment required a substantial amount of food weighing. This was particularly burdensome when food was not sold in standardized weights (eg, sold in piles rather than by the kg). In Timor-Leste, weighing live animals (eg, chickens) was also highlighted as challenging. Three of the 4 country reports mentioned the importance of having appropriate scales for these measurements and the need for better guidance on which scales to use.

In the case of the CoHD, enumerators had difficulty identifying which foods to include in (1) an “absolute least cost” diet and (2) a “lowest cost commonly purchased” diet. While vendors identified the lowest cost commonly purchased foods and conveyed them to the enumerator, the vendor’s absolute least cost food offering was difficult for enumerators to calculate in the field during the vendor inventory, and it was suggested that this calculation be conducted at the data analysis stage rather than during data collection. Another challenge raised in the country reports was the need for quantitative food-based dietary guidelines (FBDGs), which none of the pilot countries had. As a result, guidelines from neighboring countries were substituted.
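Deferring the “absolute least cost” selection to the analysis stage might look like the following Python sketch, assuming enumerators record a price and a weighed (or standardized) quantity for every inventoried item. The `InventoryRecord` structure, the function names, the food groups, the prices, and the daily quantities are all hypothetical; in practice the quantities would come from quantitative FBDGs (or a substitute), and a simple price per kilogram ignores edible portion, so the result is only a rough estimate. The function works on whatever records it is given, so it could be applied to one vendor’s inventory or pooled across a market.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryRecord:
    """One inventoried item (hypothetical record structure)."""
    vendor_id: str
    food: str
    food_group: str
    price: float      # price in local currency for the recorded quantity
    weight_kg: float  # weighed or standardized quantity for that price

    @property
    def price_per_kg(self) -> float:
        return self.price / self.weight_kg


def least_cost_by_group(records: list[InventoryRecord]) -> dict[str, InventoryRecord]:
    """Select the cheapest item per kg within each food group."""
    best: dict[str, InventoryRecord] = {}
    for rec in records:
        current = best.get(rec.food_group)
        if current is None or rec.price_per_kg < current.price_per_kg:
            best[rec.food_group] = rec
    return best


def daily_diet_cost(best: dict[str, InventoryRecord], grams_per_day: dict[str, float]) -> float:
    """Cost of buying the required grams per group at the least-cost item's price."""
    return sum(best[group].price_per_kg * grams / 1000 for group, grams in grams_per_day.items())


# Hypothetical inventory data, for illustration only.
records = [
    InventoryRecord("V1", "local rice", "starchy staples", 120.0, 2.0),
    InventoryRecord("V2", "imported rice", "starchy staples", 90.0, 1.0),
    InventoryRecord("V1", "beans", "legumes", 150.0, 1.5),
    InventoryRecord("V3", "cowpeas", "legumes", 80.0, 1.0),
]
best = least_cost_by_group(records)
grams_per_day = {"starchy staples": 300, "legumes": 100}  # hypothetical, not FBDG values
print({group: rec.food for group, rec in best.items()})
print(daily_diet_cost(best, grams_per_day))
```

With this split, enumerators only record prices and weights in the field; the comparison across items happens afterwards, which removes the need for real-time calculations.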

Assessment #6: Environmental Profile of a Community's Health (EPOCH)

Almost three-quarters of enumerators (74%) rated their overall experience with implementing the EPOCH as “very good” to “good” (Figure 4f). Challenges described in open-ended responses included insufficient time to complete the assessment (“too short”), difficulties with the market walk, and the inability to find advertisements. The majority (86%) perceived the instructions as sufficient to complete the assessment (Figure 5f), and most enumerators (93%) perceived vendors’ reactions to this assessment favorably, ranging from “very well/positive” to “well/positive” (Figure 2f).

The country reports described a consensus among implementing partners that EPOCH was “easy to use” and did not require significant modifications. The one component that created some confusion among research partners was the assessment of fortified packaged foods, for which the instructions and purpose were not clear to enumerators. The reports also highlighted the difficulty of defining community and market boundaries, particularly in less populated rural areas.

Assessment #7: Produce Desirability Tool (ProDes)

Most enumerators (88%) rated their overall experience with implementing ProDes as “very good” to “good” (Figure 4g). In each of the country pilots, some market basket items were not available at the time of data collection, making it difficult to compare scores among locations. The majority (86%) of enumerators perceived the instructions as sufficient to complete the assessment (Figure 5g), and most (88%) perceived vendors’ reactions to this assessment favorably, ranging from “very well/positive” to “well/positive” (Figure 2g). Enumerators described vendors as appreciative of the purchase of their foods, which enabled the scoring of the fruits and vegetables.

The reported feasibility of implementing ProDes varied across the country reports. While one country indicated that it was “easy to administer,” another felt that it was too subjective and would not be “comparable between sites.” In one country, the same enumerator conducted the ProDes assessments in every market to improve consistency. The biggest challenge, noted in all 4 country reports, was that the predetermined fruits and vegetables were not available in every market, which precluded evaluation of some market basket items. Although each country team identified during the training the commonly consumed fruits and vegetables that they believed would be available in the markets at the time of data collection, in many cases these items were not available during data collection. In Timor-Leste, the scarcity of fruits sold in markets during the rainy season made this assessment even more difficult.

Discussion

We assessed the acceptability, feasibility, and adaptability of implementing a suite of market food environment assessments in diverse LMIC market contexts and found that enumerators and research partners described the assessments relatively favorably. However, they noted some limitations related to the time burden of data collection, the data collection modality, and the practical implementation of the assessments.

Lessons Learned From Implementing the MFEA

Based on the enumerator feedback forms, the assessment viewed least favorably by all enumeration teams was Market Mapping, and reviews of the other assessments were mixed. The Seasonal Food Availability Calendar was viewed most favorably by all enumeration teams and was the only assessment that no enumerator rated as difficult. Despite a few challenges, particularly with the HEI and CoHD, the remaining assessments were similar in receiving relatively high frequencies of “very good” to “good” ratings. For example, the MFDI was easy to interpret, apart from the difficulty some enumerators had categorizing foods into food groups. In addition to its ease of interpretation, an advantage of this assessment is that it can identify the availability of food groups in markets according to both the MDD-W 19 and the DQQ, 20 which can then be readily compared with dietary diversity at the consumer level.

While the adapted HEI received relatively positive ratings in the enumerator feedback forms, the country reports highlighted several challenges related to its implementation. The HEI had been identified as a promising tool for assessing the quantity of the food supply needed to meet recommended guidelines, 5 yet it was burdensome because it required ascertaining the total inventories of vendors who have limited (if any) documentation of their stock. While this assessment may be feasible in contexts where vendors document their inventory in detail, it adds considerable time where vendors do not keep detailed inventory records and therefore may not be appropriate in all contexts.

We found the adapted CoHD to be easy to implement; however, in several cases enumerators were confused when asked to identify least cost foods during data collection, which required real-time calculations. This challenge could be overcome by using a tablet that automates unit standardization and calculations. It is important to note that, given the methodology used, the adapted CoHD assessment provides a rough estimate of food prices in markets. Those who want more precise information about food prices that incorporates the edible weight of food may consider using the Food Prices for Nutrition protocols, 21 which include a guide for collecting and analyzing food price data (https://sites.tufts.edu/foodpricesfornutrition/tools/).

The adapted EPOCH assessment was viewed in the country reports as straightforward to implement, except for the component related to fortified foods, which caused some confusion among the teams. The adapted ProDes assessment was generally viewed positively; however, enumerators recounted some difficulties with the perceived subjectivity of the fruit and vegetable ratings.
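The kind of unit standardization that a tablet form could automate might be sketched in Python as below: a price quoted per pile is converted into a price per kilogram using the weights of a few sample piles. The function name, the optional `edible_fraction` parameter, and the example values are hypothetical. Edible-portion factors are not part of the adapted CoHD described here; the parameter only hints at how more detailed approaches, such as the Food Prices for Nutrition protocols, account for edible weight.

```python
from statistics import mean


def price_per_kg_from_piles(
    pile_price: float,
    sample_weights_g: list[float],
    edible_fraction: float = 1.0,
) -> float:
    """Convert a per-pile price to a price per kg (optionally per edible kg).

    sample_weights_g: weights in grams of a few piles weighed by the enumerator.
    edible_fraction: hypothetical share of purchased weight that is edible;
    1.0 means no edible-portion adjustment.
    """
    if not sample_weights_g or any(w <= 0 for w in sample_weights_g):
        raise ValueError("need at least one positive sample weight")
    if not 0 < edible_fraction <= 1:
        raise ValueError("edible_fraction must be in (0, 1]")
    mean_pile_kg = mean(sample_weights_g) / 1000  # average pile weight in kg
    return pile_price / (mean_pile_kg * edible_fraction)


# Hypothetical example: a pile sells for 50 (local currency); three piles weighed.
print(price_per_kg_from_piles(50, [480, 520, 500]))  # prints 100.0
print(price_per_kg_from_piles(50, [480, 520, 500], edible_fraction=0.8))
```

Averaging several weighed piles, rather than weighing one, reduces the influence of a single unusually large or small pile; the input checks mirror the kind of programmed constraints an electronic form could enforce.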

Recommendations for Implementing the MFEA

Based on our pilot work, we identified both strengths and weaknesses of each assessment. Recognizing that in some resource-constrained settings it may be necessary to select key assessments rather than implement the entire MFEA package, we recommend the Seasonal Calendar or MFDI to measure food availability, the CoHD as a standalone assessment (rather than in combination with the HEI of the Food Supply) to measure food prices, and the EPOCH assessment to measure food marketing. In addition, it may be beneficial to complement the MFEA with consumer-focused assessments to better understand the interactions between consumers and their food environments. However, the selection of assessments should be based on the needs of the researchers or practitioners. In some cases, it may only be necessary to capture a single dimension of the food environment, in which case the assessment targeting that dimension could be used on its own. Lastly, it is important to consider the depth of information required for a given activity and how the information will be used.

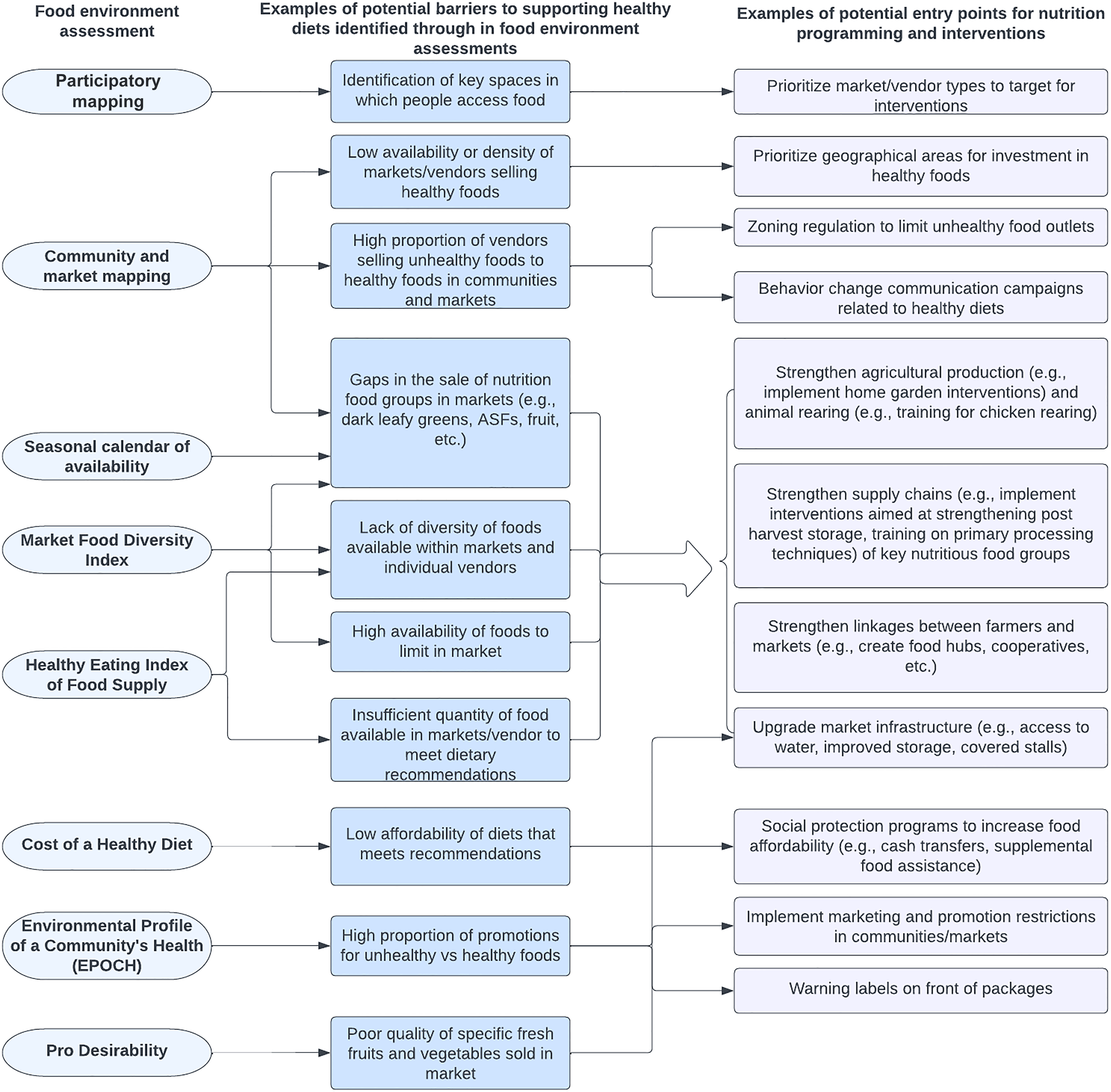

How Tools Could Inform Programs and Interventions

The suite of food environment assessments piloted in this study has the potential to inform both food environment research and food and nutrition programs and interventions, including by identifying specific food environment dimensions where practitioners can intervene to have the greatest potential impact on food environments to support safe and nutritious diets. Figure 6 provides examples of how these assessments can inform nutrition programming and interventions targeting individuals, households, and the food environment itself. This study supports the conclusion that the MFEA is relatively easy to implement and that most activity teams would likely be able to include the assessments in formative work to inform program design, even with little prior food environment research expertise.

Figure 6. An overview of the ways in which the MFEA can inform potential entry points for nutrition programming and interventions.

Limitations of MFEA

One potential limitation of the MFEA is its focus on market food environments, and open-air markets in particular. While markets are a critical food environment in LMICs, people are increasingly accessing foods from other market food environments (eg, convenience stores and supermarkets). Economic shocks, the COVID-19 pandemic, conflict, and other external shocks can lead to market closures and changes in where consumers access fresh food. 22 Additionally, people access food from wild and cultivated environments and from kin and community.6,23 To address this gap, the MFEA package could be modified to apply across different food environment types and/or combined with assessments designed to capture dimensions of natural food environments. Moreover, combining these assessments with consumer-facing assessments to better understand how consumers’ perceptions influence their food choices would increase the richness of the data obtained and help to better target programs and interventions aimed at improving diets.

A second limitation is the lack of data collected on food safety. Food safety issues, such as overuse of pesticides on fruits and vegetables, preparation methods for street foods, and the sale of expired foods, are often raised by consumers when discussing the drivers of their food choices.6,24,25 A third limitation relates to the data collection modality: enumerators implementing the assessments across all 4 pilot countries expressed a desire for digital rather than paper-based data collection. Collecting data electronically rather than on paper has several comparative advantages, 26 including greater ease of data collection, the potential for improved data quality (through programmed constraints on the device), and fewer data entry challenges. Lastly, we found that the lack of quantified FBDGs in the pilot countries led to reliance on neighboring countries’ dietary guidelines, which was suboptimal. However, the final MFEA guidelines suggest using the Healthy Diet Basket food groups when in-country FBDGs do not exist.

Study Limitations

While this study has several strengths, including being the first study (to the authors’ knowledge) to report on the feasibility of implementing a package of food environment assessments in LMICs, it also has some weaknesses. Our data on the feasibility of implementing the 7 assessments rely on partner reports, and it is possible that some research partners did not feel comfortable sharing their honest reflections on the assessments. However, based on the constructive feedback received through the enumerator feedback forms and country reports, we anticipate that this bias was limited. Another possible limitation is the small sample sizes and inconsistent evaluation across assessments. For example, some assessments had more enumerator evaluations (n = 20), while others had fewer (n = 8). Also, in some cases multiple assessments were evaluated on a single form, making it impossible to disentangle nuances specific to each individual assessment. A further limitation was our inability to include the enumerator feedback forms from Timor-Leste; however, the country report was very detailed and provided many recommendations for improving the assessments.

Conclusions

Overall, we found the package of food environment assessments feasible to implement with additional modifications based on the pilot study learnings. While some assessments were more feasible to implement than others, we anticipate that the package will be useful for informing nutrition programming and interventions in LMIC contexts where people rely largely on open-air markets to purchase food. Moreover, researchers and practitioners focused on measuring specific dimensions of the food environment could choose to implement only the tools that capture those dimensions, rather than the full suite of assessments. We recommend adopting digital data collection templates and guidance for future data collection to improve data quality and reduce the burden of data entry. The suite of assessments can be strengthened by adding tools that collect information on different food environment types as well as consumers’ perspectives. Given the changing nature of food environments in LMICs, we anticipate that the MFEA will need to continue to be adapted, to some extent, to different contexts. We also recommend ongoing testing of the assessments in diverse contexts such as urban informal settlements and areas surrounding schools.

Supplemental Material

sj-docx-1-fnb-10.1177_03795721241296185 - Supplemental material for Piloting Market Food Environment Assessments in LMICs: A Feasibility Assessment and Lessons Learned

Supplemental material, sj-docx-1-fnb-10.1177_03795721241296185 for Piloting Market Food Environment Assessments in LMICs: A Feasibility Assessment and Lessons Learned by Shauna Downs, Teresa Warne, Sarah McClung, Chris Vogliano, Noni Alexander, Gina Kennedy, Selena Ahmed and Jennifer Crum in Food and Nutrition Bulletin

Supplemental Material

sj-docx-2-fnb-10.1177_03795721241296185 - Supplemental material for Piloting Market Food Environment Assessments in LMICs: A Feasibility Assessment and Lessons Learned

Supplemental material, sj-docx-2-fnb-10.1177_03795721241296185 for Piloting Market Food Environment Assessments in LMICs: A Feasibility Assessment and Lessons Learned by Shauna Downs, Teresa Warne, Sarah McClung, Chris Vogliano, Noni Alexander, Gina Kennedy, Selena Ahmed and Jennifer Crum in Food and Nutrition Bulletin

Footnotes

Acknowledgements

SD, TW, SM, CV, NA, GK, SA, and JC designed the study of feasibility of the MFEA detailed in this paper. TW conducted data analysis and created the tables and figures with support from SM, CV, and NA. SD, SA, GK, and JC conceptualized the broader research effort adapting food environment assessments for use in LMICs. SD, TW, and CV led the writing of the manuscript with support from SM and NA. JC and GK provided reviews of manuscript drafts. Authors also acknowledge the contributions of research partners MYPE Consultores in Honduras, ADARA in Liberia, Ipsos in Nigeria, and Bridging Peoples in Timor-Leste. Additionally, authors acknowledge the support of Christopher Rue and Ingrid Weiss at the USAID Bureau for Resilience, Environment, and Food Security.

Author's note

At the time that this work was conducted, Sarah McClung and Gina Kennedy were also affiliated with Global Alliance for Improved Nutrition, Chris Vogliano was affiliated with Helen Keller International, and Noni Alexander and Jennifer Crum were affiliated with JSI Research & Training Institute, Inc. Selena Ahmed is currently affiliated with American Heart Association.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: The research was supported by the US Agency for International Development (USAID; contract number 7200AA18C00070; USAID Advancing Nutrition).

Supplemental Material

Supplemental material for this article is available online.

References
