Abstract

Generative AI has seen rapid development and adoption in educational settings, presenting both benefits and challenges for universities. This paper examines the response of UK universities, particularly the Russell Group of research-led universities, in developing policies and guidance on the use of generative AI. The analysis covers the implementation of the Russell Group's principles across member institutions, addressing areas such as academic integrity, assessment practices, student use, and guidance for staff. It also explores the varying levels of policy development at non-Russell Group universities, finding that some institutions may lack the resources to address this issue. The paper situates these institutional responses within theoretical frameworks, highlighting how generative AI has disrupted established practices in higher education. It concludes by emphasizing the need for ongoing collaboration, policy development, and responsiveness to student perspectives as universities navigate this technology.

Introduction

Background on generative artificial intelligence (AI)

Generative AI has made headlines around the world since late 2022, following the launch of ChatGPT by OpenAI, a start-up partly financed by Microsoft. The system was made freely available online, enabling millions of people to experiment with its capabilities. OpenAI has since released a more powerful version, GPT-4, access to which is limited without a paid subscription.

Microsoft incorporated successive versions of ChatGPT into its own chatbot service, now called Copilot. Google launched a similar product, originally Bard, now called Gemini, which is continually updated and learns from user inputs, web searches and Google’s Knowledge Graph. Claude, from Anthropic, offers similar functionality through free and paid versions. The situation is evolving rapidly, and the landscape of generative AI may have changed considerably by the time this article is published.

These systems can produce bodies of text, analyse content, perform mathematical operations and generate programming code. Consequently, students are using them for essays and coursework, raising plagiarism concerns when submitted work is not entirely the student's own (Barnett, 2023; Dwivedi et al., 2023).

JISC (2023) points out that AI was already delivering value in education before ChatGPT, citing examples including ‘Bolton College’s Ada and FirstPass, adaptive learning with CENTURY Tech at Basingstoke College of Technology, the Beacon digital coach in Staffordshire University, and the Taylor digital assistant in the Open University’. JISC's (2024: 11) study on student perceptions found that ‘Students/Learners have clearly articulated the need for comprehensive support from their institutions, including access to generative AI tools … the development of critical information literacy skills, and guidance on ethical use’. Shepherd’s (2023) report for JISC outlines the terms of use of generative AI systems, noting variations in whether user inputs and the resulting outputs are used for further training.

Institutional response

Universities’ responses to this challenge have varied: Oxford and Cambridge initially banned ChatGPT use (Burnett, 2023) before modifying their stance to approve general use while banning it for essay writing (Stephens, 2023). Overall, universities are formulating policies to address these concerns around academic standards.

The Russell Group of 24 research-led UK universities collaboratively issued a statement of principles (Russell Group, 2023), including:

Supporting students and staff to become AI-literate;
Equipping staff to support appropriate student use;
Adapting teaching and assessment to incorporate ethical AI use;
Ensuring academic rigour and integrity;
Working collaboratively to share best practices.

Each principle is elaborated on, addressing concerns such as plagiarism risks when ‘generative AI tools re-present information developed by others’ (Russell Group, 2023: 2).

In the USA, the Department of Education's Office of Educational Technology (2023) produced a report on teaching and learning with AI, with principles applicable to tertiary education. The UK Department for Education’s (2023: 35) evidence summary concluded: ‘Increased academic misconduct, pupil over-reliance on AI, and data security and privacy issues were prominent concerns’ with ‘a clear appetite among respondents for support and intervention’.

The International Association of Universities’ survey on ‘the digital transformation of higher education’ (European University Association, 2024) includes questions on generative AI applications. The European Commission Directorate-General for Research and Innovation’s (2024) ‘Living guidelines on the responsible use of generative AI in research’ addresses ethical use, legislation, transparency and intellectual property rights. UNESCO's (2021) publication AI and Education, while predating recent developments, remains relevant in its concern for enhancing inclusion, quality learning and educational management.

Research objectives

The objectives of the research reported here are twofold: first, to determine how far the Russell Group principles have been applied by members of the Russell Group of universities and, second, to discover the extent to which policymaking on generative AI has been undertaken in universities that are not members of the Russell Group. The research may also be relevant to institutions worldwide that are grappling with how to manage generative AI.

Literature review

Current state of research on generative AI policies

A search of the Web of Science for articles using the search terms ‘generative AI’, ‘policy OR policies’, ‘universities OR higher education’ and ‘England OR United Kingdom’ produced only two results, with 47 articles retrieved when geographical limitations were removed. After filtering out irrelevant articles, only 16 remained, all published in 2023 or 2024. This limited literature reflects the recency of generative AI as an institutional concern.
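The search described above can be expressed as a Boolean query. The paper does not report the exact query string, so the sketch below is an assumption: it builds a Web of Science advanced-search string using the TS= (topic) field tag, and the helper names `or_group` and `build_topic_query` are hypothetical. Dropping the last concept corresponds to removing the geographical restriction.

```python
def or_group(terms: list[str]) -> str:
    """Join alternative terms with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_topic_query(concepts: list[list[str]]) -> str:
    """AND together one topic (TS=) clause per concept."""
    return " AND ".join(f"TS={or_group(terms)}" for terms in concepts)


# Search concepts as listed in the text; the last one is the geographical restriction.
concepts = [
    ["generative AI"],
    ["policy", "policies"],
    ["universities", "higher education"],
    ["England", "United Kingdom"],
]

print(build_topic_query(concepts))      # restricted to England/UK
print(build_topic_query(concepts[:3]))  # geographical restriction removed
```

Keeping each concept as its own OR group makes it easy to see which restriction was relaxed when the result count rose from two to 47.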

Challenges to traditional academic models and integrity

Several researchers highlight how generative AI fundamentally challenges established educational paradigms. Walczak and Cellary (2023) note that generative AI presents a challenge to the role of higher education teachers and the traditional model of teaching and learning. Michel-Villarreal et al. (2023) argue that ChatGPT's impact on universities will be revolutionary, raising concerns about academic integrity, plagiarism detection and critical thinking skills. Their ‘interview’ with ChatGPT using ‘thing ethnography’ (Nicenboim et al., 2020) identified key policy priorities, including policy development, education and training, collaboration, research, ethical review processes and continuous monitoring.

Boháček (2023: 4) illustrated these practical concerns by comparing genuine high-school student essays with ChatGPT-generated alternatives, finding that ‘ChatGPT can quickly produce high-school-level coursework that peers consider better than human-written text, even in a low-resourced language like Czech’. Importantly, AI text detectors failed to identify these essays as AI-generated, a finding that underlines the need for robust institutional policies.

In a focused study on academic-integrity policies, Perkins and Roe (2023) analysed 142 international policies and ‘revealed a significant gap in these policies concerning the role and use of AI tools and other emerging technologies in academia’ (12). They recommend that policies ‘should explicitly outline the role of AI tools and other emerging technologies in academia, and clarify the ethical boundaries of using these tools in academic work’ (12).

Group perspectives and generational divisions

Research reveals significant variation in how different interested parties perceive generative AI. Chan and Lee (2023) examined the generation gap between Generation Z students and Generations X and Y teachers, finding students were generally positive about AI benefits while teachers expressed concerns about over-reliance and ethical considerations. They conclude that ‘educational institutions must foster critical thinking, digital literacy, and AI literacy skills in students, ensuring that they are able to evaluate the credibility of information and use GenAI technologies in a responsible and ethical manner’ (Chan and Lee, 2023: 18).

Chan and Hu (2023) similarly studied Hong Kong university students’ perceptions, finding generally positive attitudes but significant concerns regarding accuracy, privacy, ethical implications and the lack of clear institutional policies. They conclude that institutions should address these concerns by ‘harnessing the power of GenAI [generative AI] to enhance teaching and learning outcomes while also preparing students for the future workforce in the AI-era’ (Chan and Hu, 2023: 15).

From the perspective of educators, Chiu (2023) studied 88 schoolteachers and educational leaders regarding generative AI's impact on education. The findings address issues across student learning, teacher training, assessment procedures and administration, suggesting the need for new assessment methods, revised curricula and improved technology training.

Policy development frameworks and equity considerations

When this author asked Google Bard about important policy concerns for UK universities responding to generative AI, the system identified five categories that parallel the Russell Group statement: academic integrity and assessment design; ethical AI use and digital literacy; teacher training and support; transparency and communication; and ongoing research and evaluation.

Bakare et al. (2023) highlight equity considerations, noting the significant skills gap between developed and developing countries that must be addressed for the effective and ethical use of these technologies. The most comprehensive policy framework comes from Chan (2023), who surveyed 457 students and 180 teachers and administrators across Hong Kong universities. The findings suggest ‘an openness to adopting generative AI technologies in higher education and a recognition of the potential advantages and challenges’ (Chan, 2023: 12). Chan developed a three-dimensional policy framework: the governance dimension (involving senior management and ensuring equity in access); the pedagogical dimension (involving teachers and addressing assessment and employment preparation); and the operational dimension (involving teachers and information technology staff and providing training and support).

Conclusion

While the research literature on generative AI policymaking remains limited, the rapid rate of publication suggests growing interest as new systems are implemented. The reviewed studies consistently emphasize the need for comprehensive institutional policies addressing academic integrity, stakeholder training, ethical guidelines and equitable implementation. Chan’s (2023) three-dimensional framework provides perhaps the most useful structure for institutions developing their own policies.

Theoretical frameworks

A number of theories seek to explain policymaking in organizations. Institutional theory (Meyer and Rowan, 1977), for example, suggests that policy is influenced by external factors, such as laws, regulations and social norms. The external factor in the case examined here is a technological innovation of a kind that organizations did not experience in the 1970s but that is of primary significance today. Meyer and Rowan (1977) suggest that, among other things, organizational rules and policies aim to increase the institution's chances of survival. The threats to higher education from generative AI are already the subject of speculation, focusing on the loss of teaching jobs as AI takes over from teachers, and on problems of equity and access arising from a lack of infrastructure or unequal access to the technology (e.g. Chan and Tsi, 2023). However, judging from the documents considered earlier, institutional survival does not seem to have been a primary concern.

Different interest groups certainly exist in relation to higher education: teachers, researchers (often the same people), students, administrators and central government officers. This might suggest that advocacy coalition theory (Sabatier and Weible, 2007) would offer an appropriate framework. This theory assumes that policy actors are members of coalitions of interested parties – in the case of higher education, this would be students, teachers, administrators and technical staff. These groups may have different views on the policy directions to be taken by the institution and the policy that emerges will reflect these views, or at least the views of those with sufficient power to influence policy. In the case of generative AI, however, the research that exists at this point suggests quite a high degree of unanimity among the different interest groups on the kinds of policies needed.

Rational choice theory (Scott, 2000) assumes that organizations make rational decisions by weighing costs and benefits, and that policies are developed on the basis of an organization's self-interest. The theory originated in the work of the economist and philosopher Adam Smith (1776) as an explanation of individual human behaviour rather than institutional behaviour. In the 20th century, however, it was adopted by economists, sociologists, organizational theorists and game theorists to explain both individual and organizational behaviour. UK universities appear to be applying rational choice to some degree in developing their generative AI policies. Clearly, training students and teachers in the ethical use of these systems will have costs and, at a time of increasing financial constraint, there are unlikely to be new sources of income to cover them. Some rational analysis of costs and benefits is therefore needed if the benefits are to be shown to yield cost reductions in some part of the educational process.

Policy diffusion theory originally evolved to explain how policies adopted in one branch of government or in one country spread to other branches or countries. It drew on Everett Rogers' (1962) diffusion of innovation theory and the notion of interdependency among organizations. Research has identified four mechanisms for the diffusion of policy: learning, competition, coercion and emulation. The core of policy diffusion, however, relates to how policymakers react to decisions made by other units of government or other organizations. In the case of the Russell Group principles, the action to develop policy does not appear to have been driven by policies developed elsewhere, but by the significance of the potential problems of academic ethics, assessment and so on. The case of the non-Russell Group universities is rather different, and they might be seen as learning from the work of the Russell Group and/or emulating the Russell Group's policies.

Punctuated equilibrium theory offers another perspective on policymaking, emphasizing that policy change is not always gradual but can occur in sudden, transformative shifts. Originating in evolutionary biology (Eldredge and Gould, 1972), the concept was adapted to public policy by Baumgartner et al. (1993), who argued that policy systems often remain stable for long periods owing to institutional inertia and resistance to change, but are punctuated by episodes of rapid transformation triggered by shifts in public attention, external shocks or the reconfiguration of power dynamics. In UK higher education, the most recent such episode was probably the introduction of the Research Assessment Exercise (Morgan, 2004), which radically changed the research culture in UK universities. Today, however, it would be difficult to argue that UK universities are in a state of equilibrium, given the tensions arising from financial problems, the marketization of higher education and the sudden reduction in overseas student numbers.

Method

Data collection

The websites of the Russell Group universities were scanned to locate policy documents on the application of generative AI systems. As noted above, the Russell Group has prepared a general policy document on this topic (Russell Group, 2023). As each web page was located, its subject content was assessed to identify the broad themes discussed. In general, the pages reflected the topics dealt with in the Russell Group principles, although, occasionally, a page would deal with more than one topic – for example, a page intended for undergraduate students could deal with both the ethical and unethical use of generative AI. No more detailed thematic analysis was conducted, since greater detail was not required to satisfy the research objectives.

As a result of this process, the documents were found to deal with the following topics: policy on generative AI in general; academic integrity; assessment; undergraduate use; postgraduate and research use; and academic staff use. Variations on these topic labels often appeared as headings in the retrieved documents, and the labels are used to organize the results section below.

The web pages of a second set of 24 universities outside the Russell Group were compared thematically with the first group. The second group of universities was chosen by random selection (using an online random number generator). The aim in undertaking this second round of search and analysis was to determine the extent to which the Russell Group's principles had spread beyond the members of the Russell Group. There are, after all, 164 university institutions in the UK, and the Russell Group represents just under 15% of this total. An analysis of the web pages of a sample of the other universities, therefore, gives us additional evidence on the spread of policies on generative AI.
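The random selection of the comparison group can be sketched as follows. This is an illustration under stated assumptions, not the procedure actually used: the author used an online random number generator, whereas this sketch uses Python's `random.sample` to draw without replacement, and the institution names are hypothetical placeholders for the 140 UK universities outside the Russell Group (164 minus 24).

```python
import random
from typing import Optional

TOTAL_UK_UNIVERSITIES = 164  # figure given in the text
RUSSELL_GROUP_SIZE = 24      # figure given in the text
SAMPLE_SIZE = 24             # size of the comparison sample


def draw_comparison_sample(candidates: list[str], k: int,
                           seed: Optional[int] = None) -> list[str]:
    """Draw k institutions uniformly at random, without replacement."""
    rng = random.Random(seed)
    return rng.sample(candidates, k)


# Hypothetical placeholder names for the UK universities outside the Russell Group.
non_rg = [f"Institution {i:03d}"
          for i in range(1, TOTAL_UK_UNIVERSITIES - RUSSELL_GROUP_SIZE + 1)]

sample = draw_comparison_sample(non_rg, SAMPLE_SIZE, seed=2024)
share_rg = RUSSELL_GROUP_SIZE / TOTAL_UK_UNIVERSITIES

print(len(non_rg), len(sample))  # pool size and sample size
print(f"Russell Group share of UK institutions: {share_rg:.1%}")
```

Fixing the seed makes the draw reproducible. The last computed value confirms that the Russell Group's 24 members are about 14.6% of the 164 UK institutions, i.e. just under 15%.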

The limitation of this method is that not all internal documentation is on a university's publicly available website. Some universities use restricted intranets for their internal documentation or simply retain it on common, password-protected drives, and, of course, information on AI for students may be kept on online-learning platforms. These strategies clearly prevent external access to policy documents.

Appendix 1 lists the documents analysed, with links to copies in the Internet Archive.

Results

Russell Group universities

As noted above, some of the universities in the Russell Group have password-controlled areas of their websites, which, presumably, contain information thought not to be of public interest, and are restricted to access by students and staff. Others have the information on online-learning platforms, which are inaccessible to external viewers. Consequently, no information was available for five of the universities, including Oxford and Cambridge. Two universities provide only minimal information: Queen Mary University of London and the University of Nottingham; again, it is assumed that relevant information exists on password-protected systems. The documents were analysed in terms of the following themes: general policies, academic integrity, assessment, undergraduate use, use by academic staff, and postgraduate and research use.

General policies

Understandably, given that these institutions are members of the Russell Group, the overall policy direction follows the Russell Group principles: universities set up working parties to address the problems of plagiarism and the role of AI in modes of assessment; university libraries produce guidance on correct citation and referencing practices; study skills units develop policies on plagiarism and effective AI use, often with case studies; and individual departments set up task forces to identify best practice for their discipline. Collaboration takes place not only between academic and administrative departments and committees but also with students. Academic staff are advised to discuss the issues with students (e.g. Queen Mary University of London, n.d. a), and some institutions specifically involve students in the policymaking process; for example, Queen's University Belfast (n.d.) has a video showing student reactions to generative AI.

Academic integrity

In general, the universities recognize the benefits that generative AI can bring to student learning and seek to strike a balance that avoids misuse. The most commonly perceived misuse is a form of plagiarism in which the output of the AI system is presented as the student's own work. The University of Manchester, for example, notes:

Our University's position is that when used appropriately AI tools have the potential to enhance teaching and learning, and can support inclusivity and accessibility. Output from AI systems must be treated in the same manner by staff and students as work created by another person or persons, i.e., used critically and with permitted license, and cited and acknowledged appropriately. (University of Manchester, 2023)

Assessment

There is an obvious connection between academic integrity and assessment, in that plagiarism bears on both. The University of Glasgow (n.d.) suggests that assessments relying on memorization and the regurgitation of facts may make AI-enabled plagiarism more likely. It proposes a paradigm shift in which assessments could require students to critically analyse sources, integrate their own ideas and evaluate the credibility of sources.

King's College London (n.d. b) emphasizes the ethical use of AI tools in assessment procedures, while agreeing in general with Glasgow's position. King's is concerned about the inherent biases in the tools, which have been developed outside the higher education environment. The University of Warwick takes a different approach, drawing attention to the relevance of assessment design principles and suggesting:

Whilst we should consider incorporating technologies such as AI in our assessment approaches to keep our assessments relevant and aligned to the competencies and attributes of students, care should be taken only to include technologies where they enrich the student experience. (Fischer et al., 2023: 46)

The Working Group on Artificial Intelligence Tools in Teaching and Assessment at Imperial College London has produced assessment guidance that references the Russell Group principles and recommends:

To ensure academic standards are maintained, a department may choose to conduct ‘authenticity interviews’ on their submitted assessments. This means asking students to attend an oral examination on their submitted work to ensure its authenticity, by asking them about the subject or how they approached their assignment. (Working Group on Artificial Intelligence, 2023: 4)

In all cases, it is evident that work on assessing the impact and potential problems relating to the use of generative AI in educational assessment is ongoing. Virtually all of the institutions note that the documents they have produced are part of a work in progress and that, as experience with these systems and their use and misuse grows, further developments in policy may be expected.

Undergraduate use

All of the Russell Group universities’ websites include information for undergraduate students. Understandably, the focus is on the potential misuse of generative AI that can be labelled ‘plagiarism’, while the potential benefits are also emphasized. The direction is towards promoting responsible use of the technology rather than forbidding its use. The University of Edinburgh (2023), for example, emphasizes the importance of students’ work being original while acknowledging the potential benefits of AI, and King’s College London (n.d. a) points to the development of AI literacy as a key skill. Similar advice is provided by the University of Birmingham (2024a), Queen Mary University of London (n.d. b), the University of Liverpool (2024) and most of the others. Some universities have rather scattered resources, while others have produced well-structured ones.

Under the heading ‘How to use Gen AI for assessment’, the University of Sheffield has an extensive page created by the Academic Skills Centre (2024). The page defines generative AI and explains its uses, giving examples of prompt engineering, explaining the limitations, defining unfair use and providing further reading links. There is another page on generative AI for essay and report writing, which notes that legitimate use may be for:

Scoping and background research: identifying possible avenues to explore further. Planning and structuring your work: suggesting how you might present your ideas as part of a coherent argument or report. Translating: helping you to develop your English language skills. Improving your spelling, grammar and punctuation. Proofreading: providing feedback on your writing and suggestions for improvements. (University of Sheffield, n.d.)

There is a page on the University Library (2024) site that provides information on the unfair use of AI, and tells us that ‘the University uses Google Apps, as part of this Gemini is the supported Gen AI tool’.

Of course, universities are aware that they need to prepare students to work in contexts where generative AI will be used to support, or even replace, tasks in the workplace. The Careers Service at Imperial College London, for example, provides guidance on using generative AI to help prepare curricula vitae (CVs) and job applications, noting:

One way generative AI could help with this would be to upload your CV and the text from the job advert and ask the interface where your skills match up. It might identify things you were unaware of, or it might help you identify where your skills aren’t coming through enough in your CV. (Careers Service, 2024)

All of the universities provide guidance on how to cite and reference the use of generative AI. This is often provided on the university library pages and may draw attention to services such as Cite Them Right.¹

Use by academic staff

The Russell Group universities are as concerned about the use of generative AI by academic staff as they are about its use by students. The aim is generally to take advantage of the potential benefits while minimizing the possible risks to ethical conduct and academic integrity, and the policies tend to permit experimentation with the technology. The University of Edinburgh, for example, allows staff to use AI:

The University allows staff to use generative AI for their work and work outputs, providing that you do not claim work generated by AI as your own original work. AI can be an excellent tool as an auxiliary aid in creating that work. (University of Edinburgh, n.d.)

The university also offers guidance on associated legal issues, pointing out, for example, that AI systems may infringe intellectual property rights and may not comply with the UK Data Protection Act, and that personal data should therefore not be entered into them.

King's College London, in its ‘Micro-level guidance’, focuses on AI literacy, noting:

It is important to note that AI literacy is something that we will all need to grow and that even those with extensive computer science expertise are still finding their way through the implications of generative AI on teaching and assessment practices. (King’s College London, n.d. c)

The guidance encourages experimentation with different generative AI systems, noting that the university's use of Microsoft's Copilot allows increased data security. It also suggests that all modes of assessment will need a ‘radical overhaul’.

The web pages of the universities of Leeds, Liverpool, Manchester and Newcastle, among others, offer guidance very similar to that from King's College London and the University of Edinburgh. Inevitably, these institutions are wrestling with the same problems, and Newcastle University (2024) notes the need for collaboration: ‘We recognise the challenges that lie ahead and will continue to value the input of others, along with contributing expertise to the national and international discussions about generative AI and its applications within teaching, learning, assessment and support’.

Postgraduate and research use

Specific guidance for postgraduate students and research staff is less prominent on the Russell Group sites than guidance for students and staff in general. This is understandable, since the general guidance also applies to these groups. There is one significant difference, however: postgraduate students and researchers will be preparing a thesis, a series of journal articles or a report to a funding agency, and the misuse of generative AI for these purposes could seriously damage the reputation of their institution.

Some universities do have specific guidance, however. The University of Birmingham, for example, has a page relating to the use of generative AI in the preparation of postgraduate-taught (PGT) course dissertations. The basic instruction is quite clear: ‘Students should not submit, within any part of their PGT dissertation, material or content that has been generated by AI tools unless their use has been specifically permitted by the School’ (University of Birmingham, 2024b). The document also points out some of the hazards of using generative AI tools, even in those circumstances where their use is permitted, such as the potential for bias in the material presented, uncertainty over the intellectual property status of the material, and the possibility that the system itself is plagiarizing.

The University of York's ‘Use of generative artificial intelligence in PGR [postgraduate research] programmes’ is similarly cautious in its approach. Postgraduate researchers should ‘avoid or be extremely cautious about using generative AI’ in their research (University of York, n.d.). In common with other institutions, the guidance emphasizes that AI use should be meticulously recorded and explicitly declared in any document resulting from the research.

The University of Manchester's information on the topic is part of its ‘Code of practice for postgraduate research degrees’ and appears to offer a little more latitude regarding the use of generative AI, noting: ‘The appropriate uses of generative AI tools are likely to differ between academic disciplines and so engagement and dialogue between you and your supervisor(s) is important in establishing a shared understanding’ (University of Manchester, n.d.). However, the guidance on the appropriate use and the hazards and risks of using generative AI is spelled out in much the same way as in the Birmingham and York documents.

The use of generative AI in other research contexts is rarely mentioned in the documents. However, the University of Bristol Library (2024) has a page, ‘Using AI in research’, that is rather basic, as the policies depend on the schools and departments rather than the university as a whole.

The University of Leeds specifically addresses the research activities of academic staff, noting:

Staff engaged in the development or use of AI in any type of research should ensure that the AI usage is ethical and conforms to the University of Leeds regulations, including where appropriate and necessary by obtaining authorisation from a faculty research ethics committee. (University of Leeds, 2024)

Non-Russell Group universities

As noted earlier, a sample was drawn of 24 universities outside the Russell Group. However, although the Russell Group may be seen as having a degree of homogeneity in self-identifying as research-led universities, no such homogeneity exists for institutions outside the group. Boliver (2015) identified a set of distinct clusters of UK universities, using data from the Higher Education Statistics Agency. The key variables relating to the clusters included research income, research assessment scores, spend per student on academic services, and endowment and investment income. She found that Oxford and Cambridge constituted Cluster 1, while the other Russell Group members belonged to Cluster 2, along with other ‘old’ universities (i.e., those established before 1992, such as the universities of Aberdeen, Bath, Dundee, Kent and St Andrews). Cluster 3 universities, which are a mixture of ‘old’ and ‘new’ universities, have less research income than those in Cluster 2, fewer postgraduate students and lower research assessment scores. Cluster 4 consists of the economically poorest universities, which accept students with lower entry scores than the average and have less favourable student–staff ratios.

The 24 universities in the sample of universities outside the Russell Group were distributed over all of Boliver's clusters (except Cluster 1), with four in Cluster 2, 13 in Cluster 3, and seven in Cluster 4. The extent to which information on generative AI was available varied according to the cluster. Thus, of the four universities in Cluster 2, two (50%) had no publicly available information; of the 13 in Cluster 3, five (38%) had no publicly available information; and of the seven in Cluster 4, three (43%) had nothing publicly available. Of the Russell Group universities, considered earlier, 22 were in Boliver's Cluster 2 (Oxford and Cambridge were in Cluster 1), and, by comparison, only three (14%) had no publicly available information. While these figures need to be treated with caution, because the number of institutions involved is so small, they do suggest that the economically poorer universities are finding it more difficult to devote resources to determining policy on generative AI.

Turning to the content provided by the non-Russell Group institutions, it is not necessary to perform the same kind of analysis since the approaches to the issues discussed above are very similar. What differentiates these universities is the attention they can give to the issues and the amount of information they provide. Once again, it is necessary to enter the caveat that this research is based on what is publicly available on the institutions’ websites; in some cases, more information may be available on online learning platforms or local password-protected systems.

On this basis, we can distinguish three categories of institution. The first group has information on quite a comprehensive set of policies relating to generative AI and includes two universities from Cluster 2 (Dundee and St Andrews), four from Cluster 3 (Leeds Beckett, Manchester Metropolitan, Teesside and the West of England) and one from Cluster 4 (London Metropolitan). The web pages of these institutions present very similar information to that presented by the Russell Group universities, covering matters such as best study practices, available tools, citation and referencing, and academic-integrity issues.

The second category consists of institutions with more limited publicly available information, including four from Cluster 3 (Abertay Dundee, Bangor, and Canterbury Christ Church) and three from Cluster 4 (Leeds Trinity, Liverpool Hope and Wolverhampton). In the case of these institutions, the focus appears to be on the problems of plagiarism and the consequences for students of failing to observe the rules of academic integrity.

Finally, the third group comprises those with (as far as could be determined) no publicly available documentation. This group includes two from Cluster 2 (Goldsmiths and Sussex), five from Cluster 3 (Nottingham Trent, Plymouth, Queen Margaret, Gloucestershire and Stirling) and three from Cluster 4 (Anglia Ruskin, Southampton and Trinity Saint David).
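The percentages in the cluster comparison follow directly from the counts of institutions with no publicly available information. As a cross-check, a minimal Python sketch of that arithmetic is given here. The variable names are illustrative only, the counts are those stated in the text, and the Russell Group percentage is assumed to be taken over the 22 members in Cluster 2 (3 of 22 rounds to 14%, whereas 3 of 24 would round to 13%). The script also checks that the three documentation categories described above add up to the number of sampled institutions in each cluster.

```python
# Counts stated in the text: institutions sampled per Boliver (2015) cluster,
# and how many of them had no publicly available information on generative AI.
sampled = {"Cluster 2": 4, "Cluster 3": 13, "Cluster 4": 7}
no_info = {"Cluster 2": 2, "Cluster 3": 5, "Cluster 4": 3}

# Russell Group comparison (assumption: the base is the 22 Cluster 2 members).
sampled_rg, no_info_rg = 22, 3


def share(missing: int, total: int) -> int:
    """Percentage of institutions with no public information, rounded to a whole number."""
    return round(100 * missing / total)


shares = {cluster: share(no_info[cluster], sampled[cluster]) for cluster in sampled}
rg_share = share(no_info_rg, sampled_rg)

# Institutions per cluster in each documentation category, as listed in the text.
categories = {
    "comprehensive": {"Cluster 2": 2, "Cluster 3": 4, "Cluster 4": 1},
    "limited": {"Cluster 2": 0, "Cluster 3": 4, "Cluster 4": 3},
    "none": {"Cluster 2": 2, "Cluster 3": 5, "Cluster 4": 3},
}

for cluster in sampled:
    # The three categories together should account for every sampled institution.
    assert sum(cat[cluster] for cat in categories.values()) == sampled[cluster]
    # The "none" category should match the no-information counts used above.
    assert categories["none"][cluster] == no_info[cluster]

print(shares, rg_share)
```

Note that three of seven Cluster 4 institutions is 42.9%, which rounds to 43% rather than 42%.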

Discussion

This study reveals a complex landscape of generative AI policy development in UK universities, with emerging patterns of diffusion and adaptation, particularly influenced by the Russell Group principles. While one might expect AI policies to be integrated into general information policies – promoted by the funding councils in the 1990s (Allen and Wilson, 1996) – there is no evidence to suggest such integration. It remains possible that such information is retained in non-public systems. The varying adaptation of the Russell Group principles highlights the role of institutional context and resources in policy development.

While all Russell Group universities stress AI literacy, the support provided varies. Some, like the University of Birmingham, offer comprehensive frameworks, while others provide more general guidance. This aligns with ‘local rationality’ in policy adaptation, where institutions tailor principles to their specific needs. Non-Russell Group universities exhibit more uneven policy diffusion, reflecting Boliver's (2015) clustering of UK universities. Cluster 2 universities show patterns similar to the Russell Group, whereas Clusters 3 and 4 tend to have less comprehensive policies, suggesting that resource availability and institutional focus influence policy development.

Challenges and opportunities in policy implementation

The key challenges in policy implementation include balancing AI's benefits with academic integrity, redefining plagiarism, and evolving assessment strategies while fostering critical thinking. Equitable AI access is another concern, as disparities in resources may exacerbate existing inequalities in higher education. However, opportunities exist. Many universities adopt collaborative approaches, involving students in policy development and emphasizing AI literacy. This enhances inclusivity and fosters critical thinking skills that are applicable to AI-driven workplaces. A notable example of collaboration is the Generative AI Network (GAIN), managed by the University of Liverpool's Centre for Innovation in Education (n.d.), which facilitates the sharing of policy and best practices. However, the lack of a dedicated website limits access to shared outputs.

University libraries are increasingly central to AI literacy efforts, supporting students in citation practices, plagiarism concerns and ethical AI use. While institutional responsibility for these issues varies, libraries are playing a consistent role, which will likely expand as part of broader information literacy training.

Theoretical implications

The findings align with multiple policy analysis frameworks. Institutional theory (Meyer and Rowan, 1977) is reflected in the comprehensive policies of well-resourced institutions, while advocacy coalition theory (Sabatier and Weible, 2007) is evident in collaborative policymaking processes. Rational choice theory also applies, as universities seek to maximize AI benefits while mitigating academic-integrity risks.

Policy diffusion theory is particularly relevant to the spread of the Russell Group principles beyond its members. While the Russell Group universities have naturally adopted the principles they developed, non-Russell Group institutions appear to be learning from and emulating these policies. The rapid institutional response to generative AI also supports the theory of punctuated equilibrium (Baumgartner et al., 2009), where technological disruption forces significant policy shifts. Generative AI represents such a ‘punctuation’, prompting universities to reassess their assessment practices and ethical guidelines amid broader sectoral challenges, including financial constraints and shifts in student demand.

Implications of the research

This research has the following implications:

Collaboration in policy development is essential. Universities should engage administration, faculty, students, information technology professionals and librarians in policymaking, while inter-university collaboration, such as GAIN, could reduce costs and improve outcomes.

Resource disparities remain a concern, affecting policy development and implementation. Training staff and students, acquiring plagiarism detection tools and adapting assessment methods all require funding, raising equity issues between well-resourced and less-resourced institutions.

Transforming assessment is critical. As AI-generated content becomes harder to distinguish from students' own work, universities may need to place greater emphasis on formal examinations and oral assessments. Developing effective strategies will require additional resources.

University libraries are key to AI literacy, and their role will need to expand to include training students in ethical AI use, citation practices and related skills.

Research practice must adapt to AI's role in postgraduate and faculty research, necessitating standardized guidelines on ethical AI use in research, publication preparation and data analysis. Staff training will be crucial.

The global implications are evident, as universities worldwide face similar AI-driven challenges. Lessons from the Russell Group and GAIN could inform international policy development.

Limitations

The primary limitation of this research is the rapid evolution of the field. As institutions gain experience, policies and documentation will continue to develop. However, this study offers insight into initial institutional responses and emerging common approaches.

Conclusion

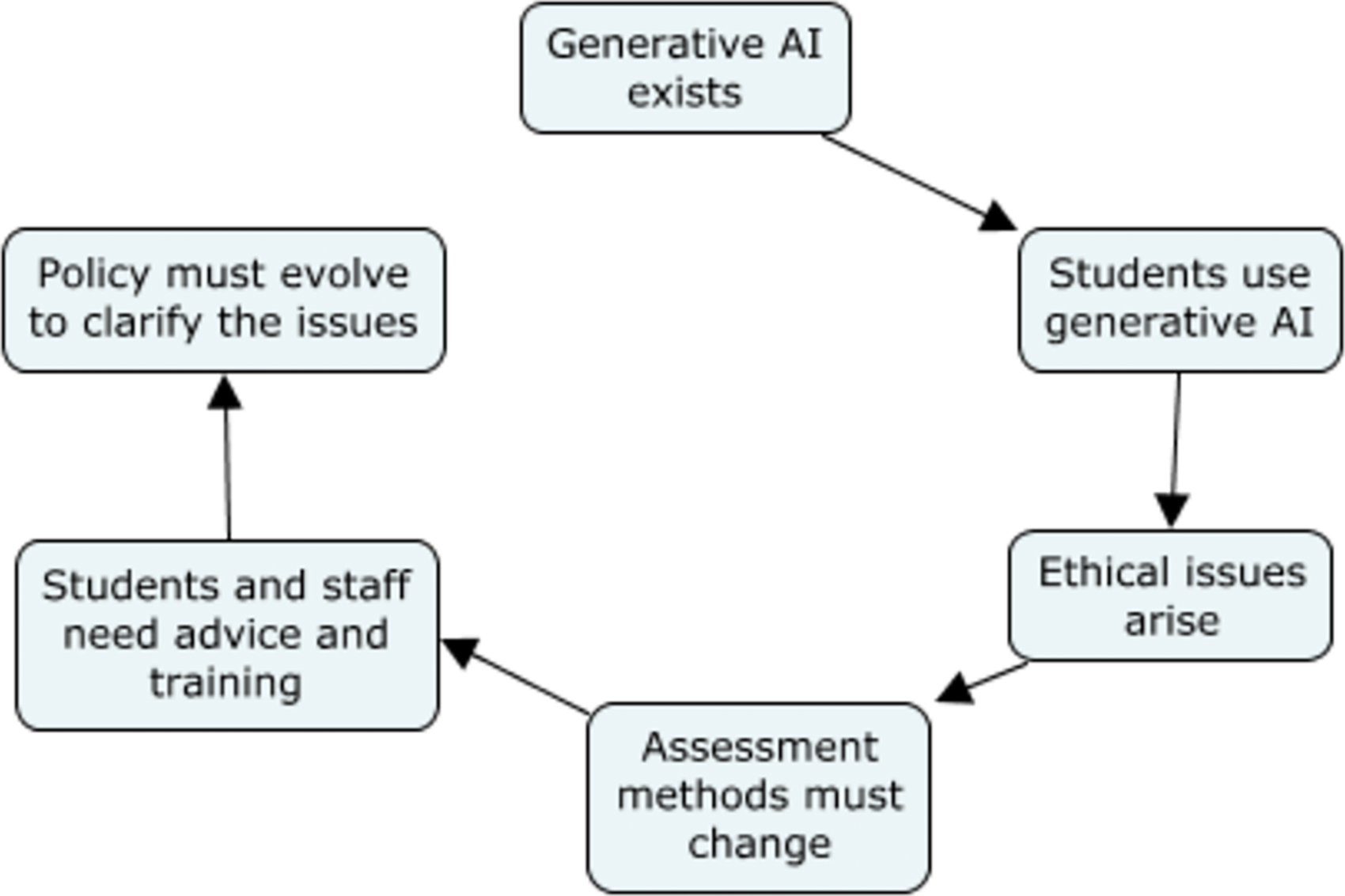

The rapid emergence and adoption of generative AI technologies have prompted a swift response from UK universities, particularly those of the Russell Group. This research shows that the diffusion of the Russell Group principles has significantly influenced the development of policies in member institutions. These universities have addressed the challenges and opportunities presented by generative AI by focusing on key areas, such as academic integrity, assessment strategies, and the promotion of AI literacy among students and staff. From the priority given to these different areas, a causal chain can be suggested (see Figure 1).

The findings also reveal a disparity in policy development and implementation across different university clusters. While Russell Group universities and some of Boliver’s (2015) Cluster 2 universities have developed comprehensive policies, many in Clusters 3 and 4 appear to lag behind, possibly because of resource constraints or differing institutional priorities. This disparity raises concerns about equity in AI education and preparedness across UK higher education institutions.

The study highlights the dynamic nature of policy development in this rapidly evolving field. Universities are grappling with complex issues, such as redefining plagiarism, developing assessment strategies that can cope with AI, and balancing the benefits of AI with maintaining academic standards. The collaborative approach adopted by many institutions, involving various stakeholders including students, suggests a shift towards more adaptive and inclusive policymaking processes.

Looking ahead, several key areas merit further investigation:

Long-term impacts: future research should explore the long-term effects of these policies on student learning outcomes, academic integrity and pedagogical practices. Such research could provide valuable insights into the effectiveness of current policies.

Comparative analysis: international comparative studies could give a broader perspective on global trends in AI policy in higher education and help to identify best practices and novel approaches.

Policy development capacity: further research into how resource levels affect universities' ability to develop and implement policies could help institutions to cope with generative AI.

Evolving technologies: AI technologies will continue to develop and, consequently, policies will need continual revision. Further research on these developments and on institutions' coping strategies will be needed.

Ethical considerations: future studies should consider the ethical implications of AI use, including privacy, data protection and bias in AI systems.

AI policy in UK higher education is in a state of flux. Significant progress has been made among Russell Group universities, but there remains a need for continued research and the standardization of approaches. As institutions gain more experience with these technologies, we can anticipate the evolution and further refinement of policies to ensure that UK universities can maintain the integrity and quality of higher education while benefiting from generative AI.

Figure 1. The logic of policy development.

Footnotes

It is rather surprising and somewhat disturbing that so many of the documents on university web pages lack a publication date. In some cases, there is a copyright symbol but no date attached. A date is not legally required, but it sets the timeline for the copyright and is a reminder of when renewal may be due. It also tells the reader how up to date the information may be. Almost from the beginning of the World Wide Web, guidance (e.g., from the World Wide Web Consortium) has suggested that dating pages is essential.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.