Abstract

Background

Artificial intelligence (AI) technologies are transforming medicine and healthcare. Scholars and practitioners have debated the philosophical, ethical, legal, and regulatory implications of medical AI, and empirical research on stakeholders’ knowledge, attitudes, and practices has started to emerge. This study is a systematic review of published empirical studies of medical AI ethics with the goal of mapping the main approaches, findings, and limitations of this scholarship to inform future practice.

Methods

We searched seven databases for published peer-reviewed empirical studies on medical AI ethics and evaluated them in terms of types of technologies studied, geographic locations, stakeholders involved, research methods used, ethical principles studied, and major findings.

Findings

Thirty-six studies were included (published 2013–2022). They typically fell into one of three categories: exploratory studies of stakeholder knowledge and attitudes toward medical AI, theory-building studies testing hypotheses regarding factors contributing to stakeholders’ acceptance of medical AI, and studies identifying and correcting bias in medical AI.

Interpretation

There is a disconnect between the high-level ethical principles and guidelines developed by ethicists and the empirical research on the topic. Ethicists need to be embedded alongside AI developers, clinicians, patients, and scholars of innovation and technology adoption in studying medical AI ethics.

Introduction

Artificial intelligence (AI) has shown exceptional promise in transforming healthcare, shifting physician responsibilities, enhancing patient-centered care to provide earlier and more accurate diagnoses, and optimizing workflow and administrative tasks.1,2 Yet the introduction of medical AI into practice has also raised a wave of ethical concerns that ought to be identified and addressed. 3 Global and regional regulations, including recent guidelines issued by the WHO 4 and the European Union, 5 provide guidance on how to keep pace with rapid change and respond to growing concerns about the moral impact of medical AI on healthcare delivery.

Legal regulations are informed by theoretical/conceptual ethics models that serve to facilitate decision-making when it comes to applying medical AI in education, practice, and policy. 6 Medical AI ethics has been supported by the four principles of biomedical ethics (autonomy, beneficence, nonmaleficence, and justice) and ethical values related to fairness, safety, transparency, privacy, responsibility, and trust.3,7–9 These principles and values inform legal regulations, organizational standards, and ongoing policies in terms of how to focus on and mitigate the ethical challenges that impede the use of medical AI.

However, ethical frameworks that support medical AI legal regulations and guidelines are often developed without dialogue between ethicists, developers, clinicians, and community members.6,10 This may mean that regulations used to inform best practices may not align with community members’ experiences as medical AI users. The ethical concerns identified by policymakers and scholars may not be the same ones identified by actual patients, providers, and developers. This creates a disconnect whereby ethical decision-making tools may not be perceived as applicable to actual users of medical AI. Regulative practices are often informed by higher-level ethical theories and principles rather than examining actual knowledge and practices of medical AI ethics with real-world examples.9,11,12 Identifying empirical work on the knowledge, attitudes, and practices of medical AI ethics can lead to mechanisms that may assist educators, researchers, and ethicists in clarifying and addressing perceived ethical challenges.13,14 Medical AI interactions involve patients, families, and healthcare providers, and it is, therefore, critical to understand individuals’ awareness of the ethical issues that may impact their AI utilization in health delivery and determine how these results can inform medical AI development and research. In identifying what stakeholders (e.g. patients, clinicians, and developers) see as the ethical risks of medical AI, we can initiate dialogue and develop practice guidelines, organizational standards, and legal regulations to determine ethically informed AI interventions that are relevant and applicable to practice.

The goal of this systematic review is to assess and bridge the gap between the theoretical frameworks of AI ethics and the actual stated worries and concerns of present and future stakeholders of medical AI. To that end, we provide an overview of the existing empirical studies on medical AI ethics in terms of their main approaches, findings, and limitations. Findings from our study can inform medical education and lead to novel strategies to translate medical AI ethics into public discourse and legislation guidance.

Method

Eligibility criteria

This study was guided by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA). 15 We examined empirical studies in medical AI ethics published in journals and conference proceedings from 2000 onward. Specifically, a study had to meet six inclusion criteria: (1) be an empirical study, (2) be published in English, (3) be peer-reviewed, (4) be published in a journal or conference proceeding, (5) be published between January 2000 and June 2022, and (6) be a study of medical AI in which ethics was a substantial part of the investigation. Empirical studies referred to qualitative or quantitative studies driven by scientific observation or experimentation. By substantial, we meant that either the study was solely focused on ethics or at least one research question or hypothesis was focused on ethics. Studies that mentioned ethics only in passing, as an afterthought in the discussion of findings, were excluded.

Data sources and search strategy

The articles for this study were retrieved in June 2022. We searched a total of seven databases in the fields of information science, medicine, sociology, and communication: IEEE Xplore, PubMed/MEDLINE, CINAHL Complete, JSTOR, Sociological Abstracts (ProQuest), ACM Digital Library, and Communication Source (EBSCOhost). Based on the exploratory literature search, we used three categories of search terms: medical terms (medical, health, healthcare, and medicine), AI (machine learning, algorithm, AI, robotics, pattern recognition, automated problem solving, and natural language), and ethics (ethics, bias, bioethics, ethical, bioethical, autonomy, beneficence, nonmaleficence, justice, and responsibility).
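The three term categories above can be combined into a single Boolean search string. The sketch below is illustrative only: the term lists come from the text, but the source does not report the exact query syntax, so joining terms within a category with OR and joining the categories with AND is an assumption, as are the helper names `or_group` and `build_query`. Individual databases also differ in their field tags and quoting rules.

```python
# Search-term categories as listed in the text.
MEDICAL_TERMS = ["medical", "health", "healthcare", "medicine"]
AI_TERMS = [
    "machine learning", "algorithm", "AI", "robotics",
    "pattern recognition", "automated problem solving", "natural language",
]
ETHICS_TERMS = [
    "ethics", "bias", "bioethics", "ethical", "bioethical",
    "autonomy", "beneficence", "nonmaleficence", "justice", "responsibility",
]


def or_group(terms):
    """Join the terms of one category with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*categories):
    """AND together one OR-group per category (assumed combination logic)."""
    return " AND ".join(or_group(c) for c in categories)


query = build_query(MEDICAL_TERMS, AI_TERMS, ETHICS_TERMS)
print(query)
```

Under this assumed logic, an abstract is retrieved only if it matches at least one term from each of the three categories.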

We limited the initial search to abstracts and obtained a total of 5854 articles from the seven databases. First, the second author checked all initial articles for duplicates and removed 708 duplicated titles. Second, articles that were written in a language other than English, unpublished (such as those from preprint repositories), or published before 2000 were removed (n = 372). Next, the abstracts of all remaining articles were reviewed, and pieces that were nonempirical or unrelated to medical AI ethics were removed (n = 4722), yielding 52 valid abstracts. The first and second authors both read the 52 full articles and identified 30 articles that met the inclusion criteria. The third author served as a tie-breaker when an agreement was not reached. The references of these 30 articles were further reviewed, and an additional six articles meeting the inclusion criteria were identified. In the end, 36 articles were retained for this systematic literature review. Figure 1 shows the article inclusion flow diagram.

Figure 1. Systematic review flow diagram illustrating the literature selection process.
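The screening counts reported in the selection process can be checked with simple arithmetic. The following sketch uses only the numbers stated in the text; the variable names and exclusion labels are ours.

```python
# Counts as reported in the text; labels are shorthand for the stated reasons.
records_identified = 5854
exclusions = [
    ("duplicate titles", 708),
    ("non-English, unpublished, or pre-2000", 372),
    ("nonempirical or unrelated abstracts", 4722),
]

remaining = records_identified
for reason, n_removed in exclusions:
    remaining -= n_removed
    print(f"after removing {reason}: {remaining}")

full_text_reviewed = remaining       # abstracts carried to full-text review
met_criteria = 30                    # full texts meeting the inclusion criteria
from_reference_lists = 6             # added from the references of those 30
included = met_criteria + from_reference_lists

assert full_text_reviewed == 52
assert included == 36
```

The counts are internally consistent: 5854 records reduce to 52 full-text articles, and 30 retained articles plus six from reference lists give the 36 included studies.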

Coding procedure

In reviewing these articles, we coded the demographic information of each article, including the year of publication, journal/conference of publication, and discipline of that journal/conference. Next, we coded the stakeholders examined in each study, including AI developers, clinicians, patients, patient family members, and other stakeholders. The type of medical AI technology studied in each article was also coded. Ethical principles studied were another variable coded. We started with the five principles used as search terms in data collection: autonomy, beneficence, nonmaleficence, justice, and responsibility. We coded whether each of the studies examined any of these principles. At the same time, we allowed additional principles to emerge from the data. Finally, we coded the research method used and the main findings of each article. The first two authors coded these articles independently and compared their results. They discussed any discrepancies to reach a consensus. When an agreement was not reached, the third author served as an arbitrator. See Table 1 for a summary of these studies.

Table 1. Overview of empirical studies on medical AI ethics.

Abbreviations: AI, artificial intelligence; CDSSs, clinical decision support systems; CEOs, chief executive officers; CNN, convolutional neural network; CT, computed tomography; EHR, electronic health record; EMR, electronic medical records; HHR, home healthcare robot; ICU, intensive care unit; LVEF, left ventricular ejection fraction; ML, machine learning; MRI, magnetic resonance imaging; NLP, natural language processing.
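The double-coding step in the coding procedure, where two authors code independently, compare results, and refer unresolved disagreements to a third author, can be sketched as a comparison of two coders' code sets. Everything in this example is hypothetical: the study identifiers, the codes, and the function name `find_discrepancies` are illustrative, and the source does not report an agreement statistic.

```python
# Hypothetical codes: study id -> ethical principles each coder marked as examined.
coder_a = {
    "S01": {"autonomy", "justice"},
    "S02": {"privacy"},
    "S03": {"responsibility", "beneficence"},
}
coder_b = {
    "S01": {"autonomy", "justice"},
    "S02": {"privacy", "trust"},
    "S03": {"responsibility"},
}


def find_discrepancies(a, b):
    """Return {study_id: principles marked by only one coder} where the coders differ."""
    flagged = {}
    for study in sorted(a.keys() | b.keys()):
        only_one_coder = a.get(study, set()) ^ b.get(study, set())
        if only_one_coder:
            flagged[study] = only_one_coder
    return flagged


discrepancies = find_discrepancies(coder_a, coder_b)
for study, principles in discrepancies.items():
    # These items would go to discussion, then to the arbitrator if unresolved.
    print(study, sorted(principles))
```

Studies with no flagged principles need no discussion; the rest form the agenda for the consensus meeting.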

Results

The 36 studies included in this systematic review were published between 2013 and 2022, with most published in or after 2020 (n = 27), reflecting the topic's novelty. In terms of disciplines, the largest number of articles were published in journals of medicine (n = 18), followed by informatics (n = 11), science (n = 2), public health (n = 2), medical ethics (n = 2), and others (n = 1). The three main types of AI technologies studied in these articles were risk prediction,16–21 diagnosis and screening,22–33 and intelligent assistive technology.20,34–38

Almost all studies were conducted based on data from Western countries, with the most from the USA. Other countries included Canada,39,40 the UK,22,34,41,42 France,27,38,43 Denmark,36,44 Germany, 33 Spain, 35 and Australia. 28 One study collected data from both North America and Asia, 45 and another study included data from three European countries (Switzerland, Germany, and Italy). 20 One study used data from nine Western countries. 34 Two non-Western countries/regions appeared in our collection of studies: sub-Saharan Africa 18 and India.19,46

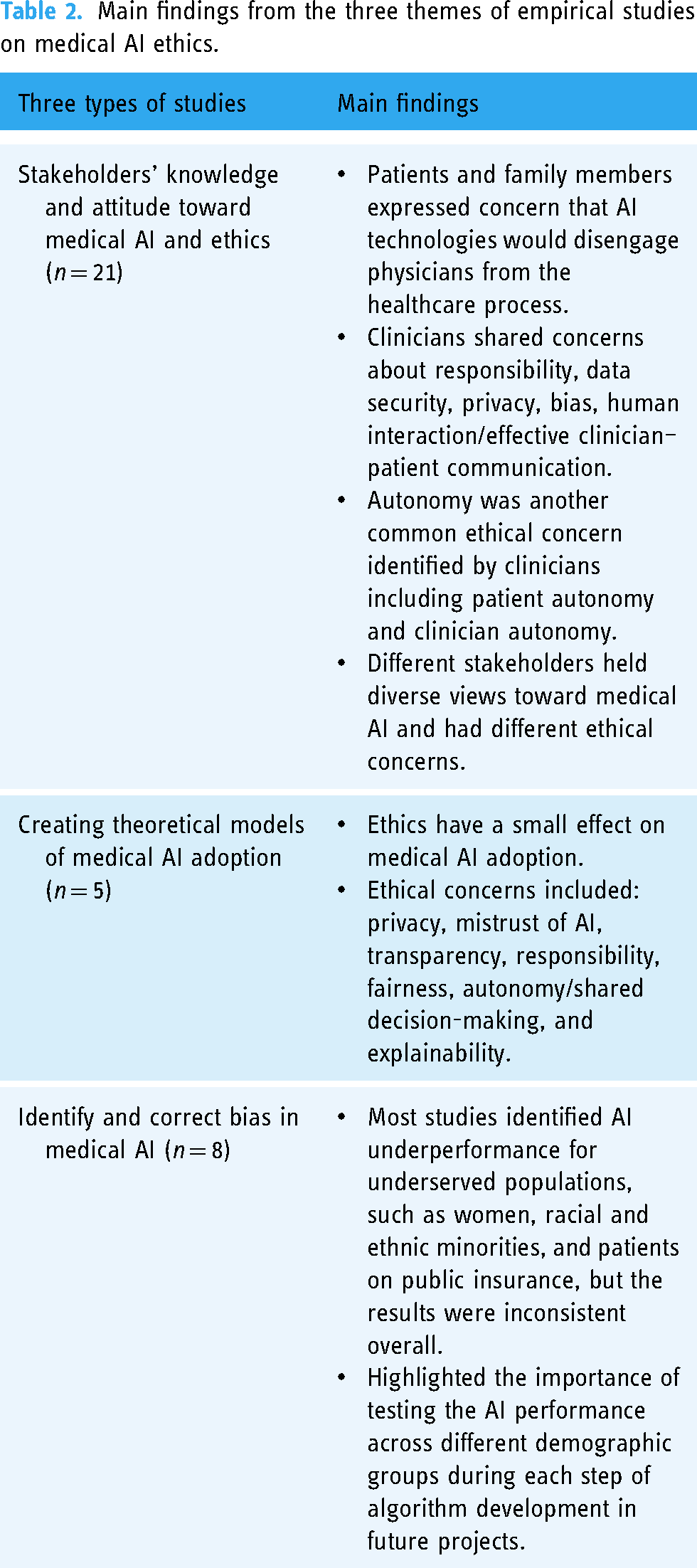

The included studies were categorized into three themes: (1) exploratory studies of stakeholder knowledge and attitudes toward medical AI and ethics, (2) theory-building studies testing hypotheses regarding factors contributing to stakeholders’ acceptance or adoption of medical AI, and (3) studies measuring and correcting biases in medical AI. The main findings of each category are summarized in Table 2.

Table 2. Main findings from the three themes of empirical studies on medical AI ethics.

Studies on stakeholder knowledge and attitude toward medical AI and ethics (n = 21)

The largest group of empirical studies on medical AI ethics explored different stakeholders’ attitudes toward medical AI and their ethical concerns through an inductive approach. Very few of the studies20,39 were focused exclusively on ethics. The remaining studies included ethics as one dimension in stakeholder attitudes toward medical AI in general and often framed ethics broadly in terms of concerns, barriers, and potential challenges. Participant stakeholders included clinicians (such as physicians, radiologists, and dentists), patients, patient family members, and occasionally researchers and governmental, NGO, or industry leaders. Data collection methods included administered surveys, focus groups, or interviews. Approaches to data analysis were informed by either descriptive statistics (surveys) or qualitative data analysis methods (focus groups, interviews), including grounded theory and thematic analysis to identify patterns and themes associated with stakeholders’ ethical concerns.

Overall, stakeholders in these studies were most concerned with the ethical impacts of medical AI on human connection, autonomy, privacy, data security, and fairness. Clinicians generally reported more ethical concerns related to the use of medical AI than patients. Human connection, predominantly in the form of the clinician–patient relationship, was the ethical issue most frequently identified by both clinicians and patients. Many studies showed that clinicians and patients believed that personal touch and human relationships facilitated ethical communication, especially when explaining medical diagnoses and treatment options. Stakeholders’ stated concerns were that medical AI would depersonalize medicine, eliminate the need for human interaction, and reduce investment in patient-centered care.

Six studies examined the attitudes of patients and their family members toward medical AI ethics. Surveys and interviews relying primarily on open-ended questions were administered to examine patients’ or family members’ attitudes toward medical AI and the perceived benefits and risks of incorporating AI. These studies typically used different medical scenarios to understand stakeholder perspectives, including advance care planning, 40 skin cancer diagnosis, 32 radiology, 31 and COVID-19 hospitalization. 35 In general, patients and family members expressed moderate support for medical AI while identifying ethics as a major barrier to accepting it. Major ethical concerns included responsibility,22,35 privacy,32,38,40 data security, 22 bias and accuracy, 22 and lack of human interactions.22,31,32,35 Patients and family members also expressed concern that AI technologies would disengage physicians from the healthcare process, demonstrating a preference for physician involvement in diagnosis, decision-making, and clinical communication. These results demonstrate that both clinicians and patients have stated ethical concerns about the impacts of medical AI on trustworthy relationships and on the transparent and informed communication that facilitates healthcare decisions.

Ten articles studied how clinicians, including physicians, psychiatrists, radiologists, radiographers, and other experts, perceived the use of AI technology in medicine and their ethical concerns. Like patients and family members, these professionals shared concerns about responsibility,28,29,43 data security,20,27,28 privacy,18,27,28 bias,18,45,47 and human interaction/effective clinician–patient communication.20,36,41,47 In addition, clinicians were also concerned with equality, 36 equity, 45 safety and accountability, 41 stigma and discrimination, 18 and affordability (distributive justice). 20 These findings may demonstrate that clinicians have a deeper understanding of the ways in which medical AI may have disproportionate impacts on social justice. This may mean that ethical concerns related to social justice, discrimination, and equity arise from greater knowledge of the uses and impacts of medical AI in practice. Autonomy was another common ethical concern identified by clinicians, including patient autonomy and clinician autonomy. Medical professionals often worried that AI would infringe on their professional autonomy in making decisions or even threaten the future of their specialty. 42 Patient autonomy referred to whether patients were mentally capable of giving informed consent to utilize medical AI in their care. 20 Occasionally, the term autonomy was used without any definition or explanation. 36

Five studies examined multiple stakeholders simultaneously.33,34,39,43,46 They often sought to provide an overview of the public opinion toward medical AI. Stakeholders studied included patients, caregivers, clinicians, academic researchers, industry leaders, and journalists. These studies typically found that different stakeholders held diverse views toward medical AI and had varied ethical concerns. For instance, a study of multiple stakeholders in Canada found that patients were more concerned with fairness in resource allocation, while all stakeholders considered privacy a major concern. 39 Similarly, a study of multiple stakeholders in India showed that patients were more concerned with ethical challenges such as privacy, confidentiality, ethical violations, security, and data ownership. 46 In contrast, CEOs were mostly concerned with the human resource challenges in developing and deploying AI-enabled telepsychiatry, and psychiatrists were more concerned with technical challenges.

Studies creating theoretical models of medical AI adoption (n = 5)

Five studies used surveys or experiments to test theoretically driven hypotheses that explained or predicted the adoption of medical AI. Theories such as technology acceptance theories 37 and the value-based AI acceptance model48,49 were used to create hypotheses. These studies often utilized fictional vignettes to elicit participants’ attitudes toward medical AI and their behavioral intentions. They found that several factors, including social influence, 37 technological concerns,37,48,50 ethical concerns,37,44,48–50 regulatory concerns, 49 and situational factors such as the nature of the illness and tasks,44,48 were likely to affect the perceived risks of medical AI, preference, and/or intention to adopt. While ethical concerns influenced the acceptance of medical AI or adoption intention, their effect was smaller than that of other factors, such as social influence 37 and technology concerns. 48 Among the ethical concerns examined, privacy,37,48 mistrust of AI, 49 transparency, 48 responsibility, 44 fairness (i.e. free from discrimination), 44 autonomy/shared decision-making, 50 and explainability 44 were found to be significantly or nearly significantly related to adoption intention or preference. Overall, these studies suggested that ethics has a small effect on medical AI adoption, and few ethical issues stood out as more important than others.

Studies identifying and correcting bias in medical AI (n = 8)

The last category of studies focused on the ethical principle of fairness by measuring the biases in medical AI algorithms, and some experimented with ways of correcting such biases. Even though this group of studies did not collect data from AI researchers per se, their research provided insights into ethical issues that AI researchers perceive as most important to address and mitigate. This line of research also provides empirical validation for the presence of AI biases. Recent research has shown that AI-based predictions and diagnoses were often plagued with biases against certain demographic groups, such as women and racial and ethnic minorities, and such biases were likely to perpetuate existing health disparities. 51 Systematic biases and missing data in training data sets (such as electronic health records (EHRs) and insurance claims) contributed to the disparity in AI performance among different demographic groups. Eight articles in the sample tested the performance of AI prediction and diagnosis algorithms across race/ethnicity, sex, and insurance status by comparing the error rates of diagnoses among different groups or comparing predictions with actual patient data. Most studies identified AI underperformance for underserved populations, such as women,16,19,23 racial and ethnic minorities,16,21,23,26 and patients on public insurance.16,23 However, not all studies found underdiagnosis for underserved populations. For instance, one study showed that a convolutional neural network (CNN) model trained using data from non-Hispanic White patients performed equally well for other racial and ethnic groups in ECG analysis. 24 Another study using EHR data to predict COVID-19 hospital admission, ICU admission, ventilation use, and death showed that their algorithms performed marginally better for female and Latinx patients but worse for older patients and male patients. 17 These results demonstrated the unpredictability in the relationships among demographic variables, biophysical data, and algorithm performance and highlighted the importance of testing the AI performance across different demographic groups during each step of algorithm development in future projects.
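The group-wise comparison described above, in which error rates of diagnoses are compared across demographic groups, can be illustrated with a minimal sketch. The records below are invented for illustration and do not come from any reviewed study. The choice of the false-negative rate (a proxy for underdiagnosis) as the metric and the function name `false_negative_rate_by_group` are also assumptions; the reviewed studies used a variety of metrics.

```python
from collections import defaultdict

# Hypothetical records: (group, true_label, predicted_label); 1 = condition present.
records = [
    ("female", 1, 0), ("female", 1, 1), ("female", 0, 0), ("female", 1, 0),
    ("male", 1, 1), ("male", 1, 1), ("male", 0, 0), ("male", 1, 0),
]


def false_negative_rate_by_group(rows):
    """Share of truly positive cases the model missed, computed per group."""
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, truth, prediction in rows:
        if truth == 1:
            positives[group] += 1
            if prediction == 0:
                missed[group] += 1
    return {group: missed[group] / positives[group] for group in positives}


rates = false_negative_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
for group, rate in rates.items():
    print(f"{group}: false-negative rate = {rate:.2f}")
print(f"largest gap between groups = {gap:.2f}")
```

A nonzero gap on its own does not establish bias; in practice the comparison would be run on held-out data with enough cases per group to estimate uncertainty.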

Other

Two studies did not fit into any of the previous three groups. One was a content analysis of media coverage of the application of AI in medical screening and diagnostics. It found that media coverage was overwhelmingly positive, with a very small percentage (<10%) incorporating the ethical, legal, and social implications of such technologies. 25 The other outlier was an examination of the reporting of demographic information in 23 publicly available chest radiograph data sets, as earlier research suggested that unbalanced training data sets might lead to bias in predictions. 52 The study found that while most data sets reported demographic data regarding age and sex, few reported race, ethnicity, and insurance status. Among those data sets that did report such data, certain groups (such as female patients) were often underrepresented. Such underreporting was likely to contribute to the bias in the algorithms trained using such data sets.

Discussion

Our systematic literature review of published empirical studies of medical AI ethics identified the three main approaches taken in this line of research and the major findings in each approach. The largest group of studies examines the knowledge and attitudes of medical AI ethics across various stakeholders. These studies demonstrate that the primary concern shared among patients and clinicians is that medical AI will reduce human interaction and trustworthy healthcare communication. Although patients showed concerns with other ethical issues, clinicians identified principles of autonomy and justice as major considerations in the application of medical AI. Clinicians’ shared concerns about their autonomous decision-making in patient care and their responsibility for obtaining fully informed consent from patients are important findings to incorporate into medical education. Additionally, clinicians voiced concerns with respect to equity, discrimination, stigma, and distributive justice. These reported findings indicate that providers are concerned about patients’ access to and affordability of healthcare and how this may influence treatment options and care plans.

Another group of studies utilized theoretical models to examine the adoption of medical AI technologies. These empirical findings demonstrate that ethical issues have a significant yet small effect on acceptance and adoption of medical AI in practice. The last group of studies was mathematical and technical in nature, aiming to identify and correct biases in medical AI predictions related to race, gender, and insurance status. These findings showed that although medical AI biases may perpetuate existing health disparities, there may be novel ways to reduce and mitigate these biases in medical AI algorithms.

Our review of these 36 studies identified a few concerns or limitations in the current academic research on medical AI ethics: a disconnect between high-level ethical principles and the concerns reported by different stakeholders, a lack of research that focuses on ethics of medical AI per se, and a need for consistent definition and operationalization of ethics-related concepts and terminologies. In this section, we will further discuss these limitations and call for truly interdisciplinary collaboration in medical AI ethics research.

Overall, our study shows the urgent need to connect the high-level ethical principles and guidelines developed by ethicists and international agencies with the empirical research on the topic. We found no empirical research on AI developers’ knowledge, attitudes, and practices regarding AI ethics other than their own research to repair AI biases, which is connected to the ethical principles of fairness and justice. We can only infer that bias and fairness are concerns of AI developers, as evidenced by the large number of studies aimed at measuring and correcting biases in algorithms. The absence of AI developers as integral stakeholders in empirical research could also be caused by the fact that this systematic review focused on published empirical studies in peer-reviewed journals, whereas studies of AI developers could potentially be found in the grey literature or technical publications. Nevertheless, future research needs to include AI developers as participants to understand their knowledge and practices of medical AI ethics and their ability to connect fears of bias with principles of justice, fairness, and social responsibility. Understanding the perspectives of AI developers can provide insights into the potential ethical challenges in the development of medical AI and foster interdisciplinary collaborations to create truly ethical medical AI.

The systematic review also reveals several additional limitations in this body of literature. First, researchers’ primary aims are not focused on ethics. Instead, in qualitative, open-ended interviews and focus group studies, ethics emerged naturally in dialogue with stakeholders, showing its importance in stakeholders’ evaluation of medical AI. In quantitative surveys, ethics is one of the variables measured in addition to technical, social, legal, and regulatory factors. This can be tentatively attributed to the fact that most of these studies have been conducted by medical researchers who want to explore stakeholder opinions about medical AI in their respective subfields. Ethics scholars are surprisingly absent in the research teams conducting empirical studies of medical AI. Only three authors (1.8%) of these studies are affiliated with an ethics-related department or institution.

Second, we observed significant divergence in the definition and measurement of ethics terminology across studies. Researchers seldom define ethics or ethical principles in their research design, administered surveys, or interview guides with participants. Many ethical principles were mentioned in researchers’ analyses without being accompanied by specific conceptual and operational definitions. In interviews and focus group studies, stakeholders (patients or clinicians) mention concerns or challenges of utilizing medical AI technologies that researchers then label as “ethics.” In administered surveys, there is no consistency in the items used to measure different ethical concerns and no efforts to validate the scales used. Most of the existing studies rely on open-ended questions, and only a few studies used scales to quantitatively measure these variables31,38,48–50; however, the scales used were not validated. Researchers sometimes label items as ethics without context, and consequently, it is not clear whether these items were ethical issues, rooted in value conflict between two ethical courses of action, or whether these were more practice-based issues. For instance, a study of home healthcare robot (HHR) adoption measured ethical concerns using three items: HHR would change their behaviors over time, HHR would replace doctors, and HHR would make mistakes. 37 Furthermore, the same study also measured common ethical concerns such as privacy as a separate category. Another study on patients’ perceived risks of AI medical devices measured ethical concerns in three categories: privacy concerns, fairness concerns (social bias), and “mistrust in AI,” and found that only mistrust in AI predicted perceived risks. 49 Sometimes, ethical concerns are intermingled with technical, legal, or regulatory challenges of AI.
The lack of a universal or shared definition of ethics may be the result of limited collaboration with ethicists or a lack of intention to focus on ethical concerns. Without proper definitions or categorizations of ethical issues, researchers may unknowingly confuse ethical issues with practice-based issues that arise from AI utilization. Without context as to what makes these ethical issues (as opposed to development, practice, or legal issues), it is up to readers to make sense of these items and connect them to ethical principles and values in order to develop ethically informed care. Concerns and challenges are often simply organized thematically as ethics, rather than being treated as a comprehensive review of overall risks. This type of analysis may contribute to the dearth of standardized and validated measurement tools to capture stakeholders’ knowledge and attitudes about medical AI and ethics. The lack of validated and common measurement scales prevents the comparison of different studies, reduces the generalizability of their findings, and makes it difficult to distinguish the stated ethical concerns from practice-based or generalized risks of incorporating medical AI into practice.

Our findings highlight the need to foster collaborations between ethicists, AI researchers, medical professionals, and scholars of innovation and technology adoption in studying medical AI ethics. Ethics scholars should inform AI developers, clinicians, and patients through each step of the medical AI pipeline. In other words, ethicists should be members of interdisciplinary and interprofessional research groups. We adopt the idea of “embedded ethics” developed by McLennan et al. (2022) to describe the importance of incorporating ethicists and ethical principles in the development, utilization, and understanding of medical AI during ongoing, interactive conversations. 6 The framework proposed by McLennan and colleagues (2022) asserts that there ought to be reciprocal interactions between ethicists and AI developers: ethicists educate AI developers on ethical principles and guidelines, while AI developers provide feedback to ethicists about feasibility, practicality, and process of development. While McLennan's approach focuses on the collaboration between medical AI developers and ethicists, we argue that it is imperative to broaden this model to include reciprocal dialogue with medical AI users, such as clinicians and patients. Medical AI technology is different from other medical technologies; neither AI developers nor clinicians alone can predict or explain the predictions made by medical AI and explain the results to patients. 44 Consequently, to inform AI development and ethics, there ought to be reciprocal dialogue throughout the entirety of the development and implementation process. 
In turn, we envision three components: (1) medical AI users need to actively participate in AI development conversations along with ethicists and developers, (2) AI developers ought to be included in practice outcomes of medical AI through conversations with patients and clinicians after development, and (3) ethicists need to be involved in the design, development, implementation, and interpretation of medical AI products from development to practice.

In our expanded model, we envision an ongoing and long-term collaboration to inform medical AI utilization and data interpretation. The integration of clinicians and patients into medical AI conversations can contribute to the development of transparent communication regarding informed consent, human interaction, and fairness. Collaborative dialogue between ethicists and clinicians can involve issues related to the decision to use medical AI technologies in the diagnosis and treatments of different types of patients, informed consent, and communication of AI-based diagnoses with patients. Ethicists’ interactions with patients may include mitigating issues related to the protection of informed consent or refusal. Ethicists can play the additional role of mediating the conversation between AI developers, clinicians, and patients to create genuinely open and equitable discussion, especially when patients from historically marginalized groups are involved. Finally, by providing their feedback to ethicists, AI developers, clinicians, and patients can influence organizational ethical guidelines to address reported ethical concerns and obstacles in employing and utilizing medical AI.

Finally, while medical AI has the potential to benefit low- and middle-income countries as well as less developed regions in high-income countries by enabling better public health surveillance and providing more and better healthcare to underserved populations, 4 all except three empirical studies on medical AI ethics were conducted in high-income countries, and most were based in large urban hospital settings. It is imperative to engage stakeholders in low- and middle-income countries and regions in developing and implementing medical AI, recognizing the diversity in stakeholder opinions and the existing digital divide as medical AI becomes a global phenomenon.

Footnotes

Acknowledgments

None.

Contributorship

LT and SF conceptualized the study. JL collected the articles reviewed and performed initial screening. LT and JL conducted the analysis. SF confirmed the analysis. All three authors contributed to the writing of the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

This study is a review of published studies and does not constitute human subject research.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Melbern G. Glasscock Center for Humanities Research Summer Research Fellowship, Texas A&M University, and National Institute of Health, USA (grant number: 3U01AG070112-02S2).

Guarantor

LT, the first author, takes full responsibility for this article.