Abstract

This study examines generative AI integration in Lithuania’s social sciences research and education following ChatGPT’s 2022 release, exploring usage patterns and challenges faced by researchers. Semi-structured interviews were conducted with 13 Vilnius University social sciences faculty members, selected for diverse AI-related expertise. Content analysis examined perceptions, roles and ethical considerations of AI use in academic activities. AI was perceived as valuable for information-seeking and research productivity, but tensions exist between adoption benefits and challenges related to academic integrity, methodological standards and variable output quality. Participants emphasised the need for clearer AI usage norms. Generative AI integration in social science research at Vilnius University presents significant opportunities and challenges. Addressing tensions between effort-saving and competence acquisition, unclear guidelines and AI output reliability is crucial for positive academic AI utilisation. The research highlights the importance of comprehensive guidelines and enhanced digital literacy to take advantage of AI benefits while maintaining academic integrity.

Introduction

Since

The items were analysed to determine which AI system was being discussed, the related area of application, and whether or not a specific problem was dealt with. Overall, the most common topic of interest was generative AI in general (105 items) and within this category, business applications were the most frequently addressed (35 items), followed by education (24), law (13), medicine (4) and science and scientific research (4). The remaining 25 items dealt with a variety of topics, from journalism, through industry and scholarly publishing, to government.
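The breakdown above can be checked with a short tally. The sketch below is illustrative only: the counts are taken from the preceding paragraph, while the variable names are our own assumptions. It confirms that the application areas add up to the 105 items on generative AI in general and computes each area's share.

```python
# Counts copied from the paragraph above; names are illustrative assumptions.
general_ai_items = {
    "business": 35,
    "education": 24,
    "law": 13,
    "medicine": 4,
    "science and scientific research": 4,
    "other topics": 25,
}

# Total number of items on generative AI in general.
total = sum(general_ai_items.values())

# Share of each application area, as a percentage rounded to one decimal.
shares = {area: round(100 * n / total, 1) for area, n in general_ai_items.items()}

for area, pct in shares.items():
    print(f"{area}: {general_ai_items[area]} items ({pct}%)")
print(f"total: {total} items")
```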

The most common individual system discussed was

The majority of news items dealt with generative AI in general terms, noting the problems but also commenting on the potential uses. The impression gained is that generative AI is rapidly gaining acceptance in higher education, as a quarter of the news items were devoted to these issues. Some institutions have banned its use, but most are developing policies to guide the ethical use of these systems and, where appropriate, to incorporate their study into the curriculum. This explains why the majority of research-based news dealt with investigations of higher education issues, such as policy and use by students. Social science research was not prominent in the

A total of 40 items dealt with research and higher education, of which 33 were research-based. Twenty-five of the 33 items were general articles on the use of AI in education, and only eight dealt with specialised disciplines: three on business education, three on nursing, one on law and one on engineering.

As these results suggest, significant implications have arisen in higher education, with universities facing major challenges regarding academic integrity, assessment methods and the fundamental nature of teaching and learning. Researchers also feel a significant impact of AI on their work, both positive (e.g. enabling faster processing of data) and negative (e.g. making it easier to falsify research) [1,2].

Generative AI technologies produce human-like text, visual content and complex problem-solving responses with remarkable sophistication [3]. These technologies use neural networks trained on huge datasets, allowing them to generate contextually appropriate outputs, which, for university students, present opportunities for textual composition, coding and problem-solving while raising complex ethical dilemmas.

Ethical considerations for students are nuanced: while generative AI can function as a powerful research assistant and learning aid, its unrestricted usage raises questions about intellectual ownership, academic honesty and the fundamental purpose of educational assessment [4,5]. Students must balance technological innovation with academic integrity and personal intellectual development.

An article in

Moreover, uneven access to these technologies introduces additional layers of potential research inequality, as some researchers may have greater difficulty in accessing this technology and, consequently, use it less. As shown in van Noorden and Perkel [1], 20% of researchers studying and using AI come from North America, 28% from Asia and around one-third from Europe. According to a European Commission [6] score of AI research and development activity, inequality also exists across Europe: Germany, Spain, France and Italy lead the list, while some countries, Lithuania included, lag behind with almost non-existent scores. In addition, inequality may exist within countries, especially in education, as students with greater technological resources may gain disproportionate advantages in academic performance and skill acquisition [7].

This prompted us to explore how AI is used for research and education in Lithuanian social sciences, which are doubly lagging behind the higher performers. The findings may be of interest to other countries in the same situation. We started with a small exploratory qualitative study at Vilnius University. The research presented in this article examines the use of generative AI systems by the University’s social science researchers from an information behaviour and activity theory perspective, rather than seeking to understand the totality of their interaction with such systems. Having reviewed AI’s role in social research and AI application areas in the scientific research process (see below), we formulated the following research problem: how does Lithuania’s social sciences academic community experience the use of AI in academic activities?

Following the formulated research problem and using the activity theory framework, research questions were formulated as follows:

What are the needs and motives of Lithuanian social science researchers in using AI tools?

How do social science researchers perceive the role and the value of AI tools in the context of their academic and research activities?

What rules and norms are emerging in the Lithuanian social science academic community regarding the use of AI tools?

Literature review

This literature review examines the current state of research on artificial intelligence (AI) use in social science research, structured around three key gaps that justify our qualitative study of Lithuanian social science researchers. Despite the growing popularity of AI tools in academic contexts, there remains a significant scarcity of empirical research examining how social scientists actually adopt, perceive and regulate AI use in their research practices: this was confirmed by our background searches on the subject.

A Google Scholar search using the keywords ‘generative AI’, ‘generative artificial intelligence’ and ‘social sciences’ revealed that, during 2023–2024, only 33 out of 200 publications were related to AI usage in scientific research. Some studies focus on AI applications in educational settings or business sectors, rather than examining researchers’ actual experiences and practices (e.g. Cotton et al. [3] and Chowdhury et al. [8]). One of the articles explored factors in the acceptance of generative AI in higher education, demonstrating that the intention to adopt this new tool correlates positively with personal innovativeness and social influence, but negatively with price value [9]. Some explored AI in higher education in a limited national context (e.g. Hamamra et al. [10]), but did not address research.

Almost half of the relevant articles found were reviews, mainly literature reviews (e.g. Burger et al. [11]; Xu et al. [12]; Lund et al. [13]; Kasneci et al. [14]; Lindgren and Holmström [15]). However, some are based on survey or interview data (e.g. Van Noorden and Perkel [1]; Chubb et al. [16]), analysis of experts’ opinions (e.g. Dwivedi et al. [17]) and even experimentation [18].

The literature surveys were based on either very general or very narrow areas of AI in social science and, when dealing with AI use, focus on advantages and problems that AI brings to social science researchers. We have capitalised on their conclusions in building our own understanding of the problem and formulating our research questions.

Research gap 1: understanding researchers’ needs and motives for AI use

The existing research provides some insight into why researchers turn to AI tools. Van Noorden and Perkel’s [1] large-scale survey of 1600 researchers identified key benefits that may drive AI adoption: faster data processing, computation assistance, programming support and resource saving. For generative AI specifically, researchers cited benefits in writing, editing, translation, text summarization, coding assistance, administrative task acceleration and creative support.

Studies have identified primary areas where AI is being integrated into research workflows: scientific problem identification through literature searches and review preparation [12]; research verification processes including data collection and analysis; and manuscript writing and conclusion formulation [19]. The appeal appears to centre on AI’s ability to eliminate human errors when information is accurate and responsibly processed [20], to produce repeatable results [11] and to analyse large datasets quickly [12].

However, these studies focus on functional benefits for research work in any area rather than deeper motivational factors. Missing from the literature is an understanding of the personal, professional and contextual factors that drive individual researchers to adopt AI tools. We lack insight into how researchers’ disciplinary backgrounds, research methodologies and institutional contexts shape their needs and motivations for AI use. Furthermore, existing research has not examined how these needs and motives might vary across different national academic contexts, particularly in smaller academic communities like that of Lithuania.

Research gap 2: researchers’ perceptions of AI’s role and value

The literature reveals multiple conceptualizations of AI’s role in research. Researchers perceive AI as a tool that influences research productivity and efficiency [16,21]. Some view AI as a communication tool, emphasising the interactive, cyclical nature of researcher-AI collaboration [22], but also as a valuable assistant capable of adding value in different phases of research up to engaging in a dialogue with the researcher [22,41] or the publisher of research journals [13].

Others conceptualise AI as a source of innovation, capable of generating new knowledge and identifying unexplored research areas [8,18]. Xu et al. [12] have recently focused on studies of advancements offered by AI, as ‘these machine-learning models pretrained on vast amounts of text data are increasingly capable of simulating human-like responses and behaviors, offering opportunities to test theories and hypotheses about human behavior at great scale and speed’ (p. 1108).

This variety suggests that AI functions as a

As in the case of Research Gap 1, despite identifying these multiple roles, the literature lacks depth in understanding how researchers actually experience and make sense of AI’s value in their specific research contexts. Most studies present AI’s roles as discrete categories rather than examining how perceptions evolve through actual use. Missing is an understanding of how researchers negotiate tensions between AI’s potential benefits and risks, how they integrate AI into their existing research practices and how their perceptions change over time through experience.

Moreover, the literature focuses primarily on technical capabilities rather than examining AI’s perceived impact on research quality, creativity and scholarly identity. We lack qualitative insights into how researchers in social sciences – disciplines that traditionally emphasise human interpretation and contextual understanding – reconcile AI use with their epistemological commitments.

Research gap 3: emerging rules and norms in academic communities

The literature acknowledges concerns about AI use in research, including the proliferation of unreliable information, introduction of mistakes and biases, facilitation of fraud and plagiarism, increased inequity and environmental impact [1]. Researchers recognise the need for critical evaluation of AI-generated information and the importance of maintaining researcher expertise in conceptualising and interpreting AI outputs [23].

Some work has begun to address ethical frameworks for AI use in research. Victor et al. [24] identify knowledge and ethical awareness requirements for social work researchers, emphasising understanding of AI construction, potential and limitations, ethical responsibilities and continuous learning. The ethical foundations of advice given to students and researchers have been discussed by T.D. Wilson [25], who has also reviewed the impact of the guidance given by the Russell Group of research-oriented universities in the UK [26]. Wang et al. [27] conceptualise

However, the literature provides limited insight into how academic communities are actually developing and implementing norms around AI use. Most discussions remain at the prescriptive level, focusing on what researchers should do rather than examining what they actually do. We lack understanding of how informal rules, practices and norms emerge through community interaction and negotiation.

Particularly missing is research on how national academic communities develop context-specific approaches to AI governance. The literature has not examined how factors such as academic culture, disciplinary traditions, institutional policies and peer networks shape the emergence of AI-related norms. The Lithuanian context, with its specific academic traditions and relatively small research community, provides an opportunity to examine these processes in a bounded setting.

Conclusion: methodological approach and comparative context

The gaps identified above point to the need for qualitative, ethnographic approaches that can capture the nuanced ways researchers experience and make sense of AI use. While quantitative surveys like van Noorden and Perkel’s [1] provide broad patterns, they cannot capture the contextual factors, meaning-making processes and community dynamics that shape AI adoption and regulation. The literature reviews reveal common functional use and summarise the problems identified by social science researchers, but miss particularities of researchers’ activities within specific contexts.

Recent ethnographic studies in information science and higher education provide models for this approach. Research examining technology adoption in academic settings has demonstrated the value of qualitative methods for understanding how new tools are integrated into existing practices and how communities develop norms around their use. Our study lies within this area and is a thematic analysis of qualitative interviews with social science researchers, seeking their direct experience and attitudes on AI use in research, rather than in education.

The Lithuanian context is particularly valuable for examining these questions, as the academic community is small enough to observe community-level norm development while being diverse enough to capture varied approaches to AI adoption. As noted, Lithuanian social scientists are increasingly interested in AI, but their actual experiences remain largely undocumented beyond abstract philosophical discussions or legal considerations [28,29].

Theoretical framework: activity theory

Cultural-historical activity theory was created by Lev Vygotsky in the 1930s to explain, psychologically, the learning process of human beings. At present, one of the most influential developments is that of Engeström [30], who developed several iterations of his model that can be used to explore different activity systems, whether individual or organisational.

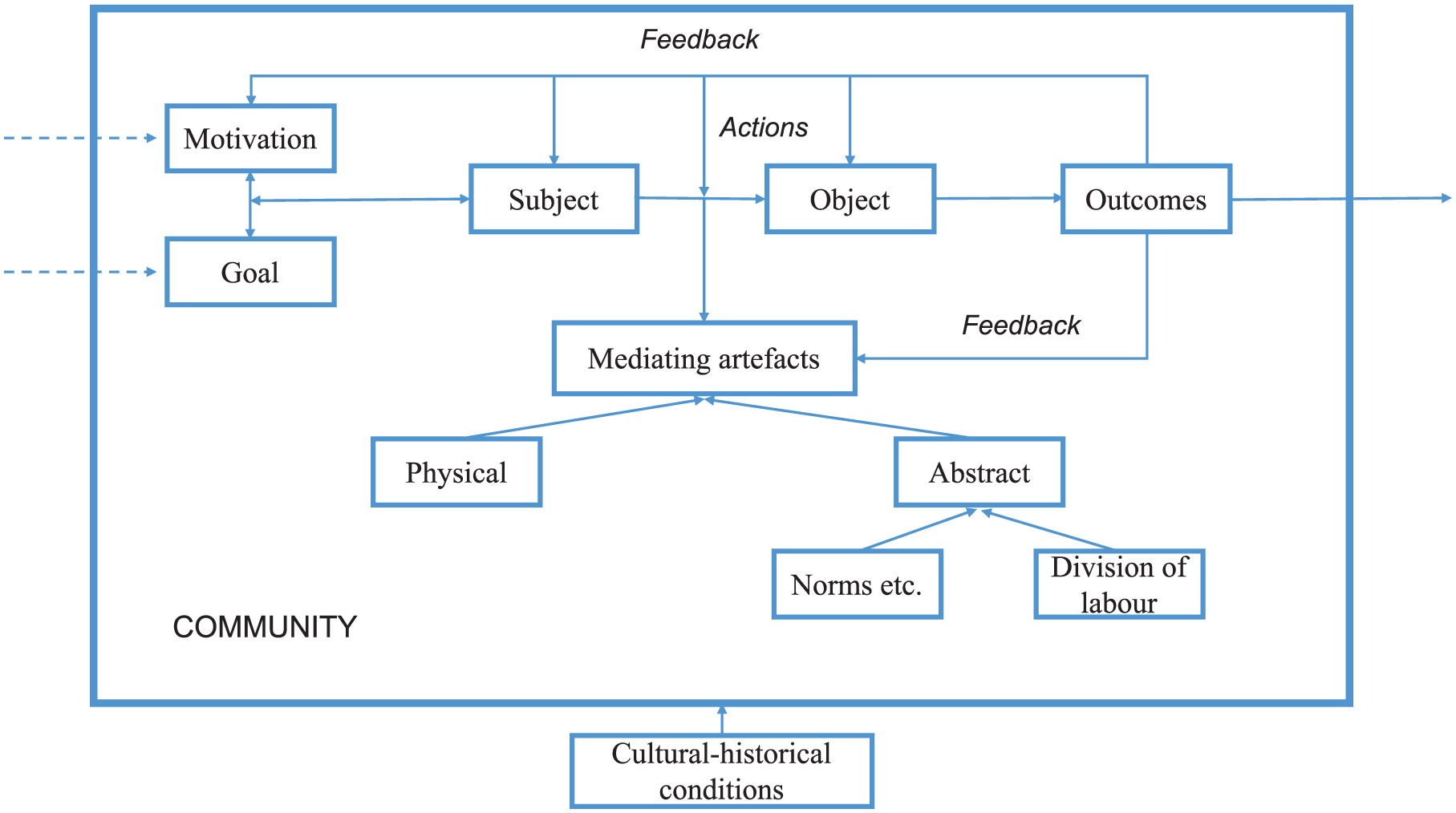

The activity system demonstrates how the subject working with its object is connected to it indirectly through the use of instruments (tools or signs) used to change the object and also through compliance with the norms and rules, including the division of labour, created by the community to which the subject belongs. Thus, the outcome of the activity depends not only on the aims or motives of the subject, which instigate the subject’s activity, but more on the instruments used and the community’s influence. The models created by Engeström do not depict an important element of the activity – namely, the cultural and historical conditions that have formed the activity system and its elements. They are also seen as static rather than dynamic presentations of the activity. Therefore, other researchers have tried to amend the model; one such amendment is presented in Figure 1.

Figure 1. A process model of activity theory [31].

Figure 1 uses the original term ‘mediating artefacts’ instead of instruments. Mediating artefacts can be physical or abstract. The abstract ones include not only language, but also norms and division of labour. The subject using them acts upon the object to achieve the outcomes. The feedback loop is shown to change the motivations and goals of the subject, as well as the mediating artefacts used. The community to which the subject belongs, the external environment of the moment and the cultural-historical conditions are present in this diagram as influences outside the activity system.

The most important feature of any activity system, tensions and contradictions, does not appear in any of the diagrams, as these can occur within any of the elements, between different elements, between the internal and external conditions of the activity system and even between different systems. The concept of contradictions within an activity emerges from the distinction between an individual action and the totality of actions that form an activity. Engeström [32] identified a ‘clash between individual actions and the total activity system’ (p. 66). The tensions are visible symptoms of deep contradictions that can cause either a breakdown of the system or its further development. Thus, contradictions help to improve and develop activity only when they are noticed and reflected upon with the purpose of finding a way to resolve the contradiction or diminish tension.

For our purposes, we use the model in Figure 1 and interpret social researchers as subjects working on one or several objects in one or several activity systems (e.g. education and research), using innovative mediating artefacts – namely, generative artificial intelligence systems – as instruments to help achieve the outcomes. The use of this tool is regulated by the norms and rules emerging in their communities, depends on the external environment, or rather on other activity systems existing in their universities (e.g. management and administration), in the Lithuanian academic environment, and elsewhere. The activity system is also affected by the contradictions that led to the introduction of a new instrument and the tensions that it causes in other elements of the system. We have used this framework to formulate our research questions, develop the interview schedule and analyse the data.

Method

When constructing a research design based on activity theory, it is important to review the infrastructure and contextual situation of the studied organisation.

To achieve the stated objective, we employed a qualitative research method, interviewing academic staff working in the social sciences faculties at Vilnius University. This approach aimed to capture artificial intelligence-related needs and uncertainties as closely as possible to the research and educational contexts, which helps to address the research questions.

Participant recruitment and sampling strategy

Thirteen social scientists were selected to participate in the study. They conduct research in various areas, such as organisational data and information management, information and data security, legal regulation of information and communication, digital humanities, computational linguistics, digital heritage and cultural studies, history and political and cultural sociology. This purposive sampling strategy ensured the inclusion of participants with significant technical competence and experience in technology-intensive areas of social science. Our purpose was to ensure diversity of social science disciplines and diversity in the use and understanding of AI systems; these criteria were used to recruit participants, who were approached personally. Recruitment combined convenience and snowball sampling: we first approached colleagues known to us and then followed their recommendations. The sample also varied in academic position, age and sex, although these were not the primary features sought.

Interview design and data collection

The semi-structured interview consisted of three question blocks corresponding to the main elements of activity theory:

Block 1: Identified the AI tools used during scientific research, the motivation for AI use, objectives and AI’s role in the research process.

Block 2: Explored the areas of AI use during scientific research, AI benefits and limitations.

Block 3: Analysed the applied rules and guidelines that define AI integration into the scientific research process and the need for rules and guidelines to ensure ethical AI use.

The interview schedule was piloted with two respondents, resulting in some slight changes to question wording.

The interviews were conducted remotely using Teams and recorded with the participants’ consent. The transcribed interview texts were then analysed using the content analysis method.

As our interview structure was based on the research questions, the concepts of activity theory and findings from previous research, we looked for repeated patterns related to our research questions and the main theoretical concepts of activity theory. We stopped collecting interview data when theoretical saturation was reached, that is, when we could identify repeated patterns in the data related to the theoretical concepts [33], such as expressed motives, areas and tasks of research, modes of AI use and norms guiding the use of AI systems in research activity.

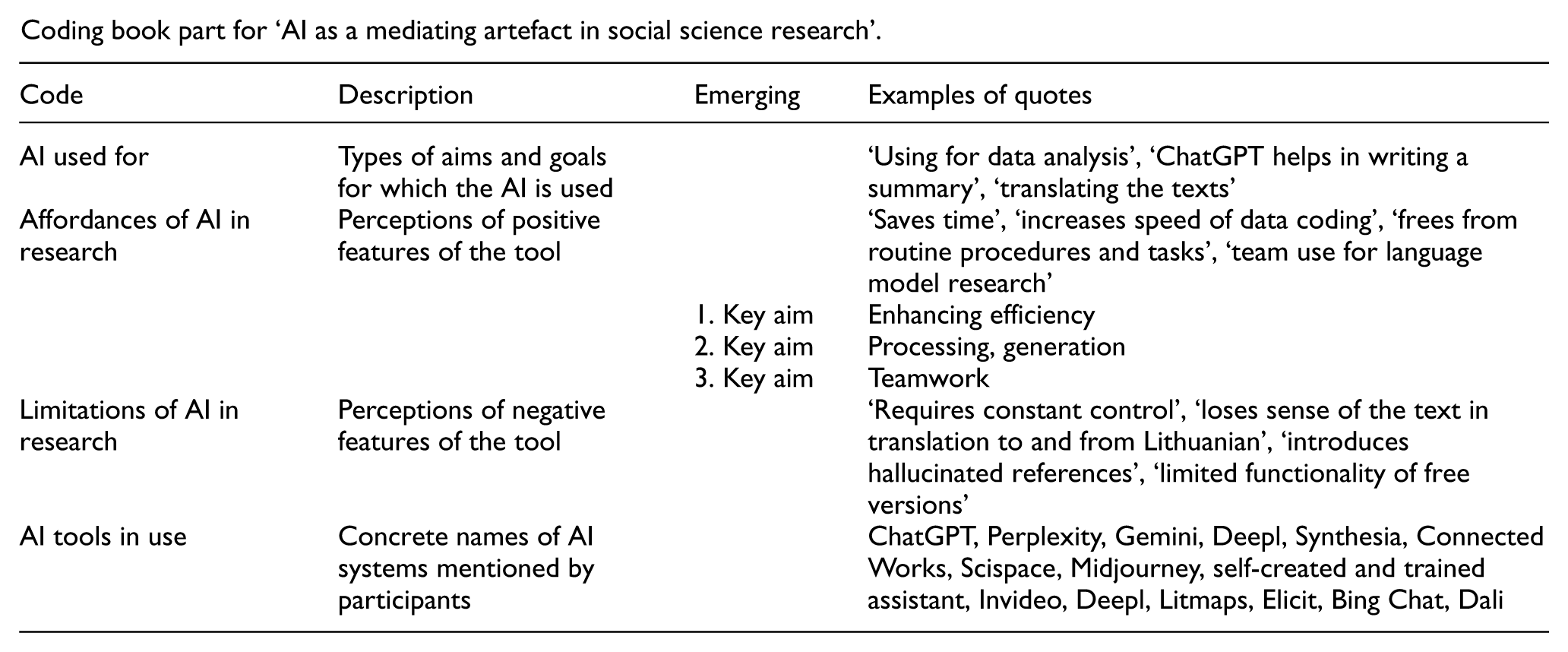

Data analysis and coding

The coding scheme was derived from the theoretical framework, which provided the main themes, but remained open to allow new categories to emerge during the analysis. We identified the main themes in relation to the research problem and emphasised the mediating artefact (in this case, AI), looking for the aims of its use, the variety of its applications and the norms and rules guiding its application. The main themes were broken into sub-themes as these emerged in the coding process. For example, aims of AI usage were divided into three types (see Appendix 1, which shows a fragment of the code book); perceptions of AI as a mediating artefact included the affordances and shortcomings of AI systems; and norms and rules included attitudes about competence, both as expressed by individual researchers and as perceived demands of their community. The second stage involved the identification of tensions and contradictions emerging from the data. Two researchers interpreted and coded the data. They reviewed each other’s coding results and found that the codes mainly coincided, with no major discrepancies apart from some differences between more general and more specific codes (e.g. ‘outcome of AI use’ and ‘reduced time for literature analysis’). The researchers revised the few instances where they differed to reach a common interpretation. Interview data were anonymized, and summarised information is presented according to the research questions. The quotes used in the text were selected as typical examples from several similar utterances.
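The comparison of the two researchers' codes described above was carried out by mutual review, not by computing a statistic. For readers who wish to quantify such agreement, the following minimal sketch shows one common option, simple percent agreement together with Cohen's kappa. It is an assumption on our part rather than the procedure used in this study, and the code labels in the example are hypothetical and are not drawn from the interview data.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of segments to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of segments")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement between two coders corrected for chance."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    # Chance agreement: probability that both coders pick the same code
    # if each assigns codes independently at their own observed rates.
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two coders to eight interview segments.
coder_a = ["motive", "motive", "tool", "norm", "tool", "norm", "motive", "tool"]
coder_b = ["motive", "motive", "tool", "norm", "tool", "tool", "motive", "tool"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"percent agreement: {agreement:.3f}, Cohen's kappa: {kappa:.3f}")
```

Kappa is lower than raw agreement because it discounts matches that would be expected by chance. With a hierarchical code book, differences between a general code and one of its more specific sub-codes would need to be reconciled before such a comparison is meaningful.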

Results

The results of the study are presented in terms of the elements of activity theory discussed earlier. We refer to our participants as respondents R1–R13.

Cultural and historical background

To contextualise the qualitative research findings, it is essential to examine the cultural and historical framework of Vilnius University. Founded in the 16th century, Vilnius University is one of the region’s foremost academic institutions. Throughout its history, the university has been distinguished by its strong liberal traditions and significant achievements in various fields, including astronomy, engineering, mathematics and medicine.

Key milestones in the university’s technological development include:

1963: Acquisition of the first electronic computer and the establishment of the VU Information Technology Application Centre (now the Information Technology Service Centre).

1970: Introduction of programming in social science curricula.

1993: Launching of the first electronic catalogue of the University Library, marking the beginning of its digital transformation.

Currently, advanced digital technologies are both studied and utilised across social sciences and humanities disciplines.

The library has expanded its digital services significantly, contributing to open access repositories, implementing cultural heritage digitisation programmes and developing innovative approaches to research evaluation and information literacy training [34, 35].

The COVID-19 pandemic catalysed substantial changes, accelerating the adoption of information and communication technologies for research collaboration and teaching across all disciplines. Contemporary social science researchers and lecturers utilise diverse computing and mobile devices for:

Internet-based data collection

Telecommunications

Quantitative and qualitative data analysis

Digital object creation and investigation.

In response to these developments, the university has implemented a comprehensive Virtual Learning Environment for course delivery and administrative functions. This initiative has enhanced technological adoption, digital literacy, creative collaboration and student-lecturer interaction (https://www.vu.lt/it/epaslaugos).

This technological infrastructure and adaptive organisational culture, characterised by established protocols for innovation adoption, now provide a foundation for the integration of generative AI systems. The Faculty of Mathematics and Informatics established the first AI laboratory in 2020 [36]. Since then, discussions about AI in research and education have been regularly featured in VU news and podcasts (https://naujienos.vu.lt/tag/dirbtinis-intelektas/), with significant relevance to the social sciences.

Doctoral students can attend AI courses through the Artificial Intelligence Doctoral Academy (https://www.vu.lt/studijos/doktoranturos-studijos/aktualijoareinvited/dirbtinio-intelekto-akademijos-mokymai). In addition, the Faculty of Law has experimented with AI ‘knowledge twins’ (or avatars) in the learning process [37].

On 18 June 2024, the Senate of Vilnius University approved the ‘VU Guidelines for AI Usage’. These guidelines provide definitions of AI and generative AI, affirming the benefits of using AI tools by lecturers, researchers and students. They also specify user responsibilities, recommend ethical representations of AI usage and provide suggestions for data security and citations for AI-generated content. However, it is important to note that these guidelines mainly address education, while research recommendations are limited to adhering to academic ethics norms and publishers’ guidelines [38].

Motives for using generative AI

According to activity theory, the motive is a critical element that encourages the application of AI capabilities in the scientific research process.

Our exploration into the uses of AI has identified several key aims among respondents:

Enhancing efficiency: Participants used AI tools as working instruments, aiding in research and education, personal learning and competence development.

Team experience with AI: Some respondents have indicated that their teams have been utilising AI for 10 years, while others have started more recently (from one to several years ago). A few participants are still experimenting with different tools without consistent regular use.

Idea generation and information processing: Research participants specifically employ AI as an initial source for scientific ideas, data searches and information processing. The initial collection and processing of information through AI allow scientists to manage their research time more effectively, enabling them to focus on creative and interpretative tasks.

For example, one participant mentioned:

But it may also be useful in more creative ways, such as:

In their research activities, participants clearly delineate tasks that AI can perform versus those requiring human intervention:

The most common tasks assigned to AI include:

Searching for information sources and literature

Translation

Editing

Many respondents have a long history of using AI. Some utilise this technology for more complex tasks, such as generating ideas and analysing data. Specifically, in linguistics, tasks often involve several steps, including recognising speech patterns and simplifying text. Translation and editing are particularly prevalent for writing in non-native languages.

Several respondents also mentioned using AI to enhance the quality of ‘other invisible work’, such as:

writing e-mails;

preparing summaries for administrative purposes, such as report descriptions; and

standard tasks that are typically perceived as tedious.

One respondent elaborated further:

In summary, the primary motive for using AI in research is to save effort and time by alleviating the burden of routine tasks and facilitating assistance with tasks that would otherwise require external help (e.g. translation and editing).

Generative AI as a research tool

Respondents mentioned a diverse range of AI tools, including:

ChatGPT

Synthesia

Invideo

Midjourney

DeepL

DALL-E

Perplexity

Gemini (previously Bard)

Litmaps

Elicit

Bing Chat

Connected Works

Scispace

One self-created research assistant

One respondent identified as many as six AI tools they have used or tested, while two participants had never used any AI tools for work-related tasks. The remaining respondents named between one and three AI tools that they regularly employ for various research tasks.

When integrating AI into the research process, an interaction emerges between the researcher and the AI, with AI serving as a helpful instrument or mediating artefact. This interaction primarily occurs during the preparatory work stage of scientific research, including problem identification and verification. Respondents noted that AI is utilised for literature searches and reviews in the process of generating research questions.

One respondent noted:

Importantly, researchers only use AI as an initial search source and do not limit themselves to the content generated by AI. They will often extend their search to additional databases and resources. For instance:

AI also plays a critical role in preparing literature reviews. According to one participant:

In this context, the researcher-AI interaction shapes the AI’s role within the scientific research process. Researchers perceive AI as an assistant, taking on the responsibilities of a ‘research assistant’, or even an ‘inexperienced research assistant’. As respondents said:

However, researchers have identified certain limitations associated with AI in the literature analysis and summarisation stages. Participants noted that AI-generated content often lacks authenticity and originality, stating:

A significant limitation highlighted by respondents is that AI’s processing of large datasets can eliminate crucial contextual information, which is essential for studying social science subjects. Respondents noted:

Researchers also emphasised the importance of the emotional dimension in social research, particularly when formulating problems and analysing data – an aspect that AI as a mediating artefact struggles to interpret. According to participants, traditional data processing tools, such as SPSS (Statistical Package for the Social Sciences), remain their primary working instruments:

Social science researchers underscored various methodological challenges encountered when collecting and processing large datasets for content analysis-related research. One noted:

In summary, the results indicate that AI use in the scientific research process positively affects research efficiency. By employing AI as a mediating artefact, researchers enhance their data search and processing capabilities. However, scientists also observe that the application of AI within the research process presents limitations, notably that AI cannot fully grasp contextual nuances and the intricate characteristics of human behaviour, raising new methodological questions in social science research.

These challenges are vital to ensuring the reliability and reproducibility of research results. Thus, we can conclude that AI in the research process serves as a mediating artefact that not only enhances a researcher’s technical capabilities but also introduces new methodological considerations.

Rules and norms

To comprehensively analyse AI applications in the sociological research process, evaluating researchers’ attitudes towards AI regulation is essential. According to social science researchers, AI democratisation is currently underway (i.e. tools are becoming accessible to a broader audience beyond experts), so that AI technologies are available to skilled users and novices alike. Interview data revealed that researchers utilise AI capabilities primarily through experimentation.

However, respondents indicated a lack of comprehensive computer and digital literacy competencies, which affects their ability to fully exploit AI’s potential. Notably, the understanding of AI usage ethics emerged as another important competency:

There is a pronounced need for ethical rules, particularly in pedagogical activities. Researchers feel it is crucial to provide students with information about the ethical application of AI in academic work:

It is important to emphasise that researchers directly encounter AI use regulations when submitting publications to scientific journals. The significance of applying these rules revolves around adherence to academic ethics principles and ensuring the validity of research data; however, researchers’ understanding of how these rules apply to AI remains unclear.

Some respondents were unaware of any guidelines developed within their academic unit or at Vilnius University, while others knew of their existence and character. A few had heard of EU documents and encountered certain ethical limitations within the AI tools. Regardless of their awareness of the guidelines, each respondent expressed that the rules for using AI tools should adhere to the same general ethical norms applied in research, such as:

avoidance of plagiarism;

proper attribution of authorship;

integrity in data processing and application; and

protection and security.

Nevertheless, the majority expressed a need for guidelines pertaining to the ethical use of AI that are specific to their context.

Community and division of labour

In the interviews, direct use of AI tools within research teams was mentioned only once:

Several respondents implied that AI is a shared concern among researchers in their teams, collectively testing some of the systems, using the plural pronoun ‘we’:

Some respondents speculated on how AI could assist or hinder their team efforts:

One respondent envisioned a collaborative approach, detailing the labour division between researchers from different areas:

More frequently, AI assumed the role of a collaborator, taking over tasks previously managed by researchers. However, it has also replaced roles traditionally filled by other professionals, for example, translators or editors. As one participant remarked:

Most respondents had

Some respondents indicated that collaborative use might depend on the study area and discipline; in some cases, it may work well, while in others, it may not.

In addition, it emerged that rules and norms regarding AI use are determined by the university community through the establishment of working parties and committees focused on addressing ethical and other relevant issues. These topics are also discussed within various faculties and individual research groups. Wider communities have formed due to collective concerns regarding the use of AI in publishing and among international bodies, which also involve researchers in establishing their guidelines.

Division of labour occurs within the working parties and committees established to tackle potential AI-related challenges. Representatives from various sectors of the university community participate in these discussions. For example, student representatives may advocate for a clear understanding of how they might employ generative AI in their work and how to avoid potential plagiarism charges, while staff representatives may raise similar concerns and emphasise the need for guidance on identifying AI-generated plagiarism and responding to it appropriately.

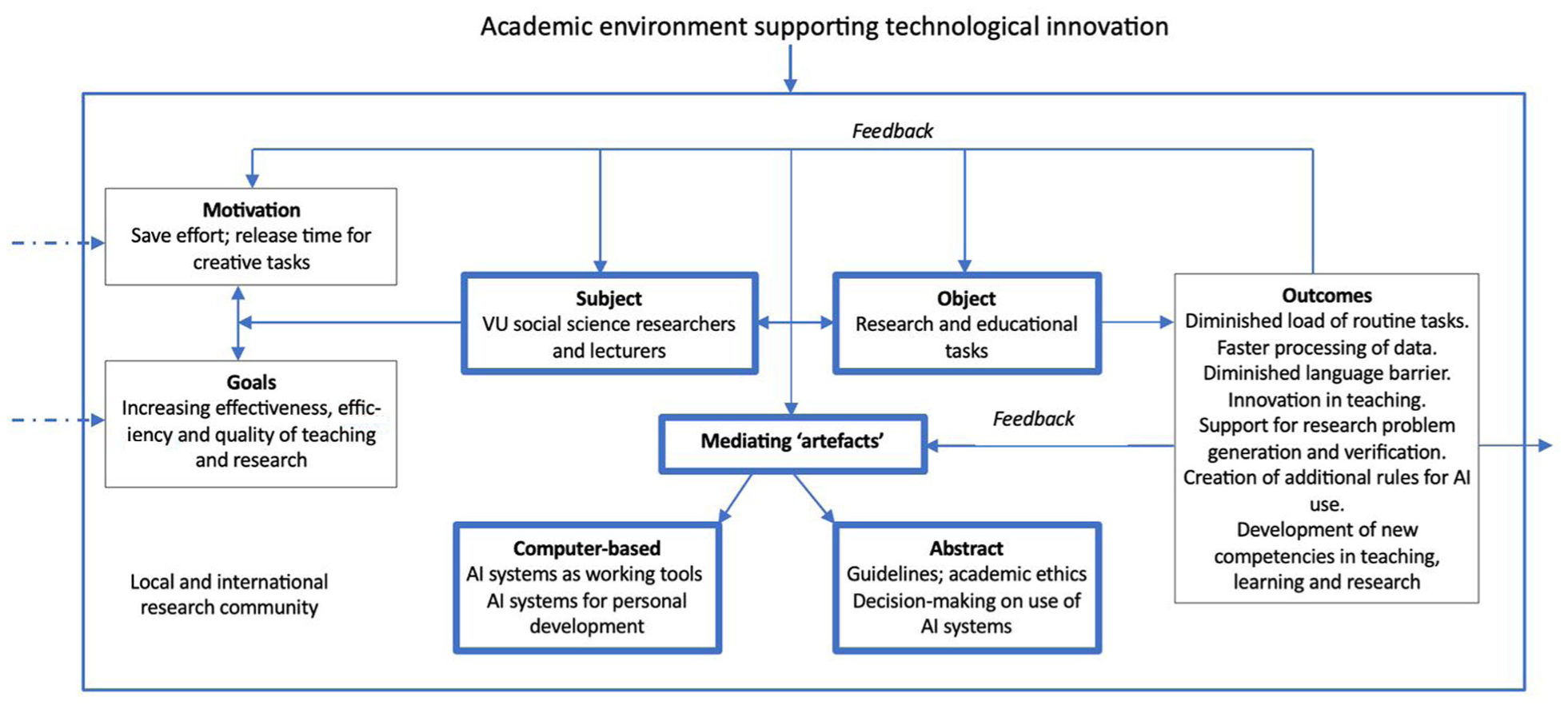

Discussion and conclusions

Activity theory provides a new perspective on generative AI adoption, framing it as a human behaviour issue rather than merely a technological one. This framework illuminates various aspects of research work, including motivation, community roles, guidelines and objectives, which often receive less attention in discussions about AI integration. Figure 2 is a development of Figure 1, with specific examples of different constituents of the activity system under study.

Figure 2. Research activity system with the focus on AI as mediating artefact, developed on the process model of Figure 1.

We have identified several

One of the tensions within the research activity system relates to researchers’ overall workload, which is shaped by external demands stemming from both the academic environment and the expectations of research communities. The need to balance teaching, administrative responsibilities and other tasks with the production of high-quality research encourages the adoption of instruments – particularly information technologies – that reduce the time and effort required to complete repetitive or technical research activities. These perceived efficiencies are among the most appealing promises of AI use. Our analysis indicates that generative AI can enhance research processes and information-seeking behaviour, especially for routine or laborious tasks. Researchers primarily utilise AI at two key stages: generating research questions via literature searches and verifying these questions through data collection.

However, the introduction of this new mediating artefact also generates fresh tensions within the system. While it improves efficiency, it is accompanied by a sense of distrust due to the current limitations of AI technologies and the discomfort associated with delegating critical thinking and creative tasks to machines. Respondents’ emphasis on improving efficiency echoes concerns around productivity highlighted by Chubb et al. [16] and Liebrenz et al. [19]. The central tension here lies between the subject (the researcher) and AI as a working tool: researchers wish to benefit from AI’s support without undermining their own expertise or sense of agency. Consequently, AI is transforming researchers’ information-seeking behaviour, with many blending AI-generated results with traditional database searches. Typically, researchers rely on AI for initial exploration but independently validate the information obtained.

This tension is further intensified by concerns regarding the overall quality, accuracy, trustworthiness and originality of AI outputs. Similar concerns have been raised by Christou [23] and Victor et al. [24]. As participants noted, current AI systems often omit vital contextual information, necessitating further refinement. Consequently, some researchers prioritise traditional methods for higher-quality analysis. In addition, inconsistencies in AI output, depending on the version or system used, can result in unreliable findings. These issues highlight the tension between the goals of rigorous research and the limitations of AI-generated outputs.

Another significant tension pertains to the competence and expertise of researchers. While AI is increasingly adopted as a research tool, a clear divide remains: some researchers confidently create and train AI assistants, while others are hesitant or inexperienced, even in routine tasks. This disparity may reflect discipline-specific trends, as suggested by Van Noorden and Perkel [1], but our findings indicate that such differences can also exist within the same field (e.g. linguistics).

The widespread desire for AI literacy training among participants reflects this skills gap and indicates a tension between the rapid proliferation and increasing functionality of AI systems and the lack of institutional support to help researchers manage and master these developments. Enhanced digital and AI literacy is essential to maximise the benefits of generative AI, yet many researchers rely on trial-and-error approaches. Wang et al. [27] have also identified AI literacy as crucial for the critical selection and evaluation of AI systems. Researchers, therefore, face the dual challenge of pursuing time-saving strategies while also needing to acquire the skills necessary for effective use, often through inadequate means.

Participants also use generative AI for creating educational tasks, managing administrative duties and supporting personal development, which coincides with other findings (as in Chowdhury et al. [8], Hamamra et al. [10] and T.D. Wilson [25]). In these areas, some report more confident use than in research. Nonetheless, tensions persist here as well, particularly in reconciling the goals of efficiency and quality with the uncertainty surrounding AI’s role in assessing the originality of student work and research outputs. This results in competence-related tensions – between AI as a functional tool and the abstract evaluative tools required for its use, as well as between the purposes of using AI and the norms of ethical academic conduct.

With regard to the latter, our respondents report that unclear and inconsistent norms across academic communities complicate the use of generative AI. Although some institutional guidelines exist, researchers’ awareness of them is limited, resulting in a disconnect between practice and regulation. This issue is compounded by broader pressures within the academic environment for researchers to comply with standards related to data protection, participant safety, honesty and integrity – standards not yet fully integrated with emerging AI-related policies. Dean [39] notes that ‘Proposing and adopting policy…can take considerable amounts of time to wind through the university governance process’, leading many researchers to adopt a cautious stance due to uncertainty over ethical guidelines.

Two key

The second contradiction lies in the research community’s aspiration for universal recognition of AI’s value for research, which is undermined by inequalities in access and expertise in the same community. These inequalities manifest through all investigated issues and contribute to differing perceptions of AI’s utility. Further research is needed to investigate the nature and origins of these disparities, which must be understood before effective solutions can be developed.

Limitations of the research

While this study provides valuable insights into the use of generative AI by social science researchers at Vilnius University, it has several limitations. The research is based on a relatively small sample of 13 interviews, which may not fully capture the diversity of perspectives across the university. Moreover, as the study focuses on a single institution, the findings may not be generalisable to other academic contexts or other countries. To some extent we could identify similar results in the articles by other authors from other countries and institutions, but this does not diminish the bias of sampling and the chosen case. The study also relies on self-reported data, which may be subject to bias or incomplete responses. In addition, the research reflects a particular moment in time, and given the rapid development of generative AI, usage patterns and attitudes may already have shifted – particularly in light of the growing availability of new systems and researchers’ expanding experience. These limitations indicate a need for further research involving larger sample sizes, cross-institutional comparisons and longitudinal designs to track the evolving role of AI in academic research.

Further research

As outlined in the Introduction, this exploratory qualitative study has uncovered a range of significant tensions and contradictions within the research activity system involving AI use. The data reveal disparities among researchers, suggesting unequal access to and competence with AI tools – particularly in relation to professional versions and advanced functionalities. It would be worthwhile to investigate how such inequalities correlate with research fields, general IT competence, workload levels or demographic variables. Addressing these questions would require a larger-scale survey of the Lithuanian research community, and we consider this small-scale study a step towards it.

Another pressing area for investigation is the development of guidelines that support researchers’ integrity in using AI, rather than focusing solely on ethical use by students or in assessment processes. Gaining a deeper understanding of researchers’ concerns in this domain and exploring inclusive strategies for involving them in the development of such guidelines may be of interest to social scientists across different academic contexts.

Appendix 1

Coding book part for ‘AI as a mediating artefact in social science research’.

| Code | Description | Emerging | Examples of quotes |
|---|---|---|---|
| AI used for | Types of aims and goals for which the AI is used | | ‘Using for data analysis’, ‘ChatGPT helps in writing a summary’, ‘translating the texts’ |
| Affordances of AI in research | Perceptions of positive features of the tool | | ‘Saves time’, ‘increases speed of data coding’, ‘frees from routine procedures and tasks’, ‘team use for language model research’ |
| | | 1. Key aim: Enhancing efficiency | |
| Limitations of AI in research | Perceptions of negative features of the tool | | ‘Requires constant control’, ‘loses sense of the text in translation to and from Lithuanian’, ‘introduces hallucinated references’, ‘limited functionality of free versions’ |
| AI tools in use | Concrete names of AI systems mentioned by participants | | ChatGPT, Perplexity, Gemini, DeepL, Synthesia, Connected Works, Scispace, Midjourney, self-created and trained assistant, Invideo, Litmaps, Elicit, Bing Chat, Dali |

Ethical considerations

To ensure ethical research practices, the study obtained informed consent from all participants and maintained their confidentiality in the presentation of the findings according to the procedures of the VU Joint Committee on Research Ethics in Social Sciences. The researchers also examined Vilnius University’s internal documents (reports, information bulletins and AI usage regulations) to illuminate the general context of technological innovation implementation and analyse the approved regulations for AI use in scientific and teaching/learning activities.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.