Abstract

Fellowships are competitive training posts, often in a subspecialty area. We performed a quality assessment of potential interviewer bias in the selection of anaesthesia Fellows. After research locality approval, we analysed interview scores for all Fellowship applications to our department over six years. Panel interviewers took part in a structured interview process, asking a series of standardised questions to rate applicants. Total applicant ratings were analysed using a mixed model with crossed random effects for applicants and interviewers. A total of 94 applicants were interviewed by 27 panel members, with between two and four panel members per interview, giving a total of 329 applicant ratings. The random effect of applicants accounted for 45.8% of total variance in ratings (95% confidence interval (CI) for the intraclass correlation (ICC) 35.8%–57.2%), while interviewer effects accounted for 13.4% of total variance (95% CI for ICC 5.3%–30.0%). After analysing multiple applicant and interviewer factors, we found no evidence of bias for most potential sources. After adjusting for interviewer training programme, applicants from other training programmes were rated a mean of 1.87 points lower than Australian and New Zealand College of Anaesthetists (ANZCA) applicants (95% CI 0.62–3.12, P = 0.003) and 1.84 points lower than Royal College of Anaesthetists (RCoA) applicants (95% CI 0.37–3.32, P = 0.014). After adjusting for applicant gender, female clinicians rated applicants a mean of 1.12 points higher than male clinicians (95% CI 0.19–2.06, P = 0.019). The observed differences in interview scores between male and female clinicians, and the lower scores of applicants from programmes other than ANZCA and RCoA, were small and require confirmation in independent studies.

Introduction

Anaesthesia Fellowships are competitive training posts, often in a focused area or subspecialty, undertaken in the final year of specialty training or immediately after its completion. In the Department of Anaesthesia and Perioperative Medicine, North Shore Hospital, Auckland, applicants are initially screened on eligibility and a structured score of their curriculum vitae (CV), and approximately two-thirds are invited to a formal interview. Applicants are then ranked for final selection using a composite score weighted 35% on the CV, 60% on the interview and 5% on a subjective overall impression. Interviewer bias could adversely influence this selection process. To our knowledge, no research has investigated potential interviewer bias in selection at the end of specialty training, i.e. for Fellowship or consultant posts. Bias in selection to leadership roles is a cause of inequity and a topical issue in many industries. In corporate organisations, hiring is managed by trained human resources personnel, whereas in hospital departments interviews are largely conducted by clinicians with minimal training in this area. This difference has contributed to the paucity of research on interviewer bias in any clinical setting and underlines why a separate study of this cohort is warranted.
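The composite ranking rule is a simple weighted sum. The sketch below is hypothetical: it assumes each component has first been rescaled to a fraction (0–1) of its maximum, because the raw scales for the CV and overall-impression scores are not described, and the function name is illustrative only.

```python
# Hypothetical sketch of the 35/60/5 composite used to rank applicants.
# Assumption: each component is first rescaled to a fraction (0-1) of its
# maximum possible score; the raw CV and overall-impression scales are not
# described in the article.

WEIGHTS = {"cv": 0.35, "interview": 0.60, "impression": 0.05}


def composite_score(cv: float, interview: float, impression: float) -> float:
    """Return the weighted composite on a 0-100 scale from 0-1 component fractions."""
    components = {"cv": cv, "interview": interview, "impression": impression}
    for name, value in components.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be a fraction between 0 and 1, got {value}")
    return 100 * sum(WEIGHTS[name] * value for name, value in components.items())


# Hypothetical applicant: CV at 70% of maximum, mean interview total of 16/20,
# overall impression at 80% of maximum.
print(round(composite_score(0.70, 16 / 20, 0.80), 1))  # 76.5
```

Because the interview carries 60% of the weight under this assumed scaling, a 1-point shift in an interview total out of 20 moves the composite by 3 points on a 0–100 scale, which is why systematic shifts in interview ratings matter for ranking.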

The objective of this quality assessment study was to investigate the presence of possible interviewer bias as a factor in the selection of anaesthesia Fellows in our institution.

Methods

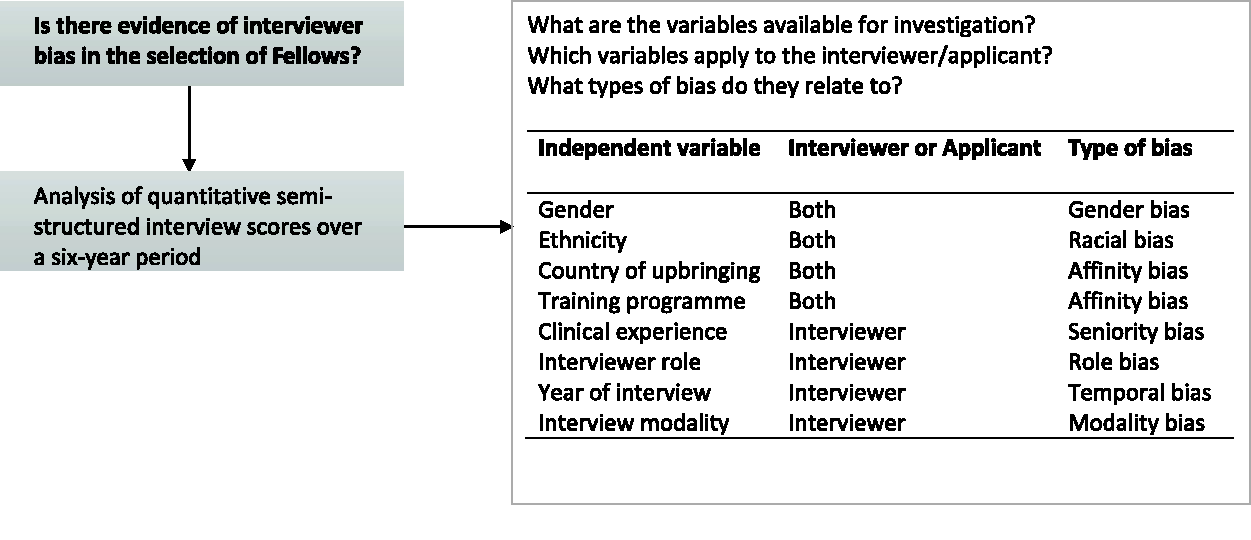

Research locality approval was granted by the Awhina Research and Knowledge Centre, Waitemata District Health Board (ID: RM14402). In our institution, several measurable variables in the interview process are possible sources of systemic bias; we illustrate these in a conceptual framework (Figure 1). Some variables apply to both interviewers and applicants (e.g. gender), while others apply only to interviewers (e.g. interviewer role as clinician or manager).

Conceptual framework to investigate possible sources of systemic bias in anaesthesia Fellow interviews.

We analysed all interview scores for Fellowship applications to our department from 2014 to 2019; this period was chosen because data before 2014 were not available. Interview panels typically consisted of two to three consultant anaesthetists and a manager, although staffing constraints meant this was not achievable for every interview. A structured interview process was used, with panel interviewers taking turns to ask the applicant a series of questions in four domains: Abilities and Experience, Communication and Teamwork, Self-Management, and Contemporary Issues. Each domain comprised four to five topics or scenarios framed as questions. Panel members separately assigned a rating of 1–5 for each domain using a behaviourally anchored rating scale (1 = weak match to job requirements, 3 = good match, 5 = strong match), giving a total score out of 20. Identical standardised interview questions were used throughout the study period. Data were collected directly from interview scoring sheets, anonymised and coded in an electronic spreadsheet.
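The per-interviewer scoring rule can be expressed as a small validate-and-sum step, of the kind that might be applied when coding scoring sheets into a spreadsheet. This is a hypothetical sketch: the function name and dictionary layout are illustrative assumptions, not the department's actual tooling.

```python
from __future__ import annotations

# The four interview domains named in the article.
DOMAINS = (
    "Abilities and Experience",
    "Communication and Teamwork",
    "Self-Management",
    "Contemporary Issues",
)


def interview_total(ratings: dict[str, int]) -> int:
    """Sum one panel member's domain ratings (each 1-5) into a total out of 20."""
    if set(ratings) != set(DOMAINS):
        raise ValueError("expected exactly one rating for each of the four domains")
    for domain, rating in ratings.items():
        if not isinstance(rating, int) or not 1 <= rating <= 5:
            raise ValueError(f"{domain}: rating must be an integer from 1 to 5, got {rating!r}")
    return sum(ratings.values())


# Hypothetical scoring sheet for one panel member.
sheet = dict(zip(DOMAINS, (4, 4, 3, 5)))
print(interview_total(sheet))  # 16
```

Because each of the four domains contributes 1–5 points, possible totals run from 4 to 20 rather than from 0 to 20.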

Statistical analysis

Potential biases were assessed by examining associations between total interview scores and the following variables: applicant and interviewer gender; interviewer role (clinician versus manager); applicant and interviewer country of upbringing and country of training programme (NZ, UK or other); applicant and interviewer ethnicity (Caucasian versus non-Caucasian); year of interview; and interview modality (face-to-face versus video link). As there were no male managers in the study, the potential effect of interviewer role was also considered amongst female interviewers only, and possible gender effects were considered amongst clinicians only. Chi-square tests were used to check for potential imbalances within and between applicant and interviewer characteristics. Interview ratings had a crossed data structure: each applicant was rated by two to four interviewers, and each panel member interviewed between 1 and 93 applicants. This was accounted for using a linear mixed effects model with crossed random effects for applicants and interviewers, which models the correlation between ratings both within applicants and within interviewers. The intraclass correlation (ICC) was calculated separately for applicants and interviewers to estimate the proportion of the total variance in applicant ratings attributable to clustering by each factor. Each factor was considered in a separate model. However, where a variable applied to both applicant and interviewer (i.e. training programme, ethnicity and gender), the two were included together in one model to explore whether the effect of the applicant-level factor differed according to the same interviewer-level factor (or vice versa). Interactions between applicant-level and interviewer-level factors were tested; for example, to evaluate whether the effect of applicant gender on ratings varied according to interviewer gender.
Normality of residuals and homoscedasticity (constant variance of error terms) were checked for all models. Simple linear regression was used to assess the effects of interview modality and year of interview, since these factors apply at the applicant level only. Pairwise comparisons between specific groups were made only if there was overall evidence of a difference in total interview ratings according to a factor of interest. Analyses were exploratory, aiming to generate hypotheses about factors that may contribute to interview bias in this setting. Accordingly, no adjustment was made for multiple comparisons, and results require confirmation in independent studies. All descriptive and inferential analyses were conducted in Stata (version 16), with statistical significance set at P < 0.05.
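For readers less familiar with crossed random effects, the model described above can be written in its standard form. The notation here is ours and is an assumption, not taken from the article: $y_{ij}$ is the total rating (out of 20) given to applicant $i$ by interviewer $j$, $\mathbf{x}_{ij}$ holds whichever applicant or interviewer covariates a given model includes, and $a_i$, $b_j$ and $\varepsilon_{ij}$ are independent applicant, interviewer and residual effects. Each ICC is written as a share of total variance, matching its description as the proportion of variance attributable to that clustering factor; the article does not state whether the ICCs came from a model with or without covariates.

```latex
y_{ij} = \beta_0 + \boldsymbol{\beta}^{\top}\mathbf{x}_{ij} + a_i + b_j + \varepsilon_{ij},
\qquad a_i \sim N(0,\sigma_A^2),\quad b_j \sim N(0,\sigma_I^2),\quad \varepsilon_{ij} \sim N(0,\sigma_\varepsilon^2)

\mathrm{ICC}_{\text{applicant}} = \frac{\sigma_A^2}{\sigma_A^2 + \sigma_I^2 + \sigma_\varepsilon^2},
\qquad
\mathrm{ICC}_{\text{interviewer}} = \frac{\sigma_I^2}{\sigma_A^2 + \sigma_I^2 + \sigma_\varepsilon^2}
```

Because $a_i$ and $b_j$ are crossed rather than nested, two ratings of the same applicant by different interviewers share only $a_i$, and two ratings by the same interviewer of different applicants share only $b_j$.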

Results

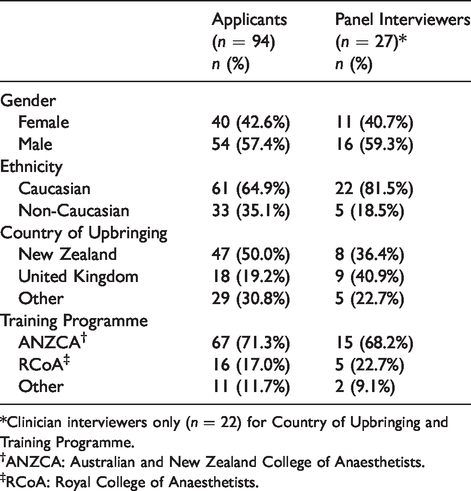

From 2014 to 2019, 94 applicants were interviewed by 27 panel members, with between two and four panel members per interview, giving a total of 329 applicant ratings. Table 1 summarises the characteristics of the applicants and the interviewers (panel members). A greater proportion of female applicants than male applicants were Caucasian (82.5% versus 51.8%, P = 0.002). There were no associations between applicant and interviewer characteristics. On average, approximately 16 applicants were interviewed each year, ranging from 11 in 2017 to 21 in 2014. The majority of interview panels (n = 54, 57.4%) had four members, while 33 (35.1%) had three members and seven (7.4%) had only two. Clinicians made up 22 of the 27 interviewers (81.5%; all staff specialists employed in the department), of whom six (27.3%) were women. The remaining five interviewers were managers, all of whom were women. Clinician panel members had a mean of 10.7 years’ experience as a specialist (standard deviation (SD) 7.7, range 1–32 years). Over half of the applicants (n = 56, 59.6%) were interviewed in person, and the remainder via video link. The 27 interviewers took part in a mean of 12.2 interviews each (12.5 for the 22 clinicians, 10.8 for the five managers). One interviewer sat on 93 of the 94 interview panels; the next highest numbers of interviews by individual panel members were 47, 31 and 23, and all remaining interviewers took part in fewer than 20 interviews.
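Two of the figures above can be cross-checked from the reported numbers. The sketch below first confirms that the panel sizes account for all 329 ratings, then applies a Pearson chi-square test of independence to the gender-by-ethnicity comparison. It assumes counts reconstructed from the reported percentages (40 female applicants, from 42.6% of 94 reported in the Discussion; 33 of them Caucasian; 28 of 54 male applicants Caucasian), and it is an illustrative check, not the authors' Stata analysis.

```python
from __future__ import annotations

import math

# Panel sizes reported above: {members per panel: number of panels}.
panels = {4: 54, 3: 33, 2: 7}
total_interviews = sum(panels.values())                      # 94
total_ratings = sum(size * n for size, n in panels.items())  # 216 + 99 + 14 = 329


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square test without continuity correction for [[a, b], [c, d]].

    With one degree of freedom, the upper-tail P-value is erfc(sqrt(stat / 2)).
    """
    observed = ((a, b), (c, d))
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed[i][j] - expected) ** 2 / expected
    return stat, math.erfc(math.sqrt(stat / 2))


# Counts reconstructed from the reported percentages (an assumption):
# 40 female applicants, 33 Caucasian (82.5%); 54 male applicants, 28 Caucasian (51.8%).
stat, p = chi_square_2x2(33, 7, 28, 26)
print(total_interviews, total_ratings)  # 94 329
print(f"chi-square = {stat:.2f}, P = {p:.3f}")
```

With these reconstructed counts, the statistic is about 9.47 with P ≈ 0.002, in line with the reported value. `scipy.stats.chi2_contingency` with `correction=False` computes the same uncorrected statistic.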

Characteristics of applicants and panel interviewers.

*Clinician interviewers only (n = 22) for Country of Upbringing and Training Programme.

†ANZCA: Australian and New Zealand College of Anaesthetists.

‡RCoA: Royal College of Anaesthetists.

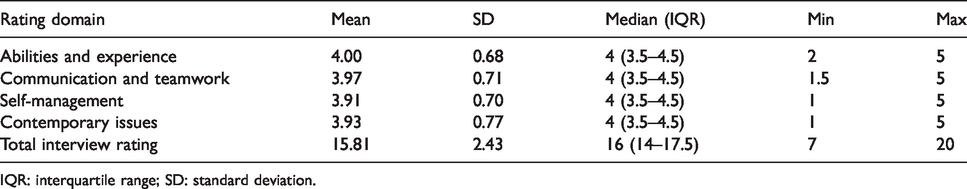

The mean total interview rating across all applicants and interviewers was 15.81 out of 20, with a median score of 4/5 in each domain (Table 2). Across the 329 ratings, the lowest total interview score was 7/20 (n = 1), while eight applicants received the highest possible score of 20 from one of their interviewers.

Average ratings of the 94 applicants, based on 329 ratings from up to four interviewers per panel (clinicians and managers).

IQR: interquartile range; SD: standard deviation.

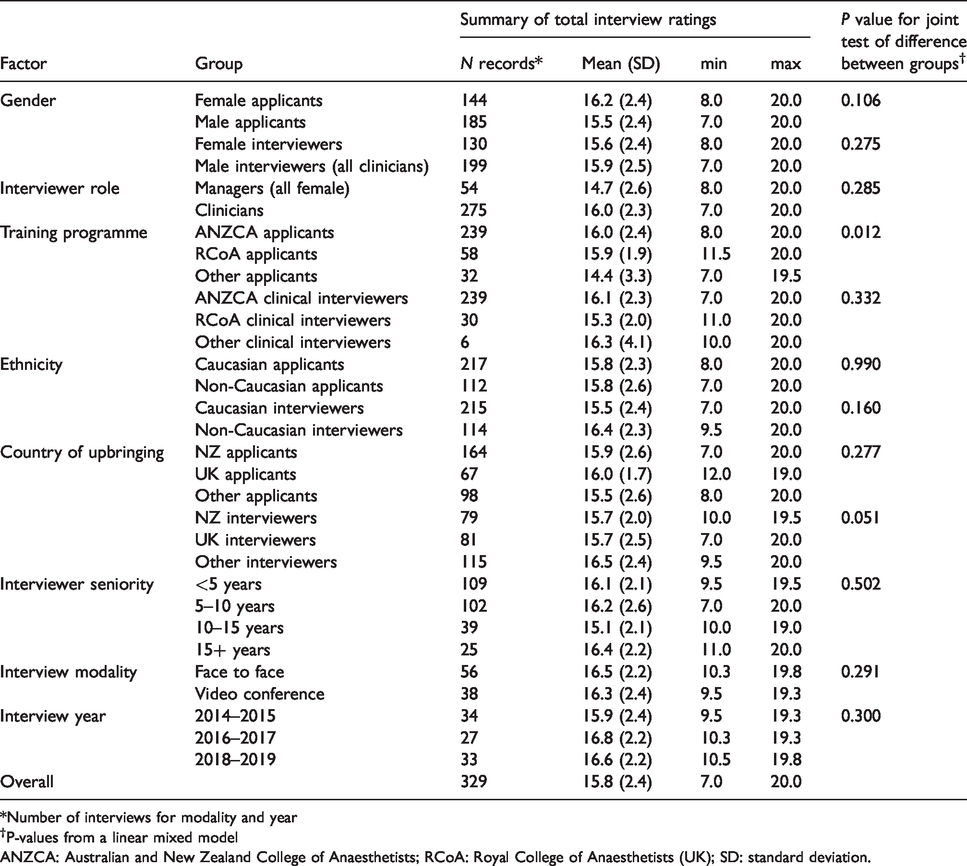

In the linear mixed model analysis, the random effect of applicants accounted for 45.8% of the total variance in ratings (95% confidence interval (CI) for ICC 35.8%–57.2%), while interviewer effects accounted for 13.4% (95% CI for ICC 5.3%–30.0%). We found no evidence of an association between applicant ratings and any of the following factors: gender (applicant or interviewer), interviewer role, ethnicity (applicant or interviewer), interviewer seniority, interview modality, year of interview, country of upbringing (applicant or interviewer), or interviewer training programme (P ≥ 0.05 for all factors; Table 3).
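Because both ICCs are shares of the same total variance under a crossed model, they also imply how much variance is left for residual, rating-to-rating variation. The sketch below is a simple illustrative helper (its name is hypothetical) that converts variance components into shares. It then applies the subtraction to the reported point estimates. It does not reproduce the model fit, whose variance components are not reported here.

```python
from __future__ import annotations


def variance_shares(var_applicant: float, var_interviewer: float,
                    var_residual: float) -> dict[str, float]:
    """Split total rating variance into applicant, interviewer and residual shares.

    The applicant share is the applicant ICC: the expected correlation between
    two ratings of the same applicant by different interviewers. The
    interviewer share is the interviewer ICC: the expected correlation between
    ratings that one interviewer gives to two different applicants.
    """
    total = var_applicant + var_interviewer + var_residual
    return {
        "applicant": var_applicant / total,
        "interviewer": var_interviewer / total,
        "residual": var_residual / total,
    }


# Illustration with hypothetical variance components (not the study's estimates).
print(variance_shares(2.0, 1.0, 1.0))  # {'applicant': 0.5, 'interviewer': 0.25, 'residual': 0.25}

# Residual share implied by the reported point estimates.
icc_applicant, icc_interviewer = 0.458, 0.134
residual_share = 1 - icc_applicant - icc_interviewer
print(f"{residual_share:.1%}")  # 40.8%
```

On the reported point estimates, roughly 40.8% of the variance in ratings is therefore attributable neither to stable applicant differences nor to stable interviewer differences.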

Total interview ratings by interviewer and applicant characteristics.

*Number of interviews for modality and year

†P-values from a linear mixed model

ANZCA: Australian and New Zealand College of Anaesthetists; RCoA: Royal College of Anaesthetists (UK); SD: standard deviation.

On average, applicants from programmes other than those of the Australian and New Zealand College of Anaesthetists (ANZCA) and the Royal College of Anaesthetists (RCoA) received lower interview ratings (Table 3). After adjusting for interviewer training programme, applicants from other training programmes were rated a mean of 1.87 points lower than ANZCA applicants (95% CI 0.62–3.12, P = 0.003) and 1.84 points lower than RCoA applicants (95% CI 0.37–3.32, P = 0.014). There was no statistically significant interaction between these two factors, i.e. there was no evidence that the effect of applicant training programme on ratings varied according to interviewer training programme (P = 0.225).

There was no overall effect of gender or interviewer role on interview scores (Table 3). However, amongst clinicians there was some evidence of a gender effect: after adjusting for applicant gender, female clinicians rated applicants a mean of 1.12 points higher than male clinicians (95% CI 0.19–2.06, P = 0.019). Amongst female interviewers, clinicians rated applicants a mean of 1.34 points higher than managers, but this difference was not statistically significant (95% CI –0.21 to 2.89, P = 0.090).
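Each interval reported in the two paragraphs above is centred on its estimate (to rounding), which is what a normal-approximation (Wald) interval looks like. Assuming that form, the standard error and two-sided P-value can be recovered from the interval alone, giving a quick consistency check on the reported figures. This is a sketch under that assumption, and the helper name is hypothetical.

```python
from __future__ import annotations

from statistics import NormalDist

STD_NORMAL = NormalDist()
Z_975 = STD_NORMAL.inv_cdf(0.975)  # about 1.96


def wald_from_ci(estimate: float, lower: float, upper: float) -> tuple[float, float, float]:
    """Recover SE, z and two-sided P from a symmetric 95% Wald confidence interval."""
    se = (upper - lower) / (2 * Z_975)
    z = estimate / se
    p = 2 * (1 - STD_NORMAL.cdf(abs(z)))
    return se, z, p


# (estimate, CI lower, CI upper, reported P) from the results above.
REPORTED = {
    "Other vs ANZCA applicants": (1.87, 0.62, 3.12, 0.003),
    "Other vs RCoA applicants": (1.84, 0.37, 3.32, 0.014),
    "Female vs male clinicians": (1.12, 0.19, 2.06, 0.019),
    "Female clinicians vs female managers": (1.34, -0.21, 2.89, 0.090),
}

for label, (est, lo, hi, p_reported) in REPORTED.items():
    se, z, p = wald_from_ci(est, lo, hi)
    print(f"{label}: SE {se:.2f}, z {z:.2f}, P {p:.3f} (reported {p_reported:.3f})")
```

The recovered P-values (about 0.003, 0.014, 0.019 and 0.090) agree with those reported to within rounding. Because the intervals are rounded to two decimals, this approach can only confirm consistency, not exact values.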

Discussion

To our knowledge, this is the first quality assessment study of interviewer bias in selection at an advanced level in any clinical specialty. After analysing multiple applicant and interviewer factors, we found no evidence of bias for almost all potential sources. Applicants from the two largest training programme pools in our study received higher ratings than other applicants, but data for the latter group came from a relatively small sample. Among clinicians, there was a minor gender-based rater leniency–stringency effect after adjusting for applicant gender. The observed differences in mean ratings were small but possibly meaningful in our setting, because most scores fell within a relatively narrow range. For example, the difference in scores between applicants ranked at the 50th and 75th percentiles was only 1.5 points out of 20. The following discussion draws on the rating literature for both medical selection interviews and clinical performance assessment, as similar principles govern assessor behaviour in both and the latter has a larger evidence base.

Leniency and stringency are both types of scale usage error, defined as a consistent tendency on the part of interviewers or assessors to give ratings that are too high (leniency) or too low (stringency) relative to actual performance.1,2 Although ‘leniency’ is the term most commonly used in the rating literature,1 we use ‘leniency–stringency’ in this discussion to avoid pejorative connotations when labelling any particular group. Leniency–stringency is not the same as bias, which is an inclination or prejudice for or against a person or group in a way that is considered unfair.

Relative leniency or stringency between individual assessors is well recognised in the literature, where it is traditionally known as the hawk–dove effect.3 It is a stable characteristic, even when measured using different instruments.2 Assessors’ perceptions of themselves as hawks or doves are frequently inaccurate.4 A study of the relationship between personality traits and rating leniency–stringency found that individuals who were more ‘sensitive’, ‘warmhearted’ and ‘tenderminded’ tended to award higher ratings.1 It has also been suggested that people in different roles are attuned to different behavioural cues,5 so clinicians, nurses or managers may observe and use different aspects of applicant responses and behaviour in making judgements. The presence of hawks or doves on an interview panel does not invalidate the selection process; rather, it underlines the importance of having a consistent interview panel for all applicants in a selection cycle.

The evidence for gender-based leniency–stringency effects is inconclusive. An analysis of medical student selection at one Australian institution over two years found an interviewer gender leniency–stringency effect, with women awarding higher scores than men irrespective of applicant gender (P = 0.003 in one year and P = 0.03 in the next).6 A study of medical and dental programme selection using multiple mini interview (MMI) data from 207 interviewers and 686 applicants found that interviewer leniency–stringency accounted for 8.9% of variance, although gender associations were not analysed.2 An analysis of 142,030 clinical examination marks awarded to 10,145 candidates over three years attributed 12% of variance to assessor leniency–stringency.3 The authors described a gender-based assessor leniency–stringency effect that did not reach statistical significance (P = 0.076; 1040 male and 123 female assessors) and was absent after multivariate analysis.3 An analysis of 31,920 clinical interview scores awarded by 67 examiners (51% women) showed a 44.2% effect attributable to assessor leniency–stringency, with no gender effects.4 Although we found a small gender-based leniency–stringency effect among clinician interviewers in our sample, there was no evidence of bias.

Previous studies have investigated the effect of assessor role on rating leniency–stringency in clinical performance assessment. A comparison of ratings of humanistic behaviour in 76 house officers by 259 medical specialists and 2270 nurses found higher scores awarded by clinicians (mean 3.44 versus 3.24 by nurses, on a scale out of 4; P < 0.001).5 Others have demonstrated poor reliability between specialist clinician and nurse ratings in clinical performance assessment, but did not comment on role-based leniency–stringency.7,8

The lower scores of applicants from outside the ANZCA and RCoA training programmes are difficult to interpret, as these applicants were few (11/94, 11.7%) and very heterogeneous. We did not observe any bias based on interviewers’ training programme, but the number of non-ANZCA/RCoA interviewers was again very small (2/22, 9.1%). Without an independent assessment of applicant performance, we cannot interpret these differences in ratings as bias; they may represent accurate, unbiased ratings.

Recent commentary on gender equity in anaesthesia has highlighted the lack of women in leadership groups9–11 and among recipients of specialty honour awards,12 drawing attention to the need for robust systems to minimise systemic bias. Although women made up only 42.6% of applicants in our study, they received the highest interview score in four of the six years. Taking the top three and top five scores in each year, women accounted for 66.7% of the top-three scores and 63.3% of the top-five scores over the six-year study period. Our results suggest that the performance of women in our interview process was not due to bias from male or female interviewers.
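The percentages in this paragraph can be turned back into whole-number counts as a check on how they are defined. This is a minimal sketch. It assumes that the top-three and top-five shares are pooled over the six years (18 and 30 places, ignoring ties). The helper name is hypothetical.

```python
def count_from_share(share_pct: float, denominator: int) -> int:
    """Return the whole-number count that reproduces a one-decimal percentage."""
    count = round(share_pct / 100 * denominator)
    # The nearest whole count must round back to the reported percentage.
    if abs(100 * count / denominator - share_pct) >= 0.05:
        raise ValueError(f"no whole count out of {denominator} gives {share_pct}%")
    return count


women_applicants = count_from_share(42.6, 94)  # of 94 applicants
top_three = count_from_share(66.7, 6 * 3)      # of 18 top-three places over six years
top_five = count_from_share(63.3, 6 * 5)       # of 30 top-five places over six years
print(women_applicants, top_three, top_five)   # 40 12 19
```

A per-year reading of the top-five share is not possible: `count_from_share(63.3, 5)` raises `ValueError`, because no count out of five gives 63.3%. This supports reading the shares as pooled across the study period.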

We found no evidence that interview modality (face-to-face versus online video) influenced applicant scores. This finding is relevant given recent restrictions on face-to-face meetings to minimise the spread of severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2), and efforts to avoid unnecessary travel to mitigate climate change.

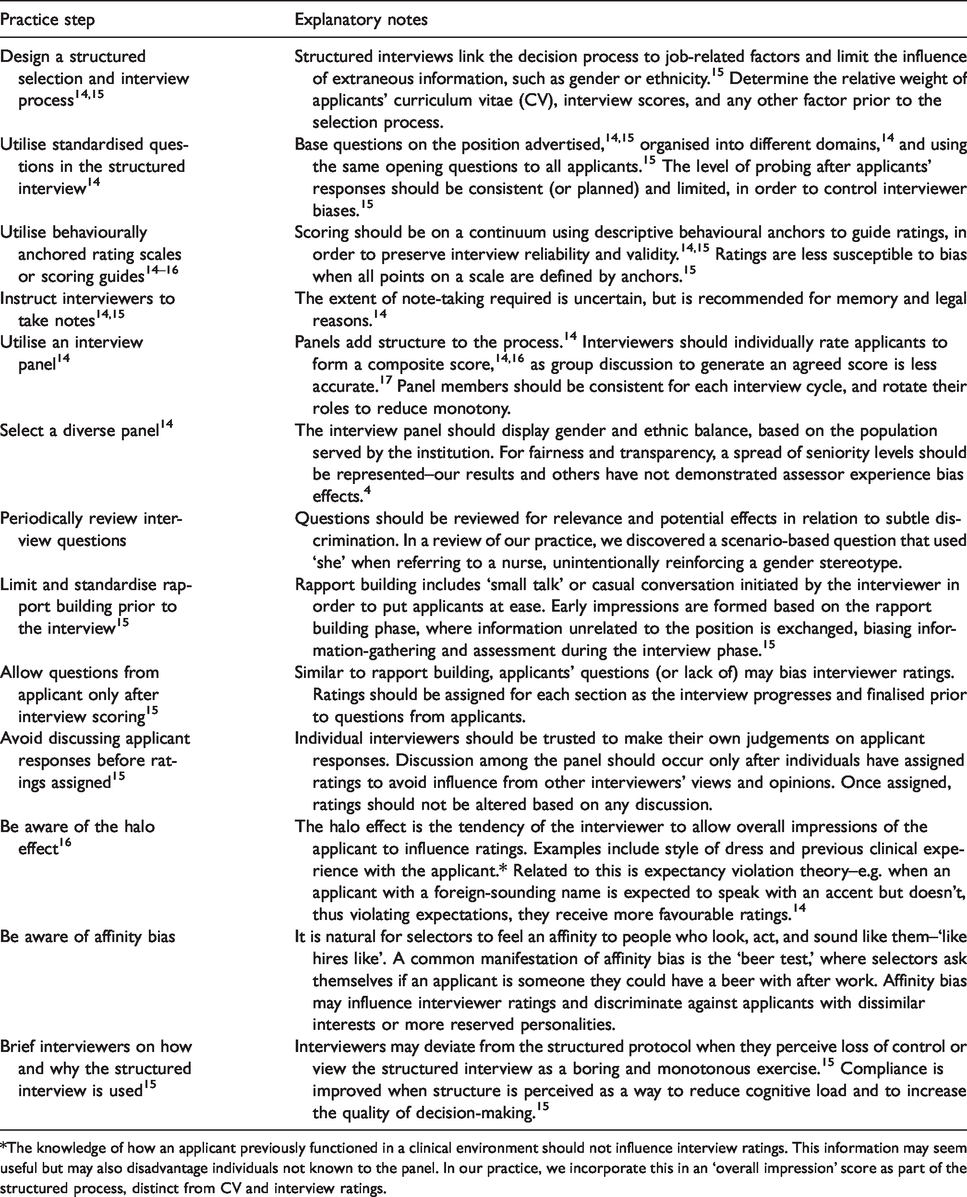

Although recommendations on avoiding systemic interviewer bias exist, this information is not embedded in clinical training, and clinicians may not be aware of its relevance to selection processes. The concepts underpinning interviewer bias apply broadly to appointments at all clinical grades, but some distinctions exist for senior appointments (i.e. Fellows and staff specialists). For interns and house officers, the institutional human resources departments responsible for junior doctors closely manage the selection process. For selection into anaesthesia training, practices vary between training regions: some organise a centralised process, while others conduct local interviews. ANZCA's interview recommendations for trainee selection are currently limited to maintaining formal documentation, asking questions related only to selection criteria and job requirements, implementing a ranking system, and ‘using processes based on employer policies’.13 In our collective experience, Fellow and staff specialist interviews are conducted by clinicians with minor input from human resources or management, unlike the professional interview process in corporate settings. Hospital interview policies may lack appropriate detail on systemic bias, vary significantly between institutions, frequently lack context, rarely explain their rationale and may not be conveyed to the clinicians conducting interviews. Drawing on selected pertinent literature, we outline practical recommendations to reduce the effect of systemic bias in interviews in Table 4. These are provided for the convenience of readers as a resource for conducting selection interviews for Fellows or staff specialists, and are not derived from data obtained in our study.

Practical recommendations to reduce the effect of systemic bias in interviews for selection of advanced level clinical posts.

*The knowledge of how an applicant previously functioned in a clinical environment should not influence interview ratings. This information may seem useful but may also disadvantage individuals not known to the panel. In our practice, we incorporate this in an ‘overall impression’ score as part of the structured process, distinct from CV and interview ratings.

Limitations

This quality assessment study was conducted in a single institution with specific processes underpinning interviews and selection, and its results may not be generalisable to all departments or settings. Our panel interviewers were numerous and heterogeneous in profile, which increases the likelihood that our findings apply where similar interview and selection processes are in place. Given the exploratory nature of these analyses and their limited statistical power, we cannot exclude false negative findings. However, the mean differences between groups were very small for most factors and are therefore unlikely to represent meaningful differences in other settings, even if type II errors did occur. Power was also limited for tests of interaction, so the absence of significant interaction effects should be interpreted with caution. Because we assessed a number of factors for association with applicant ratings without adjusting for multiple testing, findings from the adjusted analyses should also be interpreted with caution: we cannot rule out false positive findings, and our observed associations require confirmation in an independent dataset.

We did not investigate the effect of other factors, such as applicants’ CV or job preference, on final selection and appointment. The study identified effects of individual interviewers on ratings, implying that an applicant’s chance of success may be influenced by the makeup of the interview panel. Because there were no male managers in our sample, the intersecting effects of interviewer role and gender are difficult to distinguish. Other interviewer biases (e.g. affinity bias, halo effect) may have been present but could not be measured or investigated. Applicants are also motivated to create a positive impression during interviews, and this ‘applicant impression management’ is positively correlated with higher interviewer ratings.14 Honest impression management occurs when applicants actively portray a positive image without being dishonest, whereas deceptive impression management involves a clear and conscious intention to misrepresent themselves.14 Both could occur in the same interview. All applicants probably use impression management to some degree, some more effectively than others, which may affect the validity of our results in ways that are difficult to quantify. Methods to measure honest and deceptive impression management are complex and resource-intensive.14

Conclusion

An analysis of interview scores over a six-year period in our institution found no evidence of bias for almost all potential sources. ANZCA and RCoA applicants received higher ratings than applicants from other training programmes. After adjusting for applicant gender, a small interviewer gender leniency–stringency effect was found amongst clinicians. As these results come from a single institution, they inform discussion but require confirmation in other centres. Greater awareness of evidence-based interview practice and selection processes may reduce the effect of bias and increase fairness and transparency.

Footnotes

Acknowledgements

We acknowledge the Waitemata Anaesthesia Research (WAR) committee and the Pupuke Anaesthesia Trust for funding this study.

Author contribution(s)

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.