Abstract

Fellowship selection interviews evaluate candidates’ suitability for specialised anaesthesia training. Applicants are not usually given specific details of questions or topics, although these are often obtained from previous applicants. This study explored whether increased transparency in the interview process, by providing a list of discussion topics beforehand, affects applicant performance and experience. Data from 91 applicant interviews over four years (2021–2024) were analysed. The traditional interview format was employed in 2021 and 2022. A novel format was introduced in 2023, in which applicants were provided with a list of 14 discussion topics 3 weeks before the interview. The 2024 interviews reverted to the traditional format. Applicant performance was compared across the study period, with feedback collected from the 2023 cohort. No significant difference in mean interview scores or variance ratios was found between the novel and traditional formats, nor between local and non-local applicants. A total of 58.3% of applicants preferred the novel format, citing reduced anxiety and improved preparation. One-third preferred the traditional format, arguing that transparency might disadvantage those who typically prepare for interviews independently. Interviewer feedback indicated no perceived disadvantages from increased transparency, and probing questions effectively elicited detailed responses without making answers seem rehearsed. Providing applicants with interview topics in advance did not impact overall ratings but positively affected their experience by reducing anxiety and improving perceptions of the interview process. The findings support the implementation of transparency in selection interviews to enhance fairness and candidate experience without compromising the validity of the selection process.

Introduction

Anaesthesia fellowships are competitive positions focused on specific areas or subspecialties. In New Zealand and Australia, these are typically pursued in the final year of specialty training or immediately after completion of training. At our department, applicants are initially screened based on their curriculum vitae (CV) and invited to participate in a formal panel interview. The final selection is determined by a composite score, which includes the CV (35%), the interview (60%), and subjective overall impression (5%). A review of multiple applicant and interviewer factors from interviews conducted in our unit between 2014 and 2019 showed no evidence of bias in interview ratings.1

Structured job interviews are essential for evaluating an applicant’s suitability for a specific role, particularly in medical training. These interviews are commonly used for entry into specialty training, for fellowship positions at the end of specialty training, or for substantive consultant/staff specialist posts. The questions often assess various aspects of professionalism, teamwork, communication, and the ability to handle challenging situations. However, an applicant’s ability to provide a well-crafted response does not necessarily reflect their real-world behaviour. Likewise, a nervous applicant who struggles with a question might still be clinically competent, professional, and effective in team settings. There is no evidence suggesting that the ability to manage stress during an interview correlates with the ability to handle stressful situations in the workplace. Elevated anxiety levels during interviews are correlated with poor interview performance, impacting women more than men.2

Applicants may have obtained interview questions from colleagues applying for the position in the same or previous cohorts. It is assumed that this knowledge confers an advantage and creates a disparity, as some applicants may not have equal opportunities to prepare for the interview or lack awareness about the required level of preparation. This scenario is analogous to only a selection of candidates having access to the syllabus before a high-stakes examination, and could lead to the rejection of otherwise competent applicants. Conversely, it could be argued that prior knowledge of interview questions in structured situational or behavioural description interviews should not inflate ratings because the answers are not always apparent to the applicant.

Additionally, fellowship interviews impose significant emotional and financial burdens on applicants, and result in lost clinical time.3–5 Adopting virtual interviews can reduce the financial burden of travel and accommodation and the need to use precious leave allocation.6 In our institution, virtual interviews have been offered to applicants for many years, and a review of ratings from 2014 to 2019 showed no significant difference between in-person and virtual modalities.1 To address other equity issues, some US training programmes have provided various resources to their trainees, such as mock interviews and interview preparedness seminars.7,8 Commercial interview training for medical school selection is also common, with participants reporting increased confidence during actual interviews and achieving higher match rates.7,8 However, access to these resources is not universal, leading to significant disparities among applicants.

In our local context, it is neither feasible to prevent applicants from preparing nor to maintain complete confidentiality of interview questions while trying to ensure a level playing field. Using different questions for each applicant could reduce fairness, reliability, and validity.9,10 One approach to ensure a fairer process is to improve interview transparency. Transparency in structured interviews can be defined as the degree to which applicants are informed about specific requirements posed by the questions in the interview.11 Transparency exists on a continuum. A non-transparent interview would be one in which applicants receive no indication at all as to the domains or criteria by which their responses will be rated.11 Transparency may be introduced in a graded fashion, for example: (a) simple labels of domains being assessed; (b) domain labels plus a brief description of what they mean; (c) topics to be discussed; and (d) the actual questions that address the discussion topics.

This novel approach to selection interviews has not been reported in the medical literature. We hypothesise that improving interview transparency will allow all applicants to be better prepared and less anxious, providing a fair opportunity for everyone to showcase their abilities, without a detrimental effect on the validity of the interview as a selection tool. By reverting to a traditional interview format the following year, we can assess if the prior distribution of topics provides an advantage to locally based applicants.

Objectives of the study were to: (a) compare performance of the novel interview format with the traditional interview format; (b) compare ratings between local and non-local applicants in a traditional interview format after topic distribution the previous year; (c) compare applicants’ self-reported anxiety, stress, and confidence levels between the novel and traditional interview formats; and (d) obtain qualitative feedback from applicants and interviewers on the novel interview format.

Materials and methods

We obtained locality approval for this research from the Research and Knowledge Centre, Waitematā, Health New Zealand (RM15492). The requirement for formal ethical review was waived.

Our structured interview format evaluates applicants across four domains: abilities and experience, communication and teamwork, self-management, and contemporary issues. Each domain is scored independently by each of the four interviewers on a scale from one to five, resulting in aggregate scores for each applicant ranging from 16 to 80. Interviewers assign their scores independently, and discussions about individual performances only occur after ratings are finalised. Six provisional fellowship positions were available each year during the study period, open to those in their final year of training or immediately after completion of training.
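The scoring arithmetic described above can be sketched in a few lines. This is an illustrative sketch only: the function name, the data layout, and the restriction to whole-number ratings are assumptions made here, and the example ratings are hypothetical rather than study data.

```python
from typing import Dict, List

# Domains and panel size as described in the text.
DOMAINS = (
    "abilities and experience",
    "communication and teamwork",
    "self-management",
    "contemporary issues",
)
N_INTERVIEWERS = 4
MIN_RATING, MAX_RATING = 1, 5  # assumed to be whole numbers


def aggregate_score(ratings: Dict[str, List[int]]) -> int:
    """Sum every interviewer's rating in every domain for one applicant."""
    total = 0
    for domain in DOMAINS:
        domain_ratings = ratings[domain]
        if len(domain_ratings) != N_INTERVIEWERS:
            raise ValueError(f"expected {N_INTERVIEWERS} ratings for {domain!r}")
        for rating in domain_ratings:
            if not MIN_RATING <= rating <= MAX_RATING:
                raise ValueError(f"rating {rating} is outside the 1 to 5 scale")
        total += sum(domain_ratings)
    return total


# Hypothetical applicant (illustrative ratings, not study data).
example = {
    "abilities and experience": [4, 4, 5, 3],
    "communication and teamwork": [4, 3, 4, 4],
    "self-management": [3, 4, 4, 4],
    "contemporary issues": [5, 4, 4, 3],
}
print(aggregate_score(example))
```

With four domains and four interviewers there are 16 individual ratings per applicant, which is why the aggregate is bounded by 16 (all ones) and 80 (all fives).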

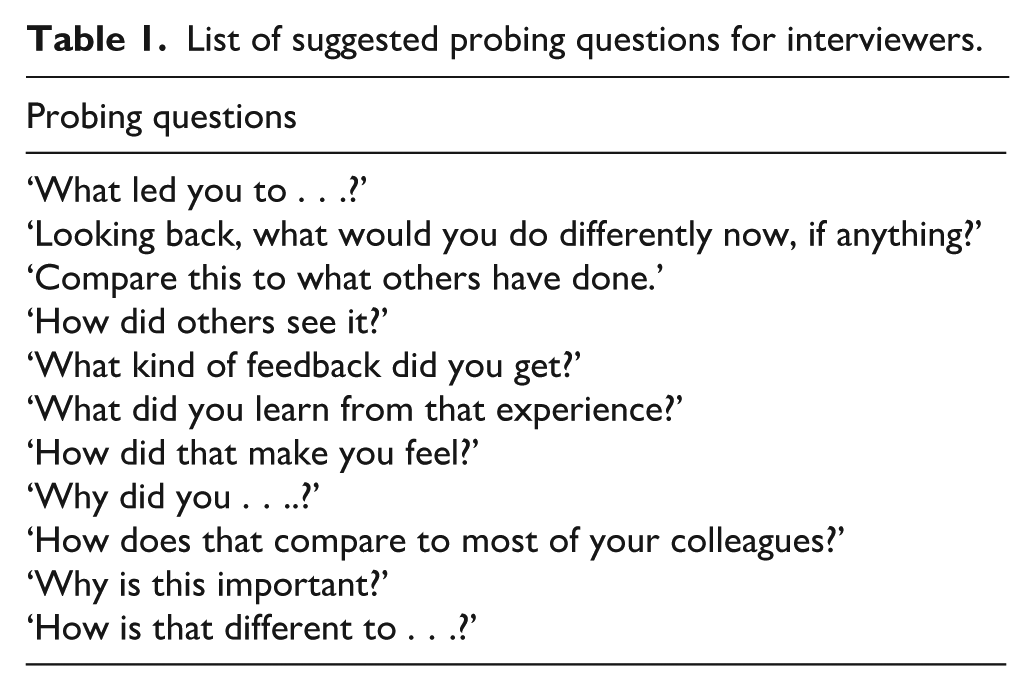

Following a review of our interview questions in early 2023, we reduced the number of questions in each domain from five to three or four. We added a list of generic probing questions for interviewers to use at their discretion (see Table 1). We designated questions prior to the review as ‘set A’ and post-revision as ‘set B’. The interviewer panel consisted of three clinicians and one manager from the department, all of whom had previous experience in conducting these interviews and were briefed on the changes to the format.

Table 1. List of suggested probing questions for interviewers.

Three weeks prior to the interview, all applicants received an email introducing the selection panel and outlining 14 topics to be discussed during the interview. Follow-up contact was made to ensure applicants had acknowledged receipt of the email and the interview topics.

Applicants had the choice of an in-person or virtual interview. After each interview, once ratings were finalised, applicants were provided with a participant information sheet. For virtual interviews, this information was communicated verbally. The sheet emphasised that participation in the survey was voluntary, that responses would be anonymous, and that participation or responses could not influence interview ratings or final selection. An electronic copy of the information sheet and survey link was emailed to applicants after all interviews were concluded and before interview outcomes were disclosed. The ‘anonymous responses’ option of the online survey collector (SurveyMonkey) was activated, with no email or IP addresses collected. A follow-up invitation was sent via email to all applicants 2 days later.

We compared interview scores from applicants over a 4-year period. Interviews in 2021 and 2022 followed the traditional format with identical questions (set A). The 2023 interviews employed the novel format with revised questions (set B). The 2024 interviews used the revised questions (set B) but reverted to the traditional format. Applicants were classified as residing locally if they were part of the Auckland regional training programme.

Statistical analyses

Microsoft Excel was used to calculate summary statistics and create visual representations of the data. We compared performance between the two formats by examining differences in central tendency and variance. An independent samples t-test was used to compare group means, and an F-test was used to compare variances between groups (reported as variance ratios), supplemented by visual comparison of box and whisker plots. These statistical tests were performed using GraphPad statistical software. A two-tailed P value of less than 0.05 was considered statistically significant.
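For readers less familiar with these tests, the two statistics can be written out as follows. The text does not state which variant of the independent samples t-test was used; the equal-variance (pooled) form is shown here as an assumption. The symbols are introduced for illustration only: n, x̄ and s denote the size, mean and standard deviation of each group.

```latex
% Pooled-variance t statistic (equal variances assumed; an assumption here)
s_p^2 = \frac{(n_1 - 1)\,s_1^2 + (n_2 - 1)\,s_2^2}{n_1 + n_2 - 2}, \qquad
t = \frac{\bar{x}_1 - \bar{x}_2}{s_p \sqrt{\tfrac{1}{n_1} + \tfrac{1}{n_2}}}, \qquad
\mathrm{df} = n_1 + n_2 - 2

% Variance ratio for the F-test
F = \frac{s_1^2}{s_2^2}, \qquad
(\mathrm{df}_1,\ \mathrm{df}_2) = (n_1 - 1,\ n_2 - 1)
```

A variance ratio close to 1 indicates a similar spread of ratings in the two groups, which is the sense in which the F-test complements the comparison of means.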

Results

Interview ratings and performance

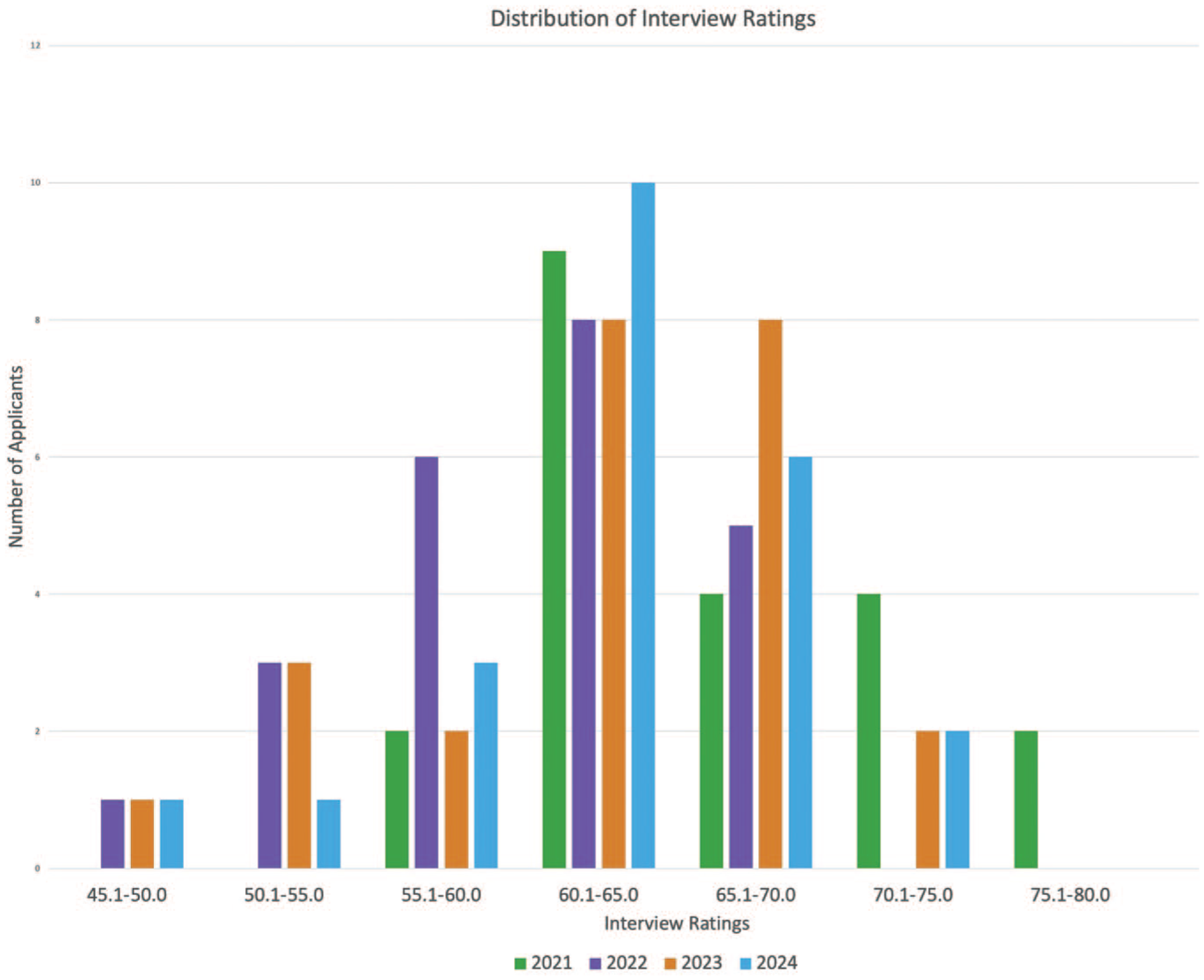

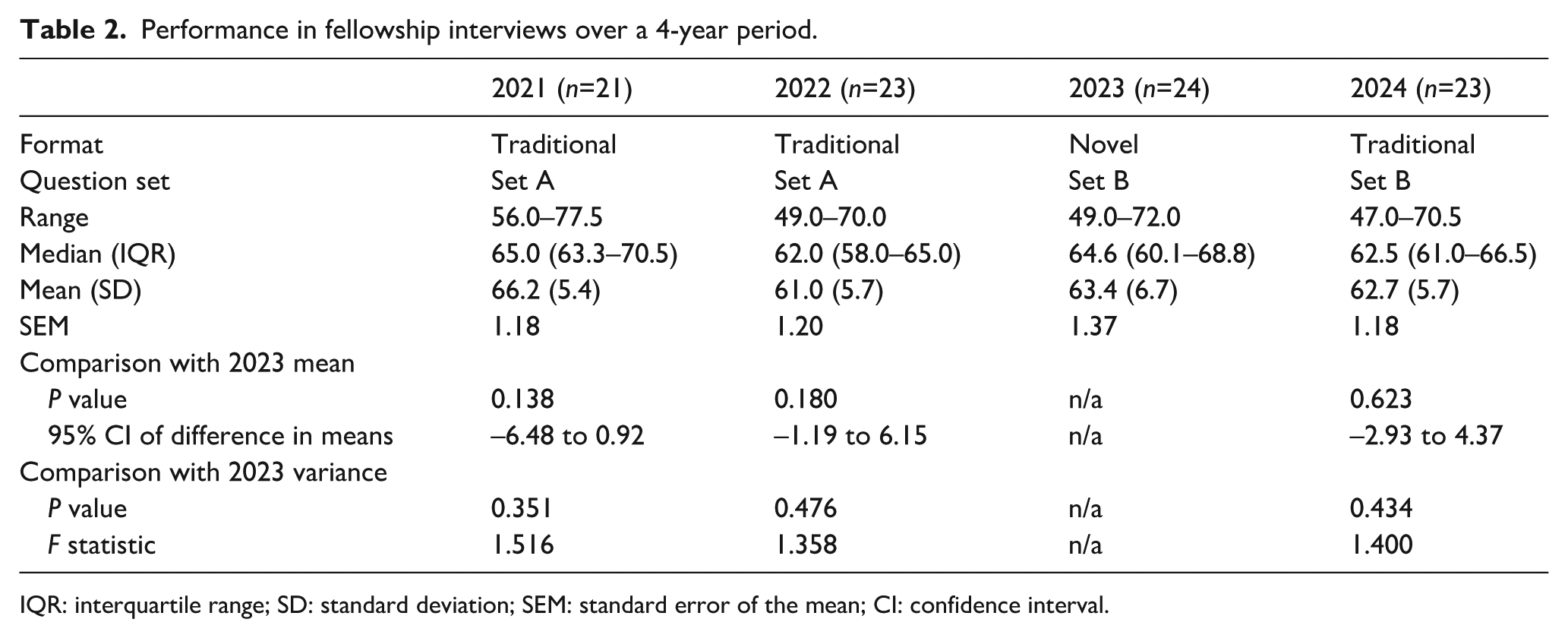

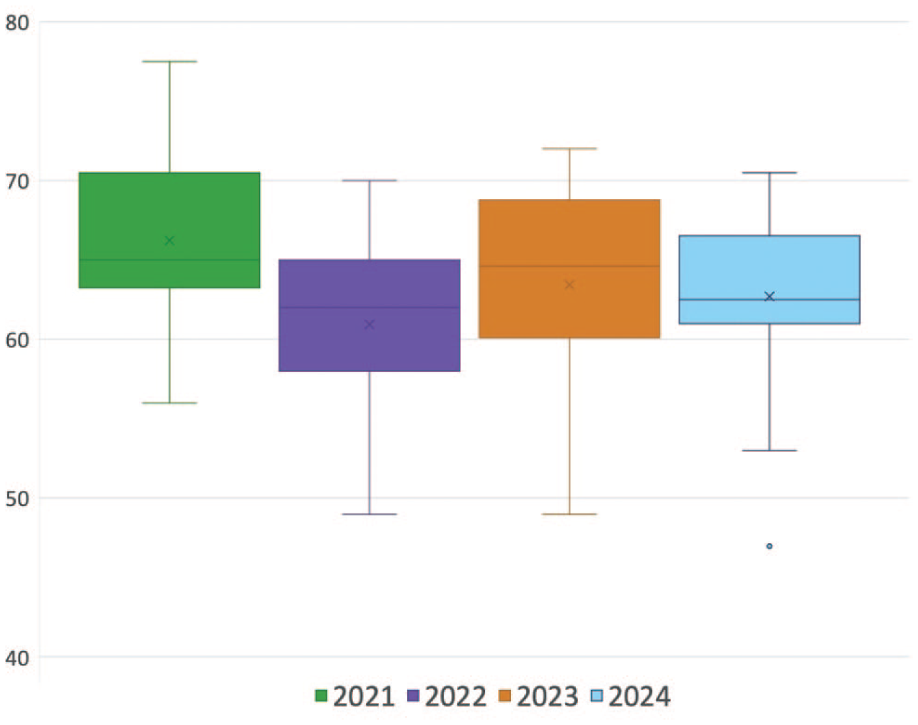

A total of 91 applicants were interviewed over the 4-year period. The distribution of interview scores was broadly normal across this timeframe (Figure 1). Overall ratings and performance are summarised in Table 2. There was no significant difference in ratings between the novel format used in 2023 and the traditional formats of 2021 and 2022, nor when reverting to the traditional format in 2024 using the same new questions (set B). The spread of ratings within each cohort is visually represented in Figure 2.

Figure 1. Distribution of interview scores across four cohorts.

Table 2. Performance in fellowship interviews over a 4-year period.

IQR: interquartile range; SD: standard deviation; SEM: standard error of the mean; CI: confidence interval.

Figure 2. Box and whisker plot showing variance of ratings within each interview cohort.

In the 2024 cohort, 13 applicants were local residents, while ten were from outside the region. There was no significant difference in mean ratings between the two groups (local 62.6 (standard deviation (SD) 5.3) vs. non-local 62.8 (SD 6.4), P=0.938, 95% confidence interval (CI) of the difference −5.25 to 4.87). This finding was similar to that in the 2023 cohort, when all applicants received topics prior to the interview, with 12 applicants in each group (local 64.1 (SD 7.1) vs. non-local 62.8 (SD 6.5), P=0.626, 95% CI −4.39 to 7.14). No significant difference in variance ratios was observed between these groups in 2024 (F-statistic = 0.700, P=0.554) or 2023 (F-statistic = 1.184, P=0.785).
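As a rough arithmetic check, the 2024 local versus non-local comparison can be approximated from the published summary statistics alone. The sketch below assumes an equal-variance (pooled) t-test, which the text does not specify, and uses a tabulated critical value rather than a statistics package. The function name and the returned fields are illustrative.

```python
import math


def pooled_t_summary(n1, mean1, sd1, n2, mean2, sd2, t_crit):
    """Pooled-variance comparison of two groups from summary statistics.

    t_crit is the two-sided critical t value for the chosen confidence
    level at df = n1 + n2 - 2, supplied by the caller from a t table.
    """
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    diff = mean1 - mean2
    return {
        "diff": diff,
        "df": df,
        "t": diff / se,
        "ci": (diff - t_crit * se, diff + t_crit * se),
        "variance_ratio": sd1**2 / sd2**2,
    }


# 2024 cohort, local vs non-local, using the rounded values reported above.
# t_crit = 2.080 is the tabulated two-sided 95% critical value for df = 21.
result_2024 = pooled_t_summary(13, 62.6, 5.3, 10, 62.8, 6.4, t_crit=2.080)
print(result_2024)
```

With these rounded inputs, the interval and variance ratio come out close to, but not exactly equal to, the reported values. Small discrepancies are expected because the published means and SDs are rounded to one decimal place.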

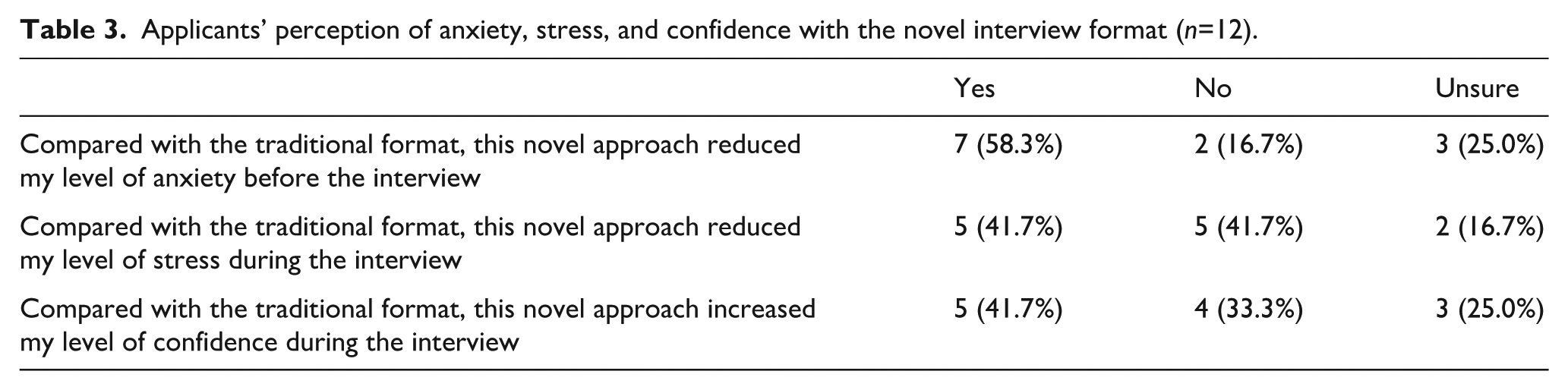

Survey results

Of the 24 applicants in the 2023 cohort, 12 (50.0%) provided responses to the survey. Among the respondents, 11 (91.7%) reported that receiving the list of topics prompted them to prepare for the interview. Most respondents had experienced traditional format interviews multiple times since completing medical school (five or more times: seven of 12 (58.3%), three to four times: two of 12 (16.7%), twice: two of 12 (16.7%), once: one of 12 (8.3%)). In contrast, ten respondents (83.3%) had never experienced the novel format before, while one had done so once and another twice. Table 3 outlines applicants’ perceptions of anxiety, stress, and confidence with the novel interview format.

Table 3. Applicants’ perception of anxiety, stress, and confidence with the novel interview format (n=12).

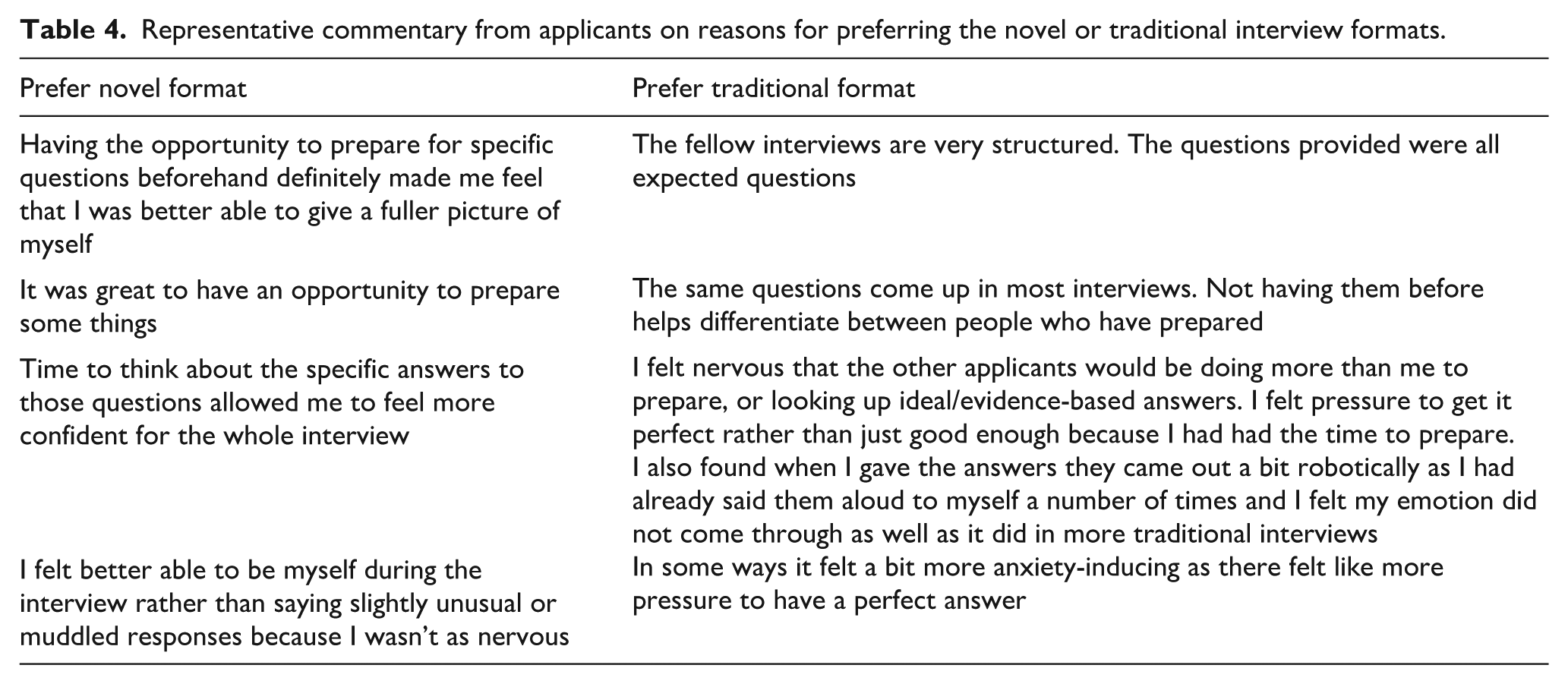

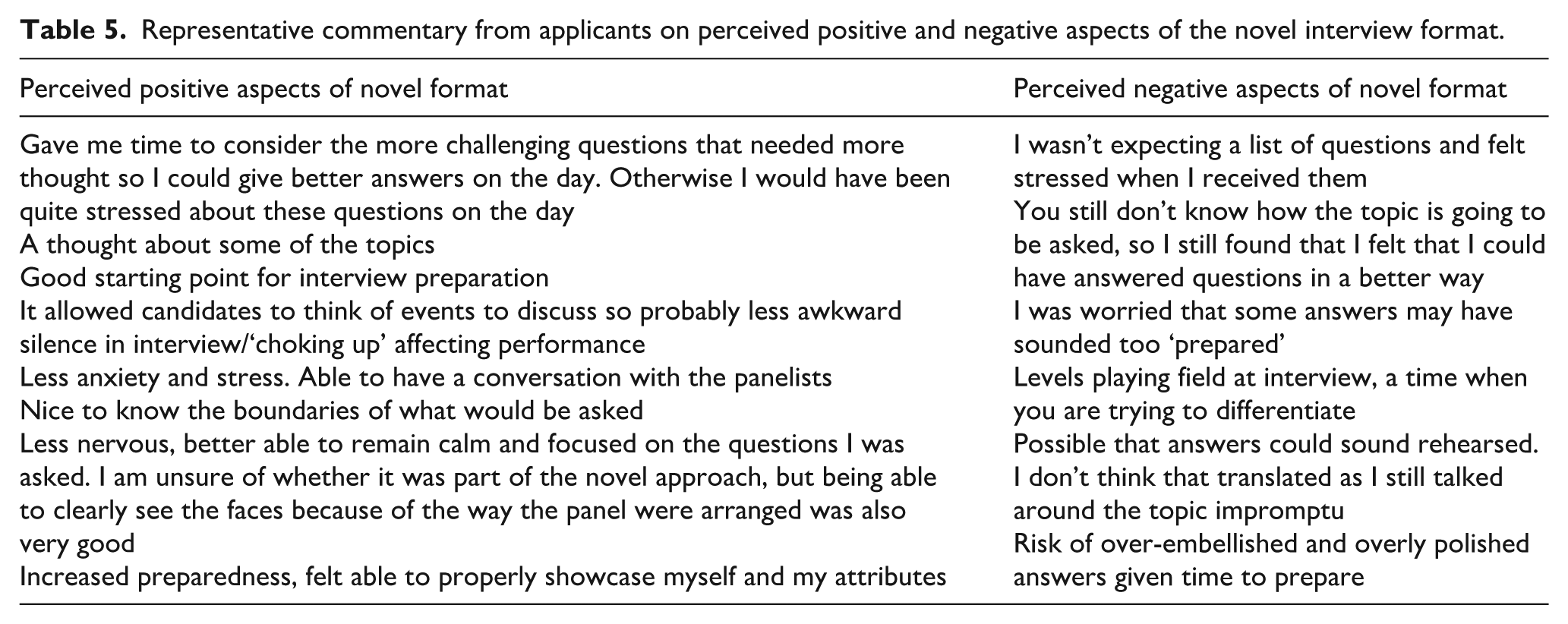

A small majority (seven of 12 or 58.3%) preferred the novel format, while a third (four of 12 or 33.3%) favoured the traditional format, and one respondent was unsure. Table 4 provides the reasons for their preferences. Table 5 outlines the positive and negative aspects of the novel format as perceived by applicants.

Table 4. Representative commentary from applicants on reasons for preferring the novel or traditional interview formats.

Table 5. Representative commentary from applicants on perceived positive and negative aspects of the novel interview format.

Three-quarters of respondents felt that using the novel format gave a positive perception of the department’s selection process, with the remaining respondents holding a neutral view. No respondent felt it gave a negative perception. Reasons given for the positive perception were that the novel format tacitly acknowledged the stress of a selection interview and attempted to address it; indicated what the department values; gave the impression that the department was friendly, less intimidating, wanted the best for applicants, and was not bound by traditional approaches; was helpful for those who had not considered interview preparation; and made the process transparent, well-organised, and compassionate.

Interviewer feedback

All four interviewers responded to the anonymous survey after the 2023 interview phase was completed. Two interviewers felt applicants displayed more confidence, while two thought there was no difference from previous years. All interviewers believed the novel format allowed applicants to show a genuine/authentic representation of themselves. None of the interviewers felt that applicants’ responses were ‘too rehearsed’.

Discussion

This study evaluated the impact of increasing transparency in anaesthesia fellowship selection interviews by providing applicants with interview topics in advance. Our findings suggest that this increased transparency did not significantly affect overall interview scores or the ability of interviewers to distinguish between strong and weak candidates. However, it did reduce self-reported applicant anxiety before the interview, while stress and confidence levels during the interview remained unchanged. While this was a small study and there will always be a range of views about changing an interview process, there is some indication that using this novel format may enhance the interview experience without compromising the ability to assess applicant performance effectively.

To our knowledge, our study is the first to explore the effects of increased transparency in medical selection interviews. In contrast to our findings, studies in non-medical settings have shown that prior knowledge of interview domains or questions can enhance performance. For example, one study involving 120 university students found that those with knowledge of the interview questions scored higher and perceived the process as fairer.12 Similarly, another study with 123 university students demonstrated that applicants informed of the domains being assessed performed significantly better.11 However, these studies involved interviews conducted for training or research purposes rather than high-stakes selection, which may explain the differing results. Another possible reason our study did not observe a performance difference may be our use of probing questions, which allowed interviewers to explore applicants’ responses more deeply. However, the discretionary use of probing questions may have led to inconsistent application across interviews.

Survey responses indicated that most applicants appreciated the opportunity to prepare for specific questions, as it allowed them to represent themselves better. However, about a third of respondents preferred the traditional approach, arguing that transparency might disadvantage those who typically prepare for interviews independently. In other words, those advantaged by the existing lack of transparency perceived a disadvantage when transparency was improved. Some also felt increased pressure to deliver polished responses due to the expectation of having prepared answers.

Interestingly, the prior distribution of topics did not seem to provide an advantage to local applicants in the following year’s traditional interviews using the same question set. This is based on the assumption that local applicants were more likely to have prior knowledge of topics while non-local applicants were unlikely to have the means to gain that knowledge. However, we did not directly ask applicants whether they had prior knowledge of the topics, which remains a limitation. In our setting, this offers some reassurance that local applicants do not gain an unfair advantage from potentially having prior knowledge of interview topics.

It is vital to distinguish between transparency and coaching. While transparency aims to increase fairness by providing information equally to all applicants, coaching involves active training to improve performance through techniques such as modelling, skills practice, and behavioural feedback.11,13 Previous studies have shown that comprehensive coaching is associated with higher interview scores.13 Our study did not address coaching explicitly. It is possible that coaching contributed to performance variations among applicants.

Feedback from interviewers was generally positive, noting no perceived disadvantage from the increased transparency. Probing questions were seen as effective in eliciting more detailed responses, and there was no concern that answers were overly rehearsed. However, the small sample of interviewers means these observations should be interpreted with caution.

This study has several limitations. It was conducted in a single department and our findings may not be generalisable to other settings. The sample size was relatively small, especially compared with similar studies in non-medical settings. Our control groups were previous interview cohorts, and there was no randomisation. There is a risk of responder bias in the applicant survey. Additionally, interviewers were not blinded to the intervention and the study authors were on the interview panel, both of which could introduce bias. Finally, we did not control for the potential influence of coaching, and cannot comment on its prevalence across all four cohorts.

Conclusion

In conclusion, increasing transparency in anaesthesia fellowship interviews by providing a list of discussion topics in advance created a more positive experience for applicants without impacting their overall ratings. Applicants reported reduced anxiety and improved preparedness, and they perceived the department more favourably. These findings suggest that increasing transparency in traditional interviews could enhance the selection process, making it more applicant-friendly while maintaining its effectiveness.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Disclosures

JF is the Fellowship Programme Director and NSS is a former Fellowship Programme Director.