Abstract

Online harms and the resultant safeguarding approaches are a key challenge for those working in the children’s workforce. However, safety narratives and a wish to prevent harm, rather than mitigate risk, have arguably created a safeguarding environment that is neither mindful of children’s rights nor in their best interests. When supporting adopted and looked after children, some specific challenges can make professionals even more cautious about supporting children’s use of digital technology. Empirical data reveal a professional environment in which, in the absence of training or policy direction, professionals are left to bring their own biases and beliefs into safeguarding judgements and, in the rush to protect, often forget the importance of working across stakeholders rather than trying to resolve issues independently.

Plain Language Summary

In this article, we explore the concerns of professionals around the support of adopted and looked after children, addressing fears of online harm. Drawing upon different perspectives (from legal cases, young people and professionals), we highlight a tension between the concerns of these professionals and the challenges that adopted and looked after children face in balancing the importance of online technologies to keep in touch with friendship groups and their offline world, while remaining safe. While clearly the safety of the children is paramount for the professionals, we highlight the potential harms that can arise from excessive concern and personal biases, which are unsurprising given the lack of national coordination and media narratives. We propose a stakeholder model to balance the concerns of those who have safeguarding responsibilities with the rights of the child.

Introduction

Teacher: They play these violent video games, then they’re violent in school.

Interviewer: No, there isn’t much evidence of that.

Teacher: Well, I’ve seen it.

Interviewer: There really isn’t – this is a causation policymakers and the media have been trying to show for over 40 years and there is no evidence of it existing.

Teacher: Well, that’s what I reckon. They shouldn’t be allowed to play them.

The above is a paraphrased conversation between the first author and a safeguarding lead at a school in 2020, which took place as part of a long-term research project in Cornwall (Phippen and Street, 2022). The professional was clear that they had observed young people who play violent video games acting out this violence in their school. When challenged on the concept of causation and the lack of evidence, their view was unshaken: it was what ‘they reckoned’.

This opening exchange is presented to highlight the challenges professionals face when tackling online harms. As discussed in detail in Phippen and Street (2022) and, indeed, with the practitioner, there is a propensity to bring opinions into these debates as though they were facts, even when presented with evidence to the contrary. As meta-analyses have shown (for example, Dill and Dill, 1998; Ferguson, 2015), there is a long history of attempts to demonstrate a causal link between video-game violence and real-world aggression, and these attempts have consistently failed. The literature that does explore video-game violence frequently highlights a lack of empirical data and methodological problems (for example, Ferguson and Colwell, 2020; Gunter and Daily, 2012), with many studies attempting to show causation using small samples and controlled environments that do not stand up to further scrutiny. Indeed, in our own empirical work (Phippen, 2016), young people who identify as gamers expressed the view that games such as sport simulations (for example, the FIFA series) and world building (for example, Minecraft) are more likely to result in aggressive responses.

Furthermore, if we apply critical thinking to this scenario, the argument fails: the Call of Duty franchise alone has sold well over 100 million copies.¹ If there were a clear causal link between video-game violence and real-world aggression, we would expect to see significant, if not epidemic, levels of violent crime. Isolating specific variables in complex social environments rarely draws out the causal relationships, or even the correlations, that researchers might be looking for.

It should be clear, we feel, that safeguarding judgements should be founded on evidence and consistent responses, not on what someone reckons. We use the above exchange to illustrate that, in many professional safeguarding decisions, feelings frequently fill the gap left by a lack of guidance, evidence or training.

In this article, we consider more specifically how the world of online harms manifests among professionals working with adopted and looked after children. While the focus is on a mix of primary and secondary analysis of practice around online harms, we are mindful that simply presenting evidence falls short if we also wish to inform practice. As such, this article presents research findings with consideration of best practice responses, informed by the perspectives of young people.

In considering these issues, we begin by broadly exploring the literature around online safety against concepts of risk and harm. In light of this context, we argue that alignment with risk, rather than prohibition, is more constructive and, when informed by youth-focused literature, more in tune with rights-based discourse. In making this argument we draw evidence from a number of sources that focus upon the concerns of professionals who work with adopted and looked after children, in contrast with more youth-focused discourses. This evidence comes initially from an analysis of recent legal cases, reflecting the concerns raised in the literature around prohibitive motivations rather than a more inclusive approach that aligns with young people’s rights. These points are developed by drawing upon primary data sources from a variety of our own research activities that have explored professionals’ concerns and their wish to ensure that those with whom they are working are safe. We further consider the unique issues faced by those working with adopted and looked after children, exploring their personal motivations and how knowledge is developed against a dearth of training or policy direction.

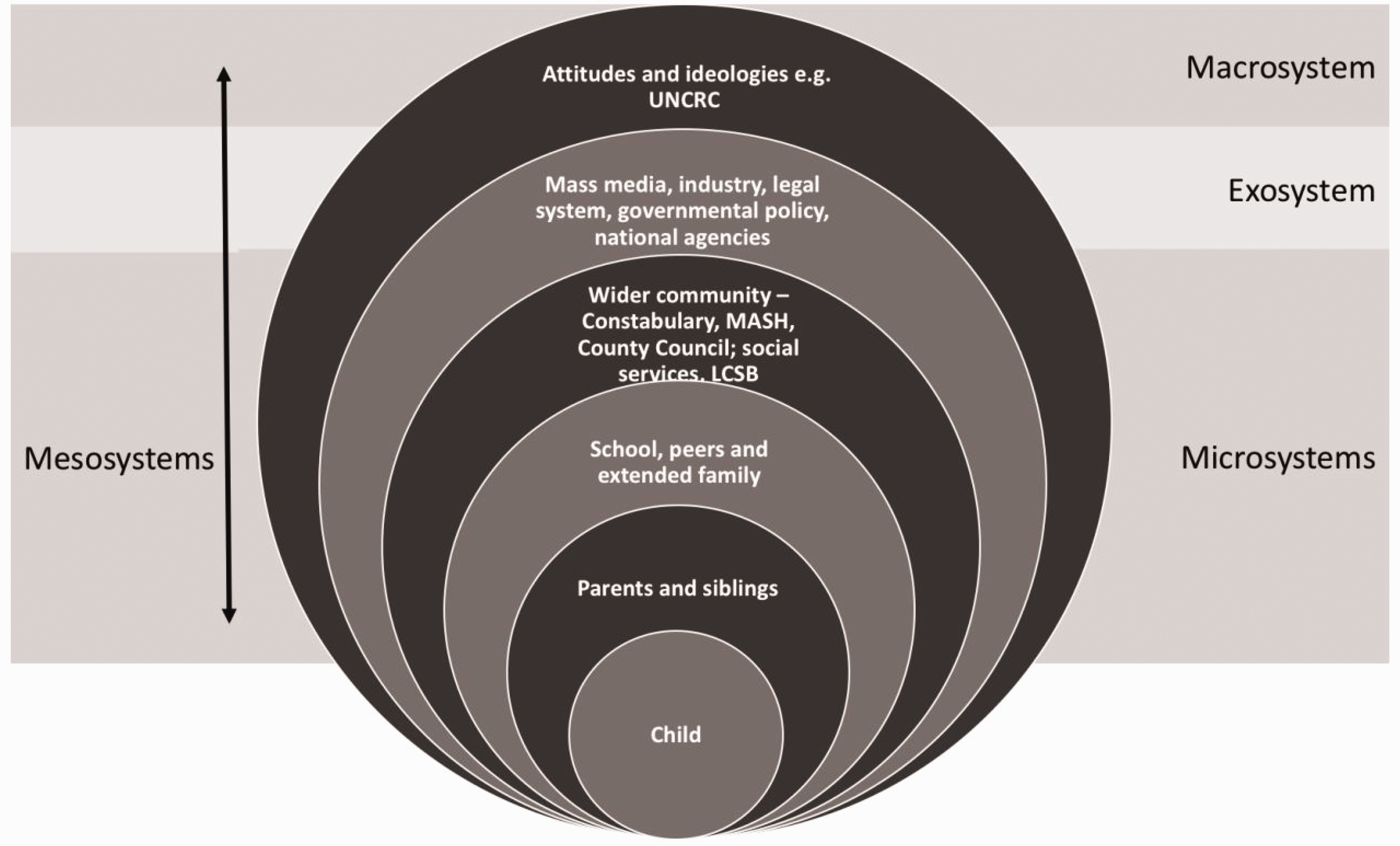

This is juxtaposed with the literature on young people’s voices and our own observations from such projects, which suggest that a more inclusive, rights-based approach will arguably address the potential for harm more effectively and support more constructive work with vulnerable young people. From this discussion, we present a stakeholder model that embraces the work of Bronfenbrenner (1979). Bronfenbrenner proposed an ecosystem of interconnections that facilitate the development of the child and highlighted the different roles of the various players in the system. This seminal work highlights the importance of interaction between the different elements of the ecosystem if the child’s development is to be supported most effectively. While different elements may have greater or lesser roles, crucially, Bronfenbrenner’s work clearly showed that no single independent entity ensures the positive development of a child; it is cooperative systems, and the interactions between them, that result in healthy development. Throughout this exploration of the risks, concerns and responses relating to adopted and looked after children and online harms, we propose that the multi-stakeholder approach and its co-dependencies are often forgotten in the rush to prevent harm, rather than mitigate risk.

Risk versus safety

The digital environment redefines the concept of vulnerability because it challenges conventional theories of place, identity and situation. This is something that Jewkes and Yar (2013) refer to as the problem of ‘who’ and the problem of ‘where’. In the digital world, traditional boundaries to vulnerability are removed: someone is not made ‘safe’ by moving away from the environment where the harm might occur, as there are no physical boundaries to this in the online world. By taking away geographical boundaries, providing far greater opportunity for anonymity, and presenting the potential abuser with access to potentially millions of victims, the online world challenges social norms around harm and risk.

Rapidly changing technological landscapes have blurred the boundaries between public and private spheres and altered the contours of risk in relation to self-identity (Bond, 2014). Yet as Stokes (2010: 321) observes:

… conceiving of the ‘web’ as a dimension of reality rather than a separate space frees us from the traditional false dichotomies found in social science and the false analogy of ‘virtual’ and ‘real’.

The concern for safety in ‘virtual’ space is a ‘real’ one for those experiencing abuse, victimisation and online crimes. Yet, arguably, the obsession with safety as a concept that ameliorates risk reflects a ‘Just say no’ culture that we have seen in other areas of public policy, for example around drugs awareness (Beck, 1998). That culture is now being challenged by arguably more effective ‘harm reduction’ approaches, which provide those who wish to engage in such practices with the education to make informed choices and to mitigate risk in a measured way (Ritter and Cameron, 2006).

However, we still see, as discussed below, a ‘Just say no’ culture permeating the discourse around online safety. ‘Surely the best way to reduce harm is to stop behaviours from occurring’ seems to be a common theme. For example, we have, many times, been asked by policymakers: ‘How do you stop teenagers sending intimate images?’, the rationale being that in a small number of cases such images may be passed on to others without consent, and the originator of the image might become a victim of abuse.

Risk-profiling, a central facet of modernity (Giddens, 1991), involves both human and non-human entities (Bond, 2014) and dominates both public discourse and private behaviours. The concept of risk is, according to Beck (1992), directly bound to reflexive modernisation, and we would argue that online risks are a prime example of his thesis on risk society. In late modernity, self-identity has become, according to Giddens (1991), a reflexively organised behaviour in which individuals make choices about lifestyle and life plans. Concerns over identity theft, online scams, image-based abuse, fear of being stalked and harassed online or downloading a virus influence how we use the internet, how we behave online, what we post and share and why we invest in software to protect ourselves and others in our care.

Beck’s (1992) and Giddens’s (1990; 1991) work on the risk society provides a useful theoretical framework for examining the contemporary world, its hazards and the social changes that shape and influence political comment, policy direction and the lives and futures of individuals. Both argue that the concept of risk has changed in late modern society from natural hazards to the unintended consequences of modernisation itself.

Anxieties and uncertainty related to the internet and associated developments in the form of mobile devices appear as consequences of technological advances. The concept of risk has dominated media discourses on mobile technologies and, while much of the reporting reflects determinist approaches (Bond, 2014), it is important to remember that the relationship between risk and vulnerability in virtual environments is actually a highly complex one. Risk is helpfully defined by Beck as:

A systematic way of dealing with hazards and insecurities induced and introduced by modernisation itself. Risks, as opposed to older dangers, are consequences which relate to the threatening force of modernization and to its globalisation of doubt. (Beck, 1992: 21)

Burgess, Wardman and Mythen further characterise risk as:

… an idea in its own right relatively independent of the hazard to which it relates. Risk is thus understood in relation to perception that is generated by social processes – such as representation and definition – as much as it is by actual experience of harm. (Burgess, Wardman and Mythen, 2018: 2)

Online risk and vulnerability affect everyone, and online risks, together with our responses to them, connect the individual and society. However, the increasingly interventionist role of the state, as discussed below, plays a problematic part in transforming social, legal and cultural constructions of everyday experiences of risk through what Foucault (1977) identifies as ‘disciplinary networks’ for spatial control.

Many of the discourses on risk and online protection have been dominated to date by child protection concerns and constructions of childhood in late modernity based on notions of innocence, naivety and dependence. Policy initiatives are based on protectionist ideals derived from adultist perspectives that aim to prevent harm through control of access, monitoring of behaviour and formal and often legal restrictions. Jenks (2005) develops Foucault’s ideas of spatial control to suggest that the exercise and manipulation of space is a primary example of adults controlling children’s worlds, and he suggests that the postmodern diffusion of authority has led not to a democracy but to an experience of powerlessness, which is not a potential source of identity but a perception of victimisation. However, as Lee (2001: 10) argues, such ‘adult’ authority, writ large in online safeguarding, has been called into question:

So far, then, we have seen that adult authority over children, the ability of adults to speak for children and to make decisions on their behalf, has been supported by the image of the standard adult. We have also briefly noted that there are good reasons to be suspicious of the degree of authority that adults have, and that, in the light of these suspicions, adult authority has become controversial. But beneath this controversy, widespread social changes have been taking place that are bringing those forgotten questions of whether adults match up to the image of the standard adult to the fore. In fact, these changes are eroding standard adulthood. Over the past few decades, changes in working lives and in intimate relationships have cast the stability and completeness of adults into doubt and made it difficult and, often, undesirable for adults to maintain such stability.

Analysing the attitudes of those who work with adopted and looked after children towards online safeguarding

In considering professionals’ and carers’ attitudes towards online safety, particularly in relation to adopted and looked after children, we move from exploring concepts of risk to how adults respond to online harms. We will draw upon both secondary and primary sources in this exploration.

It is worth noting that while there is a wealth of literature around online safeguarding (see review by Bond and Rawlings, 2018), there is far less focus in this literature on adopted and looked after children; the majority explores young people’s use of technology, rather than safeguarding concerns or professional responses. However, research that does explore adult concerns regarding looked after children (for example, Badillo-Urquiola, Page and Wisniewski, 2019; Forenza, Bermea and Rogers, 2018) generally finds that those with responsibilities for these children take the view that they are more at risk of harm and abuse than other young people. As a result, they generally adopt a more conservative, and prohibitive, approach to care, which sometimes results in restricted access or excessive monitoring (as we explore in the empirical discussions below).

However, studies that focus on young people’s voices see a far more positive attitude towards technology. For example, Denby, Gomez and Alford (2016) identified the importance of the device for staying connected with friends and peers and having contact with others when geographical distance might restrict physical interaction. Other studies, such as that of Hammond, Cooper and Jordan (2018), found similar positive attitudes towards the use of technology, which can also relate to interactions with professionals. What emerges from much of the literature presenting the youth voice (and which is reflected in our own findings) is that, similar to other young people, many looked after children view technology extremely positively, but they are more reliant upon it for connections and sustaining a ‘normal life’ than others. Rueda, Brown and Geiger (2019) spoke with young people in foster care who, like many others of their age, were making great use of technology in dating and forming relationships.

Recent Court of Protection judgements are a fruitful starting point for our discussion of the challenges of online harms with adopted and looked after children and the prohibitive professional mindsets that can follow. Because these judgements are publicly available, analysing them allows us to evaluate the challenges that arise in cases so extreme that judicial intervention is required. In each case, the care team is so concerned about the behaviour of the young person in their care that they feel it is in the young person’s best interests to have their access to the internet and social media withdrawn. In the present day, it could be argued that this constitutes a serious deprivation of liberty.

[2021] EWCOP 20

In this rather complex case, the young person, EOA, was 19 years old and had lived in foster care for five years. There were significant concerns around the conduct of EOA’s birth parents in relation to physical and emotional abuse, as well as extreme religious indoctrination and social isolation. In this case, which considered capacity on a number of issues, one argument from the care team was that there was a risk that EOA might be in contact with their abusive parents via social media and that they did not have capacity to appreciate the potential for risk in this context. A consultant psychiatrist also presented the view that EOA did not have capacity to engage with social media because they did not effectively demonstrate that they understood online risk. Therefore, the default position presented by professionals was that in order for EOA to remain safe, they should not have internet access.

In the judgement, it was clearly articulated that while EOA did not demonstrate a good understanding of the risk, this might be because they had not been given the opportunity to develop that understanding. Furthermore, the judgement made it clear that a distinction should be made between general social media usage and the use of social media to contact parents. This distinction is significant: it would seem that the initial position was that, because EOA might engage in one risky practice online, it was better simply to prevent them from going online altogether.

The final judgement was that EOA should be allowed internet access and be given the opportunity to learn about the potential risks of online contact with their family, rather than having access withdrawn.

[2021] EWCOP 28

In this case, the young person, DY, had also been in foster care for a number of years and was 18 years old at the time of the ruling. They had been diagnosed with chromosomal duplications and fetal alcohol spectrum disorder, and had a moderate learning difficulty. They also, in the view of a clinical psychiatrist, had developmental trauma disorder or complex post-traumatic stress disorder. Among other challenges to capacity, the care team had, again, argued that DY did not have capacity to use the internet or social media, citing as evidence that they had engaged in sexually graphic message exchanges at school and in their foster home, which the care team considered inappropriate.

However, in the ruling it was stated that a person must be assumed to have capacity unless it is established that s/he lacks capacity, as defined in section 1 of the Mental Capacity Act 2005 (UK Government, 2005). The judge, Knowles J, stated:

I have also reminded myself against imposing too high a test of capacity, as to do so would run the risk of discriminating against persons suffering from mental disability and thereby deprive them of autonomy.

In the final ruling, Knowles J concluded that DY did have capacity, and that a care plan to mitigate risk was a better outcome for them than prevention through deprivation of liberty.

Both this case and the one outlined previously arise from extreme concerns, and undoubtedly the respective care teams presented their cases with the best of intentions. However, what is clear is that the decision to argue lack of capacity did not come from an informed position; reading the court proceedings reveals the care teams’ bias and discomfort regarding the potential behaviours of those in their care. While many professional decisions never reach the Court of Protection, they can still have implications for those whom we wish to ‘protect’.

Conversations with looked after children

We have throughout our work adopted what Kellett and colleagues (2004: 330) refer to as a rights-based agenda, in that the ‘perspective of children as social actors places them as a socially excluded, minority group struggling to find a voice’. In the development of our model of online safeguarding, presented at the end of this article, we strongly reiterate the importance of rights frameworks as a fundamental aspect of any safeguarding decision, alongside the importance of interconnections among stakeholders in a child’s welfare. In building an evidence base around this model, we present below excerpts from our discussions with looked after young people to highlight the issues they face when using online technology, including its benefits, and the tension between their views and those of professionals.

In 2016, we gathered the views of looked after teenagers in the Suffolk region of the UK (Bond and Agnew, 2016), exploring their relationships with digital technology and how they felt adults might better support them. Young people were contacted through the local authority and took part both through online methods, such as virtual scrapbooks, and through more traditional hour-long focus groups that were recorded and transcribed. The qualitative data drawn from this study, which was subject to rigorous ethical scrutiny by the University of Suffolk, allowed us to consider online harms from the young people’s own perspective.

‘It allows me to keep in touch with friends regardless of where I’m placed’ (young woman, 15)

Unsurprisingly, the feedback from the young people matched the findings of other studies. While expressing some concerns around the potential for abuse and upset, they were clear that having access to digital technology and online services was a good thing. In some cases these young people had been in several placements in different locations, and their phones and digital services were a ‘lifeline for normality’; while they may not be able to physically meet up with friends, they could, at least, use digital technology to communicate and have relationships with their peers.

‘Adults tend to overreact, we know the risks and don’t need our phones checking’ (young man, 16)

The view of some was that, for looked after children, access to technology was more important than for young people with a stable, less transitory home life and essential for maintaining social capital. They acknowledged, of course, that digital technology could create risk, but most concerns were about arguments and fall-outs with peers, rather than the typically ‘extreme’ worries of adults with caring responsibilities. In relation to grooming concerns, most were of the view that they were aware of the risks and took steps to mitigate them, such as ensuring they only connected with friends or friends of friends, and that they were resilient to requests from strangers.

‘Someone I know is tracked by their foster family, that would do my head in’ (young man, 16)

However, one of the most significant negatives for these young people was the ‘paranoia’ of carers and professionals around their use of social media. Adults, we were told, tended to overreact not to concerns expressed by the young people themselves but to things they had ‘heard about’. As such, they anticipated that the young people would engage in harmful behaviours and would excessively monitor them or, in some serious cases, take their devices away, an issue reflected in the legal cases discussed above. The young people viewed this as placing them at greater risk, even though they had done nothing to justify the withdrawal of a device in the first place: without the device and its connection to support networks, they might fall into using other people’s phones or try to obtain enough money to access devices through legal or illegal means.

Professional perspectives

Drawing parallels once again with drugs policy, where academics and clinical professionals have increasingly called for policy and practice to be informed by evidence rather than hyperbole (Wood et al., 2010), we can see a great deal of practice and decision-making in online safeguarding conducted in the absence of evidence. The quote at the start of this article demonstrates that, in some cases, a professional would rather go with their ‘gut feeling’ than with evidence that contradicts their personal views. We would argue that a paucity of training and formal knowledge development around online safeguarding results in biases and opinions formed from instinct and from reactions to stories in the media.

We would also argue that there is a lack of national policy to guide care professionals on these issues. The recent UK government update to the Children Act 1989 guidance for looked after children (UK Government, 2021) makes just a single mention of social media:

3.216 The Care Planning, Placement and Case Review (England) Regulations 2010 (as amended) require that each looked after child’s placement plan must make clear who has the authority to take decisions in key areas of the child’s day-to-day life, including:

medical or dental treatment; education; leisure and home life; faith and religious observance; use of social media; and any other areas of decision-making considered relevant with respect to the particular child.

There is nothing about training or professional development around online issues. Therefore, we might argue that this document actually makes things worse – care workers are told that they are required to make decisions around looked after children’s use of social media, but there is no expectation for them to receive training on these issues.

In recent work with a local authority in South East England, we surveyed 156 care professionals in two geographic areas to understand their knowledge, their training experiences and their perceived needs around online safeguarding. Working in partnership with practitioners in the local authority, and at their request, we conducted both an online survey and discussion groups with the professionals. These professionals worked broadly within the children’s workforce; some specifically worked with adopted and looked after children, and most had some case work involving these groups. While the study is described in far more detail in Bond and Phippen (2022), a number of its findings are salient to our discussion in this article. Notably, the local authority struggled to get professionals to complete the survey, even though the survey gatekeeper was a colleague. We explored this in our follow-up discussions with a group of 15 professionals in the local authority. Key comments around the lack of response included:

Some did not see why this survey would be relevant to their role. Some had not had to support anyone regarding online safeguarding issues so did not see any need to do the survey. Some were so busy/overloaded they did not have time to do the survey, although they did take the time to respond to say they felt it was important. Some felt that as they had done some training, they did not need to respond.

We are sure there is nothing unique about these findings: in our experience, overworked care workers without time for personal and professional development are not unusual. However, the responses we did receive made clear that many of these professionals dealt with complex cases in supporting clients. These included:

engaging with strangers online, or wanting to make friends online; exchanging intimate images online with friends or strangers; people wishing to meet friends they had met online and, in some cases, doing so; providing education around online harms and differentiating between real friends and online friends; managing individuals’ online privacy; online connections moving to sexual relationships; general issues of cyberbullying and abuse; financial exploitation and coercion; making capacity assessments for individuals considered at risk of harm online.

However, perhaps the most interesting finding was the contrast between respondents’ confidence in their knowledge and their access to training. Just over 95% of respondents said that they had at least ‘some’ knowledge and understanding of online issues, and almost 60% said that they had ‘good’ or ‘excellent’ knowledge. Respondents were also generally at least ‘somewhat’ confident that they could support the people they worked with around issues of online harms, with 88% expressing this view.

When asked about training and professional development, only 41% of respondents said they had received any training around online safeguarding issues at all. Furthermore, when probed on the nature of that training, respondents listed topics such as:

cybersecurity; data protection handling; fire safety; IT training; Child Exploitation and Online Protection Unit (CEOP)² ambassadors training.

Of all the respondents who described their training, only two mentioned either discussion groups or case study analysis, both of which are very important when developing knowledge in this area (Bond and Dogaru, 2019). This raises the question: if knowledge is not developed from training, then where does it come from?

In the follow-up discussion group, many professionals were honest in saying that because they used these technologies themselves, they felt confident that they could support others. However, on further discussion, it was generally acknowledged that this was not a solid foundation of knowledge upon which to make safeguarding judgements.

We further followed up with professionals who saw working in adoption and foster care as their main role. They were drawn from the commissioning local authority and two local authorities in neighbouring locations. In total, we spoke with 15 professionals in small group discussions (comprising three to five professionals).

Our key conclusion from these discussions was that there is much concern among professionals around the use of social media by adopted and fostered children. We also asked whether these concerns arose from actual case work, from discussions among professionals or from what they had come across in the media. In most cases, the concerns did not relate to specific issues they had faced as professionals, although two disclosed that they had worked with looked after young people whose foster families had raised concerns about their use of social media: one related to contact with the birth family and one to the sharing of ‘inappropriate’ images. Furthermore, a number said that foster carers had raised concerns around screen time and ‘constant’ or excessive use of technology.

It was clear from these discussions that whether based on actual concerns raised, hypothetical worries arising from discussions with fellow professionals or personal opinion, there were some relevant factors that we might consider to be typical in our work across the children’s workforce, such as:

accessing inappropriate content, such as pornography, images of peers and violent/graphic content in games;

inappropriate contact/grooming: carers and professionals were not sure who young people were in contact with and were worried that they might be groomed by strangers;

excessive screen time/social media use/gaming: while no professionals had a clear quantification of the term ‘excessive’, there was some discussion of concerns, from carers and the professionals themselves, that the young people they work with were ‘constantly’ on their devices. When we explored this further, there was an acknowledgement that ‘constantly’ is probably an exaggeration, given time in education, doing sports, interacting with peers, and so on;

sending/sharing intimate images: unsurprisingly, some professionals raised concerns about young people taking intimate images and sharing them with partners or peers. When asked about their concerns around these behaviours, some talked about the risk of further distribution and of coercion/pressure to send images, while others were mainly concerned that the practice was illegal;

‘cyberbullying’: this term was used frequently as a ‘catch-all’ for all manner of child-on-child abuse or abuse by strangers in environments such as gaming. There was broad concern about exposure to abusive discourse, such as in multiplayer gaming.

However, the discussions also raised concerns specific to adoption and fostering, including:

adopted and fostered children having unrestricted contact with birth families via social media, bypassing contact agreements;

foster carers and adoptive parents risking exposure of the child by posting on social media themselves;

friends of adopted and fostered children posting their images and details, which might result in identification and access by birth families;

birth parents posting information online about, for example, custody cases.

As noted above, it was clear that many of the professionals’ concerns were not a direct result of interactions with young people but were inherited from others.

When asked about training specific to online safeguarding, a third of the professionals (five out of 15) said that they had received formal training. In all cases, this was a typical ‘two-hour’ presentation by an external speaker, with little opportunity for discussion or consideration of case studies. For the majority, therefore, knowledge was developed from a mix of personal experience with digital technology, discussions with professional peers and things they had read in the press or online. There was general agreement that this was not ideal but that they were responding to genuine concern for young people. Some professionals were concerned that children and young people continued to engage in illegal activity even after being told it was illegal, holding the view that telling young people something is illegal should be sufficient for them to stop.

We also discussed their frustrations about the lack of national co-ordination around support for those working with adopted and looked after children. As a result, those with caring responsibilities for the child were left to make decisions in a policy vacuum. While professionals knew that something needed to be done, they were not sure what that something might look like. In one example, a professional talked about the risk of engaging with a young person on sensitive online issues without the support of national policy or, at least, a senior manager. In this case, the professional was concerned that a young person aged 16 had asked about accessing pornography and how they might go about this. The professional’s view was that the young person would be doing something illegal, and that giving any advice other than ‘Don’t look at it, it’s illegal’ would place them at great professional risk. We discussed that, in fact, wishing to access pornography is very typical behaviour for a 16-year-old (for example, see Horvath et al., 2013; Office of the Children’s Commissioner, 2023). We were reminded of Knowles J’s comments, cited above, about setting a higher bar for the vulnerable than for others, and we pointed out to the professional that a 16-year-old accessing pornography is not breaking the law. Furthermore, giving practical, risk-focused advice about staying on mainstream platforms with legal content was better than the alternative: a young person deciding to access sexual content using dark web technologies to avoid detection, which might result in them accessing illegal content, such as extreme pornography or images of child abuse.

There was also some discussion of how technology might be used to monitor access or to ensure that young people could not access ‘inappropriate’ material. Participants were aware of professionals who created fake social media profiles to connect with young people in their care so that they could ‘monitor’ their behaviour online, although none said that they did this themselves.

There was certainly a prevailing view that some form of monitoring is best to ensure that young people are not breaching contact agreements or engaging in risky online behaviour. Some talked of scenarios where professionals would ‘spot check’ devices or ask for passwords or access codes so that devices could be checked whenever carers or professionals wished. While some felt uncomfortable with these practices, most professionals felt that the risks are so high that such practices are sometimes justified to reassure themselves that young people are ‘safe’. However, we should reiterate that professionals who had worked with young people in relation to specific online harms were very much in the minority. While all professionals shared these concerns, most had only worked with young people whose relationships with technology were positive.

When we discussed the literature and our own experiences with looked after young people and others, who describe both the positive role (within reason) that technology plays in their lives and their frustration with adults ‘freaking out’ about potential risks, the professionals could see this perspective. However, there was also a view that young people do not understand the risks, which is why adults have to intervene in ways that, arguably, impact upon their human rights. When challenged on whether these concerns were sufficiently valid to encroach on a young person’s privacy, the general view was that such intervention was necessary. However, with further discussion, some professionals did acknowledge that monitoring might be excessive and might not be conducive to building effective and trusting relationships with young people.

Implications for practice and conclusions

We would argue that understanding is lacking across the stakeholder space because the stakeholder space itself is poorly understood (Bond and Phippen, 2019). Without sufficient national guidance, it is little surprise that the wider stakeholder space remains so poorly informed. In our own discussions, we have observed increasingly frustrated professionals who want to do something but need more formal guidance and permission to do so.

In recent years, we have defined a stakeholder model for online child protection, which adapts Bronfenbrenner’s (1979) seminal ecological framework of child development. Perhaps the most significant aspect of Bronfenbrenner’s model was its emphasis on ‘mesosystems’ – the interactions between the different players in child development. Arguably, this is something we have lost sight of in the online safeguarding world. By adapting this ecological model for online safety, we can see both the breadth of stakeholders’ responsibilities for safeguarding and how the stakeholders interact (see Figure 1).

Figure 1. A stakeholder model for online safeguarding.

The value of the model is that it shows the many different stakeholders involved in online safeguarding, the importance of the interactions (mesosystems) between them and the distance of each stakeholder from the child they wish to safeguard. In our discussions with professionals, many said that they felt they needed to ‘solve’ the safeguarding issue on their own, rather than adopting a more holistic view of working with others to support the young person in their online risk-taking and decision-making.

There are many microsystems around the child, with which the child directly interacts, before we even approach the role of technology in this safeguarding model. From the broad policy perspective, the focus of solution provision, and, it would seem, of social responsibility, lies entirely within the exosystem. While we direct our expectations of responsibility at this layer, we lose focus on the roles in the microsystem and on the fact that the macrosystem encompassing all of this should be the rights of the child. For adopted and looked after children, the microsystems will extend to include care teams, adoptive parents and foster carers, who may sit closer to the child than comparable stakeholders would for a young person in a more typical family situation. However, the microsystems still interact, and it is important that professionals are mindful of the broad stakeholder space, rather than feeling they need to provide all of the solutions themselves.

Within this model, we have defined the United Nations (UN) Convention on the Rights of the Child (CRC) (1989) as the fundamental macrosystem that envelops the entire stakeholder space. It should inform any practitioner’s decision-making or care choices, so that they ask themselves: is this decision in the best interests of the child, and does it negatively impact upon their rights?

The recent UN general comment on the CRC in digital environments (Committee on the Rights of the Child, 2021) attempts to focus thinking on the provision of platforms and the responsibilities of nation states in supporting young people in digital environments. Its digital focus is to be applauded, and it reiterates an inclusive, supportive approach in which young people are entitled to, for example, freedom of expression, access to education, privacy and identity, which states should be mindful of when implementing policy solutions around online harms. It is, therefore, disappointing that the UK Government continues to believe in a model that ignores the rights of the child and adopts a prohibitive mindset, intended more to challenge platforms than to support children (UK Government, 2022).

Children’s rights (and cognisance of them) are arguably still the weakest aspect of online child safeguarding. They are sometimes viewed as a barrier to solutions, rather than as the foundation of policy development or practice innovation. There can also be a view that, when it comes to safeguarding, we have to accept an erosion of children’s rights. We should be clear that this is not the view of the young people we have spoken with.

In terms of best practice, this will involve a mixture of education, support and negotiation with the young person. Rather than telling young people that something is being imposed upon them ‘for their own good’, providing a care route that informs them of potential risks and offers risk mitigation strategies is far more in line with what young people want than prohibitive approaches are.

Professionals need to bear in mind that the majority of young people’s experiences with digital technology are positive. As looked after children have told us, they arguably rely more heavily upon technology to stay connected with friends and peers, and see it as a touchstone for ‘normality’ when their lives might be somewhat migratory and disrupted. The last thing they need is to have their device taken from them as a result of professional concern. Far better, they tell us, that we provide them with support and help when they need guidance. They will raise concerns if they know they will be listened to.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research/authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.