Abstract

Music’s power to trigger memories has rarely been tested; in particular, it is not clear what mechanisms govern memory retrieval elicited by musical cues. Previous research has suggested that memory retrieval is underpinned by two mechanisms: (1) distinctiveness: the probability that a cue will retrieve a memory declines with the number of stimuli previously encoded with that same cue; and (2) incongruence: a cue encoded with emotionally incongruent targets triggers more memories of the stimuli associated with it than a cue encoded with emotionally congruent stimuli. Our participants completed an implicit encoding phase in which they were presented with auditory-visual pairs of stimuli (pieces of music and images of facial expressions). In the retrieval phase, participants were asked to remember encoded stimuli triggered by music. As expected, musical cues encoded with emotionally incongruent facial expressions triggered more memories than cues encoded with congruent facial expressions. Contrary to our prediction and to previous findings, music from distinctive pairs of stimuli triggered fewer memories than music from pairs displayed multiple times in the encoding phase. Our findings suggest that the manipulation of stimuli at encoding is crucial when using musical cues to trigger memories.

The remarkable power of music to trigger memories is intuitively familiar. Even when one randomly presents excerpts from popular songs, one can expect that, on average, three excerpts out of 10 will elicit a personal memory in healthy individuals (Janata et al., 2007). Popular songs selected on the basis of participants’ preferences would logically trigger a higher percentage of memories; 72% in the general population according to the recent study by Michels-Ratliff and Ennis (2016). Evidence from five case studies suggests that patients with acquired brain injury report more autobiographical memories in response to music than verbal prompts (Baird & Samson, 2013). Several studies have investigated the capacity of music to enhance memories in individuals suffering from dementia, which is believed to be governed not by familiarity, but by arousal and mood, according to the arousal-mood hypothesis (El Haj et al., 2012; Foster & Valentine, 2001; Irish et al., 2006). For instance, listening to classical orchestral music—Vivaldi’s

Studies of music-evoked memories have also addressed the content of such memories (Cuddy et al., 2017; El Haj et al., 2015). For instance, it has been suggested that music elicits more self-defining memories (concerning oneself; Singer et al., 2013) than personal semantic memories and autobiographical episodes, which, according to Conway and Pleydell-Pearce (2000), refer to general knowledge about oneself and to specific events, respectively (El Haj et al., 2015). Earlier studies have also shown that music triggers more memories from remote and medium-remote life eras than cafeteria noise does (Foster & Valentine, 1998). In their study, Irish et al. (2006) used Kopelman, Wilson, and Baddeley’s (1991) semi-structured interview, which captures autobiographical memories over the course of three life periods (childhood, early adult life, and recent life). Moreover, music-evoked memories were rated by participants as highly vivid and involuntary (El Haj et al., 2012; Jakubowski & Ghosh, 2021). For instance, in the study by Belfi et al. (2016), music evoked more vivid autobiographical memories than facial expressions did.

Music as a cue to memory has mostly been studied with diary methods wherein participants are asked to record their memories in response to a given musical cue (e.g., Ford, 2011; Jakubowski & Ghosh, 2021). For instance, Jakubowski and Ghosh (2021) asked their participants to record details of music-evoked memories in a diary. Despite their value, diary methods do not control the encoding memory phase; it is not clear what mechanisms govern the memory triggered by musical cues.

Why does music have the astounding power to evoke memories? As mentioned earlier, music can sound familiar and be associated with emotional reactions. However, other factors evidenced in the literature can influence music-induced memories. It has been suggested that stimuli perceived as different from the context trigger more memories than “ordinary” stimuli (Berntsen et al., 2013). It has also been suggested that stimuli that are emotionally incongruent with the situation trigger more memories than congruent stimuli. Guided by our research question on how the manipulation of certain variables in the encoding phase affects memories cued by music, we will present the

Distinctiveness

It has been suggested that music’s capacity to trigger episodic memories may be facilitated by the frequency with which we listen to it (Jakubowski & Ghosh, 2021; Janssen et al., 2007). However, several studies have demonstrated the opposite effect: auditory stimuli presented multiple times triggered fewer memories than auditory stimuli presented just once (e.g., Berntsen et al., 2013; Mieth et al., 2015; Staugaard & Berntsen, 2014). The authors explain that effect by means of the principle proposed by Watkins and Watkins (1975), according to which the probability that a cue will retrieve a memory declines with the number of stimuli previously encoded with that cue.

The distinctiveness effect on memory has been presented in a series of studies with auditory stimuli (Berntsen et al., 2013). It has been suggested that distinctive auditory cues (i.e., cues heard once in a particular context) increase the depth of memory recording. Conversely, non-distinctive stimuli (heard many times and thus associated with different events) lessen it. To illustrate this effect, one might remember a scene from the movie

We aimed to investigate whether the distinctiveness effect would appear in response to musical cues; in other words, would distinctive musical excerpts lead to more productive memory retrieval than non-distinctive musical excerpts? This knowledge will contribute to an understanding of whether the findings of previous research (Berntsen et al., 2013; Staugaard & Berntsen, 2014) are generalisable to types of stimuli other than neutral sounds. Evidence that the distinctiveness effect determines memories triggered by music may also be used in developing psychological interventions aiming to enhance memories in different populations (e.g., people with anxiety and depression or with Alzheimer’s disease).

Congruence

When reviewing studies on congruence and memory, different research areas can be distinguished: (1) acquisition of vocabulary, when stimuli (music) match the words (Fritz et al., 2019; Koelsch et al., 2004; Steinbeis & Koelsch, 2008); (2) congruence related to the detection of a mismatch between a basic necessity (food) and its description (Mieth et al., 2015); and (3) congruence in the social area (communication), when stimuli (verbal, audio) match a particular behavior or emotion (for a meta-analysis, see Stangor & McMillan, 1992).

In the first area,

In the two other areas mentioned earlier, the contrary effect has been suggested: contrasting or incongruent events are better remembered than congruent ones. In the earliest studies with non-musical stimuli, it was argued that certain behaviors perceived as unexpected or incongruent with a general impression about a person are better remembered than behaviors perceived as unsurprising or congruent (Stangor & McMillan, 1992). According to Hastie and Kumar (1979), the extent to which behaviors are congruent with the impression of an individual determines “informativeness” and impacts the depth of cue encoding. A similar effect revealed in pioneering studies—the

One might also wonder whether memories triggered by music are governed by the (in)congruence of the cue associated with a stimulus (e.g., joyful music associated with a sad facial expression versus joyful music presented with a happy facial expression). We expect the incongruence to facilitate recall because the music presented is heard along with socially important information, these being facial expressions. Incongruent pairings will be inconsistent with the participants’ expectations and therefore facial expressions from these will be better retrieved than facial expressions from the congruent pairings. To our knowledge, no past studies have attempted to answer this question. The rationale for this question is to grasp the role of incongruence between music and other stimuli within a social situation.

Voluntary and involuntary retrieval

Recently, a growing body of research has attempted to differentiate between episodic memories triggered spontaneously (involuntary memories) and those triggered deliberately (voluntary memories; e.g., Ball & Little, 2006; Schlagman & Kvavilashvili, 2008). Involuntary memories have been defined as memories that come to mind without any retrieval attempt, whereas voluntary memories require such an attempt. Voluntary memories are described as “directive” and “social” and respond to a certain aim that is present “now,” while their involuntary counterparts refer to distant life events and do not have such a specific function (Rasmussen et al., 2014). That difference in function might be explained by the assumption that voluntary memories are underpinned by a top-down cognitive process that favors memories relevant to the life story and/or current goals. In turn, involuntary memories are characterized by reduced executive control and are thus less goal-oriented. Moreover, involuntary memories are evoked faster than their voluntary counterparts (e.g., Staugaard & Berntsen, 2014); that finding is in line with the notion of an effortless retrieval process in the case of involuntary memories. Most studies have investigated them through diary methods wherein participants were asked to write down memories that crossed their mind spontaneously. However, it has been established that the most robust method is to have participants perform an attention-demanding task and record involuntary memories (e.g., Giambra, 1989; Smallwood & Schooler, 2006). For instance, Berntsen et al. (2013) asked their participants to pay attention to a bright yellow star and to press

To gain a more comprehensive understanding of episodic memories triggered by musical cues, we replicated Berntsen et al.’s (2013) memory paradigm and extended it to musical cues. This paradigm addresses the limits of diary methods by allowing two types of memory retrieval to be captured while controlling the information presented in the encoding phase. In our experiment, the neutral auditory stimuli (e.g., the sound of a bell, a chainsaw, or an engine) were replaced by excerpts of film music (Eerola & Vuoskoski, 2011). The visual stimuli (IAPS; Lang et al., 2008) were replaced by images of facial expressions, as these are among the most common stimuli encountered by participants (face-to-face interaction).

First, we postulated that distinctive musical cues would increase the frequency of memories of previously encoded images in comparison to repeated stimuli. Second, in accordance with the incongruence effect, we assumed that cues from incongruent pairs (e.g., sad music paired with a happy facial expression) would trigger more memories than cues from congruent pairs (e.g., sad music paired with a sad facial expression). Finally, we expected that our participants would recall more memories in the involuntary group than in the voluntary group. We also expected shorter retrieval times for involuntary than for voluntary memories.

Method

Participants

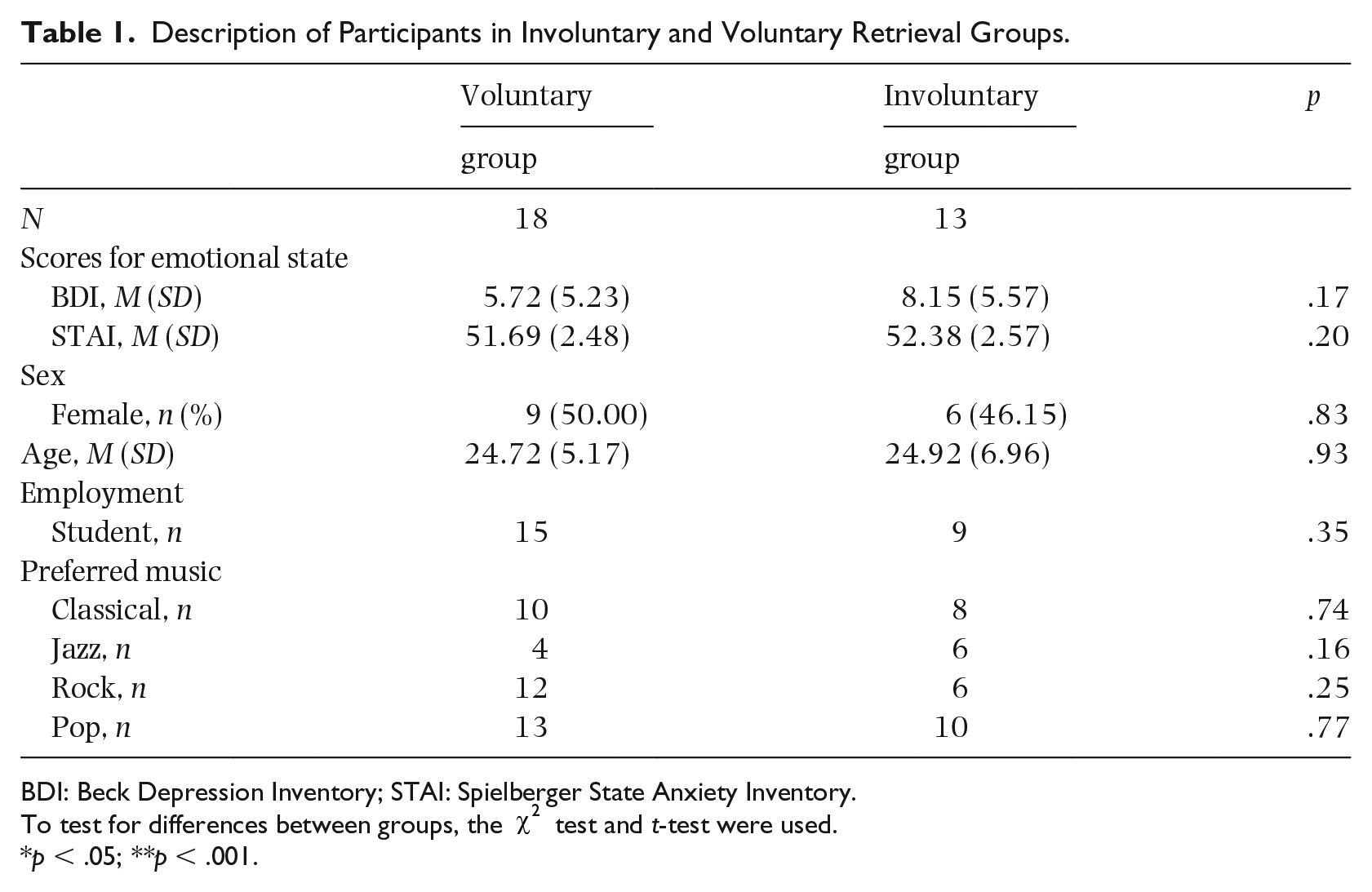

Thirty-one fluent English-speaking participants (

Description of Participants in Involuntary and Voluntary Retrieval Groups.

BDI: Beck Depression Inventory; STAI: Spielberger State Anxiety Inventory.

To test for differences between groups, the

The sample size was determined with a power analysis run with G*Power 3.1.9.2 (2014; Universität Kiel, Kiel, Germany; cf. Faul et al., 2007) in order to obtain a 90% chance of detecting an increase in the primary outcome (distinctiveness effect), with an alpha level of 5%. The effect size from the previous study was used for the power analysis (partial eta-squared

The study was approved by the ethics committee of the Department of Psychology of the University of Geneva. All participants signed a consent form and were treated in accordance with ethical principles guiding participation and personal data protection.

Materials

The memory task was developed with Inquisit 4.0.8.0 (2014; Millisecond Software, Seattle, WA). Thirty-eight musical excerpts, validated for their emotional valence and arousal, were obtained from Eerola and Vuoskoski’s (2011) data set. We chose film music because it is composed to accompany scenes and establish atmosphere and, according to Eerola and Vuoskoski (2011), is an ecologically valid material. Images of facial expressions were selected from the extended Cohn-Kanade data set (Lucey et al., 2010). The data set provides images of facial expressions coded for facial action units and emotion labels validated with respect to the Facial Action Coding System Investigator’s Guide (Ekman, Friesen, & Hager, 2002). Both the musical excerpts and the facial expressions were either happy or sad.

In the encoding phase, 32 trials were created. Our program picked happy and sad musical excerpts in random order and paired them with happy and sad images of facial expressions, which yielded emotionally congruent and incongruent pairs of stimuli. In addition, eight happy and eight sad musical excerpts were distinctive because participants heard them only once. In the retrieval phase, 36 musical excerpts were presented: 18 familiar ones, already heard in the encoding phase, and 18 unfamiliar ones, selected from the same categories of the stimulus set. Images of facial expressions were presented on a 19-inch (48 cm) LCD computer monitor and took up 50% of the screen.
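The construction of the encoding list can be illustrated with a short sketch. This is not the Inquisit script used in the study; the function name, identifiers, and seed are hypothetical. In particular, the text does not state how many excerpts were repeated. The sketch assumes one repeated excerpt per emotion, each played eight times, which is one arrangement consistent with 32 trials and 18 familiar excerpts.

```python
import random

EMOTIONS = ("happy", "sad")


def build_encoding_trials(seed=0, n_distinctive_per_emotion=8, n_repeats=8):
    """Build a shuffled list of hypothetical encoding trials.

    ASSUMPTION: one repeated (non-distinctive) excerpt per emotion,
    played n_repeats times. The paper does not specify this.
    """
    rng = random.Random(seed)
    trials = []
    for emotion in EMOTIONS:
        # Distinctive excerpts: each one is heard exactly once.
        for i in range(n_distinctive_per_emotion):
            trials.append({
                "music_id": f"{emotion}_distinctive_{i}",
                "music_emotion": emotion,
                "distinctive": True,
            })
        # Non-distinctive excerpt: the same excerpt on several trials.
        for _ in range(n_repeats):
            trials.append({
                "music_id": f"{emotion}_repeated",
                "music_emotion": emotion,
                "distinctive": False,
            })
    for trial in trials:
        # Pair each excerpt with a randomly chosen happy or sad face.
        trial["face_emotion"] = rng.choice(EMOTIONS)
        trial["congruent"] = trial["face_emotion"] == trial["music_emotion"]
    rng.shuffle(trials)
    return trials


if __name__ == "__main__":
    for trial in build_encoding_trials(seed=1)[:5]:
        print(trial)
```

Because faces are assigned at random in this sketch, the numbers of congruent and incongruent pairs are not forced to be equal; a balanced design would need an explicit constraint.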

Procedure

The procedure is based on the above-described memory paradigm (Berntsen et al., 2013; Staugaard & Berntsen, 2014). The BDI, the STAI, and demographics were measured at the beginning of the experiment.

Implicit encoding phase

Participants were presented with 32 auditory-visual pairs. Each trial was displayed for 4 s, with each facial expression presented centrally on the computer screen; the music was played through headphones. Participants were instructed to pay attention to both visual (facial expressions) and auditory (music) stimuli.

Retrieval phase

The retrieval phase was completed in either a voluntary or an involuntary condition. Thirty-six musical excerpts were presented: 18 familiar ones (already heard in the encoding phase) and 18 unfamiliar ones, selected from the same categories of the stimulus set. Music was played for the same duration as in the encoding phase.

In the

Dependent variable

The dependent variable was the self-reported memory of the initial stimuli (images of encoded facial expressions triggered by musical cues). In other words, we operationalized the dependent variable as the number of instances where participants thought that they remembered an image of a facial expression being paired with a given music excerpt. This operationalization differs from that of Berntsen et al. (2013), wherein participants’ responses were scored as either correct or incorrect. We made this choice because false memories were not of interest to us and we believed that, in non-clinical individuals, their potential presence would not influence the results.

Statistical analysis

To assess the impact of the type of emotion and type of facial expression on memory, we entered observations at the stimulus level in a multilevel logistic regression, with a random intercept at the individual level. This type of mixed model assesses nested structures (e.g., multiple stimuli for a single participant), thereby providing accurate parameter estimates with acceptable type I error rates (Boisgontier & Cheval, 2016). Mixed-effects models do not require an equal number of responses from all participants, which means that participants with missing observations need not be excluded (Raudenbush & Bryk, 2002). The independent variables included were (1) emotion of music (happy vs. sad), (2) congruence of music and image (congruent vs. incongruent), and (3) distinctiveness of music (distinctive vs. non-distinctive). Analyses were done using
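As an illustrative sketch, the random-intercept logistic model described above can be written out explicitly. The notation is an assumption introduced here, not taken from the original analysis: $Y_{ij}$ equals 1 when participant $j$ reports a memory for stimulus $i$, each predictor is a 0/1 indicator, and $u_j$ is the participant-level random intercept.

```latex
\operatorname{logit}\,\Pr(Y_{ij} = 1)
  = \beta_0
  + \beta_1\,\mathrm{Emotion}_{ij}
  + \beta_2\,\mathrm{Congruence}_{ij}
  + \beta_3\,\mathrm{Distinctiveness}_{ij}
  + u_j,
\qquad
u_j \sim \mathcal{N}(0, \sigma_u^2)
```

Under this formulation, $\exp(\beta_k)$ is the odds ratio associated with predictor $k$, holding the other predictors and the participant constant. Any further terms would enter the linear predictor in the same way.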

Results

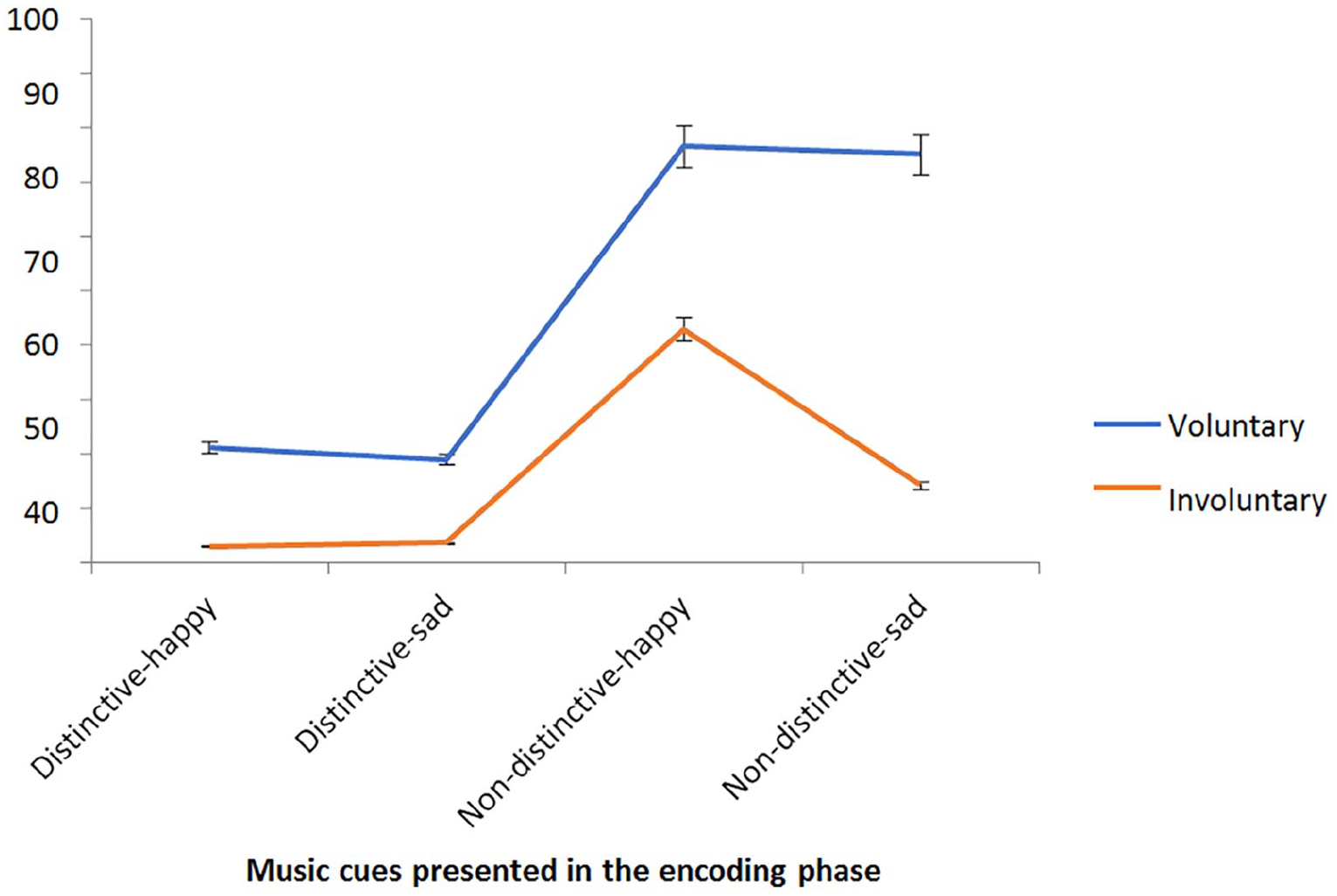

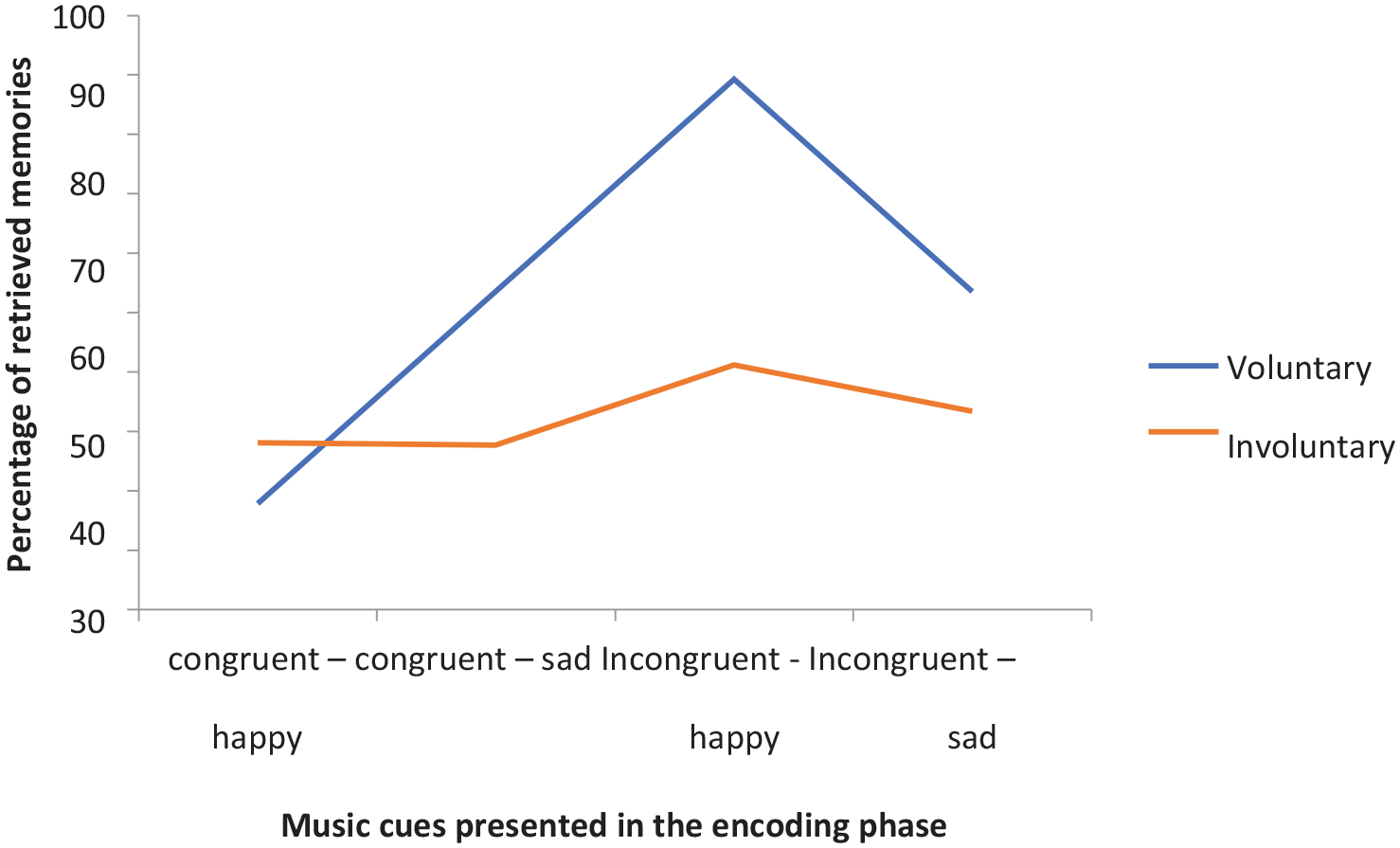

We calculated the relative percentage of retrieved memories, referring to how many images of facial expressions a cue would retrieve compared to non-retrieved images. First, we conducted univariate analyses for

Percentage of Memories Retrieved by Distinctive and Non-Distinctive Musical Cues.

Percentage of Memories Retrieved by Congruent and Incongruent Musical Cues.

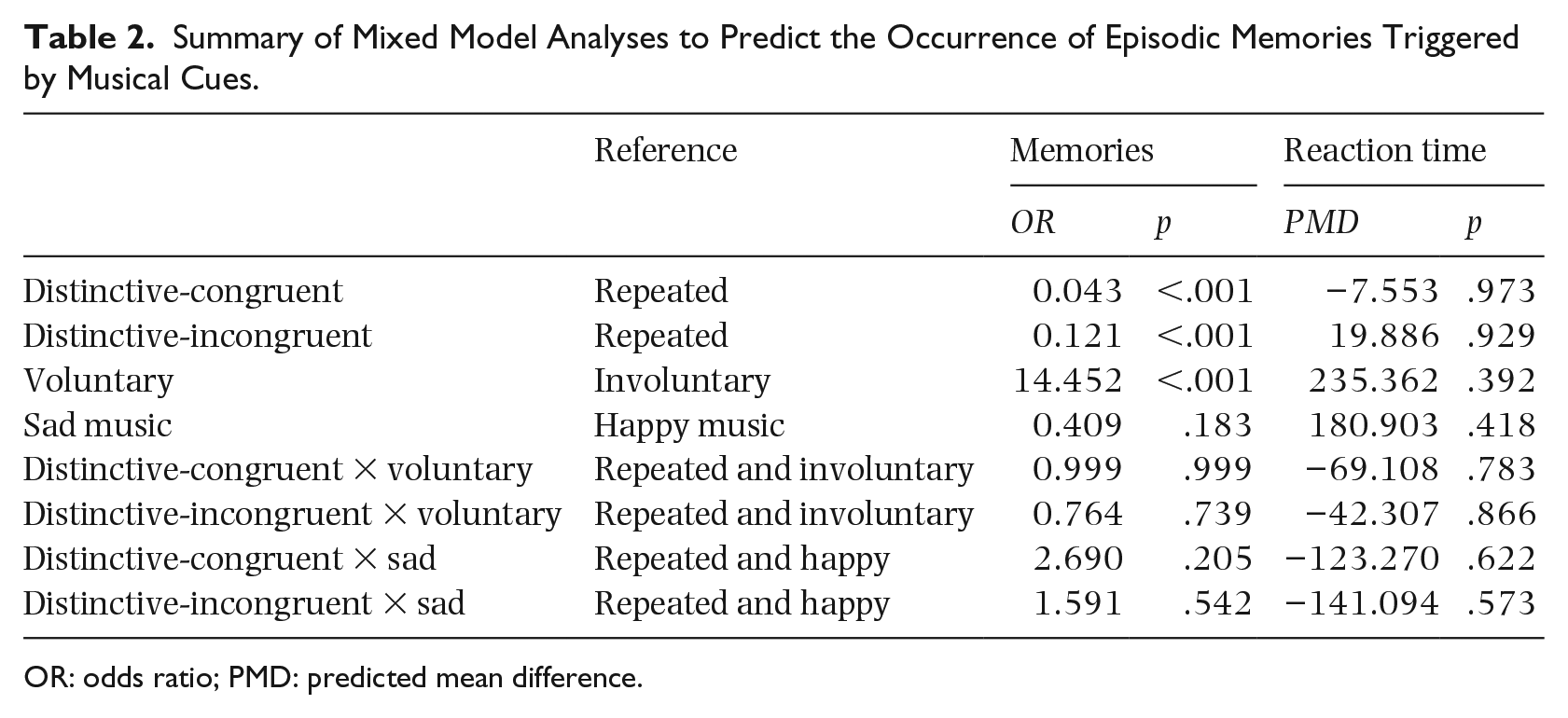

Including all variables in the model, the effects of distinctiveness and congruence remained significant (

Summary of Mixed Model Analyses to Predict the Occurrence of Episodic Memories Triggered by Musical Cues.

OR: odds ratio; PMD: predicted mean difference.
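To make the two summary quantities concrete, the sketch below computes a percentage of retrieved memories and an unadjusted odds ratio from a 2 x 2 table of counts. The counts are hypothetical and are not the study’s data. The odds ratios reported for the mixed model are adjusted for the other predictors and for the participant-level random intercept, so they would generally differ from this raw calculation.

```python
def percent_retrieved(retrieved, not_retrieved):
    """Percentage of cue presentations that retrieved a memory."""
    return 100.0 * retrieved / (retrieved + not_retrieved)


def odds_ratio(a_retrieved, a_not, b_retrieved, b_not):
    """Unadjusted odds ratio of retrieval for condition A versus B."""
    return (a_retrieved / a_not) / (b_retrieved / b_not)


if __name__ == "__main__":
    # HYPOTHETICAL counts, for illustration only.
    incongruent = (60, 40)  # (retrieved, not retrieved)
    congruent = (45, 55)
    print(percent_retrieved(*incongruent))
    print(percent_retrieved(*congruent))
    print(odds_ratio(*incongruent, *congruent))
```

An odds ratio above 1 indicates that retrieval was more likely in the first condition than in the second.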

Discussion

In order to enhance the current knowledge on cognitive processes underlying memories triggered by music, in this study, we implemented an experimental design with a controlled encoding memory phase. We were particularly interested in two memory effects recently emphasized in the research on auditory and visual stimuli but not previously tested with musical stimuli: the

In this study, no interaction was found to be significant. We will present our results on (1) the distinctiveness effect, (2) the incongruence effect, and (3) voluntary

Distinctiveness

The distinctiveness effect refers to the assumption that cues with unique associations (music heard in association with an event) trigger more memories than non-distinctive cues (music listened to every morning). In contrast to our initial hypothesis about the

Congruence

The incongruence effect suggests that cues encoded with emotionally incongruent stimuli (joyful music with images of sad facial expressions) trigger more memories than cues encoded with emotionally congruent stimuli (joyful music with images of joyful facial expressions). In accordance with the second hypothesis, our results revealed that memories triggered by music are governed by the incongruence effect. In other words, incongruent musical cues (sad music presented with a happy facial expression in the encoding phase) triggered more voluntary and involuntary memories than congruent musical cues (sad music presented with a sad facial expression). No valence effect was found (sad vs. happy). These findings are new in the research field aiming to further our understanding of the complex nature of music and the cognitive processes that guide memories triggered by music.

We suppose that this effect runs contrary to the semantic congruence effect demonstrated with musical excerpts (Fritz et al., 2019; Koelsch et al., 2004; Steinbeis & Koelsch, 2008) because the music here is associated with socially important information (facial stimuli). It can be viewed as similar to the data provided by studies examining the incongruence effect with non-musical stimuli. It has been documented that we are better able to detect and memorize a discordance between a basic necessity (food) and an associated description. For instance, Mieth et al. (2015) observed that participants are better at retrieving images of unappetizing-looking food encoded with appetizing descriptions than images presented in a congruent way.

In our opinion, a facial expression cued by music that was emotionally incongruent with it in the encoding phase would be more easily retrieved than a facial expression cued by congruent music, because here we are dealing with socially important information. Over the centuries, music has accompanied socially important events (ceremonies). Its properties can show empathy, for example, by using a perceived sad tonality to accompany a loss (funeral); transmit acceptance by starting and finishing melodies with the tonic (in music, this is the note upon which the other notes of a musical piece are hierarchically organized); emphasize a joyful event; or calm anxiety. (For a meta-analysis, see Panteleeva et al., 2018.)

It has been shown that behaviors perceived by participants as incongruent with a general impression of a person are better retrieved than congruent behaviors (Hastie & Kumar, 1979; for a meta-analysis, see Stangor & McMillan, 1992). According to the explanation provided in the study, the extent to which behaviors are incongruent determines perceptual distinctiveness, which, in turn, increases the depth of cue encoding. This effect has also been documented for facial expressions (Light et al., 1979). The inconsistency effect is interpreted as reflecting the difficulty of comprehending inconsistent items and thus their longer retention in working memory. During that period, inconsistent items are associated with other information in order to help with the interpretation. Therefore, inconsistent items are linked to more information than consistent items, and these “additional” associations could serve as retrieval cues (Srull et al., 1985). We might suppose that in our experiment music from “inconsistent” pairs of stimuli (joyful music paired with sad facial expressions) led to a search for new information that could explain that incongruence (e.g., perceiving the facial expression as fake).

Voluntary and involuntary retrieval

Our study also attempted to capture and differentiate between two types of memory retrieval: voluntary and involuntary. We found a larger number of memories in the voluntary retrieval group than in the involuntary retrieval group. This result is in line with the data provided in the study by Staugaard and Berntsen (2014).

Limitations, future research, and clinical applications

This study replicated the experimental design used in Berntsen et al.’s (2013) studies and extended it to musical cues. Our dependent variable was operationalized as the number of instances where participants thought that they remembered an image of a facial expression from the encoding phase. We did not check whether the memory was correct. Therefore, further studies should capture false memories and the factors associated with them. However, we asked our participants to describe their memories and we obtained quite detailed descriptions (e.g., “a young happy guy with short hair,” “a curled hair redhead guy with a great smile on his face”). Therefore, we consider that the variables manipulated in the encoding phase influenced memory retrieval.

The findings of our study should also be considered in light of their potential clinical applications. The restorative capacity of music has been highlighted in recent studies (e.g., El Haj et al., 2015; Ratovohery et al., 2018). Moreover, it has been documented that memories triggered by musical cues are more vivid and contain more detail than memories triggered by other memory cues in Alzheimer’s patients (Foster & Valentine, 2001; Irish et al., 2006) and in non-clinical samples (Belfi et al., 2016). We have added the finding that, as in social psychology, an incongruence effect is observed when using music excerpts and facial expressions.

Recent studies emphasize the effectiveness of Neurologic Music Therapy (Impellizzeri et al., 2020; Thaut & Janzen, 2019). Neurologic Music Therapy consists of two techniques: (1) Associative Mood and Memory Training and (2) Music in Psychosocial Training and Counselling. Associative Mood and Memory Training involves using music to induce a mood that accompanies episodic memories, and particularly autobiographical memories. That mood state is viewed as a process that underlies memory retrieval: through receptive (listening to music) or active (singing) music techniques, an individual experiences a shift or an improvement in mood. That in turn activates an associative memory network and generates access to memories (Impellizzeri et al., 2020). The incongruence effect demonstrated in our study may guide further psychological interventions. For instance, music that incongruently accompanies a scene or a facial expression may cause the listener to smile and prompt a further discussion on how an individual manages these unexpected events and what emotions they elicit (e.g., fear, anxiety). According to our findings, it also seems that, in order to trigger memories, musical cues should be heard many times and need not be linked to one particular event, but this finding should be further investigated.

Strong emotions such as happiness and/or excitement elicited by music-evoked memories (Barrett et al., 2010; Juslin & Sloboda, 2010; Schulkind et al., 1999; Zentner et al., 2008) and the differential responses of patients to music (Choppin et al., 2016) should also be taken into account when developing psychological interventions that make use of music. In Juslin’s (2013) theoretical framework, memories elicited by music are viewed as one of the cognitive processes that underlie music’s impact on emotions. Memories triggered by music might therefore “unlock” emotions in individuals suffering from dementia and/or depression (Campen & Ross, 2014), or individuals with trauma (Amir, 2004).

Music’s capacity to trigger memories and stimulate mental imagery is used in therapies based on music (e.g., the Bonny Method of Guided Imagery and Music; Ventre, 1994). According to Helen Bonny, its founder, GIM is a music-centered approach that uses sequences of classical music and involves listening to music in order to trigger imagery for different sensory modalities. Sequences of images are possible thanks to pitch range, melodic shape, rhythm, timbre, and form (Marr, 2001). For instance, with this method, congenitally blind individuals experience gustatory, olfactory, auditory, and tactile representations of imagery (Samara, 2016).