Abstract

Can you tell what’s inside a sealed container just by touching it? Prior work in “container haptics” has focused on numbers: how many marbles are rolling around, or how full a bottle is. Here, we explore whether humans can make qualitative judgments about what kind of thing is inside without seeing it. Across three studies, participants explored containers filled with dry food items (e.g., flour or granola) using touch, with or without sound. Surprisingly, even with no visual (or auditory) cues, participants could often identify, or at least describe, the contents based on texture, size, and density. These findings suggest that your hands are better at guessing container contents than you might think.

Could you guess what’s inside a container (say, a wrapped birthday present or a spice jar) if you were blindfolded and wearing headphones? In our daily lives, we often infer what’s in a container, whether it is a box, a bottle, a pouch, or a bag, without ever seeing it. You shake it. You squeeze it. You feel the inertia, compliance, or resistance of the thing inside. This process, sometimes called container haptics, is surprisingly underexplored. Past studies have shown that people can estimate how much liquid is in a bottle or how many balls are inside a container (Frissen et al., 2023, 2025; Jansson et al., 2006; Plaisier & Smeets, 2017). But can we identify what is in there?

To find out, we ran three quick studies using opaque plastic containers (7 cm wide, 5 cm tall) filled with dry food items that differ in texture, size, and density; the containers were of similar weight and filled to no more than two-thirds of their capacity, leaving at least one-third empty so the contents could still move around (see Figure 1). The suspects: cloves, flour, granola, lentils, couscous, vermicelli, bulgur, and nigella (no, not the TV chef, the tiny black seeds).

Figure 1. Containers used in the experiment, filled with different dry food items, each with a distinct combination of texture, size, and density. During the experiments, the containers were closed with lids.

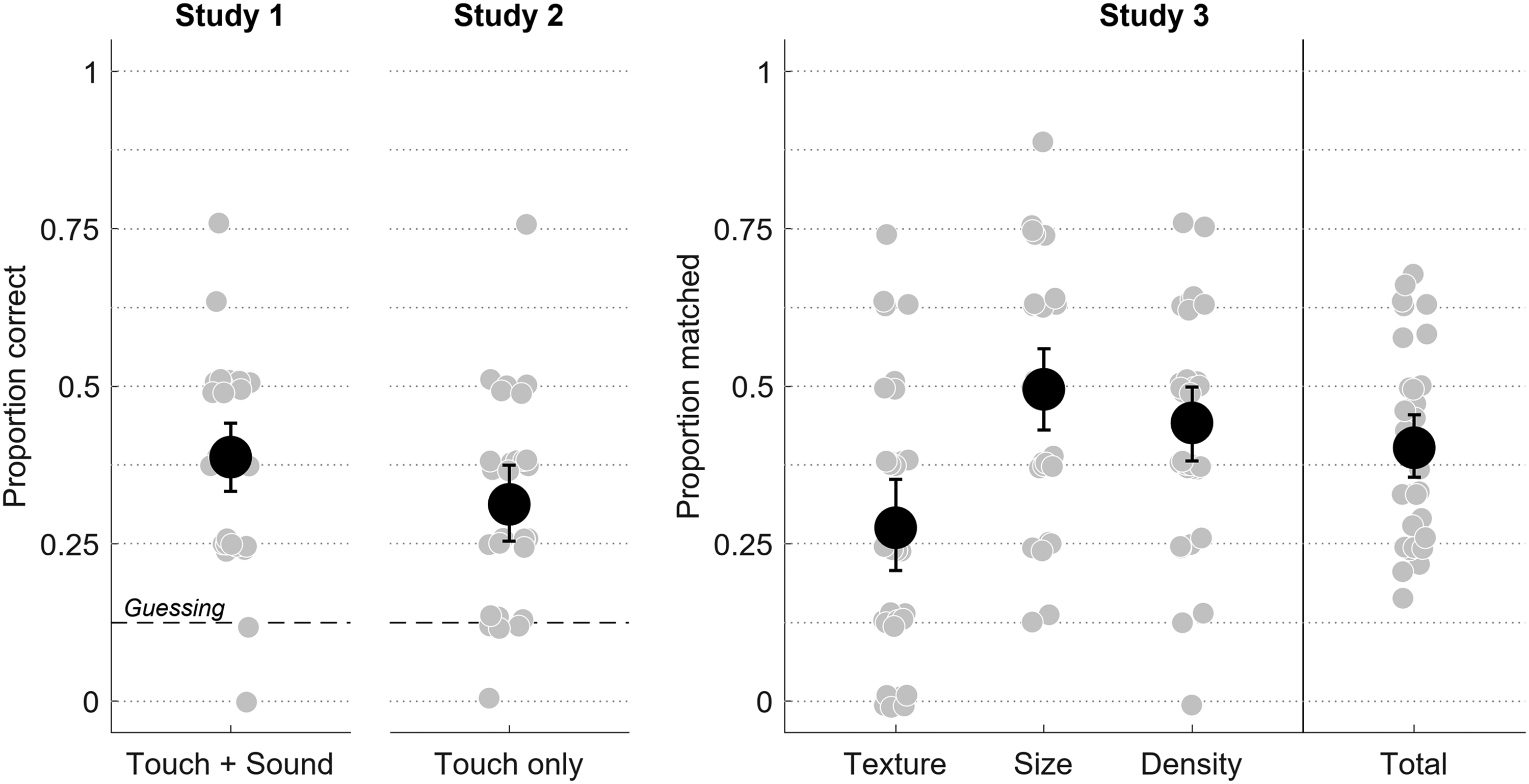

Study 1 gave 30 participants a multiple-choice test: shake the container (with sound), then pick what’s inside from a list of the suspects. With both touch and sound available, participants did quite well (39% correct on average; Figure 2), better than chance (i.e., 12.5%; t(27) = 6.02, p < 0.001). Items with distinctive properties, such as coarse granola (73%) or fine flour (87%), were identified most often, while vermicelli (13%) and nigella (17%) were identified least often.
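The comparison against chance can be sketched as a one-sample t-test of per-participant accuracy against 1/8, since there were eight response options. This is a minimal illustration, not the authors' analysis script: the function name `one_sample_t` and the scores in `example_scores` are hypothetical and are not the study's data.

```python
import math
import statistics

N_OPTIONS = 8            # eight candidate items on the response list
CHANCE = 1 / N_OPTIONS   # 0.125, i.e., 12.5%


def one_sample_t(scores, mu):
    """Return (t, df) for a one-sample t-test of mean(scores) against mu."""
    n = len(scores)
    mean = statistics.mean(scores)
    sd = statistics.stdev(scores)  # sample SD (n - 1 in the denominator)
    t = (mean - mu) / (sd / math.sqrt(n))
    return t, n - 1


# Hypothetical per-participant proportions correct (NOT the study's data).
example_scores = [0.25, 0.5, 0.375, 0.125, 0.625, 0.375]

t_stat, df = one_sample_t(example_scores, CHANCE)
print(f"chance = {CHANCE:.3f}, t({df}) = {t_stat:.2f}")
```

The sketch reports only the t statistic and its degrees of freedom; obtaining a p-value would additionally require the t distribution, for example from a statistics library.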

Left panels: Proportion of correct responses in Study 1 (touch + sound) and Study 2 (touch only). Right panel: In Study 3, proportion of correct feature matches (texture, size, and density). Grey dots show individual scores; black dots show means. Error bars represent 95% empirical bootstrapped (

Study 2 was the same as the first, but quieter—participants (

Together, Studies 1 and 2 show that touch is sufficient to do the task but also that sound helps, which echoes what other container studies have found (Hummel et al., 2022; Overvliet et al., 2023; Pittenger et al., 1997). However, it is important to note that these results may primarily reflect an ability to match touch and sound to a fixed list of labels, rather than true recognition. That is, participants may not have identified flour per se, but instead selected the option that best approximated what they felt, even if no exact match was perceived.

In Study 3, we took a looser and more natural approach and had 33 participants just tell us what they thought was inside the container. The only information provided was that the containers held dry food items. For the analysis, we didn’t count only exact answers; we also scored whether each response matched the content’s texture, size, and density. The five most common guesses were salt and rice (each about 11% of all responses), followed by flour (10%), sugar (7%), and beans (6%). Exact identifications were rare (no one is clairvoyant), but participants reliably picked up on key features. Over 40% of the time, their responses matched the content on at least one dimension (Figure 2). For instance, if the container held bulgur and the response was salt, that counted as a match on all three features. But if the response was coffee powder, only density would match. Size was most frequently matched (50%), followed by density (44%), while texture proved the trickiest (28%).
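The feature-matching score can be illustrated with a small sketch. The feature codes in `CODING` are hypothetical, chosen only so that the two examples in the text come out as described (salt matches bulgur on all three features, coffee powder on density only). The actual coding scheme is not given here, and the function names are likewise assumptions.

```python
FEATURES = ("texture", "size", "density")

# Hypothetical feature codes (NOT the study's coding scheme); they are set up
# only to reproduce the bulgur/salt and bulgur/coffee powder examples.
CODING = {
    "bulgur": {"texture": "grainy", "size": "small", "density": "medium"},
    "salt": {"texture": "grainy", "size": "small", "density": "medium"},
    "coffee powder": {"texture": "powdery", "size": "fine", "density": "medium"},
}


def feature_matches(content, response, coding=CODING):
    """Return {feature: bool}: does the response share that feature with the content?"""
    target, guess = coding[content], coding[response]
    return {f: target[f] == guess[f] for f in FEATURES}


def match_proportions(trials, coding=CODING):
    """Proportion of (content, response) trials that match on each feature."""
    counts = {f: 0 for f in FEATURES}
    for content, response in trials:
        for f, hit in feature_matches(content, response, coding).items():
            counts[f] += hit  # True counts as 1, False as 0
    return {f: counts[f] / len(trials) for f in FEATURES}


print(feature_matches("bulgur", "salt"))
print(feature_matches("bulgur", "coffee powder"))
```

Scoring each feature separately is what allows a response to earn partial credit: a wrong label can still be right about size or density.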

So what’s going on? It seems that people don’t necessarily identify specific categories (e.g., “this is rice”) as much as they infer properties (e.g., “this feels granular and heavy, maybe it’s rice or bulgur”). It’s like shaking a wrapped gift and thinking “this is heavy and boxy,” not “definitely a toaster.”

These findings extend what we know about container haptics, showing that people infer more than just quantities—they can also infer qualitative features such as granularity and density, even with minimal input. This challenges the notion that direct contact is required for meaningful material perception (Ziat, 2023).

While we didn’t set out to pinpoint the specific sensory channels involved, this paradigm offers a way to explore how physical cues—such as shifts in inertia or granular vibration—support perceptual inferences. Whether these map onto known mechanoreceptor pathways is an open question, but our results show that even brief mechanical interactions can convey surprisingly rich material information.

The haptic container paradigm offers a promising avenue for future work on the role of prior knowledge in haptic perception. For instance, it enables experimental control over the quality of sensory information (Frissen et al., 2025), while also allowing researchers to systematically vary contextual cues. This makes it possible to probe how bottom-up sensory processes interact with top-down cognitive and semantic processes (Sekuler & Blake, 1994), a dynamic at the core of real-world object recognition.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.