Abstract

Digital microscopy (DM) has been employed for primary diagnosis in human medicine and for research and teaching applications in veterinary medicine, but there are few veterinary DM validation studies. Region of interest (ROI) digital cytology is a subset of DM that uses image-stitching software to create a low-magnification image of a slide, captures selected ROI at higher magnification, and stitches the images into a relatively small file with the magnifications embedded. This study evaluated the concordance of ROI-DM with traditional light microscopy (LM) for 2 blinded clinical pathologists. Sixty canine and feline cytology samples from a variety of anatomic sites, including 31 cases of malignant neoplasia, 15 cases of hyperplastic or benign neoplastic lesions, and 14 infectious/inflammatory lesions, were evaluated. Two separate nonblinded adjudicating clinical pathologists evaluated the reports and diagnoses and scored each paired case as fully concordant, partially concordant, or discordant. The average overall concordance (full and partial concordance) for both pathologists was 92%. Full concordance was significantly higher for malignant lesions than for benign lesions. For the 40 neoplastic lesions, ROI-DM and LM agreed on general category of tumor type in 78 of 80 paired reads (98%). ROI-DM cytology showed robust concordance with the current gold standard of LM cytology and is potentially a viable alternative to current LM cytology techniques.

Keywords

The term telepathology was first introduced in 1986 to describe an emerging technology and emphasize the need for distributed pathological expertise in rural areas. 17 Telepathology has several potential advantages over traditional glass light microscopy (LM), including time efficiency, decreased cost, and ease of consultation with remote specialists. 15 These telepathology technologies are now lumped under the interchangeable terms digital pathology and digital microscopy (DM), which refer to the viewing of a microscope slide on a computer screen using a robotic microscope, region of interest (ROI) software, whole-slide imaging (WSI) scanner, or static telepathology (the capture of individual images from a microscope camera or eyepiece). 2,9 These modalities all share the computer visualization of sample material but differ in how much of the slide is visualized (individual fields, scanned regions of interest, or the entire slide) and whether that image is stored (static telepathology, WSI, and ROI scans) or viewed “in real time” (robotic microscopy).

The use of DM has expanded in concert with decreasing hardware costs and increasing Internet speed and server size, which allows for the storage of large image files. 14 Digital microscopy has traditionally been used for research, teaching, and second opinion consultation purposes in both human and veterinary pathology, but the emergence of WSI scanners has allowed for more widespread use for the primary diagnosis of human diagnostic biopsy samples. 2 In 2017, a commercial WSI histopathology system received US Food and Drug Administration (FDA) approval for primary diagnostic use following a large multicenter validation study involving 1992 cases interpreted by multiple pathologists across 4 medical centers. 10 A recent veterinary WSI validation study of 80 canine cutaneous tumors demonstrated a similar concordance for histopathologic diagnosis across multiple time points and pathologists. 3 Other studies have similarly indicated the reliability of ROI-DM scans, which evaluate smaller scanned regions of cells of interest for histologic frozen-section diagnosis. 12

The paucity of data evaluating DM in cytology arises, in part, from unique technical aspects of this modality. Unlike histopathologic sections, cytology slides are a heterogeneous preparation of single and aggregated cells of varying thickness, and the uneven 3-dimensional layering can make finding optimal focus and image quality more challenging. 5 However, several human pathology studies show ROI-DM cytology is accurate and reproducible among pathologists for both liquid-based gynecologic and nongynecologic cytology samples, as well as fine-needle aspirate (FNA) cytology. 6,7 Use of DM cytology for rapid on-site evaluation in a human academic cancer center had a 93% concordance with final cytologic opinion and led to a significant increase in the number of diagnostic specimens being sent to the pathologist for full review. 8

Despite advances in veterinary digital pathology, very few studies have been conducted to examine the efficacy of this technology for diagnostic veterinary cytology. A single study found strong diagnostic concordance using static digital images from 20 veterinary cytology samples, 9 although the images were captured by highly trained pathologists, which may limit the conclusions about clinical utility. Interestingly, in that study, the median time to capture images was 30 minutes (range, 20-40 minutes), and the median time for a telecytology diagnosis was only a few seconds, highlighting potential rapid diagnostic capability of digital veterinary cytology. 9 No other studies have evaluated DM cytology in veterinary medicine.

The purpose of this study was to examine the diagnostic concordance between ROI-DM scans and traditional LM (glass slides) for common veterinary cytology samples. We hypothesized that diagnoses from ROI-DM scans would be concordant with those of traditional glass slides, supporting a role for digital microscopy in veterinary diagnostic cytopathology.

Materials and Methods

Case Selection

Cases were selected from the Colorado State University (CSU) clinical pathology archives from May through September of 2017; selection was based on clarity of the original cytologic diagnosis. Cases with “suspect,” “probable,” or “possible” interpretations were minimized in the study to reduce the potential for a poor-quality sample or equivocal cytologic findings that could affect the assessment of concordance between ROI-DM and glass diagnoses. Fluid samples were not included in this study given the reliance on other laboratory data for successful interpretation and the likelihood of a recall bias for cases with substantially more information. For each case, all submitted slides were obtained from the archives and examined by a board-certified clinical pathologist (P.R.A.). Slides were scanned at low magnification until a slide was identified that contained adequate cellular material. There was no detailed effort to find the optimal slide or any effort to corroborate the initial cytology diagnosis. All slides had been stained using an automated stainer with a modified Wright-Giemsa (ELITechGroup, Logan, UT). Slides previously stained with rapid aqueous Romanowsky-type stains were not included in the study.

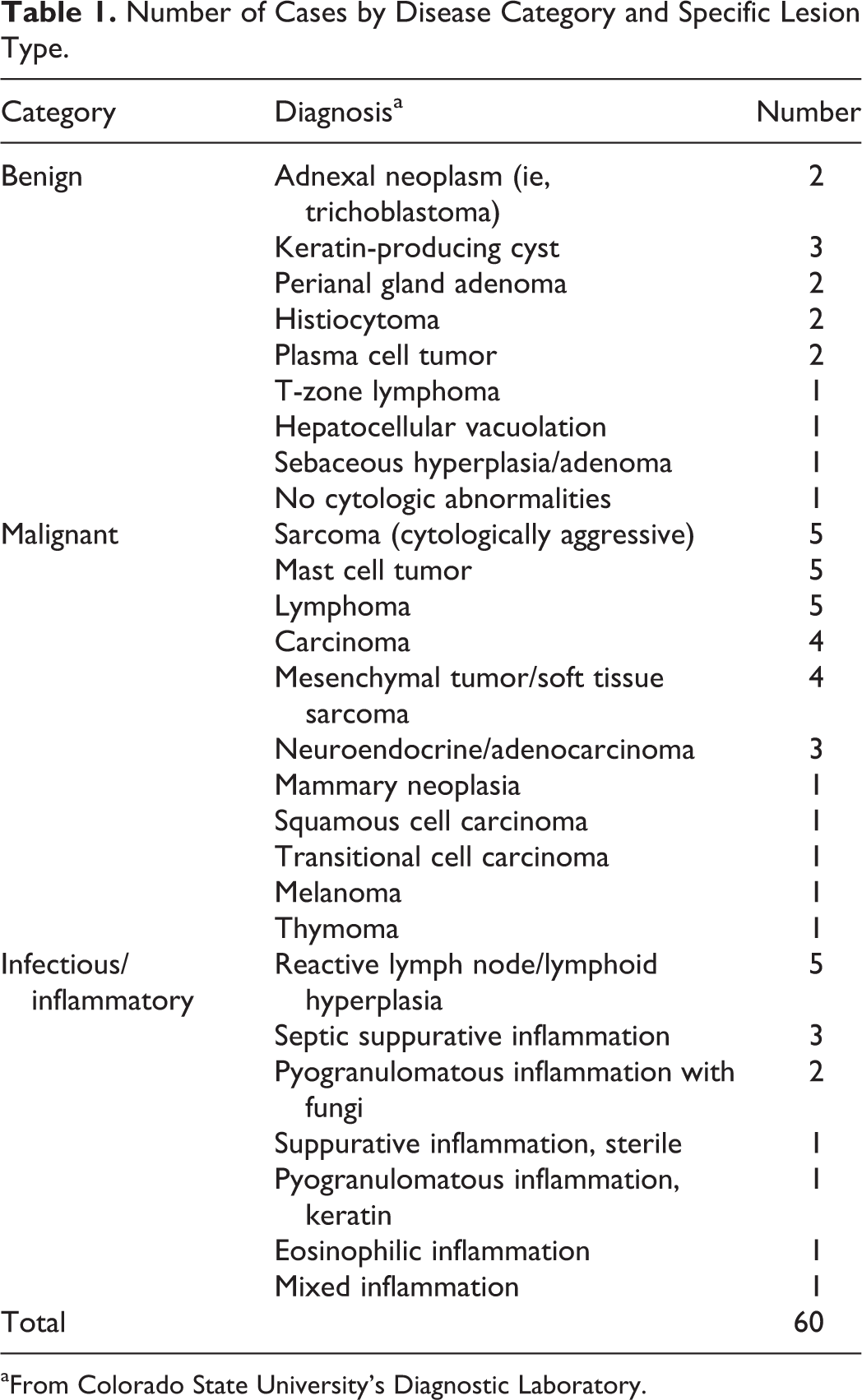

A total of 60 cases (57 canine, 3 feline) were selected that represented a range of common pathologic diagnoses encountered at the diagnostic laboratory as determined by a board-certified clinical pathologist (P.R.A.). Anatomic sites were diverse and included cutaneous/subcutaneous masses (n = 28), lymph nodes (n = 14), liver (n = 4), internal organs/masses (n = 4, 1 each from spleen, prostate, mediastinal mass, mesenteric mass), anal/perianal masses (n = 4), and 1 each of the following sites: tongue mass, mandibular mass, right frontal sinus, retrobulbar mass, cranial stifle mass, and an oral/gingival mass. One cytology slide from each case was included in the study, and smears were derived from FNA methods. Diagnoses included 31 cases of malignant neoplasia, 14 cases of inflammatory/infectious disease, and 15 cases of benign neoplasia or hyperplastic lesions (Table 1; Suppl. Table S1).

Table 1. Number of Cases by Disease Category and Specific Lesion Type.

a From Colorado State University’s Diagnostic Laboratory.

A sample size of 60 cases was selected based on the industry standard for validation of digital pathology techniques. 11 Patient information was obtained either from the CSU Veterinary Teaching Hospital (CSU-VTH) or from the referring veterinarian for patients not treated at the CSU-VTH and entered into an online platform (Lacuna Diagnostics version 1.0; Lacuna Diagnostics, Fort Collins, CO). Patient species, age, and cytology sample site were the only information included for each sample to minimize recall bias between the digital and glass slides.

ROI-DM image acquisition occurred through the Lacuna 360 system (Lacuna Diagnostics), which consisted of a Blackfly digital video camera (model U3-23S6C-C, FLIR Integrated Imaging Solutions, Richmond, BC, Canada) mounted on a Nikon E200 microscope (Nikon Instruments, Melville, NY). This microscope had 4×, 10×, 40×, and 60× objectives (Nikon CFI E PLAN-NC 4×, CFI E PLAN-NC 10×, CFI E PLAN-NC 40×, CFI ACHRO FLAT FIELD-NC 60×). The camera was attached to an HP Z1 computer (Hewlett Packard, Palo Alto, CA). Image acquisition occurred through the Panoptiq software (version 4.3.0; Panoptiq, Vancouver, BC), and all case information, communication, and interpretations were handled through the Lacuna Diagnostics digital portal system (version 1.0).

Slide Scanning

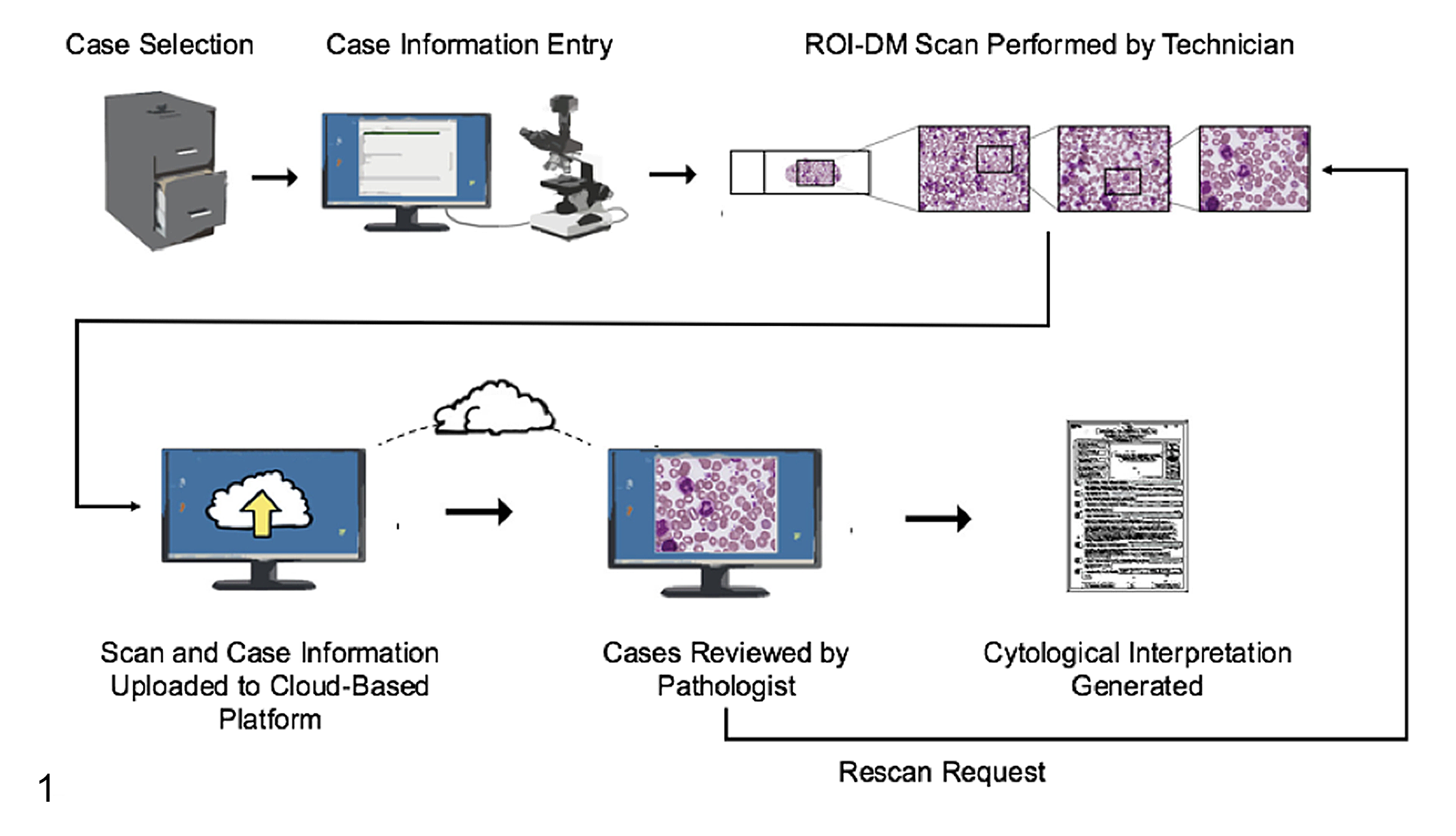

All slides were scanned by a certified veterinary technician trained on the technology. The technician received instruction directed toward (1) identifying typical areas of adequate cellular density, (2) identifying an approximate monolayer on a sample, and (3) differentiating intact cells from ruptured cellular material. The technician was given the study slides and allowed to choose areas of interest at will to better mimic a clinical situation. Scans of the entire slide were created at 4× magnification with the intent of guiding higher magnification scans and giving pathologists a global perspective of the slide. Once the technician identified a region (or regions) of interest based on their training and Standard Operating Protocol (SOP), further scan layers were created at 10×, 40×, and 60× at the identified area (Figure 1). All slides had a “dry” coverslip in place to improve focus on 40× and 60× objective scans. The interpreting pathologists had the ability to request additional scans of other regions of interest based on the 4× whole-slide scan. The technician would perform the scans and resubmit the case for pathologist review (Figure 1).

Figure 1. Region of interest (ROI)–digital microscopy (DM) workflow. Cases were selected from the archives, and basic demographic information was entered into the platform. ROI-DM scans were performed by scanning the entire slide with a 4× objective, followed by multiple progressively higher magnification areas of interest with 10× to 60× objectives. Scans were uploaded to the cloud platform and evaluated remotely by pathologists who could report their findings or send cases back for more information and/or rescanning areas.

Scan Quality Rating

Pathologists were required to rate the scan on a 1 to 5 ordinal scale (1 = poor-quality scan, minimally diagnostic; 5 = excellent diagnostic quality) based on a holistic evaluation for both technical scan quality and perceived diagnostic value. In addition to the quantitative scan rating, blinded pathologists entered subjective qualitative comments. These metrics were constructed to both provide feedback to the technician scanning the slide and assess whether the lower scan rating corresponded with poorer diagnostic quality.

Pathologists and Case Interpretation

Cases were accessed via an online digital pathology platform (Lacuna Diagnostics version 1.0) in order for the pathologists to view patient information and the ROI-DM scan. Two reading pathologists (A.G.M. and E.J.F.) independently viewed the ROI-DM scans and entered microscopic description, interpretation, and comments into the Lacuna Diagnostics platform (Figure 1). The pathologists were not given specific guidelines for interpretations and were asked to approach the case as they would any diagnostic submission. Both reading pathologists were blinded to all clinical information other than species, age, and lesion site. Reading pathologists varied by years of experience (1 vs 8 years since specialty certification) and specific experience with digital cytology (1 with moderate experience with static telepathology and the other with no specific exposure to digital cytology prior to the study). After a washout period of at least 30 days after submission of ROI-DM scan interpretations, pathologists received the corresponding glass slide. Visualization of the glass slide was done with standard LM, and microscopic description, interpretation, and comments were entered via the online platform as per the digital samples.

Concordance

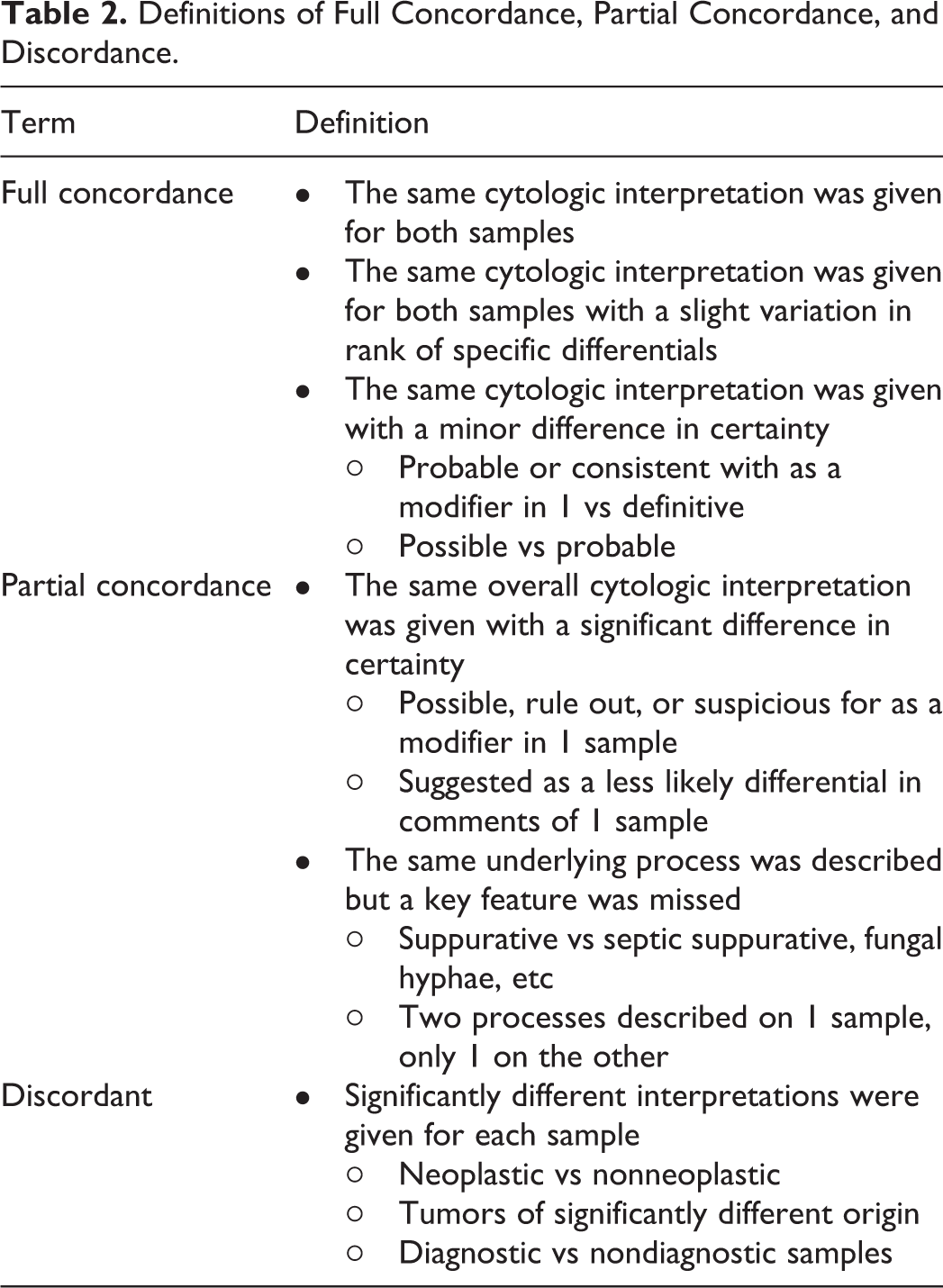

Given the range of possible interpretations over the diverse cytological samples, 2 nonblinded adjudicating, board-certified clinical pathologists (P.R.A., L.A.S.) compared the ROI-DM scan interpretations to those of the corresponding glass slide to reach a consensus conclusion of concordance. The pathologists considered the microscopic description, interpretation, and comments for each case and made a determination of full concordance, partial concordance, or discordance (Table 2). Full concordance was assigned when the paired ROI-DM cytology and LM reads gave an identical diagnosis (eg, large cell lymphoma). Modifiers and nonessential information were generally not considered in determining agreement (eg, “mast cell tumor” vs “mast cell tumor and eosinophilic inflammation” would be considered fully concordant). Partial concordance was used when diagnoses were in broad agreement but varied by pathologic subtype or important cytologic distinction (eg, pyogranulomatous inflammation with fungal hyphae on LM vs pyogranulomatous inflammation without identification of organisms). Discordant cases were those in which the ROI-DM cytology and LM interpretations of a sample differed significantly (eg, neoplastic vs nonneoplastic) and/or 1 modality could not provide an interpretation (ie, nondiagnostic sample).

Table 2. Definitions of Full Concordance, Partial Concordance, and Discordance.

Statistical Analysis

The primary outcome assessed in this study was the concordance category (full concordance, partial concordance, or discordance) between ROI-DM cytology and LM. The distribution of concordance across pathologists and disease categories was compared by χ² and Fisher’s exact tests as appropriate. Scan quality ratings were compared using the Student t test. All statistical analyses were performed using R version 3.2.2 (R Foundation for Statistical Computing, Vienna, Austria), and P values <.05 were considered statistically significant.
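As an illustration of the kind of comparison described above, the following is a minimal Python sketch of a two-sided Fisher's exact test for a 2 × 2 table. It is not the authors' analysis code (the study used R), the function name `fisher_exact_two_sided` is hypothetical, and the example table is a generic textbook-style table rather than study data.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact P value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2
    denom = comb(n, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_observed = prob(a)
    lowest = max(0, col1 - row2)   # smallest feasible top-left count
    highest = min(row1, col1)      # largest feasible top-left count
    # Sum every table that is no more probable than the observed one
    # (small relative tolerance guards against floating-point ties).
    return sum(
        p
        for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= p_observed * (1 + 1e-9)
    )


# Illustrative table only (not study data): rows are two groups,
# columns are "outcome present" and "outcome absent".
p_value = fisher_exact_two_sided(8, 2, 1, 5)
significant = p_value < 0.05  # same threshold as stated in the text
print(f"P = {p_value:.3f}, significant at .05: {significant}")
```

For this illustrative table the P value is 400/11440, or about .035. In practice a maintained statistical routine, such as the one in R that the study relied on, would be preferable to a hand-rolled function.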

IACUC Review

The proposed Materials and Methods were reviewed by the Colorado State University Institutional Animal Care and Use Committee (IACUC), and neither an IACUC protocol nor additional review by the clinical review board was required.

Results

ROI-DM Concordance Compared to Light Microscopy: Overall Concordance

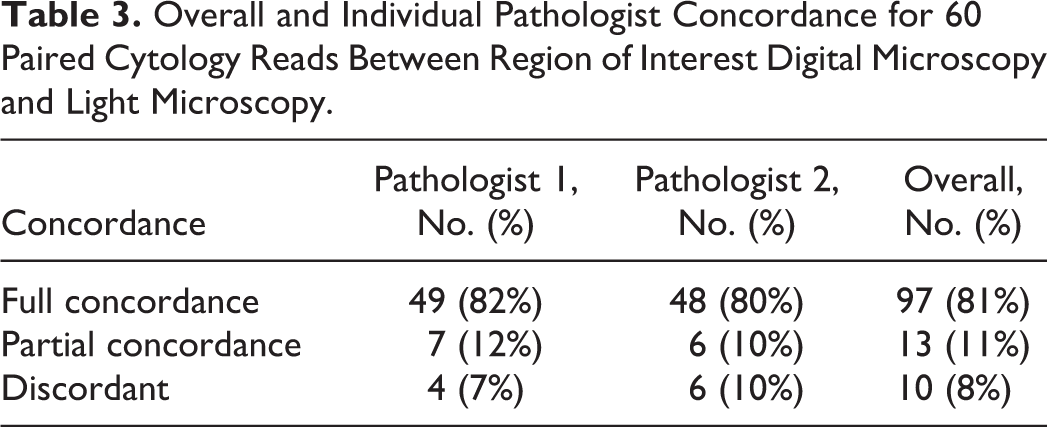

Overall, ROI-DM and LM were in full or partial concordance in 92% of cases when averaging between both pathologists, with 8% discordance between the modalities (Table 3). There was no significant difference in the distribution of full concordance between pathologists (P = .784; 82% vs 80%; Table 3).

Table 3. Overall and Individual Pathologist Concordance for 60 Paired Cytology Reads Between Region of Interest Digital Microscopy and Light Microscopy.

While overall concordance between ROI-DM cytology and LM in this study was moderately high, 10 of 120 reads were discordant (8%) (Table 3; Suppl. Table S2). Two cases were discordant for both pathologists, with similar reasons for mismatch. Case No. 33 was a perianal gland tumor with abundant blood contamination, and the ROI scans were deemed nondiagnostic by both pathologists due to the exclusion of the nucleated cells in the higher magnifications. Case No. 30 was a complex case clinically and cytologically; there is still a lack of consensus regarding the diagnosis among the 4 pathologists even with access to the glass slide (histopathology was not performed on this sample). Other cases that were discordant varied by pathologist, including case No. 14 and case No. 38, where the ROI-DM scans overemphasized nonrepresentative areas of the lesion, causing 1 pathologist to misinterpret the lesion.
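The overall figures above follow from simple counts, which the short Python sketch below makes explicit. It uses only numbers stated in the text (60 cases, 2 reading pathologists, 10 discordant reads, 2 cases discordant for both pathologists); the variable names are illustrative.

```python
# Figures taken from the text.
CASES = 60
PATHOLOGISTS = 2
DISCORDANT_READS = 10
CASES_DISCORDANT_FOR_BOTH = 2

total_reads = CASES * PATHOLOGISTS                  # paired ROI-DM/LM reads
concordant_reads = total_reads - DISCORDANT_READS   # full + partial concordance

overall_concordance_pct = round(100 * concordant_reads / total_reads)
discordance_pct = round(100 * DISCORDANT_READS / total_reads)

# A case that was discordant for only one pathologist contributes one read.
single_pathologist_reads = (
    DISCORDANT_READS - CASES_DISCORDANT_FOR_BOTH * PATHOLOGISTS
)
distinct_discordant_cases = CASES_DISCORDANT_FOR_BOTH + single_pathologist_reads

print(f"{concordant_reads}/{total_reads} reads concordant "
      f"({overall_concordance_pct}%), {discordance_pct}% discordant")
print(f"distinct cases with at least one discordant read: "
      f"{distinct_discordant_cases}")
```

Under these counts, 110 of 120 reads were concordant (92%), and the 10 discordant reads would correspond to 8 distinct cases. The latter figure is derived here and is not stated explicitly in the text.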

ROI-DM Accuracy by Disease Category

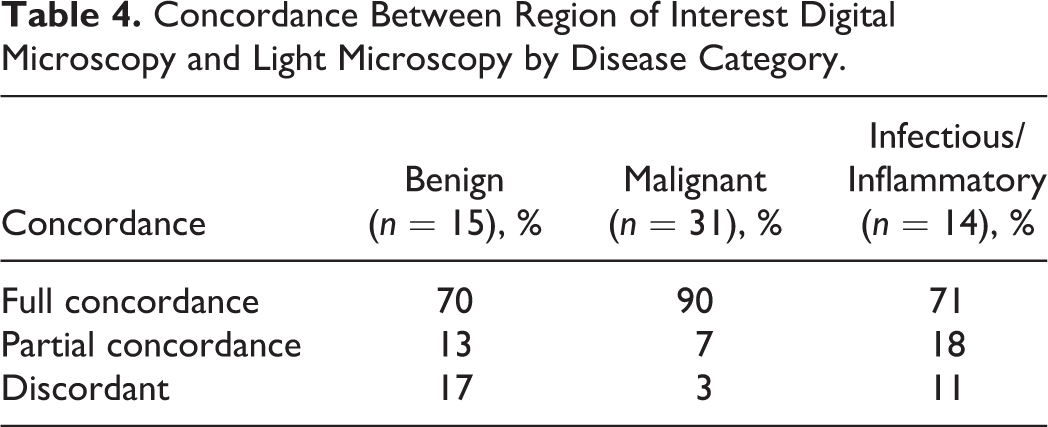

Concordance between ROI-DM and LM was further analyzed within the 3 lesion categories: benign/hyperplastic, inflammatory/infectious, and malignant (Table 4). Concordance was significantly higher for malignant lesions (overall concordance 97%: 90% full concordance, 7% partial concordance) compared to benign/hyperplastic lesions (overall concordance 83%: 70% full concordance, 13% partial concordance) (P = .022). Inflammatory/infectious lesions also had high overall concordance (89%), but comparatively fewer were fully concordant relative to malignant lesions (71% full concordance, 18% partial concordance); this difference was not statistically significant (P = .071).

Table 4. Concordance Between Region of Interest Digital Microscopy and Light Microscopy by Disease Category.
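Because each case was read by both pathologists, the category-level percentages reported above can be related back to read counts. The Python sketch below back-calculates the counts implied by the reported full and overall concordance percentages; these counts are inferred from rounded percentages rather than taken from the study tables, so they should be treated as an assumption. The helper name `counts_matching` is hypothetical.

```python
READS_PER_CASE = 2  # each case was read by both pathologists

# category: (number of cases, reported full %, reported overall %)
reported = {
    "malignant": (31, 90, 97),
    "benign/hyperplastic": (15, 70, 83),
    "inflammatory/infectious": (14, 71, 89),
}


def counts_matching(pct: int, n: int) -> list[int]:
    """All integer counts k in 0..n whose percentage of n rounds to pct."""
    return [k for k in range(n + 1) if round(100 * k / n) == pct]


implied = {}
for name, (cases, full_pct, overall_pct) in reported.items():
    n = cases * READS_PER_CASE
    full = counts_matching(full_pct, n)
    overall = counts_matching(overall_pct, n)
    if len(full) != 1 or len(overall) != 1:
        raise ValueError(f"percentages for {name} do not imply unique counts")
    implied[name] = {
        "reads": n,
        "full": full[0],
        "partial": overall[0] - full[0],   # overall = full + partial
        "discordant": n - overall[0],
    }

total_discordant = sum(c["discordant"] for c in implied.values())

for name, c in implied.items():
    print(name, c)
print("total discordant reads:", total_discordant)
```

The implied discordant reads sum to 10, which agrees with the 10 of 120 discordant reads reported for the study overall. Partial concordance is obtained by subtraction because a rounded partial percentage does not always map to a unique count.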

For the 40 total neoplastic lesions (31 malignant and 9 benign neoplasms read by each pathologist), ROI-DM and LM agreed on general category of tumor type (ie, epithelial, mesenchymal, round cell, melanocytic) for 78 of 80 (98%) paired reads between pathologists. One discordant instance was case No. 38 for pathologist 1, where the ROI-DM scan areas overemphasized the mesenchymal component of an adnexal tumor and led to an opinion of low-grade spindle cell tumor vs spindle cell variant of trichoblastoma. The other discordant instance was case No. 14 for pathologist 1, in which the ROI-DM scan captured mostly areas of bland, individualized, discrete cells that resembled a round cell tumor, resulting in an opinion of histiocytoma for this adnexal tumor.

Diagnostic Scan Quality Impact on Accuracy

The interpreting pathologists (E.J.F. and A.G.M.) had the ability to annotate their digital slides at the 4× scan and request higher magnification scans to more closely investigate areas not previously scanned, allowing for further investigation of areas of interest on the slide. Out of the 120 case reads, 21 rescans representing 16 cases were requested. Five cases were requested for rescan by both pathologists. The number of rescans varied substantially between pathologists (pathologist 1, n = 5; pathologist 2, n = 16). Of the 21 slides that were rescanned, 20 were either fully or partially concordant, and 1 was discordant with the diagnosis based on the glass slide.
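The rescan figures above are related by a simple inclusion-exclusion count, sketched below in Python. The sketch assumes that each rescan request from a pathologist corresponds to one case, which the text implies but does not state outright.

```python
# Rescan requests per pathologist, as reported in the text.
rescans_by_pathologist = {"pathologist 1": 5, "pathologist 2": 16}
cases_rescanned_for_both = 5

total_rescans = sum(rescans_by_pathologist.values())

# Cases requested by both pathologists are counted twice in the total,
# so subtract the overlap once to get the number of distinct cases.
distinct_cases_rescanned = total_rescans - cases_rescanned_for_both

concordant_rescans = 20  # fully or partially concordant, per the text
concordant_rescan_pct = round(100 * concordant_rescans / total_rescans)

print(total_rescans, distinct_cases_rescanned, concordant_rescan_pct)
```

This reproduces the 21 rescans across 16 cases given in the text and indicates that roughly 95% of rescanned reads were concordant with the glass slide diagnosis.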

Overall, the average quality rating was 3.63 (out of 5), with 88% (n = 105) of slides rated as 3.0 or above. Mean quality rating was significantly different between pathologists (P = .028; mean = 3.80 for pathologist 1 and 3.47 for pathologist 2 using a paired t test). However, there was no significant difference (P = .194) in average quality rating for discordant cases (mean = 3.20) compared to those with partial or full concordance (mean = 3.67).
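As a consistency check on the reported means, the overall rating should equal the read-weighted average of the subgroup means. The Python sketch below assumes 60 reads per pathologist and a 10/110 split between discordant and concordant reads, both of which follow from figures given elsewhere in the text; the function name `weighted_mean` is illustrative.

```python
def weighted_mean(groups: list[tuple[int, float]]) -> float:
    """Mean of group means, weighted by the number of reads in each group."""
    total_reads = sum(n for n, _ in groups)
    return sum(n * mean for n, mean in groups) / total_reads


# (number of reads, mean quality rating) for each subgroup.
by_pathologist = [(60, 3.80), (60, 3.47)]
by_outcome = [(10, 3.20), (110, 3.67)]   # discordant vs concordant reads

overall_from_pathologists = weighted_mean(by_pathologist)
overall_from_outcomes = weighted_mean(by_outcome)

print(f"{overall_from_pathologists:.3f} {overall_from_outcomes:.3f}")
```

Both groupings recover a value within rounding of the reported overall mean of 3.63, so the subgroup means are mutually consistent. Small differences are expected because the published means are themselves rounded to 2 decimal places.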

Discussion

In this study, ROI-DM cytology showed substantial concordance with the current gold standard, LM of glass cytology slides. The concordance percentages in this study are similar to previously published digital pathology concordance studies in human and veterinary medicine 3,4,9,10 and higher than the rates of multiple-pathologist assessment of second/third opinion histopathology. 13 Some degree of interobserver variation in diagnoses was expected due to differences in the pathologists’ pathology training institutions, years of experience, and prior exposure to digital pathology. Importantly, the frequency of full concordance did not statistically vary between pathologists in this study.

While ROI-DM cytology was frequently in concordance with LM, 10 of 120 (8%) paired findings were discordant (Suppl. Table S2). The scan quality rating was not significantly different for any category of agreement for either pathologist, suggesting absolute technical scan quality was not the primary driver of discordance. Rather, discordance was likely related to selection bias introduced by the scanning technician selecting nonrepresentative slide areas for ROI-DM capture, which was an intrinsic risk of a nonpathologist performing the scanning. Several examples of this issue occurred in this study, such as when both pathologists found the ROI-DM scan in case No. 33 nondiagnostic because it only contained blood. Similarly, a slide of a benign adnexal tumor in case No. 38 resulted in discordance because the ROI-DM scan overemphasized an area with mesenchymal cell and extracellular matrix components relative to the basal cell component.

These errors may be a consequence of the study design, which used nonveterinarians with minimal cytology training to perform the scanning; however, this choice was intentional as it more accurately mimicked the real-world application of this technology in a laboratory setting. Such mismatches could potentially be mitigated by scanning additional regions of interest and/or recommending rescans for suboptimal cases. These mismatches emphasize the importance of pathologist-hospital communication with this modality of digital cytology. Finally, there were cases where additional clinical information could have made a critical difference in the cytological interpretation (ie, cases marked as “skin mass” where the lesion location was in the mammary or perianal region). This information was intentionally withheld to avoid recall bias after the washout period.

The higher diagnostic agreement for malignant neoplasia in this study fits with previous cytology-histopathology correlation studies that demonstrate cytology usually has lower sensitivity, specificity, and positive predictive value for inflammatory and benign lesions than malignant neoplasia. 1 Potential reasons for this finding could include (1) higher cellularity and distinctive features of many neoplasms; (2) low infectious organism burden, making identification of bacteria/fungi more difficult when a subsection of a scanned slide is evaluated; and (3) the generally low cellularity and indistinct features of many benign diagnoses. Samples with a high index of suspicion for an underlying infectious etiology may require additional pathologist request scans or may warrant a subsequent review of glass slides. The limits of ROI technology will likely be mitigated as the price of whole-slide imaging technology falls within the reach of veterinary medicine. The ability to scan the entire slide (or slides) at higher magnification will better allow for the detection of rare organisms or cellular features of interest.

In 2 cases (case Nos. 60 and 41), the pathologist’s ROI-DM interpretations more closely matched the interpretation in the original pathology report than their own diagnosis of the glass slide. This suggests that while selection bias in scanning can exclude important cytologic elements, high-quality ROI-DM scans also have the potential to emphasize important cytologic features that may be overlooked in LM cytology.

One limitation of this study was that the design did not allow for repeated evaluation of LM and ROI-DM at several time points to evaluate intraobserver variability for each modality. Some degree of ROI-DM and LM discordance could be due to this well-documented phenomenon, rather than intrinsic differences between the modalities themselves 11 (Table 3). Another limitation involved the experience of the reviewing pathologist with digital ROI scans. Both pathologists were given a set of 10 training cases to review and gain familiarity with the online platform and digital slides. Despite this introductory training, other studies have indicated the importance of extended periods of training in digital pathology techniques. 16 Due to the asynchronous nature of ROI-DM and potential rescans, this study was unable to assess the reading pathologist time spent on each case and potential effect on concordance.

Another limitation of this study was the intentional identification of cases with a previous definitive diagnosis and the selection of slides by a board-certified pathologist. In an attempt to diminish this bias, the slide that was ultimately scanned was chosen based on a 10× review solely aimed at identifying sufficient cellular material, not optimal cellular contents. There was no specific attempt to confirm the original cytologic diagnosis at this selection step. In addition, the slide was then scanned by a veterinary technician without any specific guidance. This artificial case selection process allowed for greater clarity of comparison between ROI-DM and glass cytology modalities but did not evaluate the modalities in situations when the diagnosis was less clear. Future studies should examine these topics in greater depth, using more pathologists with multiple ROI-DM and glass slides. Also, studies with an inadequate washout period between digital and glass slide reads introduce the possibility of recall bias. 10 In an effort to minimize bias, our washout period met or exceeded the requirements suggested for the assessment of digital pathology systems. 11 These limitations highlight the fact that, while this study provides initial concordance data, it is not a true validation study of the technology. A true validation would require a prospective study using clinic-submitted materials of even broader sample types and of all possible diagnostic quality.

Conclusions

In this study, ROI-DM cytology yielded acceptable diagnostic quality samples and frequently agreed with standard LM across a range of veterinary case material. The discordant cases in this series highlight the potential importance of an active dialogue between clinicians and pathologists. This rapid, electronic transmission of data seems particularly well suited for this purpose. These findings further suggest that ROI-DM cytology may be a robust alternative for cytological diagnosis in situations warranting immediate diagnosis and/or in locations or situations where traditional light microscopic evaluation by a pathologist may be unavailable, cumbersome, or unacceptably delayed due to transport times.

Future prospective studies are necessary to truly validate point-of-care digital cytology by determining the diagnostic sensitivity, specificity, positive predictive value, and negative predictive value of ROI-DM for various disease contexts, as well as its performance with commonly used clinical stains.

Supplemental Material

Supplemental Material, DS1_VET_10.1177_0300985819846874 - Evaluation of Region of Interest Digital Cytology Compared to Light Microscopy for Veterinary Medicine

Supplemental Material, DS1_VET_10.1177_0300985819846874 for Evaluation of Region of Interest Digital Cytology Compared to Light Microscopy for Veterinary Medicine by Conor J. K. Blanchet, Eric J. Fish, Amy G. Miller, Laura A. Snyder, Julia D. Labadie and Paul R. Avery in Veterinary Pathology

Footnotes

Acknowledgements

We thank Jesse Henderkott for her assistance with scanning slides for this study and Ashli Hirai for administrative aid.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: C.J.K.B., P.R.A., E.J.F., and L.A.S. are stakeholders in Lacuna Diagnostics.

Funding

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material for this article is available online.

References
