Abstract

Aims

This study aimed to develop and validate a multimodal predictive model for the risk of strangulation in adhesive small bowel obstruction by integrating deep learning–based computed tomography imaging features and clinical electronic health records.

Methods

A retrospective, observational, multicenter study was conducted across three hospitals, with data from 225 patients used for model development and 123 patients for external validation. A three-dimensional convolutional neural network with a ResNet50 backbone was used to segment abdominal regions from computed tomography scans and classify strangulation risk. The multimodal model integrated deep learning predictions with top electronic health record features using the XGBoost algorithm; global and local interpretability were achieved through variable importance ranking and local interpretable model-agnostic explanations.

Results

The multimodal model demonstrated superior performance in predicting strangulation within 7 days of admission, achieving an area under the curve of 0.915 in the training set and 0.912 in the test set, outperforming single-modality models. Calibration plots showed good alignment between predicted and observed outcomes, decision curve analysis demonstrated significant clinical utility, and net reclassification improvement confirmed that deep learning enhanced the model’s predictive ability.

Conclusion

This study highlights the potential of multimodal artificial intelligence combined with clinical data to improve diagnostic accuracy and support clinical decision making in adhesive small bowel obstruction.

Introduction

Adhesive small bowel obstruction (ASBO) remains a leading cause of emergency surgical admissions. 1 In line with the current Bologna guidelines, ASBO can be managed nonoperatively with intravenous fluid administration, nasogastric tube placement, and the prescription of oral water-soluble contrast, provided there is no suspicion of intestinal ischemia, i.e. strangulation.2,3 Immediate surgical intervention is mandatory if strangulation is suspected. The overall mortality rate following laparotomy for ASBO exceeds 7%, and a delay in emergency laparotomy beyond 72 hours after admission is associated with higher 30-day postoperative mortality and prolonged hospital stays. When nonoperative management fails, costs escalate more than sevenfold.4,5 However, identifying patients at high risk of strangulation remains challenging. Robust predictive models that can accurately forecast strangulation could facilitate earlier surgical decision making and ultimately improve both short- and long-term outcomes.

Although adhesions are not directly visible on computed tomography (CT) scans, CT imaging can accurately differentiate among the various causes of bowel obstruction by ruling out other etiologies.6,7 CT is widely acknowledged as the preferred imaging modality when the diagnosis of small bowel obstruction (SBO) is uncertain, and it is essential for assessing the need for urgent surgery. 8

Recent advances in clinical artificial intelligence have highlighted the transformative potential of multimodal models, which integrate diverse data types (e.g. tabular clinical data, imaging, and text) to enhance diagnostic and prognostic accuracy.9–11 Multimodal fusion leverages complementary information from heterogeneous sources, addressing the limitations of single-modality approaches and aligning with clinicians’ holistic decision-making processes. 12 The integration of imaging and nonimaging data enhances predictive power by resolving data heterogeneity, whereas multimodal approaches emulate clinicians’ reliance on multiple data types for diagnosis and prognosis, mirroring real-world workflows.13,14

Based on this rationale, this study identified clinical risk factors at admission associated with the risk of strangulation in ASBO from basic demographics, symptoms, laboratory tests, and abdominal CT imaging features analyzed using three-dimensional (3D) deep learning. A multimodal model combining tabular electronic health record (EHR) data with deep learning–based CT features was developed to provide a critical basis for rational decision making on the timing of surgery for ASBO.

Methods

Study design

This investigation was a retrospective, observational, multicenter study conducted across three hospitals: the First Affiliated Hospital of Soochow University, the Jintan Affiliated Hospital of Jiangsu University, and Suzhou Yongding Hospital. The prediction models were developed using data from patients at the First Affiliated Hospital of Soochow University and externally validated using data from patients at Suzhou Yongding Hospital and the Jintan Affiliated Hospital of Jiangsu University. The study period spanned January 2016 to December 2024. Ethical approval was obtained from the ethics committee of the First Affiliated Hospital of Soochow University (approval number: 2022098). Informed consent was waived for this retrospective study. The study flowchart is depicted in Figure 1.

Figure 1. Flowchart of the study.

Participants

Patients presenting to the surgical emergency department with CT-confirmed ASBO and clinically relevant blood samples were included. Patients were excluded if they were younger than 18 years, were pregnant, had undergone abdominal surgery within the preceding 30 days, had inflammatory bowel disease, or had SBO resulting from intraluminal obstruction, abdominal wall hernia, or peritoneal carcinomatosis. No additional study interventions were performed after inclusion; patients were managed according to standard hospital protocols. Emergency surgery was performed if strangulation was suspected; otherwise, nonoperative treatment was initiated, including intravenous hydration and nasogastric tube insertion.2,5,15 Signs of strangulation during nonoperative treatment or failure to resolve the obstruction were indications for urgent surgery. 16

Outcome and variables

The primary study outcome was strangulation, defined by operative findings of intestinal ischemia (either bowel necrosis or reversible ischemia) within 7 days of admission. Data collected at admission from EHRs included the following: (a) patient demographics, medical conditions, prior abdominal surgeries, and prior SBO; (b) clinical symptoms and signs; and (c) laboratory tests, including complete blood counts, liver and kidney function tests, electrolytes, and markers of glucose and lipid metabolism. Non–contrast-enhanced (plain) CT images obtained within 24 hours of admission were also collected. Researchers collecting the data were blinded to patient outcomes. Missing data were handled with median imputation for continuous variables and mode imputation for categorical variables, as part of the standard preprocessing pipeline before model development. Key variables were defined as follows: (1) fever was defined as a body temperature ≥38.0°C at the time of admission; (2) abdominal guarding was recorded from the attending physician’s physical examination and indicated involuntary muscle contraction upon palpation, a sign of peritoneal irritation; (3) prior SBO events were identified from the medical history documented in the EHR, including any previous clinical or radiological diagnosis of SBO; and (4) all laboratory values (e.g. white blood cell (WBC) count and C-reactive protein (CRP)) were the first test results obtained within 24 hours of admission.
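The imputation rule described above can be illustrated with a minimal sketch. The function name `impute_column` and the example values are hypothetical, and the source does not state whether the fill values were derived from the training cohort alone; the sketch simply shows median filling for a continuous column and mode filling for a categorical one.

```python
from collections import Counter
from statistics import median


def impute_column(values, kind):
    """Fill None entries with the median (continuous) or the mode (categorical)."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("column has no observed values to impute from")
    if kind == "continuous":
        fill = median(observed)
    elif kind == "categorical":
        # most_common(1) returns the single most frequent observed value
        fill = Counter(observed).most_common(1)[0][0]
    else:
        raise ValueError(f"unknown column kind: {kind!r}")
    return [fill if v is None else v for v in values]


# Hypothetical admission values; None marks a missing entry.
crp = [29.6, None, 57.3, 12.0]
guarding = ["absent", "present", None, "absent"]

crp_filled = impute_column(crp, "continuous")
guarding_filled = impute_column(guarding, "categorical")
```

When a model is validated externally, the fill values are usually computed on the development data and reused unchanged on the validation data, so that no information from the validation cohort leaks into preprocessing.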

Deep learning–based CT model

We developed a supervised deep learning framework with two stages: the abdominal and pelvic regions were first segmented from CT digital imaging and communications in medicine (DICOM) files, and the segmented volumes were then classified into binary categories (strangulation present or absent) by a 3D convolutional neural network (CNN) with a ResNet50 backbone.

Abdominal and pelvic region segmentation

Segmentation of the abdominal and pelvic regions from CT DICOM files was the initial step in the framework. This preprocessing step was crucial to focus the model on relevant regions while reducing computational load and minimizing irrelevant noise.

Data preprocessing

CT DICOM files were converted into a format suitable for deep learning. Each CT scan, comprising multiple two-dimensional slices, was stacked into a 3D volume. Hounsfield unit values were normalized to ensure consistency across scans.
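As a rough illustration of the normalization step, the sketch below clips Hounsfield units to a fixed window and rescales them to the range 0 to 1. The window limits (−160 to 240 HU) and the function name `normalize_hu` are assumptions chosen for illustration; the source does not report the window or the scaling scheme that was actually used.

```python
def normalize_hu(volume, lo=-160.0, hi=240.0):
    """Clip Hounsfield units to [lo, hi] and rescale linearly to [0, 1].

    `volume` is a nested list indexed as volume[slice][row][column].
    The default window is an assumed soft-tissue window, not a value
    taken from the study.
    """
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    span = hi - lo
    return [
        [[(min(max(v, lo), hi) - lo) / span for v in row] for row in slice_]
        for slice_ in volume
    ]


# Two hypothetical 2 x 2 slices stacked along the first axis into a tiny 3D volume.
slices = [
    [[-1000.0, -160.0], [40.0, 240.0]],
    [[0.0, 80.0], [1000.0, -500.0]],
]
scaled = normalize_hu(slices)
```

Clipping before rescaling keeps air and dense bone from dominating the intensity range, so that soft-tissue contrast occupies most of the normalized scale.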

Segmentation model

A U-Net architecture, which is widely used for medical image segmentation, was adopted. The model was trained on CT scans in which expert radiologists had manually delineated the abdominal and pelvic regions. The model learned to identify these regions based on anatomical features and intensity patterns in the CT images.

Postprocessing

After segmentation, the abdominal and pelvic regions were resized to a standard dimension (e.g. 256 × 256 × 64) to ensure uniformity for subsequent processing. This step also involved noise reduction and preservation of anatomical integrity.

Supervised deep learning model for binary classification

The second component of our framework was a supervised deep learning model designed to classify segmented abdominal and pelvic regions into binary categories (strangulation present or absent).

Model architecture

A 3D CNN with a ResNet50 backbone was used. The model, which comprised multiple convolutional layers with batch normalization and rectified linear unit (ReLU) activation functions, extracted spatial and volumetric features from CT scans to capture complex patterns associated with strangulation.

Training and validation

The model was trained on segmented CT scans labeled as “strangulation present” or “absent” based on clinical diagnosis. The dataset was divided into training (80%) and validation (20%) sets. Training was performed using the Adam optimizer with a learning rate of 0.001 and a batch size of eight. The binary cross-entropy loss function was used for training.
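The split and the loss function can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than the training code: the helper names, the random seed, and the use of 225 scans as the pool being split are hypothetical, and the source does not say whether the split was stratified by outcome.

```python
import math
import random


def split_indices(n, val_fraction=0.2, seed=0):
    """Shuffle indices 0..n-1 and return (train_indices, validation_indices)."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_val = round(n * val_fraction)
    return idx[n_val:], idx[:n_val]


def binary_cross_entropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


# Hypothetical pool of 225 scans split 80/20.
train_idx, val_idx = split_indices(225)

# Three hypothetical labels (1 = strangulation present) and predicted probabilities.
loss = binary_cross_entropy([1, 0, 1], [0.9, 0.2, 0.6])
```

The loss penalizes confident wrong predictions most heavily: a predicted probability of 0.6 for a true positive contributes more to the mean than a predicted probability of 0.9.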

Multimodal model

To select EHR variables, we first performed univariate analyses comparing clinical and laboratory features between the strangulation and nonstrangulation groups in the training cohort. Variables demonstrating significant differences and high clinical relevance were selected for the next step. A two-way multimodal model for ASBO strangulation was then constructed by integrating predictions from the deep learning–based CT model with the top four tabular EHR features using the XGBoost algorithm. 17 This approach ensured the use of robust, interpretable, and widely available clinical predictors for multimodal integration.

Visualization

The characteristics and performance of the multimodal model were evaluated using multiple techniques, including global interpretation (variable importance ranking), local interpretation (local interpretable model-agnostic explanations, LIME), calibration plots, and decision curve analysis (DCA) plots.

LIME provides visual explanations of each feature’s contribution to predictions, offering transparency and interpretability for individual predictions. 18 Partial dependence plots (PDPs) provide a global perspective on the average marginal effect of each feature on the predicted probability of strangulation. Calibration plots assess the alignment between predicted probabilities and observed outcomes, with deviations from the diagonal indicating miscalibration. 19 DCA evaluates the clinical utility of predictive models by quantifying net benefit across decision thresholds, incorporating clinical consequences, and comparing models against default strategies. 20
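The net benefit plotted in a decision curve follows the standard definition: true positives per patient minus false positives per patient, weighted by the odds of the threshold probability. The sketch below applies that definition to hypothetical outcomes and predictions; the function names are illustrative and are not taken from the study’s code.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating every patient whose predicted risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    return tp / n - (fp / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(y_true, threshold):
    """Net benefit of the default strategy that treats every patient."""
    return net_benefit(y_true, [1.0] * len(y_true), threshold)


# Hypothetical outcomes (1 = strangulation) and predicted probabilities.
y = [1, 1, 0, 0, 0]
p = [0.9, 0.7, 0.6, 0.2, 0.1]

nb_model = net_benefit(y, p, 0.5)
nb_all = net_benefit_treat_all(y, 0.5)
```

The strategy of treating no one has a net benefit of zero at every threshold, so a model is useful at a given threshold only if its net benefit exceeds both zero and the treat-all value.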

Statistical analysis and software

Statistical analyses were performed using R (version 4.1.0) and Python (version 3.9). Continuous variables were reported as mean ± SD for normally distributed data and as median with interquartile range for non-normally distributed data. Differences in variable distributions between groups were assessed using t-tests or Mann–Whitney U-tests for continuous variables and χ² or Fisher’s exact tests for categorical variables. The area under the curve (AUC) was calculated for receiver operating characteristic curves. Model AUCs were compared using DeLong’s test, and the net reclassification improvement (NRI) was used to assess risk prediction improvements. 21 The tidymodels platform (version 0.2.0) and rms package (version 6.2-0) were used for tabular data–based model development, whereas deep learning models were constructed using the Keras Python platform with TensorFlow 2.8.0 as the backend.
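For readers less familiar with the AUC, it equals the probability that a randomly chosen patient with strangulation receives a higher predicted score than a randomly chosen patient without it, with ties counted as one half. The sketch below computes it directly from that pairwise definition on hypothetical data; it illustrates the metric and is not the routine used in the study.

```python
def auc(y_true, y_score):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("both outcome classes must be present")
    wins = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5  # ties count as half a concordant pair
    return wins / (len(pos) * len(neg))


# Hypothetical outcomes (1 = strangulation) and model scores.
y = [1, 1, 0, 0, 0]
scores = [0.9, 0.4, 0.6, 0.2, 0.1]
value = auc(y, scores)
```

In this toy example five of the six positive-negative pairs are ranked correctly, which gives an AUC of about 0.83.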

Results

Patient characteristics

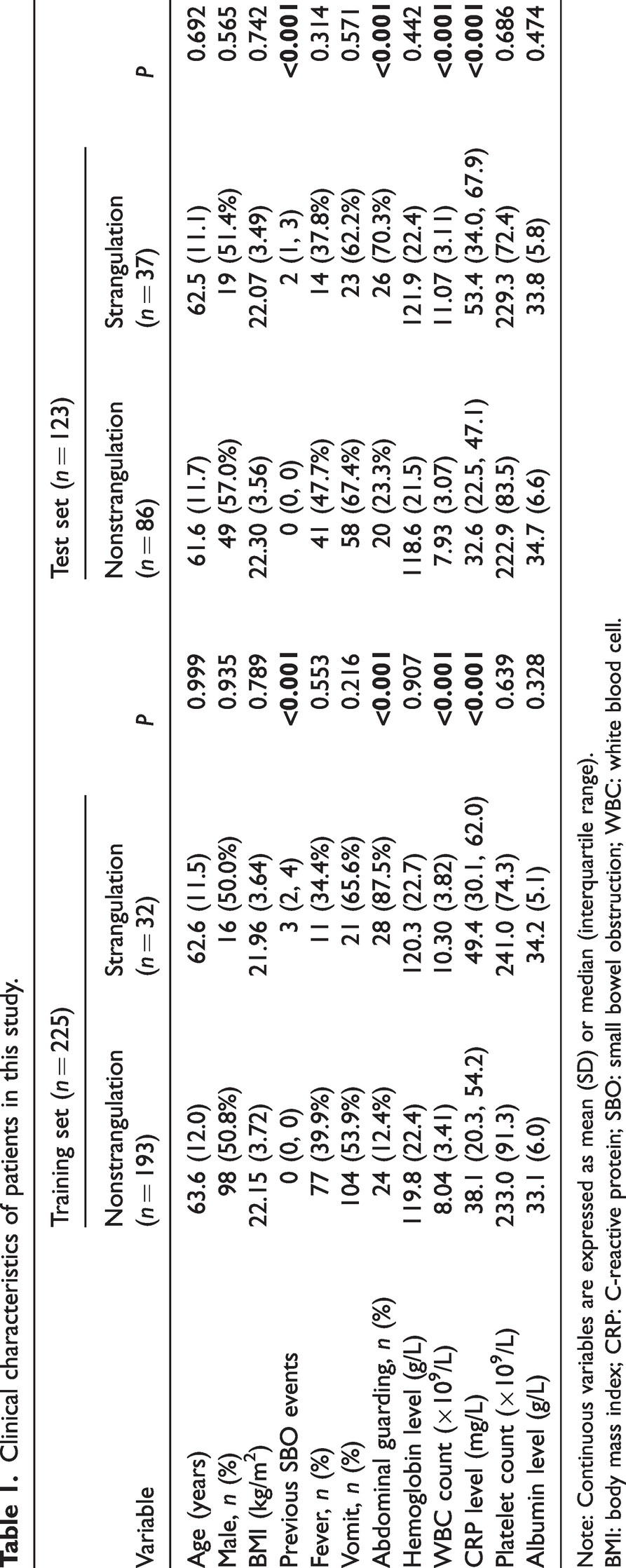

A total of 225 patients with ASBO from the First Affiliated Hospital of Soochow University were included in the training set, whereas 123 patients from Suzhou Yongding Hospital and the Jintan Affiliated Hospital of Jiangsu University were included in the test set. The demographic and clinical characteristics of the patients in the two sets are summarized in Table 1.

Table 1. Clinical characteristics of patients in this study.

Note: Continuous variables are expressed as mean (SD) or median (interquartile range).

BMI: body mass index; CRP: C-reactive protein; SBO: small bowel obstruction; WBC: white blood cell.

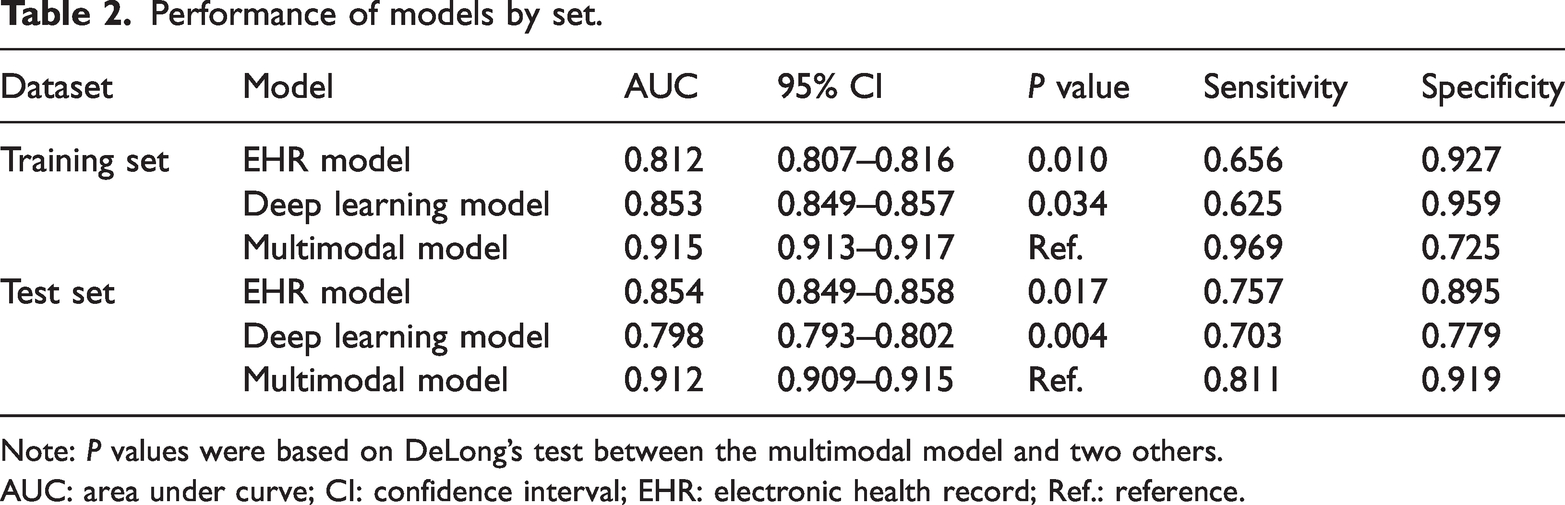

Model performance

As illustrated in Table 2 and Figure 2(a) and (b), the multimodal model exhibited superior predictive performance for identifying strangulation within 7 days of admission in the training set (AUC: 0.915). This AUC was significantly higher than those of the single-modality models (deep learning–based CT model, AUC: 0.853, P = 0.034; tabular data–based EHR model, AUC: 0.812, P = 0.010). Similarly, in the test set, the multimodal model achieved the highest performance (AUC: 0.912), outperforming the single-modality models (deep learning–based CT model, AUC: 0.798, P = 0.004; tabular data–based EHR model, AUC: 0.854, P = 0.017). The multimodal model also showed higher sensitivity (0.969 in the training set and 0.811 in the test set) than the single-modality models in both cohorts.

Table 2. Performance of models by set.

Note: P values were based on DeLong’s test comparing the multimodal model with each of the two single-modality models.

AUC: area under curve; CI: confidence interval; EHR: electronic health record; Ref.: reference.

Figure 2. ROC curves of the models. (a) The training set and (b) the test set. ROC: receiver operating characteristic.

Global explainability of the multimodal model: variable importance

As shown in Figure 3(a), the XGBoost algorithm was used to assess the relative importance of the five input features in the multimodal model: the predicted probability from the deep learning CT model and the four pre-selected EHR variables (WBC, CRP, abdominal guarding, and prior SBO episodes). Feature importance was calculated based on the total gain from splits using each feature, reflecting its contribution to the model’s predictive performance. The deep learning CT prediction emerged as the most important feature, followed by WBC, CRP, abdominal guarding, and the number of previous SBO episodes. This ranking highlights the complementary value of both imaging and clinical data, with inflammatory markers and physical signs playing significant roles in the final prediction. PDPs (File S2) illustrate how these features influence the model’s predictions. A PDP shows the average predicted outcome as a function of a single feature, marginalizing over the values of all other features. This helps visualize the relationship between a feature and the predicted risk, revealing whether the relationship is linear, monotonic, or more complex.
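The marginalization that defines a PDP can be made concrete with a short sketch: for each grid value, the feature of interest is overwritten in every row, the model is evaluated, and the predictions are averaged. The `toy_predict` function below is a hypothetical logistic stand-in for the fitted model, and the feature names `dl_prob` and `crp` and all numeric values are assumptions made only for illustration.

```python
import math


def partial_dependence(predict, rows, feature, grid):
    """Average prediction over all rows with `feature` forced to each grid value."""
    curve = []
    for value in grid:
        # Copy each row with the feature overwritten; other features keep
        # their observed values, which is the marginalization step.
        preds = [predict({**row, feature: value}) for row in rows]
        curve.append(sum(preds) / len(preds))
    return curve


def toy_predict(row):
    """Hypothetical stand-in for the fitted model: a fixed logistic function."""
    z = -3.0 + 4.0 * row["dl_prob"] + 0.02 * row["crp"]
    return 1.0 / (1.0 + math.exp(-z))


# Two hypothetical patients described by the CT model output and CRP (mg/L).
rows = [{"dl_prob": 0.1, "crp": 10.0}, {"dl_prob": 0.8, "crp": 60.0}]
curve = partial_dependence(toy_predict, rows, "dl_prob", [0.0, 0.5, 1.0])
```

Plotting `curve` against the grid gives the partial dependence of the predicted risk on the CT model output; with this toy model the curve rises monotonically.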

Figure 3. Visualized explanation for the multimodal model. (a) Global interpretation: variable importance ranking. (b and c) Local interpretation: LIME. LIME: local interpretable model-agnostic explanations.

Local explainability of the multimodal model: LIME plots

Figure 3 also presents the prediction process of the multimodal model for two randomly selected cases. For an ASBO patient without strangulation (Figure 3(b)), the model’s predicted value was 0.008, which was consistent with the patient’s actual outcome. The five key variables contributing to this prediction were the deep learning model (−0.4557), prior SBO events (never), CRP (29.6 mg/L), WBC (5.35 × 10⁹/L), and abdominal guarding (absent), with respective contributions of −0.097, −0.054, −0.022, −0.015, and −0.005.

Conversely, Figure 3(c) illustrates the prediction for an ASBO patient with strangulation. The model’s predicted value was 0.961, consistent with the patient’s true outcome. The five key contributors to this prediction were CRP (57.3 mg/L), the deep learning model (0.9153), abdominal guarding (present), WBC (10.40 × 10⁹/L), and prior SBO events (three episodes). Their respective contributions were +0.337, +0.311, +0.062, +0.006, and +0.004.

Calibration plots of the multimodal model

In the training set (Figure 4(a)), the multimodal model demonstrated good calibration, with no significant difference between the observed and predicted probabilities (mean absolute error: 0.022). In the test set (Figure 4(b)), the mean absolute error was 0.019, indicating accurately predicted probabilities.

Figure 4. Evaluation of the multimodal model. Calibration plots: (a) the training set and (b) the test set. DCA plots: (c) the training set and (d) the test set. NRI plots (standard model: based on clinical tabular variables; new model: based on clinical tabular variables + prediction of deep learning–based CT model): (e) the training set and (f) the test set. CT: computed tomography; DCA: decision curve analysis; NRI: net reclassification improvement.

DCA plots of the models

Figure 4 also displays the DCA plots, with the y-axis representing the net benefit. The green line corresponds to the tabular data–based EHR model, the red line corresponds to the deep learning–based CT model, and the blue line corresponds to the multimodal model. The “All” line assumes that all ASBO patients had strangulation, whereas the “None” line assumes no ASBO patient had strangulation.

In the training set (Figure 4(c)), the multimodal model yielded net benefit across all patients and outperformed the single-modality models, except at threshold probabilities of 85%–95%. In the test set (Figure 4(d)), the multimodal model again provided a net benefit across all patients and outperformed the single-modality models, except at threshold probabilities of 90%–92%.

NRI plots: prediction of deep learning–based CT model added to tabular data

In the training set, as shown in Figure 4(e), the NRI plot indicated better case reclassification with the multimodal model compared to the model based solely on the top four tabular variables. A positive NRI (0.250) confirmed that adding deep learning predictions led to a net improvement in risk stratification. Similarly, in the test set (Figure 4(f)), the NRI was 0.271, indicating that integrating deep learning model predictions into the tabular data significantly enhanced the model’s classification ability.
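To show what a positive NRI means, the sketch below computes a category-free (continuous) NRI: the net proportion of events whose predicted risk moves up under the new model, plus the net proportion of non-events whose predicted risk moves down. The source does not state whether a categorical or a continuous NRI was used, so the continuous form, the function name, and the data are assumptions made for illustration.

```python
def continuous_nri(y_true, p_old, p_new):
    """Category-free NRI of a new model's risks relative to an old model's."""
    events = [(o, n) for y, o, n in zip(y_true, p_old, p_new) if y == 1]
    nonevents = [(o, n) for y, o, n in zip(y_true, p_old, p_new) if y == 0]
    if not events or not nonevents:
        raise ValueError("both outcome classes must be present")

    def net_upward(pairs):
        up = sum(1 for o, n in pairs if n > o)
        down = sum(1 for o, n in pairs if n < o)
        return (up - down) / len(pairs)

    # Upward movement is desirable for events and undesirable for non-events.
    return net_upward(events) - net_upward(nonevents)


# Hypothetical outcomes and risks from the tabular-only (old) and multimodal (new) models.
y = [1, 1, 1, 0, 0, 0, 0]
p_old = [0.5, 0.6, 0.4, 0.3, 0.5, 0.2, 0.4]
p_new = [0.7, 0.8, 0.3, 0.1, 0.6, 0.1, 0.2]

nri = continuous_nri(y, p_old, p_new)
```

In this toy example the events contribute a net upward movement of 1/3 and the non-events a net downward movement of 1/2, so the NRI is about 0.83; a value above zero indicates that the added predictor moves risks in the correct direction on balance.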

Discussion

This study integrates clinical tabular data with deep learning–based CT features to develop a multimodal model for predicting strangulation in patients with ASBO within 7 days after admission. The multimodal model demonstrated superior performance in the training set, outperforming single-modality models while maintaining robust calibration and clinical utility. Additionally, the model’s robustness and generalizability were confirmed through validation in two independent centers. The use of explainable methods, such as variable importance and local prediction interpretation, significantly enhanced the model’s transparency and interpretability.

ASBO is a prevalent surgical emergency associated with high morbidity and mortality.2,5,15 Surgeons should recognize that adhesions leading to such obstructions are often the result of prior abdominal surgeries or underlying diseases. Guidelines recommend nonoperative management for all patients with ASBO unless signs of peritonitis, strangulation, or bowel ischemia are present. 3 Although operative treatment slightly reduces recurrence risk, it does not justify preferring a primary surgical approach. 22 Emergency surgical exploration carries significant morbidity, with a notable risk of bowel injury and potential for reduced postoperative quality of life. Surgeons frequently face difficult decisions regarding whether and when to perform surgical resection for patients with ASBO.16,23,24 Operating too early can increase costs and expose patients to surgical risks compared to nonoperative treatments, whereas delayed surgery may lead to prolonged hospital stays and a higher risk of intra-abdominal sepsis. Traditional clinical evaluations for assessing bowel viability are often insufficient in terms of timing and accuracy.4,25

Current helical CT scans offer excellent diagnostic performance for SBO, with approximately 90% accuracy in predicting strangulation and the need for urgent surgery.6,7,26 CT imaging remains the most commonly used radiologic method for assessing the severity of ASBO. 8 Previous studies have developed prediction models combining radiologic, laboratory, or clinical features to detect strangulation or predict nonoperative management failure. 27 In 2010, Zielinski et al. 4 identified preoperative risk factors for strangulating SBO, including free intraperitoneal fluid, mesenteric edema, lack of the “small bowel feces sign,” and vomiting, with a sensitivity of 96% and a positive predictive value of 90%. Similarly, Maraux et al. 28 identified risk factors for nonoperative management failure, including a Charlson index ≥4, distal obstruction, and a small bowel diameter-to-vertical abdominal diameter ratio >0.34. More recently, Räty et al. 29 developed and validated prediction models for strangulation and nonoperative treatment failure in ASBO across three Finnish hospitals, incorporating variables such as neutrophil–leucocyte ratio, previous SBO, abdominal guarding, and CT findings. However, these studies primarily relied on radiologist evaluations or radiomics for CT feature extraction, rather than deep learning. To date, no prediction model has utilized a 3D deep learning algorithm for CT feature extraction to guide surgical timing in ASBO.

Multimodal artificial intelligence in clinical medicine involves integrating diverse data types, including tabular EHRs, medical imaging, radiomics, genomic data, and unstructured text, to enhance diagnostic and prognostic accuracy. 30 Although single-modality models have shown utility, they often fail to capture the complexity of real-world clinical decision making. 31 Multimodality is reshaping precision medicine by reflecting clinician cognition and surpassing traditional scoring systems. 14

Our study demonstrates that integrating deep learning–based CT features with clinical EHR data offers a robust tool for predicting the risk of strangulation in patients with ASBO. Its distinguishing feature is the use of a deep learning algorithm to extract CT features, which are then fused with clinical tabular data; this fusion yielded better predictive accuracy than models relying solely on clinical or CT data. The proposed framework segments the abdominal and pelvic regions from CT DICOM files and classifies them into binary categories (strangulation present or absent). A 3D CNN with a ResNet50 backbone enables comprehensive feature extraction from CT scans, resulting in high classification accuracy. This framework has the potential to enhance the efficiency of bowel obstruction evaluation in clinical settings, ultimately improving patient outcomes. Moreover, the model’s ability to handle high-dimensional and multimodal data enhances its accuracy and robustness. Additionally, the model’s interpretability is supported by global and local explanation techniques. Calibration, DCA, and NRI plots further substantiate the model’s accuracy and clinical benefit.

This study is subject to certain limitations. First, the study population is primarily drawn from the Chinese population, which may limit the model’s applicability to other racial and regional groups. Future research should validate the model in diverse populations to ensure its broad applicability. Second, retrospective data collection from multiple centers resulted in some missing data. Although strict criteria and large sample sizes can mitigate these issues, prospective studies are necessary to further validate the multimodal model’s performance. Third, the case studies were sourced from the emergency department, and the deep learning model was developed using plain CT images. Future research should explore the potential benefits of contrast-enhanced CT scans, which may provide more detailed imaging and enhance the model’s applicability in broader clinical settings.

This study successfully developed and validated predictive models for the risk of strangulation in patients with ASBO by integrating data from multiple modalities across three medical centers, including clinical EHR-based tabular variables and deep learning–based CT prediction. This approach may assist medical practitioners in identifying high-risk cases that require early operative treatment and in enhancing management strategies for patients with SBO.

Supplemental Material

Supplemental material, sj-pdf-1-imr-10.1177_03000605251378951 for Multimodal predictive model for strangulation risk in adhesive small bowel obstruction using deep learning and electronic health record data by Han Wang, Jing Wu, Xianglin Ding, Zhaocheng Ruan, Shiqi Zhu, Yu Wang, Lihe Liu, Jiaxi Lin, Jinzhou Zhu and Xin Chen in Journal of International Medical Research

Footnotes

Acknowledgments

We thank Dr Gu Chenqi and Dr Huang Zhou for their involvement in the manual annotation of the computed tomography images.

Author contributions

H. Wang, L. Liu, and J. Lin wrote the manuscript; J. Wu, Z. Ruan, Y. Wang, and S. Zhu collected clinical data; H. Wang, L. Liu, J. Lin, and J. Zhu analyzed the clinical data; and X. Chen and X. Ding contributed to the study design.

Data availability statement

The code used in this study is available upon reasonable request from the corresponding author.

Declaration of conflicting interests

The authors declare that they have no competing interests and no financial disclosures.

Funding

This study was supported by The National Natural Science Foundation of China (82000540), Suzhou Clinical Center of Digestive Diseases (Szlcyxzx202101), Youth Program of Suzhou Health Committee (KJXW2019001), the Open Fund of Key Laboratory of Hepatosplenic Surgery, Ministry of Education (GPKF202304), Frontier Technologies of Science and Technology Projects of Changzhou Municipal Health Commission (QY202309), and Wujiang Health Committee (WWK202513).

Supplemental material

Supplemental material for this article is available online.
