Abstract

1. Looking Back to Look Forward: The Big Data and Administrative Data Wave

A decade ago, the research community witnessed a surge of excitement about “Big Data.” There were ambitious aspirations of supplementing or even replacing surveys with new data streams, such as social media data and administrative records. In Europe, and here within the Journal of Official Statistics, Daas et al. (2015) played an instrumental role in showcasing the large potential of digital data streams such as administrative records, credit card data, and other digital footprints to provide more real-time insights compared to traditional survey approaches.

Internationally, organizations like the American Association for Public Opinion Research (AAPOR) have addressed public concerns about the potential “death” of surveys, given the theoretical ability of vast digital data to reveal patterns in human behavior, as well as the growing threat of data collection costs outpacing available budgets in official statistics. AAPOR’s interdisciplinary task force report (Japec et al. 2015) on this topic remains relevant today, as the issues it raised continue to hold significance.

Government panels and commissions worldwide deliberated on how to integrate administrative data sources into official statistics. Prominent in the U.S. was the Commission for Evidence-Based Policymaking, ultimately laying the groundwork of new legislation that came into pass in the US in 2018 requiring each government agency to develop and maintain a comprehensive data inventory and designate a Chief Data Officer (https://www.congress.gov/bill/115th-congress/house-bill/4174). The council of such Chief Data Officers was charged to establish government-wide best practices for the use, protection, dissemination, and generation of data and for promoting data sharing agreements among agencies. The process is still ongoing with many concrete suggestions in discussion (Lane 2020), some inspired by New Zealand’s Integrated Data Infrastructure, which was established in 2011 (Jones et al. 2022).

Simultaneously, research centers started to develop robust cloud-based infrastructures and secure data enclaves. These environments could feasibly house multiple data sources from both the public and private sectors. A wave of new training initiatives and skill-building programs emerged to educate government employees and researchers about blending administrative records, social media streams, and wearable device data with traditional survey data (Kreuter et al. 2019). Many expected that the “Big Data revolution” would fundamentally transform the way large-scale surveys were conducted or replace such surveys altogether.

And yet, even as these data streams opened exciting possibilities, the field encountered real obstacles. Various forms of missingness, coverage problems, complex sampling biases, and ethical concerns around personal data usage became more apparent. Researchers soon realized that while Big Data could be a strong supplement, it often failed to stand alone as a replacement for carefully designed survey samples. In response, the field began to think more creatively about how to integrate a broader array of data sources into study designs. That shift—where a conventional survey, administrative data, and digital trace data are fused into a common data ecosystem—remains a key consideration as we look to the future (National Academies of Sciences, Engineering, and Medicine 2023).

2. Renewed Emphasis on Surveys During the COVID-19 Pandemic

Any discussion of data collection in the 2020s cannot ignore the role of the COVID-19 pandemic. In early 2020, numerous governments and public health agencies needed up-to-the-minute information that could signal an impending surge in infections. While hospital data and other administrative sources continued to be invaluable, and tracing data spurred a lot of interest, some of the fastest and most flexible insights came from rapidly deployed surveys.

One major example was a worldwide survey initiative carried out through collaborations among universities—including my own, the University of Maryland—public health entities (e.g., the World Health Organization), and social media platforms such as Meta Inc. (then still Facebook). By administering short surveys to a random sample of active Facebook users, researchers could rapidly collect self-reported symptom data from millions of participants across different regions. The aggregated results, shared swiftly with global and local health authorities, allowed the early detection of potential COVID-19 hotspots (Astley et al. 2021; Salomon et al. 2021).

Although this survey sample was obviously not a perfect mirror of every population (the demographic composition of social media users deviates from the composition in the general population to different degrees across countries), for the specific purpose of real-time outbreak detection, the data signaled infection trends effectively enough to guide public health responses.

Importantly, the pandemic shined a spotlight on the need to evaluate the appropriateness of a data stream for a given research or reporting questions. Instead of reflexively trying to create a single “perfect” sample with 100% response rates—an increasingly elusive ideal—researchers began considering whether a given data stream answered the specific question at hand, and whether any biases in that data source critically undermined the reliability of the intended analysis. In practical terms, it meant balancing the cost, speed, and data quality trade-offs in novel ways. Many suspect that this more flexible, integrative approach is worth exploring further (Radermacher 2020), especially as budgetary pressures drive agencies toward efficient, multipurpose designs like standing panels, but also as new crises demand quick, targeted data collection that prioritizes timeliness over a single uniform standard of perfection. A recent Nature commentary by Lane et al. (2024) explores such a flexible, research question focused approach in the context of measuring the economic impact of public investments in AI.

3. New and Evolving Roles for Large Language Models (LLMs)

In the last two to three years, the conversation around data collection has pivoted sharply to the potential of AI. While machine learning models have been used for a while, especially in the context of survey weighting or prioritizing case selections (Buskirk et al. 2018), the advent of large language models (LLMs) such as GPT-type systems has created a massive amount of experimentation and innovation. Early adoption has shown that these models could be leveraged in numerous ways across the data collection lifecycle—from questionnaire design and automated coding of open-ended responses to more experimental uses like synthetic data generation (Learner 2024; Rothschild et al. 2024; Schmidt et al. 2024).

3.1. AI-Assisted Questionnaire Design

The act of writing survey questions may appear straightforward to the uninitiated, but experts know that designing well-structured, unbiased, and methodologically sound survey instruments is time-consuming and requires specialized skills (Krosnick and Presser 2010). There is also a scarcity of questionnaire design experts to meet the volume of requests in both academic and market research contexts.

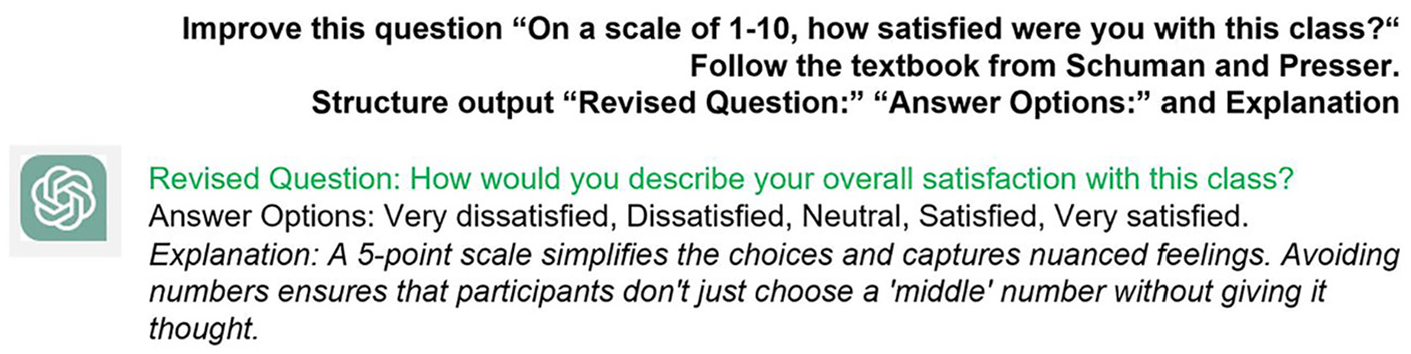

Large language models are surprisingly capable of assisting with this process. By prompting an AI system with clear instructions—such as “draft a set of questions that measure satisfaction with a university course, for an audience of adult learners with at least a high school education”—researchers can obtain a decent first draft of a questionnaire. The real value emerges when one refines that prompt: for instance, by mentioning specific guidelines in question design, referencing best practices from recognized experts, or explicitly requiring explanation of the changes it makes (see Figure 1). Early findings suggest that when carefully directed, these models can correct vague or biased questions, propose more precise wording, and recommend suitable response scales (Buskirk et al. 2024).

Example question improvement based on known and published guidelines.

Moreover, language models can immediately translate draft instruments into multiple languages, adjusting reading levels and verifying that key concepts are consistent across cultural contexts, at least for high resourced languages, though the field is developing fast for others (Lai et al. 2024). This function could be particularly useful in large-scale multinational surveys. If a language model also has exposure to existing methodology research (e.g., from high-quality textbooks or articles), it can incorporate recognized best practices. Language models have also been used in lieu of people to pretest the readability of question text for specific populations. Although the final product still requires careful human review—expert input is essential for detecting subtle flaws—there is broad enthusiasm for the efficiency gains and near-instant creativity these AI models can offer. Here, as well as in the other two areas discussed below, research is needed to assess quality and reliability.

3.2. Synthetic Data Generation and Imputation

A more controversial but increasingly discussed application of large language models is using them to generate synthetic data (Argyle et al. 2023 created quite a buzz with their paper for a method looked at in the survey sciences for many years, see Drechsler and Haensch (2024) for a recent overview). Bisbee et al. (2023) tested this approach by feeding a model detailed “persona vignettes” describing hypothetical survey respondents from the American National Election Study (ANES). The persona might include a fictional respondent’s age, location, gender, or other characteristics. Then, the model was asked how such an individual would respond to various ANES questions. When comparing these AI-generated responses to the actual ANES data, they found reasonable alignment of marginal distributions—especially for broad, simple metrics like “feeling thermometers” about political parties.

However, problems arose when they scrutinized correlations between variables, such as how certain subgroups answered multiple questions. The synthetic data often missed these more nuanced relationships, a shortcoming reminiscent of classical regression-based imputation. Additionally, attempts to replicate such exercises in multi-party political systems (like Germany’s) were far less accurate, partly because the model’s training data is less aligned with multi-party contexts and recent shifts in political opinion (von der Heyde et al. 2024).

While many researchers remain skeptical of using large language models to conjure entire datasets as a replacement for direct human response, there is a recognized potential for certain uses: to fill in specific missing responses within a partially completed dataset (akin to imputation), or to simulate hypothetical scenarios. If the model is trained on relevant historical data—as Kim and Byungkyu (2023) did for the General Social Survey—and includes an understanding of the question context, it could produce plausible guesses that might be, at times, preferable to omitting entire cases, though an open question is if such approaches are preferable to established methods. This is especially pertinent in large-scale, repeated surveys where partial nonresponse is common, and one must weigh the trade-offs of losing observations.

Still, major concerns remain about transparency, reliability, trustworthiness, and bias (Ashwin et al. 2023; Gallegos et al. 2024; Ntoutsi et al. 2020). The models’ so-called “reasoning” is often opaque, and different AI systems can produce different outputs. These limitations highlight the ongoing need for caution, rigorous validation, and disclaimers whenever synthetic or imputed data are generated by AI.

3.3. AI in Interviewing and Coding

Another fascinating question concerns whether AI could replace or assist human interviewers. Historically, face-to-face and telephone interviews have afforded participants a sense of empathy and deeper engagement—qualities that until recently were hard to replicate through automated systems. Yet, with the advancement of conversational interfaces (e.g., chatbots), researchers have begun experimenting with AI-powered interviewer bots.

Preliminary findings from small-scale experiments indicate that these bots can follow an interview script reliably and, when integrated with speech recognition, can capture open-ended responses without the manual transcription an interviewer normally does (Wuttke et al. 2024). They can also propose codes for job titles or occupations in real time, effectively implementing conversational coding on the fly. While the human-to-human rapport is partly lost, historic data on self-administered data collection modes suggest that for certain topics, the machine interviewer’s perceived “lack of judgment” can foster openness in respondents.

A more mature area of AI application is automated coding of open-ended responses (Zenimoto et al. 2024). By “reading” text, an LLM can classify job descriptions, reasons for unemployment, or other textual data into relevant categories. In a system that allows a human-in-the-loop approach, the AI might generate, say, five possible standardized codes, and the interviewer or respondent selects the correct one (Schierholz et al. 2018). This design reduces the burden on the interviewer and can streamline data collection. Even so, the AI’s performance depends heavily on the quality of its training, including contextual examples from the relevant population, language, and classification system.

4. Challenges for Funding Agencies, Journals, and Research Organizations

As the field moves forward, questions inevitably arise about how to ensure quality, transparency, and reproducibility in data collection that relies on AI. Funding agencies, such as the National Science Foundation in the U.S. or research councils in Europe, must establish guidelines and best practices to govern proposals that integrate LLMs for survey instrument design, synthetic data creation, or automated coding. Journal editors, too, face the question of how to evaluate manuscripts that use partially AI-generated data. What documentation or disclosures should be mandated before an article is considered for publication?

Researchers in AI often warn about “model drift”—the phenomenon where a model’s parameters and behaviors shift over time, especially when the training corpus gets updated. In other words, a question asked to a chatbot in August may generate a different result if the underlying model is retrained in December. This has implications for reproducibility, a cornerstone of the scientific method. Should teams “freeze” a version of the model they used for a study to permit exact replication of results? How can users precisely document the model’s version, hyperparameters, or training data?

In the near term, open-source LLMs are considered more promising for reproducibility. A research team could download a stable version of an AI model, run it on their own computing infrastructure, share relevant code or “prompts,” and thereby guarantee that others can (at least somewhat) replicate the environment. By contrast, a fully closed proprietary system that updates continuously without disclosing changes is a more difficult environment in which to guarantee reproducibility.

Further complications arise from privacy considerations. If LLMs were trained explicitly on microdata from individuals, that might raise concerns about inadvertently exposing personally identifiable information. If, however, data are aggregated or the model training relies on differentially private transformations, risk can be mitigated. The best path forward likely will require close collaboration between data stewards, statisticians, privacy experts, and AI researchers. Ensuring fairness is still an open task for the community (Schenk and Kern 2024).

5. Toward a Network of Archives and Data: Aligning the Next Generation of Models

One compelling proposal is a grand collaboration among major data archives—places such as the UK Data Archive, ICPSR, and the German GESIS, among others—to explore how curated, high-quality social science data might improve LLMs’ representation of population-level truths. Currently, many LLMs are trained on vast swaths of internet text of widely varying quality. If an AI model could systematically learn from recognized, vetted survey data, it might generate more accurate predictions for social science questions and reflect real distributions more reliably. Such efforts would need to address critical legal and ethical questions. For example, the data would need to be aggregated or transformed so as not to reveal individual-level information while still preserving essential patterns for training.

Going forward, funding high quality survey data collections to continoulsy update LLMs would be necessary, and funding such an initiative would be no small challenge. However, partnerships with major technology companies—like the relationship ICPSR has established with Meta for secure data sharing—offer a potential template. The technology giants have both the computational resources and motivation to refine these models, while academic institutions provide the methodological expertise and carefully curated datasets.

Likely the final product is not a single “supermodel” but rather better-tailored domain-specific LLMs. Either way, the impetus stands: improved data produce better model outputs. From the standpoint of the social sciences, it is crucial to ensure that an AI’s understanding of, say, unemployment or mental health is not just gleaned from random internet text but is informed by carefully measured phenomena in large-scale population surveys.

6. Prospects and Cautions

Despite the newness and potential of LLMs, the challenges that confronted Big Data initiatives a decade ago remain: complexity, measurement error, missing data, representation biases, and privacy constraints do not disappear simply because we embed AI in the pipeline. Indeed, AI often just shifts the burden to later downstream processes or more specialized personnel. The future might thus be marked not by cost savings but by strategic redeployment of resources, employing highly trained data scientists, cryptographers, and machine learning engineers rather than focusing only on conventional field operations.

For the future of data collection, these developments herald an even greater emphasis on “research question first” thinking. Instead of treating one approach—be it Big Data, surveys, or AI-driven interviewing—as a blanket solution, successful practice will likely involve combining multiple data streams, each chosen for its unique strengths, while recognizing the inherent biases and modeling them appropriately.

At the same time, the field must embrace interdisciplinary dialog. The computer scientists who design and train large language models must collaborate with survey methodologists and social scientists who can highlight the complexities of human behavior, sampling, questionnaire design, and data interpretation. Likewise, social scientists must learn to speak the language of modern AI—understanding prompts, fine-tuning, data curation pipelines, and issues of model drift—to employ these tools effectively and responsibly.

Lastly, there is a call to ensure that we do not lose sight of the value of face-to-face engagement. Although AI interviewers might provide faster or cheaper ways to collect data, genuine human connection sometimes fosters richer or more candid responses—especially for sensitive topics. We recently did a small experiment in which an AI chatbot interviewer was found to have reduced empathy and engagement compared to a human interviewer (Wuttke et al. 2024). While that might not ruin the validity for certain factual questions, it could matter in research on mental health, relationships, or other nuanced phenomena in which empathy bolsters participation and data quality.

The sentiment is clear: the future of data collection is bright and expansive, with emergent technologies offering new capabilities that must be thoughtfully integrated into longstanding research traditions. As the community moves forward, it will collectively chart a course that retains the best of rigorous survey practice while embracing the opportunities opened up by Big Data, administrative sources, and AI-driven innovation.

Footnotes

Acknowledgements

Piet passed away unexpectedly on Friday, December 6th, 2025. We are forever grateful for his contributions and his humor when approaching these new topics.

Author Note

This articel is based on a keynote given at the 75th Anniversary Symposium of the Institute for Social Research at the University of Michigan.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was done while the author was visiting the Simons Institute for the Theory of Computing.

Received: January 9, 2025

Accepted: January 21, 2025