Abstract

A variety of autonomous exploration tasks have been successfully performed in several types of environments using different types of robotic platforms. The robotic task, the operational environment, and the robot embodiment represent the dimensions of the “problem space” in robot exploration. At the same time, a lot of exploration strategies are documented in the literature that provide partial solutions to the exploration problem. They define the “solution space” in robot exploration. To our knowledge, no previous work has provided a methodical overview of robot exploration strategies from the point of view of both the problem and solution spaces. In this systematic mapping study, we build a taxonomy of autonomous robot exploration strategies and application requirements and classify existing approaches according to it. The goal is to analyze research trends over time, and identify possible research gaps, open challenges, and promising future directions in order to support researchers and practitioners in generalizing, communicating, and applying the findings of the robot exploration knowledge field.

1. Introduction

Robotic exploration can be defined as the process of building a map of an unknown environment with a mobile robotic system, achieving full coverage of the area of interest with data from a set of onboard sensors (Vidal et al., 2020).

During an exploration mission, the robot gathers information that is useful for a variety of tasks (e.g., search and rescue of victims in a disaster environment) or in future missions (e.g., periodic inspection of an industrial plant). Typically, useful data for these tasks are the geometric and topological structure of the environment and the location and semantics of objects and places.

The exploration process consists of three main steps that the robot performs repeatedly: (i) updating a map of the environment with sensor data acquired from its current position, (ii) identifying unexplored zones in the map, and (iii) planning the best exploration step (called “next-best-viewpoint” in Connolly (1985)) to observe new portions of the environment and gather new information. Here, “best” may correspond to minimizing the exploration time, maximizing the map accuracy, minimizing the robot’s pose uncertainty, or enforcing other application requirements. How the “next-best-viewpoints” are identified and selected is specific to the exploration strategy (Taylor and Kriegman, 1993).
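The three repeated steps above can be sketched as a short loop on a toy grid map. This is only an illustration under strong assumptions that do not come from any surveyed work: the sensor sees a square window without occlusion, "best" simply means the nearest candidate, and the robot jumps to the chosen viewpoint with no path planning. All names (`sense`, `frontiers`, `next_best_viewpoint`, `explore`) are hypothetical.

```python
import math

UNKNOWN, FREE, OCCUPIED = -1, 0, 1

def sense(world, grid, pos, radius=1):
    """Step (i): copy the true cell states around `pos` into the robot's map."""
    r, c = pos
    for i in range(max(0, r - radius), min(len(world), r + radius + 1)):
        for j in range(max(0, c - radius), min(len(world[0]), c + radius + 1)):
            grid[i][j] = world[i][j]

def frontiers(grid):
    """Step (ii): known free cells that touch at least one unknown cell."""
    found = []
    for i, row in enumerate(grid):
        for j, value in enumerate(row):
            if value != FREE:
                continue
            neighbours = [(i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)]
            if any(0 <= a < len(grid) and 0 <= b < len(row)
                   and grid[a][b] == UNKNOWN for a, b in neighbours):
                found.append((i, j))
    return found

def next_best_viewpoint(pos, candidates):
    """Step (iii): assumed criterion, pick the candidate nearest to the robot."""
    return min(candidates, key=lambda p: math.dist(pos, p))

def explore(world, start, max_steps=100):
    """Repeat the three steps until no unexplored zone is left."""
    grid = [[UNKNOWN] * len(world[0]) for _ in world]
    pos = start
    for _ in range(max_steps):
        sense(world, grid, pos)
        candidates = frontiers(grid)
        if not candidates:
            break
        pos = next_best_viewpoint(pos, candidates)  # motion is not modelled
    return grid

world = [[0, 0, 0, 0],
         [0, 1, 0, 0],
         [0, 0, 0, 0]]
explored = explore(world, (0, 0))
```

Replacing `next_best_viewpoint` with a different criterion (map accuracy, pose uncertainty, and so on) is what distinguishes one exploration strategy from another in this simplified picture.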

In the literature, we found that a variety of autonomous exploration tasks (e.g., search for objects, target pursuing, or terrain learning) have been successfully performed in several types of environments, that is, aerial, aquatic, terrestrial field, planetary surface, indoor building, confined and subterranean areas, and collapsed structures. For these exploration tasks, different types of robot embodiment have been used, which range from single robots to homogeneous or heterogeneous multi-robot systems.

The robotic task, the operational environment, and the robot embodiment represent the dimensions of variability in robot application requirements, the “problem space” in robot exploration (García et al., 2022).

Many exploration strategies documented in the literature provide partial solutions to the exploration problem; together they form the “solution space” in robot exploration. Each application requirement places limits on the usability of the various exploration approaches. For example, an exploration strategy may build high-resolution 3D geometric maps of indoor buildings using UAVs, but it cannot be used in home environments for safety reasons or in large subterranean environments due to on-board memory constraints and limitations in remote communication.

To our knowledge, no previous work has provided a methodical overview of robot exploration strategies from the point of view of both the problem and solution spaces.

In this paper, we present a systematic mapping study (SMS) (Petersen et al., 2008) of the state of research in mobile robot exploration strategies as it is reported in the literature. An SMS is a type of secondary study designed to give an overview of a research area through the classification of a specific type of phenomenon. It differs from a systematic literature review, which aims at comparing similar phenomena and synthesizing evidence of their properties. In contrast, an SMS identifies the patterns of occurrence of the phenomena and establishes whether a statistically relevant correlation exists between two phenomena. The strength of an SMS is its replicability. It is based on a rigorous research methodology (Brereton et al., 2007) that minimizes the bias of the experts who conduct the study.

The aim of our SMS is to (i) define a taxonomy of autonomous robot exploration strategies and application requirements, (ii) classify relevant primary studies found in the literature according to the proposed taxonomy, (iii) analyze research trends over time, and (iv) identify possible research gaps, open challenges, and promising future directions.

The goal is to support researchers and practitioners in generalizing, communicating, and applying the findings of the robot exploration knowledge field.

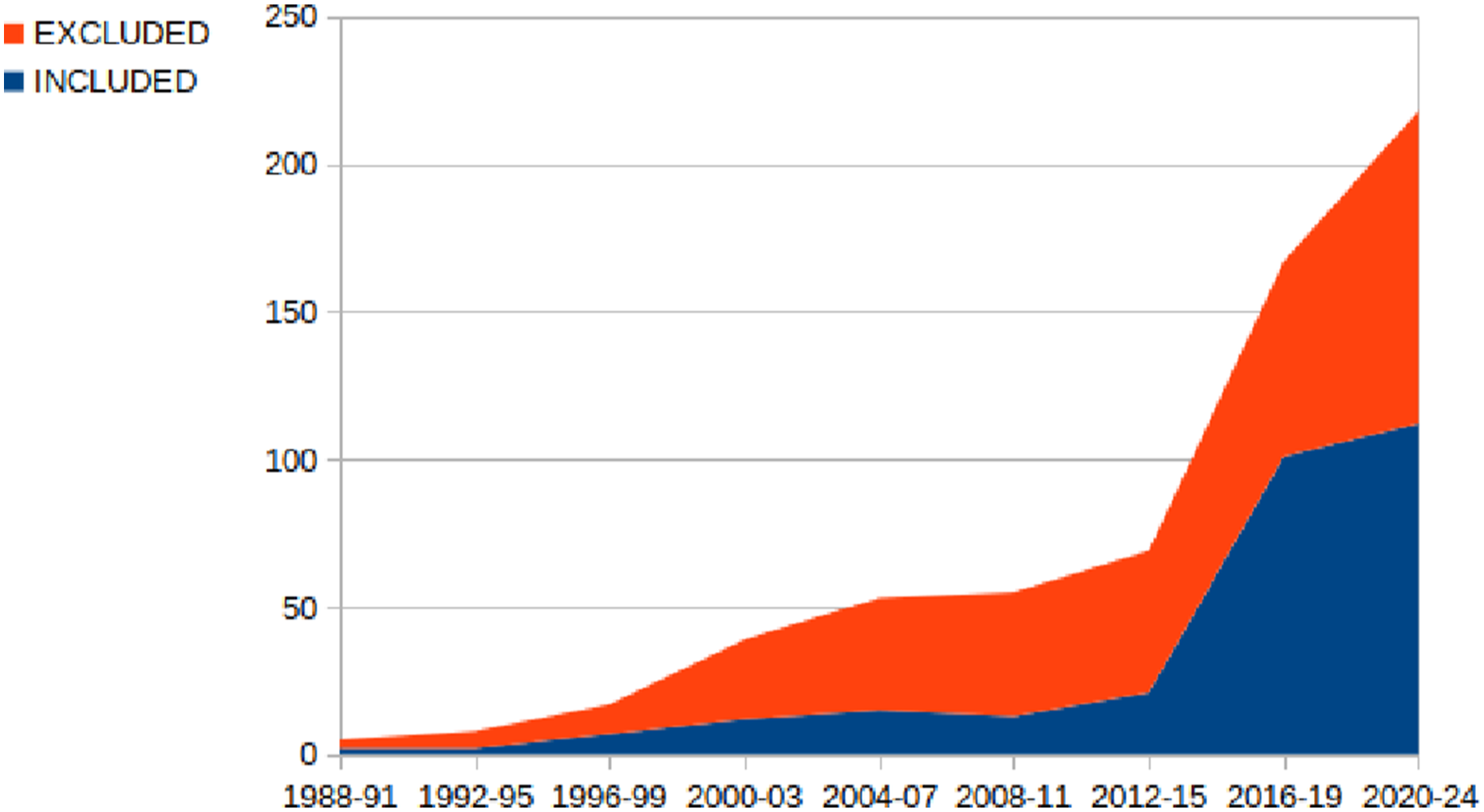

The taxonomy and the classification of primary studies are based on the screening of papers published between 1986 and 2023 extracted from different sources. An initial set of 4876 papers was retrieved through an automatic search on three digital libraries, that is, Scopus, IEEE Xplore, and ACM DL. An additional set of 24 papers was retrieved from secondary studies. A final set of 303 primary studies was selected through a multi-step screening process based on title, abstract, and full-text reading. For 62 primary studies, we also retrieved the related open software projects from online repositories and classified them according to relevant quality attributes. We complemented the analysis and classification of the exploration strategies with information about the type of experimental validation documented in the primary studies and with the list of simulators used.

It should be noted that more than half of the selected primary studies have been published during the last 5 years. This demonstrates the fast evolution of the research field and the appropriate timing of our SMS.

The main contributions of this study are the following:

• A taxonomy of application requirements (problem space) and of strategies (solution space) for autonomous robot exploration that can be used by researchers to contextualize future research results with respect to the current state of the art.

• A systematic mapping of the state of the art in robot exploration strategies and of the available open source software libraries, valuable for both researchers and practitioners.

• An analysis of the main implications of the obtained results in terms of current trends and open research challenges for both researchers and practitioners working on autonomous robot exploration.

• A replication package containing the spreadsheets used in this work, which enables the verification and replication of this study.

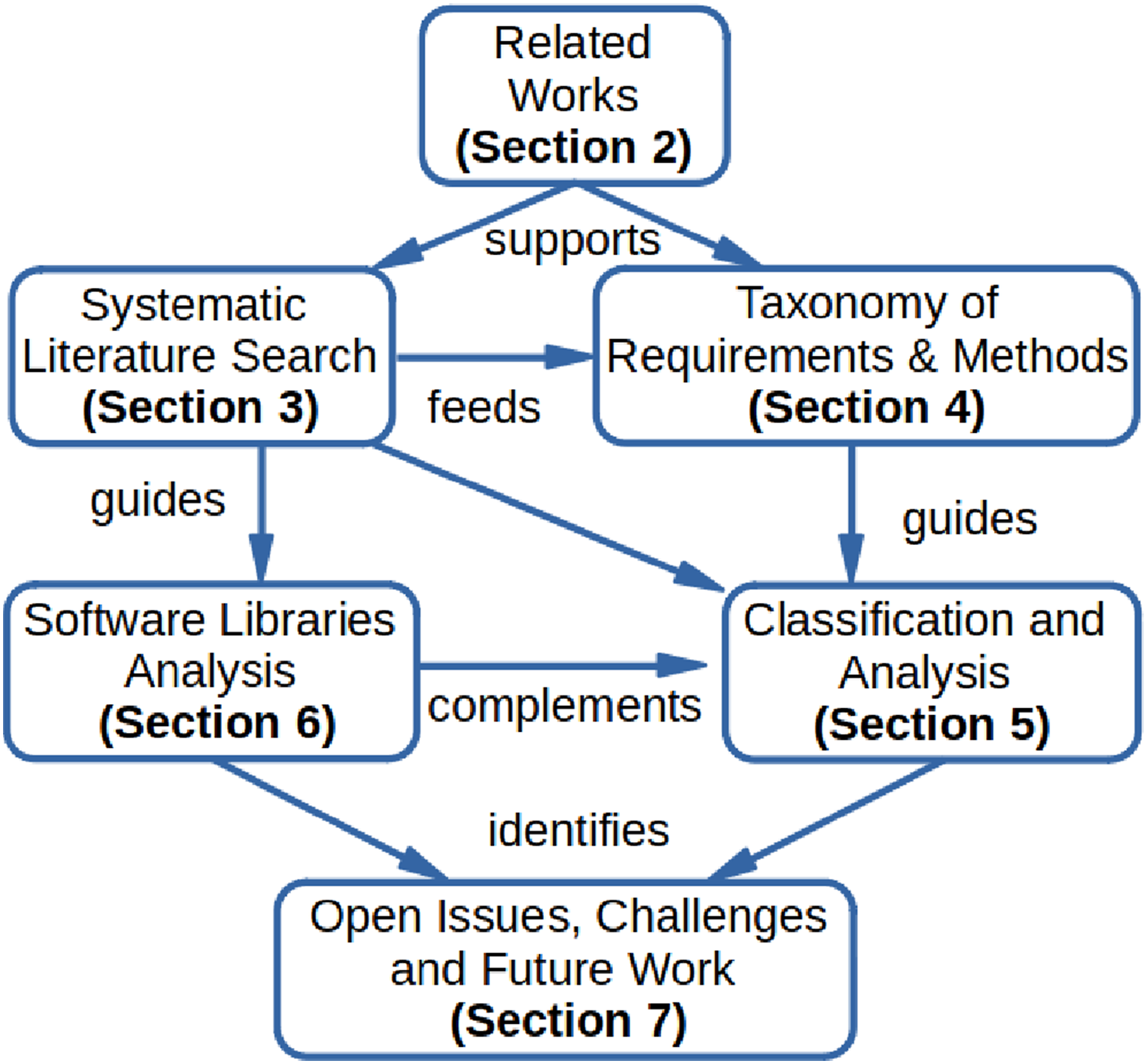

The rest of the paper is organized as shown in Figure 1. Section 2 introduces key concepts of robot exploration and provides an overview of previous secondary studies related to this work. Graphical outline of the paper structure.

In Section 3, we describe in detail the methodology of our systematic mapping study, including the research questions, the definition of the search string for the automatic retrieval of papers from several digital libraries, the screening process to identify relevant primary studies, and the data extraction process to answer the research questions.

In Section 4, we introduce the taxonomy for classifying exploration strategies identified during the systematic literature search and their application requirements.

In Section 5 we first present some statistics of the set of primary studies in our analysis, then illustrate their classification according to the previously defined taxonomy, and interpret the analytic results.

In Section 6, we illustrate the process for identifying the open-source software libraries that implement the methods and approaches described in the selected primary studies, and report some statistical data on their maturity and popularity.

Finally, in Section 7, we summarize and discuss all the findings from this systematic mapping study, identify open issues and challenges in robot exploration research, and discuss future work.

2. Related works

A few secondary studies have been published in the past years on various aspects of mobile robot exploration. They partially focus on the specific topic of our study and provided useful insights for the initial version of our taxonomy of application requirements and strategies for robot exploration.

Typically, they address specific technologies (e.g., reinforcement learning or occupancy maps) for robot exploration or review exploration approaches for specific robotic tasks (e.g., environment monitoring) or for environments with specific characteristics (e.g., static indoor environments).

To the authors’ best knowledge, the literature lacks a systematic mapping study that analyses recurrent patterns and relationships among various aspects of robot exploration strategies. This is the main motivation for our work.

Garaffa et al. (2023) provide an overview of the most recent studies that propose exploration strategies for single and multiple robots employing Reinforcement Learning (RL) techniques. Exploration strategies are classified into two main categories: two-stages strategies use RL techniques for viewpoint selection and navigation, while end-to-end strategies use RL techniques to directly map sensor data to control actions. Each category is divided into two subcategories: goal-guided strategies regard tasks whose goal is to find or reach a specified target, while non-guided strategies regard tasks whose main goal is to cover a whole area. In our taxonomy, we included the distinction between end-to-end and two-stages exploration strategies and between goal-guided and area coverage exploration tasks. Moreover, Learning is a cross-cutting concern in our taxonomy and several categories refer to learning methods.

Ramakrishnan et al. (2021) review algorithms for visual exploration in 3D environments. They formalize the exploration process as a finite-horizon partially observed Markov decision process (POMDP) and experimentally evaluate four reward functions in the POMDP: curiosity, novelty, coverage, and reconstruction. They conclude that the coverage and reconstruction reward functions are more effective in small and cluttered environments, while the novelty reward function is stronger in large and open environments. In our taxonomy, we introduced the distinction between small and large environments and between open and cluttered environments.

Lluvia et al. (2021) review studies regarding the exploration of static indoor environments by single robots with the goal of building geometric maps using the Simultaneous Localization and Mapping (SLAM) approach (Durrant-Whyte and Bailey, 2006). This type of exploration is called Active SLAM (ASLAM) (Davison and Murray, 2002), as the robot autonomously selects its destinations during the whole mapping process. In particular, this survey analyses two aspects of exploration strategies that we have included in our taxonomy: the techniques to extract promising best-view positions from the current map and the criteria to select the optimal destination. We have included the keyword Active SLAM in the search string for the automatic literature search described in Section 3.

Another survey on Active SLAM is presented in Placed et al. (2023), where the exploration problem is decoupled into three stages that identify, select, and execute potential navigation actions. In our taxonomy, we have included the keywords Viewpoint Generation and Viewpoint Selection, which correspond to the first two phases. The survey reviews several approaches for the selection phase, which are classified into three categories. (1) Naive Cost Functions are geometric heuristics, such as the Euclidean distance to the goal location, the time required to reach it, or the expected size of the area to visit. In our taxonomy, this category corresponds to the keyword Cost / Utility Function. (2) Information Theory-based approaches use the concept of entropy to quantify the uncertainty in the joint belief state. (3) Theory of Optimal Experimental Design approaches try to quantify uncertainty directly in the task space. In our taxonomy, the latter two categories correspond to the keyword Information Gain.
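To make the entropy-based notion of uncertainty concrete, the following sketch computes the Shannon entropy of an occupancy grid and the resulting information gain of a candidate action. It is one common textbook formulation, assuming independent cells that each store an occupancy probability; it is not taken from Placed et al. (2023), and the function names are hypothetical.

```python
import math

def cell_entropy(p):
    """Binary Shannon entropy (in bits) of one cell with occupancy probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0  # a fully known cell carries no uncertainty
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def map_entropy(probabilities):
    """Map entropy under the assumption that cells are independent."""
    return sum(cell_entropy(p) for p in probabilities)

def information_gain(before, expected_after):
    """Reduction in map entropy expected from visiting a candidate viewpoint."""
    return map_entropy(before) - map_entropy(expected_after)

# Two completely unknown cells; the candidate viewpoint is expected to resolve one.
gain = information_gain([0.5, 0.5], [0.5, 0.0])
```

A selection criterion based on Information Gain would then prefer the candidate with the largest `gain`, possibly traded off against a travel cost.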

Stachniss (2009) has edited a book that collects contributions focusing on two categories of exploration approaches: those that assume accurate robot pose information and those that explicitly account for pose uncertainty. In our taxonomy, we have introduced the keyword Severe odometry drift (Schmid et al., 2021) as a possible environmental barrier and we have identified the exploration strategies that take it into account explicitly.

Da Silva Lubanco et al. (2020) consider a specific technique to extract best-view positions from an occupancy map, that is, frontier-based exploration (Yamauchi, 1997a), and compare different cost or utility functions to select the optimal destination. Specific cost functions are the Euclidean distance from the current robot pose to a viewpoint and the steering angle required to head toward a viewpoint. Some utility functions are the number of contiguous frontier cells or the number of frontier cells visible from the current robot position.
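The cost and utility functions named above can be combined into a single score for each candidate viewpoint. The sketch below is a hypothetical illustration, not the formulation compared by Da Silva Lubanco et al. (2020): the linear weighting, the weight values, and the function names are assumptions made for the example.

```python
import math

def euclidean_cost(pose, viewpoint):
    """Distance from the robot pose (x, y, heading) to a viewpoint (x, y)."""
    x, y, _ = pose
    return math.hypot(viewpoint[0] - x, viewpoint[1] - y)

def steering_cost(pose, viewpoint):
    """Absolute turn (radians, in [0, pi]) needed to face the viewpoint."""
    x, y, heading = pose
    bearing = math.atan2(viewpoint[1] - y, viewpoint[0] - x)
    diff = (bearing - heading + math.pi) % (2 * math.pi) - math.pi
    return abs(diff)

def select_viewpoint(pose, clusters, w_dist=1.0, w_turn=0.5, w_size=0.2):
    """Pick the viewpoint with the best utility-minus-cost score.

    `clusters` maps each candidate viewpoint to its number of contiguous
    frontier cells (the utility term). The weights are arbitrary assumptions.
    """
    def score(item):
        viewpoint, size = item
        return (w_size * size
                - w_dist * euclidean_cost(pose, viewpoint)
                - w_turn * steering_cost(pose, viewpoint))
    return max(clusters.items(), key=score)[0]

# Robot at the origin facing +x; two equally distant, equally large frontiers.
best = select_viewpoint((0.0, 0.0, 0.0), {(2.0, 0.0): 3, (0.0, 2.0): 3})
```

With equal distance and frontier size, the steering term breaks the tie in favour of the viewpoint straight ahead.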

Juliá et al. (2012) propose a classification of exploration strategies for multi-robot systems that specifically considers methods for multi-robot coordination (e.g., market-based). In our taxonomy, we consider both homogeneous and hybrid (e.g., ground and aerial vehicles) multi-robot systems and various coordination techniques and strategies.

Amigoni et al. (2017) classify multi-robot exploration approaches from the point of view of communication and connection requirements. In particular, the authors distinguish between centralized and decentralized exploration strategies. In our taxonomy we also consider this distinction.

Aniceto dos Santos and Teixeira Vivaldini (2022) review approaches to environment monitoring that use UAVs. In particular, they consider primary studies related to the Informative Path Planning research area, which, similarly to robot exploration, addresses the problem of autonomous decision making for collecting information about the environment. We have included the keyword Informative Path Planning for the automatic literature search described in Section 3, and we have added the keyword Area Monitoring to our initial taxonomy.

Azpúrua et al. (2023) discuss state-of-the-art robotic exploration strategies for complex subterranean confined scenarios, including single and cooperative approaches with homogeneous and heterogeneous teams of aerial and terrestrial robots, which build 2D or 3D maps. Typically, these scenarios are GPS-denied, communication-restricted, and characterized by poor illumination. Our taxonomy specifies various types of environmental barriers that characterize application requirements for robot exploration.

3. Literature review methodology

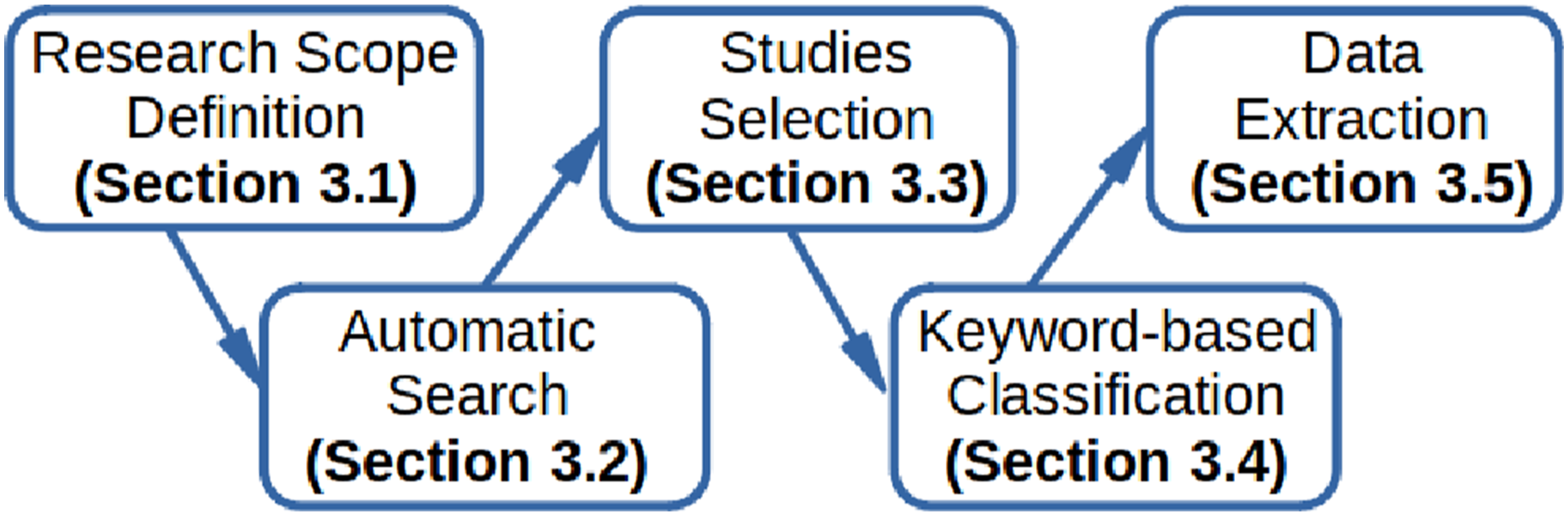

We conducted this systematic mapping study following the established guidelines defined in Petersen et al. (2008, 2015), which specify a research protocol consisting of five steps as illustrated in Figure 2 and presented in the following subsections. In addition, we have complemented the literature search with the identification of open-source software projects related to the relevant primary studies. The five steps of a systematic mapping study.

A full replication package is available as Supplementary Material. It includes the scripts used for automating the search, the spreadsheet with the bibliographic data of the primary studies identified with the automatic search, the spreadsheet used for the data extraction with the link to the analyzed primary studies and open source libraries, and a report with all the classification data, including those that have not been presented in this paper for the sake of conciseness.

3.1. Research scope definition

As discussed in the previous sections, we aim to propose a framework to classify exploration strategies for autonomous robots. Based on this objective, we derived the following research questions.

• RQ1: What would be a taxonomy that allows state-of-the-art strategies for robot exploration to be categorized and compared systematically? This question aims at defining a systematic structure of common characteristics to enable the classification and comparison of exploration strategies according to application requirements (e.g., search and rescue in collapsed buildings with UAVs) and underlying methods and techniques (e.g., Reinforcement Learning for viewpoint selection on 2D occupancy maps).

• RQ2: What type of exploration strategies have been proposed over the years with respect to the above taxonomy? This question is devoted to measuring the evolution of research on exploration strategies and assessing their suitability with respect to the variety of application requirements.

• RQ3: Which open-source software libraries are available that implement existing exploration strategies? This question aims to assess the usability of the exploration software libraries in terms of openness, maturity, and user satisfaction.

• RQ4: What are the open challenges for further investigation? This question aims at identifying research gaps and emerging solutions that are an indication of future research.

The scope of this mapping study is precisely defined by the following set of exclusion (E) and inclusion (I) criteria that guided the selection of relevant studies.

• E1. Studies that are not written in English;

• E2. Studies that are not available for download in full text from IEEE Xplore, ACM Digital Library, or SpringerLink, and whose authors did not reply to our request;

• E3. Duplicated studies or studies that are extended by another paper in this study;

• E4. Secondary and tertiary studies (reviews, surveys);

• E5. Studies not focused on robot exploration (e.g., Sewtz et al. (2023));

• E6. Studies not focused on fully autonomous robot exploration, that is, approaches that rely on human–robot cooperation to identify target positions (e.g., Ginting et al. (2022));

• E7. Studies that present approaches that do not maintain a consistent and online reconstructed map of the environment (e.g., Reinhart et al. (2020)) or rely on an a priori map of a known environment to search for objects (e.g., Zhu et al. (2021c)) or learn some properties of the environment (e.g., human motion patterns (Molina et al., 2022));

• E8. Studies that present approaches based on educational robotic systems (e.g., Serri (2004)).

• I1. Studies presenting approaches to autonomous robot exploration that explicitly describe the exploration strategy; a study is not included if the presented approach only focuses on other aspects of robot exploration, such as novel robot kinematics structures for the exploration of rough terrains (e.g., Arzo et al. (2023)).

• I2. Studies providing information about the operational context of the exploration approach, that is, the task, the environment, and the embodiment; a study is not included if the environment is a non-realistic virtual map such as in Chen et al. (2020).

• I3. Studies that discuss the experimental evaluation of the proposed approach either with a simulator or a real robotic system;

• I4. Studies published between January 1986 and January 2024.

3.2. Automatic search

In this stage, we aim to retrieve the scientific studies that are of interest for answering our research questions by performing an automated search on three digital libraries: Scopus, IEEE Xplore, and ACM DL.

For this purpose, we extracted relevant keywords for the automated search phrase from the secondary studies described in Section 2. In particular, we found that several terms are used as synonyms of robot exploration, such as “active mapping,” “map learning,” and “map building.” In addition, we found that some articles focusing on “active slam” and “informative path planning” present exploration strategies for map building. We decided to also include these keywords in our automatic search, even though most of the resulting articles turned out to be irrelevant to our study.

The definition of the search phrase was challenging due to the generic meaning of the obvious search terms: “robot” and “exploration.” A search on Scopus with these two terms in the title or in the abstract returned more than 13,000 results, an indication of low precision.

After extensive experimentation, we found that the search phrase is conveniently structured as the logical conjunction of two search strings.

The first string aims at returning papers that contain the keyword “exploration” or one of its synonyms in the title. The second string limits the results to papers that have the keyword “robot” or its derivations (e.g., “multi-robots” or “robotic system”) in the title, abstract, or keywords.

The Scopus digital library provides several options to narrow the automatic search. In particular, we excluded several keywords, such as “Machine design,” “Manipulators,” “Robotic Arms,” and “Remote control.”

The replication package contains the precise definition of the search strings used for the automatic search on the three digital libraries considered for this study.
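The two-part structure of the search phrase can be illustrated as a filter over bibliographic records. This is a minimal sketch of the logic only: the term list reuses the synonyms quoted in this section, the record layout and function name are hypothetical, and the actual search strings are those in the replication package, not the ones below.

```python
import re

# Synonyms of "exploration" mentioned in this section (matched in the title only).
EXPLORATION_TERMS = [
    "exploration", "active mapping", "map learning", "map building",
    "active slam", "informative path planning",
]
# "robot" and its derivations (robots, robotic, multi-robot, ...).
ROBOT_PATTERN = re.compile(r"robot", re.IGNORECASE)

def matches_search_phrase(record):
    """Logical conjunction of the two search strings described above."""
    title = record["title"].lower()
    first = any(term in title for term in EXPLORATION_TERMS)
    searchable = " ".join([
        record["title"],
        record.get("abstract", ""),
        " ".join(record.get("keywords", [])),
    ])
    second = ROBOT_PATTERN.search(searchable) is not None
    return first and second

# Hypothetical records, invented for illustration only.
records = [
    {"title": "Active SLAM in caves", "abstract": "A mobile robot maps a cave."},
    {"title": "Exploration of novel alloys", "abstract": "A chemistry study."},
]
selected = [r for r in records if matches_search_phrase(r)]
```

Restricting the first string to the title is what trades recall for precision in this scheme; the second string can afford to be broad because it only narrows the first.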

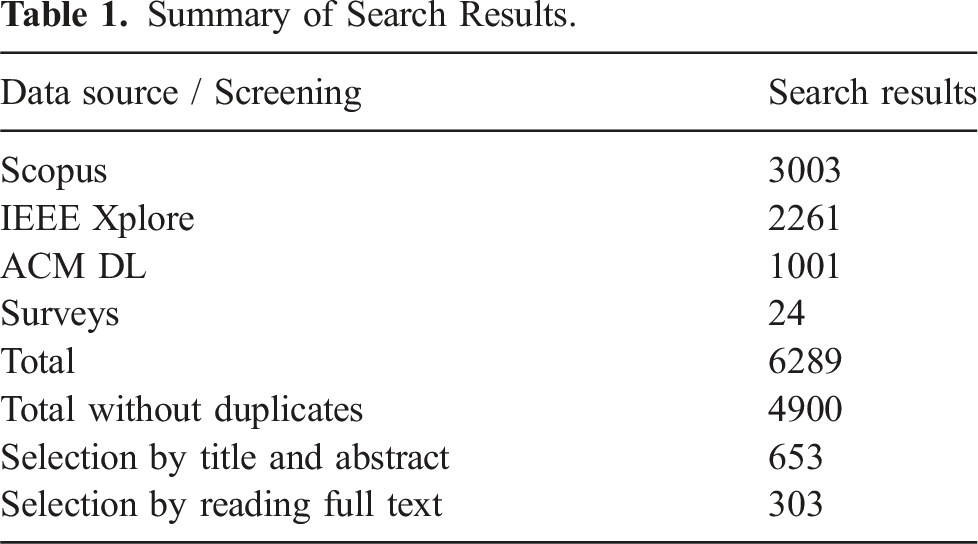

Table 1. Summary of search results.

We have also included the papers cited in the secondary studies discussed in Section 2, which satisfy our inclusion and exclusion criteria and were not retrieved by the automatic search.

3.3. Studies selection

A total of 4,900 studies were exported to an Excel file that records standard bibliographic information such as title, authors, abstract, and publication details of each study. The selection of studies proceeded in two rounds.

In the first round, the first two authors analyzed all the candidate studies through title reading and discarded all the studies (3,082) that were clearly out of scope based on some exclusion criteria. Most of the titles are worded as “Exploratory studies” of some technology (e.g., “intention expression for robots”), or refer to different types of exploration like “Children’s Exploratory Learning.” The disagreement was limited to 5% of the studies and was resolved by a joint discussion among the three authors.

The remaining 1,818 studies were analyzed through abstract reading. This reduced the set of valid papers to 653 primary studies and 21 secondary studies. For this step, the disagreement was limited to 11% of the studies and was resolved again by a joint discussion among the three authors.

In the second round, the remaining 653 primary studies (see Table 1) were randomly divided into three groups. A different author screened each group through full-text reading. We excluded papers that did not satisfy all the inclusion criteria and those for which some exclusion criteria (e.g., E3 and E5–E7) had not been properly assessed in the first round.

If the relevance or classification of a paper was in question, it was analyzed by all three authors. A total of 25 primary studies required a joint discussion of the critical aspects in light of the inclusion and exclusion criteria. This stage yielded a final set of 303 primary studies (see Table 1).
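The counts reported across Sections 1 and 3.3 can be cross-checked with a few lines of arithmetic. The sketch below uses only figures stated in the text; the variable names are ours.

```python
# Figures as reported in the text.
retrieved_automatic = 4876       # automatic search on the three digital libraries
retrieved_from_secondary = 24    # additional papers cited in secondary studies
discarded_by_title = 3082        # first round, title reading
primary_after_abstract = 653     # first round, abstract reading
secondary_after_abstract = 21
final_primary = 303              # second round, full-text reading

candidates = retrieved_automatic + retrieved_from_secondary
after_title = candidates - discarded_by_title
discarded_by_abstract = after_title - (primary_after_abstract + secondary_after_abstract)
discarded_by_full_text = primary_after_abstract - final_primary

# The intermediate totals quoted in Section 3.3 are consistent with the raw counts.
assert candidates == 4900
assert after_title == 1818

retention = final_primary / candidates  # share of candidates kept as primary studies
print(f"{discarded_by_abstract} discarded by abstract, "
      f"{discarded_by_full_text} by full text, retention {retention:.1%}")
```

The check confirms that the 4,900 candidates and the 1,818 studies left after title screening follow from the reported counts.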

3.4. Keyword-based classification

The goal of this task is the definition of a taxonomy to systematically categorize and compare state-of-the-art strategies for robot exploration. We present the resulting taxonomy in Section 4. The taxonomy has been defined in two stages.

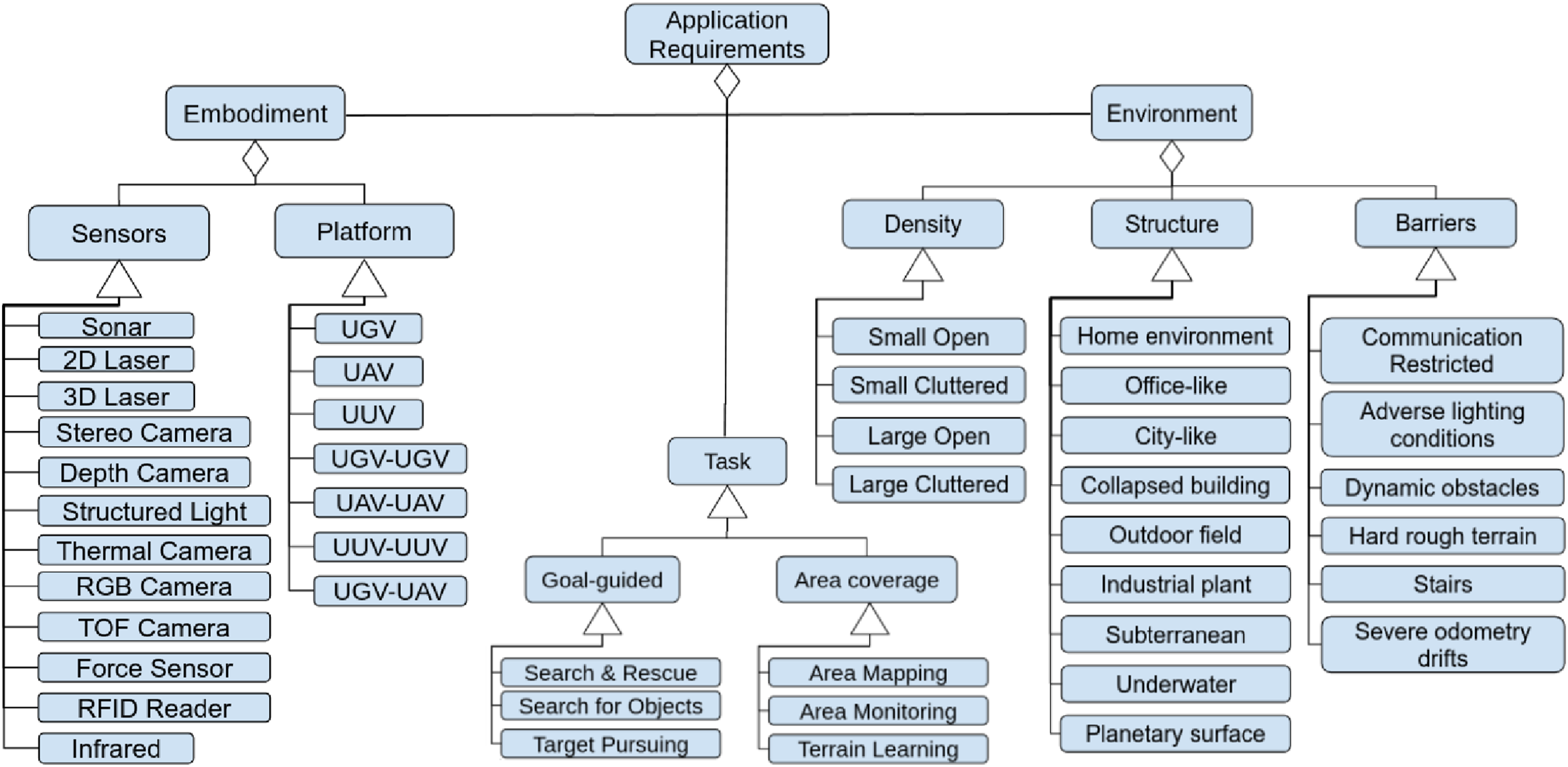

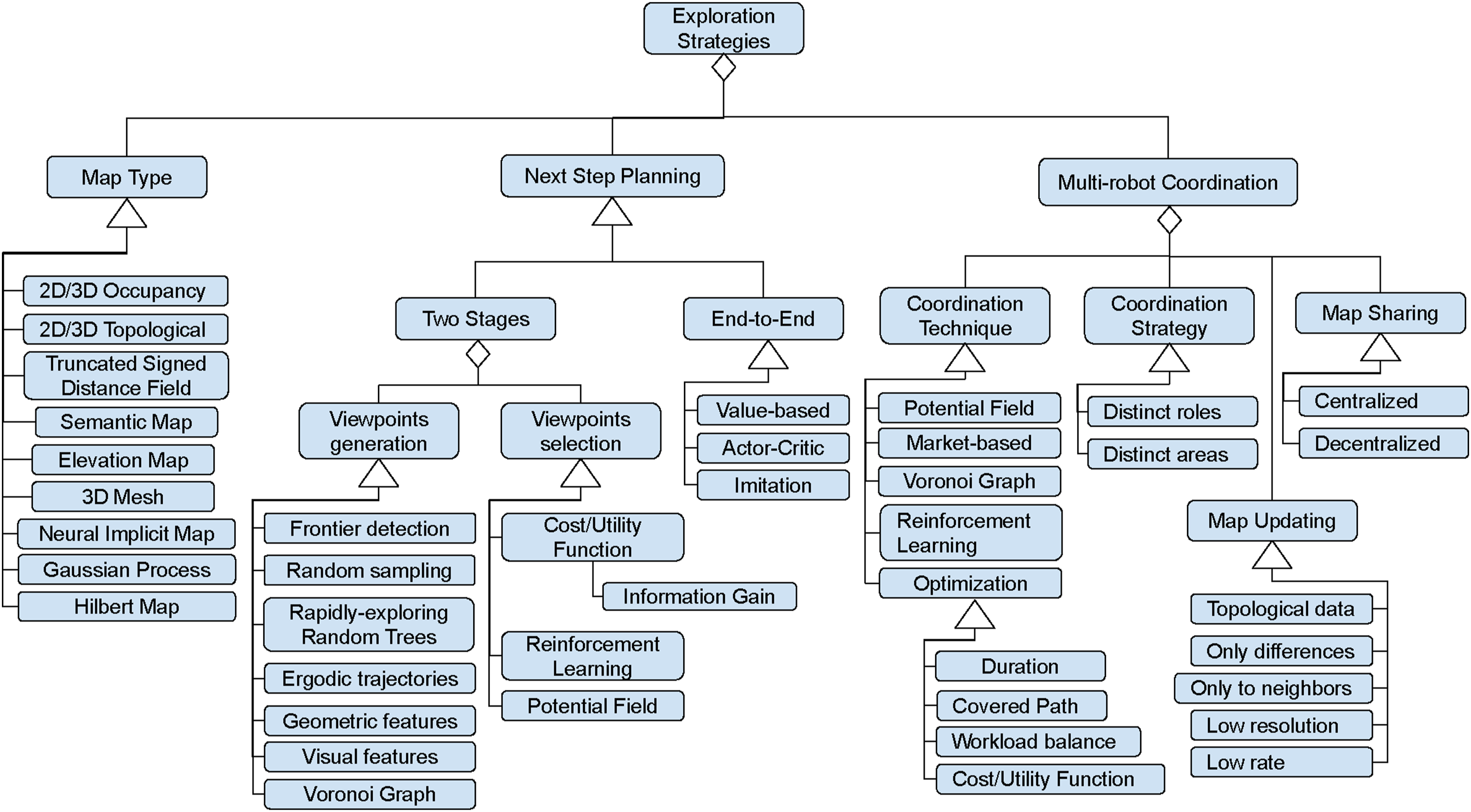

• Stage 1: An initial version of the taxonomy was defined based on the author’s experience and the concepts identified in the secondary studies discussed in Section 2. We structured the taxonomy into two main parts related to the variability in application requirements and the variability in methods and techniques. Each part consists of keywords that describe concepts related to robot exploration and are organized in a tree-like structure. The root nodes of the two parts are “Application Requirements” (see Figure 3) and “Exploration Strategies” (see Figure 4). Intermediate nodes correspond to classification categories (e.g., Type of map), called facets (Kwasnik, 1999). Leaf nodes correspond to specific instances (e.g., 2D occupancy map) of a classification facet. The taxonomy of application requirements. The taxonomy of exploration strategies.

• Stage 2: The taxonomy was iteratively extended during the full-text review of the primary studies. When a new application requirement or a new method for defining exploration strategies was identified in a selected primary study, a new leaf node was added to an existing classification facet or to a newly added facet.
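The two-stage construction can be pictured as a small tree that grows as studies are read. The sketch below is an illustrative data structure, not the authors' tooling: the class and method names are hypothetical, and the hierarchy is flattened to one level of facets for brevity, whereas the actual taxonomy nests facets more deeply.

```python
from dataclasses import dataclass, field

@dataclass
class Facet:
    """Intermediate node: a classification category with its leaf keywords."""
    name: str
    leaves: set = field(default_factory=set)

@dataclass
class Taxonomy:
    """Root node with its facets (simplified to a single level)."""
    root: str
    facets: dict = field(default_factory=dict)

    def add_leaf(self, facet_name, leaf):
        """Stage 2 update: add a leaf to an existing facet or to a new one."""
        facet = self.facets.setdefault(facet_name, Facet(facet_name))
        facet.leaves.add(leaf)
        return facet

# Stage 1: initial version seeded from prior knowledge.
requirements = Taxonomy("Application Requirements")
requirements.add_leaf("Density", "Small Open")

# Stage 2: keywords met during full-text review extend the tree.
requirements.add_leaf("Density", "Large Cluttered")            # existing facet
requirements.add_leaf("Barriers", "Severe odometry drift")     # newly added facet
```

Using a set for the leaves makes repeated additions of the same keyword harmless, which suits an iterative review in which several authors may encounter the same concept.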

3.5. Data extraction and analysis

The goal of this task is to cluster the primary studies into the classification facets defined in the taxonomy of requirements and strategies. Relevant information about robot exploration strategies is extracted from the selected primary studies, coded according to the taxonomy, and documented in a data extraction form (Kitchenham and Charters, 2007), which can be found in the replication package of this study.

The data extraction form is an Excel file with as many rows as the number of selected primary studies. For each study, each column records specific values of the corresponding classification facet (e.g., 2D occupancy map). In some cases, a primary study can have multiple values for a single facet (e.g., 3D Laser and Depth Camera). An additional column records any kind of information that is deemed relevant but that does not fit within the data extraction form.

Once all primary studies had been analyzed, we explored the extracted data in order to identify potential patterns and trends and uncover the existence of statistically significant relations between classification facets through contingency table analysis. Next, we performed a narrative synthesis (Popay et al., 2006) of the obtained data in order to answer our research questions.
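As an illustration of contingency table analysis, the sketch below cross-tabulates two facets and computes Pearson's chi-square statistic. The text does not state which test was applied, so the choice of test is an assumption; the counts are invented purely for the example and are not results of this study.

```python
from collections import Counter

def contingency(pairs):
    """Cross-tabulate (facet A value, facet B value) pairs, one pair per study."""
    counts = Counter(pairs)
    rows = sorted({a for a, _ in pairs})
    cols = sorted({b for _, b in pairs})
    return rows, cols, [[counts[(r, c)] for c in cols] for r in rows]

def chi_square(table):
    """Pearson's chi-square statistic and degrees of freedom for a table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof

# Invented data: 60 hypothetical studies classified by embodiment and map type.
pairs = ([("UAV", "2D")] * 10 + [("UAV", "3D")] * 20
         + [("UGV", "2D")] * 20 + [("UGV", "3D")] * 10)
rows, cols, table = contingency(pairs)
statistic, dof = chi_square(table)

# 3.841 is the chi-square critical value for 1 degree of freedom at the 0.05 level.
related = dof == 1 and statistic > 3.841
```

Facets for which a study records multiple values would need one pair per value, or a separate treatment, before building the table.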

The results of this analysis and synthesis are presented in Section 5.

3.6. Threats to validity

In this section, we present the validity threats to the findings of our SMS and measures to mitigate them.

The three typical problems faced by systematic secondary studies are the possibility of missing relevant articles, incorrect classification, and inaccurate analysis.

The primary defense against threats to the identification and selection of primary studies has been to follow a systematic review methodology (cf. Section 3), which is based on a protocol clearly defined before undertaking the review and precisely followed throughout the review. A significant challenge is related to the definition of the key phrases for the automatic searches on three digital libraries, because the key terms for our domain suffer from having both low precision (the term “exploration” is used with different meanings in a variety of domains) and low recall (many synonyms are used to indicate the robot ability to autonomously build a map). In order to mitigate this issue, the key phrases include synonyms identified during the review of relevant secondary studies (cf. Section 2), even if this search strategy significantly increased the search work.

The incorrect classification threat refers to the possibility that different authors interpret data extraction and classification in different ways. This could lead to an inconsistent classification of exploration approaches. We argue that this issue represents a moderate threat to the validity of our SMS. Indeed, the taxonomy of application requirements and exploration strategies includes high-level abstract categories (like Embodiment or Map Type) that have been identified in previous secondary studies and have a well-defined meaning in robotics, while the more concrete low level categories (like Search and Rescue or Information Gain) are keywords that have been extracted from the selected primary studies and iteratively added to the taxonomy. Only a few categories are subject to more subjective interpretation, such as the environment density (e.g., Small open, Large cluttered).

The first action to mitigate this threat was to identify examples of primary studies for each category and agree on a common definition. The second mitigation action was to design an online shared spreadsheet to be used by all authors during the data extraction. This allowed each of the three authors to easily compare the classification of each primary study with the classification of similar studies analyzed by the other authors. In case of dubious classification, we performed a cross-validation of the extracted data in order to ensure a common understanding.

The inaccurate analysis threat refers to the possibility that the conclusions drawn from the extracted data do not answer the research questions accurately, that is, the taxonomy is not complete (RQ1), the evolution of research on exploration strategies (RQ2) and the availability of open-source software libraries (RQ3) are not properly assessed, and the research gaps and emerging solutions (RQ4) are not correctly identified. Although we followed a rigorous methodology for conducting our SMS, it is likely that only a sample of the relevant primary studies has been obtained, as acknowledged in various studies (e.g., Petersen et al. (2015) and Wohlin et al. (2013)). Our SMS includes 303 primary studies from several research sub-areas, published between 1986 and 2023 in 68 unique publication venues (journals, conference proceedings, etc.). These figures indicate that our primary studies are not limited to or biased toward a specific time period, publication venue, or research area. We can argue that our SMS includes a large and representative sample of relevant studies and that adding more primary studies to the analysis should not change the qualitative conclusions.

4. Taxonomy

In this section, we illustrate the taxonomy introduced in Section 3. A taxonomy is a systematic classification of entities organized in a hierarchical structure, which helps to identify the observable patterns of recurrence, called species, and to understand the relationships and similarities among them. The objective of the following taxonomy is to answer our research question RQ1 by enabling the comparison of state-of-the-art robot exploration strategies.

Figures 3 and 4 depict the application requirements taxonomy and the exploration strategies taxonomy, respectively. Here, we use the graphical notations of UML Class Diagrams, a semi-formal language for modeling software systems, which has a well-defined syntax. The connector with a diamond shape at one end indicates the aggregation (part-of) relationship (e.g., the Environment may be characterized by some Barriers). The connector with a triangle shape at one end indicates the specialization (is-a) relationship (e.g., Sonar is a specific type of Sensor).

We want to remark that the various facets and keywords of the taxonomy were extracted from the selected primary studies. They are illustrated in the following subsections.

4.1. Application requirements taxonomy

As introduced in Section 1, application requirements are characterized by three dimensions of variability: Environment, Embodiment, and Task.

4.1.1. Environment

Robotic exploration tasks are strongly affected by the characteristics of the environment that the robot has to explore. In the proposed taxonomy, we characterize the environment with three classification facets: Structure, Density, and Barriers.

Structure identifies the type of scene in which the robot is performing the exploration task. This can be indoor areas like Office-like and Home environments, which are characterized by several rooms and corridors that are similar to each other. Home environments are generally less structured than office-like areas, due to the higher variety in furniture and layouts. Collapsed buildings are characterized by a cluttered composition due to the presence of elements such as pipes, wires, debris, or even destroyed surfaces. Subterranean environments, both natural like caverns and artificial like underground areas, are very large corridor-like scenarios with repetitive tunnels (Ohradzansky et al., 2020). In the primary studies that we have analyzed, Industrial plants are large outdoor areas characterized by industrial buildings. In City-like scenarios, the main elements are residential buildings, streets, cars, and possibly people. Other scenarios include Outdoor fields like in forests or agricultural scenarios, Underwater environments with diverse ecosystems, and Planetary surfaces characterized by dust and rocks.

The Density classification facet characterizes the volume of the scene and the spatial distribution of obstacles. In particular, we describe the environment as Small in the case of a single room and as Large for indoor scenarios that are made of multiple rooms, as in Grontas et al. (2020), and for outdoor environments. In addition, an environment is considered Open if it is characterized by large passages compared to the robot size (e.g., an empty room, a hospital ward, a lawn). Otherwise, it is considered Cluttered (e.g., a forest or a crowded room). In the taxonomy, we considered a combination of these two characteristics. Examples of Small Open and Small Cluttered are presented by Wang et al. (2019a) and Naazare et al. (2022), respectively, while Batinovic et al. (2022) and Zhong et al. (2022) proposed exploration strategies in Large Open and Large Cluttered environments.

The environment can be further characterized by the presence of Barriers that can limit communication between robots (Best et al., 2023), affect the accuracy of mapping and localization due to poor illumination or the absence of natural landmarks in the environment (Papachristos et al., 2019b), cause severe odometry drifts (Schmid et al., 2021; Zhang et al., 2022c), or make navigation riskier due to the presence of dynamic obstacles (Zheng et al., 2022a), rough terrain (Lehner et al., 2017), or even stairs (Bouman et al., 2020).

Other barriers are implicitly imposed by the type of environment and are not explicitly represented in the taxonomy. For example, indoor, subterranean, underwater, and planetary surfaces are GPS-denied.

4.1.2. Task

As suggested by Garaffa et al. (2023), the proposed taxonomy distinguishes between goal-guided and non-guided exploration scenarios.

In non-guided scenarios, the task focuses on the exploration of unknown areas of the environment for Area Mapping, that is, building a map that can be later used for navigation, or for more specific purposes. In particular, Terrain Learning consists of using specific sensors (e.g., force sensors or RGB cameras) to acquire soil compaction information for traversability analysis (Fentanes et al., 2018). Similarly, Area Monitoring consists of inspecting an entire area and collecting information, such as the salinity level of the water, as in Suresh et al. (2020), and differs from target-searching applications, where the task ends once a target is located.

In goal-guided scenarios, the process of exploring an unknown environment still produces a map, but it is guided by information provided by additional sensors, such as visual information in Search & Rescue (Niroui et al., 2019) and Search for Objects (Goel et al., 2021) operations, or a target position with respect to a reference frame in Target Pursuing (Arvanitakis et al., 2018).

4.1.3. Embodiment

The robot’s kinematics, its mobility mechanisms, and the onboard sensors characterize the robot Embodiment, which is typically designed to take into account the characteristics of the operational environment and exploration task.

In our taxonomy, we consider three categories of mobile robots, that is, Unmanned Ground Vehicle (UGV), Unmanned Aerial Vehicle (UAV), and Unmanned Underwater Vehicle (UUV). According to the analysis of the primary studies, UGVs are the most used platform to perform exploration and include both wheeled robots (Zurbrugg et al., 2022) and legged robots (Bouman et al., 2020). UAVs differ in size and include micro UAVs (Respall et al., 2021). Typically, UUVs have a torpedo shape, as in Palomeras et al. (2019a).

The taxonomy also considers multi-robot systems composed of robots of the same type, that is, UGV-UGV (Smith and Hollinger, 2018), UAV-UAV (Renzaglia et al., 2020), and UUV-UUV (Griffiths Sánchez et al., 2018). In the selected primary studies, the only hybrid systems we have found are composed of one UGV and one UAV, as in Kulkarni et al. (2022); they are represented in the taxonomy with the classification facet UGV-UAV.

Some approaches documented in the selected primary studies (e.g., Kan et al. (2020)) are based on the use of different sensors for navigation and mapping and for collecting observations from the environment as in terrain learning applications (e.g., Papachristos et al. (2019b)).

In the proposed taxonomy, the sensors for navigation and mapping are Sonar, 2D Laser and 3D Laser scanners, Stereo Camera, Structured Light Camera, and Time-of-Flight (ToF) Camera. Some authors generically indicate that their approach uses depth images without specifying the type of sensor that generates them. In this case, we use the category Depth Camera. Additional sensors for acquiring semantic information are Thermal Camera, RGB Camera, Infrared Sensor, Force Sensor, and RFID Reader.

Exploration strategies strongly depend on the set of onboard sensors, which are chosen taking into account the environmental conditions and other application requirements. For example, in case of poor illumination, a stereo camera does not provide accurate and reliable information, while a laser device can retrieve the 3D shape of the environment even in such conditions. Another example is the use of thermal cameras, which is generally linked to applications such as search and rescue operations, where they make it easier to identify the person to be rescued (Doroodgar et al., 2014).

4.2. Exploration strategies taxonomy

From the analysis of the secondary studies described in Section 2, we have discovered that exploration strategies are typically characterized by three dimensions of variability (see Figure 4): the Map type for representing explored and unexplored regions of the environment, the techniques to plan the next exploration step, and the Multi-robot coordination policy when the exploration task involves a team of robots.

Most commonly, the exploration tasks in which the robot is guided to cover an unknown environment are carried out in a receding-horizon fashion where the robot has to plan a feasible path, follow the path, update the map with new information acquired through the sensors, and repeat this process until the task is completed (Bircher et al., 2016).

4.2.1. Map type

The main outcome of an exploration task is the map of the environment, which represents the robot’s knowledge about its workspace and surrounding environment as well as objects contained therein (Kucner et al., 2023).

A review of the various types of maps is beyond the scope of this SMS and highly cited secondary studies can be found in the literature, such as Thrun (2003). In Section 5, we will discuss the recurrent associations found in selected primary studies between each type of map, the exploration strategies, and the application requirements.

Here, for the sake of clarity, we briefly recall the common definitions of the Map Types that have been used for the exploration approaches documented in the selected primary studies.

2D/3D Occupancy maps employ a tessellation of the environment into cells, that is, a discrete regular decomposition, where each cell stores the probability that a corresponding area or volume is occupied. The state of a cell is defined as a discrete random variable with two values, occupied and empty (Elfes, 1989).
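As an illustration of how the occupancy probability of a single cell can be maintained, the following minimal sketch applies the common log-odds form of the Bayesian update. It assumes a uniform prior of 0.5 (so the prior term vanishes) and an inverse sensor model with two hypothetical constants, `p_hit` and `p_miss`; the function names are illustrative and are not taken from any of the surveyed approaches.

```python
import math

def logit(p):
    """Convert a probability into log-odds."""
    return math.log(p / (1.0 - p))

def update_cell(log_odds, hit, p_hit=0.7, p_miss=0.4):
    """Bayesian log-odds update of one cell for one sensor reading.

    p_hit and p_miss are hypothetical inverse sensor model values:
    P(occupied | beam ends in the cell) and P(occupied | beam passes through).
    A uniform prior of 0.5 is assumed, so no prior term appears.
    """
    return log_odds + logit(p_hit if hit else p_miss)

def probability(log_odds):
    """Convert log-odds back into an occupancy probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(log_odds))

# A cell starts unknown (p = 0.5, log-odds = 0), is hit twice and missed once.
cell = 0.0
for hit in (True, True, False):
    cell = update_cell(cell, hit)
```

Working in log-odds turns the update into a simple addition per measurement, which is one reason this form is widely used for grid maps.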

More general models of occupancy maps have been proposed to overcome the limitations of the original discrete representation, such as the inability to capture structural correlations present in the environment.

As illustrated by O’Callaghan and Ramos (2012) and Kim and Kim (2015), the Gaussian Process occupancy map is a continuous occupancy representation of the environment, which overcomes the assumption of independence between grid cells. This type of map has been used by Ghaffari Jadidi et al. (2019) for computing frontier cells efficiently using the gradient of the Gaussian Process occupancy map.

Hilbert maps (Ramos and Ott, 2016) are a continuous version of occupancy maps that capture statistical relationships between sensory measurements, thus being more robust to outliers. One significant advantage of Hilbert maps for robot exploration is the compression capabilities of the model, which can reduce the cost of communicating maps between the members of a multi-robot team. Francis et al. (2019) present an example of an exploration strategy based on Hilbert maps.

Truncated Signed Distance Field (TSDF) is a volumetric representation of a scene that was originally proposed as a method for integrating range images by Curless and Levoy (1996). It is similar to 3D occupancy grids, except that each voxel stores the distance to the nearest surface (Werner et al., 2014). It is well suited for incremental updating and for the implementation of data-parallel algorithms on GPUs. We have found a significant number of recent primary studies that use this type of representation, such as Schmid et al. (2021) and Zhang et al. (2022c).
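The incremental nature of TSDF updating can be sketched as a weighted running average of signed-distance observations per voxel. The snippet below is a simplified illustration under stated assumptions: the truncation distance, the constant observation weight, and the rule of skipping voxels far behind the observed surface are hypothetical choices, and the function name is illustrative.

```python
def update_voxel(tsdf, weight, sdf, truncation=0.1, new_weight=1.0):
    """Fuse one signed-distance observation into a voxel.

    tsdf, weight: current truncated distance and accumulated weight.
    sdf: measured signed distance to the surface along the sensor ray
         (positive in front of the surface, negative behind it).
    truncation and new_weight are hypothetical parameters.
    """
    if sdf < -truncation:
        # Far behind the observed surface: occluded, leave the voxel unchanged.
        return tsdf, weight
    d = min(sdf, truncation)  # clamp to the truncation band
    fused = (weight * tsdf + new_weight * d) / (weight + new_weight)
    return fused, weight + new_weight
```

Because each voxel is updated independently of the others, the same function can be applied to all voxels in parallel, which is what makes the representation attractive for GPU implementations.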

A more recent method for 3D mapping is given by Neural implicit representations (Lionar et al., 2021), which implicitly represent the scene by means of a feedforward artificial neural network, where shapes and appearance are encoded in the weights of the neural network. One main advantage of Neural implicit representations is the ability to decouple the memory cost of the representation from the actual spatial resolution. This means that a fixed number of parameters can encode a surface with arbitrarily fine resolution.

Elevation map, generally referred to as a 2.5D map, represents the environment as a two-dimensional surface that is discretized in cells, each one encapsulating the elevation of the corresponding area of the terrain (Hebert et al., 1989).

3D Mesh, or polygon mesh, is a group of vertices, edges, and faces that represent the 3D shape of a polyhedral object (Lorensen and Cline, 1987).

Topological map is a symbolic representation of the space that comprises a set of vertices describing a certain area and the involved edges, connecting the vertices to indicate connectivity (Thrun, 1998).

Semantic Maps enrich the spatial representation of the environment with semantic labels (Maturana, 2021). As an example, the exploration strategy proposed by Asgharivaskasi and Atanasov (2023) builds a 3D occupancy grid, where each cell is labeled with an environmental category, such as building, vegetation, and terrain. In this and similar cases, we classify the approach with both categories, that is, Semantic Map and 3D Occupancy. Similarly, the approach proposed by Zurbrugg et al. (2022) extends TSDF maps with semantic information related to the objects typically found in a home environment: floor, bed, table, door, etc.

4.2.2. Planning the next exploration step

As described in Section 1, the central aspect of an exploration strategy is the identification at each iteration of the best accessible pose in the explored area represented in the current map, where the robot is supposed to observe new portions of the environment and gather new information. Each strategy defines what “best” means and exploits specific techniques for planning the next exploration step.

In theory, all the physically reachable destinations in the current map should be evaluated, as they may reveal new information about the unknown part of the environment. However, in real applications, the brute-force approach is not feasible due to the computational complexity that grows exponentially with the search space (Lluvia et al., 2021). For this reason, alternative definitions of the search space have been proposed. Instead of optimizing over the space of reachable locations, some approaches optimize over actuator controls or trajectories. The advantage of these approaches is the possibility to optimize the exploration activity taking into account additional constraints explicitly, such as total exploration time, available energy, and communication distance.

As proposed by Garaffa et al. (2023), exploration strategies can be classified into two categories: two-stages approaches and end-to-end approaches.

Two-stages approaches perform two steps in sequence: Viewpoint Generation and Viewpoint Selection.

Viewpoint Generation (see the list of papers in Table 8) consists of generating an adequate number of candidate poses where the next perception action can be performed (searching the space of locations, controls, or trajectories) with a sort of filter that reduces the size of the search space. In our taxonomy, we consider three families of viewpoint generation approaches: those based on partitioning the free area of the map, those that are based on the random generation of candidates, and those based on the identification of visual features.

The Area partitioning family includes four methods. The Frontier detection method was first proposed by Yamauchi (1997b). It consists of exploiting the occupancy information in the map to detect the boundary regions between explored free space and unknown space, called frontiers. The frontiers are most commonly extracted from a geometric map and are grouped in clusters. For each cluster, the algorithm identifies a viewpoint. Some approaches (e.g., Cieslewski et al. (2017)) adopt a more reactive strategy and restrict the considered frontiers to those that are in the current field of view of the onboard sensor. This strategy specifically takes into account the limited power of UAVs by avoiding significant changes to the current velocity. Other approaches (e.g., Shen et al. (2012)) consider as frontiers unstructured regions of the map, which are identified by simulating the dispersion of particles through the known and unknown space. This strategy is preferable to the classical frontier-based approach when the environment is very cluttered. Since frontier identification is a computationally intensive task, some strategies (e.g., Shade and Newman (2011)) adopt optimization methods instead of the classical geometric method.
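The classical geometric variant of frontier detection can be sketched on a small 2D grid. The snippet below is a minimal illustration that treats a frontier cell as a free cell with at least one unknown 4-connected neighbor; the cell encoding (`FREE`, `OCCUPIED`, `UNKNOWN`) and the function name are assumptions made for this example, and the clustering step mentioned above is omitted.

```python
FREE, OCCUPIED, UNKNOWN = 0, 1, -1

def frontier_cells(grid):
    """Return (row, col) of free cells with at least one unknown 4-neighbor."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbor is enough
    return frontiers

grid = [
    [FREE, FREE,     UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE,     FREE],
]
print(frontier_cells(grid))  # [(0, 1), (2, 2)]
```

The full scan over all cells is what makes the naive method costly on large maps; restricting the scan to the cells changed by the latest sensor update is one common way to reduce that cost.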

Voronoi Graphs are used to decompose the space into regions based on the distance between a set of points. This process is also called “tessellation.” These regions are then used to find clear routes in space, extracting collision-free candidate poses (Kim, 2014).

Ergodic trajectories are a recent method applied in robotic exploration that comes from ergodic theory, a branch of mathematics that focuses on the statistical properties of a deterministic dynamical system. In particular, a trajectory is ergodic when the fraction of time spent in a sampling area is equal to some metric (varying with the application) that quantifies the information density in that particular area (Miller and Murphey, 2013).

Geometric features, such as segments, circles, or distances extracted from a map of the environment, are used to define the set of points to consider as candidate viewpoints (Gao et al., 2019).

The Random generation family of approaches includes the Rapidly-exploring Random Trees (RRT) (LaValle, 1998) and other area sampling approaches. A specific advantage of this class of algorithms is that they can deal with high-dimensional and constrained problems.
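To make the random generation idea concrete, the following sketch grows a basic RRT in a 2D workspace; the tree nodes can then serve as candidate viewpoints. It is a simplified illustration under stated assumptions: `is_free` is a hypothetical user-supplied collision check, only the new node (not the connecting edge) is checked, and the step size, iteration count, and seed are arbitrary.

```python
import math
import random

def rrt(start, bounds, is_free, iterations=200, step=0.5, seed=0):
    """Grow a tree from start and return it as a dict child -> parent."""
    rng = random.Random(seed)
    (xmin, xmax), (ymin, ymax) = bounds
    parent = {start: None}
    for _ in range(iterations):
        sample = (rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))
        # Find the tree node closest to the random sample.
        nearest = min(parent, key=lambda n: math.dist(n, sample))
        d = math.dist(nearest, sample)
        if d == 0.0:
            continue
        # Move from the nearest node toward the sample by at most `step`.
        scale = min(step, d) / d
        new = (nearest[0] + scale * (sample[0] - nearest[0]),
               nearest[1] + scale * (sample[1] - nearest[1]))
        if is_free(new):
            parent[new] = nearest
    return parent

# Hypothetical workspace: everything with x < 2 is free.
tree = rrt((0.0, 0.0), ((-5.0, 5.0), (-5.0, 5.0)), lambda p: p[0] < 2.0)
```

The linear nearest-neighbor search keeps the sketch short; practical implementations typically replace it with a spatial index.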

The Visual features family includes approaches that extract points or areas of interest from camera images. They are used to sample candidate positions that the robot can explore to proceed with the exploration task as in Cimurs et al. (2022) and Yang et al. (2024).

Viewpoint Selection is the second step in an exploration strategy (see the list of papers in Table 9). It consists of identifying, among the generated viewpoints, the best candidate according to some optimality criteria.

Cost/Utility function is the most used approach to select the best viewpoint. The function can be based on different cost factors like the distance with respect to the candidate positions (Wang et al., 2019c) or the energy required to navigate toward certain areas. The utility is typically measured as the number of frontier cells or the volume of unknown space that can be seen from a viewpoint.
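A cost/utility function of this kind can be sketched as follows. The example assumes that each candidate carries a precomputed utility (e.g., the number of unknown cells visible from it), that the cost is the Euclidean distance from the robot, and that the two are combined linearly through a hypothetical trade-off parameter `weight`; other combinations are equally possible.

```python
import math

def select_viewpoint(robot_xy, candidates, weight=1.0):
    """Pick the candidate with the highest utility minus weighted cost.

    candidates: list of (x, y, visible_unknown_cells) tuples.
    Cost is the Euclidean distance from the robot; weight is a
    hypothetical trade-off parameter.
    """
    def score(candidate):
        x, y, utility = candidate
        cost = math.hypot(x - robot_xy[0], y - robot_xy[1])
        return utility - weight * cost
    return max(candidates, key=score)

candidates = [(1.0, 0.0, 5), (4.0, 3.0, 12), (10.0, 0.0, 14)]
best = select_viewpoint((0.0, 0.0), candidates)
print(best)  # (4.0, 3.0, 12)
```

Raising `weight` makes the robot favor nearby viewpoints: with `weight=3.0`, the same candidates yield `(1.0, 0.0, 5)`.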

A more specific approach to evaluate the utility of visiting a viewpoint consists of computing the Information gain (Henderson et al., 2020). Here, the objective is to select the candidate that maximizes the information acquired by visiting it. Ideally, to evaluate the information gain associated with the candidates, the robot should take into account future (controllable) actions and future (unknown) measurements (Lluvia et al., 2021). In practice, concrete approaches use heuristics such as the expected Shannon entropy of the map given a new sensor observation (Bai et al., 2016) or the Cauchy–Schwarz quadratic mutual information (Charrow et al., 2015).
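The entropy-based heuristic can be illustrated with a short sketch. It assumes an occupancy map with independent binary cells, so that the map entropy is the sum of the cell entropies, and it compares the current map with a single predicted posterior rather than taking the expectation over all possible measurements; the function names are illustrative.

```python
import math

def cell_entropy(p):
    """Shannon entropy (in bits) of a binary occupancy probability."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def map_entropy(probabilities):
    """Entropy of a map whose cells are assumed to be independent."""
    return sum(cell_entropy(p) for p in probabilities)

def information_gain(prior, predicted_posterior):
    """Reduction in map entropy predicted for a candidate viewpoint."""
    return map_entropy(prior) - map_entropy(predicted_posterior)

# Three unknown cells; the candidate is predicted to resolve two of them.
gain = information_gain([0.5, 0.5, 0.5], [0.0, 1.0, 0.5])
print(gain)  # 2.0
```

A cell with probability 0.5 contributes exactly one bit, so the gain here equals the number of cells the viewpoint is predicted to resolve.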

Originally, Potential fields were used as a technique for reactive navigation (Wang et al., 2017). In the context of exploration, potential fields are used, for example, by Yu et al. (2021) to guide the robot through the space by being repulsed from occupied areas and attracted to the frontiers of unexplored areas.
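The attraction and repulsion mechanism can be sketched with the classical attractive/repulsive formulation of potential fields. The example below is an assumption-laden illustration, not the method of any specific primary study: the gains `k_att` and `k_rep`, the influence radius, and the choice of attracting the robot to its nearest frontier are all hypothetical.

```python
import math

def force(robot, frontiers, obstacles, k_att=1.0, k_rep=1.0, influence=2.0):
    """Resultant 2D force on the robot from frontiers and obstacles."""
    rx, ry = robot
    # Attraction toward the nearest frontier, proportional to the distance.
    gx, gy = min(frontiers, key=lambda f: math.hypot(f[0] - rx, f[1] - ry))
    fx, fy = k_att * (gx - rx), k_att * (gy - ry)
    # Repulsion from every obstacle closer than the influence radius.
    for ox, oy in obstacles:
        d = math.hypot(rx - ox, ry - oy)
        if 0.0 < d < influence:
            magnitude = k_rep * (1.0 / d - 1.0 / influence) / d ** 2
            fx += magnitude * (rx - ox) / d
            fy += magnitude * (ry - oy) / d
    return fx, fy

# A frontier straight ahead and an obstacle to the left of the robot.
fx, fy = force((0.0, 0.0), [(3.0, 0.0)], [(0.0, 1.0)])
```

In this configuration the robot is pulled along the x axis toward the frontier and pushed in the negative y direction, away from the obstacle.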

More recently, a technique that has been applied to robotic exploration is Reinforcement Learning (RL), a sub-field of machine learning that enables an agent to learn behaviors through trial-and-error interactions (Kaelbling et al., 1996). The use of RL methods allows the exploration to be more flexible and robust to noise with respect to geometric methods, as stated by Bigazzi et al. (2022). A common way to use RL for Viewpoint Selection is the identification of an optimal policy to maximize information gain, as in Zheng et al. (2022b). The approach presented in Tao et al. (2023) uses deep learning to predict map completion and information gain.

End-to-end approaches use deep learning techniques to learn the mapping between perception and control, for example, using camera image observations to guide a robotic arm in closing a bottle (Levine et al., 2016). In particular, Reinforcement Learning algorithms explore the space of possible control strategies and select the strategy that maximizes a given reward function. End-to-end approaches accomplish robot exploration tasks as a black box, as they do not distinguish between viewpoint generation and selection. Strengths and limitations of reinforcement learning techniques applied to robotics have been analyzed by Kober et al. (2013).

Similarly, end-to-end approaches for robot exploration feed a learning algorithm with sensor data to compute the control action that guides the robot to perform the next exploration step. We classify end-to-end exploration strategies according to three learning methods (see Figure 4).

Value-based RL methods use a value function to estimate how advantageous it is for the robot to perform a control action.

Actor-Critic RL methods split the policy search process into two phases: The actor predicts the actions, and the critic computes the value function.

Imitation learning (Hussein et al., 2017) is an alternative to RL. Instead of optimizing a reward function to select the optimal control policy, the imitation learning algorithm is trained to imitate a provided expert policy.
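As a concrete instance of the value-based family, the following sketch shows one tabular Q-learning update and the greedy action choice derived from the learned values. It is deliberately simplified: the end-to-end approaches discussed here use deep networks over sensor data rather than a table, and the state names, action names, learning rate `alpha`, and discount factor `gamma` are hypothetical.

```python
def q_update(q, state, action, reward, next_state, actions,
             alpha=0.5, gamma=0.9):
    """One tabular Q-learning update; q maps (state, action) to a value."""
    best_next = max(q.get((next_state, a), 0.0) for a in actions)
    old = q.get((state, action), 0.0)
    q[(state, action)] = old + alpha * (reward + gamma * best_next - old)
    return q[(state, action)]

def greedy_action(q, state, actions):
    """Choose the action with the highest estimated value in this state."""
    return max(actions, key=lambda a: q.get((state, a), 0.0))

actions = ["forward", "turn"]
q = {}
# Hypothetical experience: moving forward from s0 revealed new map cells.
q_update(q, "s0", "forward", 1.0, "s1", actions)
```

In an exploration setting, the reward would typically encode how much new area or information the action uncovered.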

4.2.3. Multi-robot coordination

Multi-robot coordination is a part of robotic exploration involving the use of multiple robots that collaborate to efficiently explore and navigate the environment. The use of more than one robotic platform is advantageous when the environment is very large or has a complex structure that requires robots with different motion capabilities. In this case, to avoid overlapping, the exploration strategy should also include multi-robot coordination. In the proposed taxonomy, we distinguish between Coordination Strategy and Coordination Technique.

In the primary studies, we have identified two coordination strategies. Distinct areas refers to partitioning the environment into areas that are each explored by a single robot, as in Yu et al. (2021). Distinct roles refers to having different robots that execute different tasks, as in the case of a leader robot that collects sensory data, computes a common map to share with the other robots, and plans for all the others, as proposed by Griffiths Sánchez et al. (2018).

Coordination techniques refer to the assignment of exploration tasks to each robot. Voronoi Graph decomposes the environment into distinct areas to be explored by individual robots. Potential fields repulse each robot from navigating in areas occupied by other robots. Reinforcement Learning is used by Yang et al. (2024) and Luo et al. (2019) to assign frontier nodes to each robot in a topological map.

Optimization methods are based on a global team manager that assigns different exploration tasks to the robots of the team, in such a way that some performance metric is optimized, such as the total covered path, the duration of the exploration task, the workload balance among the robots, or a more general cost/utility function.

Market-based approaches allow the robots to trade tasks and resources with one another in a decentralized manner in order to maximize the efficiency of the team.
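The market-based idea can be illustrated with a sequential single-item auction, in which each viewpoint is awarded to the robot that bids the lowest travel cost. This is a minimal sketch under stated assumptions: bids are Euclidean distances, the loop plays the role of the auctioneer for brevity (in a decentralized system each robot would compute and broadcast its own bid), and the robot names and coordinates are hypothetical.

```python
import math

def auction(robots, tasks):
    """Sequential single-item auction: each task goes to the cheapest bidder.

    robots: dict name -> (x, y); tasks: list of (x, y) viewpoints.
    A winner's bidding position moves to the task it has just won, so later
    bids reflect the travel it has already committed to.
    """
    positions = dict(robots)
    assignment = {name: [] for name in robots}
    for task in tasks:
        bids = {name: math.dist(pos, task) for name, pos in positions.items()}
        winner = min(bids, key=bids.get)
        assignment[winner].append(task)
        positions[winner] = task
    return assignment

robots = {"a": (0.0, 0.0), "b": (10.0, 0.0)}
tasks = [(1.0, 0.0), (9.0, 0.0), (4.0, 0.0)]
print(auction(robots, tasks))
# {'a': [(1.0, 0.0), (4.0, 0.0)], 'b': [(9.0, 0.0)]}
```

The result depends on the order in which tasks are auctioned, which is a known trade-off of sequential auctions compared with optimizing the whole assignment at once.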

Multi-robot coordination is also concerned with the methods to efficiently share map data among the robots involved in the exploration task in order to limit communication bandwidth. In our classification, we distinguish between Map Sharing and Map Updating.

Map Sharing indicates where the global map is stored, and we have identified two approaches. The Centralized approach is used when the robots communicate with a base station that stores the updated global map and coordinates the tasks of the robot team, such as planning their paths to the next viewpoint. The base station could also be one of the robots in the team, which has significant computational resources, such as in Qin et al. (2019), where a UGV builds a coarse map of the environment with its onboard sensors and receives fine map data from a UAV. The Decentralized approach is used when all the computation is performed onboard each robot, each of which holds a copy of the updated global map.

Map Updating specifies the strategy to limit the amount of data that each robot has to share to update the global map with new measurements from its onboard sensors. From the analysis of the primary studies, we have identified the following categories.

Each robot communicates specific types of data. Topological data may correspond to frontiers extracted from locally updated occupancy maps, or edges and nodes of a local topological map. Only differences refers to the portion of the local map that has been updated with the most recent sensor measurements. Low resolution means that some data compression technique is used to reduce the size of data to be transmitted by each robot.

Alternatively, each robot communicates only when possible. Only to neighbors indicates that each robot transmits data only to the closest team members because there are communication barriers. Low rate indicates that every local map update is shared with other robots, but this imposes a severe limit on the update rate.
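The Only differences category described above can be sketched with maps stored as dictionaries from cell coordinates to cell values. The representation and the function names are assumptions made for this example; the point is only that the transmitted message contains the changed cells rather than the whole map.

```python
def map_diff(previous, current):
    """Return only the cells whose value changed since the last transmission."""
    return {cell: value for cell, value in current.items()
            if previous.get(cell) != value}

def apply_diff(global_map, diff):
    """Merge a received diff into the receiver's copy of the map."""
    merged = dict(global_map)
    merged.update(diff)
    return merged

previous = {(0, 0): 0, (0, 1): -1}
current = {(0, 0): 0, (0, 1): 1, (0, 2): 0}
diff = map_diff(previous, current)
print(diff)  # {(0, 1): 1, (0, 2): 0}
```

Applying the diff to the receiver's copy of `previous` reproduces `current`, while only two of the three cells are transmitted.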

Some approaches combine multiple updating strategies. For example, in Hu et al. (2020) and Masaba and Li (2021), robots communicate Topological data and Only to neighbors.

We have found only one approach that combines Centralized map sharing and Only to neighbors communication. This is the case of Williams et al. (2020), where a team of robots explores a subterranean environment with communication barriers. Every robot returns to the base station periodically to upload map data that are merged and used to coordinate the multi-robot team.

5. Classification and analysis

In total, we included 303 papers in our mapping study. Each paper has been classified according to the facets of the taxonomy described in Section 4. Tables 2 to 12 in Appendix A list the corresponding papers for each facet and keyword of the taxonomy. The following paragraphs analyze the results of our systematic mapping study to answer research question RQ2, which was stated in Section 3.

We proceed by (i) giving an overview of the included literature, (ii) combining the various facets of the taxonomy in evidence maps, and (iii) analyzing frequencies of primary studies for the various facets to discover recurrent relationships among them and gaps, which may be due to a variety of reasons, such as the limited scope of previous research, the limited efficacy of the research methodologies employed, or simply the absence of recent studies that report new findings.

5.1. Overview of the literature

The graph of Figure 5 reports a breakdown in nine periods from 1988 until 2024 of the 653 primary studies selected by title and abstracts. This data provides an overview of research progress in the field of robot exploration. For each period, the graph shows the primary studies included in the SMS and those excluded after reading the full text (see Section 3.3). We can identify three phases of the research progress by analyzing the temporal distribution in this graph and the list of papers in tables of Appendix A.

Figure 5. Overview of the included and excluded primary studies per year.

Noteworthy are the first exploration approaches that build a 2D topological map (Kuipers and Byun, 1991), and exploit reinforcement learning (Duckett and Nehmzow, 1999). We have classified 12 papers related to this period.

5.2. Recurrent patterns and gaps

In this section, the taxonomy facets are combined to reveal relationships and interactions among the category values that arise in the selected primary studies, thus providing the foundation for subsequent discussions. We use contingency tables to support dependency analysis among the facets of the taxonomy and to display the multivariate frequency distribution in sets of simple or composite facets.

5.2.1. Application requirements

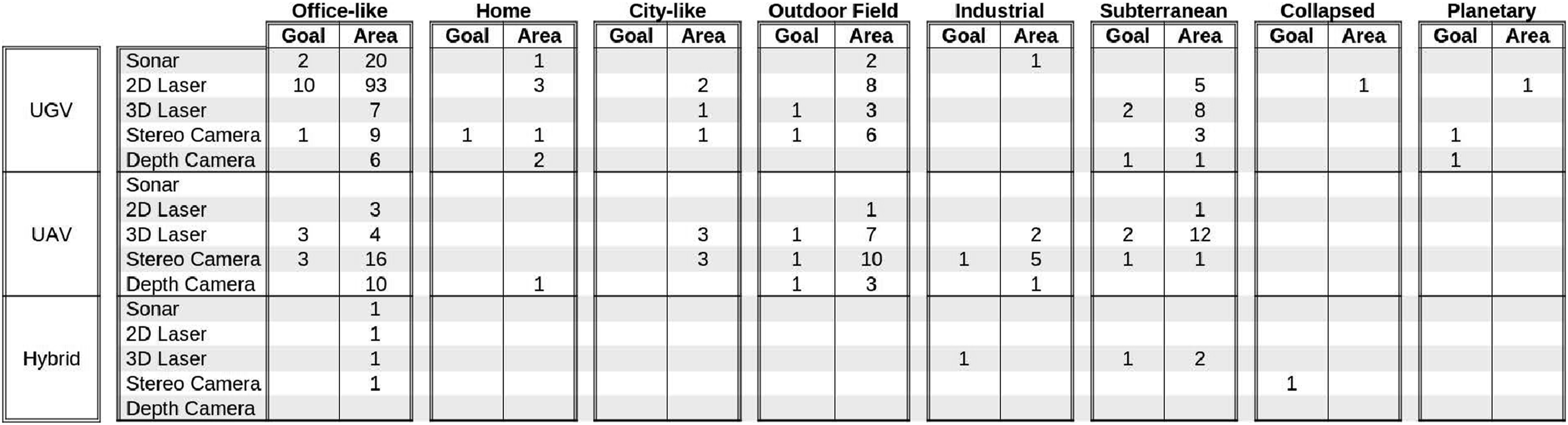

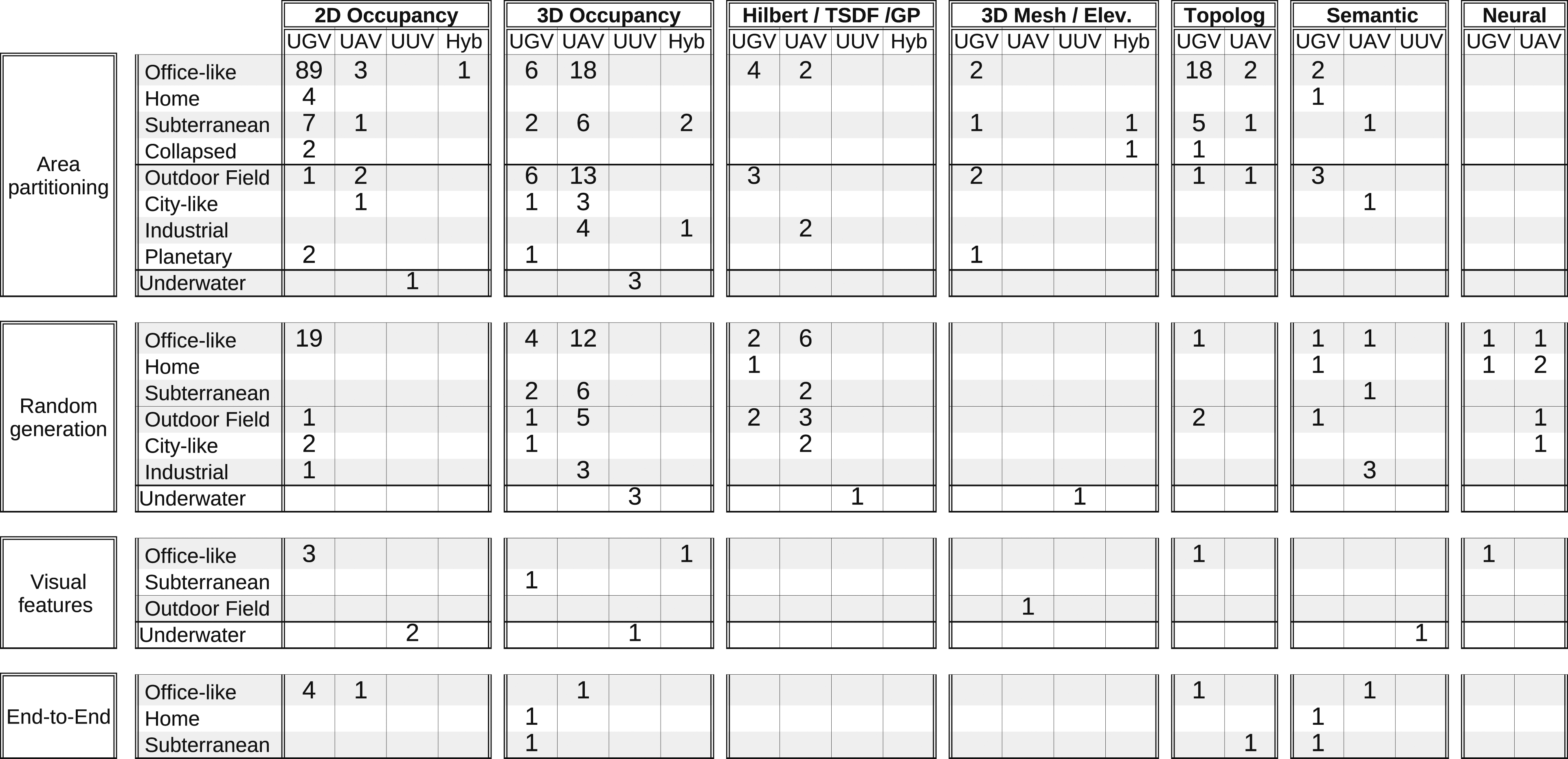

Figure 6. Recurrent patterns in application requirements.

The tables in Figure 6 show the most recurrent patterns of application requirements in the selected primary studies.

It should be noted that the various types of environment included in the Taxonomy of Figure 3 result from the analysis of the experimental scenarios described in the primary studies. The large majority of the proposed exploration strategies are tested in only one environment, either real or simulated, with only one type of sensor and platform. This does not necessarily mean that the same strategy is not suitable for the exploration of other types of environments with different sensors and platforms. Some authors claim that their approach is generic, but do not provide evidence of it.

Each table corresponds to a type of environment and is structured in three main rows, which correspond to different types of robotic platforms. For the sake of clarity, facet UGV groups both single UGV and multi-UGV systems, facet UAV groups both single UAV and multi-UAV systems, while facet Hybrid refers to hybrid UGV and UAV systems. For the same reason, we have omitted the Underwater environment because we have found a limited number of approaches. Each of the three main rows groups four rows, which correspond to the different types of sensors. Here, Depth Camera also includes ToF Camera and Structured Light Camera. Each table has two columns, which refer to the type of task, that is, Goal-guided and Area coverage. The number in each cell indicates how many primary studies address the specific combination of application requirements.

The majority of approaches have been tested in office-like indoor buildings (65%), where research labs are typically located, with both single and multi-robot systems of UGVs and UAVs equipped with a wide variety of sensors. In particular, 2D Lidars (54%) are popular because they have an affordable price and good performance in indoor environments. Similarly, depth cameras (31%) combine the advantage of low cost with the possibility of acquiring semantic information about the scene from RGB images. Sonar (11%) has been used in office-like environments mostly for historical reasons.

A number of approaches have been tested in outdoor fields (15%). Indeed, several robotics applications, such as search and rescue, disaster response, agriculture, and monitoring, require the employment of robots in open-air environments.

Subterranean environments are also well represented in our SMS (12%). According to Azpúrua et al. (2023), a variety of sensors can be used for the exploration of subterranean environments. Interestingly, our SMS shows a significant prevalence of 3D Lasers (73%), even though they are the most expensive and energy-demanding type of sensor. This is due to the many advantages offered by LiDAR sensors, such as being active sensors with high range, high accuracy, and high density. Other sensors that are commonly used in subterranean environments are 2D Lasers (16%), Stereo Cameras (14%), Thermal Cameras (8%), and ToF Cameras (5%).

Only a few approaches have been tested in home environments (3%). This is probably due to the difficulties in arranging the typical home furniture in a research laboratory and in conducting experiments in a real home environment due to safety constraints. Nevertheless, service robots for home environments, such as floor cleaning devices, require the ability to build a map autonomously to perform their tasks efficiently without human support. Home environments present challenging characteristics (e.g., moving and movable objects, changing lighting conditions) that demand robust and cost-effective solutions to autonomous robot exploration. The existing gap in the analyzed studies will certainly be filled in the near future, given the development of robotic systems working alongside humans in domestic environments as robot caregivers or collaborative humanoid assistants.

Surprisingly, we have found very few approaches for the autonomous exploration of collapsed buildings (1%). At the same time, we have encountered several approaches for search & rescue tasks that have been tested in office-like or outdoor environments (69%). Certainly, this is due again to the difficulties in conducting experiments in real environments.

Regarding planetary exploration (1%), our SMS reports only a few occurrences since these kinds of studies are a niche in the research community. Moreover, most of the time, the exploration is not completely autonomous since a remote operator usually provides the plan the robot needs to follow on a global map.

A few approaches (7% in total) have been tested in more than one environment, such as Office-like and Outdoor Field (4%), Office-like and Subterranean (2%), and Outdoor Field and Industrial Plant (1%). Only 2% of the approaches address the problem of exploring a dynamic environment.

Relatively few approaches enable the robot to perform tasks other than simple area mapping during an exploration mission: Search for Objects (5%), Search and Rescue (4%), and Target Pursuing (3%). This is because, typically, for more complex missions, it is assumed that the map of the environment has been built first.

To conclude the analysis of application requirements, it should be noted that only 20% of approaches are based on multi-robot systems.

5.2.2. Exploration strategies

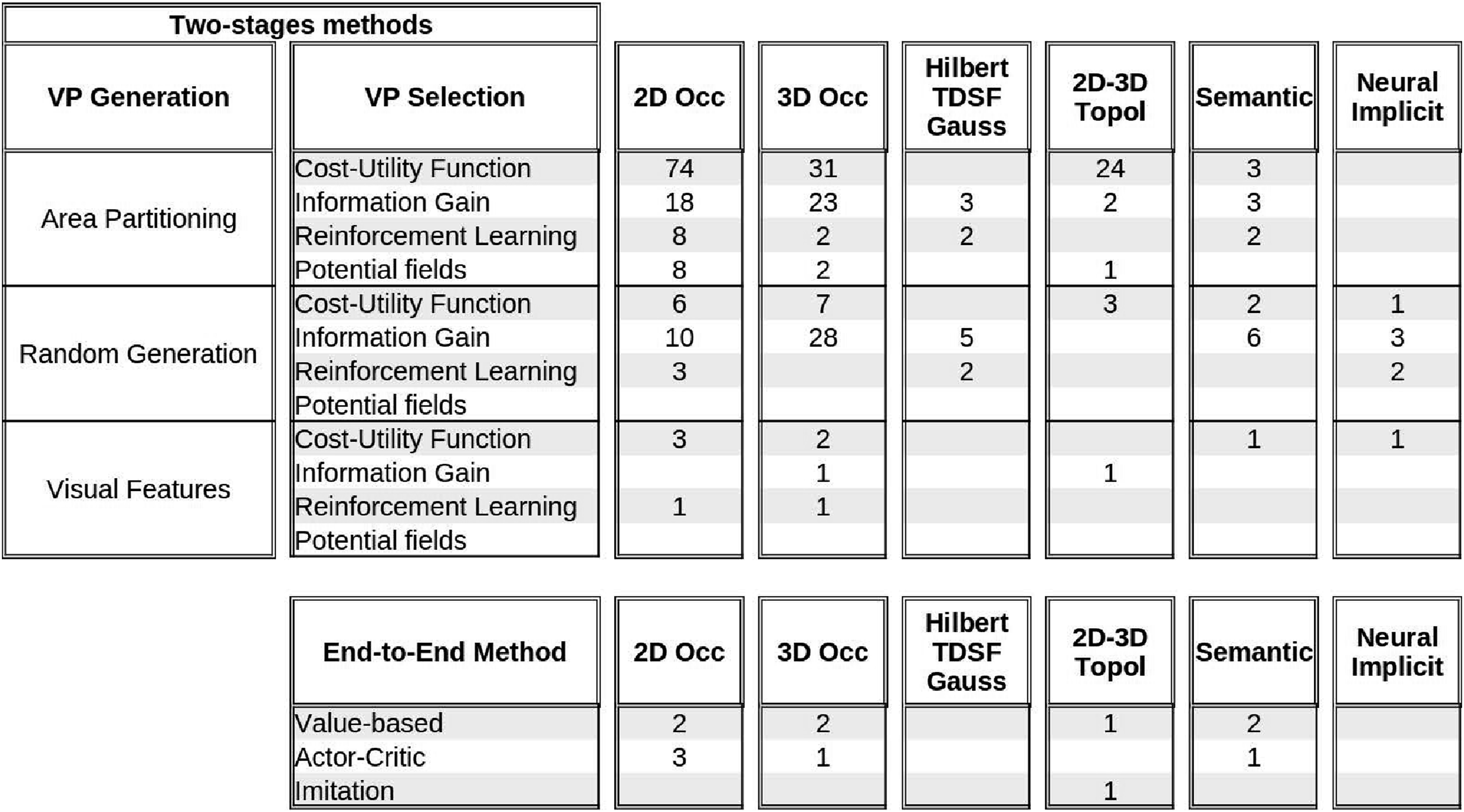

The tables in the upper part of Figure 7 show the most recurrent patterns among the facets used to classify two-stage exploration strategies documented in the selected primary studies.

Figure 7. Recurrent patterns in two-stage and end-to-end exploration strategies.

For each type of map, each row reports the number of primary studies for each viewpoint generation and viewpoint selection method. We have grouped Hilbert Maps, Truncated Signed Distance Field, and Gaussian Processes in a single table because they are variants of volumetric maps. We have grouped 2D Topological and 3D Topological maps because the latter have been used in a very limited number of approaches. Finally, we have grouped 3D Mesh and Elevation maps because they are specific types of 3D geometric maps.

As described in Section 4.2, we have grouped the facets Frontier Detection, Geometric features, Voronoi Graph, and Ergodic trajectories in a single category for VP Generation named Area partitioning, as they represent different techniques for partitioning the free space into regions where promising viewpoints can be found. Similarly, we have grouped the facets Random Sampling and Rapidly-exploring Random Trees in a single category named Random generation.
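The grouping described above can be expressed as a simple lookup table. The following is a minimal sketch, not the tooling used in this study: the names `VP_GENERATION_CATEGORY` and `count_by_category` are hypothetical, and facets that are not grouped (e.g., Visual features) are assumed to keep their own name as category.

```python
from collections import Counter

# Facet -> aggregated VP Generation category, as listed in the text.
VP_GENERATION_CATEGORY = {
    "Frontier Detection": "Area partitioning",
    "Geometric features": "Area partitioning",
    "Voronoi Graph": "Area partitioning",
    "Ergodic trajectories": "Area partitioning",
    "Random Sampling": "Random generation",
    "Rapidly-exploring Random Trees": "Random generation",
}


def count_by_category(facets):
    """Count studies per aggregated category.

    `facets` holds one VP Generation facet label per primary study.
    Labels without an entry in the table are counted under their own name
    (an assumption made for this illustration).
    """
    return Counter(VP_GENERATION_CATEGORY.get(facet, facet) for facet in facets)
```

For example, `count_by_category(["Frontier Detection", "Voronoi Graph", "Random Sampling"])` counts two studies under Area partitioning and one under Random generation.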

With regard to Area Partitioning techniques for viewpoint generation, the Cost-Utility Function emerges as the predominant approach for viewpoint selection in 2D topological maps (86%), 3D topological maps (83%), 3D geometric maps (62%), 2D occupancy grids (69%), and 3D occupancy grids (54%).

We contend that its prevalence is due to the ease of defining cost and utility parameters related to the structure of the environment (e.g., the distance to the centroid of a Voronoi region, the length of an ergodic trajectory, and the size of a frontier cluster) and of balancing different cost and utility parameters (e.g., the cost of a viewpoint is high if it is outside a room that has not yet been fully explored). In addition, Area Partitioning techniques have been in use since the foundation phase of the research on robot exploration. Conversely, when viewpoints are generated using Random sampling approaches, techniques based only on the computation of the Information Gain are more commonly adopted.
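To illustrate why cost and utility parameters are easy to define and balance, the following minimal sketch selects a viewpoint with a linear cost-utility function. It is an illustrative assumption, not the formulation of any specific primary study: the class `Viewpoint`, the functions `score` and `select_viewpoint`, the `weight` parameter, the use of the frontier cluster size as utility, and the use of the straight-line distance as cost are all hypothetical choices.

```python
import math
from dataclasses import dataclass


@dataclass
class Viewpoint:
    x: float
    y: float
    expected_gain: float  # assumed utility, e.g., size of the frontier cluster


def score(vp, robot_xy, weight=0.5):
    """Utility minus weighted cost; the cost is the straight-line distance."""
    cost = math.hypot(vp.x - robot_xy[0], vp.y - robot_xy[1])
    return vp.expected_gain - weight * cost


def select_viewpoint(candidates, robot_xy, weight=0.5):
    """Return the candidate viewpoint with the highest cost-utility score."""
    return max(candidates, key=lambda vp: score(vp, robot_xy, weight))
```

Under these assumptions, a larger `weight` favors nearby viewpoints, while `weight=0` reduces the selection to choosing the viewpoint with the highest expected gain.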

Interestingly, Visual features viewpoint generation is employed in relatively few works (4%). Only one of them generates Semantic maps (for Underwater environments) (Girdhar and Dudek, 2016) and only one generates 2D Topological maps (for Office-like environments). We argue that other approaches for Office-like environments could be extended to exploit visual features also for the identification of topological elements of the environment and their semantics (rooms, corridors, passages).

As one would reasonably expect, 2D/3D Topological maps are mostly used in combination with Area Partitioning viewpoint generation. Viewpoint selection approaches based on Potential Field (4%) and Reinforcement Learning (7%) have been applied to robotic exploration relatively recently and are primarily associated with 2D Occupancy maps and Area Partitioning.

On the contrary, Semantic maps are equally used in combination with Area Partitioning and Random viewpoint generation.

The tables in the lower part of Figure 7 show the most recurrent patterns among the facets used to classify end-to-end exploration strategies documented in the selected primary studies. Here, the three rows report the number of primary studies for each type of map and for each learning method. Our analysis of the primary studies shows that End-to-end approaches for exploration tasks are not yet numerous in the literature, especially for non-guided tasks, whose main goal is to cover a whole area. This is consistent with the analysis reported in the survey by Garaffa et al. (2023), where it is shown that End-to-end approaches are mostly used for tasks whose goal is to find or reach a specified target. Additionally, our analysis of the primary studies shows that most approaches are based on traditional types of maps, that is, occupancy grids and topological maps. Unsurprisingly, some End-to-end approaches exploit Semantic maps, in particular for search and rescue tasks, such as in Pham et al. (2018) and Petříček et al. (2019), because RL algorithms can efficiently deal with a large number of states.

5.2.3. Correlations between application requirements and exploration strategies

In this section, we aim to analyze the recurrent correlations between application requirements and exploration strategies.

For this purpose, Figure 8 shows a table with seven main columns, one for each type of map, and four main rows. The first three rows correspond to VP Generation methods of two-stage approaches and the fourth row corresponds to end-to-end methods. Thus, main rows and main columns correspond to categories of the exploration strategies taxonomy.

Figure 8. Recurrent associations between application requirements and exploration strategies.

Each main column aggregates four internal columns, one for each type of robotic platform, where Hyb stands for hybrid platforms. Here, we do not distinguish between single robots (e.g., UGV) and homogeneous multi-robot systems (e.g., UGV-UGV).

To improve the readability of the tables, we have omitted the columns without entries. Each main row aggregates the types of environments for which at least one exploration strategy was documented in the primary studies. Thus, internal columns and rows correspond to categories of the application requirements taxonomy.

Data in this table show that 2D and 3D occupancy grids have been used to explore every type of environment and with every type of robotic platform. This is probably because occupancy grids are among the first types of maps used in robotics and a significant variety of open source libraries are based on them.

Interestingly, 2D/3D Topological maps are mostly (65%) generated while exploring indoor environments with UGV platforms. This result seems reasonable for large structured environments that can be naturally described in terms of rooms, halls, and passages. Indeed, the large majority of these approaches use Area partitioning techniques for viewpoint generation. Besides Office-like environments, several approaches use 2D/3D Topological maps for the exploration of subterranean environments, as they are typically characterized by long underground tunnels, as discussed, for example, in O’Brien et al. (2023) for multi-UGV-based exploration or in Yang et al. (2021) for UAV-based exploration.

We found 20 primary studies in which Semantic maps are used for the exploration of almost every type of environment, with the exception of Collapsed and Planetary environments. Most of them refer to Area Mapping (35%) or Terrain Learning (35%) tasks, while the others refer to Search for Objects (20%) and Search and Rescue (10%) tasks.

Viewpoint generation based on Area partitioning is predominant (66%) for every type of environment and every type of platform, Random generation is well represented (27%), while Visual features are seldom exploited (4%).

Interestingly, in the primary studies we did not find any experimental scenarios evaluating random viewpoint generation techniques in Collapsed and Planetary environments. We do not see any specific reason for this result, which could be considered a gap to be filled by future research.

As expected, UAVs, whether single-robot or multi-robot systems, are almost always used for generating 3D maps. Similarly, UGVs are typically used to build 3D maps of open outdoor fields and subterranean environments.

Interestingly, some approaches use UGVs to generate 3D maps in office-like environments (5%). In these cases (e.g., Li et al. (2020c)), the onboard sensors mounted in a fixed position do not allow the robot to fully perceive the 3D environment, where objects may be only partially visible from a predefined height. For these approaches, the goal is not to build a complete map of the 3D environment but only a 3D map that the robot can use for subsequent navigation tasks.

Surprisingly, only one approach for 3D mapping with a UGV has been tested in home environments (Zurbrugg et al., 2022). This is probably due to the current availability of simple commercial devices like floor cleaners, which only need 2D maps for navigation. If multi-legged robots (e.g., robot pets), mobile manipulators, and humanoid robots become affordable commodities, they will certainly need autonomous exploration capabilities for building accurate 3D maps for a variety of navigation and manipulation tasks.

The random generation of viewpoints has been used in very few approaches for building topological maps because structured techniques (such as Area partitioning) perform better in terms of efficiency and map completeness.

End-to-end approaches have been mostly developed for UGV platforms in easily accessible environments, that is, Office-like and Home environments, where the experimental tests can be conveniently performed with real robots. One possible reason is that end-to-end methods tested in the real world typically use computationally heavy CNNs, which require adequate onboard computing resources.

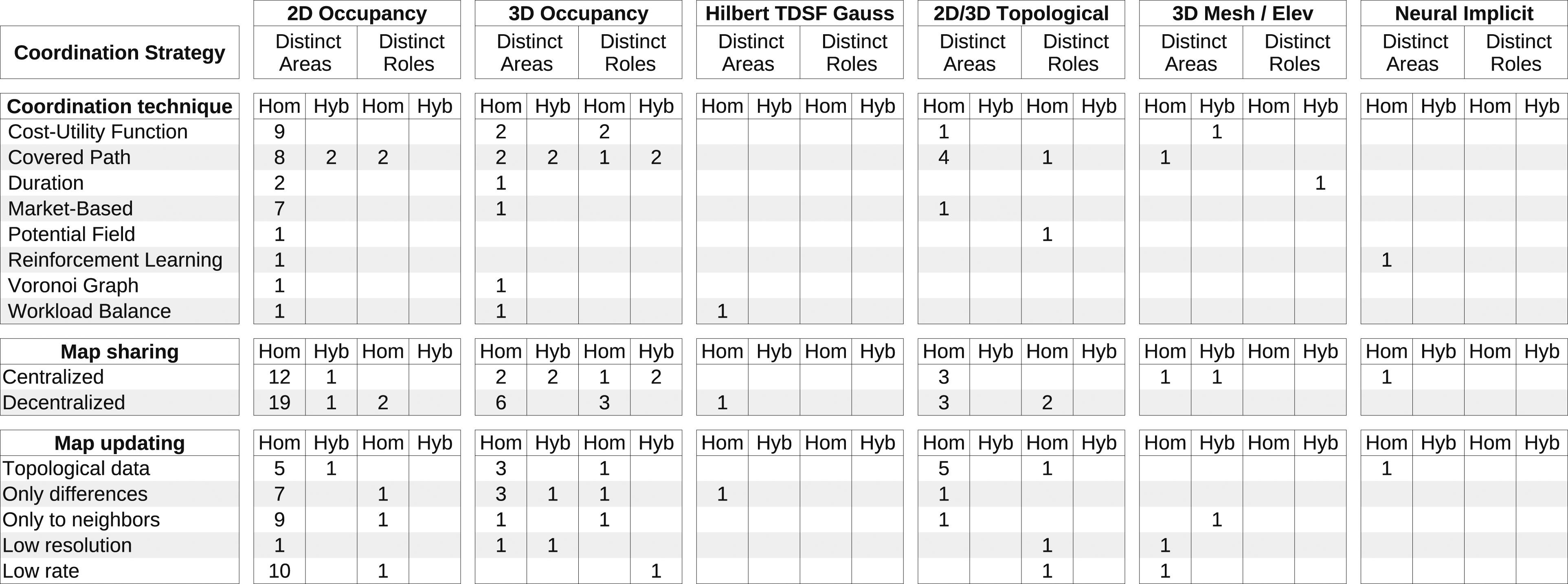

5.2.4. Multi-robot coordination

The table in Figure 9 shows the most recurrent patterns related to multi-robot coordination in exploration tasks. The table has six main columns corresponding to the map types defined in the exploration strategies taxonomy (see Figure 4). Semantic maps are not included in the table because we have not found any multi-robot exploration approach that produces a semantic map. For each map type, two distinct columns correspond to different coordination strategies, that is, Distinct Areas and Distinct Roles, which are further detailed in two columns indicating the type of platform, either homogeneous or hybrid.

Figure 9. Recurrent patterns in coordination strategies for multi-robot exploration.

The whole table is structured into three main rows, corresponding to the Coordination technique, the Map sharing, and the Map updating methods.

Data in this table show that the coordination strategies employed for multi-robot exploration are mainly obtained with robots allocated in distinct areas of the environment (83%) rather than with distinct roles (17%). As expected, this prevalence is associated with the prevalence of homogeneous multi-robot systems (87%) over hybrid multi-robot systems (13%). Nevertheless, there are coordination approaches that assign distinct roles to robots with the same type of platform, as in the work by Mahdoui et al. (2020), where a specific robot of the team plays the role of leader and is in charge of making cooperative decisions. Similarly, there are approaches that assign the exploration of different areas to robots with different platforms, as in the case of subterranean environments that require hybrid multi-robot systems with heterogeneous capabilities (Best et al., 2023).

In addition, it can be seen that the most employed coordination strategies, regardless of the type of map used, optimize the covered path (40%) and some cost-utility function (25%). It is worth highlighting that a significant number of studies (13%) employed market-based techniques to coordinate the robots during the exploration.

With regard to Map sharing, there is a slight prevalence of Decentralized (59%) over Centralized (41%) methods. This prevalence is higher for 2D Occupancy grids and Topological maps. 2D Occupancy maps are the most commonly used map type when robots are tasked with exploring different areas, and they are mostly shared uncompressed at a low communication rate or only with nearby robots.

It seems reasonable that Neural implicit maps are not commonly used for the exploration by multi-robot systems because RL algorithms require adequate onboard computing resources. Notably, only the approach proposed by Yang et al. (2024) builds a topological variant of Neural implicit maps, which are stored on a central server.

Similarly, it seems reasonable that semantic maps are not commonly used in multi-robot exploration because they typically store a large amount of information about the environment characteristics, which would require a high communication bandwidth among robots.

Interestingly, there is a significant number of approaches that share topological data used only for coordination, which are extracted from locally updated 2D / 3D Occupancy grids.

5.3. Exploration strategies evaluation

In this section, we complement the analysis and classification of the exploration strategies with information about the type of experimental validation documented in the primary studies and with the list of used simulators (see Table 13).

We categorized the studies based on whether they were tested only in simulated environments, only through real robot experiments, or both (see Table 14).

Our analysis shows that one third of the primary studies report experimental evaluations of the proposed exploration strategy conducted only in simulated scenarios, while 10% of the strategies have been tested only in real scenarios. Both approaches have strengths and limitations. On one side, experiments with real robots provide critical insights into the practical challenges and performance of the strategies in real-world settings. Real-robot experiments help validate the robustness and applicability of the strategies, accounting for real-world factors such as sensor drift, dynamic obstacles, and varying environmental conditions. On the other side, simulation tools allow researchers to test their methods in a variety of controlled and repeatable environments, facilitating rapid prototyping and initial validation. Simulations are particularly useful for evaluating performance under various hypothetical scenarios and large-scale environments that may be impractical to test in reality.

While the majority of primary studies report experimental evaluations conducted both in simulation and with real robots, typically the problem of transferring the results of fine tuning of relevant parameters from a simulated to a real robot is not specifically addressed. As pointed out by Garaffa et al. (2023), a challenging problem is the difficulty in transferring behavior learned through simulation to real-world scenarios, especially in the case of exploration strategies for multi-robot systems based on reinforcement learning.