Abstract

This article presents a robotic pick-and-place system that is capable of grasping and recognizing both known and novel objects in cluttered environments. The key new feature of the system is that it handles a wide range of object categories without needing any task-specific training data for novel objects. To achieve this, it first uses an object-agnostic grasping framework to map from visual observations to actions: inferring dense pixel-wise probability maps of the affordances for four different grasping primitive actions. It then executes the action with the highest affordance and recognizes picked objects with a cross-domain image classification framework that matches observed images to product images. Since product images are readily available for a wide range of objects (e.g., from the web), the system works out-of-the-box for novel objects without requiring any additional data collection or re-training. Exhaustive experimental results demonstrate that our multi-affordance grasping achieves high success rates for a wide variety of objects in clutter, and our recognition algorithm achieves high accuracy for both known and novel grasped objects. The approach was part of the MIT–Princeton Team system that took first place in the stowing task at the 2017 Amazon Robotics Challenge. All code, datasets, and pre-trained models are available online at http://arc.cs.princeton.edu/

1. Introduction

A human’s remarkable ability to grasp and recognize unfamiliar objects with little prior knowledge of them is a constant inspiration for robotics research. This ability to grasp the unknown is central to many applications: from picking packages in a logistics center to bin-picking in a manufacturing plant; from unloading groceries at home to clearing debris after a disaster. The main goal of this work is to demonstrate that it is possible, and practical, for a robotic system to pick and recognize novel objects with very limited prior information about them (e.g., with only a few representative images scraped from the web).

Despite the interest of the research community, and despite its practical value, robust manipulation and recognition of novel objects in cluttered environments remains a largely unsolved problem. Classical solutions for robotic picking require recognition and pose estimation prior to model-based grasp planning, or require object segmentation to associate grasp detections with object identities. These solutions tend to fall short when dealing with novel objects in cluttered environments, because they rely on 3D object models that are not available and/or on large amounts of training data to achieve robust performance. Although there has been inspiring recent work on detecting grasps directly from RGB-D point clouds, as well as on learning-based recognition systems that handle the constraints of novel objects and limited data, these methods have yet to be proven under the constraints and at the accuracy required by a real task with heavy clutter, severe occlusions, and object variability.

In this article, we propose a system that picks and recognizes objects in cluttered environments. We have designed the system specifically to handle a wide range of objects novel to the system without gathering any task-specific training data from them.

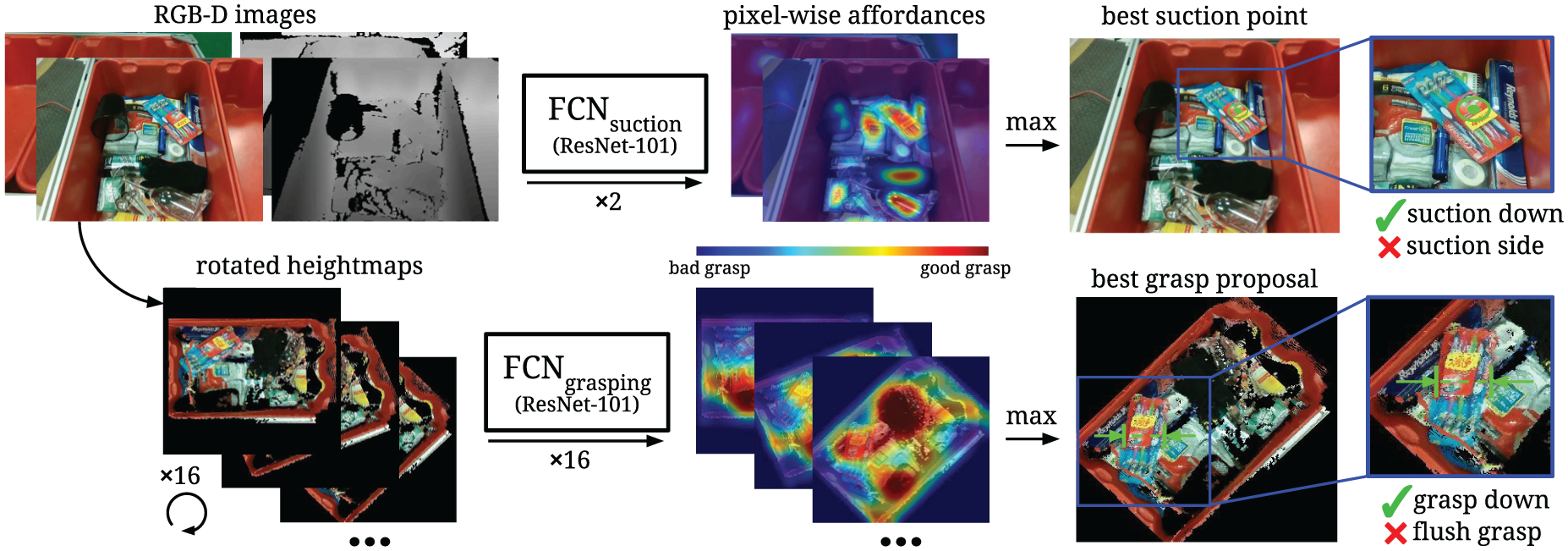

To make this possible, our system consists of two components. The first is a multi-affordance grasping framework that uses fully convolutional networks (FCNs) to take in visual observations of the scene and output dense predictions (arranged with the same size and resolution as the input data) measuring the affordance (or probability of picking success) for four different grasping primitive actions over a pixel-wise sampling of end-effector orientations and locations. The primitive action with the highest inferred affordance value determines the picking action executed by the robot. This picking framework operates without a priori object segmentation and classification and, hence, is agnostic to object identity.

The second component of the system is a cross-domain image matching framework for recognizing grasped objects by matching them to product images using a two-stream convolutional network (ConvNet) architecture. This framework adapts to novel objects without additional re-training. Both components work hand-in-hand to achieve robust picking performance of novel objects in heavy clutter.

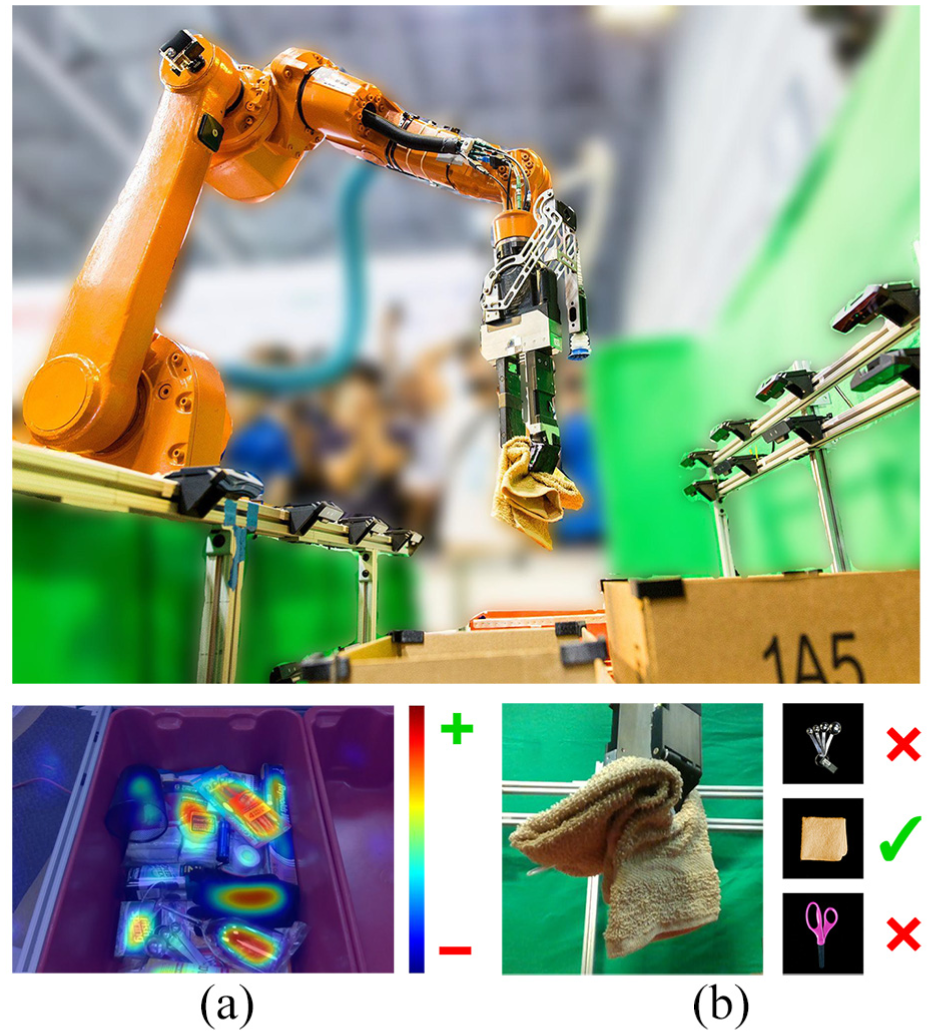

We provide exhaustive experiments, ablations, and comparisons to evaluate both components. We demonstrate that our affordance-based algorithm for grasp planning achieves high success rates for a wide variety of objects in clutter, and the recognition algorithm achieves high accuracy for known and novel grasped objects. These algorithms were developed as part of the MIT–Princeton Team system that took first place in the stowing task of the Amazon Robotics Challenge (ARC), being the only system to have successfully stowed all known and novel objects from an unstructured tote into a storage system within the allotted time frame. Figure 1 shows our robot in action during the competition.

Figure 1. Our picking system computing pixel-wise affordances for grasping over visual observations of bins full of objects (a), grasping a towel and holding it up away from clutter, and recognizing it by matching observed images of the towel (b) to an available representative product image. The key contribution is that the entire system works out of the box for novel objects (unseen in training) without the need for any additional data collection or re-training.

In summary, our main contributions are as follows.

An affordance-based object-agnostic perception framework to plan grasps using four primitive grasping actions for fast and robust picking. This utilizes FCNs for inferring dense pixel-wise affordances of each primitive (Section 4).

A perception framework for recognizing both known and novel objects using only product images without extra data collection or re-training. This utilizes a two-stream ConvNet to match images of picked objects to product images (Section 5).

A system combining these two frameworks for picking novel objects in heavy clutter.

All code, datasets, and pre-trained models are available online at http://arc.cs.princeton.edu. We also provide a video summarizing our approach at https://youtu.be/6fG7zwGfIkI.

2. Related work

In this section, we review works related to robotic picking systems. Works specific to grasping (Section 4) and recognition (Section 5) are in their respective sections.

2.1. Recognition followed by model-based grasping

A large number of autonomous pick-and-place solutions follow a standard two-step approach: object recognition and pose estimation followed by model-based grasp planning. For example, Jonschkowski et al. (2016) designed object segmentation methods over handcrafted image features to compute suction proposals for picking objects with a vacuum.

More recent data-driven approaches (Hernandez et al., 2016; Schwarz et al., 2017; Wong et al., 2017; Zeng et al., 2017) use ConvNets to provide bounding box proposals or segmentations, followed by geometric registration to estimate object poses, which ultimately guide handcrafted picking heuristics (Bicchi and Kumar, 2000; Miller et al., 2003). Nieuwenhuisen et al. (2013) improved many aspects of this pipeline by leveraging robot mobility, whereas Liu et al. (2012) added a pose correction stage when the object is in the gripper. These works typically require 3D models of the objects during test time, and/or training data with the physical objects themselves. This is practical for tightly constrained pick-and-place scenarios, but is not easily scalable to applications that consistently encounter novel objects, for which only limited data (i.e., product images from the web) is available.

2.2. Recognition in parallel with object-agnostic grasping

It is also possible to exploit local features of objects without object identity to efficiently detect grasps (Gualtieri et al., 2017; Lenz et al., 2015; Levine et al., 2018; Mahler et al., 2017; Morales et al., 2004; Pinto et al., 2017; Pinto and Gupta, 2016; Redmon and Angelova, 2015; ten Pas and Platt, 2015). Since these methods are agnostic to object identity, they better adapt to novel objects and experience higher picking success rates in part by eliminating error propagation from a prior recognition step. Matsumoto et al. (2016) applied this idea in a full picking system by using a ConvNet to compute grasp proposals, while in parallel inferring semantic segmentations for a fixed set of known objects. Although these pick-and-place systems use object-agnostic grasping methods, they still require some form of in-place object recognition in order to associate grasp proposals with object identities, which is particularly challenging when dealing with novel objects in clutter.

2.3. Active perception

The act of exploiting control strategies for acquiring data to improve perception (Bajcsy and Campos, 1992; Chen et al., 2011) can facilitate the recognition of novel objects in clutter. For example, Jiang et al. (2016) described a robotic system that actively rearranges objects in the scene (by pushing) in order to improve recognition accuracy. Other works (Jayaraman and Grauman, 2016; Wu et al., 2015) explored next-best-view-based approaches to improve recognition, segmentation, and pose estimation results. Inspired by these works, our system uses a form of active perception by using a grasp-first-then-recognize paradigm where we leverage object-agnostic grasping to isolate each object from clutter in order to significantly improve recognition accuracy for novel objects.

3. System overview

We present a robotic pick-and-place system that grasps and recognizes both known and novel objects in cluttered environments. We refer to “known” objects as those that are provided to the system at training time, both as physical objects and as representative product images (images of objects available on the web), whereas “novel” objects are provided only at test time in the form of representative product images.

The pick-and-place task presents us with two main perception challenges: (1) find accessible grasps of objects in clutter; and (2) match the identity of grasped objects to product images. Our approach and contributions to these two challenges are described in detail in Sections 4 and 5, respectively. For context, in this section we briefly describe the system that will use those two capabilities.

3.1. Overall approach

The system follows a grasp-first-then-recognize workflow. For each pick-and-place operation, it first uses FCNs to infer the pixel-wise affordances of four different grasping primitive actions: from suction to parallel-jaw grasps (Section 4). It then selects the grasping primitive action with the highest affordance, picks up one object, isolates it from the clutter, holds it up in front of cameras, recognizes its category, and places it in the appropriate bin. Although the object recognition algorithm is trained only on known objects, it is able to recognize novel objects through a learned cross-domain image matching embedding between observed images of held objects and product images (Section 5).

3.2. Advantages

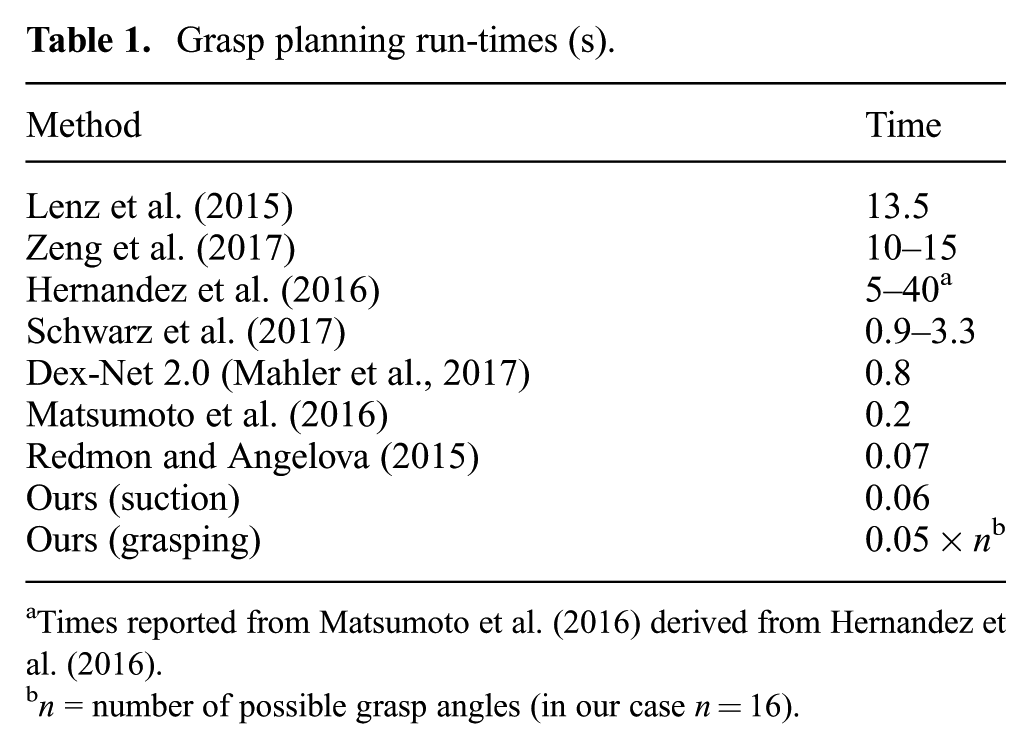

This system design has several advantages. First, the affordance-based grasping algorithm is model-free and agnostic to object identities and generalizes to novel objects without re-training. Second, the category recognition algorithm works without task-specific data collection or re-training for novel objects, which makes it scalable for applications in warehouse automation and service robots where the range of observed object categories is large and dynamic. Third, our grasping framework supports multiple grasping modes with a multi-functional gripper and, thus, handles a wide variety of objects. Finally, the entire processing pipeline requires only a few forward passes through deep networks and, thus, executes quickly (run-times are reported in Table 1).

Table 1. Grasp planning run-times (s).

Times reported from Matsumoto et al. (2016) derived from Hernandez et al. (2016).

3.3. System setup

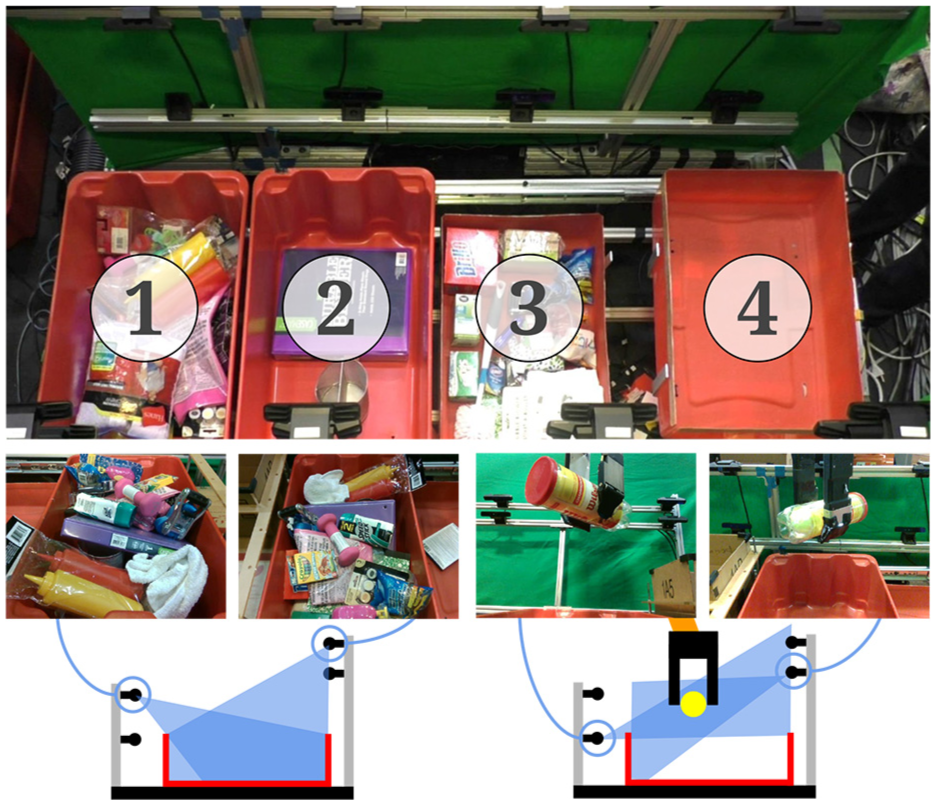

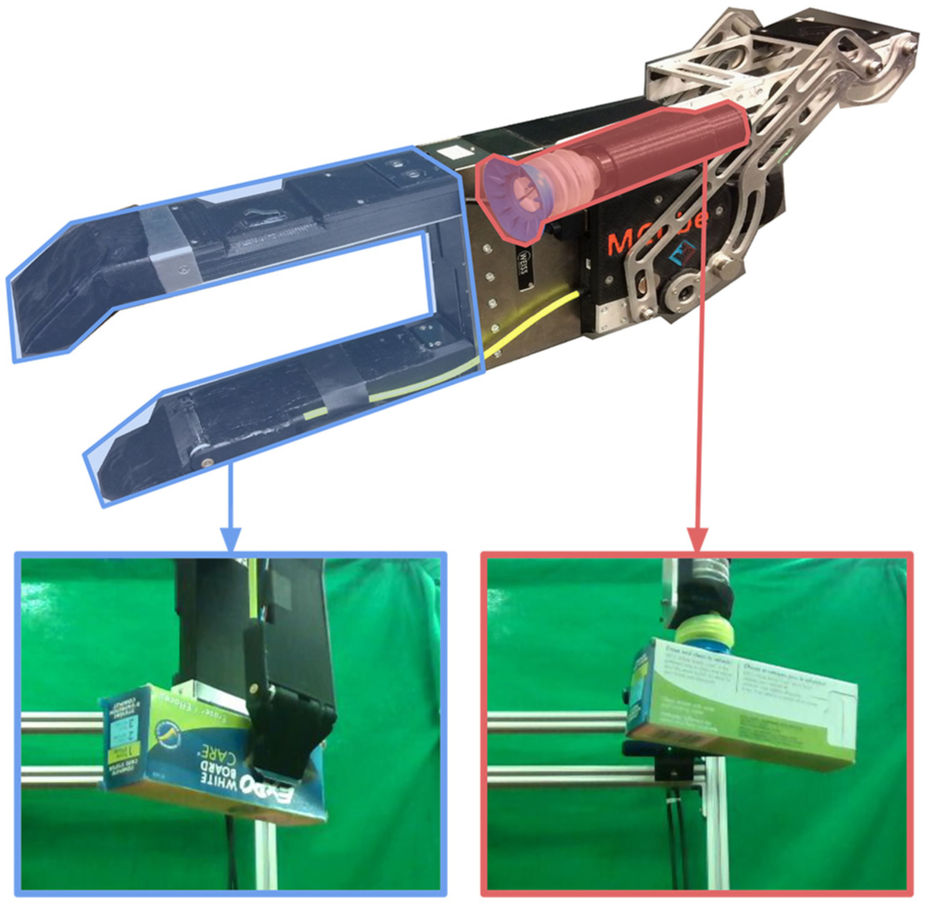

Our system features a six-degree-of-freedom (6DOF) ABB IRB 1600id robot arm next to four picking work cells. The robot arm’s end-effector is a multi-functional gripper with two fingers for parallel-jaw grasps and a retractable suction cup (Figure 3). This gripper was designed to function in cluttered environments: finger and suction cup length are specifically chosen such that the bulk of the gripper body does not need to enter the cluttered space.

Each work cell has a storage bin and four statically mounted RealSense SR300 RGB-D cameras (Figure 2): two cameras overlooking the storage bins are used to infer grasp affordances, whereas the other two pointing upwards towards the robot gripper are used to recognize objects in the gripper. For the two cameras used to infer grasp affordances, we find that placing them at opposite viewpoints of the storage bins provides good visual coverage of the objects in the bin. Adding a third camera did not significantly improve visual coverage. For the other two cameras used for object recognition, having them at opposite viewpoints enables us to immediately reconstruct a near-complete 3D point cloud of the object while it is being held in the gripper. These 3D point clouds are useful for planning object placements in the storage system.

Figure 2. The bin and camera setup. Our system consists of four units (top), where each unit has a bin with four stationary cameras: two overlooking the bin (bottom-left) are used for inferring grasp affordances whereas the other two (bottom-right) are used for recognizing grasped objects.

Figure 3. Multi-functional gripper with a retractable mechanism that enables quick and automatic switching between suction (pink) and grasping (blue).

Although our experiments were performed with this setup, the system was designed to be flexible for picking and placing between any number of reachable work cells and camera locations. Furthermore, all manipulation and recognition algorithms in this paper were designed to be easily adapted to other system setups.

4. Challenge I: Planning grasps with multi-affordance grasping

The goal of the first step in our system is to robustly grasp objects from a cluttered scene without relying on their object identities or poses. To this end, we define a set of four grasping primitive actions that are complementary to each other in terms of utility across different object types and scenarios, empirically broadening the variety of objects and orientations that can be picked with at least one primitive. Given RGB-D images of the cluttered scene at test time, we infer the dense pixel-wise affordances for all four primitives. A task planner then selects and executes the primitive with the highest affordance.
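The selection rule in the last sentence can be sketched in a few lines. This is a minimal illustration, assuming the affordances of each primitive are already available as a 2D array; the function name `select_best_action`, the primitive labels, and the dictionary layout are hypothetical and are not taken from the released code.

```python
import numpy as np


def select_best_action(affordance_maps):
    """Pick the primitive and pixel with the highest affordance.

    affordance_maps: dict mapping a primitive name to a 2D array of
    affordance values in [0, 1] (hypothetical layout for illustration).
    Returns (primitive_name, (row, col), affordance_value).
    """
    best = None
    for name, amap in affordance_maps.items():
        row, col = np.unravel_index(np.argmax(amap), amap.shape)
        value = float(amap[row, col])
        if best is None or value > best[2]:
            best = (name, (int(row), int(col)), value)
    return best


# Toy example with random maps; one strong response is injected by hand.
rng = np.random.default_rng(0)
maps = {name: 0.5 * rng.random((4, 6)) for name in
        ("suction-down", "suction-side", "grasp-down", "flush-grasp")}
maps["grasp-down"][2, 3] = 0.9
best_action = select_best_action(maps)
```

In the real system the suction affordances live on a 3D point cloud and the grasp affordances on rotated heightmaps, so this flat dictionary is only a simplification of the idea.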

4.1. Grasping primitives

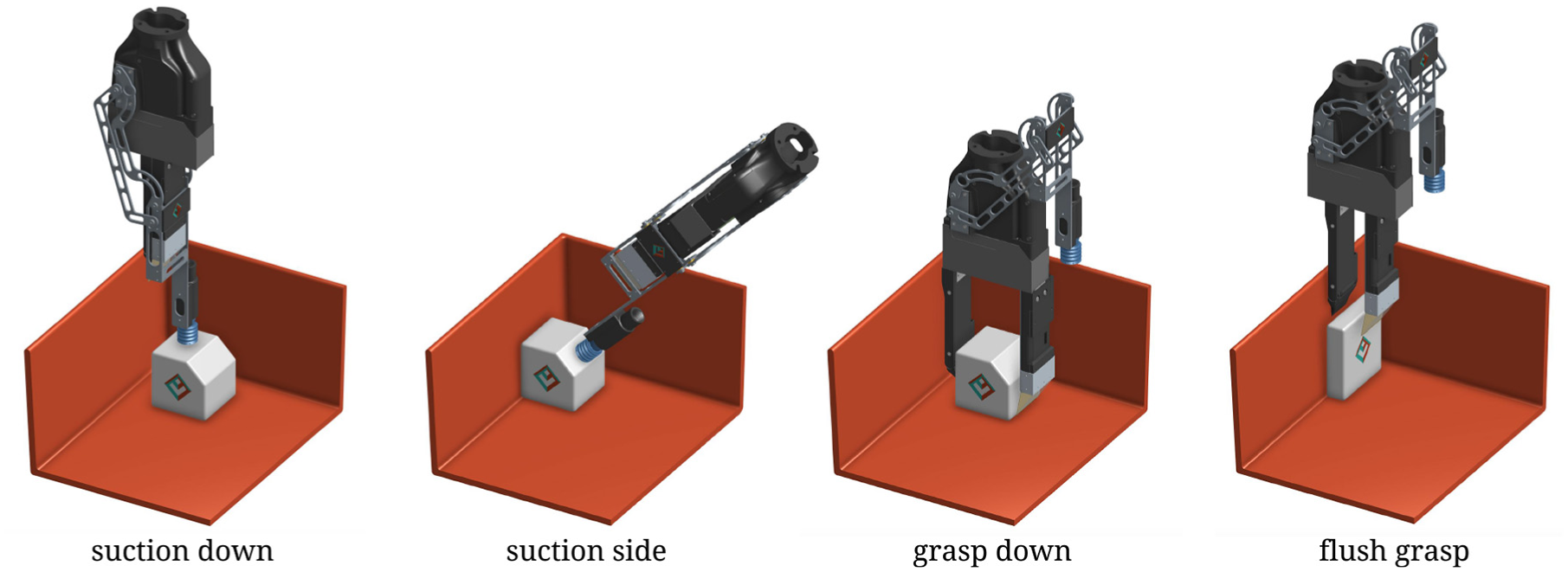

We define four grasping primitives to achieve robust picking for typical household objects. Figure 4 shows example motions for each primitive. Each of them is implemented as a set of guarded moves with collision avoidance using force sensors below the work cells. They also have quick success or failure feedback mechanisms using either flow sensing for suction or force sensing for grasping. Robot arm motion planning is automatically executed within each primitive with stable inverse kinematic-based controllers (Diankov, 2010). These primitives are as follows.

Figure 4. Multiple motion primitives for suction and grasping to ensure successful picking for a wide variety of objects in any orientation.

Suction down grasps objects with a vacuum gripper vertically. This primitive is particularly robust for objects with large and flat suctionable surfaces (e.g., boxes, books, wrapped objects), and performs well in heavy clutter.

Suction side grasps objects from the side by approaching with a vacuum gripper tilted at a fixed angle. This primitive is robust to thin and flat objects resting against walls, which may not have suctionable surfaces from the top.

Grasp down grasps objects vertically using the two-finger parallel-jaw gripper. This primitive is complementary to the suction primitives in that it is able to pick up objects with smaller, irregular surfaces (e.g., small tools, deformable objects), or made of semi-porous materials that prevent a good suction seal (e.g., cloth).

Flush grasp retrieves unsuctionable objects that are flush against a wall. The primitive is similar to grasp down, but with the additional behavior of using a flexible spatula to slide one finger in between the target object and the wall.

4.2. Learning affordances with FCNs

Given the set of pre-defined grasping primitives and RGB-D images of the scene, we train FCNs (Long et al., 2015) to infer the affordances for each primitive across a dense pixel-wise sampling of end-effector orientations and locations (i.e., each pixel correlates to a different position on which to execute the primitive). Our approach relies on the assumption that graspable regions can be deduced from local geometry and visual appearance. This is inspired by recent data-driven methods for grasp planning (Gualtieri et al., 2017; Lenz et al., 2015; Levine et al., 2018; Mahler et al., 2017; Morales et al., 2004; Pinto et al., 2017; Pinto and Gupta, 2016; Redmon and Angelova, 2015; Saxena et al., 2008), which do not rely on object identities or state estimation.

4.2.1. Inferring suction affordances

We define suction points as 3D positions where the vacuum gripper’s suction cup should come into contact with the object’s surface in order to successfully grasp it. Good suction points should be located on suctionable (e.g., non-porous) surfaces, and near the target object’s center of mass to avoid an unstable suction seal (e.g., particularly for heavy objects). Each suction proposal is defined as a suction point, its local surface normal (computed from the projected 3D point cloud), and its affordance value. Each pixel of an RGB-D image (with a valid depth value) maps surjectively to a suction point.

We train a fully convolutional residual network (ResNet-101 (He et al., 2016)) that takes a

Figure 5. Learning pixel-wise affordances for suction and grasping. Given multi-view RGB-D images, we infer pixel-wise suction affordances for each image with an FCN (top row). The inferred affordance value at each pixel describes the utility of suction at that pixel’s projected 3D location. We aggregate the inferred affordances onto a 3D point cloud, where each point corresponds to a suction proposal (down or side based on surface normals). In parallel, we merge RGB-D images into an orthographic RGB-D heightmap of the scene, rotate it by 16 different angles, and feed them each through another FCN (bottom row) to estimate the pixel-wise affordances of horizontal grasps for each heightmap. This effectively produces affordance maps for 16 different top-down grasping angles, from which we generate grasp down and flush grasp proposals. The suction or grasp proposal with the highest affordance value is executed.

Our FCN is trained over a manually annotated dataset of RGB-D images of cluttered scenes with diverse objects, where pixels are densely labeled positive, negative, or neither. Pixel regions labeled as neither are trained with 0 loss backpropagation. We train our FCNs by stochastic gradient descent with momentum, using fixed learning rates of

During testing, we feed each captured RGB-D image through our trained network to generate dense suction affordances for each view of the scene. As a post-processing step, we use calibrated camera intrinsics and poses to project the RGB-D data and aggregate the affordances onto a combined 3D point cloud. We then compute surface normals for each 3D point (using a local region around it), which are used to classify which suction primitive (down or side) to use for the point.
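The final classification step can be illustrated as follows. This is a sketch under stated assumptions: the text does not give the rule that separates the two suction primitives, so the 45° tilt threshold `max_tilt_deg` is hypothetical, as are the function name and the labels. Normals are assumed to be expressed in a frame whose +z axis points up and to be oriented away from the surface.

```python
import numpy as np


def classify_suction_primitive(normals, max_tilt_deg=45.0):
    """Label each surface normal as a suction-down or suction-side candidate.

    normals: (N, 3) array of surface normals in a frame whose +z axis points
    up (against gravity). max_tilt_deg is an assumed threshold.
    """
    normals = np.asarray(normals, dtype=float)
    unit = normals / np.linalg.norm(normals, axis=1, keepdims=True)
    # Angle between each normal and the vertical axis.
    cos_tilt = np.clip(unit[:, 2], -1.0, 1.0)
    tilt_deg = np.degrees(np.arccos(cos_tilt))
    return np.where(tilt_deg <= max_tilt_deg, "suction-down", "suction-side")


labels = classify_suction_primitive([[0.0, 0.0, 1.0],   # facing straight up
                                     [1.0, 0.0, 0.1]])  # nearly horizontal
```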

To handle objects that lack depth information, e.g., finely meshed objects or transparent objects, we use a simple hole-filling algorithm (Silberman et al., 2012) on the depth images, and project inferred affordance values onto the hallucinated depth. We filter out suction points from the background by performing background subtraction (Zeng et al., 2017) between the captured RGB-D image of the scene with objects and an RGB-D image of the scene without objects (captured automatically before any objects are placed into the picking work cells).

4.2.2. Inferring grasp affordances

Grasp proposals are represented by (1) a 3D position which defines the middle point between the two fingers during top-down parallel-jaw grasping, (2) an angle that defines the orientation of the gripper around the vertical axis along the direction of gravity, (3) the width between the gripper fingers during the grasp, and (4) its affordance value.

Two RGB-D views of the scene are aggregated into a registered 3D point cloud, which is then orthographically back-projected upwards in the gravity direction to obtain a “heightmap” image representation of the scene with both color (RGB) and height-from-bottom (D) channels. Each pixel of the heightmap represents a 2 mm × 2 mm vertical column of 3D space in the scene. Each pixel also correlates bijectively to a grasp proposal whose 3D position is naturally computed from the spatial 2D position of the pixel relative to the heightmap image and the height value at that pixel. The gripper orientation of the grasp proposal is always kept horizontal with respect to the frame of the heightmap.
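The heightmap construction can be sketched as below. It is a minimal illustration, assuming the registered point cloud is already expressed in a frame whose +z axis points up and that the bin extents are known; the function name `build_heightmap` and its parameters are hypothetical, and only the 2 mm pixel size comes from the text.

```python
import numpy as np


def build_heightmap(points, colors, x_range, y_range, z_bottom, pixel_size=0.002):
    """Project a colored point cloud into a top-down RGB-D heightmap.

    points: (N, 3) array in meters, in a frame whose +z axis points up.
    colors: (N, 3) uint8 array of RGB values, one per point.
    x_range, y_range: (min, max) extents of the bin in meters (assumed known).
    z_bottom: height of the bin floor; heights are measured from it.
    """
    points = np.asarray(points, dtype=float)
    colors = np.asarray(colors, dtype=np.uint8)
    width = int(round((x_range[1] - x_range[0]) / pixel_size))
    height = int(round((y_range[1] - y_range[0]) / pixel_size))

    cols = np.floor((points[:, 0] - x_range[0]) / pixel_size).astype(int)
    rows = np.floor((points[:, 1] - y_range[0]) / pixel_size).astype(int)
    inside = (cols >= 0) & (cols < width) & (rows >= 0) & (rows < height)
    rows, cols = rows[inside], cols[inside]
    heights = (points[inside, 2] - z_bottom).astype(np.float32)
    colors = colors[inside]

    # Keep the tallest point that falls into each 2 mm x 2 mm column.
    height_map = np.zeros((height, width), dtype=np.float32)
    np.maximum.at(height_map, (rows, cols), heights)

    # Color each pixel with the color of its tallest point.
    color_map = np.zeros((height, width, 3), dtype=np.uint8)
    on_top = heights == height_map[rows, cols]
    color_map[rows[on_top], cols[on_top]] = colors[on_top]
    return color_map, height_map


# Two points share one column; the taller (green) one should win.
pts = [[0.001, 0.001, 0.05], [0.001, 0.001, 0.10], [0.005, 0.003, 0.02]]
rgb = [[255, 0, 0], [0, 255, 0], [0, 0, 255]]
color_map, height_map = build_heightmap(pts, rgb, (0.0, 0.01), (0.0, 0.006),
                                        z_bottom=0.0)
```

Pixels that receive no points keep a height of zero here; the handling of such no-depth regions in the actual system is described separately in the text.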

Analogous to our deep network inferring suction affordances, we feed this RGB-D heightmap as input to a fully convolutional ResNet-101 (He et al., 2016), which densely infers affordance values (between 0 and 1) for each pixel, thereby for all top-down parallel-jaw grasping primitives executed with a horizontally orientated gripper across all 3D locations in the heightmap of the scene sampled at pixel resolution. Visualizations of these densely labeled affordance maps are shown as heat maps in the second row of Figure 5. By rotating the heightmap of the scene with

We train our FCN over a manually annotated dataset of RGB-D heightmaps, where each positive and negative grasp label is represented by a pixel on the heightmap as well as an angle indicating the preferred gripper orientation. We trained this FCN with the same optimization parameters as that of the FCN used for inferring suction affordances.

During post-processing, the width between the gripper fingers for each grasp proposal is determined by using the local geometry of the 3D point cloud. We also use the location of each proposal relative to the bin to classify which grasping primitive (down or flush) should be used: flush grasp is executed for pixels located near the sides of the bins; grasp down is executed for all other pixels. To handle objects without depth, we triangulate no-depth regions in the heightmap using both RGB-D camera views of the scene, and fill in these regions with synthetic height values of 3 cm prior to feeding into the FCN. We filter out inferred grasp proposals in the background by using background subtraction with the RGB-D heightmap of an empty work cell.
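Two of these post-processing rules lend themselves to a short sketch. The 3 cm fill height comes from the text; the wall margin `wall_margin_px` (25 pixels, i.e. 5 cm at 2 mm per pixel) is a hypothetical value, since the text only says "near the sides of the bins". The mask of no-depth pixels is assumed to be given, and the function names are illustrative.

```python
import numpy as np


def fill_missing_height(height_map, missing_mask, fill_height_m=0.03):
    """Return a copy of the heightmap with no-depth pixels set to 3 cm."""
    filled = height_map.copy()
    filled[missing_mask] = fill_height_m
    return filled


def classify_grasp_primitive(row, col, map_shape, wall_margin_px=25):
    """Choose flush grasp near the bin walls and grasp down elsewhere.

    wall_margin_px is an assumed margin, not a value from the paper.
    """
    num_rows, num_cols = map_shape
    distance_to_wall = min(row, col, num_rows - 1 - row, num_cols - 1 - col)
    return "flush-grasp" if distance_to_wall < wall_margin_px else "grasp-down"


heights = np.zeros((2, 2))
missing = np.array([[True, False], [False, False]])
filled = fill_missing_height(heights, missing)
near_wall = classify_grasp_primitive(10, 100, (200, 300))
center = classify_grasp_primitive(100, 150, (200, 300))
```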

4.3. Other architectures for parallel-jaw grasping

A significant challenge during the development of our system was designing a deep network architecture for inferring dense affordances for parallel-jaw grasping that (1) supports various gripper orientations and (2) converges during training with fewer than 2,000 manually labeled images. It took several iterations of network architecture designs before discovering the one that worked (described previously). Here, we briefly review some deprecated architectures and their primary drawbacks.

Parallel trunks and branches (

copies)

This design consists of

One trunk, split into

parallel branches

This design consists of a single FCN architecture, which contains a multi-modal ResNet-101 trunk followed by a split into

One trunk, rotate, one branch

This design consists of a single FCN architecture, which contains a multi-modal ResNet-101 trunk, followed by a spatial transform layer (Jaderberg et al., 2015) to rotate the intermediate feature map from the trunk with respect to an input grasp angle (such that the gripper orientation is aligned horizontally to the feature map), followed by a branch with three spatial convolution layers, spatially bilinearly upsampled, and softmaxed to output a single affordance map for the input grasp angle. This design is even more lightweight than the previous architecture in terms of GPU memory consumption, and it performs well for grasping angles with sufficient training samples, but it continues to perform poorly for grasping angles with very few training samples (fewer than 100).

One trunk and branch (rotate

times)

This is the final network architecture design as proposed above, which differs from the previous design in that the rotation occurs directly on the input image representation prior to feeding through the FCN (rather than in the middle of the architecture). This enables the entire network to share visual features across different grasping orientations, enabling it to generalize to grasping angles for which there are very few training samples.
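The rotate-the-input idea can be sketched without any deep learning library. In the illustration below, `fcn` is a stand-in callable for the trained network, `rotate_nearest` is a simple nearest-neighbor rotation written for this example, and the choice of spreading the 16 angles evenly over a half-turn (`angle_span_deg=180.0`) is an assumption rather than something stated in the text. Mapping the winning pixel back into the unrotated scene frame is omitted.

```python
import numpy as np


def rotate_nearest(image, angle_deg):
    """Rotate a 2D (or HxWxC) array about its center, nearest-neighbor sampling."""
    h, w = image.shape[:2]
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    theta = np.deg2rad(angle_deg)
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: for every output pixel, find the source pixel.
    src_x = np.cos(theta) * (xs - cx) + np.sin(theta) * (ys - cy) + cx
    src_y = -np.sin(theta) * (xs - cx) + np.cos(theta) * (ys - cy) + cy
    src_x = np.rint(src_x).astype(int)
    src_y = np.rint(src_y).astype(int)
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.zeros_like(image)
    out[valid] = image[src_y[valid], src_x[valid]]
    return out


def infer_grasp_affordances(heightmap, fcn, num_angles=16, angle_span_deg=180.0):
    """Run one shared network on num_angles rotated copies of the input.

    fcn: any callable mapping a heightmap to a same-sized affordance map
    (a stand-in for the trained network). angle_span_deg is an assumption.
    Returns the angles and an array of shape (num_angles, H, W).
    """
    angles = np.arange(num_angles) * (angle_span_deg / num_angles)
    maps = np.stack([fcn(rotate_nearest(heightmap, a)) for a in angles])
    return angles, maps


def best_grasp(angles, maps):
    """Return (angle_deg, (row, col), value); the pixel is in the rotated frame."""
    k, row, col = np.unravel_index(np.argmax(maps), maps.shape)
    return float(angles[k]), (int(row), int(col)), float(maps[k, row, col])


# Toy heightmap with a single peak; the identity function replaces the FCN.
toy = np.zeros((5, 5))
toy[2, 2] = 1.0
angles, maps = infer_grasp_affordances(toy, fcn=lambda hm: hm)
```

Because the same `fcn` sees every rotated copy, whatever it learns for one gripper orientation applies to all of them, which is the sharing effect the paragraph above describes.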

4.4. Task planner

Our task planner selects and executes the suction or grasp proposal with the highest affordance value. Prior to this, affordance values are scaled by a factor

Suction first, grasp later

We empirically find suction to be more reliable than parallel-jaw grasping when picking in scenarios with heavy clutter (10 or more objects). Among several factors, the key reason is that suction is significantly less intrusive than grasping. Hence, to reflect a greedy picking strategy that initially favors suction over grasping,

Avoid repeating unsuccessful attempts

It is possible for the system to get stuck repeatedly executing the same (or similar) suction or grasp proposal because no change is made to the scene (and, hence, affordance estimates remain the same). Therefore, after each unsuccessful suction or parallel-jaw grasping attempt, the affordances of all proposals for the same primitive action within a 2 cm radius of the unsuccessful attempt are set to 0.
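A minimal sketch of this suppression rule follows. The `Proposal` container and the function name are hypothetical; only the 2 cm radius and the same-primitive condition come from the text.

```python
import math
from dataclasses import dataclass


@dataclass
class Proposal:
    primitive: str        # e.g. "suction-down" or "grasp-down"
    position: tuple       # (x, y, z) in meters
    affordance: float


def suppress_near_failure(proposals, failed, radius_m=0.02):
    """Zero the affordance of same-primitive proposals near a failed attempt."""
    for p in proposals:
        same_primitive = p.primitive == failed.primitive
        if same_primitive and math.dist(p.position, failed.position) <= radius_m:
            p.affordance = 0.0
    return proposals


failed = Proposal("suction-down", (0.10, 0.10, 0.05), 0.9)
candidates = [
    failed,
    Proposal("suction-down", (0.11, 0.10, 0.05), 0.8),  # 1 cm away: suppressed
    Proposal("suction-down", (0.20, 0.10, 0.05), 0.7),  # 10 cm away: kept
    Proposal("grasp-down", (0.10, 0.10, 0.05), 0.6),    # other primitive: kept
]
suppress_near_failure(candidates, failed)
```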

Encouraging exploration upon repeat failures

The planner re-weights grasping primitive actions

Leveraging dense affordances for speed picking

Our FCNs densely infer affordances for all visible surfaces in the scene, which enables the robot to attempt multiple different suction or grasping proposals (at least 3 cm apart from each other) in quick succession until at least one of them is successful (given by immediate feedback from flow sensors or gripper finger width). This improves picking efficiency.
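One way to build such a queue of attempts is a greedy selection by affordance with a minimum spacing, sketched below. The 3 cm separation comes from the text; the greedy strategy itself, the function name, and the cap `max_attempts` are assumptions made for illustration.

```python
import math


def pick_spaced_proposals(proposals, min_separation_m=0.03, max_attempts=3):
    """Greedily choose high-affordance proposals that are mutually far apart.

    proposals: list of ((x, y, z), affordance) tuples, positions in meters.
    max_attempts is a hypothetical cap on attempts per planning cycle.
    """
    ranked = sorted(proposals, key=lambda item: item[1], reverse=True)
    chosen = []
    for position, affordance in ranked:
        if all(math.dist(position, other) >= min_separation_m
               for other, _ in chosen):
            chosen.append((position, affordance))
        if len(chosen) == max_attempts:
            break
    return chosen


queue = pick_spaced_proposals([
    ((0.10, 0.10, 0.05), 0.95),
    ((0.11, 0.10, 0.05), 0.90),  # only 1 cm from the best proposal: skipped
    ((0.20, 0.10, 0.05), 0.80),
    ((0.10, 0.30, 0.05), 0.60),
])
```

The robot would then try the queued proposals in order and stop at the first one for which the flow or finger-width feedback reports success.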

5. Challenge II: Recognizing novel objects with cross-domain image matching

After successfully grasping an object and isolating it from clutter, the goal of the second step in our system is to recognize the identity of the grasped object.

As we encounter both known and novel objects, and we have only product images for the novel objects, we address this recognition problem by retrieving the best match among a set of product images. Of course, observed images and product images can be captured in significantly different environments in terms of lighting, object pose, background color, post-process editing, etc. Therefore, we require an algorithm that is able to find the semantic correspondences between images from these two different domains. While this is a task that appears repeatedly in a variety of research topics (e.g., domain adaptation, one-shot learning, meta-learning, visual search, etc.), in this paper we refer to it as a cross-domain image matching problem (Bell and Bala, 2015; Saenko et al., 2010; Shrivastava et al., 2011).

5.1. Metric learning for cross-domain image matching

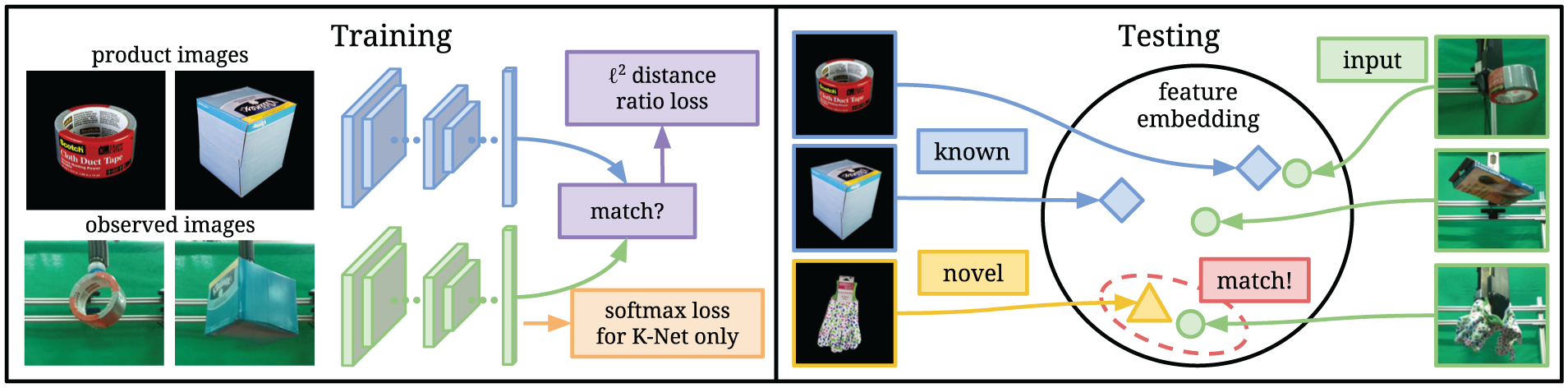

To perform the cross-domain image matching between observed images and product images, we learn a metric function that takes in an observed image and a candidate product image and outputs a distance value that models how likely the images are of the same object. The goal of the metric function is to map both the observed image and product image onto a meaningful feature embedding space so that smaller

We model this metric function with a two-stream ConvNet architecture where one stream computes features for the observed images, and a different stream computes features for the product images. We train the network by feeding it a balanced 1:1 ratio of matching and non-matching image pairs (one observed image and one product image) from the set of known objects, and backpropagate gradients from the distance ratio loss (triplet loss (Hoffer et al., 2016)). This effectively optimizes the network in a way that minimizes the

Figure 6. Recognition framework for novel objects. We train a two-stream convolutional neural network where one stream computes 2,048-dimensional feature vectors for product images whereas the other stream computes 2,048-dimensional feature vectors for observed images, and optimize both streams so that features are more similar for images of the same object and dissimilar otherwise. During testing, product images of both known and novel objects are mapped onto a common feature space. We recognize observed images by mapping them to the same feature space and finding the nearest neighbor match.
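The test-time matching step can be illustrated with a small nearest-neighbor lookup. This sketch assumes Euclidean distance between feature vectors and uses toy 4-dimensional features in place of the 2,048-dimensional ones; the function name and the object labels are hypothetical.

```python
import numpy as np


def nearest_product(observed_feature, product_features, product_labels):
    """Return the label and distance of the closest product image feature."""
    distances = np.linalg.norm(product_features - observed_feature, axis=1)
    best = int(np.argmin(distances))
    return product_labels[best], float(distances[best])


# Toy 4-dimensional features stand in for the 2,048-dimensional ones.
product_features = np.array([[1.0, 0.0, 0.0, 0.0],
                             [0.0, 1.0, 0.0, 0.0],
                             [0.0, 0.0, 1.0, 0.0]])
product_labels = ["towel", "mug", "brush"]
label, distance = nearest_product(np.array([0.1, 0.9, 0.0, 0.0]),
                                  product_features, product_labels)
```

Adding a novel object then amounts to appending the features of its product images to `product_features`, with no change to the network.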

5.1.1. Avoiding metric collapse by guided feature embeddings

One issue commonly encountered in metric learning occurs when the number of training object categories is small: the network can easily overfit its feature space to capture only the small set of training categories, making generalization to novel object categories difficult. We refer to this problem as metric collapse. To avoid this issue, we use a model pre-trained on ImageNet (Deng et al., 2009) for the product image stream and train only the stream that computes features for observed images. ImageNet contains a large collection of images from many categories, and models pre-trained on it have been shown to produce relatively comprehensive and homogeneous feature embeddings for transfer tasks (Huh et al., 2016), i.e., providing discriminating features for images of a wide range of objects. Our training procedure trains the observed image stream to produce features similar to the ImageNet features of product images, i.e., it learns a mapping from observed images to ImageNet features. Those features are then suitable for direct comparison to features of product images, even for novel objects not encountered during training.

5.1.2. Using multiple product images

For many applications, there can be multiple product images per object. However, with multiple product images, supervision of the two-stream network can become confusing: on which pair of matching observed and product images should the backpropagated gradients be based? For example, matching an observed image of the front face of the object against a product image of the back face of the object can easily confuse network gradients. To solve this problem during training, we add a module called “multi-anchor switch” in the network. Given an observed image, this module automatically chooses which “anchor” product image to compare against (i.e., to compute loss and gradients for) based on

5.2. Two-stage framework for a mixture of known and novel objects

In settings where both types of objects are present, we find that training two different network models to handle known and novel objects separately can yield higher overall matching accuracies. One is trained to be good at “over-fitting” to the known objects (K-net) and the other is trained to be better at “generalizing” to novel objects (N-net).

Yet, how do we know which network to use for a given image? To address this issue, we execute our recognition pipeline in two stages: a “recollection” stage that determines whether the observed object is known or novel, and a “hypothesis” stage that uses the appropriate network model based on the first stage’s output to perform image matching.

First, the recollection stage infers whether the observed image at test time is that of a known object that appeared during training. Intuitively, an observed image is of a novel object if and only if its deep features do not match those of any image of a known object. We explicitly model this conditional by thresholding on the nearest-neighbor distance to product image features of known objects. In other words, if the

In the hypothesis stage, we perform object recognition based on one of two network models: K-net for known objects and N-net for novel objects. The K-net and N-net share the same network architecture. However, during training the K-net has an “auxiliary classification” loss for the known objects. This loss is implemented by feeding the K-net features into three fully connected layers, followed by an

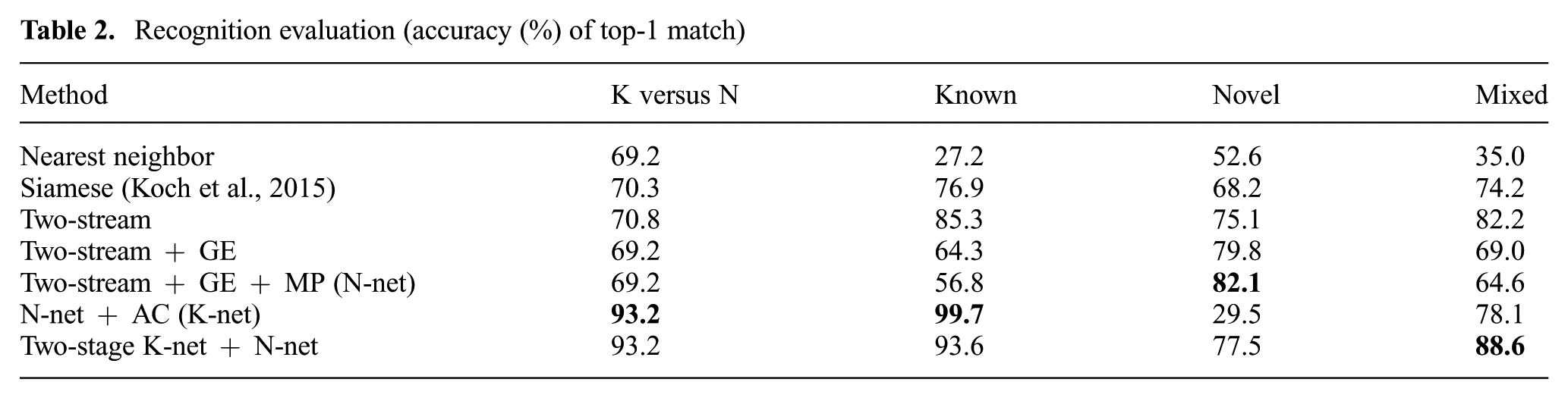

By adding the recollection stage, we can exploit both the high accuracy of K-net on known objects and the good accuracy of N-net on novel objects, at the cost of some accuracy lost to erroneous known versus novel classification. We find that this two-stage system overall provides higher total matching accuracy for recognizing both known and novel objects (mixed) than all other baselines (Table 2).
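The two-stage logic can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`nearest`, `recognize`), the use of Euclidean distance, the choice of which feature space feeds the recollection stage, the candidate gallery passed to the hypothesis stage, and the threshold value are all hypothetical. In the real system the features would come from the trained K-net and N-net.

```python
import math

def nearest(query, gallery):
    """Return (label, distance) of the gallery feature closest to `query`."""
    best_label, best_dist = None, math.inf
    for label, feat in gallery.items():
        d = math.dist(query, feat)  # Euclidean distance (assumed metric)
        if d < best_dist:
            best_label, best_dist = label, d
    return best_label, best_dist

def recognize(obs_k, obs_n, known_products_k, candidate_products_n, threshold):
    """Two-stage matching sketch.

    obs_k, obs_n: features of the observed image in the K-net / N-net spaces.
    known_products_k: {label: feature} for product images of known objects.
    candidate_products_n: {label: feature} gallery used when the object is
        judged novel (which objects belong here is an assumption of the caller).
    threshold: hypothetical distance cut-off for the recollection stage.
    """
    # Recollection stage: is the nearest known product image close enough?
    label, dist = nearest(obs_k, known_products_k)
    if dist <= threshold:
        return label, "known"      # hypothesis stage with the K-net match
    # Hypothesis stage for novel objects: match in the N-net feature space.
    label, _ = nearest(obs_n, candidate_products_n)
    return label, "novel"
```

For instance, `recognize((0.1, 0.0), (9.0, 9.0), {"cup": (0.0, 0.0)}, {"sponge": (0.0, 1.0)}, 0.5)` takes the known branch because the observed feature lies within the threshold of a known product feature.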

Recognition evaluation (accuracy (%) of top-1 match)

6. Experiments

In this section, we evaluate our affordance-based grasping framework, our recognition algorithm over both known and novel objects, as well as our full system in the context of the 2017 ARC.

6.1. Evaluating multi-affordance grasping

6.1.1. Datasets

To generate datasets for learning affordance-based grasping, we designed a simple labeling interface that prompts users to manually annotate good and bad suction and grasp proposals over RGB-D images collected from the real system. For suction, users who have had experience working with our suction gripper are asked to annotate pixels of suctionable and non-suctionable areas on raw RGB-D images overlooking cluttered bins full of various objects. Similarly, users with experience using our parallel-jaw gripper are asked to sparsely annotate positive and negative grasps over re-projected heightmaps of cluttered bins, where each grasp is represented by a pixel on the heightmap and an angle corresponding to the orientation (parallel-jaw motion) of the gripper. On the interface, users directly paint labels on the images with wide-area circular (suction) or rectangular (grasping) brushstrokes. The diameter and angle of the strokes can be adjusted with hotkeys. The strokes are colored green for positive labels and red for negative labels. Examples of images and labels from this dataset can be found in Figure 7. During training, we further augment each grasp label by adding labels via small jittering (less than 1.6 cm). In total, the grasping dataset contains 1,837 RGB-D images with pixel-wise suction and grasp labels. We use a 4:1 training/testing split of these images to train and evaluate different grasping models.

Images and annotations from the grasping dataset with labels for suction (top two rows) and parallel-jaw grasping (bottom two rows). Positive labels appear in green while negative labels appear in red.

Although this grasping dataset is small for training a deep network from scratch, we find that it is sufficient for fine-tuning our architecture with ResNets pre-trained on ImageNet. An alternative method would be to generate a large dataset of annotations using synthetic data and simulation, as in Mahler et al. (2017). However, then we would have to bridge the domain gap between synthetic and real 3D data, which is difficult for arbitrary real-world objects (see further discussion on this point in the comparison with Dex-Net in Table 3). Manual annotations make it easier to embed in the dataset information about material properties that are difficult to capture in simulation (e.g., porous objects are non-suctionable, heavy objects are easier to grasp than to suction).

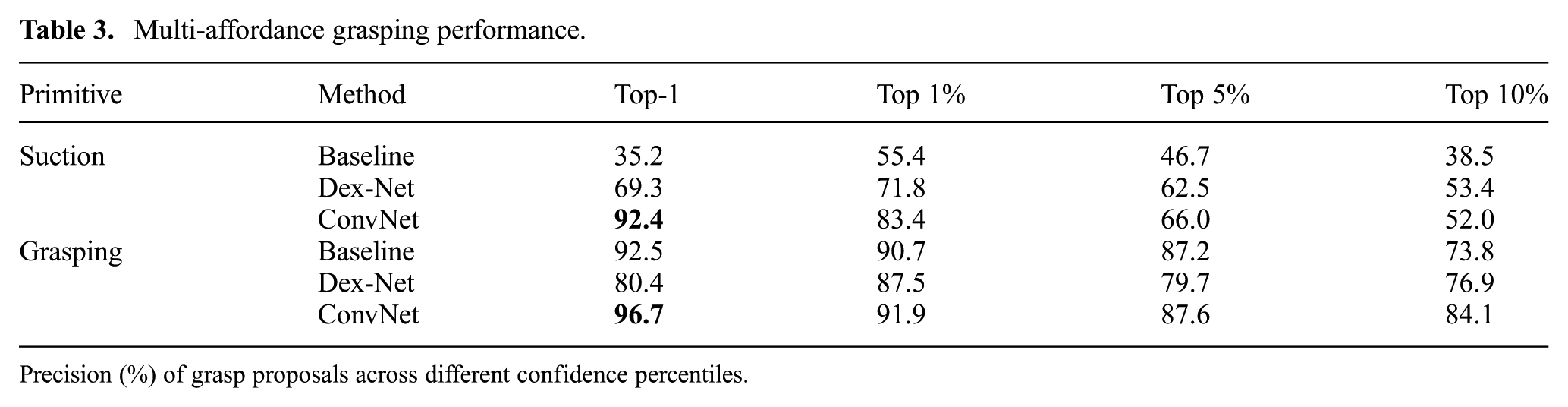

Multi-affordance grasping performance.

Precision (%) of grasp proposals across different confidence percentiles.

6.1.2. Evaluation

In the context of our grasping framework, a method is robust if it is able to consistently find at least one suction or grasp proposal that works. To reflect this, our evaluation metric is the precision of inferred proposals versus manual annotations. For suction, a proposal is considered a true positive if its pixel center is manually labeled as a suctionable area (false positive if manually labeled as a non-suctionable area). For grasping, a proposal is considered a true positive if its pixel center is within 4 pixels and 11.25° of a positive grasp label (false positive if near a negative grasp label). We report the precision of our inferred proposals for different confidence percentiles across the testing split of our grasping dataset in Table 3. We compare our method with a heuristic baseline algorithm as well as with the state-of-the-art grasping algorithm Dex-Net (Mahler et al., 2017, 2018) versions 2.0 (parallel-jaw grasping) and 3.0 (suction) for which code is available. We use Dex-Net weights pre-trained on their original simulation-based dataset. As reported in Mahler et al. (2017, 2018, 2019), fine-tuning Dex-Net on real data does not lead to substantial increases in performance.
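The grasp precision metric can be sketched in a few lines. The 4-pixel and 11.25° tolerances come from the text; everything else is an assumption made for illustration: the function names, treating the tolerances as inclusive, treating gripper orientation as 180°-periodic (a symmetric parallel-jaw gripper), ignoring proposals that fall near no label at all (labels are sparse), and letting a positive label take precedence when a proposal is near both kinds.

```python
import math

PIXEL_TOL = 4.0    # pixels, from the text
ANGLE_TOL = 11.25  # degrees, from the text

def angle_diff(a, b):
    """Smallest difference between two gripper angles in degrees.
    Assumes orientations are 180-degree periodic (symmetric gripper)."""
    d = abs(a - b) % 180.0
    return min(d, 180.0 - d)

def matches(proposal, label):
    """True if a proposal (x, y, angle) lies within both tolerances of a label."""
    (px, py, pa), (lx, ly, la) = proposal, label
    close = math.hypot(px - lx, py - ly) <= PIXEL_TOL
    return close and angle_diff(pa, la) <= ANGLE_TOL

def grasp_precision(proposals, positives, negatives, top_fraction):
    """Precision over the most confident `top_fraction` of proposals.

    proposals: list of (confidence, (x, y, angle)).
    Returns None when no retained proposal is near any label.
    """
    ranked = sorted(proposals, key=lambda p: p[0], reverse=True)
    keep = max(1, math.ceil(len(ranked) * top_fraction))
    tp = fp = 0
    for _, prop in ranked[:keep]:
        if any(matches(prop, lab) for lab in positives):
            tp += 1
        elif any(matches(prop, lab) for lab in negatives):
            fp += 1
    return tp / (tp + fp) if tp + fp else None
```

Calling `grasp_precision(proposals, positives, negatives, 0.01)` would correspond to a "top 1%" column, while a very small fraction reduces to the top-1 proposal because at least one proposal is always kept.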

The heuristic baseline algorithm computes suction affordances by estimating surface normal variance over the observed 3D point cloud (lower variance = higher affordance), and computes anti-podal grasps by detecting hill-like geometric structures in the 3D point cloud with shape analysis. Baseline details and code are available on our project webpage (http://arc.cs.princeton.edu). The heuristic algorithm for parallel-jaw grasping was highly fine-tuned to the competition scenario, making it quite competitive with our trained grasping ResNets. We did not compare with the other network architectures for parallel-jaw grasping described in Section 4 because those models could not completely converge during training.
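The idea behind the suction heuristic (lower normal variance means higher affordance) can be illustrated with a simplified sketch. This is not the baseline code from the project webpage. It assumes a heightmap stored as a 2-D list rather than a 3D point cloud, estimates normals with finite differences, uses a hypothetical square window of radius 1, and maps variance to affordance as `1 - variance`, which stays in [0, 1] because the total variance of unit vectors is at most 1.

```python
import math

def normals_from_heightmap(h):
    """Per-pixel unit surface normals from finite differences of heights."""
    rows, cols = len(h), len(h[0])
    out = [[None] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            r0, r1 = max(r - 1, 0), min(r + 1, rows - 1)
            c0, c1 = max(c - 1, 0), min(c + 1, cols - 1)
            dzdy = (h[r1][c] - h[r0][c]) / max(r1 - r0, 1)
            dzdx = (h[r][c1] - h[r][c0]) / max(c1 - c0, 1)
            n = (-dzdx, -dzdy, 1.0)
            length = math.sqrt(sum(v * v for v in n))
            out[r][c] = tuple(v / length for v in n)
    return out

def suction_affordance(h, radius=1):
    """Affordance map: 1 minus the local variance of surface normals."""
    normals = normals_from_heightmap(h)
    rows, cols = len(h), len(h[0])
    aff = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = [normals[i][j]
                      for i in range(max(r - radius, 0), min(r + radius + 1, rows))
                      for j in range(max(c - radius, 0), min(c + radius + 1, cols))]
            mean = [sum(n[k] for n in window) / len(window) for k in range(3)]
            var = sum((n[k] - mean[k]) ** 2
                      for n in window for k in range(3)) / len(window)
            aff[r][c] = 1.0 - var  # flat neighborhoods score close to 1
    return aff
```

On a heightmap with a step edge, pixels far from the edge score 1 while pixels next to the edge score lower, which matches the intuition that a suction cup seals best on locally flat surfaces.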

The top-1 proposal from the baseline algorithm performs quite well for parallel-jaw grasping, but performs poorly for suction. This suggests that relying on simple geometric cues from the 3D surfaces of objects can be quite effective for grasping, but less so for suction. This is likely because successful suction picking not only depends on finding smooth surfaces, but also depends strongly on the mass distribution and porousness of objects, both of which are less apparent from local geometry alone. Suctioning close to the edge of a large and heavy object may cause the object to twist off due to external wrench from gravity, while suctioning a porous object may prevent a strong suction contact seal.

Dex-Net also performs competitively on our benchmark with strong suction and grasp proposals across top 1% confidence thresholds, but with more false positives across top-1 proposals. By visualizing Dex-Net top-1 failure cases in Figure 8, we can observe several interesting failure modes that do not occur as frequently with our method. For suction, there are two common types of failures. The first involves false-positive suction predictions on heavy objects. For example, as shown in the top-left image of Figure 8, the heavy (2 kg) bag of Epsom salt can only be successfully suctioned near its center of mass (i.e., near the green circle), which is located towards the bottom of the bag. Dex-Net is, as expected, unaware of this, and often makes predictions on the bag but farther from the center of mass (e.g., the red circle shows Dex-Net’s top-1 prediction). The second type of failure mode involves false-positive predictions on unsuctionable objects with mesh-like porous containers. For example, in the bottom-left image of Figure 8, Dex-Net makes suction predictions (e.g., red circle) on a mesh bag of marbles; however, the only region of the object that is suctionable is its product tag (e.g., green circle).

Common Dex-Net failure modes for suction (left column) and parallel-jaw grasping (right column). Dex-Net’s top-1 predictions are labeled in red, whereas our method’s top-1 predictions are labeled in green. Our method is more likely to predict grasps near objects’ center of mass (e.g., bag of salt (top left) and water bottle (top right)), more likely to avoid unsuctionable areas such as porous surfaces (e.g., mesh bag of marbles (bottom left)), and less susceptible to noisy depth data (bottom right).

For parallel-jaw grasping, Dex-Net most commonly experiences two other types of failure modes. The first is that it frequently predicts false-positive grasps on the edges of long heavy objects: regions where the object would slip due to external wrench from gravity. This is because Dex-Net assumes objects to be lightweight to conform to the payload (

Overall, these observations show that Dex-Net is a competitive grasping algorithm trained from simulation, but falls short in our application setup owing to the domain gap between synthetic and real data. Specifically, the discrepancy between the ≥90% grasping success achieved by Dex-Net in their reported experiments (Mahler et al., 2017, 2018, 2019) versus the 80% on our dataset is likely due to two reasons: our dataset consists of (1) a larger spectrum of objects, e.g., heavier than 0.25 kg; and (2) noisier RGB-D data, i.e., less similar to simulated data, from substantially more cost-effective commodity 3D sensors.

6.1.3. Speed

Our suction and grasp affordance algorithms were designed to achieve fast run-time speeds during test time by densely inferring affordances over images of the entire scene. Table 1 compares our run-time speeds with several state-of-the-art alternatives for grasp planning. Our numbers measure the time of each FCN forward pass, reported with an NVIDIA Titan X on an Intel Core i7-3770K clocked at 3.5 GHz, excluding time for image capture and other system-related overhead. Our FCNs run at a fraction of the time required by most other methods, while also being significantly deeper (with 101 layers) than all other deep learning methods.

6.2. Evaluating novel object recognition

We evaluate our recognition algorithms using a 1 versus 20 classification benchmark. Each test sample in the benchmark contains 20 possible object classes, where 10 are known and 10 are novel, chosen at random. During each test sample, we feed to the recognition algorithm the product images for all 20 objects as well as an observed image of a grasped object. In Table 2, we measure performance in terms of average percentage accuracy of the top-1 nearest-neighbor product image match of the grasped object. We evaluate our method against a baseline algorithm, a state-of-the-art network architecture for both visual search (Bell and Bala, 2015) and one-shot learning without retraining (Koch et al., 2015), and several variations of our method. The latter provides an ablation study to show the improvements in performance with every added component.

Nearest neighbor. This is a baseline algorithm where we compute features of product images and observed images using a ResNet-50 pre-trained on ImageNet, and use nearest-neighbor matching with

Siamese network with weight sharing. This is a re-implementation of the work of Bell and Bala (2015) for visual search and Koch et al. (2015) for one-shot recognition without re-training. We use a Siamese ResNet-50 pre-trained on ImageNet and optimized over training pairs in a Siamese fashion. The main difference between this method and ours is that the weights between the networks computing deep features for product images and observed images are shared.

Two-stream network without weight sharing. This is a two-stream network, where the networks’ weights for product images and observed images are not shared. Without weight sharing the network has more flexibility to learn the mapping function and, thus, achieves higher matching accuracy. All the models described later in this section use this two-stream network without weight sharing.

Two-stream + guided-embedding (GE). This includes a guided feature embedding with ImageNet features for the product image stream. We find this model has better performance for novel objects than for known objects.

Two-stream + guided-embedding (GE) + multi-product-images (MP). By adding a multi-anchor switch, we see more improvements to accuracy for novel objects. This is the final network architecture for N-net.

Two-stream + guided-embedding (GE) + multi-product-images (MP) + auxiliary classification (AC). By adding an auxiliary classification, we achieve near-perfect accuracy on known objects for later models, at the cost of lower accuracy on novel objects. This also improves known versus novel (K versus N) classification accuracy for the recollection stage. This is the final network architecture for K-net.

Two-stage system. As described in Section 5, we combine the two different models, one that is good at known objects (K-net) and the other that is good at novel objects (N-net), in the two-stage system. This is our final recognition algorithm, and it achieves better performance than any single model for test cases with a mixture of known and novel objects.
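The 1 versus 20 benchmark described at the start of this section can be sketched as follows. This is an illustrative harness only: the function names, the Euclidean metric, and the data layout are assumptions, and the features are placeholders for whatever each evaluated model produces.

```python
import math
import random

def sample_candidates(known, novel, rng, per_group=10):
    """Draw 10 known and 10 novel object classes at random for one test sample."""
    return rng.sample(known, per_group) + rng.sample(novel, per_group)

def top1_match(observed_feat, product_feats):
    """Label of the nearest product image.

    product_feats: {label: [feature, ...]}, allowing several product
    images per object.
    """
    return min(
        (math.dist(observed_feat, feat), label)
        for label, feats in product_feats.items()
        for feat in feats
    )[1]

def top1_accuracy(samples):
    """Average top-1 accuracy (%) over (observed_feat, true_label, product_feats)."""
    correct = sum(top1_match(obs, gallery) == truth
                  for obs, truth, gallery in samples)
    return 100.0 * correct / len(samples)
```

Splitting `samples` by whether the true label is known or novel would give the separate known, novel, and mixed figures that a table such as Table 2 reports.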

6.3. Full system evaluation in the ARC

To evaluate the performance of our system as a whole, we used it as part of our MIT–Princeton entry for the 2017 ARC, where state-of-the-art pick-and-place solutions competed in the context of a warehouse automation task. Participants were tasked with designing a fully autonomous robot system to grasp and recognize a large variety of different objects from unstructured bins. The objects were characterized by a number of difficult-to-handle properties. Unlike earlier versions of the competition (Correll et al., 2016), half of the objects were novel to the robot in the 2017 edition by the time of the competition. The physical objects, as well as related item data (i.e., product images, weight, 3D scans), were given to teams just 30 minutes before the competition. While other teams used the 30 minutes to collect training data for the new objects and re-train models, our system did not require any data collection or re-training during those 30 minutes.

6.3.1. Setup

Our system setup for the competition features several differences. We incorporated weight sensors into our system, using them as a guard to signal stop for grasping primitive behaviors during execution. We also used the measured weights of objects provided by Amazon to boost recognition accuracy to near-perfect performance as well as to prevent double-picking. Green screens made the background more uniform to further boost accuracy of the system in the recognition phase. For inferring affordances, Table 3 shows that our data-driven methods with ConvNets provide more precise affordances for both suction and grasping than the baseline algorithms. For the case of parallel-jaw grasping, however, we did not have time to develop a fully stable network architecture before the day of the competition, so we decided to avoid risks and use the baseline grasping algorithm. The ConvNet-based approach became stable with the reduction to inferring only horizontal grasps and rotating the input heightmaps.

6.3.2. State tracking and estimation

We also designed a state tracking and estimation algorithm for the full system in order to perform competitively in the picking task of the ARC, where the goal is to pick target objects out of a storage system (e.g., shelves, separate work cells) and place them into specific boxes for order fulfillment.

The goal of our state tracking algorithm is to track all the objects’ identities, 6D poses, amodal bounding boxes, and support relationships in each bin (

add (

remove (

move (

touch (

When

For the

The

The

Combined with our affordance prediction algorithm described in Section 4, we are able to label each grasping or suction proposal with corresponding object identities using their tracked 6D poses from the state tracker. The task planner can then prioritize certain grasp proposals (close to, or above target objects) with heuristics based on this information.
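A minimal sketch of this labeling and prioritization step is given below. It is heavily simplified and hypothetical: tracked objects are reduced to axis-aligned footprints with a top height instead of 6D poses, a proposal is assigned to the highest object whose footprint contains it, and the priority heuristic is a fixed bonus for proposals on target objects. The names `label_proposal`, `prioritize`, and `bonus` are not from the original system.

```python
def label_proposal(x, y, tracked):
    """Name of the highest tracked object whose footprint contains (x, y).

    tracked: {name: (xmin, ymin, xmax, ymax, top_height)}.
    Returns None if the point lies on no tracked object.
    """
    hits = [(top, name)
            for name, (x0, y0, x1, y1, top) in tracked.items()
            if x0 <= x <= x1 and y0 <= y <= y1]
    return max(hits)[1] if hits else None

def prioritize(proposals, tracked, targets, bonus=0.5):
    """Rank proposals, favoring those that land on target objects.

    proposals: list of (x, y, affordance).
    Returns a list of (priority, label, proposal), best first.
    """
    ranked = []
    for x, y, score in proposals:
        label = label_proposal(x, y, tracked)
        priority = score + (bonus if label in targets else 0.0)
        ranked.append((priority, label, (x, y, score)))
    ranked.sort(key=lambda item: item[0], reverse=True)
    return ranked
```

With a cup resting on a book, a proposal inside both footprints is attributed to the cup because it is on top, and it moves to the front of the ranking when the cup is a target even if its raw affordance is lower.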

6.3.3. Results

During the 2017 ARC final stowing task, we achieved 58.3% pick success with suction, 75% pick success with grasping, and 100% recognition accuracy, stowing all 20 objects within 24 suction attempts and 8 grasp attempts. Our system took first place in the stowing task, being the only system to have successfully stowed all known and novel objects and to have finished the task well within the allotted time frame.
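As a quick consistency check on these figures, the success rates and attempt counts can be related by simple arithmetic. The sketch assumes each rate is successes divided by attempts for that primitive; the implied success counts (14 and 6) are inferred here and are not stated in the text.

```python
def implied_successes(rate_percent, attempts):
    """Number of successful picks implied by a success rate and attempt count."""
    return round(rate_percent / 100.0 * attempts)

suction_successes = implied_successes(58.3, 24)  # 58.3% of 24 attempts
grasp_successes = implied_successes(75.0, 8)     # 75% of 8 attempts
total_picked = suction_successes + grasp_successes
```

Under that assumption the two primitives together account for 20 successful picks, which agrees with the 20 stowed objects.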

Overall, the pick success rates of all teams in the ARC (62% on average reported by Morrison et al. (2018)) are generally lower than those reported in related work for grasping. We attribute this mostly to the fact that the competition uses bins full of objects that contain significantly more clutter and variety than the scenarios presented in more controlled experiments in prior work. Among the competing teams, we successfully picked the most objects in the stow and final tasks, and our average picking speed was the highest (Morrison et al., 2018).

6.3.4. Postmortem

Our system did not perform as well during the finals task of the ARC owing to a lack of sufficient failure recovery. On the systems side, the perception node that fetches data from all RGB-D cameras lost connection to one of our RGB-D cameras for recognition and stalled during the middle of our stowing run for the ARC finals, which forced us to call for a hard reset during the competition. The perception node would have benefited from being able to restart and recover from disconnections. On the algorithms side, our state tracking system is particularly sensitive to drastic changes in the state (i.e., when multiple objects switch locations), which causes it to lose track without recovery. In hindsight, the tracking would have benefited from some form of simultaneous object segmentation in the bin that works for novel objects and is robust to clutter. Adopting the pixel-wise deep metric learning method of the ACRV team described by Milan et al. (2018) would be worth exploring as part of future work.

7. Discussion and future work

Interest in robust and versatile robotic pick-and-place is almost as old as robotics. Robot grasping and object recognition have been two of the main drivers of robotic research. Yet, the reality in industry is that most automated picking systems are restricted to known objects, in controlled configurations, with specialized hardware.

We present a system to pick and recognize novel objects with very limited prior information about them (a handful of product images). The system first uses an object-agnostic affordance-based algorithm to plan grasps out of four different grasping primitive actions, and then recognizes grasped objects by matching them to their product images. We evaluate both components and demonstrate their combination in a robot system that picks and recognizes novel objects in heavy clutter, and that took first place in the stowing task of the 2017 ARC. Here we present some of the most salient features and limitations of the system.

7.1. Object-agnostic manipulation

The system finds grasp affordances directly in the RGB-D image. This proved faster and more reliable than doing object segmentation and state estimation prior to grasp planning (Zeng et al., 2017). The ConvNet learns the visual features that make a region of an image graspable or suctionable. It also seems to learn more complex rules, e.g., that tags are often easier to suction than the object itself, or that the center of a long object is preferable to its ends. It would be interesting to explore the limits of the approach, for example by learning affordances for more complex behaviors (e.g., scooping an object against a wall) that require a more global understanding of the geometry of the environment.

7.2. Pick first, ask questions later

The standard grasping pipeline is to first recognize and then plan a grasp. In this paper, we demonstrate that it is possible and sometimes beneficial to reverse the order. Our system leverages object-agnostic picking to remove the need for state estimation in clutter. Isolating the picked object drastically increases object recognition reliability, especially for novel objects. We conjecture that “pick first, ask questions later” is a good approach for applications such as bin-picking, emptying a bag of groceries, or clearing debris. It is, however, not suited to all applications, namely those in which we need to pick a particular object. In that case, the described system needs to be augmented with state tracking/estimation algorithms that are robust to clutter and can handle novel objects.

7.3. Towards scalable solutions

Our system is designed to pick and recognize novel objects without extra data collection or re-training. This is a step forward towards robotic solutions that scale to the challenges of service robots and warehouse automation, where the daily number of novel objects ranges from the tens to the thousands, making data-collection and re-training cumbersome in one case and impossible in the other. It is interesting to consider what data, in addition to product images, is available that could be used for recognition using out-of-the-box algorithms such as ours.

7.4. Limited to accessible grasps

The system we present in this work is limited to picking objects that can be directly perceived and grasped by one of the primitive picking motions. Real scenarios, especially when targeting the grasp of a particular object, often require plans that deliberately sequence different primitive motions, for example, when removing an object to pick the one below, or when separating two objects before grasping one. This points to a more complex picking policy with a planning horizon that includes preparatory primitive motions such as pushing, whose value is difficult to reward/label in a supervised fashion. Reinforcement learning of policies that sequence primitive picking motions is a promising alternative approach that we have started to explore in Zeng et al. (2018a).

7.5. Open-loop versus closed-loop grasping

Most existing grasping approaches, whether model-based or data-driven, are for the most part based on open-loop executions of planned grasps. Our system is no different. The robot decides what to do and executes it almost blindly, except for simple feedback to enable guarded moves such as move until contact. Indeed, the most common failure modes are when small errors in the estimated affordances lead to fingers landing on top of an object rather than on the sides, or lead to a deficient suction latch, or lead to a grasp that is only marginally stable and likely to fail when the robot lifts the object. It is unlikely that the picking error rate can be trimmed to industrial grade without the use of explicit feedback for closed-loop grasping during the approach–grasp–retrieve operation. Understanding how to make effective use of tactile feedback is a promising direction that we have started to explore (Donlon et al., 2018; Hogan et al., 2018).

Acknowledgements

The authors would like to thank the MIT–Princeton ARC team members for their contributions to this project and ABB Robotics, Mathworks, Intel, Google, NVIDIA, and Facebook for hardware and technical support. This paper is a revision of a paper appearing in the proceedings of the 2018 International Conference on Robotics and Automation (Zeng et al., 2018b).

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the NSF (grant numbers IIS-1251217 and VEC 1539014/1539099).