Abstract

The integration of generative artificial intelligence (AI) tools is a paradigm shift in enhanced learning methodologies and assessment techniques. This study explores the adoption of generative AI tools in higher education assessments by examining the perceptions of 353 students through a survey and 17 in-depth interviews. Anchored in the Unified Theory of Acceptance and Use of Technology (UTAUT), this study investigates the roles of perceived risk and tech-savviness in the use of AI tools. Perceived risk emerged as a significant deterrent, while trust and tech-savviness were pivotal in shaping student engagement with AI. Tech-savviness not only influenced adoption but also moderated the effect of performance expectancy on AI use. These insights extend UTAUT’s application, highlighting the importance of considering perceived risks and individual proficiency with technology. The findings suggest educators and policymakers need to tailor AI integration strategies to accommodate students’ personal characteristics and diverse needs, harnessing generative AI’s opportunities and mitigating its challenges.

In the dynamic landscape of marketing education, the integration of generative artificial intelligence (AI), such as Dall-E, Midjourney, Microsoft Copilot, and ChatGPT, signifies a transformative shift in innovative learning and assessment methods (Guha et al., 2024; Michel-Villarreal et al., 2023). This emergent technology, underpinned by large language models (LLMs), promises to revolutionize education through enhanced content creation and by fostering critical analysis (McAlister et al., 2023). Educators, therefore, are increasingly being encouraged to integrate generative AI into their curricula (Guha et al., 2024), given its potential to personalize learning and improve learning engagement and outcomes (Mogavi et al., 2024). Despite widespread acknowledgment of its potential, the factors driving whether and how higher education students use generative AI in coursework remain underexplored (Strzelecki & ElArabawy, 2024). This raises concerns about its implications for academic integrity and students’ ability to harness its full potential (Cotton et al., 2023; Perkins, 2023).

The burgeoning interest in generative AI within higher education necessitates a deeper understanding of the technology’s adoption and use. The integration of generative AI is closely linked with technology acceptance (Baytak, 2023; Yilmaz et al., 2023). While the Unified Theory of Acceptance and Use of Technology (UTAUT) model has provided initial insights into a tapestry of factors that contribute to student acceptance of ChatGPT (Gulati et al., 2024; Masa’deh et al., 2024; Strzelecki, 2023; Strzelecki & ElArabawy, 2024), the role of perceived risk remains ambiguous and the moderating effects of individual differences, such as tech-savviness, on adoption behaviors need further investigation. For instance, Gulati et al. (2024) investigated perceived risk but reported no significant deterrent effect on students’ intention to use technologies like ChatGPT. This finding is intriguing, given the practical concerns that emerge as students transition from intention to actual use, when the anticipation of risks (including data privacy breaches, misinformation, and potential academic dishonesty) becomes more pronounced and tangibly influences behavior (Alshahrani, 2023). These discrepancies between intention and action underscore the crucial need for a deeper exploration into how perceived risk affects not just intent but actual engagement with generative AI tools in education. The literature has yet to adequately address how individual differences, such as age and tech-savviness, increase or attenuate students’ intention to use generative AI and their actual use of AI (Pinto dos Santos et al., 2019). The possibility of a moderating relationship points to factors like tech-savviness not merely as a skill set but as a lens through which adoption and use patterns can be considered. Given these gaps and considerations, students’ adoption and use of AI offers fertile ground for further study.

This study seeks to fill these gaps, proposing a nuanced examination of how perceived risk and the moderating influence of tech-savviness shape student engagement with generative AI. The resulting insights may guide educators and policymakers in optimizing AI integration within pedagogical frameworks (Peres et al., 2023). This study is guided by the following research questions:

This study contributes to our understanding of students’ adoption and use of generative AI in four ways. First, to the best of our knowledge, this study is a pioneering endeavor to use the UTAUT model to examine student use of generative AI tools more broadly than ChatGPT. While ChatGPT is among the most popular text-based generative AI tools used in higher education (at the time of writing; Ansari et al., 2023; Guha et al., 2024), other generative AI tools, including image generators, such as Midjourney, Gemini, Copilot, Stable Diffusion, Dall-E, Adobe Firefly, and Altist are also popular (McAlister et al., 2023). Second, this study considers the unique effect of perceived risk, given that use of a wider range of generative AI tools (as opposed to text-only generation) may change the nature and perception of risk among students. Third, this study contributes to our understanding of student adoption of generative AI by examining the moderating influence of individual characteristics on both their intention to use generative AI and actual use, addressing calls for research to investigate the influence of alternative factors and heterogeneity among students (Gulati et al., 2024; Strzelecki & ElArabawy, 2024). Fourth, this study provides guidance for university educators and AI developers regarding the essential elements for encouraging appropriate use of generative AI tools for active learning among undergraduates.

Theoretical Framework and Hypothesis Development

Generative AI in Higher Education

Generative AI has emerged as a transformative force in higher education. It is an umbrella term to describe machine learning solutions trained to generate novel output such as text, images, or audio content based on user prompts, often by learning the structure and distribution of a given data set (Feuerriegel et al., 2024; Guha et al., 2024). With the capability to autonomously produce human-like text and images and engage in intellectual tasks, these AI systems have disrupted traditional approaches to pedagogy and learning methodologies in higher education (Dwivedi et al., 2023).

Research on generative AI’s application in this sector is nascent, marked by limited empirical study. Research has explored the determinants of AI adoption by educators, focusing on factors like perceived usefulness (Y. Wang et al., 2021) and instructor efficiency (Nair et al., 2023). It has assessed AI’s potential benefits and hurdles in educational contexts through a predominantly anecdotal and secondary analysis approach (Bearman et al., 2023; Chen et al., 2020; Rudolph et al., 2023; Yang & Evans, 2019). For instance, Yang and Evans (2019) emphasized an urgent need for clear policies, guidelines, and frameworks to integrate AI into higher education. They also highlighted the need for empirical research to understand user experiences and perception. Despite this call, empirical research addressing the adoption of generative AI from the perspective of the learner is scarce.

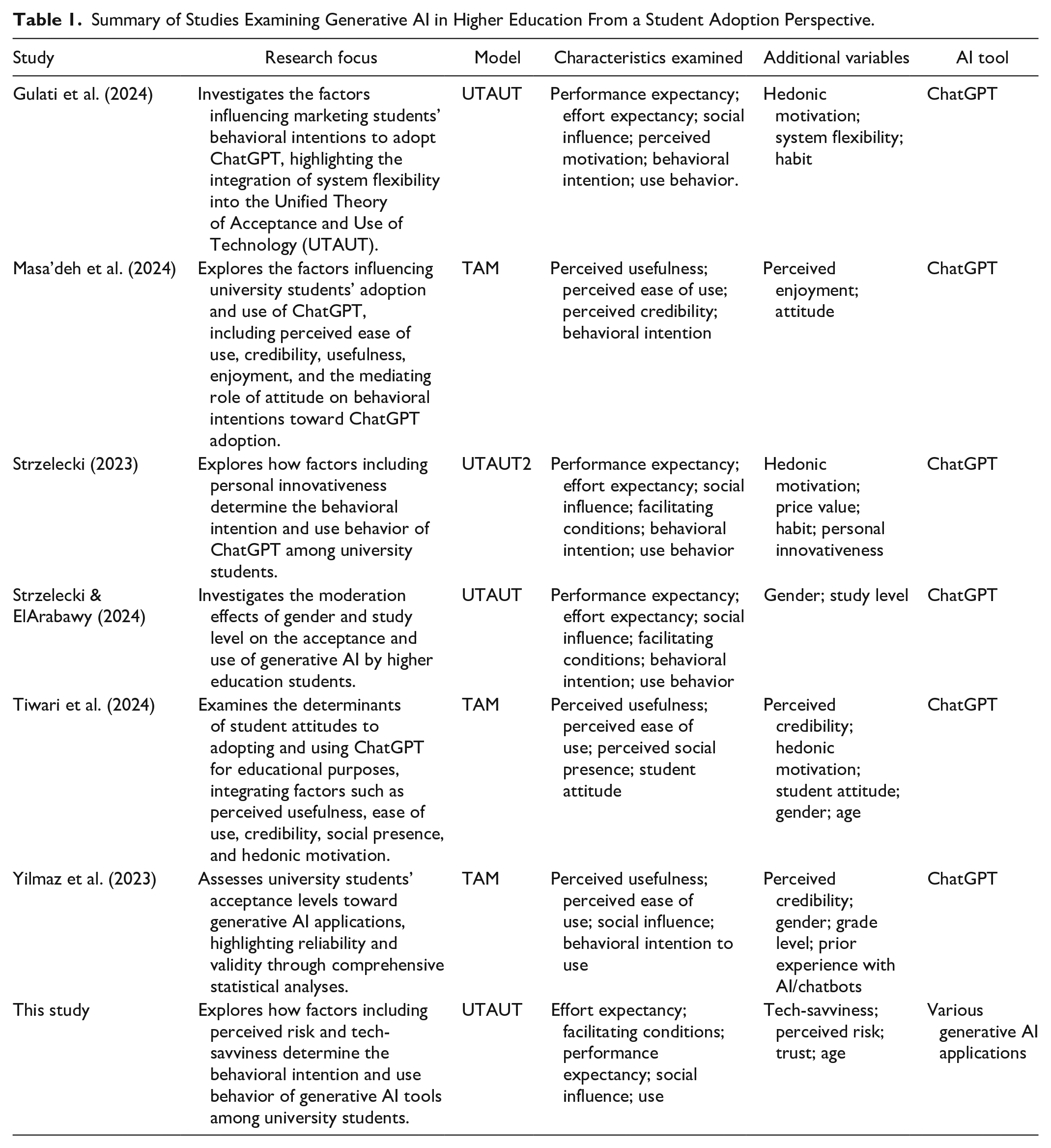

What we do know is that generative AI is opening a world of possibilities. We argue that it is not a question of whether generative AI will be influential; it already is. What remains to be discussed is how influential it will become. A first key step is a deeper understanding of learners’ readiness and willingness to use AI to support their learning. To date, only a handful of studies have empirically examined the factors driving use of generative AI among students (see Table 1 for a summary of key studies). These studies have considered performance expectancy (Gulati et al., 2024), perceived usefulness (Masa’deh et al., 2024), and social influence (Strzelecki, 2023; Strzelecki & ElArabawy, 2024). There is, so far, limited understanding of the role of risk in students’ willingness to use AI in the classroom. Emerging research suggests that students are less hesitant to embrace generative AI tools than educators due to risks such as loss of creativity and information integrity (Smolansky et al., 2023), but there is little empirical evidence to support this view. Existing studies that have examined what drives students to adopt AI have often failed to account for their skill level. These are crucial gaps in the literature and were the focus of our study.

Table 1. Summary of Studies Examining Generative AI in Higher Education From a Student Adoption Perspective.

We posited that students decided to embrace generative AI tools for learning or remain cautious in their use based largely on their subjective assessment of the risks involved. We also posited that students would differ in terms of their technical skill level, which could have a profound impact on their motivation to use generative AI and would consequently affect their use behavior. We aimed to advance the body of knowledge on this subject based on the extended UTAUT, incorporating risk perception, trust, and skill level in understanding student adoption of generative AI.

Unified Theory of Acceptance and Use of Technology

The UTAUT model is one of the most important theories for predicting and explaining technology acceptance. Like its predecessors, the theory of reasoned action (TRA; Ajzen & Fishbein, 1975), the technology acceptance model (TAM; Davis, 1989), and the theory of planned behavior (TPB; Ajzen, 1991), the model attempts to explain how individual differences influence technology use. Specifically, it posits that a user’s intentions and consequent use behavior can be explained by measuring the effect of four key independent constructs: performance expectancy, effort expectancy, social influence, and facilitating conditions (Venkatesh et al., 2003). We chose UTAUT as a theoretical foundation to develop the hypotheses because it has been empirically tested and proven superior to other competing models (Venkatesh et al., 2003; Venkatesh & Zhang, 2010). The model has been empirically examined in various contexts and has consistently shown that all four key constructs positively impact users’ intentions to use technology and their actual use behavior (Lin & Bhattacherjee, 2008; Zhu et al., 2020). Therefore, we expected that students’ perceptions of these constructs would influence their adoption of, and intention to use, generative AI in higher education.

Perceived Risk

The integration of generative AI tools in higher education is transforming the learning environment by providing new opportunities for creativity, personalized learning, and efficiency. However, the adoption of these tools is accompanied by significant challenges, particularly due to the concerns and negative perceptions of both educators and students. These perceptions, often framed as perceived risk, refer to the degree to which individuals sense potential threats or uncertainties associated with adopting new technology (Featherman & Pavlou, 2003). In the context of generative AI, these risks may include concerns about data privacy, the reliability of generated content, and the potential loss of jobs or roles due to automation (Ooi et al., 2023; Wach et al., 2023). Research has suggested that perceived risk negatively influences an individual’s intention to use technology (Marafon et al., 2018; Van et al., 2021). Kim et al. (2010) demonstrated that perceived risk significantly affected user attitudes to adopting new technologies.

Despite the importance of understanding perceived risk, empirical evidence focusing on this concept from the higher education student perspective is limited. Studies have suggested that concerns about privacy and data security are particularly pronounced when it comes to generative AI tools (Irfan et al., 2023; Salloum, 2024). For example, Huang (2023) highlighted that the collection and processing of personal data by AI systems could lead to apprehensions about potential privacy breaches and the misuse of academic work, which could deter students from fully engaging with AI tools. This tendency to prioritize personal information security over the perceived benefits of generative AI is a significant barrier to adoption. Concerns about AI bias, where algorithms may produce biased or misleading information, further compound students’ distrust (Omughelli et al., 2024). The opaque nature of AI algorithms exacerbates this issue, as students may struggle to understand how generative AI reaches its conclusions or generates content, leading to skepticism and reluctance to rely on these tools.

Another critical concern among students is the potential negative impact of AI on skill development. According to Habib et al. (2024), there is a growing hesitancy among students to become overly dependent on generative AI tools, fearing that such reliance could stifle their creativity and undermine their ability to develop independent-thinking and problem-solving skills. Students worry that using generative AI tools might hinder their capacity or willingness to think for themselves and reduce their motivation or ability to engage deeply with academic challenges, such as writing, research, and analytical thinking (Chan & Hu, 2023). This concern is especially relevant in disciplines where the cultivation of these skills is central to the educational experience.

Given these concerns, we posited that when students perceived a high risk of academic dishonesty or skill degradation associated with generative AI, they were likely to view these tools as less beneficial to their academic progress, thereby diminishing their intention to use them. Similarly, if privacy risks were perceived as significant, students would find generative AI tools less user-friendly, further reducing their likelihood of adoption.

Trust

Trust in this context represents an individual’s confidence and belief in the reliability, integrity, and benevolence of a technology or its provider. Trust has been identified as a key determinant of technology acceptance and use in various contexts (Alrawad et al., 2023; Bhattacherjee, 2002; Kesharwani & Singh Bisht, 2012). According to Venkatesh et al. (2003), trust is one of the key determinants influencing an individual’s behavioral intentions to adopt new technology. In the context of generative AI in higher education, student trust in generative AI tools and their developers can significantly influence their perceptions and ultimately their use behavior. Gefen et al. (2003) showed that trust positively affected intentions to use technology, suggesting that higher levels of perceived trust in generative AI systems would likely lead to greater acceptance and use among students in higher education settings. We posited that trust would positively impact students’ use of generative AI in higher education.

The Moderating Effect of Tech-Savviness

Tech-savviness, or digital literacy, reflects an individual’s proficiency and comfort level when using technology (Spica, 2022). Technology is now integrated into almost every field of life, and individuals can be categorized as having either basic or advanced technological knowledge. Research has suggested that an individual’s experience and skills with technology can affect technology adoption and use (Marangunić & Granić, 2015; Neumeyer et al., 2020; Yu et al., 2017). L.-H. Wang et al. (2022) studied consumer intentions to use robot hotel stays and observed that tech-savviness was a potential predictor of intention to use. The authors found that tech-savvy individuals with experience and familiarity with modern technology had different (and more positive) perceptions and responses to technology adoption than those less familiar or comfortable with modern technology. Similarly, Yu et al. (2017) examined users’ technology experience in connection with their adoption of information and communication technology (ICT). The authors found that information literacy and digital skills reduced a user’s “technostress,” which in turn influenced acceptance or adoption of ICT.

Based on the above reasoning, we posited that a student’s tech-savviness would influence their ability to navigate the benefits of generative AI tools in higher education. Specifically, we argued that students who were more tech-savvy would perceive generative AI as less effective when performance expectancy was high, given their awareness of the potential risks and limitations involved. However, they would find it easier to use, leading to lower levels of performance expectancy and effort expectancy. We expected, therefore, that tech-savvy students would demonstrate varying responsiveness to adopting and using generative AI tools in their academic pursuits based on their performance expectancy.

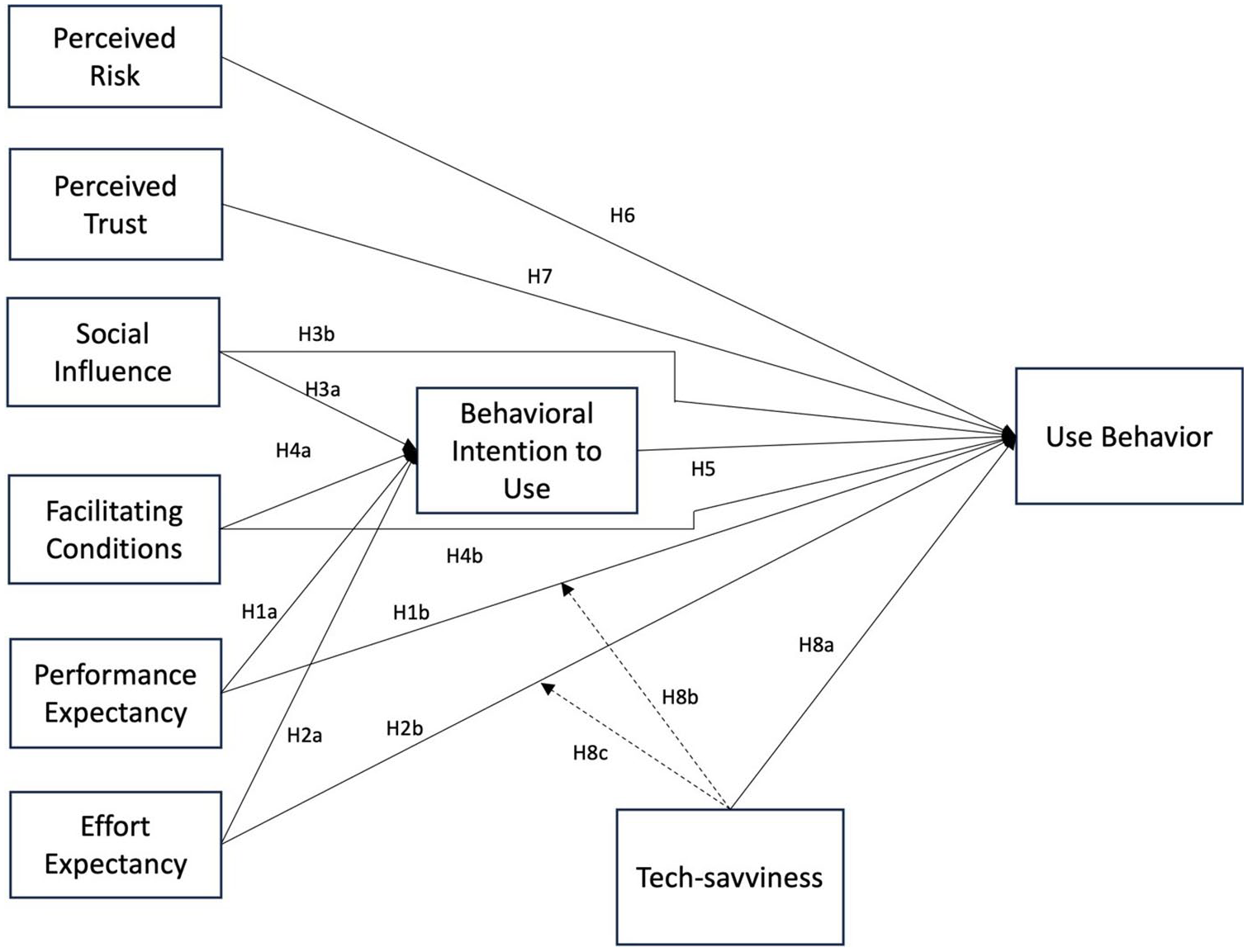

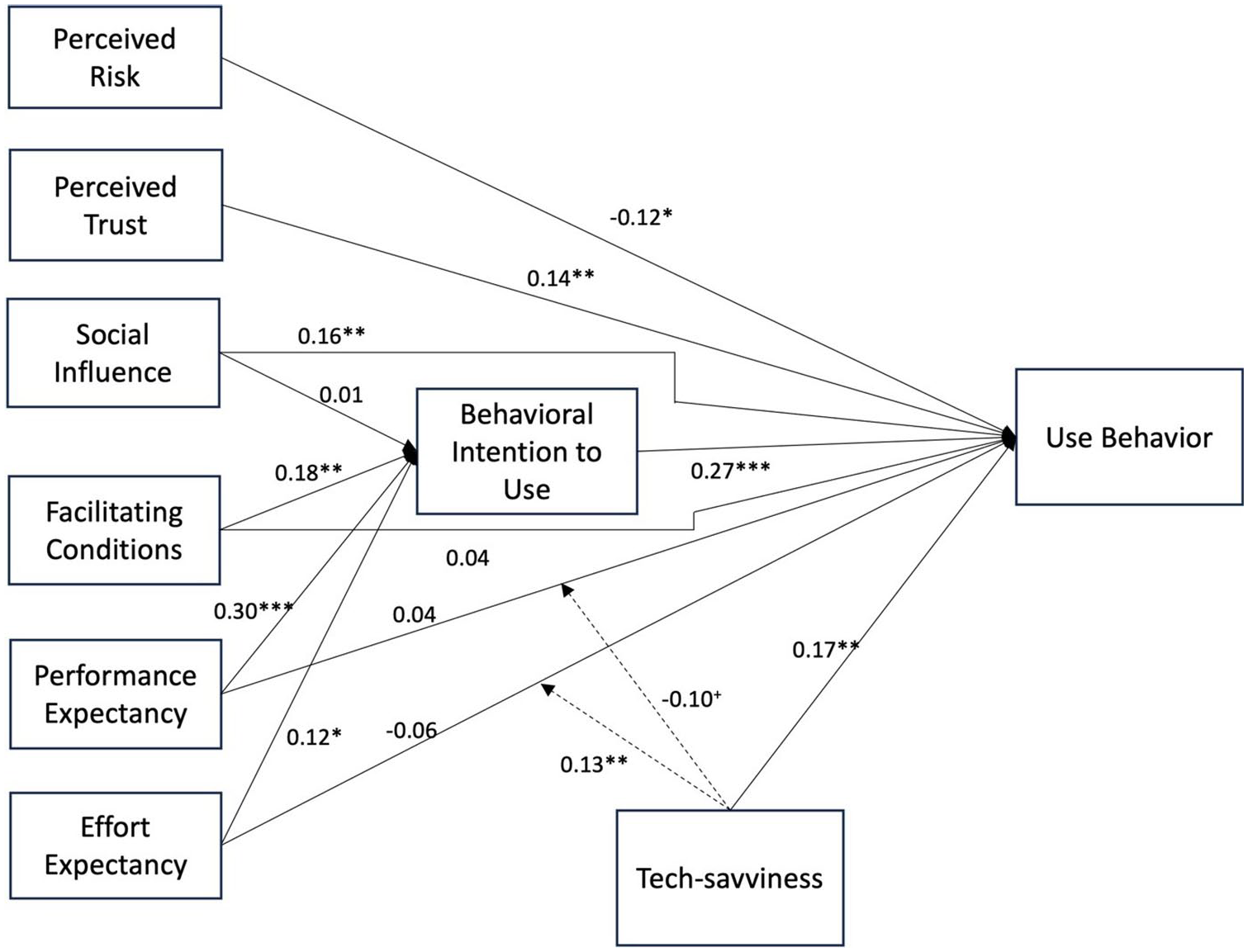

Our research hypotheses are summarized in the conceptual model in Figure 1.

Figure 1. Conceptual Model.

Method

Data Collection Procedure and Sample Characteristics

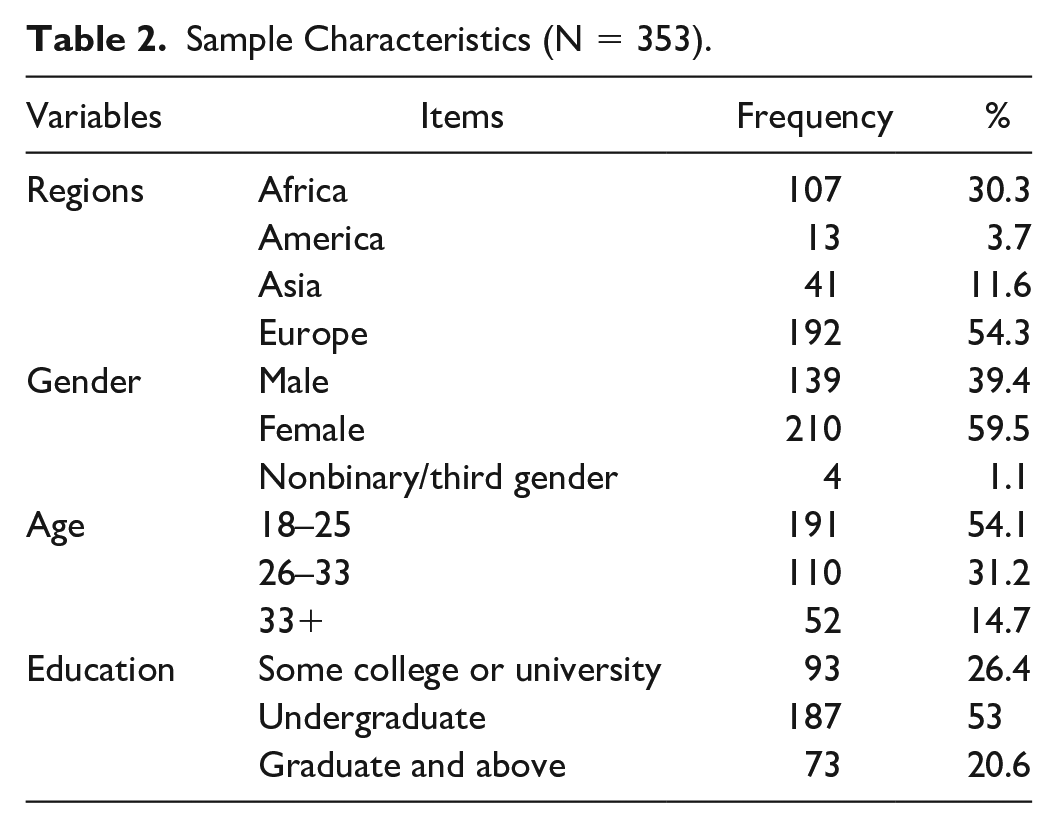

Using an online panel (Prolific), we carried out the study with a heterogeneous, purposive sample of 353 students, who all used generative AI tools as part of their studies. The sample included individuals with different income levels, with varying education levels, and from different countries to avoid limiting the generalizability of the findings. Table 2 gives an overview of sample characteristics.

Table 2. Sample Characteristics (N = 353).

After voluntarily agreeing to take part and providing their informed consent, participants were presented with definitions and examples of generative AI tools and their features. They then answered a randomized series of questions concerning the constructs used in the study (e.g., performance expectancy and perceived risk) for using generative AI tools in higher education coursework. They were also asked questions relating to their level of tech-savviness, intention to use, and actual use. Finally, participants answered a set of simple demographic questions before receiving a small monetary reward.

Measurement Items

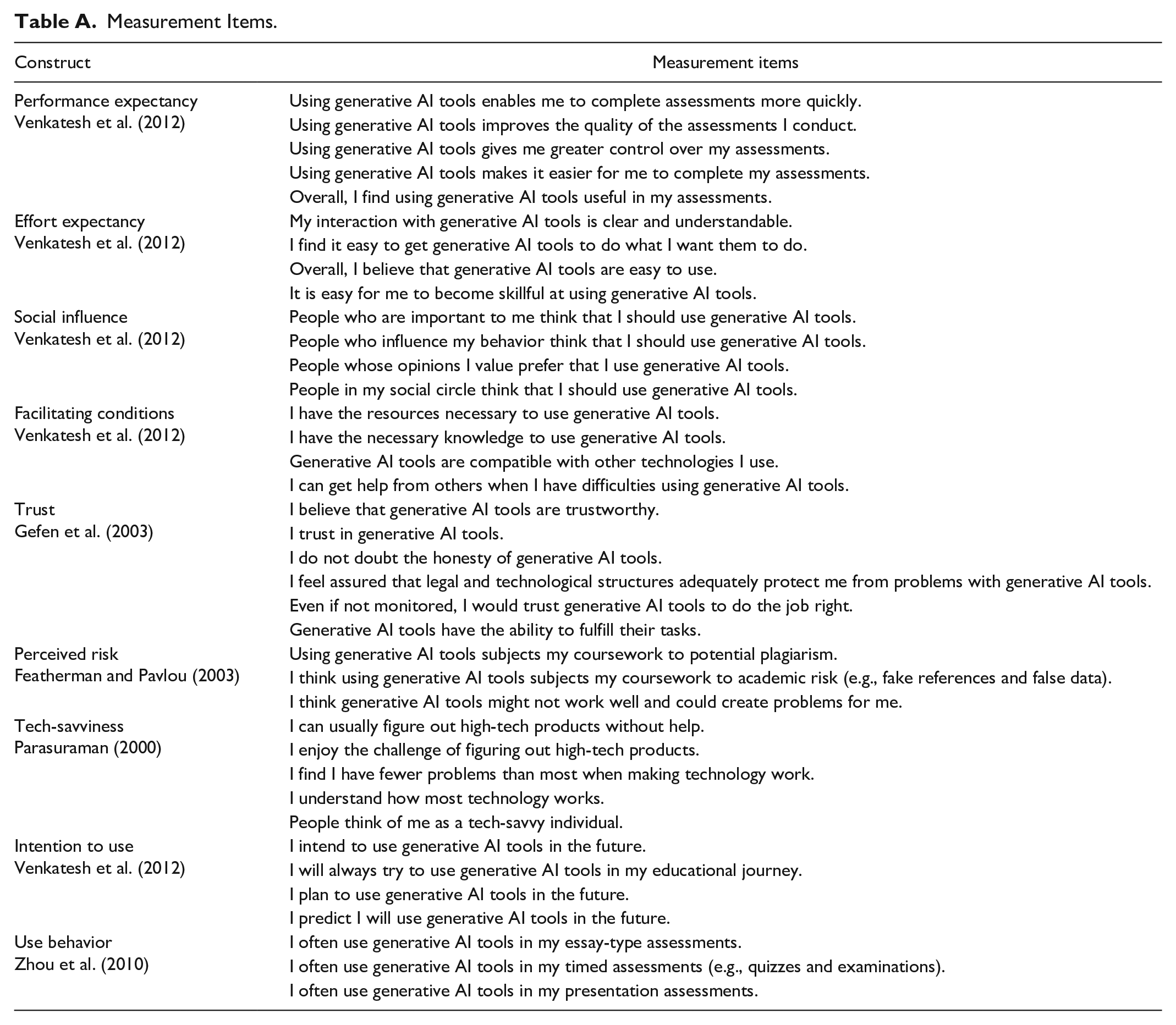

We adapted previously validated scale items from the literature to measure the constructs of interest, ensuring that they were contextually relevant to our study. For all measurement items, we used a 7-point Likert-type scale, where “1” indicated “strongly disagree” and “7” indicated “strongly agree.” We adapted five items from Venkatesh et al. (2012) to measure the performance expectancy of the generative AI tool, along with three items for effort expectancy, four items for social influence, four items for facilitating conditions, and four items for intention to use. We adapted six items from Gefen et al. (2003) to measure student trust and three items from Featherman and Pavlou (2003) to measure the perceived risks. We also adopted tech-savviness items from Parasuraman (2000) and, finally, adapted three use behavior items from Zhou et al. (2010).

The adaptation involved minor modifications to the wording of the items to better reflect the specific context of generative AI, while maintaining the core meaning and intent of the original items. For instance, the performance expectancy scale from Venkatesh et al. (2012) originally included items such as “Using mobile internet helps me accomplish things more quickly.” This was adapted to “Using generative AI tools enables me to complete assessed work more quickly.” Similarly, effort expectancy items were tailored by replacing general technology references with specific mentions of generative AI tools, such as adapting “It is easy for me to become skillful at using the mobile internet” to “It is easy for me to become skillful at using generative AI tools.” The adapted questionnaire, including all items and their sources, is provided in Appendix Table A.

Data Analysis

We used a two-step approach for data analysis, following practices similar to those by Merkle et al. (2022) and the recommendations of Sarstedt et al. (2021). In the first step, we analyzed the measurement model by assessing the reliability and validity of the measurements. In the second step, which involved analyzing the structural model, we assessed the relationships among the latent constructs. We preferred the partial least squares structural equation modeling (PLS-SEM) approach for hypothesis testing since this method has minimal limitations on sample size, measurements, and residual distribution. We used SmartPLS 4.0 to analyze both the measurement and structural models (Sarstedt et al., 2021). PLS-SEM is a prominent method in information systems and marketing, renowned for its robustness in theory testing (Bentler & Huang, 2014; Sarstedt et al., 2021).

We assessed collinearity using the variance inflation factor (VIF). A VIF value greater than 3.3 is undesirable, while values above 10 indicate a serious collinearity problem (Kock, 2015). Our VIF values suggested there was no concern regarding collinearity in our model. We used Harman’s single-factor test to assess common method bias, for which the variance explained by a single factor should be below 50% (Podsakoff et al., 2003). The single factor accounted for 31.3% of the total variance in the overall model, which was therefore acceptable.
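The two diagnostics described above can be illustrated with a minimal Python sketch. It runs on synthetic data, since the study's responses are not reproduced here, and it relies on two textbook shortcuts that are assumptions rather than the SmartPLS procedure used in the study: the VIF of each predictor is read from the diagonal of the inverse correlation matrix, and the Harman share is approximated by the first principal component of the correlation matrix. All function and variable names are hypothetical.

```python
import random
import statistics


def correlation_matrix(columns):
    """Pearson correlation matrix for a list of equal-length columns."""
    n = len(columns[0])
    means = [statistics.fmean(c) for c in columns]
    sds = [statistics.pstdev(c) for c in columns]
    p = len(columns)
    return [
        [
            sum((columns[i][k] - means[i]) * (columns[j][k] - means[j])
                for k in range(n)) / (n * sds[i] * sds[j])
            for j in range(p)
        ]
        for i in range(p)
    ]


def invert(matrix):
    """Gauss-Jordan inversion with partial pivoting (small matrices only)."""
    n = len(matrix)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def vif(columns):
    """VIF of predictor j = j-th diagonal entry of the inverse correlation matrix."""
    inverse = invert(correlation_matrix(columns))
    return [inverse[j][j] for j in range(len(columns))]


def first_factor_share(columns, iterations=500):
    """Share of total variance on the first principal component (power iteration)."""
    r = correlation_matrix(columns)
    p = len(r)
    v = [1.0] * p
    for _ in range(iterations):
        w = [sum(r[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    eigenvalue = sum(v[i] * sum(r[i][j] * v[j] for j in range(p)) for i in range(p))
    return eigenvalue / p


# Synthetic predictors (assumption): x3 is built to be collinear with x1.
rng = random.Random(42)
x1 = [rng.gauss(0, 1) for _ in range(353)]
x2 = [rng.gauss(0, 1) for _ in range(353)]
x3 = [a + 0.5 * rng.gauss(0, 1) for a in x1]

vifs = vif([x1, x2, x3])
share = first_factor_share([x1, x2, x3])
for name, value in zip(["x1", "x2", "x3"], vifs):
    flag = "check" if value > 3.3 else "ok"
    print(f"VIF {name}: {value:.2f} ({flag})")
print(f"First-factor share of variance: {share:.1%} (threshold 50%)")
```

In this toy data set the deliberately collinear pair is flagged against the 3.3 threshold, whereas the study reports that none of its constructs exceeded it.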

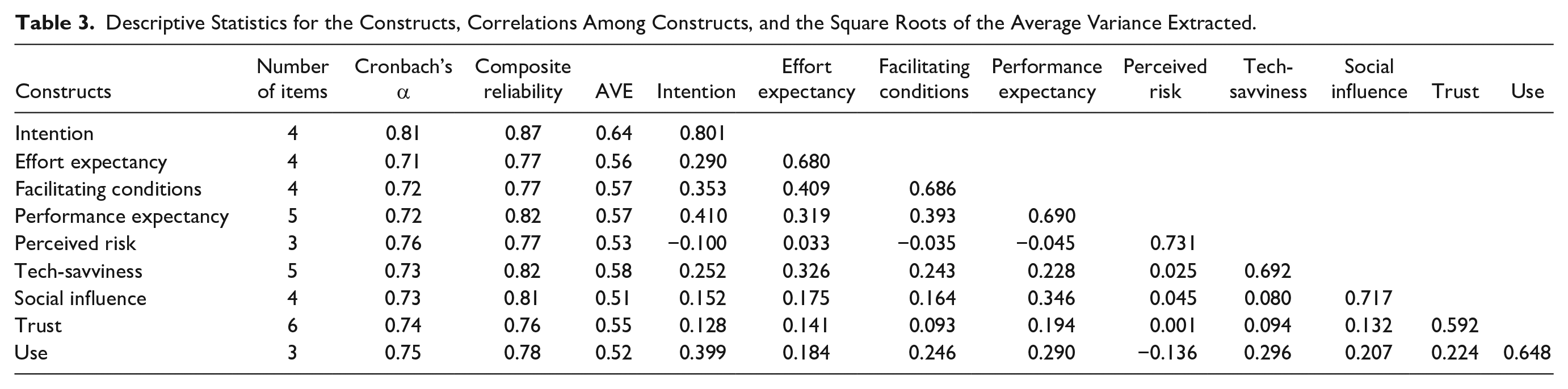

We calculated Cronbach’s alpha for each latent construct in the model to assess internal reliability. All Cronbach’s alphas were above 0.7, indicating construct reliability. Cronbach’s alpha and composite reliability values are presented in Table 3. The average variance extracted (AVE) score of every construct should be above 0.5, and the composite reliability of all constructs should be above 0.7 (Bagozzi & Yi, 2012; Fornell & Larcker, 1981).
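The reliability and convergent validity statistics named above follow standard formulas, sketched here in Python as an illustration. The item responses and outer loadings below are hypothetical values chosen for the example, not the study's data, and the function names are assumptions; the composite reliability and AVE formulas assume standardized loadings.

```python
import statistics


def cronbach_alpha(items):
    """Cronbach's alpha; `items` holds one list of respondent scores per item."""
    k = len(items)
    item_variances = [statistics.variance(col) for col in items]
    totals = [sum(scores) for scores in zip(*items)]
    return k / (k - 1) * (1 - sum(item_variances) / statistics.variance(totals))


def composite_reliability(loadings):
    """Composite reliability from standardized outer loadings."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_variance)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Hypothetical 7-point Likert responses for a three-item construct.
item1 = [5, 6, 7, 4, 6, 5, 7, 3]
item2 = [5, 7, 6, 4, 6, 4, 7, 3]
item3 = [6, 6, 7, 5, 5, 5, 6, 2]
# Hypothetical standardized loadings for the same construct.
loadings = [0.80, 0.80, 0.80]

alpha = cronbach_alpha([item1, item2, item3])
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)

print(f"alpha = {alpha:.3f} (threshold 0.7)")
print(f"CR    = {cr:.3f} (threshold 0.7)")
print(f"AVE   = {ave:.3f} (threshold 0.5)")
```

A construct passes the checks cited in the text when alpha and composite reliability exceed 0.7 and AVE exceeds 0.5.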

Table 3. Descriptive Statistics for the Constructs, Correlations Among Constructs, and the Square Roots of the Average Variance Extracted.

Exploratory Discussions

Following the survey, we conducted in-depth interviews to gain a deeper understanding of student attitudes to generative AI tools in higher education. These interviews were designed to explore perceived risks, trust, and tech-savviness in AI adoption. Given the exploratory nature of the research, this method allowed us to capture how these factors shaped AI use in assessments, enabling students to express their views and actions in their own words. This method was similar to approaches used in previous marketing education studies (Harrigan & Hulbert, 2011).

Interview Procedure and Sample Characteristics

The interviews were conducted with a purposive sample of 17 students who had participated in the initial survey and volunteered for the follow-up qualitative study. These participants represented a mix of undergraduate (63%) and postgraduate (37%) students from various academic disciplines, including business management, economics, and accounting. The diversity in academic backgrounds, as well as in their familiarity with AI tools, allowed for a comprehensive examination of how students with different levels of tech-savviness and risk perceptions engaged with generative AI in their academic work. The participants were selected to capture a broad spectrum of experiences and perceptions, ensuring that the interviews provided insights applicable to a wide range of students.

Interviews were conducted, recorded, and transcribed via Microsoft Teams. Each lasted between 55 and 103 min, with an average duration of 75 min. The interviews continued until data saturation was reached, with no new themes emerging after the 17th interview. This sample size was deemed adequate to develop a robust understanding of student perceptions of AI tools (Creswell, 2003).

Interview Guide

We used a semistructured interview guide to maintain consistency across interviews while allowing for flexibility to explore specific areas of interest in more depth. The guide included open-ended questions designed to uncover student perceptions of AI tools, perceived risks, and their experiences with using these tools in assessments. The questions focused on key constructs such as performance expectancy, effort expectancy, trust, and perceived risk, which are central to the UTAUT framework. Sample questions included:

“How do you feel about using AI tools for assessments and learning? Is there a difference in the process of using AI for coursework versus learning?”

“What risks do you associate with using AI tools in your studies?”

“Do you perceive different levels of risk with different AI tools (e.g., ChatGPT and image generation tools)?”

“How do your instructors’ attitudes to AI tools influence your own perceptions and use?”

“What measures do you think could mitigate the risks you associate with AI tools?”

These questions were designed to elicit detailed responses, probing students’ thoughts on the advantages, challenges, and risks of using AI tools in their academic work. Follow-up questions were used as needed to explore experiences and perceptions further.

Data Analysis

Following the interviews, the transcripts were uploaded to MAXQDA software for coding and analysis. The analysis followed Braun and Clarke’s (2006) six phases of thematic analysis, beginning with familiarization with the data through repeated reading of the transcripts. Two researchers independently conducted open coding, focusing on identifying key themes related to perceived risk, trust, tech-savviness, and other relevant constructs.

A codebook was developed to guide the coding process, and codes were assigned to relevant segments of text based on recurring themes. For example, segments related to concerns about data privacy or academic integrity were coded under “Perceived Risk,” while responses discussing ease of use and comfort with AI tools were coded under “Tech-savviness.” The coding process was iterative, with the researchers comparing their coding results and resolving any discrepancies through discussion. The average inter-rater agreement was 0.92, indicating a high level of consistency between coders.
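The text does not state which agreement statistic underlies the reported value of 0.92, so the following Python sketch shows two common candidates, simple percent agreement and Cohen's kappa, as an illustration only. The code labels and coder assignments are hypothetical, as are the function names.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of segments to which both coders assigned the same code."""
    return sum(x == y for x, y in zip(coder_a, coder_b)) / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / n ** 2
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned to ten transcript segments by two coders.
coder_a = ["risk", "risk", "trust", "tech", "trust",
           "risk", "tech", "trust", "risk", "tech"]
coder_b = ["risk", "risk", "trust", "tech", "trust",
           "risk", "tech", "trust", "trust", "tech"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"percent agreement = {agreement:.2f}, kappa = {kappa:.2f}")
```

Kappa is typically lower than raw agreement because it discounts matches expected by chance, which is why the choice of statistic matters when interpreting a value such as 0.92.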

Findings

We tested our causal model using PLS-SEM after confirming that the reliability and validity results were within acceptable ranges. We employed PLS bootstrapping with 5,000 subsamples and a 95% confidence interval to test each hypothesis: In any given relationship, the t-statistic should be above 1.96 or the p value below 0.05. The results are shown in Figure 2.
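The bootstrap logic behind these significance tests can be sketched in Python. This is a simplified illustration under stated assumptions: a full PLS-SEM bootstrap re-estimates the entire model on each resample, whereas this sketch resamples a single standardized path (a Pearson correlation) on synthetic data. The function names and the data are hypothetical.

```python
import random
import statistics


def pearson(x, y):
    """Pearson correlation, equal to the standardized slope of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def bootstrap_path(x, y, n_boot=5000, seed=1):
    """Return the estimate, bootstrap t-statistic, and 95% percentile interval."""
    rng = random.Random(seed)
    n = len(x)
    estimate = pearson(x, y)
    draws = []
    for _ in range(n_boot):
        # Resample respondents with replacement, keeping (x, y) pairs together.
        idx = [rng.randrange(n) for _ in range(n)]
        draws.append(pearson([x[i] for i in idx], [y[i] for i in idx]))
    draws.sort()
    standard_error = statistics.stdev(draws)
    lower = draws[int(0.025 * n_boot)]
    upper = draws[int(0.975 * n_boot) - 1]
    return estimate, estimate / standard_error, (lower, upper)


# Synthetic data (assumption): 353 respondents with a true positive path.
data_rng = random.Random(0)
intention = [data_rng.gauss(0, 1) for _ in range(353)]
use = [0.5 * a + data_rng.gauss(0, 1) for a in intention]

estimate, t_stat, ci = bootstrap_path(intention, use)
significant = abs(t_stat) > 1.96
print(f"path = {estimate:.3f}, t = {t_stat:.2f}, "
      f"95% CI = [{ci[0]:.3f}, {ci[1]:.3f}], significant = {significant}")
```

The decision rule matches the one stated in the text: a path is treated as supported when the bootstrap t-statistic exceeds 1.96, which corresponds to p < .05 for a two-tailed test.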

Figure 2. Model Estimation Results by SmartPLS.

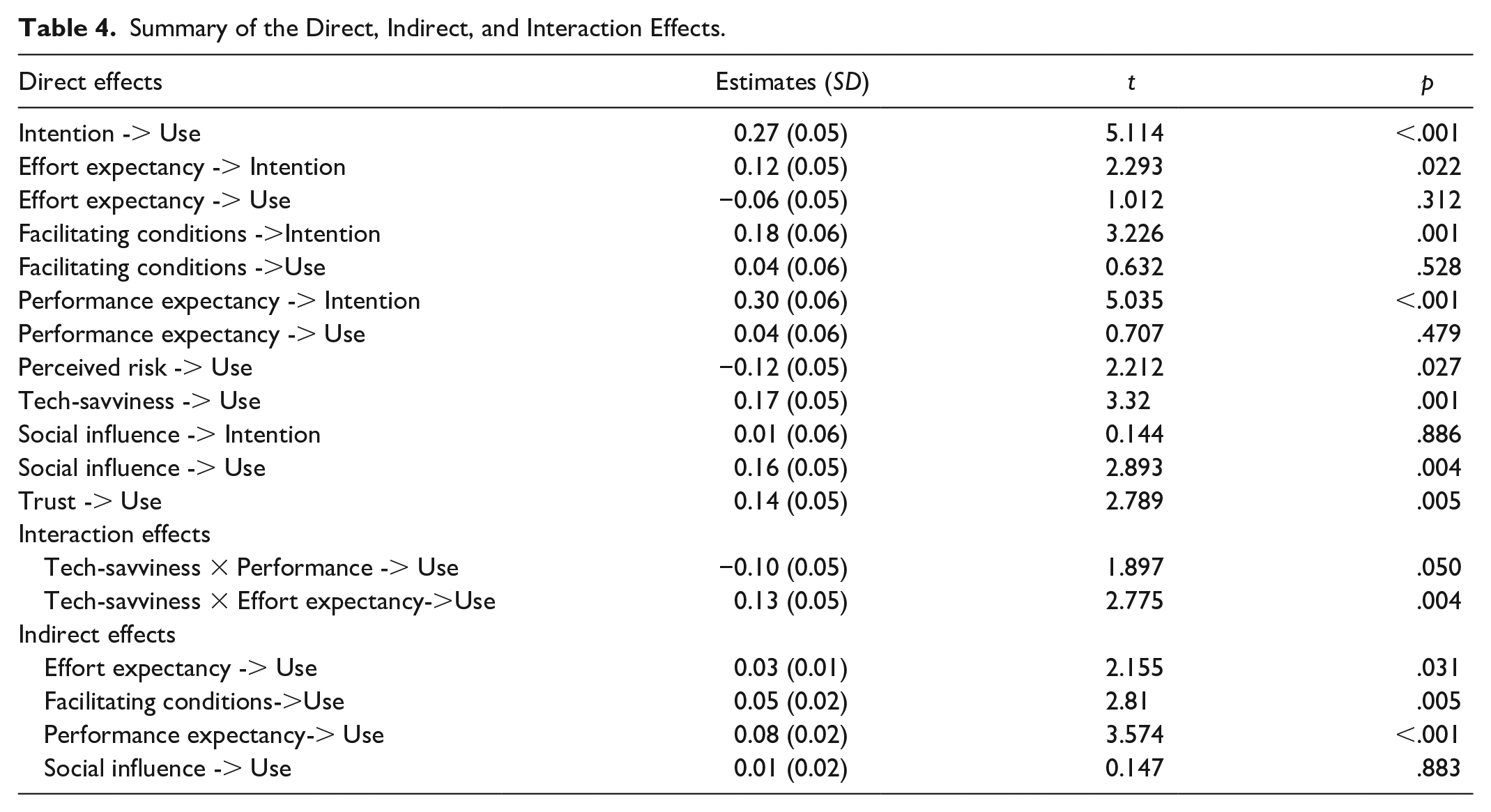

Table 4 presents the direct, indirect, and interaction effects. Behavioral intention to use (hereafter referred to as “intention”) had a strong positive effect on use behavior (hereafter referred to as “use”) (β = 0.279, t = 5.349, p < .001), confirming H5. Turning to the expectancy factors, the path from effort expectancy to intention was also positive, albeit weaker (β = .117, t = 2.289, p = .022), confirming H2a. However, the direct effect of effort expectancy on use was negative and not statistically significant (β = −0.043, t = .812, p = .417), thus failing to confirm H2b. Although effort expectancy had no direct effect on use, it had a modest yet statistically significant positive indirect effect on use (β = .033, t = 2.176, p = .03). Performance expectancy’s influence on intention (H1a) was quite strong (β = .296, t = 5.045, p < .001), but its direct effect on actual use (H1b) was not significant (β = .052, t = .912, p = .362). However, performance expectancy showed a more pronounced positive indirect effect on use (β = .083, t = 3.656, p < .001).

Table 4. Summary of the Direct, Indirect, and Interaction Effects.

The relationship between facilitating conditions and intention (H4a) was positive and significant (β = 0.187, t = 3.36, p = .001). Similarly, facilitating conditions also exhibited a significant positive indirect effect on use (β = .052, t = 2.866, p = .004), confirming H4b. Social influence did not significantly predict intention (β = .009, t = .157, p = .875), failing to confirm H3a. It did, however, have a significant positive effect on use (β = .156, t = 2.951, p = .003), confirming H3b. Social influence did not appear to have a statistically significant indirect effect on use behavior (β = .003, t = .162, p = .871), suggesting that it may not be a crucial factor in this context when considering indirect impacts.
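The reported indirect effects are consistent with the usual product-of-paths calculation, in which each construct's path to intention is multiplied by the path from intention to use. The short Python check below uses only coefficients reported in the text; the assumption that intention is the sole mediator, and the variable names, are ours.

```python
# Reported path from intention to use (H5).
INTENTION_TO_USE = 0.279

# Reported direct paths from each construct to intention.
paths_to_intention = {
    "performance expectancy": 0.296,
    "effort expectancy": 0.117,
    "facilitating conditions": 0.187,
    "social influence": 0.009,
}

# Indirect effects on use as reported in the text.
reported_indirect = {
    "performance expectancy": 0.083,
    "effort expectancy": 0.033,
    "facilitating conditions": 0.052,
    "social influence": 0.003,
}

# Indirect effect = (construct -> intention) * (intention -> use).
computed_indirect = {
    construct: path * INTENTION_TO_USE
    for construct, path in paths_to_intention.items()
}

for construct, value in computed_indirect.items():
    print(f"{construct}: computed {value:.3f}, "
          f"reported {reported_indirect[construct]:.3f}")
```

Each product agrees with the reported value to within rounding of the three-decimal inputs.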

There was a significant negative effect of perceived risk on use (β = −0.121, t = 2.247, p = .025), confirming H6. Trust in the generative AI tools showed a positive and significant effect on use (β = .136, t = 2.794, p = .005), supporting H7. The results suggest that students decrease their use of generative AI tools when their perception of risk is high and their level of trust is low. Tech-savviness demonstrated a significant positive effect on use (H8a) (β = .173, t = 3.447, p = .001), indicating that students who are more open to new technologies are more likely to use generative AI tools.

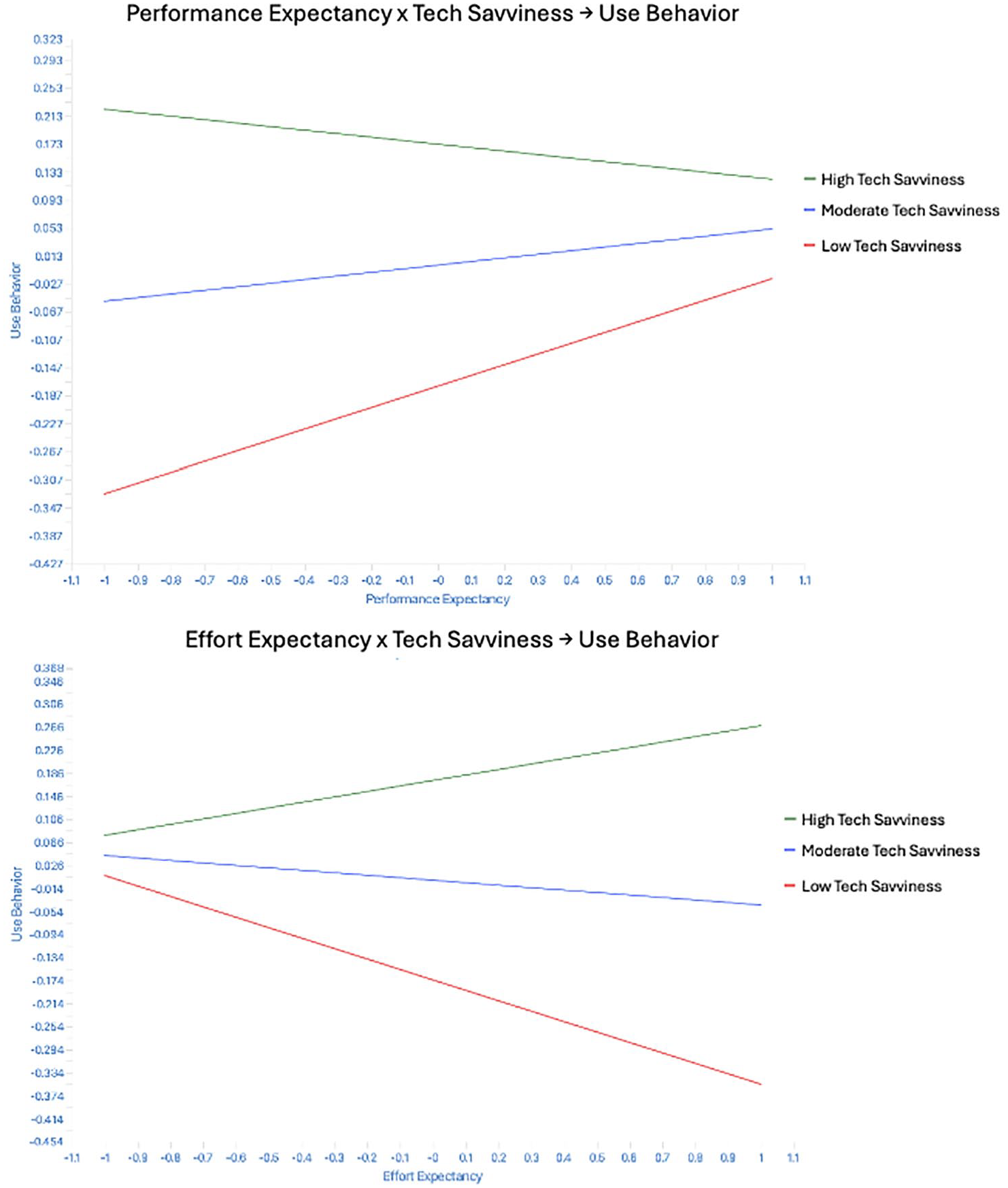

We also examined the moderating effects of tech-savviness on the relationship between performance factors and use behavior. The interaction term between tech-savviness and performance expectancy on use was negative and significant (β = −0.102, t = 1.963, p = .05), supporting H8b. This suggests a nuanced relationship: Tech-savvy students do not increase their use of generative AI tools based on performance expectations, whereas those less tech-savvy are more likely to use these tools when they have high performance expectations. Finally, the interaction between tech-savviness and effort expectancy on use was positive and significant (β = .138, t = 2.908, p = .004), confirming H8c. This indicates that less tech-savvy users, who find generative AI tools difficult to use, are less likely to use them (see Figure 3).
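One way to read these interaction terms is through simple slopes, which give the effect of a predictor on use at chosen levels of the moderator. The Python sketch below is illustrative only: it assumes standardized variables, evaluates tech-savviness at one standard deviation below and above its mean, and combines the reported direct and interaction coefficients. Because the direct paths from performance expectancy and effort expectancy to use were not significant, the resulting slopes are indicative rather than inferential. The function name is hypothetical.

```python
def simple_slope(direct_effect, interaction_effect, moderator_z):
    """Slope of the predictor on use at a given standardized moderator level."""
    return direct_effect + interaction_effect * moderator_z


# Coefficients reported in the text (direct effect on use, interaction term).
performance_expectancy = {"direct": 0.052, "interaction": -0.102}
effort_expectancy = {"direct": -0.043, "interaction": 0.138}

slopes = {}
for name, coef in [("performance expectancy", performance_expectancy),
                   ("effort expectancy", effort_expectancy)]:
    for label, z in [("low tech-savviness (-1 SD)", -1.0),
                     ("high tech-savviness (+1 SD)", 1.0)]:
        slopes[(name, label)] = simple_slope(coef["direct"],
                                             coef["interaction"], z)

for (name, label), value in slopes.items():
    print(f"{name} at {label}: slope = {value:.3f}")
```

Under these assumptions, the slope for performance expectancy is positive at low tech-savviness and close to zero at high tech-savviness, which matches the pattern described for H8b.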

Figure 3. Interaction Effects.

Qualitative Exploration of Risk Perceptions

The thematic analysis of in-depth interviews with students provided a rich, multifaceted understanding of the factors shaping their adoption of generative AI tools in higher education. Six key themes emerged: data privacy and confidentiality, academic integrity and authorship, skill degradation, trust and tech-savviness, the influence of external factors (instructor attitudes), and the interplay between trust in AI and institutional acceptance.

Students expressed a wide range of views on data privacy and confidentiality when using AI tools. Some participants were relatively unconcerned, with one student mentioning, “I don’t feel very worried . . . I accept all the cookies anyway, so I’m not that worried about it.” This suggests that, for some, the perceived risks associated with AI tools are minimal, particularly when compared with their general online behavior. Conversely, other students were more cautious, particularly regarding the potential access and storage of their data by third parties. One student questioned, “Who is going to have access to the work that you’re putting in there? If it’s an AI tool leveraged by the university, do members have access to it, or will it be anonymized?” This uncertainty reflects deeper concerns about data management protocols. A less intuitive but relevant concern was about the long-term ownership of data stored on AI platforms. Some students worried that they might lose access to important academic work after graduation, raising questions like, “Will I lose all of that data because it’s tied to my university account?” These concerns extend beyond immediate privacy issues to future utility and ownership, underscoring a broader spectrum of perceived risk.

Academic integrity and authorship were also of crucial concern. Students feared that generative AI could blur the lines between their original work and AI-generated content, potentially leading to academic dishonesty. One participant remarked, “It’s hard to say what is somebody’s actual work and what is something that is fed by AI.” This ambiguity raises ethical concerns, particularly for students struggling with language skills who might disproportionately benefit from generative AI tools. An intriguing insight was that some students felt they were competing against generative AI itself, not just peers, with one student noting, “It’s almost like I’m competing against the AI to see who can produce better content.” This perception further complicates the dynamics of academic integrity. However, students’ perceptions of risk were not uniform for different types of generative AI tools. While some students were particularly concerned about the potential for text-based AI tools like ChatGPT to fabricate references, they viewed image-generating tools as less risky, primarily due to the lower stakes involved in academic integrity.

AI-induced skill degradation was also a prominent theme, with students recognizing the convenience of AI for routine tasks but fearing that over-reliance could stifle intellectual development. One student articulated, “If the default is that I’m going to the AI tool to answer questions that I should be answering by myself, then yes, at some point, it will impact my critical thinking skills.” This concern reflects the broader implications of becoming dependent on generative AI, potentially sacrificing the richness of learning experiences to gain efficiency.

Trust in AI tools and tech-savviness emerged as nuanced and context-dependent factors. Trust varied significantly among students, often linked to their experiences and the perceived reliability of AI tools. For example, “The quality of AI tools like ChatGPT is significantly better if you pay for the premium version,” noted one student, highlighting how trust can vary based on the specific tool and version. Tech-savviness was identified as a crucial moderator, shaping how students interacted with generative AI tools. Tech-savvy students were generally more confident and willing to experiment with AI, reducing perceived risks. Conversely, less tech-savvy students expressed higher anxiety. One student remarked, “For someone who’s grown up with technology, AI is just another tool in the toolkit. But I can see how less tech-savvy students might struggle and feel more anxious about using it.”

The influence of external factors (instructor attitudes) significantly shaped student perceptions of AI-related risks. Educators supportive of generative AI instilled confidence in students, reducing perceived risks, while skeptical instructors heightened anxiety and uncertainty. “If my professors are leveraging AI too, that gives me more confidence in using it. But if they’re skeptical, I start to question whether I should trust AI,” commented one student. Interestingly, some students felt that their trust in generative AI could be undermined if they perceived their instructors as less knowledgeable about AI, leading to a potential erosion of trust in both AI tools and the educational guidance they receive.

The interplay between trust in AI and institutional acceptance revealed a complex dynamic. Students were concerned about whether AI-generated work would be judged fairly by instructors, assessors, and institutions. This concern was particularly pronounced regarding grading and feedback, with students fearing that generative AI-assisted work might be viewed as less authentic. One student said, “I’m relying on the other person to trust me, but is everybody who’s submitting doing the right thing or not? That uncertainty is stressful.” This highlights a potential misalignment between student trust in generative AI tools and their trust in institutional acceptance, which could significantly influence their adoption decisions.

General Discussion

Contributions to Theory

This study aimed to examine the factors influencing student adoption and use of generative AI tools for marketing assessments. It makes three contributions to the literature on the adoption of technology in higher education. First, it extends the application of the UTAUT model beyond the prevailing focus on specific AI tools like ChatGPT (Strzelecki, 2023; Strzelecki & ElArabawy, 2024), to a broader range of generative AI technologies, including image generators such as Dall-E and Midjourney. This broadens the scope of UTAUT and allows for a more comprehensive understanding of how different types of AI tools influence behavior.

Our investigation revealed that student interactions with generative AI tools are not uniform and that the perceived benefits and risks vary significantly depending on the nature of the tool. For example, text generators may increase student concerns about academic integrity, whereas image generators may provoke fewer worries about plagiarism but more about creative authenticity. By extending the UTAUT model, we offer a more nuanced framework that accounts for the diversity of tools students might use, thereby enhancing the model’s applicability in the dynamic education landscape.

Second, this study addresses a crucial gap in the literature by empirically examining the role of perceived risk and trust in the adoption of generative AI tools in higher education. While previous research has connected these constructs to the UTAUT model in areas such as e-services and mobile banking (Featherman & Pavlou, 2003; Van et al., 2021), our work was the first to apply them within an academic context. This application is vital given the specific challenges in education, where concerns about data privacy, academic integrity, and skills development are especially significant. Our findings align with recent studies highlighting the importance of perceived risk in student adoption of generative AI tools (Dwivedi et al., 2023; Kasneci et al., 2023), revealing that perceived risk is a substantial barrier to actual AI use. This suggests that research focused on intention (Wang et al., 2022) and on data privacy risk (Gulati et al., 2024) may underestimate the impact of risk, which becomes more pronounced as students move from intention to actual use. Contrary to Gulati et al. (2024), who found no effect of perceived risk on AI use, we demonstrated that this was a significant obstacle. Our findings also highlighted that student risk perceptions varied significantly across different AI tools, with concerns about academic dishonesty being more pronounced for text-generating tools, while image-generating tools were perceived as posing fewer risks to academic integrity.

The interviews further revealed that perceived risks extended beyond technical concerns, encompassing fears about AI tools potentially diminishing critical thinking skills and creativity. This highlights an important theoretical implication: the need to expand existing models like UTAUT to incorporate not only the technical aspects of perceived risk but also the broader intellectual and pedagogical risks that influence student adoption and use of AI in education settings.

Consistent with prior research (Gefen et al., 2003; Kesharwani & Singh Bisht, 2012), our findings highlighted that students were more likely to engage with AI tools they perceived as trustworthy. The interviews revealed that this trust extended beyond the technology, showing that trust was also substantially influenced by instructors’ attitudes toward generative AI tools. We found that students were more likely to trust and use AI tools when they perceived their educators as knowledgeable and supportive of AI integration, in line with Gonsalves (2024). While prior research focused on trust in the focal technology or its providers (Gefen et al., 2003; Venkatesh et al., 2003), our findings highlighted the significant role of social trust, as evidenced by the influence of educators in facilitating trust.

Third, our study highlighted the moderating role of tech-savviness in the process of adopting AI tools. We found that tech-savvy students were more likely to adopt and effectively use generative AI tools, perceiving them as easier to use and more beneficial. However, tech-savviness also moderated the relationship between performance expectancy and actual use, indicating that more tech-savvy students relied less on performance expectations when deciding to use AI tools. These findings suggest that student familiarity with technology reduces their dependence on specific performance outcomes, allowing them to explore AI tools more freely. This finding aligns with Mogaji et al.’s (2024) call for an evolution of the UTAUT model in line with younger, digital natives (Prensky, 2001), as their tech-savvy nature leads to more sophisticated evaluations of perceived ease-of-use and usefulness of generative AI tools.

Implications for Marketing Education

Our findings point to the need for educators to consider how these tools are brought into the classroom. The finding that perceived risks significantly deter students from adopting generative AI tools suggests that educators and institutions must be proactive in alleviating these concerns, building trust, and enhancing students’ comfort with technology. Institutions should create clear guidelines that help students understand both the benefits and the potential risks of AI, and that outline protocols to mitigate these risks and safeguards to protect students. Transparency about data privacy and security is also essential. When students feel confident that their personal information is safe and that the use of generative AI tools will not undermine their learning or integrity, they may be more likely to embrace them. Just as companies such as Google and Deloitte have developed AI principles for their operations, institutions should establish frameworks to guide the ethical use of AI in academic settings. Educators should also foreground the ethical dimensions of AI, helping students grasp the broader implications of their interactions with these technologies. By creating an environment in which students feel confident in the tools they are using, educators can significantly enhance the adoption and effective use of generative AI in marketing education.

To further validate our findings, we conducted informal interviews with five marketing lecturers from different U.K. universities. We found a spectrum of perspectives on generative AI’s role in academia: some educators integrated generative AI tools into coursework, whereas others banned their use. These informal interviews reflected the academic community’s diverse approach to adopting these technologies. These insights emphasized the vital need for balanced pedagogical strategies in marketing education that address both the opportunities and challenges presented by generative AI.

Building trust in AI tools is crucial, and this trust must be cultivated through comprehensive educator support. Instructors should be familiar with AI technologies, integrating them into their teaching in a way that highlights their reliability and pedagogical value. This might involve using generative AI tools during class, showcasing successful case studies of AI-driven projects, and offering tailored training sessions that build student confidence. Institutions, too, should play a role in endorsing and facilitating generative AI use, ensuring that the necessary professional development, resources, and infrastructure are in place to support effective use of these tools.

Tailoring AI integration to meet the diverse levels of tech-savviness among students is essential. For students less comfortable with technology, educators should provide structured and supportive learning experiences that gradually introduce generative AI tools. This could include practical, hands-on sessions such as step-by-step tutorials, peer mentoring, and workshops that focus on real-world benefits. This incremental approach would build digital literacy while making students feel supported rather than overwhelmed. In contrast, more tech-savvy students should be encouraged to dive deeper into advanced AI features, sparking experimentation and innovation in their studies. Tailoring AI integration in this way could bridge the gap between varying technological abilities and student engagement with AI, ensuring that tech-savviness becomes an asset rather than a barrier. However, to enhance the success of AI integration and to truly encourage adoption, institutions should make technology resources and knowledge accessible to all students, regardless of the level at which they start. This would mean investing in robust training programs that go beyond the basics, fostering creativity, independent thinking, and problem-solving skills, while also addressing both the benefits and potential pitfalls of AI, inside and outside the classroom.

Limitations and Future Research

While this study makes significant contributions to the literature, several limitations must be acknowledged. First, the cross-sectional design of the survey data limits the ability to draw causal inferences about the relationships between the variables. Although the use of PLS-SEM offers robust insights into the associations between constructs, longitudinal studies would be necessary to confirm the temporal dynamics—how relationships between variables such as perceived risk, trust, and tech-savviness evolve over time—and the causal pathways suggested by the model. This would provide a deeper understanding of how student perceptions and behaviors may change as they gain more experience with generative AI tools.

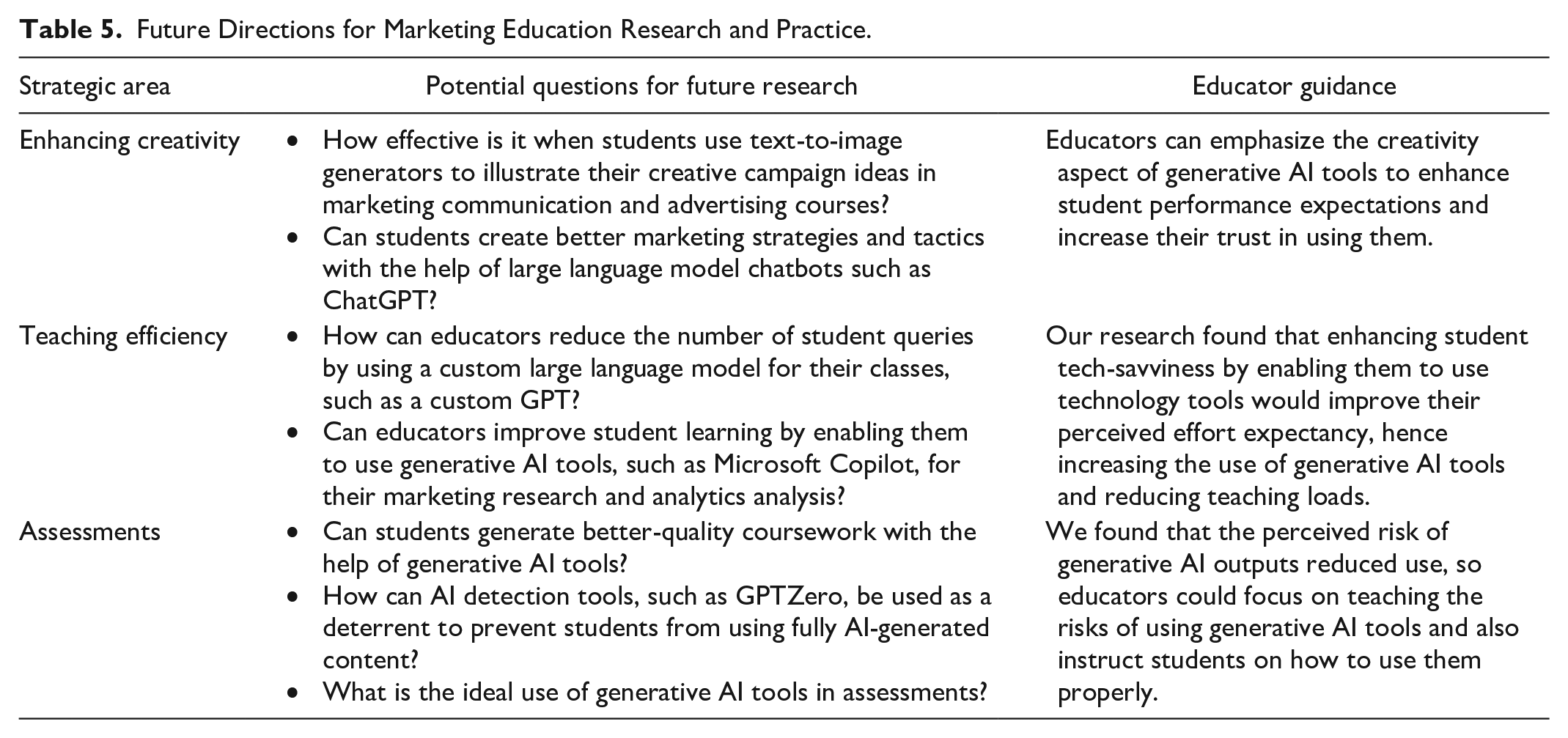

Another limitation of this study lies in the use of established and validated constructs of perceived risk and trust (Featherman & Pavlou, 2003; Gefen et al., 2003), which, while robust, may not fully capture the nuanced and evolving nature of these concepts in the context of generative AI tools in higher education. Although these constructs provided a solid foundation for our quantitative analysis, they may lack the specificity needed to explore more intricate aspects of risk and trust that are particularly relevant to AI adoption. To address this limitation, we incorporated in-depth interviews with students, allowing us to probe these constructs more deeply and uncover richer insights into their perceptions and experiences. However, following precedents such as Yilmaz et al. (2023), future research could focus on developing new, more nuanced scales and further qualitative investigations to refine these constructs. In Table 5, we outline practical use cases for instructors considering the adoption of generative AI tools in marketing education, as well as suggesting potential directions for further research in this evolving field.

Table 5. Future Directions for Marketing Education Research and Practice.

Finally, as our findings highlight the significant impact of generative AI tools on student learning experiences, future research should investigate how these technologies might not only support but fundamentally transform pedagogical practices. Exploring the integration of AI with emerging technologies, such as virtual reality or blockchain, could offer new perspectives on enhancing student adoption and use (Yilmaz et al., 2023). While this study provides valuable insights into the factors influencing the adoption of generative AI in higher education, future research should continue to explore these dynamics, particularly as AI technologies continue to evolve and become more embedded in education.

Appendix

Measurement Items.

| Construct | Measurement items |
|---|---|
| Performance expectancy (Venkatesh et al., 2012) | Using generative AI tools enables me to complete assessments more quickly. |
| | Using generative AI tools improves the quality of the assessments I conduct. |
| | Using generative AI tools gives me greater control over my assessments. |
| | Using generative AI tools makes it easier for me to complete my assessments. |
| | Overall, I find using generative AI tools useful in my assessments. |
| Effort expectancy (Venkatesh et al., 2012) | My interaction with generative AI tools is clear and understandable. |
| | I find it easy to get generative AI tools to do what I want them to do. |
| | Overall, I believe that generative AI tools are easy to use. |
| | It is easy for me to become skillful at using generative AI tools. |
| Social influence (Venkatesh et al., 2012) | People who are important to me think that I should use generative AI tools. |
| | People who influence my behavior think that I should use generative AI tools. |
| | People whose opinions I value prefer that I use generative AI tools. |
| | People in my social circle think that I should use generative AI tools. |
| Facilitating conditions (Venkatesh et al., 2012) | I have the resources necessary to use generative AI tools. |
| | I have the necessary knowledge to use generative AI tools. |
| | Generative AI tools are compatible with other technologies I use. |
| | I can get help from others when I have difficulties using generative AI tools. |
| Trust (Gefen et al., 2003) | I believe that generative AI tools are trustworthy. |
| | I trust in generative AI tools. |
| | I do not doubt the honesty of generative AI tools. |
| | I feel assured that legal and technological structures adequately protect me from problems with generative AI tools. |
| | Even if not monitored, I would trust generative AI tools to do the job right. |
| | Generative AI tools have the ability to fulfill their tasks. |
| Perceived risk (Featherman & Pavlou, 2003) | Using generative AI tools subjects my coursework to potential plagiarism. |
| | I think using generative AI tools subjects my coursework to academic risk (e.g., fake references and false data). |
| | I think generative AI tools might not work well and could create problems for me. |
| Tech-savviness (Parasuraman, 2000) | I can usually figure out high-tech products without help. |
| | I enjoy the challenge of figuring out high-tech products. |
| | I find I have fewer problems than most when making technology work. |
| | I understand how most technology works. |
| | People think of me as a tech-savvy individual. |
| Intention to use (Venkatesh et al., 2012) | I intend to use generative AI tools in the future. |
| | I will always try to use generative AI tools in my educational journey. |
| | I plan to use generative AI tools in the future. |
| | I predict I will use generative AI tools in the future. |
| Use behavior (Zhou et al., 2010) | I often use generative AI tools in my essay-type assessments. |
| | I often use generative AI tools in my timed assessments (e.g., quizzes and examinations). |
| | I often use generative AI tools in my presentation assessments. |

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.