Abstract

This study examined the effects of practice-based coaching with scripted supports designed to help paraeducators and speech-language pathology assistants (SLP-As) implement evidence-based shared book reading strategies with preschoolers with language delays. A single-case multiple-baseline-across-behaviors design was employed. Five U.S. educators (three SLP-As and two paraeducators) participated in the study. The primary dependent variable was the percentage of strategies correctly implemented; the secondary dependent variable was children’s expressive and receptive vocabulary. Results demonstrate that all five educators implemented the strategies with high and consistent levels of fidelity during the intervention and maintained similar levels of fidelity after coaching and scripted supports were faded or removed. All five preschool participants showed gains in both expressive and receptive vocabulary. Social validity results support the feasibility and usefulness of the intervention. Implications for research and practice are provided.

Keywords

Paraeducators (paras) and speech-language pathology assistants (SLP-As) are on the front lines of education for preschool-age students. Paras provide instructional support, assist with classroom management, and participate in parental involvement activities (U.S. Department of Education, 2017). Similarly, SLP-As are support personnel who work with licensed SLPs to engage in prevention activities, prepare for and implement treatment sessions, communicate with students and families, and perform administrative tasks (Council for Clinical Certification, 2020). In many cases, however, paras and SLP-As are not included in professional development (PD) opportunities, and as a result they may not be implementing best practices. Adequate preparation and training for paras and SLP-As are critical for students to achieve the best outcomes (Brock & Carter, 2013). We therefore used practice-based coaching (PBC) to examine the barriers to and facilitators of paras’ and SLP-As’ implementation of evidence-based (EB) practices, focusing on shared book reading (SBR) as the vehicle for delivering EB vocabulary instruction.

Paraeducators/Speech-Language Pathology Assistants

Paras and SLP-As play a vital role in the instruction of students with disabilities. In fact, more special education paras than special education teachers are employed in preschool through high school settings (U.S. Department of Education, 2017). Early childhood (EC) PD must therefore be designed to address unique EC contexts (Winton et al., 2015) and a range of education and certification levels (Artman-Meeker et al., 2014; P. Snyder et al., 2012). Training requirements vary by state and are often unclear (Hall & Odom, 2019). A small number of states have taken steps to ensure their paras are adequately prepared for their roles; nevertheless, teachers, parents, and administrators have expressed concern about paras’ lack of training to work with students with disabilities (Giangreco et al., 2011). Similarly, states vary in how SLP-As are trained and supervised (American Speech-Language-Hearing Association [ASHA], n.d.), including how many hours of PD are required per year, and over 30 states have no continuing education requirements for school-based SLP-As. This is troubling given the varied populations on SLPs’ caseloads and the need to implement EB approaches tailored to children’s needs. Taken together, these gaps point to the need for a structured and supportive approach to providing robust PD to paras and SLP-As.

Vocabulary Instruction During Shared Book Reading (SBR)

Emergent literacy skills are strong predictors of later success in reading (Whitehurst & Lonigan, 1998). Because oral language skills predict later reading comprehension, preschool is a crucial time for children to develop oral language (Wasik & Iannone-Campbell, 2012). One way to measure children’s oral language development is through their expressive and receptive knowledge of vocabulary words, and research suggests that children with language impairments can increase their vocabulary knowledge during SBR (Storkel et al., 2017).

SBR is a common routine in most preschool classrooms. Through SBR, children can engage in conversations with adults and peers while hearing novel vocabulary words they may not encounter in typical conversations. One of several styles of SBR is dialogic reading (DR), an interactive, conversational style of SBR (Whitehurst & Lonigan, 1998). DR includes a specific sequence of steps an adult can follow to scaffold interactions while reading to the child (PEER: Prompt, Evaluate, Expand, Repeat), as well as various prompts used to encourage oral language practice (van Kleeck et al., 2006). This style of SBR has yielded positive effects on oral language development for typically developing preschool students (What Works Clearinghouse [WWC], 2007, p. 1), and it also has “potentially positive effects” on the communication skills of children with or at risk for disabilities. One way to improve implementation of DR strategies is to use scripts (van Kleeck et al., 2006). Scripts can be personalized to meet the individual needs of educators, detail the specific instruction, and help keep the intervention natural and accessible (L. R. Dennis et al., 2022; Toub et al., 2018; van Kleeck et al., 2006). In the current study, scripts were used as an implementation guide to support educators’ acquisition and maintenance of the taught strategies.

Scripting as an Implementation Guide

Many effective practices have been identified to support children with disabilities, but a gap remains between published research and the implementation of those practices in early childhood special education (ECSE; Hebbeler et al., 2012). There may also be a gap in the knowledge necessary for effective implementation. This may be particularly true for paras and SLP-As, who spend a substantial amount of direct time with children yet often have less training (Every Student Succeeds Act [ESSA], Sec. 1013(c)(1)). As such, practitioners may need scaffolding to help bridge the gap between knowledge and use and to promote fidelity of implementation (Sexton & Rush, 2021).

Implementation fidelity is the degree to which an intervention is delivered as intended, and it is critical to the successful translation of EB interventions into practice (Sexton & Rush, 2021). Implementation guides, or scripts, could serve as one key driver of consistent and competent implementation of EB practices and could provide the support practitioners need to “hit the ground running” immediately after training. Early success may in turn contribute to positive outcomes, earlier buy-in, and sustained use as the practice becomes habitual (Sexton & Rush, 2021). Systematic PD is one type of implementation support that increases professionals’ capacity to use an intervention (Odom et al., 2014). Several features are thought to support changes in practice across EC settings: (a) explicit teaching of content, (b) frequent job-embedded opportunities to practice and reflect, (c) coaching on real-world practice with enough intensity to increase implementation, and (d) follow-up support to ensure lasting changes in practice (P. Snyder et al., 2012).

Practice-Based Coaching (PBC): A Systematic Professional Development Support

PBC is robust, individualized, and supportive, and it has yielded positive outcomes for EC teachers and children (P. A. Snyder et al., 2015). PBC is a cyclical process grounded in a collaborative partnership and composed of shared goals and action planning, focused observation, and reflection and feedback. A workshop followed by PBC can increase teachers’ use of embedded instruction (P. A. Snyder et al., 2015), literacy practices (Diamond & Powell, 2011), and social-emotional instruction (Artman-Meeker et al., 2014). There is also evidence that PBC can be offered flexibly to meet individual and program needs; for example, it can be delivered live in the classroom or at a distance through technology. Researchers have used email (Baughan et al., 2019), video (McLeod et al., 2019), and web-based self-coaching (P. Snyder et al., 2018) to deliver PBC. More recently, L. Dennis et al. (2023) found that a paraeducator was able to implement SBR strategies with fidelity after participating in PBC with scripted supports.

Current Study

We evaluated the effects of practice-based coaching (PBC) intended to increase paras’ and SLP-As’ (collectively referred to as educators) use of explicit vocabulary instruction while reading one-on-one to a preschool-age child with a language delay. The following research questions were addressed:

Method

All aspects of this project were approved by the Institutional Review Board at a university in the southeastern United States.

Participants

Educators

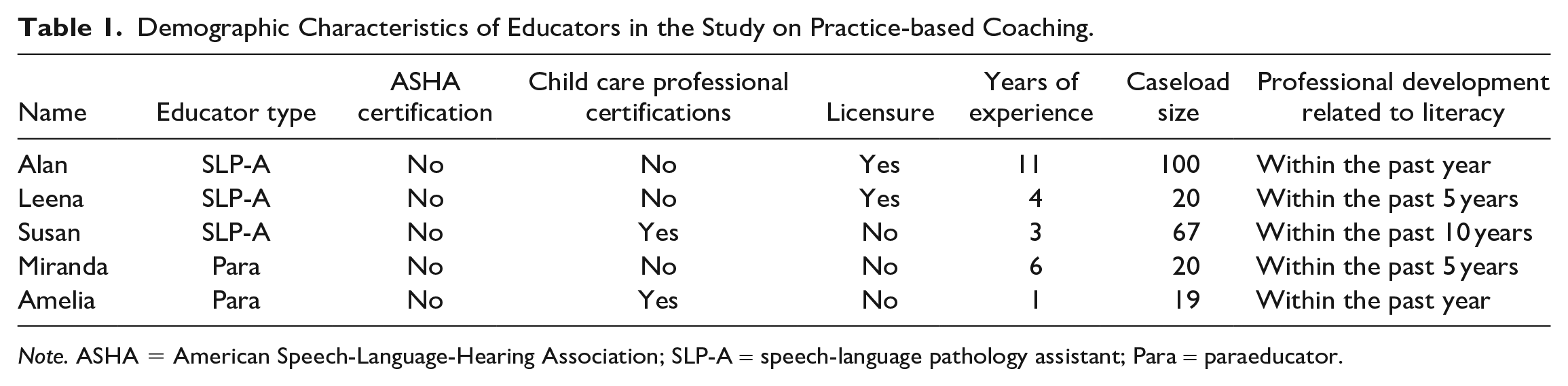

We were interested in the extent to which educators could achieve fidelity to an SBR intervention while receiving coaching and scripted supports. Thus, our primary participants of interest were five educators (three SLP-As [one male, two female] and two paras [both female]) from five different U.S. states. Participants were recruited through social media (i.e., Instagram and Facebook) and professional communities (e.g., ASHA Special Interest Groups). None of the participants was previously known to any member of the research team. After consenting, participants completed a one-time demographic survey with three sections: inclusionary demographics, certification and licensure, and job satisfaction (not discussed in the present investigation). All participants met the following inclusionary criteria: (a) currently working full-time in an educational setting in the United States with preschool-age children at least 3 days per week, and (b) not currently enrolled in, nor planning to enroll in, a graduate program. On average, participants had 7 years of experience (range = 1–21) and managed caseloads of approximately 42 children (range = 19–100). Given state-level variability in certification and licensure requirements, the demographic survey asked whether participants were certified by ASHA or held additional professional certifications (e.g., Child Development Associate). See Table 1 for demographic data.

Table 1. Demographic Characteristics of Educators in the Study on Practice-Based Coaching.

Note. ASHA = American Speech-Language-Hearing Association; SLP-A = speech-language pathology assistant; Para = paraeducator.

Children

Five preschool-age children were selected from the caseloads or classrooms of the consented educators. Educators were asked to recruit children who had an Individualized Education Program (IEP) with at least one goal targeting language; this information was provided by the educator and confirmed by the parent. The parents of all selected children completed a demographic questionnaire. Children ranged in age from 47 to 59 months (i.e., 3:11–4:11 years), with a mean age of 53 months (4:5 years). Three of the children were White/Caucasian, one was Black, and one was both Black and White/Caucasian. Two of the children were female and three were male.

Setting

The setting for the intervention was determined by each educator; typically, the child and educator sat next to each other at a table. Each SBR session was one-on-one and took place within the child’s preschool setting. Session details varied by participant, depending on available space and schedule. Although session length varied by book, sessions typically lasted 10 to 15 minutes. Educators were asked to read at approximately the same time and to keep the environment consistent (e.g., similar seating and table arrangement). Coaches and educators communicated via email and/or text to schedule weekly virtual coaching sessions on Zoom at mutually convenient times.

Materials

Coaching and implementation materials were designed by the researchers; most implementation materials were shared with educators via Google Drive during weekly coaching sessions. Coaching materials included a performance feedback sheet (i.e., graph and summary of data) and an action plan form (i.e., goal and associated actions). Implementation materials included (a) age- and developmentally appropriate storybooks, (b) scripted supports for each of the three strategies for each storybook (i.e., teacher worksheet), (c) a researcher-provided iPad used to present the receptive vocabulary probe (i.e., pictures) and to record sessions and upload them to Vosaic (explained below), and (d) expressive and receptive vocabulary probes (pretest and posttest). Vocabulary targeted in the study met the following criteria: the words (a) were high-utility words that children are likely to encounter in future conversation or reading (Beck et al., 2013), (b) related to the context of the story in a significant way, and (c) could be taught effectively.

Dependent Variables and Recording Method

The primary dependent variable was the percentage of educators’ correct use of three SBR strategies during one-on-one reading sessions. The first strategy, Question/Evaluate, included three explicit elicitation questions: labeling, definition, and inference. Labeling was defined as providing a child-friendly definition of a target word and asking, “What’s that called?” For example, the teacher says, “This is a flying insect with beautiful wings. What’s it called?” (butterfly). Non-examples include the teacher pointing to a picture and asking, “What’s this?” or not asking a question. Definition was defined as explicitly asking the child to define a target word. For example, the teacher says, “What is a butterfly?” Non-examples include the teacher saying, “Point to the butterfly” or not asking a question. Inference was defined as asking a question that requires the child to integrate information from the book with prior knowledge or experience. For example, the teacher asks, “What does a butterfly eat?” Non-examples include the teacher asking, “What color is a butterfly?” or not asking a question. The second strategy, Expand, was defined as adding one to two words to the child’s response. For example, if the child said, “butterfly,” the teacher would expand by saying, “a beautiful butterfly.” Non-examples include the teacher saying, “That’s right, a butterfly” or not expanding. The third strategy, Repeat, was defined as prompting the child to repeat the teacher’s expansion. For example, the teacher would prompt, “You say, ‘a beautiful butterfly,’” and the child would respond, “beautiful butterfly.” A non-example is the teacher not prompting the child to repeat. These data were collected via video recordings made by the educator and uploaded to Vosaic, a secure, cloud-based coding platform.
Use of question/evaluate, expansion, and repeat prompts was calculated as a fidelity percentage; each data point represented the percentage of strategy use in one of the three weekly book-reading sessions. Child responses on the expressive and receptive vocabulary probes served as an indirect measure of the educators’ use of SBR strategies; the educator recorded responses on a researcher-provided form in Google Drive. Changes in scores from pretest to posttest were calculated to summarize the receptive and expressive vocabulary data for the child participants.

Intervention Fidelity Checklist

Each week, educators read a different study-selected book three times. Book readings were coded to determine fidelity to the three SBR strategies. During baseline and intervention, the educators’ behaviors were coded in a binary fashion according to strategy use; during all intervention sessions, however, the educators were also provided with scripted supports (i.e., the teacher worksheet). The first strategy, Question/Evaluate, included three types of elicitation questions: labeling (target word), definition (definition of target word), and inference (integration of book information with prior knowledge or experiences). The teacher then evaluated each response: if the response was correct, the teacher confirmed and repeated it (e.g., child says, “wolf,” teacher says, “That’s right, it is a wolf”); if it was incorrect, the teacher provided a direct model (e.g., child says, “I don’t know,” teacher says, “It is a wolf”). The second strategy, Expand, was operationally defined as adding one to two words to the child’s response. The third strategy, Repeat, was operationally defined as prompting the child to repeat the adult’s expansion. Educator behaviors were coded as a percentage of correct opportunities out of 12 (i.e., four target words per book × three strategies). Initially, the coach and educator developed scripts for all intervention books together during coaching sessions; over time, the educators developed scripts independently with coach feedback. Scripts included the three target strategies as well as corrective feedback for evaluating the child’s response. Target vocabulary words were determined by the child’s score on a screener, described below.
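To make the fidelity arithmetic concrete, the following Python sketch computes per-strategy and overall session percentages from binary codes. It is an illustration under stated assumptions, not the coding procedure used in Vosaic: the function names `percent_correct` and `session_summary`, the strategy keys, and the example codes are hypothetical, and the layout of four opportunities per strategy (12 in total) is taken from the description above.

```python
# Hypothetical sketch: fidelity as the percentage of coded opportunities
# in which a strategy was implemented as operationally defined.

def percent_correct(codes: list[int]) -> float:
    """Return the percentage of opportunities coded 1 (implemented) rather than 0."""
    if not codes:
        raise ValueError("at least one coded opportunity is required")
    if any(c not in (0, 1) for c in codes):
        raise ValueError("codes must be binary (0 or 1)")
    return 100.0 * sum(codes) / len(codes)


def session_summary(codes_by_strategy: dict[str, list[int]]) -> dict[str, float]:
    """Per-strategy percentages plus an overall percentage across all opportunities."""
    summary = {name: percent_correct(codes) for name, codes in codes_by_strategy.items()}
    all_codes = [c for codes in codes_by_strategy.values() for c in codes]
    summary["overall"] = percent_correct(all_codes)
    return summary


# Hypothetical session: four target words x three strategies = 12 opportunities.
example = {
    "question_evaluate": [1, 1, 1, 0],
    "expand": [1, 1, 0, 0],
    "repeat": [0, 0, 0, 0],
}
print({k: round(v, 1) for k, v in session_summary(example).items()})
# {'question_evaluate': 75.0, 'expand': 50.0, 'repeat': 0.0, 'overall': 41.7}
```

Because `percent_correct` accepts any number of coded opportunities, the same sketch also covers sessions in which a strategy had more or fewer than four opportunities.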

Expressive and Receptive Vocabulary Probes

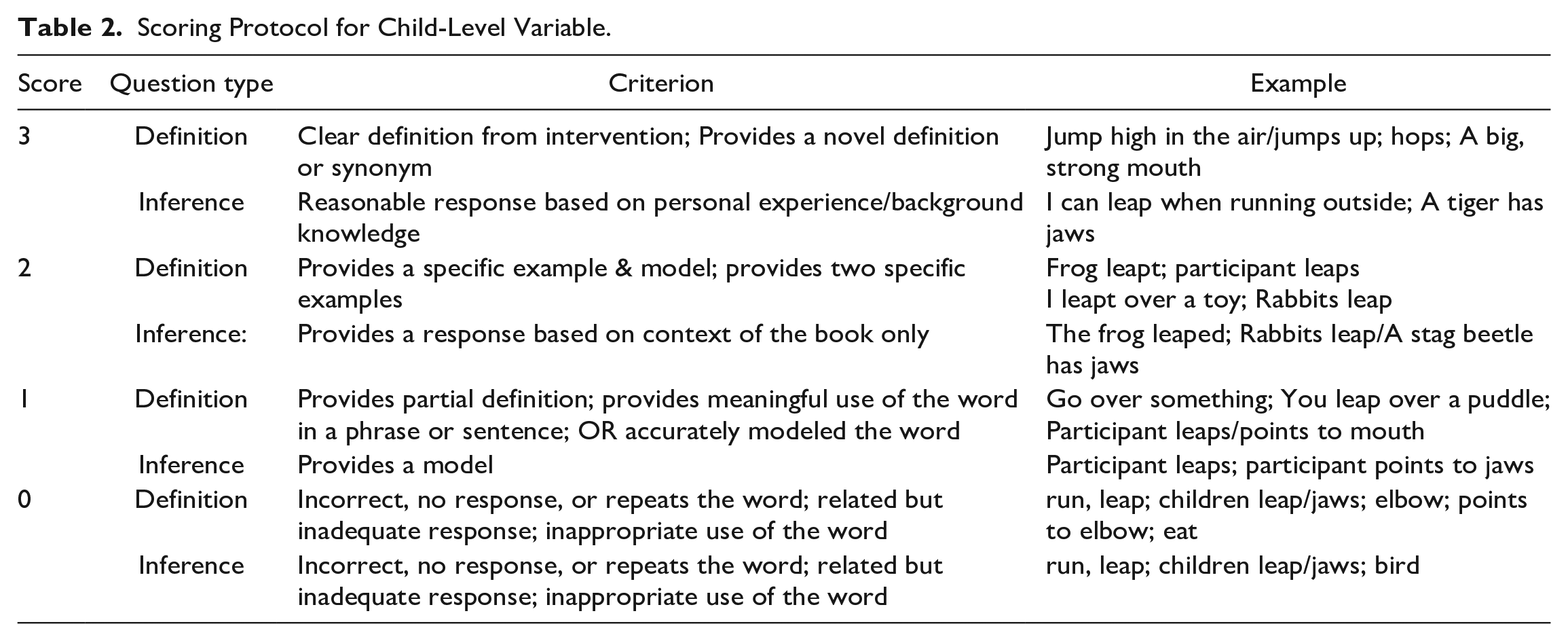

Children were eligible for participation in this study only if they had an IEP with at least one goal targeting language. Vocabulary is a common target for young children with language delays (Schmitt et al., 2014), as these children require more than twice the repetitions and exposure to learn new words compared with typically developing children (Gray, 2004). From each book, our research team chose six vocabulary words to target. Before each book was introduced, each child was screened on their definitional knowledge of those six words. Only words for which the child could not provide a definition or multiple examples were considered for inclusion in the intervention. For each child, four words per book (e.g., dance, creep, giraffe, and jungle) were selected for the intervention condition; the specific words varied by child, based on their performance on the pretest screener for that book. Researcher-developed receptive and expressive vocabulary probes were administered weekly by the educator and were specific to the study-selected book for that week. The interval between pretest and posttest was 3 to 4 days. The expressive vocabulary probe had two parts, providing a definition and answering inferential questions, which were scored separately for each word and each child. The scoring procedure for the expressive probe was adapted from Dennis et al. (2016) and used a 0–3 knowledge scale for both the definition and the inferential questions (see Table 2 for details and examples). Thus, a child could earn up to 6 points for any one word. In summary, for each book we pretested the six researcher-selected vocabulary words, provided intervention on four of the words the child did not know, and posttested only those four words.
The receptive probe was presented on a table as a set of four picture choices (i.e., Google images), and the child was required to point to the correct image following the prompt, “Show me . . . [target vocabulary word].” Responses were scored in a binary fashion (1 = correct, 0 = incorrect). No feedback was provided, but the educator could praise the child for working and listening.
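The scoring arithmetic for one book can be sketched in Python as follows. This is a hypothetical illustration: the class and function names are ours, the scores are invented for the example words listed above, and the gain is computed simply as posttest total minus pretest total over the four posttested words, which is one plausible reading of the pre-post change scores described here rather than the authors' exact procedure.

```python
from dataclasses import dataclass

# Hypothetical sketch of expressive and receptive probe scoring for one book.

@dataclass(frozen=True)
class ExpressiveScore:
    definition: int  # 0-3 on the knowledge scale
    inference: int   # 0-3 on the knowledge scale

    def __post_init__(self) -> None:
        for part in (self.definition, self.inference):
            if not 0 <= part <= 3:
                raise ValueError("each part of the expressive probe is scored 0-3")

    @property
    def total(self) -> int:
        """Combined score for one word (maximum 6)."""
        return self.definition + self.inference


def expressive_gain(pre: dict[str, ExpressiveScore], post: dict[str, ExpressiveScore]) -> int:
    """Posttest minus pretest totals, summed over the posttested (intervention) words."""
    return sum(post[word].total - pre[word].total for word in post)


def receptive_gain(pre: dict[str, int], post: dict[str, int]) -> int:
    """Same idea for the binary receptive probe (1 = correct, 0 = incorrect)."""
    return sum(post[word] - pre[word] for word in post)


words = ["dance", "creep", "giraffe", "jungle"]
pre_expr = {w: ExpressiveScore(0, 0) for w in words}
post_expr = {
    "dance": ExpressiveScore(3, 2),
    "creep": ExpressiveScore(2, 1),
    "giraffe": ExpressiveScore(3, 3),
    "jungle": ExpressiveScore(1, 0),
}
pre_rec = {w: 0 for w in words}
post_rec = {"dance": 1, "creep": 1, "giraffe": 1, "jungle": 0}
print(expressive_gain(pre_expr, post_expr))  # 15 of a possible 24
print(receptive_gain(pre_rec, post_rec))     # 3 of a possible 4
```

Validating the 0–3 range in `__post_init__` keeps a miscoded probe from silently inflating a word's total beyond the 6-point maximum.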

Table 2. Scoring Protocol for Child-Level Variable.

Experimental Design

A single-case multiple-baseline-across-behaviors design was used to examine the effects of PBC with scripted supports on educators’ implementation of SBR strategies. Multiple-baseline designs are used to assess treatments designed to improve desirable behaviors (Ledford & Gast, 2018) and are well suited to academic or nonreversible, trial-based behaviors. Educators completed book readings three times per week across conditions (i.e., baseline, intervention, and maintenance). The educator behaviors were functionally independent; that is, introducing the independent variable for one behavior would not bring about change in the other, untreated behaviors.

Procedures

Following the consent process, the baseline condition commenced. When visual analysis indicated a low or decelerating trend, the educator was coached on the question/evaluate strategy and immediately moved into intervention. When visual analysis indicated high and consistent implementation of behavior one, the participant was trained on expansions. The same procedures were followed for the repeat strategy. Social validity information was collected from the educators following the study.

Baseline

Educators began the baseline condition by reading a self-selected book to the child participant, using their business-as-usual approach to reading. Because of time constraints, four baseline data points were collected, each representing the percentage of fidelity for the three strategies.

Intervention

The independent variable was PBC with scripted supports for the three SBR strategies for each book; it consisted of a training session on each strategy and weekly coaching sessions. Before each SBR strategy was implemented, the coach conducted a 15-minute training session using a PowerPoint presentation and scripted procedures in which the strategy was identified and described and examples and opportunities to practice were provided. After the training session for the first behavior, the educator read the next book from a randomized list created by the research team. The same book was read three times over the course of a week using the taught strategy, and the educator was also provided with the teacher worksheet (i.e., scripted supports). Behaviors two and three followed the same procedures. Educators were instructed to implement scripted prompts (i.e., 4 question/evaluate, 4 expand, and 4 repeat = 12 total) within each storybook. Educators participated in weekly coaching sessions throughout the intervention, and the length of the intervention varied with each participant’s implementation of the targeted behaviors. Coaching sessions were scheduled after the educator completed three readings of the same book, lasted approximately 45 to 60 minutes, and followed the PBC framework (P. A. Snyder et al., 2015). Before each coaching session, the coach reviewed the educator’s book-reading sessions for that week (typically three) and completed a performance feedback sheet. The sheet included the educator’s goal for the week (the first goal was developed after the first week of intervention), graphed data representing progress toward the weekly goal, a written summary of the data, and video clips showing strategy use as well as missed opportunities. Together, the educator and coach reflected on and identified successes, areas for improvement, and whether the weekly goal had been met.
Next, the coach provided guided practice and modeling of strategy use with the teacher worksheet. Last, the educator identified the specific supports needed to meet the goal, and these supports, along with a timeline for meeting the goal, were recorded on the action plan form.

Maintenance

The maintenance condition was similar to baseline but used materials from the intervention condition. Coaches no longer worked with the educator to develop scripted supports for the books, and formal coaching sessions were not held. The educators had access to the teacher worksheet, and all participants scripted their own books. Maintenance data were collected 1 and 2 weeks after the conclusion of intervention for each participant.

Coaching Fidelity

Two full-time doctoral students served as coaches. Both had prior experience coaching educators and working with preschoolers. The first author provided training on the specific behaviors and described the steps for implementation (e.g., read the page, ask the scripted question, provide 3–5 seconds of wait time, and evaluate the child’s response). Next, the coaches and first author reviewed and practiced all items on an implementation fidelity checklist until a 100% criterion was reached (i.e., after two sessions). The first author analyzed all training sessions to determine adherence to the scripted procedures described above; 100% fidelity was met. The first author also randomly selected 20% of videos from each coach and independently coded them. Fidelity was calculated by dividing the number of procedural elements correctly implemented by the total number of procedural elements and multiplying by 100. Mean fidelity was 95%.

Inter-Observer Reliability

Four coders were trained by the first author on all target strategies. Coders simultaneously viewed and independently coded the strategies on a researcher-created coding form embedded within the Vosaic platform for 100% of videos across the three conditions. Two coders were assigned to Alan, Miranda, and Susan, and two other coders were assigned to Leena and Amelia for the duration of the study. The first author conducted training in two phases. During Phase 1, the coders received instructions detailing procedures for using the coding form, as well as operational definitions of all codes. Phase 2 consisted of watching training videos in which all coders simultaneously and independently documented the presence or absence of each behavior. Immediately after each coder completed scoring, results were compared and discussed, and disagreements were resolved by referring to the operational definitions. This process was repeated with two training videos until coders demonstrated at least 80% fidelity on the coding form. Total reliability was calculated by dividing the number of indicators on which the coders agreed by the total number of indicators that were simultaneously coded and multiplying the quotient by 100. Inter-observer reliability for each educator was as follows: Alan, 93% (range = 80%–100%); Amelia, 98% (range = 92%–100%); Leena, 95% (range = 77%–100%); Miranda, 92% (range = 77%–100%); and Susan, 100%.
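The point-by-point agreement calculation described above can be sketched in Python. The function names and example codes are hypothetical; the sketch assumes each coder's record is a list of binary presence/absence codes, one per indicator, in the same order. The per-educator summary here simply reports the mean and range of session-level agreement, whereas an overall figure could instead pool agreements and indicators across sessions.

```python
from statistics import mean

# Hypothetical sketch of point-by-point inter-observer agreement.

def percent_agreement(coder_a: list[int], coder_b: list[int]) -> float:
    """Agreements divided by indicators coded by both coders, times 100."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must score the same, non-empty set of indicators")
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * agreements / len(coder_a)


def summarize(session_agreements: list[float]) -> tuple[float, float, float]:
    """Mean, minimum, and maximum agreement across sessions."""
    return mean(session_agreements), min(session_agreements), max(session_agreements)


a = [1, 1, 0, 1, 0, 1, 1, 1, 0, 1, 1, 1]
b = [1, 1, 0, 1, 1, 1, 1, 1, 0, 1, 1, 0]
session = percent_agreement(a, b)  # 10 of 12 indicators agree
print(round(session, 1))           # 83.3
print(summarize([session, 100.0, 91.7]))
```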

Social Validity

Educators’ perceptions of the intervention were assessed through a survey targeting the acceptability, appropriateness, and effectiveness of the intervention. The survey included 24 questions: select-all-that-apply/ranking (n = 2), yes/no (n = 4), open-ended response (n = 11), and 5-point Likert-type scale (n = 7) items. The Likert-type items ranged from strongly agree to strongly disagree, with neither agree nor disagree as the neutral response.

Data Analysis Procedures

Visual analysis was used to determine the impact of the coaching intervention on educators’ fidelity of strategy use across all conditions. Within-condition visual analysis included evaluating the level, trend, and variability of data. Between-condition visual analysis examined the immediacy of effect, the overlap of data in adjacent conditions, and the consistency of data patterns across similar conditions (Ledford & Gast, 2018). Visual analysis is the most frequently used method in single-case research for evaluating the presence of a functional relation. Tau-U estimates were also calculated to quantify the amount of overlap across conditions. Tau-U is used in single-case research because it incorporates trend within the intervention condition when measuring nonoverlapping data (Lee & Cherney, 2018).
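For readers unfamiliar with the nonoverlap component of Tau-U, the Python sketch below computes the basic baseline-versus-intervention Tau: every baseline point is paired with every intervention point, improving pairs count +1, deteriorating pairs -1, and ties 0, and the sum is divided by the number of pairs. This is a deliberately simplified sketch, not the exact computation used in the study: full Tau-U variants also add intervention-phase trend or subtract baseline trend, and the reported p values would require a variance estimate that is not shown. The function name and data are hypothetical.

```python
from itertools import product

# Hypothetical sketch: the A-versus-B nonoverlap component of Tau-U,
# assuming higher values indicate improvement.

def tau_a_vs_b(baseline: list[float], intervention: list[float]) -> float:
    """(improving pairs - deteriorating pairs) / (n_baseline * n_intervention)."""
    if not baseline or not intervention:
        raise ValueError("both conditions need at least one data point")
    s = sum((b > a) - (b < a) for a, b in product(baseline, intervention))
    return s / (len(baseline) * len(intervention))


# Complete nonoverlap (hypothetical fidelity percentages): every pair improves.
print(tau_a_vs_b([0, 10, 20, 0], [75, 100, 100, 92]))  # 1.0
# Partial overlap: 6 improving pairs, 1 deteriorating, 2 ties -> 5/9.
print(round(tau_a_vs_b([0, 25, 37.5], [37.5, 25, 50]), 3))  # 0.556
```

In this simple form Tau ranges from -1 to 1, and a value of 1 corresponds to complete nonoverlap between conditions.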

Results

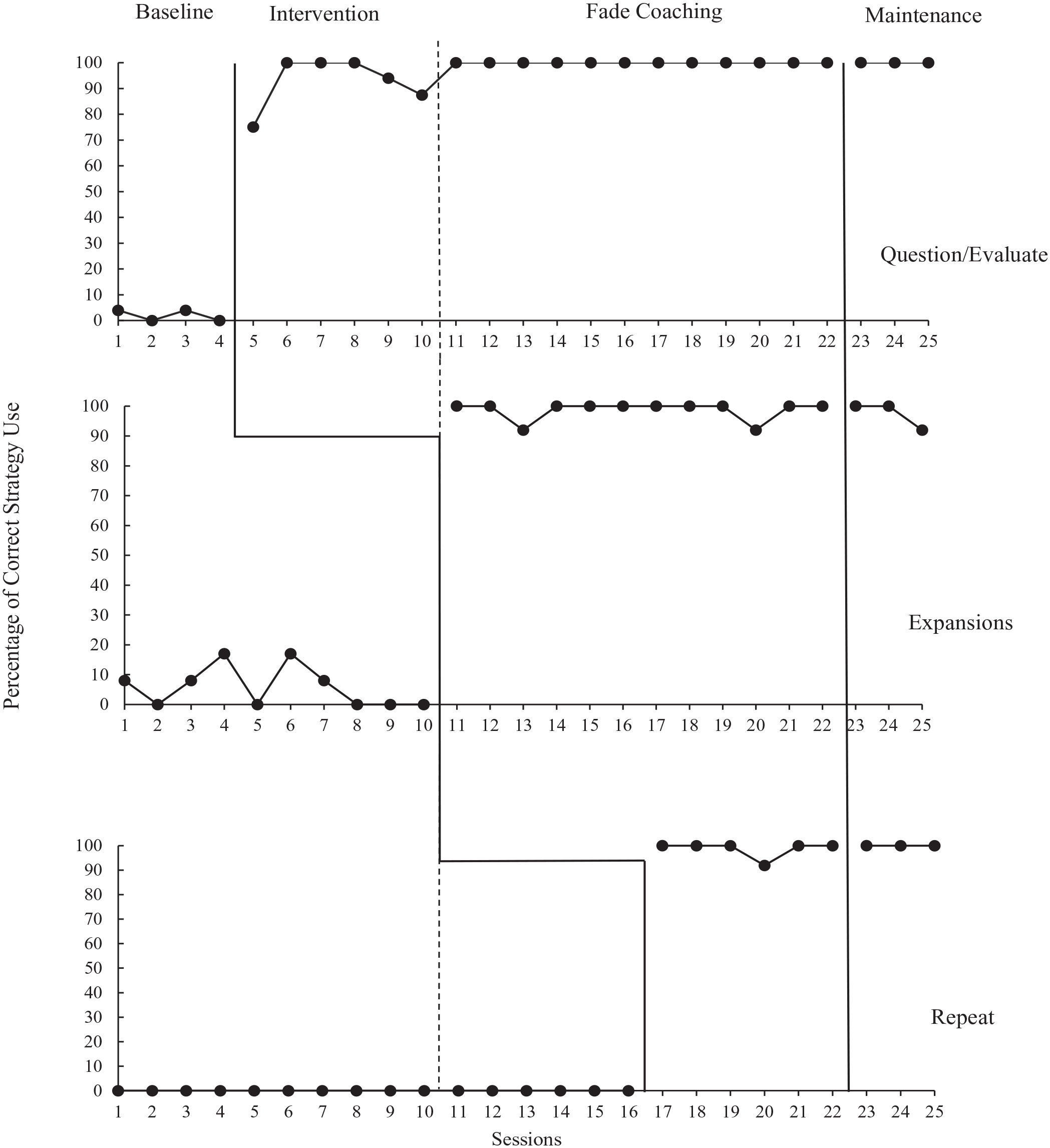

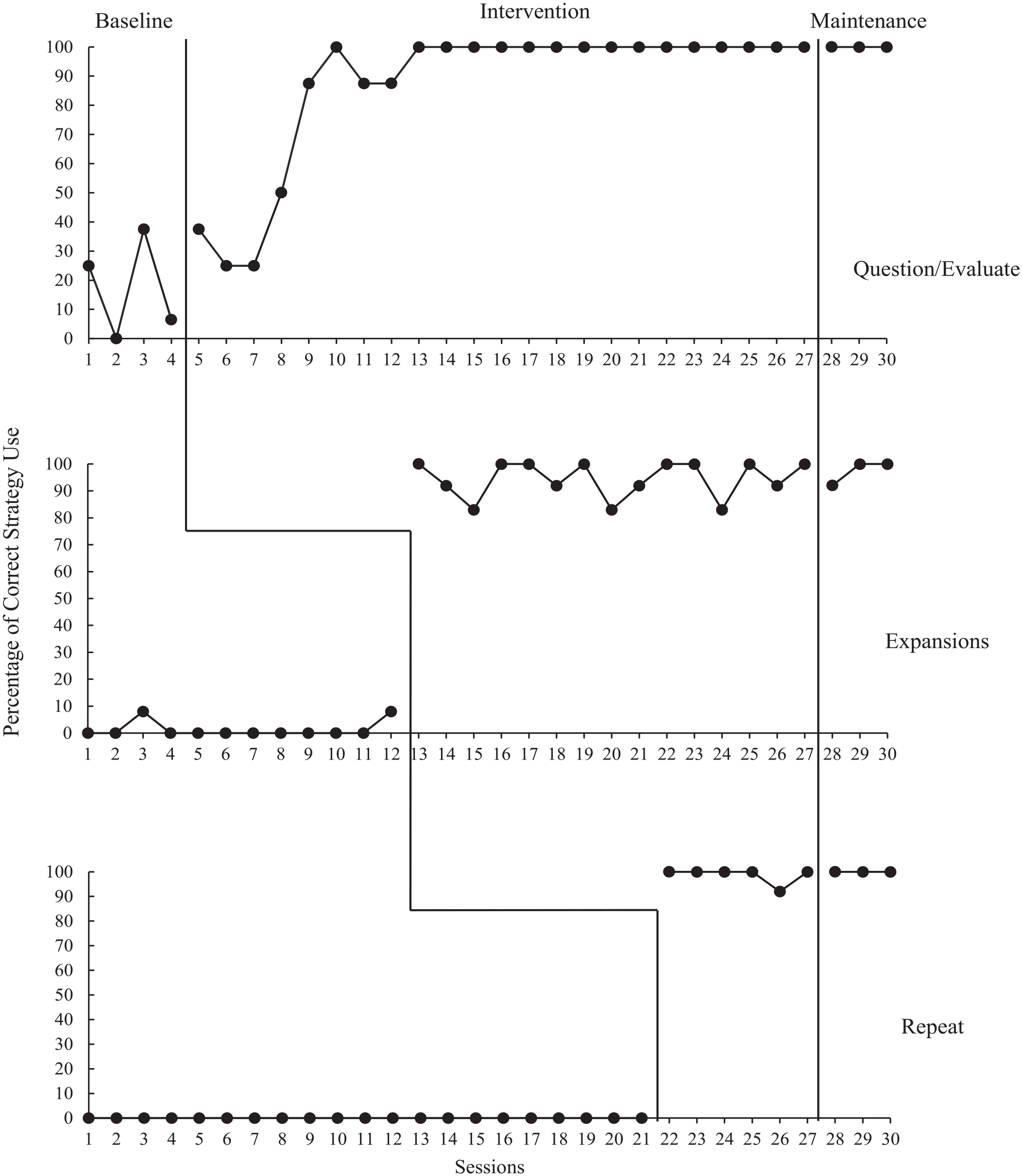

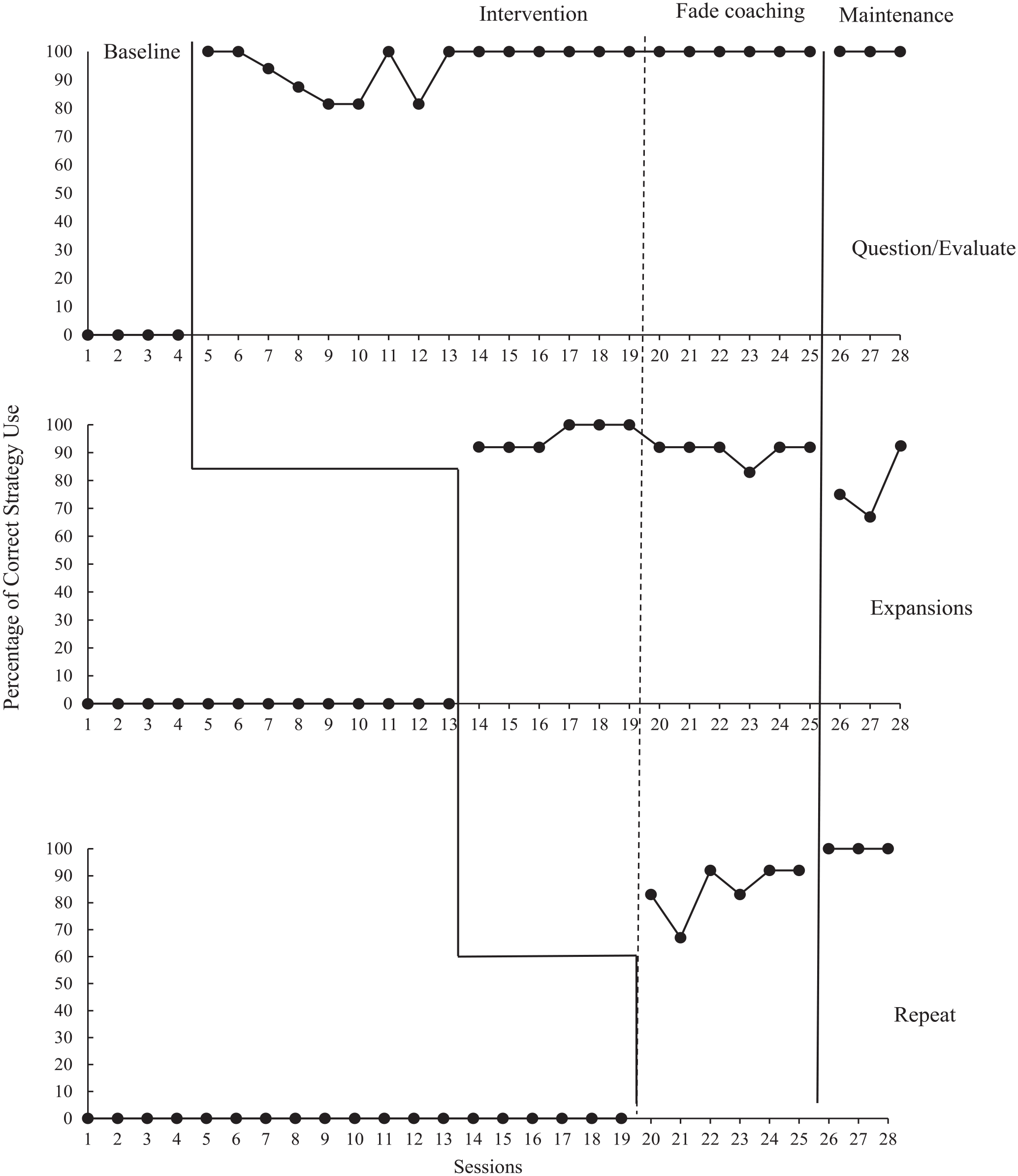

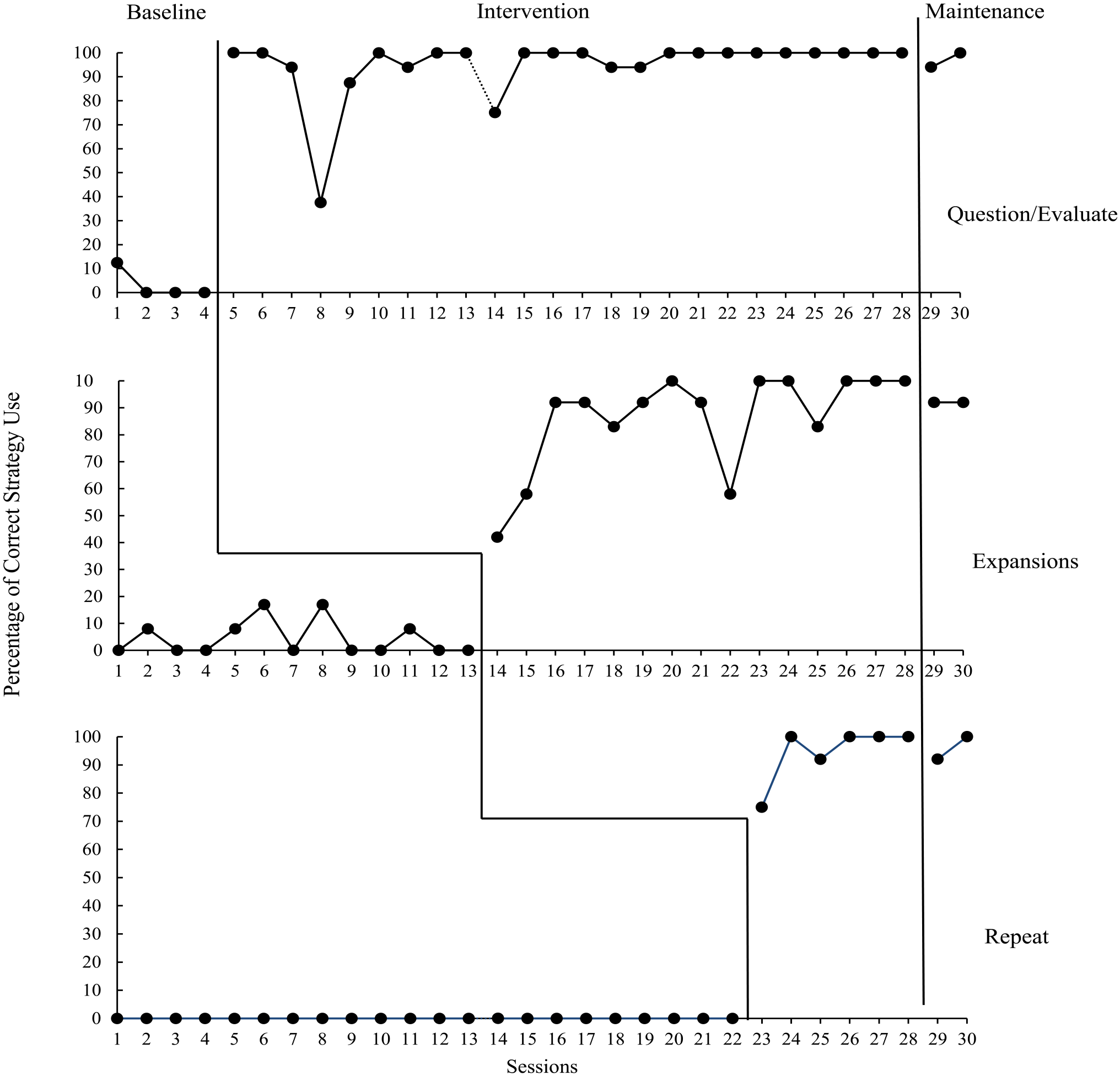

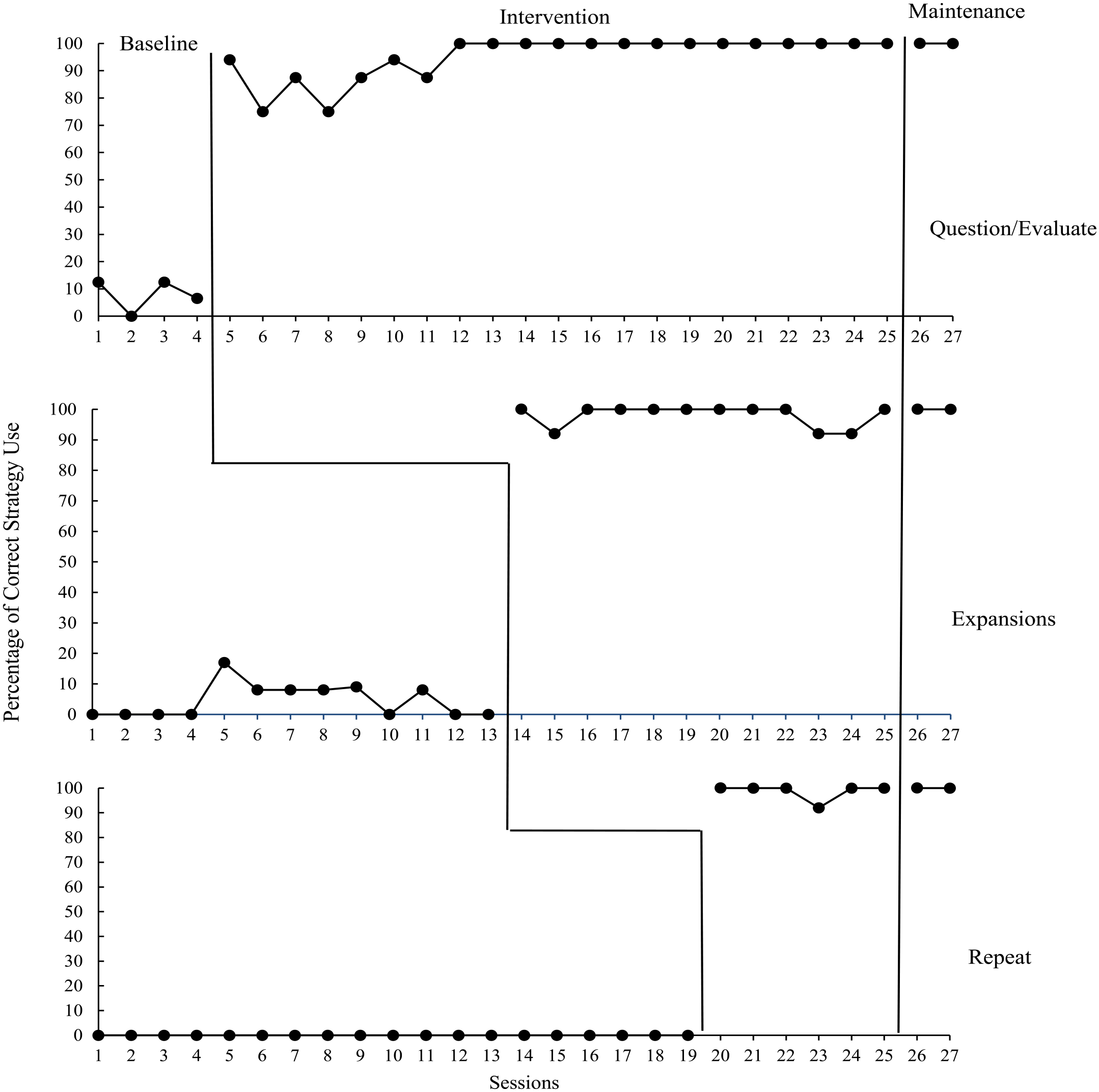

To answer the first research question, data for the total number of strategies used by condition were analyzed visually and calculated as a fidelity percentage (see Figures 1–5). To answer the second research question, gain scores from the expressive and receptive vocabulary knowledge pre- and post-tests were computed. To address the third research question, responses to the social validity survey were analyzed for all five participants.

Figure 1. Alan’s percentage of strategy use.

Figure 2. Amelia’s percentage of strategy use.

Figure 3. Leena’s percentage of strategy use.

Figure 4. Miranda’s percentage of strategy use.

Figure 5. Susan’s percentage of strategy use.

Research Question 1: Educators’ Fidelity to Instruction and Strategies

Alan

Alan read a total of six books over the course of the intervention condition. During baseline, Alan implemented the question/evaluate and expand strategies with 20% fidelity or less, and use of the repeat strategy remained at zero. When question/evaluate was introduced, there was an immediate increase in level and a positive trend: Alan implemented question/evaluate with 75% fidelity in the first intervention session and with 100% fidelity in the second. Immediate changes in level and positive trends were also observed for behaviors two and three, which increased to 100% in the first intervention session and remained high and stable (range = 92%–100% fidelity). For all behaviors, fidelity remained above 80%, and there were no overlapping data between baseline and intervention for any behavior. During coaching sessions, Alan completed the teacher worksheet with little assistance from the coach; therefore, supports were faded at coaching Session 3 (prior to reading Session 11). With fading of coaching support, time was used to (1) review previous data, (2) provide feedback on independent work, and (3) introduce the third strategy (coaching session prior to reading Session 17). Maintenance data indicated that Alan consistently used the strategies at a high and stable level. Tau-U was equal to 1 for question/evaluate (p < .001), expansions (p < .001), and repetitions (p < .001), indicating very little overlapping data.

Amelia

Amelia read a total of eight books over the course of the intervention condition. During baseline, visual analysis indicated variability for the question/evaluate strategy (range = 0%–37.5%), low levels of implementation (less than 10%) for expansions, and no implementation of the repeat strategy. Upon introduction of behavior one, Amelia’s use increased to 37.5%. Data for Sessions 6 and 7 decreased to 25% but showed an increasing trend to 50% by Session 8. By Session 9, data showed a marked increase to 87.5% and remained high and stable for the remaining sessions. An immediacy of effect was demonstrated for behaviors two and three, and data remained stable for all remaining sessions, ranging from 83% to 100% fidelity for expansions and from 92% to 100% for repeat. There were overlapping data between baseline and intervention for the question/evaluate strategy; no overlap was noted for the expand or repeat strategies. By the sixth coaching session (prior to reading Session 20), Amelia was able to complete the teacher worksheet for the question/evaluate strategy with minimal assistance from the coach; therefore, supports were faded. With fading of coaching support, time was used to (1) review previous data, (2) provide feedback on independent work, and (3) introduce the final strategy (prior to reading Session 22). Tau-U was equal to 1 for question/evaluate (p = .0017), expansions (p < .001), and repetitions (p < .001), indicating very little overlapping data. Maintenance data remained high and stable for Amelia.

Leena

Leena read a total of seven books over the course of the intervention condition. During baseline, visual analysis revealed no strategy use for any behavior. Following introduction of behavior one, Leena’s use immediately increased to 100% fidelity, and data for behavior one remained stable (range = 83%–100% fidelity). An immediacy of effect with an upward trend was also seen for behaviors two and three. Behavior two remained stable (range = 83%–100% fidelity), and there were no overlapping data between baseline and intervention. In the two sessions immediately after behavior three was introduced, Leena demonstrated lower use of the repeat strategy (83% and 67%, respectively). However, after a coaching session, her use increased to 92%, averaging 85% through the end of the intervention condition. By the sixth coaching session (prior to reading Session 20), Leena was writing question/evaluate prompts in the teacher worksheet independently before coaching, and coaching support was faded. With fading of coaching support, time was used to (1) review previous data and (2) provide feedback on independent work. Tau-U was equal to 1 for question/evaluate (p = .0019), expansions (p < .001), and repetitions (p < .001), indicating very little overlapping data. Maintenance data showed that Leena consistently used the question/evaluate and repeat strategies, whereas data for expansions were low and variable.

Miranda

Miranda read a total of eight books over the course of the intervention condition. During baseline, Miranda's use of all three behaviors was low and stable. Some variability was observed for expansions, but use remained below 20%. Upon introduction of the intervention, an immediate increase in level and a positive upward trend were observed. Behavior 1 was variable through Session 19, averaging 92% (range = 38%−100%), and then remained at 100% for the remainder of the intervention. After an initial immediacy of effect for behavior 2, Miranda's data were variable, averaging 86% (range = 42%−100%). An immediacy of effect was observed for behavior 3, with data remaining high and stable (range = 75%−100%). Because Miranda had to quarantine during the study, intervention delivery was inconsistent, resulting in gaps in data collection (approximately 2 weeks between Sessions 13 and 14). Supports were faded beginning at coaching Session 6. Miranda maintained all three behaviors at 92% or above. Tau-U was equal to 1 for question/evaluate (p = .0017), expansions (p < .001), and repetitions (p < .001), indicating very little overlap between baseline and intervention data.

Susan

Susan read a total of seven books over the course of the intervention condition. Susan demonstrated low levels of all target behaviors during baseline, ranging from 0% to 17%. Upon introduction of behavior 1, an immediacy of effect was observed: Susan's implementation increased to 94%. The next six sessions were variable, averaging 84% (range = 75%−94%). At Session 12, Susan reached 100% and remained there. An immediacy of effect was also visible upon introduction of expansions and repeat, and both remained high and stable, averaging 94% and 98%, respectively. Susan received coaching for the duration of the intervention, but coaching focused primarily on developing strategies to support the child. Susan maintained use of all behaviors with no supports provided. Tau-U was equal to 1 for question/evaluate (p < .001), expansions (p < .001), and repetitions (p < .001), indicating very little overlap between baseline and intervention data.

Research Question 2: Children’s Vocabulary Knowledge

All participating children made gains on the expressive vocabulary knowledge measure (data are available as online Supplemental Material). Note that these gains reflect knowledge acquired over the course of 1 week, during three shared book reading sessions. A maximum of 24 points was possible for each book (4 words × 3 points per question × 2 question types, i.e., 12 points for literal [definition] questions and 12 points for inference questions).

Alan read six books to his child participant. At pretest, the child's average score was .16 words for definition questions and .29 words for inference questions. At posttest, average scores were .3 words and .91 words, respectively. Amelia read eight books to her child participant. Average pretest scores for definition and inference were .19 words and .65 words, respectively, and average posttest scores were .96 words and .65 words. Leena read seven books to her child participant. Average pretest scores were .1 words for definition and .08 words for inference. At posttest, average scores were .5 words for both definition and inference. Miranda read eight books to her child participant. Average pretest scores were 1.6 words for definition and 1.2 words for inference. At posttest, average scores were 1.59 and 1.88 words, respectively. Finally, Susan read seven books to her child participant. Average pretest scores were .1 words for definition and .2 words for inference. At posttest, the average was .5 words for both definition and inference.

On the receptive vocabulary measure, all but one child made gains. A maximum of 32 points was possible. Pretest scores ranged from 15 to 20, and posttest scores ranged from 19 to 29. The average gain score was 6 (range = 0−11).

To examine maintenance of targeted vocabulary words, 10 words were chosen for each child participant. These were five words on which the child made a gain from pretest to posttest and five words with no gain. For the expressive vocabulary knowledge portion, a maximum score of 60 points was possible (6 points per word for 10 words). The group average was 22.4 (range = 13−30). For the receptive vocabulary knowledge portion, a maximum score of 10 points was possible, and the group average was 9.6 (range = 8−10).

Research Question 3: Effectiveness of PBC Program

Social Validity

Overall, participants responded positively to each section of the social validity questionnaire (average rating = 4.5 out of 5). Participants identified four coaching components as most helpful:

- working together with the coach to create examples for strategy attempts
- teaching and reviewing strategy specifics
- watching video clips with examples of successful and unsuccessful strategy attempts
- working together with the coach to troubleshoot individual child needs

Participants did not suggest adding any components. Feedback related to the coaching sessions was positive. One participant said, “The coaching sessions were a great way to teach the target, definition, inference vocabulary learning process. Being able to watch examples, watch yourself, and come up with specific strategies tailored towards you and your student helped a ton.” All participants strongly agreed that they would recommend participation in a coaching intervention to their peers, and all agreed that the coaching process was acceptable. One participant explained, “The components were explained fully and easy to understand. The process was organized and easy to follow.” All participants rated the feasibility of the coaching intervention as “strongly agree.” As one participant explained, “The understanding and flexibility of my coach as well as being able to use zoom and do the coaching sessions online made it feasible in a year with staffing shortages and huge caseload numbers.”

Participants shared which strategies they found easiest and most difficult to learn. Two participants indicated that Expand was the most difficult strategy to learn. All participants strongly agreed that the book reading strategies are helpful in supporting children’s language development. One participant stated, “The child started to vocalize things more and gain more vocabulary. That one-on-one time alone was awesome for her!” Four of five participants strongly agreed that the book reading strategies are appropriate for a variety of preschool-age children and for the child participant in the current study. One participant somewhat agreed.

General feedback on the study included suggestions regarding book selection, target word selection, and the definitions used. Two participants commented on the challenge of creating child-friendly definitions: “It is difficult to shorten definitions in a way that can be easily understood and spoken by our little ones. That was the most challenging aspect for me.” All participants strongly agreed that they were comfortable with the book reading strategies and would use them in the future. Several participants also indicated they would use the SBR strategies in small-group settings.

Discussion

The current study used a single-case, multiple baseline across behaviors design to examine the effects of PBC on educators’ fidelity of implementation of SBR strategies. This PD intervention may be used to enhance the quality of vocabulary instruction provided by educators working with preschool children with language delays. Below, we discuss two primary findings. First, educators were able to implement SBR strategies with fidelity and at consistently high rates. Second, all child participants showed increases in both their expressive and receptive vocabulary knowledge of targeted words.

Fixsen et al. (2010) identified implementation drivers as critical to improving practitioners’ use of EB practices. Sexton and Rush (2021) noted one specific type of implementation driver: implementation guides that “provide practitioners with the supports and structure needed to ensure they are equipped with concrete ideas about how to immediately operationalize the practices in the field” (p. 16). This study included scripted supports, a variation of an implementation guide. Along with coaching, these supports helped the paras and SLP-As use the SBR strategies with fidelity and maintain a high level of fidelity once coaching supports were removed. Our results align with those of other coaching-based studies showing that job-embedded practice, reflection, coaching, and follow-up support are effective in changing how educators implement EB practices (Romano & Woods, 2018; P. Snyder et al., 2012). Watching one’s own practice, reflecting, and receiving feedback from a coach support learning (Romano & Schnurr, 2022). Reflective feedback was included to support educators in reaching fidelity. This is consistent with research suggesting that on-the-job PD with hands-on practice is more effective than didactic PD (Joyce & Showers, 2002). Opportunities for practice with coaching are critical for paras and SLP-As, who are often tasked with delivering specific interventions without appropriate training.

Our second main finding was that the child participants exhibited gains in expressive and receptive vocabulary knowledge. Vocabulary learning appeared to occur after educators directly taught the child the definition of each word. Educators then connected the word to the child's background knowledge through specific question prompts with evaluation, expansions, and opportunities to repeat. All children in this study had language delays and were receiving special education and/or related services. As such, the present study adds to the body of literature showing that children with language delays can learn new words in the context of storybooks (Storkel et al., 2017).

Overall, participants reviewed the PD program positively, supporting its utility, feasibility, and acceptability. The five educators stated that they enjoyed the PD program and would continue using the strategies in the future. The virtual coaching format allowed scheduling flexibility for both coaches and educators. It also lends itself to future research incorporating individualized coaching sessions with a trusted coach or colleague (e.g., ECSE teachers and/or SLPs).

Implications for Research and Practice

Several implications for practice can be noted. SBR is a common routine in preschool settings; therefore, incorporating vocabulary-rich books would be feasible. This study used popular, widely available storybooks. These storybooks are affordable, and most schools are likely to already have them in their libraries. Additionally, this study incorporated several EB practices shown to improve language development in preschool-age children (e.g., providing rich linguistic input through modeling and expanding). All of these practices can be integrated into early educators’ practice with relative ease. This study focused on shared book reading as the primary tool for delivering vocabulary instruction. Future research could examine extending this instruction to other teachable moments throughout the preschool day.

It is reasonable to expect that educators may require varying levels of support depending on individual differences in background, training, and experience. Ideally, professional development is offered at the lowest dose and frequency required to effect behavior change (e.g., Olswang & Prelock, 2015). Future research could explore the use of adaptive interventions (AI). An AI consists of a prespecified, replicable sequence of decision rules that specify whether, how, and when to adjust an intervention and which measures to use to inform those decisions (Nahum-Shani & Almirall, 2019). AI are particularly meaningful for heterogeneous populations, such as school professionals, because they allow trainers to capitalize on differences in learners’ responses to training procedures. In this way, AI can better meet a range of instructional needs.
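The Python sketch below illustrates what a prespecified decision rule might look like by encoding one hypothetical tailoring rule for coaching support. Several elements are illustrative assumptions and are not drawn from this study or from Nahum-Shani and Almirall (2019):

- the three support levels
- the 90% and 70% fidelity thresholds
- the three-session window
- the `CoachingDecisionRule` name

The rule steps coaching down when every recent session meets the upper threshold and steps it up when average recent fidelity falls below the lower threshold. Otherwise, it leaves coaching unchanged.

```python
from dataclasses import dataclass
from typing import Sequence

# Hypothetical ordered support levels, from least to most intensive.
LEVELS = ("faded", "standard", "intensified")


@dataclass
class CoachingDecisionRule:
    """Hypothetical tailoring rule; all thresholds are illustrative assumptions."""
    fade_threshold: float = 0.90       # every recent session at/above this -> step down
    intensify_threshold: float = 0.70  # mean of recent sessions below this -> step up
    window: int = 3                    # number of most recent sessions considered

    def next_level(self, fidelity_history: Sequence[float], current: str) -> str:
        i = LEVELS.index(current)
        recent = fidelity_history[-self.window:]
        if len(recent) < self.window:
            # Too few observations to decide: keep the current level.
            return current
        if all(f >= self.fade_threshold for f in recent):
            # Consistently high fidelity: fade support by one level (not below "faded").
            return LEVELS[max(i - 1, 0)]
        if sum(recent) / len(recent) < self.intensify_threshold:
            # Low average fidelity: intensify support by one level (not above "intensified").
            return LEVELS[min(i + 1, len(LEVELS) - 1)]
        return current


if __name__ == "__main__":
    rule = CoachingDecisionRule()
    print(rule.next_level([0.95, 0.92, 1.0], "standard"))   # faded
    print(rule.next_level([0.50, 0.60, 0.70], "standard"))  # intensified
```

Because the rule is written down before training begins, different trainers applying it to the same fidelity data would reach the same decision. That replicability is central to adaptive interventions.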

Limitations

Target words selected for this study were not controlled for phonological complexity, frequency, or other lexical characteristics that contribute to word learning (e.g., Farquharson et al., 2014). Future work may consider manipulating, or controlling for, these factors. Measuring vocabulary gains in preschool-age children, particularly those with disabilities, remains a challenge. A related limitation is the scoring rubric used to measure depth of vocabulary knowledge. The rubric was adapted from a previous study (Dennis & Whalon, 2021). The scale was not interval, and its distinct scoring criteria may not accurately represent children’s depth of knowledge. For example, a child who provided two examples and a clear definition of a word would receive the same score as a child who provided only a clear definition. Future work could consider an approach that measures both vocabulary and comprehension of inferences (Dicataldo et al., 2022), which may better capture preschoolers’ knowledge. We also acknowledge that child gains cannot be attributed entirely to this intervention. Additionally, this study did not examine the differential effects of the scripting versus the coaching, and future research could explore these differences. Finally, although we worked closely with the participants, we did not directly ask them to discuss the details of their work environments.

Summary and Conclusions

This early efficacy study was designed to develop a sustainable and feasible PD program that could be delivered to a specific population of EC educators in a variety of settings. Paras and SLP-As spend a substantial amount of time with children who have language delays. Yet they often receive less training or are not prioritized for PD (L. Dennis et al., 2023). Our results suggest that a structured PBC approach is effective at increasing educators’ use of explicit vocabulary instruction during shared book reading. This increase appeared to have downstream effects on children’s vocabulary knowledge. Finally, educators’ responses to the social validity survey indicated that the program was appropriate and feasible.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was funded by a multidisciplinary grant from FSU’s Council for Research and Creativity (PI: Dennis, Co-I: Farquharson). Reed and Summy are supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305B200020 to the Florida Center for Reading Research at Florida State University.

Supplemental Material

Supplemental material for this article is available on the Topics in Early Childhood Special Education website with the online version of this article.