Abstract

In their pursuit of public health goals, policymakers increasingly turn to innovative apps to complement existing health measures. However, findings from previous research in non-IT health contexts indicate that individuals may compensate for new interventions (e.g., start exercising) by reducing existing preventive health behaviors (e.g., eating fewer healthy foods). These findings are inconclusive, though, and it is unknown when people tend to engage in behavioral compensation. Building on this observation, we draw on rational choice theory to substantiate the subjective rationality of compensation behavior and develop a utility maximization model that suggests circumstances under which adoption of technological innovation may lead to users reducing existing preventive health behaviors. This research provides evidence from a multi-wave study on COVID-19 contact-tracing apps that confirms the existence of what we term the technology-behavioral compensation effect: Individuals who perceive the app to be highly useful or actively use it reduce other preventive health behaviors (e.g., social distancing) after app adoption. Ironically, this technology-behavioral compensation effect indicates a hitherto-overlooked tension between two established IS design goals (i.e., perceived usefulness and active use) and the successful exploitation of technology to support users’ health. We expand research on dark side effects of IS use by revealing a previously neglected type of unintended consequence and elaborate on its implications for research well beyond the health context. Our findings also will help policymakers make decisions on the design of societal technologies.

Keywords

Introduction

Public health goals, such as reducing obesity, accidental deaths, or infections, are top concerns for policymakers and support organizations. More recently, user-centric health apps have served as innovative, technology-driven health interventions in pursuit of these goals, complementing well-known non-technological health measures, such as nutrition labels, mandatory seat belt use, and social distancing. Indeed, extant research provides evidence that health app use is related to immediate positive health outcomes. For example, fitness app use is associated with increased physical activity (Wolf et al., 2021), safe-driving apps can alert inattentive drivers (Bergasa et al., 2014), and contact-tracing apps, if a large share of the population adopts them, can slow the spread of viruses significantly (Ferretti et al., 2020). As a result of these promises, the global market for health apps grew to more than $80 billion in 2022 (Swain and Kharad, 2022).

However, multiple unintended consequences potentially can arise with the introduction and use of new technologies. For example, using IT can affect users’ health adversely through technostress, addiction, or compulsive use (e.g., Ayyagari, Grover, and Purvis, 2011; Kwon et al., 2016; Wang and Lee, 2020; Nastjuk et al., 2023). Unintended outcomes from technology use in the health context may not be limited to such negative psychological and behavioral outcomes. Furthermore, the health context is characterized by an interplay between multiple measures and behaviors that are all intended to contribute to an overarching health goal (e.g., reducing meal sizes and increasing participation in sports simultaneously to lose weight). As people adapt their actions to situational changes (Hedlund, 2000; Peltzman, 1975), an intervention, such as adding a health app to an existing system of preventive health measures, might disrupt individual behavioral patterns by replacing existing preventive health behaviors with new technologically mediated ones. From a policymaker or support organization perspective, the introduction of a new health app could be considerably less effective or even backfire if people begin to compensate for the adoption of a health app by reducing their engagement in existing health behaviors that contribute to the same health goals.

Previous literature on non-IT interventions has uncovered effects indicating such compensation behavior in which, for example, the introduction of seat belts is associated with riskier driving behaviors (Streff and Geller, 1988), and the use of sunscreen is related to increased sun exposure (Autier et al., 1998). However, although behavioral compensation has been found in many contexts (e.g., injury prevention, vaccination, and healthy eating), several studies have failed to verify this effect in general (e.g., Lardelli-Claret et al., 2003; Schleinitz, Petzoldt, and Gehlert, 2018). These inconclusive findings call for a theoretical approach that can explain when individuals indulge in compensation behavior.

Against this background, this paper aims to understand when newly introduced health technologies can undermine targeted health goals by disengaging users from existing preventive health behaviors. To pursue this goal, we develop a utility maximization model (UMM) drawing upon rational choice theory (RCT) that can explain compensation behavior based on cost-benefit considerations. We use this model to derive a technology-behavioral compensation framework that links IS design factors that drive benefit perceptions (i.e., high perceived usefulness and active use; Seddon, 1997; DeLone and McLean, 2003) with neglect of existing preventive health behaviors. More specifically, the framework postulates that people who adopt a new health app subsequently will reduce other (still necessary) preventive health behaviors (1) if they perceive that the app is useful, or (2) if they actively use the app—an effect that we term the technology-behavioral compensation effect (i.e., reduction in existing positive non-technological behaviors induced by adoption of technology).

To test our hypotheses, we collected longitudinal data on actual COVID-19 contact-tracing app adoption and other corresponding preventive health behaviors in Germany. We then analyzed our multi-wave survey data using a difference-in-differences (DID) modeling approach. Our results provide evidence of the existence of a technology-behavioral compensation effect: Individuals who installed the app tended to cut back on other preventive health behaviors (e.g., avoiding social contact with several people from different households at once, not visiting individuals from high-risk groups, and avoiding physical contact with others) if they perceived that the app was effective in terms of being useful for health prevention (i.e., app efficacy trap) or if active use reminded them that they already were engaging in health prevention (i.e., app engagement trap).
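To make the logic of a difference-in-differences comparison concrete, the following minimal sketch computes a basic two-group, two-period DID estimate. It is an illustration only: the records are synthetic, the field names (`treated`, `post`, `y`) and the function `did_estimate` are hypothetical, and the sketch omits the moderators and controls that a full model specification would include.

```python
from statistics import mean


def did_estimate(rows):
    """Basic 2x2 difference-in-differences on records with keys
    'treated' (1 = app adopter), 'post' (1 = after adoption), and 'y' (outcome)."""

    def cell_mean(treated, post):
        # Average outcome within one group-period cell.
        return mean(r["y"] for r in rows if r["treated"] == treated and r["post"] == post)

    change_treated = cell_mean(1, 1) - cell_mean(1, 0)
    change_control = cell_mean(0, 1) - cell_mean(0, 0)
    # The DID estimate is the adopters' change net of the non-adopters' change.
    return change_treated - change_control


# Synthetic example: 'y' stands for a preventive-behavior score (made-up values).
rows = [
    {"treated": 0, "post": 0, "y": 4.0}, {"treated": 0, "post": 0, "y": 4.2},
    {"treated": 0, "post": 1, "y": 3.9}, {"treated": 0, "post": 1, "y": 4.1},
    {"treated": 1, "post": 0, "y": 4.1}, {"treated": 1, "post": 0, "y": 4.3},
    {"treated": 1, "post": 1, "y": 3.5}, {"treated": 1, "post": 1, "y": 3.7},
]
estimate = did_estimate(rows)
```

A negative estimate in such a comparison would indicate that adopters reduced the behavior more than non-adopters did over the same period; a moderation analysis would compare this quantity across subgroups (e.g., high versus low perceived usefulness).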

Our findings make three major contributions to IS research. First, we contribute to the literature on the dark side of IS use by spotlighting a so-far-underexplored dark side effect that harms IT interventions’ efficacy and, therefore, their users indirectly by triggering reductions in other non-technological goal-supporting behaviors. The technology-behavioral compensation effect complements investigations of negative psychological and behavioral outcomes from IS use, such as technostress or addictive use (e.g., Ayyagari, Grover, and Purvis, 2011; Kwon et al., 2016), but differs in the sense that achievement of the technology’s overarching goal (e.g., public health) may be jeopardized through unexpected behavioral changes (namely, reductions in other goal-supporting behaviors) that counteract the technology’s primary purpose.

Second, we contribute to the literature on effective IS design. An emphasis on common user-centered design goals of effective IS, such as perceived usefulness and active use (Davis, 1989; DeLone and McLean, 2003), may be misleading if, after adopting the technology, people refrain from existing behaviors (e.g., avoiding social contact during an outbreak) necessary to achieve their overarching goals (e.g., preventing viral infection). Ironically, we find that this technology-behavioral compensation effect is fostered by factors that entail strong perceptions of technological benefits, such as perceived usefulness or active use. Therefore, our findings call for a more holistic perspective on IS design that does not examine its efficacy in isolation, but rather extends beyond the technology itself and accounts for spillover effects into other behaviors that may contribute to (or diminish) the stakeholder’s goal achievement.

Third, our theoretical lens employing RCT and the proposed UMM is not bound to our empirical setting and suggests the potential occurrence of compensation behaviors in other contexts in which technological innovations are one building block in a system of multiple measures toward an overall goal. This actually pertains to many IS research streams, for instance, privacy control, IT security, and decision support systems (e.g., Hoehle et al., 2019; Jardine, 2020; Rowe, 2005). Our findings point toward new perspectives from which to study technological innovations’ impact in these areas and may serve as an explanation for unexpected outcomes after the introduction of effective technologies.

The remainder of this paper is structured as follows. We first synthesize previous knowledge on dark side effects of IS use and contrast these existing perspectives against the proposed behavioral compensation effect. We then review theories and empirical findings from health-related studies in various contexts to exemplify the general existence of compensation behavior and highlight the gap in theory and in literature on when it occurs. To address this gap, we draw on RCT to explain the rational cost-benefit considerations behind compensation behavior and develop a UMM that can further explain when compensation behavior occurs after adopting new technology interventions. We then contextualize the general mechanism to the field of IS design and develop hypotheses regarding the impact of perceived usefulness and active use of technology on existing preventive health behaviors. Next, we lay out the research design, analysis, and results from our multi-wave survey to test our hypotheses empirically. Finally, we discuss our findings and reflect on their theoretical and practical implications.

Theoretical foundation

Dark side effects of IS use

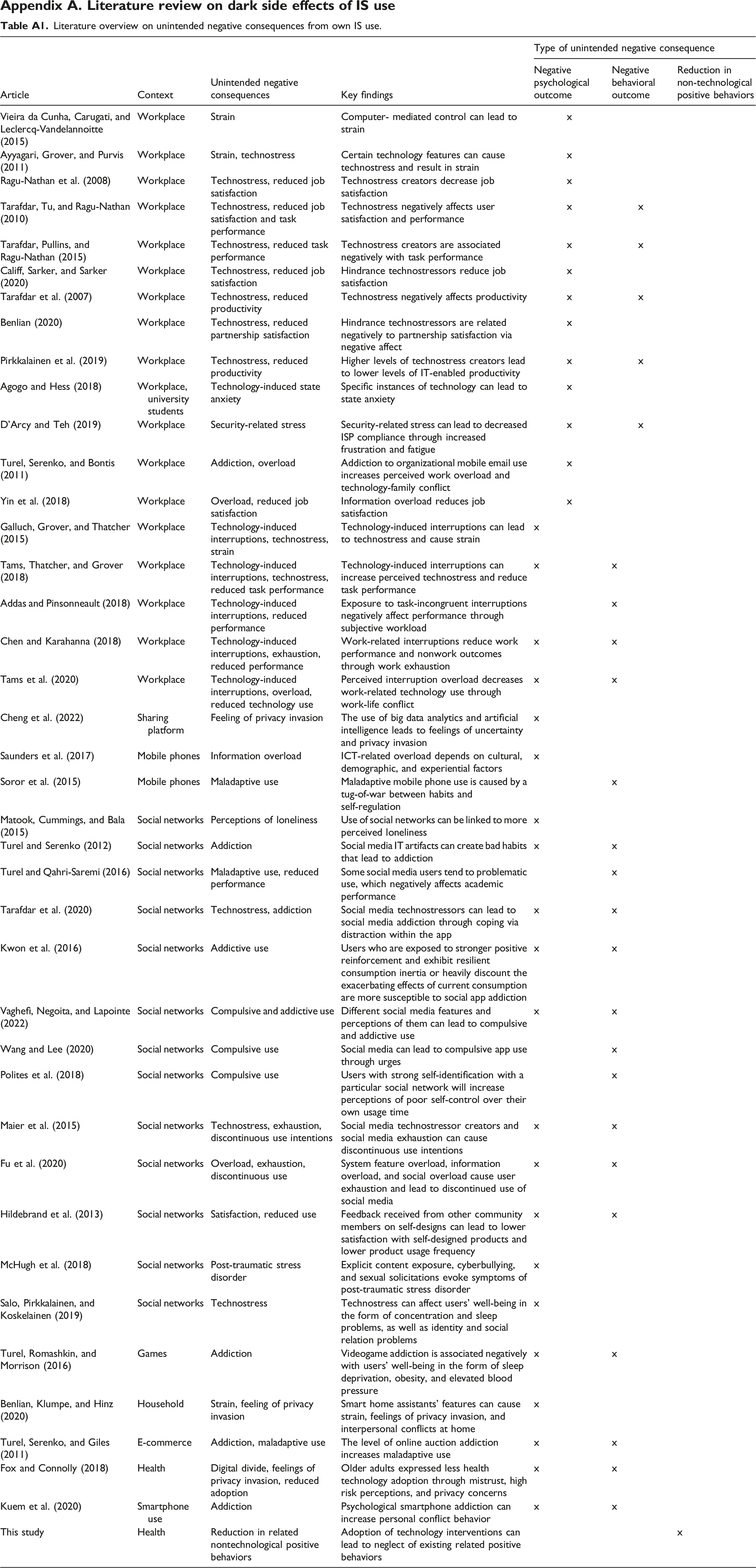

Over the past decade, IS researchers have begun to consider and examine the unintended consequences and dark side effects of IS use more closely (Agogo and Hess, 2018; Tarafdar, Gupta, et al., 2015a). While well-designed technology undoubtedly supports user goal achievement in many ways, its use also can lead to adverse and often unexpected consequences for individuals. Technostress, technology-induced interruptions, information overload, technology addiction, and compulsive use are among the most studied negative consequences of IS use (Nastjuk et al., 2023; Tarafdar, Gupta, et al., 2015b; Wang and Lee, 2020). Appendix A provides an overview of prior studies that empirically examined dark side effects of IS use in which users experienced unintended negative consequences from their own technology use.

Broadly speaking, previous findings on the dark side effects of IS use regarding individuals’ behavior or mental well-being can be categorized into two types of unintended negative consequences that may corrupt the efficacy of the IS. The first is negative psychological effects that arise directly from IS use. This includes technostress, technology-induced interruptions, information overload, strain, or feelings of privacy invasion (Tarafdar, Gupta, et al., 2015a, 2015b), just to name a few. The occurrence of such negative psychological outcomes from IS use has been demonstrated in different contexts, such as the workplace (e.g., Ayyagari et al., 2011; Galluch et al., 2015; Vieira da Cunha et al., 2015), mobile phone use (e.g., Saunders et al., 2017), and social networks (e.g., Fu et al., 2020; Matook et al., 2015). These negative psychological effects often have further downstream consequences, such as reduced job and partnership satisfaction (e.g., Benlian, 2020; Califf et al., 2020; Ragu-Nathan et al., 2008; Tarafdar et al., 2010), weaker task performance (e.g., Tams et al., 2018; Tarafdar et al., 2010), and lower productivity (e.g., Tarafdar et al., 2007). To sum up, these negative psychological effects either have negative consequences for the efficacy of the IS itself (e.g., technology-induced interruptions that reduce productivity) or are secondary side effects that do not affect IS efficacy itself (e.g., privacy violations during or after technology use).

The second type is negative behavioral effects, which can manifest, for instance, as compulsive use, addictive behavior, or discontinued use (Tarafdar, Gupta, et al., 2015a, 2015b). For example, social network sites and their features can create addictive and compulsive use (e.g., Tarafdar et al., 2020; Turel and Serenko, 2012; Wang and Lee, 2020). The behavioral effects described here are characterized by the triggering or reinforcement of a fundamentally negative behavior (e.g., discontinuance intentions or maladaptive usage; Maier et al., 2015; Turel, Serenko and Giles, 2011).

With this research, we characterize a third type of unintended consequence from technology use: reduction in existing positive (non-technological) behaviors. To the best of our knowledge, previous research on the dark side effects of IS use has not yet considered a reduction in existing positive (non-technological) behaviors caused by the introduction of a new IT, which also could harm technological goal achievement indirectly. More precisely, as technology complements and interferes with all parts of our lives more and more (Turel et al., 2020), it is usually only one of many complementary measures to achieve a goal. For example, if a person wants to lose weight, they can exercise more or eat less. If the person does both, they might lose weight faster than changing only one behavior (i.e., the behaviors are complementary). However, if a person believes that they no longer need to be careful with their eating habits because they are exercising more, they indirectly jeopardize the intended goal of weight loss. Transferred to a technological context, this would imply that while IT helps users achieve their goals, it also might affect users’ existing behaviors that are also instrumental to achieving these goals. In the context of health apps, IT might be effective in supporting and protecting users’ health, but its adoption—under certain circumstances—might cannibalize the application of existing preventive health behaviors, even if they are intended to be complementary. While this compensation behavior also can be viewed as a type of negative behavioral effect, it differs from the previously mentioned type of negative behavior in that it reduces an existing positive behavior, rather than creating a negative behavior that emerges during technology use. 
Likewise, the reduction in existing positive behavior differs from potential consequences of negative psychological effects (e.g., reduced productivity) in that it is not directly related to the IT itself, but rather to other positive behaviors that affect the same overarching goal. In the next section, we broaden the scope by synthesizing prior empirical findings on behavioral compensation and their underlying theories in various health contexts outside of IS.

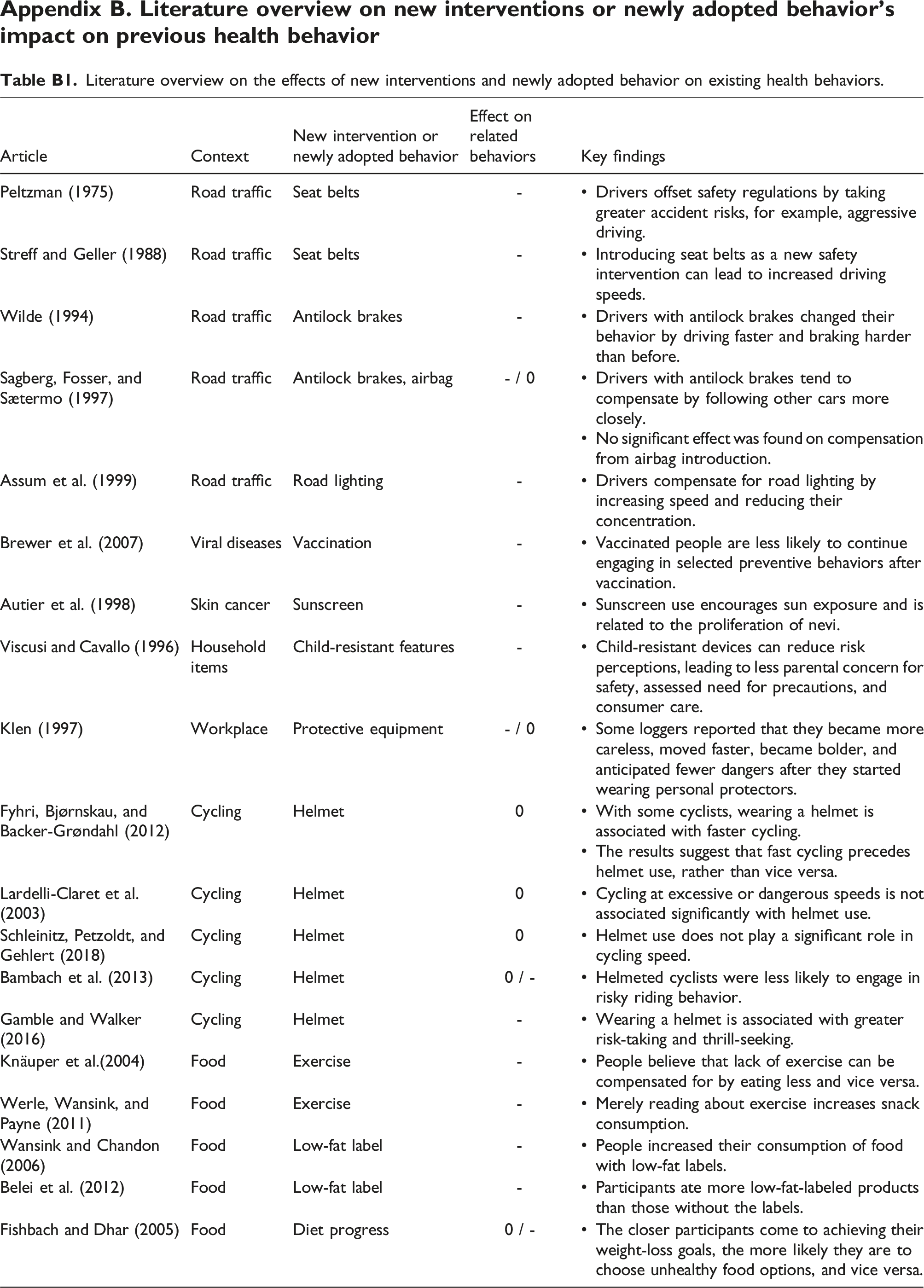

Prior theoretical approaches to explain compensation behavior in the health context

Behavioral compensation in the health context has been studied in various areas, such as safety and food consumption. This ranges from road traffic and disease prevention interventions to exercise measures and food labels. Appendix B provides an overview of studies from different non-IT contexts that assess how new interventions or newly adopted behaviors can affect previous health-related behavior. To sum up, the studies provide strong evidence for the existence of compensation behavior. However, the results are inconclusive in terms of when people compensate and when they do not, as people are not always inclined toward compensation behavior (e.g., Fishbach and Dhar, 2005; Sagberg et al., 1997).

Extant studies on compensation behavior rely on specific psychological mechanisms such as personal target risk level (e.g., Lardelli-Claret et al., 2003; Peltzman, 1975; Sagberg et al., 1997; Schleinitz et al., 2018; Wilde, 1994) or self-licensing (e.g., Belei et al., 2012; Fishbach and Dhar, 2005; Knäuper et al., 2004; Werle et al., 2011). In doing so, they draw on either risk homeostasis theory or the self-licensing effect to explain the compensation behavior; however, these theoretical approaches can inform our study to only a limited extent. First, risk homeostasis theory argues that people compare the amount of risk they perceive in every situation with their target risk level. Behavioral change then results from resolving the differences between perceived and target-level risks (Hedlund, 2000; Wilde, 1988). Thus, people adjust their existing behavior in response to a situational change when, for example, their new risk-reducing intervention influences their perceived risk level. In addition to criticism of risk as the sole explanatory mechanism for compensation behavior (e.g., Haight, 1986), the theory lacks other factors that could explain or determine new interventions’ influence on perceived risk level and, thus, the likelihood of compensation behavior (Hedlund, 2000). Second, the licensing effect occurs when people allow themselves to partake in more self-indulgent behavior after attaining a positive self-concept (Khan and Dhar, 2006). The theory suggests that the previous behavior consistent with the self-concept helps justify the choice of a gratifying hedonic option, instead of behaviors that fit with the long-term goal. Similar to risk homeostasis theory, licensing effect theory and the corresponding literature lack explanatory factors that determine the likelihood of compensation behavior (e.g., Blanken et al., 2015). 
While we acknowledge the value of these theoretical perspectives, they focus on specific psychological biases to explain why compensation behavior occurs and seem less effective in describing when people tend to compensate behaviorally.

Considering that compensation behavior, as a consequence of adopting newly introduced technologies, is new for IS research and can occur in several contexts, we aim to avoid limiting our investigation to pre-specified biases, such as those inherent in the theoretical lenses discussed above. Simultaneously, we require a lens that is instrumental in identifying the mechanism that can lead to compensatory behavior. Thus, we address this phenomenon by drawing on rational choice theory (RCT). We rely on RCT, rather than the theoretical approaches applied in prior compensation behavior research, because it allows us to view behavioral compensation as a rational behavior based on individual cost-benefit considerations. Thus, RCT forgoes a contextual explanation mechanism, which can be very diverse. Therefore, we argue that RCT allows us to (1) explain that compensation behavior results from rational decision-making without relying on a specific psychological bias, and (2) develop an economic model based on its tenets that determines when compensation behavior occurs in the context of newly introduced health preventive technologies. Finally, RCT’s broad scope in terms of its application possibilities provides an established theoretical foundation from which the research stream on compensation behavior can be advanced.

Rational choice theory and compensation behavior in the health context

Rational choice theory (RCT) posits that before engaging in a given behavior, individuals balance its costs and benefits to form decisions that generate the maximum utility for them (Liang et al., 2017; Mellers et al., 1998). RCT already has been employed in IS to explain user behavior in areas such as compliance in organizational settings (e.g., D’Arcy and Lowry, 2019), security behavior (e.g., Bulgurcu et al., 2010), and online health information use (e.g., Liang et al., 2017). According to RCT, individuals evaluate each behavioral alternative’s possible outcomes by relating the benefits’ utility to the costs’ disutility. While the decision process is rational in nature, evaluations of alternatives’ features are subjective; thus, individual perceptions of benefits and costs can differ among people (D’Arcy and Lowry, 2019). By comparing each measure’s overall utility, individuals can decide which behaviors are right for them.

RCT’s core tenets are suitable for explaining compensation behavior in the health context: (1) People are self-interested; (2) people decide rationally; and (3) the decisions are based on cost-benefit considerations (D’Arcy and Lowry, 2019; Jackman, 1993). In the context of health technologies, users want to maximize their personal health utility by considering technological, preventive health interventions and non-technological behaviors based on their benefits and costs. In the presence of diminishing marginal utility (Oliver, 2018) and given an individual’s cost-benefit considerations, the chosen level of engagement in technology-mediated and non-technological preventive health behaviors does not result in a maximization of these behaviors because both behaviors contribute to the same goal, even though each contributes to health protection in its own right. Notably, people make rational decisions based on individual perceptions of costs and benefits, rather than externally calculated costs and benefits (McCarthy, 2002). In terms of health technologies, this means that people's perceptions of the same technology’s efficacy may vary from person to person simply because of the information they have received from their environment.

Rational choice is defined as the process of determining what alternatives are available, then selecting the preferred alternative according to some consistent criterion. This typically is modeled as an optimization problem. To illustrate how individuals optimize their decisions, we next develop a utility maximization model (UMM) that indicates how reducing existing preventive health behaviors in light of a newly introduced preventive health technology intervention can be rational, although both measures individually help achieve an overarching goal. More specifically, the model prescribes that perceived technological benefits drive behavioral compensation in that individuals reduce existing preventive health behaviors more strongly. To illustrate that technology-behavioral compensation can be a rational human choice under certain circumstances, the UMM considers the introduction of a preventive health technology in the presence of other existing preventive health behaviors, contributing to the same overarching goal. More precisely, the model focuses on individuals’ adaptations of existing behaviors after adopting the newly introduced health technology. Thus, the UMM considers that a balance between increasing utility through health benefits and increasing disutility costs determines preventive health behaviors. Individual optimizing behavior leads individuals to engage in more preventive health behaviors until the expected utility through health benefits is lower than the expected disutility through additional costs. In the final step, the UMM considers the effect of the benefit perceptions of the technological intervention to protect the individual from health threats on existing preventive health behaviors after adopting the technology. The complete derivation of the UMM can be found in Appendix C.
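The optimizing logic described above can be illustrated numerically. The following is a minimal sketch under assumed functional forms, not the model derived in Appendix C: a logarithmic (concave) health benefit, a quadratic (convex) effort cost, and a hypothetical parameter `tech_protection` for the perceived protection from the technology. All names and values are illustrative assumptions.

```python
import math


def utility(effort, tech_protection, cost_weight=0.5):
    """Net utility of a given level of existing preventive effort (assumed forms)."""
    # Concave benefit: diminishing marginal utility of total perceived protection.
    benefit = math.log(1.0 + effort + tech_protection)
    # Convex cost: each additional unit of effort is more burdensome.
    cost = cost_weight * effort ** 2 / 2.0
    return benefit - cost


def optimal_effort(tech_protection, cost_weight=0.5, steps=10_000, max_effort=5.0):
    """Grid search for the utility-maximizing effort level in [0, max_effort]."""
    grid = (max_effort * i / steps for i in range(steps + 1))
    return max(grid, key=lambda e: utility(e, tech_protection, cost_weight))


# Optimal existing effort for increasing levels of perceived technological protection.
efforts = [optimal_effort(theta) for theta in (0.0, 0.5, 1.0, 2.0)]
```

Under these assumptions, the optimal level of existing preventive effort falls as perceived technological protection rises, even though both measures contribute to health protection in their own right.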

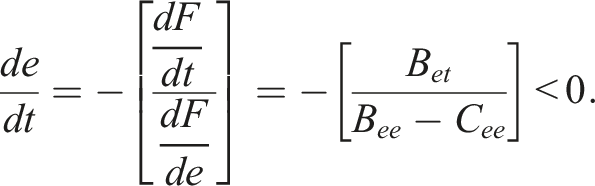

The resulting equation is:

We can see that an increase in perceived protection from health threats through the technology intervention will induce individuals to decrease their existing preventive efforts. More precisely, increasing the perception of benefits from the adopted technological intervention will elicit increased compensation behavior (i.e., reduction of existing non-technological preventive health behaviors). Thus, the UMM specifies the conditions under which behavioral compensation occurs: technology-behavioral compensation is driven by the perceived benefit of the newly adopted intervention or behavior.
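The direction of this effect can be illustrated with a stylized comparative-statics sketch. The notation is an assumption introduced here for illustration and is not taken from Appendix C: $e$ denotes existing preventive effort, $\theta$ the perceived protection provided by the technology, $B$ an increasing and concave health benefit function, and $C$ an increasing and convex cost function. An interior optimum is assumed.

```latex
% Stylized sketch; all symbols and functional assumptions are illustrative.
\begin{align}
  \max_{e \ge 0} \; U(e) &= B(e + \theta) - C(e),
    \qquad B' > 0,\; B'' < 0,\; C' > 0,\; C'' \ge 0, \\
  % First-order condition at an interior optimum e^*:
  B'(e^{*} + \theta) &= C'(e^{*}), \\
  % Implicit differentiation of the first-order condition with respect to \theta:
  \frac{\partial e^{*}}{\partial \theta}
    &= \frac{B''(e^{*} + \theta)}{C''(e^{*}) - B''(e^{*} + \theta)} < 0 .
\end{align}
```

Because the numerator is negative and the denominator is positive, higher perceived technological protection lowers the optimal level of existing preventive effort in this sketch, which mirrors the qualitative claim of the UMM.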

To sum up, the theoretical foundation provided by RCT and the developed UMM supports the notion of an adopted new preventive health technology as the driver of compensation behavior by accounting for cost-benefit considerations and their interactions with multiple health measures. The UMM also indicates when compensation behavior occurs, namely, it appears with increasing perceived benefits of the newly introduced technology. Thus, the RCT-UMM combination suggests perceived benefit as a mechanism for the occurrence of compensation behavior, but it does not specify which explicit factors are responsible for perceptions of technological benefits that fuel the compensation behavior. To identify these, we utilize the RCT and UMM findings and contextualize them with the literature on effective IS design to derive our research framework.

A technology-behavioral compensation framework

Maximizing perceived benefits always has been a critical goal of IS design. A significant literature stream has tackled the question of what determines technology’s benefits (DeLone and McLean, 2003; Petter et al., 2012). Therefore, we draw upon this literature when contextualizing our RCT-based general understanding of behavioral compensation and derive a technology-behavioral compensation framework that suggests two established factors for effective IS design to trigger compensation behavior through their major impact on perceptions of technological benefits.

Considering health apps, the overarching goal is to help users preserve or improve their individual health. Thus, from a user’s perspective, the technology should be as beneficial as possible for their health. This is consistent with the general understanding of successful IS, in which the technology is effective in supporting the stakeholder’s goal (i.e., in the context of personal health apps to provide health benefits for the user; Seddon, 1997; DeLone and McLean, 2003).

The literature on IS success already has examined the indicators for successful IS extensively (e.g., DeLone and McLean, 2003; Seddon, 1997). Broadly speaking, the literature agrees that to make technologies beneficial, IS designers’ goal is to specify and implement IS features that facilitate perceived usefulness and foster the use of technology (Davis and Venkatesh, 2004; DeLone and McLean, 2003). These two factors—perceived usefulness and active use—are identified as key variables in realizing benefits from IS use (DeLone and McLean, 2003; Petter et al., 2008; Seddon, 1997). Both factors are important and independent of each other, as technologies may be perceived as useful, but not actively used due to certain circumstances. Likewise, technologies can be used actively and frequently without necessarily being useful (e.g., based on habits or introjected regulation; DeLone and McLean, 2003; Seddon, 1997). Therefore, both factors are of particular interest to researchers and designers, and can be viewed as key objectives of IS design.

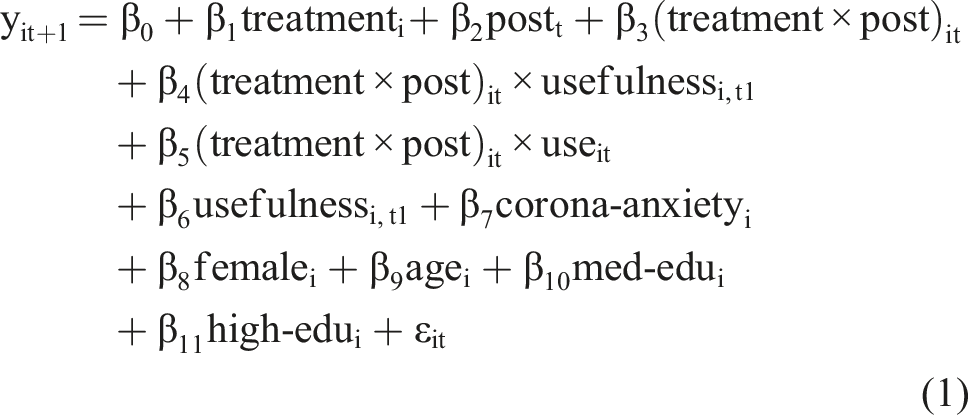

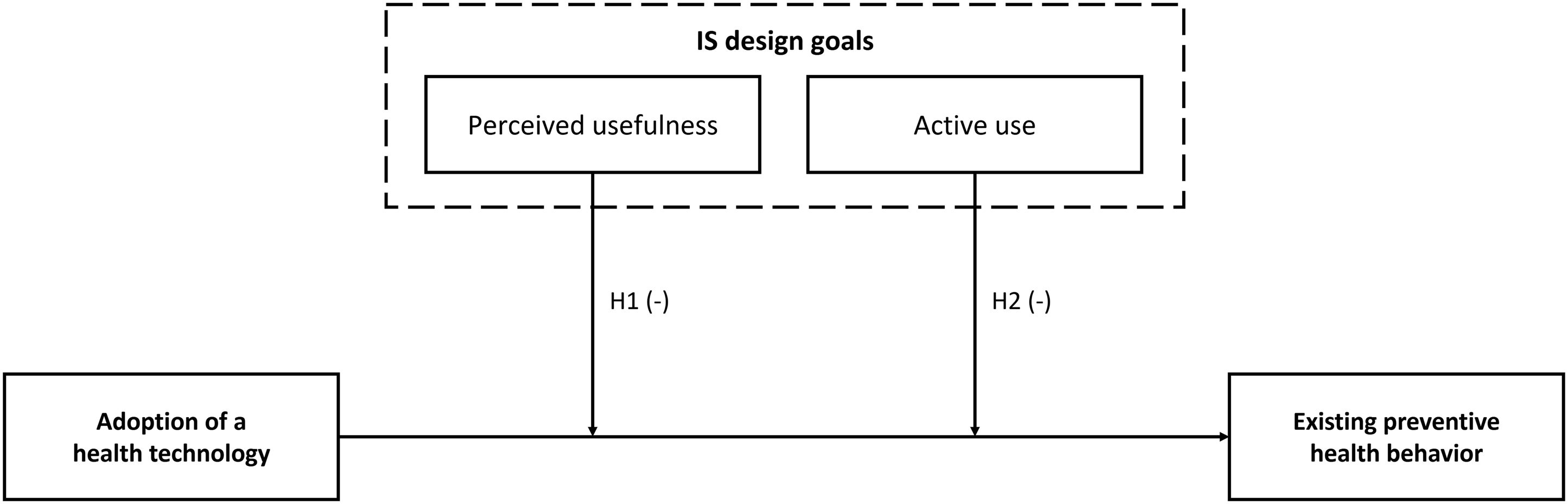

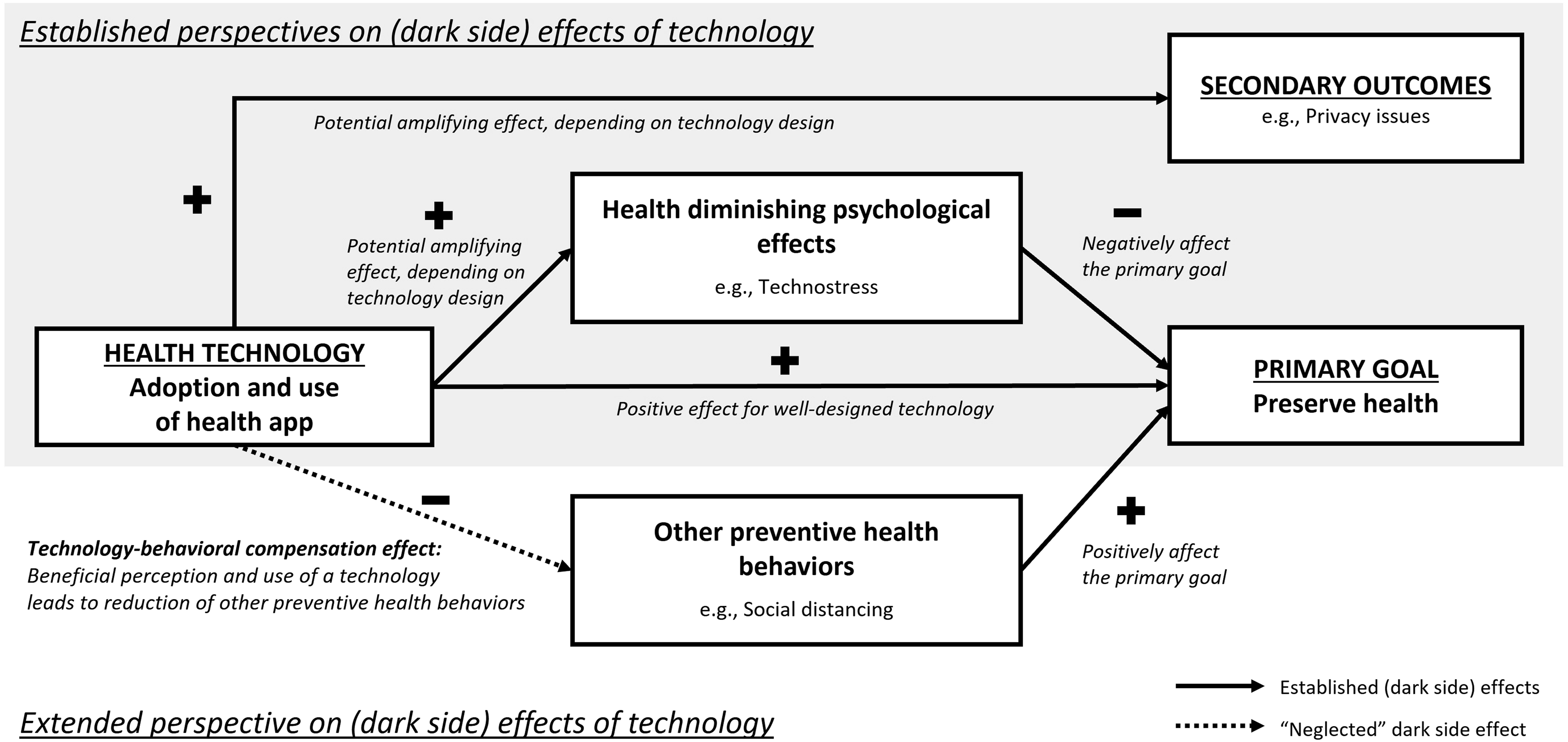

When theorizing on behavioral compensation concerning health apps, we combine this literature on effective IS design with theoretical considerations drawn from RCT. Our synthesis of the compensation behavior literature in the health context suggests that the mere adoption of new interventions does not necessarily induce neglect of existing preventive behaviors. The UMM developed above supports these findings and suggests further that when the perception of benefits from a preventive health technology intervention increases, users are more likely to reduce existing health behaviors. However, this insight points to potential tension between what is desirable for effective IS design (namely, perceived usefulness and active use) and what drives compensation behavior (that is, high perceived benefit). More precisely, when designing for usefulness, the health app is perceived as more effective in terms of its health benefits, which can trigger reductions in existing preventive health behaviors (the app efficacy trap). Similarly, active app use reminds people continuously of their preventive behavior with the health app, which can lead to neglect of other preventive health behaviors (the app engagement trap). Figure 1 illustrates the moderating effects of perceived usefulness and active use of health apps on other preventive health behaviors. In the following section, we discuss the two postulated relationships of our technology-behavioral compensation framework in detail.

Figure 1. A framework for technology-behavioral compensation in the health context.

Perceived usefulness: the app efficacy trap

IS design’s general goal is to provide the most beneficial technologies to support stakeholder goals (DeLone and McLean, 2003; Seddon, 1997). Thus, IS is designed to be useful in fulfilling its users’ goals. Concerning health apps, perceived usefulness stems, among other factors, from a user’s evaluation of the technology’s capabilities to support the goal of health protection or preservation. For example, can a fitness app provide suitable workouts to ensure that all muscle groups are trained sufficiently? However, if a technology is perceived as more useful and, therefore, more beneficial in fulfilling the user’s goal, it favors compensation behavior: Our RCT-based UMM suggests that increased perceived benefits from a newly adopted technology would enhance the likelihood that other preventive health behaviors would be neglected. For example, if the fitness app can provide a comprehensive muscle workout, the user might no longer feel the need to keep up their regular everyday movement to remain healthy. Hence, the more useful a health app is perceived to be, the more likely it is that adoption of the technology will be compensated for by a reduction in other preventive health behaviors. Thus, we propose:

The adoption of health apps leads to a reduction in other preventive health behaviors if users perceive that the app is highly useful.

Active use: the app engagement trap

The second factor in the IS design literature, viewed as one of the most important goals for beneficial IS, is the active use of a technology (DeLone and McLean, 2003), which reflects behavioral engagement, that is, the time and effort that the user invests in a technology (Flaherty et al., 2019). We suggest that active app use reminds users of their app adoption as an investment in their health prevention and, therefore, promotes users’ impression that they constantly are doing something beneficial for their health. Consequently, based on the UMM developed above, users are more likely to neglect other preventive health behaviors because they perceive increased health benefits from actively using the app. For example, users of a pedometer app who regularly engage with the app are constantly aware of doing something for their physical health and, therefore, might underestimate the additional utility that healthy eating provides, resulting in behavioral compensation. In turn, inactive users simply might not be aware that they already are doing something for their physical health and have no reason to seek behavioral compensation. In short, the more actively one uses a health app after adoption, the more salient the goal-promoting behavior becomes, and the more likely users are to engage in compensation behavior. Thus, we propose:

The adoption of health apps leads to a reduction in other preventive health behaviors if users actively use the app.

Research design

Study setting and data collection

To test our hypotheses and seek evidence of the technology-behavioral compensation effect, we collected multi-wave data between May 2020 and October 2020 on the perception and use of a German COVID-19 tracing app (“Corona-Warn-App”), as well as on other preventive health behaviors. 3 The context is particularly suitable because the government enacted other measures to protect against infection—for example, quarantines, social distancing, and stay-at-home interventions—which together helped maintain public health goals. This system of non-technological measures then was enhanced with a contact-tracing app to help reduce infection risks further by providing the technological means to supply citizens with information on their health status (i.e., by indicating risky exposures to infected individuals) based on tracing their contacts. More precisely, the app registers locally encrypted encounters with other app users via Bluetooth. Data storage is decentralized, and the user has full control of the data sharing.

At the time of data collection, the app’s function was limited to informing users about individual risk exposures. Accordingly, users received only retrospective information about whether they had encountered a COVID-19-infected person, and only if that person had registered their infection in the app. The app did not have push notifications, so users had to open the app manually to check whether they had a reported risk encounter. The app indicated in green when one had a low risk of infection because of no contact with a reported infected person, and in red when one had increased risk due to contact with an infected person. Therefore, the app had a purely informational function that did not provide direct protection against a COVID-19 infection. Consequently, this context allowed us to determine whether the introduction of a health app affected other (still necessary) preventive health behaviors.

We used a major Western European crowdsourcing platform (i.e., Clickworker) to recruit study participants for a multi-wave survey, and we followed recommended approaches to avoid potential biases and overcome limitations associated with recruiting professional survey-takers via online data panels (Jia et al., 2017; Lowry et al., 2016). As a priori and procedural remedies, we implemented attention and comprehension checks, offered moderate monetary compensation, warned participants that lack of attention would lead to ineligible participation, thoroughly explained the importance of the research, and chose neutral wording.

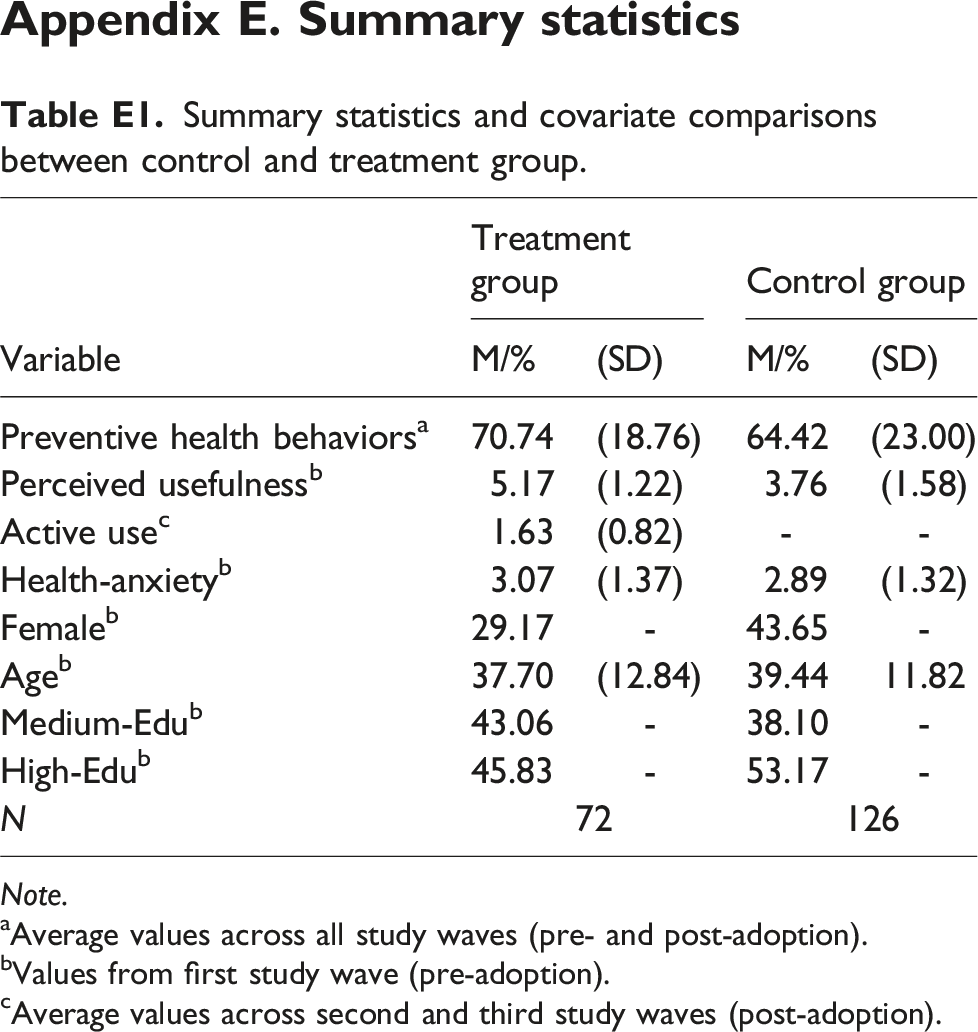

We relied on four survey waves conducted in 2020, with one before (end of May 2020; t1) and three after the introduction of the COVID-19 contact tracing app (end of June, August, and October, respectively; t2, t3, and t4). All participants were screened against exclusion criteria, such as failed attention checks, click-through patterns, overly short response times, and inconsistent response behavior on a repeated question in the survey. The final sample comprised 198 participants (38% female, mean age = 38.81) who completed the surveys in all waves and who either had or had not adopted the app consistently throughout the post-introduction periods. For our later analysis, we adopted established difference-in-differences (DID) terminology (e.g., Goh et al., 2013; Haferkorn, 2017; Qiu and Kumar, 2017; Rishika et al., 2013) and refer to individuals in the sample who decided to adopt the Corona-Warn-App after the German government introduced it as the treatment group and those who did not adopt the app as the control group. We temporally separated measurement of the dependent and independent variables to mitigate common method bias (Hulland et al., 2018) and measured preventive health behavior retrospectively (i.e., at t2, we captured behavior between t1 and t2, etc.). The four waves therefore yield three behavioral periods per participant, so our final data set forms a 198 × 3 data matrix comprising 594 participant-period observations.

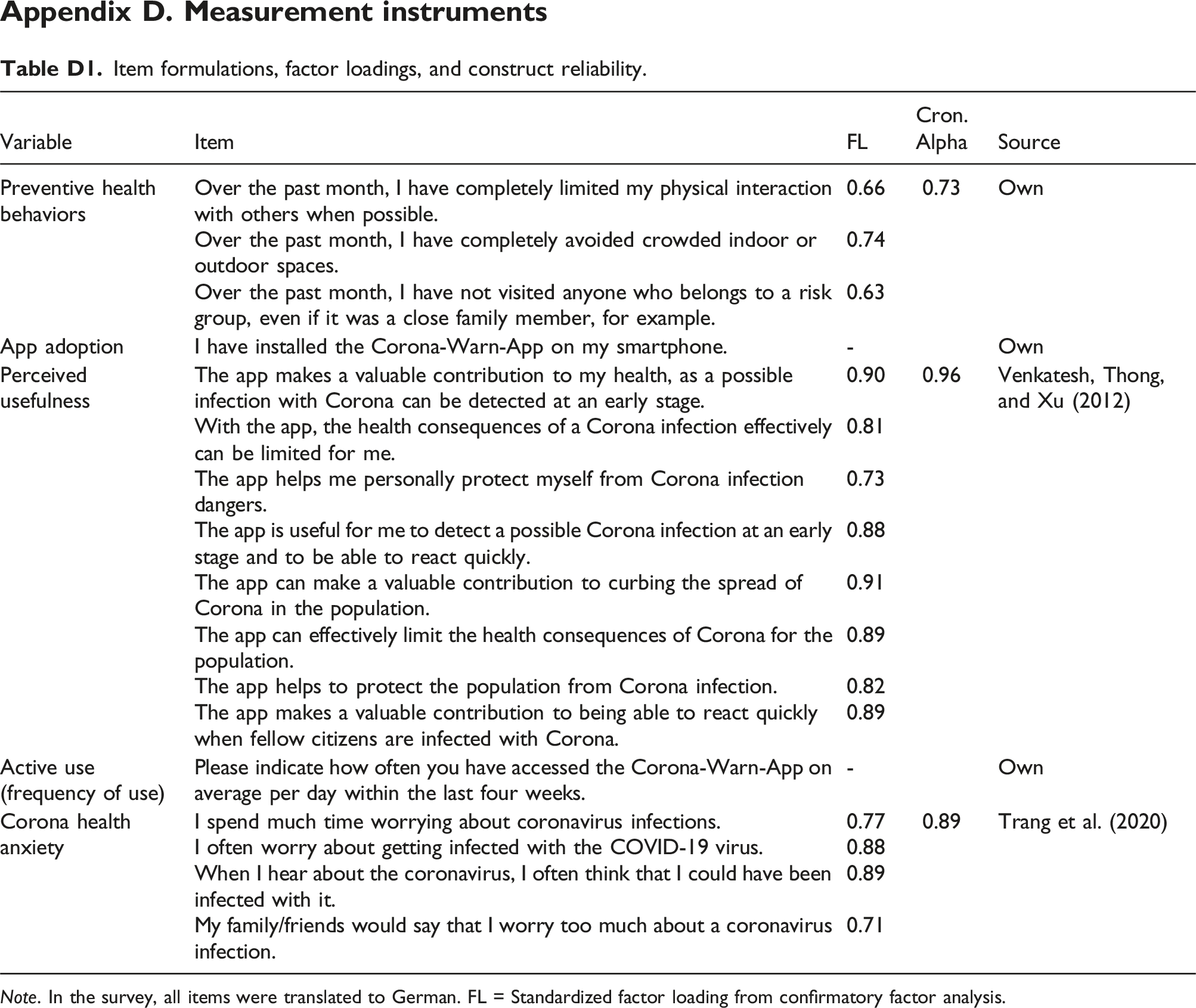

Measures

We used seven-point Likert scales anchored by 1 = “do not agree” and 7 = “fully agree” to measure the focal constructs unless noted otherwise. For the dependent variable, we measured preventive health behaviors at multiple time points using three self-developed items (anchored by 1 = “do not agree” and 100 = “fully agree”; for example, “Over the past month, I have completely limited my physical interaction with others when possible.”; Cronbach’s α = 0.73). 4 To measure app adoption, we used a single item capturing behavior concerning app installation (anchored by 0 = “no” and 1 = “yes”; that is, “I have installed the Corona-Warn-App on my smartphone.”). As for the moderators, we measured perceived usefulness in the first wave of the survey using eight items adapted from Venkatesh et al. (2012; for example, “The Corona-Warn-App helps me to personally protect myself from the dangers of a Corona infection.”; Cronbach’s α = 0.96). We assessed active use as the self-reported frequency of use with a single open-entry item (i.e., “Please indicate how often you have accessed the Corona-Warn-App on average per day within the last four weeks.”). For the control variable, Corona health anxiety, we adapted the four-item scale by Trang et al. (2020; for example, “I spend a lot of time worrying about coronavirus infections.”; Cronbach’s α = 0.89). All items and reliability statistics appear in Appendix D.
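To make the reported reliability statistic concrete, the following minimal sketch computes Cronbach’s α from item-level responses. The function name `cronbach_alpha` and the response data are hypothetical illustrations and are not taken from the study.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    items: list of k lists, one per item, each holding the scores of the
    same n respondents in the same order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items need the same number of respondents")
    # Sum score per respondent across all items
    totals = [sum(col[r] for col in items) for r in range(n)]
    item_variance_sum = sum(variance(col) for col in items)
    total_variance = variance(totals)
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical responses: 3 items on a 1-100 scale, 5 respondents
scores = [
    [80, 60, 90, 40, 70],
    [75, 55, 85, 50, 65],
    [90, 50, 80, 45, 60],
]
alpha = cronbach_alpha(scores)
```

Values closer to 1 indicate that the items move together across respondents, which is the sense in which the reported α values describe scale reliability.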

Empirical analyses

Difference-in-differences model

Our analysis aims to understand how perceived usefulness and active use moderate tracing app adoption’s impact on other preventive health behaviors. We followed prior IS research (e.g., Goh et al., 2013; Haferkorn, 2017; Qiu and Kumar, 2017; Rishika et al., 2013) and used the well-established DID approach to compare changes in the preventive health behaviors of individuals who adopted the tracing app (treatment group) with those of individuals who did not install the app (control group). That is, we treated our research setting as a quasi-experimental design and gauged tracing app adoption’s impact on other preventive health behaviors as a quasi-experimental treatment effect. The DID approach exploits longitudinal data by comparing the treatment and control groups before and after treatment. Notably, this treatment is not genuinely randomized, as in a lab experiment setting; however, it represents a shock that only affects individuals in the so-called treatment group, making the control group an adequate counterpart unaffected by the shock (Goldfarb et al., 2022). Thus, DID modeling helps us address the limitations of observational, non-randomized settings when estimating the treatment effect, which identifies whether adopters of the tracing app engage more or less in preventive health behaviors than non-adopters.

Notably, to estimate an unbiased treatment effect, it is insufficient merely to examine the difference in the preventive health behaviors of those individuals who adopted the tracing app because potential extraneous factors that may affect behavior during the period before the app was introduced, but not after (and vice versa), then would be ignored, which might lead to biased results (Rishika et al., 2013). Thus, the DID approach is advantageous for the present research context because it defines a pre- and post-treatment period for the treatment and the control group to account for time-varying, extraneous factors that might affect all participants’ preventive health behaviors, both observed and unobserved (e.g., Gu and Kannan, 2021). Such factors could include, for instance, expert recommendations in the news or changes in climate. Furthermore, as the DID model examines the difference between engagement in preventive health behaviors before and after app adoption for each participant, it also considers individual differences (i.e., unobserved heterogeneity) in absolute levels of preventive health behaviors between the study participants.
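The logic of differencing twice can be illustrated with a minimal two-group, two-period sketch. It is a simplified illustration, not the multi-period regression model specified below; the function name `did_estimate` and all data values are hypothetical.

```python
from statistics import mean


def did_estimate(rows):
    """Two-by-two difference-in-differences on group means.

    rows: iterable of (treated, post, outcome) with treated/post in {0, 1}.
    """
    cells = {(g, p): [] for g in (0, 1) for p in (0, 1)}
    for treated, post, outcome in rows:
        cells[(treated, post)].append(outcome)
    # Change over time within each group
    change_treated = mean(cells[(1, 1)]) - mean(cells[(1, 0)])
    change_control = mean(cells[(0, 1)]) - mean(cells[(0, 0)])
    # Shocks that hit both groups equally cancel in this difference
    return change_treated - change_control


# Hypothetical preventive-behavior scores (1-100 scale)
rows = [
    (1, 0, 80.0), (1, 0, 70.0), (1, 1, 65.0), (1, 1, 55.0),  # adopters
    (0, 0, 60.0), (0, 0, 50.0), (0, 1, 55.0), (0, 1, 45.0),  # non-adopters
]
effect = did_estimate(rows)  # (60 - 75) - (50 - 55) = -10.0
```

In this toy data, both groups reduce their behavior over time, but only the additional 10-point drop among adopters is attributed to adoption; the 5-point drop shared with non-adopters is treated as a common time effect.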

We formally specify a multi-period DID model for individual i in period t as follows:

in which $y_{i,t+1}$ is the mean score of preventive health behavior items of individual $i$ reported in period $t+1$. The binary-coded variable $\mathit{treatment}_i$ takes on the value 1 if individual $i$ adopted the tracing app and had it installed during both post-adoption periods (i.e., t2 and t3) and 0 if they did not adopt the tracing app during these two periods, while $\mathit{post}_t$ is a binary-coded variable that takes on the value 1 for the two post-adoption periods for all individuals and 0 otherwise. The coefficient $\beta_3$ represents the DID estimate of the treatment effect of tracing app adoption on preventive health behavior (modeled as the interaction term of the $\mathit{treatment}_i$ and the $\mathit{post}_t$ variables). To examine whether perceived usefulness (active use) moderates this treatment effect, we also modeled the three-way interactions between $\mathit{treatment}_i$, $\mathit{post}_t$, and $\mathit{usefulness}_{i,t1}$ ($\mathit{use}_{it}$), represented by $\beta_4$ ($\beta_5$). 5

As control variables, we consider an individual’s mean score of coronavirus anxiety items ($\textit{corona-anxiety}_i$), gender using the binary variable $\mathit{female}_i$, age ($\mathit{age}_i$), and education using the dummy variables $\textit{med-edu}_i$ and $\textit{high-edu}_i$, with lower-level education (e.g., middle school) as a reference group. Finally, we also included a time-fixed effect for period 2 to control for further time-dependent variations in the dependent variable.
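Equation 1 itself is not reproduced in this text. As a reading aid only, one specification consistent with the definitions above is sketched below. Only $\beta_3$, $\beta_4$, and $\beta_5$ are named in the text; the intercept $\beta_0$, the main-effect coefficients $\beta_1$ and $\beta_2$, the control vector $\mathbf{x}_{it}$ with coefficients $\boldsymbol{\gamma}$, and the error term $\varepsilon_{it}$ are assumed notation, and any lower-order interaction terms that the original equation may contain are not shown.

```latex
\begin{align}
y_{i,t+1} ={}& \beta_0
  + \beta_1\,\mathit{treatment}_i
  + \beta_2\,\mathit{post}_t
  + \beta_3\,(\mathit{treatment}_i \times \mathit{post}_t) \nonumber\\
 &+ \beta_4\,(\mathit{treatment}_i \times \mathit{post}_t \times \mathit{usefulness}_{i,t1})
  + \beta_5\,(\mathit{treatment}_i \times \mathit{post}_t \times \mathit{use}_{it}) \nonumber\\
 &+ \boldsymbol{\gamma}^{\top}\mathbf{x}_{it} + \varepsilon_{it}
\end{align}
```

Under this reading, $\mathbf{x}_{it}$ would collect the control variables described above (coronavirus anxiety, gender, age, the two education dummies, and the period-2 time effect) together with the self-selection correction term introduced in the next subsection.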

Sample self-selection

As the individuals in the treatment group self-selected to adopt the tracing app, self-selection bias represents a major concern in the chosen research context (Gu and Kannan, 2021). The DID approach alone does not control for potential endogeneity that arises when app adoption and engagement in other preventive health behaviors are both affected by further unobserved factors. Therefore, we employed the Heckman-type self-selection correction procedure (Heckman, 1976) to model the selection decision (i.e., tracing app adoption) as a function of observed predictor variables and used these estimates to control for self-selection bias in our DID model. In the first step, based on the full sample of 594 participant-period observations, we used a binomial probit model to estimate an individual’s decision to adopt the tracing app in both the t2 and t3 periods (coded as 1), compared with not adopting it (coded as 0), as a function of the following predictor variables identified in prior research: general health anxiety (Salkovskis et al., 2002); general IS privacy concerns (Malhotra et al., 2004); and social influence regarding the tracing app (Venkatesh et al., 2012). All three predictors were significant in predicting the decision to install the tracing app (all p < 0.05). In the second step, we used the estimates from this probit model to calculate the inverse Mills ratio (i.e., Heckman correction factor) and included it as a control variable in the DID model (Equation 1). 6
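The second step can be sketched as follows, assuming the first-stage probit coefficients are already estimated. The coefficient values, the helper names `probit_index` and `selection_term`, and the use of a separate term for non-adopters (a common choice when both groups enter the outcome model) are assumptions for illustration; the text does not state which variant was used.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def probit_index(intercept, coefs, predictors):
    """Linear index z = intercept + sum(b_k * x_k) of a probit model."""
    return intercept + sum(b * x for b, x in zip(coefs, predictors))


def selection_term(z, adopted):
    """Correction term added as a control in the outcome model.

    Adopters:      pdf(z) / cdf(z)        (inverse Mills ratio)
    Non-adopters: -pdf(z) / (1 - cdf(z))  (assumed variant, see lead-in)
    """
    if adopted:
        return STD_NORMAL.pdf(z) / STD_NORMAL.cdf(z)
    return -STD_NORMAL.pdf(z) / (1.0 - STD_NORMAL.cdf(z))


# Hypothetical first-stage estimates for
# (health anxiety, privacy concerns, social influence)
coefs = [0.30, -0.40, 0.50]
intercept = -0.20
person = [4.0, 3.0, 5.0]  # hypothetical 7-point scale scores

z = probit_index(intercept, coefs, person)
lam = selection_term(z, adopted=True)
```

The term shrinks toward zero for adopters whose observed characteristics already make adoption very likely, so it mainly adjusts for participants whose adoption decision is poorly explained by the observed predictors.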

Results

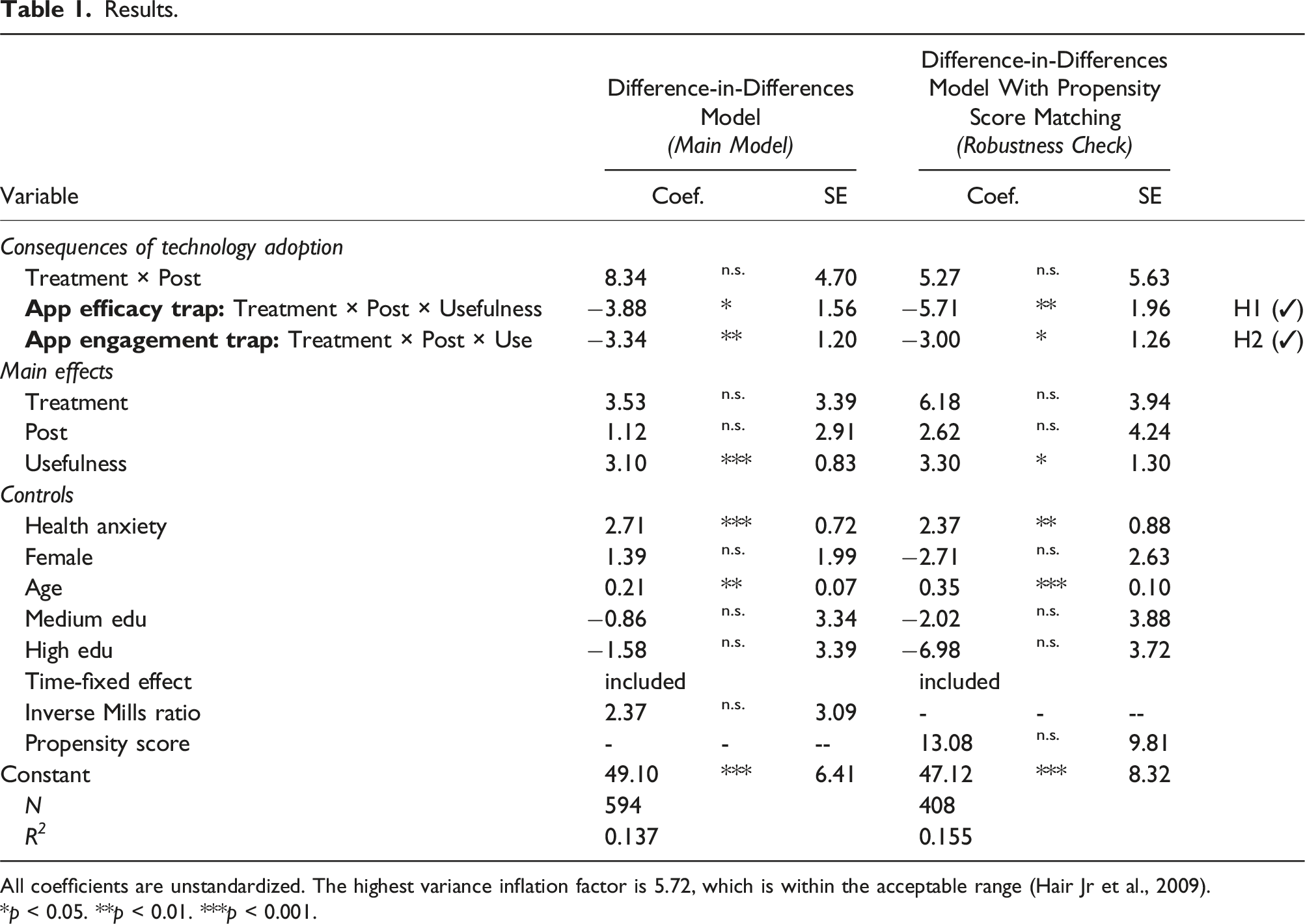

Table 1. Results.

All coefficients are unstandardized. The highest variance inflation factor is 5.72, which is within the acceptable range (Hair Jr et al., 2009).

*p < 0.05. **p < 0.01. ***p < 0.001.

To assess the consequences of tracing app adoption, we first examined the coefficient of the DID-interaction term (treatment × post), as it represents the DID for preventive health behavior between the treatment group (app adopters) and control group (app non-adopters) between the periods before and after (i.e., post) adoption of the tracing app, adjusted for biases due to extraneous factors. Interestingly, the results indicate that tracing app adoption alone does not impact preventive health behaviors, as the DID-interaction term, treatment × post, is not significant (β3 = 8.34, p > 0.05). This finding supports our notion that the mere introduction of a new health intervention and an individual’s compliance with it (i.e., adopting the health app) do not necessarily lead to neglect of other preventive health behaviors and, therefore, do not by themselves trigger compensation behavior.

Second, to assess the hypothesized moderation of the treatment effect, we turned our attention to the coefficients of the two three-way interactions between the treatment, post, and perceived usefulness and frequency of use variables, respectively. We find that the three-way interaction between treatment, post, and perceived usefulness on preventive health behavior (App efficacy trap: Treatment × Post × Usefulness) is negative and significant (β4 = −3.88, p < 0.05). That is, the more useful users perceive the app to be, the more they reduce preventive health behaviors after app adoption. This finding supports H1, as it suggests that these users’ adoption of the tracing app is compensated by a reduction of other preventive health behaviors. H2 suggests that users of health apps who actively use the technology will reduce other preventive health behaviors. The results support this hypothesis, as the three-way interaction between treatment, post, and frequency of use on preventive health behavior (App engagement trap: Treatment × Post × Use) is negative and significant (β5 = −3.34, p < 0.01). That is, the more actively users engage with the app, the more they neglect other preventive health behaviors after app adoption and, thus, compensate for app-adoption-induced preventive health behavior.
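The size of these moderation effects can be read off the unstandardized coefficients. The sketch below assumes that both moderators enter the three-way interactions linearly and untransformed, which the model description suggests but does not state explicitly; $u$ (perceived usefulness score), $f$ (reported app accesses per day), and $\widehat{\Delta}$ (the estimated post-adoption difference between adopters and non-adopters) are notation introduced here for illustration.

```latex
\begin{align}
\widehat{\Delta}(u, f) &= \hat{\beta}_3 + \hat{\beta}_4\,u + \hat{\beta}_5\,f
                        = 8.34 - 3.88\,u - 3.34\,f \\
\widehat{\Delta}(u+1, f) - \widehat{\Delta}(u, f) &= \hat{\beta}_4 = -3.88 \\
\widehat{\Delta}(u, f+1) - \widehat{\Delta}(u, f) &= \hat{\beta}_5 = -3.34
\end{align}
```

Read this way, each additional scale point of perceived usefulness is associated with a further reduction of about 3.88 points on the 1 to 100 preventive-behavior scale among adopters after adoption, and each additional daily app access with a further reduction of about 3.34 points. Because $\hat{\beta}_3$ is not significant and the text does not say whether the moderators were centered, the slopes are the interpretable part of this expression rather than its level at any particular value of $u$ and $f$.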

We also conducted a robustness check using propensity score matching (PSM) to compute a less biased estimate of the treatment effect and its interactions (see Appendix F for a more detailed explanation of the approach). The results are consistent with the results from our main model (right panel in Table 1). This consistency strengthens our confidence in the causal inference made above.
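The matching idea behind such a check can be sketched as follows. The procedure actually used is described in Appendix F and is not reproduced here; the sketch assumes one-to-one nearest-neighbor matching with replacement and an optional caliper, and the function name `match_nearest`, the identifiers, and the propensity scores are hypothetical.

```python
def match_nearest(treated, controls, caliper=None):
    """Match each treated unit to the control with the closest propensity score.

    treated, controls: dict mapping unit id -> estimated propensity score.
    caliper: optional maximum allowed score distance; treated units without
    a control inside the caliper stay unmatched.
    Controls may be reused (matching with replacement).
    """
    if not controls:
        raise ValueError("no control units to match against")
    matches = {}
    for t_id, t_score in treated.items():
        c_id = min(controls, key=lambda c: abs(controls[c] - t_score))
        if caliper is None or abs(controls[c_id] - t_score) <= caliper:
            matches[t_id] = c_id
    return matches


# Hypothetical propensity scores for adopters and non-adopters
adopters = {"a1": 0.72, "a2": 0.40, "a3": 0.95}
non_adopters = {"n1": 0.70, "n2": 0.35, "n3": 0.10}
pairs = match_nearest(adopters, non_adopters, caliper=0.10)
```

In this toy example, the adopter with a score of 0.95 has no comparable non-adopter within the caliper and is dropped, which is how matching restricts the comparison to adopters and non-adopters with similar adoption propensities.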

Discussion

In pursuit of public health goals, governments and support organizations (e.g., health maintenance organizations, insurance companies) have been introducing an increasing number of health technologies (e.g., fitness trackers, nutrition apps, and contact-tracing apps). Although such interventions are promising when examined in isolation (Ferretti et al., 2020; Wolf et al., 2021), they typically complement a system of other health-related measures that jointly have led to a common health goal. We set out to uncover and explain these technological interventions’ potential unintended influences on other behaviors (i.e., compensation behavior). In doing so, we uncover a new class of unintended consequences that must be taken into account when evaluating both the “bright side” and the “dark side” of societal digitization projects.

Our review of prior research on compensation behaviors in other fields of research points toward mixed results, and the employed theories lack factors that could explain when compensation takes place (Blanken et al., 2015; Hedlund, 2000). To advance this understanding, we drew on RCT to explain the rationality of compensation behavior and further developed a UMM that proposes that the perception of technology intervention benefits should drive technology-behavioral compensation. Ironically, maximizing these benefits is highly desirable from an IS design perspective. Our longitudinal study confirms that two factors (i.e., perceived usefulness and active use), which are viewed as indicators of effective IS design (i.e., beneficial to stakeholders’ goals), actually trigger neglect of existing preventive health behaviors. These findings point toward two IS design traps (i.e., the app efficacy trap and the app engagement trap) for IS managers and designers. They suggest that the reason why newly introduced health technologies are undermining intended health goals by diverting users from previous preventive health behaviors may be a result of their effective design.

The app efficacy trap refers to situations in which perceived usefulness, a desirable factor for IS success (e.g., Seddon, 1997), leads to high benefit perceptions, thereby triggering a change in individuals’ overall cost-benefit calculations. This can cause users to reduce existing behaviors that may be instrumental to health goals. IS researchers have expended significant effort trying to understand how technology’s usefulness, or perceived usefulness, can be increased (e.g., Hassan et al., 2019; Wortmann et al., 2019), but its desirability is less certain when considering systems of measures. In our empirical study, different design features of the COVID-19 app could have caused the app efficacy trap. For example, the app claimed that users had a low risk of infection when they did not have contact with a registered infected person. However, for this statement to be reliable, a large proportion of all citizens would have had to install the app (which was not the case in Germany) and voluntarily share their infection in the app. Thus, this design overstates the technology’s ability to reflect the actual risk accurately. Furthermore, the green and red color signaling may have lulled users into a false sense of security concerning their risk of infection.

The app engagement trap refers to situations in which active use, which is also a desirable factor for IS success (e.g., DeLone and McLean, 2003) and therefore a frequent focus for IS designers, keeps the perceived health benefits of the technology salient and thereby triggers compensation behavior. Extant IS research has identified and tested various approaches to increase the frequency of interactions with technologies (Garaialde et al., 2020; Wolf et al., 2021), but our findings suggest that this could turn out to be a trap when considering how technologies are part of a system of measures toward a certain goal. In the case of the COVID-19 app that we examined, the app’s lack of automation could be the cause of the app engagement trap. Users had to open the app to get any information about their possible encounters with infected people, which might have created the impression that they were constantly protecting their health by checking the app.

Taken together, the findings suggest that IS adoption and use can lead to undesirable side effects, even if the app is well-designed from behavioral and efficacy perspectives. The core tenets of RCT suggest that this finding may extend well beyond the context of health technologies. In the following section, we discuss the implications for theory and practice in greater detail.

Theoretical implications

Our study contributes to IS research in several ways. First, we expand the emerging body of research on dark side effects of IS use (Tarafdar, Gupta, et al., 2015b). Previous studies on these effects have focused on negative psychological (e.g., Ragu-Nathan et al., 2008; Yin et al., 2018) and behavioral (e.g., Turel and Qahri-Saremi, 2016; Wang and Lee, 2020) effects that may impact the technology’s primary goal directly or elicit negative side effects. This study theoretically and empirically demonstrates a so-far-overlooked collateral behavioral consequence of IS use. The complementary perspective on dark side effects postulates that adoption of a new technology can drive users to reduce other (non-technological) existing goal-supporting behaviors. This could harm a technological intervention’s efficacy, even if the technology itself is well-designed and purposefully used. Unlike the established dark side effects of IS use, this mechanism is driven by an induced reduction of other goal-supporting behaviors, rather than negative effects that occur from interacting with the technology itself.

We illustrate the mechanism of this additional type of dark side effect of IS use together with other well-known negative consequences of technology use by employing the example from our empirical research context: a COVID-19 contact-tracing app (see Figure 2). First, app use can induce negative secondary outcomes that do not immediately interfere with the technology’s primary goal. In our example, the health technology could trigger side effects, such as privacy issues (e.g., Benlian et al., 2020), but it still may be effective at preserving people’s health. Second, app use can trigger negative psychological effects, such as technostress (e.g., Ayyagari et al., 2011; Nastjuk et al., 2023), which might affect users’ psychological health directly, for example, by weakening their immune systems, or indirectly, for example, through discontinued health app use (i.e., negative behavior; Maier et al., 2015). These two general and established ways that IS use may lead to primary or secondary negative consequences are illustrated in Figure 2’s gray box. Our research expands on these perspectives by introducing a new mechanism that triggers primary negative consequences on an indirect path, namely, through technology-behavioral compensation. In our context, this implies that app use can influence other existing preventive health behaviors, for example, social distancing (indicated with the dotted arrow), negatively, which can endanger users’ health. Therefore, we suggest that the negative impact of IS use on other existing, goal-supporting user behaviors may be as relevant as other well-known negative effects, but its indirect impact makes it far more difficult to detect in adoption and use studies focusing on the technology alone.

Figure 2. An extended perspective on the dark side effects of technology use, exemplified in the context of a health app.

These insights also contribute to the comparative paucity of literature on how an individual’s dark side behavior affects society (Tarafdar, Gupta, et al., 2015b). In the context of COVID-19 tracing apps, the technology-behavioral compensation effect implies that individuals will reduce preventive health behaviors after adoption if they perceive greater benefits from the app (use)—jeopardizing not only their own health, but also that of others.

Second, we contribute to the literature on design goals for effective IS, particularly the evaluation of health app success. The dominant perspective on IS efficacy focuses on technology design’s direct influence on its ability to support stakeholder goals (DeLone and McLean, 2003; Seddon, 1997). Our study suggests that this perspective may draw an incomplete picture of the effects of IS design decisions. More precisely, the technology itself can be effective in facilitating its goals, but also can influence other behaviors negatively, which, in turn, can counteract the technology’s positive direct effects. As such, our findings call for a more overarching perspective on technology’s impact that does not examine its efficacy in isolation, but instead considers spillover effects, such as adverse effects on other behaviors that may contribute to the stakeholder’s goal achievement (e.g., health prevention). Extending the common perspective to determine technological efficacy is particularly important because we reveal that two design goals viewed as key for successful technologies—perceived usefulness and active use (Davis, 1989; DeLone and McLean, 2003; Seddon, 1997)—are factors that promote this unintended technology-behavioral compensation effect. Our findings indicate that if the technology is only one intervention among many in achieving the desired goal, then the factors driving perceived benefits should be questioned in terms of backfiring effects.

Third, our study carries implications for research in settings where technologies are introduced to serve as building blocks in systems of measures to achieve an overall goal (e.g., effective decision-making, well-being). Supporting goal achievement through technological innovations constitutes a core element in many IS research streams, such as privacy control (e.g., Hoehle et al., 2019), IT security (e.g., Jardine, 2020), or decision support systems (e.g., Rowe, 2005), to name a few. While our empirical study is situated in the context of health apps, our RCT-based UMM suggests that compensation behaviors are generally contingent on perceptions of benefits from the introduced technology. In other words, the model is not restricted to our empirical context of health apps. As a result, the introduction of a (on its own effective) technological solution may not contribute to the overall goal as intended or may even be counterproductive due to compensation behaviors. For example, the privacy literature investigates how to design compelling privacy controls and promote privacy by design (e.g., Hoehle et al., 2019). Based on our theoretical framework, the introduction of effective privacy measures may lead to users cutting down on other privacy-protection behaviors, for instance, using anonymizers, withholding sensitive data, or avoiding malicious websites. Our contextualized perspective on compensation behaviors and the analytical identification of factors that drive compensation behavior enrich the traditional IS perspective on technology’s efficacy and may inspire other research streams that examine technologies’ impact as parts of complex systems of measures.

Finally, from a theoretical perspective, this paper complements existing theories that have been used to explain compensation behavior (i.e., risk homeostasis theory, self-licensing effect). Expanding on and supplementing these theories, we draw on RCT to contemplate compensation behaviors in light of individuals’ cost-benefit considerations and reveal that compensation behavior can be a rational choice. However, more importantly, in combination with our UMM, it also provides new insights into when compensation behavior is more likely. By uncovering a potential explanation for the occurrence of compensation behavior, we not only expanded on what previously applied theoretical perspectives predicted but also shed light on inconclusive findings from previous literature on compensation behavior in the health context (e.g., Blanken et al., 2015; Hedlund, 2000). Our empirical study in the context of health apps supports the UMM’s general prediction that compensation behavior is particularly dependent on (strong) benefit perceptions of the intervention. In this way, we enabled research on compensation effects to move beyond explaining compensation to address the factors that drive its occurrence.

Practical implications

Our results carry important implications for health-app providers, policymakers, and app users. First, health app providers and designers should be careful when implementing mechanics for user engagement in their systems. While encouraging frequent and active use aids users and helps firms achieve financial success (DeLone and McLean, 2003; Seddon, 1997), our findings indicate that active use can backfire and trigger compensation behavior. Thus, app designers should assess to what extent behavioral user engagement actually helps achieve the overarching goal (e.g., public health prevention). For example, regarding COVID-19 contact-tracing apps, active use might not even be necessary to achieve the public health goal. Thus, toning down mechanics for user engagement might avoid this trap of the technology-behavioral compensation effect. Furthermore, our findings indicate that perceived usefulness also can induce behavioral compensation. Thus, app providers should not oversell their health apps’ efficacy. More precisely, in the health context, several behaviors are often necessary to preserve people’s health, and although apps can be effective in supporting health, they can hardly cover all needed behaviors. Thus, presenting an app as the solution to a health problem entails risk and may result in neglect of complementary preventive health behaviors, which might harm users’ health and even the app provider in the long run.

Second, policymakers and support organizations should be aware that technological interventions carry the risk of unintended consequences, even though they can be an extremely valuable addition to existing preventive health behaviors. Our findings suggest that people, contrary to policymakers’ intent, do not necessarily use health apps to maximize their health protection. Our RCT-based UMM explains the mechanism behind this: people using health apps tend to compensate by reducing other behaviors in line with their benefit perception (i.e., when perceiving the apps as very useful or actively using them). For policymakers, this could be a tricky situation, leading to a trade-off decision between app adoption and avoidance of such backfiring behavior. Mass acceptance of health apps, such as the COVID-19 tracing app, is necessary so that the technology can be effective, suggesting that framing the technology as highly beneficial would be strategically advisable (Trang et al., 2020). Because the perception of a highly beneficial app simultaneously reduces other preventive health behaviors, policymakers must find a way to nudge people into adoption without creating perceptions that the app can be a “stand-alone” solution for health prevention. With this in mind, the prospect of compensation behavior should not stop policymakers from pursuing promising technological interventions for health prevention; however, it is essential to plan ahead to ensure that the technology application’s contribution significantly outweighs any potentially offsetting effects. Policymakers and health organizations must work on their communication to address this issue and manage potential optimism about technological interventions by communicating clearly and broadly that they alone cannot reduce the health risk sufficiently. Efforts to promote mass acceptance of societal health apps should clearly indicate the technologies’ limitations and strongly emphasize the need to perform several preventive health behaviors at once (Mena et al., 2020). Another, more drastic way to avoid the technology-behavioral compensation effect is to refrain from making compliance with interventions voluntary. A compensation effect can be prevented by making all necessary preventive health behaviors mandatory.

Third, users of health apps should not overestimate the impact of technology and its use. Overestimating technological benefits can harm personal health through neglect of natural health-preserving behaviors in daily life. It is common practice for app providers to “market” their technologies as overly beneficial to convince people to adopt and use their apps. People should bear in mind that technologies’ benefits are limited and guard themselves against false promises, bad communication, and social pressure, which might harm their health by causing compensation behavior. As technology becomes more ubiquitous in all areas of life, it is becoming increasingly necessary for users to develop an educated and critical approach to technology use. For example, the low adoption rate of a COVID-19 tracing app makes the technology nearly ineffective in informing users about whether they had a high-risk encounter. Thus, a holistic assessment of technologies’ function and their contribution to health goals is becoming increasingly important to avoid being misled into “wrong” behavior. Technology is a promising solution for many health problems, but it cannot solve them alone. This caveat applies not only to technologies but also to other interventions in the health sector.

Limitations and future research

Aside from the theoretical and practical implications, our research has some limitations that highlight avenues for further research. First, our study focused on the neglect of existing preventive health behaviors after health app adoption, which is an important first step in assessing overall IS efficacy. However, we did not consider the technology-behavioral compensation effect’s absolute impact on overarching health goals. Quantifying individual existing behaviors’ effect on achieving particular health goals is already proving difficult and is subject to significant scientific debate, and their relative impact is even more difficult to assess (Brauner et al., 2021; Chu et al., 2020). Nevertheless, future studies should try to determine when the technology-behavioral compensation effect reduces technological interventions’ positive impact on health protection and under what conditions it actually damages public health. Second, our empirical study is situated in the context of COVID-19 contact-tracing apps. We believe that the technology-behavioral compensation effect will also occur in contexts with other motivational characteristics, and we encourage studies in areas such as sports and nutrition, in which individual health promotion is emphasized more than public or social health protection. Third, our multi-wave study design included behavioral data from users and nonusers up to about four months after the app’s launch. While this allowed us to uncover technology-behavioral compensation after initial adoption, we cannot assess whether this is a long-term effect. We hope to inspire future studies that could, for example, investigate whether behavioral compensation decreases over time due to a habituation effect. Finally, our study does not empirically demonstrate how the technology-behavioral compensation effect can be avoided. We believe that focusing on mitigation mechanisms to overcome this “unexplored” dark side effect of IS use is a fruitful avenue for future research (Tarafdar, Gupta, et al., 2015b).

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Volkswagen Foundation (Participatory surveillance through tracing apps; 99796).

Appendix A. Literature review on dark side effects of IS use

Literature overview on unintended negative consequences from own IS use.

| Article | Context | Unintended negative consequences | Key findings | Type of unintended negative consequence (negative psychological outcome / negative behavioral outcome / reduction in non-technological positive behaviors) |
| --- | --- | --- | --- | --- |
| Vieira da Cunha, Carugati, and Leclercq-Vandelannoitte (2015) | Workplace | Strain | Computer-mediated control can lead to strain | x |
| Ayyagari, Grover, and Purvis (2011) | Workplace | Strain, technostress | Certain technology features can cause technostress and result in strain | x |
| Ragu-Nathan et al. (2008) | Workplace | Technostress, reduced job satisfaction | Technostress creators decrease job satisfaction | x |
| Tarafdar, Tu, and Ragu-Nathan (2010) | Workplace | Technostress, reduced job satisfaction and task performance | Technostress negatively affects user satisfaction and performance | x, x |
| Tarafdar, Pullins, and Ragu-Nathan (2015) | Workplace | Technostress, reduced task performance | Technostress creators are associated negatively with task performance | x, x |
| Califf, Sarker, and Sarker (2020) | Workplace | Technostress, reduced job satisfaction | Hindrance technostressors reduce job satisfaction | x |
| Tarafdar et al. (2007) | Workplace | Technostress, reduced productivity | Technostress negatively affects productivity | x, x |
| Benlian (2020) | Workplace | Technostress, reduced partnership satisfaction | Hindrance technostressors are related negatively to partnership satisfaction via negative affect | x |
| Pirkkalainen et al. (2019) | Workplace | Technostress, reduced productivity | Higher levels of technostress creators lead to lower levels of IT-enabled productivity | x, x |
| Agogo and Hess (2018) | Workplace, university students | Technology-induced state anxiety | Specific instances of technology can lead to state anxiety | x |
| D’Arcy and Teh (2019) | Workplace | Security-related stress | Security-related stress can lead to decreased ISP compliance through increased frustration and fatigue | x, x |
| Turel, Serenko, and Bontis (2011) | Workplace | Addiction, overload | Addiction to organizational mobile email use increases perceived work overload and technology-family conflict | x |
| Yin et al. (2018) | Workplace | Overload, reduced job satisfaction | Information overload reduces job satisfaction | x |
| Galluch, Grover, and Thatcher (2015) | Workplace | Technology-induced interruptions, technostress, strain | Technology-induced interruptions can lead to technostress and cause strain | x |
| Tams, Thatcher, and Grover (2018) | Workplace | Technology-induced interruptions, technostress, reduced task performance | Technology-induced interruptions can increase perceived technostress and reduce task performance | x, x |
| Addas and Pinsonneault (2018) | Workplace | Technology-induced interruptions, reduced performance | Exposure to task-incongruent interruptions negatively affects performance through subjective workload | x |
| Chen and Karahanna (2018) | Workplace | Technology-induced interruptions, exhaustion, reduced performance | Work-related interruptions reduce work performance and nonwork outcomes through work exhaustion | x, x |
| Tams et al. (2020) | Workplace | Technology-induced interruptions, overload, reduced technology use | Perceived interruption overload decreases work-related technology use through work-life conflict | x, x |
| Cheng et al. (2022) | Sharing platform | Feeling of privacy invasion | The use of big data analytics and artificial intelligence leads to feelings of uncertainty and privacy invasion | x |
| Saunders et al. (2017) | Mobile phones | Information overload | ICT-related overload depends on cultural, demographic, and experiential factors | x |
| Soror et al. (2015) | Mobile phones | Maladaptive use | Maladaptive mobile phone use is caused by a tug-of-war between habits and self-regulation | x |
| Matook, Cummings, and Bala (2015) | Social networks | Perceptions of loneliness | Use of social networks can be linked to more perceived loneliness | x |
| Turel and Serenko (2012) | Social networks | Addiction | Social media IT artifacts can create bad habits that lead to addiction | x, x |
| Turel and Qahri-Saremi (2016) | Social networks | Maladaptive use, reduced performance | Some social media users tend toward problematic use, which negatively affects academic performance | x |
| Tarafdar et al. (2020) | Social networks | Technostress, addiction | Social media technostressors can lead to social media addiction through coping via distraction within the app | x, x |
| Kwon et al. (2016) | Social networks | Addictive use | Users who are exposed to stronger positive reinforcement and exhibit resilient consumption inertia or heavily discount the exacerbating effects of current consumption are more susceptible to social app addiction | x, x |
| Vaghefi, Negoita, and Lapointe (2022) | Social networks | Compulsive and addictive use | Different social media features and perceptions of them can lead to compulsive and addictive use | x, x |
| Wang and Lee (2020) | Social networks | Compulsive use | Social media can lead to compulsive app use through urges | x |
| Polites et al. (2018) | Social networks | Compulsive use | Users with strong self-identification with a particular social network will increase perceptions of poor self-control over their own usage time | x |
| Maier et al. (2015) | Social networks | Technostress, exhaustion, discontinuous use intentions | Social media technostressor creators and social media exhaustion can cause discontinuous use intentions | x, x |
| Fu et al. (2020) | Social networks | Overload, exhaustion, discontinuous use | System feature overload, information overload, and social overload cause user exhaustion and lead to discontinued use of social media | x, x |
| Hildebrand et al. (2013) | Social networks | Satisfaction, reduced use | Feedback received from other community members on self-designs can lead to lower satisfaction with self-designed products and lower product usage frequency | x, x |
| McHugh et al. (2018) | Social networks | Post-traumatic stress disorder | Explicit content exposure, cyberbullying, and sexual solicitations evoke symptoms of post-traumatic stress disorder | x |
| Salo, Pirkkalainen, and Koskelainen (2019) | Social networks | Technostress | Technostress can affect users’ well-being in the form of concentration and sleep problems, as well as identity and social relation problems | x |
| Turel, Romashkin, and Morrison (2016) | Games | Addiction | Videogame addiction is associated negatively with users’ well-being in the form of sleep deprivation, obesity, and elevated blood pressure | x, x |
| Benlian, Klumpe, and Hinz (2020) | Household | Strain, feeling of privacy invasion | Smart home assistants’ features can cause strain, feelings of privacy invasion, and interpersonal conflicts at home | x |
| Turel, Serenko, and Giles (2011) | E-commerce | Addiction, maladaptive use | The level of online auction addiction increases maladaptive use | x, x |
| Fox and Connolly (2018) | Health | Digital divide, feelings of privacy invasion, reduced adoption | Older adults expressed less health technology adoption through mistrust, high risk perceptions, and privacy concerns | x, x |
| Kuem et al. (2020) | Smartphone use | Addiction | Psychological smartphone addiction can increase personal conflict behavior | x, x |
| This study | Health | Reduction in related nontechnological positive behaviors | Adoption of technology interventions can lead to neglect of existing related positive behaviors | x |

Appendix B. Literature overview on new interventions or newly adopted behavior’s impact on previous health behavior.

Literature overview on the effects of new interventions and newly adopted behavior on existing health behaviors.

| Article | Context | New intervention or newly adopted behavior | Effect on related behaviors | Key findings |
| --- | --- | --- | --- | --- |
| Peltzman (1975) | Road traffic | Seat belts | - | • Drivers offset safety regulations by taking greater accident risks, for example, aggressive driving. |
| Streff and Geller (1988) | Road traffic | Seat belts | - | • Introducing seat belts as a new safety intervention can lead to increased driving speeds. |
| Wilde (1994) | Road traffic | Antilock brakes | - | • Drivers with antilock brakes changed their behavior by driving faster and braking harder than before. |
| Sagberg, Fosser, and Sætermo (1997) | Road traffic | Antilock brakes, airbag | - / 0 | • Drivers with antilock brakes tend to compensate by following other cars more closely. • No significant effect was found on compensation from airbag introduction. |
| Assum et al. (1999) | Road traffic | Road lighting | - | • Drivers compensate for road lighting by increasing speed and reducing their concentration. |
| Brewer et al. (2007) | Viral diseases | Vaccination | - | • Vaccinated people are less likely to continue engaging in selected preventive behaviors after vaccination. |
| Autier et al. (1998) | Skin cancer | Sunscreen | - | • Sunscreen use encourages sun exposure and is related to the proliferation of nevi. |
| Viscusi and Cavallo (1996) | Household items | Child-resistant features | - | • Child-resistant devices can reduce risk perceptions, leading to less parental concern for safety, assessed need for precautions, and consumer care. |
| Klen (1997) | Workplace | Protective equipment | - / 0 | • Some loggers reported that they became more careless, moved faster, became bolder, and anticipated fewer dangers after they started wearing personal protectors. |
| Fyhri, Bjørnskau, and Backer-Grøndahl (2012) | Cycling | Helmet | 0 | • With some cyclists, wearing a helmet is associated with faster cycling. • The results suggest that fast cycling precedes helmet use, rather than vice versa. |
| Lardelli-Claret et al. (2003) | Cycling | Helmet | 0 | • Cycling at excessive or dangerous speeds is not associated significantly with helmet use. |
| Schleinitz, Petzoldt, and Gehlert (2018) | Cycling | Helmet | 0 | • Helmet use does not play a significant role in cycling speed. |
| Bambach et al. (2013) | Cycling | Helmet | 0 / - | • Helmeted cyclists were less likely to engage in risky riding behavior. |
| Gamble and Walker (2016) | Cycling | Helmet | - | • Wearing a helmet is associated with greater risk-taking and thrill-seeking. |
| Knäuper et al. (2004) | Food | Exercise | - | • People believe that lack of exercise can be compensated for by eating less and vice versa. |
| Werle, Wansink, and Payne (2011) | Food | Exercise | - | • Merely reading about exercise increases snack consumption. |
| Wansink and Chandon (2006) | Food | Low-fat label | - | • People increased their consumption of food with low-fat labels. |
| Belei et al. (2012) | Food | Low-fat label | - | • Participants ate more low-fat-labeled products than those without the labels. |
| Fishbach and Dhar (2005) | Food | Diet progress | 0 / - | • The closer participants come to achieving their weight-loss goals, the more likely they are to choose unhealthy food options, and vice versa. |

Appendix C. A utility maximization model for compensation behavior

In the following utility maximization model, we assume that a health threat is omnipresent; therefore, the goal of maximizing one’s health utility is always a given.
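To make the intuition behind a utility-maximization account of compensation concrete, the following is a minimal numerical sketch. It does not reproduce the model developed in this appendix. All functional forms and names are illustrative assumptions: protection is taken to be the sum of preventive behavior and perceived app protection, the health benefit is assumed to be concave, 1 - exp(-protection), and effort is assumed to have a constant marginal cost between 0 and 1. Under these assumptions, the utility-maximizing level of preventive behavior falls as perceived app protection rises.

```python
import math


def health_utility(behavior, app_protection, cost):
    """Toy net utility (assumed form): concave health benefit minus linear effort cost."""
    protection = behavior + app_protection
    return (1.0 - math.exp(-protection)) - cost * behavior


def optimal_behavior(app_protection, cost):
    """Closed-form maximizer of health_utility over behavior >= 0 (requires cost > 0).

    First-order condition: exp(-(b + u)) = cost, so b = -ln(cost) - u,
    truncated at zero because behavior cannot be negative.
    """
    return max(0.0, -math.log(cost) - app_protection)


def grid_search_behavior(app_protection, cost, step=0.001, upper=10.0):
    """Numerical cross-check: pick the best behavior level on a fine grid."""
    n_steps = int(upper / step)
    candidates = [i * step for i in range(n_steps + 1)]
    return max(candidates, key=lambda b: health_utility(b, app_protection, cost))


if __name__ == "__main__":
    cost = 0.2  # hypothetical marginal cost of preventive effort
    for u in (0.0, 0.5, 1.0, 2.0):  # hypothetical perceived app protection
        print(u, round(optimal_behavior(u, cost), 3))
```

In this sketch, a user who perceives more protection from the app rationally chooses less preventive behavior, and stops entirely once the perceived protection alone pushes the marginal health benefit below the marginal cost of effort. Other concave benefit functions would yield the same qualitative pattern, but the one-for-one reduction is specific to the additive form assumed here.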

Appendix D. Measurement instruments

Item formulations, factor loadings, and construct reliability. Note. In the survey, all items were translated to German. FL = Standardized factor loading from confirmatory factor analysis.

Variable

Item

FL

Cron. Alpha

Source

Preventive health behaviors

Over the past month, I have completely limited my physical interaction with others when possible.

0.66

0.73

Own

Over the past month, I have completely avoided crowded indoor or outdoor spaces.

0.74

Over the past month, I have not visited anyone who belongs to a risk group, even if it was a close family member, for example.

0.63

App adoption

I have installed the Corona-Warn-App on my smartphone.

-

Own

Perceived usefulness

The app makes a valuable contribution to my health, as a possible infection with Corona can be detected at an early stage.

0.90

0.96

Venkatesh, Thong, and Xu (2012)

With the app, the health consequences of a Corona infection effectively can be limited for me.

0.81