Abstract

Robotic process automation (RPA) is often used in organisational digitalisation efforts to automate work processes. RPA, and the software robots at its heart, is an equivocal and contentious technology. Adopting the products of theorising approach, this study views metaphors as central sensemaking and sensegiving devices that shape the interpretation of RPA among stakeholders towards a preferred reality of ways of seeing and experiencing software robots. The empirical materials are drawn from research in three Australasian organisations that have implemented RPA. Grounding our analysis in the domains-interaction model, we identified three root metaphors (person, robot and tool), their constitutive conceptual metaphors and their intended use as heuristic devices. Our findings show that metaphor is a powerful device that employees rely on to make sense of their experiences with a new digital technology that can potentially shape their roles, work practices and job design. In addition, managers and automation team members intentionally leverage metaphors to shape others’ perceptions of a software robot’s capabilities and limitations, its implications for human work and its expanding benefits for organisations over time, among others. Metaphor as a precursor to more formal theory provides scholars with a vocabulary to understand disparate experiences with an emergent automation technology that can be further developed to generate a theory of seeing automation and working with automated agents.

‘New technologies … create unusual problems in sensemaking for managers and operators’ (Weick, 2000: 789)

Introduction

Contemporary organisations have used digitalisation as part of broader digital transformation initiatives to maintain relevance in a digital era. Digitalisation through the automation of work processes inevitably transforms the work performed by human employees, with profound changes to the role of the human actor in the workplace (Andersson et al., 2022; Klein and Watson-Manheim, 2021; Ranerup and Henriksen, 2019; Staaby et al., 2021; Wessel et al., 2021). Organisations often initiate their automation programme with robotic process automation (RPA), a low-level intelligent automation, to automate routine work (Andersson et al., 2022; Hofmann et al., 2020; Staaby et al., 2021; Syed et al., 2020) or decision making (Ranerup and Henriksen, 2019) previously performed by human employees. To maximise the full potential of automation technologies, organisations will subsequently integrate RPA with advanced intelligent automation technologies (e.g. AI) to deliver end-to-end service automation (Lacity and Willcocks, 2021). The COVID-19 pandemic has led to the significant uptake of automation technologies and the acceleration of digitalisation efforts by 3–4 years (LaBerge et al., 2020). For example, in 2020, 73% of 320 surveyed global executives jumped on the bandwagon of intelligent automation, an increase from the 58% reported in 2019 (Horton et al., 2020).

RPA can be defined as ‘a preconfigured software instance that uses business rules and predefined activity choreography to complete the autonomous execution of a combination of processes, activities, transactions, and tasks in one or more unrelated software systems to deliver a result or service with human exception management’ (IEEE Corporate Advisory Group, 2017: 11). In short, RPA automates repetitive and monotonous tasks by configuring software agents, known as software robots or ‘bots’, with clearly defined rules to mimic the actions of a human employee (Wellmann et al., 2020). Since RPA is a relatively new phenomenon, the literature is currently nascent and has mostly focused on its technical capabilities (Syed et al., 2020) or potential and realised organisational benefits (Lacity and Willcocks, 2016a). For example, in their literature review, Syed et al. (2020) found that 45% of research studies and industry white papers follow a technological perspective by describing RPA products that are currently available, their main components (such as an orchestrator that manages the logic and decision making of the robot work process) and possible future developments of RPA. Another popular and dominant stream of research takes an organisational perspective by highlighting RPA promises and benefits of increased efficiency, improved service quality, lowered costs, reduced delivery time and significant returns on investment (Hallikainen et al., 2018; Lacity and Willcocks, 2016b; Ranerup and Henriksen, 2019).

However, RPA, and the software robots at its heart, is an equivocal and contentious technology (Weick, 2000). This stems from the novelty and interactive complexity of the technology itself, as well as its impacts on organisational structures, work processes and work relations between human employees and software robots (Andersson et al., 2022; Klein and Watson-Manheim, 2021; Staaby et al., 2021). In particular, RPA is likely not to ‘fully substitute human work but rather lead to a reconfiguration of work, creating new tasks, types, and divisions of work while substituting or transforming other tasks’ (Klein and Watson-Manheim, 2021: 3). This can be seen in how automation of public services favours codifiable work for software robots while excluding work elements that require human judgement and empathy (Andersson et al., 2022).

Despite a few studies that take a human employee’s perspective to understand the impacts of RPA on their work (Andersson et al., 2022; Seiffer et al., 2021; Staaby et al., 2021; Waizenegger and Techatassanasoontorn, 2022), IS research has yet to develop empirically grounded theoretical artefacts (Lowry et al., 2020) with a broader perspective to explain how key organisational participants, including developers, managers and employees, engage with this new digital technology and shape the dynamics of change and transformation of work. Previous studies found that managers and developers play an important role in driving changes induced by a new digital technology implementation (Andersson et al., 2022; Hekkala et al., 2018; Staaby et al., 2021). For instance, Andersson et al. (2022) and Staaby et al. (2021) found that managers actively engaged in portraying RPA in a positive light as helping free up human employees’ time to do more value-added work and openly acclaimed software robots’ ability to work all hours of the day. On the other hand, some human employees may feel that RPA implementation could make work less satisfying and enjoyable, provide less opportunity for advanced skills development, dehumanise systems interaction and lead to redundancies, among others (Riemer and Peter, 2020). These viewpoints on RPA from key organisational actors are likely to influence how changes to work configuration and outcomes unfold.

In this study, we are interested in how different organisational participants engage in sensemaking and sensegiving (Gioia and Chittipeddi, 1991; Weick, 1995b) to facilitate the construction of meaning of software robots, a primary transformational agent of RPA technology, to better understand consequential effects on the way RPA is implemented and functions in an organisation (Syed et al., 2020). Adopting the products of theorising approach (Weick, 1995a), the aim of this study is to specify, clarify and define new concepts that help us to theorise how different organisational participants engage in sensemaking and sensegiving in their everyday interactions with RPA and the implications of these meaning construction efforts on their perceptions and likely actions. In line with Weick’s (1995a) view of theory as a continuum of approximations, the products of theorising in this study are a set of root metaphors, their underlying metaphors and their use as heuristic devices that shape the interpretation of software robots among RPA stakeholders towards a preferred reality of understanding and experiencing software robots. Our product of theorising offers insights into metaphors as a form of sensemaking and sensegiving (Gioia and Chittipeddi, 1991) around an emergent digital technology that can be further developed to generate a theory of understanding automation and working with automated agents (Hassan et al., 2021). Various authors have argued that new concepts are necessary products of the theorising process in IS research that directly engages with the rapidly changing nature of the IT artefact and its contexts of use (Hassan et al., 2019, 2022; Markus and Saunders, 2007). Emergent digital technologies such as RPA are central to organisational automation efforts with consequential changes to organisational and work life (Andersson et al., 2022; Klein and Watson-Manheim, 2021; Riemer and Peter, 2020; Staaby et al., 2021). 
The new generation of digital technologies is having a profound and transformational impact on human and machine configurations in the workplace (Baptista et al., 2020; Klein and Watson-Manheim, 2021) and the evolution of ‘human-computer integration’ (Farooq and Grudin, 2016). Therefore, now is the time to theorise such emergent phenomena by creating products of theorising to understand conceptions of reality in organisational participants’ experiences with software robots and RPA (Hassan et al., 2021).

Robotic process automation

RPA is a lightweight automation technology which has been implemented across a wide range of industries, such as finance and insurance, telecommunication and healthcare (Ivančić et al., 2019). The extensive uptake of RPA has been driven by its technical capabilities for automation of routine work (Anagnoste, 2017; Hofmann et al., 2020; Santos et al., 2019) and expected benefits for organisations, employees and customers (Syed et al., 2020). From an implementation perspective, RPA automates on the user interface level and does not require integration with existing systems, thus making it relatively easy to deploy without changing their underlying program logic (Aguirre and Rodriguez, 2017; Lacity and Willcocks, 2016b; ThinkAutomation, n.d.; Willcocks and Lacity, 2016). RPA is considered an automation solution suitable for routine processes with a high volume of transactions and requiring access to multiple systems (Aguirre and Rodriguez, 2017; Fung, 2014). Previous studies identify recruiting and onboarding, invoicing, customer management and payroll processes as good candidates to be automated through RPA (Anagnoste, 2017; Eikebrokk and Olsen, 2020; Willcocks et al., 2015).

RPA uses software robots – automated software agents – that follow a choreography of control flows to mimic how human employees perform tasks and can operate in unattended or attended modes (Hofmann et al., 2020). Unattended bots work autonomously and are more suitable for simple processes that usually do not vary between process instances. Attended bots allow some human involvement to trigger the bots to execute parts of the process and monitor their performance (Hofmann et al., 2020; Syed et al., 2020). In addition, software robots can be classified as rule-based, knowledge-based and learning-based. While rule-based bots rely on structured data input and repeatedly apply predefined rules, knowledge-based bots can deal with unstructured, patterned data input and can, for example, search for information across systems. Learning-based bots, the most sophisticated form of software robots, can use unstructured data and leverage machine learning to improve their performance (Hofmann et al., 2020; Kroll et al., 2016). The case study organisations we studied mainly implemented rule-based bots.

Much of the emerging body of literature on RPA and software robots either focuses on technical capabilities of RPA (Engel et al., 2022; Hofmann et al., 2020; Leno et al., 2021; Van de Weerd et al., 2021; Van der Aalst et al., 2018) or explores RPA from an organisational perspective (Hallikainen et al., 2018; Lacity and Willcocks, 2016a, 2021; Santos et al., 2019). Typically, studies that focus on a technological perspective provide conceptual foundations of RPA with an overview of its constituting concepts, underlying mechanisms, main characteristics and its potential integration with advanced automation technologies. For example, Hofmann et al. (2020) explain that software robots follow a pre-defined, rule-based logic to automate routine tasks while interacting with existing IT systems in much the same way as human employees do. A study by Leno et al. (2021) proposes process mining techniques as a way to identify work routines that are good candidates to be automated with RPA. In addition to promoting a technical understanding of RPA, some studies (e.g. Engel et al., 2022; Van de Weerd et al., 2021) propose an integration of RPA with artificial intelligence (AI) as a way to expand automation efforts to more complex and less well-defined work tasks.

Another dominant stream of research is concerned with examining the value of RPA to organisations. These studies present use cases to highlight benefits of RPA and how to maximise results for organisations (Hallikainen et al., 2018; Lacity and Willcocks, 2016b, 2017). For instance, benefits in terms of operational efficiency and error reduction are often mentioned in the literature since bots can work constantly and often faster than human employees, with less cost and fewer errors (Kroll et al., 2016; Syed et al., 2020). Since RPA is highly scalable, it can help organisations manage high-volume transactions and peak demands because additional bots can be introduced quickly to ease the backlog (Kroll et al., 2016; Lacity and Willcocks, 2017; Santos et al., 2019; Syed et al., 2020). In addition, some studies emphasise that organisations can achieve mutual benefits for organisations, employees and customers (Lacity and Willcocks, 2021). For instance, RPA can deliver improved service quality with round-the-clock service delivery for customers (Denagama Vitharanage et al., 2020; Lacity and Willcocks, 2017, 2021; Syed et al., 2020). Employees can benefit from the implementation of RPA as mundane and repetitive tasks are transferred to software robots to free human employees to focus on more value-adding tasks such as data analysis, decision-making and interpretation of reports (Denagama Vitharanage et al., 2020; Güner et al., 2020; Santos et al., 2019).

Despite the proposed beneficial outcomes of RPA for employees, automation of work can lead to fear of job loss, competition between robots and employees, downsizing and redundancies (Eikebrokk and Olsen, 2020; Lacity and Willcocks, 2016a; Santos et al., 2019; Suri et al., 2017). This is because automation of routine work with RPA has consequential impact on work and workplaces, leading to changes in organisational structures and redesigned roles for human employees (Staaby et al., 2021). A few studies that pay attention to impacts of RPA on human employees suggest that changes as a result of an RPA implementation take place through an emergent, relational process that involves interactions between the technology, the organisation-centric approach enacted by managers and developers, and human employee responses to software robots (Andersson et al., 2022; Seiffer et al., 2021; Staaby et al., 2021; Waizenegger and Techatassanasoontorn, 2022). However, there is a lack of research that pays close attention to how key organisational participants, including developers, managers and employees, engage with RPA and shape the dynamics of changes to work and workplaces. In this study, our concern is with these organisational stakeholders’ everyday experience of and perspectives on software robots. In particular, we examine sensemaking efforts (by employees) and sensegiving activities (mostly by developers and managers) as ways to facilitate their interpretations of the meaning of software robots, in order to unpack the dynamics of automation of work and consequential effects of RPA in an organisation.

Theorising approach

When people encounter a new technology, they try to make sense of it. As part of this sensemaking process, people ‘develop particular assumptions, expectations and knowledge of the technology, which then serve to shape subsequent actions toward it’ (Orlikowski and Gash, 1994: 175). Such interpretations ‘represent an imagined technology that people assume parallels the actual technology’ (Weick, 2000: 816). Various aspects or features of the new technology and its implementation in a particular context trigger ‘active thinking’ as its users attempt to make sense of the phenomenon (Griffith, 1999). The relative unfamiliarity of the new technology causes them to employ more of their own interpretations to understand what is occurring in their interactions with it (Weick, 2000). In parallel to these sensemaking efforts by those who are directly affected by a new technology implementation, other organisational members, particularly managers, often engage in sensegiving to simultaneously frame positive images of the future and influence employees’ construction of reality in ways that facilitate organisational transformation (Andersson et al., 2022; Cornelissen et al., 2011; Gioia and Chittipeddi, 1991; Sackmann, 1989).

We argue that sensemaking and sensegiving around RPA are encapsulated in the language that people use to confer meaning and interpret this complex technology. To make sense of situations that are new, problematic, ambiguous or unsettled, such as an RPA implementation, people tend to turn to symbolic language, including metaphors, to understand and experience something by transferring meaning from a context that is well understood to one that is not (Boraita et al., 2020; Frost and Morgan, 1983; Schultze and Orlikowski, 2001). From a conceptual metaphor perspective (Lakoff and Johnson, 1980), ‘the metaphors we naturally use in language are not linguistic artifices, but indicators of our mental representations. They are cognitive processes that allow us to represent the world around us’ (Boraita et al., 2020: 2). Paying attention to the metaphors that people use in relation to RPA is important because the visualisations, ideals and characteristics they encompass confer meaning on unfamiliar objects, enhance understanding and shape people’s views of this new technology, thus guiding actions in their practice around it (Hassan et al., 2019; Lowry et al., 2020; Schultze and Orlikowski, 2001). As well as their cognitive functions, metaphors, through their proximity to perceived experience, are also vehicles for giving expression to emotions (Fainsilber and Ortony, 1987; Ortony, 1975; Sackmann, 1989). In this sense, they capture the emotional reality of our subjective experience of, say, a new or unfamiliar technology, expressing in language ‘a personal experience, primarily a perceptual experience, but one that probably also has an emotional (affective) dimension’ (Carston, 2018: 200). The emotional dimension of metaphor in sensemaking thus expresses how a perceptual experience makes us feel. Conversely, by influencing employees’ feelings about a phenomenon, the deployment of metaphor in sensegiving can facilitate organisational transformation (Sackmann, 1989).

To be clear, the notion of metaphors is not entirely new in the context of service automation. Some argue that the metaphorical term ‘robot’ in robotic process automation is contentious because an RPA implementation does not involve physical robots. Instead, RPA relies on a piece of software script that automates tasks previously performed by humans. In this sense, the term ‘robot’ may offer a misleading picture of the possible positives and negatives of RPA. On the other hand, the metaphorical terminology of ‘robot’ and ‘robotic’ usefully conveys that the tasks robots take over from humans are repetitive and mundane, hence the phrase that ‘RPA takes the robot out of the human’ (Willcocks, 2020a).

To build on this anecdotal observation of metaphors used in RPA, our study focuses on the use of metaphors as a form of pragmatic sensemaking and sensegiving around an emergent digital technology like RPA. Sensemaking involves ‘meaning construction and reconstruction by the involved parties’ as they attempt to understand the environment particularly in the context of organisational change (Gioia and Chittipeddi, 1991: 442). Sensegiving refers to the process of how some organisational participants (e.g. managers) shape and ‘influence the sensemaking and meaning construction of others toward a preferred redefinition of organisational reality’ (Gioia and Chittipeddi, 1991: 442).

Metaphor is ‘a basic structural form of experience through which human beings engage, organize, and understand their world’ (Morgan, 1983: 601). In essence, metaphors can be seen as both a product (ways of looking at things or organising principles of thought and experience) and a process that reflects how new perspectives of reality materialise and facilitate our understanding of the world (Cornelissen et al., 2008; Lakoff and Johnson, 1980; Schön, 1993; Tsoukas, 1993). Schön (1993) uses the notion of generative metaphor to invoke a mechanism in which metaphors carry over frames or perspectives from one domain of experience to another to generate new meanings (Morgan, 1980; Oswick and Oswick, 2020).

In an organisational context, metaphors are mostly used either as sensemaking devices for understanding environments or as sensegiving tools to create or shape environments and influence perceptions and actions of others (Cornelissen et al., 2008; Oswick and Oswick, 2020). Metaphors are powerful devices to aid understanding due to ‘their vividness, compactness, and ability to convey the inexpressible’ (Oswick and Oswick, 2020: 69). For example, the metaphor of a computer’s central processing unit as a brain helped transfer familiar functions of the brain to a relatively unknown object in the early days of computing, thus allowing us to understand new experiences in terms of a familiar domain (Hassan et al., 2022). However, it is important to recognise that a specific metaphor highlights part of the concept while hiding others (Lakoff and Johnson, 1980). Therefore, to fully comprehend an abstract concept such as a software robot will likely require several metaphors, each of which gives a partial view of this novel phenomenon. For example, Riemer and Peter (2020) and Willcocks (2020b) employ the robo-apocalypse metaphor, which paints a picture of widespread job losses, in engaging in the debate on the implications of RPA. In another study, Klein and Watson-Manheim (2021) use the master–slave relationship metaphor to refer to the human–robot relation where a human is in control by directing the actions of the robot.

In addition to their role in guiding our interpretations of reality, metaphors have been found to provide a justification for action, shape organisational reality and influence people’s thinking and feelings, among others (Cornelissen et al., 2008, 2011; Gioia et al., 1994; Inns, 2002). This way of using metaphors (i.e. sensegiving) is particularly pertinent in the context of organisational change because metaphors are useful devices to facilitate the construction of meaning and interpretation of the change. For example, Gioia et al.’s (1994) study identified several metaphorical representations used by an incoming university president to provide rationales for the chosen strategic planning process, to influence stakeholders’ thinking and avoid conflicts. These metaphorical expressions sourced imagery from music: ‘We are trying to identify a dominant chord from amid the cacophony of individual planning documents’; and war: ‘I know we have to bite the bullet, but I don’t want to immerse this thing in a huge struggle’, and ‘We have to make them comfortable with this or we are in a political war’. This approach of using metaphors fits with what Inns (2002) calls metaphor as a hegemonic tool to influence perception and interpretation. In another study on organisational transformation, Sackmann (1989) found a range of gardening metaphors such as ‘cutting’, ‘pruning’ and ‘planting/nurturing’ employed to convey the tone of growing and working together in the midst of hard decisions.

In the IS literature, studies have mostly focused on specific metaphors employed by individuals to better understand issues in IS development (Hekkala et al., 2018; Hirschheim and Newman, 1991), IS use (Dudézert et al., 2021; Jackson, 2021; Kendall and Kendall, 1993) and different discourses in the study of IS-related phenomena (Schultze and Leidner, 2002; Schultze and Orlikowski, 2001). For example, Hekkala et al. (2018) looked at how IS project team members make sense of the system and the IS development project. Although they found a range of metaphors used by the project members, war-related metaphors (e.g. civil war, picking one’s battles, and bombs are falling) were the most prevalent and influential on the trajectory of the project and outcomes. To help practitioners visualise the importance of culture in IS use, Dudézert et al. (2021) discussed the use of the well-known French metaphor of Astérix to develop a deeper understanding of cultural aspects of (non) appropriation dynamics in knowledge management systems. In his illustration of the discourse dynamics approach, Jackson (2021) showcased the use of metaphors such as journey, illness and anatomy to offer preliminary insights into general workplace automation experiences and implications.

There are different views of how metaphor operates. The comparison model emphasises a carrying over of characteristics from a metaphor often in a concrete source domain to an entity in an often-abstract target domain (Oswick et al., 2002). In particular, this model focuses on a unidirectional mapping process from a source to a target domain by highlighting similarities or overlapping areas between them (Oswick and Oswick, 2020). However, growing evidence in the cognitive science literature indicates that the comparison model cannot account for inferences generated beyond the similarities (Fauconnier and Turner, 1998, 2002; Glucksberg and Keysar, 1990). In other words, the underlying analogical process is a form of conceptual blending that involves an interaction between the source and target domains to generate new meaning. In this sense, metaphor involves ‘the conjunction of whole semantic domains in which a correspondence between terms or concepts is constructed, rather than deciphered, and where the resulting image and meaning is “creative”, with the features of importance being emergent’ (Cornelissen, 2005: 751). Building on this line of thinking, Cornelissen (2005) developed the domains-interaction model with the underlying metaphorical explanation involving a structural analogy (i.e. mapping of correlated structures between a source domain and a target domain) and further conceptual blending (i.e. an elaboration on further instance-specific transferral of information between the target and source concepts). We use the domains-interaction model as a framework to ground our analysis of the role and implications of metaphors in sensemaking and sensegiving around software robots in this study.

In our subsequent analysis, metaphors form an explanatory account of different ways of making sense of software robots that render visible experiences of RPA and different understandings of this emergent digital technology (Morgan, 1986; Oswick et al., 2002; Tsoukas, 1993). In this sense, metaphor as a knowledge product serves as a precursor to more formal theory that offers meaningful insights in the early phases of theory development on sensemaking and sensegiving around automation and software robots (Boxenbaum and Rouleau, 2011; Cornelissen, 2005).

Method

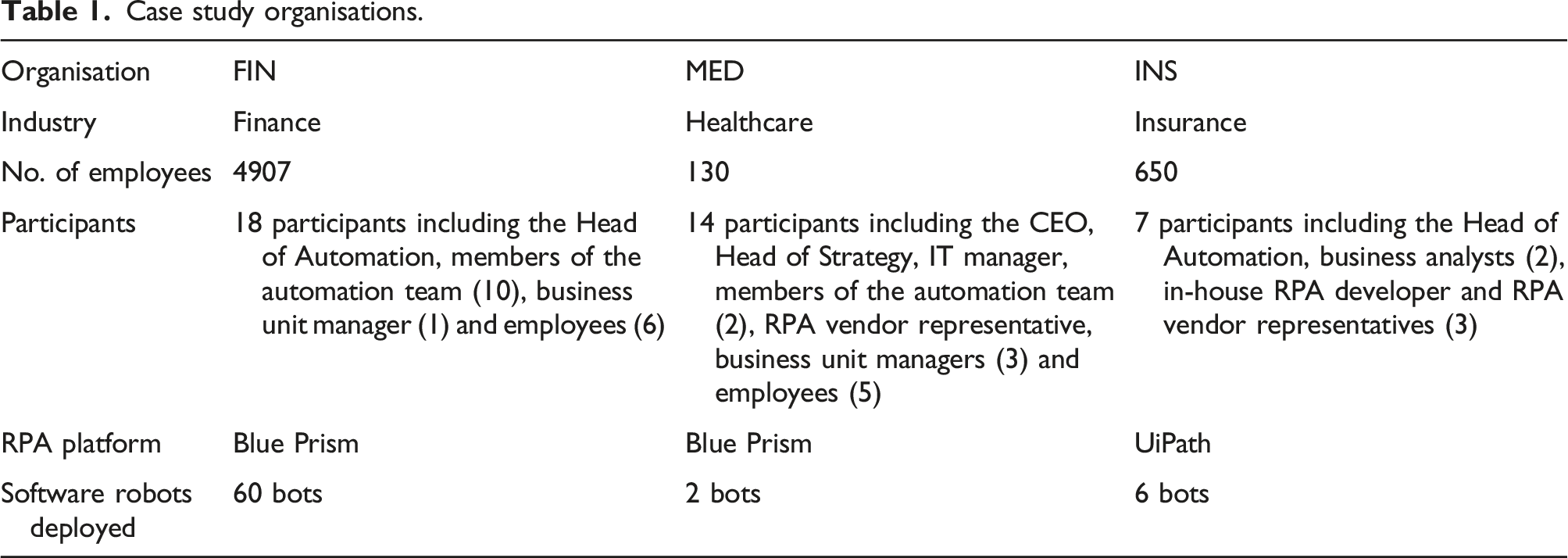

Case study organisations.

The first organisation is a financial institution where we conducted 18 semi-structured interviews with members of the RPA automation team, managers and employees of relevant business units. The financial institution started to implement RPA in 2016 using Blue Prism as an RPA platform provider. At the time of the study, they had deployed 60 attended and unattended software robots that execute a range of business processes including customer address changes, strategic pricing, credit alteration approvals, anti-money laundering management and retirement scheme switches, among others. For example, the automation of the customer change of address process means that the process is now performed by a combination of human employees and software robots. Mailroom staff open returned customer mail and scan it. An optical character recognition (OCR) application reads the customer’s details so that a software robot can compare the scanned details with the information in the customer relationship management system and stop the delivery of correspondence to the address if the customer details do not match. The bot also generates an email to the customer requesting them to update their address. In the credit alteration approval process, a software robot assists human employees in their work by removing a time-consuming part of the process. An administrative employee uses a smart form to ‘trigger’ the bot with a customer’s internal identifier. The bot uses that identifier to retrieve details of the customer’s financial information from different organisational systems. It then populates a spreadsheet with the retrieved information and uses the spreadsheet’s formulas to calculate the loan-to-value ratio, before sending the spreadsheet to another employee for approval of the application. In the case of the retirement scheme switching process, bots complete a customer’s switching request by updating the required systems without the involvement of a human employee.
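To make the attended credit alteration step concrete, the following is a minimal sketch of the calculation the bot delegates to the spreadsheet. It assumes the conventional definition of the loan-to-value ratio (loan amount divided by the value of the security); the record layout, field names and function names are hypothetical illustrations and are not taken from the organisation's Blue Prism configuration.

```python
from dataclasses import dataclass


@dataclass
class CustomerFinancials:
    """Hypothetical record a bot might assemble from several systems."""
    customer_id: str        # the internal identifier entered in the smart form
    loan_balance: float     # assumed field: outstanding loan amount
    property_value: float   # assumed field: valuation of the security


def loan_to_value(record: CustomerFinancials) -> float:
    """Return the loan-to-value ratio as a fraction (loan / property value)."""
    if record.property_value <= 0:
        raise ValueError("property value must be positive")
    return record.loan_balance / record.property_value


def prepare_for_approval(record: CustomerFinancials) -> dict:
    """Build the row a human approver would review.

    The bot only prepares the figures; the approval decision stays
    with a human employee, as in the process described above.
    """
    return {
        "customer_id": record.customer_id,
        "ltv": round(loan_to_value(record), 4),
        "status": "awaiting human approval",
    }
```

The sketch keeps the division of labour visible: the rule-based step is purely arithmetic, while the judgement about the application remains outside the code.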

The second organisation is a healthcare organisation, in which we conducted 14 interviews with senior managers, members of the digitalisation team overseeing RPA implementation, the IT manager, a representative from the RPA vendor, and managers and employees from customer-facing and back-office functions. The organisation has automated the receipting process in the finance department and the online patient booking process in the contact centre with the help of an external consultancy using the Blue Prism software. For the receipting process, the software robot uploads relevant claims to various health insurance claims portals. The bot works autonomously at designated times during the day and night. If it encounters any errors, it registers those cases as business exceptions and sends the exception report to the administration staff who process the exceptions. When patients book their appointments through the organisation’s online booking system, another software robot is used to register the appointment in the patient management system due to the lack of integration between the two systems. Contact centre employees then check each booking that the bot has made by looking at a doctor’s referral and comparing it with the appointment that the patient has made. If there is a match, the employees confirm the booking. If not, they make changes to the booking by liaising with the patient.
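The unattended receipting bot follows a pattern that can be sketched in a few lines: process each claim, and route anything that fails a rule into an exception report for human staff. The sketch below is an assumption-laden illustration only. The `BusinessException` class, the claim fields and the two validation rules are hypothetical, and the upload step is a stand-in for the user-interface automation that a real bot would perform.

```python
class BusinessException(Exception):
    """Raised when a claim cannot be processed under the configured rules."""


def upload_claim(claim, known_portals):
    """Stand-in for the portal upload; a real bot would drive the portal's UI."""
    insurer = claim.get("insurer")
    if insurer not in known_portals:
        raise BusinessException(f"no portal configured for insurer {insurer!r}")
    if claim.get("amount", 0) <= 0:
        raise BusinessException("claim amount missing or not positive")


def run_batch(claims, known_portals):
    """Upload every claim; collect failures instead of stopping the run."""
    uploaded, exception_report = [], []
    for claim in claims:
        try:
            upload_claim(claim, known_portals)
            uploaded.append(claim["id"])
        except BusinessException as err:
            # Hand the case to administration staff rather than guessing.
            exception_report.append({"claim_id": claim["id"], "reason": str(err)})
    return uploaded, exception_report
```

Collecting exceptions rather than halting reflects the human exception management in the IEEE definition quoted earlier: the bot completes what its rules cover and leaves the remainder to employees.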

The third organisation is an insurance company where we interviewed seven people: the Head of Automation, two business analysts and the in-house RPA developer, as well as three representatives from the RPA vendor: the project manager, a designer and a developer. The company has automated six processes using the UiPath platform in different areas of their operations including claims processing, policy cancellation and supplier payments. For example, the company has automated parts of its vehicle glass claims process, using software robots to perform manual actions previously undertaken by customer representatives and claims centre administrators. At one end of the process, a bot validates submitted online claims by checking claim details such as customer identity and coverage against policy records in the company’s customer management system. If the details match, the bot generates a form that is sent to a contracted windscreen repairer, who then contacts the customer directly to arrange the repair. The company subsequently automated the final step of the process, once the repairer’s invoice has been paid by the company, using another bot to check the details against the customer’s policy so that the claim can be closed. Even though this final step represents a very short task for a human employee to perform, the volume of claims to be closed every day meant that automating it removed a mundane activity.

Based on an interpretative research approach (Myers and Walsham, 1998; Walsham, 2006), our initial orientation to the data analysis was explorative (Monteiro and Parmiggiani, 2019). We started by examining our empirical data to investigate how our participants talked about the process, work practices and tools used in RPA implementation. We initially developed descriptive codes that captured our participants’ views and reflections on RPA development and implementation. In working inductively, we noticed that our participants often used metaphors and similar linguistic expressions to refer to the software robots central to RPA in their interpretive accounts. This caused us to analyse the range of metaphors we encountered and conceptualise the various ways in which they were deployed by the participants, together with the effects or consequences of doing so.

Our metaphorical analysis comprised five steps and was informed by the domains-interaction model proposed by Cornelissen (2005), Cameron’s (2007) analysis of metaphor in language use and the approaches adopted by Schultze and Orlikowski (2001) and Hekkala et al. (2018). In the first step, we analysed the interview transcripts for their metaphorical content. Reading through each transcript, we identified all of the linguistic expressions used by participants in relation to software robots that we considered to be metaphorical. Each instance of a metaphorical expression was extracted and placed in a data table, together with the participant and their organisational role, plus a short note outlining the significance of the instance to the researcher. Following Hekkala et al. (2018), we excluded common idioms that seemed to indicate an established conversational usage rather than a way of making sense of a software robot (e.g. ‘firefighting’ in dealing with problematic bots). In the second step, drawing on our initial notes and interpretation, we grouped together linguistic expressions that we thought might reference a particular metaphor based on the similarity of their components. For example, we formed a group of expressions that seemed to belong to the semantic field of children (e.g. ‘Robby’s [a bot] dad’, ‘He was being naughty’, ‘He’s a toddler’, ‘These are teenagers’). This initial grouping enabled us to focus on similar instances when analysing each metaphorical expression in more detail.

Step three involved a more detailed metaphorical analysis of each linguistic expression in the data table. In much of our data, the contrasting terms that indicated metaphorising was occurring were not explicitly juxtaposed (e.g. the software robot is a child). Instead, the linguistic expression typically contained lexical items (sections of language) that referred implicitly to what was being talked about and that served as metaphorical ‘vehicles’ (Cameron, 2007). Identification of the source domain of the potential metaphor thus relied on our examination of the linguistic evidence and ability to show that extra meaning could be produced by making a connection between the terms (e.g. by imputing that someone wanting to ‘smack’ the software robot implicitly positions the bot as a child). For each linguistic expression, we:

1. Established the contextual meaning of the lexical item in the target domain. That is, how it applied to the software robot entity in the context of the interview account (Cameron, 2007).
2. Determined the more basic meaning of the item in its source domain. If this meaning was in contrast to the contextual meaning, and there was potential for extra meaning to be generated as a result of bringing the two together, then we were confident that we had identified a metaphorical expression (Cameron, 2007).
3. Established the structural similarity between the concept referenced in the lexical item in the source and target domains. That is, what is shared between the target and source that produces metaphorical coherence (Cornelissen, 2005; Schultze and Orlikowski, 2001).
4. Explored specific connections that involved implications from the source domain transferred to and blended with elements of the target domain (software robots in the context of RPA) to generate emergent meaning (Cornelissen, 2005).
5. Focused on what the metaphorical expression appeared to enable organisational participants to do. That is, how the expression might be used in sensemaking and sensegiving around RPA development and use. In doing so, we paid attention to which particular groups of people or organisational roles used each expression (e.g. automation team, employees and managers) in their interview accounts (Hekkala et al., 2018).

For example, consider this comment by an employee in the healthcare organisation: ‘She [the bot] doesn’t answer back, and she can work all night.’ This short phrase contains a number of lexical items that suggest robots are able to work continuously and without questioning authority. The basic meaning of these items relates to stereotypical behaviours of a human employee or worker. When transferred from this source domain to the target context, they create a disjunction or incongruence. Clearly software robots, as pre-programmed scripts, cannot ‘answer back’ and ‘do not need to sleep’. The structural similarity between the two domains is that both human employees and software robots are workers. There is an element of humour in comparing the bot’s attributes as a worker with those of a human employee. The further connections made in blending these elements generate the meaning of a software robot as a member of the work team, albeit one that has the ‘ideal’ traits of submissiveness and hard work.
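The analysis of a single expression can be pictured as one record in the data table. The following is a minimal sketch, assuming a hypothetical record layout: the class name `MetaphorInstance` and its field names are illustrative and are not taken from the study's data table, and the values simply restate the worked example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MetaphorInstance:
    """One analysed expression; field names are hypothetical and mirror the analytic steps."""
    expression: str
    participant_role: str
    contextual_meaning: str      # meaning in the target domain (the software robot)
    basic_meaning: str           # more basic meaning in the source domain
    structural_similarity: str   # what the source and target domains share
    emergent_meaning: str        # meaning generated by blending the two domains
    use: Optional[str] = None    # sensemaking or sensegiving use, once identified


example = MetaphorInstance(
    expression="She doesn't answer back, and she can work all night.",
    participant_role="Employee, healthcare organisation",
    contextual_meaning="The bot works continuously and without questioning authority",
    basic_meaning="Stereotypical behaviours of a human employee or worker",
    structural_similarity="Both human employees and software robots are workers",
    emergent_meaning="A member of the work team with the 'ideal' traits of submissiveness and hard work",
)

print(example.structural_similarity)
```

Keeping the use of the expression as an optional field reflects that it is only established in the last part of the expression-level analysis.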

In the fourth step of our analysis, we confirmed our tentative groupings of metaphorical expressions based on their semantic connectedness and suggested a potential conceptual metaphor that we felt best represented each grouping. For example, the various metaphorical expressions that were semantically related to children or their characteristics were represented by the metaphor software robot is a child. This was not a straightforward process, and we went through several iterations in changing and amalgamating the groupings (Cameron, 2007). The eventual six conceptual metaphors that we propose are only potential since ‘we only have access to the language of participants and not to their cognitive processes, other than as revealed through language use’ (Cameron, 2007: 116).

The fifth and final step consisted of aggregating the six identified metaphors into a smaller number of higher-level, more theoretical categories. These categories group metaphors that we argue belong together and that comprise distinctive and different ways of seeing and constituting software robots (Cornelissen and Kafouros, 2008). We use the concept of a ‘root metaphor’ to articulate these categories. Root metaphors can be defined as ‘the dominant or defining way of seeing’ (Inns, 2002: 309). They are frames that support a broad area of meaning and provide a reasoned basis for understanding more specific phenomena (Smith and Eisenberg, 1987). As the product of our analysis, root metaphors offer a way to theorise inductively how people make sense of software robots in their situated contexts using metaphors. We suggest that they are heuristic devices that offer insightful perspectives (Cornelissen, 2005) that help us to think about and better understand people’s engagement with the emerging digital technology of RPA.

Findings

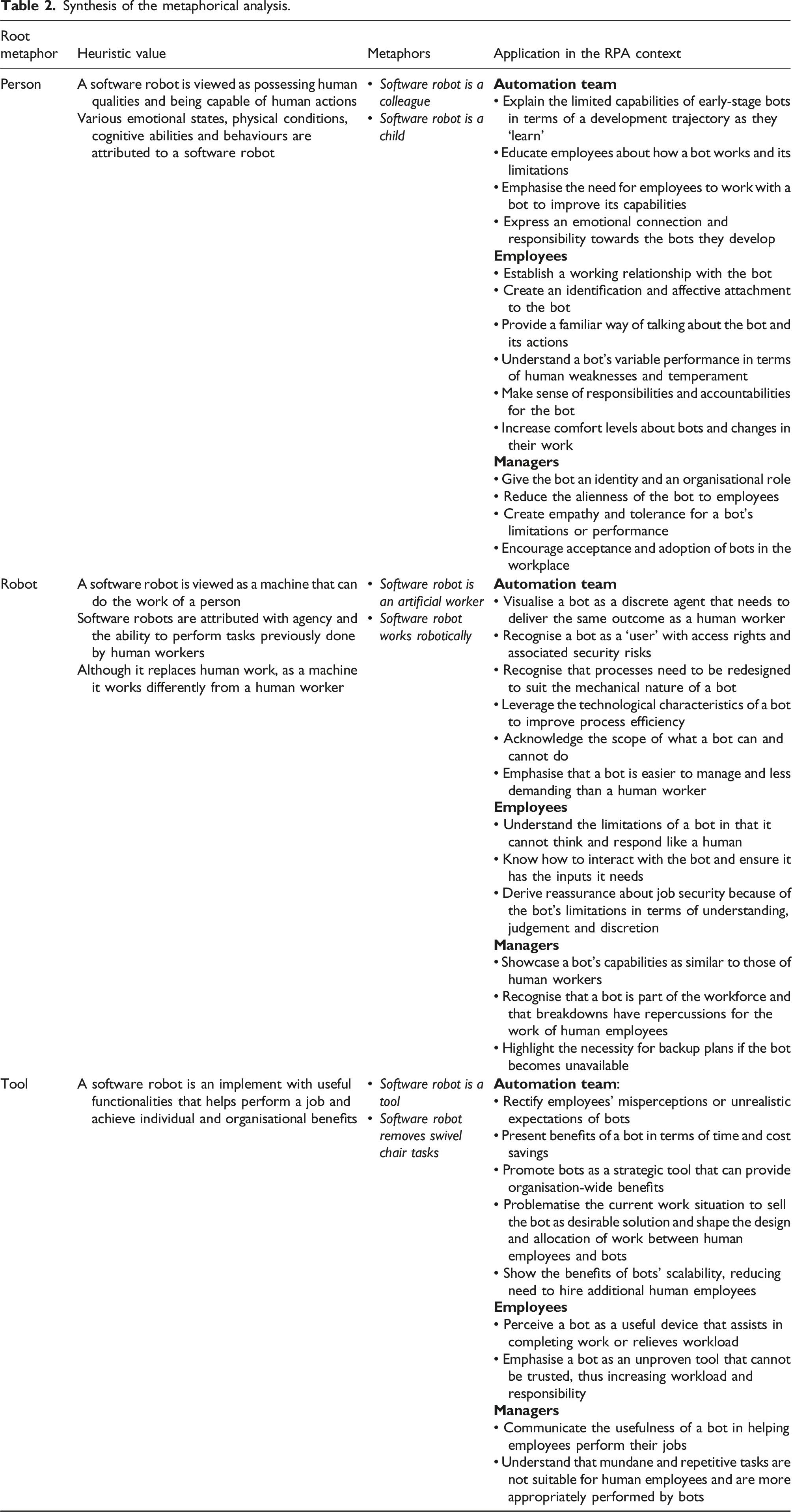

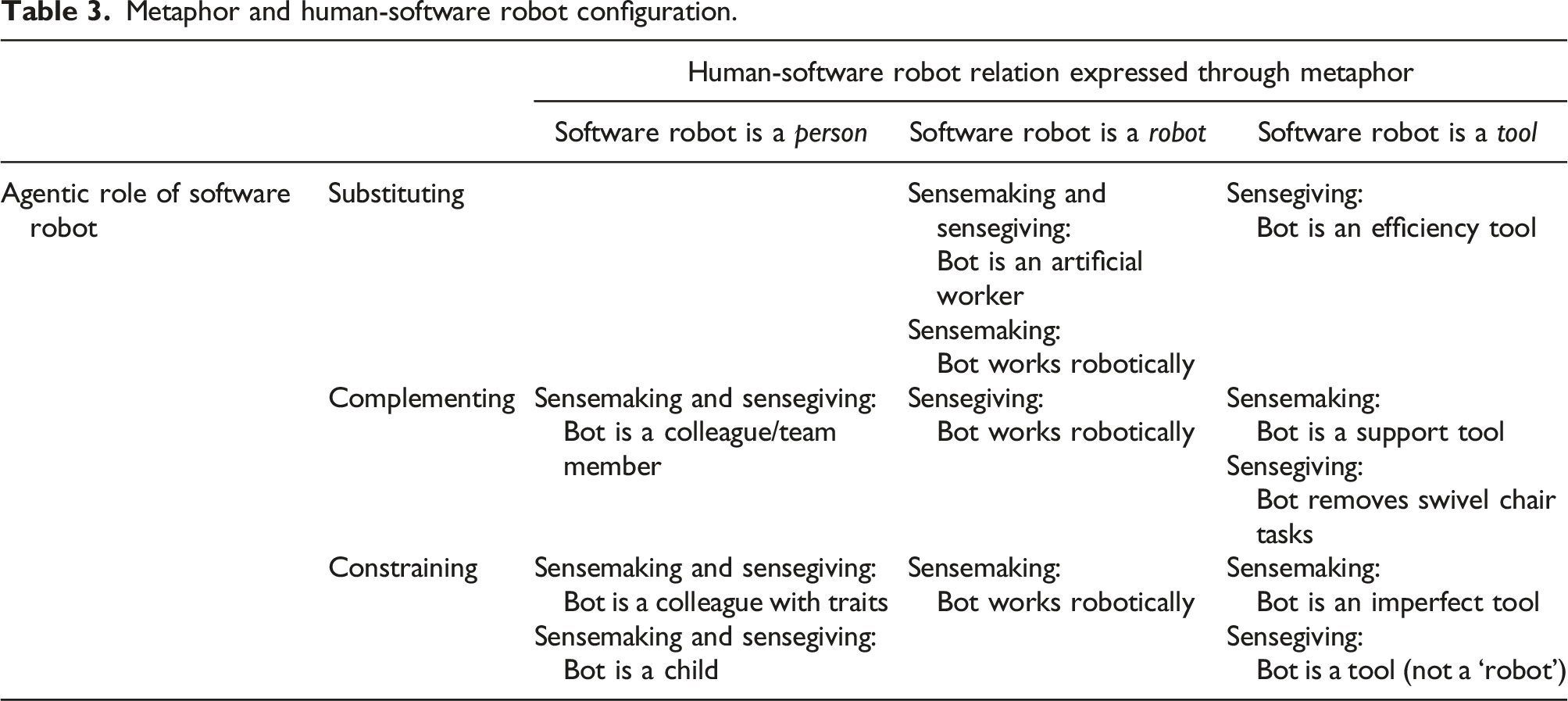

Based on our analysis, we identified the three root metaphors of person, robot and tool. Each root metaphor encompasses distinctive yet nuanced conceptual metaphors that are semantically connected to the root metaphor. For example, the software robot is a child metaphor, which is grouped under the ‘person’ root metaphor, highlights dependence on caregivers, basic cognitive and behavioural abilities, and the necessity to learn and be taught. Mapping this structure to the target domain of the software robot allows participants to make and give sense of the rudimentary state of early-stage bots that still need to be further refined to reach their full capacities. The person root metaphor also includes the specific metaphor of software robot is a colleague. The ‘robot’ root metaphor encompasses the software robot is an artificial worker and software robot works robotically metaphors, while the ‘tool’ root metaphor comprises the software robot is a tool metaphor as well as a distinctive ‘swivel chair’ metaphor.
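The grouping of conceptual metaphors under root metaphors described above can be summarised as a simple lookup structure. This is a minimal sketch only: the dictionary layout and the helper name `root_of` are illustrative assumptions, while the groupings themselves restate the preceding paragraph.

```python
# Root metaphors and their constituent conceptual metaphors, as listed in the text.
# The dictionary layout and the helper name are illustrative assumptions.
ROOT_METAPHORS = {
    "person": ["software robot is a colleague", "software robot is a child"],
    "robot": ["software robot is an artificial worker", "software robot works robotically"],
    "tool": ["software robot is a tool", "swivel chair"],
}


def root_of(conceptual_metaphor: str) -> str:
    """Return the root metaphor under which a conceptual metaphor is grouped."""
    for root, members in ROOT_METAPHORS.items():
        if conceptual_metaphor in members:
            return root
    raise KeyError(conceptual_metaphor)


total = sum(len(members) for members in ROOT_METAPHORS.values())
print(total)
print(root_of("software robot is a child"))
```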

Drawing on the structural analogy between the source and target domains and further conceptual blending of elements between the two (Cornelissen, 2005), for each of the six conceptual metaphors that we identify, we elaborate on their metaphorical operation, the way employees, managers and automation team members use them to make or give sense of software robots, and the implications of this meaning-making around working with this emergent technology. It is important to note that participants sometimes use different metaphors to express their perceptions of software robots. For example, they describe a software robot with the attributes of a child yet also refer to it as a robot or colleague in the same sequence. In those cases, we highlighted the dominant metaphor as reflected in the original voice of the participants yet want to acknowledge that there is often no exclusive ‘way of seeing’ software robots.

All names of participants, organisations, software robots and systems have been disguised using pseudonyms.

Person metaphor

Personification involves the metaphorical transfer or attribution of human characteristics to an inanimate object, ‘where something inanimate is treated as if it has human qualities or is capable of human actions’ (Knowles and Moon, 2004: 7). In the context of RPA, personification metaphors involve a cross-domain mapping where a software robot is specified as being a person, with aspects that more correctly belong to a human being (Dorst, 2011). There were numerous examples in our data of the metaphorical transfer of typical human traits like emotions, physical states, cognitive abilities and behaviour patterns from the source domain of a human person to the target domain of software robots. Each personification emphasised specific human features that were mapped onto the non-human bot, generating two prevalent conceptual metaphors that depicted software robots as a particular kind of person (Dorst, 2011): as a colleague or as a child.

Software robot is a colleague

When people use this personification metaphor, they talk about the software robot as if it were a human colleague that performs tasks, works alongside them in processes and generally fulfils their work responsibilities. Often this was associated with referring to the software robot by a nickname within the work team, something that occurred in all three of the organisations we studied. These names were typically human names, sometimes a diminutive or corrupted form of ‘robot’ or reflecting some aspect of the process a bot was working on: ‘Everyone of course has different names for it … Every team calls their robot something else … Like, I heard a few people calling the [strategic pricing process] robot Sparky’ (FIN_15, Business Analyst).

Giving it a name was an essential step towards treating the bot as a person and associating with it as a colleague: People talk about the robot as if it’s like a person. They go, ‘The robot – it’s kicking out a lot of exceptions today’. And I know one of the other teams calls it [the bot] Robby … Their nickname for it is Robby and they’re like, ‘Robby’s having a few troubles this morning’ … And no one has ever told them to do that. They just … People do start to associate with it. (FIN_4, Risk Manager)

In the above excerpt, ‘kicking out’ and ‘having a few troubles’ are linguistic expressions that in this context are suggestive of human experience. Part of establishing a working relationship with the software robot (Willcocks, 2020a) involves giving it human characteristics typical of a colleague, including behaviours and personality traits. Thus, a bot that is temporarily unavailable might be ‘sleeping’ or being ‘lazy’ – respectively, a human behaviour or trait that might be ascribed to a colleague: ‘Every time Bobby [the bot] used to go offline, they’d be like, ‘Oh, he’s sleeping again. He’s so lazy.’ He’s very much become a character. People do refer to him like a person’ (MED_4, Digitalisation Coordinator). It is important to note that not every reference to a bot by a human name indicated an affective attachment to a personified software instance. When the names were used outside the work team, for instance by technical staff, it was often as shorthand to simply indicate the process being automated. Nonetheless, this usage reflects an acceptance of the wider practice of referring to a software robot as if it were a person.

Associating personality traits with a software robot also allows employees to make sense of the bot’s way of working. In the following excerpt, a senior manager portrays the bot as a ‘stickler for rules’ in order to explain why the bot is unable to execute its task (booking an appointment) if the name the patient provides is not a perfect match with their registered name in the patient database: The most recent one we’re doing is that Bobby is a real stickler for rules. We try to talk about him as a person so that people like him. ‘He’s a stickler for rules’, and you sort of put these personality traits onto Bobby. ‘Oh, yes. He follows rules really tightly’. That means that if ‘Sam Smith’ tries to book an appointment and in our patient database he’s ‘Samuel Smith’…., he [Bobby] says, ‘There’s no match’. (MED_2, Head of Strategy)

The use of ‘Oh, yes’ here is intended to indicate comprehension on the part of an employee understanding an aspect of the bot’s operation. The implied personality trait in the metaphorical expression ‘He’s a stickler for rules’ reminds the employee of the inflexibility of the underlying rules in the bot’s script. The same manager explained that attributing human capabilities to the bot also had an educational benefit in helping employees without technical knowledge to understand how software robots work and interact with other systems: When you explain it like that, people … get what you’re talking about. Instead of explaining that we are now going to use an API [application programming interface] between – now he’s [the bot] going to really chat to that backend of – We try to use more colloquial terms. (MED_2, Head of Strategy)

Colloquial terms like ‘chatting’ humanise the actions of the bot and explain what the bot is doing in metaphorical language that makes sense of the bot as a colleague. This approach was confirmed by another participant in the same organisation: ‘Not everyone is very good at understanding IT and technical stuff. I mean trying to talk to people about how he [the bot] works and what way, it’s easier just to imagine him as a person’ (MED_4, Digitalisation Coordinator). However, taking the personification of software robots to its logical conclusion risked employees overestimating the bot’s cognitive abilities: ‘Maybe that’s one of the downfalls of pretending he’s a human, because they [employees] assume that he will think’ (MED_2, Head of Strategy).
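The rule-following behaviour that the ‘stickler for rules’ expression conveys, and that a bot does not ‘think’ its way around, can be illustrated with a strict comparison. This is a minimal sketch under stated assumptions: the function name `names_match` is hypothetical, and the participants do not describe the bot's actual matching logic beyond the ‘Sam Smith’ and ‘Samuel Smith’ example quoted earlier.

```python
def names_match(submitted_name: str, registered_name: str) -> bool:
    """Strict comparison (an assumed rule): any difference in the name counts as a mismatch."""
    return submitted_name == registered_name


# The example from the interview: a shortened first name does not match the registered name.
print(names_match("Sam Smith", "Samuel Smith"))
print(names_match("Samuel Smith", "Samuel Smith"))
```

A human colleague would probably recognise the two names as the same patient, whereas a scripted rule of this kind reports no match, which is the inflexibility the metaphor is meant to communicate.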

Personification creates a sense of identification with software robots as participants invest in them psychologically (Willcocks, 2020a). For example, one manager noted ‘I would talk to Mary [a bot] like she was a person. When she actually doesn’t work or she needs an update, I say, “Mary’s having a little hissy fit or tantrum”’ (MED_3, Finance Manager). This extended to conversations with colleagues: ‘In the team, we talk about Mary like she’s one of the team. [For example,] Mary is actually down so she must be sick. Then I would ring [the IT Manager] and go, “What’s going on with Mary?”’ (MED_3, Finance Manager). Having a tantrum or becoming sick are human behaviours readily associated with a colleague. Using the colleague metaphor in this way helps participants to make sense of and relate to problems encountered in working with the bot. Just as human colleagues can have ‘bad days’ where they are upset, go slow or refuse to work, so do software robots when technical issues occur that prevent them from working as intended.

Seeing a software robot as a colleague could involve employees transferring typical human behaviours like sleeping and waking from the source domain to the target domain, providing a way of understanding the bot’s work schedules and requesting a change to improve their collaboration with the bot by aligning their work schedules: So, they [my team] would say to me…. ‘Can you ask what time is Robby waking up and what time is Robby going to sleep?’ And we need Robby to wake up earlier … I've got one [employee] that comes in at six o’clock. So, if she wanted to send a smart form to Robby but Robby only wakes up at eight, she’s going to sit for two hours before she can do anything … So, then they extended Robby’s waking hours. (FIN_11, Business Unit Manager)

The healthcare organisation senior manager quoted earlier suggested that personifying the bot is an intentional strategy to reduce the alienness of software robots and promote empathy towards them among employees: ‘We try to talk about him as a person so that people like him’. Indeed, a common collegial role assigned to a software robot was that of a team member: ‘It’s good because he [the bot] is a part of the team, and the team does have to work with him. It’s nice to consider him in that way’ (MED_4, Digitalisation Coordinator). This deliberate personification (Dorst, 2011) involves the purposeful use of metaphor to change the employees’ perspective on the software robot (the target of the metaphor) by making them see it from the different conceptual source of a colleague. In this case, it emphasises a close working relationship with the bot (as being part of their team) and not just a computer system that they use. Consider the following excerpt in which two back-office employees at the healthcare organisation discuss the bot that they work with: MED_7: We refer to her as Mary … MED_8: She is part of our finance team. MED_7: My colleague.

Acknowledging the bot as part of the team and knowing that its breakdown has repercussions for its human colleagues, one of the back-office employees kept other colleagues informed to establish transparency and clarify delay in work progress: I think the branch had an email on Tuesday just to say, ‘Hey guys, sorry, but Mary has not worked since Saturday. You'll have noticed that none of your insurance [claims] have been receipted’. Because some of them [branch employees] are waiting for the receipting to happen … I always like to keep the branches up to date because I've been there. I sent them one on Wednesday morning saying, ‘She [Mary] has worked overnight and gotten your insurance [claims]’. I got three emails back from three of my branches, [saying], ‘You slavedriver. Did she have a break?’ She is looked at as being part of our team. (MED_8, Back-office Employee).

While meant in a humorous way, the reactions of the branch employees calling the back-office employee a ‘slavedriver’ for making the bot work all night without ‘a break’ clearly reinforce the personification that assigns the bot a role as a colleague in their team. Here, the conceptual metaphor of the software robot as a colleague also has an affective element that creates empathy and makes the bot seem less alien (Cameron, 2008). This affective function of the bot’s personification was similarly observed by a participant in the financial institution: ‘There’s a sort of affection now with – especially with the workflow person that … [is] dealing a lot with Robby [the bot], yeah’ (FIN_11, Business Unit Manager).

In one of the organisations in our study, the healthcare organisation, we even observed participants deliberately attributing gender to the software robots. In the linguistic expressions that did this, typical stereotypes associated with human gender roles are transferred from the source domain in order to convey specific (desirable) characteristics of software robots. For example, by giving the software robot a female name, the (female) finance manager emphasised that the bot possesses the stereotypical strengths of women, including the ability to multitask, being efficient and paying attention to detail – all of which made the bot a preferred colleague in a predominantly female team: That’s why we named her [Mary] as well. And I call it ‘her’ because I believe that women are more efficient. We can multitask a lot better. I'm not saying that men can’t do it, but we’re more efficient and we can multitask. We pay attention to detail. We wanted a woman in the team. (MED_3, Finance Manager)

Gender was also utilised when comparing the relative performances of different software robots. The CEO observed ‘When we started the onboarding process, Bobby was making a lot of mistakes, so the team was giving Bobby a lot of grief. There was even talk like Mary was way better because Mary is a woman’ (MED_1, CEO). Indeed, a team member noted: ‘We had a meeting with our IT manager, and he was saying how Mary was working great and Bobby was having some issues. Someone made a comment like, “Oh, a typical man”’ (MED_5, Digitalisation Coordinator). Despite the poorer performance of the ‘male’ bot arising from the underlying complexity of the automated process, the number of system interfaces required, the complexity of those interfaces and the relative newness of the bot, the participants playfully attribute its many mistakes to it being a ‘male’ robot. This assignation of gender, a human characteristic, to an inanimate object suggests both a high degree of identification with the software robots and their inclusion in the traditional banter between colleagues in a diverse workforce.

Software robot is a child

Another conceptual metaphor that depicted software robots as a particular kind of person was that of a software robot as a child. When participants used this metaphor, they often allocated typical family roles to members of their team in relation to the bot, such as parents: That’s what everyone calls me [Robby’s mum]. Because I went on holiday and they were like, ‘Oh Robby missed you, Robby missed his mum. He was being naughty. He was sick while you were gone’. And I was like, ‘Sorry!’ (FIN_13, Back-office Employee)

Being ‘naughty’ and missing one’s mother are all behaviours associated with a child. Using the child metaphor allowed this employee to express her emotional reaction towards the bot when it worked slowly in feeding cases back to the human employees for further processing: ‘I get annoyed with Robby. I just want to smack Robby and be like, “Why didn’t you quickly send these to me before everyone went home?”’ (FIN_13, Back-office Employee). Traditionally, and in some cultures today, parents smack their children if they misbehave and cause trouble for the parents. Wanting to ‘smack Robby’ transfers a parental disciplinary measure for ‘being naughty’ from the source domain of the child to the target domain of the software robot.

The use of these metaphorical expressions allocates certain caring responsibilities to those employees in such ‘parental’ roles, as well as a degree of accountability for the behaviour or misbehaviour of the software robot. In this case, the back-office employee given the role of the bot’s ‘mother’ exercises her responsibility for the bot by escalating the incident to another colleague in the automation team, the bot’s ‘father’: ‘I’m always like “Guys, Robby’s feeling sick. Please just be mindful. I’ve contacted Robby’s dad and hopefully Robby’s dad can fix him and give him some medicine”, you know?’ (FIN_13, Back-office Employee). This metaphorical language suggests an emotional attachment to the bots on the part of employees. This attachment was very striking in the language used by a member of the automation team who oversees the software robots working in the financial institution: ‘[I’m] still heavily invested. They’re still my babies. So, I see one missing, I’m going to check on that’ (FIN_10, Robot Controller).

Using the child metaphor also promoted understanding and acceptance of the limited capabilities of bots that are in the early stages of development. Transferring the mental models that we have of human children with often basic cognitive, mental and behavioural abilities from the source domain to the target domain of the software robot allows employees to understand that just as children follow a trajectory of human development, software robots need to be taught in order to be able to execute tasks and processes effectively: ‘We’re like, “Yeah, but we need to teach our bot how to do that. So can you talk us through it a little bit slower?”’ (INS_2, Business Analyst). The automation team used the child metaphor to help employees understand that developing the bot requires time and effort, but they can harvest the benefits in the long run: He’s [a bot] a toddler. We need to teach him everything. He doesn’t know everything yet. I think that helps people relate a bit more to him, like, ‘Oh, we need to teach him and work with him, and he will improve. We just need to put in the groundwork.’ Which they’ve seen the rewards of now, but it took a while. (MED_5, Digitalisation Coordinator)

Robot metaphor

Perhaps unsurprisingly given the RPA terminology, some participants invoked the metaphor of a robot when making sense of software robots. Here we need to distinguish between the labelling of automated agents as ‘software robots’, itself metaphorical, and how the conceptual metaphor is mobilised in participants’ talk about these software products (Boraita et al., 2020). The metaphorical ‘robot’ designation originally used by proponents of RPA appears to have been deliberate, in order to convey the sense of an ‘artificial worker’ that could substitute for human labour as part of a ‘virtual workforce’ (High, 2019; Hodson, 2015; ThinkAutomation, n.d.). From the online Britannica Dictionary, the basic meaning of a robot is ‘a machine that can do the work of a person and that works automatically’ – in other words, an automaton. Unlike traditional robots and automata, which have a tangible, physical presence, software robots or ‘bots’ are digital and invisible (Lebeuf et al., 2019; ThinkAutomation, n.d.). In the context of RPA, our participants drew a structural similarity between the concept of a robot as automaton and a software robot in two important ways: as an artificial person that works – an artificial worker, and as a machine that works quite differently to a human. That is, a software robot is ‘robotic’ in acting as a human worker in system interactions (Syed et al., 2020) and ‘robotic’ in how it works automatically with ‘robotic accuracy and efficiency’ (ThinkAutomation, n.d.).

Software robot is an artificial worker

The metaphorical imagery of the software robot as a non-human, automated agent that performs tasks that a human worker would otherwise do is powerful and persistent. In the most obvious sense, the bot as an artificial worker replaces a human worker (either partially or completely) in the performance of the organisation’s work: ‘In this particular case, they have processes where you completely remove the human out of the equation’ (FIN_9, Data Analyst). Conceptualising software robots as analogous, but non-human, workers, appears to enable members of the automation team to visualise a bot as a discrete entity or agent that needs to perform a set of discrete tasks to achieve the same outcome as a human worker: ‘We can just imagine how a human or a robot will perform the task and get a very detailed explanation of each action’ (INS_6, RPA Developer - Consultant). Developers can see things ‘from a robot’s perspective’ (INS_5, RPA Designer - Consultant). The dominance of the robot characterisation among the automation team over, for example, the personification seen among many business unit employees is reflected on by a participant who worked with both groups: ‘I guess it depends on who I’m talking to. If I’m talking to the [technical] support team, he’s a robot, if I’m talking to the call centre, he’s a person’ (MED_4, Digitalisation Coordinator).

As artificial workers, software robots are perceived as entities that act, that have agency in the performance of their work. For example, we hear of bots finding and fetching, matching and checking or looking and putting: ‘From that point, the robot will go through that list each day and find out … fetch out the new claims and then do all the client record matching, the checks, make sure everything’s all correct’ (INS_7, Project Manager - Consultant). Similarly, ‘Then the robot would go looking here for that information; the robot goes there and then the robot puts it there’ (FIN_11, Business Unit Manager). Although the participants describing these actions are presumably well aware that it is a pre-programmed script executing these functions, their references to actions performed by an artificial worker reinforce the task-oriented and performative view that dominates the technical RPA process: ‘And our bot will pick that [request from a customer] up, which comes through as an email, and actually perform the human actions of cancelling the policy, processing a refund if it’s available’ (INS_4, RPA Developer).

In a further connection between the software robot and an artificial worker, rather than a piece of software interacting with another computer system, the bot becomes analogous to a human user, complete with a user account and specific access rights: ‘They have the same access to applications as humans do. They operate under the same controls as humans do. Why [give] them system accounts rather than human [user] accounts …, rather than thinking this is sort of mimicking a human?’ (FIN_1, Head of Automation). Levels of access to organisational databases, systems and applications are determined by the human worker role the bot is ‘mimicking’: ‘Like human beings, right? You only have this role, [so] you can only approve certain things … So, the ABC robots will not have access to those employee access levels, the ones that the XYZ robots would have’ (FIN_10, Robot Controller). Conceptualising the bot as an artificial worker performing a defined organisational role further helps the technical staff to understand and implement the necessary system security and privacy standards for the software robots. The meaning that emerges from this blend of information from the source concept of a robot as an artificial worker and the target concept of a software robot involves an image of the bot as a user capable of presenting a security risk: ‘We don’t want the robot to be a superuser as an individual bot can do random stuff to the database, which is quite risky’ (INS_5, RPA Designer - Consultant).

As artificial workers, software robots were sometimes perceived as superior to human workers. For example, consider the phrase, ‘All my robots are fine. I can run them at midnight, it’s fine. They don’t complain and don’t take holidays even … And like I said, they don’t need tea breaks’ (FIN_10, Robot Controller). This sequence contains lexical items that suggest software robots are able to work late, do not complain and do not take tea breaks or holidays. These items reference stereotypical behaviours of a human employee or worker. When transferred from this source domain to the target context, they create an incongruence in that software robots cannot complain and do not need a break. While the structural similarity between the two domains suggests that both human employees and software robots work, the further connections made in blending these particular elements of human workers’ behaviours with those of software robots generate the meaning of an artificial worker that works robotically rather than as a human worker would. The participant who made this statement was responsible for overseeing the operation of software robots in her organisation. Her deployment of this metaphorical expression was intended to convey to the interviewer that as artificial workers, software robots were easier to manage and could work continuously compared to their human counterparts.

On the other hand, as artificial workers, software robots also at times presented problems for their managers. In the following excerpt, an analyst explains how the non-availability of a bot, presumably for technical reasons, is analogous to a human calling in sick: ‘Robots also disappear, like they terminate. Terminate basically means your employee’s called in sick. So, it’s like … I was expecting ten employees to come [to work], [but] only eight are working today’ (FIN_15, Business Analyst). Thus, like regular employees, bots do not always turn up for work, necessitating a plan to cover for their absence: ‘You’ve always got to have a backup plan, so if Robby [the bot] is not working today do we all just fall down and go home, or can we just do what we’ve always done?’ (FIN_11, Business Unit Manager). This would normally involve a human member of the team taking over the task again. However, newer staff members might not have the training or experience to do so. In the following excerpt, the same manager discusses how, when human employees learn to work alongside software robots as artificial workers, they may come to rely on the bot’s ‘expertise’: There’s a new [employee] … She’s never done the manual process. All she’s been taught to do is a smart form and send it to Robby [the bot]. If [Robby] was sick … she wouldn’t be able to prepare the memo [that the bot does] … because she’s never done that … She doesn’t have the understanding of all of that. That’s the only thing when you do get a robot to do a task, you lose that value of understanding for the employee. (FIN_11, Business Unit Manager)

In such examples, the division of labour between humans and automated agents becomes clearly distinguished and more absolute in a sense.

Software robot works robotically

The preceding discussion emphasised the perceived status of a software robot as a worker, albeit an artificial one. Eventually, however, this metaphorical focus breaks down when the automation team are confronted with the differences from a human worker arising from the bot’s artificial nature. As a machine, a software robot is a made object, a technical artefact, with particular ways of working and associated limitations and strengths. While a software robot may do the work of a person, it does those tasks differently: ‘We’re making the robots work in a bit of a different way to a person’ (INS_1, Head of Automation). Although a robot replaces human work, ‘it may not resemble human beings in appearance or perform functions in a humanlike manner’ (Moravec, 2021). One participant described this vividly as ‘Robots don’t have eyes, right?’ (FIN_9, Data Analyst) to highlight the differences to human workers. Instead of using eyes and a brain to identify and combine separate pieces of customer information from multiple computer screens, the software robot of course reads lines of XML and combines data fields to get the same information. As the same participant explained, ‘When you’re thinking about a robotic process, yes, ideally you want to mimic what the human does. But mimicking doesn’t mean do exactly this thing that a human does. It’s mimicking the output of the step the human does’ (FIN_9, Data Analyst). In other words, ‘When you design the future process, you’re designing it for a robot rather than a human’ (FIN_1, Head of Automation).

Focusing on the software robot as artificial and non-human reminds the automation team that while the outcome of the process automation will be the same, the way the software robot works offers technical advantages that can improve process execution. For example, the bot can connect more efficiently and directly to other applications and databases using an application programming interface rather than via a (human) user interface: ‘The robot doesn’t have to use an Outlook [email] user interface. It could just use an Outlook API, things like that, to improve the current process’ (INS_5, RPA Designer - Consultant).

This focus emphasises the ‘robotic’ or programmed nature of how the process is automated (Willcocks, 2020a) and the limitations associated with that. Software robots are rule-based and execute tasks by following a sequence of predefined steps: ‘Is it all logic rules based, everything’s in an Excel spreadsheet sort of thing?’ (INS_4, RPA Developer). Bots cannot handle the ‘grey areas’ of tasks that require judgement, understanding, even human discretion or empathy: ‘Robotics is great for processes that are black and white. You know, it’s either this or it’s that, there’s nothing in between or there’s really strict rules … A robot can [only] do what it’s programmed to do’ (FIN_12, Back-office Employee). The methodical, even mechanical, nature of how a software robot was perceived to work was reflected in metaphorical expressions that referenced bots ‘breaking down’, especially if something ‘throw[s] a spanner in the works for your bot’ (INS_2, Business Analyst): Actually, watching it [the bot] work was very interesting, because … I can see what it’s doing. It’s doing the same things a human would do, just in a very specific, methodical order. But any time there was a spanner [in the works] it was just really interesting watching it struggle. (FIN_12, Back-office Employee)

Metaphorically emphasising the robotic way a bot works also helps the automation team explain to the business unit what the bot can and cannot do, for example, when soliciting information on a work process to be automated: We actually educate them on … how granular we do have to get when building a bot, because they’re so used to just instinctively doing something a certain way … A bot can’t do it instinctively. We have to build rules around it. (INS_2, Business Analyst).

Similarly, human workers interacting with a bot need to understand its limitations, such as the need for precision in data entry: ‘It’s about feeding the robot the information that it needs to do the steps that it wants to take’ (MED_5, Digitalisation Coordinator). In addition, the limitations of the bot make it less threatening to the human workers who co-exist with it. Here, the difference between artificial and human workers is reassuring (potentially not always the case with intelligent automation as the automation field advances): They realised that … it can’t learn by itself. It’s a very simple robot. It needs to have yes/no answers …, very simple base rules. That changes everyone’s perceptions, so they’re not as intense. Like, ‘Oh, it’s not going to take my job.’ (FIN_6, Digital Workplace Manager)

Tool metaphor

In addition to the person and robot metaphors used to make sense of a software robot, participants across all organisational roles, including employees, automation team members and managers, variously saw a software robot as a tool with different connotations. From its source domain definition, a tool is described by the online Britannica Dictionary as an implement used to do a job or to help achieve something. In this functional sense, a tool is often associated with the characteristics of usefulness and helpfulness.

Software robot is a tool

Some employees drew on an underlying correspondence of a tool as a useful device to highlight a software robot’s benefit to their work. In contrast, other employees drew on the limited usefulness of an unhelpful tool to highlight a bot’s failures and shortcomings and suggest that it is an imposed and unproven add-on from an organisation that increases their workload due to its dubious performance. Those employees who saw a software robot as a useful tool with helpful functionalities did so because the bot assists them by either completing their tasks or executing tasks autonomously and, therefore, reducing their workload. For example, a call centre employee at the healthcare organisation explained that a software robot, Bobby, collects necessary information about patients and their referrals as part of an online patient booking process. The collected information is helpful when call centre employees need to contact patients to ask for further details: It [the bot] is a pretty handy tool. We get the referrals through it. It takes out a lot of stress. Like, we know what the patients are talking about because we’re looking at their referral. It’s really helpful in that way. (MED_11, Call Centre Employee)

Although the usefulness and helpfulness characteristics of a tool are often linked to assisting a user to complete a task, this common characteristic between a software robot and a tool enables the participant to create new meaning of a bot’s usefulness, which is to lessen work strain.

In contrast, other employees saw a software robot as an unproven tool with unacceptable performance that had been imposed on them by the organisation. As a result, they did not develop trust in the bot’s work, suggesting that it did not complete the work properly or was too slow. In particular, these employees often compared the bot’s performance unfavourably with that of human workers. For example, despite referring to the software robot, Bobby, as ‘him’, this manager in the healthcare organisation viewed the bot as a tool rather than as a colleague: No, no, as a tool. And I think that’s why I double-check him [Bobby]. Because if it was a person, I don’t know, I guess because if you’ve got this credibility, I wouldn’t tend to double-check your work all the time. I guess I look at him as a tool. (MED_12, Receptionists Manager)

By attributing credibility to a person and juxtaposing a lack of credibility to the software robot as a tool, this participant is emphasising the lack of desirable usefulness of the bot. Further, the bot is perceived as essentially redundant and adding unnecessary work to an already significant workload: ‘It’s a tool that’s on top of everything else that we’ve got to do … A lot of people were very thorough to begin with so they’re like, “Actually, I’m fine, I’m good … I don’t need Bobby”’ (MED_12, Receptionists Manager).

Similarly, for an employee from the financial institution, redesign of a process so that a software robot populated the memos required for a lending decision did not offer a useful solution for her work. Instead, the shift of responsibility from a human co-worker to a bot aggravated her uneasiness and increased the perceived burden of accountability: But more responsibility lies on us if we don’t actually check the memo and something goes wrong. I get the blame too for not rechecking everything. So, more responsibility means that we have to go and check each and every thing, yeah … Just another system. (FIN_13, Back-office Employee).

Although the automation teams at the financial institution and insurance company also use the tool metaphor in relation to software robots, they do so to create different meanings. For example, some purposefully employ a tool metaphor to correct employees’ misperceptions or high expectations of what a bot can achieve by highlighting that a bot as a tool mostly works, but will have occasional failures: You will have exceptions. We have STP [Straight Through Processing] 80 per cent, so 20 per cent of the instances you will have an exception. You can have bad days where that happens more than often … It’s just a tool and it works very well, and it saves you time, but sometimes… it doesn’t. (FIN_9, Data Analyst)

Another automation team member attempted to explain that a software robot is a rather simple script-following tool, not a high-functioning ‘robot’, to help employees develop more realistic expectations of a bot’s capabilities and performance: When we first started talking about robotics, some people … were thinking that it might be a real robot. And it is a bit of a marketing term, like it’s really just like a macro kind of thing. And so, in the beginning we did a lot of roadshows saying, ‘Here’s the myths about robotics and this is what it actually is; it’s just a dumb piece of software’. (FIN_2, Process Automation Manager)

In addition to using a tool metaphor to help correct employees’ perceptions about a software robot’s performance, the automation team at the financial institution generalised the task-specific usefulness of a tool in the source domain to build up a case for the bot’s benefits in the target domain to increase its visibility and acceptance among managers and employees. They deliberately elevated a software robot from an operational tool to a strategic tool that can deliver organisation-wide benefits: Our strategy served us well … We didn’t want it being seen as an operations tool. So again, the benefits are big and broad. The tool can do many things for many different people. So, our strategy there was to try and share the love, is sort of how we described it. (FIN_1, Head of Automation)

Similarly, automation team members at the insurance company mostly use the cost and time savings of a software robot to showcase its value not only as a tool that can perform straightforward and mundane tasks for human employees but also as a tool that allows an organisation to shape the design and allocation of work activity using the time saved by deploying bots: ‘We generally like to look at let’s say how many transactions are processed a month obviously … There may be recorded data on how long somebody has taken to carry that out. It could be costs as well, costs associated with not having a bot do it’ (INS_3, Business Analyst); ‘How much time that actually equates to handing back to the business based on the original estimates for a human to process’ (INS_4, RPA Developer).

Swivel chair