Abstract

Public concern about ‘fake news’ skyrocketed following the 2016 US presidential election and the Brexit referendum, and has only intensified since then. A burgeoning body of research on the topic is emerging, and conceptual clarity is vital for this research to converge into a cumulative body of knowledge. The purpose of this article is to underline and address some of the conceptual clutter and ambiguities around the concept of fake news and to situate it within its social context. To do so, we first discuss the problems with current terminology and conceptualisation, and then draw on recent developments on the ontology of digital objects and their attributes to shift the focus from fake news to false messages, a type of syntactic digital object comprising content and structure and characterised by the attributes of editability, openness, interactivity, and distributedness. We then expand this concept further by placing it within a network of actors and digital objects. Our analysis uncovers several areas of research that have been overlooked in the study of fake news.

Introduction

During the run-up to the 2016 US presidential election and its aftermath, the phrase ‘fake news’ entered the vernacular, as the (then-Republican-contender) US President Donald Trump and his supporters used (and continue to use) the phrase to discredit negative media coverage. In contrast, the mainstream media and some scholars use the phrase to refer to false news stories disguised as authentic ones (e.g. Allcott and Gentzkow, 2017; Lazer et al., 2018). The phenomenon – in either sense – has become a universal cause for concern, as evident from the introduction of new laws that aim to stifle independent journalism under the pretext of fighting fake news in several countries (McKirdy, 2018), as well as the circulation of false or misleading news stories during election times and beyond across the world. Furthermore, the concern has spread beyond the domain of politics. For example, business scholars and practitioners have raised concerns about and studied the issue (e.g. Aral, 2018; Ipsos, 2018; Knight and Tsoukas, 2019; Moravec et al., 2019; Ordway, 2018; Wardle, 2018a), while the World Health Organization (WHO) has described the immense wave of false information around the COVID-19 pandemic as an infodemic (Ågerfalk et al., 2020; Mirbabaie et al., 2020). Environmental sustainability represents another area that is particularly vulnerable to the threat of fake news (Cavanagh, 2018).

While fake news, propaganda, misinformation, disinformation, and related concepts are not new to disciplines such as communication and political science (Brummette et al., 2018; Humprecht, 2019; Tandoc et al., 2018), there is still much to learn about this recent wave that is wreaking havoc across the globe (Bernard et al., 2019). Among the open questions, a fundamental one concerns the terminology and the ontology of fake news: What constitutes and qualifies as fake news, and is fake news an accurate and adequate term to label our phenomenon of interest? In this article, we posit that the answers to these questions are not straightforward and that addressing them will yield valuable theoretical and practical insights. Early evidence of this can be found in disciplines such as communications, philosophy, journalism, and even computer science, where several scholars have tried to address these questions, generating actionable insights in the process (e.g. Finneman and Thomas, 2018; Habgood-Coote, 2019; Tandoc et al., 2018; Wardle, 2018b; Zhou and Zafarani, 2020). However, despite an emerging body of research on fake news in information systems (e.g. Bernard et al., 2019; Clarke et al., 2021; Janze and Risius, 2017; Kim and Dennis, 2019; Kim et al., 2019; Moravec et al., 2019, 2020; Torres et al., 2018), we are not aware of any attempts to clarify and delineate the concept of fake news in the information systems (IS) literature.

A second and related missing piece in the puzzle of fake news research is placing the concept in its socio-technical context (Orlikowski and Iacono, 2001). In particular, we need to consider how the role of digital and social media (with their vast reach, and novel and unique features and business models) has introduced new twists to the old story of fake news – specifically, how easy-to-consume and attention-grabbing content published in digital formats (e.g. time-limited user stories, short user-generated videos), innovative methods of news delivery (e.g. through chat apps), and the emergence of novel media platforms (e.g. social journalism platforms such as Medium) have fundamentally transformed media ecosystems (Anderson, 2017; Ferne, 2017; Kallinikos and Mariátegui, 2011). In addition, an array of social actors with different motives and modi operandi are involved in the production and consumption of fake news (Allcott and Gentzkow, 2017; Wardle, 2018b). Finally, algorithms and crowds have taken over the role of news gatekeeping (Allcott and Gentzkow, 2017; Aral, 2018). As such, positioning fake news within its socio-technical context will help us build a more comprehensive picture of the phenomenon and highlight opportunities for further research (Bernard et al., 2019).

The emerging IS research on fake news so far has focused on examining important but narrow empirical questions, and we are not aware of any attempts to delineate the broader social network of this concept. We believe that IS scholars are well placed to contribute these missing pieces, given that the discipline has considerable research depth in a number of relevant areas. Specifically, we argue that fake news and other types of falsehood are better conceptualised as a subset of a broader concept, which we call a false digital message, defined as a digital object with content, structure, and several important attributes such as editability, interactivity, openness, and distributedness (Faulkner and Runde, 2009, 2013, 2019; Kallinikos et al., 2013). Moreover, we place these messages in their personal, social, and technological contexts. Where appropriate, this is complemented by drawing on other relevant areas of IS research, such as social media (e.g. Alaimo and Kallinikos, 2017; Aral et al., 2013; Kitchens et al., 2020; Leonardi, 2018; Leonardi and Vaast, 2017; Miranda et al., 2016) and deception in computer-mediated communication (e.g. Abbasi et al., 2012; George et al., 2008, 2013, 2018; Ludwig et al., 2016; Zhang et al., 2016a; Zhou et al., 2004).

Therefore, our work offers two main contributions. First, it provides clarity around the contested concept of fake news. Such clarity is important in many respects, including improving research rigour through construct clarity (MacKenzie et al., 2011; Suddaby, 2010) and by highlighting how a newly popular phenomenon such as fake news relates to ‘a more generic, archetypal’ phenomenon in the wider discipline (Rai, 2017: v). This is further underlined in our second contribution, which is placing the new concept in its social context, and in doing so we uncover several areas for future research. Yet our goal here is not to produce a final solution to these issues. Rather, we intend to highlight the need for a more critical scrutiny of the concept of fake news and its associated terminology.

The rest of this article is organised as follows. First, we provide an overview of various definitions and conceptualisations of fake news in the literature and highlight some concerns. We then lay out our proposed conceptualisation of a false digital message and examine its material and social aspects. Next, we position this false digital message within the broader network to highlight the actors, processes, and impacts, as well as critical relationships. We conclude by discussing the implications of this new conceptualisation and highlight several future research directions for IS research on false messages.

Current definitions of fake news and the need for conceptual clarity

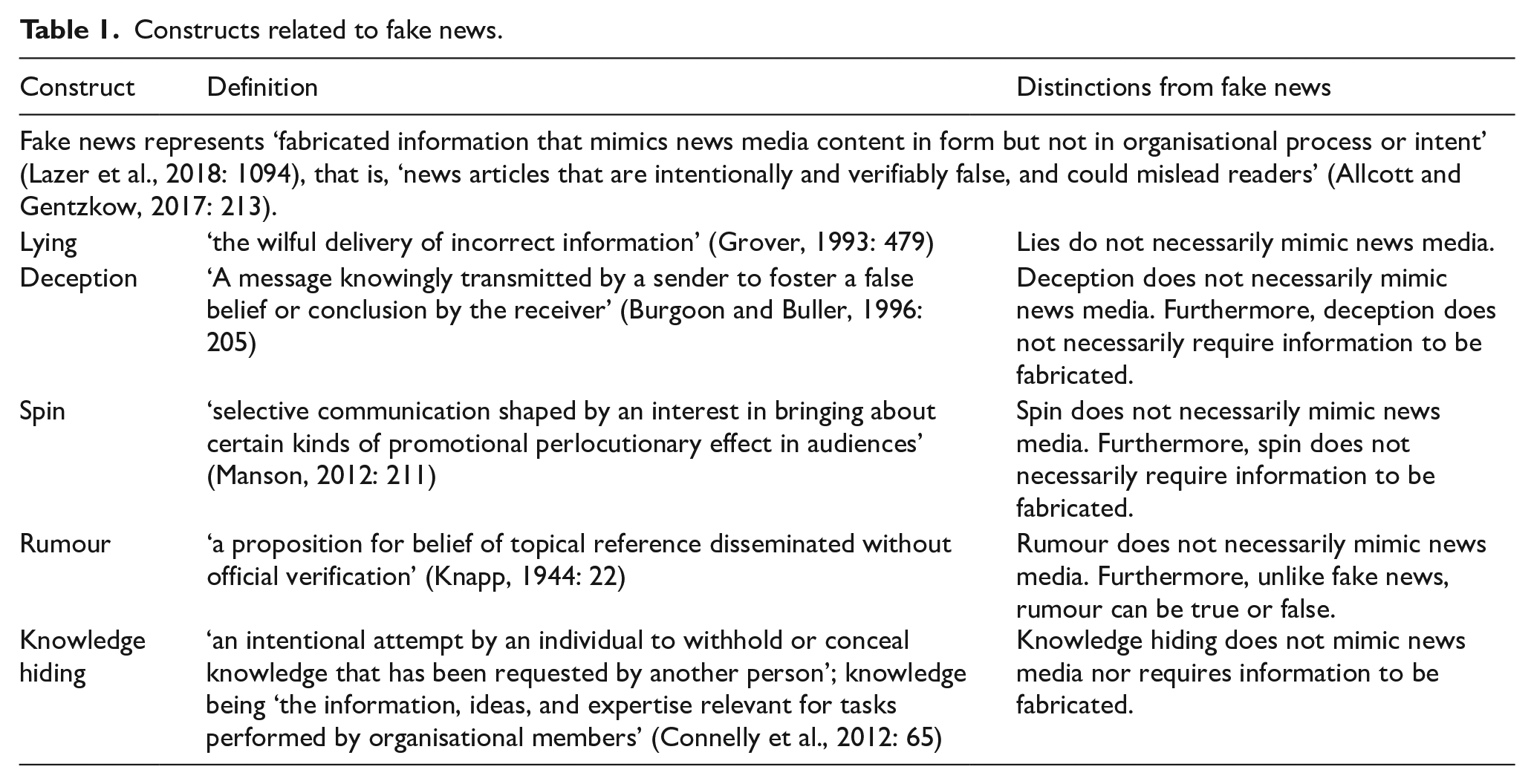

Several definitions of fake news have been offered in scholarly work, most of which include falsehood and the news form as common factors. For example, in their analysis of fake news reach during the 2016 US elections, Allcott and Gentzkow (2017: 213) define fake news as ‘news articles that are intentionally and verifiably false, and could mislead readers’. Similarly, Lazer et al. (2018: 1094) define fake news as ‘fabricated information that mimics news media content in form but not in organisational process or intent’. However, conceptual and empirical evidence increasingly suggests that fake news is not a clear-cut concept. For example, the term has a history of its own in academia, particularly in journalism and mass communication studies, predating its current claim to fame and not necessarily used in the same sense (Tandoc et al., 2018), as explained later in the article. Furthermore, it has been argued that fake news does not adequately cover the entire range of the falsehood and deceit-related problems circulating on the Internet today (Wardle, 2018b). In addition, while the interest in fake news in its current popular usage is new, its referent phenomenon is much older (Farkas and Schou, 2018), and therefore has been examined under many other guises (see Table 1). A final concern arises from the term’s highly politicised status (Brummette et al., 2018) and frequent use to denigrate critical journalism (Habgood-Coote, 2019; Lazer et al., 2018; Vosoughi et al., 2018; Wardle, 2018b). This is supported by some empirical evidence that the use of the phrase in academic discourse might prime the public audience to lower their trust in real news (Van Duyn and Collier, 2019).

Table 1. Constructs related to fake news.

Despite these concerns, we are not aware of any attempts in IS to address these issues using our rich cumulative body of knowledge. This is somewhat alarming given that IS and management scholars have highlighted the need for concept and construct clarity, arguing that its lack may lead to ‘confusion about what the construct does and does not refer to, and the similarities and differences between it and other constructs that already exist in the field’ (MacKenzie et al., 2011: 295). As a result, ‘scholars fail to build theory, communicate effectively, and think creatively’, hindering the creation of a cumulative research stream (Bansal and Song, 2016: 106). Specifically, in the context of fake news, two concerns appear salient, as we discuss below: whether the construct has gained any surplus meaning – ‘meaning beyond the parameters of its original intended definition’ (Suddaby, 2010: 348) – from prior scholarly and popular use, and whether it adequately covers the essential characteristics of the phenomenon under study.

It is possible that IS scholars have thus far overlooked this need for conceptual development because of a sense of urgency in addressing more practical concerns such as how to identify and counter fake news, and a concern that a focus on concepts and definitions might divert our attention from these important questions. They may argue that it suffices for researchers to provide a definition of fake news within the scope of their studies, and that fake news is the most appropriate terminology by virtue of its popularity, which can increase the relevance of IS research on the topic. Although we concur that addressing the more practical concerns such as the mitigation of fake news is important, evidence from other disciplines demonstrates that attempts at defining and clarifying its conceptual aspects will not necessarily distract researchers from addressing such concerns. In those disciplines, conceptual studies have already proven relevant by informing practitioners about the implications of using specific terminology. For example, based in part on expert testimony from scholars such as Claire Wardle (e.g. Wardle, 2018a, 2018b; Wardle and Derakhshan, 2017), the UK House of Commons recommended that ‘the Government rejects the term “fake news”, and instead puts forward an agreed definition of the words “misinformation” and “disinformation”’ (House of Commons Digital, Culture, Media and Sport Committee, 2018: 8). The UK Government concurred and adopted this recommendation (House of Commons Digital, Culture, Media and Sport Committee, 2019). In addition, for us as researchers, questions of rigour (such as concept and construct clarity) are as important as questions of relevance.

As such, we contend that the natural starting point for IS fake news research is to provide conceptual clarity. In the following sections, we first elaborate on three problems with the concept and terminology of fake news, namely, its prior use in scholarly work, its current politicised use in the vernacular, and its inadequacy to cover the entire range of falsehood circulating on the Internet. Then, drawing on recent IS developments on the ontology of digital objects (e.g. Faulkner and Runde, 2013, 2019; Kallinikos et al., 2013), we put forward our own conceptualisation of fake news as a subset of false messages.

Prior use of fake news in scholarly work

Based on a review of the journalism literature during the period 2003–2017, Tandoc et al. (2018) identified six operationalisations of fake news: (a) news satire, (b) news parodies, (c) news fabrication, (d) photo manipulation, (e) advertisement and public relations, and (f) propaganda. What is common across these apparently divergent operationalisations is ‘how fake news appropriates the look and feel of real news’ (Tandoc et al., 2018: 147). However, this is not always done with the intention to deceive and induce false beliefs in the recipient. For example, Tandoc et al. (2018) found that the most common operationalisation of fake news in the prior literature is news satire (e.g. Achter, 2008; Amarasingam, 2011; Balmas, 2014; Baym, 2005; Gaines, 2007). News satire (e.g. The Daily Show) ‘represents programming where either the program’s central focus or a very specific and well-defined portion is devoted to political satire’ (Balmas, 2014: 432). News satires sometimes mimic news programmes in style, but they are promoted primarily as entertainment programmes, and their use of exaggeration and other satirical devices does not promote false information.

Tandoc et al. (2018) classified all the operationalisations of fake news identified in the academic literature along two common axes: their facticity (truthfulness) and their source’s intention to deceive. They note that the current use of the term primarily maps onto the quadrant where facticity is low and the intention to deceive is high (i.e. news fabrication and photo manipulation) and excludes other operationalisations.

Current use of the phrase in the vernacular

In addition to its academic use, fake news has a history in the vernacular, with its use being documented as early as 1898 (Mourão and Robertson, 2019). However, the most concerning aspect of its colloquial use arises from its current popularity in politics and media. Consequently, Vosoughi et al. (2018: 1146) contend that the phrase fake news ‘has been irredeemably polarised in our current political and media climate’, to the point that ‘it has lost all connection to the actual veracity of the information presented, rendering it meaningless for use in academic classification’. Empirical evidence seems to support these claims. A study of how the phrase fake news is used on Twitter (Brummette et al., 2018) found that the term is indeed highly politicised and appears commonly among Twitter handles and hashtags related to US President Donald Trump (e.g. @realdonaldtrump, #trump) as well as media outlets he frequently targets (e.g. @cnn). Similarly, a content analysis of social and other digital media found that fake news is used to advance three political projects: to question ‘digital capitalism’ (the use of digital media for economic gains), to question right-wing politics and media, and to question liberal politics and media (Farkas and Schou, 2018). Another study found that the use of the term fake news by the elite, even when used in a different sense from politicians, negatively impacts public trust in news media and the ability to identify genuine news, through a priming effect (Van Duyn and Collier, 2019).

As such, while some scholars believe that fake news could be a useful term because its popular appeal draws more attention to this pressing issue and makes it more salient (e.g. Lazer et al., 2018), others find it too politically loaded to be useful for scholarly research (e.g. Aral, 2018; Vosoughi et al., 2018). Furthermore, while the political nature of fake news is salient to some disciplines and research settings (Farkas and Schou, 2018), it may be of less interest to IS scholars.

Inadequacy of fake news as a concept

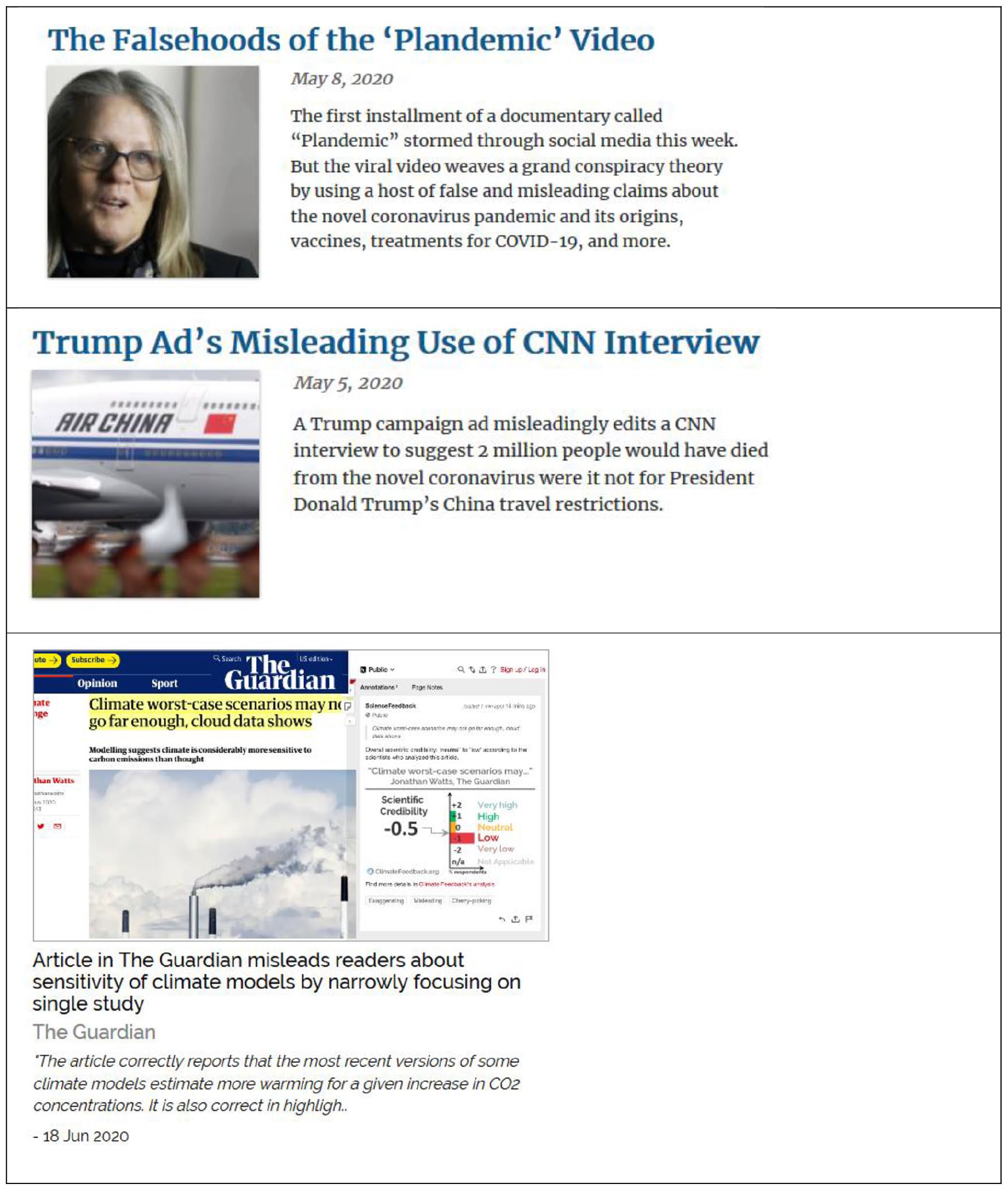

In addition to the issue of ‘surplus meaning’ from its previous use, fake news also does not cover the range of interrelated falsehood problems we face on social media and other online environments (Habgood-Coote, 2019; Wardle, 2018b). Figure 1 represents several such examples. The top and middle examples are taken from the fact-checking website factcheck.org, while the bottom example is taken from Climate Feedback; both are independent fact-checkers affiliated with universities and non-profits:

The top image depicts a viral documentary-style video interview with a discredited scientist, which was later removed from several popular social media platforms because it contained false claims and conspiracy theories about COVID-19 (Travis, 2020).

The middle example represents a misleading political advertisement by the US President Donald Trump’s re-election campaign, in which the audio of a CNN interview is edited and combined with unrelated video footage to suggest that CNN correspondents acknowledge the effectiveness of the President’s COVID-19 policies, whereas in reality, the correspondents were discussing policies adopted by local authorities.

The bottom example demonstrates an evaluation of an article published in the reputed outlet The Guardian about climate change that was deemed misleading by Climate Feedback because it selectively and narrowly reports on results from a single model and underreports competing explanations.

Figure 1. Various types of false digital messages.

We argue that none of these examples represents an instance of fake news, that is, ‘news articles that are intentionally and verifiably false, and could mislead readers’ (Allcott and Gentzkow, 2017: 213) or ‘fabricated information that mimics news media content in form but not in organizational process or intent’ (Lazer et al., 2018: 1094). The first example represents conspiracy theories presented in the form of a documentary film rather than news; the second example represents a political advertisement rather than news; and the third example represents a misleading news article; however, it does not contain fabricated information under the guise of news, nor is it entirely and possibly intentionally false (most scientists evaluating it acknowledged that it contained truthful but misleading information). A recent content analysis of nearly 400 fake news stories supports our claim; specifically, it found that messages are a blend of false information, sensational and biased content, and clickbait (Mourão and Robertson, 2019) that appear in various forms including memes (e.g. Smith, 2019), social media posts, and advertisements, among others (Wardle, 2018b). The question is then, from a rigour perspective, can researchers study these examples as fake news? If not, does it mean that these examples are not of interest or relevant to fake news research?

We argue that neither of these choices is satisfactory. On the one hand, labelling all forms of online falsehood as fake news when they are not presented in news format is a violation of the rigour expected from high-quality research (MacKenzie et al., 2011; Suddaby, 2010; Zhang et al., 2016b). On the other hand, excluding non-news forms of falsehood from fake news research could undermine its relevance by overlooking real-world issues and particularly several high-stakes existential problems faced by humanity today, such as the denial of climate change and the so-called ‘anti-vaxxer’ movement that are fuelled by a mixture of fraudulent or disputable research, reports or advertising, and partisan opinions repeated in online echo-chambers. In addition, it may also result in ‘Type III’ errors in formulating IS research problems, that is, focusing on the immediate issue without considering ‘a more generic, archetypal problem’ under which the immediate issue might be studied, thus preventing us from making a ‘broader and long-lasting scholarly contribution at the level of the generic problem’ (Rai, 2017: v; emphasis in original).

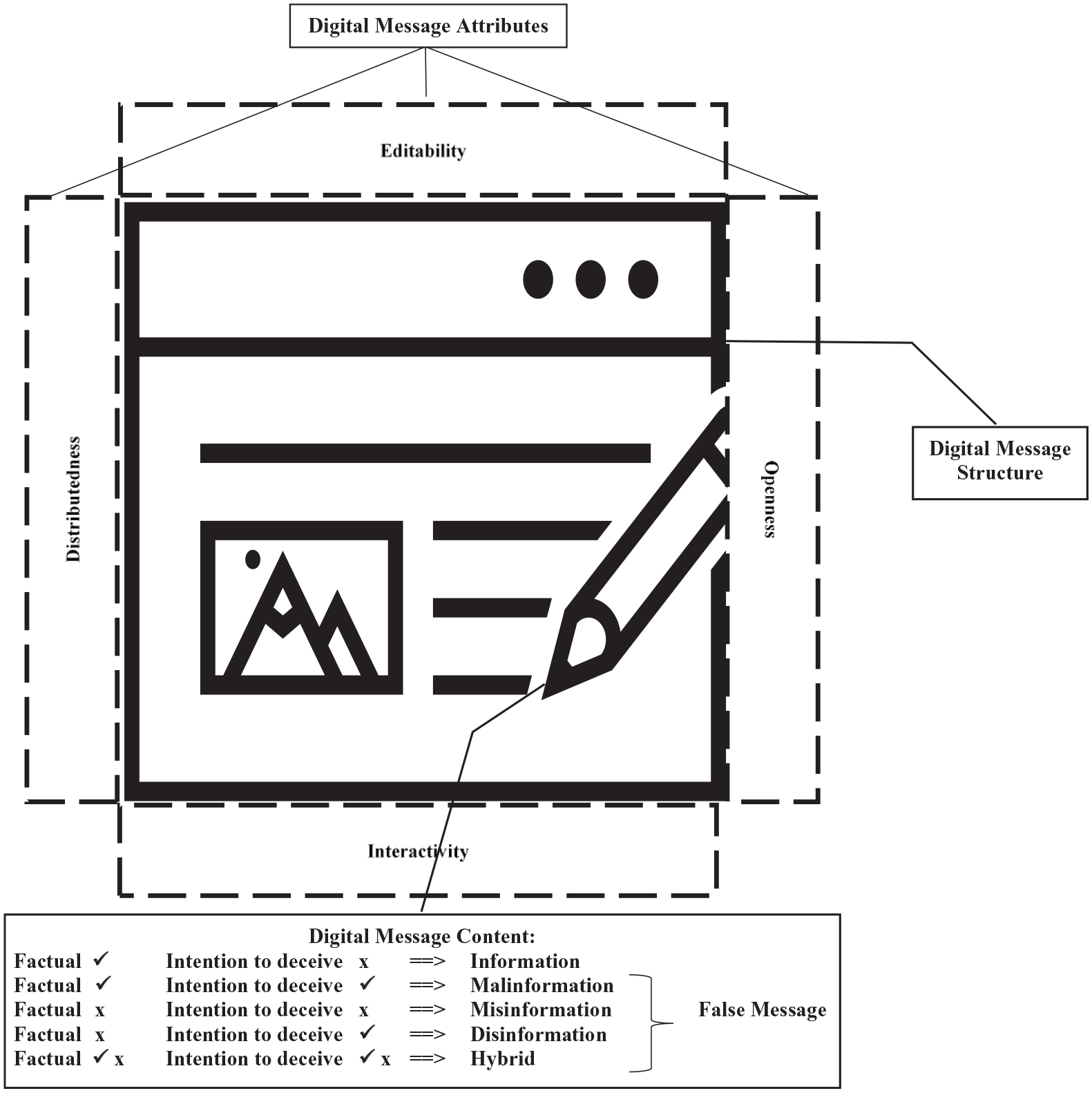

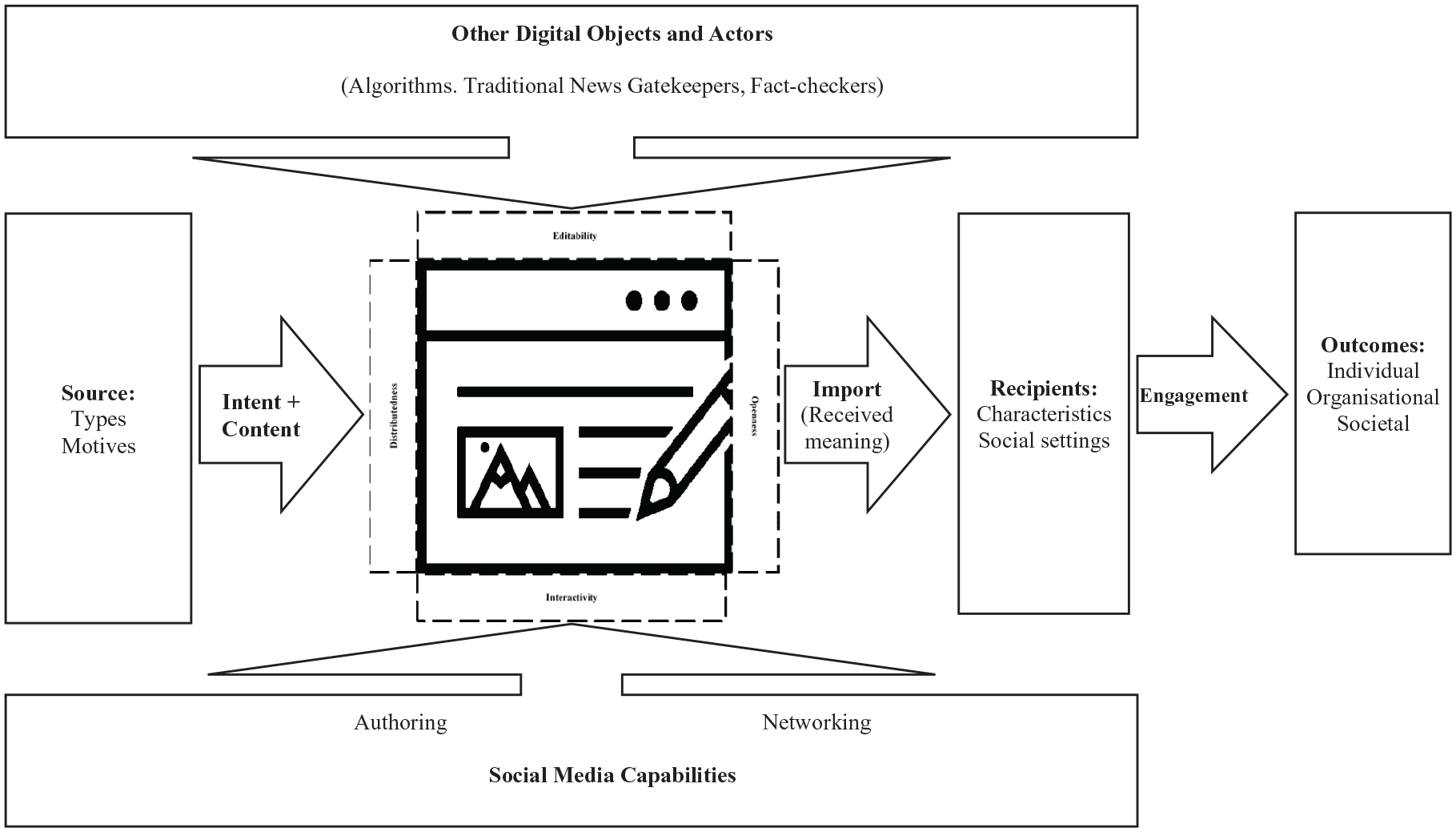

Given the conceptual and practical concerns outlined above, we posit that there is a clear need for further elaboration and better conceptualisation of our construct of interest. Therefore, in the following section, we briefly discuss some of the existing attempts to address this issue and then build on these attempts by proposing a broader construct, which we term a false message (Figure 2), conceptualised as a type of digital object (e.g. Faulkner and Runde, 2013, 2019; Kallinikos et al., 2013; Markus and Silver, 2008).

Figure 2. A (false) digital message.

From fake news to false digital messages

In recent years, scholars in areas such as journalism, communication studies, and philosophy have attempted to clarify the construct of fake news and define its boundaries with adjacent constructs. In the previous sections, we discussed one such attempt (i.e. Tandoc et al., 2018). Another example that is closely relevant to our work is Wardle’s (2018b) contention that fake news refers to several categories of ‘information disorder’, which can be summarised into three ideal types: misinformation, disinformation, and malinformation. We will elaborate on these concepts shortly; here we highlight an issue with Wardle’s (2018b) proposition that we try to address: the three ideal types only capture the informational content of fake news. But information is rarely communicated without being put into a clear and purposeful structure. A hypothetical newspaper with bits of (true or false) information strewn across its pages without being arranged into distinct news stories, columns, and other structures will indeed cater to a very small niche. Rather, information needs to be framed and formatted into a package that makes sense to the audience or fulfils another function (Borah, 2011). We call this package a message (McCornack, 1992; Mingers and Willcocks, 2017), and more specifically a digital message. We argue that such a message is best modelled as a syntactic object, that is, a digital object comprising digital content (information) put into a specific structure (Faulkner and Runde, 2013, 2019). In addition, we will discuss how editability, openness, interactivity, and distributedness, as important attributes of digital objects (Kallinikos et al., 2013), facilitate the creation and propagation of digital messages. Pushing this concept one step further, when such a message contains false content (information), it becomes a false digital message, of which false digital news (for short, false news) is one subtype. We will define and elaborate on these terms in the following sections, but here we provide a brief introduction to syntactic digital objects and their content, structure, and digital attributes and then discuss each of these in more depth in connection with false messages.

False message as digital object

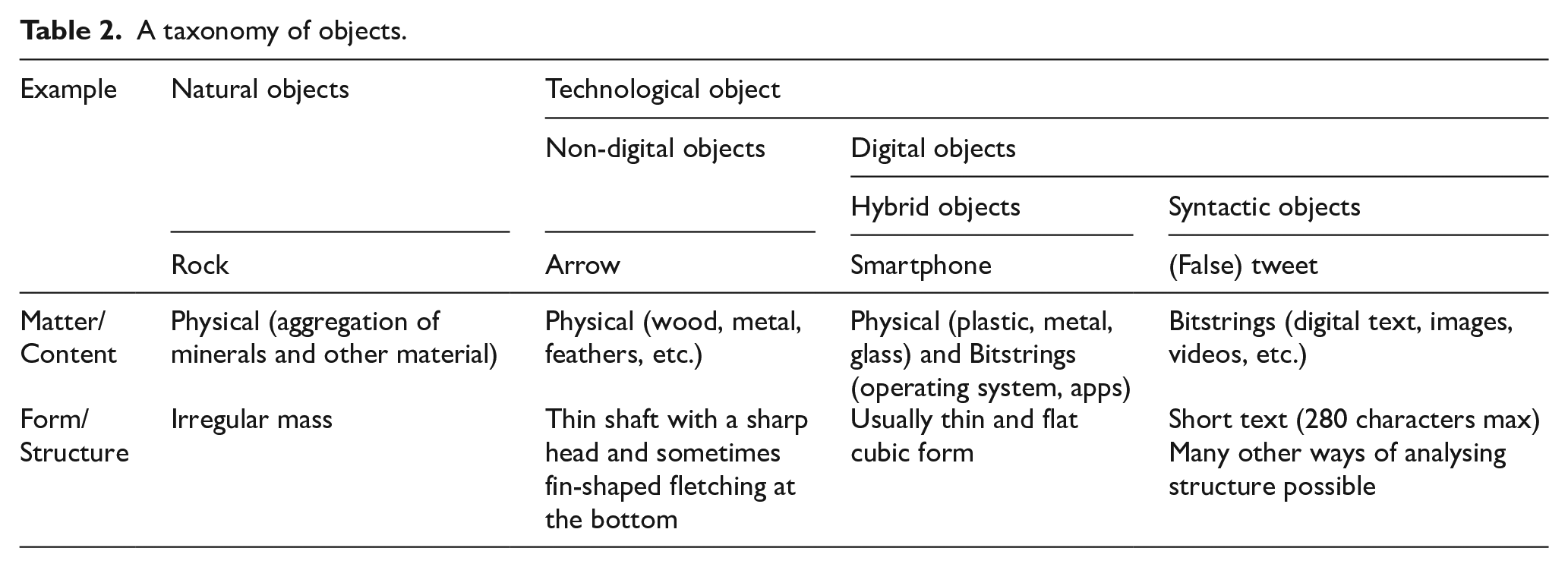

Throughout most of human history, objects were constituted from physical matter (solid, liquid, gas, plasma, etc.) structured into a specific form (cylinder, sphere, etc.), either through natural processes or by humans (Faulkner and Runde, 2013). Humans usually create objects as a means to achieve some end (e.g. to kill prey, to transport large objects), and such objects are called technological objects (Faulkner and Runde, 2013; Kallinikos, 2012; Leonardi, 2012). During the last few decades, digital objects have emerged as a new class of technological objects. These are ‘objects whose component parts include one or more bitstrings’ (Faulkner and Runde, 2019: 7).

While some digital objects are hybrids of digital and physical components (e.g. hardware), the advent of digital objects also gave rise to a new category of nonphysical objects called syntactic objects, comprising digital material arranged in accordance with a machine-readable and/or human-readable syntax (Faulkner and Runde, 2019). As such, syntactic objects are different from other technological objects in two respects. First, they are constituted not from physical but from digital material (Leonardi, 2012). To use a more concise term, we call this digital material the content of digital objects. Second, rather than taking a physical form, this content is arranged in accordance with syntactic rules, which we call the structure of digital objects (Faulkner and Runde, 2019). Examples of such objects include digital news articles (Faulkner and Runde, 2019) and, we suggest more broadly, digital messages, including false ones. Here, we provide an example to clarify the concepts of content and structure. A person creating a false message might start typing the content (comprising false information) in a word processor such as Microsoft Word and save it as a DOCX, a file format developed and used by Microsoft. Therefore, one might say this content is structured as DOCX. The same content may later be posted on a website/blog, structured as HTML, converted to one or more Facebook posts or Twitter tweets (eventually also structured as HTML), or captured in a screenshot, structured as a JPG or PNG image file. In addition, the Word file might be structured into paragraphs that are sequentially arranged across one or more pages, whereas the website and social media posts will be arranged based on their own structuring logics. This highlights different levels of structure: from those that are recognisable to humans (e.g. paragraphs) to those that are recognisable to machines (e.g. file formats, code syntax).
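The distinction between content and structure can be illustrated with a minimal Python sketch that places one piece of content into three different structures. The sample sentence, the function names, and the choice of plain text, HTML, and JSON are our own illustrative assumptions rather than part of Faulkner and Runde's account; DOCX and image formats are omitted only to keep the sketch self-contained.

```python
import html
import json

# Hypothetical content: the (false) information carried by the message.
CONTENT = "Miracle tea cures flu & colds."


def as_plain_text(content: str) -> str:
    """Structure the content as a bare line of text."""
    return content


def as_html(content: str) -> str:
    """Structure the content as an HTML fragment, escaping special characters."""
    return f"<article><p>{html.escape(content)}</p></article>"


def as_json(content: str) -> str:
    """Structure the content as a JSON record, loosely resembling a social media post."""
    return json.dumps({"type": "post", "body": content})


if __name__ == "__main__":
    for structured in (as_plain_text(CONTENT), as_html(CONTENT), as_json(CONTENT)):
        print(structured)
```

The three outputs differ at the machine-readable level (markup, escaping, key-value syntax), yet a human reader would recover the same claim from each of them, which is the sense in which content can move across structures.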

The ease with which such content can be moulded into different structures and move across different media highlights some important attributes of false messages as digital objects, namely, editability (being pliable and modifiable), interactivity (allowing various forms of use and adjusting to user behaviour), openness (accessible and modifiable by other digital objects), and distributedness (being contained across networks and locations) (Kallinikos et al., 2013). In the following sections, we will examine the content and structure of false messages more closely and outline how the four attributes of digital objects have impacted the content and the structure of false messages in the digital age.

False message content

All objects are created from some material: physical matter for physical objects and content for syntactic ones (and a combination for hybrid objects, such as a computer). In addition, both physical material and digital content can be studied at different levels of granularity. For example, as demonstrated in Table 2, one might say that a rock comprises minerals and similar physical material. However, this material itself is composed of chemical compounds and elements, which in turn are composed of atoms, which in turn are composed of subatomic particles and so on. The question of which level of granularity best represents the materiality of a rock is best answered from the perspective of the research question under study. Similarly, under the current prevalent computing paradigm, the content of a digital message at its most fundamental level is represented by bitstrings (i.e. strings of 0s and 1s: Faulkner and Runde, 2019). However, for our purpose, little can be gained from examining bitstrings. Instead, we opt for a less granular level of analysis, focusing on (false) information as the content of (false) digital messages. This is because we are building on previous studies that conceptualise fake news as a problem of information disorder (e.g. Wardle, 2018b) and a growing body of IS research that emphasises the importance of studying information (e.g. Boell, 2017; McKinney and Yoos, 2010, 2019; Mingers and Standing, 2018; Petter et al., 2018). Next, we draw on insights from this research to outline the role of information as the content of digital messages.
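The point about granularity can be made concrete with a short Python sketch that views the same message at the level of human-readable text and at the level of bitstrings. The helper names and the choice of UTF-8 encoding are illustrative assumptions; other encodings would yield different bitstrings for the same text.

```python
def to_bitstring(text: str) -> str:
    """Return the UTF-8 encoding of the text as a string of 0s and 1s."""
    return "".join(f"{byte:08b}" for byte in text.encode("utf-8"))


def from_bitstring(bits: str) -> str:
    """Rebuild the text from a bitstring produced by to_bitstring."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")


if __name__ == "__main__":
    bits = to_bitstring("Hi")
    print(bits)                  # 0100100001101001
    print(from_bitstring(bits))  # Hi
```

Both levels describe the same object, but whether a message is false can only be assessed at the level of information, not by inspecting the sequence of bits.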

Table 2. A taxonomy of objects.

Information

Mingers and Standing (2018) suggest that information is ‘the propositional content of signs’ (p. 87). Information is propositional content in that it proposes that a specific state of the world exists: it is ‘what must be the case in the world for the sign to exist as and when it does’ (Mingers and Standing, 2018: 87). Furthermore, it is propositional content of signs because for the most part, our experience of the outside world is mediated through signs, whether smoke signifying fire, a fire alarm, or a fire icon on a digital screen.

Next, we consider what constitutes false information. The answer to this question is not straightforward. It is important to simultaneously consider the intentions of the source (such as deception) and the propositional content (information) they are communicating (e.g. Fallis, 2014, 2016; Wardle, 2018b). Within IS and the broader information literature, two opposing views of information exist. Some have argued that information is by definition true (e.g. Dretske, 1983; Floridi, 2013; Mingers and Standing, 2018), whereas others believe that information can be true or false (e.g. Fallis, 2016; Fox, 1983). Those who subscribe to the latter view will have no objection to the phrase false information. However, those who subscribe to the former view will argue that false information is an oxymoron. We argue that false has several meanings in English, including both ‘incorrect’ and ‘not genuine or real’ (Cambridge Dictionary, 2018; Merriam-Webster Dictionary, 2018). Our purpose is not to settle the dispute between the two camps: those who subscribe to the first view can take false information to mean what pretends to be information, but is not. Those who subscribe to the second view may take false information to simply mean inaccurate information.

False information

As false information remains understudied in IS, we turn to related areas such as the philosophy of information where false information is more thoroughly examined (e.g. Fallis, 2014, 2016) and journalism studies where scholars examine fake news as a problem of information disorder or false information (e.g. Wardle, 2018b). Following Tandoc et al. (2018) and Wardle (2018b), we classify false information based on two aspects: facticity and intention to deceive.

Misinformation

Propositional content of signs that misrepresents the state of the world without the intention to deceive is called misinformation. One area where this type of false information is quite common is health advice in online communities (Venkatesan et al., 2014), where many people spread false information unintentionally (Myers and Pineda, 2009).

Disinformation

Propositional content of signs that misrepresents the state of the world with the intention to deceive is called disinformation. Most current IS research on fake news (e.g. Kim et al., 2019; Moravec et al., 2019, 2020; Torres et al., 2018) and related streams such as deception (e.g. George et al., 2018) targets this type of false information.

Malinformation

Propositional content of signs that truthfully represents the state of the world with the intention to deceive is called malinformation. This may sound counterintuitive at first glance: how can one deceive by truthfully representing the state of the world? It is often assumed that deception appears in the form of, or as a result of, bald-faced lying and other forms of disinformation. However, deception can equally happen in the form of, or as a result of, subtle manipulation of information that does not necessarily misrepresent the world but is intended to deceive (McCornack, 1992; McCornack et al., 2014; Wardle, 2018b). Examples include half-truths and spin, which refer to incomplete or selective information provided with the intention to deceive (Fallis, 2016). Malinformation should be of particular interest to business and management studies: issues such as selective disclosure of material information (e.g. Lee et al., 2014), creative accounting practices (e.g. Hail et al., 2018), and greenwashing (e.g. Marquis et al., 2016) are important areas of research and practice. This also highlights the fact that true information not only faithfully represents the state of the world, as suggested by Mingers and Standing (2018), but also does so without the intention to deceive.

Summarising the preceding discussion, the four ideal types of information, misinformation, disinformation, and malinformation constitute the content of (false) digital messages. However, more often than not, a false message such as a deceptive news story, Internet meme, tweet, or corporate news release may contain a combination of these (McCornack, 1992; McCornack et al., 2014). In addition, as we argued earlier, for this information to be transferred through digital media and received by the recipients, it needs to be structured in accordance with proper syntax (Dourish and Mazmanian, 2013; Mingers and Willcocks, 2017). Therefore, in the next section we look at the structure of false digital messages.

False message structures

Structure refers to the way in which content is arranged and is the digital objects’ equivalent of form in physical objects (Faulkner and Runde, 2013, 2019). Structure is an important and yet usually overlooked aspect of a (false) message (Te’eni, 2001), as it has important implications for how recipients interact with messages:

[T]he particular forms that information takes – graphical and lexical expressions, columns of numbers, or records in a relational database – shape the questions that can be easily asked of it, the kinds of manipulations and analyses it supports, and how it can be used to understand the world. (Dourish and Mazmanian, 2013: 8)

This view is supported by extensive research in IS, marketing, accounting, and psychology, demonstrating that the representation of information and its interaction or fit with factors such as task and individual characteristics impact information processing and task outcomes (e.g. Baker et al., 2009; Bettman and Kakkar, 1977; Dilla et al., 2010; Kelton et al., 2010; Peng et al., 2019; Walden et al., 2018).

Similar to matter and content, the form of physical objects and the structure of syntactic objects can be analysed at several levels. As shown in Table 2, we may say that the form of a rock represents an irregular mass at first glance. But if examined at a more micro level, the rock may be seen as arranged into different types of crystals, or we may even look at the arrangement of its atoms or subatomic particles. Similarly, we can examine the structure of a digital message at different levels. For example, (false) information, which in our view represents the content of a (false) digital message, can be considered a digital object in itself with its own structure (Faulkner and Runde, 2019). For instance, we might say that information comprises words or other signs and symbols structured in accordance with the syntactic and semantic rules of a specific language (e.g. English). Furthermore, structure can be examined from many other perspectives, for example, in terms of genre (Buozis and Creech, 2018; Yates and Orlikowski, 1992), framing (Han and Federico, 2018), and whether it is structured as a narrative or advocacy (Dunlop et al., 2010), a conspiracy theory, a news story, or opinion piece (Wardle, 2018b); its size, distribution, organisation, and formality (Te’eni, 2001); the semiotic modality of the message (e.g. text, images, videos, multimodal); or the syntactic and semantic arrangement of these resources (e.g. the text is grammatically correct and meaningful or the quality of images/videos and whether they are properly encoded) (Mingers and Standing, 2018; Shin et al., 2020).

More importantly, digital media platforms are experimenting with new structures that are designed to engage younger audiences. For example, the traditional 800-word news articles are being replaced with shorter formats that can be read and shared quickly through mobile devices (Anderson, 2017; Ferne, 2017). In addition, such devices enable this younger audience to easily create and disseminate their own engaging content. The immense popularity of the short-format video sharing platforms such as TikTok (Wang, 2020) and time-limited multimedia story-telling platforms such as Snapchat (Bayer et al., 2016) and Instagram (Haenlein et al., 2020), particularly among younger users, showcase the effectiveness of these innovative structures. The informal style and the hedonic nature of such structures also make them ideal vessels for delivering false messages (Moravec et al., 2019). It is no coincidence that some of these platforms are also hotbeds for false messaging (e.g. Chee, 2020; Tardáguila, 2019). These new structures and new types of content are made possible in part because digital objects are characterised by a number of important and unique attributes that make them distinct from physical objects. Therefore, in the following section we examine these attributes more closely.

False message attributes

As digital objects, (false) digital messages have several inherent unique attributes that facilitate their creation and proliferation. In particular, the four important attributes of editability, openness, interactivity, and distributedness (Kallinikos et al., 2013) allow for the creation of innovative content and novel structures that contribute to the ubiquity of messages, specifically false messages, on social and digital media. First, let us consider how these attributes impact the content of false messages. Editability, which refers to the possibility of being pliable and modifiable, and the closely related concept of openness, which refers to accessibility and modifiability by other digital objects, go hand in hand to allow users to create new content. For example, snopes.com, a well-known fact-checking website (Vosoughi et al., 2018), rates some messages as ‘Miscaptioned’, which refers to photographs and videos that are ‘real’ (i.e. not the product, partially or wholly, of digital manipulation) but are nonetheless misleading because they are accompanied by explanatory material that falsely describes their origin, context, and/or meaning.

Such messages, which by some estimates comprise the bulk of ‘fake news’ in countries such as India (Oduyemi et al., 2019), are made possible because digital content can be easily manipulated and mixed (editability) by a range of digital objects, such as word and graphic editors and similar capabilities built into social and digital media (openness). If such content is shared on social and digital media platforms, users may interact with it by adding supportive or critical comments, or by liking or downvoting it (Ksiazek et al., 2016). This user-generated content may blend with the original material for subsequent users or at least affect how they understand the original content. This highlights a third attribute of digital messages, namely, their interactivity, which refers to the quality of digital objects being responsive to users’ input and the diversity of pathways in which users can activate and explore the content of such objects (Kallinikos et al., 2013). Finally, true and false content, created by diverse and unrelated sources distributed across different network nodes and locations (e.g. eyewitness images and videos), can be linked together using HTTP and other web protocols, creating mis-, dis-, and malinformation, highlighting the last attribute of false messages as digital objects, namely, distributedness.

Next, we examine how these four attributes impact the structure of (false) digital messages. Editability and openness allow different formats (e.g. text, audio, and video) to be easily modified, converted, or mixed using readily accessible and inexpensive tools, creating the highly engaging short videos on TikTok, Snapchat, and Instagram stories (Bayer et al., 2016; Haenlein et al., 2020; Wang, 2020), which could be miscaptioned, omitting an important piece of information, or otherwise manipulated. In addition, interactive structures, such as those found on social media, or, more recently, interactive data visualisations enable users to engage more deeply with false messages, potentially increasing their reach and impact. Finally, content distributed across the Internet can be structured into a single package using web protocols, creating a distributed and loosely linked false message.

In summary, we conceptualise a digital message as a digital object with content, structure, and four important attributes that allow for the manipulation of content and structure and facilitate the creation and proliferation of such messages. These are graphically presented in Figure 2. False news (i.e. fake news) is a specific type of false digital message containing false information, structured as news. Having clarified the focal concept of a false digital message, we now move on to place it in its individual, social, and technical context.

False digital messages in context

As syntactic digital objects, digital messages are situated within a network of related digital objects and human actors, which play an important role in the production and outcomes of these messages (Faulkner and Runde, 2013, 2019). Therefore, delineating the context of a false digital message will help paint a more complete picture of these messages. In addition, we need to explain how a syntactic object is able to communicate meaning between the source of a message and its recipient. To elaborate on this point, consider a false digital message crafted in French (or any other language), delivered through social media to a recipient who does not know the language, with the intent of deceiving the recipient. This message will not fulfil its purpose because it does not convey any meaning to the recipient, regardless of how well it follows the syntax of the French language and regardless of the content of the message or the intent of its source. Expanding on this example, even if the recipient is fluent in the French language and successfully interprets and understands the message, they may not be deceived if they have had previous personal encounters with similar messages, if they are naturally sceptical about such messages, if they were trained to identify them, and so on.

These examples demonstrate that communication of false messages involves more than the message itself. Rather, this process happens at the intersection of digital (and physical) objects, individual characteristics, and the social context within which the messages are situated (Mingers and Willcocks, 2014, 2017). The objects provide the means to produce and transmit a false digital message. However, in producing and interpreting the message, the human actors involved in this process (i.e. the source and the recipient) are bounded by their individual characteristics such as attitudes, emotions, and skills, as well as their cultures, languages, and other social frames of references. In our first example above, the process of communicating a false message fails because the sender and receiver do not share an important component of their social worlds, namely, the language, while in the second case the process fails because of the personal attributes, skills, and experiences of the recipient.

Therefore, while as IS researchers our primary interest lies in false messages as digital objects, we clearly need to study individual characteristics and social settings to be able to paint a complete picture. To achieve this, we expand on Figure 2 by adding human actors and placing them in their social settings, and highlighting some of the most salient aspects of these to the creation, proliferation, identification, and mitigation of false messages (Figure 3). We examine different parts of this model by first completing our analysis of the digital objects beyond the message itself, and then we discuss the characteristics of sources and recipients of false messages and their social settings.

Figure 3. A false digital message in context.

Social media capabilities

While many aspects of fake news are open to debate, there appears to be a consensus that social media platforms play a key role in the current tsunami of false messages (Allcott and Gentzkow, 2017; Aral, 2018; Brummette et al., 2018; Ordway, 2018; Wardle, 2018a), with researchers suggesting that social media have greater impacts than traditional media because of their speed, reach, and personalisation (Janze and Risius, 2017). Social media are defined as ‘a group of Internet-based technologies that allows users to easily create, edit, evaluate, and/or link to content or to other creators of content’ (Majchrzak et al., 2013: 38). This definition highlights two types of social media capabilities that play an important role in the creation and propagation of false messages: capabilities related to user-generated content and those related to user social activities (Alaimo and Kallinikos, 2017). The first type enables the production of false messages on social media platforms, utilising features such as authoring and editing of user-generated content (Leonardi and Vaast, 2017; Treem and Leonardi, 2013). We will refer to these as authoring capabilities. The second type supports the propagation of false messages on social media platforms through network articulation, which refers to the visibility of individuals and their social connections, and social transparency, which refers to the visibility of user interactions with other users as well as user-generated content (Leonardi and Vaast, 2017; Treem and Leonardi, 2013). These capabilities are enabled by features such as sharing, tagging, friending, and following (Alaimo and Kallinikos, 2017). We will refer to these as networking capabilities.

We contend that the prevalence and reach of false digital messages on social media stem from the interaction of these capabilities and the four attributes of editability, openness, interactivity, and distributedness of these messages. More specifically, authoring capabilities in combination with editability, openness, and distributedness allow false content to be easily created and edited from scratch on the social media platform itself or be imported from other digital objects (e.g. word processors, photo and video editors, or the camera and the related app on a smartphone). As we explained earlier, such content can be brand new or be a collage of existing content or a manipulation of such content, and it can be pulled from anywhere on the web through an HTTP link. The networking capabilities of social media go hand in hand with the interactivity of false messages to increase the reach of these messages. For example, interactivity allows users to like or comment on a false message, making it visible to their network of social connections, some of whom may also engage with this message and in turn make it visible to their own connections, and so on.

Social media perhaps represent the most important digital object involved in creation and communication of false digital messages, but other digital objects also contribute to these processes. For example, research shows that many pieces of false news shared on social media originate on websites (Allcott and Gentzkow, 2017). Among all such digital objects, algorithms play a growing and important role in today’s media ecosystems (Sundar, 2020), including in both producing and combatting false digital messages, as we demonstrate next.

Other digital objects: algorithms

We conclude this section by highlighting another type of digital object that is at the centre of an active stream of research on false messages, namely, algorithms (e.g. Conroy et al., 2015; Horne et al., 2020; Janze and Risius, 2017; Sharma et al., 2019). Broadly speaking, an algorithm could be any rule or formula used for prediction tasks (Dietvorst et al., 2015); however, here we are interested in those computing algorithms that play a role in the production, communication, or detection of false messages.

Perhaps the most common types of such algorithms are those developed to detect and sometimes remove false messages on social media and other communication channels. Different classes of such algorithms exist that, broadly speaking, either rely on content and/or structure of false messages for detection or on users’ reactions to these messages, such as likes or comments on social media (Sharma et al., 2019; Zhou and Zafarani, 2020). The link to our conceptualisation of false messages and their attributes is obvious: both types of algorithms rely on some aspect of false messages (e.g. content and structure) or individual and social interactions with the message (see the next section on personal and social worlds). These algorithms also rely heavily on the openness of false messages, which allows these algorithms to connect to false messages and extract their content, structure, and other aspects and create datasets that are used to train such algorithms.

Algorithms are also involved in other parts of the false news creation and communication process. Twitter bots that are programmed to tweet, retweet, respond to, like, or otherwise interact with Twitter messages are examples of algorithms acting as an ostensible source or curator of potentially false messages (Bolsover and Howard, 2019; Chu et al., 2012; Gruzd and Mai, 2020). These early bots, which were mostly rule-based, are now supercharged by the power of machine learning, creating deepfake (deep learning + fake) tweets that are more deceiving and less easy to detect (Fagni et al., 2020). Finally, algorithms are used on social media platforms and other communication channels as news selectors and curators, and in these roles they have contributed to the creation of echo-chambers and filter bubbles, virtual spaces in which false messages circulate among like-minded individuals and groups, potentially facilitating and reinforcing their beliefs in these messages (Groshek and Koc-Michalska, 2017; Kitchens et al., 2020; Krafft and Donovan, 2020). Once more, all of these roles are supported by the four attributes of false digital messages and their social and personal contexts.

From our discussion in this section, it must be evident that algorithms represent another important category of digital objects involved in the process of generating and communicating false messages, and studying them constitutes a very important and fruitful avenue for future research. We will highlight some of these opportunities in the final section of this article. At this point, having completed our analysis of digital objects, we turn our attention to the individual and social contexts of human actors involved in the process of producing and communicating false messages.

Individual characteristics and social settings

At the beginning of this section, we argued that it is important to demonstrate how false messages as syntactic digital objects are able to convey meaning, which is a semantic issue rather than a syntactic one (Mingers and Standing, 2018). Addressing this issue brings us to the realm of social and individual contexts, since generating and communicating meaning are by nature a social phenomenon (Poulsen and Kvåle, 2018) which is also influenced by the individual characteristics of the sources and recipients of communication (Mingers and Willcocks, 2014, 2017).

Source

Source is the initiator of the communication process. Several aspects of the sources of false messages, including their type, credibility, and intent, could be of interest to researchers and practitioners (Sundar, 2016; Wardle, 2018b). For example, from one perspective, sources of false digital messages can be of several types, including the original author, or those who may redistribute the messages knowingly or unknowingly on social media, or those who may edit such messages to make them more or less false, utilising the attributes of digital messages. These possibilities complicate any later attempts at verification by message recipients (Torres et al., 2018) or determining potential criminal responsibility. From another perspective, sources could be different entities such as individuals, organisations, or governments (Wardle, 2018b), who greatly differ in their access to resources, capabilities, and motivations to engage in the production and circulation of false messages. Sources can also be humans, bots (e.g. Twitter bots), or cyborgs (human-bot hybrids), which differ in how they create false messages (e.g. frequency, regularity, content), and such differences can help in identifying such messages and their sources (Chu et al., 2012).

Sources also differ in their motives, that is, their intents. In addition to those who created and circulated false messages with ideological motives to defame and defeat the rival camp or to demonstrate their producers’ political orientation and identities, a large number of false news articles circulated during the 2016 US election cycle was reportedly generated by several residents of a small village in the Republic of North Macedonia, primarily for economic motives (Bakir and McStay, 2018; Tandoc et al., 2018). As another example, the infamous ‘Pizzagate’ conspiracy theory was in part fuelled by false messages generated by foreign agents seeking to sow confusion and undermine the US democratic institutions and processes (Robb, 2017). In the context of false messages, this means that some types of content (e.g. completely falsified information vs half-truth) or structures (e.g. news vs memes) might be more or less conducive to political or monetary gains, and that in their effort to mislead, producers of false messages may discover new or improved content types or structures that are more conducive to their desired goals. Initial evidence of such evolution has been identified in false messages related to the 2020 US presidential elections, compared to those circulated during the 2016 elections (Scott and Overly, 2020).

In a previous section, we introduced content and structure as the two important aspects of digital messages. In addition, we explained that the content of false messages comprised misinformation, disinformation, or malinformation or a combination of these (and possibly some true information) and that these differ in their facticity and the intent behind them. Here we add that content, structure, and intent originate from the source, as they design and encode their messages (McCornack, 1992; Shannon and Weaver, 1949). An intelligent source will choose the content and structure and encode the message so that it conveys a specific meaning to the recipient. However, while the source may have some control over how the message is received and interpreted, for example, by framing the message in a specific way (Maheswaran and Meyers-Levy, 1990; White et al., 2011), interpretation and meaning-making on the recipient’s side are guided and bounded by the recipient’s own individual characteristics and social settings.

Recipient

Recipients are the other major human actors in the social network of false messages. Once a message is received by the recipient, it is interpreted and conveys an import to the recipient. Import is the meaning the message conveys to the recipient’s mind (Mingers and Standing, 2018). Is this the same meaning that the source intended to communicate? Several factors influence the import conveyed to the recipients, including both their individual characteristics and their social settings. For example, a person with higher levels of information and media literacy will perhaps interpret a false message with more caution (Vraga and Tully, 2021) and will likely assign a different import (meaning) to the message. In addition, extant research on false news suggests that recipients will spend varying levels of mental effort and scrutiny in evaluating and interpreting a message, depending on how much the message resonates with their existing beliefs, a phenomenon known as confirmation bias (Moravec et al., 2019, 2020). Individuals will also differ in their emotional states, which have been demonstrated to impact their interpretation of false messages (Scheufele and Krause, 2019; Weeks, 2015). Earlier, we also gave the example of a false message written in a language that the recipient did not understand. These examples all demonstrate that for meaning to arise from a syntactic object, the recipient has to interpret the syntax based on their social frames of reference and under the influence of their individual characteristics. For the communication of meaning to occur, there must be some overlap between these social and individual contexts of sources and recipients (Mingers and Willcocks, 2014, 2017).

The four attributes of false digital messages and the way recipients utilise them also have a significant influence on the process of meaning generation and communication. As we have explained throughout this article, these attributes render such messages more malleable by allowing various actors to easily manipulate the content and structure of these messages. Some of these manipulations could change the original meaning of the message. For example, a neutral image may be transformed into a pointed racist message by adding a line of text or by editing the image itself, or through comments and other interactions by social media users. Several such transformations may occur before the message reaches its final recipient, with each transformation adding a new layer of meaning to the message. This is why, unlike more traditional media audiences, those on social media platforms are more accurately thought of as active recipients (Hermida, 2011). They do not just read a (false) message, but rather, leveraging the editability, openness, and particularly interactivity attributes of such messages, as well as the authoring and networking capabilities of social media, they actively contribute to the content, structure, and propagation of such messages. In addition, because these recipients have some degree of control over the content they see, through features such as a customisable newsfeed and the choice to connect to like-minded people on social media, they might perceive the source of false messages as being themselves, making them evaluate and interpret such messages less critically (Kang and Sundar, 2016; Sundar, 2016).

Finally, social settings play an important role in users’ interpretation of false messages. For example, research shows that in highly polarised societies, such as those dealing with national crises and those with strong extremist communities, false messages have more currency (Humprecht, 2019), suggesting that users are more likely to accept such messages. After the recipients receive and interpret a message, they may decide to disregard the message or engage with the message in various forms. These behaviours potentially lead to different types of outcomes.

Other actors

Before discussing and concluding our arguments, here we briefly highlight some other actors that are part of the social context of false messages. First, a recent survey has found that 62% of US adults get their news from social media (Allcott and Gentzkow, 2017), and a study of Canadian news consumers found similar results (Hermida et al., 2012). This heavy reliance on social media for information has resulted in a diminishing of the traditional role of media as information gatekeepers. Conventional media and professional journalists were often regarded as nonpartisan objective gatekeepers of information flow to the public (Lasorsa et al., 2012), but this role has been challenged by the authoring, networking, and filtering affordances of social media (Bozdag, 2013; Bro and Wallberg, 2014). We have moved from relying on professional journalists for the gatekeeping and filtering of information to algorithmic gatekeeping (Bozdag, 2013) and audience gatekeeping (Kwon et al., 2012), that is, ‘audience members providing information to each other about their favored news items’ (Shoemaker et al., 2010: 61). This makes it easier for small but dedicated groups of users or automated software agents (such as Twitter bots) to influence the flow of messages on social media platforms. Furthermore, studies have found that conventional professional journalists themselves are adapting to norms of news dissemination on these platforms, such as expressing their personal opinions more openly and sharing user-generated content, which is generally in conflict with norms of conventional journalism, such as bipartisanship, neutrality, and professionalism (Lasorsa et al., 2012). This shift in roles and influence dynamics is enabled by both the authoring and networking capabilities of social media as well as the attributes of digital messages that provide ordinary people with possibilities previously in the realm of professional journalism.

Concerns about the threats and consequences of pervasive false message circulation on social media have given rise to a new type of actor, generally referred to as fact-checkers. However, scholars have differentiated between fact-checking, verification, and source-checking (Wardle, 2018b). Fact-checking refers to ex post efforts to evaluate claims made in public messages, whereas verification refers to ex ante evaluation of the veracity of messages before sending them to the recipient. Finally, source-checking is the intersection of fact-checking and verification, exemplified by Facebook’s ‘About this Website’ feature. These efforts are complicated by the editability, openness, interactivity, and distributedness of false messages, as well as the authoring and networking capabilities of social media. For example, as we explained, editability and openness, in combination with the authoring capabilities of social media, allow for truthful content to be easily deprived of its contextual information and combined with and incorporated into other content, making it difficult to evaluate the facticity of this new content. In addition, fact-checking efforts may be less effective in the face of a large number of users (perhaps organised for this specific purpose) interacting with a message by liking, sharing, or adding supportive comments. Moreover, given the networking capabilities of social media, the propagation of false messages will widely outpace fact-checking efforts.

False message outcomes

The outcomes of false messages can be studied at the individual level (e.g. a change in beliefs, attitudes, or behaviours) and/or similar outcomes at the group, organisational, and societal levels (Mingers and Standing, 2018). Furthermore, we may consider the outcomes of false messages in the short and long terms, as well as in the context of specific events and crises (Oh et al., 2013; Vosoughi et al., 2018).

Regarding individual-level outcomes, such as change in beliefs and other cognitive states, some empirical evidence suggests that false political news does not change recipients’ views, as their cognitive biases will prevent them from believing disconfirming stories (e.g. Moravec et al., 2019). However, this might be specific to the highly polarised domain of politics, as research shows that in less polarised areas such as health, it is possible to correct misinformation (e.g. Bode and Vraga, 2018). The individual-level outcomes of false messages may also manifest in the form of recipients sharing such messages on social media without necessarily believing or even evaluating them. Research has demonstrated that individuals share news stories on social media because it gratifies their need for socialising and influence (Lee and Ma, 2012; Oeldorf-Hirsch and Sundar, 2015). In this regard, the sensational nature of some false messages (Mourão and Robertson, 2019), as well as the hedonic mindset with which individuals use social media, may make it more rewarding to share such messages.

At the group level, an outcome of interest is the formation of echo-chambers and filter bubbles on social media. These are ‘information-limiting environments’ that ‘constrain the information sources that individuals choose to consume, shielding them from opinion-challenging information and encouraging them to adopt more extreme viewpoints’ (Kitchens et al., 2020: 2). Research on false news at the organisational level of analysis is scant (Bernard et al., 2019), yet organisations are concerned about the consequences of this phenomenon for their reputation and bottom line (Ipsos, 2018). Surprisingly, there is also a dearth of research on the societal outcomes of false messages, although the proliferation of these messages may have very destructive consequences for societies (Bernard et al., 2019). However, extant research on media impacts suggests some possible insights into such outcomes through mechanisms such as agenda-setting and priming (Scheufele and Tewksbury, 2007). Agenda-setting theory (McCombs and Shaw, 1972) explains how media set the agenda for public discussions by choosing to focus on certain issues (Weaver, 2007). The rise of social media has raised the possibility of reverse agenda-setting, that is, public discussions on social media setting the agenda for traditional mass media conversations (Neuman et al., 2014). More recent research on the agenda-setting power of social media (e.g. Feezell, 2018; Neuman et al., 2014) and fake news, more specifically (e.g. Guo and Vargo, 2020), reveals complex and reciprocating agenda-setting processes between traditional and social media platforms. That is to say, on some issues, social media discussions, including those invoked by false messages, set the discussion agenda for traditional media and vice versa, for which the exact reasons are not yet well understood (Neuman et al., 2014).
Closely related to agenda-setting, priming in communication studies refers to changes in the standards that the recipients of messages use to make evaluations of those discussed in the messages (Iyengar and Kinder, 1987). For example, pervasive circulation of false messages that deny anthropogenic climate change or diminish its impact on social media echo-chambers might prime their recipients to negatively evaluate any public or business leaders who engage in pro-environmental speech or behaviour.

Discussion and future research directions

In this article, we strove to clarify some of the conceptual clutter and confusion around ‘fake news’ and to situate it within a wider social context. We addressed the first issue by providing an overview of some of the existing terminology and highlighting its strengths and weaknesses. In this, we were motivated by recent calls from researchers, practitioners, and policy makers alike for more accurate terminology and conceptualisation of fake news (Habgood-Coote, 2019; House of Commons Digital, Culture, Media and Sport Committee, 2019; Oremus, 2017; Wardle, 2018b). We argued against the use of the term fake news because of concerns with its conceptual clarity, as a result of its use in prior scholarly work with a multitude of meanings, its current, politically loaded and controversial use in the vernacular, and its inadequacy to cover the entire range of inter-related falsehoods currently circulating on the Internet. We then laid out our own concept of a false digital message, a digital object comprised of content arranged in an appropriate structure (Faulkner and Runde, 2013, 2019) and characterised by editability, openness, interactivity, and distributedness (Kallinikos et al., 2013). From this perspective, we argue that ‘fake news’ represents one type of false digital message where false content is structured as news, and we accordingly use the phrase false news to refer to this type of false message. Similarly, for other types of false messages, the appropriate terminology should be used based on their content and structure (e.g. a false meme, a false viral video clip).

We addressed our second goal by delineating the social context of false messages, consisting of human actors and digital objects involved in the creation, propagation, and mitigation of false messages, and discussed the relationship between these objects and actors. Specifically, we examined the important role of social media capabilities and their potent enmeshment with the four digital attributes of false messages, in the creation and propagation of these messages. We further discussed some of the more important characteristics of sources and recipients of false messages, as well as the possible outcomes of engaging with and consuming such messages. As such, this article makes several important contributions and uncovers interesting opportunities for future research. Naturally, our work also comes with a number of limitations that provide additional opportunities for future research.

First, by closely examining and disentangling the conceptual clutter around fake news, we lay the foundation for rigorous research that can lead to a cumulative body of knowledge in this area. Future research can build on this foundation in several ways. For example, future research can translate our concept of false messages into more concrete constructs required for empirical research (Barki, 2008; Suddaby, 2010) and further develop the nomological network of these constructs, as well as appropriate scales and instruments for measuring these constructs (MacKenzie et al., 2011). Second, our conceptualisation of false messages as digital objects situates the research on false news within one of the core and burgeoning areas of IS research, namely, digitalisation (e.g. Faulkner and Runde, 2019; Kallinikos et al., 2013; Yoo et al., 2010, 2012), and underlines its relevance for IS research. In addition, by exploring the material and social aspects of false messages, and situating them within a communication network, this conceptualisation opens the door for adoption of emerging theoretical and methodological perspectives, such as sociomateriality (e.g. Burrell, 2012; Cooren et al., 2012; Leonardi, 2012) and semiotics (Mingers and Willcocks, 2014, 2017), to this stream of research, which could produce fresh insights into the topic and open up fruitful avenues for further research.

Third, our discussion of content, structure, and the four attributes of editability, openness, interactivity, and distributedness coincides with emerging and potentially game-changing developments in the media industry, as media organisations experiment ‘with a wide range of innovative formats, both super-short, sharable, and often ephemeral content distributed via social media and messaging apps and high production value fully immersive virtual reality experiences intended to have a much longer shelf life’ (Anderson, 2017: 26). Yet our exploration of these developments is limited by the scope and space limitations of a single research paper as well as a dearth of empirical research on these issues. Future research opportunities abound and would greatly contribute to the emerging body of research on false messages.

Fourth, we discussed how different types of false information (misinformation, disinformation, and malinformation) are defined based on the facticity of information and the intent of the source. There are many open questions in this area as well. For example, despite the fact that most fact-checking websites determine the facticity of messages on a nuanced scale (e.g. PolitiFact’s Truth-O-Meter has six levels), extant research on false news has paid little attention to variations in information facticity and its implications for the production, propagation, and outcomes of false messages. In addition, there is a dearth of research on possible techniques for determining or inferring intents behind false messages, despite the significance of such judgements for accountability and law enforcement. Foundations of such research exist in areas such as deception detection where researchers have studied the use of linguistic cues and non-verbal behaviour to detect deceptive intent by both human evaluators and artificial intelligence techniques (e.g. Ekman, 2001; George et al., 2018; Ho et al., 2016; Kumar et al., 2019; Nunamaker et al., 2016; Wu and Liu, 2020), and future research can build on this foundation to advance our knowledge in this area.

Fifth, our extended model diagrammed in Figure 3 uncovers several opportunities for future IS research on actors and digital objects involved in the production and communication of false messages. For example, despite the fact that social media and their features represent an ostensive example of the highly desired and elusive IT artefact in IS research (Benbasat and Zmud, 2003; Orlikowski and Iacono, 2001), little IS research thus far has closely examined their capabilities and affordances for false news creation and propagation or has developed design principles to mitigate the potential abuse of such capabilities for the creation and propagation of false messages. The design science tradition of IS research (e.g. Hevner et al., 2004; McKay et al., 2012; Peffers et al., 2007; Sein et al., 2011) as well as the emerging IS research on digital platforms and ecosystems (e.g. Barrett et al., 2016; de Reuver et al., 2018; Tiwana et al., 2010) appears to be particularly relevant to this area.

Many open questions remain on other components outlined in Figure 3, including source, recipient, and other actors and digital objects involved in the creation, propagation, and mitigation of false messages, as well as their outcomes. For example, IS research thus far has paid little attention to the sources of false messages, particularly organisational and governmental sources. Examples of this type of research exist in other areas (e.g. Allcott and Gentzkow, 2017; Mejias and Vokuev, 2017) and can provide foundations for future IS research. On the recipient side, information and media literacy interventions (e.g. Vraga et al., 2020; Vraga and Tully, 2021) represent another avenue for future research. Concerning the outcomes, while some research findings suggest that initial concerns about the influence of false messages on US voters might have been exaggerated (e.g. Moravec et al., 2019), we need to examine how the barrage of false messages over the long term may impact outcomes such as trust, reputation, and social capital for individuals, organisations, and societies (Bernard et al., 2019). In addition, researchers need to urgently examine conditions under which such messages may lead people to take drastic actions, such as the ‘Pizzagate’ incident (Tandoc et al., 2018) and the Indian WhatsApp lynchings (Arun, 2019). Finally, given that algorithms are playing an ever-growing role in the creation, propagation, and mitigation of false messages, we see significant opportunities in studying their design, use, and outcomes in the context of false messages. An interesting and emerging example is the combination of algorithmic and behavioural interventions, such as those that use algorithms to generate advice for news consumers on the veracity of news (e.g. Horne et al., 2020). 
Many other research opportunities exist in examining other actors and objects highlighted in Figure 3, including fact-checking, source-checking, and verification, and the influence of traditional media and professional journalists.

In conclusion, the general public, scholars, businesses, and governments across the globe appear to be united in their concern about ‘fake news’. However, they attach this label to different phenomena, in accordance with their views, interests, and agendas. This has led to much confusion and abuse, to the point of becoming, some would argue, a distracting hysteria. In this environment, one of the main contributions of scholarly work is to help clarify the confusion and set the agenda for public and scholarly debate. We took an initial step towards this goal and invite our peers to help move this work forward.

Footnotes

Acknowledgements

The authors would like to thank Jane Webster, Indrani Karmakar, and Plínio Silva de Garcia for their contributions to an earlier version of this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.