Abstract

In online environments, listening often involves pausing or replaying the recording as needed. Previous research indicates that giving test-takers control over the listening input could improve the measurement accuracy of listening assessment. Self-pacing may also support the second language (L2) comprehension processes of test-takers with specific learning difficulties (SpLDs), more specifically of learners with reading-related learning difficulties, who might have slower processing speed and limited working memory capacity. Our study examined how L1 literacy skills influence L2 listening performance in the standard single-listening and self-paced administration modes of the listening section of the Test of English as a Foreign Language (TOEFL) Junior Standard test. In a counterbalanced design, 139 Austrian learners of English completed 15 items in a standard single-listening condition and another 15 in a self-paced condition. L1 literacy skills were assessed with standardized tests of reading, non-word reading, naming speed, and non-word repetition. Generalized Linear Mixed-Effects Modelling revealed that self-pacing neither had a statistically significant effect on listening scores nor boosted the performance of test-takers with lower L1 literacy scores indicative of reading-related SpLDs. The results indicate that young test-takers might require training in self-pacing or that self-paced conditions may need to be implemented carefully when they are offered to candidates with SpLDs.

Just as in one’s first language (L1), authentic listening in a second language (L2) increasingly involves online materials, which listeners can pause and rewind at will to aid comprehension. In naturalistic conversations, listeners might also stop the interlocutor and ask them to repeat or clarify the message. Therefore, except for live formal monologues (e.g., lectures, performances), listening rarely involves the delivery of a single uninterrupted text. This has led to growing interest in L2 assessment research in how self-pacing affects the validity and reliability of listening tests (e.g., Goodwin, 2017).

Self-pacing of the listening input might also benefit L2 learners with specific learning difficulties (SpLDs), including those with dyslexia, who often have smaller working memory (WM) capacity and slower linguistic processing speed and tend to experience challenges in understanding not only written but also spoken L2 input (e.g., Geva & Massey-Garrison, 2013; Kormos et al., 2019). Individuals with SpLDs, particularly those with reading-related difficulties, tend to score below their peers on tests of low-level L1 skills such as word-level decoding, reading and word-naming speed, and phonological awareness (for an overview, see Hale et al., 2010). These abilities form a continuum, so cut-off points below which an individual is identified as having an SpLD are problematic (Fletcher et al., 2014). Consequently, information on learners’ L1 skills may offer useful insights for identifying learners with SpLDs, particularly in contexts such as Austria, where official diagnosis systems for SpLDs are limited. We therefore measured a range of L1 skills indicative of underlying SpLDs and treated them as continuous variables rather than relying on an arbitrary and potentially flawed categorical division (i.e., dyslexic vs. non-dyslexic).

Our aim was to gain insights into how lower L1 skills affect L2 listening performance in the standard single-listening and self-paced administration modes of two sets of test items based on the Test of English as a Foreign Language (TOEFL) Junior Standard test. Comparing the performance of young test-takers with different L1 literacy profiles in these two administration conditions allows us to draw conclusions about whether self-paced listening might be a viable adjustment when assessing the L2 listening abilities of young test-takers whose profile is indicative of SpLDs.

Our research examines how the opportunity to stop and rewind the oral input affects the listening comprehension of children with different L1 literacy skills, and whether the benefits of this form of test-taker control vary with participants’ L1 literacy skills. We report the findings of a study conducted with young Austrian learners of English who took the listening component of the TOEFL Junior Standard test and whose phonological short-term memory, naming speed, and word- and text-level decoding were measured with a standardized test battery for German L1 speakers. In a counterbalanced design, participants completed one half of the test in a single-listening condition and the other half in a self-paced condition. We used Generalized Linear Mixed-Effects Modelling (GLMM) to investigate the effects of administration mode and L1 literacy variables while accounting for variance due to random differences between students, testlets, and test items.

L2 listening competence among young learners and the role of low-level L1 skills in L2 listening performance

The real-time cognitive processing demands of listening make it particularly challenging for young or beginning L2 learners, as listening involves multiple simultaneous processes and places high demands on the listener’s WM and attentional resources. This complex interplay of mental activities makes listening demanding for most L2 learners, regardless of their age or cognitive characteristics. However, learners with SpLDs, and more specifically those with reading-related learning difficulties, are likely to face additional difficulties during L2 listening. They might find segmentation and parsing challenging due to problems with phoneme identification and limited vocabulary knowledge, and they might not be able to transfer segmentation strategies from their first language successfully (Goh & Vandergrift, 2021; Wagner, 2022). Problems at this foundational layer of listening comprehension might then make it difficult to hold segmented units in their (often limited) WM in order to combine words, identify grammatical relations between elements (Field, 2008), form and update larger propositions, and continuously build a representation of an utterance without forgetting what they heard or missing information in the input (Goh, 2000).

A central tenet of the current study is that the underlying mechanisms that affect learners’ L1 literacy acquisition also affect their L2 development. Both Geva and Ryan’s (1993) common underlying processes framework and Sparks and Ganschow’s (1993) Linguistic Coding Differences Hypothesis explain how inter-individual differences in cognitive variables that influence L1 skills may also affect L2 development. Several studies have also shown that L1 tasks measuring phonological awareness, rapid automatized naming, and timed word reading predict L2 reading skills (e.g., Alderson et al., 2015; Kormos et al., 2019). The hypothesis that L1 literacy skills affect L2 listening is also supported by Grainger and Ziegler’s (2011) finding that orthographic and phonological processing skills are strongly interrelated and that listeners and readers draw on both phonological and orthographic processing when decoding spoken or written language. Beyond these cognitive variables, a number of studies confirm that learners with SpLDs experience challenges with L2 reading comprehension (Alderson et al., 2015; Kormos & Mikó, 2010; Kormos & Ratajczak, 2019). However, only a few studies have examined the L2 oral text comprehension abilities of learners with SpLDs, and they have produced inconclusive results.

In a large-scale study of young learners (10–11 years old, or Grade 5 in the Canadian school system), Geva and Massey-Garrison (2013) compared the language skills of monolingual learners (n = 50) and English as a second language (ESL) learners (n = 100) with different reading abilities. Three reading measures (a pseudoword reading test, a word reading test, and a reading comprehension test) were combined to group the participants into typical readers, poor decoders, and poor comprehenders. With the exception of receptive vocabulary, Geva and Massey-Garrison found no difference between monolingual and ESL learners in the cognitive and language measures they examined. However, poor decoders and poor comprehenders were significantly weaker than typical readers in a number of cognitive functions (nonverbal reasoning, WM, rapid automatized naming, and phonological awareness) and scored lower on receptive oral language measures (receptive vocabulary, syntax, and listening comprehension). The analyses also revealed differences between the two groups of weak readers: poor decoders were significantly weaker than poor comprehenders in rapid automatized naming and phonological awareness, while poor comprehenders were significantly weaker than poor decoders in inferential listening comprehension. As Geva and Massey-Garrison (2013) concluded, these findings suggest that weak literacy skills, regardless of whether learners are L1 or L2 speakers of the language, predict weak listening skills.

Kormos et al.’s (2019) research also found a significant relationship between low-level L1 literacy skills and the L2 listening scores of young Slovenian learners of English. L1 timed reading and L1 non-word reading predicted L2 reading performance, while L1 dictation, dyslexia status, and L1 timed reading predicted L2 listening performance. Moreover, their findings suggest that students without an official dyslexia identification may also experience challenges with L2 reading and listening. The findings of Helland and Kaasa’s (2005) study with Norwegian learners of English, however, were less conclusive: their data showed no significant difference between the controls and the better-performing dyslexic children on several L2 receptive (oral sentence comprehension) and productive (sentence production) language measures.

Given these mixed findings, further research in this area is warranted. Previous studies vary considerably in how they operationalize the listening construct (shorter passages or sentence comprehension) and in how they control or vary the listening conditions. The goal of this research is therefore to provide insights into two issues: the relationship between low-level L1 literacy skills (a key indicator of SpLDs such as dyslexia) and L2 listening comprehension, and the novel technological opportunities that self-pacing might offer for accommodating young learners with SpLDs in L2 listening assessment.

Assessment accommodations as a means of striving for fairness and validity

As educational opportunities around the world become more accessible to learners with various needs, the number of individuals with SpLDs taking language proficiency tests is increasing (Tsagari & Spanoudis, 2013). It is therefore essential that neither test design nor test implementation procedures create an unfair barrier for these learners. Tests need to provide accurate information about L2 learners’ competences, and their procedures therefore need to be valid and fair in enabling learners to adequately display their competences in the respective skill areas (Kormos, 2017). The Standards for Educational and Psychological Testing (American Educational Research Association [AERA], American Psychological Association [APA], & National Council on Measurement in Education [NCME], 2014) also state that “Test developers are responsible for developing tests that measure the intended construct and for minimising the potential for tests being affected by construct-irrelevant [characteristics] such as [. . .], cognitive, cultural, physical or other characteristics” (p. 64), thereby highlighting the importance and interrelatedness of validity and test fairness (Kunnan, 2004).

Regarding SpLDs, one of the most important aspects of fairness concerns appropriate testing conditions. Tests need to be administered under circumstances that allow “students to participate in [. . .] assessments in a way that assess abilities rather than disabilities” (Lehr & Thurlow, 2003, p. 2). The Standards (AERA et al., 2014) list six types of test modifications, four of which can be considered special arrangements that might not affect the construct being tested: modifying the presentation format, the response format, the timing, and the test setting. Special arrangements that might support candidates with SpLDs include extended time (e.g., Kormos & Ratajczak, 2019), frequent breaks, and reading the text aloud to candidates in reading tests (Košak-Babuder et al., 2019). For listening tests, however, evidence on which special arrangements might offer useful adjustments is scarce. For example, little is known about whether reading out instructions or presenting test items bimodally (in written and spoken form) might benefit test-takers with SpLDs.

One key issue in the discussion of accommodations is how any special arrangement might affect validity. By definition, accommodations should not change the nature of the construct being assessed, but they should differentially affect the performance of a student or group in comparison to a peer group (Hansen et al., 2005). To explore how accommodations influence test-taker performance, studies need to compare the performance of L2 learners with and without SpLDs under both standard and accommodated testing conditions. Such comparisons can determine whether, and to what extent, examinees without SpLDs also benefit from the accommodation (Phillips, 1994), or whether a special arrangement gives a differential boost to test-takers with SpLDs and would thus make a viable accommodation (Pitoniak & Royer, 2001). Adjustments that do not impinge on validity should result in overall score gains for learners with SpLDs but in no or limited gains for those without SpLDs (Zuriff, 2000). If everyone gains from a testing accommodation, candidates may not be able to display the best of their abilities under standard testing conditions in the first place, and validity claims might hence be compromised for the general test-taker population.
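The differential-boost logic described above can be made concrete as a difference in gains between groups. The sketch below is illustrative only: the mean scores, the function names, and the tolerance of 0.02 used to decide whether a gain counts as negligible are hypothetical assumptions, not values from this study or from the cited authors.

```python
def gains(spld_standard, spld_accommodated, non_spld_standard, non_spld_accommodated):
    """Return (gain for SpLD group, gain for non-SpLD group, differential boost).

    Each argument is a hypothetical mean score (e.g., proportion correct)
    under the standard or the accommodated condition.
    """
    gain_spld = spld_accommodated - spld_standard
    gain_non_spld = non_spld_accommodated - non_spld_standard
    # The differential boost is the difference between the two gains,
    # i.e., the group-by-condition interaction.
    return gain_spld, gain_non_spld, gain_spld - gain_non_spld


def classify(gain_spld, gain_non_spld, tol=0.02):
    """Label a pattern of gains; `tol` is an arbitrary, hypothetical threshold."""
    if gain_spld > tol and abs(gain_non_spld) <= tol:
        # Gains only for the SpLD group, in line with Zuriff's (2000) criterion.
        return "differential boost"
    if gain_spld > tol and gain_non_spld > tol:
        # Everyone gains: standard conditions may themselves limit performance.
        return "general boost"
    return "no clear benefit"


if __name__ == "__main__":
    g_spld, g_non, boost = gains(0.50, 0.62, 0.70, 0.71)
    print(round(g_spld, 2), round(g_non, 2), round(boost, 2))
    print(classify(g_spld, g_non))
```

In this framing, a "general boost" corresponds to the case in which everyone gains, which is exactly the pattern that raises validity concerns for the general test-taker population.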

Although some research has investigated the effect of special arrangements for young L2 learners with SpLDs on reading comprehension scores (e.g., Kormos & Ratajczak, 2019; Košak-Babuder et al., 2019), relatively little is known about how educators and language assessment specialists can ensure that young test-takers with SpLDs have a fair chance to display their abilities in listening tests. In addition, special arrangements for young learners in particular are under-researched, which might be due to the inherent complexities of investigating this group of test-takers. Research with children needs to take into account their shorter attention span, their not yet fully developed self-regulation abilities, and the fact that they are still undergoing cognitive growth (McKay, 2006). Young learners with SpLDs may face even greater challenges in these areas of executive functioning and cognitive development, which makes it crucial to identify appropriate special arrangements for this group of test-takers.

Self-paced listening

Traditional listening tests are generally administrator-paced and group-administered: an examiner plays a recording once or twice to a group of test-takers (Goodwin, 2017). Control over the input text thus lies with the administrator, and listening is by definition linear (Lawless & Brown, 1997). Recent technological developments and new types of listening texts make self-paced listening possible. Goodwin (2017), for example, recommended “putting the control (playing, pausing, and audio position) of the input in the hands of examinees” (p. 1). The term self-paced might suggest that test-takers can not only pause and replay but also regulate the speed of delivery. However, definitions and operationalizations of self-paced listening usually do not include control over the speed of delivery and refer only to pausing, unpausing, and replaying options (see Goodwin, 2017).

Nowadays, there is a strong authenticity argument for self-paced listening tests. Self-pacing options are available in authentic listening situations and reflect the non-linear nature of digital listening (e.g., Goodwin, 2017; Gruba & Suvorov, 2019; Holzknecht, 2019). Holzknecht (2019) posited that new media and devices, such as podcasts, audio books, online lectures, recorded (online) conversations, and videos, offer the possibility to pause and rewind. Beyond calls for more authenticity and multimodality in listening exams (e.g., Aryadoust, 2022; Ockey & Wagner, 2018), control functions such as pause and rewind have also been reported as a form of learner assistance in computer-assisted language learning research (Cross, 2017; Lawless & Brown, 1997). However, the factors that underpin self-paced listening, and whether different test-takers benefit from these support options, are still poorly understood.

Roussel (2011) found that while pauses in a self-paced condition generally improved comprehension, individual differences appeared to be large. Lower-performing students seemed to pause more frequently, for longer, and at less predictable points, for instance between passages and units of meaning. In a small-scale study, Middelkoop-Stijsiger (2018) also found that time spent on task, which is indicative of pausing time, was negatively related to comprehension. Goodwin (2017), however, found no correlation between time spent on task and listening scores.

While item difficulty generally tends to decrease when test-takers can listen more than once (Field, 2015; Holzknecht, 2019; Ruhm et al., 2016), the effect of replay in self-paced listening on listening scores remains unclear. In a small-scale study (n = 29), Roussel (2011) showed that learners of L2 German aged 14 to 16 scored higher in a self-paced condition than in administrator-paced single-play or double-play conditions. Goodwin’s (2017) more comprehensive study, whose participants (n = 100) were in their mid-20s, compared performance on an administrator-paced single-play listening test with performance in self-paced conditions with and without a time limit. The study corroborated the tendency for self-pacing to make items slightly easier, although the difference did not reach statistical significance. A many-facet Rasch analysis also revealed that the items discriminated well, often even better in the self-paced condition without a time limit than in the single-play condition. Stimulated recall interviews (n = 8) based on screen captures of self-pacing behaviour on 22 items showed no systematic relationship between self-pacing and listening proficiency level. Goodwin concluded that self-paced listening tests appear to be valid and reliable instruments that can improve the accuracy of measurement.

In sum, little is known about how young L2 learners make use of the self-pacing mode and who might benefit from it. Hence, there is a need to investigate self-paced listening as a potential special arrangement for younger students with SpLDs such as dyslexia so that test providers and classroom teachers can arrive at evidence-based decisions as to how much navigational freedom they should grant their test-takers.

Research question and hypotheses

Based on a review of the literature, we designed a study to investigate the following research question: How does the self-paced administration mode affect the test scores of young L2 learners with different L1 literacy skills?

We formulated three hypotheses, which we tested using GLMM:

H1. Self-paced administration will improve performance.

H2. Lower L1 literacy skills will be associated with lower L2 listening scores.

H3. There will be a statistically significant interaction between L1 literacy skills and listening mode. Learners with lower L1 literacy skills will benefit more from self-paced listening than higher L1 literacy learners.

Method

The dependent variable was students’ performance on the TOEFL Junior Standard listening items, which were administered in two conditions (standard listening vs self-paced listening). The independent variables were the administration condition and four variables measuring L1 literacy skills.

Participants

The sample comprised 139 test-takers (mean age = 13.95, SD = 0.65; 45.32% female, 53.96% male). All participants were eighth-grade students from four lower-secondary schools in Austria. The language teaching curriculum for these schools in Austria is communicatively oriented, with the Common European Framework of Reference for Languages (CEFR) as its foundation (Council of Europe, 2001, 2020). At this point in their school careers, students are usually in their fifth year of formally learning English as a foreign language (mean years of formal English education = 5.20, SD = 1.64). The learning target for this grade is CEFR level A2. Learning difficulties are a relatively neglected issue in Austria, with much of the responsibility for dealing with them placed on individual teachers. Although schools are required to develop process-oriented plans for supporting students with SpLDs, in reality relatively little assistance is provided because inclusive policy recommendations are rather vague, particularly regarding assessment accommodations. The unsystematic identification of SpLDs and the often inadequate support for classroom learning and assessment are also due to the fact that educational policies and provision vary across Austria’s federal provinces.

To be included in the sample, participants had to be L1 speakers of German or use German as a home language. Of the 139 participants, 114 (82.01%) only used German at home, while 25 (17.99%) spoke German and at least one additional language at home. Turkish (n = 10, 40%), English (n = 5, 20%), and Arabic (n = 3, 12%) were the three most frequently listed additional languages.

Materials

Listening test

A research form of the TOEFL Junior Standard test was customized for the purposes of this study and used as a measure for L2 listening comprehension. The TOEFL Junior Standard test is designed to assess “the academic and social English-language proficiency of young students ages 11+” (Educational Testing Service [ETS], 2022b) in terms of their receptive skills (i.e., reading, listening, and vocabulary and grammar knowledge) with the option of including an additional speaking module. The listening section of the test typically contains 42 multiple-choice items with four answer options and takes 40 minutes to complete. The input is based on short recordings of monologues and dialogues which involve peers or adults in a school context and present situations that may take place during school lessons or within the wider context of school life in general. Sample questions of the TOEFL Junior Standard test can be accessed via the official website (ETS, 2022a).

The customized research form for the current study consisted of two equivalent sets of 15 items (Sets A and B), each comprising three discrete items (one item per recording) and three testlets (four items per recording). Educational Testing Service (ETS) designed the two sets as parallel forms in terms of item difficulty (based on extensive piloting and available live administration data), targeted listening sub-skills, number of monologues and dialogues, and formality of settings. The test was computer-delivered and presented in a counterbalanced design. Participants first completed either Set A or Set B in its original form (standard condition: single listening) and then completed the complementary set (B or A) in the modified, self-paced condition. Test Form A presented Set A in the standard condition and then Set B in the self-paced condition; Test Form B presented Set B in the standard condition and then Set A in the self-paced condition. Both test forms therefore contained 30 items in total.
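The set-by-condition structure of the two forms can be written down as a small data structure and checked mechanically. This is a minimal sketch; the dictionary layout and function names are hypothetical, and only the numbers (two sets of 15 items, each with three discrete items and three four-item testlets) come from the description above.

```python
# Hypothetical encoding of the two test forms: (item set, condition) in administration order.
FORMS = {
    "Form A": [("Set A", "standard"), ("Set B", "self-paced")],
    "Form B": [("Set B", "standard"), ("Set A", "self-paced")],
}

# Each set: three discrete items (one per recording) + three testlets of four items.
ITEMS_PER_SET = 3 * 1 + 3 * 4


def items_per_form(form):
    """Total number of items a participant answers on a given form."""
    return ITEMS_PER_SET * len(FORMS[form])


def set_condition_balanced(forms):
    """True if every set is paired with every condition on exactly one form."""
    pairs = [pair for blocks in forms.values() for pair in blocks]
    sets = {s for s, _ in pairs}
    conditions = {c for _, c in pairs}
    return all(pairs.count((s, c)) == 1 for s in sets for c in conditions)


def condition_order(form):
    """Conditions in the order they are administered on a form."""
    return [condition for _, condition in FORMS[form]]


if __name__ == "__main__":
    print(ITEMS_PER_SET, items_per_form("Form A"))
    print(set_condition_balanced(FORMS))
    print(condition_order("Form B"))
```

As `condition_order` makes visible, what is counterbalanced in this design is the assignment of item sets to conditions; on both forms the standard condition precedes the self-paced one.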

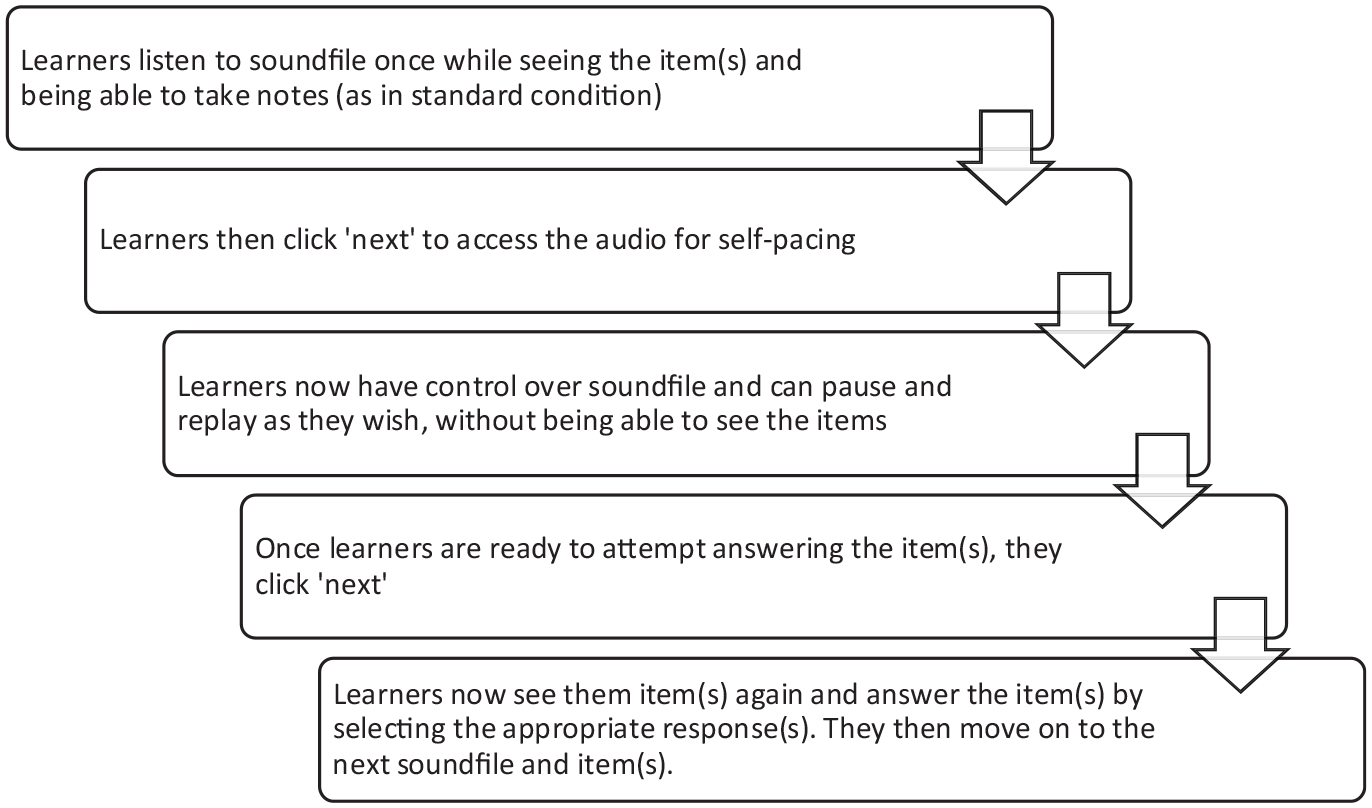

In the self-paced condition, participants first saw the items in their original format (single listening) and were then given control over an embedded media player. Thus, participants could choose for themselves whether to listen to the entire sound file or jump to specific sections. While participants had access to the media player controls, they could not see the items; only when they moved on to answering the questions did they see the items again (see flowchart in Figure 1). In the standard single-listening condition, the questions were visible during listening.

Figure 1. Flowchart of self-paced condition.

Hiding the items during self-paced listening allowed us to investigate the potential added benefit of self-pacing alone, without any effects that additional time for reading the items might have. Participants could take as much time as they needed in the self-paced condition. All items were scored dichotomously (1 = correct, 0 = incorrect). To ensure that participants understood how to respond to the test and use the media player, the customized research form included multimodal instructions and practice items for each item type (i.e., 1-question item or 4-question testlet) and condition (i.e., single-play vs self-paced).
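The dichotomous scoring rule can be sketched as follows. The item identifiers, answer keys, and responses are invented for illustration, and scoring an unanswered item as incorrect is an assumption of this sketch. Per-condition totals are only a descriptive summary, since the analysis reported under Data analysis models the item-level 0/1 outcomes directly.

```python
from collections import defaultdict


def score_item(response, key):
    """Dichotomous scoring: 1 for a correct answer, 0 otherwise.

    An unanswered item (None) is scored 0 (an assumption for this sketch).
    """
    return 1 if response is not None and response == key else 0


def totals_by_condition(responses, keys, conditions):
    """Sum item scores separately for each administration condition.

    responses: item id -> chosen option (missing if unanswered)
    keys: item id -> correct option
    conditions: item id -> "standard" or "self-paced"
    """
    totals = defaultdict(int)
    for item_id, key in keys.items():
        totals[conditions[item_id]] += score_item(responses.get(item_id), key)
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical items: A1 and A2 in the standard condition, B1 self-paced.
    keys = {"A1": "B", "A2": "C", "B1": "A"}
    conditions = {"A1": "standard", "A2": "standard", "B1": "self-paced"}
    responses = {"A1": "B", "A2": "D"}  # B1 left unanswered
    print(totals_by_condition(responses, keys, conditions))
```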

L1 literacy measures

The Zürcher Lesetest–II (ZLT-II; Petermann & Daseking, 2019) formed the basis for assessing participants’ L1 literacy skills. The ZLT-II was developed to identify and monitor reading difficulties associated with dyslexia in German-speaking children through a series of flexible components and has been normed for children aged 6–14. The fourth, completely revised edition of the ZLT-II includes seven subtests. We included the five components that tap into key predictors of dyslexia (reading accuracy, reading speed, naming speed, and phonological short-term memory; see also Verwimp et al., 2021) but excluded the two phonological awareness tasks because previous studies found ceiling effects on such tasks for the age group of our study (e.g., Kormos et al., 2019; Landerl et al., 2013). The ZLT-II was administered and scored individually in face-to-face sessions with each child.

Three tasks were used to tap into the dimensions of reading accuracy and speed of written text decoding (Petermann & Daseking, 2019): a word reading task, a non-word reading task, and a text reading task. The word reading task consisted of two sets (Sets 3 and 4 for this age group) of 16 two-to-six syllable words (total = 32 items). The non-word reading task comprised 15 two-to-five syllable words. The text reading task contained two short reading passages (Passages 5 and 6 for this age group). For all three tasks, participants were asked to read the words or texts out clearly and accurately while the researchers recorded the number of mistakes and overall time needed per set.

The non-word repetition task from the ZLT-II was used as a measure of phonological short-term memory, and the rapid naming task as a measure of rapid automatized naming (processing speed). The non-word repetition task contained five word lists (25 non-words in total) ranging from two to six syllables in length. To standardize test administration, we produced a recording of the non-words at a rhythm of one second per syllable, which was then played to the participants. The researchers recorded the number of errors and the longest syllable span that participants could recall correctly. The rapid naming task consisted of two parts. In the first part, participants named 25 images arranged on one sheet of paper, which were repetitions of the same five objects (sun, heart, glasses, car, and fish). In the second part, participants had to name 25 different images as quickly as possible. Researchers recorded the total time needed to name all images in each part.

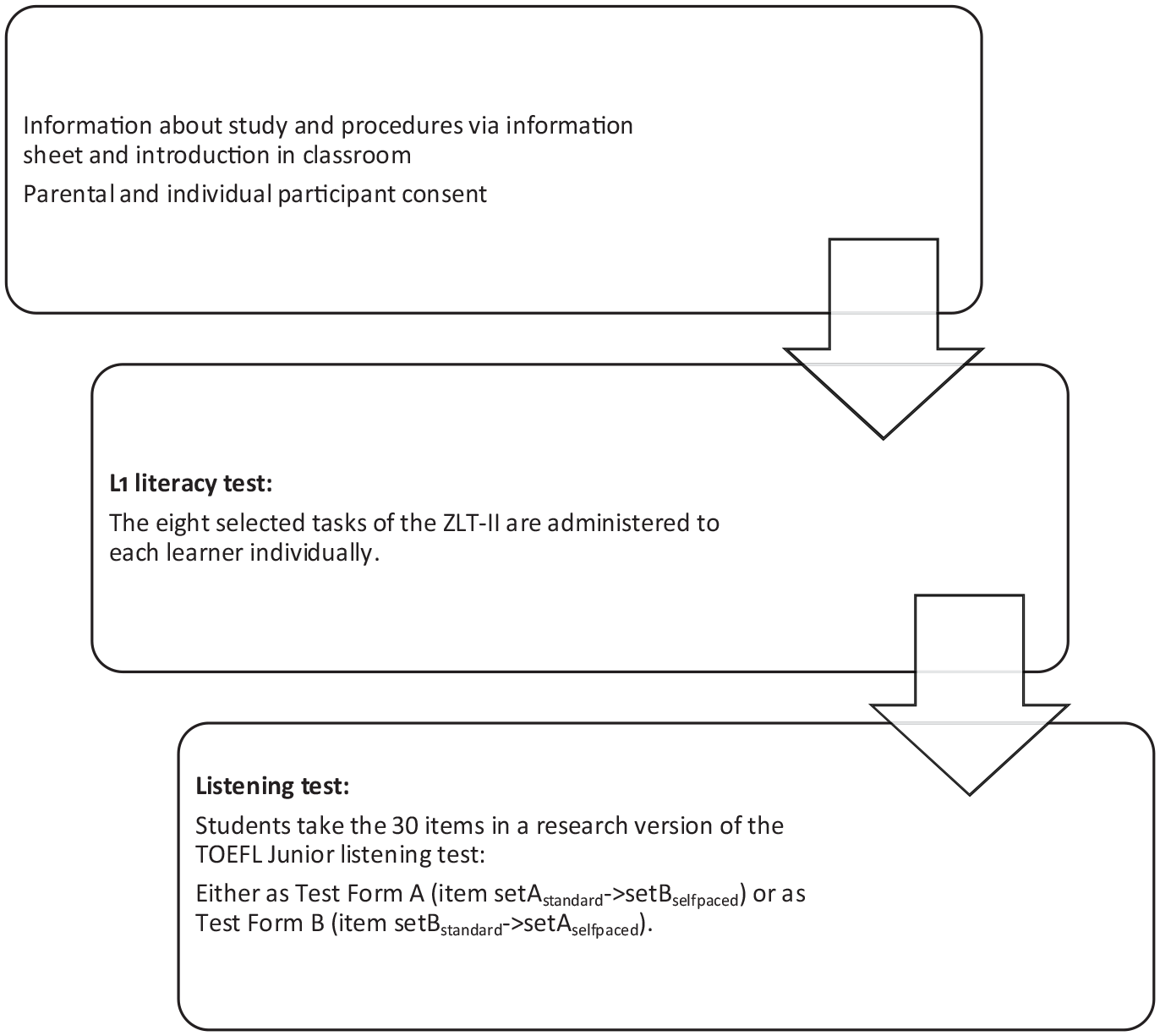

Procedures

The study was approved by the ethics committee of the Faculty of Teacher Education at the University of Innsbruck. Parents and all participating students provided informed consent prior to data collection. Data collection took place between the beginning of April and the beginning of June 2022 at four Austrian lower-secondary schools. After being introduced to the main goals of the research project, participants completed the ZLT-II and either Form A or Form B of the research version of the TOEFL Junior Standard test on their school premises. To avoid fatigue effects, participants completed only one component (either the ZLT-II or the listening test) per session.

The ZLT-II was administered individually by two trained research assistants and took approximately 15 minutes. One research assistant functioned as interlocutor and the second research assistant scored the participants’ performance. All research assistants (N = 6) were either graduates of a teacher training or language programme or working towards their degrees. Prior to data collection, the assistants were trained in a half-day, face-to-face workshop in how to administer and score the ZLT-II. The ZLT-II manual includes a thorough explanation of the targeted constructs and exhaustive guidelines on how to score participant performance. The training included activities such as scoring a recorded ZLT-II performance of a 13-year-old student that we produced specifically for training purposes. During the training session, it became clear that scoring some of the non-word repetition items can be challenging. To resolve this and ensure scoring reliability, all non-word repetition responses were recorded and scored post hoc by a senior researcher.

The listening test was administered to groups in the schools’ computer labs, with at least two research assistants present in the room for each administration. Participants received instructions in German and opportunities to practice before and during the administration of the listening test. In a 15-minute introduction at the beginning of the listening test session, the research assistants familiarized participants with the multiple-choice item formats. This presentation included several sample items and screenshots illustrating the self-pacing functions that would be available in the second half of the listening test. Following ETS guidelines for test administration, the research assistants also told participants that they could take notes if they wished and provided blank sheets of paper and pens for this purpose. Participants were further advised that the test would not penalize guessing and that they could choose the most likely answer even if they were not fully certain. Non-graded sample items and English instructions were also included in the listening test itself (see “Materials” section). On average, participants took approximately 40 minutes (M = 39.96, SD = 5.71) to complete the full listening test. A flowchart of the overall procedure is presented in Figure 2.

Figure 2. Flowchart of data collection procedure.

Data analysis

To test the hypotheses regarding the effects of the self-paced administration mode and L1 literacy skills on L2 listening comprehension, we fitted a generalized (logistic) linear mixed-effects model (GLMM) in R version 4.0.2 (R Core Team, 2020) using the lme4 package (Bates et al., 2015) to estimate the probability of a correct response to each L2 listening item. The outcome variable was the correctness of individual test items (correct [1] or incorrect [0]). GLMM was theoretically appropriate for this analysis because the item-level accuracy data followed a binomial distribution: for each item, the only possible outcomes were a correct or an incorrect response. We therefore needed to model the probability of answering a listening comprehension item correctly while taking random variation into account, which GLMMs allow. A further benefit of GLMM is that accounting for random variation between participants and test items helps keep the Type I error rate of the fixed-effect estimates low.
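For readers less familiar with logistic GLMMs, the sketch below shows how such a model turns a linear predictor into the probability of a correct response. It only evaluates the model equation for given parameter values and does not estimate anything (in R, estimation would be done with lme4's `glmer()` with `family = binomial`). The parameter values and function names are hypothetical, and the sketch assumes random intercepts only, for participants, testlets, and items nested within testlets.

```python
import math


def inv_logit(eta):
    """Logistic (inverse-logit) link: maps log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-eta))


def p_correct(beta0, u_participant, v_testlet, w_item):
    """P(correct) = inv_logit(beta0 + u_j + v_k + w_ik).

    beta0: fixed intercept (log-odds for an average participant and item)
    u_participant, v_testlet, w_item: random intercepts (deviations in log-odds)
    for participant j, testlet k, and item i nested in testlet k.
    """
    return inv_logit(beta0 + u_participant + v_testlet + w_item)


def bernoulli_loglik(y, p):
    """Log-likelihood of one 0/1 response given its predicted probability."""
    return math.log(p) if y == 1 else math.log(1.0 - p)


if __name__ == "__main__":
    # Hypothetical values: a slightly stronger-than-average participant
    # answering an item from a slightly harder-than-average testlet.
    p = p_correct(beta0=0.8, u_participant=0.5, v_testlet=-0.2, w_item=-0.4)
    print(round(p, 3), round(bernoulli_loglik(1, p), 3))
```

Fitting the model amounts to choosing the fixed effects and random-effect variances that maximize the summed log-likelihood of all observed 0/1 responses, integrated over the random effects.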

Regarding the predictor variables, we constructed latent variables of L1 literacy skills to reduce the potential effects of measurement error in our observed L1 literacy measures (for a similar approach, see Suzuki & Kormos, 2022). Because Word Reading Test 3 showed little variability and a ceiling effect, we excluded it from the factor analyses (see Supplementary Materials for the descriptive statistics of all ZLT-II tasks). We then conducted an exploratory factor analysis with the remaining L1 measures. The Kaiser–Meyer–Olkin value (.774) exceeded the recommended minimum of .60, and Bartlett’s Test of Sphericity was statistically significant (p < .001), supporting the factorability of the correlation matrix. The scree plot showed a clear break after the fourth component (see Supplementary Materials for the scree plot). Four factors with eigenvalues over 1 were therefore extracted (L1 reading speed: eigenvalue = 5.159; Non-word repetition: eigenvalue = 1.675; L1 reading accuracy: eigenvalue = 1.415; L1 naming speed: eigenvalue = 1.214), which together explained 78.85% of the variance (for the factor loadings of the variables, see Supplementary Materials). Based on the results of the factor analysis, we created composite scores using regression factor scores (Tabachnick & Fidell, 2001) for L1 reading speed, non-word repetition, and L1 reading accuracy, and used the arithmetic mean of the two naming speed measures as the fourth composite (hereafter, Naming speed). To ease the interpretation of the GLMM results, all of these scores were inverted before being entered into the subsequent GLMMs.
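The retention rule and the variance figure above rest on simple arithmetic, sketched below in Python under stated assumptions. With standardized variables (a correlation matrix), the total variance equals the number of variables, so the share explained by the retained factors is their eigenvalue sum divided by that number. This section does not state how many L1 measures entered the analysis; the `n_measures = 12` used below is a hypothetical value, chosen only because it reproduces roughly the reported 78.85%, and would need to be checked against the Supplementary Materials.

```python
# Eigenvalues of the four retained factors, as reported in the text.
eigenvalues = {
    "L1 reading speed": 5.159,
    "Non-word repetition": 1.675,
    "L1 reading accuracy": 1.415,
    "L1 naming speed": 1.214,
}


def kaiser_retain(eigs, threshold=1.0):
    """Kaiser criterion: keep factors whose eigenvalue exceeds the threshold."""
    return [name for name, ev in eigs.items() if ev > threshold]


def proportion_explained(eig_values, n_variables):
    """Share of total variance explained, assuming standardized variables
    (so that total variance equals the number of variables)."""
    return sum(eig_values) / n_variables


n_measures = 12  # HYPOTHETICAL: number of L1 measures entering the analysis
retained = kaiser_retain(eigenvalues)
share = proportion_explained([eigenvalues[name] for name in retained], n_measures)
print(retained)
print(f"{share:.2%}")
```

Under this assumption the small gap from the reported 78.85% would be consistent with the eigenvalues having been rounded to three decimals.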

Another predictor variable was the administration mode of the listening comprehension tasks. This variable had two levels (the original condition vs the self-paced condition), which we coded using simple contrast coding (−0.5, 0.5). We also entered the interactions between administration mode and each of the four L1 literacy factors as fixed effects. Alongside these predictors, we included random effects for participants, testlets, and test items, with individual test items nested within their testlet.
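A minimal Python sketch of what the ±0.5 coding implies, assuming the original condition is coded −0.5 and the self-paced condition 0.5 (consistent with the original mode serving as the reference level in the note to Table 2). Because the two codes are one unit apart, the mode coefficient equals the log-odds difference between the conditions and the intercept is the average of the two conditions; the L1 literacy main effects are therefore evaluated at the average of the two modes rather than within a single mode. The intercept value below is hypothetical; −0.021 is the mode estimate reported in the Results.

```python
# Simple (deviation) contrast coding for the two administration modes.
MODE_CODES = {"original": -0.5, "self-paced": 0.5}


def mode_logit(b0, b_mode, mode):
    """Predicted log-odds in one mode, with all L1 predictors at their mean (0)."""
    return b0 + b_mode * MODE_CODES[mode]


b0 = 0.40        # HYPOTHETICAL intercept
b_mode = -0.021  # reported estimate for listening mode

original = mode_logit(b0, b_mode, "original")
self_paced = mode_logit(b0, b_mode, "self-paced")

# The difference between modes recovers b_mode and their average recovers b0
# (up to floating-point rounding).
print(round(self_paced - original, 6), round((self_paced + original) / 2, 6))
```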

Given the confirmatory nature of our research aims (see the “Research question and hypotheses” section), we initially fitted the GLMMs with a maximal random-effects structure to minimize the Type I error rate (Barr, 2013; Barr et al., 2013), including random intercepts for individual participants and for individual items nested in their testlets, as well as random slopes for administration mode across items nested in testlets. However, this maximal model failed to converge, so we removed the random slopes. The final model included only random intercepts for participants and for testlets (texts), with items nested within testlets, and is specified as follows:

Response accuracy ~ Listening mode × (L1 reading speed + L1 reading accuracy + Non-word repetition + Naming speed) + (1 | Participant) + (1 | Listening Testlet/Item)
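Assuming that the × in this formula stands for R’s `*` crossing operator and `/` for nesting (as in lme4’s formula syntax), the specification expands into the terms printed by the Python sketch below. The sketch only performs that bookkeeping; the short variable names are hypothetical labels, not the names used in the authors’ scripts.

```python
def expand_crossing(moderator, covariates):
    """Expand 'moderator * (c1 + c2 + ...)' into main effects plus
    moderator-by-covariate interactions (R formula semantics)."""
    main_effects = [moderator] + list(covariates)
    interactions = [f"{moderator}:{c}" for c in covariates]
    return main_effects + interactions


def expand_nesting(outer, inner):
    """Expand '(1 | outer/inner)' into random intercepts for the outer
    grouping factor and for inner-within-outer."""
    return [f"(1 | {outer})", f"(1 | {outer}:{inner})"]


literacy = ["ReadingSpeed", "ReadingAccuracy", "NonwordRep", "NamingSpeed"]
fixed = expand_crossing("Mode", literacy)
random_terms = ["(1 | Participant)"] + expand_nesting("Testlet", "Item")

print(len(fixed) + 1, "fixed-effect parameters (including the intercept)")
for term in fixed + random_terms:
    print(term)
```

Under this reading, the model estimates ten fixed-effect parameters, four of which are the mode-by-literacy interactions relevant to the interaction hypothesis, plus three random-intercept variances (participant, testlet, and item within testlet).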

Results

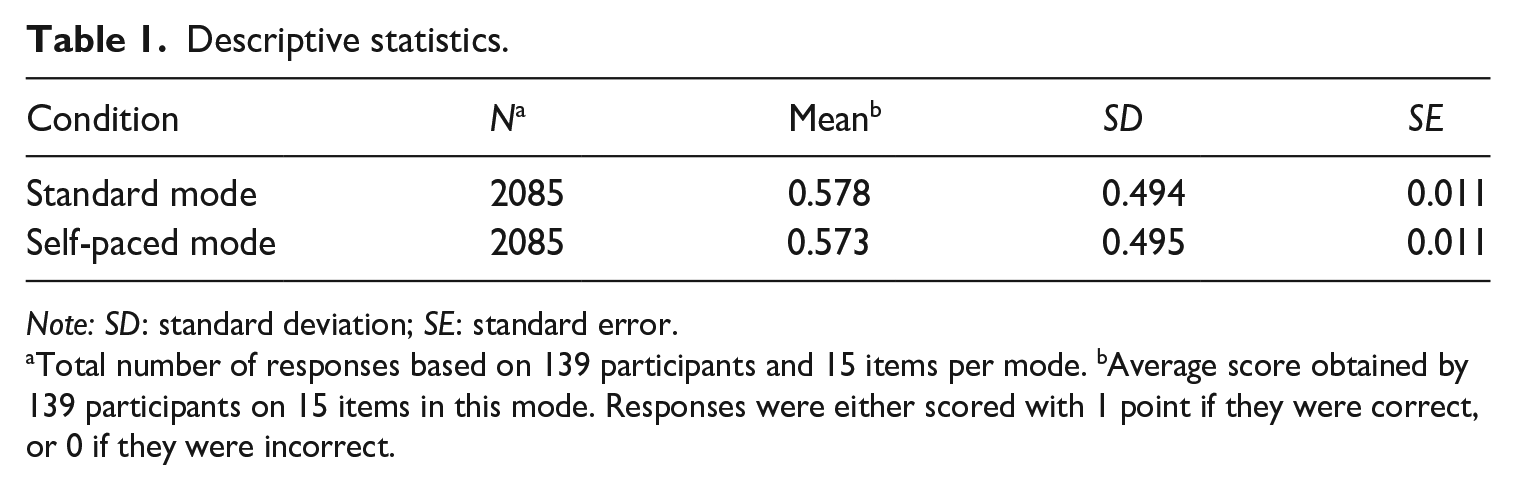

Table 1 summarizes the descriptive statistics for the accuracy rate in the two administration modes. On average, participants scored 57.8% in the standard listening mode and 57.3% in the self-paced mode. Regarding our first hypothesis (i.e., the effect of self-paced administration), these results show that accuracy did not differ between the two listening modes. The GLMM analysis likewise showed no significant difference between the two listening conditions (β = −.021, p = .781). Our first hypothesis, that participants would perform better in the self-paced condition, therefore needs to be rejected.

Table 1. Descriptive statistics.

Note: SD: standard deviation; SE: standard error.

ᵃTotal number of responses, based on 139 participants and 15 items per mode. ᵇAverage score obtained by the 139 participants on the 15 items in this mode. Responses were scored 1 if correct and 0 if incorrect.

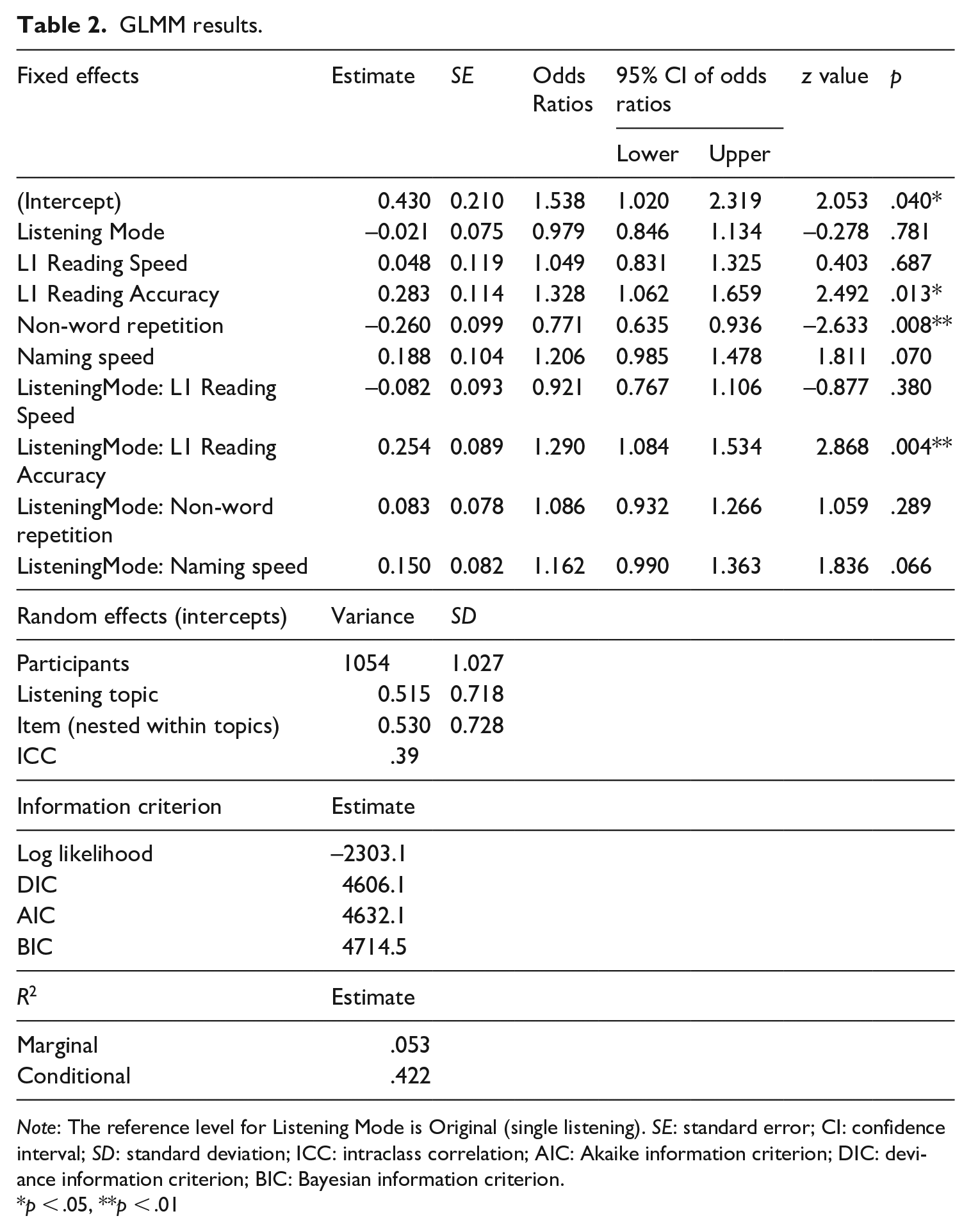

Our second hypothesis concerned the influence of L1 literacy skills on L2 listening performance. The GLMM results (Table 2) indicated that, for participants whose L1 reading accuracy was 1 SD above the group mean, the odds of responding correctly were 1.33 times higher than for participants with an average L1 reading accuracy score (β = .283, p = .013), whereas L1 reading speed did not significantly predict listening performance (β = .048, p = .687). Meanwhile, for participants whose non-word repetition score was 1 SD above the group mean, the odds of responding correctly were 1.30 times (1/.771) lower than for those with the average non-word repetition score (β = −.260, p = .008). The results also suggested, albeit only at a marginal level of statistical significance, that for students whose naming speed was 1 SD above the group mean, the odds of responding correctly might be 1.21 times higher (β = .188, p = .070). These results lend partial support to our second hypothesis that a low L1 literacy profile would be associated with lower L2 listening scores.
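The ratios reported above are odds ratios, obtained by exponentiating each logistic coefficient. The Python sketch below reproduces them from the estimates given in the text and, purely for illustration, applies one of them to a baseline probability. Using the observed mean accuracy of .578 as that baseline is our assumption: the GLMM’s conditional probabilities also depend on the intercept and the random effects, so the resulting figure only illustrates what a change in odds means on the probability scale.

```python
import math

# Fixed-effect estimates (log-odds per 1 SD), as reported in the text.
estimates = {
    "L1 reading accuracy": 0.283,
    "L1 reading speed": 0.048,
    "Non-word repetition": -0.260,
    "Naming speed": 0.188,
}


def odds_ratio(beta):
    """Multiplicative change in the odds of a correct response per 1 SD increase."""
    return math.exp(beta)


def apply_odds_ratio(p0, beta):
    """Probability obtained by multiplying the baseline odds p0/(1-p0) by exp(beta)."""
    odds = p0 / (1.0 - p0) * odds_ratio(beta)
    return odds / (1.0 + odds)


for name, beta in estimates.items():
    print(f"{name}: OR = {odds_ratio(beta):.2f}")

# A negative estimate can be stated as a reciprocal: 1 / exp(-0.260) is about 1.30.
print(f"{1 / odds_ratio(estimates['Non-word repetition']):.2f}")

# Illustrative only: baseline probability .578 (observed mean accuracy, an assumption).
print(f"{apply_odds_ratio(0.578, estimates['L1 reading accuracy']):.3f}")
```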

Table 2. GLMM results.

Note: The reference level for Listening Mode is Original (single listening). SE: standard error; CI: confidence interval; SD: standard deviation; ICC: intraclass correlation; AIC: Akaike information criterion; DIC: deviance information criterion; BIC: Bayesian information criterion.

*p < .05, **p < .01

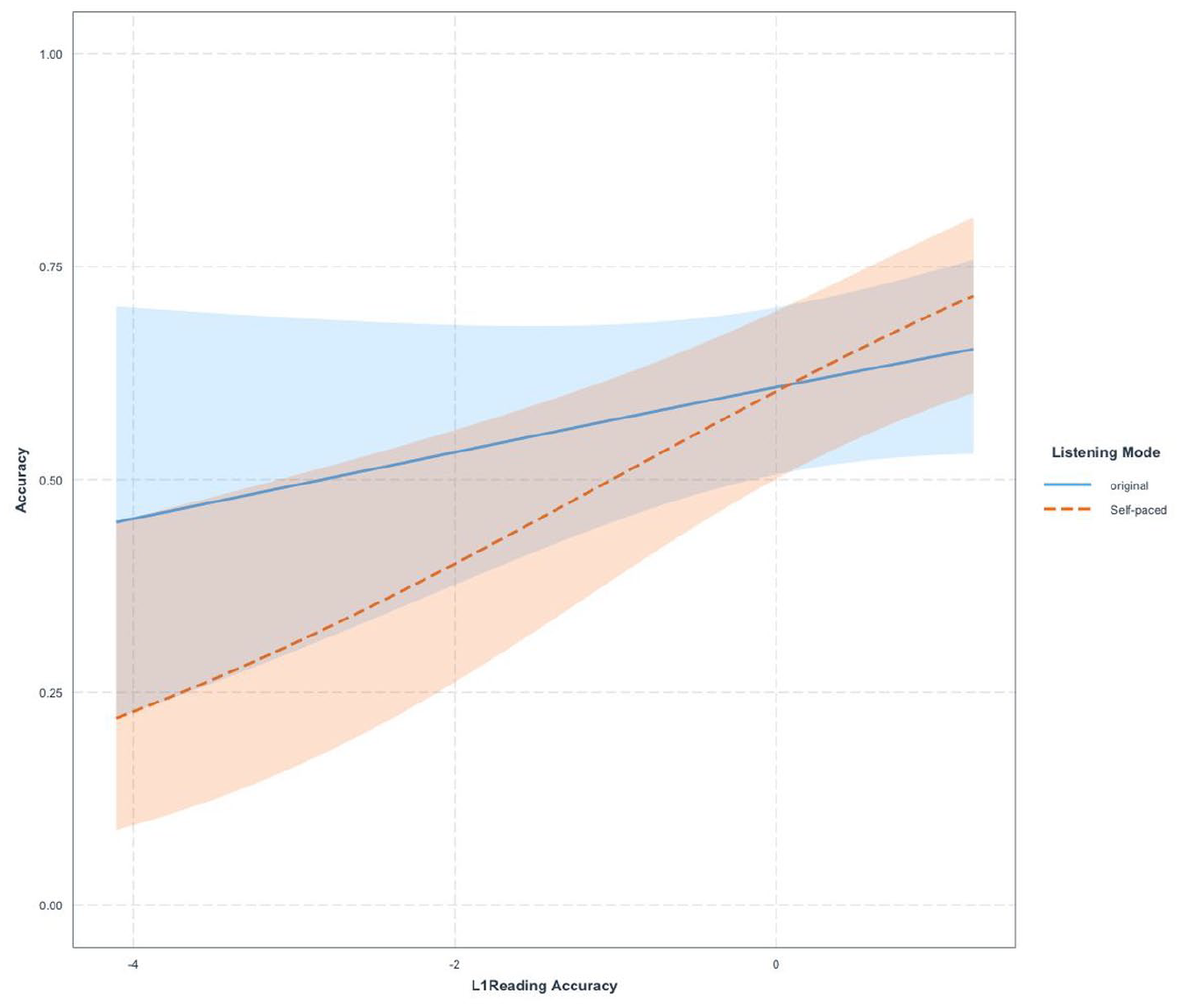

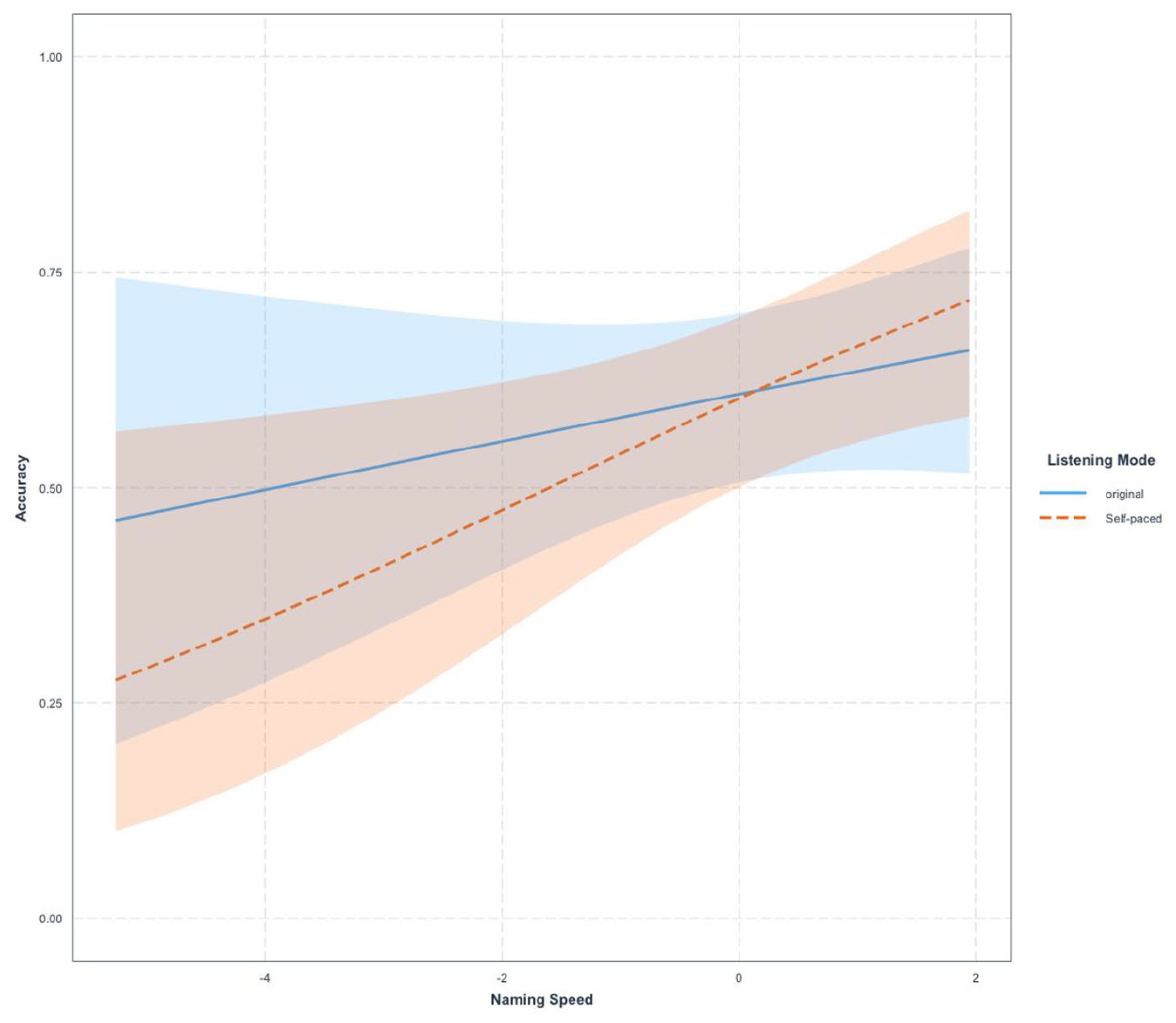

Finally, the GLMM showed a significant interaction between listening mode and L1 reading accuracy (β = .254, p = .004). As visualized in Figure 3, the positive estimate of the interaction term means that students with higher L1 reading accuracy tended to perform better in the self-paced listening mode than in the original mode. The model also indicated a marginally significant interaction between listening mode and naming speed (β = .150, p = .066): as with L1 reading accuracy, students with faster naming speed tended to perform better in the self-paced mode than in the original mode (see Figure 4). These findings are in line with the hypothesis that there would be a significant interaction between low-level L1 literacy skills and listening mode. Contrary to our predictions, however, it was participants with higher L1 literacy skills who performed better in the self-paced condition than in the standard administration mode.
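To make the interaction concrete, the Python sketch below computes the simple effect of listening mode (self-paced minus original, in log-odds) at different levels of L1 reading accuracy, using the two estimates reported in the text. It assumes the ±0.5 mode coding described in the Data analysis section, holds the other predictors at their means, and ignores the random effects and the uncertainty of the estimates, so it describes the fitted pattern rather than testing it.

```python
import math

B_MODE = -0.021            # listening mode (self-paced minus original), reported
B_MODE_X_ACCURACY = 0.254  # mode x L1 reading accuracy interaction, reported


def mode_effect(accuracy_z):
    """Log-odds difference between self-paced and original modes for a
    test-taker whose L1 reading accuracy is accuracy_z SDs from the mean."""
    return B_MODE + B_MODE_X_ACCURACY * accuracy_z


for z in (-1.0, 0.0, 1.0):
    diff = mode_effect(z)
    print(f"accuracy {z:+.0f} SD: log-odds difference {diff:+.3f}, "
          f"odds ratio {math.exp(diff):.2f}")
```

Under these assumptions, the sign of the mode effect flips across the range of reading accuracy: self-pacing is associated with slightly higher odds of success for stronger L1 readers and lower odds for weaker ones, which matches the pattern described above.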

Figure 3. Interaction between listening mode and L1 reading accuracy.

Figure 4. Interaction between listening mode and naming speed.

Discussion

The purpose of this study was to examine the effect of self-paced listening on the test scores of young learners of English and to investigate whether providing a self-paced listening option might support test-takers with reading-related SpLDs. The study is timely because self-paced listening has become increasingly authentic with the widespread integration of media players in digital and online learning environments. Moreover, the number of language learners with SpLDs is growing, and this study addresses the pressing need to examine various accommodation options to ensure that fair test procedures are in place for an increasingly diverse population of test-takers.

Based on the results of our study, our first hypothesis, that self-paced administration would improve performance, needs to be rejected. There are several reasons why the opportunity to pause and replay the recording during the second listening might not have resulted in score gains. First, because students’ general performance in the test was relatively low, it is possible that being able to listen to the recording several times did not help participants to use meta-cognitive listening strategies such as planning, focussing attention, monitoring comprehension, elaborating and reorganizing the information gained, and evaluating their answers to the test questions (see Goh & Vandergrift, 2021). Although previous studies have not established a clear link between pausing frequency and listening ability (e.g., Goodwin, 2017), it is possible that higher proficiency learners did not need to stop or rewind the recording, whereas lower proficiency learners were not helped by this option because they lacked the relevant linguistic knowledge. The mean listening item scores of .58 in the traditional format and .57 in the self-paced format might also suggest that our participants had not reached the proficiency threshold needed for the effective use of meta-cognitive listening strategies (see Wang & Treffers-Daller, 2017). Second, as in McBride’s (2011) study, our participants might not have used the self-pacing options frequently or exploited the opportunities they provided for self-regulated listening.² Because teacher- and administrator-controlled listening tasks and tests tend to be used in Austrian classroom and exam contexts, students might need explicit training in exploiting the benefits of navigational freedom to enhance their meta-cognitive strategy use (see Lawless & Brown, 1997; Roussel, 2011).

Our second hypothesis, that lower L1 literacy skills would be associated with lower listening comprehension scores, was partially supported. We found significant effects of L1 reading accuracy and non-word repetition scores on L2 listening, but no significant link between L2 listening and either L1 reading speed or L1 naming speed. Reduced phonological short-term memory capacity (the construct assessed by our non-word repetition test) and low word-decoding accuracy are key predictors of L1 reading difficulties. Therefore, in line with the findings of previous studies (e.g., Crombie, 1997; Geva & Massey-Garrison, 2013; Kormos et al., 2019), it can be hypothesized that our participants with potential reading-related SpLDs also experienced challenges in comprehending orally presented information. Several influential models of L1 reading comprehension, such as the Simple View of Reading (Gough & Tunmer, 1986) and the Modified Simple View of Reading (Tunmer & Chapman, 2012), postulate a strong link between oral language comprehension and written text comprehension in L1. Our results lend support to the assumptions of these models in L2 and provide some initial evidence for the relevance to the L2 domain of a more recent model of L1 reading comprehension, Y.-S. G. Kim’s (2020) Direct and Indirect Effects Model of Reading (DIER). This model hypothesizes that word decoding, oral text comprehension, and reading fluency contribute both directly and indirectly to written text comprehension, and that WM capacity directly predicts both oral text comprehension and word reading.

The importance of verbal WM capacity for L2 listening ability has been examined in a number of studies, but the results have been somewhat contradictory. Several studies that, like ours, used a measure of verbal WM capacity found a link between L2 listening skills and verbal WM (e.g., Andringa et al., 2012; Brunfaut & Révész, 2015; Vulchanova et al., 2014). In Kormos and Sáfár’s (2008) research, however, this relationship was not statistically significant, and in Wallace’s (2022) recent study using the TOEFL Junior Standard test with Japanese high school students, verbal and visual WM measures were not significant predictors of L2 listening scores either. One reason for these differences might be the participants’ level of proficiency, as WM storage and processing functions are relevant when L2 learners engage in conscious and effortful processing (Serafini & Sanz, 2016). Another factor may be the cognitive load of the listening task. In our study, participants had to process the input text, pay selective attention to the information requested by the stem of the test items, and keep the possible answer options active in their phonological short-term memory. This might have increased the intrinsic cognitive load (see Sweller et al., 2019) and could explain the lower scores of participants with a smaller capacity for the temporary storage of verbal information. Although the listening passages of the TOEFL Junior Standard test are neither long nor particularly dense in detail, the input texts are all informational, and the items primarily focus on understanding the main idea and detailed information. Therefore, test-takers with lower phonological short-term memory capacity might find listening assessment that focusses on informational detail challenging (for a recent study on the role of WM in processing dense information in L2 listening, see M. Kim et al., 2022), particularly when the task involves answering multiple-choice questions.

An interesting finding of our study was that the speed measures of L1 skills were not related to L2 listening scores, even though rapid automatized naming and text reading fluency also contribute to written text comprehension difficulties and have been associated with oral text comprehension (e.g., Y.-S. G. Kim’s [2020] DIER model). A possible reason for this finding is that the listening test was administered under very low time pressure. Participants could preview, read, and answer the test items at their own speed, and in the self-pacing condition, no time limit was set for stopping and replaying the recording. This suggests that the generous timing of the test did not disadvantage slow readers, and that reading and processing speed was in all likelihood not an intervening variable in the assessment.

In contrast to reading speed, L1 reading accuracy played a significant role both in overall listening comprehension outcomes and in the self-paced condition. As mentioned earlier, the test format of the TOEFL Junior Standard test requires a certain amount of L2 reading, and test-takers who are less accurate readers in their L1 might have experienced challenges in reading and understanding the test items. Contrary to our hypothesis that self-paced listening would support the comprehension processes of students with smaller WM capacity and L1 literacy-related challenges, the self-paced condition in fact seems to have put these test-takers at a disadvantage. Given that L1 reading accuracy moderated the effect of listening mode, we might hypothesize that, because test-takers in the self-paced condition did not see the items while they were given the option to control the recording, they might not have been able to revise and refine their understanding of the test items during the second listening. As phonological short-term memory capacity did not moderate the effect of listening mode, we can assume that the self-paced condition did not impose additional intrinsic cognitive load, but that its drawback was that the test items were not visible. We were fully aware of this potential limitation: not displaying the items was a deliberate methodological decision, taken to investigate the potential added benefit of self-paced listening alone and to disentangle its effect from any beneficial effects that additional reading time for the items might have had. Another possible explanation for the poorer listening performance of participants with lower L1 reading accuracy relates to Field’s (2019) hypothesis that question preview might allow test-takers to use lexical matching strategies, answering items correctly by focussing on lexical items in the recording that overlap with those in the stem and options. Because the test items in the self-paced condition were displayed only during the first listening, test-takers with low L1 reading accuracy might not have been able to rely on this strategy.

Conclusion

This study has explored a particular implementation of self-pacing as a potential accommodation for learners with L1 profiles indicative of reading-related SpLDs, as well as the role of low-level L1 skills in L2 listening comprehension. By comparing the performance of learners with different L1 literacy skills in the standard single-play and a self-paced administration mode of the listening section of the TOEFL Junior Standard test, we investigated the impact of this special arrangement on the test scores of young L2 learners. Mixed-effects modelling revealed that the self-paced administration mode did not significantly improve our participants’ performance. The lack of a difference between scores might have been due to the relatively low proficiency of the young learner group investigated, and might suggest that there is a proficiency threshold above which self-pacing can support young learners’ use of efficient meta-cognitive listening strategies (cf. Wang & Treffers-Daller, 2017). Future research should therefore be conducted with higher proficiency test-takers to better understand the potential benefits of self-pacing. It is also possible that young learners have lower levels of meta-cognitive awareness and might need explicit training and more practice in regulating their strategic listening behaviour before they can improve their performance with the help of self-pacing (cf. Wang & Treffers-Daller, 2017). The score equivalence might also suggest that test difficulty does not change when the self-pacing option is offered. Future research is needed to examine other aspects of test validity, such as cognitive validity, by asking test-takers about the cognitive processes they engage in during self-pacing and by observing their behaviour through direct and indirect process-tracing methods (e.g., eye-tracking, screen capturing, keystroke logging, and concurrent think-aloud or stimulated recall). Future studies should also examine the impact of self-pacing on test-taking anxiety, as the stress induced by the listening task can lead to construct-irrelevant variance (Holzknecht, 2019).

Our research also showed that lower L1 reading accuracy is associated with lower listening comprehension scores, which lends support to previous studies highlighting that L2 learners with reading-related learning difficulties might need additional assistance in developing their L2 oral comprehension skills (e.g., Crombie, 1997; Geva & Massey-Garrison, 2013; Kormos et al., 2019). From a theoretical perspective, our study also provides some initial evidence for the applicability of Y.-S. G. Kim’s (2020) DIER model to L2 learning. However, future research that includes a wider range of L1 predictors as well as L1 and L2 written comprehension measures would be needed to examine the direct and indirect predictors of text comprehension in more depth. The finding that young L2 learners with phonological short-term memory limitations perform worse on the listening test should be carefully considered in test design. Listening assessments should minimize the need to keep large amounts of verbal information active in WM; otherwise, test-takers with lower phonological short-term memory capacity will be systematically disadvantaged. Furthermore, as L1 reading accuracy was also associated with listening performance, the multi-modal presentation of test items might be considered to assist those who might have reading difficulties.

Most crucially, our study showed that learners with lower L1 literacy skills did not benefit more from self-paced listening than learners with higher L1 literacy skills. In fact, this particular implementation of self-paced listening, in which candidates could pause and replay the recording after the first listening but could not see the associated test items while doing so, appeared to disadvantage test-takers with smaller WM capacity and L1 literacy-related challenges. Although self-paced listening was intended to support, and is potentially capable of supporting, the comprehension processes of such students, it was not found to be a suitable special arrangement for increasing the L2 listening scores of young learners with lower L1 literacy skills indicative of reading-related learning difficulties. As we have explained, one reason for this unexpected finding might be that test-takers could not see the test items while controlling the recording in the self-paced condition. Future research should therefore examine whether displaying the test items alongside the self-pacing controls might support young learners with SpLDs. It is also important to highlight that candidates with disabilities need training in assistive tools to be able to exploit their potential. Hence, L2 learners with SpLDs, like their peers without learning difficulties, would benefit from classroom activities that raise awareness of meta-cognitive listening strategies and enhance comprehension monitoring.

Supplemental Material

sj-docx-1-ltj-10.1177_02655322221149642 – Supplemental material for Investigating the impact of self-pacing on the L2 listening performance of young learner candidates with differing L1 literacy skills

Supplemental material, sj-docx-1-ltj-10.1177_02655322221149642 for Investigating the impact of self-pacing on the L2 listening performance of young learner candidates with differing L1 literacy skills by Kathrin Eberharter, Judit Kormos, Elisa Guggenbichler, Viktoria S. Ebner, Shungo Suzuki, Doris Moser-Frötscher, Eva Konrad and Benjamin Kremmel in Language Testing

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Educational Testing Service (ETS) under a TOEFL® Young Students Series Research Program grant. ETS does not discount or endorse the methodology, results, implications, or opinions presented by the researchers.

Notes

References
