Abstract

Studies from various disciplines have reported that spatial location of options in relation to processing order impacts the ultimate choice of the option. A large number of studies have found a primacy effect, that is, the tendency to prefer the first option. In this paper we report on evidence that position of the key in four-option multiple-choice (MC) listening test items may affect item difficulty and thereby potentially introduce construct-irrelevant variance.

Two sets of analyses were undertaken. With Study 1 we explored 30 test takers’ processing via eye-tracking on listening items from the Aptis Test. An unexpected finding concerned the amount of processing undertaken on different response options on the MC questions, given their order. Based on this, in Study 2 we looked at the direct effect of key position on item difficulty in a sample of 200 live Aptis items and around 6000 test takers per item.

The results suggest that the spatial location of the key in MC listening tests affects the amount of processing it receives and the item’s difficulty. Given the widespread use of MC tasks in language assessments, these findings seem crucial, particularly for tests that randomize response order. Candidates who by chance have many keys in last position might be significantly disadvantaged.

Keywords

Assessing listening is a complex endeavour in which a multitude of factors can affect task difficulty. These factors can be related both to the listeners themselves and to the listening assessment task (Brunfaut, 2016). Listener-related factors include linguistic characteristics such as language proficiency (Vandergrift, 2006), lexical knowledge (Andriga et al., 2006), background knowledge (Macaro, Vanderplank, & Grahams, 2005), knowledge and use of listening strategies (Vandergrift & Goh, 2012), working memory capacity (Kormos & Sáfár, 2008; Brunfaut & Révész, 2015), or affective dimensions such as anxiety (MacIntyre & Gardner, 1991; Elkhafaifi, 2005) and motivation (Vandergrift, 2005). Task-related factors, on the other hand, encompass characteristics of the listening text including, but not limited to, linguistic complexity (Révész & Brunfaut, 2013) or speed of delivery (Rosenhouse, Haik, & Kishon-Rabin, 2006), and features of the assessment task such as the number of plays (Field, 2015; Holzknecht, 2019) or note-taking (Carrell, 2007). Another crucial task-related factor that can impact task difficulty is response format.

One of the most common response formats in language assessment, and in educational assessment more generally (Butler, 2018), is that of multiple-choice (MC) items. MC items usually consist of a question (or stem) and a number of response options, out of which test takers need to choose the correct answer. Generally, only one response option is correct (often referred to as the “key”), with the other options serving as distractors. MC items are also popular in assessing listening (Green, 2017), and they tend to be easier in terms of item difficulty compared to open-ended formats (In’nami & Koizumi, 2009). However, research on the particular idiosyncrasies of MC items in listening assessment has been sparse.

With the present study, we attempted to fill this gap by investigating response order effects in four-option MC listening test items. We were interested in whether the position of the key affects the difficulty of the item. In particular, we set out to do the following: (1) explore the effect of the location of response options in MC listening test items on the probability of that response being chosen; (2) attempt to explain the mechanisms behind this effect at a person level, if it is found to exist; and (3) draw conclusions and make recommendations about best practice in the construction of listening items to minimize bias in test score interpretation. Before outlining the study, we review relevant literature on this topic according to the following broad categories: ordering effects in general, ordering effects in MC testing, and ordering effects in MC language testing.

Ordering effects

Researchers from diverse disciplines, such as psychology, animal behaviour, travel research, or marketing, have found that when people (and other animals) are presented with several options from which to choose, the spatial location of the individual options in relation to processing order can have an impact on the final choice. A large number of studies in this strand of research have found evidence for a

However, studies have also found that positional preference in choosing between individual options depends on the type of judgement involved. Christenfeld (1995) showed that when presented with a number of objectively identical options, people generally prefer the middle options in grocery shopping, in toilet selection (for men), and in choosing between four identical symbols in a row, but the last option when deciding on a route through a maze or when planning a route on a map. Other studies have found that performers in the Eurovision Song Contest and in international figure skating contests are judged more favourably when they appear later (Bruine de Bruin, 2005), that travellers booking hotels online prefer the top and bottom listings (Ert & Fleischer, 2016), or that food choices placed at the top and at the bottom of menus are more popular than choices in the middle (Dayan & Bar-Hillel, 2011).

Carney and Banaji (2012) argued that these different results could be explained by the degree of automaticity of the judgements involved. Based on findings of their own and of previous research, they proposed that decisions involving automatic processing are prone to a primacy effect, but “when controlled processing is possible, other influences can (as they rationally should) override the automatic reliance on the first” (Carney & Banaji, 2012, p. 4).

More closely related to language testing, Winke and Lim (2015) provided strong evidence for primacy effects in the use of a rating scale for grading students’ writing performances. In their eye-tracking study, raters displayed a clear left-to-right bias in that they would focus longer on the criteria displayed towards the left. Ballard (2017) partly replicated Winke and Lim’s study, and her findings confirm this primacy effect. In addition, Ballard found that the raters would also consider the criteria on the left more important, and if they skipped the reading of a criterion altogether, the skipping was much more likely to happen when the criterion was placed on the right rather than on the left.

Ordering effects in MC testing

Ordering effects have also been investigated in relation to key position in MC testing, as they could potentially introduce construct-irrelevant variance into interpretation of test scores. If a systematic response order bias were to be found in MC testing, it could mean that individual test takers might be unfairly disadvantaged to a certain degree. For example, if the above-mentioned primacy effect also played a role in MC tests, questions with the key in first position might be answered correctly more often than questions with the key in last position. Consequently, when response options are randomized (as is common practice among many testing boards), test versions with a large number of keys in last position might lead to a higher number of incorrect answers. Studies in this area have been conducted mostly in relation to testing knowledge domains such as psychology, chemistry, or trivia (among others) and have revealed mixed results, as we discuss in the following sections.

Studies that found no effect of response position on item difficulty

Whether option ordering matters has been investigated off and on for a long time. For example, in 1963, Marcus randomly assigned 434 psychology students to one of four groups. Each group (of 104 to 113 students) took one version of a 100-item four-option MC psychology achievement test. The key position was randomly distributed, and it was ensured that the key for each item appeared in different positions across the four test versions. Marcus reported that in terms of the percentage of observed correct responses, no statistically significant bias in relation to key position emerged.

Fast-forward more than 40 years, and similar work revealed similar results. Taylor (2005) had 60 psychology students take three versions of a 30-item, four-option MC psychology achievement test, within which key distribution was different for each version. In version 1, keys were distributed equally across the four positions. In version 2, 40% of keys were in the first position, 40% in the second position, and 10% each in the third and fourth positions. In version 3, this was inverted (10% each in the first and second positions, and 40% each in the third and fourth positions). Taylor found no effect on item difficulty in relation to key position and suggested that balancing the key might not be as important as widely believed.

These two studies, however, display a number of notable limitations. The first concerns the sample size. With no more than 113 candidates per group in Marcus (1963) and only 60 candidates in Taylor (2005), potential practically significant effects might not have emerged because the statistical power of the experiments was low. In addition, the studies were primarily cross-sectional, with each group split up for the comparative analyses (one group for each test version). The power to detect variables or factors with potentially small, but possibly important, effects can be improved by increasing the number of observations. This can be done by (a) increasing the sample size, or (b) increasing the number of observations through a within-subjects design (having all learners participate in all conditions). Finally, neither of the studies was conducted within the context of the assessment of L2 learning, which makes it difficult to interpret the findings for language testing purposes.
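The link between sample size and power can be illustrated with a rough calculation. The sketch below uses a normal approximation for a two-sided test of two independent proportions; the facility values (60% versus 57% correct) and the group size of 3000 are hypothetical figures chosen for illustration and are not taken from either study.

```python
from math import sqrt
from statistics import NormalDist


def approx_power_two_proportions(p1, p2, n_per_group, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two independent proportions."""
    norm = NormalDist()
    z_crit = norm.inv_cdf(1 - alpha / 2)
    # Standard error of the difference between the two sample proportions
    se = sqrt(p1 * (1 - p1) / n_per_group + p2 * (1 - p2) / n_per_group)
    # Normal approximation; the negligible rejection probability in the
    # opposite tail is ignored.
    return norm.cdf(abs(p1 - p2) / se - z_crit)


# Hypothetical scenario: the key position lowers facility from 60% to 57%.
for n in (60, 113, 3000):
    print(n, round(approx_power_two_proportions(0.60, 0.57, n), 2))
```

Under these assumed values, power stays below 10% with 113 candidates per group and only rises above 60% with several thousand candidates per group, which is why a small positional effect could easily go undetected in samples of this size.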

Studies that found an effect of response position on item difficulty

Other research reported an effect of response order on item difficulty; however, none of these studies were conducted within the context of L2 language testing. Cizek (1994), for example, investigated the effect of correct response placement in an experimental study. During a medical certification examination with 200 items, the participants (380 in group 1 and 379 in group 2), who were all “graduates of medical specialty residency training programs” (Cizek, 1994, p. 11), were given two different answer forms for a 20-item MC segment. Each item had to be answered based on a projected visual stimulus and respondents could select from 30 possible answers. Although the sequence of items and length of display were kept constant, the sequence of responses on the answer form was scrambled by the researcher to create two forms. Despite relatively small numbers in terms of items and group sizes, four out of 20 items displayed statistically significant differences in difficulty. However, Cizek was not able to detect any predictable patterns in item difficulty differences and concluded that no linear relationship between correct response placement and item difficulty could be established based on this data set.

In another study researching a more conventional MC format, Attali and Bar-Hillel (2003) found that test takers were more likely to choose middle positions when guessing. They looked at the performances of about 4000 candidates taking an Israeli university entrance admissions test measuring various scholastic abilities. Their data consisted of 161 MC items, each taken by at least 220 candidates, divided into two groups. One group received the original test (with the response options in their original positions 1, 2, 3, and 4), whereas the other group received the same test with a different response order for each item (2, 1, 4, and 3 instead of 1, 2, 3, and 4). The authors focused on wrong answers, assuming that these would be guesses, and found that, on average, wrong answers in middle positions were chosen 3% more often than wrong answers in extreme positions. In addition, when the key was placed in either of the two extreme positions, the number of correct responses decreased by 3% and item discrimination (biserial) increased by 0.05 points. This effect was bigger on more difficult items. Analyses of live-test data on more than 4500 items and several thousand candidates confirmed the results. The authors concluded that MC tests with many keys in middle positions are slightly easier and less discriminating than tests with many keys in the two extreme positions. However, the authors did not look at individual response positions, nor did they specify which types of items are mostly affected by middle bias.

Contrary to the findings by Attali and Bar-Hillel, a number of studies reported an effect in line with the primacy effect discussed above; that is, test takers prefer earlier options to later ones. Clark (1956), for example, inferred that in five-option MC tests, about 10% of test takers did not read the last two responses. He analysed several thousand candidates’ wrong answers on a scholastic aptitude test, a psychology test, and a mental ability aptitude test, controlling for response order, and found that, throughout these tests, the last two responses were chosen 10% less often as a wrong answer than the first three.

Similarly, Fagley (1987) reported a significant positional bias towards earlier responses in four-option MC tests for 10% of test takers. In her investigation, 60 candidates took a 32-item test on television trivia and a 28-item test on learning skills. Fagley found a statistically significant bias for early responses for six candidates in terms of chosen wrong answers. No significant bias was detected for the sample as a whole. This, however, might again be related to the relatively small sample size of the study.

Tellinghuisen and Sulikowski (2008) also reported a primacy effect in their investigation on the effect of response order in a 38-item four-option MC chemistry test. They analysed test scores of candidates who took the same test twice, once in August (697 students) and once in December (half of the students). On both occasions, two versions of the test were administered, differing in item order and response order. When comparing the results of the two administrations, the authors found that for the 12 items where the difference in correct answers between the two occasions exceeded 6%, 10 were items where the key was in an earlier position (i.e., these 10 items were significantly easier when the key appeared earlier).

Ordering effects in MC language testing

To our knowledge, only two investigations have looked at response order bias in MC tests in the field of language testing. In an unpublished study employing an experimental research design (i.e., response position was experimentally manipulated creating different versions of the test), Sonnleitner, Guill, and Hohensinn (2016) found a significant first position bias for a four-option MC vocabulary test taken by 10-year-olds. For this test and population, the same items (with the same distractors) were answered correctly significantly more often with the key in the first position as compared to the fourth position. However, the authors did not find significant effects for an MC reading comprehension test taken by the same students, nor for reading comprehension tests taken by students aged 14 years or older. In another study, Hohensinn and Baghaei (2017) looked at response order effects in the context of the Iranian National University Entrance Test for English studies: a four-option MC test including items on grammar, vocabulary, and reading comprehension. Hohensinn and Baghaei showed that items were answered correctly slightly less often as the key moved to later positions. However, they concluded that this effect was small and that random distribution of answer options is a valid practice (Hohensinn & Baghaei, 2017, p. 107).

Potential causes of primacy in MC listening tests

We were not able to find studies that have examined primacy effects in the selection of responses to listening test items. This is surprising, as it could be argued that listening tests might be more prone to such an effect than reading, vocabulary, or grammar tests, or tests of knowledge domains. Whereas most of the support for a primacy effect in the literature reviewed above comes down to preference for certain positions when given a range of choices, in listening tests the preference for an earlier option could be further exacerbated by cognitive demands. This is because listening effort can be linked to attentional capacity (Strauss & Francis, 2017). In listening tests, candidates not only have to engage in multi-modal processing – listening to the text while simultaneously reading the questions and answers, as well as matching potential answers to the questions – but also pay attention to the specific characteristics of the text, such as phonology, accents, prosodic features, speech rate, or hesitations (Buck, 2001). In addition, for some listening tests the audio files are played only once, without the chance to hear them again, which puts a further constraint on candidates (Field, 2015; Holzknecht, 2019). For example, in the current versions of widely used high-stakes listening tests such as TOEFL, IELTS, TOEIC, PTE Academic, or GEPT, all listening texts are played only once.

Thus, listening test takers might be more reliant on the order of the responses than reading test takers when deciding on answers to MC items, as they have less processing capacity available to carefully read all of the responses. Such an effect would likely be aggravated for lower language proficiency test takers, as they would take more time to read the options than higher proficiency test takers. As shown by Winke and Lim (2014), less proficient (and more anxious) candidates take significantly longer to process answer options than more proficient (and less anxious) candidates. For these reasons, with the time pressures of many listening comprehension tests, language learners may not be able to read properly the options presented in later positions. It is, therefore, reasonable to assume that in MC listening tests, responses presented higher up on the screen (for computerized tests) or on the test paper (for paper-and-pencil tests) might be more easily accessible to test takers and item difficulty could be influenced more strongly by such an effect than in reading tests or tests of knowledge domains.

The present study

If primacy does play a role in MC language testing, as some of the findings presented above seem to suggest, language test developers would need to rethink their practices to avoid introducing test method-related construct-irrelevant variance into their scores. Given the inconclusive findings on response order bias in relation to MC testing of knowledge domains, the limited number of studies addressing this issue in regard to language testing, as well as the lack of research in relation to listening assessment, it seems prudent to investigate this further. The current paper attempts to shed light on this by looking at response order bias in an MC listening test using two novel methods in this line of research: eye-tracking and linear mixed effects modelling.

The paper reports on two studies. The first study (Holzknecht et al., 2017) looked at cognitive processing in listening items on the Aptis General Test using eye-tracking and stimulated recall. The study did not focus on response order. In fact, we used it as a control variable, but we found a strong and unexpected effect of order in the eye-tracking data. In the current paper, we are recasting response order as the main variable under investigation. The second study, which led on from the first, looked at the direct effect of response order on item difficulty on 200 live Aptis listening items.

Methods

Regression models

Both studies presented in this paper use multiple regression models to respond to the goals of the research. For a non-technical yet comprehensive explanation of these kinds of models see, for example, Field (2013). Study 2 uses a standard multiple regression model, whereas Study 1 uses a slightly more complex linear mixed effects multiple regression model. However, fundamentally these two models are very similar in that they allow us to examine the association between one outcome variable (i.e., the dependent variable in which we are particularly interested) and one or more explanatory variables which are used to explain the observed values of the dependent variable.
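In general notation (the symbols below are introduced here for illustration and are not taken from the original analyses), a multiple regression model with one outcome variable and k explanatory variables can be written as:

```latex
y_i = \beta_0 + \beta_1 x_{1i} + \beta_2 x_{2i} + \dots + \beta_k x_{ki} + \varepsilon_i,
\qquad \varepsilon_i \sim \mathcal{N}(0, \sigma^2)
```

Here $y_i$ is the outcome for observation $i$, each $\beta_j$ describes the association between explanatory variable $x_j$ and the outcome while the other explanatory variables are held constant, and $\varepsilon_i$ is the residual.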

Whereas correlation coefficients only allow us to examine the relationship between two variables, multiple regression looks at the relationships between multiple variables simultaneously in order to investigate one variable of interest. This is particularly useful when we want to control for (i.e., statistically take into consideration) the effect of specific variables. For example, we may see a correlation between

Study 1: Eye-tracking study of correct response location

Participants

A total of 30 participants (14 male, 16 female) took part in this study. The participants were all native speakers of German and were aged between 20 and 61 years, with a mean age of 28.5 years. All participants completed three Aptis General components (grammar and vocabulary, listening, and reading) and attained high average scores out of a maximum of 50 points: grammar and vocabulary (

Materials

A retired Aptis General listening module consisting of 25 MC items was used in the data collection. All 25 MC items had a question, a stem, and four options from which to choose, with one correct option and three distractors for each item. The test included several items from four CEFR levels: A1 (

The stimuli were created as HTML files using Verdana (font size 32px/24pt) on a 23-inch monitor (1920 × 1080) and then integrated into the Tobii Studio eye-tracking software. The original layout of the Aptis Test was slightly altered in that the response options were moved further apart by including more blank space between the options (see Figure 1). This step helped improve data quality as areas of interest could be specified more clearly. The eye-tracking was conducted using a Tobii TX300 (300Hz sampling rate, accuracy 0.4°). All participants sat approximately 63cm from the monitor and the distance from the screen was monitored throughout the experiment in the “Track Status” window of Tobii Studio (see also the detailed description of the procedure below). The experiment consisted of eight sets of three-to-four items each, with a total of 25 items for each candidate. None of the items required scrolling. The Tobii I-VT filter was used, with a velocity threshold of 30 degrees per second, a window length of 20ms, and a minimum fixation duration of 60ms (see Olsen, 2012 for the rationale of these values for the collection of reading data). Velocity threshold (VT) filters are considered particularly suitable for the analysis of reading data from high-speed eye-trackers (Holmqvist et al., 2011) such as the one used in this experiment.
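To illustrate the general idea behind velocity-threshold fixation detection, the following is a deliberately simplified sketch. It is not the Tobii I-VT implementation: it computes velocity between consecutive samples rather than over a 20ms window, and it omits steps such as gap fill-in, noise reduction, and merging of adjacent fixations. The function name, the input format, and the sample data are all assumptions made for this example.

```python
from math import hypot


def ivt_fixations(samples, velocity_threshold=30.0, min_fixation_ms=60.0):
    """Simplified velocity-threshold (I-VT) fixation detection.

    samples: list of (time_ms, x_deg, y_deg) gaze positions in degrees of
    visual angle, sorted by strictly increasing time.
    Returns a list of (start_ms, end_ms) fixations.
    """
    fixations = []
    start = end = None
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        # Angular velocity between consecutive samples, in degrees per second
        velocity = hypot(x1 - x0, y1 - y0) / (t1 - t0) * 1000.0
        if velocity < velocity_threshold:
            # Slow movement: start or extend the current fixation
            if start is None:
                start = t0
            end = t1
        else:
            # Fast movement (saccade): close the current fixation, if any,
            # and keep it only if it is long enough
            if start is not None and end - start >= min_fixation_ms:
                fixations.append((start, end))
            start = end = None
    if start is not None and end - start >= min_fixation_ms:
        fixations.append((start, end))
    return fixations


# Hypothetical 300Hz recording: 30 stable samples, a jump of 5 degrees,
# then 10 stable samples (too short to count as a fixation).
interval_ms = 1000.0 / 300.0
samples = [(i * interval_ms, 0.0, 0.0) for i in range(30)]
samples += [(i * interval_ms, 5.0, 0.0) for i in range(30, 40)]
fixations = ivt_fixations(samples)
print(len(fixations))
```

In this example only the first stable period is retained, because the second lasts about 30ms and therefore falls below the 60ms minimum fixation duration.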

Figure 1. Example stimulus item.

Procedure

After obtaining ethical approval from the ethics committee at the university of the researchers, participant recruitment commenced. All participants received written information outlining the study and signed consent forms. Before the experiment, participants were shown a sample MC item and were instructed on how to start the sound file and respond to items. Participants were also told that they could decide themselves whether or not they wanted to listen to a sound file once or twice. This is consistent with the operational Aptis General Test. Participants were reminded to answer the items as if they were taking an actual language test. The instructions were provided in the participants’ L1 and they were encouraged to ask questions if something seemed unclear.

After these initial instructions, each participant also received instructions and explanations concerning the eye-tracking. This included adjusting the participant’s seating position and a short demonstration on how moving the body or head impacts eye-tracking quality. In a last step before commencing data collection, participants were instructed to place the index finger of their left hand on the ESC key to be able to move on from one item to the next, and their right hand onto the mouse. These instructions were adapted for left-handed participants and were meant to help all participants navigate the test items without looking off-screen.

The experiment started with a standard five-point calibration of the Tobii TX300 eye-tracker and finding a comfortable and optimal seating position (i.e., approximately 63cm from the screen, as measured by the Tobii Studio software) for the participant. Calibration was repeated until reaching a satisfactory level of accuracy before starting the series of experiments. The eye-tracker was then recalibrated at the beginning of each set of items, which helped ensure accuracy throughout the data collection (see also Conklin, Pellicer-Sánchez, & Carrol, 2018 for practical recommendations for setting up eye-tracking experiments). The participants were asked to remain as still as possible after calibration. Then the first set of three items was presented. Throughout each set of items, the researchers monitored the head position and eye-tracking quality via the “Track Status” function of Tobii Studio and provided feedback in between the items in case a participant changed their position. However, as the Tobii TX300 allows for some natural movement of the head, this was not necessary with most participants. Great care was taken to avoid discomfort or strain for the participants while working on the items, and they were given shorter breaks upon completing a set of items and a longer break after the first five sets (i.e., after the first 15 items).

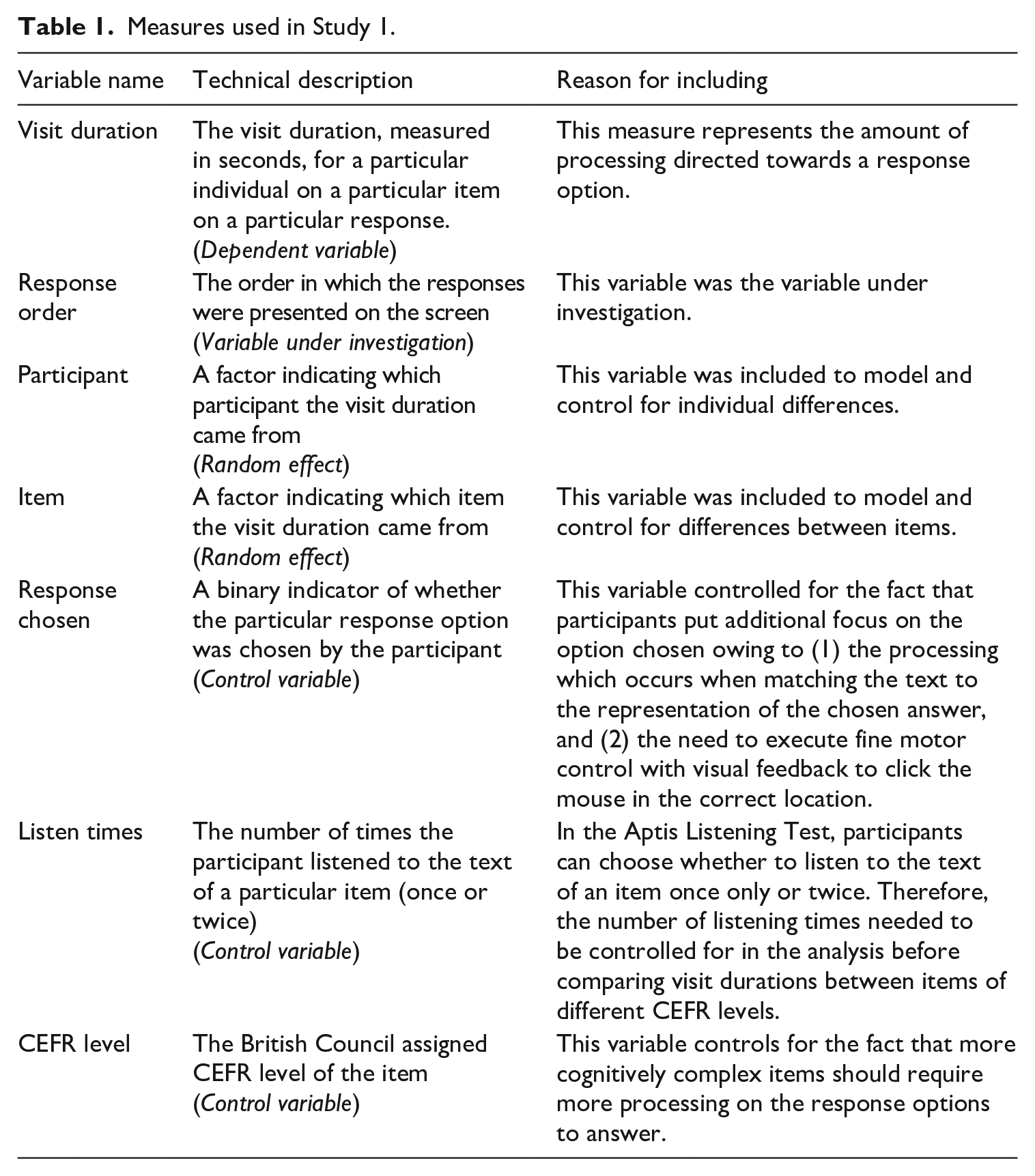

Measures

While there are no additional stimuli such as pictures or graphs presented in Aptis Listening Test items, we still expected that eye-gaze patterns on the textual information of the items could reveal insights into participants’ test-taking behaviour. To be able to test hypotheses related to eye gazes, areas of interest were defined for each aspect of the stimuli relevant to the research aims. Each of the four response options was defined as a separate area of interest. The dependent variable for subsequent analyses was the total visit duration on each of the four options. Total visit duration is defined as the summed duration of all visits by each participant on each area of interest. It needs to be understood as a global measure, as it aggregates all gaze activity, such as first fixation durations as well as any re-reading activities within an area of interest (Godfroid, 2019). It is thus useful in assessing global effects such as comparing length of processing for each of the four response options. The hypothesis to be tested was whether total visit duration was the same for each of the four options, after controlling for a number of variables as outlined in the following.
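As a minimal sketch of how total visit duration can be derived from individual visits, the example below sums visit durations per participant, item, and response option. The record format and all values are hypothetical and do not reflect the export format of the eye-tracking software or the study data.

```python
from collections import defaultdict

# Hypothetical visit records:
# (participant, item, option position, visit duration in ms)
visits = [
    ("P01", "item01", 1, 420),
    ("P01", "item01", 2, 310),
    ("P01", "item01", 1, 180),  # a return visit to option 1
    ("P01", "item01", 4, 95),
    ("P02", "item01", 1, 510),
]


def total_visit_duration(records):
    """Sum all visit durations per (participant, item, option) area of interest."""
    totals = defaultdict(int)
    for participant, item, option, duration_ms in records:
        totals[(participant, item, option)] += duration_ms
    return dict(totals)


totals = total_visit_duration(visits)
print(totals[("P01", "item01", 1)])  # 600 (420 + 180)
```

Each key in the resulting dictionary corresponds to one observation of the dependent variable, that is, one response option on one item for one participant.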

A regression model was used to allow a multivariate analysis of the total visit durations for each response, on each item, and by each person. As outlined above, the advantage of using multiple regression models is that all variables can be modelled jointly, without having to use average values across variables and while still being able to control for different variables. Table 1 below outlines all variables included in the analysis. The three control variables added to the model were the response chosen (participants were found to focus naturally more on their final choice, as they had to move the mouse towards their chosen option), the number of times they listened to the sound file (participants could choose between listening once or twice as is operational in the Aptis Listening Test and this impacted the time available for looking at the options), and the CEFR level of the item (items at different levels were developed with the intention to tap into different cognitive processing). Not controlling for these three variables might run the risk of drawing wrong conclusions on the basis of confounded variables.

Table 1. Measures used in Study 1.

Statistical analysis

For the data analysis, a mixed effects linear regression model (Gelman & Hill, 2006) including random intercepts was chosen. This method of analysis has been used successfully in other linguistics research projects and has been recommended by various researchers (Baayen, Davidson, & Bates, 2008; Winter, 2013). In mixed effects models, the predictor variables can be classified as either fixed or random, whereby the fixed effects are the factors under investigation and the random effects are factors whose observed levels (e.g., the particular participants or items) are treated as a sample from a wider population of possible levels.

As listed in Table 1, the fixed variables for this study were the three control variables (response chosen, audio replayed or played once, and CEFR level) and the variable under investigation (response order). These four factors were considered relevant to the processing of an individual test taker when answering particular items and response options. The random effects were participant and item. The goal of this study was to investigate the effect of response order to be able to generalize from the sample to a broader population of items and participants. However, certain individuals or certain items may tend to produce longer or shorter visit durations, so that observations from the same participant or the same item are correlated. The strength of the mixed effects model approach is that such correlations can be taken into account by including random effects in the model. Not including random parameters in the model can distort results and lead to invalid inferences about the statistical significance of the fixed effects (Crawley, 2007).
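The structure described above can be sketched as a model with crossed random intercepts for participant and item. The notation is introduced here for illustration only and is an assumption; it is not taken from the original analysis, and $y$ stands for the total visit duration on whatever scale was used in the model.

```latex
y_{pio} = \beta_0
        + \beta^{\text{order}}_{o}
        + \beta^{\text{chosen}}\, c_{pio}
        + \beta^{\text{twice}}\, t_{pi}
        + \beta^{\text{CEFR}}_{\ell(i)}
        + u_p + v_i + \varepsilon_{pio},
\qquad
u_p \sim \mathcal{N}(0, \sigma_u^2),\quad
v_i \sim \mathcal{N}(0, \sigma_v^2),\quad
\varepsilon_{pio} \sim \mathcal{N}(0, \sigma^2)
```

In this sketch, $p$ indexes participants, $i$ items, and $o$ the position of the response option; $c_{pio}$ indicates whether the option was chosen, $t_{pi}$ whether the audio was played twice, and $\ell(i)$ is the CEFR level of item $i$. The random intercepts $u_p$ and $v_i$ absorb the tendency of particular participants or items to produce longer or shorter visit durations.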

The regression analysis was carried out with the package

Study 2: Investigation of item difficulty based on correct response position

Materials

The materials for this study represent psychometric characteristics of 200 live Aptis listening comprehension items. In terms of CEFR level, 40 of these items were aimed at A1, 52 at A2, 50 at B1 and 58 at B2. The psychometric properties of the items were based on a total sample of between 5821 and 6166 test takers, differing slightly for individual items.

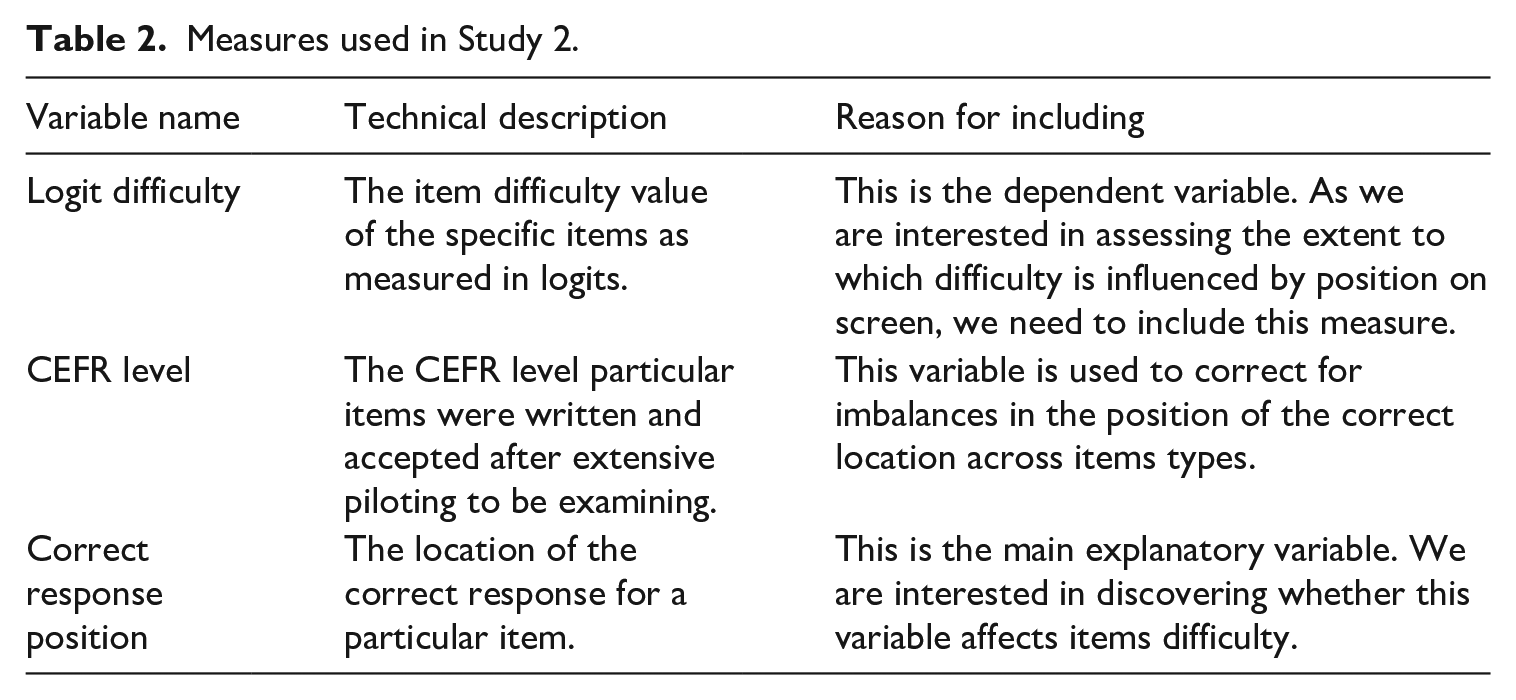

Measures

Three measures were modelled and descriptions of these measures are shown in Table 2. The dependent variable

Table 2. Measures used in Study 2.

Statistical analysis

A linear regression model, fit via Ordinary Least Squares, was used to estimate parameters. The function

Results

Study 1: Eye-tracking study of correct response location

Visit duration, the dependent variable, emerged as highly negatively skewed. Therefore, we decided to

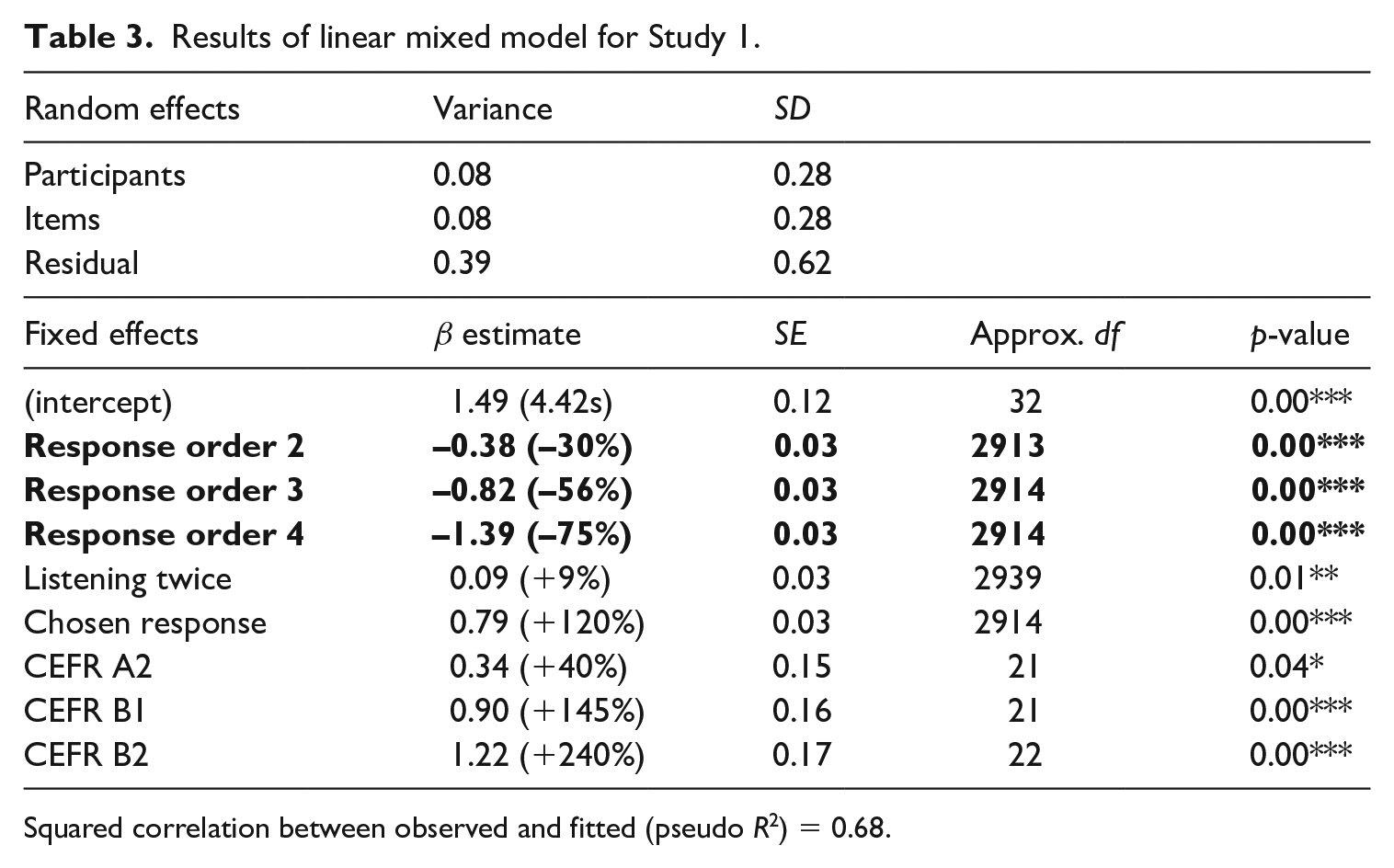

Table 3 shows the results of the linear mixed model. Considering model parsimony, including both random effects, participants and items, was found more useful than including none or just one (Akaike, 1974). While some of the total variance is explained by the two random effect variables, the majority of variance remains unexplained as a residual. Table 3 also reports the raw

Table 3. Results of linear mixed model for Study 1.

Squared correlation between observed and fitted (pseudo

Without

The item was at A1 level.

The response option was first on the page.

The response option was not chosen by the participant.

The participant listened to the text once.

The participant had listening, reading, and eye-tracking test scores of 0.

In the following, the results on the different fixed effects included in the model as displayed in Table 3 will be described.

Response order

As can be seen in Table 3, the position in which a response option is presented to a participant plays a clear and highly statistically significant role: the further up a response on the page the longer the participant would look at this particular response and the lower down the response, the shorter the visit duration. Relative to the first option, the total visit duration decreased by 30% for the second option, by 56% for the third option and by 75% for the fourth option.
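Percentage changes of this kind are the usual way of reading coefficients from a model with a log-transformed outcome. The sketch below shows the conversion in both directions, assuming (this is an assumption made for the example) that visit duration was modelled on a natural-log scale; the function names are hypothetical, and the printed log-scale values are implied by the reported percentages rather than quoted from Table 3.

```python
from math import exp, log


def pct_change_from_log_coef(b):
    """Percentage change in the outcome implied by a coefficient b
    from a model with a natural-log-transformed outcome."""
    return 100.0 * (exp(b) - 1.0)


def log_coef_from_pct_change(pct):
    """Inverse conversion: log-scale coefficient implied by a percentage change."""
    return log(1.0 + pct / 100.0)


# Percentage decreases reported in the text, relative to the first option
reported = {2: -30.0, 3: -56.0, 4: -75.0}
for position, pct in reported.items():
    print(position, round(log_coef_from_pct_change(pct), 2))
```

Read this way, a decrease of 75% means that the fourth option received roughly a quarter of the total visit duration of the first option, other variables being held constant.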

Listening twice

There is a statistically significant effect of listening to the sound file twice: visit duration on the response options increases by 9%. As this effect appears surprisingly small, this variable may correlate with the CEFR level. In other words, higher CEFR-level items tended to generate more instances where a candidate listened to the sound file twice, meaning that the variance of this variable is mainly explained by the CEFR level. Nonetheless, it is important to include this variable as a predictor, because whether candidates listened once or twice was not regulated by the experimental design.

Chosen response

The response that participants finally opted for during the experiment increased the total visit duration of that response by 120%. This result is not surprising for two reasons. First, participants are likely to have spent more time looking at an option that they finally selected because of increased processing of this option and confirming their choice. Second, participants had to manually select the option via mouse click, which also increases the time they spend near the option to perform the clicking correctly. As with the variable of listening twice, this variable was included in the model, as it cannot be controlled for in the experiment and could lead to confounding with response order, for reasons discussed above, and thus with the CEFR level.

Study 2: Investigation of item difficulty based on correct response position

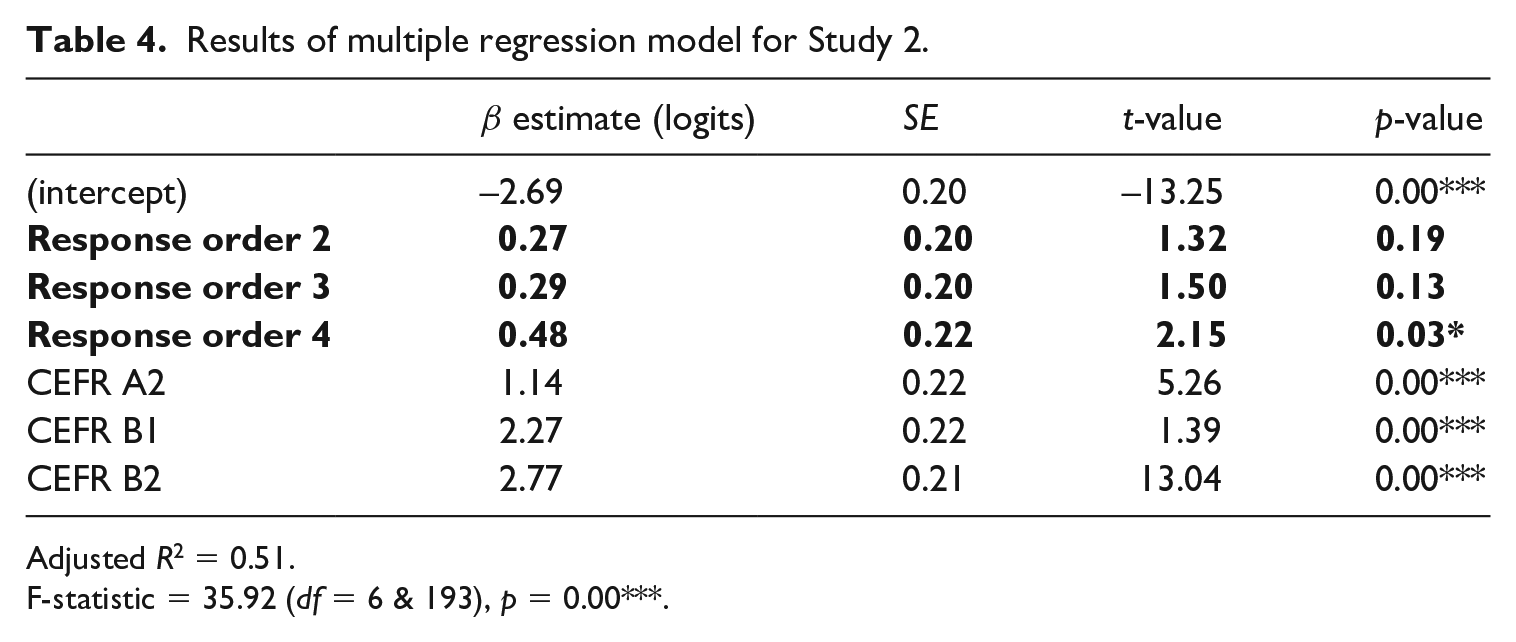

Table 4 shows the results of a linear regression model explaining item difficulty, as measured in logits, with the intended CEFR level of the item and the location of the correct response on the page. As shown at the bottom of the table, a large amount of variance is explained by this model (adj

Results of multiple regression model for Study 2.

Adjusted

F-statistic = 35.92 (

The model suggests that responses in the second, third and fourth positions on screen are 0.27, 0.29 and 0.48 logits more difficult than those in the first position, respectively. Although there is only one statistically significant difference between the categories in the response order variable (i.e., the difference between the first and fourth), it should be noted that (1) the difficulties are in line with the hypothesis and (2) this analysis is based on a relatively small number of items and is thus unlikely to find significance for what are relatively small but practically important effect sizes.
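To give a sense of scale for these coefficients, the logit differences can be translated into probabilities of a correct response. The sketch below assumes a Rasch-type logistic relationship between ability, item difficulty, and success; this is an assumption, as this section does not name the measurement model. It also assumes a hypothetical candidate whose ability equals the item's difficulty when the key is in first position.

```python
import math

# Estimated difficulty increase (logits) relative to key in first position.
shift_logits = {1: 0.0, 2: 0.27, 3: 0.29, 4: 0.48}

def p_correct(ability: float, difficulty: float) -> float:
    """Probability of success under a Rasch-type logistic model (assumed)."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

# Hypothetical candidate: ability matches the item's difficulty
# when the key is in position 1 (both set to 0 logits for illustration).
ability = 0.0
base_difficulty = 0.0

for position, shift in shift_logits.items():
    p = p_correct(ability, base_difficulty + shift)
    print(f"key in position {position}: P(correct) = {p:.3f}")
```

For such a candidate, the chance of success would fall from 50% to roughly 38% when the key moves from first to fourth position.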

Discussion

Given the widespread use of MC tasks in language assessment programs around the world (both in low-stakes and high-stakes situations), potential test method effects on item difficulty need to be investigated thoroughly. The current study adds to the body of research on the effect of response order on item difficulty in MC tests, as previous findings have been inconclusive. It did so by looking at such effects in relation to L2 listening assessment (a skill not yet researched in this area), using two methods new to this line of research: eye-tracking and linear mixed effects modelling.

In the first part of the study, we showed, through linear mixed effects modelling of eye-tracking data, that when solving items on the Aptis Listening Test, participants looked significantly longer at responses higher up the screen, with a clear progression from the top to the bottom of the screen. Relative to the first response, total visit duration was 30% shorter for the second, 56% shorter for the third, and 75% shorter for the fourth. To our knowledge, this is the first time that such an effect has been shown in relation to MC testing. One factor that could have contributed to these results is that test takers may not read subsequent options if they identify the correct option in the earlier response positions. This is also a commonly taught test-taking strategy and is especially useful for tests where time is a concern. However, although this phenomenon seems consistent with the primacy effect hypothesis found in research across disciplines, it does not explain whether item difficulty is affected by it.

To test whether items are more difficult when the key is placed in later positions, responses on 200 Aptis Listening Test items by about 6000 candidates for each item were modelled with regard to key position and item difficulty. The regression analysis showed that items with the key in fourth position are significantly more difficult than items with the key in first position, with a difference of 0.48 logits. These results confirm findings by Sonnleitner et al. (2016), who reported the same effect for MC vocabulary items. However, the findings from the present study might be taken to be a more appropriate reflection of a test-taking candidature as the Sonnleitner et al. (2016) study was based on a less authentic experimental setup. Our findings are also in line with results from Hohensinn and Baghaei (2017), who found the same tendency for a test consisting of grammar, vocabulary, and reading items, but argued that the effect was only very small and not of practical significance. However, Hohensinn and Baghaei (2017) only looked at a total of 60 four-option MC items across three different competency areas, so statistically significant results may not have emerged owing to the small sample size. Our sample of items was considerably larger (200) and more homogeneous as it only consisted of listening items.

These results are important for the development of MC listening tests, for three main reasons. First, test developers need to consider this primacy effect when deciding on the number of options. Other research has highlighted the advantages of three options compared to four options or more. For example, Haladyna and colleagues have shown that MC items with four options mostly have only one or two distractors with acceptable discrimination (Haladyna & Downing, 1993), and that developing a third distractor is also difficult from an item writer’s perspective (Haladyna, Downing, & Rodriguez, 2002). In addition, Rodriguez (2005) argued that more items can be administered when using only three options as test takers need less time to read all options, which in turn can be beneficial for reliability and construct representation. Our study supports the argument for three options by indicating that for four-option MC listening items ordering effects impact item difficulty, but that this effect is less pronounced when only three options are used.

Second, the findings show that in the item writing process careful consideration needs to be given to the positioning of the key, as this might influence item difficulty. Our results indicate that MC listening tests with many keys in later positions would likely lead to fewer correct answers than tests with many keys in earlier positions. This effect should thus be taken into account when comparing item difficulty across tests but also across different versions of the same test.

Lastly and most importantly, the findings question the commonplace practice of randomizing correct answer position for each individual candidate. Under this practice, any given item may vary in difficulty for individual candidates. This is particularly pertinent as it is currently unclear whether the effect is the same across items targeting different proficiency levels. For example, it might be the case that the effect is more pronounced in more difficult items. Since any item in this scenario has four different difficulty levels depending on the correct response position, randomization would introduce construct-irrelevant variance. This makes the practice an issue of fairness, as randomization would mean that the same test is more difficult for some candidates than for others.
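The fairness concern can be illustrated with a small simulation. The sketch below is a toy setup under stated assumptions, not a reanalysis of the Aptis data: it assumes a Rasch-type logistic model, a hypothetical 20-item test in which every item has the same base difficulty, and a position effect that is identical across items (which, as noted above, is not yet established). All names are illustrative.

```python
import math
import random

# Difficulty shifts (logits) by key position, taken from the regression estimates.
SHIFT = {1: 0.0, 2: 0.27, 3: 0.29, 4: 0.48}

def p_correct(ability: float, difficulty: float) -> float:
    """Rasch-type success probability (assumed model)."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def expected_score(ability: float, base_difficulties, key_positions) -> float:
    """Expected number correct, given each item's key position."""
    return sum(
        p_correct(ability, b + SHIFT[k])
        for b, k in zip(base_difficulties, key_positions)
    )

# Hypothetical 20-item test; every item has base difficulty 0 logits.
n_items = 20
base = [0.0] * n_items
ability = 0.0

best = expected_score(ability, base, [1] * n_items)   # all keys in first position
worst = expected_score(ability, base, [4] * n_items)  # all keys in fourth position

# Randomized key positions for 1000 simulated candidates of equal ability.
rng = random.Random(0)
scores = [
    expected_score(ability, base, [rng.randint(1, 4) for _ in range(n_items)])
    for _ in range(1000)
]
print(round(best, 2), round(worst, 2), round(min(scores), 2), round(max(scores), 2))
```

In this toy setup, the two extremes differ by a little over two expected score points (10.0 versus about 7.6). Randomly drawn orderings fall between those bounds, but candidates of identical ability still receive different expected scores.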

Future research

Future research should continue to explore this issue in MC listening assessment using controlled experimental design with larger sample sizes. Following Sonnleitner et al.’s (2016) research design, researchers could manipulate the position of the correct answer to counterbalance answer positions across participants and items. This could be done in both computerized and paper-and-pencil tests to probe whether a response order effect can be detected regardless of delivery mode, or whether different modes are affected differentially. In addition, such experimental designs might find it useful to control for a number of comprehension behaviours targeted in items to investigate whether, for instance, items eliciting comprehension of specific details are affected differently than items assessing inferencing. Moreover, it may be relevant to examine the effect in variations of MC items (three, four, five, or more options).
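One way to counterbalance key position across participants and items is a Latin-square rotation, in which each participant group sees each item with the key in a different position. The sketch below is illustrative only. The function name, group count, item count, and rotation scheme are assumptions, not the design used by Sonnleitner et al. (2016).

```python
from collections import Counter

N_POSITIONS = 4  # four-option MC items

def key_position(group: int, item: int) -> int:
    """Key position (1-4) for a participant group and item via cyclic rotation."""
    return (group + item) % N_POSITIONS + 1

n_groups = 4
n_items = 8  # hypothetical item count; a multiple of 4 keeps the design balanced

design = {
    g: [key_position(g, i) for i in range(n_items)]
    for g in range(n_groups)
}

for g, positions in design.items():
    print(f"group {g}: {positions}")

# Balance check: within each group every position occurs equally often,
# and every item appears once in each position across groups.
for g in range(n_groups):
    assert set(Counter(design[g]).values()) == {n_items // N_POSITIONS}
for i in range(n_items):
    assert sorted(design[g][i] for g in range(n_groups)) == [1, 2, 3, 4]
```

With such a rotation, item content and key position are unconfounded, so any difference in difficulty between positions cannot be attributed to the particular items that happened to have late keys.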

In addition, it would be of interest to investigate potential explanatory variables of response order bias. For example, one could hypothesize that a primacy effect might be stronger in impulsive test takers. Using standardized questionnaires to establish test takers’ levels of impulsiveness (e.g., the Barratt Impulsiveness Scale, Barratt, 1994) would thus shed further light on test takers’ response processes and help to explain which person-level factors may lead test takers not to read all response options equally. Finally, future studies could further investigate potential primacy effects across different skills, candidates of different proficiency levels, and items targeting different proficiency levels.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Part of this research was funded by the British Council through the Assessment Research Awards and Grants scheme (AR-G/2017/3).