Abstract

During the 2020/2021 academic year, I conducted a mixed quantitative and qualitative analysis of the drivers of student engagement in the School of Politics and International Relations at Queen Mary University of London. My main finding was that students vary widely in their ability to manage the competing demands on their time. Those who are able to avoid scheduling conflicts between taught classes and off-campus commitments engage at higher rates and achieve better marks. Those who struggle – whether due to under-developed time management skills, a lack of confidence in asking for assistance, or both – miss out on vital learning opportunities, and their attainment suffers. While there is little a department can do about the broader socio-economic forces that require students to take on significant responsibilities beyond the classroom, my findings suggest there are things we can do to improve engagement among those who struggle. I conclude by recommending actions to help students resolve clashing commitments, to build their confidence in and ability to seek support from staff, and to develop positive peer networks.

Like many comparable UK-based academic departments, the School of Politics and International Relations (SPIR) at Queen Mary University of London (QMUL) has grown significantly in recent years. Seven years ago, we admitted around 120 students per year across our programmes; that number has since trebled. Growth has also meant significantly increased diversity. Around two-thirds of our undergraduates now come from a Black, Asian, or Minority Ethnic background. Approximately 70% are Home/UK students, 90% commute from the family home, and approximately 90% attended non-fee-paying schools. On average, they enter with 124 UCAS tariff points, equivalent to the half-way point between ABB and BBB at A-Level. Approximately 30% join us through clearing.

The rapid switch to full-scale online provision for undergraduate students necessitated by the Covid-19 pandemic raised serious questions about how we do things. In particular, it turbocharged our existing concerns about student engagement. We were already worried about variable levels of attendance, preparedness for taught sessions, and assignment submission, and deeply aware of how little we really knew about our students’ lives beyond the classroom. Suddenly finding ourselves in an environment where we generally could not even see the people we were teaching made those concerns all the more pressing.

Against this backdrop, I designed and ran a two-stage study intended to identify and explain the key drivers of student engagement in our programmes. I first fielded a large online survey aimed at testing three main hypotheses – covering students’ time commitments, experiences of teaching and learning, and ability to access advice and support. On the basis of this survey, I then conducted four online follow-up focus groups to generate additional qualitative insights.

My main finding was that, in SPIR, the primary driver of student engagement is the ability to manage competing time commitments successfully. This is not, however, simply a function of the volume of outside time commitments individuals face. Instead, it reflects two things: first, the relative predictability and (in)flexibility of students’ outside commitments, and second, how prepared they are to manage potential conflicts. Both of these things are unevenly distributed across the population, in predictable ways; students from more ‘traditional’ backgrounds generally enjoyed more control over their outside commitments, understood more readily the need to take greater responsibility for their own learning in a university environment, and felt more confident asking for advice, support, and adjustments when necessary.

I begin by clarifying my conceptual approach, reviewing prior research on student engagement, developing three hypotheses about what might drive differential engagement rates in an undergraduate population, and explaining my research design and method. I then report my findings, initially reviewing each hypothesis separately before building an over-arching model, supplementing my quantitative analysis with discussion of the qualitative data generated by both free-text survey responses and focus group participants. Finally, I draw out some conclusions and highlight some implications, both for SPIR specifically and for colleagues in similar circumstances more generally.

Identifying the drivers of student engagement

Defining ‘engagement’

I began by reflecting on what ‘engagement’ actually is. As Trowler (2010: 17) makes clear, there is no ‘shared, universal’ definition. The result is that too many studies of engagement uncritically ‘measure that which is measurable’, an approach which leads ‘to a diversity of unstated proxies for engagement’, conceptual confusion, and a lack of mutual comprehension between studies. Different accounts focus on behaviour, psychology, culture, and political context and combinations of each (Kahu, 2013), without a clear common vocabulary.

In the hope of at least partially bucking this trend, I will use this section to explain what my understanding is and how I propose to operationalise it empirically. I define ‘engagement’ in constructivist terms. Constructivists think that ‘to learn is to see the meaning or significance of an experience or concept’; to be able to interpret it, evaluate it, and apply it in novel contexts (Carlile and Jordan, 2005). From this perspective, engagement is the opposite of ‘alienation’ – a state in which students continue to study, but do so in a surface fashion without deep learning as a result of feeling disconnected from both the material and the learning environment (Jones, 2001; Mann, 2001) – rather than of ‘non-completion’ (Axelson and Flick, 2010; Sinclair et al., 2003). Constructivists believe, in other words, that students sometimes look engaged when in fact they are not.

That distinction – between deep and performative engagement – is hard but not impossible to get at empirically. Some studies, for example, look at voluntary ‘contacts’ with teachers and peers, as well as academic support professionals, as proxies for students taking responsibility for their learning (Kuh and Gonyea, 2003). Others focus on ‘actions’ – such as the completion of defined study tasks, online or offline, synchronous or asynchronous (Krause and Coates, 2008), noting that students who engage with well-designed learning activities should achieve improved outcomes – in terms of satisfaction, the development of critical thinking skills, and improved performance in assessments (Greenwood et al., 2002; Kuh et al., 2008; Trowler, 2010). More engaged students learn more, however one defines ‘learning’.

This all makes sense to me. But I want to go further, by flipping it on its head and suggesting that where teaching, learning, and assessment are well-aligned, we would expect students who attend taught classes and complete assigned independent study tasks to attain higher marks, even after controlling for confounding factors such as prior attainment. In other words, if we are asking our students to do the right kinds of things, they should achieve the objectives we set them. Where we do see an association between task completion and attainment, even after controlling for differential prior attainment, we can reasonably infer that task completion constitutes a valid proxy for deep, rather than purely performative, engagement.

There are broader ways of thinking about engagement – Krause (2005), for example, posits an ‘oppositional’ form of alienated engagement, in which students actively challenge established pedagogies, while Carey (2013) talks about student engagement in university decision-making and governance and Zepke (2017) frames engagement as both accommodation with and opposition to the neo-liberalisation of education. My goal, however, was to inform SPIR’s approach to teaching and learning specifically. For this reason, I focused on how our students engaged with timetabled teaching and assigned learning tasks, on the basis that doing so should improve their learning at an individual level (Trowler, 2010).

Developing hypotheses

H1. Students with fewer outside demands on their time will be more engaged.

H1 introduces a fairly straightforward idea – that students vary in terms of how much time they can spend studying in light of other commitments they have away from university, and that those who have more time available will be more engaged (Baron and Corbin, 2012). I was particularly concerned at the outset of this study by the suggestion that some of our students were spending much of their time pursuing paid employment instead of working on their degrees. Financial difficulties are the main reason why students drop out of UK university courses, especially in more expensive parts of the country (Bennett, 2003; Pollard et al., 2019). Faced with rising living costs, stagnant financial support, and the prospect of long-term debt, students are increasingly undertaking paid work alongside their studies (Krause, 2005). Incoming students, moreover, often have unrealistic expectations of what university will be like (Money et al., 2017), underestimating both the differences in structure, expectations, and support systems between their earlier educational experiences and higher education and the extent to which they will have to manage their time actively to carve out study sessions (Kuh, 2007). Students undertaking more than 20 hours of paid employment per week are notably more likely to fail to complete their studies than classmates, including those who work up to (but less than) 20 hours per week (Hovdhaugen, 2015). So time commitments should affect engagement.

H2. Students who feel more positive about their learning experiences in SPIR will be more engaged.

H2 holds that students engage more when they enjoy the teaching they receive and the independent study tasks we ask them to complete. Recent research suggests that student satisfaction and engagement are linked, particularly in online learning environments (Rajabalee and Santally, 2021). SPIR taught online for the whole 2020/2021 academic year, so the survey results reflect a mixture of online and in-person experiences, making this point especially salient. Those who feel most positive about and confident in directing their own learning are particularly likely to engage more consistently in online and asynchronous study activities, reflecting the importance of self-motivation in these environments (Greener, 2008). In part, this reflects the alignment of student expectations and experiences. Students whose expectations of learning align with reality experience higher levels of satisfaction and greater engagement (Schwarz and Zhu, 2015). Students who engage in active classroom behaviours – such as reading aloud or contributing to discussions – also achieve better outcomes than those who do not (Greenwood et al., 2002). Students who perceive a clear relationship between desired goals, engagement, and outcomes are more likely to focus, to adopt deep learning strategies, to put in greater levels of effort, and to persist in the face of setbacks than those who do not (Miller et al., 1996). So enjoyment and positivity should matter, too.

H3. Students who feel better able to access advice and support will be more engaged.

H3 proposes that students who feel more supported in their studies will be more engaged. The word ‘support’ is deliberately left vague; indeed, one of the goals of the qualitative stage of this study was to define what students think ‘support’ looks like. Three broad definitions dominate the literature. The first understands support as something students seek out from academic staff, recognising the importance of social ‘ease’ in facilitating student–staff engagement (Jack, 2016). The second treats support as something students draw from their peers. Those who feel most strongly embedded in a learning community, and are able to call on classmates for guidance and advice, tend to be more engaged (Pittaway and Moss, 2006). The third definition treats support as something students do for themselves. Those who feel empowered to take responsibility for their own engagement, and who have access to tools to measure and understand how they are getting on, tend to be more engaged overall (Dragseth, 2019; Whitley and Dietz, 2018). That sense of empowerment is, however, unevenly distributed (Thiele et al., 2017). More ‘traditional’ students – hailing from families with prior experience of higher education or from high-participation schools – have more of it.

Research design and methods

As the theoretical discussion above implies, studying engagement is difficult. This continues to be true even once we accept the necessity of focusing on observable behaviours. Students at different levels engage in different ways (Carini et al., 2006). There are differences in how students engage with their studies in fully online versus on-campus settings (Robinson and Hullinger, 2008). Some studies rely on student self-reporting, on random sampling, and on various measures of (perceived) teaching quality, each of which has distinct strengths and weaknesses (Fredricks and McColskey, 2012). Several studies struggle to distinguish adequately between measures of engagement, drivers of engagement, and the consequences of engagement (Kahu, 2013) – for example, mixing up data on student attitudes, attendance, and marks without identifying clear (if theoretical) causal relationships. Others point out that these things inevitably interact – that students who possess more of the resources that support engagement in higher education will also benefit from these resources in terms of apparent outcomes such as graduate employment and earnings (Crawford and Vignoles, 2014; Friedman et al., 2017).

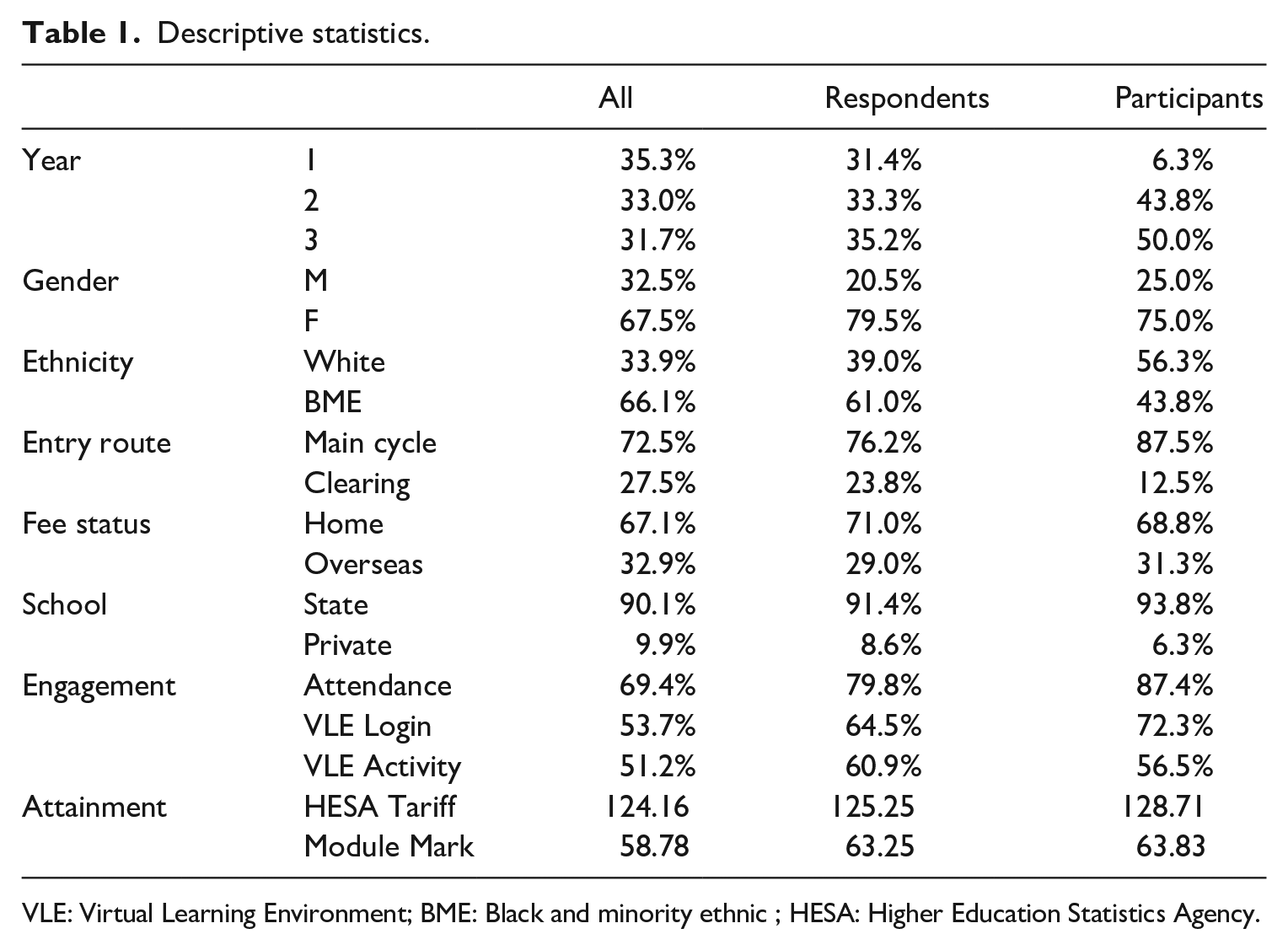

The biggest challenge, though, is a simple one – less-engaged students tend to respond less frequently to studies aimed at understanding engagement than their more engaged classmates (Clarksberg et al., 2008). This is a critical point for this study. As Table 1 shows, the average survey respondent was more engaged than the average student, and the average focus group participant (I will use the terms ‘respondents’ and ‘participants’ to indicate whether I am discussing survey or focus group data throughout the following analysis) was more engaged than the average survey respondent. There are also some further discrepancies; first-year students are under-represented among focus group participants. BME (Black and minority ethnic) students (particularly Black students), male students, and students who entered via Clearing are under-represented among survey respondents and focus group participants.

Table 1. Descriptive statistics.

VLE: Virtual Learning Environment; BME: Black and minority ethnic; HESA: Higher Education Statistics Agency.

In response to these observations, I did two things. First, I was cautious about generalisability. It is possible that distinct patterns of engagement exist among non-respondent students that the survey simply cannot see. I think it is relatively unlikely; less-engaged students are under-represented but not unrepresented among survey respondents. But it is possible. More significantly, I was careful not to treat my results as representative of the distribution of resources in the population as a whole. In other words, I think the relationships I identify below between resources and engagement are likely to exist across the whole population, but that the distribution of those resources will differ. Moreover, given that I know the non-respondent population was less engaged than the respondent population, I can reasonably infer that there are lower levels of the resources needed to engage successfully among non-respondents than among respondents. To put it another way, I think it is reasonable to expect that non-respondents experience the same opportunities and challenges as respondents, but to different degrees. I would anticipate, consequently, that any intervention designed to address challenges raised by respondents would also benefit – perhaps disproportionately so – non-respondents.

Second, I will be cautious in interpreting some of the qualitative data generated through free-text survey responses and focus group discussions. On occasion, I may suggest reading against the evidence, recognising that it tells us the most about the students we are least concerned about.

To reflect the multi-faceted nature of engagement, I devised a two-stage, mixed-methods approach to testing my hypotheses. In stage 1, with support from two colleagues, I fielded a large online survey to all 968 undergraduates registered for programmes offered by SPIR at the start of the 2020/2021 academic year. This survey posed a series of questions about student engagement, contextualised using data from QMUL’s Learner Engagement Analytics dashboard, covering seminar attendance, engagement with the Virtual Learning Environment (VLE), and assignment marks (provisional in the case of first-year students).

Building on the discussion of constructive alignment above, I first conducted a prior analysis which showed that seminar attendance, frequency of engagement with the VLE, and level of engagement with the VLE were each significantly positively correlated with average module mark, controlling for prior educational attainment. This relationship is also somewhat visible in Table 1, which shows that survey respondents and focus group participants are more engaged and achieve higher module marks than the average student, despite having similar levels of prior attainment as measured through HESA tariff scores. On this basis, I consider these metrics acceptably valid proxies for deeper engagement.
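To illustrate what 'controlling for prior educational attainment' means in practice, the following minimal Python sketch fits an ordinary least squares model of module mark on attendance and tariff score. It is an illustration under stated assumptions, not a reproduction of the analysis reported here: the data are synthetic, and the variable names, sample size, and coefficients are all invented for the example. It also estimates coefficients only and does not compute significance levels.

```python
import numpy as np


def ols(y, predictors):
    """Fit y ~ intercept + predictors by least squares; return coefficients."""
    design = np.column_stack([np.ones(len(y)), predictors])
    coefficients, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coefficients


# Synthetic data for illustration only; none of these values come from the study.
rng = np.random.default_rng(0)
n = 500
tariff = rng.normal(124, 15, n)  # hypothetical prior attainment (tariff points)
# Hypothetical attendance (%), deliberately correlated with prior attainment.
attendance = 70 + 0.2 * (tariff - 124) + rng.normal(0, 15, n)
# Hypothetical marks that depend on both prior attainment and attendance.
mark = 40 + 0.05 * tariff + 0.2 * attendance + rng.normal(0, 5, n)

# Including tariff as a predictor means the attendance coefficient is
# estimated while holding prior attainment constant.
intercept, b_tariff, b_attendance = ols(mark, np.column_stack([tariff, attendance]))
print(f"attendance coefficient, controlling for tariff: {b_attendance:.3f}")
```

In this sketch, a positive attendance coefficient that survives the inclusion of the tariff variable is the pattern that would support treating attendance as a proxy for deeper engagement.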

In stage 2, I conducted four online focus groups with a total of 16 survey respondents selected to represent a cross-section in terms of demographic characteristics and programme of study, and with reference to what appeared to be significant survey responses. I invited three groups of students to participate in focus groups; the first reported that they did not feel confident speaking up in class, the second that they had sometimes missed taught classes due to clashes with external commitments, and the third that they would prefer to spend more time on their studies but were unable to do so. I received ethical approval from QMUL’s Research Ethics Committee (QMERC2020.001) for this approach.

Results

Some 210 SPIR students responded to the online survey, which ran for 3 weeks over the 2020/2021 Christmas vacation, a response rate of 21.7%. In this section, I present a series of regression models designed to identify correlations between survey responses, engagement, and attainment, supplemented by a discussion of qualitative comments made by survey respondents and focus group participants.
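The response rate follows directly from the figures already reported: 210 responses from the 968 undergraduates invited to take part. The short Python check below reproduces the calculation; the function name is an illustrative choice and not part of the study.

```python
def response_rate(responses: int, invited: int) -> float:
    """Return the share of invited students who responded, as a percentage."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    return 100 * responses / invited


rate = response_rate(210, 968)
print(f"{rate:.1f}%")  # prints "21.7%"
```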

H1: external time commitments

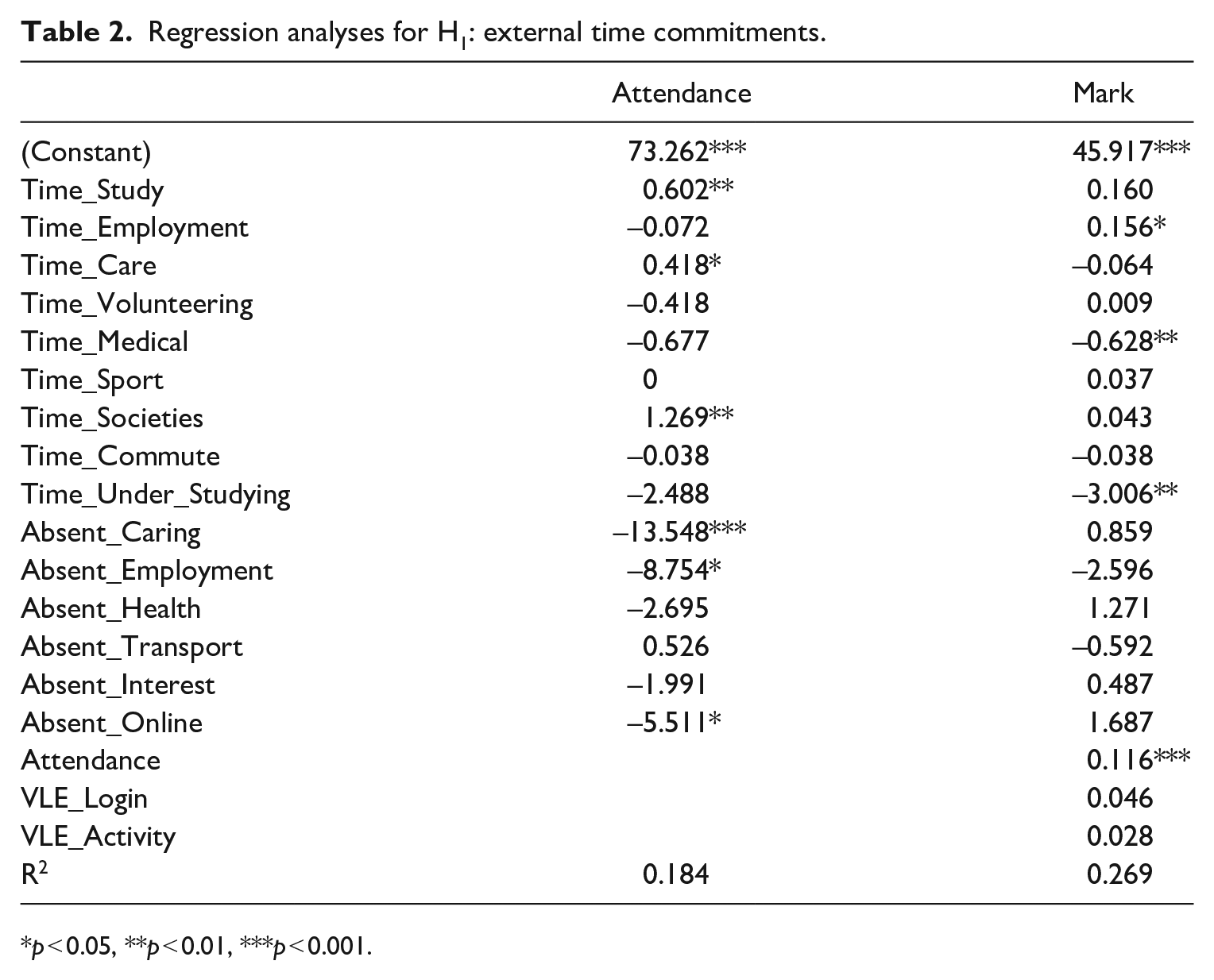

Table 2 reports the results of regression analyses using data on how respondents spend their time. To begin with, there is a positive association between self-reported weekly study hours and attendance. This suggests that students who attend taught classes more regularly also spend more time completing independent study activities, which in turn implies that attendance can serve as a valid proxy for engagement overall. Attendance is, in turn, strongly correlated with attainment.

Table 2. Regression analyses for H1: external time commitments.

*p < 0.05, **p < 0.01, ***p < 0.001.

There are three significant associations between the absolute amount of time respondents said they spent on external commitments and attendance. Interestingly, all are positive. Respondents who reported spending more time each week studying, fulfilling caring responsibilities, or participating in student societies had higher average attendance rates than those who spent less time on those things. There was, however, a negative association between having ever missed a seminar due to work, caring responsibilities, or Internet connectivity issues and seminar attendance. This suggests that, for students with external commitments, the issue is not so much the absolute amount of time those commitments require, but the fact they sometimes clash with taught classes.

This is actually quite an important finding, for two reasons. The first reason is that it somewhat goes against our initial intuitions within SPIR. One of the concerns driving this project was the sense that many of our students were spending too much time on outside activities – especially paid work – leaving them too little time for their studies. We cannot rule that out. It is possible that there are significant numbers of students doing just that, but that too few of them were able to respond to the survey to make them visible. But even among the handful of respondents who were trying to balance full-time paid employment with full-time study, the average attendance rate was 75.35% and the average module mark 62.1 – below the average for survey respondents more generally, but above the average for the population as a whole. The second reason is that it raises an issue that we might actually be able to do something about. There is not much an academic department can do about students needing to work full-time to make ends meet, or, indeed, needing to take on major care responsibilities. But we probably can help those students handle timetabling clashes.

Both the free-text survey responses and the focus group discussion shed important additional light on the relationship between outside commitments and attendance. Survey respondents focused in particular on the perceived flexibility offered by some aspects of online learning. They broadly welcomed the use of pre-recorded videos to provide instruction, noting that pre-recorded videos could be paused and replayed at a student’s preferred pace and watched at a time that fit around other commitments. Some respondents also commented positively on the relative flexibility offered by online seminars and advice and feedback hours. They were less enthusiastic about the online experience more generally, finding it alienating compared to face-to-face teaching.

Generally speaking, the focus group participants had much higher attendance rates than the average, so this is an example where I believe the right approach is to read against the evidence. The key finding in this regard was that participants reported feeling empowered to ask permission to attend a different seminar group in the event that they encountered an irreconcilable clash with outside responsibilities. The most common reason that participants reported for needing to miss a seminar was a last-minute change of shift allocation at work. Some had also experienced needing to take charge of caring for siblings at short notice. Participants reported variation between modules in terms of whether convenors would advertise the possibility of attending a different seminar session or give permission to do so. Given we can reasonably infer that at least some students will not feel confident asking for accommodations that have not previously been advertised to them, here the key finding is that we should at least consider establishing a school-wide policy on whether and when students may attend a seminar other than the one to which they are assigned on their timetable and ensuring that this is widely communicated by teaching staff.

Controlling for the engagement variables, there is a positive association between self-reported weekly hours of paid employment and average mark, suggesting that students who have paid jobs achieve better marks than classmates who do not, provided they are able to attend at the same rate – which in turn seems to depend on the extent to which their employment commitments clash with their classroom commitments. There is a negative association between weekly hours spent receiving medical treatment and average mark. This is the only type of outside time commitment that appears to negatively affect how well students perform in assessments in this way. Given there is no significant association between this variable and attendance, it suggests that there are students experiencing ill health who manage to attend timetabled classes, complete VLE activities, and submit coursework, but who nevertheless achieve lower average marks than classmates who otherwise appear similar in the data.

There was also a negative association between average mark and whether a respondent reports that they would spend more time studying if they had fewer outside commitments. Again, this variable is not significantly associated with attendance, suggesting it points to students struggling to dedicate sufficient time to independent study – a finding that should caution us against relying entirely on attendance as a guide to engagement. In many cases, respondents appeared to under-estimate the amount of time that they should routinely dedicate to independent study; a finding also reflected in their self-reported weekly study hours. A majority of respondents reported spending less than 30 hours per week on their degree programme (including taught classes), which means a majority of respondents were spending less than our minimum recommended number of weekly study hours on their degree (and, recall, survey respondents were more engaged than non-respondents, so actually the picture is even worse than this). Several respondents reported feeling like they did not have sufficient time available to complete the required independent study tasks. In some cases, this clearly reflected under-developed time management skills. But it also clearly reflected respondents having under-estimated the time commitment expected of them. It is hardly surprising if students are struggling to complete tasks designed to take 6 hours if they are only allocating 3 hours to them.

H2: attitudes to learning experiences

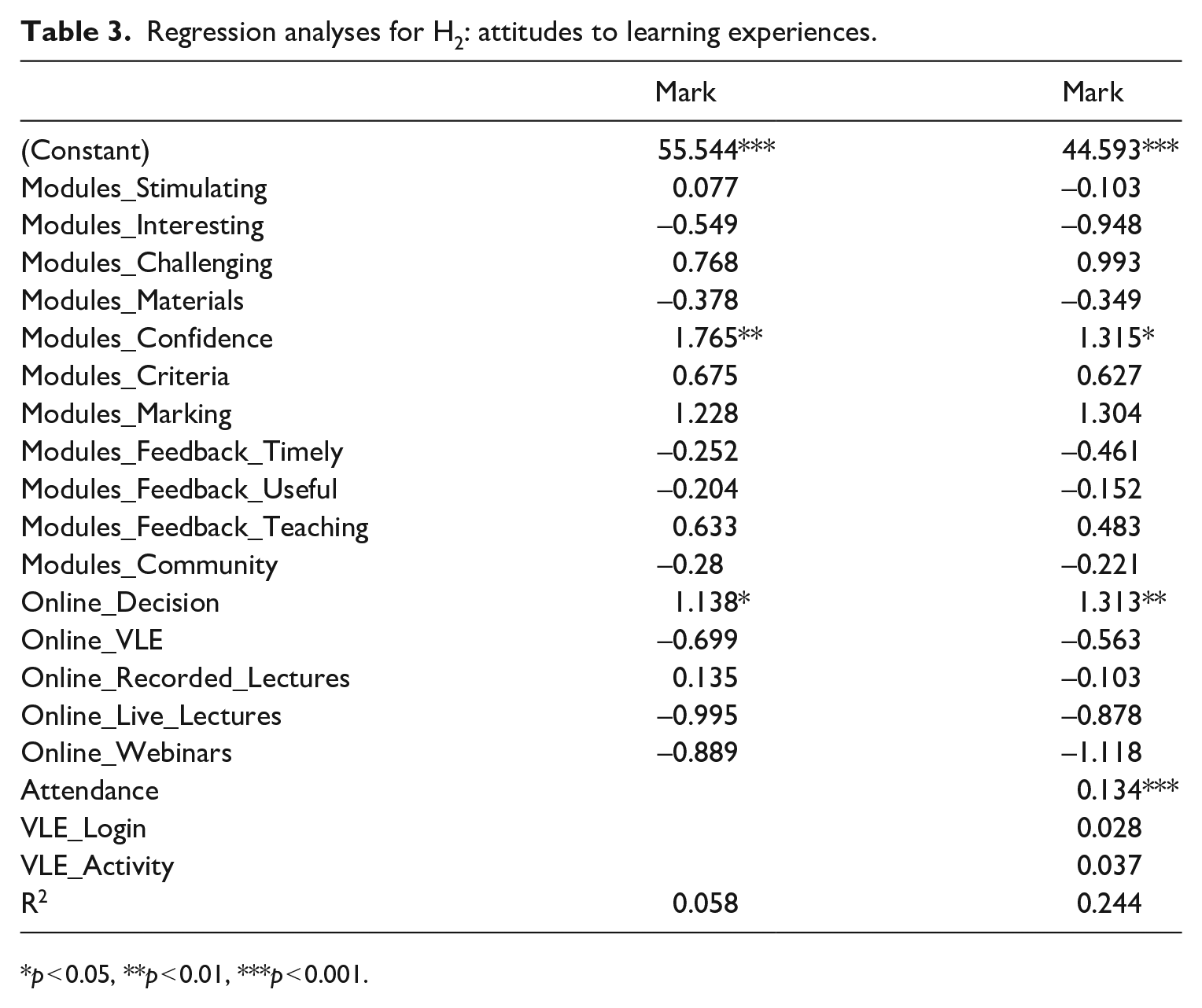

Table 3 reports significant positive associations between respondents feeling that their experiences in the classroom had boosted their confidence and believing that QMUL made the right decision to teach online in 2020–2021, and average mark. Both associations remain significant after controlling for engagement. There were no significant independent associations between any of these variables and attendance.

Table 3. Regression analyses for H2: attitudes to learning experiences.

*p < 0.05, **p < 0.01, ***p < 0.001.

The fact that respondents who supported the decision to teach online during 2020/2021 achieved significantly higher module marks, even after controlling for engagement, likely reflects the contrast between ‘alienation’ and ‘engagement’ described above (Jones, 2001; Mann, 2001). It suggests that even among students who otherwise looked similarly engaged – attending webinars at comparable rates, for example – different levels of satisfaction contributed to different outcomes.

The question of what drives confidence is a complex one, but it appears to boil down to how students experience the transition to university-level learning. In the free-text comments, survey respondents highlighted how jarring that transition might be. For many of our students, moving from pre-university to university study meant a shift in the balance of responsibility away from a teacher-led model in which most learning happens in the classroom to a student-led model in which it mostly happens elsewhere; and a shift in the object of study, away from a model based around mastering a clearly defined body of knowledge to one in which they were expected to develop and exercise independent critical judgement in the face of a potentially unlimited body of knowledge. These are big shifts. It is unsurprising that (at least some) students find them daunting. But the effect is compounded by their very nature; moving from a more-structured, more-certain environment to a less-structured, less-certain one perhaps inevitably instils anxiety, especially in students who have – by definition, in a selective university programme – mastered pre-university study.

The effect is further compounded by our students’ uneven prior preparation for university. While the survey respondents largely dwelt on the differences between their pre-university and university experiences, the focus group participants reported considerable variation in terms of how well-prepared they had been. Some schools explicitly orient their A-Level students towards what we might recognise as a university mindset – requiring them to take responsibility for planning and conducting independent study tasks and using both classroom time and formative assessments to develop their critical thinking skills. Others focus solely on getting their students through their A-Levels, heavily structuring and supervising their nominally ‘independent’ learning, and never moving beyond the description and evaluation of defined bodies of knowledge to the assessment and interpretation of broader fields of learning that characterises the difference between pre-university and university study. The difference is not visible in terms of A-Level marks; the latter approach may well confer advantages in terms of raw A-Level performance. But it appears suddenly once the university term starts. And it is structured, though not entirely determined, by background characteristics. In general, participants from more ‘traditional’ backgrounds were more likely to have experienced at least a taste of university-style study while at school; those from less ‘traditional’ backgrounds were more likely to have never encountered genuinely independent learning. Here I think we can take the focus group participants’ experiences as representative of differences that exist in the population and reasonably infer that the average student is less likely than the average focus group participant to have been well-prepared for university study by their prior experiences.

Two further factors came out in the qualitative data that help make sense of how these transition factors impacted students. First, and concerningly, a non-trivial proportion of survey respondents reported having been positively surprised by how approachable university staff were. While this looks like a good finding at first sight – hey, we’re not that scary after all! – it actually reflects something potentially concerning (and, again, unevenly distributed). Some focus group participants told me that their school teachers told them not to expect to be able to seek advice and support from academics. Those who start out feeling more confident are likely able to ignore such statements – the focus group participants largely fell into that group, although members of the group selected because they expressed a lack of confidence reported that it took them some time to get comfortable with the idea of approaching an academic. But those who already feel nervous about transitioning into an unfamiliar environment are less likely to take the risk, and so less likely to find out what their more confident classmates find out – that their teachers were wrong. These factors compound each other. Some respondents reported having been surprised and overwhelmed by the differences between pre-university and university education, and then on top of that not having felt confident asking staff for help because of a prior belief that university staff were not willing to offer such help. The most frequent point both respondents and participants made when asked what advice they would give their younger self about starting their studies was to make greater use of staff advice and feedback hours.

Second, both the survey respondents and the focus group participants emphasised how important it was for teaching staff to help them bridge the gap between pre-university and university study. In particular, they talked about the need to make traditional academic source materials more accessible and the importance of making classroom spaces ‘safe’. They understood, accepted, and indeed welcomed the fact that studying politics and international relations in a large and diverse school inevitably involved spending some time on topics, texts, and activities that they did not personally prefer. They did not want their studies to be ‘dumbed down’ – they wanted to be challenged. But they did point to the importance of what we might recognise as effective pedagogy. They felt more enthused by, and therefore more likely to engage with, learning activities that clearly aligned content delivery, independent study, and assessment. They recognised that their perception of the alignment of these things reflected both their actual underlying alignment and the clarity with which teaching staff explained the rationale of what they were being asked to do. Engaging students, in other words, means both aligning teaching, learning, and assessment and explicitly explaining this alignment.

It also means creating a classroom atmosphere in which students feel able to try things out and make mistakes, which was what they meant by making classrooms ‘safe’. There was no sign in my data of so-called ‘snowflake’ behaviour, in which students refuse to engage with ideas that contradict their own existing beliefs. Quite the contrary, in fact: the focus group participants, in particular, wanted more opportunities to debate with classmates who disagreed with them. That observation also, admittedly, reflects the fact that they tended to be more enthusiastic about the material in the first place and more likely to be on top of their independent study work. They also wanted, however, to be able to be under-prepared, or just plain wrong, during a discussion without being humiliated for it; this was a particular issue for participants who had expressed a lack of confidence in speaking up in class. They wanted staff to recognise effort as well as accuracy – ‘good try, but here’s why that’s not quite right’ – to show warmth and compassion towards them, and to avoid putting them ‘on the spot’ without prior warning. Reflecting the discussion above, both survey respondents and focus group participants appreciated it when teachers made clear what level of preparation was expected before a session, but also understood that sometimes students would fail to prepare adequately, either because of time constraints beyond their control or simply because they had not yet fully developed the capacity to manage their time independently.

H3: access to advice and support

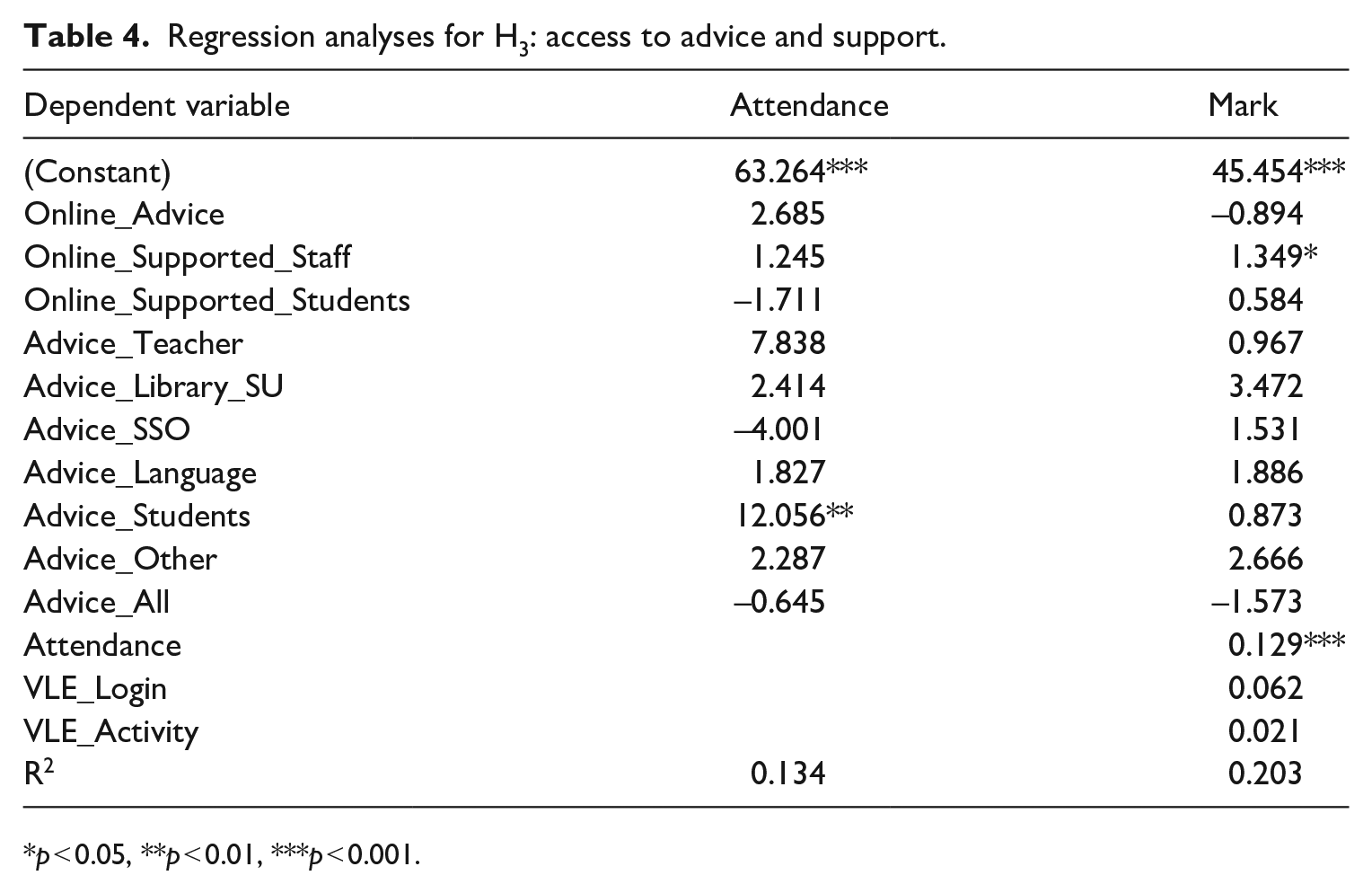

Table 4 reports a strong positive association between respondents reporting they had sought advice from classmates and attendance, and between feeling supported by staff online and average module mark. Controlling for the engagement variables, respondents who felt supported by staff during online learning had higher average marks than those who did not, suggesting that there is a direct link between a general sense of being supported and student outcomes. Moreover, respondents who had sought advice and guidance from their fellow students had much higher attendance rates – which matters because attendance is also strongly positively correlated with average marks.

Table 4. Regression analyses for H3: access to advice and support.

*p < 0.05, **p < 0.01, ***p < 0.001.

Most of the free-text comments under this heading repeated insights mentioned already. For example, respondents who felt confident approaching staff for advice felt satisfied with the support they received, but some respondents did not feel confident asking for help in the first place. That level of confidence reflected background factors such as prior educational experience. But it also reflected classroom behaviours; staff could build confidence by visibly working to bridge the pre-university to university gap.

Perhaps the most important finding of the focus groups – a finding that echoed comments by survey respondents – was a tentative definition of ‘support’. Participants generally felt empowered to seek out advice and feedback when they needed it, though some reported that it had taken them some time to work out when that was – and, again, it is worth remembering that these students are more engaged than the average. But what they really wanted was for us to be more pro-active about contacting them. In particular, they praised colleagues who periodically wrote to them (either as their advisor or as a seminar tutor or module convenor) to ask if they were ok. They also suggested increasing the number of formally scheduled advisor meetings, noting that few students actively sought contact with their advisor unless prompted to do so. Once again, the implications of expecting students to take responsibility for seeking out advice – when we know that some are better prepared than others to accept this responsibility – are clear.

Respondents also commented on the extent to which they felt a sense of community within SPIR. Here, the most interesting observations related to our size and scale. In many cases, the seminar tutor is the only person in the room who knows the name of everyone else in the room. We are big enough, and offer a wide enough range of modules, that this can be true even for students in their final year. They can walk into a seminar room and never have met anyone there before – a factor compounded for those with heavier off-campus responsibilities or who live further away. This is an issue because survey respondents repeatedly made clear they felt most happy about attending and participating in taught sessions when they felt a personal connection to their classmates. One implication of this is that time spent building social bonds among students studying together is likely time well spent, even if it is not directly focused on substantive material. The same goes for school-wide, and student-staff, social activities; several respondents pointed to our semi-structured Coffee and Politics sessions, which bring staff and students together to talk informally about topical political issues, in this regard.

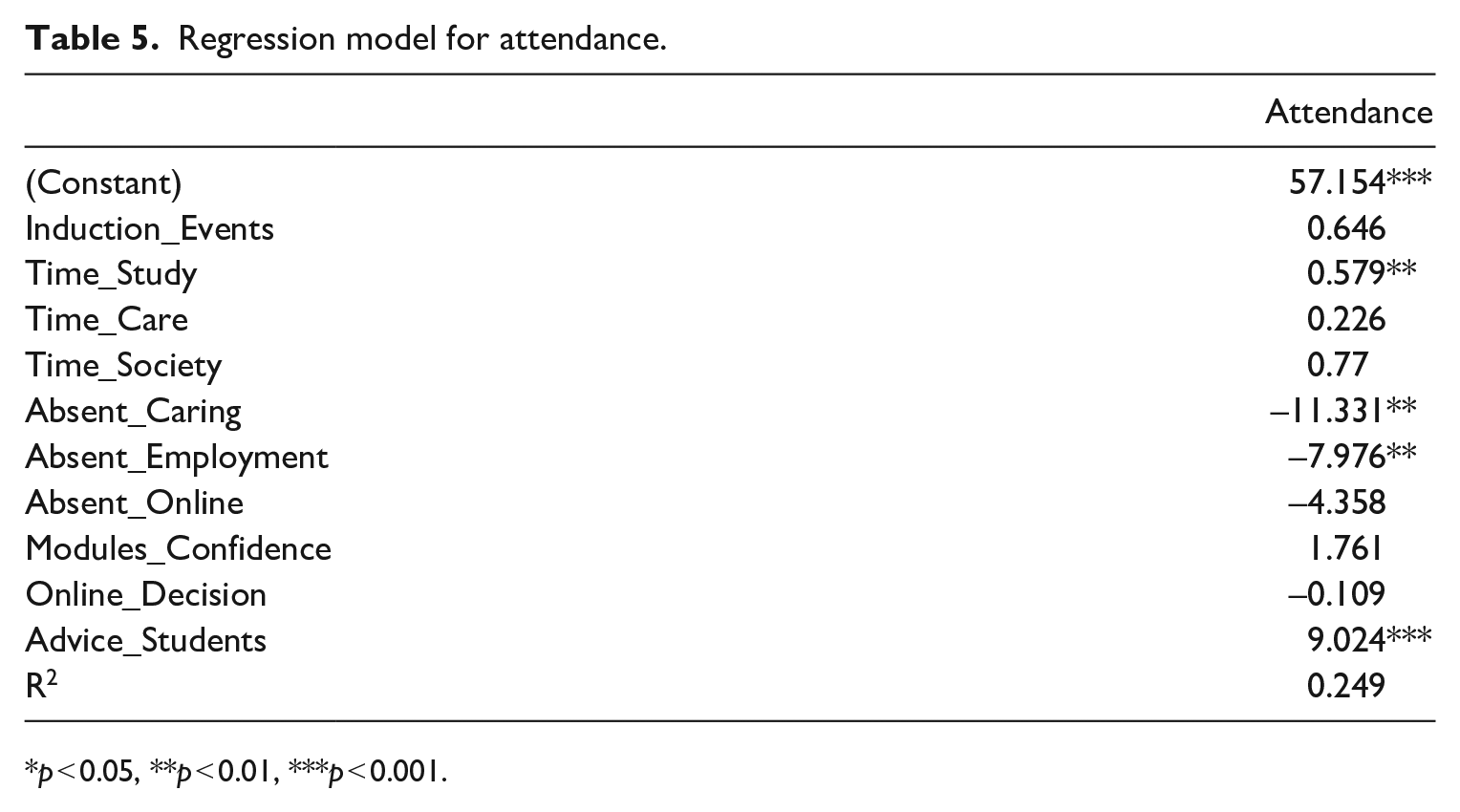

All variables

Attendance is consistently associated with outcomes among survey respondents. Understanding the drivers of attendance is therefore crucial. Table 5 uses the variables identified as possible predictors of engagement in Tables 2–4 to construct a more focused model. It shows that, controlling for the other variables, respondents who reported spending more time studying and having sought advice from their fellow students had significantly higher attendance rates, while those who reported ever having missed a taught session due to caring or employment responsibilities had significantly lower attendance rates.

Table 5. Regression model for attendance.

*p < 0.05, **p < 0.01, ***p < 0.001.
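For readers less familiar with this kind of model, the following is a minimal sketch of how an attendance regression with the three predictors named above might be specified. It is not the code, software, or data used in this study: the records are synthetic, the variable names are hypothetical, and the coefficients in the generating rule are invented purely so that the fit can be checked by eye. The `ols` helper is an illustrative pure-Python implementation of ordinary least squares.

```python
# Illustrative only: synthetic records, NOT the study's survey data.
# Variable names and the generating coefficients below are hypothetical.

def ols(rows, targets):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    X = [[1.0] + list(r) for r in rows]  # prepend an intercept column
    k = len(X[0])
    # Build the augmented matrix [X'X | X'y].
    A = [[sum(x[i] * x[j] for x in X) for j in range(k)]
         + [sum(x[i] * y for x, y in zip(X, targets))]
         for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        A[col] = [v / A[col][col] for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]

# Each row: (weekly study hours,
#            sought advice from classmates: 0/1,
#            ever missed a session due to caring or employment: 0/1)
predictors = [
    (5, 0, 0), (5, 1, 0), (5, 0, 1), (5, 1, 1),
    (10, 0, 0), (10, 1, 0), (10, 0, 1), (10, 1, 1),
    (15, 0, 0), (15, 1, 1),
]

# Attendance (%) generated from an invented, noise-free rule.
attendance = [50 + 2 * hours + 6 * peer - 4 * missed
              for hours, peer, missed in predictors]

intercept, b_hours, b_peer, b_missed = ols(predictors, attendance)
print(f"study hours: {b_hours:.2f}")
print(f"sought peer advice: {b_peer:.2f}")
print(f"missed due to commitments: {b_missed:.2f}")
```

Each coefficient is read as the difference in attendance associated with that predictor while the other two are held constant, which is the sense in which the text speaks of 'controlling for the other variables'. Real survey data would include noise, so a dedicated statistics package reporting standard errors and p-values would be the natural choice in practice.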

This model offers the clearest indication so far of what factors really affect student engagement, reinforcing the points made in the discussion above that what matters most is not the total volume of outside commitments students face, but the inflexibility of those commitments relative to their class timetable. It also points to the importance of feeling part of a community with other students – all else being equal, survey respondents who reported that they had sought advice from their classmates in the past had attendance rates nearly 10 percentage points higher than those who said that they had not. H1 is, in other words, the most significant of the three, followed by H3. Time commitments matter most, followed by feeling able to access advice and support (from fellow students). Students’ experiences of teaching and learning do still matter, as the discussion of H2 shows. But, with the exception of the fact that classroom experiences can help students feel more comfortable seeking support from their peers, the most important things that SPIR can do to improve engagement involve supporting students to manage the competing demands on their time and to develop positive peer networks.

Conclusion

I began this study in the hope not only of identifying what was driving differential engagement rates among SPIR students but also of gauging the distribution of different assets and understanding how they had an impact. In this sense, I was encouraged by the results. A significant proportion of SPIR students took time out from their Christmas vacation to complete the survey. Their answers offered real insights into the resources they can draw upon in engaging with their studies and the challenges that they face, although there are limits to how far we can generalise from the survey results given differential response rates among different groups. They also showed an encouraging level of interest in, and open-mindedness about, the different ways politics and international relations students might effectively learn. I have read caricatures of undergraduates looking to take the easy route to a qualification, demanding a certificate in return for the tuition fees they pay. I did not see evidence of such thinking here.

My key finding was that the main driver of engagement among survey respondents and focus group participants was not how much time they were able to spare from their outside commitments to spend on their degree, but rather their ability to manage direct clashes between timetabled teaching and their other responsibilities. While there was some evidence that having more time available enabled respondents to spend more time on independent study tasks, this did not appear as significant as the question of scheduling conflicts. Crucially, given the presence of attainment gaps among students of different demographic backgrounds, it emerged from the qualitative evidence generated by both the survey and the focus groups that those students who felt most prepared to manage their own time commitments, and those who felt confident asking for accommodations in the event of schedule conflicts, were largely able to mitigate those conflicts. In other words, we would expect students whose pre-university experiences – educational, familial, or social – had best prepared them for the responsibility associated with undergraduate study to be better able to overcome potential conflicts without detriment to their engagement or attainment. Their classmates, by contrast, whose prior experiences may not have equipped them with the time management skills required to balance competing priorities, or with the confidence to seek advice and support when unable to resolve a problem on their own, were more likely simply to miss out on learning opportunities, with negative consequences for their attainment overall. This is a significant finding, speaking as it does to the otherwise invisible consequences of a diverse student body’s wide range of prior knowledge.

It is also significant because it points to plausible interventions that might substantially improve the situation. Several stand out. We clearly need to pay much more attention to the process of transitioning to university study, recognising that students whose pre-university qualifications look similar on paper will have had very different experiences in obtaining them. Both the survey respondents and the focus group participants strongly urged that we increase our (paid) employment of current students in our recruitment, admission, and induction activities, pointing out, for example, that only other students can effectively convey the message that staff really are accessible. We also need to think about our teaching. Our students seem to know good teaching when they see it and to have logical reasons for wanting a certain amount of effort on our part to bridge the gap between what they have done in the past and what we expect them to do now. Simply understanding – as I hope this study helps us do – the challenges they face and the resources they need to succeed should make us better able to engage them. We need to work on community building, recognising that good classroom performance reflects good underlying social ties. And we need to look at ways of helping our students to manage their time effectively, but also to respond flexibly when they encounter immovable conflicts. We should, finally, consider whether we can be more pro-active in the ways we offer support, perhaps making greater and more timely use of Learner Engagement Analytics to identify students beginning to struggle.

Although my results pertain initially to SPIR, there are reasons to believe that similar patterns might recur elsewhere. To begin with, the resources I identified as key drivers of student engagement are likely to be replicated for students on other degree programmes and at other institutions. All students need time to study. Some will experience greater and more inflexible demands on their time beyond the classroom than others. Some will be better prepared for university, in terms of their ability to manage their time, their understanding of the nature of independent study at university level, and their confidence in engaging with staff. These patterns will follow other, predictable, patterns; students from lower participation backgrounds and schools, or less privileged socio-economic circumstances, are less likely to possess the resources needed to engage effectively than their better-prepared, less schedule-conflicted classmates. Different departmental practices will also have an impact; others may well be ahead of us in the areas I pick out. So while the precise distributions of the drivers I identified will inevitably vary between institutions, I still think my qualitative findings in particular are relevant to other schools of politics and international relations within the UK higher education sector, and potentially to other subject areas too.

Research Data

sj-xlsx-1-pol-10.1177_02633957221086879 for Identifying and understanding the drivers of student engagement in a school of politics and international relations by James Strong in Politics

Acknowledgements

Thanks to Javier Sajuria and Pedro Rubio Teres for advice and support, to the Westfield Fund and Queen Mary Academy for funding, to the editorial team and two anonymous reviewers for constructive and helpful feedback, and to the students of the School of Politics and International Relations for participating seriously and enthusiastically in the research reported here.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by Westfield Fund and Queen Mary Academy.
