Abstract

I offer some further reflections on the relationship between student employment and classroom engagement, in response to Hanretty’s discussion of my original article on the topic. First, I note that the data we’d ideally need to study this relationship properly doesn’t exist. Second, I suggest that Hanretty and I are pursuing subtly differing goals – he seeks the best estimate of a statistical relationship, while I am trying to make practical policy recommendations at the level of an academic department. Third, I gently push back against Hanretty’s injunction against the use of a post-treatment variable in my original paper, noting that there are good theoretical reasons for thinking my original argument – that not all hours of employment affect attendance equally – should work. Finally, I conclude that while it is true that students who work more hours are less likely to engage well with their studies, this relationship is conditional in part on factors that academic departments might realistically be able to influence.

I’d like to start by thanking Hanretty (2022) for engaging seriously with my original piece (Strong, 2022). As a former editor of Politics, I’m aware that pedagogic work doesn’t always attract the kind of sustained, thoughtful response that he offers here. I’m grateful both for the work he has put in and for the way he went about putting together his response, which was eminently constructive and collegial. I’m also grateful to the current editors for the opportunity to offer a few further reflections.

I don’t want to get too far into the weeds in my response, not least because I am happy to concede that Hanretty’s quantitative skills are superior to mine. I do however think there are some useful further reflections that I can offer.

My first reflection is that I think both Hanretty and I agree that the data we’d ideally need to make sense of the impact of outside commitments on student engagement does not currently exist. In an ideal world, every time a student missed a timetabled teaching session, they would log the reason for their absence. That would enable us to track precisely how many classroom hours each student missed for each possible reason (employment, caring, illness, etc.). There would be considerable practical and potential ethical issues associated with setting up a system like this, but it would be possible and it would facilitate the sort of analysis Hanretty and I both attempt to conduct. In its absence, we are inevitably left to rely on imperfect measures.

To an extent, this is inevitable in pedagogic research. As I found in conducting my original study, it is often possible to access data on one’s own students that (under UK data protection law) can lawfully be used within an institution to improve the services we offer, but cannot be published externally without specific consent. So it’s not uncommon to wind up hamstrung in terms of what you can use in a journal article compared to what you can use in-house. It’s also difficult to run cross-institutional studies, since – in the United Kingdom at least – we are in theory supposed to be competitors, though I suspect many academics would resist that framing. Few politics departments are large enough to support large-n studies on their own. So the data are never going to be ideal. And we do look quite different – Queen Mary University of London (QMUL) is an outlier among Russell Group universities for the profile of its students, who are much more likely to hail from an ethnic minority or to have previously received free school meals than their peers at other Russell Group institutions – 16% of QMUL students are in this category against a Russell Group average of around 2% (Britton et al., 2021).

My second reflection is that I think Hanretty and I are talking somewhat at cross-purposes, or at least that our goals are subtly but critically different. His goal is to derive the best possible estimate of the effect of a treatment variable (hours in employment) on an outcome variable (seminar attendance). In pursuit of this goal, he is willing to disregard additional data that might undermine the rigour of his analysis, a perfectly correct approach for a social scientist studying an abstract relationship. My goal, however, is to identify specific policy actions that I can take within my academic department in order to improve student engagement. It’s not that I don’t care about getting the best possible estimate of the relationship between employment and attendance, but rather that I need to maximise the amount of behaviour I observe in order to achieve my goals.

Hanretty essentially winds up recommending that academics should tell their students that if they take up paid employment, their studies will suffer. That is a reasonable way of communicating the most rigorous possible interpretation of the available data. But it isn’t terribly helpful as a pedagogic approach. In effect, it amounts to telling poorer students (and, in the case of my institution, that is a significant proportion of the whole student body) that their academic chances are inevitably limited by their poverty. I do not see how that would improve their engagement, especially in light of my finding that there is a strong relationship between student confidence and engagement. As I said in my original piece, if the problem is the wider socio-economic system in which we operate, the solution cannot lie at the level of academic departmental policies. But that doesn’t mean there is nothing we can do at that level to mitigate wider systemic pressures, at least in part. In other words, I’d rather have a less rigorous analysis that offers actionable policy suggestions than a more rigorous analysis that offers nothing actionable. This, again, is the difference, as I see it, between pedagogic research aimed at shaping teaching practice and social science in general.

My third reflection is a little more of a direct response. I’m simply not persuaded that the use of a post-treatment variable for this sort of analysis is quite the cardinal sin that Hanretty implies. His illustration of why post-treatment variables are bad actually works quite well from my perspective, too. He argues, in effect, that controlling for rank in a gender pay gap analysis can lead you to obscure the true relationship between gender and pay. But that’s only true if you’re asking the question in the abstract – ‘is there an association between gender and pay in this organisation?’. If you’re asking a practical question – ‘does this organisation pay people differently by gender?’ – it’s not including rank that will bias your results, but excluding it. The key lies in ensuring you interpret your results correctly. If you find a gender pay gap in your organisation that disappears once you control for rank, it doesn’t mean you don’t have a gender pay gap. It means you have a gender pay gap that is driven by gender disparities in rank, not by gender disparities in pay. In other words, you aren’t paying people differently by gender, you are hiring and promoting people differently by gender, and possibly also grading roles traditionally performed by people of one gender (e.g. cleaning) at a lower rank than comparable roles traditionally performed by people of another gender (e.g. portering). It’s not that you don’t have a problem – you do – but your pay policies aren’t the cause, meaning that changing your pay policies is probably not the best solution. The use of a post-treatment variable may have made your analysis of the relationship between gender and pay less rigorous, but it has also revealed that this relationship is being confounded by the relationship between gender and rank.
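The gender pay gap illustration can be made concrete with a toy calculation. The sketch below assumes an invented workforce in which pay is set purely by rank and women are under-represented at the senior rank. The staff counts, salaries and the `mean_pay` helper are all hypothetical and describe no real organisation.

```python
from statistics import mean

# Hypothetical organisation: pay depends only on rank (invented figures).
PAY_BY_RANK = {"junior": 30_000, "senior": 50_000}

# Invented staff list of (gender, rank) pairs; women are concentrated
# in the junior rank, men in the senior rank.
staff = (
    [("F", "junior")] * 8 + [("F", "senior")] * 2
    + [("M", "junior")] * 4 + [("M", "senior")] * 6
)


def mean_pay(gender, rank=None):
    """Mean pay for one gender, optionally restricted to a single rank."""
    return mean(
        PAY_BY_RANK[r]
        for g, r in staff
        if g == gender and (rank is None or r == rank)
    )


# Gap without conditioning on rank.
raw_gap = mean_pay("M") - mean_pay("F")

# Gap once rank is held fixed (the "post-treatment" comparison).
gap_within_rank = {
    rank: mean_pay("M", rank) - mean_pay("F", rank) for rank in PAY_BY_RANK
}

print("Raw gap:", raw_gap)
print("Gap within each rank:", gap_within_rank)
```

In this invented case the raw gap is 8,000 while the gap within each rank is zero. That is the pattern described above: the disparity sits in who reaches which rank, not in what each rank is paid.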

My question is not ‘is there a relationship between hours spent in paid employment and attendance?’, it is ‘what can I do to improve student engagement in my school?’. Through my survey, I found that students who reported similar levels of paid employment nevertheless had quite varied attendance records. In particular, those who indicated they had ever had to miss class due to a clash with paid employment – a proxy for inflexible employment conditions and/or a lack of the sort of social or economic capital that might enable someone to mitigate or avoid such conflicts – had significantly lower attendance records than classmates who indicated they had never had to miss class due to a clash. Having discussed this finding in focus groups, and identified variation in how entitled students felt to ask for accommodations, either from managers at work or from academic staff at the university, I concluded that we might be able to mitigate some of the negative effect of paid employment on attendance by eliminating the need for students to ask for special treatment in the event of an unexpected clash. This is not an answer to Hanretty’s question, but it is an answer to my question.

I also think it holds up better than his analysis implies it might. He effectively assumes that there must be a direct relationship between hours of paid employment and attendance; his argument is that we don’t learn anything from asking students if they have ever missed a class due to employment, because by definition only those who undertake employment will answer ‘yes’, and all those who answer ‘yes’ have a less-than-perfect attendance record. But, in principle, there is no reason why working more hours must inevitably lead to students missing classes. Nor is there, in fact, a direct relationship between number of hours worked and attendance in the actual underlying data.

To explain my argument in principle, let me invent two hypothetical students, Student A and Student B. Both undertake 15 hours of paid employment per week in addition to their studies. According to Hanretty’s analysis, both will be equally likely to have lower attendance, and therefore attainment, than classmates who do not spend any time on paid employment, and both should be advised to cut back on paid work. But what if Student A has a job in the Students’ Union Bar, meaning they only work evenings and have an employer who understands and supports their need to prioritise their studies, while Student B works in a distribution centre for a large online retailer, where they are expected to accept whatever shifts are assigned to them and where their shift patterns fluctuate on a weekly basis? Obviously, we would expect Student B to miss a larger number of taught classes due to their employment than we would Student A. What, in turn, if Student B gets chatting with Student A after class, and winds up quitting their retail job to take up shifts on campus instead? Obviously we would expect their attendance to improve.

That is in fact what we see in my data: students who work longer hours have poorer attendance records, on average. The relationship is, however, conditional. As the qualitative evidence generated through the focus group stage of the project made clear, the effect is heavily conditioned by two related factors – job type and the student’s individual social capital. Those students who were better able to control their working hours, or to adjust their study hours, had better attendance records than classmates who undertook the same number of paid hours but did not have that level of control. This, I argue, is just as intuitive a finding as the big picture point that more hours lead to worse attendance: of course job type matters, and of course social capital has an effect.

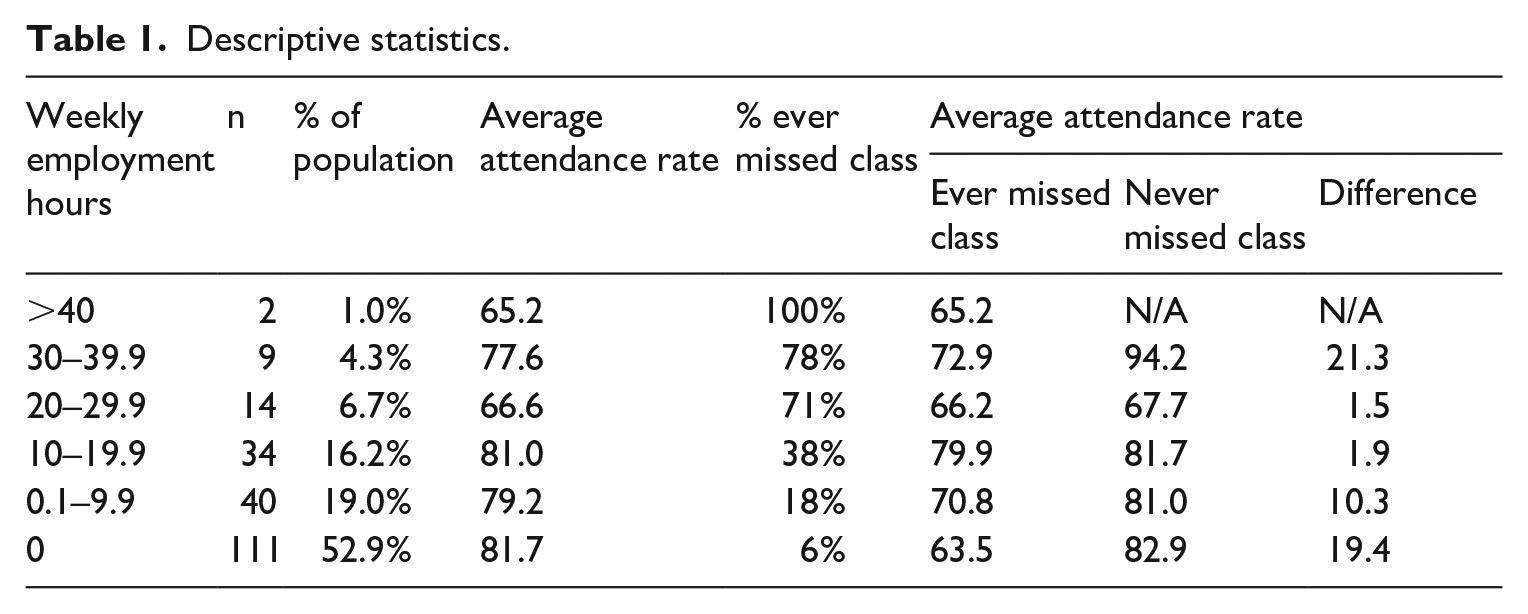

We can further illustrate the point by looking at some simple descriptive statistics. Table 1 does just that. It shows a number of interesting things. First, it shows that the majority of survey respondents did not undertake any paid term-time employment at the point of survey completion, though some of this group had done so at some point during their studies. Second, it shows that the relationship between hours spent in paid employment and attendance is conditioned by other factors. Respondents who undertook 10–20 hours per week of paid employment had attendance rates that were higher than those who worked up to 10 hours and very similar to those of respondents who did not work at all. As the hypothetical illustration above implies, there is no reason why more hours of employment must automatically equate to lower attendance, at least at the kinds of levels we are talking about (eventually there would be too few hours in the week, but we are a long way from that point). So while we would expect an association between hours of employment and Absent_Employment, we would also expect to see variation between individuals. Third, we do indeed see such variation. Across each category, respondents who reported spending similar numbers of hours in paid employment per week, but who reported having ever had to miss a class due to a clash with employment responsibilities, had significantly lower attendance rates than those who did not. This is especially pronounced in the 30–40 hours bracket, and in the two largest brackets – up to 10 hours and 0 hours per week.

Table 1. Descriptive statistics.
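For readers who want to produce a comparable breakdown from their own survey data, the following is a minimal sketch of the grouping behind a table of this kind. The records, bracket labels and the `attendance_table` helper are hypothetical, and the attendance figures are invented rather than taken from Table 1. Only the variable name `absent_employment` echoes the original analysis.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey records (NOT the data behind Table 1):
# (weekly hours bracket, absent_employment flag, attendance rate in %).
# absent_employment is True if the respondent ever missed a class
# because of a clash with paid employment.
responses = [
    ("0", False, 80), ("0", True, 60),
    ("up to 10", False, 78), ("up to 10", False, 82), ("up to 10", True, 55),
    ("10-20", False, 81), ("10-20", True, 65), ("10-20", True, 61),
]


def attendance_table(rows):
    """Mean attendance for each (hours bracket, absent_employment) cell."""
    cells = defaultdict(list)
    for bracket, absent_employment, attendance in rows:
        cells[(bracket, absent_employment)].append(attendance)
    return {cell: mean(values) for cell, values in cells.items()}


table = attendance_table(responses)
for (bracket, absent_employment), rate in sorted(table.items()):
    print(f"{bracket:>8} hours | clash={absent_employment!s:<5} | {rate}%")
```

Holding the hours bracket fixed and comparing the two `absent_employment` cells is the comparison the third point above relies on.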

My final reflection is that I think Hanretty is right, ultimately, to argue that students who spend more time in paid employment are likely to have lower engagement. Even if they are able to avoid conflicts, the fact they are expending energy on paid work limits how much they can put into their studies. If I implied in my original piece that that was not the case, I misstated my findings and take full responsibility. But I still think, both in terms of what the data say and what they imply in policy terms, that I drew the right practical conclusions in the original piece. Students who have experienced irresolvable conflicts between paid employment and timetabled teaching have significantly lower attendance records than students who undertake similar levels of paid employment but who have not experienced such conflicts. That finding is entirely in keeping with one of the broader lessons in my qualitative data: that my students vary widely in their ability to navigate higher education as a function of their past experiences and accumulated social and economic capital. While we do see differences in attendance levels between students who have not experienced conflicts depending on how many hours of paid employment they undertake, these differences are minimal in the largest groups – the 88% of respondents who work for up to 20 hours per week. In other words, the relationship between hours in employment and attendance is conditional – for the majority of students, it is not the hours in employment that lower attendance, but specific clashes between employment shifts and timetabled classes. That makes intuitive sense – why should a Sunday afternoon shift in a shop prevent a student attending a Wednesday morning seminar? It still, therefore, follows that trying to help mitigate such clashes is a sensible policy. Crucially, it is also something that a single academic department can practically do.

Footnotes

Funding

The author received financial support from the Queen Mary University of London Westfield Fund and the Queen Mary Academy for the research underpinning this article.