Abstract

Divergent thinking (DT) tests are sometimes used to select students for gifted programs. Studies on these tests, mostly conducted on non-gifted students, suggest that performance is influenced by the type of instruction given (standard vs. hybrid “be fluent AND original”) and time-on-task. The current study aimed to examine the effect of instructions and time-on-task on divergent thinking performance in gifted and non-gifted students in a 2 [gifted versus non-gifted] × 2 [standard versus hybrid instructions] design. The results showed that gifted students outperformed non-gifted students in fluency, while no significant difference was found between the two groups in originality. Creativity instructions improved both originality and fluency scores in verbal but not figural tests. As for time-on-task, gifted students took more time when completing DT tests as well as when they were given explicit instructions to “be creative.” Implications for gifted identification are discussed.

Introduction

The gifted education field has evolved remarkably in many ways, including identification (Johnsen, 2018), services (Johnsen, 2012), program evaluation (Neumeister & Burney, 2019), and perhaps most importantly, the conception of giftedness (Sternberg & Ambrose, 2021). While high intelligence quotient was initially considered the sole indicator of giftedness (Sternberg et al., 2011), subsequent theories challenged that notion. There is a large consensus on the idea that giftedness is a multidimensional construct that includes both cognitive and non-cognitive aspects (Abdulla Alabbasi, Ayoub, & Ziegler, 2021; AlSaleh et al., 2021; Gagné, 2020). In the 1960s, Ellis Paul Torrance observed that hidden talent and unrecognized potential are often missed in gifted programs owing to common unfavorable characteristics (Torrance, 1995). Modern theories have gone beyond Terman’s (1925) initial conception, and most include creativity as a core component (Gagné, 2020; Preckel et al., 2020; Renzulli, 1986; Sternberg, 2005).

Divergent thinking (DT) is the ability to think in many different directions (Taylor, 1988). DT tests are often used to assess students’ creative potential as part of the gifted identification process. The most widely used is the Torrance Tests of Creative Thinking (TTCT; Abdulla Alabbasi et al., 2022b). Performance on DT tests is influenced by internal and external factors, two of which have been extensively studied using non-gifted samples: the role of the explicit instruction to “be creative” and the amount of time-on-task (TOT). These two factors are the focus of this study.

Role of explicit instructions in DT tests

Explicit instructions such as “be fluent,” “be flexible,” “be original,” and “be fluent AND original” (hybrid) when taking a DT test have been studied extensively (Harrington, 1975; Reiter-Palmon et al., 2019; Runco, 1986; Torrance, 1966; Wallach & Kogan, 1965). Explicit instructions, one aspect of test administration procedures, convey the respondents’ tasks and responsibilities. Through these explicit instructions, the process of testing and outcome quality may vary (Reiter-Palmon et al., 2019). This is most likely with DT tasks because they are open-ended, and the nature of responses may in part be owing to the instructions provided. The alternative is to administer DT tests without explicitly directing examinees to “be creative” or generate original ideas because it can be useful to understand what individuals do spontaneously (Acar et al., 2020). Thus, when non-explicit (standard) instructions are given, the results may be more indicative of DT as it occurs in the natural environment (natural performance), while explicit instructions may be more indicative of examinees’ potential and what is possible (maximal performance) (Klehe & Anderson, 2007). Such potential is of particular interest when DT tests are used to identify students for gifted programs. Additionally, explicit instructions tend to lead to highly reliable performances (Harrington, 1975). Yet another advantage of explicit instructions is that they are likely to minimize individual differences in task perception. Examinees’ performances then are more indicative of actual ability.

Some studies (Evans & Forbach, 1983; Forthmann et al., 2016; Harrington, 1975) have investigated the effect of explicit instructions to “be creative,” while others direct examinees to be original (i.e., generate rare and unusual ideas) or fluent (i.e., generate many ideas, without regard to quality) (Kaya & Acar, 2019; Runco et al., 2005). Some studies found that explicit instructions to “be creative” significantly enhanced originality but not fluency and flexibility (Hong et al., 2016; Runco, 1986; Said-Metwaly et al., 2020). Other researchers reported that the effects depended on which DT test was administered (Chand & Runco, 1993; Cummings et al., 1975). To resolve these inconsistencies, two meta-analyses were conducted on the effect of explicit instructions on DT performance (Acar et al., 2020; Said-Metwaly et al., 2020). Acar et al.’s (2020) main finding was that explicit instructions improved DT performance when both “be original” and “produce as many ideas as you can” were emphasized (i.e., hybrid). When only instructions to be original were provided, DT performance was not affected. Said-Metwaly et al. (2020) concluded that explicit instructions only enhance originality.

With one exception (Runco, 1986), studies examining the effect of instructions on DT performance have not considered giftedness status (i.e., gifted vs. non-gifted). Gifted students’ reactions to explicit instructions may differ from those of non-gifted students because of their superior ability to comprehend and process the instructions (Devall, 1981; Guignard et al., 2016), higher perceptual reasoning (Guignard et al., 2016), and stronger self-regulation skills (Bouffard-Bouchard et al., 1993; Risemberg & Zimmerman, 1992; Shore & Kanevsky, 1993; Tortop, 2015), which allow them to adapt to the task requirements. These factors may explain Runco’s (1986) finding that gifted students’ originality scores increased when they received explicit instructions, although the same instructions suppressed their fluency and flexibility. Originality instructions may have led participants to prioritize quality (originality) over quantity (fluency). Lower performance on flexibility may reflect the strong confounding of flexibility by fluency (Acar et al., 2022; Weiss & Wilhelm, 2022). Since Runco (1986), empirical research has not revisited the role of explicit instructions for gifted students. Therefore, our first objective was to compare the DT performances of gifted and non-gifted students who were given two kinds of instructions: (a) hybrid, which emphasizes fluency and originality, and (b) standard. Unlike Runco (1986), we used a non-Western sample in the present study, considering potential variation by culture.

Effect of TOT on DT

TOT is another factor that can influence students’ performances on DT tests. Christensen et al. (1957) administered several DT tests such as Plot Titles, Brick Uses, Unusual Uses, and Figure Concept, observing an increase in uncommon responses and remoteness of associations when examinees were given more time. However, it was Wallach and Kogan’s seminal work (1965) that most influenced this line of research, advocating that students generate more original results under untimed (game-like) conditions. Wallach and Kogan (1965) concluded that when DT tests were administered in a game-like environment, DT and intelligence quotient were more independent, enhancing the discriminant validity of the former. Subsequent studies compared (a) timed versus untimed conditions and (b) different time intervals (e.g., 2.5 versus 5 versus 7.5 minutes). While most studies that tested timed versus untimed conditions concluded that the latter resulted in higher DT scores (Akinboye, 1982; Cropley, 1972; Foos & Boone, 2008; Torrance, 1969; Torrance & Ball, 1978; Vernon, 1971), those comparing different time intervals reported inconsistent findings. For instance, Plucker et al. (2006) reported that TOT was significantly related to DT originality and fluency, whereas Hattie (1980) concluded that test-like conditions were optimal. However, Sajjadi-Bafghi and Khatena (1985) and Johns et al. (2000) reported that TOT had no significant effect on DT performance. A further group of researchers reported mixed results (Beaty & Silvia, 2012; Khatena, 1972, 1973; Morse et al., 2001). To resolve this contradiction, Paek et al. (2021) and Said-Metwaly et al. (2020) conducted two meta-analyses and concluded that (a) there was a positive relationship between DT and TOT and (b) more time had a positive effect on originality but not on fluency or flexibility.

Regarding the nature of the association, Plucker et al. (2006) found no evidence for a curvilinear relationship between DT and TOT. However, Paek et al. (2021) found evidence of a J-shaped relationship. Most studies on the effect of TOT on DT have been conducted with the general population. Given that most gifted programs require students to take a DT test and considering that previous studies show that TOT influences DT performance, our third objective was to examine the effect of TOT on DT performance among gifted students (compared with their non-gifted peers). Although more time is often better for more and higher-quality responses in the general population, how TOT impacts the performance of gifted and non-gifted students is yet to be investigated. Gifted students’ use of time on DT tasks may be different because of their shorter reaction time to the task instructions (Duan et al., 2013) and longer task planning time (Davidson & Sternberg, 1984; Shore & Lazar, 1996). According to Case (1985), faster processing helps problem solving as fast processing of simple tasks allows for more time for complex tasks. This applies to DT because the ability to generate early ideas quickly leaves more time for generating ideas based on cognitive strategy use, which tends to happen later in the ideation process (Gilhooly et al., 2007). Thus, high-speed processing does not necessarily shorten the process for gifted students as they can use the time more efficiently and effectively when a single solution is not expected, as with DT tasks. In fact, this may explain the non-linear relationship between DT and TOT because DT may benefit from longer TOT; this benefit may be a consequence of fast cognitive processing that allows both the quick production of ideas and greater space for strategy use and higher-order thinking skills. The fourth objective, therefore, was to test the nature of the relationship between DT and TOT in gifted and non-gifted samples.

Research questions

We address the following research questions:

1. Does DT performance (fluency and originality) vary between gifted and non-gifted students based on the type of instructions (“be creative” versus standard instruction)?
2. Does DT performance (fluency and originality) vary between gifted and non-gifted students based on TOT?
3. Is there a significant interaction effect between the type of instructions and TOT on DT performance?
4. Is there a curvilinear relationship between DT performance and TOT? That is, does the relationship between DT performance and TOT vary between the low and high TOT groups?

Methods

Participants

The sample consisted of 120 female high school students (Mage = 16.02; SD = .83) divided into four equal groups: (a) gifted students who received instructions to be fluent and original, and to produce as many unusual ideas as possible; (b) gifted students who received standard instructions; (c) non-gifted students who received instructions to be fluent and original, and to produce as many unusual ideas as possible; and (d) non-gifted students who received standard instructions. Students were selected randomly from the Eastern region of Saudi Arabia. Because the second author, who collected the data, is a woman, we only had access to girls’ schools; boys and girls are segregated in both government schools and public higher education institutes in Saudi Arabia (Baki, 2004; El-Sanabary, 1994).

The National Center for Assessment in Saudi Arabia has developed an identification system for students from the 3rd to 9th grades. The identification system begins with teachers or students nominating those who have promising talents. The second stage includes administering the following assessments: (a) the Multiple Mental Process Scale, which assesses mental flexibility, mathematical and spatial reasoning, scientific and mechanical reasoning, and linguistic reasoning and reading comprehension; (b) the Creativity Scale, which assesses components of creative thinking, including fluency, flexibility, originality, sensitivity to problems, and perception of details; and (c) the Multiple Personality Traits Scale, which assesses a variety of non-cognitive abilities. After completing the abovementioned assessments, those who score at or above the 95th percentile in at least two assessments (and above the 90th percentile in the third) are admitted to gifted programs. All the assessments were developed and normed for use in Saudi Arabia.

Instruments

Alternative uses test (AUT)

The AUT (Wallach & Kogan, 1965) assesses verbal fluency and originality. Wallach and Kogan (1965, p. 31) listed eight items for the AUT, each of which began with the following statement: “Tell me all the different ways you could use …” The three items selected for the current study are alternative uses for: (a) a spoon, (b) a wheel, and (c) a toothbrush. Fluency was defined as a participant’s total number of responses related to each item (i.e., stimulus). Participants received 1 point for fluency for each new (unrepeated) response related to the stimulus. Originality was scored based on statistical infrequency (3% cutoff criterion). A score of 1 was given for each original idea.
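The scoring rule described above can be sketched in code. This is a minimal illustration rather than the scoring procedure actually used in the study. It assumes that responses have already been cleaned and normalized to comparable strings, and that the 3% cutoff means a response counts as original when fewer than 3% of the participants in the sample gave it for that item. The function name `score_item`, the data layout, and the demo responses are hypothetical.

```python
from collections import Counter


def score_item(responses_by_participant, cutoff=0.03):
    """Score one DT item for fluency and originality.

    responses_by_participant maps a participant id to a list of
    (already normalized) response strings for a single stimulus.
    Returns a dict mapping participant id -> (fluency, originality).
    """
    n = len(responses_by_participant)
    # Drop repeats within a participant: only new (unrepeated) ideas score.
    unique = {
        pid: list(dict.fromkeys(resps))
        for pid, resps in responses_by_participant.items()
    }
    # Count how many participants gave each response.
    counts = Counter(r for resps in unique.values() for r in resps)
    scores = {}
    for pid, resps in unique.items():
        fluency = len(resps)
        # Assumption: "original" means given by fewer than `cutoff` of the sample.
        originality = sum(1 for r in resps if counts[r] / n < cutoff)
        scores[pid] = (fluency, originality)
    return scores


# Made-up data: 40 participants, everyone gives the same common use for a
# spoon, and participant 0 adds one idea nobody else produced (and repeats one).
demo = {pid: ["eat soup"] for pid in range(40)}
demo[0] = ["eat soup", "catapult", "eat soup"]
scores = score_item(demo)
print(scores[0])  # (2, 1)
print(scores[1])  # (1, 0)
```

Because originality is counted only over responses that also earn a fluency point, the two scores are mechanically linked, which is one reason fluency is later used as a covariate in the originality analyses.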

Figural DT test

A figural DT test from the Runco Creativity Assessment Battery (1986) was used to assess figural fluency and originality. The test consisted of three figural stimuli like those in the Line Meaning Test (Wallach & Kogan, 1965). The participants were asked to generate interpretations relevant to each stimulus. The same method for scoring fluency and originality as the AUT test was applied.

Torrance tests of creative thinking (TTCT) - verbal form

The third instrument was the verbal form of the TTCT, consisting of six activities: (a) asking, (b) guessing uses, (c) guessing consequences, (d) product improvement, (e) unusual uses, and (f) “just suppose.” To ensure consistency with the previous two instruments, only fluency and originality were scored. As no norms were developed in Saudi Arabia for this test, the same method for scoring fluency and originality as the AUT and figural DT test was applied.

Time-on-task

A special mobile phone application developed for this study calculated time in seconds. All tasks were uploaded to the application and participants used iPads to complete the tests. The mean time for completing the three DT tests was 33.35 minutes (SD = 14.9).

Explicit instructions

Participants were divided into four groups, and each was given one of four codes and a login ID for the app. The four groups were (a) gifted students given “be creative” instructions (n = 30), (b) gifted students given standard instructions (n = 30), (c) non-gifted students given “be creative” instructions (n = 30), and (d) non-gifted students given standard instructions (n = 30). The verbatim instructions to “be creative” in the AUT were as follows: Now, in this game, I am going to name an object — any kind of object, like a light bulb or the floor — and it will be your job to tell me many and unique ways that the object could be used. Any object can be used in a lot of different ways. For example, think about string. What are some of the ways you can think of that you might use string? Let’s begin now. And remember, think of all the different and original ways you could use the object that you will see on the next page.

The standard instructions for the AUT were as follows: “Tell me all the different ways you could use the items on the next pages.” The verbatim instructions to “be creative” for the figural DT test were as follows: In this game, I am going to show you three figures, and after you have looked at each one, I want you to tell me all the different and original things they make you think of. You can turn it any way you want. List as many things as you can that this figure might be or represent. This is not a test. Think of this as a game and have fun with it! The more ideas you list, the more original, the better.

The standard instructions for the figural DT test were as follows: “Tell me all the different things the following figures might represent.”

Finally, we used the verbatim instructions for the six TTCTs found in Torrance’s (2017) verbal response booklet. The standard instructions for each of the six tasks asked participants to: (a) write out questions related to the picture (Activity 1); (b) list possible causes for the depicted actions (Activity 2); (c) list possibilities for what might happen as a result of what was taking place in the picture (Activity 3); (d) look at the elephant toy and think of ways of changing it so that children would have more fun playing with it (Activity 4); (e) list unusual uses for cardboard boxes (Activity 5); and finally, (f) You will now be given an impossible situation—one that will probably never happen. Think of things that would happen because of it. Here is the situation: Just suppose clouds had strings attached to them that hang down to Earth. What would happen? (Activity 6).

Note that in the standard (no explicit instruction) condition, nothing like “list all possible ideas” and/or “be original and think of ideas that no one else would think of” was mentioned.

Procedure

Data collection was approved by the Ministry of Education and the Foundation, and all participants provided informed consent. Two schools were randomly selected from the Eastern region of Saudi Arabia. In Saudi Arabia, gifted and non-gifted students study in the same school. Gifted students have access to pull-out programs. The test was administered in the computer labs, where all participants were provided with an iPad to complete the tests. In both schools, the tests were administered in the second class/session for consistency. The second author was available to answer any questions. Once the participants completed the figural and verbal tasks, an Excel file was generated, which included demographic information, students’ responses to the DT tasks, and the time taken to complete the DT tasks. The time for completing the consent and demographic information pages was not calculated; thus, only the time for the DT tasks was calculated.

Results

Reliability

The internal consistency reliabilities (alpha coefficients) of fluency and originality for the three AUT tasks were .83 and .61, respectively, while those for the three figural tasks were .86 and .78, respectively. Finally, the reliabilities of fluency and originality in the six verbal TTCT tasks were .82 and .81, respectively. We formed composite scores for AUT-Fluency, AUT-Originality, TTCT-V-Fluency, TTCT-V-Originality, Figural DT-Fluency, and Figural DT-Originality and used them in the respective analyses.
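For readers who want to see how an alpha coefficient of this kind is obtained, the following sketch computes Cronbach's alpha from per-task scores using the standard formula, alpha = k / (k − 1) × (1 − sum of task variances / variance of the total score). It is an illustration only: the function name and the example scores are hypothetical, and nothing here reproduces the study's data.

```python
from statistics import variance


def cronbach_alpha(task_scores):
    """Cronbach's alpha for k tasks.

    task_scores is a list of k lists; each inner list holds one score per
    participant, with participants in the same order across tasks.
    """
    k = len(task_scores)
    if k < 2:
        raise ValueError("alpha needs at least two tasks")
    # Total score per participant across the k tasks.
    totals = [sum(per_person) for per_person in zip(*task_scores)]
    # Sum of the sample variances of the individual tasks.
    task_variance_sum = sum(variance(task) for task in task_scores)
    return k / (k - 1) * (1 - task_variance_sum / variance(totals))


# Hypothetical fluency scores for five participants on three tasks.
spoon = [4, 6, 3, 8, 5]
wheel = [5, 7, 2, 9, 5]
toothbrush = [3, 6, 4, 7, 6]
print(round(cronbach_alpha([spoon, wheel, toothbrush]), 2))
```

With only three tasks per test, alpha is sensitive to any single weakly correlated task, which may be worth keeping in mind when reading the lower AUT originality coefficient.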

DT performance by instructions and gifted status

The first research question focused on DT performance and how it might be influenced by the explicit instructions and gifted status. We analyzed fluency and originality scores separately.

Fluency scores

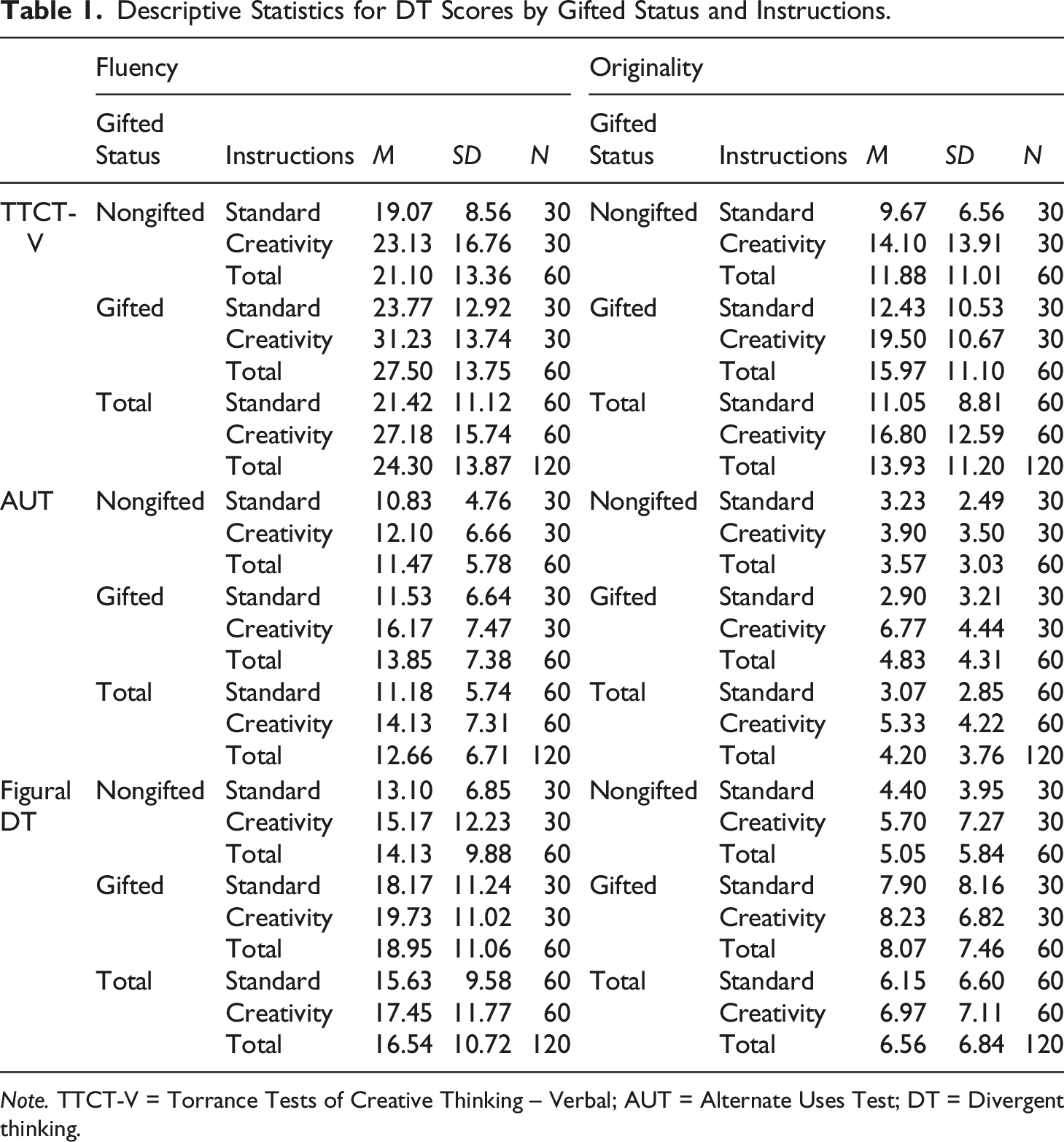

Descriptive Statistics for DT Scores by Gifted Status and Instructions.

Note. TTCT-V = Torrance Tests of Creative Thinking – Verbal; AUT = Alternate Uses Test; DT = Divergent thinking.

Both analyses above used fluency composite scores from verbal DT tests. Next, we ran the same analyses with the fluency scores from the figural-DT test. There was a significant main effect for Gifted Status, F (1, 116) = 6.27, p = .014, η2p = .051. The main effect for Instructions, F (1, 116) = 0.89, p = .347, η2p = .008, and the interaction effect for Gifted Status × Instructions, F (1, 116) = 0.02, p = .90, η2p = .000, were not statistically significant. Table 1 presents the descriptive statistics.

To summarize, gifted students had a higher fluency score for all DT tests, including verbal and figural. Explicit instructions to “be creative” enhanced the scores on verbal DT tests, but not on figural ones. Gifted students did not react to instructions differently from non-gifted students in terms of their fluency scores.

Originality scores

We repeated the same set of analyses for originality. Additionally, we added fluency as a covariate to control for confounding by fluency. Analyses with TTCT-V-Originality indicated significant main effects for Fluency, F (1, 115) = 1363.48, p < .001, η2p = .922, and Instructions, F (1, 115) = 5.24, p = .024, η2p = .044, whereas the main effect for Gifted Status, F (1, 115) = 2.37, p = .127, η2p = .020, and the interaction effect for Gifted Status × Instructions, F (1, 115) = 0.00, p = .999, η2p = .000, were not significant. Originality scores were higher with “be creative” instructions (Table 1). The same pattern was evident when AUT-Originality scores were used. The main effects for both Fluency, F (1, 115) = 427.40, p < .001, η2p = .788, and Instructions, F (1, 115) = 8.06, p = .005, η2p = .066, were significant; however, the main effect of Gifted Status was not significant, F (1, 115) = .18, p = .671, η2p = .002. Additionally, the Gifted Status × Instructions interaction effect was significant, F (1, 115) = 7.20, p = .008, η2p = .059. AUT-Originality scores were the highest when gifted students received hybrid instructions.

When Figural DT-Originality scores were used as the dependent variable, the main effect for Fluency, F (1, 115) = 1031.61, p < .001, η2p = .900, was significant, whereas Gifted Status, F (1, 115) = 0.06, p = .815, η2p = .000, Instructions F (1, 115) = 0.53, p = .469, η2p = .005, and the Gifted Status × Instructions interaction effect, F (1, 115) = 0.72, p = .400, η2p = .006, were not significant.
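The effect sizes reported in this section can be checked against the accompanying F statistics, because partial eta squared for a single effect follows from F and its degrees of freedom: η2p = (F × df_effect) / (F × df_effect + df_error). The short sketch below applies this identity to a few of the values reported above. The function name is ours, and small discrepancies in the third decimal are expected because the reported F values are themselves rounded.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its dfs."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (label, F, df_effect, df_error, reported partial eta squared)
reported = [
    ("Figural fluency, Gifted Status", 6.27, 1, 116, 0.051),
    ("TTCT-V originality, Fluency covariate", 1363.48, 1, 115, 0.922),
    ("AUT originality, Fluency covariate", 427.40, 1, 115, 0.788),
    ("Figural originality, Fluency covariate", 1031.61, 1, 115, 0.900),
]

for label, f_value, df1, df2, eta in reported:
    computed = round(partial_eta_squared(f_value, df1, df2), 3)
    print(f"{label}: computed {computed}, reported {eta}")
```

The very large values for the Fluency covariate show how much of the variance in summed originality is carried by the sheer number of responses.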

Overall, results of the analyses with originality scores (where fluency was used as a covariate) were parallel to those of fluency in terms of instructions: hybrid instructions improved both originality and fluency scores in verbal but not figural tests. In contrast, Gifted Status was not significant for originality in any of the three tests, and the Gifted Status × Instructions interaction effect was only significant for the AUT. AUT originality scores were the highest when gifted students received explicit creativity instructions, whereas fluency scores remained uninfluenced. None of the effects were significant for figural originality except fluency, used as a covariate.

DT performance and time

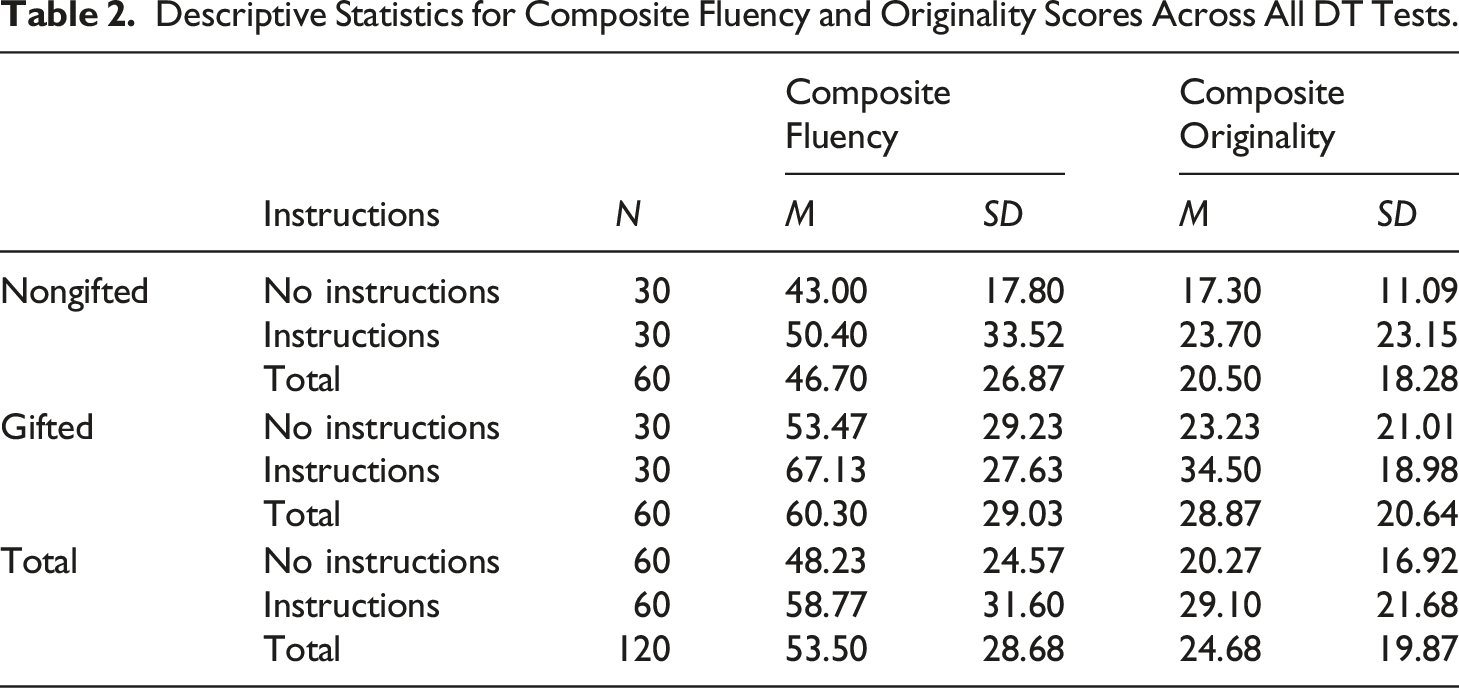

Descriptive Statistics for Composite Fluency and Originality Scores Across All DT Tests.

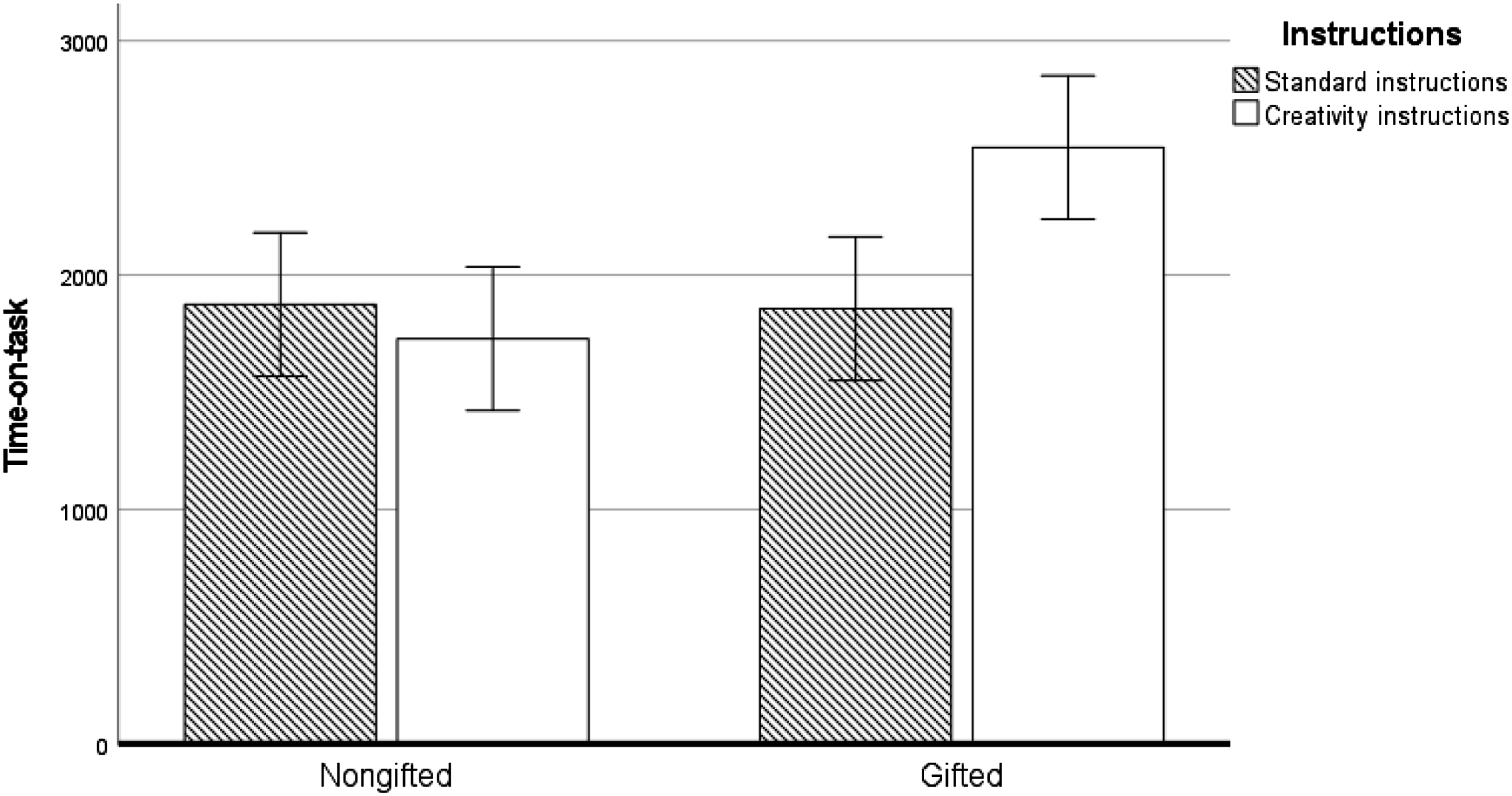

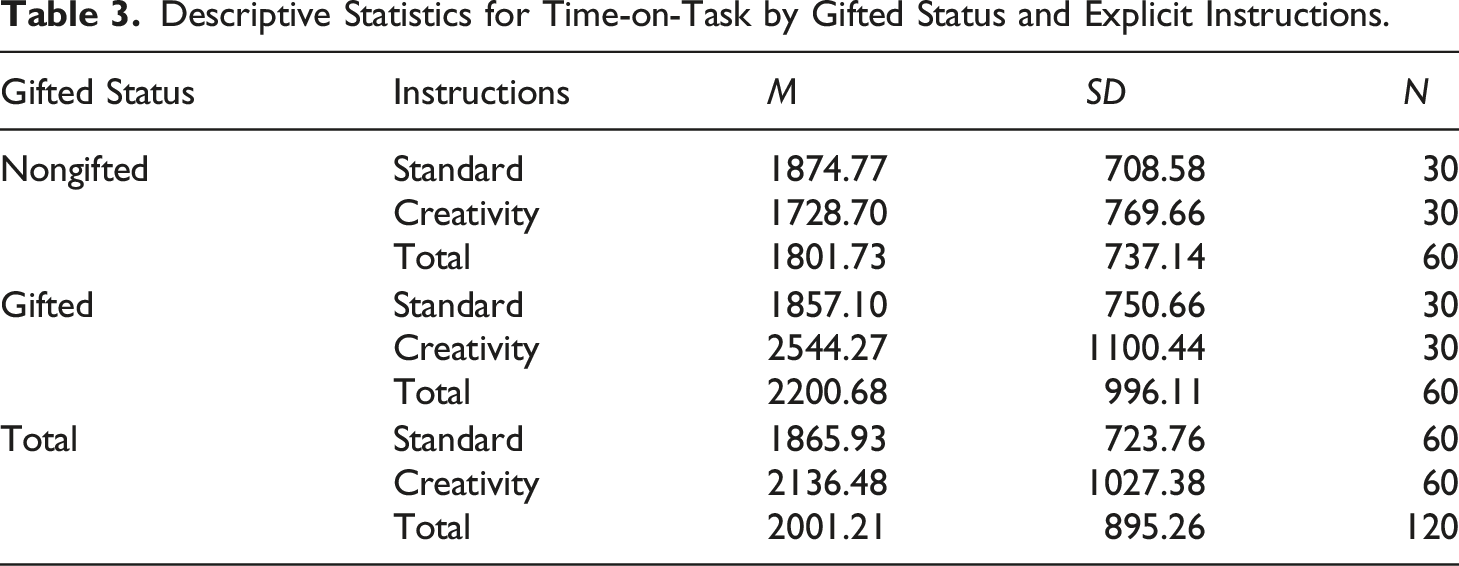

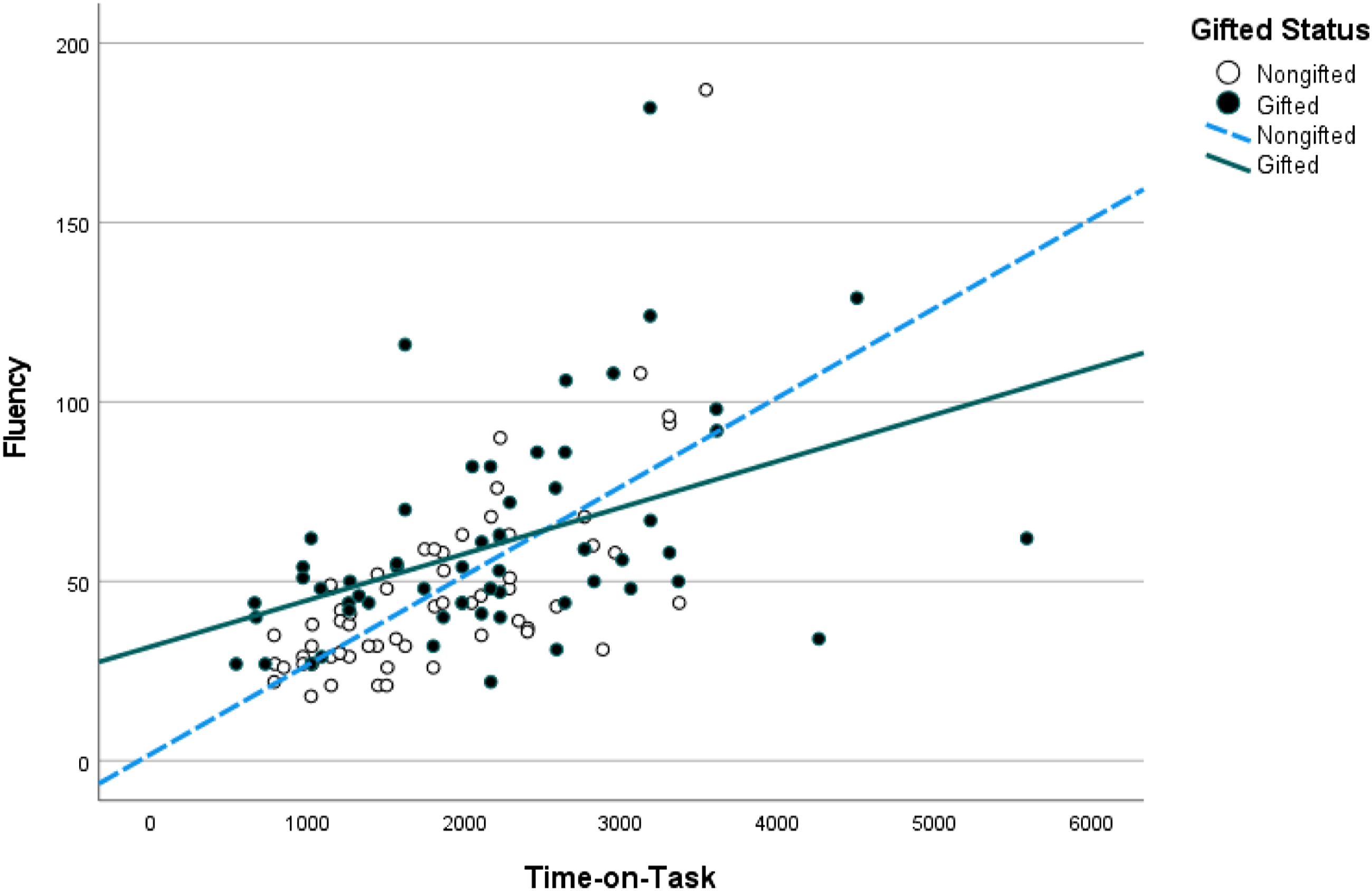

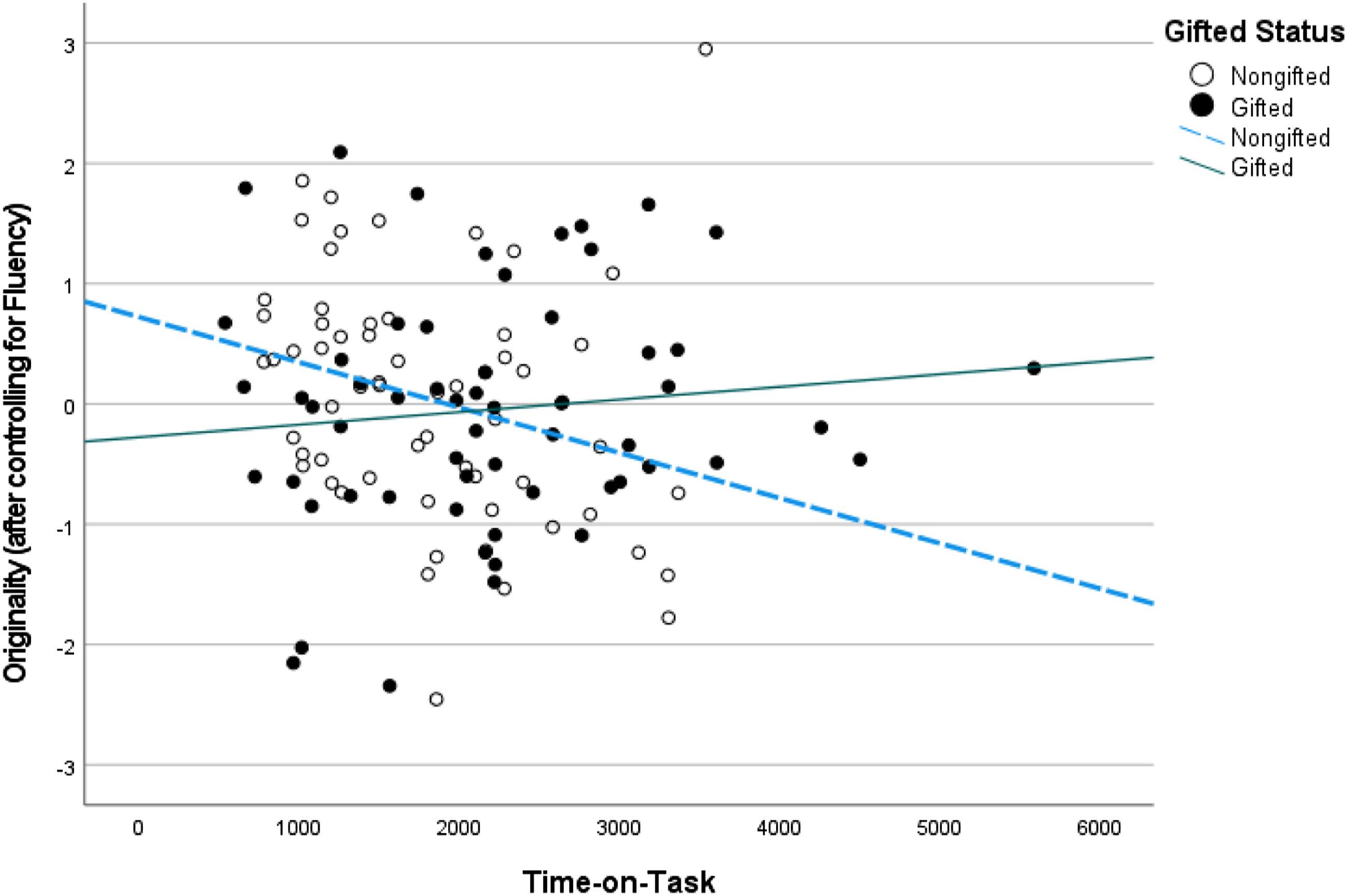

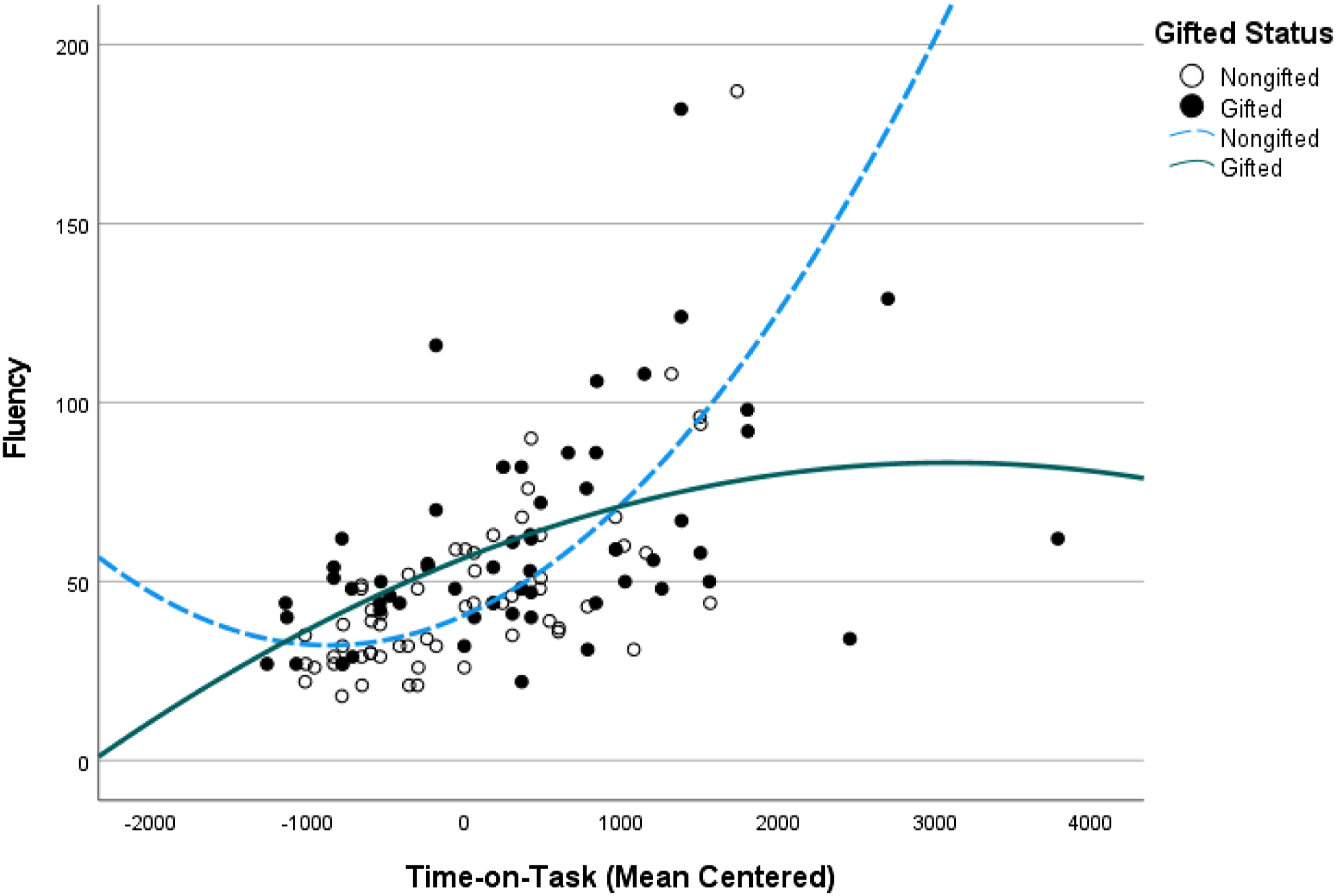

The correlation of time was significant with both fluency (r = .56, p < .001) and raw (summed) originality (r = .53, p < .001). The partial correlation of originality and time controlling for fluency, given the fluency confounding, was r (120) = −.11, p = .253. Time seemed to be associated with greater fluency, implying that generating more responses requires more time, but time was not related to originality when fluency was controlled.

To examine if gifted students took more time than non-gifted students for completing DT tests, we used TOT as the dependent variable and Gifted Status and Instructions as the independent variables. There was a significant main effect for Gifted Status, F (1, 116) = 6.66, p = .011, η2p = .054, whereas the main effect for Instructions was not significant, F (1, 116) = 3.06, p = .08, η2p = .026. There was also a significant Gifted Status × Instructions interaction effect, F (1, 116) = 7.26, p = .008, η2p = .059. Thus, gifted students took longer to complete the DT tests, and took even more time when they were given explicit instructions to “be creative” (Figure 1, Table 3). One could argue that taking more time may simply be owing to greater fluency. Accordingly, we added Fluency into the model as a covariate. Indeed, Fluency was significant, F (1, 115) = 43.92, p < .001, η2p = .276, whereas Gifted Status, F (1, 115) = 1.75, p = .189, η2p = .015 and Instructions, F (1, 115) = .56, p = .455, η2p = .005, were not. The Gifted Status × Instructions interaction effect was still significant, F (1, 115) = 7.66, p = .007, η2p = .062. Gifted students spent more time with creativity instructions even when fluency was controlled.

Time-on-task by gifted status and explicit instructions.

Descriptive Statistics for Time-on-Task by Gifted Status and Explicit Instructions.
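The partial correlation between originality and time controlling for fluency can be illustrated with the first-order partial correlation formula, r_xy.z = (r_xy − r_xz × r_yz) / sqrt((1 − r_xz²)(1 − r_yz²)). The sketch below is only an illustration of that formula. The two time correlations (.53 and .56) come from the text, but the fluency-originality correlation is not reported in this section, so the value used for it is a placeholder assumption and the output should not be read as a reproduction of the reported coefficient.

```python
from math import sqrt


def partial_corr(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y, controlling for z."""
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


r_time_originality = 0.53     # reported in the text
r_time_fluency = 0.56         # reported in the text
r_fluency_originality = 0.95  # placeholder assumption, not reported here

print(partial_corr(r_time_originality, r_time_fluency, r_fluency_originality))
```

The formula makes the reported pattern easy to see: when fluency and originality are very highly correlated, the product r_xz × r_yz can match or exceed r_xy, so the zero-order correlation of .53 can shrink to roughly zero or turn slightly negative once fluency is partialled out.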

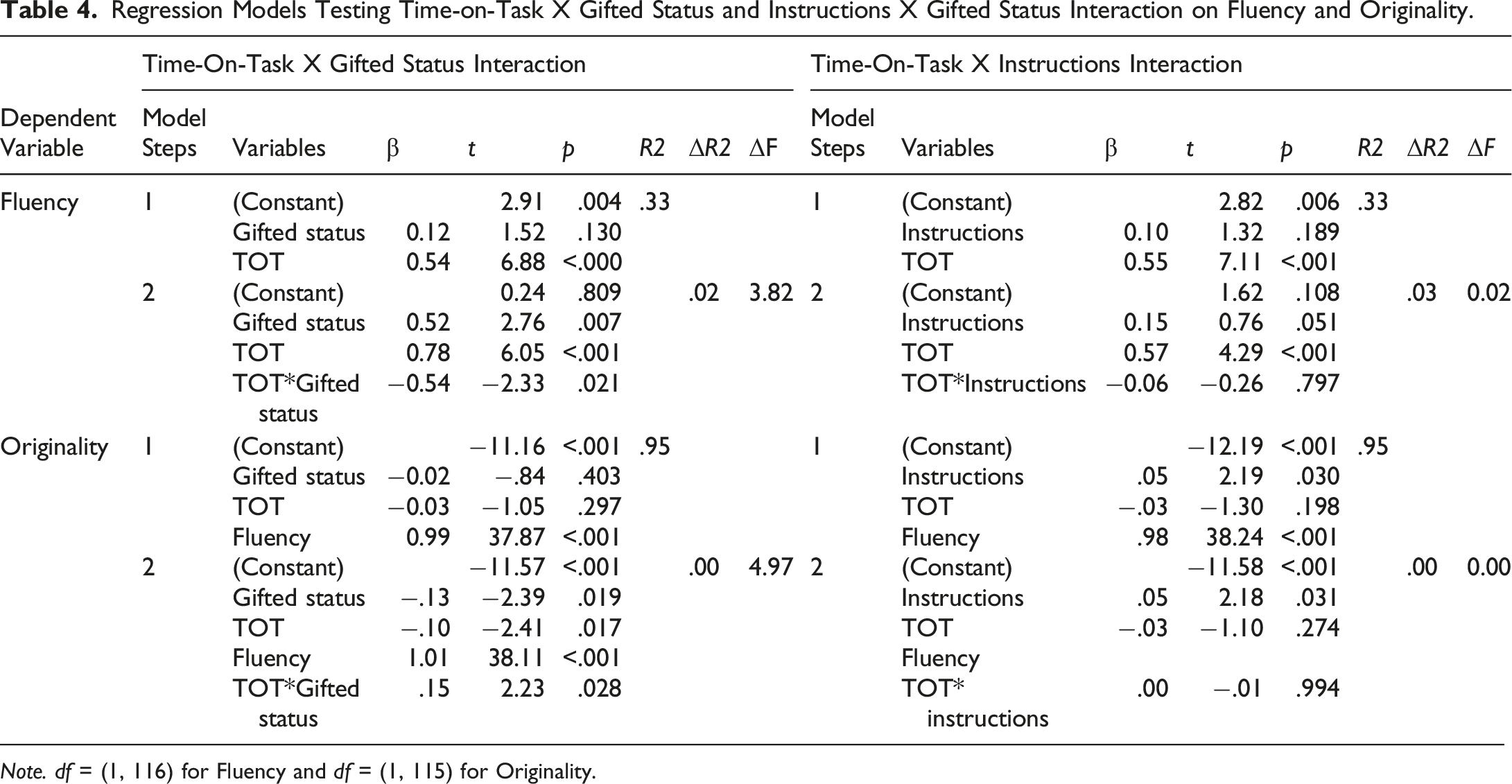

Regression Models Testing Time-on-Task × Gifted Status and Instructions × Gifted Status Interaction on Fluency and Originality.

Note. df = (1, 116) for Fluency and df = (1, 115) for Originality.

Time-on-task × gifted status interaction effect on fluency.

Time-on-task × gifted status interaction effect on originality.

When the TOT × Instruction interaction effect was examined with composite fluency and composite originality scores, neither was significant. The relationship between TOT and DT performance did not vary by the type of instructions.

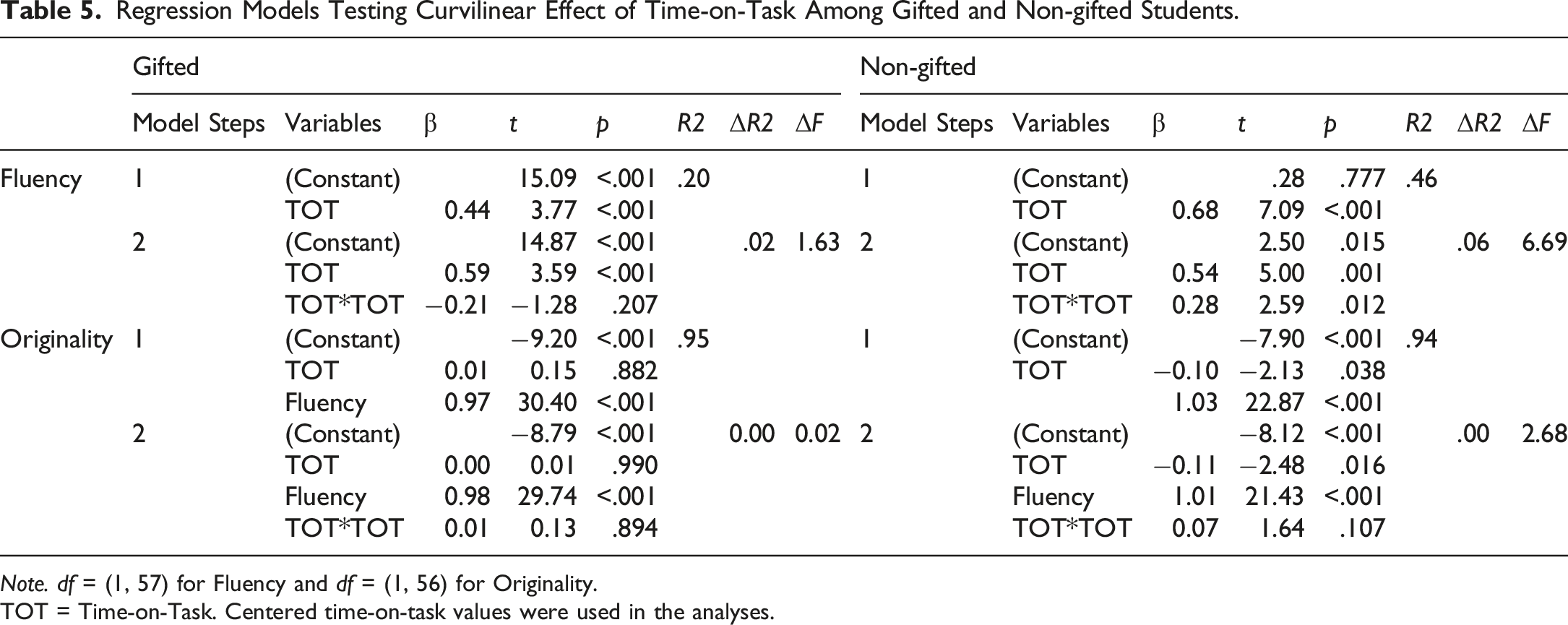

Curvilinear effects of TOT

Regression Models Testing Curvilinear Effect of Time-on-Task Among Gifted and Non-gifted Students.

Note. df = (1, 57) for Fluency and df = (1, 56) for Originality.

TOT = Time-on-Task. Centered time-on-task values were used in the analyses.

Curvilinear effect of time-on-task on fluency.
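A common way to test a curvilinear effect with centered predictor values, as the table note above describes, is to regress the outcome on centered TOT and its square and then inspect the quadratic coefficient. The sketch below fits such a model by ordinary least squares in plain Python. It is an assumption about the general form of the model, not the authors' exact specification: it omits the fluency covariate that the originality models appear to include, it does not compute significance tests, and the data are made up.

```python
def fit_quadratic(tot, score):
    """OLS fit of score = b0 + b1*t + b2*t**2, where t is mean-centered TOT.

    Returns [b0, b1, b2]. A non-zero b2 indicates curvature.
    """
    n = len(tot)
    mean_tot = sum(tot) / n
    t = [x - mean_tot for x in tot]
    # Design matrix with columns 1, t, t^2.
    X = [[1.0, ti, ti * ti] for ti in t]
    # Normal equations (X'X) b = X'y, stored as an augmented 3x4 matrix.
    M = [
        [sum(row[i] * row[j] for row in X) for j in range(3)]
        + [sum(row[i] * y for row, y in zip(X, score))]
        for i in range(3)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        if abs(M[col][col]) < 1e-12:
            raise ValueError("design matrix is singular")
        for r in range(3):
            if r != col:
                factor = M[r][col] / M[col][col]
                M[r] = [a - factor * c for a, c in zip(M[r], M[col])]
    return [M[i][3] / M[i][i] for i in range(3)]


# Made-up example: scores rise with time and then level off.
tot_minutes = [10, 20, 30, 40, 50]
fluency = [-3, 2, 5, 6, 5]
b0, b1, b2 = fit_quadratic(tot_minutes, fluency)
print(b0, b1, b2)  # approximately 5, 0.2, -0.01
```

Centering TOT before squaring reduces the correlation between the linear and quadratic terms, which makes the two coefficients easier to interpret separately.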

Discussion

DT tests are sometimes used for selecting students for gifted programs. Students should be aware of the nature of the tasks they will encounter and what is expected from them before they start working on tasks and activities. Such an awareness is essential for students to show maximum performance and to minimize individual differences in task perception. Previous studies that did not consider gifted status showed that instructing students to “be creative” resulted in higher scores compared with standard instructions (Harrington, 1975; Runco & Okuda, 1988). Although research on the characteristics of gifted students suggests that they have high levels of task commitment (Heller et al., 2005; Renzulli, 1986; Subotnik et al., 2011), and therefore, minimum instructions might be sufficient, our findings did not support this argument. In fact, both gifted and non-gifted students scored significantly higher in fluency and originality under instructions to “be creative” in the verbal tasks. Such a positive effect of the “be creative” instruction on DT performance was not observed in the figural DT test. One plausible explanation for this difference is the shared domain of instructions and performance; namely, verbal instructions may match verbal DT tasks better than they match figural DT tasks. To test this possibility, performance differences between verbal-only and visually supported instructions in figural DT tasks should be examined in future studies.

One relevant and somewhat contrary finding by Chen et al. (2005), who did not use classic DT tasks on their sample of Chinese students, showed better performance on mathematical and artistic creativity than verbal creativity with explicit instructions. Whether this discrepancy is due to cultural or task differences requires further investigation. A closer look at the mean scores for the standard versus “be creative” instructions in the figural test shows that there is a slight difference in favor of the latter; thus, at least the instructions to “be creative” did not negatively affect students’ scores in figural fluency and originality. Therefore, it is safe to conclude that administering DT tests with explicit hybrid instructions to “be creative” will result in higher performance on DT, consistent with recent meta-analyses (Acar et al., 2020; Said-Metwaly et al., 2020). The difference between gifted and non-gifted students on fluency is consistent with previous works, indicating that gifted students possess higher levels of DT (Abdulla Alabbasi et al., 2021c). Yet, this was not applicable to originality scores. We found no significant differences between gifted and non-gifted students in originality, except in one case where gifted students outperformed non-gifted students when they received creativity instructions for the AUT.

Above, the focus of our interpretation was on obtaining maximum output from the DT tasks. Another way to interpret the results is the usefulness of the instructions in terms of gifted identification — the capability of the tests to distinguish gifted from non-gifted students. Our findings from the Gifted Status × Instructions interaction were non-significant, except for AUT-Originality, where gifted students outperformed non-gifted students with creativity instructions. This finding implies that when the AUT is used for gifted identification, using creativity instructions will distinguish gifted from non-gifted students. Here, the gifted designation was primarily based on general cognitive ability. Using explicit instructions for the AUT will yield more consistent results with those from other cognitive ability measures. Those who hope to diversify the profile of identified students, which is the hallmark of multiple criteria (McBee et al., 2014), through creativity measures might not opt for creativity instructions as they reinforce the gifted identification method based primarily on cognitive ability. Nevertheless, this is only the case when the AUT is used for identification purposes. In general, the use of creativity instructions improves the DT output but does not impact the performance of gifted and non-gifted students differently.

TOT is another factor that affects students’ performance on DT tests. This study is the first to test the effect of TOT in a gifted sample in a non-Western culture. Moreover, this is one of the few studies in which time was automatically calculated in seconds and milliseconds (Acar et al., 2019). Unlike some studies that compared different time intervals (Khatena, 1971, 1972, 1973) or those comparing timed versus untimed conditions (Bleedorn, 1980; Foos & Boone, 2008; Johns & Morse, 1997), we decided not to mention to the students that there was a time restriction to understand the nature of the relationship between DT performance and TOT. In line with Paek et al.’s (2021) meta-analysis, more time positively correlated with higher fluency for both gifted and non-gifted students. The Gifted Status × Instructions interaction was significant, indicating that gifted students take more time on DT tasks, and when instructed to “be creative,” they spend more time working on DT tasks compared with the standard instruction condition. This finding indicates that creativity instructions keep gifted students on the task for longer and allow them to utilize more of their creative potential. From this perspective, using creativity instructions seems useful. Are gifted students spending more time on DT tasks with explicit creativity instructions as a result of producing more ideas (i.e., higher fluency)? This does not seem to be the case because the interaction effect (Gifted Status × Instructions) was significant even when fluency was added as a covariate. Putting these together, gifted students spend more time on DT tests when they are given creativity instructions, perhaps because they are more committed to the tasks when they have a better idea of what is expected of them.

The present data also allowed us to test whether taking more time on DT tasks affects gifted and non-gifted students’ performance differently. In general, gifted students’ performance was more stable than that of non-gifted students. Fluency-TOT correlations were larger (and positive) among non-gifted students, whereas the originality scores showed the opposite (negative) pattern. One way to interpret these results is that more time helps with fluency for both groups, but its added benefit is more pronounced for non-gifted students. When fluency is controlled, this added benefit comes at the cost of diminishing originality for non-gifted students.
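To make the idea of "controlling for fluency" concrete, the following is a minimal sketch of a first-order partial correlation between originality and TOT with fluency held constant. It is not the analysis reported in this study; the function names and the small data set are hypothetical and serve only as an illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def partial_corr(x, y, z):
    """Correlation between x and y after removing the linear effect of z."""
    r_xy = pearson(x, y)
    r_xz = pearson(x, z)
    r_yz = pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

# Made-up illustrative values (NOT data from the study).
tot_seconds = [60, 75, 90, 110, 130, 150]   # time-on-task
fluency = [4, 5, 6, 8, 9, 11]               # number of ideas
originality = [3, 3, 4, 4, 4, 5]            # originality score

print("zero-order r(originality, TOT):", pearson(originality, tot_seconds))
print("partial r controlling fluency: ",
      partial_corr(originality, tot_seconds, fluency))
```

Comparing the zero-order and partial coefficients within each group shows how much of the originality-TOT association is carried by fluency.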

Interestingly, a significant curvilinear effect was observed for both fluency and originality among non-gifted students. Compared with their gifted peers, non-gifted students benefited more when more time was provided. This could be explained in terms of processing speed, which characterizes gifted learners (Duan et al., 2010; Feldhusen, 2005). It might be that gifted students produce more ideas in a shorter period than non-gifted students and thus exhaust their ideas earlier. Duan et al. (2010) compared processing speed between members of gifted programs and their peers receiving standard education and concluded that gifted students’ reaction time was faster than that of their peers. Moreover, Bahar and Ozturk (2018) examined the relationship between processing speed and creativity, identifying a positive relationship between processing speed and both fluency and originality among gifted boys but not gifted girls. Motivational factors might also play a role. Students who take more time may be those who are more motivated, which might help close the performance gap with gifted students. Within a limited time, non-gifted students with high motivation are unable to keep up with gifted students. In this case, limited time rewards those with higher cognitive speed. Again, the practical suggestion on extended versus short time is contingent upon the conception of giftedness and the purpose of identification. If creativity tests are supposed to diversify the pool, more time is preferable because it gives motivated individuals who do not necessarily have high cognitive speed a better chance of being eligible for gifted programs. Such an approach would also be consistent with the non-unitary view of giftedness (Renzulli, 2005). Still, this practice would not necessarily make much difference in total originality scores. Extended time is more useful for non-gifted students’ fluency scores.
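One common way to probe a curvilinear relationship of this kind is to add a squared TOT term to a regression of the DT score on TOT. The sketch below fits such a quadratic by ordinary least squares using only the Python standard library. It is offered under stated assumptions: the function name `fit_quadratic` and the data are hypothetical, the code estimates coefficients only (it performs no significance test), and it is not the model used in this study.

```python
def fit_quadratic(x, y):
    """Least-squares fit of y = b0 + b1*x + b2*x**2; returns [b0, b1, b2]."""
    # Power sums that make up the 3x3 normal equations.
    s = [sum(xi ** k for xi in x) for k in range(5)]
    t = [sum(yi * xi ** k for xi, yi in zip(x, y)) for k in range(3)]
    a = [
        [s[0], s[1], s[2], t[0]],
        [s[1], s[2], s[3], t[1]],
        [s[2], s[3], s[4], t[2]],
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(3):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * w for v, w in zip(a[r], a[col])]
    return [a[i][3] / a[i][i] for i in range(3)]

# Made-up illustrative values (NOT data from the study).
tot_minutes = [1, 2, 3, 4, 5, 6, 7, 8]       # time-on-task in minutes
fluency = [3, 6, 8, 10, 11, 12, 12, 12]      # number of ideas

b0, b1, b2 = fit_quadratic(tot_minutes, fluency)
print("intercept:", b0, "linear:", b1, "quadratic:", b2)

# A negative quadratic coefficient means the gain from extra time levels off;
# the fitted curve then peaks at -b1 / (2 * b2).
if b2 < 0:
    print("fitted curve peaks near", -b1 / (2 * b2), "minutes")
```

Expressing time in minutes rather than milliseconds keeps the power sums small, which helps the normal equations stay numerically well behaved.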

The practical implications of the current results are that educators need to consider the positive effects of instructions and explicitly target both fluency and originality when administering DT tests. Moreover, educators should allow more time when administering DT tests. This is true for non-gifted samples (Paek et al., 2021), and the current study showed that it is also the case for gifted learners. However, the question of what the ideal amount of time is for eliciting more ideas (fluency) and unique ideas (originality) is something future research might investigate. Future research could also test the difference in optimal time based on the method of administering DT tests (i.e., paper-and-pencil vs. online). Additionally, the present investigation did not examine task-related variation in DT performance in relation to explicit instructions, gifted status, and TOT.

This study had one major limitation: the inclusion of only female participants. However, this was unavoidable due to differences between the educational systems of Western and Middle Eastern countries. In Saudi Arabia, where this study was conducted, each governorate has separate directorates of education for boys’ and girls’ schools; thus, we did not have access to the boys’ schools. Future researchers are encouraged to replicate the current study with a larger sample that includes both male and female participants, although a recent meta-analysis showed that girls outperformed boys only slightly in DT (Abdulla Alabbasi, Paek, et al., 2022).

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.