Abstract

The objective of this study was to analyse the moderating effect of metalinguistic reflection (MR) on linguistic resources to predict the quality of the scientific explanations produced by 359 fourth-grade students who participated in an instructional proposal on scientific explanations of two different scientific processes. The students’ MR level on the changes in their written explanations was assessed at the beginning and end of each unit according to three dimensions: conceptual, discursive and textual. The results show that in the first unit, the effect of metadiscursive resources on quality was diminished by the moderating effect of MR. In the second unit, the moderating effect of MR increased the effects of nominalization on the quality of the texts. The results show that the relationships between MR, linguistic resources and writing explanations are complex and dynamic. More studies are needed to grasp their development and their effects on disciplinary learning and writing.

Writing is a key skill for participating actively in different social spaces. Because of its role in learning, one of the most important settings is the school (Uccelli et al., 2020). Writing to learn does not have a single meaning (Klein & Boscolo, 2016); generally speaking, it refers to using writing as a vehicle to reinforce, expand and deepen knowledge (Graham & Perin, 2007; Rijlaarsdam et al., 2012). Different studies provide evidence to explain the impact of writing to learn (e.g., Graham et al., 2020; van Dijk et al., 2022), but the mediators and moderators of these effects still need to be researched in different settings. Our study contributes to this by exploring the joint contribution of the linguistic and metalinguistic dimensions to text construction.

Different studies show that linguistic resources (lexical, syntactic, textual and discursive) and the relationships among them can predict text quality, but that the results depend heavily on the writing tasks, the topics, the disciplinary expectations, the ways quality is assessed and the writers’ sociodemographic variables (we recommend the review by Crossley, 2020, on this topic). In the effective text construction process, the choices of linguistic and discursive resources must be orchestrated (Stavans & Tolchinsky, 2021). This orchestration may be influenced by metalinguistic reflection (MR), understood as the ability to consciously attend to and manipulate language to create the desired meanings considering socially shared conventions (Myhill & Jones, 2015). Thus, different levels of MR (higher or lower degree of explicitness and more or less integration of diverse dimensions of reflection) may be differently related to the linguistic resources chosen to construct high-quality texts that show students’ learning.

Based on the above, in this study we review the theoretical models most widely used to explain the processes involved in writing (Bereiter & Scardamalia, 1987; Hayes & Flower, 1980) and analyse the relationships and the role of linguistic resources and metalinguistic reflection (MR) in writing within the context of writing to learn. Specifically, we conducted an integrated study of the contribution of certain linguistic resources and the MR level to the quality of scientific explanations produced by students in the fourth grade of primary school. Given that few studies analyse MR and linguistic resources, we compared two different models to gain a better understanding of the relationships among the variables analysed. In the first model (MR direct effect), we introduced MR and linguistic resources as independent predictors of the quality of the explanations. The second model (MR moderation) is based on the conceptualization of MR as the ability to monitor and manipulate the language to construct meanings, and we assessed the interactions between MR and linguistic resources in predicting the quality of the explanations.

Transforming knowledge: reflection as a facilitator

One proposal for explaining the role of writing in constructing knowledge comes from Bereiter and Scardamalia (1987) regarding two different models of text composition according to the writers’ experience. In the knowledge-telling model, the writers generate the content by constructing chains of ideas retrieved from memory through automatic processes launched through writing. The thinking-telling process is developed until the writers have no further ideas to retrieve. The knowledge-transforming model has two problem spaces: content and rhetoric. The key is to transform problems in the rhetorical space into sub-goals to achieve within the content space, and vice versa. For example, one could write a letter to the director to express the problems of a recently implemented policy. In this context, the writer would deal with the rhetorical problem of making an idea convincing, which would translate into sub-goals like explaining the reasons behind their statements, providing examples and defining the concepts associated with the policy, among other possibilities (Scardamalia & Bereiter, 1992).

Efforts have been made to understand how to go from knowledge-telling to knowledge-transforming by studying the mental representations available to writers and what they are able to do with them (Scardamalia & Bereiter, 1992). However, because mental representations are not observable, they have to be deduced from indirect evidence. When 12 writers from different groups (sixth grade, tenth grade and adults) were asked to recall what their texts were about, it was observed that the students recalled as much of the texts as the adults, but most young students reproduced their texts word for word or paraphrased them. The adults referred to their intentions (e.g., ‘I tried to put all those things together’), to cues (by indicating, e.g., ‘say something like. . .’) and to structural aspects of the text (e.g., ‘third paragraph’) (Scardamalia & Bereiter, 1992). The more advanced writers were able to work at different levels by interconnecting their representations (problems of meaning, expression and organization) in a coordinated manner without losing control of the overall process. To help the students to operate with knowledge-transforming, the authors suggested introducing reflection cycles into composition routines, which would lead students to reconsider their decisions and change the pattern of content chains that are retrieved from memory.

In this way, the capacity to reflect on decisions seems to be an important part of the model of knowledge-transforming. We shall focus on metalinguistic reflection for writing, which has been given different names: metalinguistic awareness, activity or reflection (Hugo & Meneses, 2021; Myhill & Jones, 2015). We take an interdisciplinary approach which considers the cognitive, social and linguistic facets involved in a multidimensional writing model (Myhill & Chen, 2020). MR is defined as ‘the explicit bringing into consciousness of an attention to language as an artifact, and the conscious monitoring and manipulation of language to create desired meanings grounded in socially shared understandings’ (Myhill & Jones, 2015, p. 853). MR can help the writer to choose the linguistic resources for text construction (Myhill et al., 2020), facilitating the connection between the two problem spaces to transform knowledge. As Camps and Milian (2000) state, the operations involved in revising, assessing, detecting, diagnosing and repairing the difficulties of a text seem to require knowledge about language, which can have different levels of explicitness. Culioli (1990), for example, distinguishes between unconscious metalinguistic or epilinguistic activity and conscious metalinguistic activity, which can be unverbalized but observable through the control of linguistic uses, or verbalized by using common terms or metalanguage. In this way, students’ difficulties with detecting, diagnosing and repairing the problems in texts may be related to low explicit levels of this type of knowledge about the language (Camps & Milian, 2000), while more explicit levels may facilitate these processes.

Linguistic resources as options: towards a complex view of the translation process

Hayes and Flower (1980) describe text composition based on the sub-processes of planning, translating and reviewing. This model was developed with competent writers, so translation was not examined in depth. However, higher translation skills may contribute to writing quality by providing access to a broader linguistic repertoire that can be used to create and review sentences (Saddler & Graham, 2005).

However, a broad repertoire is not enough; critical rhetorical flexibility is also needed, that is, the ability to adopt or reject the linguistic resources required by specific genres or registers. Indeed, in the functional view, writers are agents who analyse and reflectively choose the language they use to generate their own meanings (Uccelli et al., 2020). This critical rhetorical flexibility is developed in the interplay of skills, linguistic repertoires and metalinguistic reflection to assess the effects of linguistic decisions, taking into consideration genre and register demands, as well as the purpose of writing and the audience (Berman, 2016; Hugo & Meneses, 2022; Uccelli et al., 2020). Thus, the translation process is not merely about converting ideas into language; it is a complex process that involves different skills and knowledge. Noting this complexity is a way to emphasize writers’ linguistic agency (Phillips Galloway et al., 2019). Investigating the role of MR in selecting resources for text construction entails exploring possible ways to promote it. In our study, we shall analyse the linguistic resources that facilitate the construction of scientific explanations generated in a context of writing to learn.

Linguistic resources to construct scientific explanations

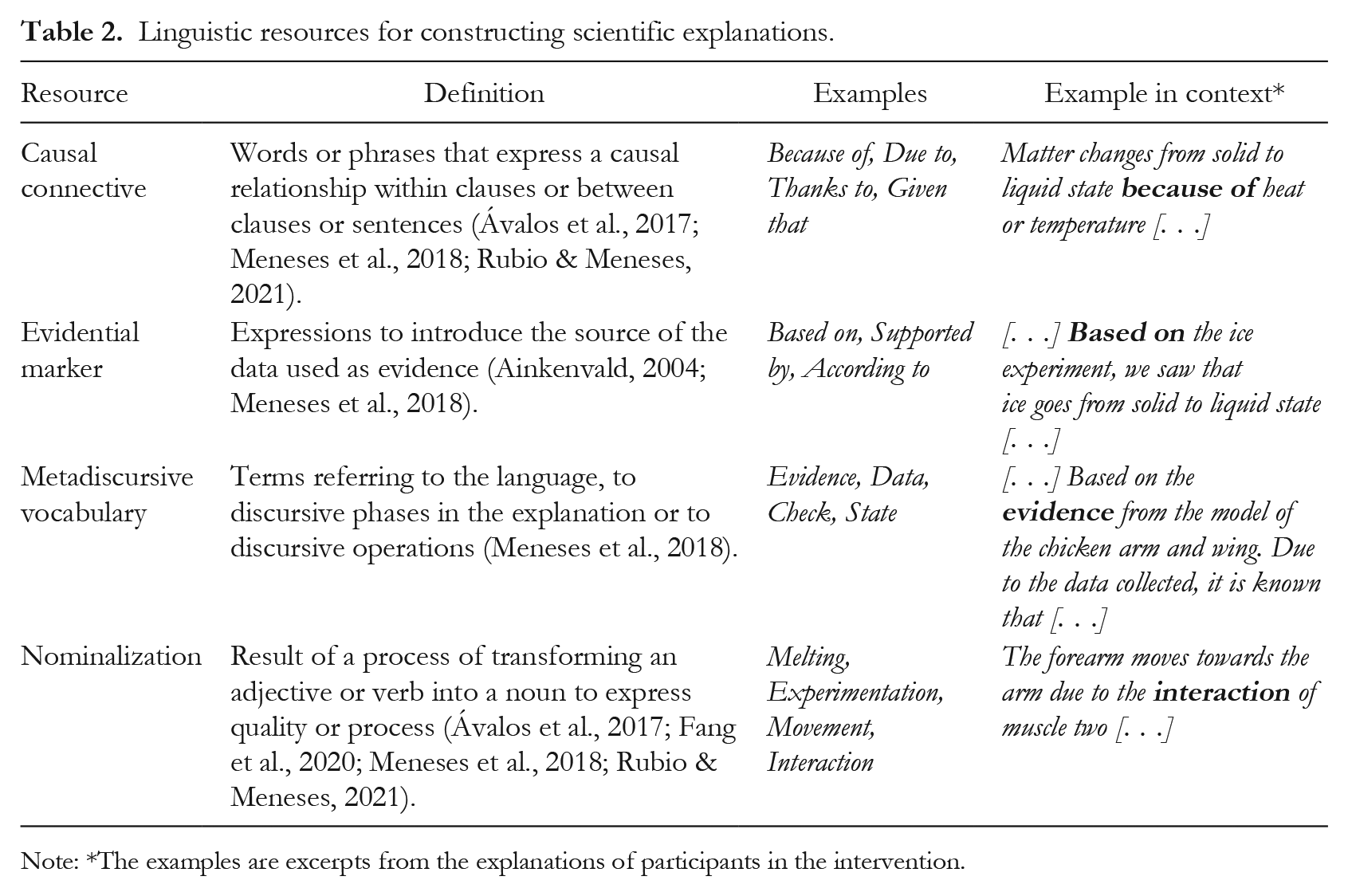

Explanations are authentic practices that facilitate the representation and understanding of natural phenomena (Rubio & Meneses, 2021), so they are one of the discursive genres that students frequently read and write in order to learn. The linguistic resources that are the most likely to be chosen to construct scientific explanations are related to the objectives and phases of this genre. Pedagogical adaptations have posited that its organizational phases are: statement, in which the scientific process is identified and the ideas are introduced to share how or why a phenomenon occurs; evidence, in which scientific data are presented to support the proposed idea; and reasoning, which consists of the explanation of the principles or cause-and-effect relationships underlying the process (McNeill & Krajcik, 2012; Meneses et al., 2018). These phases, which refer to core cognitive processes in scientific explanation, have specific linguistic counterparts, that is, linguistic resources that writers must learn to produce precise explanations. Causal logical relations are explained via causal connectives (Ávalos et al., 2017; Fitts et al., 2020). Evidence tends to be introduced via evidential markers which reveal where the information came from. Nominalizations that synthesize information abstractly are also used (Ávalos et al., 2017; Rubio & Meneses, 2021).

Thus, generating scientific explanations from the knowledge-transforming model with a complex view of the translation process entails considering that the writer builds knowledge and deepens it by establishing connections between their ideas about content, the rhetorical operations of the genre and their repertoire of linguistic resources. Understood as the ability to consciously attend to language, MR should facilitate the choice of resources and interactions across the different domains. Hence, it is worthwhile to gain a better understanding of its role in writing for learning.

This study

In this study, we analyse an MR task as indirect evidence of the mental representations available to the students when writing. Students whose reflections relate the content of the explanations with discursive and textual aspects of the genre would be operating with the knowledge-transforming model (Bereiter & Scardamalia, 1987). MR is conceptualized as the monitoring and manipulation of language to construct meanings, leading us to consider that MR could impact quality by selecting specific linguistic resources relevant to the discursive genre. We analyse how different levels of MR — which vary according to the quantity and specificity of the dimensions comprising it — interact with linguistic resources in the construction of scientific explanations to predict their quality.

Our questions in this study are:

(1) How does students’ MR performance vary according to the thematic unit?

(2) What are the relationships between students’ MR performance levels, the linguistic resources of their scientific explanations and the quality of these explanations, depending on the disciplinary topic?

(3) Does the MR performance level act as a direct predictor or as a moderator of the prediction of linguistic resources’ impact on the quality of the scientific explanations?

Methodology

Context

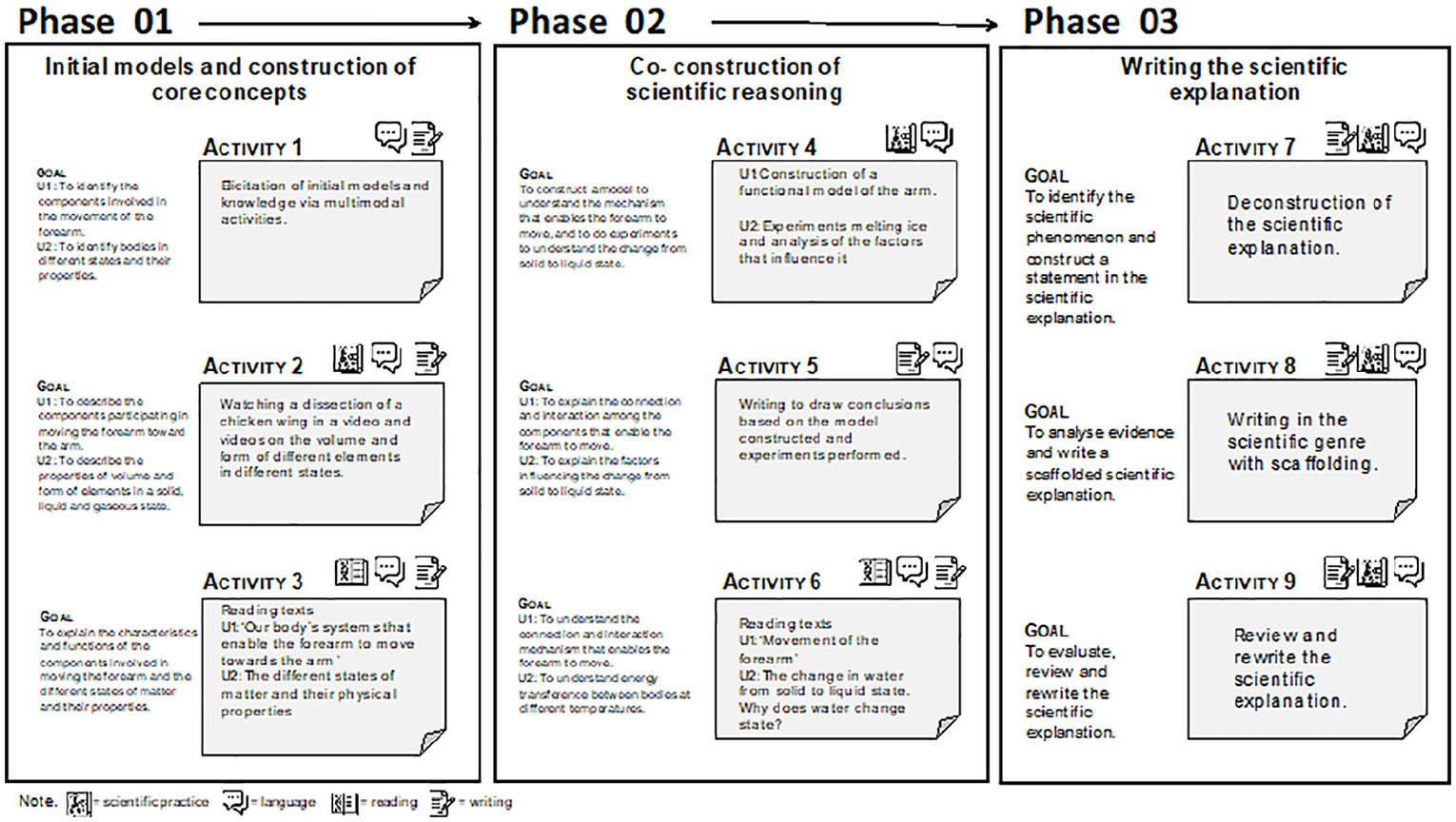

This research is part of a secondary study framed within an instructional proposal that promotes learning in sciences via the construction of scientific explanations in the fourth grade of primary school (age 10) at several schools in the Metropolitan Region of Santiago de Chile. The instructional proposal was developed in two teaching units each lasting four to five weeks, with three hours per week and three learning phases. Unit 1 (U1) was related to the musculoskeletal system and unit 2 (U2) to changes in the state of matter. Figure 1 presents the activities and learning objectives of each phase.

Phases and activities for the construction of scientific explanations.

Participants

A total of 359 students (52.9% girls; 47.1% boys) participated in the study. They attended four schools in 10 different classes — with comparable characteristics and without statistically significant differences in terms of their sociodemographic composition — taught by four science teachers who applied the instructional proposals in 2017 and 2018 after participating in a professional teacher development programme. The participating schools serve students from the lower-middle socioeconomic group (the average schooling of the mother is 10 years and the average monthly household salary is between US$437 and US$688) (Agencia de Calidad de la Educación, 2013). We chose the schools that taught the highest percentage of the activities in the proposal. The students and their teachers voluntarily filled out the assent and consent forms approved by the university ethics committee.

Tasks

Scientific explanation tasks

In this study, we analysed the handwritten scientific explanations at the end of two units. In the proposal, the students were encouraged to work like scientists, explaining scientific practices like constructing models, experimenting and explaining. The tasks were done in the scientific workbooks designed especially for the instructional proposal, which contained both the tasks analysed in this article and the readings and the activities summarized in Figure 1. The students were told that scientists from the university would read their responses in the workbooks. The instructions for U1 were: ‘Scientifically explain how the forearm moves towards the arm.’ The instructions for U2 were: ‘Scientifically explain how matter changes from a solid to a liquid state.’ The explanations (a total of 614) were transcribed with the spelling mistakes corrected to avoid assessment biases and to facilitate the analysis of the linguistic resources. We analysed a total of 318 explanations from U1 and 296 from U2.

MR task

For this study, we analysed 571 reflections, 293 from U1 and 278 from U2.

The instructions for the task were: ‘Now go back to page X and check what you initially wrote. How did your scientific explanation change?’ This task asks students to refer in writing to the perceived changes when comparing the initial and the final written work they produced.

Measures

MR performance level

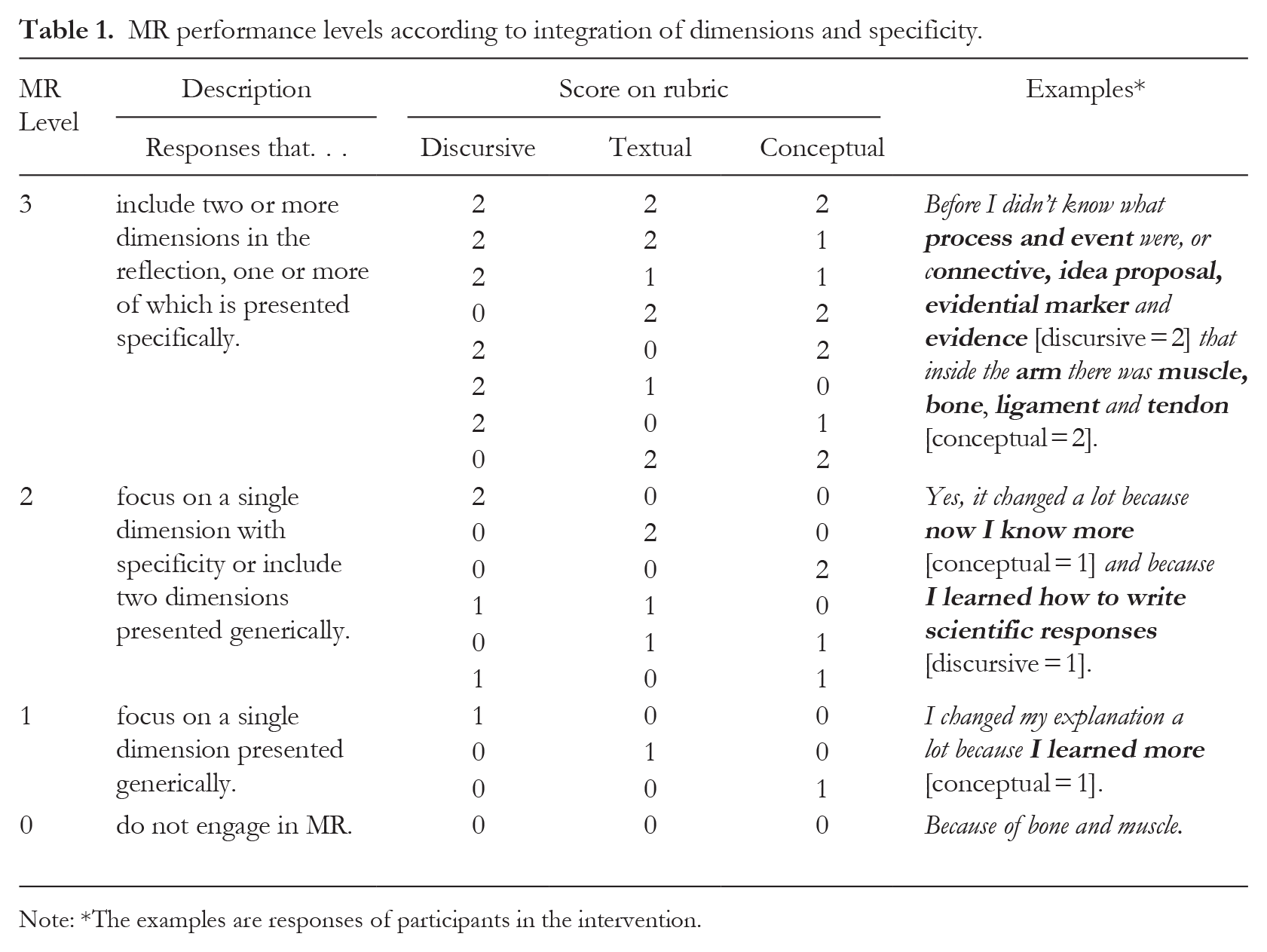

In a prior study (Hugo et al., 2024), we designed an analytical rubric considering the different dimensions on which the students reflected. In the discursive dimension, we considered mentions of aspects of the discursive genre and the register (like the discursive phases of the explanation: statement with the proposed idea and process, and evidence with the evidential marker); in the textual dimension, we considered the characteristics of textuality (length, completeness, specificity, clarity, comprehensibility); and in the conceptual dimension, we considered references to the thematic content of the unit. We gave each reflection a score per dimension, with three different possible scores (0 = ‘does not appear’; 1 = ‘appears generally but unconnected to the genre or theme’; 2 = ‘it appears specifically, connected to the genre or theme’). Two linguists applied the rubric. Consistency in its application was calculated by double-coding 20% of the reflections (114 MRs), yielding a very high Kappa coefficient (κ = .91, p < .001). After that, they applied the rubric individually to the remaining reflections, and if any doubts arose, they reviewed them together. In this study, we used the scores earned when applying the rubric to determine the MR performance levels (MR level). The reflections with the highest level referred to two or three integrated dimensions, with at least one of them connected to the genre or the theme; that is, they are reflections that simultaneously consider the content and the rhetorical space (Table 1).
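As an illustration of the agreement statistic mentioned above, the following is a minimal sketch of an unweighted Cohen's kappa for two coders. Whether the study used the unweighted or a weighted variant is not stated, so the unweighted form is an assumption, and the scores shown are hypothetical rather than data from the study.

```python
from collections import Counter


def cohen_kappa(coder_a, coder_b):
    """Unweighted Cohen's kappa for two coders who scored the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must score the same non-empty set of items")
    n = len(coder_a)
    # Proportion of items on which the two coders gave the same score.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's marginal frequencies.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one and the same category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical rubric scores (0, 1 or 2) from two coders on ten reflections.
scores_a = [0, 1, 2, 2, 1, 0, 2, 1, 0, 2]
scores_b = [0, 1, 2, 2, 1, 0, 2, 2, 0, 2]
kappa = cohen_kappa(scores_a, scores_b)
print(round(kappa, 3))
```

Kappa corrects raw agreement for the agreement that two coders would reach by chance, which is why it is usually preferred to a simple percentage when a rubric has few score categories.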

MR performance levels according to integration of dimensions and specificity.

Note: *The examples are responses of participants in the intervention.

Prototypical linguistic resources in the explanations

We codified different resources in the explanations and chose four that contributed to fulfilling the rhetorical objectives of the genre (Table 2).

Linguistic resources for constructing scientific explanations.

Note: *The examples are excerpts from the explanations of participants in the intervention.

Four linguists coded the resources in the explanations semi-automatically. First, we compiled a complete list of the words in the corpus (96,611 words and 2,954 different words). Each linguist coded a resource type from all the different words, and a second person checked this codification. Cases of disagreement or doubt were checked until agreement was reached. To identify the resources in each explanation, we used version 4.1.0 of the R program on Mac OS X (R Core Team, 2022), together with version 1.4.0 of the stringr library (Wickham, 2019) and version 0.7 of gsubfn (Grothendieck, 2018). We worked with the frequency of the resources, as the explanations are brief, with an average of 39.61 words (U1) and 46.74 words (U2), and we were interested in understanding the specific choices of the resources.
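The frequency counts described above were obtained in R. The sketch below shows the same idea in Python using only the standard library, and it is not the authors' script. The word lists are hypothetical English stand-ins (only 'because' and 'thanks to' are mentioned in this article as causal forms), and the function names are ours.

```python
import re

# HYPOTHETICAL word lists used only for illustration; the coded lists from
# the study are not reproduced here.
RESOURCES = {
    "causal_connectives": ["because", "thanks to"],
    "evidential_markers": ["according to"],
}


def compile_patterns(resources):
    """Build one case-insensitive, whole-word pattern per resource type."""
    patterns = {}
    for name, expressions in resources.items():
        # Longest expressions first, so multi-word items win over shorter ones.
        ordered = sorted(expressions, key=len, reverse=True)
        alternation = "|".join(re.escape(e) for e in ordered)
        patterns[name] = re.compile(r"\b(?:" + alternation + r")\b", re.IGNORECASE)
    return patterns


def count_resources(text, patterns):
    """Return the frequency of each resource type in one explanation."""
    return {name: len(p.findall(text)) for name, p in patterns.items()}


PATTERNS = compile_patterns(RESOURCES)
sample = (
    "The ice melts because it gains heat. According to the reading, "
    "this happens thanks to the temperature."
)
counts = count_resources(sample, PATTERNS)
print(counts)
```

A count of forms does not show whether a form fulfils its function in a given sentence, which is one reason the word lists in the study were coded and checked by linguists before counting.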

Quality of scientific explanations

Through randomized comparative judgement, we obtained a score on the overall quality of the explanations written by the students using NoMoreMarking®. After reaching agreement on the characteristics of the genre and the conceptual aspects, 10 different judges checked two written productions chosen randomly to choose the best one. Each explanation was compared 10 times (interjudge reliability = .90).
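Pairwise judgements of this kind are commonly converted into a scale with a Bradley-Terry type model. The sketch below fits such a model with a simple iterative update. The platform's exact scaling procedure is not described in this article, so the choice of model, the function name and the comparison data are all assumptions made for illustration.

```python
def bradley_terry(n_items, comparisons, iterations=200):
    """Estimate Bradley-Terry strengths from (winner, loser) index pairs.

    Uses the standard iterative update
    p_i <- wins_i / sum_j n_ij / (p_i + p_j),
    then rescales so that the strengths sum to n_items.
    """
    wins = [0] * n_items
    pair_counts = {}
    for winner, loser in comparisons:
        wins[winner] += 1
        key = (min(winner, loser), max(winner, loser))
        pair_counts[key] = pair_counts.get(key, 0) + 1

    strengths = [1.0] * n_items
    for _ in range(iterations):
        updated = []
        for i in range(n_items):
            denom = 0.0
            for (a, b), n in pair_counts.items():
                if i == a or i == b:
                    other = b if i == a else a
                    denom += n / (strengths[i] + strengths[other])
            # Items that were never compared keep their current strength.
            updated.append(wins[i] / denom if denom > 0 else strengths[i])
        total = sum(updated)
        strengths = [s * n_items / total for s in updated]
    return strengths


# HYPOTHETICAL judgements on three texts: each pair is compared three times.
example_comparisons = [
    (0, 1), (0, 1), (1, 0),
    (1, 2), (1, 2), (2, 1),
    (0, 2), (0, 2), (2, 0),
]
strengths = bradley_terry(3, example_comparisons)
print([round(s, 3) for s in strengths])
```

In this sketch a text that never wins a comparison receives a strength of zero; operational systems usually handle that boundary case with some form of regularization.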

Analysis plan

To answer question 1 on performance levels on MR tasks, we analysed the distribution of the responses in the two units. To answer question 2 on the relationship among MR level, linguistic resources of the explanations and the quality of those explanations, we used non-parametric analysis to compare MR levels and correlations among the variables of interest to determine their relationships. To answer question 3 on whether the effects of MR level on the quality of the explanations were direct or indirect via the linguistic resources, we compared two models. The first model included a direct effect of MR level and linguistic resources. The second model included these direct effects and interactions between the MR level and the resources for each unit (moderation). Each model was estimated using a stepwise fitting approach. We began with a model that included all the variables of interest, and at each step we eliminated the variable whose removal lowered the value of AIC, following the process outlined in Venables and Ripley (2002). We chose AIC rather than BIC as the criterion because it seemed more adequate given the presence of decreasingly small effects due to interactions. However, it is necessary to emphasize that the model obtained via this method minimizes the estimated information loss for the sample used, and the choice of the model may vary according to the sample size, as outlined by Burnham and Anderson (2004). The analyses were performed with version 4.1.3 of the R program (R Core Team, 2022).
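For reference, the two criteria mentioned above have the following standard definitions, where k is the number of estimated parameters, n the sample size and L-hat the maximized likelihood of the model. The second line gives the usual form for a linear regression with normally distributed errors, up to an additive constant; the notation is ours and does not appear in the original analysis.

```latex
\begin{align*}
\mathrm{AIC} &= 2k - 2\ln\hat{L}, &
\mathrm{BIC} &= k\ln n - 2\ln\hat{L}, \\
\mathrm{AIC}_{\text{OLS}} &= n\ln\!\left(\frac{\mathrm{RSS}}{n}\right) + 2k + \text{const.}
\end{align*}
```

Because the BIC penalty per parameter, ln n, exceeds the AIC penalty of 2 once n is larger than about 7, BIC tends to drop small effects such as interactions more readily than AIC does, which is consistent with the rationale given for preferring AIC here.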

Results

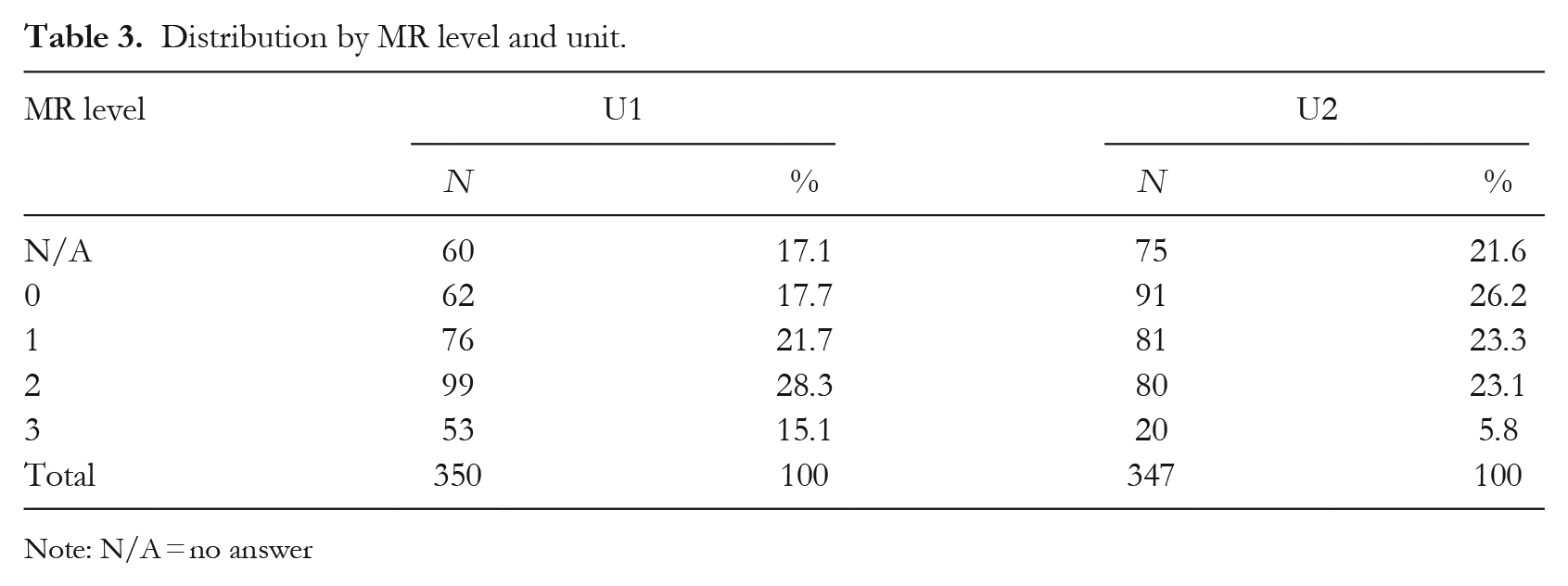

RQ1: Variation of student MR performance according to thematic unit

Table 3 shows the distribution of MR performance levels by unit. In U1, 17.7% of the students did not engage in metalinguistic reflection, while 28.3% reflected at level 2, meaning that they expressed only a single dimension with specificity or generically integrated two dimensions. Meanwhile, 15.1% reflected by integrating two or three dimensions into their response, with at least one presented specifically (MR level 3). In U2, the percentages of students who did not engage in metalinguistic reflection (level 0) are higher than in U1 (χ2(1) = 9.73, p < .002). Likewise, the percentage of students who generated level-3 reflections is significantly lower than in U1 (χ2(1) = 13.86, p < .001). One possible interpretation is that the more abstract nature of the topic makes integrated and specific reflection more challenging. When analysing the students’ responses with MR level 0, we found that some of them referred to matter’s change of state more than to the changes in their explanations, so the task may also influence this.

Distribution by MR level and unit.

Note: N/A = no answer

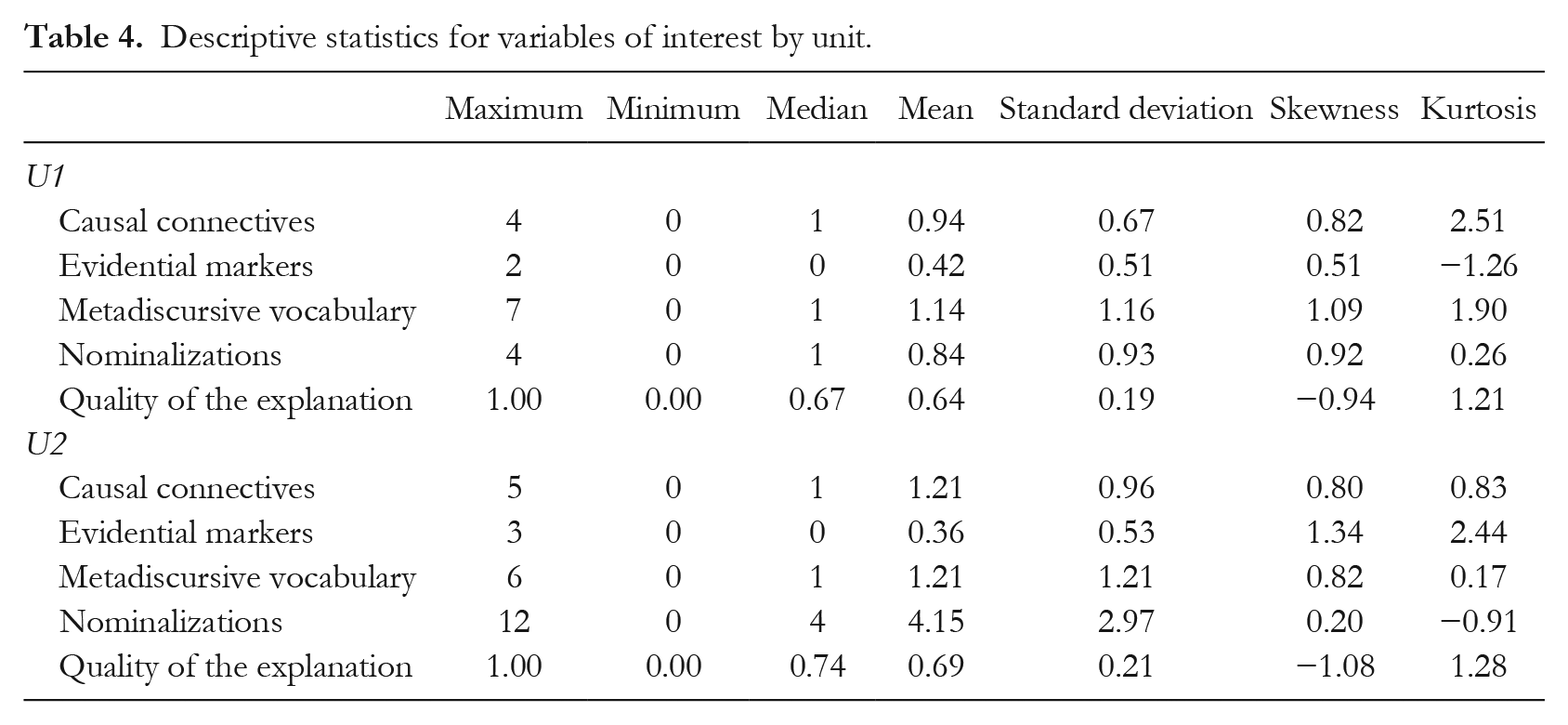

RQ2: Relations between MR performance level, linguistic resources and quality of the explanations according to disciplinary topic

Table 4 presents the descriptive statistics for the resources and quality of the scientific explanations according to the unit. Table 5 presents the performance of the linguistic resources variables according to the unit and the MR level. In U1, the performance increased on all the variables of interest as the MR level increased, which implies that, on average, the students who integrated different dimensions with specificity in their reflections also earned the highest averages in using linguistic resources and quality of their explanations.

Descriptive statistics for variables of interest by unit.

Descriptive statistics for variables of interest by MR level.

We employed the Kruskal-Wallis test to compare the distributions of medians for MR levels according to the use of linguistic resources and the quality of explanations. In U1, we found significant differences for evidential markers (χ2(3) = 33.40; p < .001; level 0 lower than level 2, 0 lower than 3 and 1 lower than 3); metadiscursive vocabulary (χ2(3) = 48.00; p < .001; level 0 lower than 1, 0 lower than 2, 0 lower than 3 and 1 lower than 3); and nominalizations (χ2(3) = 55.80; p < .001; only levels 1 and 2 are not different). We also found significant differences in quality (χ2(3) = 43.55; p < .001; level 0 lower than 1, 0 lower than 2, 0 lower than 3 and 1 lower than 3).

In U2, the results show a different pattern. In almost all the variables of interest, the means of the group with MR level 1 were lower than those of the group with MR level 0, and in some variables the means of the group with MR level 3 were lower than those of the group with MR level 2. We found significant differences for causal connectives (χ2(3) = 20.04; p < .001; with level 0 lower than 2, 1 lower than 2); evidential markers (χ2(3) = 12.37; p < .006; with level 1 lower than 2); metadiscursive vocabulary (χ2(3) = 30.99; p < .001; with level 0 lower than 2, 0 lower than 3 and 1 lower than 2); and nominalizations (χ2(3) = 48.68; p < .001; with level 0 lower than 2, 0 lower than 3, 1 lower than 2 and 1 lower than 3). We also found significant differences in the quality of the explanations (χ2(3) = 18.83; p < .001; with level 0 lower than 2 and level 1 lower than 2).
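The statistic behind comparisons such as those reported above can be sketched as follows. This is a minimal standard-library implementation of the tie-corrected Kruskal-Wallis H, which is compared with a chi-square distribution with (number of groups minus 1) degrees of freedom, hence the 3 degrees of freedom for four MR levels. The counts in the example are hypothetical and are not data from the study.

```python
def average_ranks(values):
    """Rank values from 1 to N, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H for a list of groups of observations."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted = 0.0
    position = 0
    for g in groups:
        rank_sum = sum(ranks[position:position + len(g)])
        weighted += rank_sum ** 2 / len(g)
        position += len(g)
    h = 12.0 / (n * (n + 1)) * weighted - 3 * (n + 1)
    # Correction for tied observations.
    tie_counts = {}
    for v in pooled:
        tie_counts[v] = tie_counts.get(v, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in tie_counts.values()) / (n ** 3 - n)
    return h / correction if correction > 0 else 0.0


# HYPOTHETICAL nominalization counts for MR levels 0, 1, 2 and 3.
example_groups = [[0, 1, 0, 1], [1, 1, 2, 0], [2, 3, 2, 1], [3, 4, 2, 3]]
h_statistic = kruskal_wallis_h(example_groups)
print(round(h_statistic, 2))
```

A significant H only indicates that at least one group differs; the pairwise statements in the text (for example, level 0 lower than level 2) require separate post hoc comparisons.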

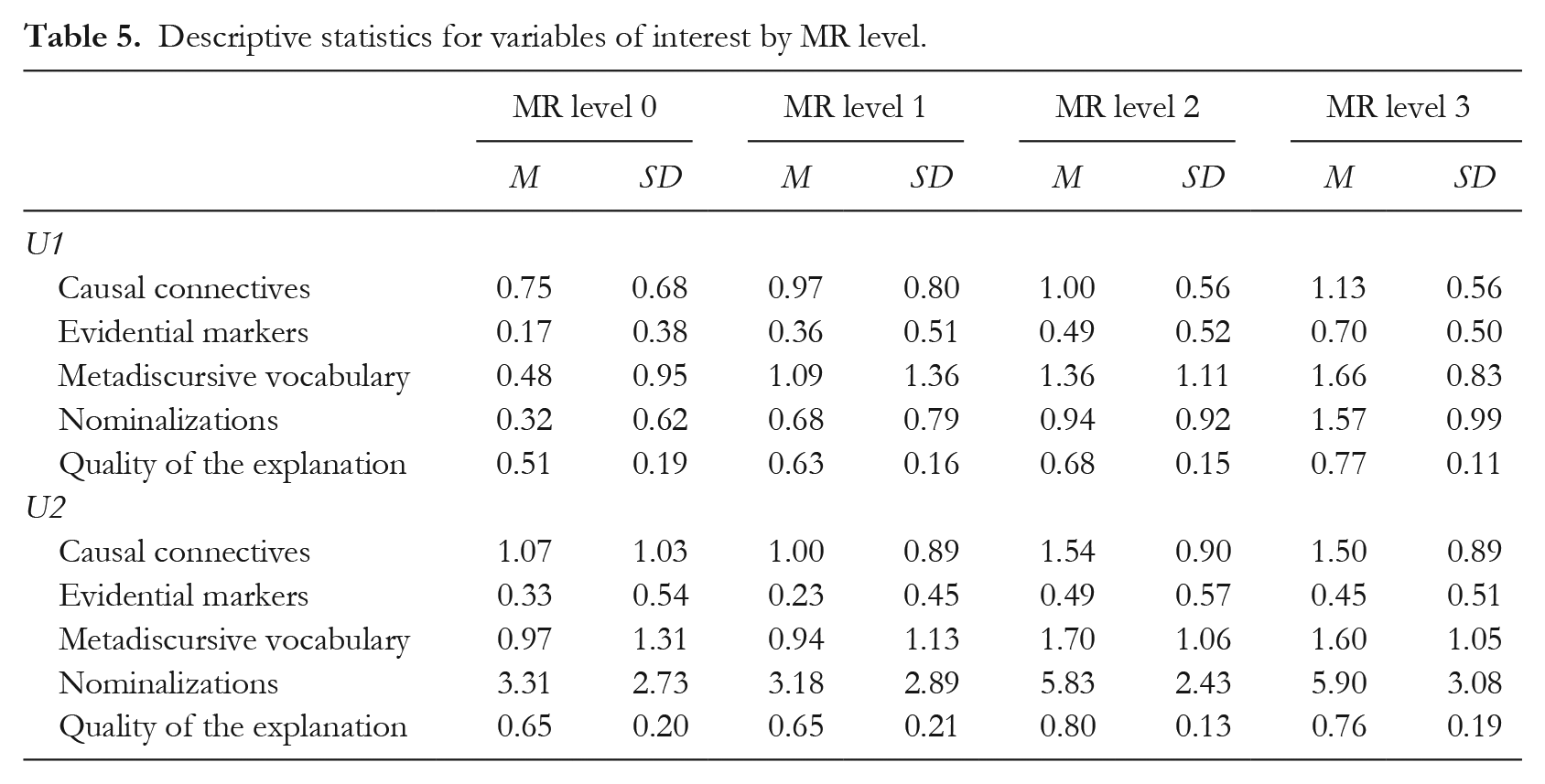

Table 6 shows the correlations for the variables of interest by unit. In U1, all the variables are significantly correlated. We found very low positive, significant correlations between MR level and causal connectives (r = .17), low ones for evidential markers (r = .34) and metadiscursive vocabulary (r = .33) and moderate ones for nominalizations (r = .46). Regarding quality, we found low correlations for quality and causal connectives (r = .34) and evidential markers (r = .39), and moderate ones for metadiscursive vocabulary (r = .40) and nominalizations (r = .41). Metadiscursive vocabulary and nominalizations stood out in this unit for their relationship with both MR and quality.

Correlations among variables of interest.

Note: *p < .05; **p < .01; ***p < .001

In U2, we also found significant correlations among all the variables. There were very low positive, significant correlations between MR level and causal connectives (r = .19) and evidential markers (r = .14), and low ones between MR level and metadiscursive vocabulary (r = .26) and nominalizations (r = .37). We found low correlations between quality of the explanation and evidential markers (r = .34), moderate ones between quality of the explanation and causal connectives (r = .52) and metadiscursive vocabulary (r = .52) and high ones between quality of the explanation and nominalizations (r = .65). Once again, the resources with the highest correlation coefficients corresponded to metadiscursive vocabulary and nominalizations in relation to both MR level and quality of the explanations. Likewise, in U2, the correlation between metadiscursive vocabulary and nominalizations is high (r = .70).

Furthermore, in both units, we found a positive, significant relationship between MR level and the quality of the explanations, a moderate one in U1 (r = .46) and a low one in U2 (r = .24), which enables us to proceed to a predictive phase.

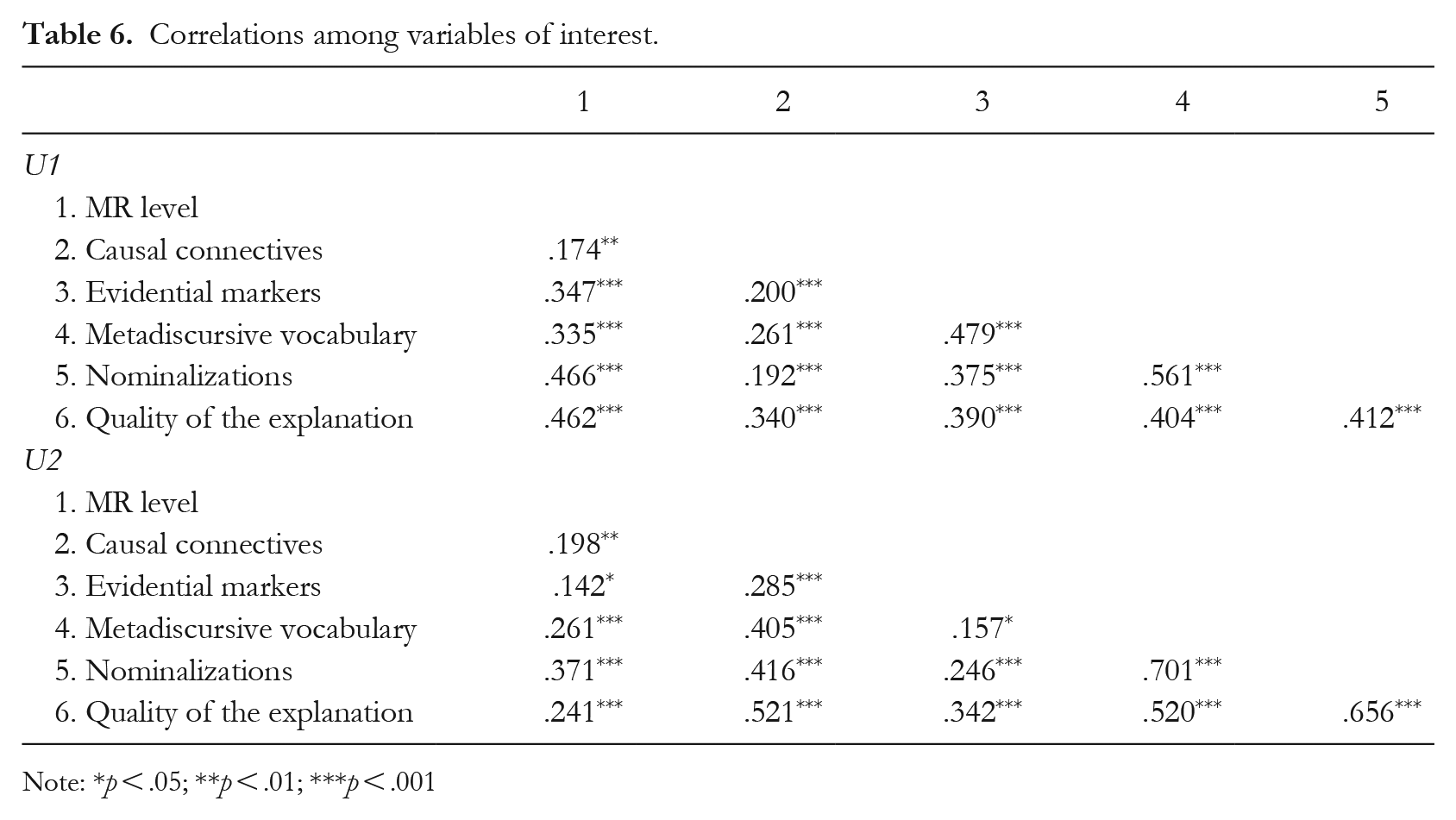

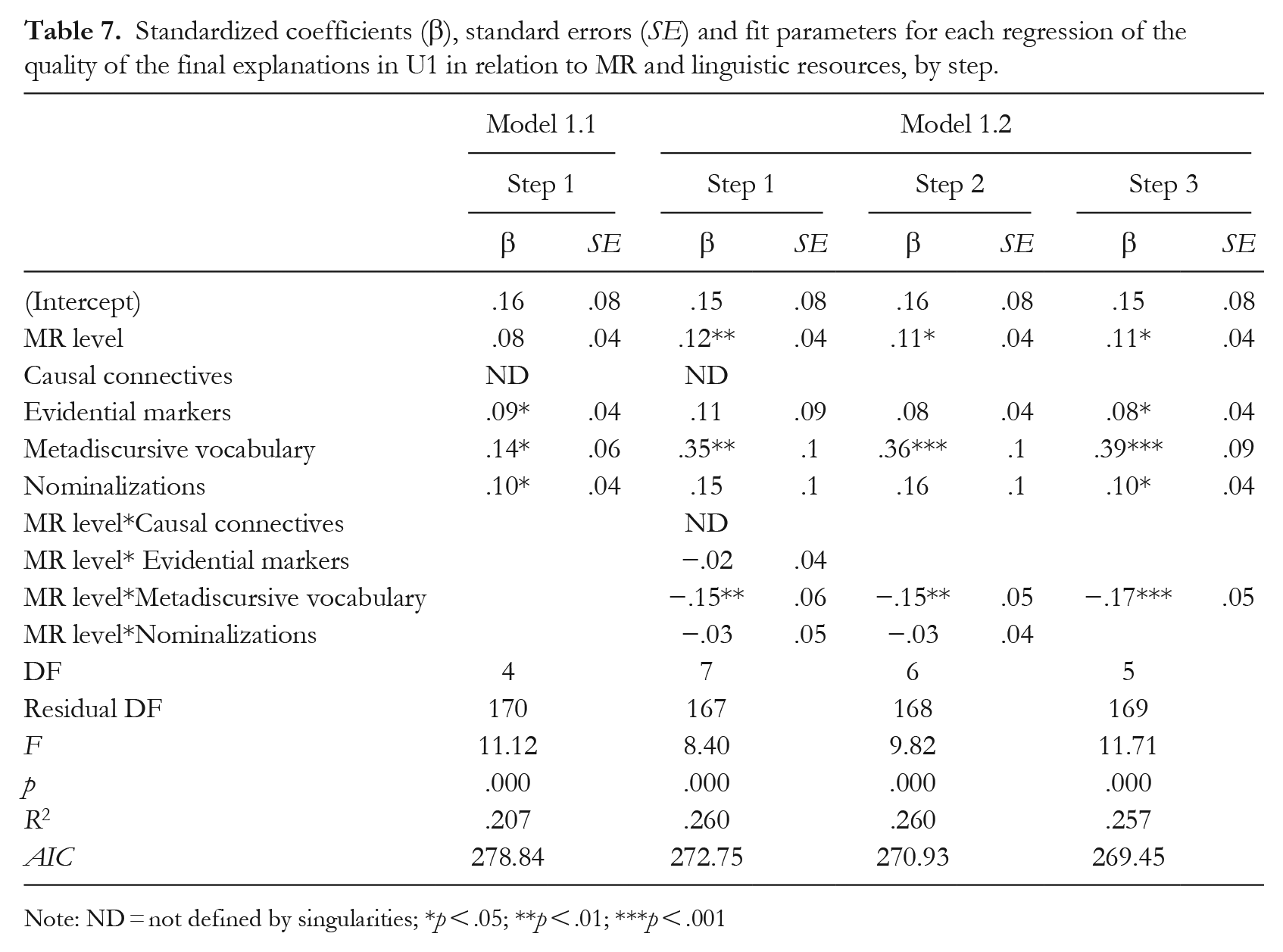

RQ3: MR performance level: direct predictor or moderator of the linguistic resources on the quality of the explanations?

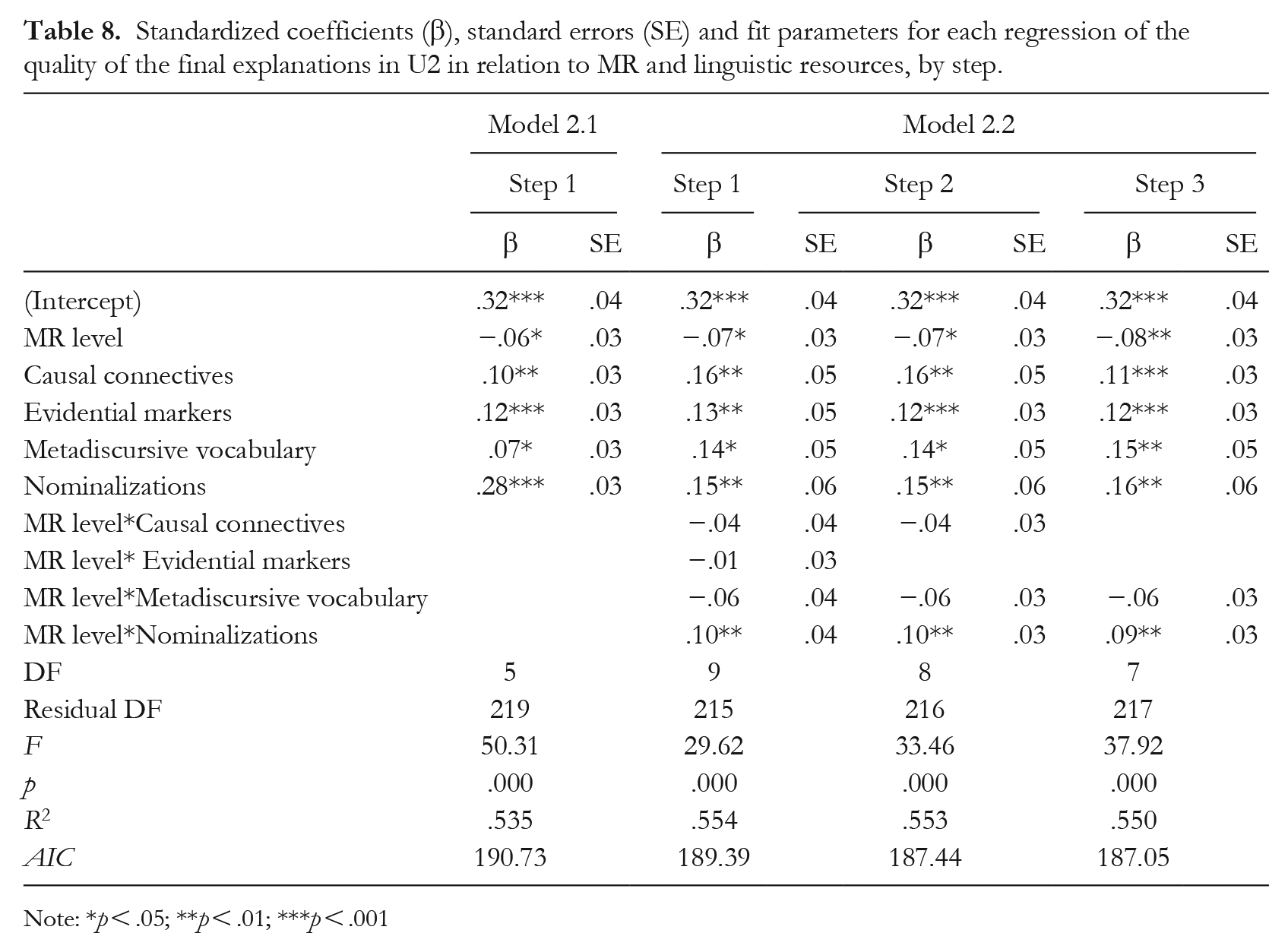

To answer question 3, we compared two models per unit to explain the quality of the explanations. In the first model (MR direct effect) (regression 1.1 for U1 and regression 2.1 for U2), we introduced the MR level and the four linguistic resources as independent predictors without including interactions. In the second model (MR moderation) (regression 1.2 for U1 and regression 2.2 for U2), we added interaction between MR level and linguistic resources, in addition to considering them independent predictors (operationalization of the moderation). The values obtained for the coefficients and fit at each step in the regression are outlined in Table 7 for U1 and Table 8 for U2.
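The two specifications compared above can be written compactly as follows. The notation is ours: Q_i is the quality score of student i's explanation, MR_i the MR level, and R_ij the frequency of the j-th of the four linguistic resources (causal connectives, evidential markers, metadiscursive vocabulary and nominalizations).

```latex
\begin{align*}
\text{MR direct effect:}\quad
Q_i &= \beta_0 + \beta_{MR}\,MR_i + \sum_{j=1}^{4}\beta_j R_{ij} + \varepsilon_i \\
\text{MR moderation:}\quad
Q_i &= \beta_0 + \beta_{MR}\,MR_i + \sum_{j=1}^{4}\beta_j R_{ij}
      + \sum_{j=1}^{4}\gamma_j\,(MR_i \times R_{ij}) + \varepsilon_i
\end{align*}
```

In the moderation model, the association between resource j and quality at a given MR level is β_j + γ_j MR_i, so a non-zero γ_j means that the resource's contribution depends on the MR level. Stepwise selection may remove some of these terms from the final model.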

Standardized coefficients (β), standard errors (SE) and fit parameters for each regression of the quality of the final explanations in U1 in relation to MR and linguistic resources, by step.

Note: ND = not defined by singularities; *p < .05; **p < .01; ***p < .001

Standardized coefficients (β), standard errors (SE) and fit parameters for each regression of the quality of the final explanations in U2 in relation to MR and linguistic resources, by step.

Note: *p < .05; **p < .01; ***p < .001

We checked whether the models met the conditions needed to apply a linear regression analysis through graphs of residuals vs. predictions, residuals vs. normal distribution, scale-location and residuals vs. leverage. Figures S1 to S4 in the supplementary materials illustrate these graphs and show that the deviations in the conditions are small and generally only found at the far ends of the ranges.

In U1 (Table 7), model 1.2 (MR moderation) showed significant fit (F(6, 16) = 11.71; p < .001) and explained a higher percentage of variance (25.7%), with higher fit indexes. MR level, evidential markers, metadiscursive vocabulary, nominalizations and the interaction between MR level and metadiscursive vocabulary were significant. This means that high MR levels and a high presence of linguistic resources predicted high scores on quality. However, we found that the coefficient for the interaction between MR level and metadiscursive vocabulary was negative, such that a change of one unit in MR level lowered the quality by .17 per element of metadiscursive vocabulary present. In both models, causal connectives were not a predictor of quality. One possible interpretation is that the resource appears as a form (because, thanks to) but without the function of causality, which would mean that the students use connectives without fully understanding their meaning and the way they are used.

In U2 (Table 8), model 2.2 (MR moderation) also showed higher explained variance (55%) and better fit indexes. The interaction between MR level and nominalizations appeared and was significant, while the interaction between MR level and metadiscursive vocabulary lowered its negative impact and was no longer significant. Thus, a change of one unit in MR level increased the effect on quality of each nominalization found in the explanation by .09; therefore, the effect of nominalization increased as the MR level increased.

In short, the models with moderation explain higher percentages of the variance with better fits in both units. The only interactions that significantly predict quality are the interactions between MR level and metadiscursive vocabulary (U1) and between MR level and nominalizations (U2).

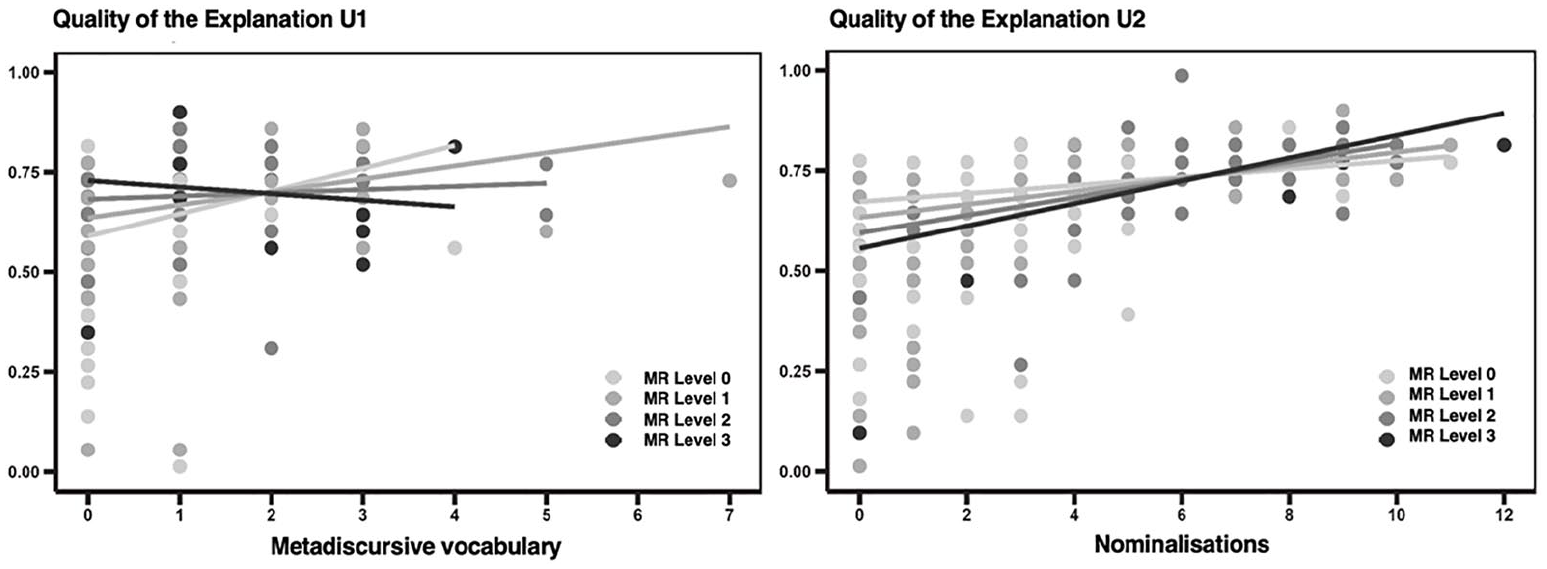

Figure 2 shows the moderating effect of MR level for the relationship between metadiscursive vocabulary and quality of the explanation in U1 and between nominalization and quality of the explanation in U2. The lines represent the linear regression evaluated at each MR level and the dots represent each student’s performance. In U1, we found that the interaction is manifested in the linear regression as a different slope for each MR level. Even though we found that the higher frequency of metadiscursive vocabulary means a higher quality explanation (positive slope), we can see that this trend (slope) is stronger for MR level 0 and drops as the MR level increases. Thus, the effect of metadiscursive vocabulary on the quality of the explanations is higher for students with a lower MR level than for those with a higher level. In U2, the students with a higher MR level show a stronger trend (slope) for the effect of nominalizations on quality, the opposite of what we found for U1 for metadiscursive vocabulary.
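The changing slopes described above follow directly from the interaction term: in a model containing a resource, MR level and their product, the slope of quality on the resource at a given MR level is the resource coefficient plus the interaction coefficient times the MR level. The sketch below uses the interaction coefficients reported in the text (-.17 in U1 and .09 in U2), but the main-effect values are hypothetical placeholders, and MR level is treated as coded 0 to 3 without regard to standardization.

```python
def conditional_slope(beta_resource, beta_interaction, mr_level):
    """Slope of quality on a resource at a given MR level.

    Assumes a model of the form
    quality = ... + beta_resource * R + beta_interaction * (MR * R).
    """
    return beta_resource + beta_interaction * mr_level


MR_LEVELS = range(4)  # MR levels 0 to 3

# -0.17 is the U1 interaction reported in the text; 0.60 is a HYPOTHETICAL
# main effect for metadiscursive vocabulary, chosen only for illustration.
u1_slopes = [conditional_slope(0.60, -0.17, level) for level in MR_LEVELS]

# 0.09 is the U2 interaction reported in the text; 0.30 is a HYPOTHETICAL
# main effect for nominalizations, chosen only for illustration.
u2_slopes = [conditional_slope(0.30, 0.09, level) for level in MR_LEVELS]

for level in MR_LEVELS:
    print(level, round(u1_slopes[level], 2), round(u2_slopes[level], 2))
```

With a negative interaction the slope shrinks as MR level rises (the U1 pattern), whereas with a positive interaction it grows (the U2 pattern). The size of the hypothetical main effect changes where the lines start, not the direction of this change.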

Moderating effect of MR level for the relationship between metadiscursive vocabulary and quality of the explanation in U1 and between nominalization and quality of the explanation in U2.

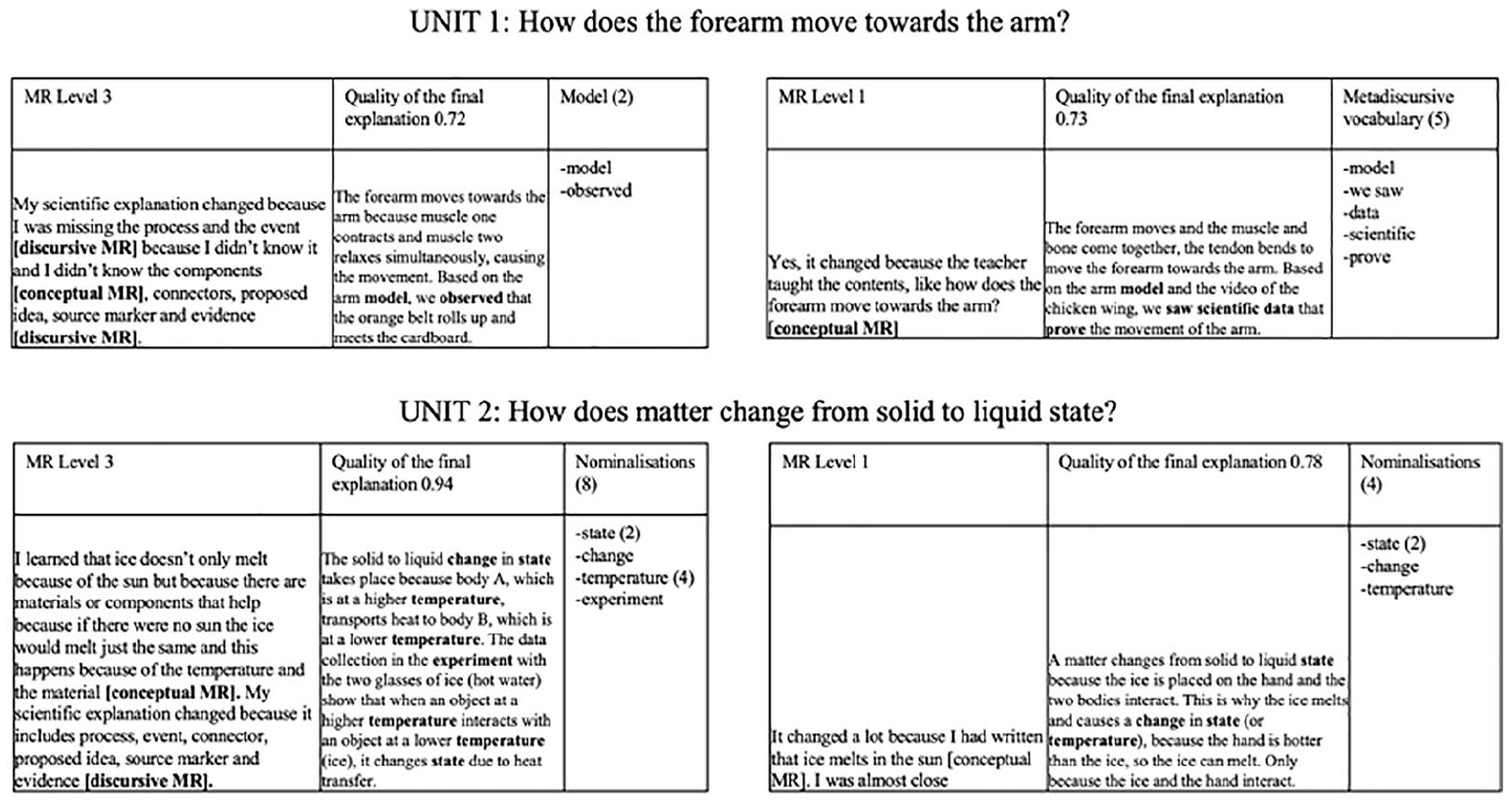

Figure 3 shows the interaction relationships explained above in the students’ written productions. Case A and Case B show a similar quality (72nd and 73rd percentiles) but differ in MR level and the amount of metadiscursive vocabulary. Case A represents students with a high MR level but infrequent use of metadiscursive vocabulary in their explanations, while Case B shows a low MR level but frequent use of metadiscursive vocabulary. Thus, Case B shows how metadiscursive vocabulary supports the construction of the evidence phase, even though specific scientific content is absent. Case C shows a high MR level, high quality (94th percentile) and frequent use of nominalizations. Case D represents the students’ written productions with a low MR level, high quality (78th percentile, although lower than in Case C) and infrequent use of nominalizations. These are examples in which a high MR level fosters the presence of nominalizations, which in turn enhances the quality of the explanations.

Figure 3. Examples of written productions that show the interactions between metalinguistic reflections, resources and explanations.

Discussion

Given that MR involves consciously monitoring and manipulating the language to construct meanings (Myhill & Jones, 2015), it may play an important role in choosing linguistic resources for disciplinary genres that the students write to learn. In this study, we analysed an MR task as indirect evidence of the mental representations available to students when writing and how this metalinguistic dimension interacts with the linguistic dimension in the construction of scientific explanations by fourth-grade students who participated in an instructional proposal.

Metalinguistic reflection is a complex process. Approximately 15% of the students in U1 and a little over 5% in U2 demonstrated reflections/representations that integrated conceptual and discursive dimensions connected to the topic and the genre. However, our study provides evidence that young students (fourth grade) were able to operate with the knowledge-transforming model with the additional challenge of responding to the MR task in writing without support to delve deeper into the ideas (Myhill et al., 2020).

This exploratory study provides evidence of the direct and indirect effects of MR on the quality of written scientific explanations. The indirect effects found, though small, are primarily related to the use of metadiscursive vocabulary and nominalizations. No student received explicit training in MR, given that the intervention focused on supporting the writing of scientific explanations and scientific learning. Despite this approach, our results reveal that more than half of the students engaged in MR, which enabled them to adjust the use of linguistic resources, and that this adjustment varied by topic.

In U1, performance on all the variables of interest was higher as the levels of MR proficiency increased. The interaction involves metadiscursive vocabulary: the impact of metadiscursive resources on quality diminishes as the MR level increases. We posit that students who have a mental representation that integrates the content space with the rhetorical space available when writing their explanations (MR level 3) can operate with it to construct quality explanations without needing to make their discursive knowledge explicit in the text. Students with a low MR level (MR level 0), who probably do not have this mental representation available to them, depend more on the presence of this type of vocabulary, which is strongly associated with rhetoric, to generate quality explanations. This type of vocabulary may help them to activate their discursive knowledge by acting as a regulator. To further explore this interpretation, we need more studies that help us to better understand the relationship between discursive knowledge, metadiscursive vocabulary and metalinguistic reflection. This could be done, for example, by analysing the type of metadiscursive vocabulary that students use in their explanations, the discursive phases of the explanation and the references they make in their reflections to changes at the discursive level.

In U2, we found that the MR level was negatively related to quality. The results may have been influenced by the fact that the students did not interpret the task metalinguistically. However, MR is a skill that requires attention and control, and coupled with the abstraction of the topic of changes in state, it can create significant cognitive demands. More studies are needed to test the hypothesis that the topic of the text influences the type of representation/reflection. In U2, we also found a positive, significant interaction between MR level and nominalization: the higher the MR level, the stronger the effect of nominalization on quality. Prior studies analysing scientific explanations written by students claim that nominalizations are associated with high-performing texts (Ávalos et al., 2017) or with texts by students from the high socioeconomic group in contexts without previous instruction (Rubio & Meneses, 2021). Like MR, nominalization requires abstraction, which points to a common factor. Furthermore, the interaction between MR and metadiscursive vocabulary appears consistently, albeit not significantly, which suggests that the possible regulating effect observed in U1 may still exist.

This study is not without limitations. We analysed only a single written MR task; thus, despite the methodological advantages of access to a large sample and individual performance, it does not allow for the generation of follow-up questions, which would have been particularly useful in U2. Complementing it with oral elicitation or other non-written tasks would be desirable. Another limitation is related to the fact that MR was not explicitly taught; in other words, there was no intentional focus on language during writing, which hinders access to factors associated with teaching MR.

Nevertheless, this research contributes to developing a more nuanced understanding of the translation process and the importance of reflection in resource selection (Myhill, 2009; Myhill & Chen, 2020; Uccelli et al., 2020). We observe that the impact of certain resources on the quality of the explanations varies according to MR levels, leading us to conclude that the relationships among MR, language and writing are complex and dynamic, highlighting the need for explicit attention to these dimensions in the context of writing to learn. More studies are required to gain a better understanding of their development and interactions and how to foster their learning.
