Abstract

This study examined multiple text integration in the academic writing of L1 and L2 English speakers. Participants read two texts describing the costs of college and rates of college enrollment in Latin America and the United States. Texts were organized either by region (e.g., cost and enrollment rates in the US) or by topic (e.g., discussing cost in both the US and Latin America), requiring students to make either intertextual or intratextual connections to compare regions. Students wrote comparative-contrastive reports, which were scored based on the number of connections (i.e., either intertextual or intratextual) generated. While no differences were found across experimental groups or by language status, supplemental analyses found English L2 students to rate texts about Latin America particularly interesting and comprehensible. Moreover, students were better at describing intratextual and intertextual contrasts rather than comparisons, with this effect also conditional on language status.

The demands of college-level writing for English L2 students include both the challenges experienced by all students (e.g., having to understand rhetorical structures and use academic vocabulary; Allen et al., 2014; Casanave, 2002; Shi, 2004) and challenges that are unique to writing in a second language (e.g., first language interference, bidirectional transfer; Urdaneta, 2011; Uysal, 2008). Specifically, studies on the experiences of L2 college students have shown that learning to write in their specific fields and understanding rhetorical texts are two of the most common challenges for ESL students (Casanave, 2002; Shi et al., 2017; Zamel & Spack, 2004). This is the case for a variety of reasons, including transfer of first language (L1) to second language (L2) reading and writing (Boroditsky, 2001; Cummins, 2008), first language writing expertise (Cumming, 1989) and the ways in which students learned to write in English (Tang, 2013). Therefore, helping L2 students to write effectively in English is a key challenge for faculty and universities serving an increasingly international and linguistically diverse national audience (Bergey et al., 2018). Moreover, understanding the interaction between language and cognition is necessary to develop culturally and linguistically appropriate writing instruction strategies.

Considerable research has been devoted to understanding and improving L2 students’ English academic writing (e.g., Hyland, 2019; Ramanathan & Atkinson, 1999; Ruiz-Funes, 2015). We define academic writing as students’ expository writing (e.g., compare and contrast) based on external sources of information (e.g., multiple texts, Du & List, 2020; Segev-Miller, 2004; Spivey & King, 1989). Research on L2 writing has examined students’ fluency, grammar and spelling (Gustilo, 2016; Kobayashi & Rinnert, 1992; Ong & Zhang, 2010). While important, these also constitute relatively low-level writing skills. Still limited has been work examining students’ higher-level writing skills, particularly across L1 and L2 samples (e.g., Doolan, 2021; Karimi & Mousavi-Moghadam, 2022; Kobayashi & Rinnert, 1992). In this study, we focus on one such higher-level writing skill, integration; namely, we examine L1 and L2 English students’ capacities for intertextual and intratextual connection formation within and across texts.

Theoretical background

This study is guided by Spivey’s (1984) model of discourse synthesis, referring to a form of academic writing wherein writers construct a personal understanding of an issue, described across multiple texts, by integrating information across text, and then reflect such personal understanding through a newly formed written product (Spivey, 1984; Spivey & King, 1989). Three main processes are involved in discourse synthesis — selection, organization and connection formation or integration — with each of these processes carried out during both reading and writing. During selection, learners choose information from sources to include in their mental models, or cognitive representations of texts. Learners then select ideas from these mental models to include in the written products that they compose. During organization, learners utilize cues from the source texts (e.g., headings, text structures) to structure or pattern their mental models, with such organizational features serving as a framework that students can use to cognitively represent information from multiple texts. Learners externalize the structure of their mental models through writing by using various discourse markers (e.g., first, further) and adhering to commonly used text structures (e.g., cause/effect, problem/solution). Finally, during connection formation or integration, learners generate links between relevant information within and across texts and represent these intratextual and intertextual connections in their mental models. Learners may also use discourse markers during writing (e.g., moreover, however; Dueñas, 2009; Martínez, 2004) to reflect connections between texts. In this study we focus on this last process, integration. 
We focus on integration both because this is the culminating cognitive process required for discourse synthesis and because multiple text integration has been found to be a particular challenge for both L1 and L2 writers (Doolan, 2021; List et al., 2019; Plakans & Gebril, 2017).

Multiple text integration

The term ‘integration’ refers to students’ linking or connecting of shared ideas introduced in different sections of the same text (i.e., intratextual integration) or across disparate texts (i.e., intertextual integration; Britt et al., 1999; Primor & Katzir, 2018). Integration is essential for students to develop cohesive mental models or understandings of issues described across texts (Britt et al., 1999; Perfetti et al., 1999). Additionally, both intratextual and intertextual integration have been identified as essential features of students’ successful writing based on multiple texts (List et al., 2019; Wineburg, 1991). In this study, we focused on multiple text integration referring specifically to the distinct process of connecting information from different texts.

Students’ integration has commonly been assessed through writing (e.g., Du & List, 2020; Latini et al., 2019; McCarthy et al., 2022). Three main approaches to assessing integration in writing have been introduced. First, at a holistic level, students’ written responses have been classified according to the extent to which these reflect the Documents Model Framework (DMF; Britt et al., 1999; Perfetti et al., 1999). In particular, the DMF classifies students’ mental representations of multiple texts according to the extent to which these feature source use and intertextual linking. That is, students may create mush models, reflecting integrated ideas from multiple texts but failing to track sources of origin. Alternatively, students may fail to create connections across texts, separately representing each text’s content or constructing separate representation models of texts. Finally, students may create documents models, which reflect both the successful integration of content across texts and the tagging of information to sources of origin. The DMF describes the types of cognitive representations of texts that students form; empirical work has found these types of cognitive representations to also be reflected in students’ writing (Barzilai et al., 2021; List, 2019). For instance, List et al. (2019) classified the types of written responses that students composed based on six texts arguing for and against the legalization of sex work, in accordance with the DMF. In List et al.’s sample, 36% of the written responses exhibited a separate representation model of multiple texts; this included students formulating separate representation models with (24%) and without (13%) citations. Twenty-two per cent of written responses reflected mush models, and 20% of responses reflected documents models of multiple texts, with other responses not able to be coded.

A second approach to coding integration has been to analyse the specific types of connections that students formed when writing based on multiple texts (e.g., Cerdán & Vidal-Abarca, 2008; List, 2019; Olsen et al., 2018). For instance, List et al. (2019) examined the extent to which students included instances of explicit (i.e., using connective terms) and implicit (i.e., using a unifying statement) integration in their writing, finding implicit integration to be more common. Additionally, List et al. (2019) classified each instance of implicit or explicit integration that students generated as reflecting an evidentiary (i.e., focused on supporting details), thematic (i.e., focused on main ideas) or contextual (i.e., reflecting author- or source-level relations) connection. Across these levels of connection formation, evidentiary and thematic connections were most commonly formed. Here, rather than examining the specificity of students’ intertextual connections, we examine their structure. In particular, we examine students’ abilities to form comparisons and contrasts within and across texts.

As a third approach to scoring integration in students’ writing, lexical indicators have been analysed as evidence of connection formation (e.g., Du & List, 2020; Sonia et al., 2022). For example, Wiley and Voss (1999) compared integration across English L1 participants who were asked to complete a summary, explanation, argument or a narrative writing task based on texts presented as a single textbook chapter or as multiple documents. Wiley and Voss coded the number of connective terms used in students’ essays (e.g., at the same time, then), finding that students who were presented with multiple documents included more connectives, overall, and more causal connectives (e.g., because, due to) in their writing as compared to students reading a textbook chapter. In this study, we draw on this prior work to assess students’ connection formation within and across texts. We use holistic, categorical and lexical approaches to integration scoring.

Reading and writing in English as a second language

Studies examining students’ integration in writing have primarily focused on first language (L1) writers (e.g., Anmarkrud et al., 2014; Britt & Aglinskas, 2002; Cerdán & Vidal-Abarca, 2008; Wiley & Voss, 1999) and, to a more limited extent, on L2 writers (e.g., Cheong et al., 2019; Karimi & Mousavi-Moghadam, 2022; Plakans, 2009). When L2 students’ integration in writing has been examined, investigations have most commonly taken place within the context of high-stakes testing (e.g., Test of English as a Foreign Language, TOEFL; Uludag et al., 2019). For example, Plakans and Gebril (2017) examined discourse synthesis in a sample of L2 individuals, from 73 different countries speaking 47 different languages, completing a TOEFL writing assessment, with a specific focus on students’ organization and cohesion in writing. Organization was captured by examining the summarization patterns that students used in writing. Summaries were categorized according to whether these preferred one text over another (i.e., a written or an oral text) or summarized texts in a balanced fashion, and according to whether or not these included additions or commentary from the student writers. Importantly, students’ integration of the written and oral texts was not explicitly coded for.

Cohesion was assessed using the linguistic analysis tool Coh-Metrix to capture the number of connectives and logical operators (e.g., and, or, if) in students’ responses and the degree of overlap or ‘cohesion through repetition’ in students’ writing. In terms of organization, Plakans and Gebril (2017) found 60% of individuals’ responses to reflect balanced summaries of the two texts, while only 6% of responses included any additional commentary by writers. In terms of cohesion, students’ connectives use did not seem to differ across overall levels of writing performance (i.e., scored from 1–5). More recently, Karimi (2024) studied how L2 students’ written representations of controversial issues are influenced by their pre-existing beliefs, with a bias towards information that supports these beliefs. Although descriptively interesting, these results leave unanswered questions regarding L2 students’ integration in writing and the extent to which this may be fostered. In this study, we examine both L1 and L2 students’ intratextual and intertextual integration in writing, in response to texts organized in two different ways.

In particular, in this study, we present students with texts organized either by theme or by region, with content otherwise identical across texts, such that students have to form intratextual (i.e., in the theme condition) or intertextual (i.e., in the region condition) connections when tasked with comparing college education in the US and Latin America. We do this to examine connection formation or integration across L1 and L2 writers. The role of text structure in fostering integration has been suggested in some prior work (Kurby et al., 2005). For example, Nash et al. (1993) presented participants with two passages about eagles and racoons. Passages were organized either by topic (i.e., eagle or racoon) or chronologically by life stage, and participants received either consistently (i.e., two texts by topic) or inconsistently (i.e., one by topic and one chronologically) organized texts. Nash et al. found that writers most frequently used the organizational structure of the first passage they read as a basis for organizing their essays. Moreover, students produced higher-quality essays when presented with texts following a consistent organizational structure. However, Nash et al. (1993) did not examine the extent to which students integrated information about eagles and racoons across the two texts. Here, we build on this prior work to determine whether varying the organization of texts, such that students have to form either intratextual or intertextual connections, influences integration in writing.

Present study

In this study, we adopt the design of Nash et al. (1993) to enable us to examine intratextual and intertextual integration across L1 and L2 English writers. English L1 and L2 college students were asked to write a research report comparing and contrasting college education in the United States and Latin America. Students were asked to do so based on two texts, organized either by region (i.e., the United States and Latin America) or by topic (e.g., cost of college education in the United States and Latin America; enrollment rates in the United States and Latin America). Texts were created such that those organized by region required students to form intertextual connections; in contrast, texts organized by topic only required intratextual integration for comparisons to be formed. The following research questions guide this study:

(1) Are there differences in the number and quality of comparisons generated by L1 and L2 English writers asked to form intratextual or intertextual connections within and across texts?

(2) Are there differences in connectives use by L1 and L2 English writers asked to form intratextual or intertextual connections within and across texts?

Given the design of the task, asking students to compare and contrast features of college education across two regions (i.e., Latin America and the United States), and the design of our texts, intratextual connections were always formed when students read topically structured texts; intertextual connections were always formed when students read texts structured by region. Thus, research questions only focus on students’ intratextual or intertextual connection formation, rather than comparing connection formation within regions but across topics.

Method

Participants

Participants were 141 college students (age: M = 22.09, SD = 3.81) recruited through Prolific, an online recruitment platform. Online data collection has been found to yield valid data (Casler et al., 2013) and offers benefits over in-person data collection (Follmer et al., 2017). Casler et al. (2013) found online samples to be more ethnically and socioeconomically diverse as compared to in-person samples. Moreover, Hauser and Schwarz (2016) demonstrated that online participants tended to submit more valid data than did college student samples.

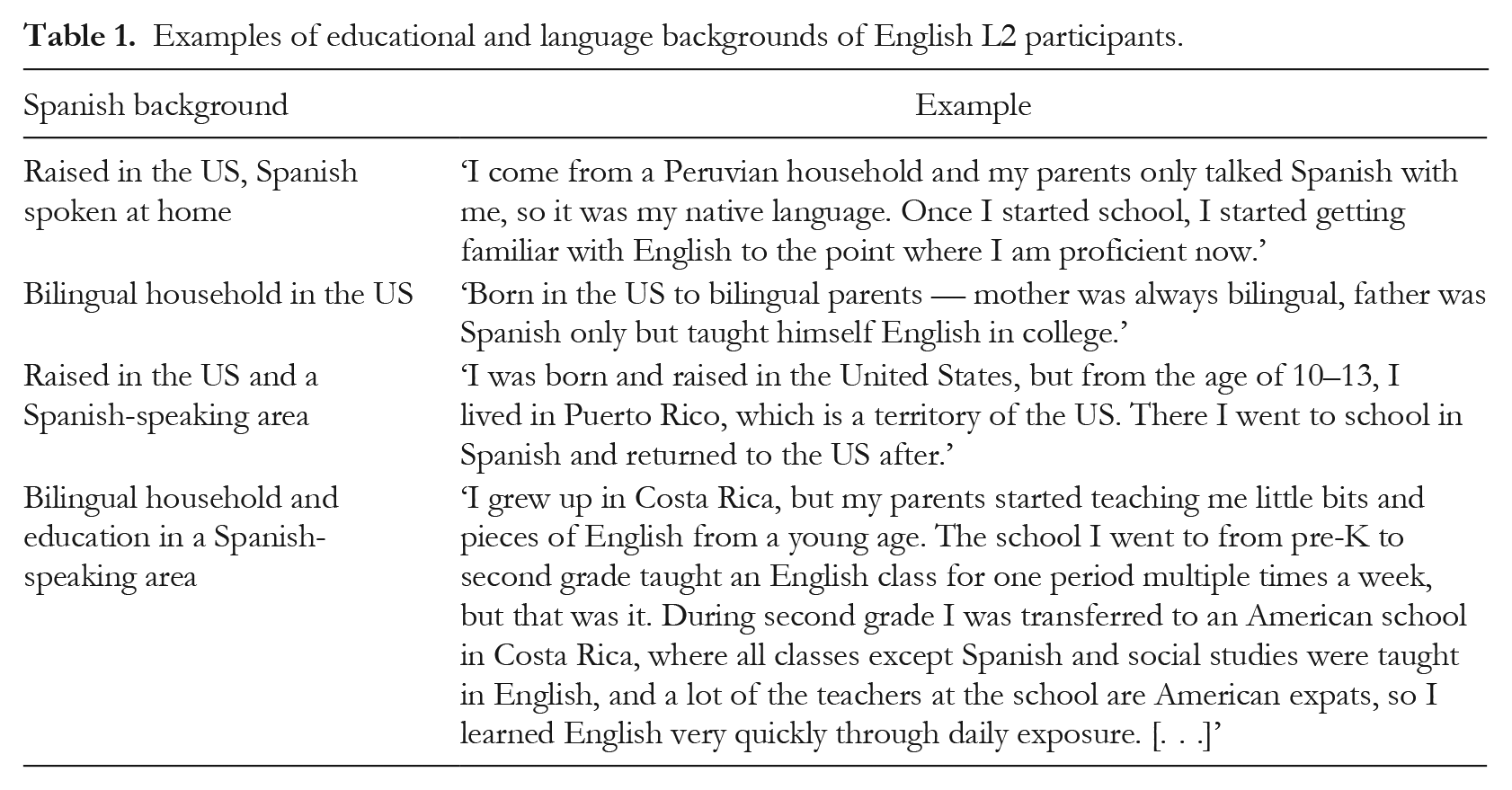

In the sample, 49.64% (n = 70) of participants identified Spanish as their first language and English as their second language, and 50.35% (n = 71) identified English as their first language. Participants in the Spanish group completed a four-question screener for Spanish language proficiency (M = 3.21, SD = .77). This screener was added to validate individuals’ self-reported language background. More descriptions of students’ language and educational backgrounds are in Table 1. The sample included 36.17% (n = 51) males, 48.93% (n = 69) females and 2.12% (n = 3) non-binary participants; 18 participants opted not to report their gender identity. Participants’ ethnicities included Latinx (44.68%), White (25.53%), Asian (6.38%) and Black (3.54%). Demographics do not include students who preferred not to report gender or race/ethnicity.

Table 1. Examples of educational and language backgrounds of English L2 participants.

Design

The study followed a 2 x 2 quasi-experimental design. Language status was an individual difference factor with two levels. Text structure was a randomly assigned factor, also with two levels. That is, L1 and L2 students were randomly assigned to read texts organized either by region or by topic.

Measures

Prior knowledge

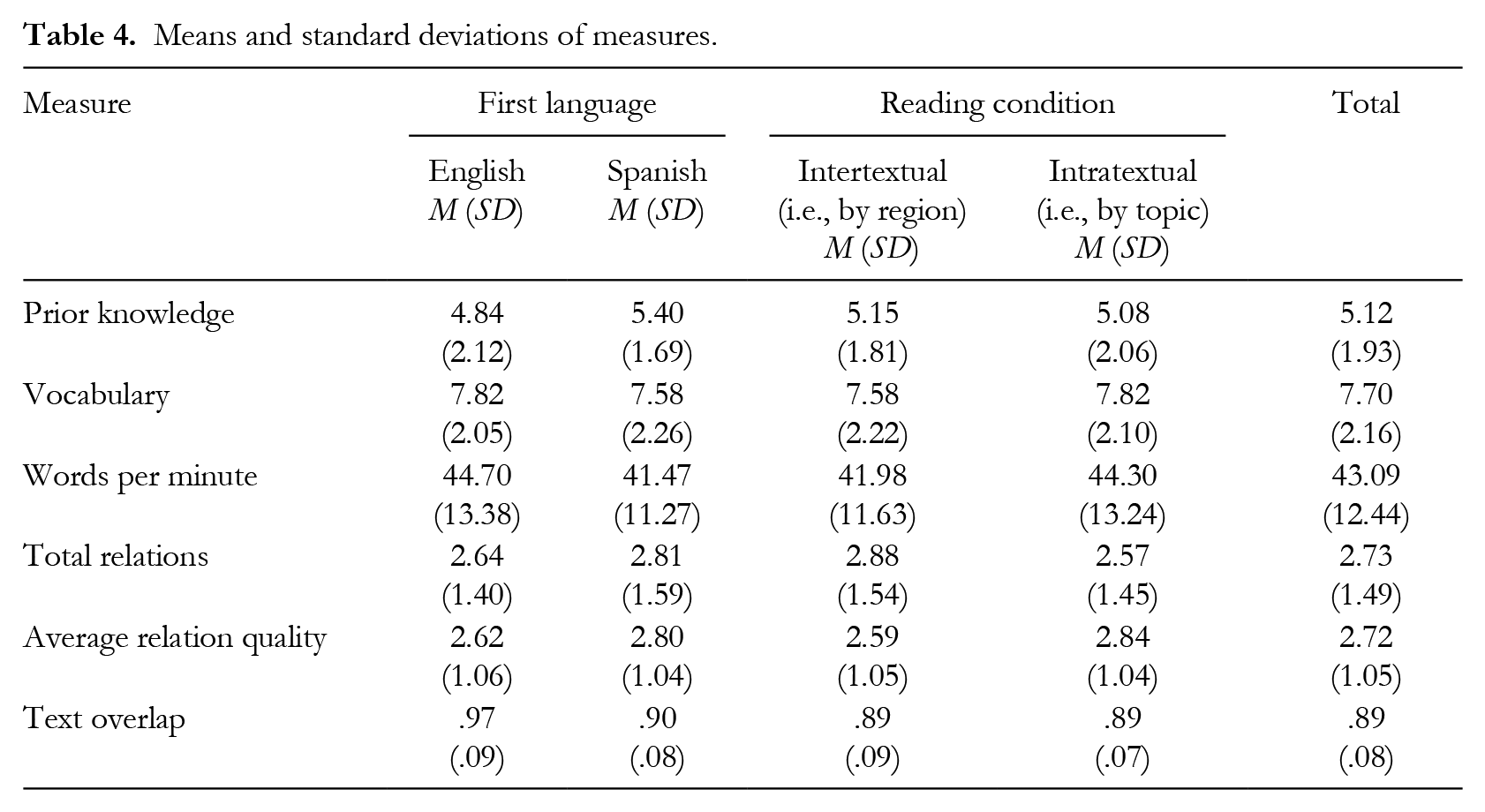

Participants answered 14 multiple-choice questions based on information in the two texts. Cronbach’s alpha reliability was .41 for the measure. The prior knowledge measure was researcher-created, based on key concepts and main ideas drawn from the study texts. Sample items are provided in Appendix 1. Due to the low reliability of this measure, scores are presented only descriptively (see Table 4).
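To illustrate how a reliability coefficient such as the one reported above is obtained, the following is a minimal sketch of Cronbach's alpha. The function name and the toy response matrix are hypothetical and are not taken from the study's data or analysis scripts; items are assumed to be scored 0 (incorrect) or 1 (correct).

```python
from statistics import pvariance


def cronbach_alpha(scores):
    """Cronbach's alpha for a participants-by-items score matrix.

    scores: list of rows, one row per participant, one numeric entry per item.
    """
    n_items = len(scores[0])
    if n_items < 2:
        raise ValueError("alpha requires at least two items")
    # Variance of each item across participants.
    item_vars = [pvariance([row[i] for row in scores]) for i in range(n_items)]
    # Variance of participants' total scores.
    total_var = pvariance([sum(row) for row in scores])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    # The ratio of variances is the same whether population or sample
    # variance is used, as long as both use the same denominator.
    return (n_items / (n_items - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical responses: four participants, three items.
toy_scores = [[1, 1, 1], [0, 0, 0], [1, 1, 0], [0, 0, 1]]
alpha = cronbach_alpha(toy_scores)
```

Low values, as for this measure, indicate that items do not covary strongly, which is why scores are treated only descriptively.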

Writing fluency

Writing fluency was assessed by asking participants to describe their favourite movie or TV show for two minutes. Specifically, participants were instructed to type as much as possible about their favourite TV show for two full minutes. This was done so that we could capture individuals’ writing fluency, or composition speed based on memory, with no reading materials provided. Participants generated between 17 and 75 words per minute.

English vocabulary

Ten pairs of analogies were taken from an old edition of the vocabulary section of the SAT. Cronbach’s alpha reliability was .52, and this increased to .56 when one item was deleted. Due to the low reliability of this measure, English vocabulary scores are only presented descriptively (see Table 4).

We compared participants’ scores on measures of prior knowledge, writing fluency and vocabulary across experimental conditions and language groups; however, no significant differences were found. These are, therefore, presented descriptively.

Multiple text task

The multiple texts task consisted of a reading phase and a writing phase.

Reading phase

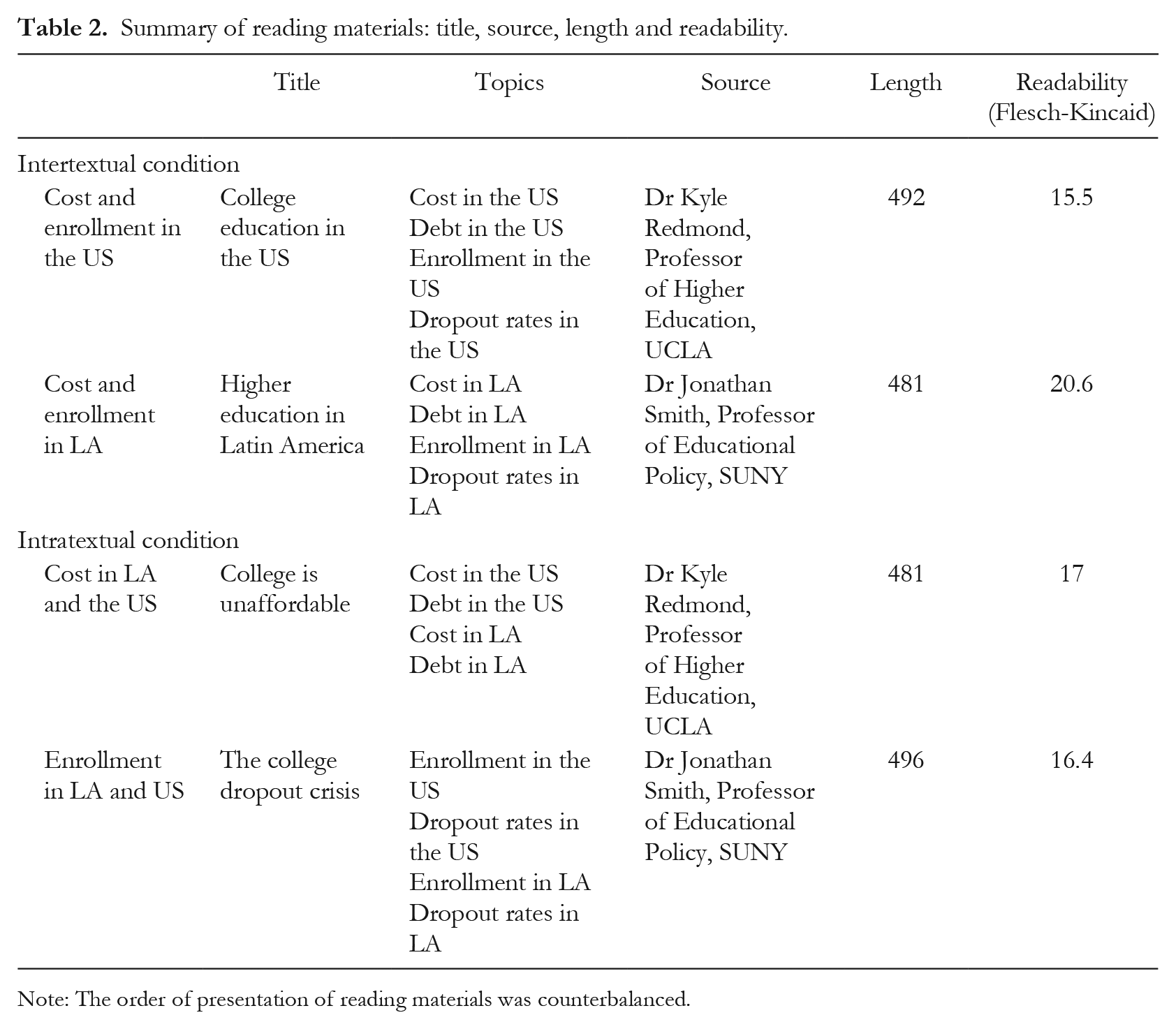

Participants were randomly assigned to one of two reading conditions (i.e., requiring intratextual or intertextual connection formation). Students in the intertextual condition were presented with two texts organized by region (i.e., one text on Latin America and the other text on the United States), such that comparing college education across these regions required students to form intertextual connections across texts. Students in the intratextual condition were presented with two texts organized by theme (e.g., graduation rates in Latin America and the United States, college cost in Latin America and the United States), such that comparing college education across these regions required students to form intratextual connections within texts. Texts were counterbalanced. Table 2 overviews topics in the texts.

Table 2. Summary of reading materials: title, source, length and readability.

Note: The order of presentation of reading materials was counterbalanced.

All four texts were designed to be trustworthy, attributing information to reputable academic authors. Crucially, students received identical information across intertextual and intratextual text conditions, only organized in different ways. To capture students’ recall and abilities to translate text-based content from the mental models that they formed into writing, students did not have the study texts available during writing.

Participants were asked to rate three items after reading each text: (a) ‘How interesting was this text?’ (b) ‘How well did you understand this text?’ and (c) ‘How well did you comprehend the text?’ All items were rated on a seven-point scale, and self-reported comprehension scores were computed by averaging the latter two items.

Writing phase

Students were then asked to compose a report comparing and contrasting education in the US and Latin America. On average, the reports produced were 249 words in length (SD = 133.70). Coding of essays was informed by prior work (i.e., Du & List, 2020).

Comparison quantity

We determined the total number of comparisons and contrasts that students identified and the overall number of comparisons generated. Although students could have generated any number of relations across topics and texts, there were four main types of relations we expected students to draw: comparing and contrasting the cost, rates of student debt, rates of enrollment and dropout rates across the United States and Latin America.

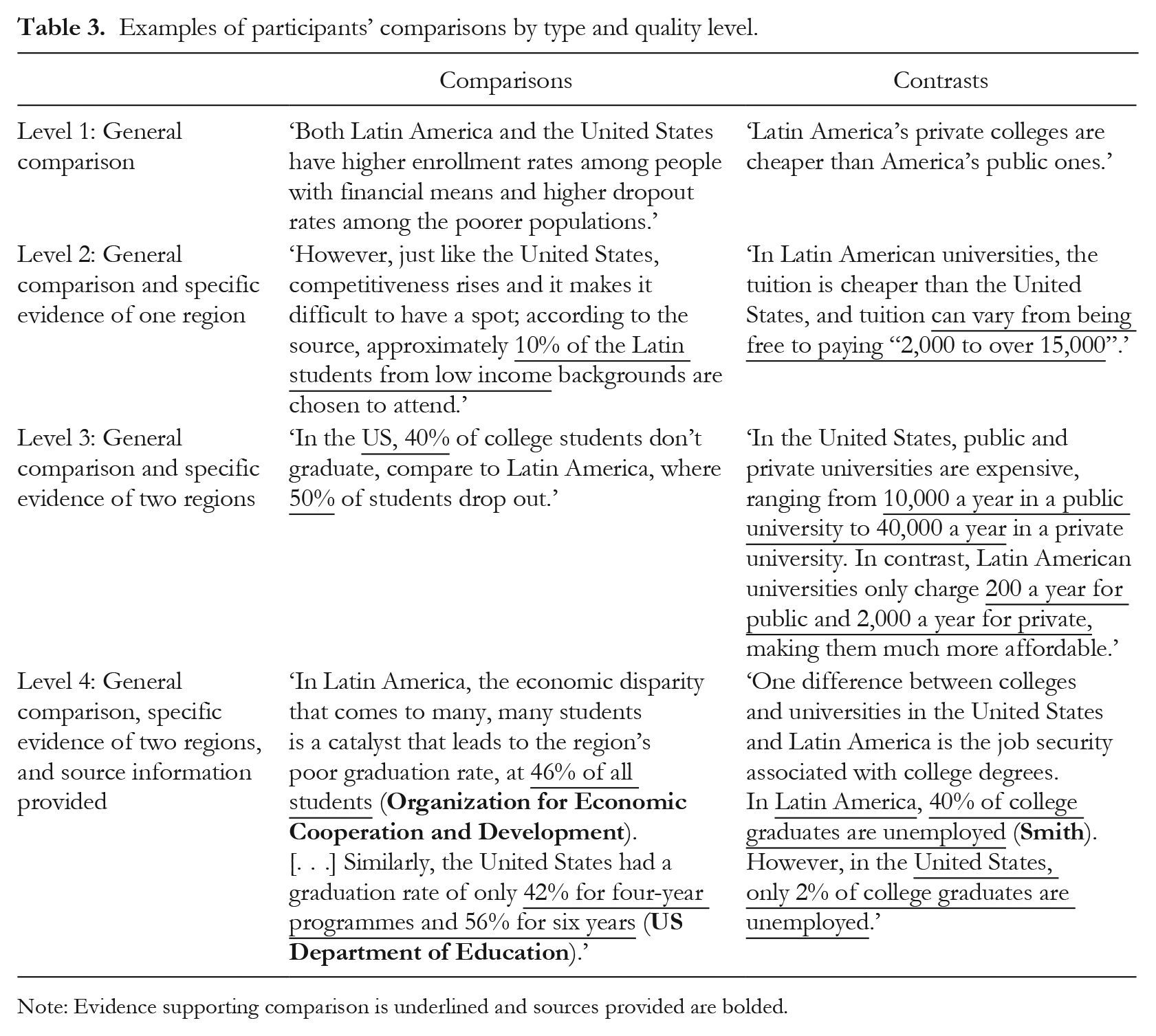

Comparison quality

Second, each comparison generated was scored for quality using a four-point rating scale. A score of one was assigned to general statements of comparison (1). A score of two or three was assigned when specific evidence about one (i.e., 2) or both (i.e., 3) regions was included in the comparison. A score of four was assigned when comparisons were both specific and elaborated (4). Students’ scores for each comparison were totalled and divided by the total number of comparisons generated, to create an average comparison quality score. Please see Table 3 for sample comparisons. Cohen’s Kappa inter-rater reliability, for all responses scored, was .75 (exact agreement: 81%).
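The scoring and reliability steps described above can be sketched as follows. This is an illustrative sketch only: the function names and the toy ratings are hypothetical, and the kappa shown is the unweighted form, which is an assumption, as the variant used is not specified.

```python
from collections import Counter


def average_quality(ratings):
    """Mean of the 1-4 quality ratings for one report; None if no comparisons."""
    return sum(ratings) / len(ratings) if ratings else None


def exact_agreement(rater_a, rater_b):
    """Proportion of comparisons given the same rating by both raters."""
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same comparisons."""
    n = len(rater_a)
    p_observed = exact_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per category.
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1:
        return 1.0  # both raters used a single, identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical ratings from two raters for eight comparisons.
rater_1 = [1, 2, 3, 4, 1, 2, 3, 4]
rater_2 = [1, 2, 3, 4, 1, 2, 3, 3]
kappa = cohens_kappa(rater_1, rater_2)
agreement = exact_agreement(rater_1, rater_2)
```

Kappa is lower than exact agreement because it discounts the agreement expected by chance, which is consistent with the pattern of the two values reported above.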

Table 3. Examples of participants’ comparisons by type and quality level.

Note: Evidence supporting comparison is underlined and sources provided are bolded.

Connectives use

We used the Tool for the Automated Analysis of Cohesion (TAACO; Crossley et al., 2016) to calculate the number of connectives included in each response. We included all of the classes of connectives analysed by TAACO, including conjunctions (e.g., and), disjunctions (e.g., or), additions (e.g., further) and contrasts (e.g., on the contrary), among others (see Crossley et al., 2016 for a full list). We considered connectives use to reflect integration (List et al., 2019; Wiley & Voss, 1999), in addition to being an indicator of cohesion (Crossley et al., 2019).
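As a rough illustration of what a connectives count involves, the sketch below tallies whole-word matches against a short word list. It does not reproduce TAACO: the list is a small, hypothetical subset built from the examples mentioned in this article, and the function name is an assumption for illustration.

```python
import re

# Hypothetical, abbreviated connective list; TAACO's own lists are far larger.
CONNECTIVES = [
    "and", "or", "further", "moreover", "however",
    "on the contrary", "because", "due to",
]


def count_connectives(text, connectives=CONNECTIVES):
    """Return a dict mapping each connective to its whole-word count in text."""
    lowered = text.lower()
    counts = {}
    for phrase in connectives:
        # Word boundaries prevent matches inside longer words (e.g., "or" in "for").
        pattern = r"\b" + re.escape(phrase) + r"\b"
        counts[phrase] = len(re.findall(pattern, lowered))
    return counts


sample = ("College is costly in the US; however, enrollment is higher, "
          "and debt is common because tuition is high.")
sample_counts = count_connectives(sample)
total_connectives = sum(sample_counts.values())
```

A simple count like this cannot distinguish connective from non-connective uses of a word, which is one reason a dedicated tool was used in the study.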

Procedure

First, students completed a series of preliminary questions assessing their knowledge of concepts related to college education in the US and Latin America and completed a measure of writing fluency. Second, students completed a multiple text task which involved reading two texts comparing college education in the US and in Latin America and composing a written response comparing and contrasting education across these two regions. Texts were not available to students during writing. Finally, students completed an English vocabulary measure and provided demographic information. Before starting the online survey, all students were presented with a consent form and assented to participation.

Results

Quantity and quality of relations, connectives use and textual overlap across language groups and experimental conditions

We performed a two-way Multivariate Analysis of Variance (MANOVA), with reading condition (i.e., intertextual or intratextual) and first language (i.e., English or Spanish) as factors, to examine each of the four outcome measures of interest (i.e., number of comparisons generated, average relation quality, connectives use and text overlap). Normality and homogeneity assumptions were met. Descriptive information is included in Table 4.

Table 4. Means and standard deviations of measures.

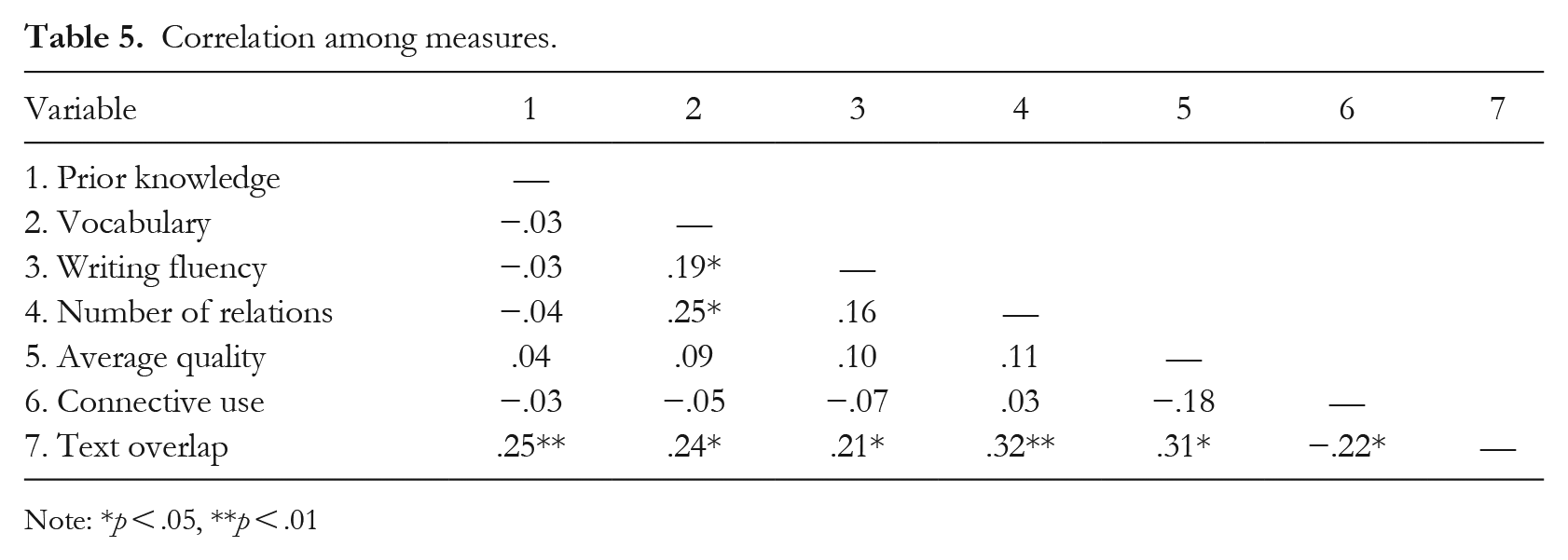

No significant differences were identified. That is, neither students’ language status nor the organizational structure of texts, nor their interaction, was associated with differences in the number of comparisons (ps > .32), the quality of relations generated (ps > .18), students’ connectives use (ps > .17) or overlap with texts (ps > .20). See Table 5 for correlations.

Table 5. Correlations among measures.

Note: *p < .05, **p < .01

Supplemental analyses

Given that no differences were found, we ran two sets of supplemental analyses. First, we examined differences in students’ ratings of text interestingness and comprehensibility across language groups and text conditions. Second, we analysed whether students’ generated relations differed in quality according to the type of comparison drawn (i.e., a comparison or a contrast). Prior research has examined the role of topic interest as a component of topic familiarity in reading comprehension (e.g., Bråten et al., 2018; McCrudden et al., 2016) and the role of interest to select successful reading strategies (Salmerón et al., 2006).

Differences in ratings of texts’ interestingness and comprehensibility

First, we examined the effects of language group and reading condition on students’ ratings of text interestingness and comprehensibility.

A two-way MANOVA indicated that there were no significant main effects of language group or reading condition (ps > .051) on ratings of interestingness or comprehensibility. However, we found a significant interaction between reading condition and language status in students’ ratings of texts’ interestingness, F(1, 130) = 4.07, p < .05, η2 = .03, and comprehensibility, F(1, 130) = 5.18, p < .03, η2 = .04. Follow-up two-way ANOVAs reflected the same significant interactions. Bonferroni-adjusted comparisons indicated that, when texts were organized by region, English L2 speakers rated them as more interesting (M = 5.69, SD = .21) than did their English L1 counterparts (M = 4.92, SD = .22), p = .01. Similarly, Bonferroni-adjusted comparisons showed that L2 participants reported a significantly higher level of comprehensibility for texts organized by region (M = 6.32, SD = .75) than for texts organized by topic (M = 5.71, SD = 1.03), p < .01. L1 English speakers did not differ in their ratings of texts’ comprehensibility across conditions.

Furthermore, a positive correlation was found between ratings of interestingness and comprehensibility, r(132) = .55, p < .001. That is, students who reported higher interest levels also rated the texts as easier to comprehend.

Differences in quality across comparison types

Finally, we were also interested in whether students’ generation of comparisons vis-à-vis contrasts differed in quality. We conducted a three-way mixed-effects ANOVA with reading condition and language status entered as the between-subjects factors and comparison type (i.e., comparisons or contrasts) entered as the within-subjects factor. Results showed that the types of comparisons that students generated differed significantly in quality, F(1, 121) = 20.64, p < .001, η2 = .15. Participants described contrasts (M = 2.49, SD = 1.54) better than comparisons (M = 1.73, SD = 1.47). Moreover, there was a significant interaction between the type of comparisons drawn and participants’ first language status, F(1, 121) = 4.53, p < .05, η2 = .04. Specifically, English L1 participants obtained, on average, higher scores when drawing comparisons across texts (M = 1.83, SD = 1.47) as compared to their Spanish-speaking counterparts (M = 1.64, SD = 1.47). In contrast, English L2 participants obtained higher scores when drawing contrasts (M = 2.76, SD = 1.48) as compared to L1 English participants (M = 2.23, SD = 1.59).
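For readers wishing to relate the reported F statistics to the effect sizes, the following sketch recovers an effect size from F and its degrees of freedom. It assumes that the reported η2 values are partial eta squared, which the text does not state explicitly; the function name is hypothetical. Under that assumption, partial η2 = (F × df_effect) / (F × df_effect + df_error).

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F statistics reported in the supplemental analyses.
effects = {
    "interestingness interaction": (4.07, 1, 130),
    "comprehensibility interaction": (5.18, 1, 130),
    "comparison type": (20.64, 1, 121),
    "comparison type x language status": (4.53, 1, 121),
}

# Round to two decimals, matching the precision of the reported values.
recovered = {name: round(partial_eta_squared(*stats), 2)
             for name, stats in effects.items()}
```

Under this assumption, the recovered values agree with the reported effect sizes to two decimal places.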

Discussion

We sought to examine integration among L1 and L2 college-level English writers, composing written responses based on multiple texts. This study contributes to prior research in two primary ways. First, this study was designed to both look at students’ writing based on multiple sources of information and to compare writing between participants from two different language backgrounds (i.e., English and Spanish). Previous studies looking at multiple text integration and organization have mostly examined students from one language group (e.g., Gil et al., 2010; Plakans & Gebril, 2017). However, we did not find differences across language status in the variety of writing-related measures examined. This stands in contrast to our hypotheses and substantial prior work with elementary and secondary students finding differences in writing and reading performance across English L1 and L2 students (Hyland & Milton, 1997; Lee, 2003; Lesaux et al., 2010).

Second, we examined how organizational structure can play a role in writing, when students were asked to compose a response based on multiple texts. We did not find differences in writing performance across reading conditions. We discuss results first, in terms of (the lack of) findings related to language status and then in terms of texts’ organizational structure.

Language status

Although we examined a variety of outcome measures of interest (e.g., comparison quality and quantity), none of these integration-related measures were found to differ in association with students’ language status. We think this lack of significant differences can be attributed to the variety of educational backgrounds represented in our sample (see Table 1). Specifically, several participants in the L2 group reported completing their K-12 education in the United States. Other participants reported speaking Spanish only at home, but English everywhere else. These results highlight the diversity of linguistic and educational backgrounds represented among Spanish speakers in the United States. Moreover, given that the L1 and L2 English writers included in our sample did not differ on measures of either writing fluency or vocabulary performance, it is logical that no differences in writing performance emerged (see Table 4).

At the same time, it is important to recognize that our findings are inconsistent with a large body of work documenting challenges in English language abilities among L2 students in the United States (Beck et al., 2013; National Center for Education Statistics, 2011). These findings can be explained in a number of ways. First, all of the participants in our study were college students, meaning that they likely had previous experience writing from informational sources and that their writing abilities may have been a selective factor in their admission to college. In other words, by targeting only college students, we likely reduced the variability in writing skills evidenced among L2 writers, particularly given that even L2 speakers would have needed to demonstrate English writing proficiency for college admission, at least to some extent. Sánchez and de Tembleque (1986) previously highlighted the importance of being conscious of students’ language skill level as well as the environment surrounding students to provide the appropriate educational interventions and assessments. Second, the writing task we used focused on students’ abilities to draw comparisons between two short texts. In this way, the task we used does not allow us to evaluate students’ more complex writing (e.g., argument formation based on more than two academic texts of considerable length). Using more demanding measures of academic writing may point to differences in L1 and L2 students’ English writing proficiency.

Reading condition

We further did not find differences in integration performance across reading conditions. We think this is attributable to the texts used in our study. That is, we created texts to maximize students’ abilities to form connections. We did this by including only two texts, presenting topics in a parallel fashion and including headings to indicate main ideas. In this way, both sets of texts were likely to facilitate intratextual and intertextual integration (e.g., headings have been found to promote integration and recall: Bråten et al., 2011; Meyer & Poon, 2001; Schwartz et al., 2017). Moreover, the texts we used were relatively short and written for a general audience, possibly facilitating comprehension and integration as a result.

Supplemental analyses

Although our target manipulations were not significant, a number of interesting findings emerged from supplemental analyses in this study. First, introducing L2 English students to a text about college education in Latin America afforded an advantage in both interestingness and comprehensibility. While the importance of culturally relevant texts has long been emphasized (Ebe, 2012; Sharma & Christ, 2017; Steffensen et al., 1979), results from this study may provide some further quantitative evidence for the importance of students’ interest in and responsiveness to texts. Indeed, students’ perceptions of texts’ interestingness and personal relevance, in this case, may have further buoyed their perceptions of texts’ comprehensibility. Second, when we examined students’ abilities to identify comparisons vis-à-vis contrasts, we found an overall quality advantage for contrasts, and this advantage was further moderated by language status. Prior work has suggested that the inclusion of conflict across texts prompts more elaboration on the part of learners than does the inclusion of consistent cross-textual information (Braasch & Bråten, 2017; Braasch et al., 2012; List & Du, 2021). Results from this study suggest that this conflict advantage extends to students being asked to identify contrasts across texts, even when texts do not specifically conflict, and, moreover, that this advantage may interact with students’ language status. As such, these results, as well as others from supplemental analyses, point to the importance of collecting more process data and micro-level writing indicators to examine L1 and L2 English students’ writing in English.

Limitations

We must acknowledge a number of limitations. First, the prior knowledge measure had low reliability, suggesting that our questions did not fully capture the extent of students’ familiarity with the topic of college education in the US and Latin America. However, recent studies have shown low reliability to be a common problem for prior knowledge measures (Kulikowich et al., 2023); thus, prior knowledge needs to be assessed multidimensionally in future work (McCarthy et al., 2023). Likewise, the reliability of our vocabulary measure was low, likely due to the limited number of items included. Thus, the reliable assessment of individual difference factors constitutes an important direction for future work. Second, students were not able to return to the two texts provided after completing the reading phase of the study, and were not required to take notes. This was because the writing task was intended to capture students’ translations of their mental models of multiple texts, formed during reading, into writing. Nevertheless, in more naturalistic settings, students often have texts available as they write and are able to take notes. Note-taking has been shown to promote different cognitive processing when learning from texts (Gil et al., 2008). Two additional limitations may be drawn in relation to the multiple text synthesis writing task used in this study. First, it is possible that the reading materials used were too easy for our university sample, such that students were able to draw intratextual and intertextual connections, regardless of language status or text structure condition. Evidence for this comes from students’ average relation scores, which were 2.73 out of a possible 4. Moreover, while we assumed that university students would be familiar with the comparison and contrast genre, students’ task conceptions were not assessed in this study. Likewise, it is possible that differences in intratextual and intertextual connection formation would have emerged had students been asked to complete a more challenging writing task, such as composing an argument, which involves greater difficulty when selecting information (González-Lamas et al., 2016).

Conclusion

This study was conducted at the intersection of research on students’ synthesis writing based on multiple texts and efforts to examine the experiences of L1 and L2 writers. In particular, this study explored three indicators of integration in students’ writing (i.e., the types of document models reflected, the types of connections formed and the lexical markers used) to identify discernible patterns across L1 and L2 English writers. No differences were identified, suggesting either that, at the college level, students’ L1 and L2 English writing is highly similar, contrary to what has been found in work with younger samples, or that additional markers of higher-level writing should be investigated to identify discernible patterns in these two samples. Exploratory analyses did find that language background interacted with texts’ organizational structure in impacting students’ interest and comprehensibility ratings of texts. In particular, L2 speakers rated texts focused exclusively on Latin America (i.e., organized by region) as more interesting and easier to comprehend than texts organized by topic. Although preliminary, this is a potentially important finding, linking students’ language or cultural backgrounds to their interactions with texts during synthesis writing. We are hopeful that this finding, as well as additional higher-level writing processes, will be explored in future work.

Appendix 1

| Approximately what percentage of US college students attend private institutions? |
| --- |
| 13% |
| 27% |
| 45% |
| 57% |
| I don’t know |