Abstract

There are substantial differences between the information required in a diagnostic histopathology report and an experimental pathology report. A diagnostic report rests on the authority of the person issuing it, so there is less emphasis on including in the report the data and analysis on which the diagnosis is based. By contrast, an experimental report gains its authority from the integrity of the data and the objective analysis of that data. It is recommended that the current diagnostic reporting of the histopathology component of toxicological studies be changed to a more experimental report approach.

Introduction

The hope is that the questions in this article will stimulate a wide-ranging discussion of what constitutes the appropriate reporting of the methods, data, and results of the histopathology component of toxicological experiments. It should be made clear that the article deals only with reporting a diagnosis or experimental result itself; it is not intended to extend to how a result is interpreted, or what actions should be implemented once a diagnosis has been reached. It raises issues outside of the objectives in “Best Practice Guideline: Toxicologic Histopathology” (Crissman et al. 2004, section 2). Although not explicitly addressed in the current article, similar considerations also apply to the reporting of gross findings.

This article may appear to emphasize some weaknesses in diagnostic reporting practice, so it must be stressed that for reporting diagnostic work, diagnostic reporting practice is entirely adequate. The question raised here (amongst others) is: is a diagnostic reporting standard appropriate for toxicological experimental work? In the first section of this article, the broad differences between the diagnostic and the experimental processes are drawn out. Subsequently, the implications of these differences for the reporting of pathology diagnoses and experimental pathology reports are set out.

I would urge that initially the issue of regulatory compliance be deliberately excluded from any discussions of this subject, and our current regulatory practices be continued. If a consensus on one or more approaches emerges, and they are not current practice, then how they are implemented in a regulatory setting can be decided as a separate issue.

Fundamental Distinctions

There is a very large distinction to be drawn between diagnoses and experimental results, especially with regard to how they are determined. What is an acceptable diagnostic practice may not be valid in experimental work. This has consequences for how valid data should be reported from the two processes, and they should not be confused.

Diagnosis

This article follows the approach taken to the diagnostic procedure by Eddy and Clanton (1988) and Leadley and Lusted (1959), but in a much abbreviated form. A diagnosis is an opinion on a disease or condition. The process of arriving at a clinical diagnosis starts with an original complaint, so there is the initial assumption that there is something that needs to be explained. Routine, nonspecific investigations (e.g., history, clinical examination) are then made. Findings are commonly qualitative and recorded with ordinal grades such as mild, marked, or severe. On this information, the clinician’s list of differential diagnoses is drawn up. Further, more specific tests can then be requested with the object of sorting the differential diagnosis list into one most probable cause (the diagnosis) and the other, less probable, members of the list. Methods include “diagnosis by exclusion,” in which specific tests have ruled out all but one of the differential diagnoses, so the remaining member of the list is the diagnosis, without there being any confirmatory evidence for that diagnosis; and “presumptive diagnosis,” in which one of the differentials may be so compelling and urgent that it must be assumed to be present on the slightest indication. To veterinarians any sign of foot and mouth disease, or to medical clinicians any indication of bacterial meningitis, would be examples. The tests used are ad hoc and can be recursive, the results of one test determining which subsequent tests are run and how they are interpreted. The interpretation of test results rests on the diagnostician using his or her training and experience to decide what a positive or negative result is. At the end of this open-ended process there is a diagnosis. On a single set of clinical signs, several diagnosticians can each reach their own diagnosis, all of which may be mutually discordant and yet all still valid. So an essential part of the diagnosis is who promulgates it. Several different diagnoses can all be true if the animal has several different diseases simultaneously. If a diagnosis is wrong, the original complaint usually persists and the process automatically restarts.

A diagnosis rests ultimately on the authority of the individual who proposes it. So, in the UK it is a statutory criminal offence under the Veterinary Surgeons Act (1966) to utter a diagnosis of an animal’s condition without being a member of the Royal College of Veterinary Surgeons (veterinarians fully qualified in other countries have been threatened with prosecution for diagnosing conditions in animals in the UK).

Experimental Results

An experimental result is the outcome of a substantially different process. Two or more mutually incompatible hypotheses are initially postulated. One of them is almost universally the “null hypothesis” (H0), which assumes there are no differences between the groups. The minimum further hypothesis required (H1) is a simple or composite hypothesis of one or more specific differences, which must be incompatible with H0. A planned procedure is applied to randomly assigned groups of samples or individuals, so that the data should distinguish between the mutually incompatible hypotheses under test. An objective method of analysis is chosen, ideally even before the data are gathered. The most common toxicological experimental design involves a control group, in which the compound of interest is omitted, and these samples, or individuals, are taken as representing the background range of conditions of animals without treatment, that is, normal animals. Comparisons between groups are made under the null hypothesis (H0): this is the explicit initial assumption that there is no difference between the groups. This assumption can only be rejected if there is objective evidence from the data that H0 is very substantially improbable, usually at the 95% confidence limit. Although historic information may be used to frame the hypotheses, or in interpreting a result, historic information is not used when deciding if H0 can be rejected; only data generated under the conditions of the experiment itself are used. So the result of an experiment rests on the validity of the methods used, the data, and their analysis. Who generates and analyzes the data is immaterial. If the data are plausibly open to different analyses that give different conclusions, then an experiment is regarded as reaching no result. Wrong experimental conclusions do not automatically re-present themselves for reanalysis in the same way that misdiagnosed patients usually do. Experimental reports are normally peer-reviewed prior to publication, although this process is not usually included in the report.
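As a minimal sketch of what testing under H0 can look like for a control and a treated group, the example below compares the incidence of a lesion in the two groups. The counts are invented for illustration, and Fisher's exact test (one-sided) is chosen here only as a familiar objective method; the article itself does not prescribe any particular test.

```python
from math import comb


def fisher_exact_one_sided(control_affected, control_n, treated_affected, treated_n):
    """One-sided Fisher exact p-value.

    Probability, under H0 (no difference between groups) and with the
    table margins fixed, of seeing at least `treated_affected` affected
    animals in the treated group.
    """
    total_affected = control_affected + treated_affected
    total_n = control_n + treated_n
    denominator = comb(total_n, total_affected)
    largest_possible = min(total_affected, treated_n)
    numerator = 0
    for k in range(treated_affected, largest_possible + 1):
        # k affected animals fall in the treated group, the rest in control
        numerator += comb(treated_n, k) * comb(control_n, total_affected - k)
    return numerator / denominator


# Hypothetical incidence data: 1 of 10 controls and 6 of 10 treated animals affected.
p_value = fisher_exact_one_sided(1, 10, 6, 10)
alpha = 0.05  # corresponds to the 95% level mentioned in the text
print(f"one-sided p = {p_value:.4f}; reject H0 at 95%: {p_value < alpha}")
```

Only the data of the experiment itself enter the calculation, and anyone given the same counts and the same method obtains the same probability, which is the sense in which the identity of the analyst is immaterial.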

Methods that are acceptable in diagnostic practice can be considered to be totally invalid in arriving at an experimental “result.” A “presumptive result”—based on the practical or economic consequences of missing a particular result rather than the dispassionate analysis of data—is clearly unacceptable. A “result by exclusion”—the experimenter has ruled out all but one of the many conclusions of which she or he can think, so the result must be this last one (without any positive evidence for that conclusion)—is unsupportable because there is always the possibility that an experimenter’s list of possible results is not exhaustive.

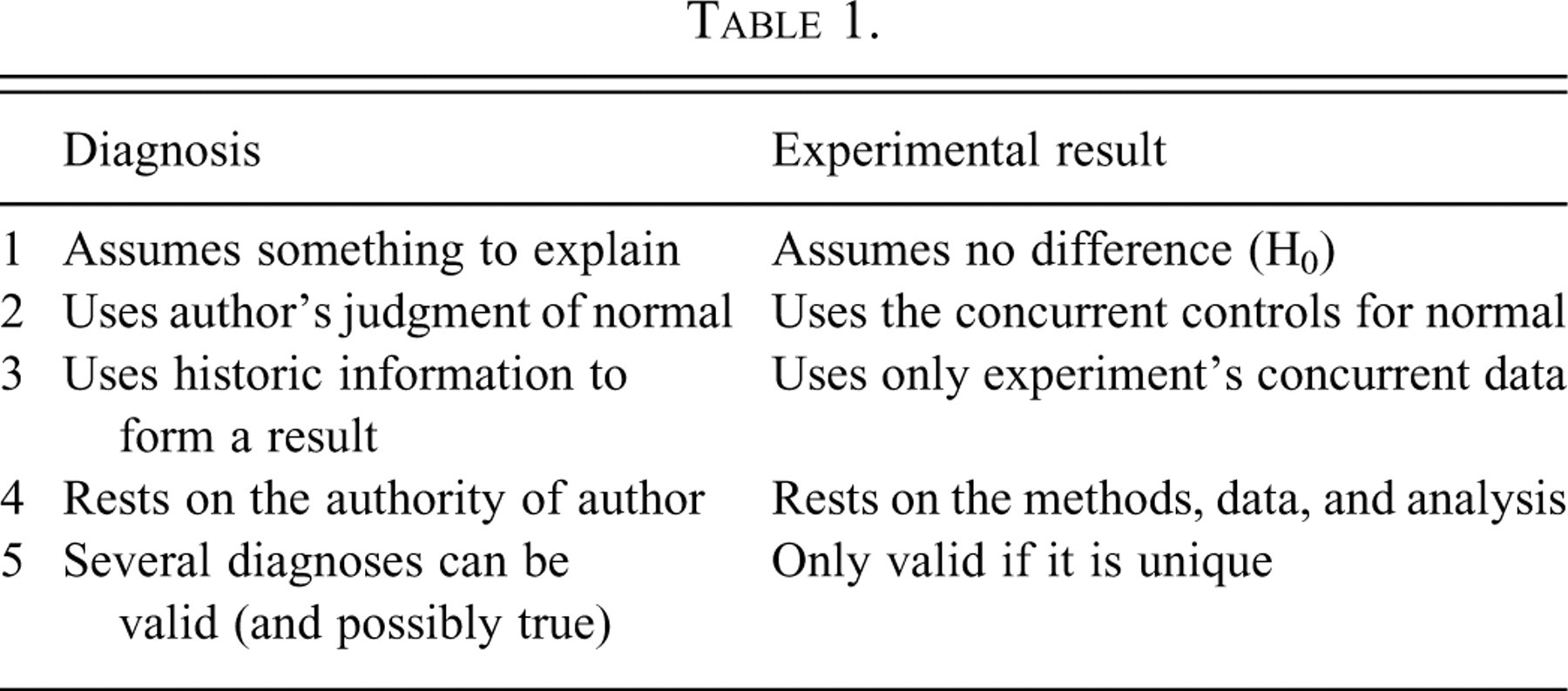

Attention is drawn to these differences in the process of arriving at a diagnosis compared with an experimental result (Table 1).

Reporting Consequences

These differences give rise to large differences in the information required in reports.

For a histopathological diagnostic report to be valid, it needs to include the identity of the material examined (who it came from, what it was), the diagnosis, and its author. In anatomic pathology reports, it is customary to give a description of the material and its examination, but this is not an essential part. This description is commonly done “by exception”; so all the individual normal features are covered only in general terms such as “no abnormality detected” or “otherwise unremarkable.” Only germane abnormal features are included individually, often in only very brief, stylized, terms. For simple histological diagnoses, it is common practice to simply state the diagnosis without any description, and sign off the report. In the reports of other branches of pathology, such as microbiological reports, the diagnoses are only ever simply stated without any description of the morphological or cultural evidence to support them.

An experimental report demands that the materials used, the method by which these materials were employed, the data generated, the logical process by which the data have been analyzed, and the result are all reported. The traditional standard is sufficiently detailed so that another competent researcher with the report should be able to repeat all stages of the work (see Instructions to Authors for Toxicologic Pathology, for example, at http://www.toxpath.org/instructions.asp). Although it is customary for the contributors to scientific reports to identify themselves, this is not always necessary. There are well-known examples of individuals deliberately not putting their names to their work (authors avoiding persecution by a repressive regime, e.g., Lise Meitner) or using pseudonyms (where openly publishing threatened his employment, e.g., William Gosset) and their work is still fully accepted by the scientific community.

The common features of these two reporting procedures are

- The material examined needs to be identified.
- The conclusion needs to be given, either a diagnosis or a result.

The differences are

- The methods in sufficient detail to repeat the work independently need to be given for experimental reports, but are not essential to support a diagnosis.
- The data need to be given for an experimental report to be valid, but are not essential to support a diagnosis.
- A logically sound analysis of the data giving a unique outcome needs to be given to support an experimental result, while a simple statement of an opinion is frequently sufficient for a diagnosis.
- An experimental result is valid independently of its authors; a diagnosis is dependent on the authority of its author.

Reporting Toxicological Pathology Studies

In toxicological pathology, we currently report by blending the two distinct traditions of diagnosis with experimental results.

The process by which histological data are generated and reported in routine anatomic toxicological studies follows this general pattern:

The slides are delivered and the pathologist examines them—commonly on an animal basis, but some prefer an organ-based approach. A record is made of abnormalities, sometimes recording above a baseline, sometimes without a baseline. This is usually an ordinal categorizing process with a grade or score to indicate the severity of the finding (e.g., mild, moderate, marked). From this examination, a list of lesions and organs putatively affected by treatment is drawn up. If a treatment effect and no observed effect level (NOEL) can be easily identified, the reporting is relatively simple. The methods are outlined; the data are drawn up as individual animal findings; a tabulation of the scores against sex and dose, a description of the salient features, and the narrative results are all written; and this then constitutes the report. The process is then “peer-reviewed,” in which a second pathologist confirms the validity of the work (although this process is not included in the report itself, so it will not be examined further in this article).

Potential problems arise when the data are not so clear-cut that the result is obvious. This is a common situation, especially when deciding a NOEL.

Equivocal Findings

When the initial qualitative examination gives an equivocal result as to a change’s relationship to treatment, pathologists may undertake additional examinations of the slides. They gather more detailed data and use better analysis to form their opinion. For example, some pathologists will examine (in a manner blinded to group) the first control animal with the first animal of the treated group of interest and decide which shows the greater degree of the putative, treatment-related change. They will then take the second (then third and so on) pairs and repeat the process exhaustively. At the end, they have a measure of the difference between the groups in how many of these pairings fall to the control or treated groups. The result is decided on the total numbers of pairings with the greater effect from each group. For example, with group sizes of 10, some pathologists will use >7 pairings with the treated animal of each pair showing the greater degree of change (some use >6, others only >8) as convincing evidence of a treatment-related effect.

Whether the pathologists who work thus recognize it, like it, or just ignore it, they are producing data and are analyzing it in the appropriate manner for the Sign test (Conover 1999). Should they care to, they can put formal confidence limits on the data that they have generated and analyzed—those who accept >8 are testing at the 99% confidence limit, those who use >7 are testing at the 94% confidence limit, and those who use >6 are rejecting the null hypothesis at a rather lax 82% confidence limit. They are conforming to the experimental norm of producing unbiased data that distinguishes the null from the alternative hypotheses and are then testing it under the null hypothesis with a mathematically rigorous objective method. If the result of such an examination is reported, but not the method, data, and its analysis, then the norms of scientific reporting are being ignored.
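The confidence figures quoted above can be checked with a few lines of arithmetic. The sketch below assumes a one-sided Sign test on 10 independent pairs with no tied pairs, and reads “>7” as “at least 8 of the 10 pairings favor the treated animal”; the function name is introduced here purely for illustration.

```python
from math import comb


def sign_test_one_sided_p(n_pairs, n_treated_greater):
    """P(X >= n_treated_greater) for X ~ Binomial(n_pairs, 0.5).

    Under H0 each pairing is equally likely to favor the control or the
    treated animal, so this is the one-sided Sign test p-value.
    """
    tail = sum(comb(n_pairs, k) for k in range(n_treated_greater, n_pairs + 1))
    return tail / 2 ** n_pairs


# Thresholds described in the text for group sizes of 10.
for threshold in (6, 7, 8):
    # ">threshold" pairings means at least threshold + 1 pairings
    p = sign_test_one_sided_p(10, threshold + 1)
    print(f">{threshold} of 10: one-sided p = {p:.4f}, confidence = {100 * (1 - p):.1f}%")
```

Under these assumptions the tail probabilities are 176/1024, 56/1024, and 11/1024, which agree with the 82%, 94%, and 99% levels given above to within rounding. A two-sided formulation, or any tied pairs, would change the figures, which is one reason the formulation used needs to be reported.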

Articles covering the full range of these ad hoc tests have been published: surveys to establish their frequency of use (Holland 1996, 2001), and more detailed examinations of their relative power, strengths, and weaknesses, for example in the face of nonresponding animals (Holland 2005, 2010).

When toxicological pathologists were asked in an anonymous survey to give the frequency with which they use the various methods beyond simple scoring, all responders claimed to routinely or commonly use at least one of them (the full data set is available in Holland 1996). Also, during peer review, when differences of opinion arise as to the association with treatment of a slight change, if the reviewer specifically asks, it is usual to find that the reporting pathologist has used at least one further method beyond simple scoring. So generating further data that are more specific and extensive than simple scoring is a very common, if unreported, practice.

The question for debate here is, how should these more specific methods, the data generated, and their analysis be reported, if at all?

Taking a diagnostic standpoint: any test, and its analysis, is a matter for the diagnostician, and there is no compelling need to report the detail of how they arrive at their diagnosis; ultimately the conclusion rests on their authority. This is explicitly the position of Dodd (1988) on toxicologic pathology results. So currently the notes, aides-mémoire, data, and analyses produced by pathologists in reaching their conclusions are not needed as part of the report of the study. Hence, these further examinations are common, but then simply excluded from the report. This is implicitly the diagnostic approach.

On the other hand, an experimental report needs to include the methods used, the data and its objective analysis, as well as the result. The level of detail required needs to be sufficient to allow faithful repetition of the experiment. This detail of reporting is needed so that others can form an independent assessment of the soundness and validity of the work reported and, if need be, confirm or refute it by repetition. Not reporting the method, the data, or its analysis when claiming a result based on them removes the possibility of independent assessment of the scientific validity of the results. Is there any other branch of experimental science in which experiments are reported and results claimed in which the methods, data, and analysis that support those results are not reported with them? In the behavioral sciences, very ill-defined concepts such as the effect of non-drug (talking and behavioral) treatments of depression have been rigorously compared with each other and drug therapies using the subjective opinions of mentally ill patients as the raw data and then reported fully to experimental reporting standards (e.g., McLean and Hakstian 1979). Can we not reach this universally accepted standard in experimental toxicologic histopathology? Our raw data are also subjective opinions, but of mentally healthy, highly trained professionals under laboratory conditions.

Personal Recommendation

A range of attitudes to the issues above are supportable. My personal view is that histopathology experimental reports should satisfy the norms of science, so I would take the editor’s relevant instructions to authors of Toxicologic Pathology as an excellent starting point. The following should be included in the study records and report: sufficient detail of methods to allow repetition of the examination, all the relevant data, the objective analysis of that data, and the result with its probability estimate.

Although I am not advocating purely blind toxicological histopathological examinations, I know of pathologists who have generated data sets genuinely blind to treatment (hence untainted by any observer bias) and have had full statistical analyses conducted by qualified statisticians to arrive at an objective probabilistic result on a specific lesion. Then they reported only the traditional individual animal data, contingency tables of arbitrary scores against sex and dose, and a narrative report with the result. The methods used to generate the blind data, who generated the blind data, the data themselves, the formal statistical test used, the critical region taken, whether a one-tailed or two-tailed formulation was used, the formal probability estimate: none of these have been included in the report. All are open to valid scientific criticism. Only the result of this part of their work was given in the report. We do not do justice to our experimental discipline if we work at the bench to the highest experimental standards available, but then report our work to a diagnostic standard. The justification for taking this diagnostic reporting approach is provided by the regulator, emphasizing the signed report as the raw data (United States Federal Register 1987: “only the signed and dated report of the pathologist comprises the raw data respecting the histopathological evaluation”). In experiments, the raw data are the observations as they stand at the time they are taken, and the experimental report includes the methods, the data, the objective analysis, and the unique conclusion.
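One way to picture the items listed above is as a simple record that must be complete before a result is reported. The sketch below is purely illustrative: the class, its field names, and the helper function are hypothetical and do not correspond to any regulatory or journal format.

```python
from dataclasses import dataclass, fields
from typing import Optional, Sequence


@dataclass
class BlindExaminationRecord:
    """Hypothetical container for the items an experimental report would carry."""
    method_description: Optional[str] = None   # how the blind data were generated
    examiner: Optional[str] = None             # who generated the blind data
    observations: Optional[Sequence[int]] = None  # the data themselves
    statistical_test: Optional[str] = None     # formal test used
    critical_region: Optional[str] = None      # critical region taken
    tails: Optional[int] = None                # 1 for one-tailed, 2 for two-tailed
    p_value: Optional[float] = None            # formal probability estimate
    result: Optional[str] = None               # the conclusion


def missing_items(record):
    """Return the names of items that have not been supplied."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


# A report written to a diagnostic standard supplies only the conclusion.
diagnostic_style = BlindExaminationRecord(result="treatment-related change present")
print(missing_items(diagnostic_style))
```

In this sketch a report giving only the conclusion leaves every other item unfilled, which corresponds to the situation described above.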

Acknowledgments

This work is drawn from a Fellowship Thesis of the Royal College of Veterinary Surgeons. I owe a great debt to my two supervisors, Mr. Peter Lee and Dr. John Glaister. I gratefully thank Dr. Annabelle Heier and Dr. Pascal van Troys for supplying examples of diagnostic reports and for their discussions and constructive criticism of the text, and Dr. John Foster for his encouragement and help with the article.
