Abstract

Bibliometrics has been embedded in higher education since the 1960s and now plays a central role in research evaluation and institutional visibility. Despite its strategic importance, many practitioners enter the field without formal training. This study presents an updated version of the 2019 Bibliometrics Competencies Model, developed through an iterative, evidence-based process grounded primarily in a global survey conducted in 2020. The first survey (2020; n = 130) gathered community feedback on the original framework and informed a revised model released in 2021. A second survey (2022; n = 64) subsequently assessed awareness and use of the updated framework and identified emerging competencies. Across both surveys, a substantial proportion of respondents (76% in 2020; 72% in 2022) reported having no formal bibliometrics education, despite holding high levels of responsibility for bibliometric analyses. Respondents also reported gaps between competencies required in practice and those addressed in formal education. Overall, the findings reveal a persistent mismatch between professional demands and structured training opportunities, underscoring the need for higher education programmes to embed bibliometrics systematically within their curricula.

Keywords

1. Introduction

Bibliometrics is a well-established discipline in the research community. It helps identify emerging topics, potential collaborators and suitable publication venues, and it can also enhance the visibility of research. Because of these advantages, bibliometrics has become increasingly popular among academics and researchers in higher education institutions, who are showing growing interest in citation and publication indicators [1].

In today’s rapidly evolving big data society, universities and research organisations are increasingly adopting quantitative methods to analyse research outputs and conduct research evaluation. This shift is largely driven by the simplicity, speed and ease of use these methods provide. Their perceived objectivity, transparency and efficiency have captured the attention of researchers and practitioners worldwide. The approach goes back to Narin [2], who developed evaluative bibliometrics as the practice of using bibliometric indicators to assess research quality and impact. In recent decades, the role of quantitative measurement in evaluating scientific performance has gained even more importance because of the scarcity of research resources. The evaluation of scientists’ work often relies on publication counts, citations and the h-index to determine resource allocation, promotions or hiring decisions. This has caused a widespread feeling among scientists and researchers that metrics, as a tool used to evaluate their work, do not accurately reflect important aspects and nuances of their research and can often be misleading. They emphasise that the measurement of research performance is highly complex and should be made in the context of their academic careers [3]. In addition, an unhealthy fixation on indicators or metrics, comparisons, competitions, rankings and league tables has been observed among certain individuals and organisations, prompting concerns in the research community that this metrics infatuation may undermine research integrity and diversity and cause corruption in science. Citation counts are also known to be easily gamed and manipulated, and to disadvantage early-career academics [4–6]. This is why, particularly in the European context, there are frequent discussions about removing research metrics from national evaluation exercises in favour of narrative curricula vitae (CVs) [7–9].

An indicator commonly used in research evaluations is the journal impact factor (JIF), developed by Eugene Garfield. It was originally created as a tool to aid in selecting journals for the newly established Science Citation Index (SCI), proving especially useful for librarians in deciding which journals to acquire [10]. However, in recent years, the JIF has frequently been misused as a proxy for predicting citations at the level of individual researchers or articles – an application for which it was never intended. It has also been inappropriately employed as a measure of the quality of individual articles or researchers.

1.1. Responsible metrics initiatives and manifestos

As a result, the JIF has faced severe criticism and remains a primary target in efforts to promote more responsible use of bibliometrics, particularly for evaluating individual researchers. In recent years, several initiatives, manifestos and movements have addressed the inappropriate use of evaluative metrics. The San Francisco Declaration on Research Assessment (DORA) was the first to articulate that journal-based metrics – such as the JIF – should not be used as a proxy for assessing the quality of individual research articles or researchers, nor to inform hiring, promotion or funding decisions [11]. A more nuanced framework for responsible metric use, the Leiden Manifesto, was published in Nature in 2015 [12]. In the United Kingdom, the Forum for Responsible Research Metrics released the influential report The Metric Tide [13], which was revised and updated in 2022. These developments have encouraged many universities and research institutions worldwide to adopt responsible research metrics statements, demonstrating their commitment to the appropriate use of bibliometrics and other indicators in research evaluation.

Later initiatives include the Hong Kong Principles for assessing researchers, developed in 2020 [14], which aim to reduce perverse incentives that may lead to questionable research practices or other forms of misconduct. In addition, the INORMS SCOPE framework provides a five-stage model for conducting responsible research evaluation. It offers a practical, step-by-step process to support research managers – and anyone involved in evaluation – in planning new assessments and reviewing existing ones [15].

In July 2022, the Agreement on Reforming Research Assessment [16] set a shared direction for reforming the evaluation of research, researchers and research organisations, with the overarching goal of maximising research quality and impact. The Agreement outlines key principles, commitments and timelines for change, laying the foundation for a coalition of organisations dedicated to implementing these reforms. It also led to the creation of the Coalition for Advancing Research Assessment (CoARA), which promotes a shared vision in which research assessment recognises the full diversity of research outputs, practices and activities that contribute to high-quality, impactful research. CoARA emphasises that assessments should rely primarily on qualitative judgement – centred on peer review – supported by the responsible use of quantitative indicators.

Many researchers agree that relying solely on quantitative indicators is overly simplistic and fails to capture the full scope of research quality. Although peer review is often considered the primary method for evaluating research, numerous studies have highlighted its limitations, noting that it can lack objectivity and may be susceptible to bias [17]. As a result, there is a growing consensus that the most effective approach to research evaluation combines bibliometric indicators with informed peer judgement.

Bibliometric data providers need to be more vigilant than ever about the potential misuse of their data and take necessary measures to prevent it. Some have already implemented mechanisms to help users understand their indicators, such as providing guidelines for their metrics on their websites or offering training for the community. Others have taken further steps to demonstrate their commitment to the responsible use of metrics; for example, Elsevier is now a signatory of the San Francisco DORA.

In this discussion, we often hear about the data providers, policymakers, institutions, initiatives and organisations shaping the responsible use of bibliometrics – but what about the role of bibliometric practitioners who work directly with bibliometric data within institutions?

1.2. Bibliometric practitioners: the missing piece in the responsible metrics debate?

In this context, bibliometric practitioners are not necessarily scholars or academic researchers studying bibliometrics itself. Rather, they are professionals – primarily employed in research libraries and research offices – whose main role is to support their institution’s mission by using bibliometric tools and analyses to enhance research visibility, track performance and inform decision-making. Their work is deeply technical and practical, focused on applying metrics in service of institutional goals.

In most cases, staff working in bibliometrics begin their roles without formal education or training in the field, acquiring their skills on the job, which can make their work more challenging. Bibliometric competencies should also be developed through formal education, such as programmes offered by Information Science schools, as well as through specialised training courses and workshops provided by international bibliometric institutions and societies [18]. However, such educational opportunities remain limited. Bibliometric education is widely recognised as weakly institutionalised and not commonly available [19–26].

The absence of consistent, formalised educational pathways has prompted community-led initiatives to establish a shared foundation of knowledge and skills in bibliometrics. For example, the international community of library and information professionals developed a Bibliometric Competencies Model that defines the core skills required for bibliometric work and identifies areas needing targeted development to support high-quality practice across institutions [27].

The professional landscape of bibliometric practice differs across countries. In the United Kingdom, most bibliometric professionals are based in libraries, whereas in countries such as Belgium and Germany, expertise is also found within research offices. Academic and research librarians have shown strong interest in developing bibliometric skills, building on their extensive experience in managing bibliographic data and research outputs. It is therefore unsurprising that research libraries began establishing dedicated bibliometric units as early as the mid-2000s, creating positions specifically for bibliometricians [28].

Today, bibliometric services and units have become an established reality in academic libraries across many countries, including the United Kingdom, Spain, Sweden, Austria, the Netherlands, Germany and the United States [21,28–35]. In some regions, such as Australia, New Zealand and the United Kingdom, national research assessments also rely heavily on bibliometric indicators to evaluate research quality at universities [34,36–39].

In addition to supporting research evaluation, bibliometric methods are increasingly applied to guide library decisions – such as collection development and journal selection – and to provide strategic intelligence for universities and research institutions [29,33]. Despite this growing reliance, formal and systematic training remains limited in many countries, leaving practitioners to develop their expertise primarily through informal, on-the-job learning [40].

This lack of structured training is reflected in library and information science (LIS) education, where formal bibliometric instruction is largely absent [41]. Recent studies indicate that North American LIS programmes provide very limited coverage of the topic, highlighting a broader disconnect between LIS research and education in the region. In most programmes, bibliometrics is addressed only briefly – typically as a small component of research methods courses – while citation databases are treated merely as one category among many in information-searching courses.

This gap between practice and formal training is particularly striking within Information Science itself. Despite the prominence of bibliometric research in LIS scholarship – demonstrated by numerous studies analysing LIS literature [42,43] – bibliometrics remains largely underrepresented in LIS educational programmes [41]. While some postgraduate programmes include bibliometrics as part of research methods training, no universities in the United Kingdom currently offer it as a dedicated undergraduate or postgraduate module. By contrast, certain universities in Spain do provide such options, highlighting regional differences in the integration of bibliometrics into LIS curricula. This discrepancy underscores the broader challenge: although bibliometrics is increasingly applied in library decision-making and research evaluation, formal education and training opportunities remain inconsistent and often limited across regions.

1.3. Bibliometrics Competencies Model (origin, revisions, aims)

The LIS Bibliometrics Committee is a group of information professionals that meets regularly to discuss international bibliometric issues and support the global LIS bibliometrics community. In 2016, the Committee commissioned a study – conducted by Cox, Petersohn and Gadd and funded by Elsevier Research Intelligence – to develop a Bibliometric Competencies Model [27]. This initiative responded to evidence that most staff engaged in bibliometrics had received little or no formal training during their LIS degrees. The framework was designed to ensure that bibliometric practitioners are equipped to perform their duties effectively and responsibly.

The model served three main objectives:

a. Helping individuals evaluate their own bibliometric skills and identify areas needing further training.

b. Guiding organisations in developing training programmes to equip staff with the necessary bibliometric skills.

c. Assisting Information Schools in designing curricula that ensure future information professionals acquire essential bibliometric competencies.

Designed as a living framework, the original model outlined core, entry-level and specialist competencies, providing guidance for individual skills assessment, organisational training development and the design of LIS study programmes. As bibliometric practice evolved – driven by new indicators, open data sources and growing attention to responsible research evaluation – the model needed an update.

In 2020, Lancho Barrantes, Vanhaverbeke and Cox initiated a formal revision of the Bibliometrics Competencies Model, launching a survey that year and updating the model in 2021 [44]. A subsequent community survey in 2022 gathered further insights about the framework. Since then, the competencies model has been widely adopted, including in professional development workshops and institutional assessments, and continues to serve as a foundational structure for understanding the evolving roles, expectations and expertise required in contemporary bibliometric work.

Librarians at the University of Otago observed that bibliometrics is addressed only briefly in New Zealand’s Master of Information Science programme. By contrast, graduate programmes in scientometrics, bibliometrics, webometrics and informetrics have recently emerged at the University of British Columbia, although such offerings remain rare. While brief coverage of the kind observed in New Zealand may suffice for librarians in the sciences, those working in the arts and humanities frequently lack practical experience. To address this gap, the University of Otago applied the competencies model [27] to design face-to-face workshops based on the 11 IATUL Research Impact Things, targeting entry- and core-level competencies.

The competencies model has also been implemented at other institutions; for example, Stellenbosch University adopted it to assess the skills of its librarians [45]. Tasks such as designing and executing bibliometric reports – evaluating departmental or institutional performance – are classified as ‘specialist tasks’, representing the highest level of professional competency [46]. Overall, the model provides a framework for examining the growing literature on bibliometrics within evaluative and policy contexts and offers theoretical insights into the characteristics of a ‘competent bibliometrician’ [47]. Findings suggest that enhanced advocacy, targeted training and strengthened technical expertise can substantially increase the value and impact of bibliometric work.

As a living framework, the competencies model is regularly reviewed to reflect the evolving field, particularly given the developments in open research and research integrity. Open science has notably reshaped bibliometric practice by promoting greater transparency and access to data. Comparisons of open metadata sources such as OpenAlex with proprietary databases like Web of Science and Scopus show that OpenAlex offers comparable coverage for many recent publications, making reproducible analyses more feasible. New tools and infrastructures, such as the openalexR package, facilitate direct data collection from OpenAlex, while open-access journals and datasets are increasingly included in analyses, highlighting the growing importance of data-level metrics.

These developments underscore the need for the Bibliometric Competencies Model to be continuously reassessed. Accordingly, this article critically examines the model, positioning it as a key framework for understanding the evolving roles and skill sets of bibliometric practitioners. To contextualise and evaluate the model’s relevance and applicability, the analysis draws on empirical findings from two international surveys conducted in 2020 and 2022. In doing so, the study not only advances the theoretical development of the competencies model but also supports the broader professionalisation of bibliometric practice, offering practical recommendations for training, education and institutional capacity building.

2. Data and methods

The first questionnaire was distributed to the bibliometrics community in early 2020. It focused on topics such as emerging data sources, new analytical tools, the growing push for more responsible use of metrics and the qualifications and experiences of respondents. After several rounds of revisions incorporating feedback from the LIS Bibliometrics Committee, the final version of the questionnaire was agreed upon. It was then disseminated via four of the most prominent Information Science professional mailing lists, based on the assumption that these platforms reach most professionals engaged in bibliometric work across Europe and the Americas.

LIS bibliometrics community: a bibliometrics discussion list for the LIS community, focused mainly but not exclusively on the United Kingdom. https://www.jiscmail.ac.uk/cgi-bin/webadmin?A0=LIS-BIBLIOMETRICS

IweTel: the largest Spanish-language electronic forum on libraries, information science and communication. https://listserv.rediris.es/cgi-bin/wa?A0=IWETEL

INCYT: a list created in June 2008 to serve research groups in bibliometrics and scientific information. https://listserv.rediris.es/cgi-bin/wa?A0=INCYT

ISSI – Scientometrics, Informetrics and Cybermetrics: a list dedicated to the dissemination of professional activities and the discussion of methodological aspects of scientometrics, informetrics, cybermetrics and other topics related to the quantitative description of scientific and technical activity and communication. https://www.rediris.es/list/info/issi.html

While these lists together provided broad coverage of the bibliometrics community, some limitations should be acknowledged. For instance, membership of the LIS bibliometrics list is highly concentrated in the United Kingdom, and IweTel and INCYT primarily reach Spanish-speaking regions. Consequently, respondents from other regions, particularly outside Europe and Latin America, may be underrepresented, potentially biasing the findings towards European and Spanish-speaking perspectives. Nonetheless, the combination of lists was intended to balance practitioner and researcher viewpoints, linguistic diversity and engagement with core bibliometric activities, providing a meaningful cross-section of the community.

In addition to the survey, we organised a workshop titled ‘Don’t Stop Thinking About Tomorrow! – Future Competencies Needed in Bibliometrics’ at the LIS Bibliometrics Conference on The Future of Research Evaluation, held at the University of Leeds in March 2020. The goal was to gather input from attendees to complement the responses collected through the mailing list questionnaire. Participants – both in person and online – were invited to respond to several questions on selected topics via an online poll.

Following the collection of responses and feedback from the LIS bibliometrics community, the Competencies Model was revised and updated (see Appendix 1). The updated version was subsequently launched in July 2021 [44].

As with the previous version, the updated Bibliometrics Competencies Model is structured across three main levels – Entry, Advanced and Expert – and organised into three core sections: Knowledge in the Field, Responsibilities & Tasks and Technical Skills. A foundational principle of the model is that professional integrity is essential for all practitioners, regardless of their level of expertise. To support engagement with the model, we also developed a series of visualisations that use colour gradients to illustrate progression. These show that individuals at the Advanced level are expected to have mastered the competencies of the Entry level, while those at the Expert level are expected to have acquired the knowledge and skills associated with both Entry and Advanced levels.

After the release of the updated Competencies Model, a revised questionnaire was distributed to the bibliometrics community in 2022 to assess engagement with the new framework and to enable comparison with the earlier survey. As before, the questionnaire was circulated through the same bibliometric mailing lists and invited respondents to indicate the extent to which they had interacted with the updated model.

Both surveys were treated as independent samples, with no procedures implemented to identify repeat participants, so comparative analyses reflect aggregate trends rather than individual-level changes over time. Completely blank or insufficiently completed questionnaires were excluded, while partially completed responses were retained if key questions were answered. Multiple-response questions were coded so that each selection was counted individually, capturing the full scope of respondent engagement with the Bibliometric Competencies Model.
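The exclusion and coding rules described above can be illustrated with a minimal sketch. The field names (`country`, `roles`), the choice of key questions and the example responses are hypothetical and were invented for illustration; they are not taken from the survey instrument, and the sketch is not the procedure actually used by the research team.

```python
from collections import Counter

def code_multiple_response(responses, key_questions, multi_field):
    """Exclude unusable questionnaires, then count each selection individually."""
    tally = Counter()
    retained = 0
    for response in responses:
        # Keep a questionnaire only if every key question was answered
        # (assumed rule; the key questions here are hypothetical).
        if not all(response.get(q) for q in key_questions):
            continue
        retained += 1
        # A multiple-response answer is a list; each distinct option counts once.
        for option in set(response.get(multi_field) or []):
            tally[option] += 1
    return retained, tally

# Hypothetical responses, for illustration only.
responses = [
    {"country": "UK", "roles": ["Manager", "Bibliometrician"]},
    {"country": "Spain", "roles": ["Advisor"]},
    {"country": "", "roles": []},                                    # blank: excluded
    {"country": "Canada", "roles": ["Manager"], "experience": None}  # partial: retained
]

retained, tally = code_multiple_response(
    responses, key_questions=["country", "roles"], multi_field="roles"
)
print(retained, dict(tally))
```

In this toy example the number of counted selections (4) exceeds the number of retained respondents (3), which is why totals for multiple-response questions need not match the sample size.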

The data were collected and analysed by the same research team, with no independent or external analysis. This is a limitation of the study, as it could have introduced confirmation bias. Future research could incorporate independent or external review or coding procedures to minimise potential bias.

Building on these data, this article presents findings from the community surveys conducted in 2020 and 2022. It also provides an overview of the revisions made to the Bibliometrics Competencies Model, which has gained widespread recognition and adoption within the information science community. These updates reflect ongoing efforts to align the model with current professional practices, emerging trends and the diverse requirements of practitioners and educators in this specialised domain.

3. Results

3.1. General responses across both surveys

3.1.1. Overview and response rate

Table 1 shows a decline in total survey responses, from 130 in 2020 to 64 in 2022. While this decrease may suggest reduced engagement or challenges in outreach, it could also result from changes in recruitment strategies, timing or survey conditions. Differences between the total sample size and the number of responses reported in specific tables reflect typical variations in item-level response rates. Some participants did not answer every question or were not eligible for certain items, leading to smaller response totals for analyses. These differences in sample size should therefore be considered when interpreting percentages and making year-to-year comparisons.

Table 1. Total respondents.

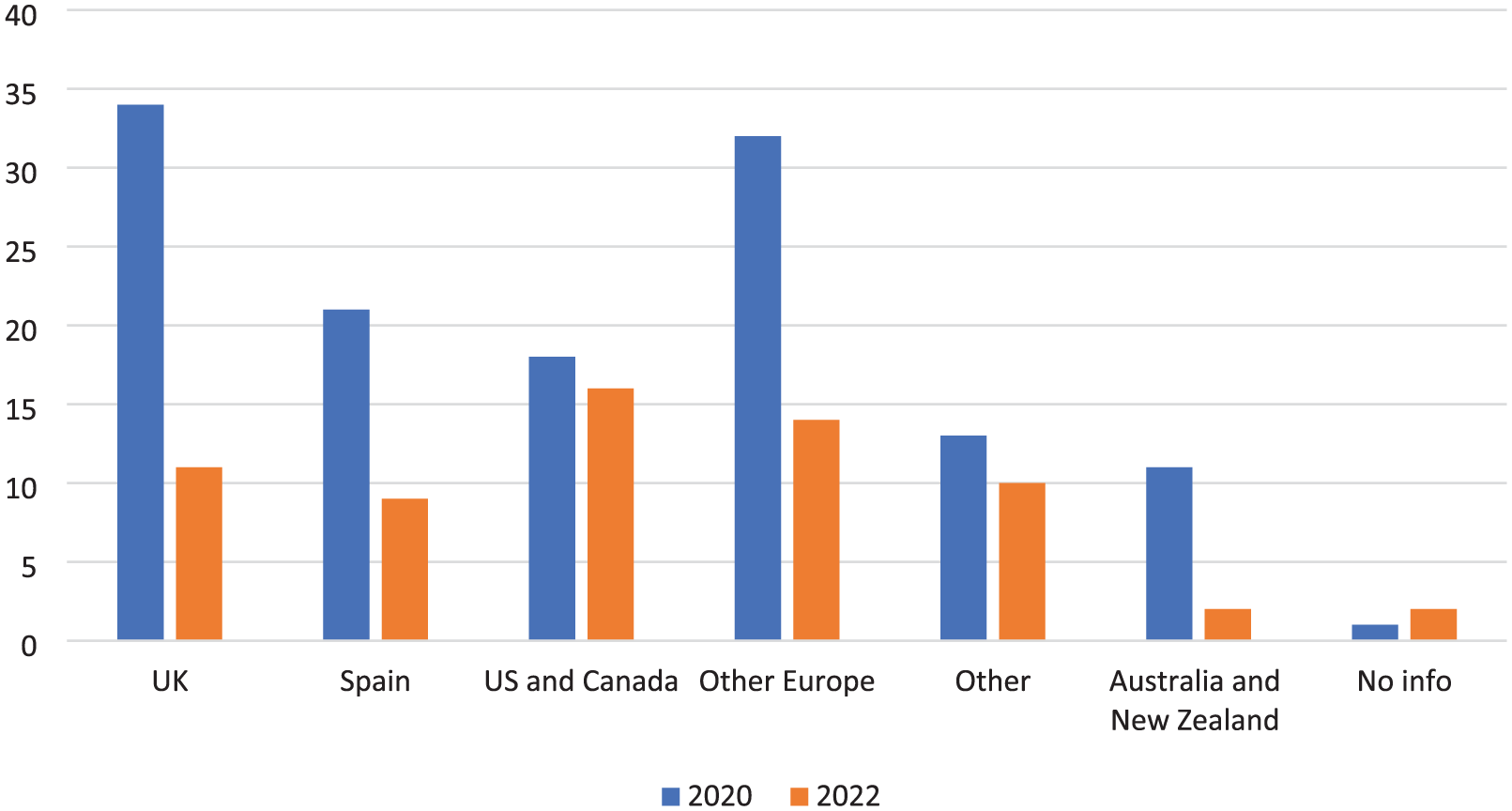

As illustrated in Figure 1, respondents came from various regions, with the United Kingdom, Spain, the United States and Canada consistently dominating. Despite the surveys’ international reach, this clustering suggests underrepresentation of the broader global bibliometrics community.

Figure 1. Geographic distribution.

Table 2 shows that bibliometric work is primarily conducted within libraries, accounting for approximately 60% of responses in both years. The remaining participants include researchers and academic staff, highlighting the diverse professional landscape of the field.

Table 2. Professional affiliation of participants.

Although the survey was primarily aimed at practitioners, it also attracted researchers with a broader interest in bibliometrics, underscoring the topic’s cross-disciplinary relevance and its appeal across different professional roles. It is worth noting that some colleagues may have selected more than one professional setting – for example, those who work in a library while also holding a research-focused role – which can influence how the totals are interpreted. This is possible because the question allowed multiple roles to be selected.

Table 3 shows that most bibliometric practitioners work across disciplinary boundaries. When a disciplinary focus was specified, the Social Sciences, Humanities and Life Sciences emerged as the most prominent areas of activity. This distribution often reflects the structure and priorities of the institutions where practitioners are based. For instance, in institutions primarily focused on medical research, bibliometric work tends to be more concentrated in the Life Sciences. These patterns suggest that both institutional context and disciplinary orientation play a significant role in shaping the scope and focus of bibliometric practice.

Table 3. Disciplinary focus.

3.2. Training and education

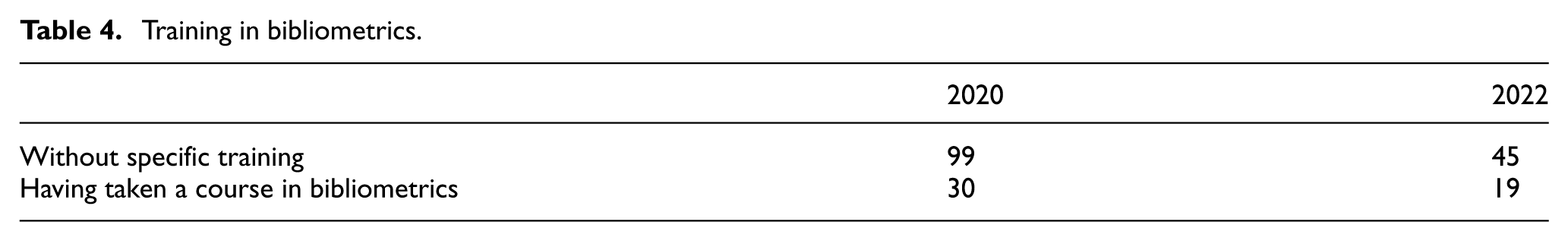

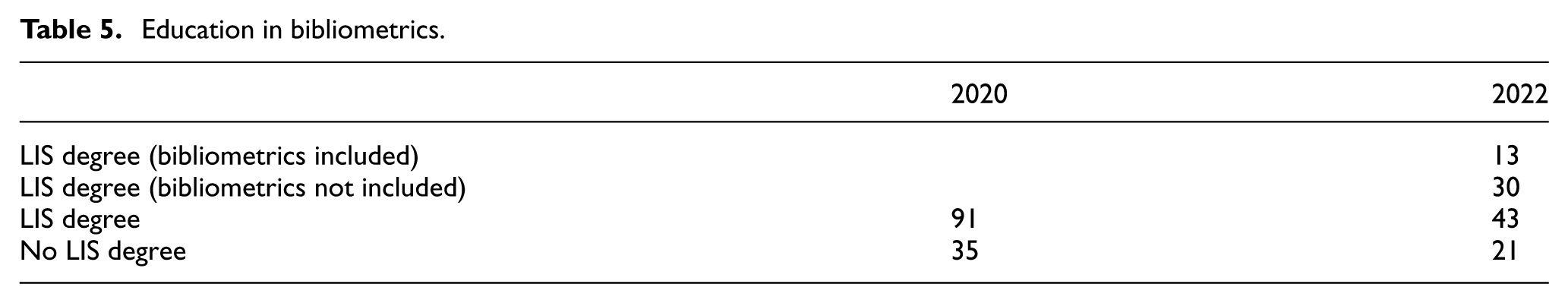

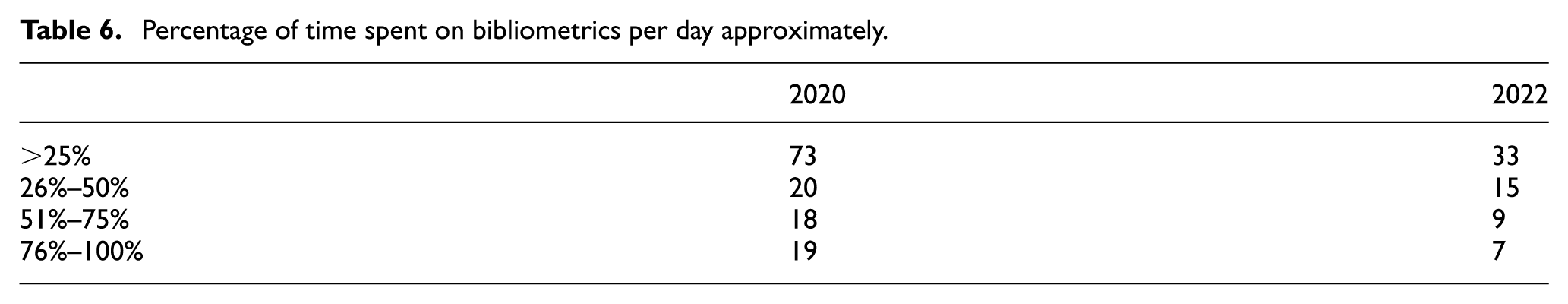

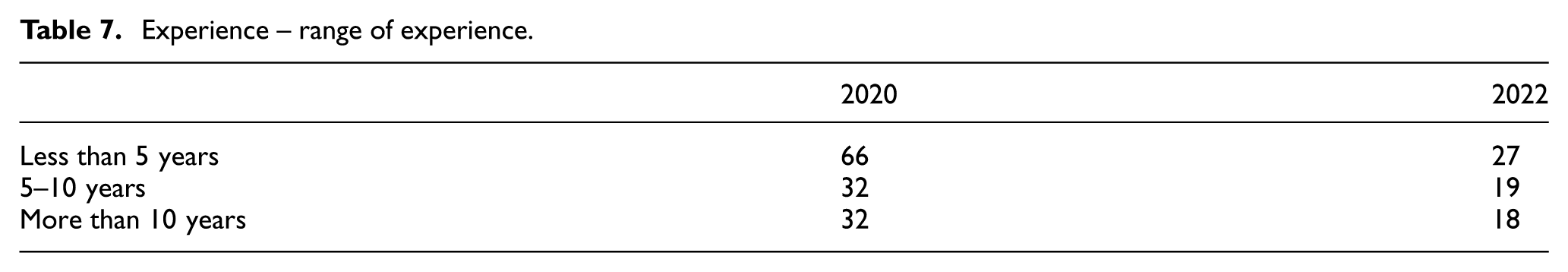

Understanding the educational and training pathways of bibliometric practitioners provides valuable context for interpreting their professional capabilities and development needs. Tables 4 and 5 explore the extent of formal training in bibliometrics and the presence of bibliometric content within LIS degree programmes. Tables 6 and 7 present the findings concerning respondents’ time involvement and experience in bibliometrics.

Table 4. Training in bibliometrics.

Table 5. Education in bibliometrics.

Table 6. Approximate percentage of time spent on bibliometrics per day.

Table 7. Range of experience.

In 2020, 99 practitioners reported beginning their bibliometric work without any formal training, while 30 had completed a bibliometrics course. In 2022, 45 practitioners reported no formal training, and 19 had received training. Although the smaller number of respondents in 2022 makes direct comparisons difficult, these figures still suggest a continuing recognition of the value of structured guidance in bibliometrics and a gradual move towards more formalised training pathways within the field.

Not all participants provided a response to this question; therefore, totals do not correspond to the overall sample size.
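Because the two surveys differ in size, the training counts are easier to compare as proportions with an indication of uncertainty. The following is a minimal sketch, not the authors' analysis: it uses the counts reported in the paragraph above as item-level denominators and adds an approximate 95% Wilson score interval, which is our own illustrative choice. Percentages derived this way may differ slightly from those quoted elsewhere in the article if a different denominator was used there.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

# Counts as reported in the text for the training question.
counts = {
    2020: {"no_training": 99, "training": 30},
    2022: {"no_training": 45, "training": 19},
}

results = {}
for year, c in counts.items():
    n = c["no_training"] + c["training"]      # respondents answering this item
    share = c["training"] / n                 # proportion with formal training
    low, high = wilson_interval(c["training"], n)
    results[year] = {"n": n, "share": share, "low": low, "high": high}
    print(f"{year}: n={n}, trained={share:.1%}, 95% CI {low:.1%} to {high:.1%}")
```

Under these assumptions the share with formal training is higher in 2022 than in 2020, but the two intervals overlap, which is consistent with the caution expressed above about direct comparison.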

Table 5 presents the educational background of bibliometric practitioners in 2020 and 2022. In 2020, 91 respondents reported holding an LIS degree, while 35 did not. Notably, the 2020 questionnaire did not ask whether bibliometrics was included in the LIS curriculum; as a result, the corresponding cells are blank, and direct comparison with the 2022 data is not possible. By 2022, the survey provided a more detailed view of the relationship between LIS education and bibliometric training: 13 participants had completed an LIS degree that included bibliometrics, 30 held an LIS degree without bibliometric content and 21 did not hold an LIS degree. These findings provide valuable insights into the role of bibliometrics within formal LIS education. Although many practitioners hold LIS degrees, the 2022 data indicate that bibliometric training is still not widely integrated into these programmes. Most LIS graduates reported that their degrees did not include bibliometrics, highlighting a gap between academic preparation and the practical skills required in the field.

Not all participants provided a response to this question; therefore, totals do not correspond to the overall sample size.

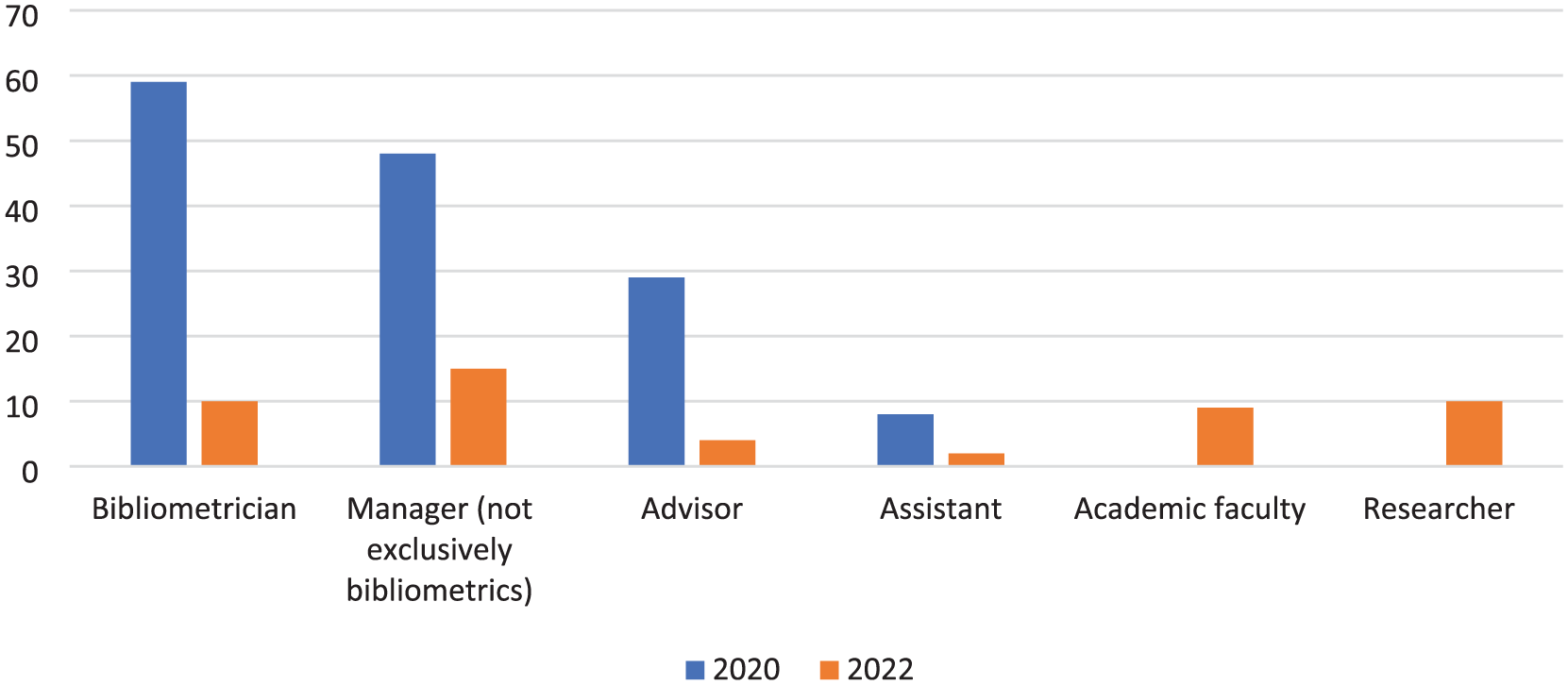

The distribution of professional roles among participants varied between 2020 and 2022 (Figure 2). In 2020, most participants identified as Bibliometricians (59), followed by Managers (48), whose responsibilities often extended beyond bibliometrics. Other roles included Advisors (29) and Assistants (8). By 2022, participants reported a wider variety of roles. Bibliometricians were represented by 10 participants, Managers by 15, Advisors by 4 and Assistants by 2. In addition, new categories appeared, including Academic Faculty (9) and Researchers (10), reflecting an expanded range of professional roles among respondents.

Figure 2. Roles and responsibilities.

This question permitted multiple responses; as a result, totals do not correspond to the overall sample size.
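Since respondents could select several roles, one way to compare the two years is to express each role as a share of all selections made in that year. The sketch below uses the role counts reported in the text; the choice of denominator (total selections rather than respondents) is an assumption made here because the number of respondents answering this item is not stated.

```python
# Role counts as reported in the text; multiple selections were allowed.
roles = {
    2020: {"Bibliometrician": 59, "Manager": 48, "Advisor": 29, "Assistant": 8},
    2022: {"Bibliometrician": 10, "Manager": 15, "Advisor": 4, "Assistant": 2,
           "Academic Faculty": 9, "Researcher": 10},
}

def selection_shares(counts):
    """Return each role's share of all selections in one survey year."""
    total = sum(counts.values())
    return {role: n / total for role, n in counts.items()}

totals = {year: sum(c.values()) for year, c in roles.items()}
shares = {year: selection_shares(c) for year, c in roles.items()}

for year in roles:
    print(f"{year}: {totals[year]} selections")
    for role, share in shares[year].items():
        print(f"  {role}: {share:.1%}")
```

These are shares of selections, not of respondents, so they describe the mix of roles chosen rather than the proportion of people holding each role.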

These changes suggest a shift away from ‘Bibliometrician’ as the dominant role, potentially reflecting evolving job responsibilities or changing preferences in job titles. The appearance of Academic Faculty and Researcher roles indicates a growing integration of bibliometric activities into broader academic and research contexts. At the same time, the continued presence of Managers and Assistants underscores the organisational diversity of bibliometric teams. It remains unclear, however, whether ‘Bibliometrician’ is widely recognised as a formal job title or more often used informally to describe a person’s functional role.

In both 2020 and 2022, most participants spent a quarter or less of their time on bibliometric tasks. Few reported focusing on bibliometrics full-time (76%–100%), highlighting its typical status as a secondary responsibility. Mid-level engagement (26%–50% of time) increased slightly, from 16% in 2020 to 23% in 2022. These patterns likely reflect the project-based nature of bibliometric work, with fluctuating demands that make time allocation difficult to quantify precisely.

In 2020, participants reported a wide range of experience in bibliometrics, with 66 indicating less than 5 years of experience. In 2022, only 27 respondents fell into this category; however, this reduction reflects the smaller overall number of participants rather than a true decline in the proportion of early-career practitioners. It remains unclear whether these figures represent total career experience or only time spent with their current employer.

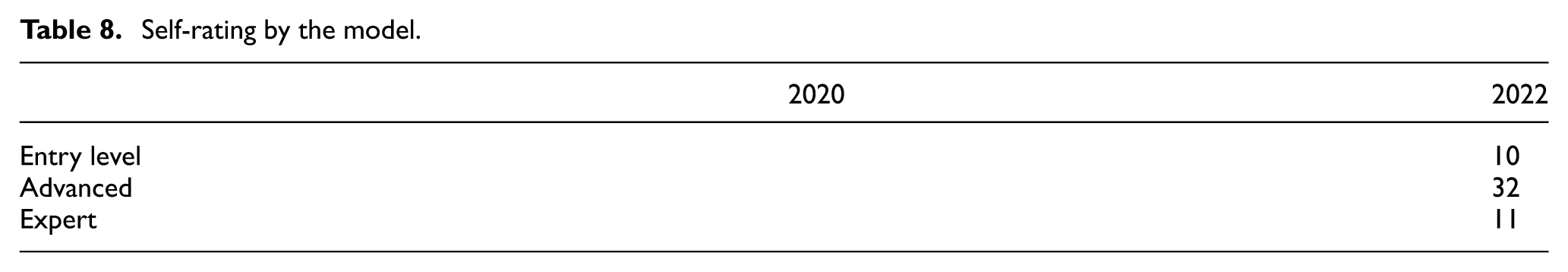

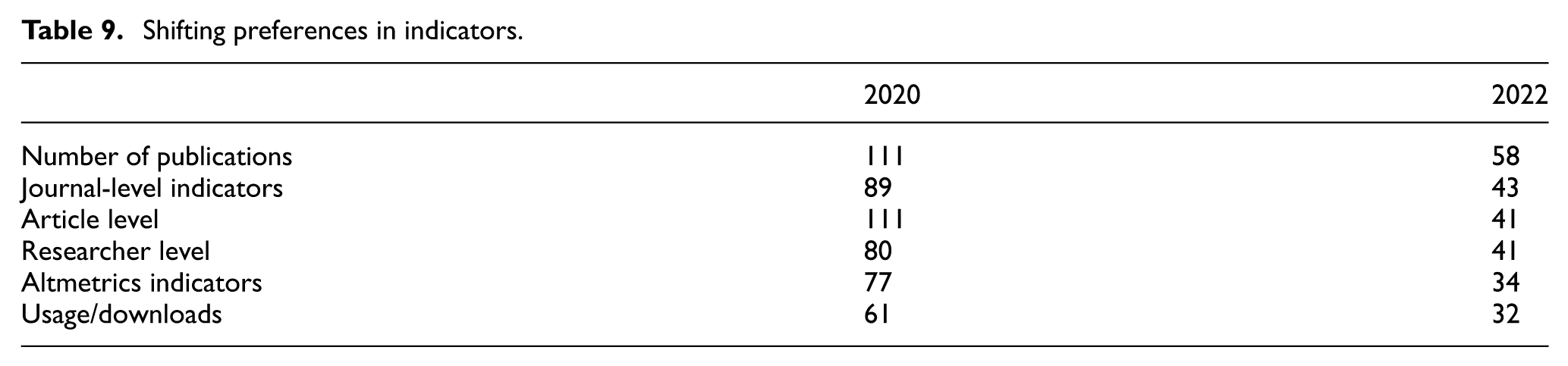

Table 8 shows how practitioners compared the tasks and skills required in their roles with the competency levels defined in the new Bibliometrics Competencies Model. Using the updated model, participants self-assessed their own capabilities. Most rated their knowledge as advanced, with entry-level and expert-level ratings appearing in similar proportions. Overall, the sample presented as highly skilled. However, because the survey was distributed through specialist mailing lists, the results likely overrepresent individuals who have a strong interest or background in bibliometrics. This potential bias is particularly relevant when interpreting the findings related to the activities and tools used in bibliometrics, as those most engaged with the field may report more frequent or advanced use than the broader practitioner population. Table 9 presents the distribution of preferences for different bibliometric indicators in 2020 and 2022. Although the absolute frequencies are lower in 2022, this pattern should be interpreted with caution, as the total number of responses differed between the two years.

Table 8. Self-rating by the model.

Table 9. Shifting preferences in indicators.

3.3. Activities and tools in bibliometrics

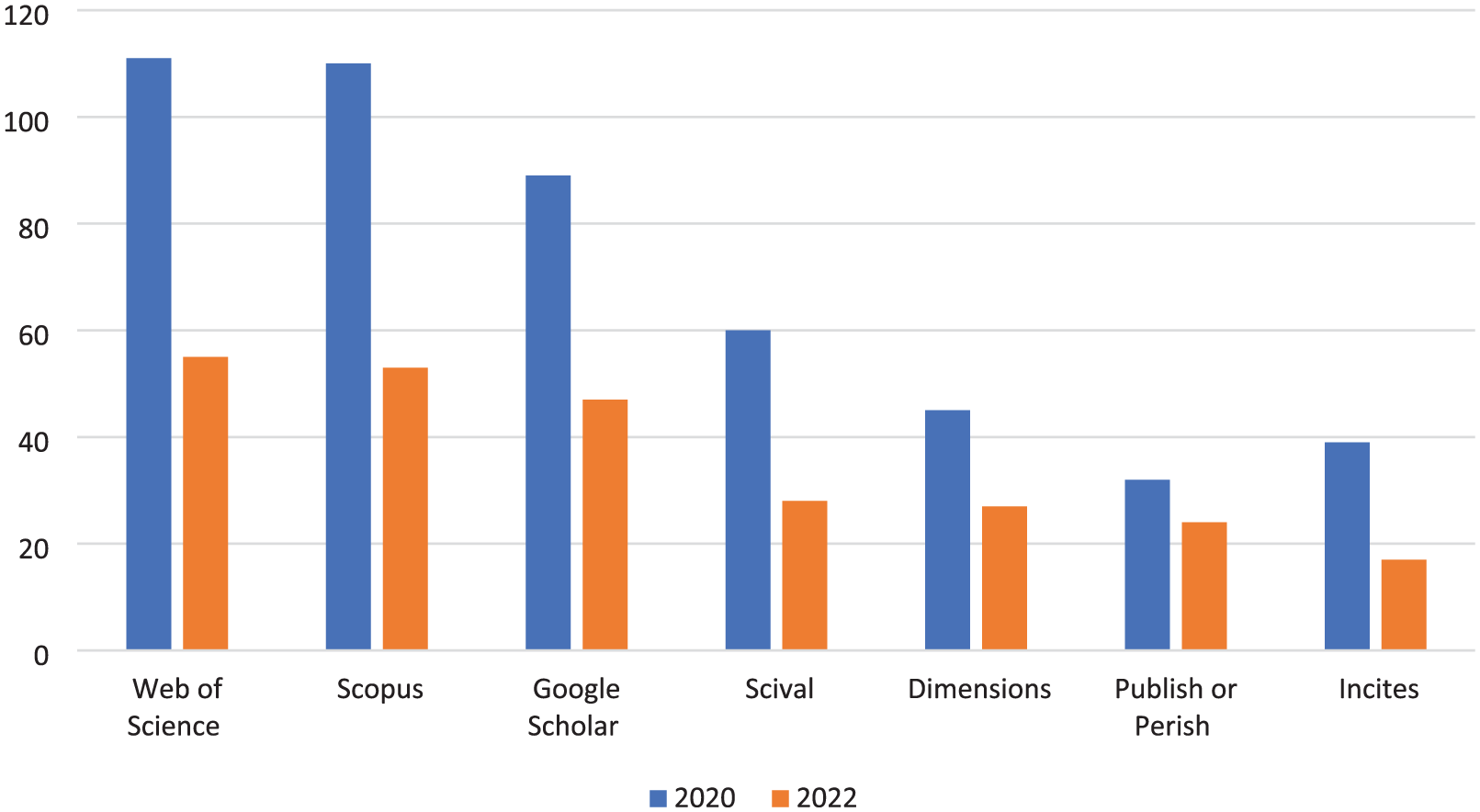

Figure 3 presents the most frequently used tools among bibliometric practitioners in 2020 and 2022. As expected, multidisciplinary databases such as Web of Science and Scopus remained the primary sources, each used by over 80% of respondents in both years. Google Scholar surpassed SciVal. Tools like Dimensions, Publish or Perish, and InCites showed moderate but consistent usage, suggesting a diverse toolset tailored to specific analytical needs. Overall, the results reflect a stable preference for core data sources, alongside a gradual diversification in tool usage.

Figure 3. Most frequently used tools.

Across both years, the number of publications and article-level indicators remained the most frequently used measures, suggesting their continued centrality in bibliometric practice. Journal-level indicators, altmetrics and usage/downloads appeared less commonly, although all indicators showed lower counts in 2022 – likely influenced by a smaller number of respondents. Without proportional data, it is difficult to determine whether practitioners truly shifted preferences, but the consistently lower use of altmetrics and usage/downloads may point to ongoing uncertainty about their robustness or relevance for formal evaluation.

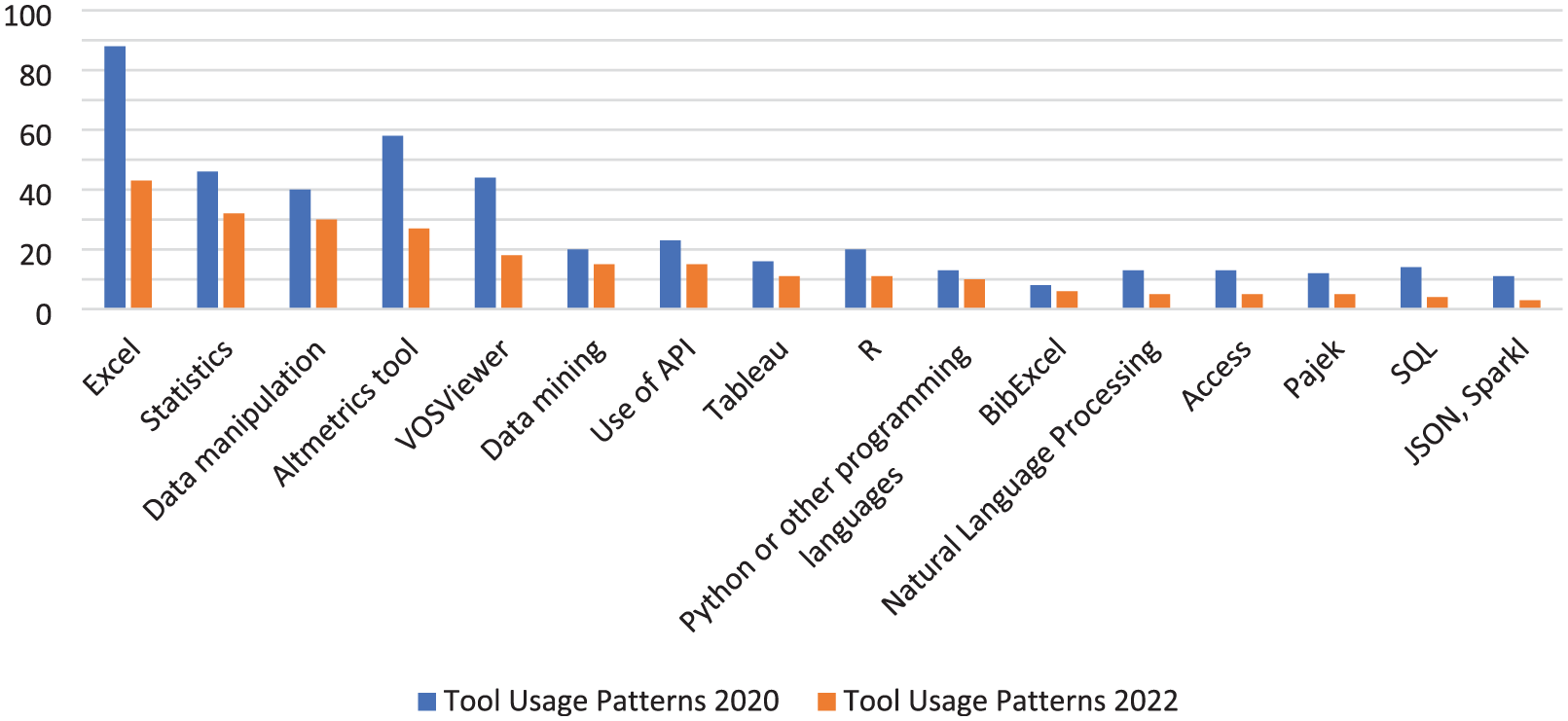

As shown in Figure 4, Excel remains the most widely used tool, reinforcing its role as an accessible, low-threshold entry point for bibliometric work. In contrast, the increased uptake of more advanced tools – such as statistical packages, data manipulation software and APIs – in 2022 suggests a gradual professionalisation of practice and a shift towards higher technical capability among practitioners. At the same time, the reduced use of VOSviewer and NLP tools indicates that, despite their analytical value, visualisation and text-mining tools may be underutilised or perceived as more challenging to adopt. This points to a continuing need for targeted training, especially in areas that bridge bibliometrics with data science techniques. The field, therefore, appears to be at a transitional stage: moving beyond basic tools but not yet fully embracing more complex analytical technologies.

Regarding training needs and preferences, respondents expressed a strong interest in further training opportunities, particularly among the 2022 participant group. Their preference for webinars, recorded sessions and interactive tools highlights a desire for flexible, accessible learning formats that accommodate varying levels of expertise.

Figure 4. Tool usage patterns.

3.4. Training needs and preferences

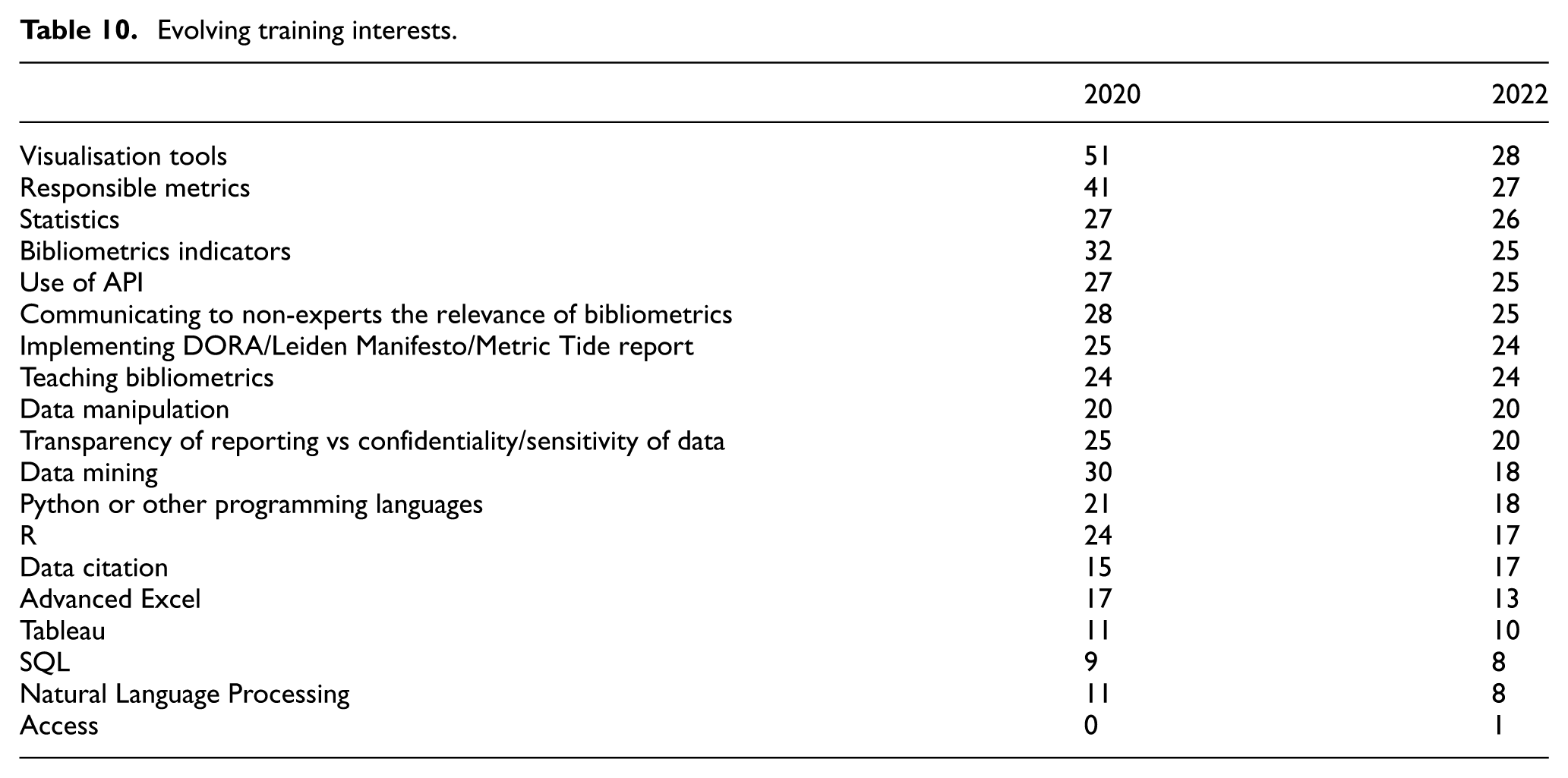

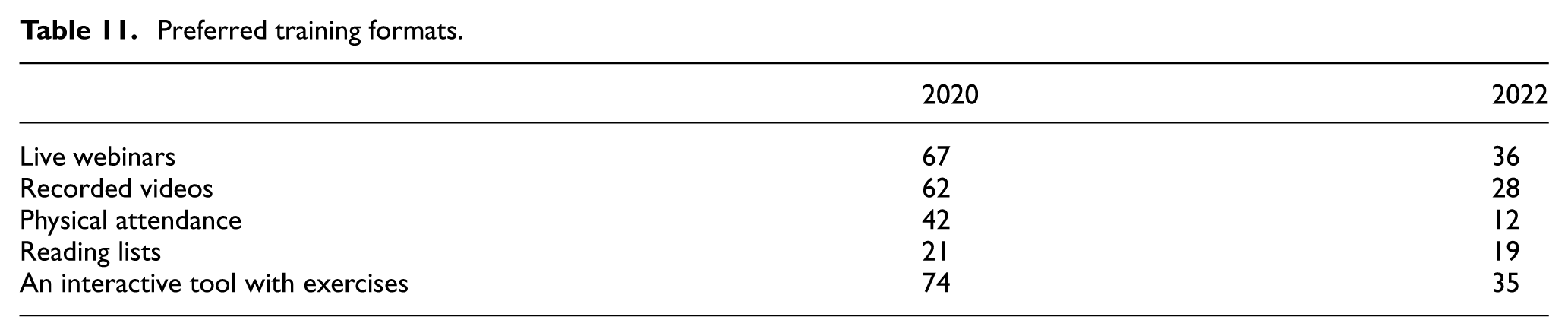

The findings from Tables 10 and 11 reveal a marked broadening of training needs among bibliometric practitioners between 2020 and 2022.

Table 10. Evolving training interests.

Table 11. Preferred training formats.

While visualisation tools and responsible metrics remained high-priority training topics, demand increased notably for more technical skills, including statistics, APIs and programming languages such as Python and R. Interest in communicating the relevance of bibliometrics to non-experts also rose sharply.

These shifts point to two parallel developments in the field. The first is a technical turn, reflecting the growing importance of computational and data-centric methods in bibliometric work. The second is a communicative turn, highlighting the need for practitioners to explain research impact in ways that resonate with audiences beyond the expert community. Together, these trends illustrate an expanding skill set for practitioners, who must now navigate both analytical complexity and effective science communication.

Preferences for training formats reinforce this evolution. Across both surveys, live webinars and interactive tools with exercises were consistently the most favoured options, indicating a preference for flexible, hands-on learning experiences. In contrast, interest in in-person training fell substantially, likely reflecting post-pandemic workplace changes as well as a broader shift towards digital, self-paced learning environments.

Taken together, these findings point to a growing recognition that effective bibliometric practice increasingly relies on a combination of technical proficiency and translational communication skills. Training programmes and competency frameworks must therefore adapt to support development in both areas.

Within this context, the Bibliometrics Competencies Model provides a valuable structure for identifying, assessing and developing the core skills required in the field. It supports not only individual self-assessment but also professional development planning, team coordination and the design of educational programmes.

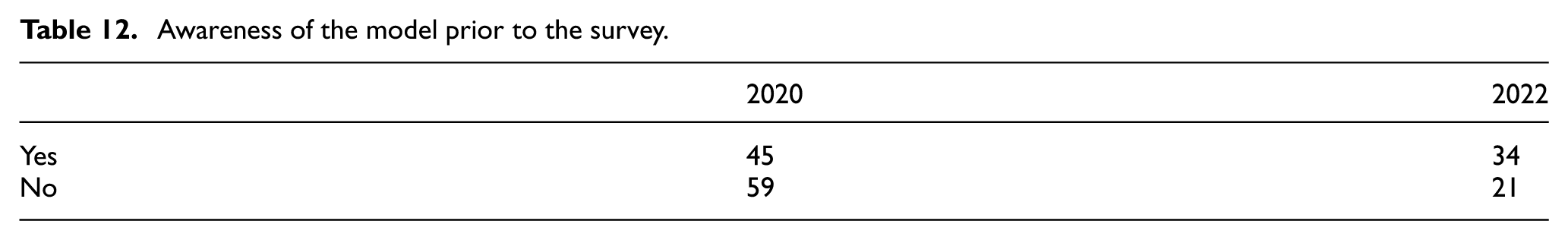

3.5. Awareness and perceived quality of the Bibliometrics Competencies Model

To understand the reach and relevance of the model, participants were asked about their prior awareness of it (Table 12). The findings indicate a substantial increase in recognition between 2020 and 2022, suggesting growing dissemination and integration of the model within the professional community.

Table 12. Awareness of the model prior to the survey.

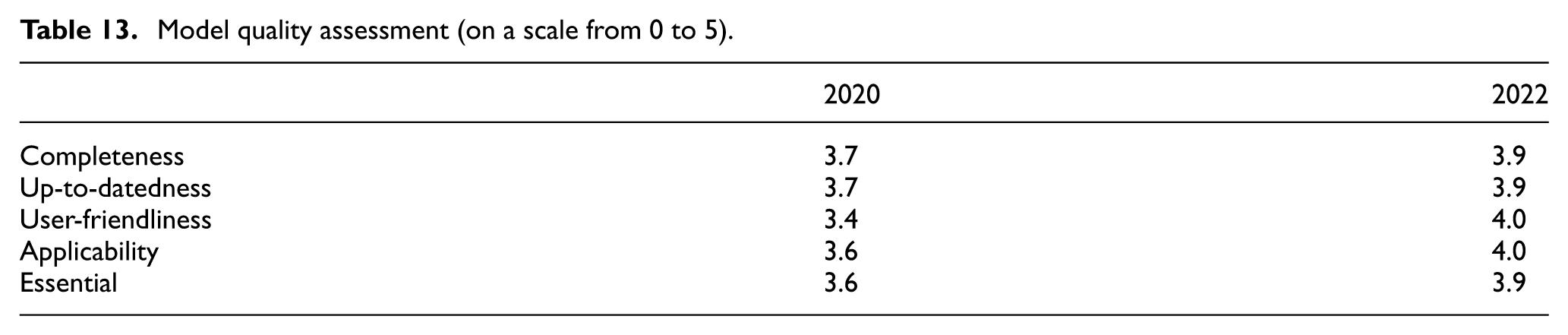

Beyond general awareness, participants were also asked to assess the quality of the competencies model across several dimensions using a 0–5 rating scale (Table 13). Overall, the results show an improvement in every category from 2020 to 2022, suggesting that the model has evolved in ways that better align with the expectations and practical needs of bibliometric professionals.

Table 13. Model quality assessment (on a scale from 0 to 5).

The most notable improvement was in user-friendliness, which rose from 3.4 to 4.0. This suggests that the revised model – potentially enhanced through improved visual design or a clearer structure – has become more accessible to users. Applicability similarly reached 4.0 in 2022, underscoring its increasing relevance to real-world bibliometric practice.

Taken together, the increases across all dimensions likely reflect both greater familiarity with the model and its ongoing refinement. This reinforces its perceived value not only as a conceptual framework but also as a practical tool that can be applied across a wide range of professional contexts.

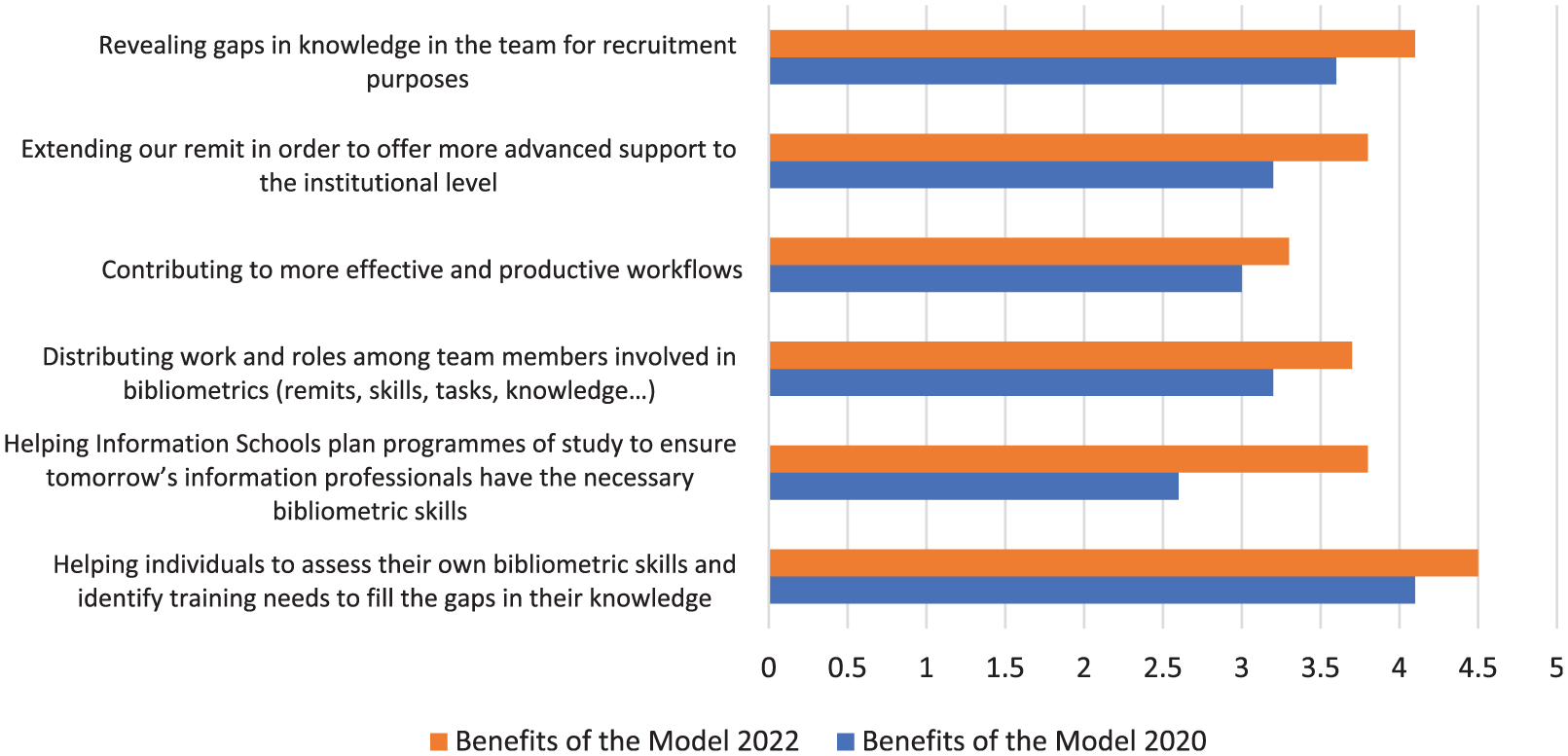

Figure 5 presents the benefits of the competencies model, rated on a scale of 0 to 5, as assessed by participants in 2020 and 2022. The ratings indicate that the model’s value, particularly in supporting self-assessment and professional growth, has been increasingly recognised, with notable improvements observed in 2022.

Figure 5. Benefits of the model (rated out of 5).

In 2022, the perceived value of the competencies model increased across all measured dimensions, highlighting its growing role as a foundational tool in bibliometric practice. Compared to 2020, participants expressed greater appreciation for its ability to support self-assessment, foster professional development and enhance team functioning. The model is increasingly used not only to help individuals evaluate their skills and identify areas for growth but also to map expertise within teams, enabling more strategic task allocation based on individual strengths.

Importantly, the model’s ability to reveal gaps in team knowledge emerged as one of its most valued features. This function is especially critical for recruitment planning, allowing organisations to target hiring efforts to complement existing capabilities. The sharp increase in ratings related to institutional applications, such as planning programmes of study and extending support at an organisational level, signals a broader institutionalisation of the model. No longer confined to individual assessment, it is now being integrated into long-term strategies for workforce development and capacity building.

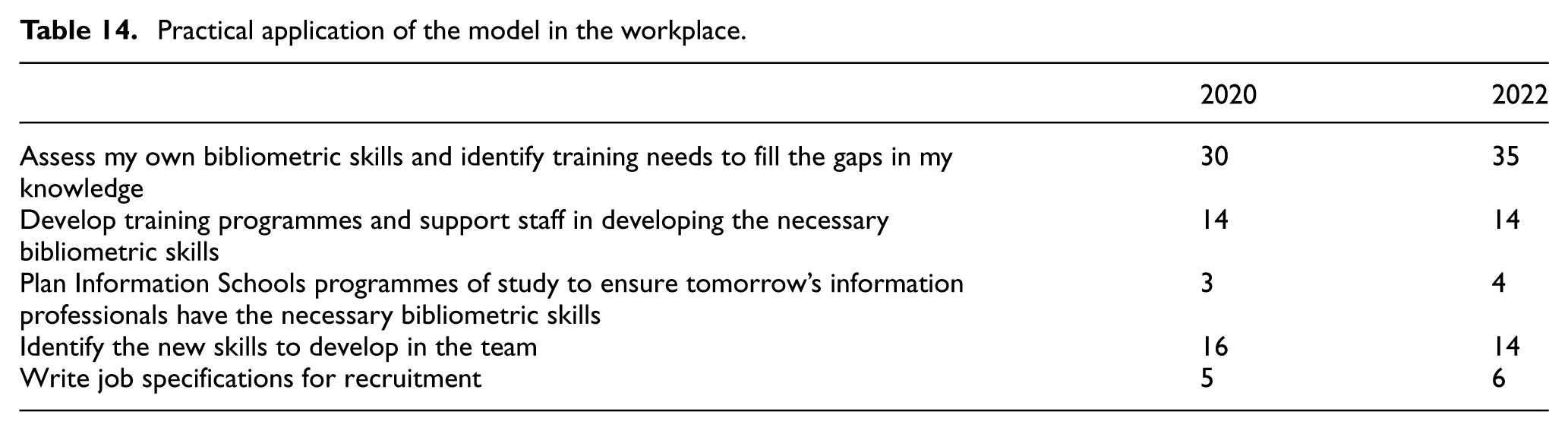

Table 14 highlights the practical applications of the competencies model in the workplace, based on responses from participants.

Table 14. Practical application of the model in the workplace.

The data show that the competencies model is most widely used for self-assessment and identifying training needs. This consistency over time underscores the model’s enduring relevance as a reflective tool, helping individuals assess their own bibliometric competencies and target areas for development. Its accessibility and clarity likely contribute to its sustained adoption in this context.

However, its use in more strategic or institutional functions remains limited. Applications such as developing training programmes and identifying team-level skill gaps are reported by fewer than one-third of respondents. Similarly, the model’s use for writing job specifications or planning Information School curricula remains marginal. These findings suggest that while the model is well adopted at the individual level, it has not yet become fully integrated into organisational or educational planning workflows.

This discrepancy between perceived value (as seen in Figure 5) and practical implementation highlights a gap between potential and actual use. The model’s utility for team development, recruitment and curriculum design may not yet be fully recognised or operationalised within institutions. Factors such as limited managerial awareness, lack of integration into HR processes, or role constraints of practitioners themselves could explain this underutilisation.

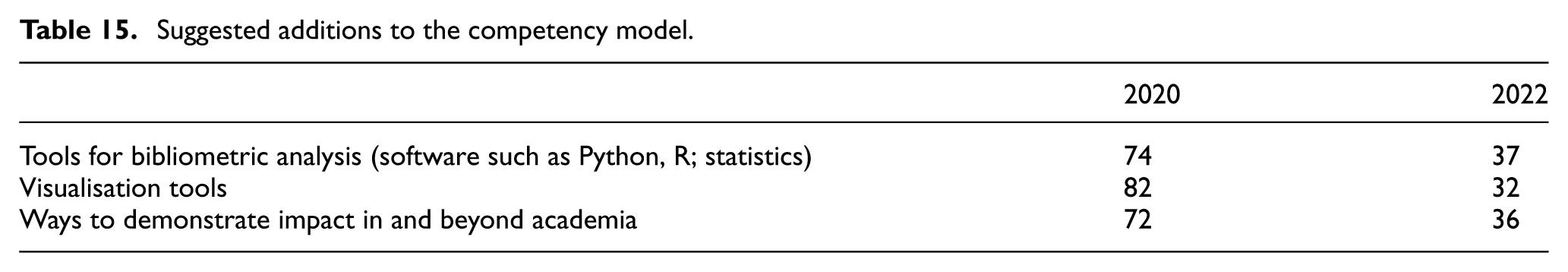

Table 15 presents the suggested topics, tasks, knowledge and skills to add to the competencies model. The table reflects participants’ interest in expanding the model to cover additional areas relevant to bibliometrics practice.

Table 15. Suggested additions to the competency model.

The model appears responsive to user feedback. When participants suggested additional competencies, the top recommendations – tools for bibliometric analysis (e.g. Python, R), visualisation tools, methods for demonstrating impact – were incorporated in the updated version. Looking forward, the rapid development of artificial intelligence (AI) suggests that the model will need ongoing updates to remain aligned with emerging technologies and professional practices.

These results indicate sustained interest in technical skills (e.g. programming, statistics), data communication and the societal relevance of research – three areas that are increasingly central to bibliometric work. The recurring themes of technical tools, visualisation and societal impact highlight a shared understanding of the core competencies required in modern bibliometric practice. This alignment is further supported by findings from a workshop at the LIS Bibliometrics Conference at the University of Leeds, which explored training needs directly with practitioners.

Over 90% of the 54 workshop participants indicated that they would have appreciated more bibliometric training. This widespread need may reflect not only gaps in prior formal education but also the accelerating pace of technological innovation and evolving methodological frameworks, both of which require continual upskilling.

The workshop at Leeds identified five priority areas for further bibliometric training:

1. Applications for data analysis, combined with statistical literacy
2. Data visualisation skills
3. Ethical and responsible use of metrics
4. Programming, coding and AI
5. Use of APIs for efficient, automated data collection

This ranking highlights a strong demand for both technical skills and ethical, responsible practices, reflecting the evolving requirements of modern bibliometric work.

4. Discussion and conclusion

The findings of this study highlight the importance of a comprehensive, forward-looking conceptual model of bibliometric competencies: one that captures current practices, supports ongoing professional development in response to the rapidly evolving demands of bibliometrics and anticipates future needs. The survey and workshop data provide descriptive insights into self-assessed expertise, tool use and training priorities; their value lies not only in illustrating patterns across respondents’ experiences but also in revealing conceptual gaps in how bibliometric work is understood, performed and supported. These insights underscore the need for the model to remain dynamic, incorporating continual feedback and periodic updates to stay aligned with emerging technologies, methodological innovations and evolving professional expectations.

Empirically, a key theme that emerged is the tension between perceived proficiency and actual training pathways. Many respondents reported working at an advanced level despite limited years of experience and a lack of formal education in bibliometrics. This apparent overestimation of competence may stem from the rapid acquisition of tool-specific skills through vendor-led training or peer learning, channels that often bypass foundational knowledge and critical engagement with the field. These findings highlight limitations in current training models and point to the need for a scaffolded framework that defines competency levels and provides structured development pathways, from basic awareness to expert-level analytical skills.

A second empirical pattern relates to professional identity. Many participants identified themselves as ‘Bibliometricians’, despite this title being largely absent from formal job classifications. This signals an emerging sense of professional belonging within a domain that remains institutionally unrecognised, even though bibliometric expertise is frequently embedded in adjacent roles such as research support, scholarly communication or library analytics. From an interpretive standpoint, this blurring of role boundaries underscores the need for a shared, role-inclusive model that standardises competencies across diverse job titles and organisational contexts, thereby clarifying expectations for training, responsibilities and career progression. The surveys reveal a notable paradox: while practitioners articulate an increasingly coherent professional identity, the institutional frameworks required to legitimise and sustain that identity remain underdeveloped.

The theoretical implications of these findings extend beyond the descriptive patterns. Central to the conceptual model required is a shift away from viewing bibliometric skills purely in technical terms. A robust framework must be multi-dimensional, incorporating five core areas: (1) foundational knowledge (e.g. citation theory, scholarly communication and data structures), (2) methodological competence (e.g. metrics interpretation, bibliometric indicators and statistical reasoning), (3) ethical awareness and research integrity (e.g. responsible use of indicators, equity in research evaluation and questionable practices), (4) technical fluency (e.g. APIs, programming languages, visualisation tools) and (5) communicative ability (e.g. translating complex metrics for non-experts and informing policy decisions). Such a model would recognise that bibliometric competence is not merely a matter of operating tools, but also of contextual understanding, critical thinking, communication and the ability to act responsibly in high-stakes evaluation environments.

The study also uncovers one of the most pressing conceptual challenges: the commodification of bibliometric expertise. Although the empirical data show widespread use of commercial platforms such as SciVal, InCites and Dimensions, the broader implication is that these tools have enabled a large number of professionals to produce bibliometric analyses without necessarily engaging with the underlying theoretical or methodological frameworks. This has led to a ‘plug-and-play’ culture in which automated outputs are prioritised over interpretation, often at the expense of accuracy and nuance. As Bornmann (2015) notes, bibliometric indicators can be easily misapplied when used without sufficient contextual knowledge, especially in institutional or policy settings where decisions carry significant consequences. A competency model should therefore help practitioners distinguish between operational skills and analytical judgement, reinforcing the interpretive core of bibliometric work.

The empirical findings regarding training sources further reinforce the theoretical need for a stronger educational infrastructure. In most LIS programmes, bibliometrics is either absent or limited to an elective module, research or tool-based tutorials. Respondents reported relying heavily on vendor-led training, peer support or self-teaching – approaches that contribute to fragmented and uneven learning experiences. The broader implication is that the LIS field must play a central role in embedding bibliometric competencies within curricula, ensuring that professionals are equipped with both conceptual understanding and practical skills. Training that focuses solely on tool functionality without discussing data sources, metadata structures and interpretive risks is unlikely to build meaningful competence.

In addition, emerging threats and opportunities in the scholarly communication landscape introduce conceptual pressures that current competency models do not sufficiently address. Issues such as questionable publishing, citation manipulation, hijacked journals and AI-generated content (including instances where tools like ChatGPT may fabricate citations) raise new challenges for the ethical use and interpretation of bibliometric data. In parallel, the increasing availability of open and alternative data sources, such as OpenAlex and Overton, requires competencies in data integration and metadata accuracy. These conceptual challenges call for a competencies model that integrates both established principles and emergent demands.

Furthermore, both the surveys and the workshop data consistently highlight the growing demand for data science and programming skills, signalling a shift in the technical baseline of bibliometric work. While earlier frameworks may have prioritised familiarity with indicators and databases, the future of bibliometrics increasingly requires professionals to handle large-scale datasets, automate workflows and apply computational methods. Yet, as the broader discussion suggests, technical skills are not sufficient. The ability to communicate insights clearly, work collaboratively with researchers and senior leaders and engage with the discourse on the responsible use of metrics remains essential to the professional practice of bibliometrics.

Taken together, the empirical patterns and conceptual reflections point to an inflection point in the field: bibliometric work is no longer a niche or ancillary function but has become a core and strategically significant activity within institutions. The competencies required to perform it effectively must therefore be cultivated and evaluated with the rigour that the significance of the work demands. These findings collectively suggest that the growing demand for bibliometric expertise is not yet matched by formal education.

The emergence of a fragmented learning ecosystem, combined with rapid technological and methodological change, underscores the need for a more structured and inclusive approach to capacity building. Addressing this gap will require not only an updated conceptual model but also institutional commitment to embedding bibliometric training within formal educational frameworks.

In conclusion, this study demonstrates the pressing need for LIS faculties and schools to rethink and enhance their educational programmes, particularly in bibliometrics. While LIS professionals may possess a solid theoretical understanding of bibliometric concepts, significant gaps remain in the practical application of this knowledge and in linking theory and practice. Most participants are employed in libraries, with smaller numbers working in research offices and academic departments. Despite the widespread presence of LIS qualifications among respondents, many did not receive formal training in bibliometrics during their academic studies.

It is also important to acknowledge the limitations of this study. The geographical scope was concentrated primarily in the United Kingdom and Spain, with additional responses from the RedIRIS network, limiting the generalisability of the findings. The absence of participation from Latin America and the lack of responses from North America indicate that future research should seek to engage a broader international community. Moreover, while data were collected in 2020 and 2022, it was not possible to determine whether the same individuals participated in both surveys, creating uncertainty about the consistency of responses over time. Variation in respondents’ roles and professional experiences further complicates interpretation, as small sample sizes prevented meaningful subgroup analysis. In addition, there was no clear indication of which version of the competencies model participants were familiar with, complicating assessments of changes in awareness or application between 2020 and 2022.

Given these limitations, the study’s results should be interpreted with caution. Further research involving a larger and more diverse sample – and addressing both geographical and methodological gaps – is needed to develop a more comprehensive understanding of the bibliometric landscape. Nonetheless, this study provides important insights into the current state of bibliometric education and practice, underscoring the need for more robust, practice-oriented training that bridges the gap between theory and application and offers a clearer pathway for the professional development of bibliometric specialists.

Based on these findings, several practical recommendations emerge for stakeholders responsible for developing bibliometric expertise. For LIS schools, the results underscore the need to embed bibliometrics as a core, not peripheral, component of curricula by introducing scaffolded courses that integrate theoretical foundations with hands-on analytical work, exposure to multiple data sources and training in responsible use of metrics. Institutional managers should establish structured professional development pathways that go beyond tool-specific training, including mentorship schemes, protected time for methodological upskilling and clear competency expectations aligned with job roles and career progression. Policymakers and professional bodies are encouraged to formalise bibliometric competencies at the sector level by developing standards, accreditation mechanisms and guidelines for ethical evaluation practice, thereby ensuring consistent training quality and reducing reliance on vendor-led instruction. Together, these measures would strengthen the professional infrastructure of bibliometrics, promote methodological rigour and support a more coherent, well-prepared workforce across research institutions.

Although directly comparable empirical studies on bibliometric competencies are limited, existing international research highlights similar concerns regarding uneven training pathways and the dominance of tool-centred learning. These parallels situate our study as one of the first region-specific analyses to provide empirical evidence on these issues within the LIS communities of the United Kingdom and Spain and contribute to a growing international conversation on bibliometric capacity building.

This study makes several original contributions to the understanding of bibliometric practice and professional development. First, it provides one of the few empirical examinations of how bibliometric competencies are perceived and enacted across diverse professional roles, revealing a systematic misalignment between self-assessed expertise, actual training pathways and institutional expectations. Second, it identifies the emergence of a distinct yet institutionally unrecognised professional identity – ‘the Bibliometrician’ – offering new insight into how practitioners position themselves within rapidly evolving research evaluation environments. Third, the study exposes structural weaknesses in current learning ecosystems, demonstrating that vendor-led, tool-centric training has become the de facto educational pathway in the absence of robust LIS-based instruction. Fourth, it advances the field conceptually by proposing a multi-dimensional competencies framework that integrates technical, methodological, ethical and communicative capacities, thereby addressing gaps in existing models. Finally, the study situates bibliometrics at a critical inflection point, arguing that it has become a strategic institutional function requiring formalised competencies, curricular integration and clearer professional recognition. Together, these contributions provide a strong empirical and theoretical foundation for rethinking how bibliometric expertise is defined, cultivated and supported across the LIS and research evaluation communities.

Appendix 1

Acknowledgements

For the purpose of open access, the authors have applied a Creative Commons Attribution (CC-BY) licence to any Author Accepted Manuscript version arising from this submission.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Data availability statement

The raw data are not publicly available, in order to preserve individuals’ privacy under the European General Data Protection Regulation.