Abstract

The purpose of this study is to explore the factors influencing individuals’ intentions to adopt generative AI, particularly within the context of digital literacy. It examines the impact of human capabilities and the relationship with technology from a transhumanist perspective. The study utilises an integrated framework combining the Unified Theory of Acceptance and Use of Technology (UTAUT) and the information quality dimension of the Information Adoption Model (IAM). The moderating effect of attitudes towards AI is also analysed. Survey data from 362 participants in Turkey were collected and analysed using partial least squares structural equation modelling (PLS-SEM), supported by multi-group analysis based on digital literacy levels determined through clustering. The findings indicate that information quality, performance expectancy, social influence and attitudes towards AI significantly influence AI adoption intention. However, effort expectancy does not have a significant effect. Multi-group analysis reveals that higher digital literacy strengthens the impact of attitudes towards AI on adoption, and the effect of social influence on attitudes towards AI. Conversely, lower digital literacy strengthens the effect of information quality on adoption intention. This research contributes to the understanding of the role of digital literacy in generative AI acceptance. It provides insights for improving digital competence to facilitate AI adoption, especially where digital literacy levels are diverse. It also highlights that the moderating role of digital literacy varies across predictor–outcome relationships, a pattern that has not been extensively explored in the existing literature.

1. Introduction

Recent advancements in generative artificial intelligence (GenAI), particularly in the realm of creativity and content production, have begun to reshape prevailing assumptions about the roles of humans and machines [1]. Historically, the traditional task paradigm of human–computer interaction assumed that computers would support users in structured, predefined tasks, while creativity and content generation were uniquely human capabilities. However, this assumption has been increasingly challenged by the advancements in artificial intelligence’s capacity to generate content [2].

In addition to content creation, generative AI can also address more technical challenges such as generating realistic synthetic data [3] and detecting anomalies (e.g. in time series) [4]. GenAI’s potential for generating high-quality content and enhancing human-machine interaction has been acknowledged and adopted across a range of industries. Furthermore, the use of GenAI tools is critical in the transition to intelligent systems that reduce the need for human intervention in the future [5].

The development of GenAI technologies is expected to have a profound impact on global business processes and the global economy. Goldman Sachs Research predicts that these technologies could affect 300 million full-time jobs and potentially contribute to a 7% expansion in global GDP [6]. It is predicted that the GenAI sector could reach $118.6 billion by 2032 [7]. GenAI tools have garnered significant interest in both industrial and academic contexts. These tools contribute to academic research across numerous interdisciplinary applications, ranging from social sciences [8] to the health sciences [9]. Recent studies suggest that GenAI tools, particularly those that leverage large language models, can provide educators and students with powerful resources [10,11].

Despite rapid advances in GenAI technologies, there is still limited understanding of how individuals perceive and adopt these innovations. Existing studies conducted in various contexts, such as the healthcare sector [1], product development [12] and general use [13], have examined users’ intention to adopt these technologies by considering GenAI adoption within the framework of different theoretical models. However, only limited research has examined individuals’ perceptions of the quality of AI-generated information [14] and the impact of their attitudes towards GenAI on the adoption process [15]. Furthermore, these valuable contributions reveal that, particularly in the context of GenAI, the role of digital literacy in shaping individuals’ information evaluation skills in decision-making and technology acceptance processes has not yet been comprehensively addressed.

This study examines the adoption intentions of individuals aged 18 and above in Turkey regarding general everyday use including educational, professional and personal purposes by considering digital literacy levels alongside performance expectancy, effort expectancy, social influence, information quality and attitudes towards artificial intelligence. To this end, a comprehensive conceptual model is proposed, integrating the Unified Theory of Acceptance and Use of Technology (UTAUT) [16] with constructs related to information quality [17] and attitudes towards AI [18]. Digital literacy levels are classified using a clustering method, and a multi-group analysis (MGA) is conducted to compare how different literacy levels influence the GenAI adoption process.

The findings are expected to provide valuable insights into how digital literacy shapes individuals’ trust in AI-generated information and affects their adoption behaviours. Given that the integration of generative AI into daily life represents one of the most profound technological transformations of the 21st century (accelerated by the public release of ChatGPT in 2022), understanding these dynamics is essential not only for academic discourse but also for societal adaptation. This is particularly critical for adults aged 18 and above, who constitute most of the economically active population and directly experience AI’s transformative effects in the labour market. Differences in digital literacy within this group risk creating an ‘AI divide’, whereby unequal skills and competencies lead to disparities in access to, use of and benefit from AI technologies. Moreover, concerns about the reliability, credibility and quality of AI-generated information have direct implications for decision-making in high-stakes contexts such as education, healthcare and governance.

From a practical standpoint, the results are expected to guide policymakers in developing digital inclusion strategies, assist educators in designing AI literacy curricula and support technology designers in creating transparent, trustworthy and user-centred AI systems. Furthermore, the study investigates how perceived information quality shapes individuals’ attitudes towards AI, and how this, in turn, indirectly influences the adoption of GenAI, thereby offering a theoretical contribution to technology acceptance models. By integrating cognitive (trust, perceived information quality) and affective (attitude) dimensions into a unified framework, this research extends existing technology acceptance theories and provides a more comprehensive understanding of user behaviour in the era of generative AI. The research questions examined in the study are as follows:

RQ1. How do individuals’ perceptions of performance expectancy, effort expectancy, social influence and information quality, along with their attitudes towards AI, affect their intention to adopt and use GenAI tools?

RQ2. What kind of impact does attitude towards AI have in mediating the influence of other variables on individuals’ intention to adopt and use GenAI tools?

RQ3. How do individuals’ digital literacy levels affect their intention to adopt and use GenAI tools?

2. Conceptual framework and literature review

2.1. Generative artificial intelligence

GenAI is a type of artificial intelligence that can generate new content such as text, images, and audio based on previously learned patterns. GenAI models distinguish themselves from traditional machine learning approaches through their ability to produce novel content by leveraging deep learning techniques [19]. Theoretically, this technology is based on four conceptual frameworks. The first of these is machine learning (ML), which serves as a fundamental concept for GenAI. Due to its operational principles, ML facilitates GenAI’s ability to generate diverse content by learning from large datasets [20].

Natural Language Processing (NLP) is a field of artificial intelligence that focuses on the structure of human language, enabling computers to understand, interpret and generate text. NLP plays a critical role in generative artificial intelligence, as the accuracy and coherence of the text generated by language models are closely related to a deep understanding of human language [21]. Image processing is a process that involves analysing, processing and manipulating image data [22]. In image-focused generative AI applications, image processing plays a crucial role, particularly in tasks such as image enhancement, compression, filtering and noise reduction. Another key concept is computer vision, which is defined as the ability of computers to comprehend, analyse and interpret images [23]. Given its relevance to the creation of original, high-quality and realistic visual content, computer vision is a crucial aspect of generative AI [24]. In this context, the combined use of computer vision and image processing allows GenAI to operate more effectively in visual tasks.

An expanding body of research examines individuals’ intentions to utilise GenAI tools in professional and personal contexts. Khlaif et al. [25] and Ayyoub et al. [26] investigated educators’ acceptance of GenAI in higher education, while Tiwari et al. [27] and Strzelecki [28] examined the intentions of higher education students to use ChatGPT. Similarly, Nan et al. [13] aimed to examine the factors influencing individuals’ intentions to continually use and recommend ChatGPT for general purpose tasks.

2.2. Technology acceptance

With the advancement of information technologies, various theories have been proposed to explain users’ adoption and use of these technologies. One of the most prominent among these theories is the Unified Theory of Acceptance and Use of Technology (UTAUT). Venkatesh et al. [16] introduced UTAUT to address the limitations of prior models. Since its development, UTAUT has made significant and comprehensive contributions to the literature on technology acceptance and use. Another important theory related to the acceptance of information technologies is the Information Adoption Model (IAM). IAM integrates the Technology Acceptance Model (TAM) and the Elaboration Likelihood Model (ELM) and focuses on the process by which users evaluate and adopt acquired information, along with the factors influencing this process [17]. While UTAUT primarily addresses behavioural intention and technology usage, IAM adds a complementary focus on how users assess the quality and credibility of the information produced. Although UTAUT is a highly useful model, some researchers have suggested that it can be extended by excluding certain factors or incorporating new ones depending on specific contexts [29–31]. In this regard, the adoption of GenAI technologies extends beyond users’ intention to utilise these tools and includes how the information generated by these technologies is perceived and evaluated. While the UTAUT model focuses on individuals’ intentions to adopt a technology, the IAM model examines users’ responses to the quality of information produced by GenAI. In addition, this study retains the ‘attitude’ construct from the original TAM, despite TAM2 and UTAUT introducing ‘perceived usefulness’ as a dominant factor, because attitude captures affective responses such as enjoyment, trust and perceived creativity that are particularly relevant in the context of generative AI. 
This affective dimension offers explanatory value beyond performance expectancy and perceived usefulness and can mediate the relationship between these constructs and behavioural intention. This study aims to integrate the concept of information quality from the IAM model into UTAUT to provide a more comprehensive understanding of the GenAI adoption process.

The UTAUT theory has served as a reference framework for some researchers examining the intention to use GenAI technologies. For instance, Strzelecki and ElArabawy [32] investigated the ChatGPT usage intentions of higher education students in Egypt and Poland, while Sobaih et al. [33] examined the usage intentions of higher education students in Saudi Arabia. However, there are a limited number of studies in the literature that combine the UTAUT and IAM models. Camilleri [14] conducted a study integrating the UTAUT1/UTAUT2 and IAM models to examine the determinants influencing ChatGPT’s performance expectancy and usage intention. The findings revealed that perceived interactivity positively influences users’ intention to use ChatGPT during the initial phase of its adoption. Moreover, the perception of the information source’s credibility was found to significantly shape users’ expectations regarding ChatGPT’s performance.

2.3. Digital literacy

Digital literacy (DL) is an essential competency for navigating the age of information in which we live. This concept pertains to the integration of three distinct dimensions: technical, cognitive and socioemotional [34]. DL is defined as the totality of individuals’ awareness, attitudes and abilities to identify, manage and analyse information, produce new content and communicate with others using digital tools and resources [35]. DL is characterised as a meaningful and critical engagement with technological tools within the context of human-machine interaction, extending beyond mere access to and usage of technology. This process empowers individuals to adopt conscious, critical and analytical approaches in the digital realm. Bawden [36] highlights that digitally literate individuals not only possess the skills to effectively locate, manage and organise digital resources but also the capacity to generate new knowledge and develop innovative projects using these resources.

The growing incorporation of GenAI into daily life highlights the importance of the connection between the adoption of this technology and digital literacy. This connection is evident not only in GenAI’s ability to create content but also in the critical evaluation of such content in terms of reliability and ethical considerations. Floridi and Taddeo [37] emphasised that the ethical use of AI hinges on technological awareness and critical thinking skills. Within this framework, exploring the impact of DL on the adoption of GenAI can offer important insights into adoption processes and support the broader public’s informed integration with this technology.

In the literature review, no research has yet been found that examines the intention to use GenAI based on DL levels within the framework of technology acceptance. However, other studies demonstrated that digital literacy significantly influences digital technology acceptance, either directly or indirectly [38,39].

2.3.1. Model conceptualisation and hypothesis development

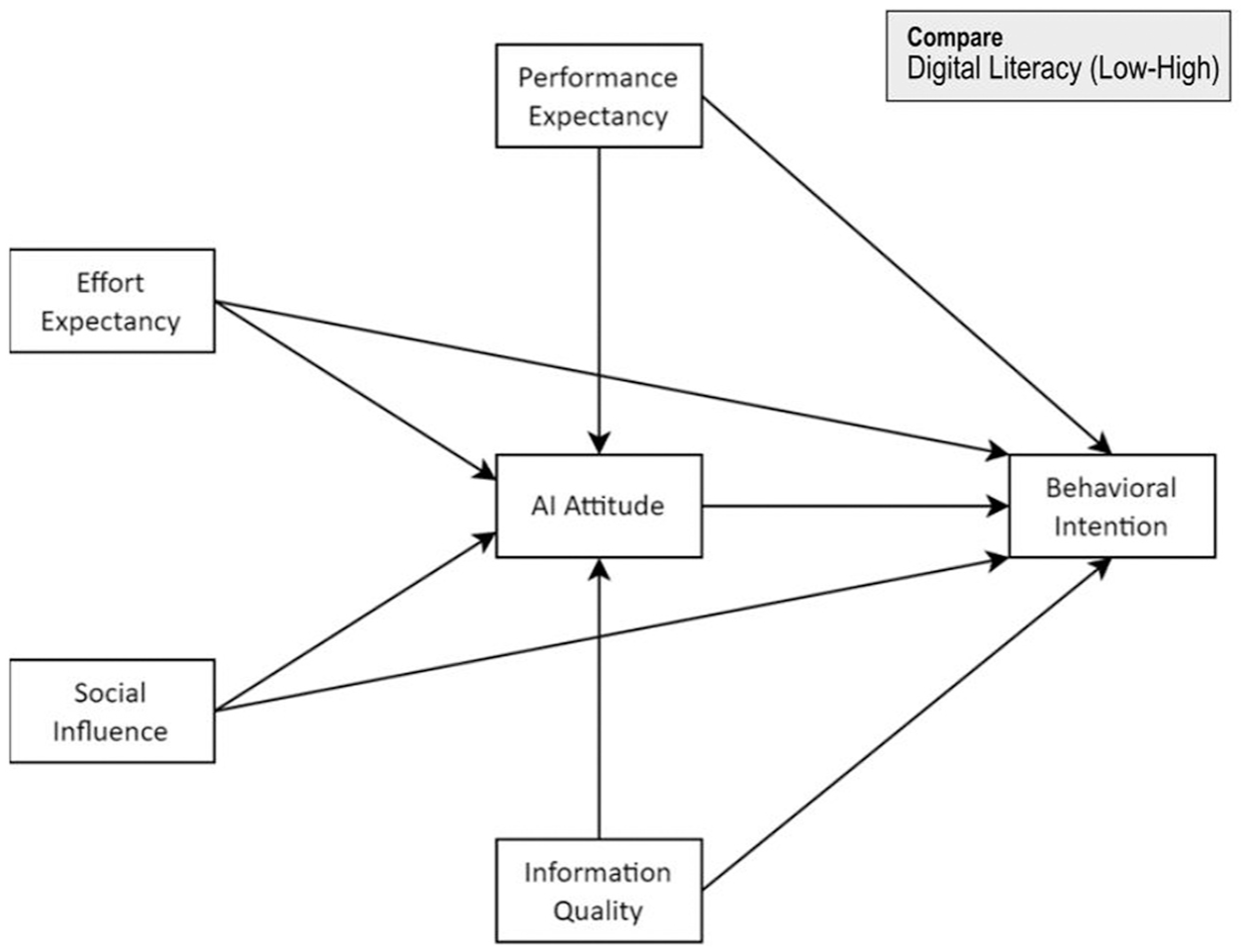

This study applied the UTAUT and IAM models, in conjunction with the attitude construct, to assess individuals’ motivation for adopting generative artificial intelligence tools. Furthermore, the study examined how attitudes towards artificial intelligence operate as a mediating factor in the process of adoption. The conceptual framework developed for the current purpose is given in Figure 1.

Figure 1. Conceptual framework.

2.4. Performance expectancy

According to the UTAUT theory, performance expectancy (PE) is a fundamental construct influencing individuals’ intentions to adopt and utilise technology. Venkatesh et al. [40] define this construct as a person’s conviction that employing a technological solution will improve job performance. Similarly, Luo et al. [41] argued that performance expectancy serves as a key factor in explaining users’ intention to embrace a technology. A growing body of research suggests that PE plays a crucial role in shaping users’ intentions to adopt technologies [1,10,14,32,33,42].

The performance that users expect from a technology also influences the attitudes they adopt towards it. Various studies report that PE is a significant factor in positively influencing attitudes towards artificial intelligence technologies [15,43]. However, Chatterjee and Bhattacharjee [30] determined that performance expectancy had no substantial influence on attitudes in their investigation of the integration of AI technologies among university students.

In this study, PE pertains to the belief that GenAI tools can enhance productivity in routine or specific tasks. Accordingly, we put forth the subsequent hypotheses:

H1. There is a positive relationship between PE and behavioural intention.

H2. PE is positively associated with attitudes towards AI.

2.5. Effort expectancy

Effort expectancy (EE) encapsulates individuals’ beliefs about how effortlessly and straightforwardly they can interact with a given technology [40]. EE influences how easily users can integrate a technology into their daily routines, positioning it as an essential aspect of technology adoption [44]. Numerous studies indicate that the perceived ease of use of technology plays a crucial role in shaping individuals’ decisions to adopt it [10,14,28,32,45]. However, in the Egyptian sample of Strzelecki and ElArabawy’s [32] study and in the work of Sobaih et al. [33], effort expectancy did not significantly influence users’ behavioural intention towards ChatGPT adoption.

Like PE, EE serves as a pivotal factor in influencing users’ perceptions and disposition towards technology adoption. Extensive experimental investigations have shown that the perceived effort required significantly affects attitudes within the realms of AI [30] and GenAI [15]. However, Alzahrani [43] found that EE did not significantly affect attitudes in his study on higher education students’ perceptions and behaviours regarding AI. In this study, EE denotes the anticipated simplicity in employing GenAI technologies and the minimal cognitive effort required to engage with these tools. Building upon this foundation, we propose the subsequent hypotheses:

H3. There is a positive relationship between EE and behavioural intention.

H4. EE is positively associated with attitudes towards AI.

2.6. Social influence

Social influence (SI) refers to the extent to which influential individuals encourage or support the adoption of a specific technology [40]. This construct corresponds to the concept of subjective norm, indicating informal agreements among peer groups [46]. In this context, subjective norm suggests that when individuals perceive an expectation for certain conduct from those around them, they are inclined to demonstrate that conduct, even if they do not personally approve of it or its outcomes [47]. The literature demonstrates the significant impact of social influence on individuals’ intentions regarding the adoption of generative artificial intelligence [14,28,32,33,45]. However, social influence demonstrated no discernible effect on the intention to utilise generative AI in the studies reported by Foroughi et al. [48] and Al-Emran et al. [42].

In addition, the effect exerted by social influence upon attitude has been empirically confirmed across numerous studies, highlighting it as a primary determinant of attitude [15,49,50]. In this study, SI is conceptualised as the extent of users’ perception that significant others endorse their adoption of GenAI technologies. The hypotheses proposed are as follows:

H5. There is a positive relationship between SI and behavioural intention.

H6. SI is positively associated with attitude towards AI.

2.7. Information quality

Information quality (IQ) is a crucial factor in technology acceptance [17,51]. It pertains to the degree to which a message is perceived as current, clear and useful by an individual [52]. Users frequently express concerns regarding the precision and reliability of information delivered by technology-based systems. High-quality information positively influences users’ perceptions of technology, leading to increased user satisfaction and higher rates of technology adoption [53]. Consequently, the quality of information is crucial in shaping users’ acceptance of technology.

Several empirical studies have explored the indirect influence of information quality on individuals’ intention to use GenAI. For instance, Camilleri [14] found that the perceived quality of information provided by ChatGPT did not have a significant impact on PE. Conversely, Nan et al. [13] identified a positive relationship between IQ and ChatGPT adoption, mediated by confirmation and satisfaction. Furthermore, while some studies suggest that IQ plays a crucial role in shaping individuals’ technology adoption intentions [54,55], Lee et al. [56] reported no significant effect of IQ on users’ intention to adopt food delivery applications.

IQ significantly influences user attitudes. Users’ trust in technology grows in proportion to the perceived reliability, accuracy and timeliness of the information, fostering a favourable perception of the technology [57]. Extensive research has consistently shown that information quality plays a crucial role in shaping user attitudes, exerting a significant positive influence [58,59]. In this study, IQ denotes individuals’ assessments of the perceived quality of information produced by GenAI tools. Accordingly, the research hypotheses are as follows:

H7. IQ has a positive relationship with behavioural intention.

H8. IQ is positively associated with attitudes towards AI.

2.8. AI attitude

AI attitude (AIA) denotes an individual’s inclination to perceive and assess an entity, person, or context in either a positive or negative way [60]. It is a critical construct in technology acceptance, as it encompasses the general emotional response to technology and significantly shapes one’s intention to adopt it. Within the framework of the TAM proposed by Davis et al. [61], attitude is recognised as a strong determinant of behavioural intention. Research has demonstrated that a favourable attitude towards AI [30,43,62,63] and GenAI [27,64] enhances the probability of adopting these technologies. In this study, AIA is conceptualised as users’ comprehensive assessment of the services and functionalities offered by GenAI tools.

Although the attitude construct has shown contextual variability and has been criticised in prior models for overlapping with constructs such as perceived usefulness (TAM2, UTAUT), recent studies indicate that in emerging technology contexts (particularly with content-generating AI) attitude captures affective dimensions (e.g. enjoyment, trust, perceived creativity) that are not fully explained by cognitive beliefs alone. These affective evaluations can be especially salient when users interact with generative systems that produce novel and unpredictable outputs. Accordingly, the inclusion of AIA in the present model allows for a more nuanced understanding of how both cognitive and emotional factors jointly shape behavioural intention, while also enabling the examination of its mediating role between key predictors (PE, EE, SI, IQ) and intention.

In light of this, we propose the following hypotheses:

H9. There is a positive relationship between AIA and behavioural intention.

H10. The positive relationship between (a) PE, (b) EE, (c) SI, (d) IQ and behavioural intention is mediated by AIA.

H11. The impact of (a) PE, (b) EE, (c) SI, (d) IQ on AIA is likely to differ between two DL groups (Low DL and High DL).

2.9. Behavioural intention

Behavioural intention (BI) serves as a crucial element for explaining technology adoption and use [61]. BI is characterised by the readiness of individuals to employ a technology for particular objectives [40]. Numerous studies investigating the adoption of GenAI have treated behavioural intention as a dependent variable [10,32,33,45,48]. In the present research, BI denotes the readiness to engage with GenAI technologies. We examine how PE, EE, SI, IQ, AIA and DL level impact individuals’ intention to adopt GenAI technologies. Thus, we put forward the following hypotheses:

H12. The impact of (a) PE, (b) EE, (c) SI, (d) IQ on behavioural intention is likely to differ between two DL groups (Low DL and High DL).

H13. The role of AIA as a mediator in the connections among (a) PE, (b) EE, (c) SI, (d) IQ and BI is expected to vary across the two DL groups (Low DL and High DL).

3. Research design

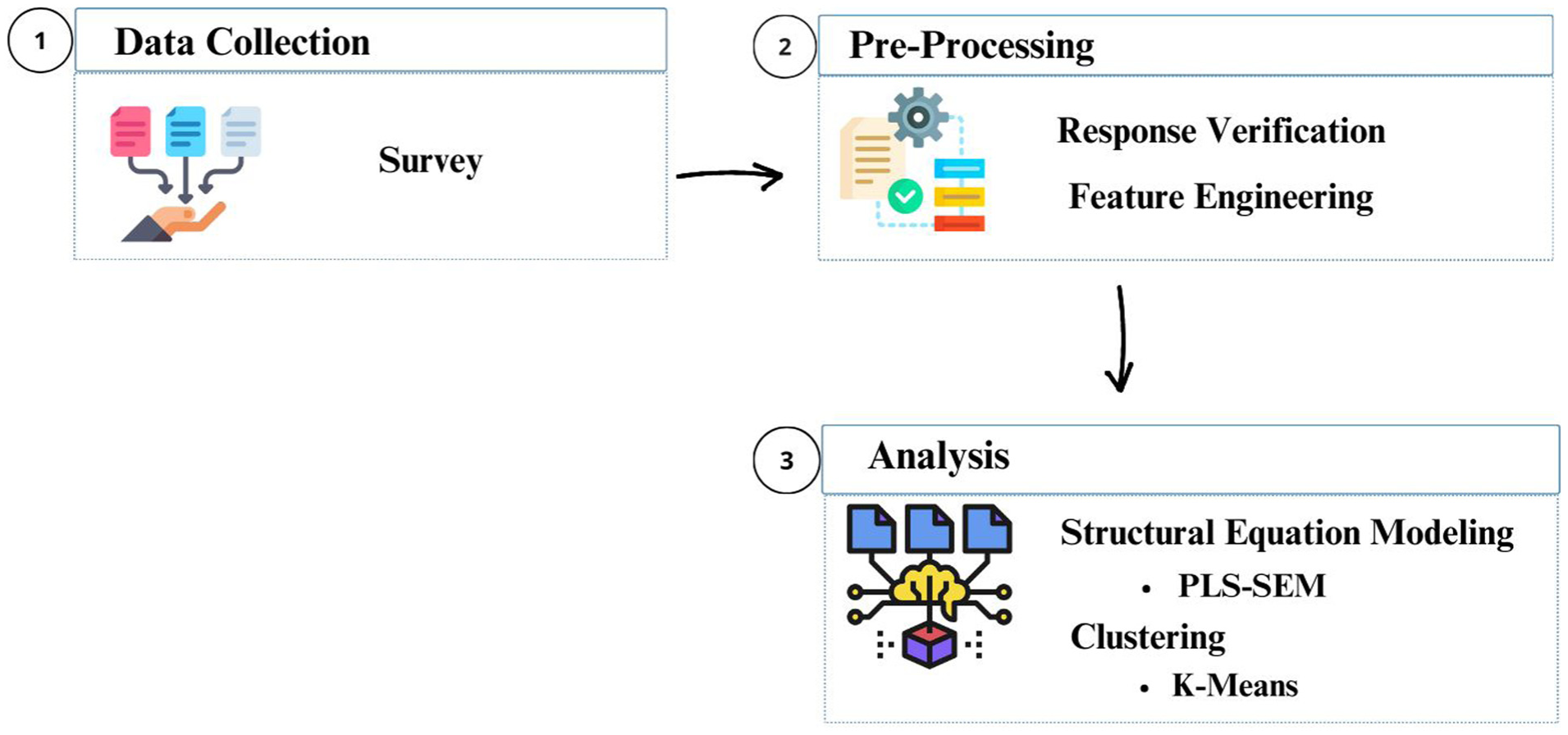

In this section, the processes involved in preparing the dataset for the study, along with the theoretical foundations of the methods employed in the analyses phase, are detailed. The study’s workflow is illustrated in Figure 2.

Figure 2. Flow chart of the study.

3.1. Data collection

In this study, a purposive sampling method was employed to target individuals with at least a basic level of digital literacy and prior experience with AI, to examine the adoption of generative AI technologies. Data were collected through an online survey to ensure geographical diversity, enable rapid and efficient participant access, and maintain consistency with the digital context of the research. This approach enhanced both the validity and applicability of the study. The dataset was obtained between 1 February 2024 and 31 March 2024, targeting individuals aged 18 and above, including students and professionals. The survey was structured in two parts: the first section collected demographic details (age and gender), while the second section included 27 items designed to measure key constructs within the proposed conceptual framework (see Appendix 2). Most survey items were rated on a 5-point Likert-type scale, except for those assessing attitudes towards AI, which employed a 10-point Likert-type scale. To enhance clarity and ensure comprehensibility, a pilot test was conducted with a small participant group, and their feedback was integrated into the final version of the survey. This empirical research adhered to the university’s research ethics guidelines, and all necessary permissions were obtained. A total of 364 participants voluntarily completed the survey.

3.2. Pre-processing

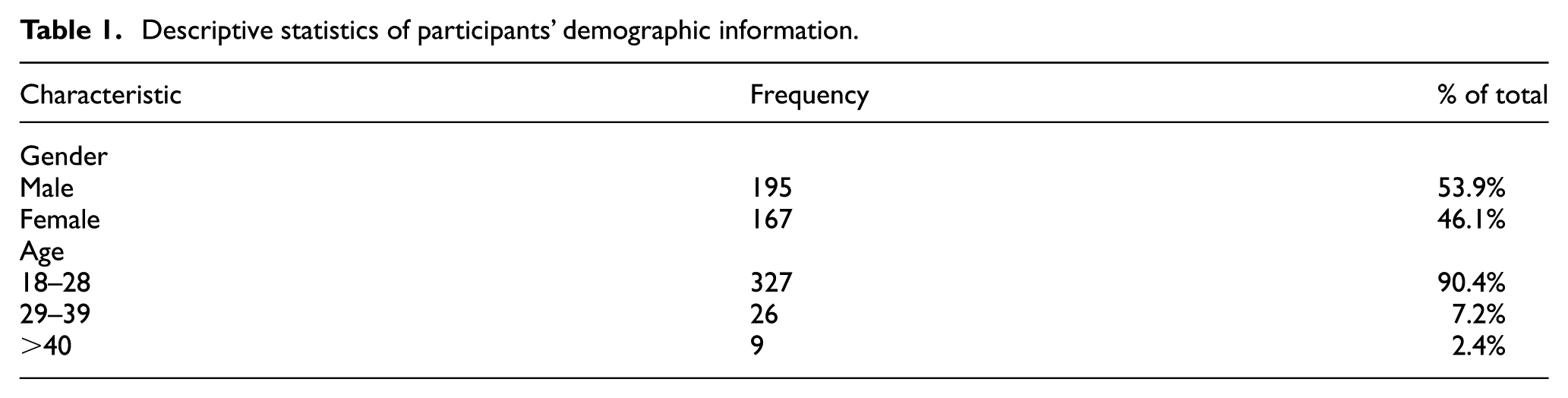

During the initial data screening, it was found that one participant had selected the same response for all survey items, while another had provided unrealistic age information. To uphold the accuracy and reliability of the dataset, these entries were discarded, reducing the final sample to 362 participants. Descriptive statistics for the demographic characteristics of these 362 valid responses are presented in Table 1.

Table 1. Descriptive statistics of participants’ demographic information.

To evaluate digital literacy levels, a clustering analysis was performed using a dataset composed of participants’ responses to digital literacy items. The dataset was restructured through feature engineering, which refers to the process of generating new input variables from raw data. Prior research suggests that feature engineering can support the identification of meaningful patterns in clustering algorithms [65]. Accordingly, the dataset was enriched by including both the average scores calculated as the arithmetic mean of each participant’s responses to the 10-item digital literacy scale, and the groups formed based on the frequencies of their answers – grouped as ‘low’ for responses of 1–2 (‘disagree’), ‘medium’ for 3 (‘neutral’) and ‘high’ for 4–5 (‘agree’) on the 5-point Likert-type scale.
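The feature engineering step described above can be sketched as follows. This is a minimal illustration rather than the authors' actual script: the function name `engineer_dl_features`, the output field names and the example responses are hypothetical, and encoding the frequency groups as per-band counts is an assumption, since the exact encoding is not specified in the text.

```python
from collections import Counter
from statistics import mean


def engineer_dl_features(responses):
    """Derive clustering features from one participant's ten DL item responses.

    Assumption: the 'groups formed based on the frequencies of their answers'
    are encoded as counts of low (1-2), medium (3) and high (4-5) responses.
    """
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("expected ten responses on a 5-point scale")

    def band(response):
        if response <= 2:
            return "low"      # 'disagree'
        if response == 3:
            return "medium"   # 'neutral'
        return "high"         # 'agree'

    counts = Counter(band(r) for r in responses)
    return {
        "mean_score": mean(responses),  # arithmetic mean of the ten items
        "n_low": counts["low"],
        "n_medium": counts["medium"],
        "n_high": counts["high"],
    }


# Hypothetical participant, for illustration only.
participant = [4, 5, 3, 4, 2, 5, 4, 3, 4, 5]
features = engineer_dl_features(participant)
print(features)
```

Each participant is thereby represented by a mean score plus three frequency counts, which together form the input to the clustering step.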

As shown in Table 1, the study sample comprised 195 (53.9%) male participants and 167 (46.1%) female participants. To facilitate analysis, participants were divided into three distinct age brackets: 18–28, 29–39, and 40 and above. The majority of respondents belonged to the youngest cohort (n = 327, 90.4%), while a smaller proportion fell within the 29–39 range (n = 26, 7.2%). The least represented group comprised individuals aged 40 and older (n = 9, 2.4%).

3.3. Analysis

This study adopted a multifaceted analytical approach, integrating partial least squares structural equation modelling (PLS-SEM) using SmartPLS 4 [66] alongside cluster analysis executed in R (version 4.3.1) within RStudio.

3.3.1. PLS-SEM

PLS-SEM is a variance-oriented structural equation modelling (SEM) approach designed to analyse intricate relationships between observed and latent constructs without imposing strict distributional assumptions [67]. Its core objective is to enhance predictive accuracy and explanatory power for the key dependent variable while simultaneously identifying the underlying factors that drive its variation [68]. PLS-SEM employs a model estimation approach that synthesises indicator variables into composite constructs through a linear combination process within the measurement framework [69]. This method enables a nuanced representation of latent variables, enhancing the model’s capacity to capture intricate relational dynamics.

3.3.2. K-means

The k-means clustering method is a nonparametric algorithm that partitions data into k distinct clusters [70]. The algorithm aims to maximise intra-cluster similarity while minimising inter-cluster similarity by iteratively assigning data points to clusters. The process begins with k randomly selected centre points, to which data points are assigned based on proximity. The centres are then recalculated, and this process is repeated until the centre points stabilise [71].
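The iterative procedure described above can be sketched in plain Python. This is an illustrative implementation under stated assumptions, not the R routine used in the study: the function names, the fixed random seed and the toy two-dimensional points are hypothetical, and convergence is detected when assignments stop changing, which implies that the centres have stabilised.

```python
import random


def squared_distance(p, q):
    """Squared Euclidean distance between two points of equal dimension."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def k_means(points, k, seed=0, max_iter=100):
    """Partition points into k clusters; returns (labels, centres)."""
    rng = random.Random(seed)
    centres = rng.sample(points, k)  # k randomly selected starting centres
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centre.
        new_labels = [
            min(range(k), key=lambda c: squared_distance(p, centres[c]))
            for p in points
        ]
        if new_labels == labels:  # no change, so the centres are stable
            break
        labels = new_labels
        # Update step: move each centre to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # an empty cluster keeps its previous centre
                centres[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels, centres


# Hypothetical, well-separated toy data (not the study's dataset).
points = [(1.0, 1.2), (0.8, 1.0), (1.1, 0.9), (4.0, 4.2), (4.1, 3.9), (3.9, 4.0)]
labels, centres = k_means(points, k=2)
print(labels, centres)
```

Because the starting centres are random, different seeds can yield different cluster numbering, and on less clearly separated data they can also yield different partitions.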

The k-means algorithm is a straightforward, fast and effective clustering method; however, it has limitations, such as requiring the number of clusters to be specified in advance. Various methods have been proposed in the literature to address this limitation. In this study, the Silhouette method, recognised for its effectiveness, was selected to determine the optimal number of clusters [72]. For each data point, the Silhouette method compares the average distance to the other points in its own cluster with the average distance to the points in the nearest neighbouring cluster. To assess cluster quality and identify the appropriate number of clusters, average Silhouette scores are calculated for different cluster configurations; the highest score indicates the most suitable number of clusters [73].
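As a rough illustration of how average Silhouette scores can be compared across cluster configurations, the sketch below computes the score for two candidate partitions of a small toy dataset. The function name `average_silhouette`, the toy points and the hand-specified candidate labelings (standing in for k-means output at k = 2 and k = 3) are all hypothetical; Euclidean distance is assumed.

```python
from math import dist
from statistics import mean


def average_silhouette(points, labels):
    """Mean silhouette score of a partition, using Euclidean distance."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    if len(clusters) < 2:
        raise ValueError("at least two clusters are required")

    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # usual convention for singleton clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        # (the point's zero distance to itself does not affect the sum).
        a = sum(dist(point, other) for other in own) / (len(own) - 1)
        # b: mean distance to the members of the nearest other cluster.
        b = min(
            mean(dist(point, other) for other in members)
            for other_label, members in clusters.items()
            if other_label != label
        )
        denominator = max(a, b)
        scores.append((b - a) / denominator if denominator > 0 else 0.0)
    return mean(scores)


# Hypothetical toy data and candidate partitions (not the study's dataset).
points = [(1.0, 1.2), (0.8, 1.0), (1.1, 0.9), (4.0, 4.2), (4.1, 3.9), (3.9, 4.0)]
candidates = {2: [0, 0, 0, 1, 1, 1], 3: [0, 0, 0, 1, 1, 2]}
scores = {k: average_silhouette(points, labs) for k, labs in candidates.items()}
best_k = max(scores, key=scores.get)
print(scores, best_k)
```

In practice the candidate partitions would come from running the clustering algorithm once per value of k, with the highest-scoring k retained.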

The number of clusters in the study was determined to be two using the Silhouette method (Appendix 1). Based on this, participants were categorised as having either ‘low’ or ‘high’ digital literacy levels. Subsequently, an MGA was conducted to identify differences between the data groups defined by these digital literacy levels.

4. Results

4.1. Measurement model

In this investigation, partial least squares path modelling was conducted using SmartPLS 4.0, aiming to validate the reflective constructs and ensure reliable measurement [66]. The estimation used the consistent-PLS method with 5000 bootstrap resamples. Given that every research variable was modelled as reflective, consistent-PLS was selected to minimise the likelihood of inflated Type I and Type II errors [74]. Furthermore, prior studies indicate that consistent-PLS matches covariance-based SEM in terms of both parameter estimation precision and statistical power [75].

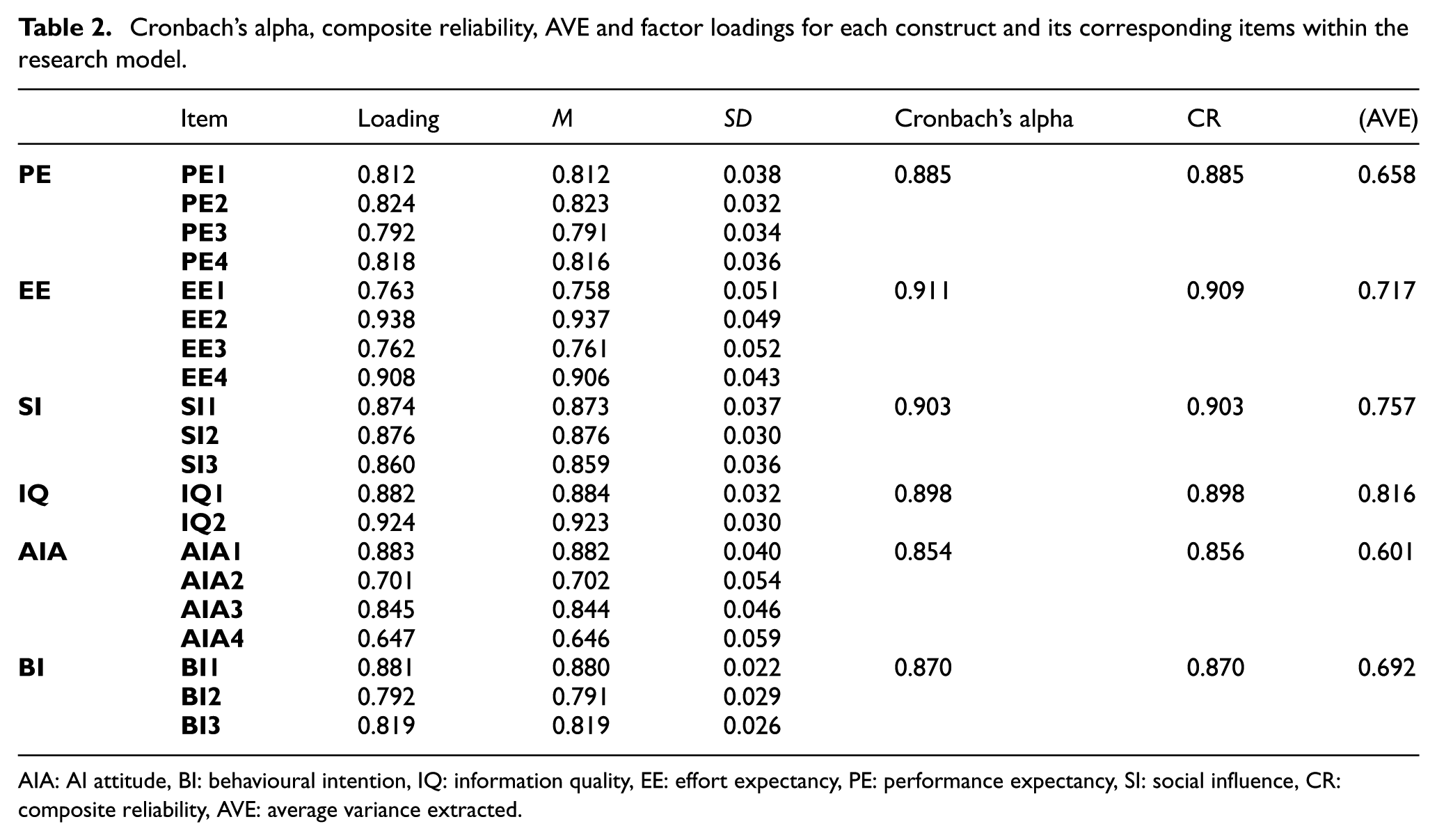

Prior to examining the structural model, a thorough assessment of each construct’s validity and reliability was performed. Specifically, the evaluation focused on three aspects: internal consistency, convergent validity and discriminant validity. Cronbach’s alpha and composite reliability (CR) were employed to measure internal consistency, while convergent validity was determined using the average variance extracted (AVE) from item loadings. In line with established benchmarks, factor loadings should meet or exceed 0.70, and Cronbach’s alpha along with CR should surpass 0.70. Furthermore, the AVE must exceed 0.50 [76,77]. Table 2 presents the findings that align with these criteria.

Cronbach’s alpha, composite reliability, AVE and factor loadings for each construct and its corresponding items within the research model.

AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence, CR: composite reliability, AVE: average variance extracted.

As shown in Table 2, factor loadings range from 0.647 to 0.938. Although Hair et al. [75] recommend loadings of 0.70 or higher, items scoring between 0.40 and 0.70 can be retained if composite reliability (CR) and average variance extracted (AVE) exceed their respective cutoffs. In this study, AVE and CR values met these criteria, so the items below 0.70 were retained (see Table 2).
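The retention rule described above can be made concrete with a short sketch based on the standard formulas for AVE (the mean of the squared standardised loadings) and composite reliability. The loadings below are hypothetical and are not taken from Table 2; the function names are likewise illustrative.

```python
def ave(loadings):
    """Average variance extracted: mean of the squared standardised loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability: (sum of loadings)^2 over itself plus error variance."""
    total = sum(loadings) ** 2
    error = sum(1 - l ** 2 for l in loadings)
    return total / (total + error)


def retain_weak_items(loadings, ave_cutoff=0.50, cr_cutoff=0.70):
    """Items loading between 0.40 and 0.70 are kept only if AVE and CR pass."""
    has_weak = any(0.40 <= l < 0.70 for l in loadings)
    passes = ave(loadings) > ave_cutoff and composite_reliability(loadings) > cr_cutoff
    return has_weak and passes


# Hypothetical construct with one item below the 0.70 benchmark.
example = [0.65, 0.80, 0.85, 0.90]
print(round(ave(example), 3), round(composite_reliability(example), 3))
print(retain_weak_items(example))
```

In this hypothetical case the construct clears both cutoffs, so the item loading at 0.65 would be retained under the rule.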

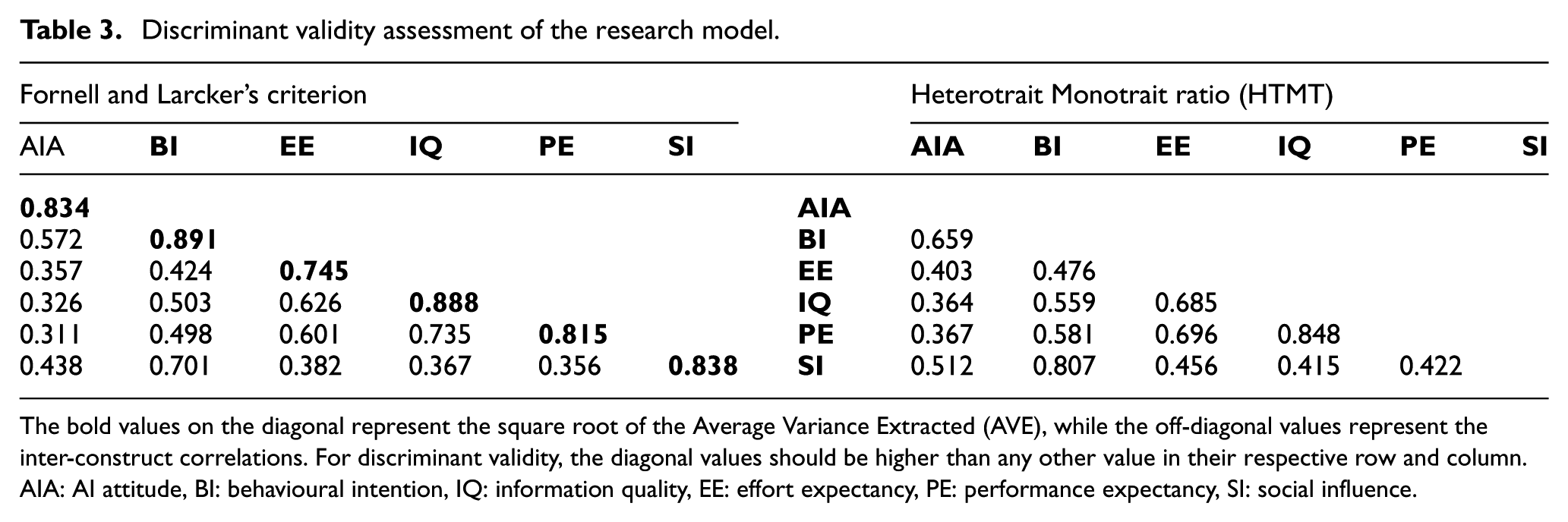

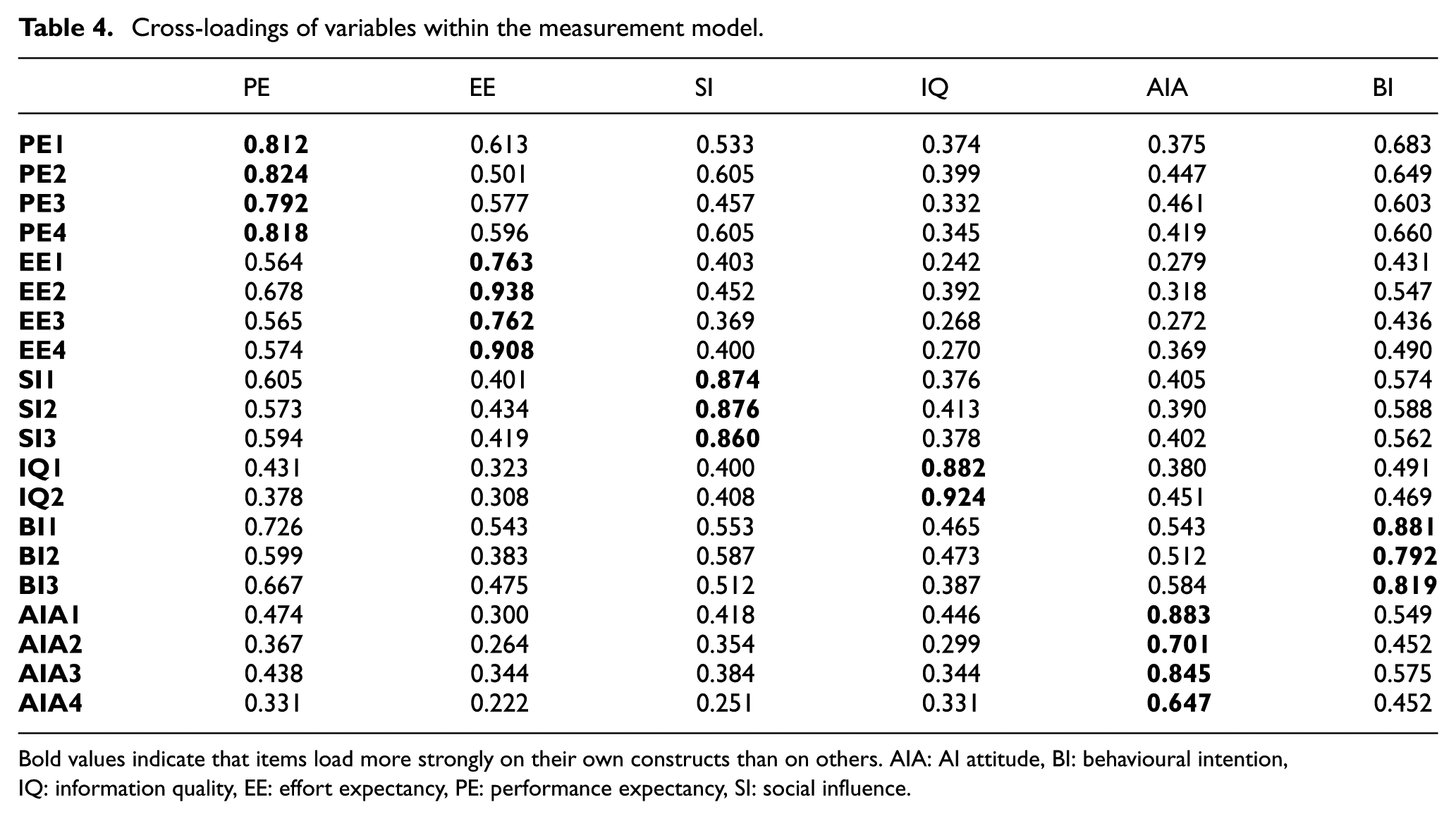

Cronbach’s alpha values (0.854–0.911), CR values (0.856–0.909) and AVE (0.601–0.816) confirm that internal consistency reliability and convergent validity requirements are satisfied. Discriminant validity was examined using the Fornell–Larcker criterion, the HTMT approach [78] (Table 3) and cross-loadings (Table 4). In particular, the square roots of each construct’s AVE (shown in bold) exceeded the inter-construct correlation coefficients, and the HTMT values remained below 0.90. Furthermore, cross-loadings demonstrated that each item chiefly aligns with its corresponding construct [79], confirming discriminant validity.

Discriminant validity assessment of the research model.

The bold values on the diagonal represent the square root of the Average Variance Extracted (AVE), while the off-diagonal values represent the inter-construct correlations. For discriminant validity, the diagonal values should be higher than any other value in their respective row and column. AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence.
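As a minimal sketch of the Fornell–Larcker check, the function below flags any pair of constructs whose correlation is not smaller than the square root of either construct's AVE. The AVE and correlation values are hypothetical and do not reproduce Table 3.

```python
import math


def fornell_larcker_failures(ave, corr):
    """Return construct pairs whose correlation reaches or exceeds sqrt(AVE).

    ave:  dict mapping construct name -> AVE
    corr: dict mapping (construct_a, construct_b) -> correlation
    """
    failures = []
    for (a, b), r in corr.items():
        threshold = min(math.sqrt(ave[a]), math.sqrt(ave[b]))
        if abs(r) >= threshold:
            failures.append((a, b))
    return failures


# Hypothetical values for three constructs.
ave_values = {"PE": 0.70, "AIA": 0.62, "BI": 0.80}
correlations = {("PE", "AIA"): 0.55, ("PE", "BI"): 0.78, ("AIA", "BI"): 0.66}

# An empty list means every diagonal value exceeds its row and column.
print(fornell_larcker_failures(ave_values, correlations))

# Raising one correlation above sqrt(AVE) of PE triggers a failure.
violated = dict(correlations)
violated[("PE", "BI")] = 0.85
print(fornell_larcker_failures(ave_values, violated))
```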

Cross-loadings of variables within the measurement model.

Bold values indicate that items load more strongly on their own constructs than on others. AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence.

4.2. Structural model

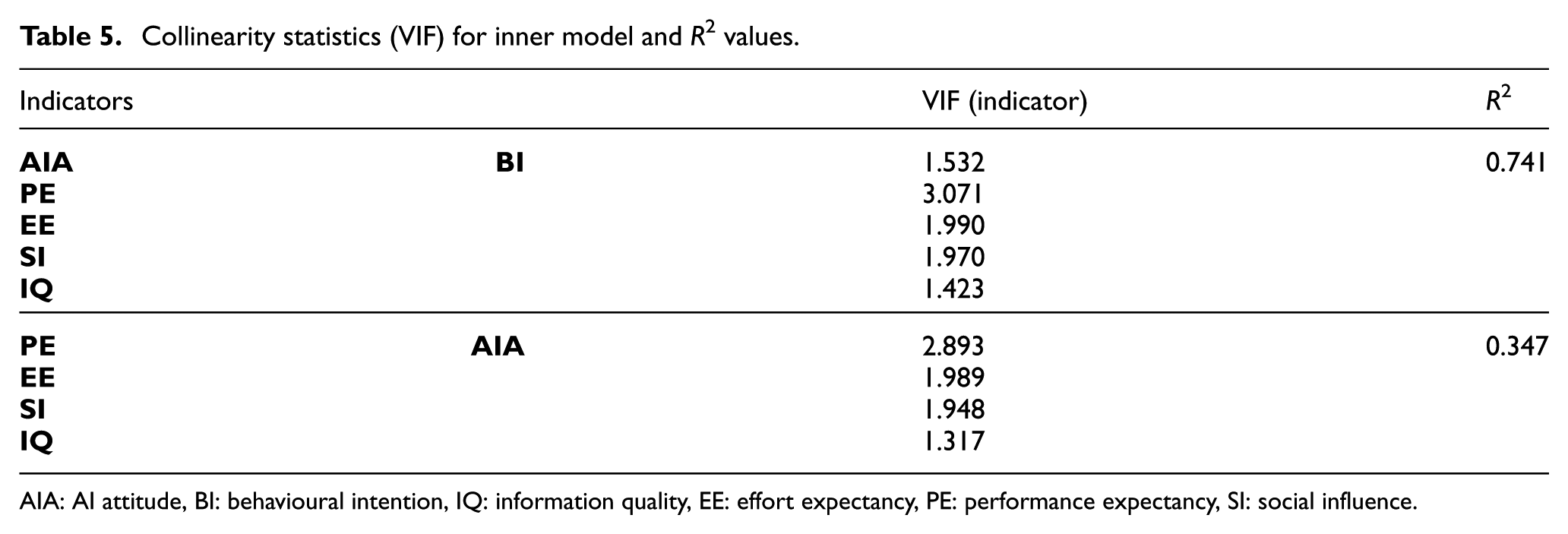

Before estimating the structural model, variance inflation factor (VIF) values for each indicator and latent construct were examined to confirm that multicollinearity was not problematic. The VIF scores, ranging from 1.317 to 3.071, are within acceptable limits [75]. In addition, the saturated model’s standardised root mean square residual (SRMR) value of 0.04 indicates a satisfactory level of model fit, remaining below the recommended threshold of 0.08 [78].

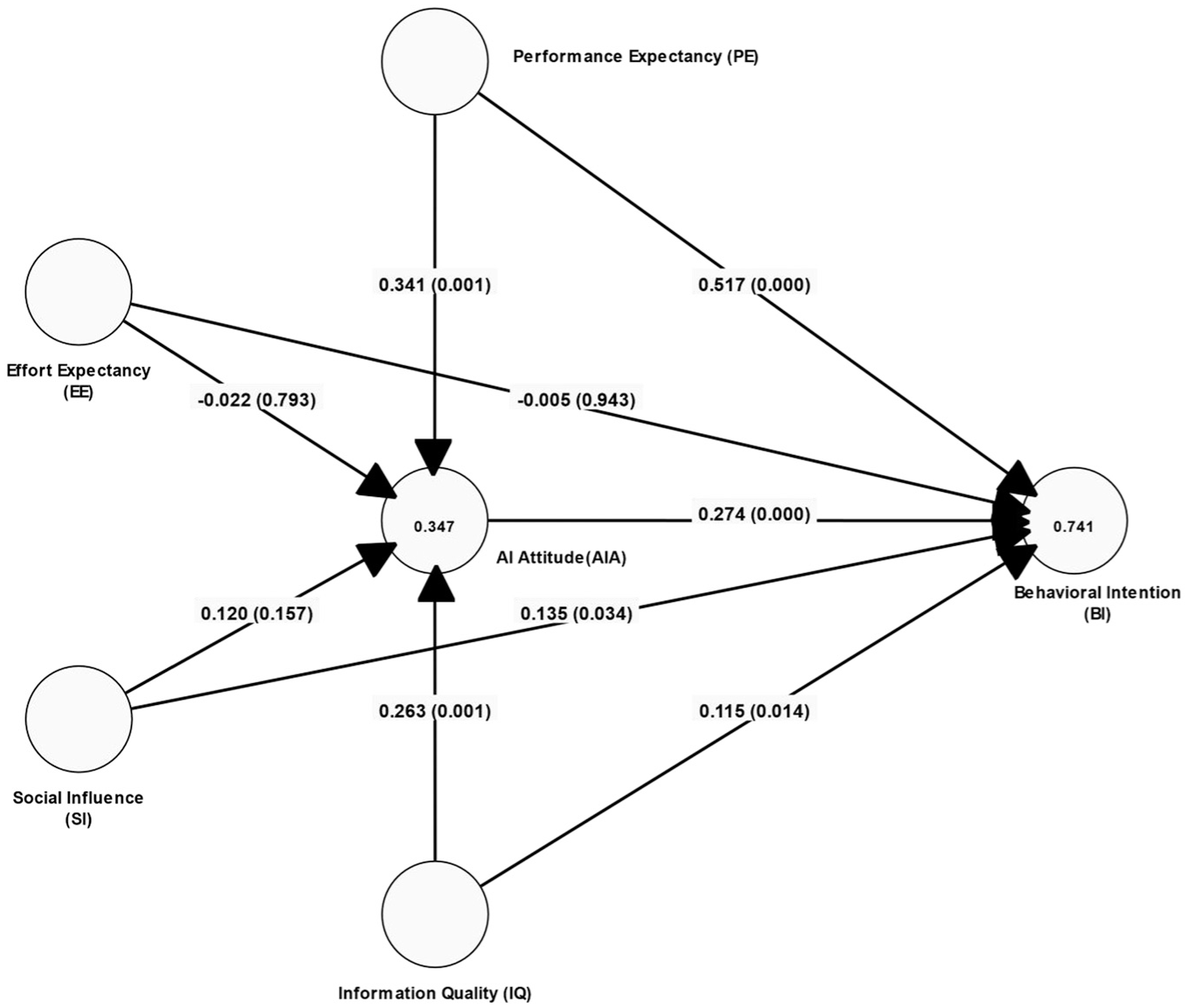

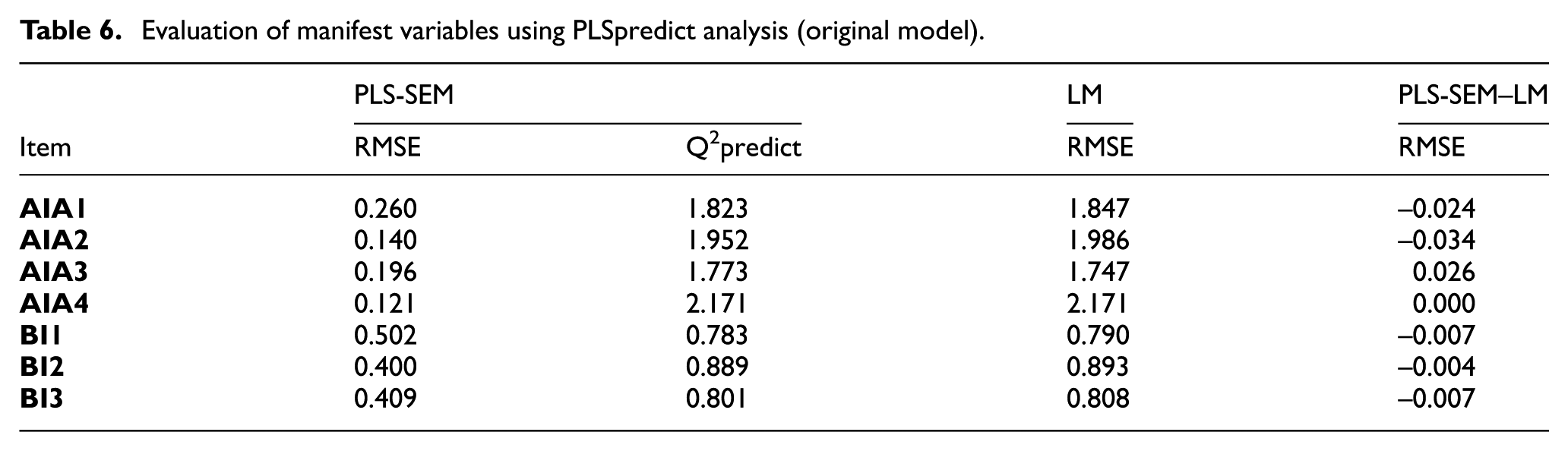

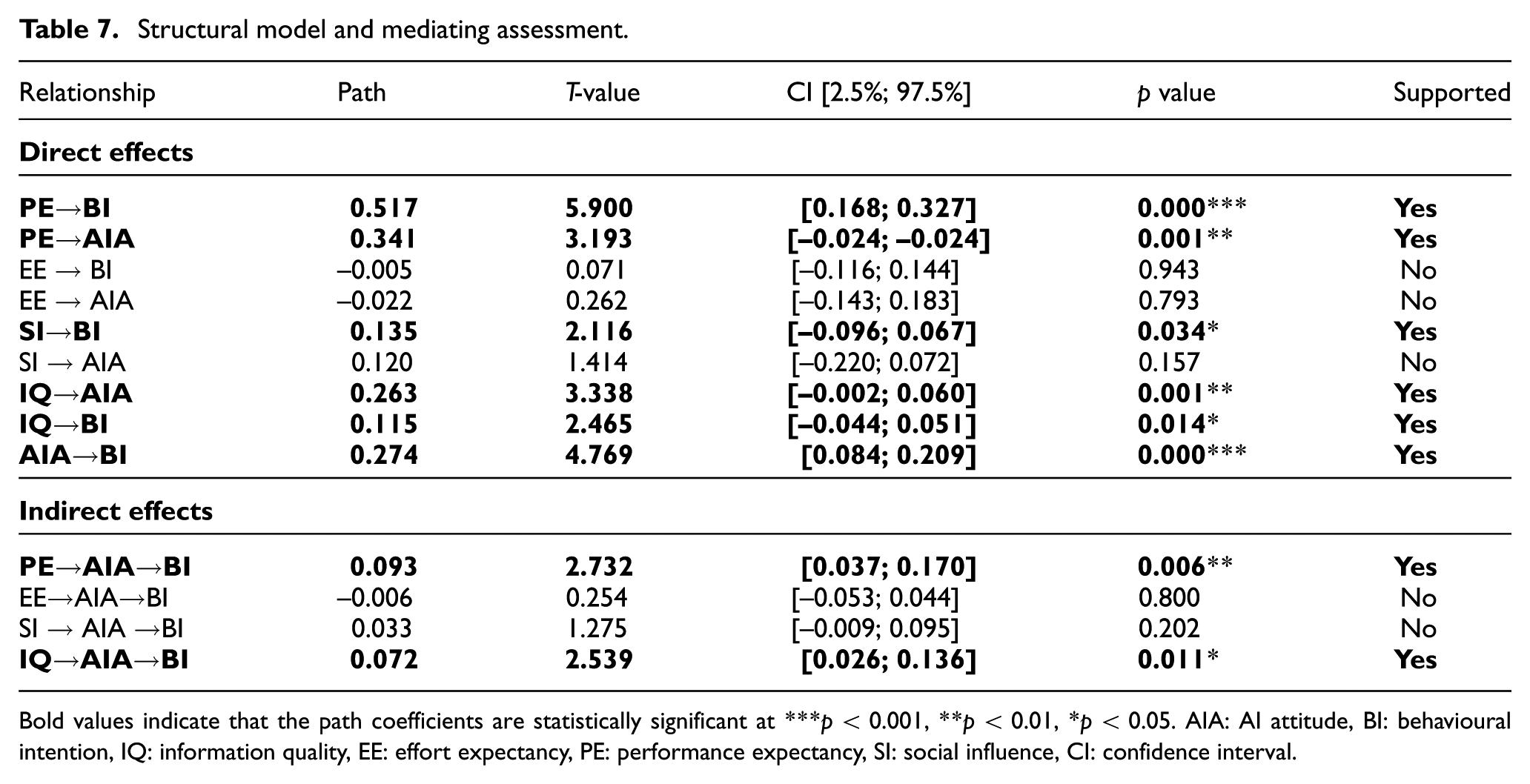

The structural model was evaluated through explained variance (R²), path coefficients (β) and their corresponding t-values. Notably, behavioural intention and AI attitude achieved R² values of 0.741 and 0.347, respectively, surpassing the suggested 10% threshold [80] (see Figure 3, Table 5). The model’s predictive performance outside the estimation sample was then assessed using the PLSpredict procedure, employing 10-fold cross-validation with a single repetition (Table 6). As illustrated in Table 7, each endogenous construct’s indicators exhibited superior predictive outcomes compared with baseline values from the training sample, with all predictions exceeding zero.

PLS-SEM analysis results.

Collinearity statistics (VIF) for inner model and R² values.

AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence.

Evaluation of manifest variables using PLSpredict analysis (original model).

Structural model and mediating assessment.

Bold values indicate that the path coefficients are statistically significant at ***p < 0.001, **p < 0.01, *p < 0.05. AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence, CI: confidence interval.

Subsequent analysis focused on the distribution of prediction errors to identify the appropriate predictive statistic; because these errors did not follow a symmetrical pattern, RMSE was used to measure predictive accuracy. Across every indicator, the PLS-SEM approach yielded lower RMSE values than the corresponding linear model (LM) analysis, demonstrating the model’s robust predictive capability [75,81].
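The comparison reported above rests on a few simple out-of-sample statistics, sketched below under stated assumptions. The holdout values, the two sets of predictions and the training-sample mean are hypothetical; in practice these quantities come from the software's cross-validation output. MAE is included only as the usual alternative to RMSE.

```python
import math


def rmse(actual, predicted):
    """Root mean squared prediction error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mae(actual, predicted):
    """Mean absolute prediction error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def q2_predict(actual, predicted, train_mean):
    """1 - SSE(model) / SSE(naive benchmark using the training-sample mean)."""
    sse_model = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    sse_naive = sum((a - train_mean) ** 2 for a in actual)
    return 1 - sse_model / sse_naive


# Hypothetical holdout responses for one indicator on a 5-point scale.
actual = [4, 5, 3, 4, 2, 5]
pls_pred = [3.8, 4.6, 3.2, 4.1, 2.5, 4.7]  # assumed PLS-SEM predictions
lm_pred = [3.5, 4.2, 3.6, 4.4, 2.9, 4.3]   # assumed linear-model predictions
train_mean = 3.9                            # assumed training-sample mean

print(rmse(actual, pls_pred), rmse(actual, lm_pred))
print(q2_predict(actual, pls_pred, train_mean))
```

A positive value from `q2_predict` means the model predicts better than the naive mean benchmark, and a lower RMSE for the PLS-SEM predictions than for the LM predictions on an indicator counts in favour of the model's predictive power.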

In the structural model, the variables with a positive effect on the behavioural intention to use generative artificial intelligence are, in descending order of effect size, PE (β = 0.517, t = 5.900, p < 0.001), AIA (β = 0.274, t = 4.769, p < 0.001), SI (β = 0.135, t = 2.116, p < 0.05) and IQ (β = 0.115, t = 2.465, p < 0.05). The effect of EE is not significant. The variables with a positive effect on AIA are, in descending order, PE (β = 0.341, t = 3.193, p < 0.001) and IQ (β = 0.263, t = 3.338, p < 0.01). The effects of EE and SI are not significant. When the model is examined in terms of indirect effects, AIA is positive through PE

4.3. Multi-group analysis

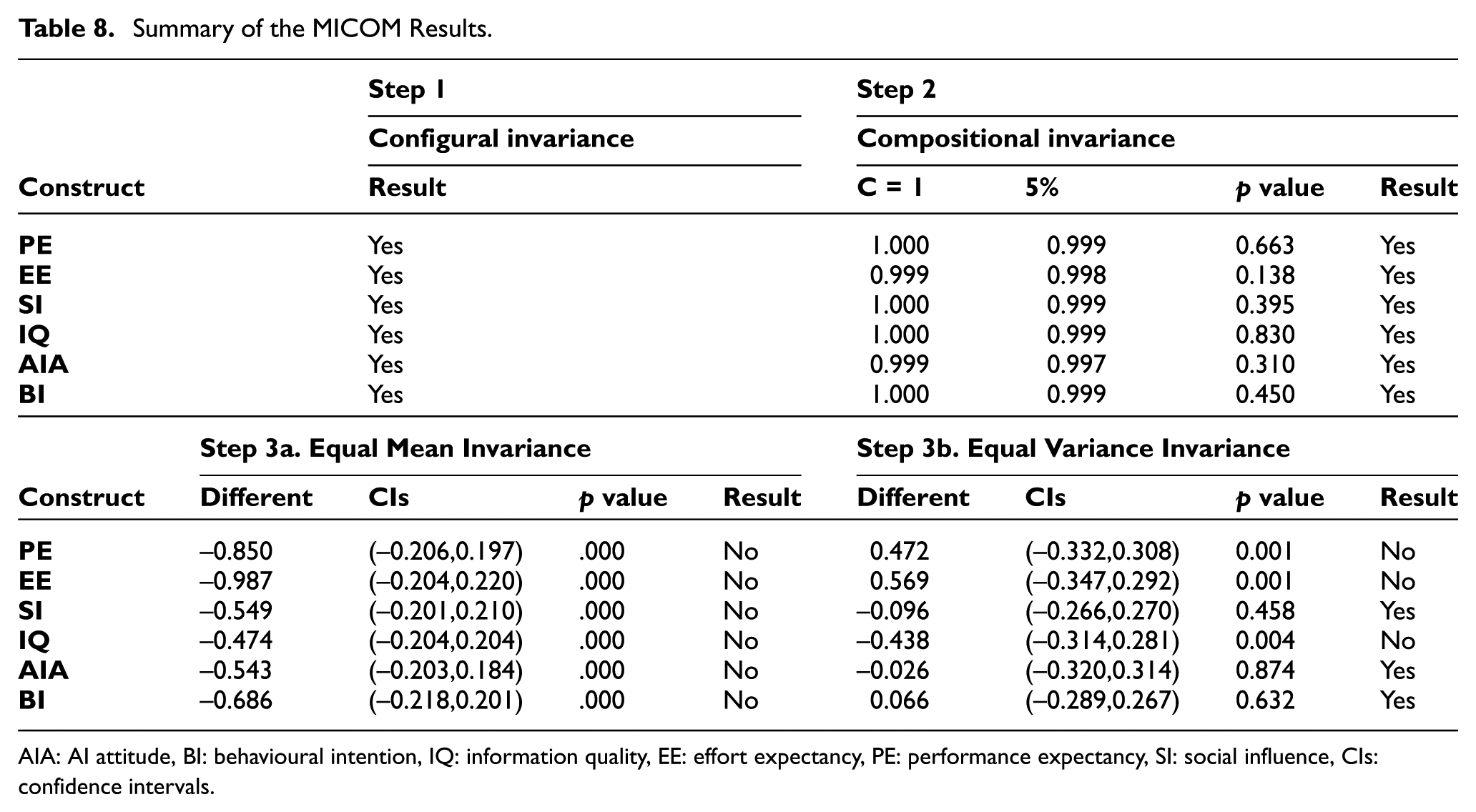

Within the multi-group context, the MICOM procedure was implemented for the PLS-SEM framework, employing 5000 permutations and two-tailed tests at a 0.05 significance threshold. The assessment progressed through three stages. First, at the configural invariance stage, both subgroups were confirmed to share the same underlying model structure, thereby meeting the initial requirement. Next, during the compositional invariance stage, permutation tests showed no statistically significant differences in indicator weights and loadings, fulfilling the second criterion (Table 4). Finally, in the evaluation of mean and variance equality, partial invariance emerged: while certain composites exhibited consistent means and variances across the groups, some divergences were observed (Table 8). Consequently, only partial measurement invariance was achieved, indicating that although the model is largely valid and comparable across subgroups, not all composite means and variances coincide [75].

Summary of the MICOM Results.

AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence, CIs: confidence intervals.

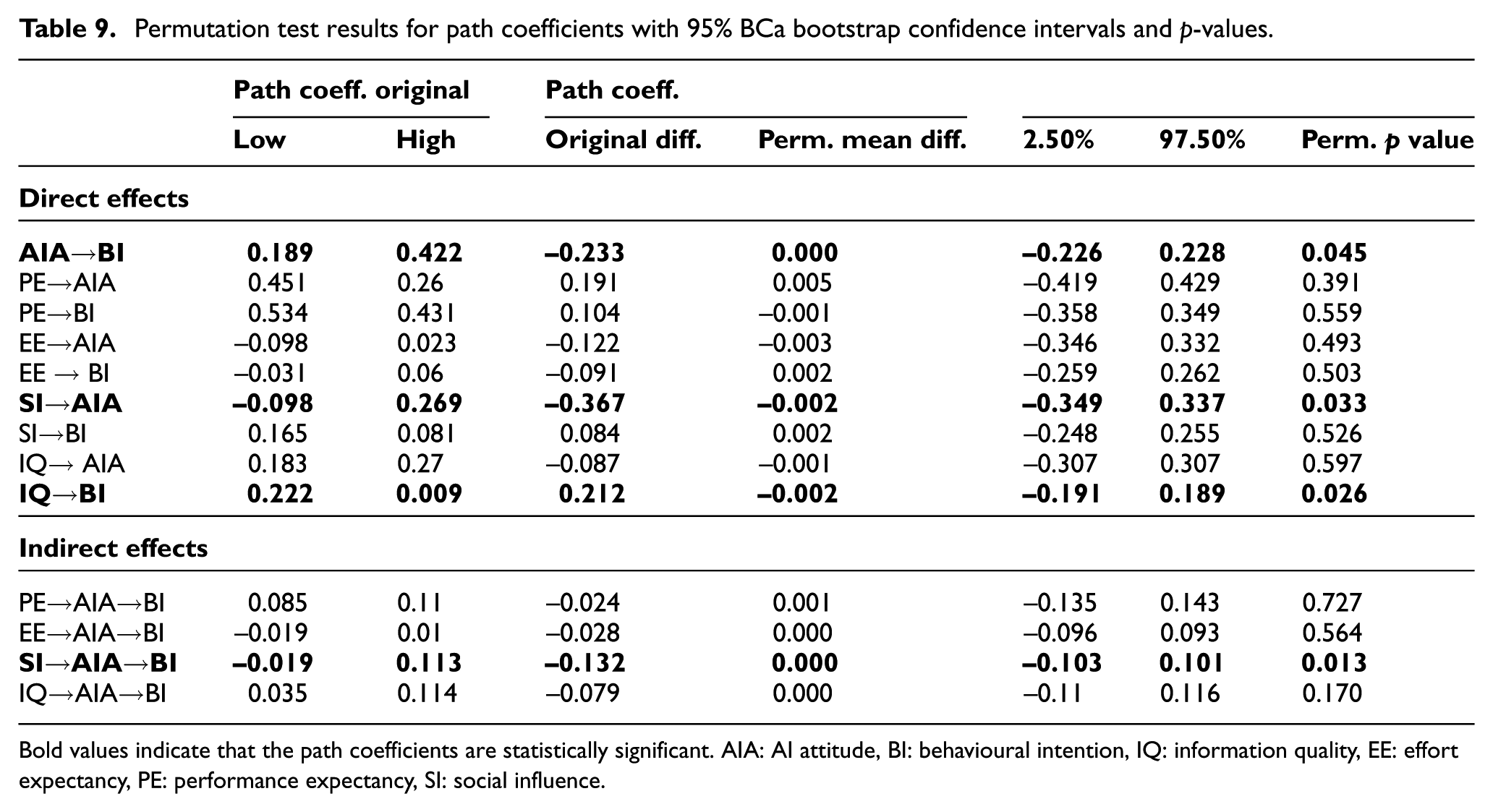

Subsequent comparisons of the path coefficients were carried out using a nonparametric permutation test aligned with the PLSc algorithm, reflecting the nonparametric nature of PLS-SEM (see Table 9).
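The logic of such a permutation test can be sketched as follows. This is a simplified illustration, not the procedure implemented in the software: an ordinary regression slope stands in for a PLS path coefficient, the two groups are synthetic, and a full analysis would re-estimate the entire PLS model within every permutation.

```python
import random


def slope(xs, ys):
    """Ordinary least squares slope of ys on xs."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def slope_difference(group_a, group_b):
    """Slope in group A minus slope in group B; groups are lists of (x, y)."""
    return slope(*zip(*group_a)) - slope(*zip(*group_b))


def permutation_test(group_a, group_b, n_perm=5000, seed=1):
    """Two-tailed permutation p-value for the difference in slopes."""
    rng = random.Random(seed)
    observed = slope_difference(group_a, group_b)
    pooled = group_a + group_b  # new list, so the inputs are not shuffled
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_perm):
        # Randomly reassign respondents to groups of the original sizes.
        rng.shuffle(pooled)
        permuted = slope_difference(pooled[:n_a], pooled[n_a:])
        if abs(permuted) >= abs(observed):
            extreme += 1
    return observed, (extreme + 1) / (n_perm + 1)


def simulate(n, true_slope, rng):
    """Synthetic respondents: predictor x and outcome y = true_slope * x + noise."""
    data = []
    for _ in range(n):
        x = rng.uniform(1, 5)
        data.append((x, true_slope * x + rng.gauss(0, 0.3)))
    return data


rng = random.Random(7)
low_dl = simulate(60, 0.2, rng)   # hypothetical low-DL group
high_dl = simulate(60, 0.8, rng)  # hypothetical high-DL group

observed, p_value = permutation_test(low_dl, high_dl)
print(observed, p_value)
```

The p-value is the share of random group reassignments that produce a difference at least as large in absolute value as the observed one, so no distributional assumption about the coefficients is required.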

Permutation test results for path coefficients with 95% BCa bootstrap confidence intervals and p-values.

Bold values indicate that the path coefficients are statistically significant. AIA: AI attitude, BI: behavioural intention, IQ: information quality, EE: effort expectancy, PE: performance expectancy, SI: social influence.

DL significantly moderated the relationships between AIA and BI (βdifference = –0.233; p < 0.05), IQ and BI (βdifference = 0.212; p < 0.05) and SI and AIA (βdifference = –0.367; p < 0.05). In the high DL group, the effects of AIA on BI (βlowDL = 0.189, βhighDL = 0.422) and of SI on AIA (βlowDL = –0.098, βhighDL = 0.269) were significantly stronger. In the low DL group, by contrast, the effect of IQ on BI was significantly stronger (βlowDL = 0.222, βhighDL = 0.009). In terms of indirect effects, results show that DL only moderates SI

5. Discussion

This study examines the factors influencing the adoption of generative AI using a UTAUT-based framework. The findings indicate that the model explains 74.1% of the variance in the intention to use AI and 34.7% of the variance in attitudes towards AI.

The results confirmed the positive effect of PE on BI: the stronger a person’s belief that using GenAI will improve their job performance, the greater their intention to use it. This result aligns with previous studies; for example, Strzelecki and ElArabawy [32] found that PE was the most important variable affecting BI. AIA is another factor that positively influences BI. That is, the more positive an individual’s AIA is, the greater the intention to use GenAI. Prior research has demonstrated that AIA plays a crucial role in shaping the intention to adopt GenAI [27,64]. The accuracy of the information produced by GenAI is an important topic of discussion, and the response performance of newly developed language models is regarded as a key indicator in this field. Our study investigated how the perceived quality of information produced by GenAI influences usage intention and found that trust in such information has a positive impact on the willingness to adopt GenAI. While prior research largely supports this finding [54,55], other studies suggest that this variable does not have a meaningful impact [56]. Our findings indicate that SI has a relatively weak but significant impact on BI. This result aligns with prior research suggesting that SI contributes to individuals’ motivation to adopt GenAI technologies [14,28,32,33,45] but contradicts the studies of Al-Emran et al. [42] and Foroughi et al. [48]. The relatively weak effect of SI on BI may be explained by the technological diffusion brought about by globalisation and the widespread availability of GenAI, as individual decisions are influenced by broader factors rather than the social environment [82].

Finally, our study indicates that EE has no substantial impact on BI. This is surprising, because studies in the literature generally indicate that EE has a significant and positive impact on BI [10,14,28,32,33,45]. It has been noted that significant relationships in the literature are generally observed in studies conducted in a specific business context [12]. The present result may have emerged because this study considered individuals’ general GenAI usage contexts. Studies that report similar findings suggest that the result may stem from the sample group perceiving a particular technology as easy to use [83] or from their familiarity with that technology [84]. The fact that the sample in this study consisted primarily of young individuals who can be called digital natives may also have contributed to this result.

Regarding the variables affecting attitude towards GenAI, the effects of PE and IQ were positive and significant, while those of EE and SI were not significant. In the literature, PE is generally observed to have a positive impact on AIA [15,43]. Our finding is consistent with this pattern but differs from that of Chatterjee and Bhattacharjee [30]. A possible reason for this finding is that individuals assume that GenAI will help them do their jobs more effectively [85]. In terms of IQ, our findings are similar to those of previous studies [58,59]. In terms of the effects of EE and SI, the results in the literature are generally inconsistent. While studies on this subject mostly show that EE affects AIA positively [15,30], some studies are consistent with our finding [43]. It is possible that the effect of EE on AIA emerges within a specific business context, as in the case of the BI-related results. This study determined that the effect of SI on AIA was not statistically significant. However, previous studies mostly emphasise that SI affects AI attitude positively [15,49,50].

Although studies examining the mediating effect of AI attitude are limited, the same patterns apply here as in the direct effects on AI attitude. The findings on how AI attitude mediates the effects of the model variables on BI show that PE and IQ affect BI through AI attitude. Lee et al. [15] likewise found that AIA mediates the effect of PE on BI. In addition, our study found that AIA did not significantly mediate the effects of EE and SI on BI. This finding contradicts the study of Lee et al. [15]. Considering that the direct and indirect effects exhibit a similar pattern, this contrast may be explained by the same rationale outlined for EE and SI above, with individuals reflecting their attitudes on the subject in their BI.

Our multi-group analysis results indicate that the DL level significantly moderates the AIA→BI, IQ→BI and SI→AIA relationships. Specifically, the AIA→BI effect is significantly stronger among individuals with a high DL level than among those with a low DL level. Because individuals with a high DL level are more aware of the potential benefits and uses of technologies, they may hold a more positive attitude towards them. It has also been stated in the literature that a high DL level positively affects the attitude towards technology [86]. However, in contrast to common assumptions, it is noteworthy that the impact of IQ on BI is more pronounced in the low DL group than in the high DL group. A possible reason is that individuals with a low DL level may have relatively limited technological knowledge and critical thinking ability and are therefore less able to filter, understand and evaluate the information they acquire. This limitation may increase their reliance on that information and on technologies such as GenAI, potentially fostering adoption. Our finding aligns with the literature, which indicates a negative relationship between digital literacy levels and trust in information [87]. Another key finding of our study is that the effect of SI on AIA strengthens at higher DL levels. Moreover, this finding indicates that the mediating role of AIA in the relationship between SI and BI, within the context of indirect effects, varies significantly between high and low DL groups. This effect was found to be higher in the high DL group. The reason for these strong effects may be that individuals with high DL levels are more familiar with technology and its related concepts, leading to a positive attitude towards innovations like GenAI as a result of their effective use of social networks.

6. Conclusion

This study aims to examine the behavioural intention of individuals aged 18 and above in Turkey towards the use of GenAI tools for various purposes, within the framework of key influencing factors, and to evaluate the effect of digital literacy levels on this process through multi-group analysis. The findings provide insights into how individuals evaluate cognitive and motivational factors and reveal how digital literacy levels diverge within the model.

Addressing the limitations of the UTAUT and IAM models, this study integrates these two frameworks within a theoretical perspective. In addition, to enable a more comprehensive analysis of GenAI adoption intention, the model incorporates the factor of AIA. This approach allows for a more holistic identification of the key determinants influencing GenAI adoption. The findings indicate that PE, IQ and AIA have significant and positive effects on the intention to use GenAI. Users’ trust in AI-generated information, along with their belief in its contributions to job performance and their positive AIA, plays a decisive role in the adoption process. SI also has a positive and significant effect on BI. However, EE does not have a significant effect on BI, which deviates from traditional perspectives and supports the argument that effort expectancy becomes more pronounced in specific work contexts.

The results of the multi-group analysis demonstrate that DL is a critical factor in shaping attitudes towards AI and the adoption process. Considering that the vast majority of participants (n = 327, 90.4%) fall within the 18–28 age range, these findings offer valuable insights into the impact of young adults’ digital awareness and information evaluation processes on GenAI adoption. Among individuals with higher digital literacy levels, the effect of AIA on BI is stronger, whereas the effect of IQ on BI is stronger among individuals with lower digital literacy levels. This highlights the role of advanced digital awareness in shaping technology adoption attitudes and processes. Conversely, individuals with lower digital literacy levels tend to place greater trust in AI-generated information due to differences in their information evaluation strategies, leading to a higher intention to use GenAI.

These findings provide significant insights into how digital competence, recognised as one of the most essential 21st-century skills, influences the adoption of emerging technologies. Identifying the varying mechanisms affecting GenAI adoption processes based on digital literacy levels could contribute to the development of more targeted digital skills training policies. Furthermore, the study’s findings offer practical implications for technology firms and platform providers developing GenAI-based systems at an industrial level. Given the impact of information quality and attitudes towards AI on user trust and adoption rates, the findings serve as a guide for structuring information presentation in ways that enhance user confidence and engagement.

6.1. Limitations and Future Studies

As with any research, this study has certain limitations that should be taken into account. Most participants were young adults, and the overrepresentation of certain age groups in the sample may have influenced the findings. Therefore, it is recommended that future studies examine age-related differences using samples that include different age groups in a balanced manner. The gender variable was used solely for descriptive purposes and was not included as an independent variable in the analyses. Moreover, this study focused on assessing general tendencies regarding GenAI use; therefore, detailed questions about specific usage contexts or purposes were not included. This may have limited the in-depth analysis of contextual differences in GenAI usage. Although key parameters of technology acceptance tend to yield consistent results across different cultures, the importance of cultural differences cannot be overlooked. Therefore, the fact that the sample is drawn exclusively from Türkiye may reflect effects specific to this cultural and socioeconomic context. Future studies testing similar models in diverse cultural settings would help strengthen the generalisability of the findings. The fact that all data were collected through self-report surveys carries risks of bias, such as exaggerating digital skills or reflecting positive attitudes towards AI through social desirability. In future research, supplementing self-reports with behavioural or observational data could increase the reliability of the findings. We believe that these limitations should be considered when interpreting the findings and that they offer potential avenues for future research.

Appendix

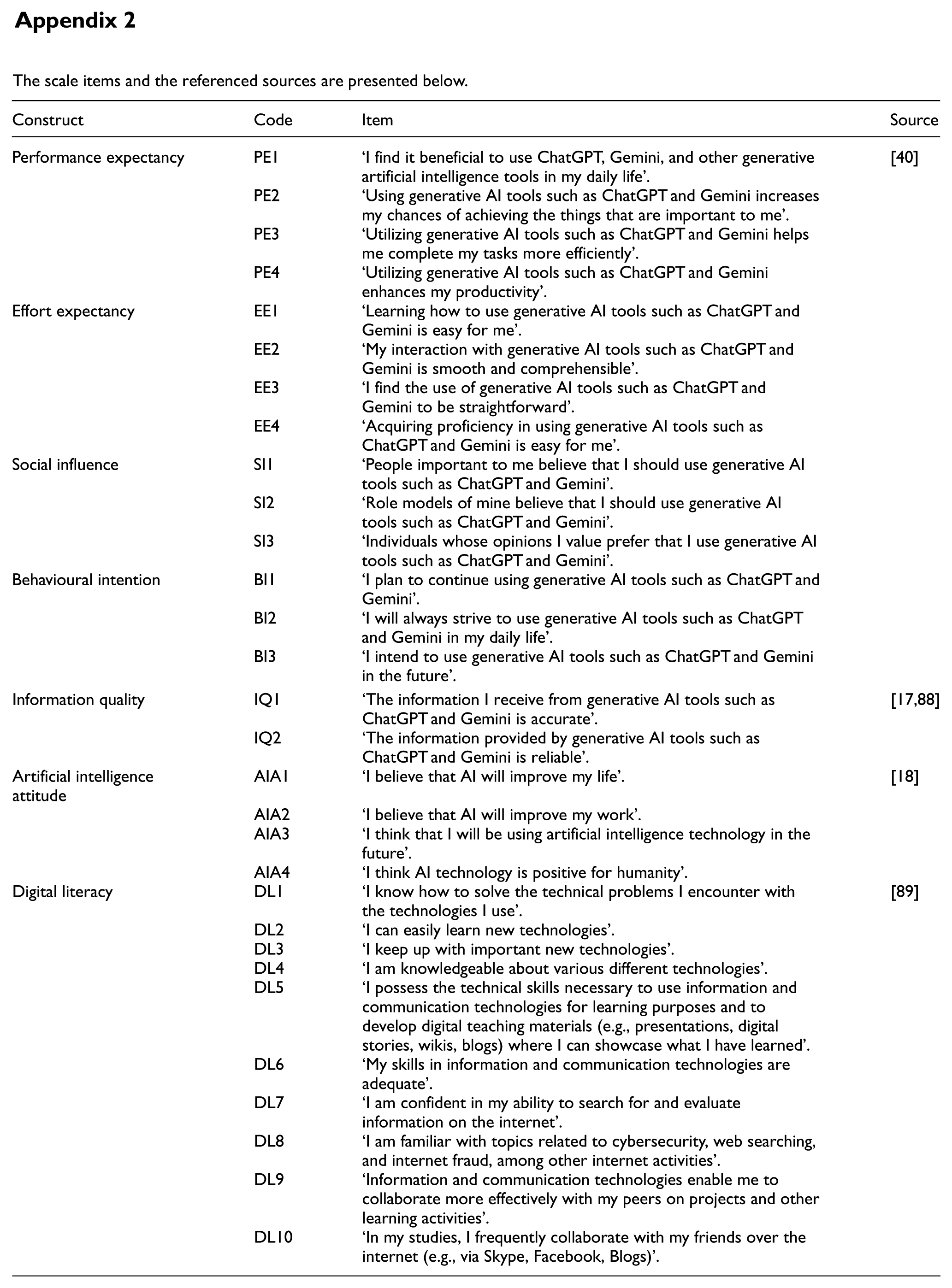

The scale items and the referenced sources are presented below.

| Construct | Code | Item | Source |
|---|---|---|---|
| Performance expectancy | PE1 | ‘I find it beneficial to use ChatGPT, Gemini, and other generative artificial intelligence tools in my daily life’. | [40] |
| | PE2 | ‘Using generative AI tools such as ChatGPT and Gemini increases my chances of achieving the things that are important to me’. | |
| | PE3 | ‘Utilizing generative AI tools such as ChatGPT and Gemini helps me complete my tasks more efficiently’. | |
| | PE4 | ‘Utilizing generative AI tools such as ChatGPT and Gemini enhances my productivity’. | |
| Effort expectancy | EE1 | ‘Learning how to use generative AI tools such as ChatGPT and Gemini is easy for me’. | |
| | EE2 | ‘My interaction with generative AI tools such as ChatGPT and Gemini is smooth and comprehensible’. | |
| | EE3 | ‘I find the use of generative AI tools such as ChatGPT and Gemini to be straightforward’. | |
| | EE4 | ‘Acquiring proficiency in using generative AI tools such as ChatGPT and Gemini is easy for me’. | |
| Social influence | SI1 | ‘People important to me believe that I should use generative AI tools such as ChatGPT and Gemini’. | |
| | SI2 | ‘Role models of mine believe that I should use generative AI tools such as ChatGPT and Gemini’. | |
| | SI3 | ‘Individuals whose opinions I value prefer that I use generative AI tools such as ChatGPT and Gemini’. | |
| Behavioural intention | BI1 | ‘I plan to continue using generative AI tools such as ChatGPT and Gemini’. | |
| | BI2 | ‘I will always strive to use generative AI tools such as ChatGPT and Gemini in my daily life’. | |
| | BI3 | ‘I intend to use generative AI tools such as ChatGPT and Gemini in the future’. | |
| Information quality | IQ1 | ‘The information I receive from generative AI tools such as ChatGPT and Gemini is accurate’. | [17,88] |
| | IQ2 | ‘The information provided by generative AI tools such as ChatGPT and Gemini is reliable’. | |
| Artificial intelligence attitude | AIA1 | ‘I believe that AI will improve my life’. | [18] |
| | AIA2 | ‘I believe that AI will improve my work’. | |
| | AIA3 | ‘I think that I will be using artificial intelligence technology in the future’. | |
| | AIA4 | ‘I think AI technology is positive for humanity’. | |
| Digital literacy | DL1 | ‘I know how to solve the technical problems I encounter with the technologies I use’. | [89] |
| | DL2 | ‘I can easily learn new technologies’. | |
| | DL3 | ‘I keep up with important new technologies’. | |
| | DL4 | ‘I am knowledgeable about various different technologies’. | |
| | DL5 | ‘I possess the technical skills necessary to use information and communication technologies for learning purposes and to develop digital teaching materials (e.g., presentations, digital stories, wikis, blogs) where I can showcase what I have learned’. | |
| | DL6 | ‘My skills in information and communication technologies are adequate’. | |
| | DL7 | ‘I am confident in my ability to search for and evaluate information on the internet’. | |
| | DL8 | ‘I am familiar with topics related to cybersecurity, web searching, and internet fraud, among other internet activities’. | |
| | DL9 | ‘Information and communication technologies enable me to collaborate more effectively with my peers on projects and other learning activities’. | |
| | DL10 | ‘In my studies, I frequently collaborate with my friends over the internet (e.g., via Skype, Facebook, Blogs)’. | |

Declaration of generative AI and AI-assisted technologies in the writing process

During the preparation of this work, the author(s) utilised ChatGPT 4o and Quillbot for English translation. After using these tools, the author(s) carefully reviewed and edited the content as necessary and assume full responsibility for the final version of the published article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available on request from the corresponding author.