Abstract

Rapid digitalisation has resulted in a literature about technology acceptance that is ever increasing in size, naturally creating debates about the developments in the field and their implications. Given the size of the literature and the range of factors, theories and applications considered, this article reviewed the relevant literature using a meta-analytical approach. The objective of this review was twofold: (a) to provide a comprehensive analysis of the factors contributing to technology acceptance and investigate their effects, depending on theoretical underpinnings, and (b) to explore the conditions explaining the variance in the effects of predictors time-, application- and journal-wise. This review analysed data from 693 papers. A total of 21 independent predictors having differential effects on attitude, intention and use behaviour were found. The effects of the predictors were different depending on the theoretical frameworks they were related to. The analysis of the consistency of the role of the predictors suggested that there was no longitudinal change in their effect sizes. However, a significant variance was found when comparing predictors across research applications and the journals in which the papers were published. The analysis of publication bias demonstrated a tendency to publish studies with significant results, although no evidence was found of p-value manipulation.

1. Introduction

Technology acceptance research has been one of the fastest-growing streams in the information systems (IS) literature. The popularity of this research domain is due to the constantly developing nature of the technology. On the one hand, fast technology development calls for fresh insight into the users’ side of technology use. This is needed to capture changing users’ demands, beliefs, preferences and expectations against contextual differences, such as culture, geographical location and the difference in technologies [1–4]. To bring diverse perspectives into the field, researchers have introduced theories and concepts from psychology, sociology, economics and marketing into technology acceptance research [5–10]. Scholars examined parsimonious models, aiming to generalise predictions on users’ behaviour, and developed context-specific theories, in order to explore the acceptance of specific technologies [11–14]. On the other hand, there is an on-going debate about whether technology acceptance has become an overly-researched topic in IS [15,16]. The arguments suggest that the theoretical and practical contributions of the research are limited, due to the tendency to replicate prominent models [15,17]. The scale on which the technology acceptance stream has grown and the current discourse around the future of this area have created the need to perform a meta-analytic review of the relevant work and identify future research avenues.

To this end, there are two gaps in the literature that this review article aims to address. First, there are a few systematic reviews and meta-analyses on technology acceptance [18–23]. Still, they tend to focus on specific theories, such as the technology acceptance model (TAM) [24–26] or the unified theory of acceptance and use of technology (UTAUT) [27–29], and on specific contexts, such as mobile banking, e-learning and e-government [30–33]. The reviews have explored the effectiveness of those theories [25,27] and offered the best combination of constructs to predict adoption [18,34]. Given the focus of published reviews, the literature would benefit from a review at a different level. A meta-analytic review of the factors of technology acceptance across theories might not only help put theories into perspective, but it could also make it possible to focus on individual constructs more widely, examining whether attitude, intention or use behaviour can be regarded as a proxy for acceptance [12,35–40]. Also, by exploring the predictors of the three dependent variables, the review can provide new insight into the role of attitude, use intention and use behaviour in technology acceptance.

Second, the fast-paced development of technology has been fuelling the growth of the literature exploring the utilisation of new technology and its new applications [41–43]. Considering the changes in technology use cases over the past decades, there is a need to aggregate the effects of factors and compare them across time and applications. In addition, to complement discussions about the variance in studies due to the publication outlet rank [44–47], it is important to compare the factors of acceptance in the papers published in high-/low-ranked journals. Such approaches would help explore the variance in the effects of predictors rooted in the causes related to both technology and the reputation of the academic outlets. An understanding of the conditions underpinning the difference in results is important for scholars, who can take into account the relevance of the factors and the importance of evidence for theorising future models of technology acceptance.

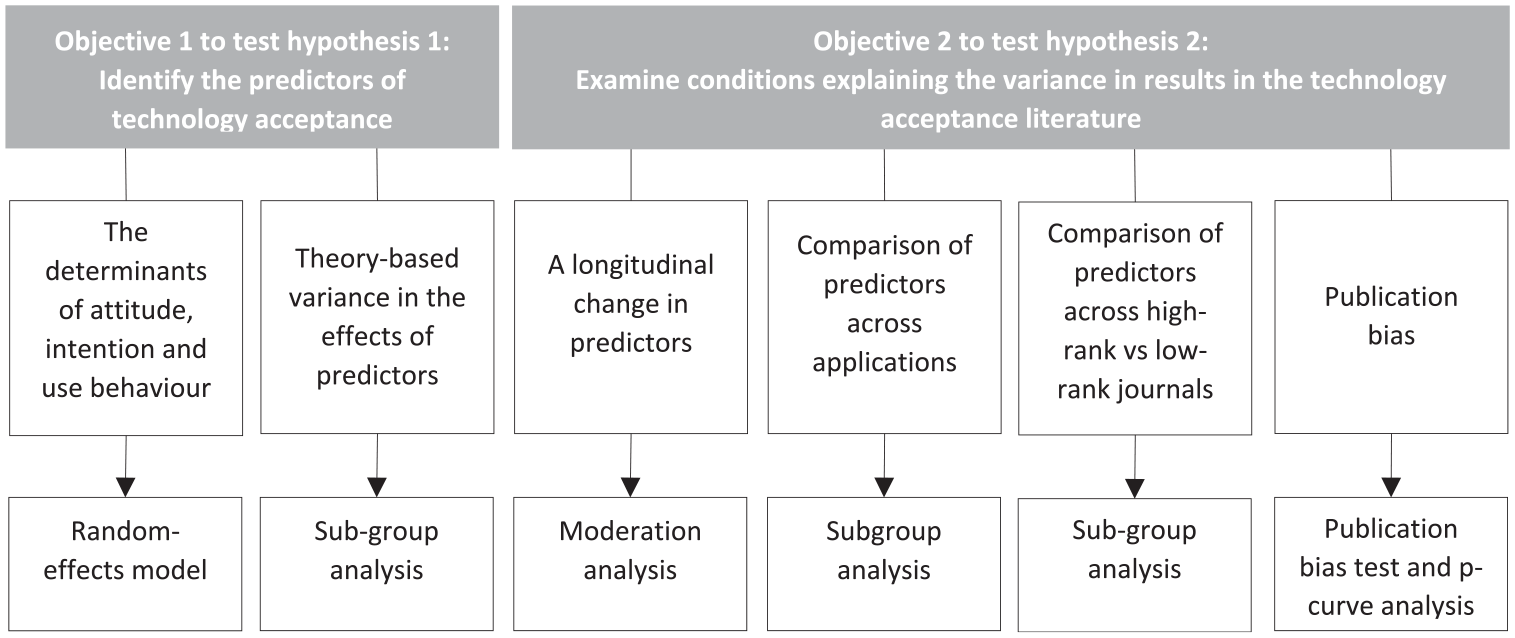

Given the aforementioned gaps, this study pursued two objectives. The first objective was to conduct a meta-analysis of technology acceptance research rather than a specific theory to explore the predictors of technology acceptance evidenced in literature to date. To address this objective, two sub-studies were conducted. The aim of the first one was to identify the factors of acceptance and examine their relation to attitude, use intention and use behaviour. This approach provided insights into the explanatory role of each dependent variable and an understanding as to whether these three outcomes can be used interchangeably as a proxy for acceptance. The aim of the second sub-study was to explore the effects of predictors depending on underpinning theories. By employing a subgroup analysis, which can identify the significance of the variance in factors across theories, the importance of the adopted theoretical foundations was examined. The results help put the findings of theory into perspective when it comes to attitude to technology use, use intention and actual use behaviour.

The second objective of this meta-analysis was to examine the variance in results in the technology acceptance literature across time, applications and journals. By pursuing this objective, this article provides an understanding of the dynamics of the research on technology acceptance, the generalisability of findings across applications and the reliability of reported effects. To address the second objective, four sub-studies were conducted. The aim of the first sub-study was to explore the existence of temporal trends in the field, using a moderation analysis. The identification of the changes in the power of researched constructs across time can show which factors have gained importance. The second sub-study examined whether the effect sizes differ depending on the research contexts by utilising a subgroup analysis. By establishing the variance in results, it is possible to conclude whether a research context defines the importance of different technologies and users’ perception of the aspects of technology use. This, in turn, helps future studies focus on the factors and models that matter the most for their research context. The third sub-study examined the difference between papers published in high- and low-ranked journals, by employing a subgroup analysis. Given the influence of journal ranking on the perception of research, the findings of the analysis make it possible to understand whether there are differences among papers published in high-/low-ranked journals. Finally, the fourth sub-study explored the potential presence of publication bias, namely the systematic underrepresentation of non-significant research findings. For that purpose, Egger’s test and p-curve analysis were used in order to examine whether evidence published in the reviewed scope of literature was reliable.

2. Technology acceptance

The research on technology acceptance started from the development of the TAM by Davis in 1989. This model explained and predicted the utilisation of IS, with two basic concepts measuring the perception of the usefulness of the system for the task and the perception of the amount of effort that is required for its use [48]. Since then, the literature has accumulated evidence about a plethora of constructs, aiming to understand the determinants of different technologies, their applications and contexts.

Among the most powerful theories that have been extensively used until today are the task-technology fit (TTF) model, diffusion of innovation (DOI) theory, the Delone and McLean success model, UTAUT and its extensions, as well as the original and extended TAM. They brought evidence about the role of the characteristics of innovation (e.g. relative advantage, compatibility and complexity), the fit of technology to tasks, the interdependence between the dimensions of IS success (e.g. information quality, system quality and service quality) and the beliefs about internal and external factors affecting behaviour (e.g. social influence and performance expectancy) [12]. Many of the theories have been the subjects of revisions, resulting in integrated models, such as TAM-TTF [49,50] and UTAUT-TAM [51]. Scholars have often complemented theories with additional factors (e.g. privacy and trust) to test further conditions that could explain adoption [52–54].

Over the years, technology acceptance research and theories have also been informed and underpinned by research and theories grounded in other disciplines that are concerned with human behaviour [6,55,56]. For example, social cognitive theory (SCT), stemming from social psychology, stresses the importance of environmental and individual differences and has enabled researchers to explore contextual variables facilitating technology utilisation [57,58]. The theory of reasoned action (TRA) and the theory of planned behaviour (TPB), which originate from psychology, have been extensively used to predict technology utilisation through behavioural and normative beliefs (subjective norm, perceived control and attitude) [59–61]. The technology readiness index (TRI) has helped scholars explore the propensity to adopt innovation through multidimensional psychometric constructs [62–64]. Flow theory was useful in explaining hedonic aspects and cognitive absorption when it comes to engagement with technology [65]. Also, psychological theories have been used to explain use satisfaction in dissonant situations [6], types of motivations [66] and mental states underpinning behaviour [67].

Theoretical frameworks have been employed to investigate the adoption of various applications, such as e-health, smart technologies, e-banking, mobile services, e-learning technologies and e-government [6,9,68–71]. It has been proposed that some factors have a distinctive relation to the home versus the organisational context, such as applications for personal use, friends and family influences [11,66]. The on-going development of technologies and applications (e.g. virtual reality and intelligent agents) motivates scholars to adapt theories or develop new ones to embrace the characteristics of new devices and contexts [14,71].

A wide body of knowledge on technology acceptance accumulated over decades requires a multi-perspective insight into the published research, which has not been systematised to date. To reflect on the development in technology acceptance research, a number of reviews have been conducted, and they have adopted three main directions. A large number of papers systematically analysed the drivers of the acceptance of specific IS in domains such as healthcare, education, human resource management and tourism [14,24,33,72–75]. Another stream of meta-analytic reviews focused on particular theoretical models, such as TAM, UTAUT, TRI [17,18,76–78] and their extensions [28,79,80] to understand the impact of the theories on technology acceptance and new evidence that could move forward the research in the area by advancing those theoretical frameworks. The third stream of meta-analysis studies specialised in the exploration of specific factors and issues, such as the role of technology in disaster risk management, the implications of social media for students’ performance and consumers’ resistance to innovation [81–83]. However, a focus on specific theories, issues, domains or applications limits the scope of the papers included in the analysis, providing a partial insight into the underpinnings of use behaviour [18,25,84]. Also, scholars have not explicitly differentiated between the proxies of acceptance (i.e. attitude towards technology use, intention to use and use behaviour) [25], assuming that they have the same predictors and can be used interchangeably.

In addition, since the development of the first TAM, individuals’ perceptions towards the technology, the context and the technology itself have significantly changed. For instance, early studies mainly examined technology acceptance in the organisational context characterised by mandatory use [12,85,86]. Technology was less integrated into private individuals’ lives. Such use patterns reflected the prevalence of certain factors, such as perceived usefulness and perceived ease of use. As technology has become widespread, the role of such factors has become inconsistent [1,16,87–89]. Also, radical and incremental changes in technology and innovation shape users’ activities, transform technology applications and consequently affect its acceptance [6,90]. How technology acceptance and its antecedents have been explored across different journals needs to be investigated too, as it has been argued that the publications in higher-ranked journals have higher research contributions [91]. Hence, there is a possibility of divergent arguments and findings in papers published in low- versus high-ranked journals.

Given the above, this study suggests that:

Hypothesis 1. The factors of technology acceptance are different when examined in relation to attitude, intention and use behaviour. Those factors vary depending on the theoretical underpinnings adopted by papers.

Hypothesis 2. There is variance in the predictors of attitude, intention and use behaviour across time, technology applications and journals.

To test the study hypotheses, we provide a comprehensive analysis of the research using a meta-analytic approach. To identify the possibility of the underrepresentation of non-significant findings, we also test the likelihood of publication bias present in the published research on technology acceptance. The following sections provide a detailed explanation of the methodological steps and analyses undertaken and present the results of the meta-analysis.

3. Methodology

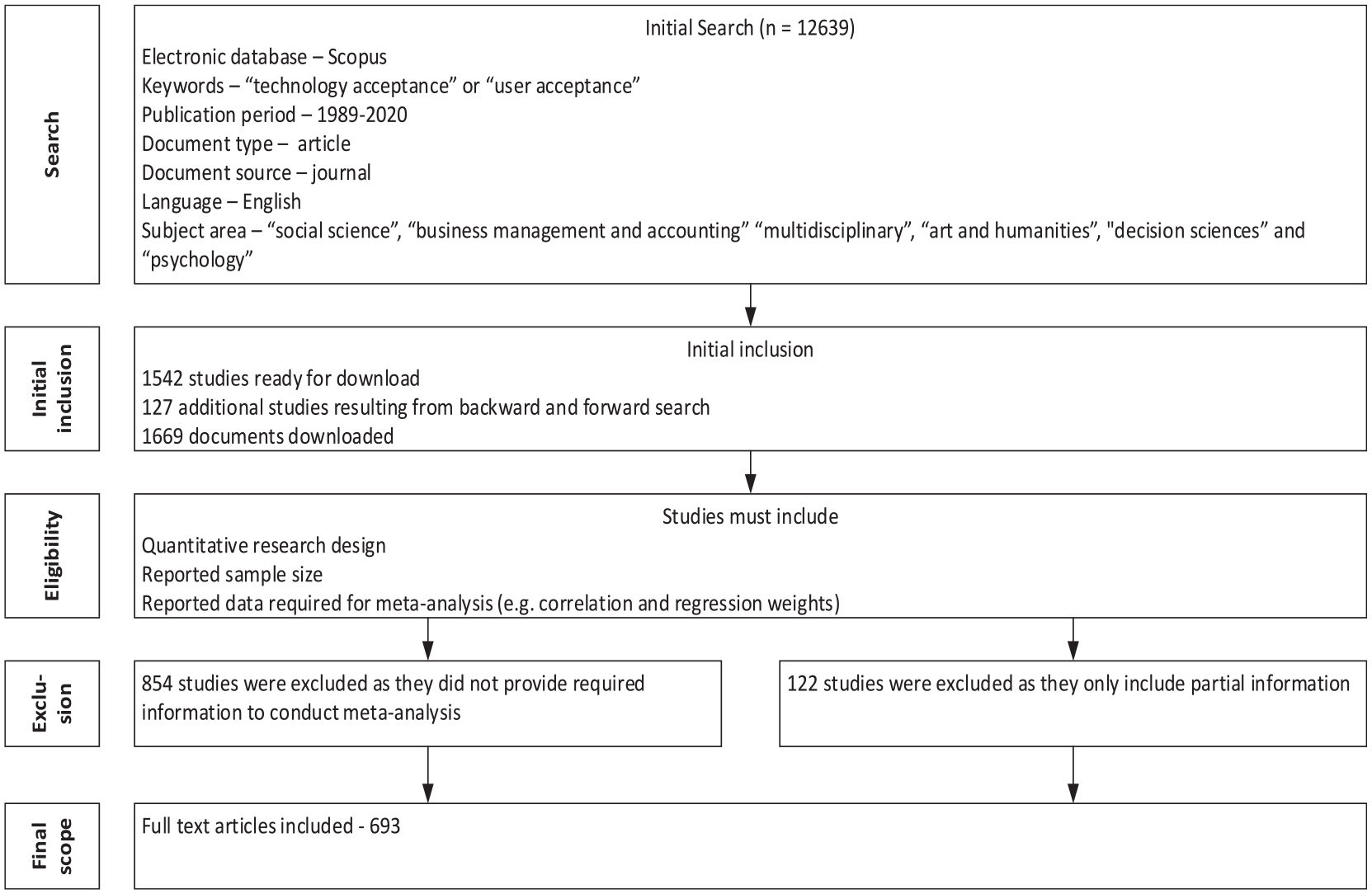

To analyse the literature, this study uses a meta-analytic approach, which enables researchers to examine relationships between identified variables and moderation effects [92–94]. The review of the literature was conducted in a systematic way in three steps to minimise potential biases (Figure 1) [95]. First, we embarked on the planning stage by starting the preliminary review of the technology acceptance literature, proposing objectives and developing a research protocol. Three reviewers were involved in the procedures of the planning stage. The expertise of the reviewers in the field increased the potential to adopt a robust approach to examining the topic and to identify novel themes and insights [96]. The preliminary survey of the literature enabled us to identify gaps in the existing literature and find a different perspective for addressing those gaps. Based on the review protocol, all documents related to technology acceptance were identified and scanned to filter out those which were not suitable for meta-analysis (e.g. qualitative studies).

Figure 1. Literature search and the selection of studies eligible for meta-analysis.

Second, we selected the electronic database and keywords, defined the exclusion and inclusion criteria, extracted the material for data analysis and analysed the data. The Scopus electronic database was used as a source from which articles were searched and extracted, as it provides wide coverage of academic literature [97]. The search of publications was based on the keywords ‘technology acceptance’ or ‘user acceptance’ – the terms that are commonly used in the literature [12,71,98,99] to denote the acceptance and utilisation of technology by end users. This resulted in 12,639 documents. After the filtration process, 1542 papers were left to download. Following the guidelines by Croom [100] and Thomé et al. [101], we conducted an additional backward and forward citation search, which resulted in an additional 127 articles. The backward and forward citation search made it possible to capture relevant papers that the keyword search had missed. The resulting scope included 1669 documents. Then, we excluded articles that we could not use in the meta-analysis. As per Lipsey and Wilson [102] and Cooper et al. [103], this review only included studies that had a quantitative research design and studies that reported the sample size and the type of analysis conducted (e.g. correlation and regression weights). In addition, we only included studies that examined at least one of the outcome variables that we aimed to study, namely intention to use, attitude and use behaviour. After applying these filtering criteria, 854 articles were excluded.

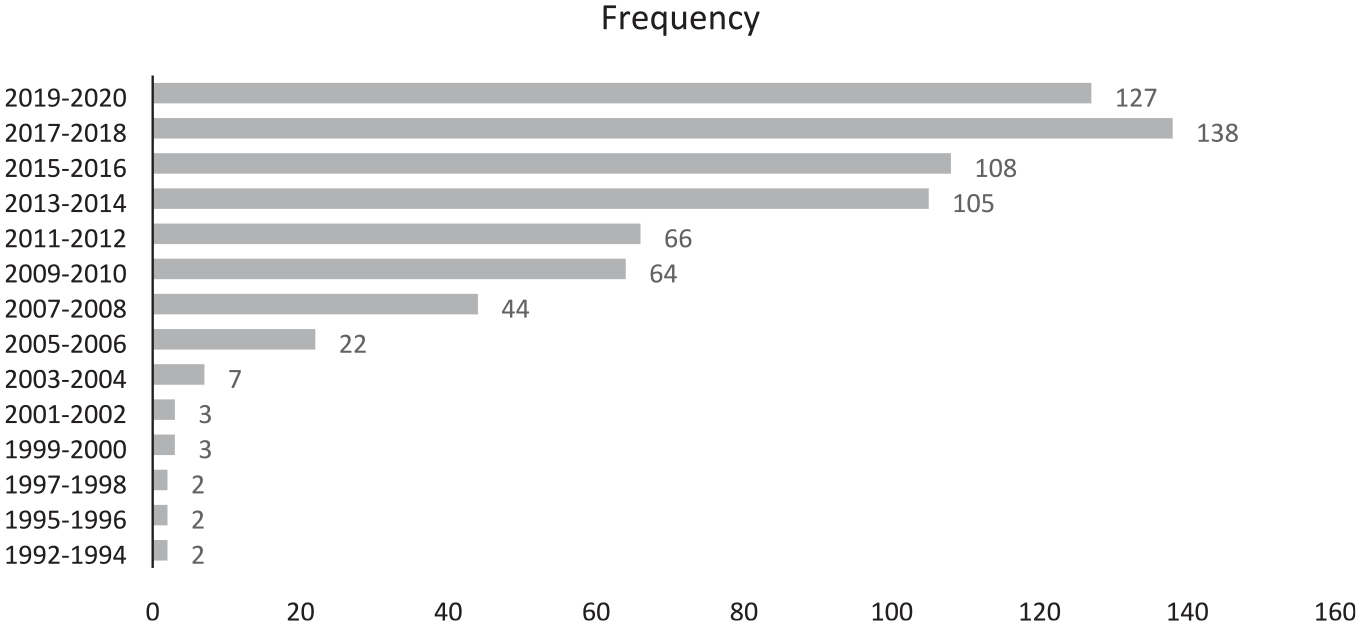

Third, after downloading the final sample of documents, we embarked on the extraction of data for the meta-analysis. Given that meta-analysis is a method by which the cumulative effects of relationships are assimilated from individual studies [104], we had to collect data about the relationships between the factors of acceptance and dependent variables. The data collection started with the extraction of sample size, the coefficients of regression weights and p-values [18,25,105]. Then, the variance for each relation was calculated. Relationships that had been tested fewer than three times were excluded [93]. During the final phase of data extraction, 122 articles were excluded due to insufficient information. As a result, the final scope of articles comprised 693 documents. These papers presented data on 2865 relationships, which were analysed using a total sample of 221,772 respondents. A total of 578 reviewed documents were published in ‘low-tier’ journals and 115 papers were from ‘high-tier’ ones. The categorisation of journals was based on the guide by the Association of Business Schools (ABS2018). ‘Low-tier’ journals are those that are not listed or are ranked 1 or 2 in the ABS list. The journals ranked 3 and 4 in the ABS list fall into the category of ‘high-tier’ outlets. A ranking of documents based on their publication in the top eight journals in the IS field (i.e. the ‘basket’ of eight journals) was not possible, because of the low numbers of such papers in our sample. Figure 2 illustrates the frequency of published papers by year, demonstrating the growth of the research on technology acceptance starting from 2006. The highest number of papers was published between 2017 and 2020.
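The screening counts reported across the second and third steps can be checked with simple arithmetic. The sketch below only restates the numbers given in the text; the variable names are illustrative, and the intermediate count of eligible papers (815) is derived here rather than reported by the authors.

```python
# Counts as reported in the text; variable names are illustrative only.
downloaded_after_filtering = 1542   # papers left after the initial filtration
added_by_citation_search = 127      # backward and forward citation search
excluded_by_design_criteria = 854   # not quantitative, missing outcome variable, etc.
excluded_insufficient_data = 122    # dropped during data extraction

initial_scope = downloaded_after_filtering + added_by_citation_search
eligible = initial_scope - excluded_by_design_criteria      # derived, not reported
final_sample = eligible - excluded_insufficient_data

low_tier, high_tier = 578, 115      # journal tier split of the final sample

print(initial_scope)                          # 1669
print(eligible)                               # 815
print(final_sample)                           # 693
print(low_tier + high_tier == final_sample)   # True
```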

Figure 2. Year of publication.

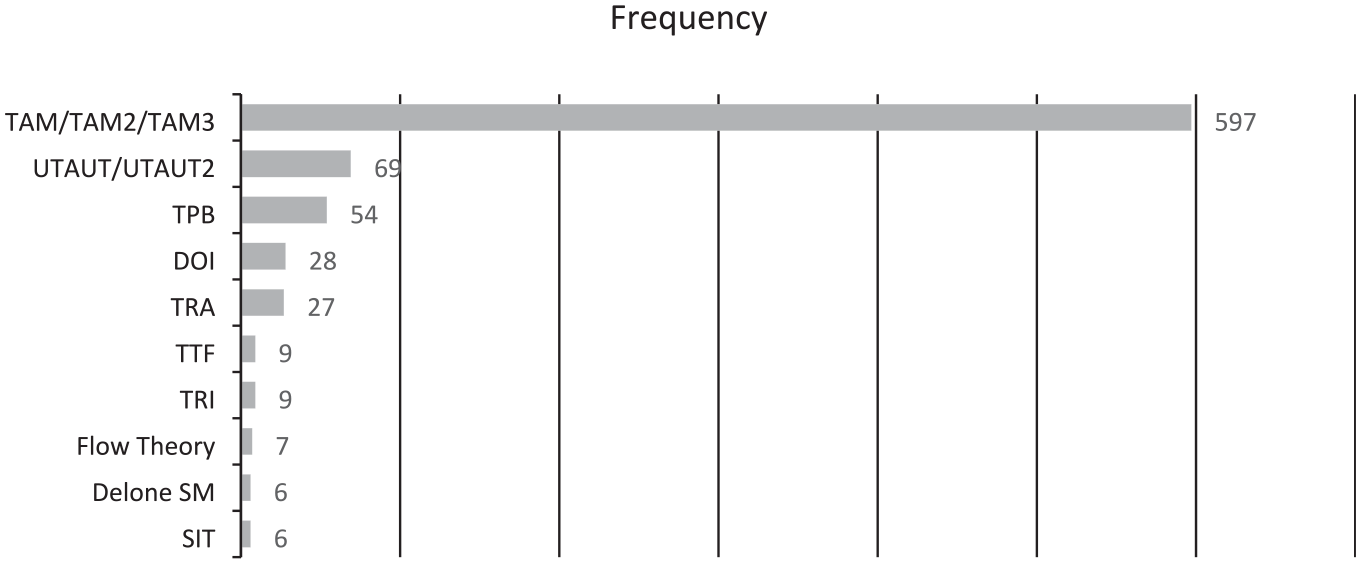

The published research was based on 10 main underpinning theories with their extended versions, such as TAMs (TAM, TAM2, TAM3), UTAUT and its extensions and TRI (Figure 3). A predominant number of papers utilised TAM as a theoretical framework.

Figure 3. Underpinning theories of published papers.

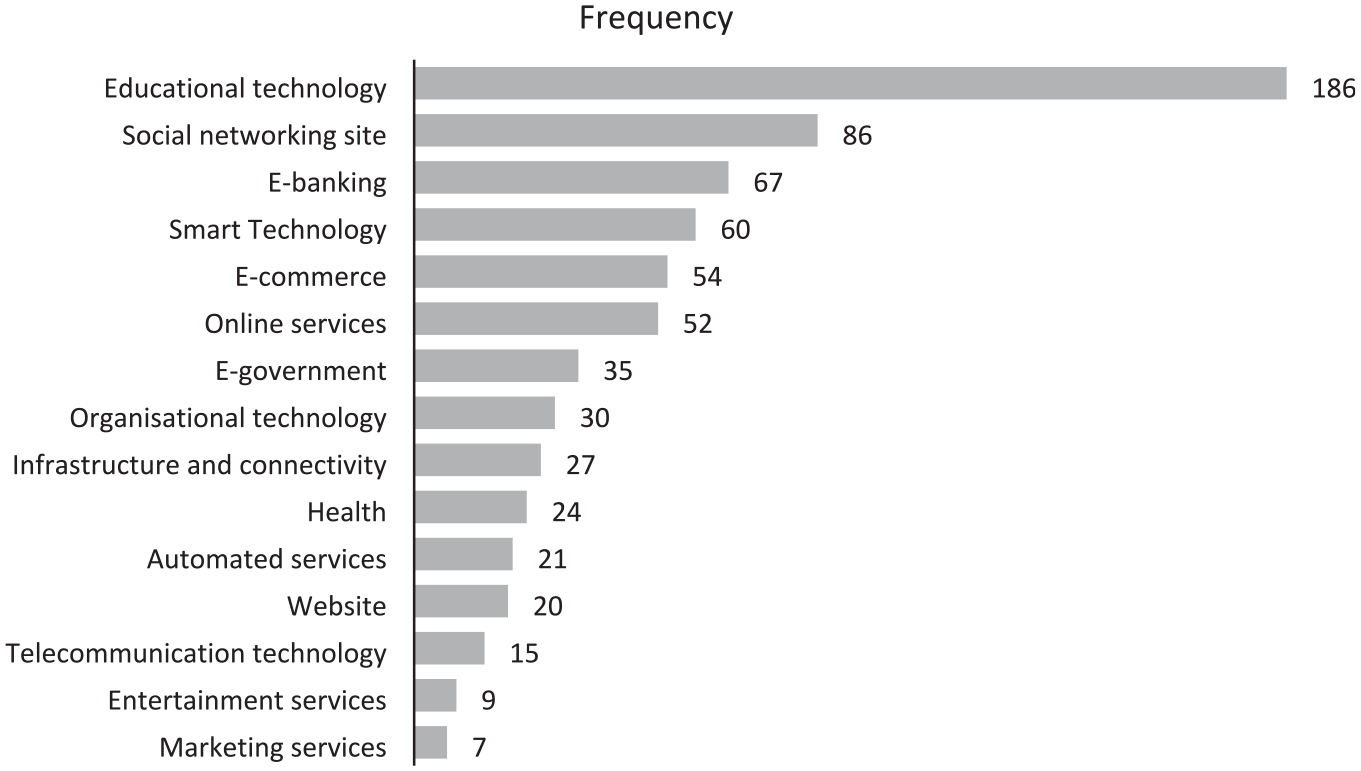

Figure 4 presents 15 main areas of the application of technology examined in the selected papers. The most widely researched technologies, such as e-learning tools and websites, relate to the educational context. Significantly fewer documents focused on technologies applied in entertainment and marketing services.

Figure 4. Technology applications.

To test the hypotheses and address the two objectives of the study, five types of analysis were used, namely pooling effect sizes, subgroup analysis, the analysis of moderation effects, a small-sample publication bias test and p-curve analysis. For the analysis, we used RStudio with the dmetar and metafor packages [106,107]. The effect sizes of all identified determinants of acceptance were calculated using variance and relationship weights (beta) [92,93,108,109]. Effect sizes demonstrate the magnitude of the investigated effects in the population [110]. Bigger effect sizes mean a stronger relationship [108]. Given that the effect size is dependent on the number of factors being investigated in the literature, this article only included the variables that had been tested at least three times [103,111]. A random-effects approach was used to pool (aggregate) effect sizes from all studies. A random-effects model is more appropriate, as it assumes that the true effect can differ from study to study because the studies draw on different populations [92]. Prior to pooling the effect sizes, heterogeneity across the synthesised papers was assessed by employing the Q and I² statistics. This showed the variation across papers due to heterogeneity, above and beyond the variation expected due to chance [112].
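To make the pooling step concrete, the following is a minimal Python sketch of random-effects pooling together with the Q and I² statistics. It is not the authors' code: the paper reports using the R packages dmetar and metafor, and it does not state which between-study variance estimator was used, so the DerSimonian-Laird estimator here is an assumption. The function name and the input values in the usage note are hypothetical.

```python
import math

def pool_random_effects(effects, variances):
    """Pool effect sizes under a random-effects model.

    Assumption: the between-study variance (tau^2) is estimated with the
    DerSimonian-Laird method; the paper does not name its estimator.
    """
    k = len(effects)
    w = [1.0 / v for v in variances]                  # inverse-variance weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)

    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1

    # I^2: percentage of total variation attributed to heterogeneity
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0

    # DerSimonian-Laird estimate of tau^2, truncated at zero
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    # Random-effects weights add tau^2 to each study's sampling variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return {
        "pooled": pooled,
        "se": se,
        "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
        "Q": q,
        "df": df,
        "I2": i2,
        "tau2": tau2,
    }

# Hypothetical inputs: three effect sizes with equal sampling variances
result = pool_random_effects([0.10, 0.30, 0.50], [0.01, 0.01, 0.01])
print(result)
```

With these made-up inputs the studies disagree more than sampling error alone would predict, so tau² is positive and the random-effects confidence interval is wider than a fixed-effect one would be.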

The variance in predictors due to a theory, application and journal rank was explored using a subgroup analysis, and the difference due to a publication year was examined using a moderation analysis. The idea behind the subgroup analysis is that effects are pooled and compared between the groups (e.g. high-ranked versus low-ranked journals, TAM-based studies versus UTAUT-based studies, etc.). The results make it possible to study whether the difference between the groups is meaningful [106]. Moderation effects were examined by introducing control variables, which enabled us to calculate the variance in the outcome variable due to the interactive effect of the predictors with the year of publication.
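The moderation analysis with publication year can be illustrated as a weighted meta-regression of effect sizes on year. This is a sketch under stated assumptions rather than the procedure the authors ran in R: it uses a single moderator, inverse-variance weights, a between-study variance `tau2` supplied by the caller, and a normal approximation for the p-value. The function name and all input values are hypothetical.

```python
import math

def meta_regression_year(effects, variances, years, tau2=0.0):
    """Weighted least-squares regression of effect size on publication year.

    Assumptions: weights are 1 / (sampling variance + tau2), and tau2 is
    provided by the caller (for example, from a random-effects fit).
    """
    w = [1.0 / (v + tau2) for v in variances]
    sw = sum(w)
    x_bar = sum(wi * x for wi, x in zip(w, years)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effects)) / sw

    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, years))
    sxy = sum(wi * (x - x_bar) * (y - y_bar)
              for wi, x, y in zip(w, years, effects))

    slope = sxy / sxx                      # change in effect size per year
    intercept = y_bar - slope * x_bar
    se_slope = math.sqrt(1.0 / sxx)        # standard error with known variances
    z = slope / se_slope
    p_value = math.erfc(abs(z) / math.sqrt(2.0))   # two-sided, normal approximation
    return slope, intercept, se_slope, p_value

# Hypothetical inputs: four studies with identical effects in different years
slope, intercept, se_slope, p_value = meta_regression_year(
    effects=[0.30, 0.30, 0.30, 0.30],
    variances=[0.01, 0.01, 0.01, 0.01],
    years=[2006, 2010, 2014, 2018],
)
print(slope, p_value)
```

A slope that cannot be distinguished from zero corresponds to the absence of a temporal trend in the effect of a predictor.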

For the analysis of publication bias, two tests were employed: a small-sample publication bias method and p-curve analysis. Publication bias is defined as ‘the systematic suppression of research findings due to small magnitude, statistical insignificance, or contradiction of prior findings or theory’ [106]. The presence of publication bias in findings means that the true-effect sizes could be potentially lower than the ones presented in the published body of research [106]. Therefore, testing for publication bias made it possible to see whether only studies with high effect sizes were published. For the small-sample publication bias test, we built a funnel plot and conducted Egger’s test. Studies plotted asymmetrically around the axis of the funnel indicate the presence of publication bias [113]. P-curve analysis was employed to check the validity of the first test because some recent papers have claimed that the small-sample publication bias method could produce inaccurate results. Publication bias may happen not because of unreported data on non-significant relationships, but because of manipulations to achieve significant results [114,115]. Therefore, the use of the p-curve test helped see evidence of altered p-values by calculating the true effects behind the collected data.
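The idea behind Egger’s test can be sketched in a few lines of Python. In its classic form, the standardised effect (effect divided by its standard error) is regressed on precision (one divided by the standard error); an intercept far from zero signals funnel plot asymmetry. The sketch below is an illustration under these assumptions, not the authors' R implementation, and the function name and input values are hypothetical.

```python
import math

def egger_regression(effects, std_errors):
    """Ordinary least-squares form of Egger's regression.

    Returns the intercept, the slope and the standard error of the intercept.
    The ratio intercept / se_intercept would be compared with a t distribution
    with n - 2 degrees of freedom (not computed here). Needs at least three
    studies with differing standard errors.
    """
    y = [e / s for e, s in zip(effects, std_errors)]   # standardised effects
    x = [1.0 / s for s in std_errors]                  # precision
    n = len(y)
    x_bar = sum(x) / n
    y_bar = sum(y) / n

    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar

    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r * r for r in residuals) / (n - 2)       # residual variance
    se_intercept = math.sqrt(s2 * (1.0 / n + x_bar ** 2 / sxx))
    return intercept, slope, se_intercept

# Hypothetical inputs: smaller studies (larger standard errors) report larger effects
std_errors = [0.05, 0.10, 0.20, 0.25]
effects = [0.3 + 1.5 * s for s in std_errors]
intercept, slope, se_intercept = egger_regression(effects, std_errors)
print(intercept, slope)
```

In this constructed example the intercept recovers the small-study effect that was built into the data, while the slope recovers the underlying effect.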

Figure 5 presents the types of analysis that are utilised in the article to address the objectives of the research.

Figure 5. Research objectives and analyses.

4. Results and discussion

4.1. The determinants of technology acceptance

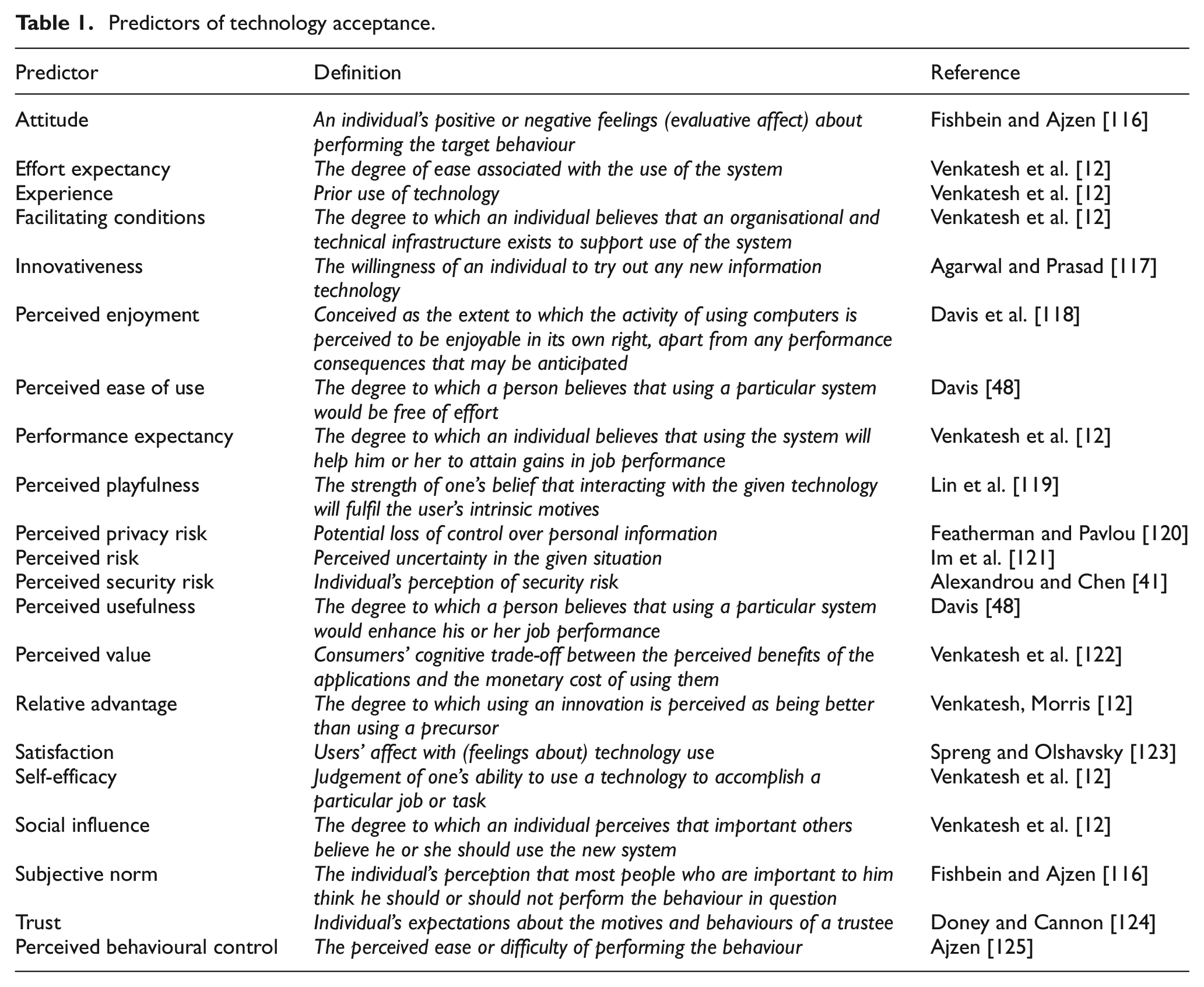

The first research objective was tackled by identifying the predictors of technology acceptance and pooling their effects on attitude, intention to use and use behaviour. In total, 21 determinants were identified, which are summarised in Table 1.

Table 1. Predictors of technology acceptance.

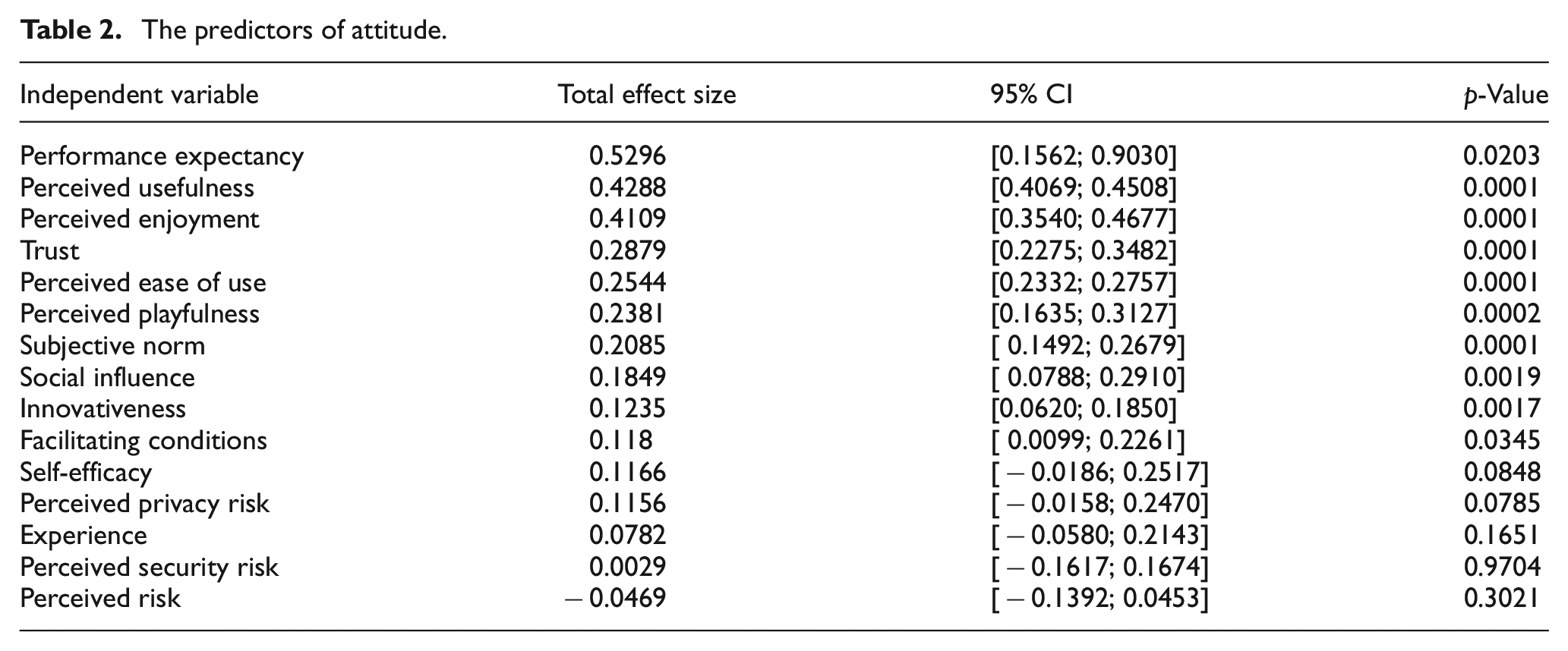

The analysis of pooled effect sizes for each outcome variable demonstrated that out of 15 predictors of attitude, 10 were significant (Table 2). The predictors mostly reflect the positive perceptions about how technologies are used and what outcomes the utilisation can bring, such as perceived playfulness, perceived ease of use and perceived enjoyment. Experience, perceived privacy risk, perceived risk, perceived security risk and self-efficacy had non-significant effects. The non-supported effect of experience is consistent with prior findings in the literature, which provide conflicting results on the role of this predictor in different contexts (e.g. social media marketing, online shopping and personal computers) [126–128]. This can be explained by the assumption that when it comes to attitude, experience can weaken the trust in technology that underpins a positive attitude [127]. Rather than directly influencing attitude, experience moderates the effect of predictors on technology acceptance [128,129]. The non-significant effect of all three risk variables runs counter to the research arguing that individuals’ uncertainty about the outcome of technology use undermines use behaviour [120,121,130–132]. A plausible interpretation could be that attitude to technology is an affective state and may be more psychologically distant than actual use or intentions. Given the technological advancements, the benefits that new technologies promise may reduce the uncertainty of technology use [97]. Therefore, in a situation of general technology evaluation, risks may seem abstract and irrelevant. The effect of self-efficacy was not confirmed either. This is not surprising, as prior research provided mixed results [133–135]. Prior literature provided evidence that self-efficacy has a mediated effect through cognition [133]. Thus, it is assumed to be an indirect predictor of technology acceptance.

Table 2. The predictors of attitude.

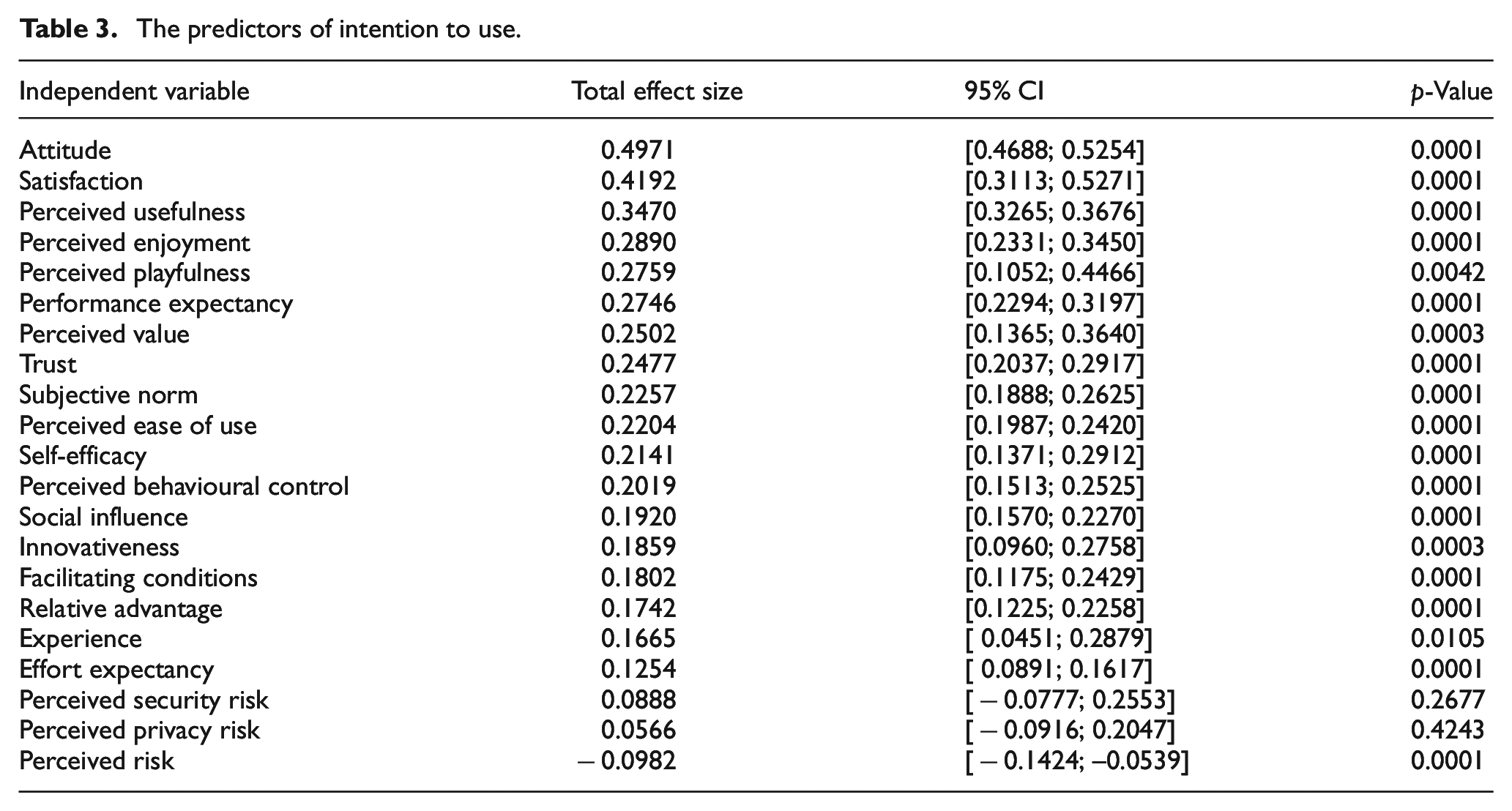

The highest number of factors was identified to be the predictors of intention to use (Table 3). Out of 21 factors, the effects of only two were not found to be significant. The variables with significant effects embrace all positive and negative beliefs about the consequences of technology utilisation, as well as the beliefs about personal abilities and control over behaviour. Although the effects of privacy and security risks were not supported, the overall perception of risks was found to determine the willingness to embark on technology utilisation. For measuring intention, studies focus on the potential users of technology, who might not have a clear understanding of the types of risks that the use of technology may entail. Such results could mean that respondents did not expect adoption to result in specific risks beyond the typically expected ones that they had accepted.

Table 3. The predictors of intention to use.

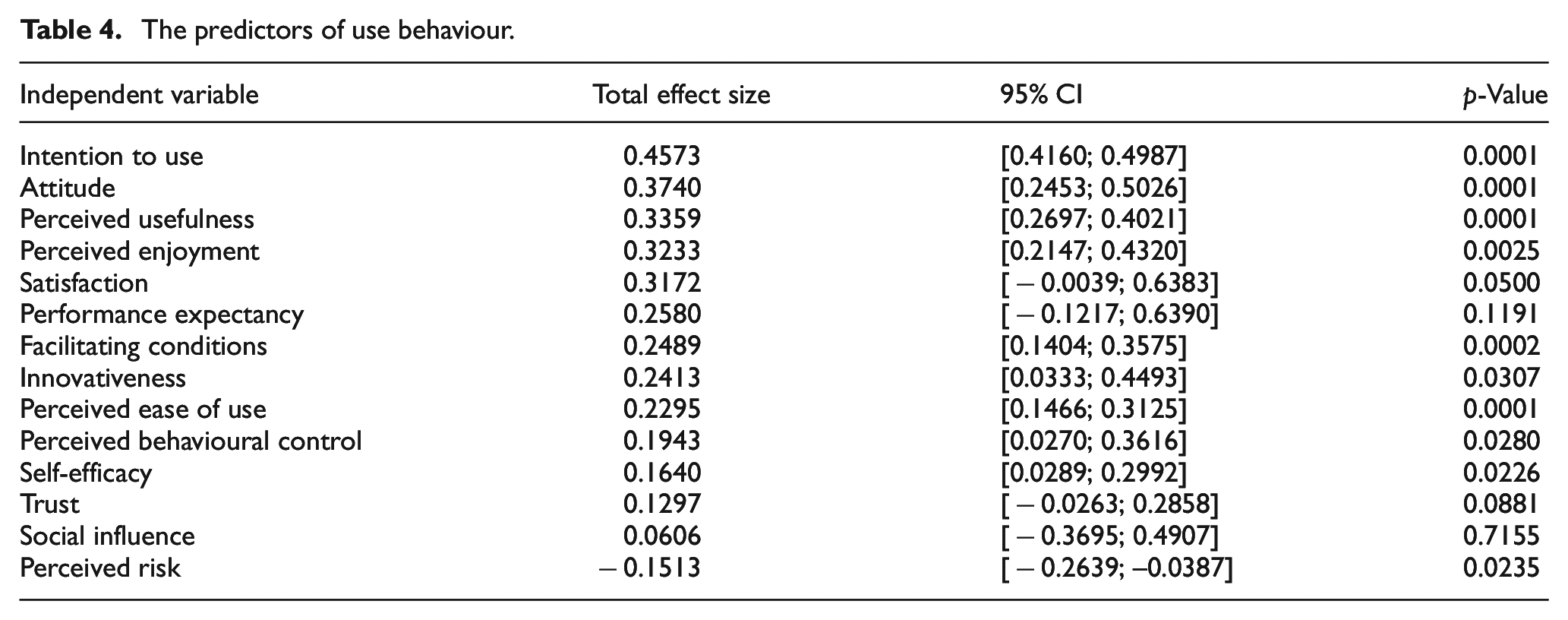

Use behaviour has been predicted by 14 factors, which in general can be described as beliefs about the risks, positive outcomes and personal capabilities (Table 4). Trust, performance expectancy and social influence were found to have non-significant effects. These findings contradict the general argument in the research that these variables are important when using technology [12,136,137]. However, trust, performance expectancy and social influence were found to have positive effects on attitude and intention to use [138–140], meaning that their role changes depending on the outcome variables. When it comes to actual use behaviour, people who have experience with technology take performance for granted. The perception of the degree to which technology can perform well in delivering services is not important, as people might set a new threshold of expectations for future technology use. Similarly, trust may not play an important role when users have already been engaging with the technology. Also, due to prior experience, firsthand knowledge about the outcomes of adoption lowers the influence of the opinions of other people about technology.

Table 4. The predictors of use behaviour.

The results of the pooled effects of the factors show that each outcome variable has different predictors. Such results add to the debate on whether attitude, intention to use or use behaviour can be used as a proxy for technology adoption [12,35–40]. Specifically, research in sociology and psychology suggested that attitude explains behaviour [141–145]. Other scholars debated the need to focus on use intention, although the findings of the degree to which this translates into actual behaviour were not consistent [36–38,40,146,147]. The differences found in the explanatory power of attitude, use intention and actual behaviour provide important evidence and advance research in two ways. On the one hand, the findings empirically validate prior research about the role of attitude in enhancing the predictability of TAMs [148]. Attitude is formed under the effects of two psychological paths – cognitive and affective – having a varying impact on actual use behaviour [149]. However, a plethora of studies do not account for the difference in underlying psychological mechanisms when measuring attitude in technology acceptance [148] and examine the determinants of technology acceptance through a one-dimensional attitude construct [150,151]. On the other hand, the findings suggest that there is an intention–behaviour gap, which has been discussed by researchers [152,153]. Although some investigation has been done to confirm that external factors which may distinguish intention from actual use (e.g. social desirability) should be considered [152], little endeavour has been put into explaining the gap. Consequently, the difference between the constructs has largely been downplayed in technology acceptance research.

Given the above, future research could be developed in a few directions. First, researchers should consider the types of beliefs specific to a technology pre-adoption phase and actual use. A focus on the factors which matter for users in specific situations (i.e. when assessing technology prior to use, when considering the predisposition towards its use in the future and after having experience of interacting with the technology) will help improve the predictive power of future technology adoption theories. Second, future studies should take into account the impact of psychological mechanisms and external factors shaping attitude, intention and use behaviour, which may explain their different role in TAMs.

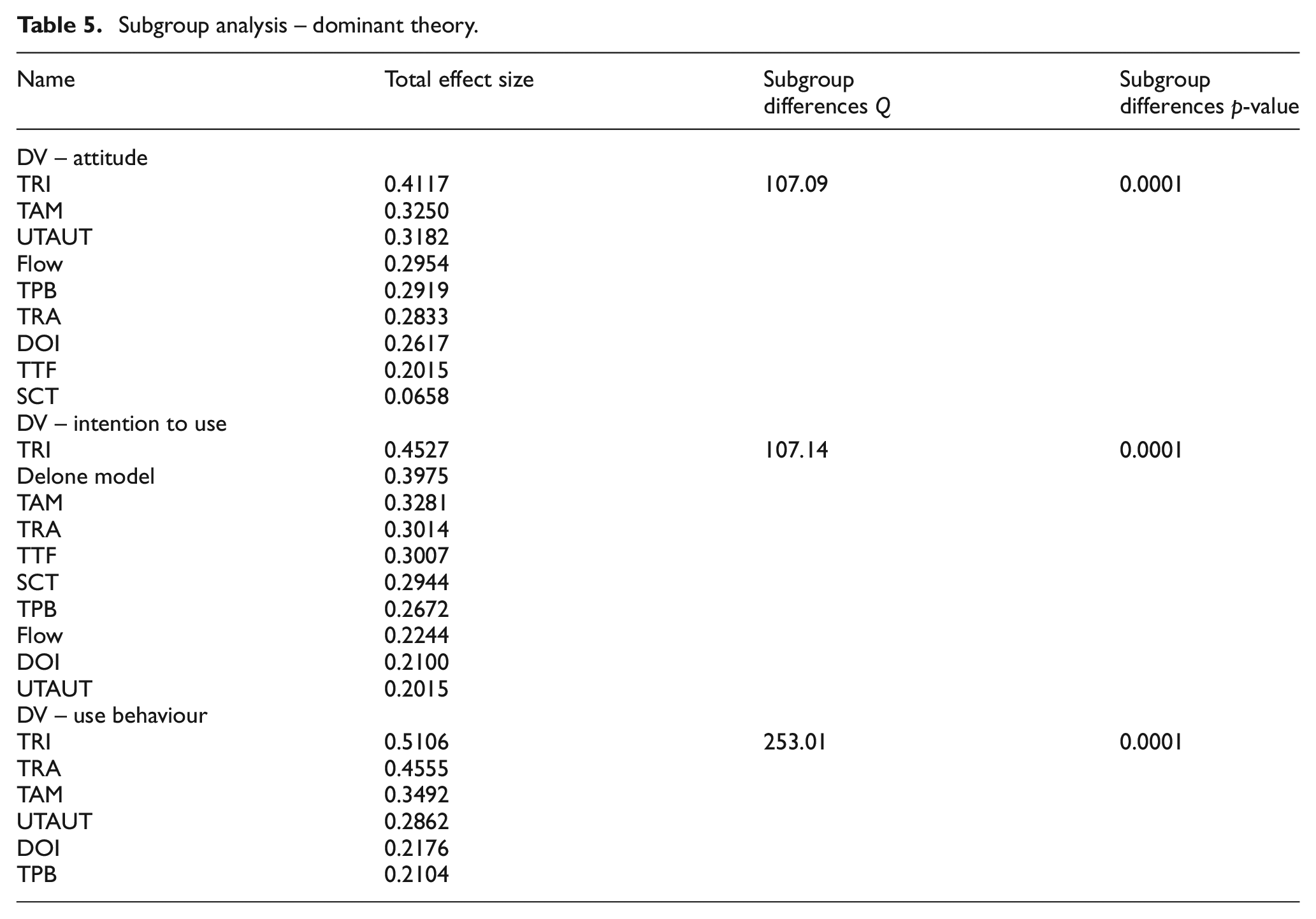

4.2. Theory-based variance

The subgrouping of predictors based on theories results in different total effect sizes for all outcome variables, as demonstrated by p-values below 0.05 and Q-test results showing the difference between subgroups (Table 5). There are three types of observations resulting from the subgroup analysis. First, although there is a variance in effect sizes among theories, some of the theoretical models are consistent in their predictive strength irrespective of the outcome variable. For example, TRI and TAM are among the top three theories with the strongest effects on attitude, intention to use and actual use behaviour, ranging between 0.32 and 0.51. Second, the importance of the theories varies depending on the outcome variable. For instance, UTAUT (B = 0.32) plays a more significant role when examining attitude, while it becomes the least important when investigating use intention (B = 0.20). Delone and McLean’s IS success model (B = 0.39) and TAM (B = 0.32) are the strongest theoretical frameworks in the studies focusing on intention. The factors of SCT have medium effects on intention (B = 0.29) but very weak effects on attitude (B = 0.06). Third, the highest variance in the effects of theories is found when examining the predictors of use behaviour. There are fewer theories that are employed for studying the actual use of IS, compared with the research focusing on two other outcome variables. In line with the analysis of the role of each dependent variable (attitude, intention to use and use behaviour) in predicting technology acceptance, such findings can be interpreted in two ways. These findings could mean that each theoretical framework has a different contribution depending on the adoption phase. For example, TAM and TRA focus on the beliefs and attitudes about technology utilisation and the results of its use [85,130,154]. 
Such beliefs are essential at any stage of the technology adoption process, whether it is the evaluation of the technology prior to its use or after its trial. TRI measures individual traits, such as optimism, innovativeness, insecurity and discomfort, underpinning the utilisation of technology [63,151], which explains the invariant importance of the theory irrespective of the outcome variable. Delone and McLean’s success model is used to explore system characteristics affecting intention and satisfaction [155,156], making the theory more applicable to the investigation of the willingness to use IS. By using SCT in technology adoption, researchers emphasise users’ beliefs about their capabilities to operate technology, such as self-efficacy, rather than the technology functions [12]. Therefore, the theory is underemployed when exploring the behaviour of technology users with experience. In addition, following the discussion about the difference between the predictors of attitude, use intention and use behaviour, some theories may be relatively more useful in explaining the psychological and external factors underlying attitude towards technology, its use or the intention to use it. For example, given that SCT emphasises the impact of environmental factors on users’ behaviour [157], it is logical that SCT has a stronger predictive power when it comes to intention, as intentions can be shaped under the influence of contextual conditions [152]. Hence, the theories explaining psychological, motivational and affective states through factors such as self-efficacy, social influence and trust are less powerful when examining actual behaviour and more useful to explore pre-adoption stages. The above evidence adds to the research that has been questioning the contributions of new theories to technology acceptance, beyond TAM and its extensions [15,17,158,159]. 
Furthermore, such findings could encourage future researchers to take into consideration the adoption phases when selecting the theories that can explain the psychological/cognitive states triggering behaviour.
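To make the logic of the subgroup comparison more concrete, the following minimal Python sketch computes a between-subgroup Q statistic from inverse-variance pooled effects. It is an illustration only: it assumes a fixed-effect model within each subgroup, and the function names (`pooled_fixed`, `q_between`), the subgroup labels and all numbers are hypothetical rather than taken from the data or software used in this review.

```python
def pooled_fixed(effects, variances):
    """Inverse-variance weighted mean effect and the variance of that mean."""
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    mean = sum(w * e for w, e in zip(weights, effects)) / total_weight
    return mean, 1.0 / total_weight


def q_between(subgroups):
    """Between-subgroup heterogeneity statistic and its degrees of freedom.

    `subgroups` maps a label (e.g. a theory) to a list of
    (effect size, sampling variance) pairs.
    """
    summaries = {}
    for label, studies in subgroups.items():
        effects = [e for e, _ in studies]
        variances = [v for _, v in studies]
        summaries[label] = pooled_fixed(effects, variances)

    means = [m for m, _ in summaries.values()]
    mean_variances = [v for _, v in summaries.values()]
    grand_mean, _ = pooled_fixed(means, mean_variances)

    # Weighted squared deviations of subgroup means from the grand mean.
    q_b = sum((m - grand_mean) ** 2 / v for m, v in zip(means, mean_variances))
    return q_b, len(summaries) - 1, summaries


# Hypothetical effect sizes for two theory subgroups (not data from this review).
example = {
    "theory_A": [(0.5, 0.01), (0.5, 0.01)],
    "theory_B": [(0.3, 0.01), (0.3, 0.01)],
}
q_b, df, summaries = q_between(example)
print(q_b, df)
```

Under these assumptions, the statistic is compared with a chi-square distribution with (number of subgroups − 1) degrees of freedom; for one degree of freedom the 5% critical value is 3.84, so a larger value would indicate a significant difference between subgroups.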

Table 5. Subgroup analysis – dominant theory.

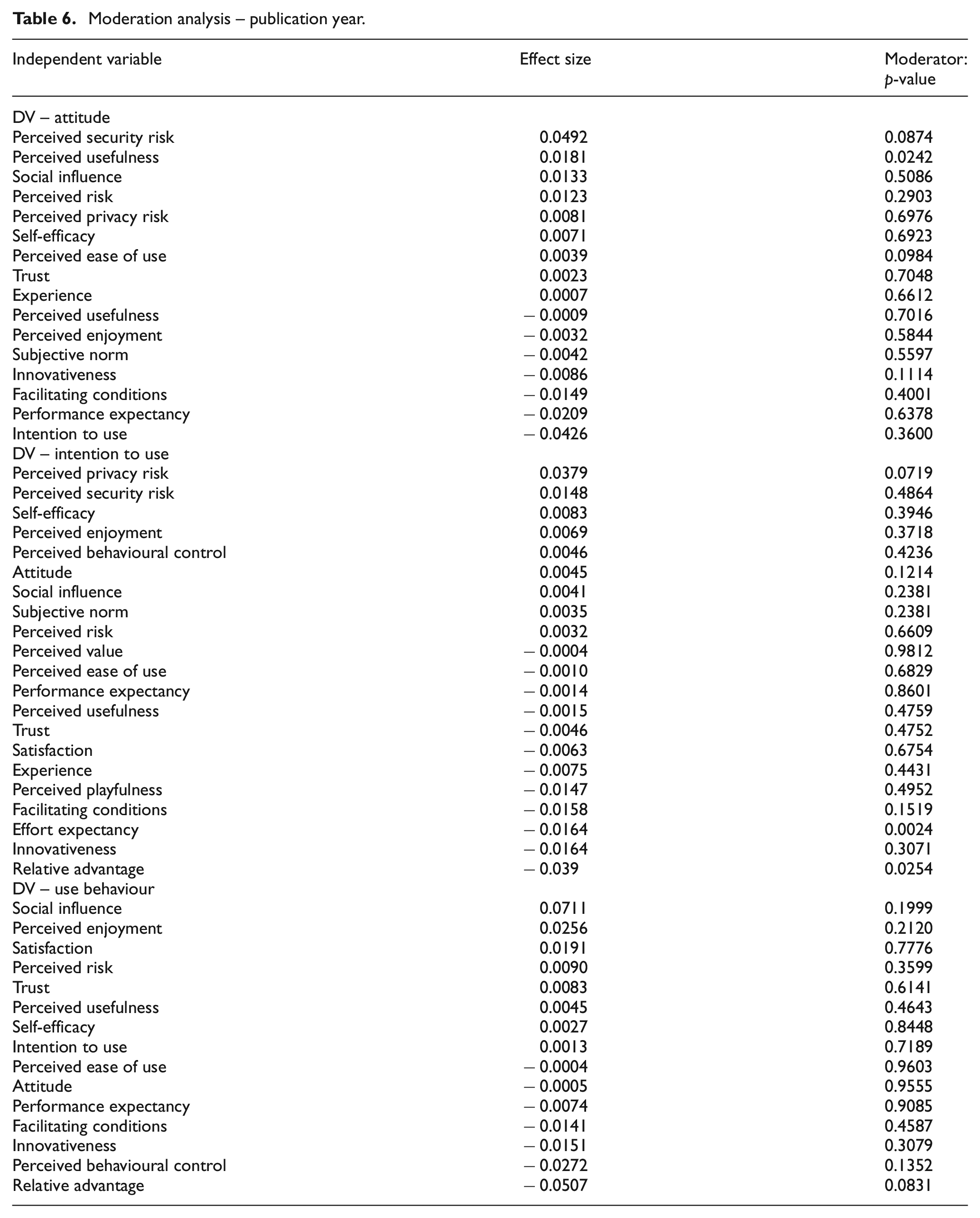

4.3. Longitudinal change

Technology acceptance factors could have different levels of importance for people over time. The main driver of such a change could be the development of technology, which has evolved from stand-alone computers to intelligent connected environments and applications cutting across many contexts and life domains [160]. Such advancements, in turn, could underpin the transformation of people’s mind-set when it comes to their expectations, perceptions and beliefs. However, moderation analysis by publication year showed that the effect sizes of almost all the predictors have not changed significantly over time, as indicated by p-values greater than 0.05 and small effect sizes (Table 6). Since the introduction of the first TAM by Davis [48], the role of the factors in determining behaviour has remained largely as critical as it was at the outset. The results do not support the assumption that the pace of technology advancements, globalisation, the deeper integration of technology into individuals’ daily life and presumably enhanced user skills have changed the importance of the factors underpinning technology adoption. Publication year has a significant moderating effect only for perceived usefulness, effort expectancy and relative advantage, and these effects are close to zero, which suggests that there is almost no meaningful change in the effects of variables on adoption. Perceived usefulness has a very weak positive effect (B = 0.0181, p < 0.05) when examining attitude. This shows that when evaluating technology, the increase in the strength of the belief that technology can enhance users’ performance is minimal. The effects of effort expectancy (B = −0.0164, p < 0.05) and relative advantage (B = −0.0390, p < 0.05) are weak and negative when explored in relation to intention to use. In contrast to perceived usefulness, relative advantage is measured in juxtaposition to alternative systems and relies on a clear understanding of the capabilities of the systems being compared.
When forming future use intention, people have come to pay slightly less attention to the advantages of new systems over incumbent ones. Such an assumption is also supported by the non-significant change in the effect of relative advantage in relation to use behaviour. The weakened effect of effort expectancy means that over time the belief about the degree to which the system is effortless to operate has become less critical. A plausible interpretation is that, with the wider embeddedness of technologies, users have become more technically skilled in operating information and communication technology (ICT) systems. However, such a small change in the effects of relative advantage and effort expectancy demonstrates that they still have almost the same influence on adoption decisions as at the outset of technology acceptance research. Given the potential interrelation of longitudinal changes in the technology acceptance domain and IS applications, an analysis of the variance across applications would provide a more critical insight into the relative importance of the factors, depending on the context where technology is utilised.
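The idea of moderation by publication year can be illustrated with a small weighted regression of effect sizes on year. The sketch below is a simplified, hypothetical example: it assumes a fixed-effect meta-regression with inverse-variance weights and a normal approximation for the p-value, and the function name `meta_regression_slope` and the input values are invented for illustration. It does not reproduce the model or the estimates reported in Table 6.

```python
import math


def meta_regression_slope(years, effects, variances):
    """Inverse-variance weighted regression of effect size on publication year.

    Returns (slope, standard error of the slope, two-sided p-value based on
    a normal approximation).
    """
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    # Weighted means of the moderator and of the effect sizes.
    x_bar = sum(w * x for w, x in zip(weights, years)) / total_weight
    y_bar = sum(w * y for w, y in zip(weights, effects)) / total_weight
    sxx = sum(w * (x - x_bar) ** 2 for w, x in zip(weights, years))
    sxy = sum(
        w * (x - x_bar) * (y - y_bar)
        for w, x, y in zip(weights, years, effects)
    )
    slope = sxy / sxx
    # With known sampling variances, the slope variance is 1 / sxx.
    se = math.sqrt(1.0 / sxx)
    z = slope / se
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return slope, se, p_value


# Hypothetical studies: publication year, effect size and sampling variance.
years = [2000, 2010, 2020]
effects = [0.20, 0.30, 0.40]
variances = [0.01, 0.01, 0.01]
slope, se, p_value = meta_regression_slope(years, effects, variances)
print(slope, se, p_value)
```

A slope close to zero, as in the coefficients discussed above, would mean that the expected effect size changes very little per year of publication, even when the coefficient is statistically significant.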

Table 6. Moderation analysis – publication year.

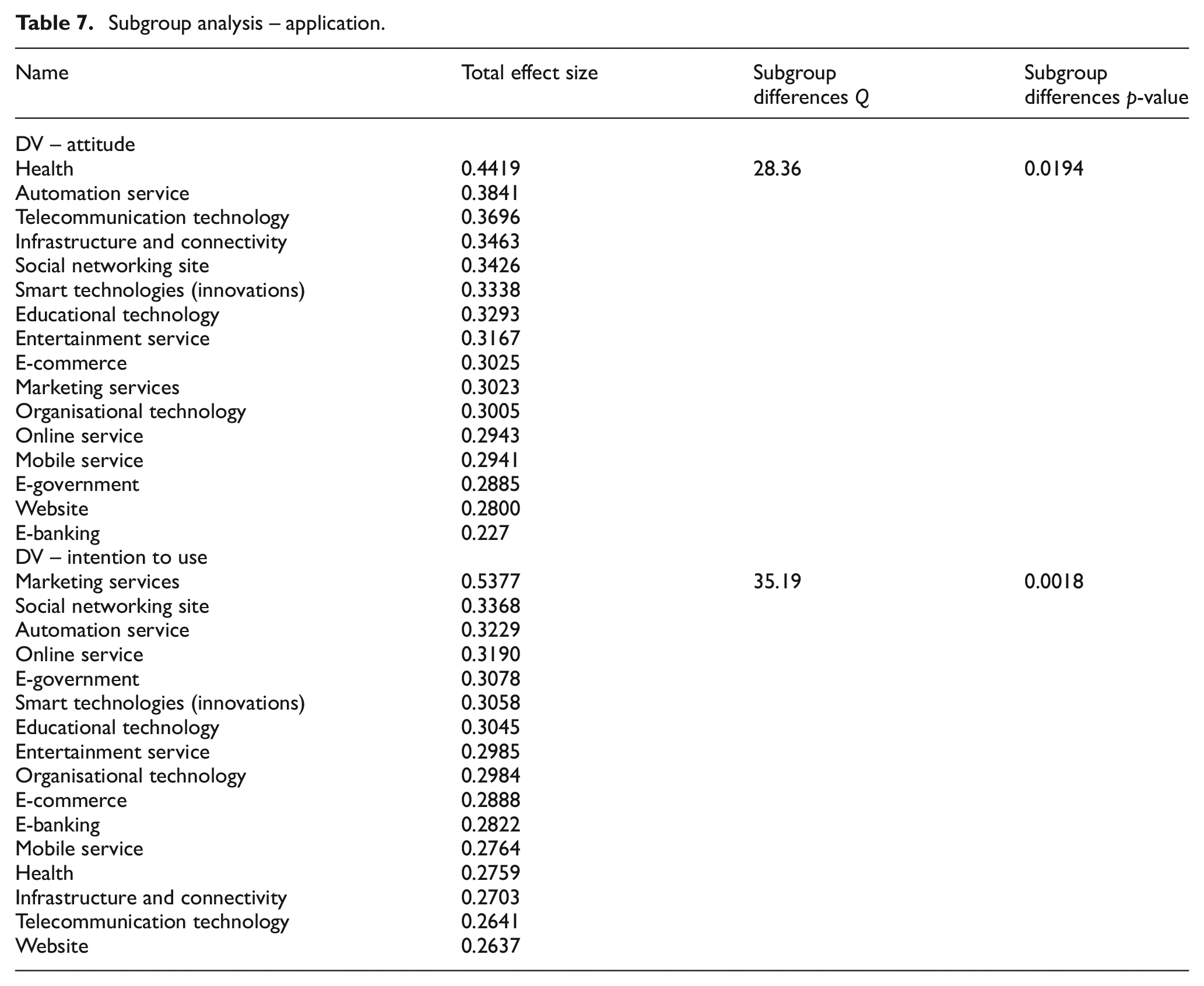

4.4. Comparison across applications

Table 7 presents the results of the subgroup analysis based on applications showing a significant variance in the effects on intention and attitude in different contexts (p < 0.05). A subgroup analysis with the predictors of use behaviour was not performed. Due to the low number of studies that actually measured use behaviour using various applications, no analysis was possible. The results indicate that the significance and effects of the predictors vary for different applications. This finding is consistent with the arguments raised in a wide body of research, which extended theories to examine the adoption of applications, such as e-banking, education technology, health care, e-government or smart technologies. The study supports the claim that the factors that predict adoption in household and organisational settings are different, as the role of convenience, privacy, trust and security is more critical when systems are used for private purposes [11,66]. To interpret the variance in the predictors’ power, the above results should be considered in light of the findings of the analysis on longitudinal changes in the effect sizes of the factors. The findings mean that beliefs about technology utilisation mainly stay constant, while there is heterogeneity in the predictors of the use of a particular technology. The context in which technology is utilised creates unique conditions, needs and value, affecting the judgement of technology utility [150] and potential risks resulting from technology use. Such findings offer a few directions for future research that would help expand the boundaries of existing knowledge about the determinants of technology acceptance. First, researchers could potentially focus on models in the areas that matter the most by selecting factors that are likely to be the most relevant in the given context. Second, future studies could examine the patterns of interaction in different contexts. 
This would help understand the behavioural implications of new technology applications, which may impact users’ beliefs, expectations and preferences. Third, new applications may entail specific problems (e.g. psychological challenges and risks), which can be explored on a more granular level in future research.

Table 7. Subgroup analysis – application.

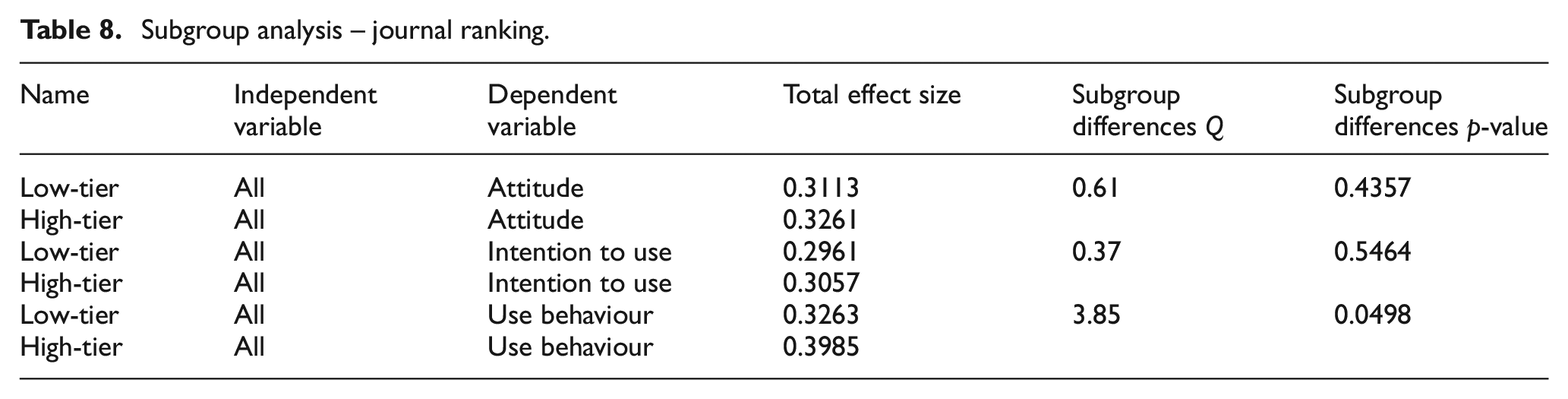

4.5. Comparison across journals

While the subgroup analyses based on applications and publication time show a variance in the examined factors, a comparison of the results across journals demonstrates how consistent the findings are in the published research depending on the outlet rank. Publishing in top-tier outlets is challenging and time-consuming, and it is often considered a prerequisite for job promotion because it is taken to demonstrate the quality of research [161]. Although a paper needs to be objectively evaluated on its merits rather than the journal in which it is published, journal ranking may still affect the perception of the value of published research. The results of the subgroup analysis presented in Table 8 show the variance in the predictive strength of factors between ‘top-tier’ (ABS 3 and 4) and ‘low-tier’ (ABS 1 and 2) journals. A significant difference in effect sizes is observed only when acceptance is manifested as use behaviour (p < 0.05). This finding brings some clarity about the differences among the journals of different ranks [91]. The variance in the effect sizes among journals is non-significant for use intention and attitude (p > 0.05). This indicates that evidence about predictive strength presented in low-ranked journals is similar to that published in high-ranked outlets. The significant variance when it comes to use behaviour, though, could suggest that ‘top-tier’ outlets frequently adopt a more sophisticated methodology and research design, which makes it possible to measure actual behaviour.

Table 8. Subgroup analysis – journal ranking.

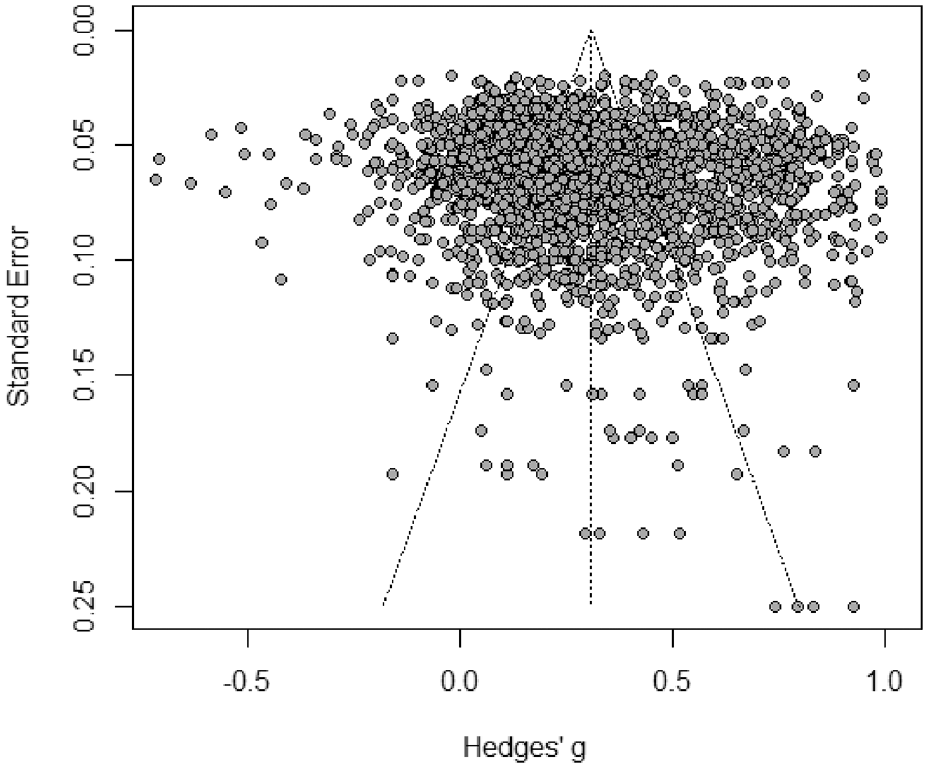

4.6. Publication bias

The funnel plot in Figure 6 shows that there is a likelihood of publication bias. The plot shows the distribution of pooled effect sizes in the sample, with the standard error on the y-axis and the effect size on the x-axis. In the absence of publication bias, studies would be evenly distributed around the central dotted line of the funnel. The figure shows that the values are concentrated at the top of the plot, which gives reason to suspect a small-sample bias. Egger’s test [113] results were significant (intercept = 0.707, p-value = 0.0045), confirming the asymmetry. These findings indicate that some research studies have been published on the basis of the significance and strength of the results, thus potentially leading to skewed overall conclusions. Such a finding is in line with previous evidence that all academic research fields can be affected by such publication bias [162–166]. Due to the possibility of unreported findings of weak and/or insignificant predictors, the pooled effects of some adoption factors could potentially be lower or not significant. This result warrants future research and provides valuable evidence, given that publication bias in the area of IS management has not been fully explored [163,167].
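The asymmetry test mentioned above can be sketched as a simple regression. The following Python example is a minimal illustration of an Egger-style regression, in which each study’s standardised effect (effect divided by its standard error) is regressed on its precision (one divided by the standard error), and the intercept captures funnel asymmetry. The function name `egger_intercept` and the input values are hypothetical; the example data are constructed so that smaller studies report larger effects, and they are not the data analysed in this review.

```python
import math


def egger_intercept(effects, std_errors):
    """Egger-style regression of standardised effect on precision.

    Returns (intercept, standard error of the intercept, degrees of freedom).
    Requires at least three studies.
    """
    y = [e / s for e, s in zip(effects, std_errors)]  # standardised effects
    x = [1.0 / s for s in std_errors]                 # precisions
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual variance of the ordinary least squares fit.
    sse = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    residual_variance = sse / (n - 2)
    se_intercept = math.sqrt(residual_variance * (1.0 / n + x_bar ** 2 / sxx))
    return intercept, se_intercept, n - 2


# Hypothetical studies in which the effect grows with the standard error.
effects = [0.2, 0.3, 0.5, 0.7]
std_errors = [0.05, 0.1, 0.2, 0.3]
intercept, se_intercept, df = egger_intercept(effects, std_errors)
print(intercept, se_intercept, df)
```

In this formulation, the intercept divided by its standard error is compared with a t distribution with n − 2 degrees of freedom; an intercept that differs significantly from zero points to asymmetry of the funnel plot.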

Figure 6. Funnel plot.

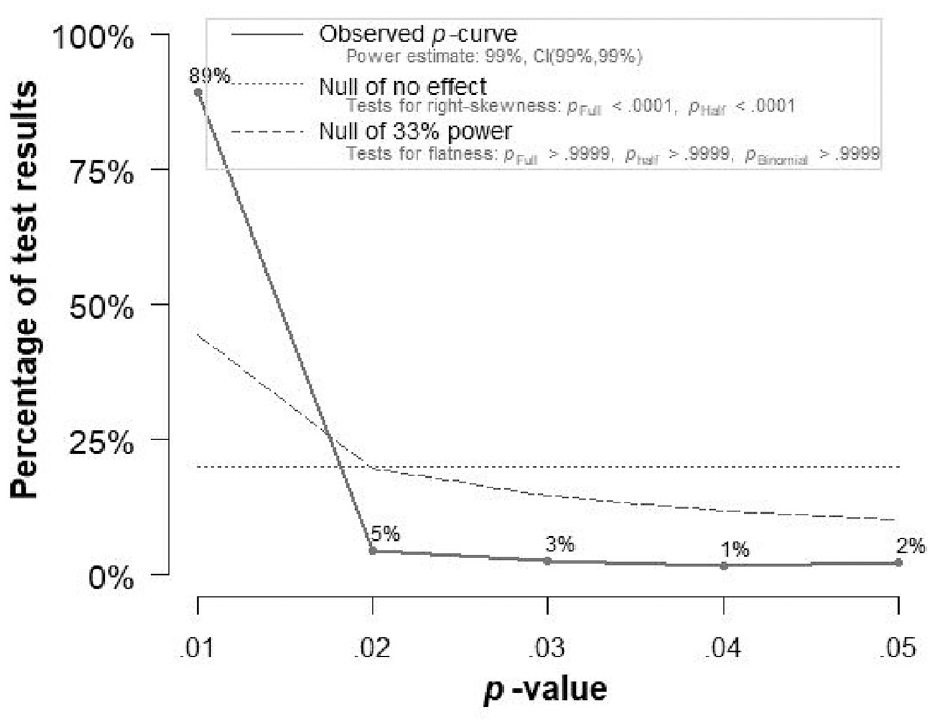

We also investigated the possibility of p-hacking practices in the technology adoption stream. The assessment of p-hacking is a relatively new practice. Figure 7 presents the p-curve plot, which shows the p-values pooled from the analysed papers on the x-axis and the percentage of papers with particular p-values on the y-axis. The plot features three lines. The solid line represents the distribution of ‘true’ effects, that is, the empirical p-curve aggregated from the p-values of all the papers analysed in this study. The dashed line shows the expected p-value distribution, while the dotted line shows the distribution expected if no effect is found. To confirm that studies publish true effects as expected, first, the skewness of the solid line should be similar to that of the dashed line, and, second, 80% of pooled effects or more should be at a p-value of 0.01 or 0.02 [168]. The p-curve plot produced in this study indicates that 89% of published effects are at a p-value of 0.01, confirming that the majority of published research reported true effects. This means that there is no evidence that the p-values of the relationships examined in the sample of this meta-analysis were manipulated.
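The share of effects at each p-value, which underlies the empirical p-curve described above, can be computed with a simple binning step. The sketch below is a hypothetical illustration: the function name `p_curve_shares`, the bin width and the p-values are assumptions made for this example, and it covers only the descriptive shares, not the formal skewness tests of a full p-curve analysis.

```python
import math


def p_curve_shares(p_values, alpha=0.05, bin_width=0.01):
    """Percentage of significant p-values falling in each bin up to alpha.

    The first element is the share of p-values at or below 0.01, the second
    the share between 0.01 and 0.02, and so on.
    """
    significant = [p for p in p_values if 0.0 < p < alpha]
    if not significant:
        raise ValueError("no significant p-values to summarise")
    n_bins = round(alpha / bin_width)
    counts = [0] * n_bins
    for p in significant:
        # Assign each p-value to the bin labelled by its upper bound.
        index = min(math.ceil(p / bin_width) - 1, n_bins - 1)
        counts[index] += 1
    return [100.0 * c / len(significant) for c in counts]


# Hypothetical p-values; the non-significant value 0.20 is excluded.
p_values = [0.001, 0.004, 0.008, 0.009, 0.032, 0.20]
shares = p_curve_shares(p_values)
print(shares)
print(shares[0] + shares[1])  # share of effects at p-values of 0.01 or 0.02
```

A distribution in which most of the significant p-values fall into the first bins is right-skewed, which corresponds to the pattern that the criterion cited above treats as consistent with true effects.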

Figure 7. P-curve analysis.

5. Conclusion

This article has provided a comprehensive analysis of the determinants of technology acceptance and explored the conditions that explain the difference in the effects of predictors published in the literature. To address the two objectives, six sub-studies were conducted. In the first sub-study, we identified 21 independent variables and pooled effect sizes in relation to attitude, intention and use behaviour. The predictors of each outcome variable were found to be different, enabling us to conclude that attitude, intention and use behaviour cannot be used interchangeably. In the second sub-study, the use of the subgroup analysis provided insights into the variance in the strength and the significance of the predictors depending on the theoretical frameworks. It was found that TAM, UTAUT and TRI are the most robust and powerful theories when testing factors in relation to all three outcome variables. In the third sub-study, the results of the moderation analysis suggested that there is no significant change in the effect sizes of the predictors published at different points in time. The results of the subgroup analysis in the fourth sub-study demonstrated the change in the predictors of technology acceptance when applied in different contexts. The fifth sub-study using the subgroup analysis showed the change in variables predicting use behaviour, suggesting that there is a difference in research published in low- and high-ranked journals. Finally, the analysis of publication bias indicates that the technology adoption research suffers from publication bias, meaning that published results should be treated with caution, as they might underrepresent actual findings. The analysis of p-hacking showed that there is no evidence of p-value manipulation.

5.1. Theoretical contributions and practical implications

This meta-analytical review makes several contributions to the literature. First, this review produced comprehensive data on all key predictors of technology acceptance, in contrast to prior research, which mostly focused on particular theories [18,25,84,169]. The adopted approach provided a complete picture of the underpinnings of technology use. The analysis of the predictors of attitude, intention to use and use behaviour contributes to the debate as to which outcome variable works better as a proxy for acceptance [12,35–40]. The findings suggest that there is a conceptual difference among the dependent variables that have been used to explain technology acceptance. Such a difference is important to consider when drawing conclusions about the predictors of technology acceptance, as a focus on a single variable can overstate or understate the importance of underpinning conditions. Also, a comparative insight into the strength of the predictors depending on TAMs adds to the discussion about the contribution of theories to advances in the field [15,17,158,170]. These findings facilitate an understanding of the importance of theoretical frameworks conducive to technology adoption stages and the factors underlying users’ cognitive, affective and behavioural states, which, in turn, helps identify the potential paths of the development of technology acceptance research.

Second, the article provides a longitudinal insight and the application-wise difference of factors in technology acceptance research, which has been missing in prior meta-analytic studies. Such an insight brings evidence about the dynamics in technology acceptance research and helps understand how the determinants of users’ behaviour change along with the progress in technology and new users’ practices. The evidence provided is important as it offers ways in which future research could progress by focusing on the instrumental factors, particularly in understanding specific applications, interaction patterns and problems, rather than the variables that are generalisable across different use scenarios and settings.

The findings of the analyses undertaken to address the objectives of the research provide implications for practice too. First, the findings of the predictors of attitude, intention and use behaviour inform practitioners about the importance of delineating the factors that they need to focus on when trying to improve users’ perception of technology versus facilitating its actual purchase. Since the risk factors, social influence and trust are significant in relation to use behaviour but not attitude and/or intention, marketing communication for existing customers needs to be tailored around safety, security and evidence about positive technology use outcomes. In contrast, for prospective customers, the promotion of technology can mainly focus on inducing positive psychological states through the reassurance that the technology is useful, easy and pleasant to operate. Second, the mainly insignificant variance in the effects of factors over time suggests that the findings from past research could be reusable as the main beliefs underpinning technology acceptance have largely been unchanged. Third, the significant difference in the predictors of attitude and intention depending on the research context suggests that the development and promotion of IS should be based on the careful evaluation of the needs, beliefs and expectations of their target users. The finding also means that the longitudinal invariance in the predictors of technology acceptance should be treated with caution since beliefs are not universal for different technologies and applications. Finally, as the antecedent factors of technology acceptance are largely the same across journals, practitioners can be assured of the consistency of evidence irrespective of the type of academic outlets they have access to.

5.2. Limitations and future research avenues

Given that the focus of this article was to provide a comprehensive view of the predictors of technology adoption, this study is limited in the analysis of factors for the moderation analysis. Future research could examine the role of context-specific or individual-specific (e.g. personality) factors moderating the strength of predictors in different technology adoption models. Another limitation is that meta-analytic reviews usually exclude qualitative studies. However, future research could use techniques of coding qualitative data and quantitatively analyse the findings. Third, future research could use the strongest relationships between predictors, attitude, intention to use and use behaviour to verify them empirically. Finally, current literature shows that emergency events, such as the pandemic, lead to the digitalisation of daily and business practices, widening the applications of the technology and changing individuals’ perceptions [171–173]. This rapid and forced adoption creates a need for further examining technology adoption and investigating whether there will be changes in the factors identified in this study.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.