Abstract

We used a multi-method design to determine the quality of technology-enhanced performance-based peer feedback, teacher candidate strategy use, and child behaviors over time. We also explored teacher candidates’ perceived social validity of technology-enhanced performance-based peer feedback. Eleven teacher candidates enrolled in an early childhood certification program and their focus children, who were at risk or identified with disabilities, engaged in naturalistic early childhood environments (home, community, and classroom settings). Teacher candidates were grouped by their environmental assignment and reviewed video interactions to provide feedback to their peers. Teacher candidates' quality of feedback changed over time, which impacted teacher candidate use of strategies as well as focus child use of skills. Additionally, the teacher candidates perceived the intervention package to be feasible and acceptable. Implications for research and practice are discussed.

Naturalistic instruction (NI) is an evidence-based practice that has enhanced a variety of outcomes including individualized education program goals, engagement, and generalized skills (Snyder et al., 2015). In NI, the educator uses everyday activities and people to engage all children while intentionally embedding learning opportunities to provide the intensity of support necessary for skill acquisition. For example, choice making is an NI strategy that can be used during many activities and routines, such as play and mealtime, to create an opportunity to engage with others by prompting a child to make a choice between two items. It is important for teacher candidates (TCs) to use NI to engage all children and provide the frequency and intensity of opportunities needed to support young children’s attainment and maintenance of target skills. Although NI is evidence based, it occurs variably and infrequently within classrooms, suggesting a research-to-practice gap (Coogle et al., 2019).

Quality educator preparation results in educators gaining access to information and practice opportunities necessary to confidently and competently deliver evidence-based practices (Brownell et al., 2010). To provide quality preparation, teacher candidates must have opportunities to advance their knowledge and engage in application through hands-on experiences in field settings, as these can lead to enhanced confidence and practice in addressing the diverse situations that arise within a learning environment (Leko et al., 2012; Ottley et al., 2017). For this reason, field placements have been identified as one of the most critical aspects of teacher preparation (Ottley et al., 2017). To ensure a quality field experience resulting in enhanced confidence and competence, Ottley et al. (2017) offer five recommendations for structuring field placements: (a) define the goal(s) of the field placement, (b) select activities that directly align with the goal(s) (e.g., small group instruction, co-teaching a math lesson), (c) determine the products or means by which candidates will demonstrate knowledge and skills (e.g., video-based reflection, lesson plans, data-analysis report), (d) assess candidates using concrete performance measures, and (e) provide ongoing feedback.

Although we have more to learn regarding which of these five practices is most effective and under which conditions, researchers have demonstrated the effect and promise of performance-based feedback on early childhood teacher practices (Barton et al., 2016, 2019; Coogle et al., 2015, 2020). For example, researchers have provided performance-based feedback as a component of their preparation program following face-to-face observations (Barton et al., 2016, 2019). Researchers have also reported the need for technology to overcome barriers related to an authentic environment, scheduling, and monetary resources that are frequently associated with face-to-face supervision. This has resulted in using technology to observe and provide both affirmative and suggestive feedback from an alternate location through the use of Skype, Zoom, and Google Meets (Coogle et al., 2015, 2020). This is often referred to in the literature as technology-enhanced performance-based feedback (TEPF) (Coogle et al., 2020). As a result of both face-to-face feedback and TEPF, early childhood TCs have increased their use of NI.

While observations have occurred both face-to-face and from an alternate location using technology, the feedback component in both contexts has been delivered through technologies such as email (e.g., Barton et al., 2016) and real-time video conferencing systems (Coogle et al., 2015). Given the various technologies available and the number of people with access to them, technology is a resource that can be leveraged to enhance and intensify supervision.

Another variable to consider regarding TEPF feedback is social validity. Social validity is a critical element as it allows researchers to determine an intervention’s perceived feasibility and effectiveness (Coogle et al., 2015). Participants receiving TEPF feedback have found feedback to be socially valid, commenting on the immediacy, feasibility, and effectiveness (Coogle et al., 2015). This is important because if educators do not perceive an intervention to be feasible or effective, it is unlikely that they would continue to use it despite its effectiveness.

Although instructor and researcher-delivered TEPF feedback is both effective and socially valid, one limitation is the capacity individuals such as teacher educators have to deliver feedback, even when technology-enhanced, to a larger number of TCs (Hudson et al., 1994; Loman et al., 2020). Some researchers have suggested differentiated supports, such as providing feedback more frequently, to ensure TCs receive the intensity of support needed to use a target practice with fidelity (Coogle et al., 2015). When a TC uses a target practice with fidelity, he or she uses the practice as intended. TEPF delivered by peers may be one tier of support to alleviate challenges, such as distance and time, associated with one individual such as a course instructor or university supervisor providing feedback (Bowman & McCormick, 2000; Hudson et al., 1994; Kennedy & Lees, 2016; Loman et al., 2020; Mallette et al., 1999; Morgan et al., 1992; Pierce & Miller, 1994).

One group of TCs on which it is important to focus is early childhood TCs. Early childhood educators have indicated feeling unprepared to meet the diverse needs of all children (Barton & Smith, 2015; Bruder et al., 2013; Savolainen et al., 2012). Therefore, identifying potentially effective and feasible mechanisms such as TEPF peer feedback may support their practice.

To the best of our knowledge, only two studies have addressed peer feedback implemented with early childhood TCs (Kennedy & Lees, 2016; Loman et al., 2020). Kennedy and Lees (2016) studied video-based peer feedback to promote TCs’ use of adult-child interactions. The authors found improvement in pre- to post-test CLASS (Classroom Assessment Scoring System) scores for all TCs when targeted (e.g., additional explicit feedback and recommendations) or intensive (e.g., individual improvement plans) support was provided. Additionally, Loman et al. (2020) investigated peer coaching with early childhood/elementary education TCs in school settings. University supervisors and peer coaches rated the coaching as helpful and positive in terms of improving the candidates’ ability to provide objective feedback. The authors recommended additional training for the coaches and intentional attention to the matching process for peer partners.

Although peer feedback has demonstrated effectiveness, researchers have not yet investigated how early childhood TC practices, focus child behaviors, and quality of feedback change over time upon receiving TEPF peer feedback, nor have researchers determined the perceived social validity. Therefore, the purpose of this study was to determine how early childhood TC and focus child behaviors change over time, how the quality of feedback changes over time, and the perceived social validity of a TEPF peer feedback intervention package. The research questions we sought to answer were:
1. Do TCs who participated in an early childhood teacher preparation course that included a field experience with TEPF peer coaching demonstrate greater use of TC behaviors over time based on exposure to peer feedback?
2. Do early childhood focus children who received NI demonstrate greater use of focus child behaviors over time?
3. Do early childhood TCs who participated in an early childhood teacher preparation course that included a field experience with TEPF peer coaching demonstrate improved quality of feedback provision over time?
4. Is there an association between early childhood TC use of target behavior and focus child use of target behavior?
5. What is early childhood TCs’ perceived social validity of the TEPF peer feedback system?

Method

We used a multi-method design to answer our research questions (Collins et al., 2007). Using multiple methodological strategies helped to increase rigor and ensure data were credible, transferable, dependable, and confirmable (Brantlinger et al., 2005).

Researchers’ Role

The research team included five researchers. The first author was the course instructor, and the third author was the graduate assistant for the course in which participants were recruited. The second author was a faculty member at an external higher education institute with early childhood education programs. The fourth and fifth authors were consultants on the project and had extensive experience training TCs. Combined, researchers had over 30 years of experience in preparing early childhood educators.

Participants and Setting

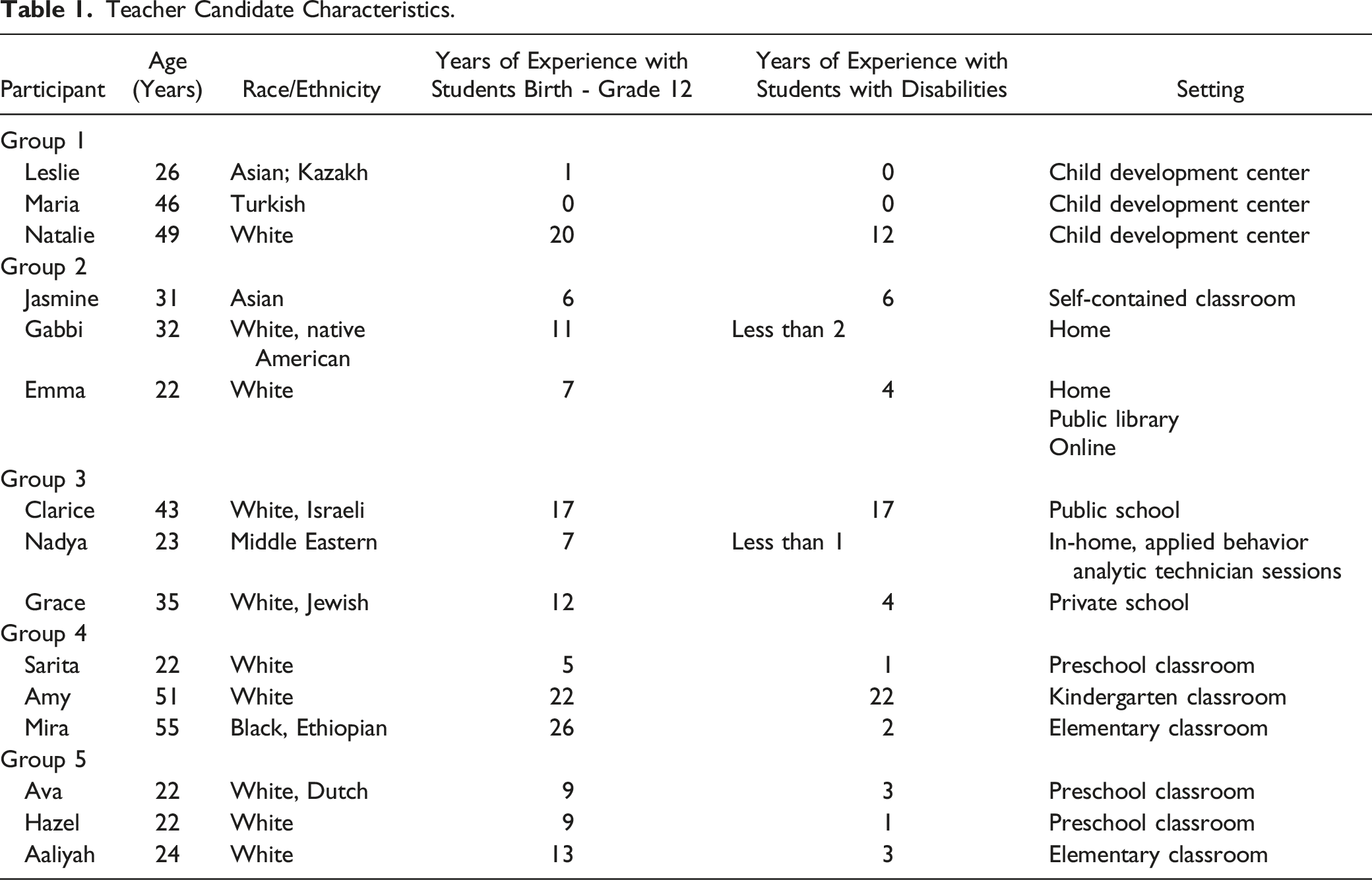

Teacher Candidate Characteristics.

Procedures

We obtained university institutional review board approval prior to beginning the study. Participation in this research study was one of the course assignment options within the early childhood capstone course. All TCs and the legal guardians of the focus children provided informed consent to participate. After providing consent, the first and third authors provided instruction on NI through a definition, examples, and class discussion. Then they placed the TCs into five groups of three to complete all research study procedures. These groupings were based on the setting in which the TCs were completing their field placement (e.g., school, home, or community).

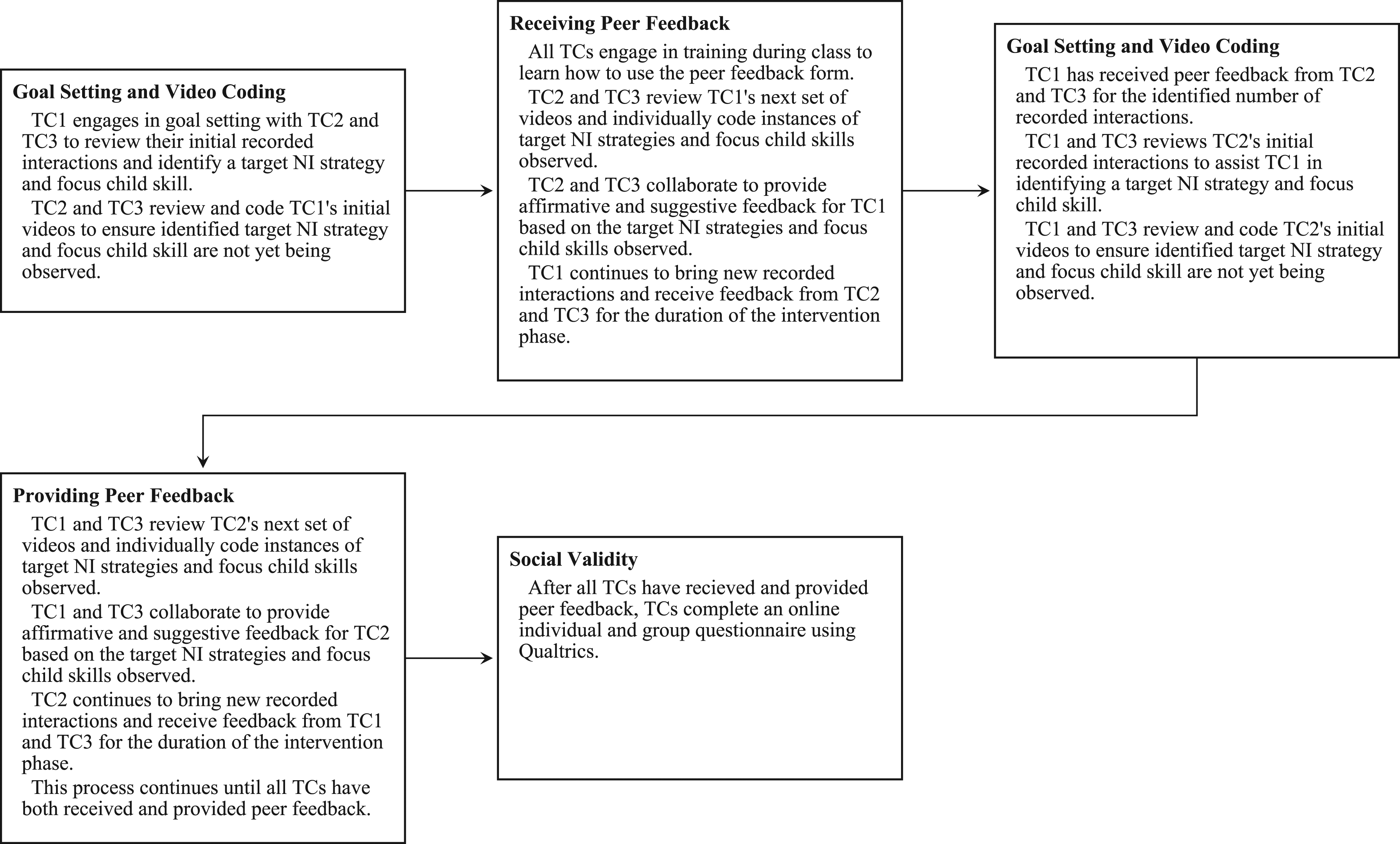

TCs were asked to upload recordings of their interactions with their identified focus child. Recorded sessions ranged in length from 4:23 minutes to 8:29 minutes during the baseline condition and 3:37 to 5:59 minutes during intervention. During these sessions, TCs and focus children engaged in a variety of adult-led and child-led activities either one on one or in a small group. After uploading these recorded interactions, TCs engaged in goal setting, video coding, and peer feedback procedures as described below. Lastly, TCs completed questionnaires to provide information regarding social validity of the TEPF peer feedback process. Figure 1 provides a visual with details of how the TCs engaged in the TEPF peer feedback process.

Engaging in the Peer Feedback Process.

Use of Technology

TCs video-recorded their interactions with their identified focus child using a password-protected personal device (e.g., iPhone) to collect both TC and child data. Each TC set up their video recording equipment to enable them to record interactions with their focus child within their learning setting. This may have included the use of a stand to prop up a phone or tablet if needed. After recording interactions, TCs uploaded these recordings to Blackboard, the secure course website.

Goal Setting and Video Coding

The first and third authors provided TCs with examples from a list of several NI strategies that aligned with specific skills (e.g., using environmental arrangement to provide opportunities to communicate wants and needs). Upon receiving examples, TCs engaged in a goal setting meeting with procedures from our previous research that involved (a) reviewing initial recorded interactions and (b) collaboratively identifying and defining a target NI strategy and focus child skill. The first and third authors were present in class while this goal setting meeting took place and supported TCs when needed in developing operational definitions for NI strategies and focus child behaviors. Because NI strategies were identified based on review of initial recorded interactions with the focus child and each focus child had unique strengths and areas of need, TCs identified different target NI strategies. The identification of NI strategies was also dependent upon whether TCs were already demonstrating use of the strategy. For example, if a TC was observed to be using choice making frequently in the initial recorded interactions, then this NI strategy would not be selected for peer feedback.

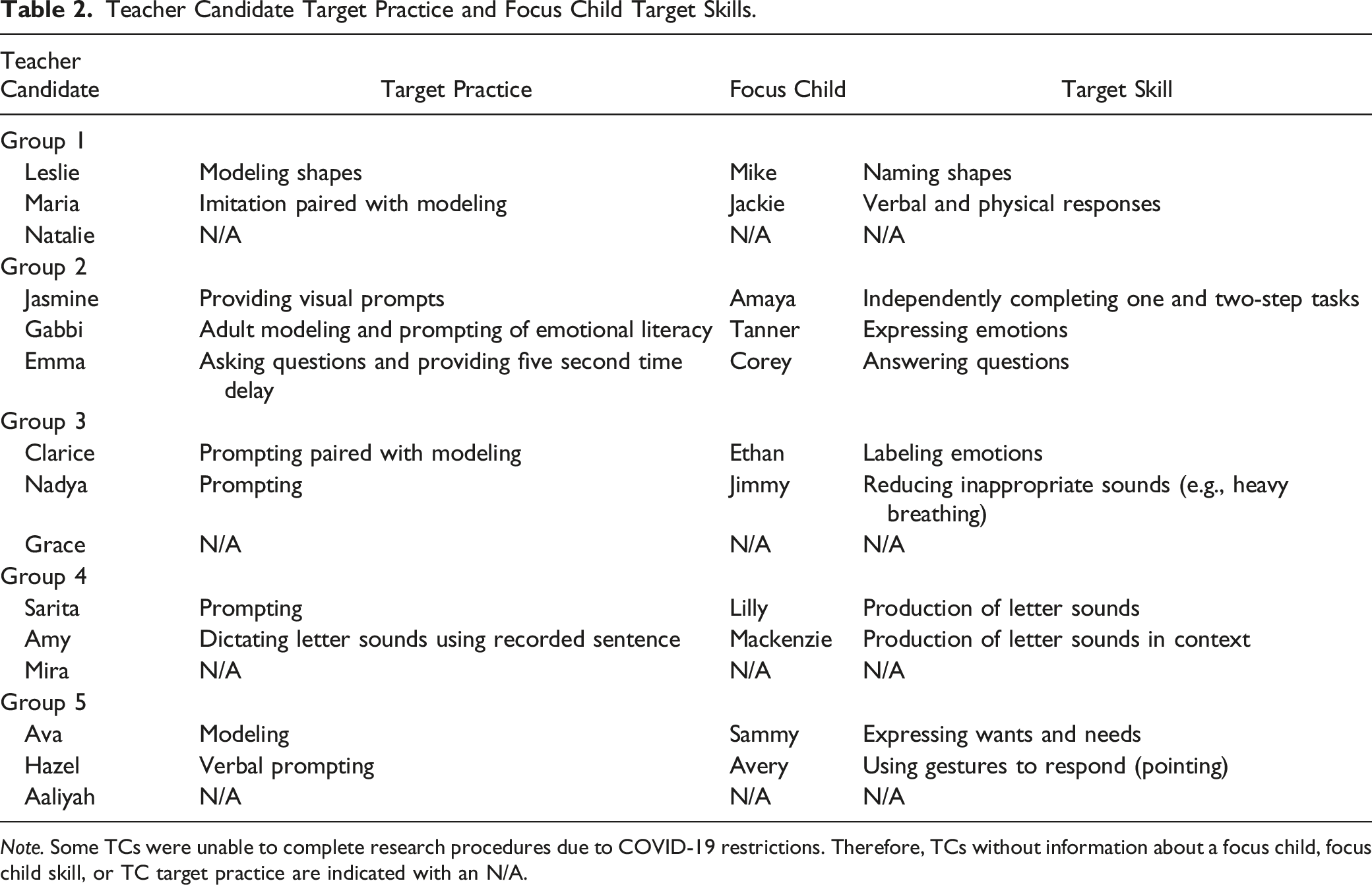

Teacher Candidate Target Practice and Focus Child Target Skills.

Note. Some TCs were unable to complete research procedures due to COVID-19 restrictions. Therefore, TCs without information about a focus child, focus child skill, or TC target practice are indicated with an N/A.

Peer Feedback

After completing the goal setting and video coding procedures, in-class training was provided regarding how to use the peer feedback form (see measures) to engage in peer feedback. The first author provided an overview of what quality feedback should include, gave examples of feedback statements, and discussed how feedback could be used to improve practice. Then, TCs provided collaborative feedback specific to the target NI strategy. This collaborative feedback procedure took place during each course session and feedback was provided within 1 week of the recorded interactions. After receiving feedback, TCs met with their focus child again according to their established field placement schedule, with most TCs meeting at least once per week with their focus child. Time between recorded interactions and the provision of feedback varied across participants, especially given the onset of the COVID-19 pandemic and resulting shifts in TC scheduling.

TCs used a feedback form developed by the first and third authors to provide a maximum of three affirmative and three suggestive feedback statements. This helped to ensure that although the time between recorded interactions and the provision of feedback may have varied across participants, each TC received at most three affirmative and three suggestive feedback statements. One feedback form was provided with agreed upon feedback from both TCs during each feedback session. Affirmative feedback included examples of TC use of the target NI strategy and how this elicited the target skill (e.g., “I noticed you used modeling when you said I don’t like that when playing blocks with the child. Students with whom you were working responded by echoing back I don’t like that.”). Suggestive feedback included how TCs could use the target NI strategy and how this would elicit the target skill (e.g., “You might consider using modeling by expressing I don’t like that to the child when they display inappropriate behaviors. This would create an opportunity for the child to practice the phrase independently.”). After the second week of in-class peer feedback, the first and third authors made amendments to the feedback form due to a need for more explicit feedback prompts upon watching the video-recorded interactions. These prompts were necessary to ensure feedback was consistently aligned with the target NI strategy and focus child skill.

Fidelity of Implementation

The first and third authors reviewed completed feedback forms for fidelity. Although the feedback form included a maximum of six total feedback statements (three affirmative and three suggestive), the number of feedback statements provided during each feedback session varied according to the TCs’ use of the target NI strategy. For example, if a TC only used their target NI strategy one time during an interaction, peers could only provide one affirmative feedback statement. After class meetings switched to an online format due to COVID-19, TCs engaged in peer feedback using an online version of the form and provided feedback verbally during online class sessions in small breakout groups via Blackboard.

Each week, TCs also had a checklist to ensure all steps in the peer feedback process were followed. These checklists were individualized for each group of TCs to align with the schedule for feedback. Checklist items included steps such as bringing the assigned number of video recordings to class, watching videos with peers, engaging in coding of TC and child behaviors, and engaging in peer feedback.

Social Validity

To determine perceptions about the feasibility and effectiveness of engaging in TEPF peer feedback, TCs completed online individual and group questionnaires using Qualtrics, an online cloud-based survey tool (see measures). TCs were placed in groups of three to complete the group questionnaire during the final class meeting of the semester. This group questionnaire allowed TCs to collaboratively discuss and respond to a questionnaire about their experiences engaging in peer feedback. The authors hoped to elicit more in-depth discussion and elaboration by placing TCs in groups of three to complete the group questionnaire rather than asking the entire class to discuss the peer feedback process together. To collect individual social validity data, TCs were provided with an invitation to complete a separate individual questionnaire at a time convenient for them at the conclusion of the semester. Both questionnaires focused on all aspects of the peer feedback process.

Measures

Video Coding Form

The first measure was the video coding form. The peers responsible for providing feedback used a video coding form to determine the frequency at which the target NI strategy identified and defined during the goal setting meeting occurred during each video-recorded interaction. The same definition for each target NI strategy was used for each video coding session. Within this document they also described what the strategy looked like and when the strategy took place. This same form and procedures were used to determine the frequency of the child target skill. Data were coded for frequency of strategy/skill use across sessions.

Peer Feedback Form

The second measure was the peer feedback form. The first and second authors coded for affirmative and suggestive feedback based on Scheeler’s framework (2004). Suggestive feedback was defined as clear and concise directions for desired behavior change. Affirmative feedback was defined as feedback that is positive and focused on specific teaching behaviors. Each feedback statement was scored using anchors from 0 to 3. For example, a score of three in suggestive feedback required specific naming of the target strategy and suggestions for using the target teaching practice. Similarly, a score of three in affirmative feedback required specific affirmations of using the target NI strategy.

Social Validity Questionnaires

Both the group and individual questionnaires consisted of open-ended items, which were developed by the first and fourth authors to determine perceived effectiveness and feasibility of all aspects of the study. The individual questionnaire consisted of six open-ended questions related to the process of engaging in peer feedback. Questions focused on all aspects of the peer feedback process including receiving and delivering feedback to peers. The group questionnaire included the same six open-ended questions designed to elicit conversations between educators regarding their experiences providing and receiving peer feedback. TCs were specifically asked to discuss challenges and successes during the peer feedback process.

Data Analysis Procedures

A within-subjects, repeated measures analysis of variance (ANOVA) across three time-points was conducted in SPSS to answer research questions one and two. Each participant and corresponding focus child were tracked for several baseline sessions (between one and seven sessions) to collect TC use and child use counts prior to introducing the intervention. Frequency counts were calculated for all TCs and child participants based on the number of observations within each video. The number of strategies used by the TC and the number of skills used by focus children were counted (see Table 2). These baseline probes were averaged to represent time-point one for TC use and child use scores. Taking the average, as opposed to using the final baseline probe for each candidate, prevented a loss of data and helped control for increased familiarity with the procedures over time being mistaken for improvements in target behavior. Time-point two represented TC use and child use scores after one dose of feedback. Time-point three was calculated by taking the average TC use and child use scores after feedback doses two and three. Mauchly’s Test of Sphericity indicated that the assumption of sphericity was violated. Therefore, the more conservative Greenhouse-Geisser correction was used for the test of within-subjects effects, which resulted in a reduction of the degrees of freedom for both the analysis of TC use and the analysis of focus child use.
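
The time-point construction and the Greenhouse-Geisser adjustment described above can be illustrated with a minimal Python sketch. The analysis itself was run in SPSS, so the function names (`build_time_points`, `rm_anova_gg`) and the example counts are hypothetical and serve only to show the arithmetic, under the assumption of a one-way repeated measures design with complete data.

```python
import numpy as np


def build_time_points(baseline_counts, dose1, dose2, dose3):
    """Collapse one participant's session counts into three time-points."""
    t1 = float(np.mean(baseline_counts))   # average of all baseline probes
    t2 = float(dose1)                      # count after the first feedback dose
    t3 = float(np.mean([dose2, dose3]))    # average after doses two and three
    return [t1, t2, t3]


def rm_anova_gg(scores):
    """One-way repeated measures ANOVA with Greenhouse-Geisser epsilon.

    scores: array-like of shape (n_participants, k_time_points).
    Returns (F, epsilon, corrected df for time, corrected df for error).
    """
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_time = n * np.sum((x.mean(axis=0) - grand) ** 2)   # between time-points
    ss_subj = k * np.sum((x.mean(axis=1) - grand) ** 2)   # between participants
    ss_error = np.sum((x - grand) ** 2) - ss_time - ss_subj
    df_time, df_error = k - 1, (k - 1) * (n - 1)
    f_stat = (ss_time / df_time) / (ss_error / df_error)

    # Greenhouse-Geisser epsilon from the double-centered covariance matrix.
    cov = np.cov(x, rowvar=False)
    center = np.eye(k) - np.ones((k, k)) / k
    cov_c = center @ cov @ center
    eps = np.trace(cov_c) ** 2 / ((k - 1) * np.sum(cov_c ** 2))
    return f_stat, eps, eps * df_time, eps * df_error


# Hypothetical counts for three participants (not study data).
example = [[0, 2, 4], [1, 2, 3], [2, 5, 5]]
f_stat, eps, df1, df2 = rm_anova_gg(example)
```

Epsilon ranges from 1/(k - 1) to 1, so multiplying both degrees of freedom by it can only shrink them. This is why corrected tests report fractional degrees of freedom.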

A within-subjects, repeated measures ANOVA across two time-points was conducted in SPSS to answer research question three. TCs’ feedback was assessed for quality so that time-point one represented their first attempt at providing feedback and time-point two represented the quality of their final provision of feedback. Total scores were provided for each feedback form based on the quality of the feedback form and the number of opportunities provided, as determined by the number of feedback statements. Total scores included both suggestive and affirmative feedback statements. The quality scores were reported as percentages to add context to the findings. Mauchly’s Test of Sphericity was not significant, indicating sphericity was assumed.
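
One plausible reading of the percentage score is the total anchor points earned divided by the maximum points available for the statements actually written. The sketch below assumes that reading; the exact formula is not given here, and `feedback_quality_percent` is a hypothetical helper name.

```python
def feedback_quality_percent(statement_scores, max_anchor=3):
    """Percent of available anchor points earned on one feedback form.

    statement_scores: one 0..max_anchor score per feedback statement
    (affirmative and suggestive statements combined).
    Assumption: the denominator is max_anchor times the number of statements.
    """
    if not statement_scores:
        raise ValueError("a feedback form needs at least one scored statement")
    if any(s < 0 or s > max_anchor for s in statement_scores):
        raise ValueError("scores must fall between 0 and max_anchor")
    earned = sum(statement_scores)
    possible = max_anchor * len(statement_scores)
    return 100.0 * earned / possible


# Hypothetical form with four statements scored 3, 2, 1, and 0.
example_percent = feedback_quality_percent([3, 2, 1, 0])
```

Scaling by the number of statements keeps forms comparable even when a TC had few opportunities to receive feedback.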

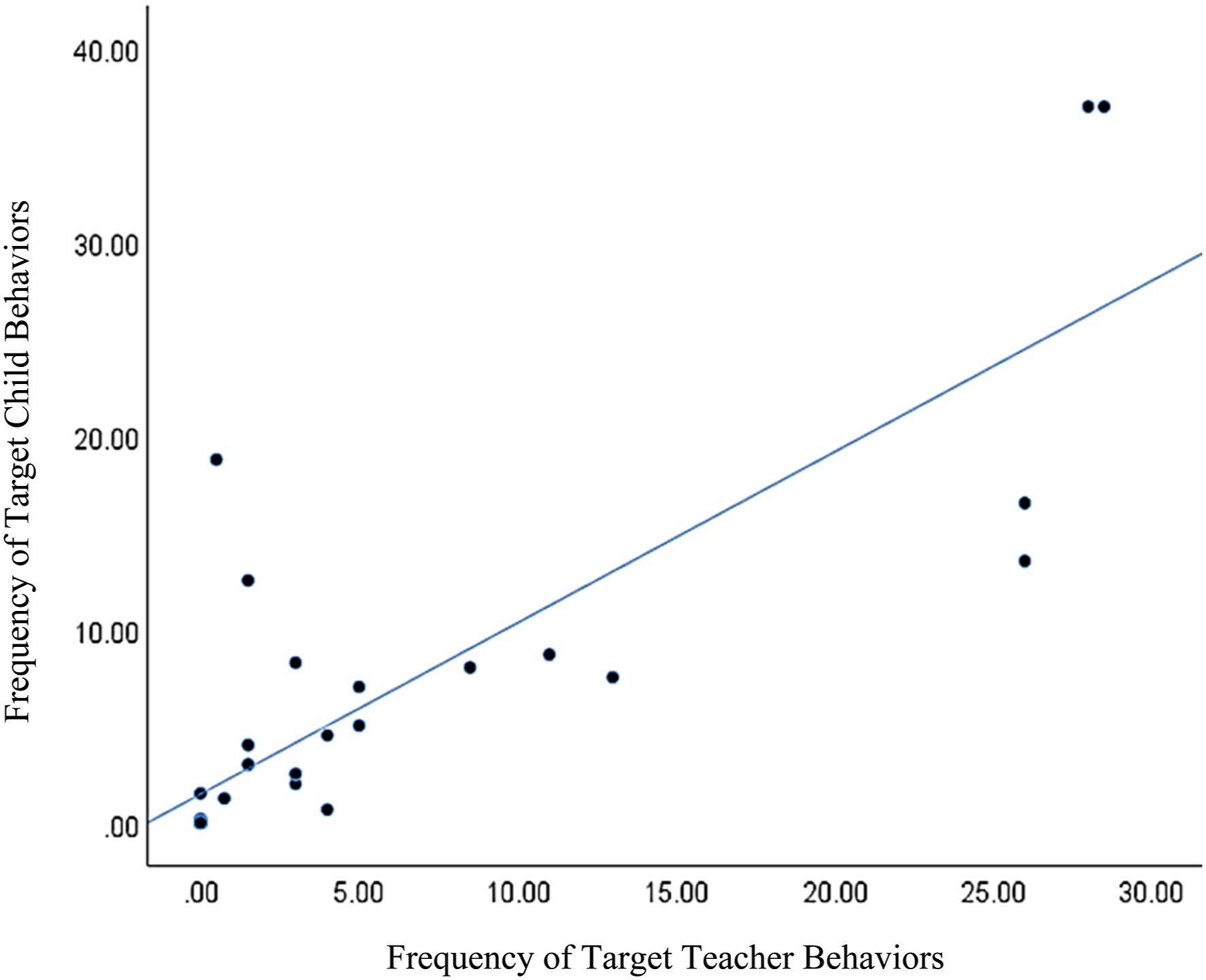

The SPSS Bivariate Correlation program was used to examine the association between TC use of target NI strategy and focus child use of target behavior to answer research question four. This approach allowed for an examination of the related pair of variables to determine both the strength and direction of the relationship across three time-points. The dataset was reviewed prior to the analysis, and there were no outliers. The associations were further investigated through a scatterplot that included all three time-points on one plot. Representing all data-points on one plot allowed for a more straightforward visual analysis of any potential linear relationship.
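
For readers without SPSS, the Pearson correlation at a single time-point can be sketched in plain Python. The function names and the paired counts below are hypothetical and are not study data. The sketch stops at the t statistic, because converting it to a p value requires a t distribution, which the standard library does not provide.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between paired TC-use and child-use counts."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired observations")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def r_to_t(r, n):
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    return r * math.sqrt((n - 2) / (1 - r ** 2))


# Hypothetical paired counts at one time-point (not study data).
tc_use = [0, 4, 9, 12, 20]
child_use = [1, 3, 8, 10, 22]
r_example = pearson_r(tc_use, child_use)
```

Running the function separately on each time-point's pairs mirrors the per-time-point coefficients reported for research question four.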

Pattern coding of social validity data was used to answer research question five. The first and second authors independently reviewed the questionnaires using starter codes that aligned with the questionnaires (Miles et al., 2020). Originally, five starter codes were identified from the group questionnaire (intentional procedures, challenges, successes, future changes, and future similarities) and five starter codes were identified from the individual questionnaire (peer feedback, peer feedback enjoyment, challenges, data collection enjoyment, and data collection challenges). Subthemes emerged and units of meaning were identified from participant responses using pattern coding (Miles et al., 2020). Subthemes from the group and individual questionnaires included (a) intentional procedures, (b) challenges and future changes, and (c) successes and future similarities. Finally, any group responses that included ‘not applicable’ were removed (n = 3). Traditional consensus coding was used by the first and second authors throughout the analysis process to determine interobserver agreement (IOA) (Brantlinger et al., 2005). The first and second authors analyzed all responses, and the third author reviewed all of the analyzed responses to determine agreement, resulting in 100% agreement on the group questionnaire responses and 95% agreement on the individual questionnaire responses.

To determine interrater agreement for anchor codes, percent agreement was calculated for 26% of the feedback forms by the third author across all participants. The first and third authors served as primary data coders. Total agreement was 98%, with a range of 66.60%–100%. Agreement was low for one document because there were a maximum of six items to be coded in any given feedback form, so any disagreement resulted in a substantially lower score.

To determine agreement for teacher practice and child behaviors, TCs used the point-by-point method (Ledford & Gast, 2018), came to agreement on any disagreements, and finalized the coding form. This process was repeated with each of the three TCs in each group during class sessions throughout the semester.
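
Point-by-point agreement is typically computed as agreements divided by agreements plus disagreements, multiplied by 100. The sketch below assumes that both coders' records are aligned item by item; the function name and the example codes are hypothetical.

```python
def point_by_point_agreement(coder_a, coder_b):
    """Percent of items on which two coders recorded the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must rate the same non-empty set of items")
    agreements = sum(1 for a, b in zip(coder_a, coder_b) if a == b)
    return 100.0 * agreements / len(coder_a)


# Hypothetical anchor scores on short feedback forms (not study data).
three_items = point_by_point_agreement([3, 2, 1], [3, 2, 2])
six_items = point_by_point_agreement([3, 2, 1, 3, 0, 2], [3, 2, 2, 3, 0, 2])
```

With so few items per form, one disagreement moves the score by a large step: a single mismatch leaves roughly 67% agreement on a three-item form and roughly 83% on a six-item form. This illustrates why one document could fall well below the overall average.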

Results

Research Question One

On average, TC strategy use scores were 0.09 (SD = 0.22) at time-point one, 10.46 (SD = 10.80) at time-point two, and 11.44 (SD = 11.17) at time-point three. Results of the repeated measures ANOVA indicated a significant difference, F(1.2, 9.3) = 8.3, p = 0.015, in TCs' use of target behavior over time. The LSD post hoc comparisons indicated a significant difference between time-points one and two (p = 0.021) and time-points one and three (p = 0.016). There was no significant difference in TC use between time-points two and three. Therefore, TCs significantly increased their use of the target NI strategy after one dose of feedback (Cohen’s d = 1.44, a large effect size), and TCs maintained these higher rates after the third dose of feedback.

Research Question Two

On average, focus child use scores were 0.44 (SD = 1.33) at time-point one, 9.44 (SD = 10.71) at time-point two, and 9.33 (SD = 10.65) at time-point three. Results of the repeated measures ANOVA indicated a significant difference, F(1.1, 8.5) = 5.83, p = 0.039, in focus children’s use of target behavior over time. The LSD post hoc comparisons indicated a significant difference between time-points one and two (p = 0.041) and time-points one and three (p = 0.041). There was no significant difference in focus child use between time-points two and three. Therefore, focus children significantly increased their use of the target behavior after the TC received one dose of feedback (Cohen’s d = 1.18, a large effect size), and focus children maintained these higher rates after the TC received the third dose of feedback.

Research Question Three

On average, TC feedback quality scores were 26.89% (SD = 22.37) at time-point one and 58.33% (SD = 27.15) at time-point two. Results of the repeated measures ANOVA indicated a significant difference, F(1, 8) = 10.59, p = 0.012, in the quality of feedback provided by participants over time (Cohen’s d = 1.26, a large effect size). TCs significantly improved their ability to provide feedback with multiple opportunities for practice.
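
The variant of Cohen’s d used is not stated. One common version divides the mean difference by the square root of the average of the two variances. The sketch below applies that version to the feedback quality means and standard deviations reported above. The choice of formula is an assumption, and `cohens_d_pooled` is a hypothetical helper name.

```python
import math


def cohens_d_pooled(mean_1, sd_1, mean_2, sd_2):
    """Cohen's d using the root mean of the two variances as the denominator.

    Assumption: both time-points have the same number of participants,
    so the pooled SD reduces to sqrt((sd_1^2 + sd_2^2) / 2).
    """
    pooled_sd = math.sqrt((sd_1 ** 2 + sd_2 ** 2) / 2)
    return (mean_2 - mean_1) / pooled_sd


# Reported feedback quality summary statistics for time-points one and two.
d_quality = cohens_d_pooled(26.89, 22.37, 58.33, 27.15)
```

Under this assumption the result rounds to 1.26, which agrees with the reported effect size. Other variants, such as d computed from difference scores, would generally give a different value.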

Research Question Four

For this sample (n = 11 at time-point one and n = 9 at time-points two and three), a significant association was found between TC use and child use counts at time-point one, r = 0.97, p < .001, time-point two, r = 0.83, p = .002, and time-point three, r = 0.73, p = .027, using the SPSS Bivariate Correlation program. TC use of NI strategies and child use of target skills were positively correlated, with a strong relationship between TC use and child use at each time-point. These significant associations were further investigated through a scatterplot (see Figure 2). Results of the scatterplot reinforce the strong linear relationship between TC use of NI strategy and focus child target behavior. Across time, as teachers increased the use of target teaching behaviors, students were more likely to increase target behaviors as well.

TC use of NI strategies and child use of target skills.

Research Question Five

Data from our group questionnaires suggest that TCs perceived peer feedback to promote intentional procedures. These data contained units of meaning such as doing things purposefully with the child and using a step-by-step process. For example, one group of TCs indicated, “We felt we were more aware of our actions and what we were saying.”

Challenges

Data from both the group and individual questionnaires suggest that TCs perceived the intervention to have challenges. These included barriers, things they would do differently in the future, complexity of procedures, time constraints, and initial difficulty with the process. One group of TCs responded, “This was more time intensive than what we would normally do when taking data on children, it would simply take too long.” Another group indicated that they experienced some initial difficulties: “At the very beginning, it was difficult to understand our project. Sometimes it was hard to arrange meetings with them and keep them going because children could get distracted.” A TC indicated, “Following the specific feedback model was challenging at times.” As the research study continued, TCs also indicated additional challenges related to COVID-19, such as, “The second half of the ARP [project] (as expected) was challenging in regard to school cancellations and COVID-19. Using video calls was a new experience for all of us to communicate with students. Also, finding time to record the amount of videos we needed while also staying on the student’s schedules was a challenge at times.”

Successes

Within group and individual questionnaires, TCs also perceived successes associated with the intervention. Units of meaning included what they would do the same, data collection, and peer feedback. For example, one group of TCs said, “I had a great experience with the child. It was the first time working with [a] very young child and it was beneficial for me. I had a chance to see a constructivist classroom environment. I could implement IVs under different circumstances. I enjoyed receiving feedback. I had really good interactions with my focus child.” TCs identified data collection as a success, “I really enjoyed working with the child and finding ways to introduce the IV. I was excited to see whether the child produces more DV.” Another TC stated, “I think it was helpful having other fresh sets of eyes to help in reviewing and analyzing my videos. It helped me catch moments I might have missed and helped me conduct better videos.” Over time, TCs also noted the benefits that they observed, “It was interesting to see the data in graph form and really see the progress being made.”

Discussion

Supports

This research supports previous literature identifying that TEPF feedback can promote changes in TC and child behavior and that the peer feedback intervention package is socially valid (Barton et al., 2016; Coogle et al., 2015). Like Coogle et al. (2015), this research suggests that there were elements of the feedback system that TCs found socially valid. Early childhood special educators previously indicated feedback enhanced their ability to implement strategies and resulted in the use of effective teaching strategies (Coogle et al., 2015). Our research supports previous findings that TCs perceive increased ability to intentionally implement strategies and focus on specific effective teaching strategies when receiving feedback. They indicated that viewing the video-recorded observations gave them the opportunity to determine how they were engaging with children. Previous research has also identified challenges associated with feedback intervention, although this was specific to real-time coaching provided by researchers to preservice and in-service early childhood special educators with a focus on communication strategies. Challenges associated with this type of feedback intervention included technology challenges, difficulty multitasking, and technology creating a distraction for children (Coogle et al., 2015). Our research supports these previous findings indicating TCs experience challenges when receiving feedback. Specifically, TCs similarly reported challenges related to technology and multitasking, such as finding time to film children during the day and desiring more time to set aside for data collection.

Extensions to the Literature

This research extends the literature by examining how TC practice, child behavior, and quality of TEPF feedback changed over time. We also extend the literature by investigating the correlation between TC practice and focus child behavior and examining the social validity within TCs' responses from individual and group questionnaires. First, TCs significantly increased their use of target practices upon receiving TEPF feedback over time. This suggests that upon receiving feedback, TCs' practice changed, and that change was maintained over time. This is an important finding as it suggests that multiple doses of feedback significantly affect TC practice. Although field placement and internship opportunities look different across early childhood licensure programs, they are frequently characterized by limited observations and feedback opportunities due to logistical challenges such as travel and time (Leko & Brownell, 2011; Leko et al., 2012); however, in the current study, peer feedback paired with technology promoted change in TC practice over time. Embedding technology (video-recorded interactions) allowed TCs to capture what was taking place in their specific setting and bring it back to a common meeting location.

Additionally, child behaviors increased over time. This suggests that although the primary variable within our intervention package was TC practice, child behaviors were significantly affected. This is the ultimate goal of supporting TC practice. This finding indicates that investing in TC practice via TEPF over time has significant implications for focus child behaviors. This intervention package provided TCs the opportunity to review their practice and receive performance-based feedback to elicit focus child behaviors.

The quality of TEPF also improved over time. This is an important finding as these participants did not have experience in providing peer feedback before taking this course and participating in this study. Despite this lack of experience, their affirmative and suggestive performance-based feedback became better aligned with the focus TC practice as they received more opportunities to provide feedback. This may have also had implications for TC practice and focus child behaviors.

We observed a strong correlation between TC practice and focus child behaviors. These findings suggest that when TCs are provided the opportunity via a peer feedback intervention package, they are able to align a target NI strategy with a focus child behavior, and as they use that strategy, children are provided opportunities to practice target skills. This is the ultimate goal of using NI with fidelity. This peer intervention package may be an initial support to consider, as our findings suggest that it was effective in improving TC practice and child behaviors, and that TC strategy use and child behavior were strongly correlated.

This study included both group and individual opportunities to examine social validity, with consistent themes emerging regarding intentional procedures, challenges, and successes. Results extend the work of Coogle et al. (2015) by examining the social validity of TEPF provided by TCs rather than external coaches. TCs similarly reported that the TEPF intervention was socially valid and promoted increased use of effective teaching strategies. The challenges associated with completing the feedback intervention extend previous findings by indicating that, like in-service and pre-service early childhood special educators, TCs also reported difficulty with technology and with finding time to complete procedures within the typical educational day.

Limitations

The findings from this study support the use of TEPF peer feedback to support teacher candidate practice and child outcomes. However, methodological limitations need to be recognized. First, this within-subjects analysis of nine TCs and their focus children falls short of more rigorous research designs because it lacks a comparison group and has a small sample size. As such, it is difficult to judge how accurate the very large effect sizes are, given the increased variability (large standard deviations) within the sample. Second, due to COVID-19, not all TCs were able to complete the study procedures, and there was less than ideal control over research settings. To analyze the data that were collected, average scores had to be used because some participants completed fewer sessions at one or more time-points. Similarly, we did not control for variance in settings given the changes taking place in instructional delivery during the study timeframe. Contextual factors such as setting and teacher-child relationships should be considered in future iterations of work exploring the use of TEPF peer feedback to support teacher candidate practice and child outcomes. Finally, each TC had a different NI strategy, which could have impacted TC behavior as the strategies may have varied in complexity. Comparing the complexity of the different NI strategies selected was not within the scope of the current study. Researchers should consider this in future studies to determine if differences in complexity between NI strategies impact effect sizes.

Implications for Research and Practice

Despite the limitations, these findings have implications for future research and practice. Researchers and teacher educators should consider how TC practice, child behavior, and TEPF quality improved and were maintained over time, and how these variables were correlated with one another. Additionally, TCs found this intervention package to be socially valid. Teacher educators might consider incorporating TEPF peer feedback as a mechanism to enhance both TC practice and child behaviors when TCs are engaged in field placements and internships. This type of feedback would likely not be feasible without the use of technology, and therefore technology will be an important element to embed. Providing field experiences paired with TEPF peer feedback early in a TC’s program may support them in developing their practice over time, using a practice-based teacher preparation model. This would be particularly valuable in teacher education programs that intentionally and cohesively provide TCs opportunities to develop their practice over time across coursework. When providing such opportunities, practice and explicit instruction on the feedback process may be beneficial and may result in more immediate and sustained change across variables.

Future researchers might consider prolonged time in the field and multiple doses to learn more about how specific doses of feedback affect TC practice, child behaviors, and quality of feedback over time. Learning more about what works most effectively for whom under what conditions is also an area which researchers might consider. For example, is there a quantity, immediacy, or format of feedback that is most effective? Across performance-based feedback research, we know that although the feedback is consistently effective, TCs have displayed variable outcomes (e.g., Coogle et al., 2015), and therefore, researchers might consider a tiered system of support to promote fidelity of practice. It may also be beneficial to learn more about TC characteristics such as their years of experience and whether there are differences between undergraduate and graduate TCs. Finally, this type of intervention may also have a positive effect on other educators (e.g., practitioners, teachers) in professional development settings, which might be an avenue for researchers to consider studying.

Conclusion

The quality of TEPF peer feedback, TC practice, child behaviors, and perceived social validity are interrelated topics that the field of early childhood has more to learn about. This study provides preliminary data suggesting that the quality of TEPF peer feedback changes over time and that feedback influences TC practice, child behaviors, and how TCs perceive a TEPF peer feedback intervention package. These data will be important for practitioners and researchers to consider as they partner with local communities in providing field placement and internship opportunities and as they develop and implement feedback to support the fidelity of practice.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.