Abstract

This paper introduces the design principle of legibility as a means to examine the epistemic and ethical conditions of sensing technologies. Emerging sensing technologies create new possibilities regarding what to measure, as well as how to analyze, interpret, and communicate said measurements. In doing so, they create ethical challenges for designers to navigate, specifically concerning how the interpretation and communication of complex data affect moral values such as (user) autonomy. Contemporary sensing technologies require layers of mediation and exposition to render what they sense intelligible and constructive to the end user, which is a value-laden design act. Legibility is positioned as both an evaluative lens and a design criterion, making it complementary to existing frameworks such as value sensitive design. To concretize the notion of legibility, and to understand how it could be utilized in both evaluative and anticipatory contexts, we analyze the case study of a vest embedded with sensors, together with an accompanying app, for patients with chronic obstructive pulmonary disease.


Introduction: The Canary in the Coal Mine

How do we measure and detect aspects of the world that we, as humans, are unable to perceive? And, equally important, how do we make this information understandable and usable? To appreciate the significance of such questions, we can draw on the now outmoded practice of bringing canaries into mines (Eschner 2016). These songbirds are more sensitive than humans to odorless and colorless noxious gases, such as carbon monoxide. In the mines, a canary's illness or death would alert the miners that they were in danger, giving them the opportunity to evacuate before being harmed. In this circumstance, the canary can be understood as a “sensing technology” that offered the workers a measurement of something crucial but otherwise undetectable. The death of the bird, and the silence that followed, effectively communicated a specific piece of knowledge: that there were toxic substances in the air and that a certain threshold had likely been passed. Animal welfare questions aside, canaries proved an incredibly useful (and readily understandable) means of detecting otherwise imperceptible substances in the environment. So effective was this practice that the idiom “canary in the coal mine” has become evocative of the very notion of an early warning system.

Today, a host of new measuring and detecting techniques are under development, largely targeted at indicators of (or dangers to) health and well-being. Technologies that sense, measure, and quantify environmental factors or bodily functions are not only becoming more sophisticated but are also entering the public sphere. This is, in essence, the development of new canaries for different coal mines. These new domains of application also extend what such canaries can do: they are no longer relatively simple binary alarms but can provide nuanced data at scales ranging from the individual to the epidemiological. Devices exist, or are in development, that range from analyzing particulates in a person’s breath to detect cancerous growths or quantifying environmental pollutants (e.g., Cipriano and Capelli 2019), to identifying patterns of illegal drug use by analyzing wastewater (e.g., Prichard et al. 2014). By making that which is imperceptible into something we can perceive, these sensing technologies 1 surface previously undetectable information. Instead of relying on our senses (e.g., “does it smell or look safe/healthy?”), or even on centralized data sources, we are increasingly able to utilize individualized and precise tools. In doing so, these technologies can actively mediate our perceptions and experiences, giving us a new lens through which to understand, and in turn evaluate, the world (see Verbeek 2011). Moreover, new innovations continue to increase the scope, and advance the quality, of what can be sensed, measured, and detected. This creates new possibilities and challenges regarding what to measure, as well as how to analyze, interpret, and communicate said measurements.

These novel sensing technologies can be utilized for justifiable and desirable ends but also raise many ethical and political questions regarding their responsible development and use. 2 At stake are key moral values such as privacy, trust, autonomy, identity, and dignity, as well as broader questions concerning power relations between individuals and governments or private corporations (e.g., Biesiot, Jacquemard, and van Est 2018; Biesiot et al. 2019). A key moment where such questions materialize, and which can have significant downstream effects, is the design of the user or societal interface. More specifically, how these devices present knowledge about the world—how they interpret and communicate novel information—can play a constructive or destructive role in operationalizing moral values. Appreciating this moralizing capacity of sensing technologies adds a layer of responsibility to design choices. For this reason, this paper focuses explicitly on the complex and critical task of extracting information from the environment (as well as users and other [indirect] stakeholders), and translating and communicating that information in a meaningful and usable way. This is a critical design challenge for the interface of these devices and services, with profound moral and epistemic ramifications.

To address this challenge, legibility is proposed as a design principle for developers of sensing technologies, as part of a value sensitive, anticipatory, and reflexive design process (e.g., van den Hoven 2017). Contemporary sensing technologies are complex devices that require layers of mediation and exposition to render the data they sense intelligible and constructive to the end user. Without this mediation, these technologies and the insights they offer are illegible to the laypeople who encounter them. The concept of legibility offers a means to navigate this process and to surface and confront the value-laden questions and opportunities that may arise. Importantly, legibility is not a rigid prescriptive framework but a heuristic design principle. Exactly what is being made legible depends on the device or service in question, as does the question of for whom it is being made legible. Legibility is therefore not a distinct form of (or framework for) value sensitive design but rather a design principle that assists with the operationalization of values at a key stage in the design process. It functions as both an evaluative lens and a design criterion to be utilized within existing methodologies.

The paper will first elaborate on the functionality of sensing technologies, identifying key moments for intervention (Sensing Technologies and Value Sensitive Design section). Here, the moral significance of sensing technologies will also be explored in more detail, to situate this study within discourse on Responsible Research and Innovation, and more specifically within value sensitive design literature. Legibility as a Design Principle section then introduces and defines legibility and outlines a framework for applying it to sensing technologies. Legibility in Practice: COPD Vests Case Study section illustrates the usefulness of legibility via an in-depth look at a specific sensing technology: the development of a wearable device and associated app for patients with chronic obstructive pulmonary disease (COPD). This paper concludes with a brief reflection on practical and theoretical next steps for the development of legibility as a design principle, as well as its broader applicability.

Sensing Technologies and Value Sensitive Design

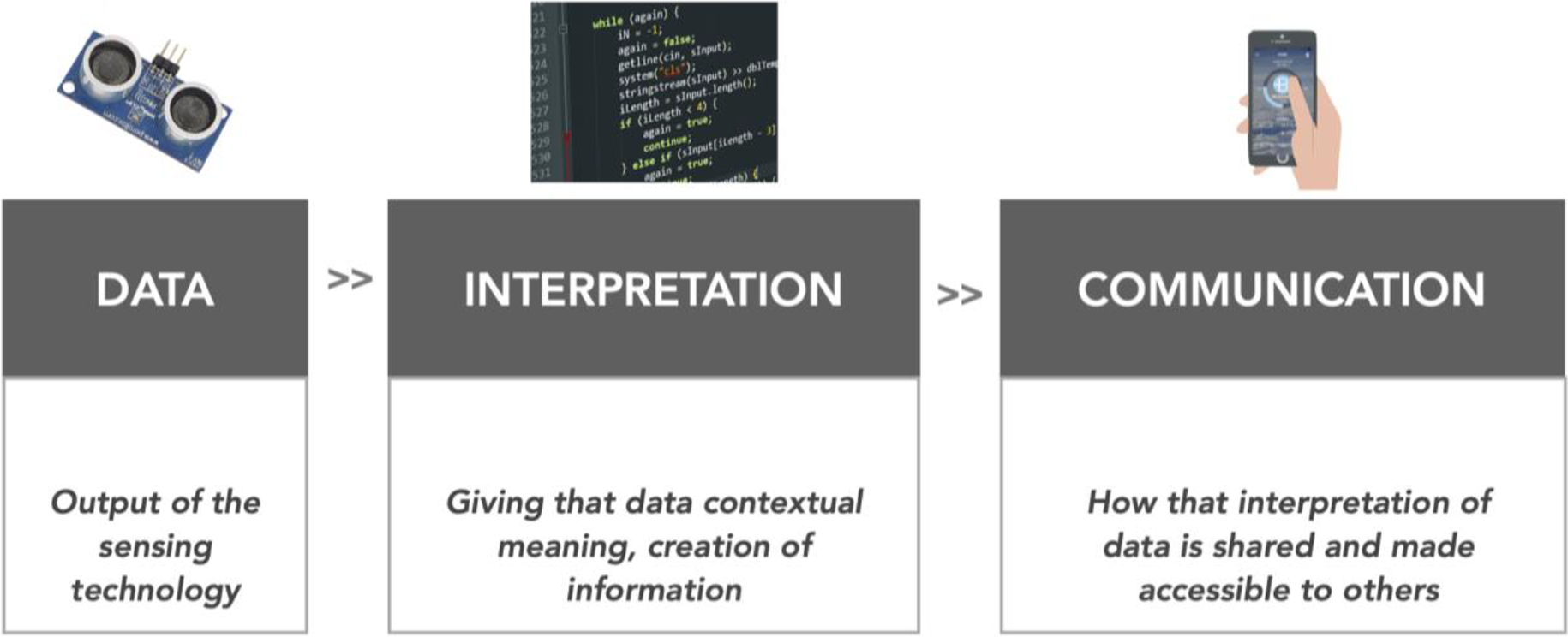

Sensing technologies that operate outside a lab context can be broadly characterized as having three essential and interrelated technical activities (Figure 1). First, a sensor (or sensors) gathers data about whatever it is measuring or detecting, in the form of raw information: for example, the parts per million of particles in an air sample, body temperature, or the concentration of a particular antibiotic in blood. Second, these data are processed and interpreted by another layer of technology, such as code. At this stage, the meaning of the raw data that the sensor collected is evaluated, and there is an opportunity for that meaning to be constructed by the experts who developed or designed the technology. This is where thresholds are defined and where different data points may be correlated with one another to derive meaning. The third stage concerns the communication of this information to its audience. This is likely the first opportunity for nonexpert users to interact with the collected data. Here, again, designers and developers have opportunities for mediation, in determining what gets communicated, to whom, how, and why.
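The three activities can be made concrete with a minimal Python sketch. It is an illustration under stated assumptions, not a description of any particular device: the names `RawReading`, `Interpretation`, `interpret`, and `communicate`, the PM2.5 example, and the 25.0 threshold are all hypothetical. What it shows is that the threshold (the interpretation stage) and the choice of message for each audience (the communication stage) are decisions written into code by designers, not properties of the sensor itself.

```python
from dataclasses import dataclass


# Stage 1: sensing. A raw reading is a number with a unit; it carries no meaning yet.
@dataclass(frozen=True)
class RawReading:
    quantity: str  # e.g. "PM2.5" (hypothetical example quantity)
    value: float
    unit: str      # e.g. "ug/m3"


# Stage 2: interpretation. The threshold is a designer's choice, not a
# property of the sensor.
@dataclass(frozen=True)
class Interpretation:
    reading: RawReading
    threshold: float
    exceeds: bool


def interpret(reading: RawReading, threshold: float) -> Interpretation:
    """Attach meaning to a raw reading by comparing it with a chosen threshold."""
    return Interpretation(reading, threshold, reading.value > threshold)


# Stage 3: communication. The same interpretation is rendered differently
# depending on the audience the designers have in mind.
def communicate(result: Interpretation, audience: str) -> str:
    r = result.reading
    if audience == "expert":
        return (f"{r.quantity}={r.value} {r.unit} "
                f"(threshold {result.threshold} {r.unit}, exceeded={result.exceeds})")
    if audience == "lay":
        return "Air quality is poor here" if result.exceeds else "Air quality is fine here"
    raise ValueError(f"unknown audience: {audience}")


reading = RawReading("PM2.5", 30.0, "ug/m3")
result = interpret(reading, threshold=25.0)  # hypothetical threshold
print(communicate(result, "lay"))     # Air quality is poor here
print(communicate(result, "expert"))  # PM2.5=30.0 ug/m3 (threshold 25.0 ug/m3, exceeded=True)
```

Passing a different threshold, for example one set by an industry body rather than an international guideline, changes what the lay user is told even though the sensor reading is identical; this is precisely where the designers' interpretive choices enter the system.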

Sensing technologies raise important ontological and epistemic questions, specifically around the translation of data into usable information and its communication. But, considered as a practical issue, there are also important questions regarding how to present this information to users. The act and intention of communicating information is a crucial facet of the moral significance of sensing technologies, and one that places responsibility on the developers of these devices and services. Efficient and accurate environmental monitoring and individualized healthcare services offer many potential benefits. Such innovations can improve people’s lives by monitoring their well-being, encouraging healthy lifestyles, allowing for early detection of diseases, enabling a better understanding of polluted environments, providing early warning systems in workplace settings, creating noninvasive detection services, and so on. But we can also readily foresee the morally dubious side of such devices and services, something already being debated in both academic and popular literature (e.g., Biesiot et al. 2019). The collection of sensitive personal data can lead to violations of (informational) privacy (e.g., Christin 2016; van Zoonen 2016). This information can be used to exploit unjust power dynamics (Allen 2018). Biases regarding the standing of individuals and the validity of knowledge production can be reinforced rather than challenged (see Kidd and Carel 2017; McKinnon 2016). And seemingly well-intentioned “nudges” aimed at fostering healthy lifestyles can lead to political contestation and potentially create instances of unjust manipulation (see Selinger and Whyte 2011; Wilkinson 2013).

This is not an exhaustive list of possible benefits or harms; rather, it is meant to highlight the dual nature of sensing technologies and the need for a reflexive and anticipatory approach to how the information they produce is communicated and ultimately utilized. Extracting usable information, interpreting it in a way that is meaningful and promotes engagement, and doing so in a way that is morally acceptable and socially accepted (see van de Poel 2016) is a complex task with uncertain grounding, and thus a critical design challenge. A key theoretical question (with practical weight) is therefore whether, and how, we can positively foster values through the design of sensing technologies, and in particular through the translation and communication of the information they produce. This orientation has been referred to as the “design turn” in applied ethics: a constructive approach that strives to realize moral values through the design of artifacts, infrastructures, systems, or institutions (van den Hoven 2017). Toward this goal, the notion of value sensitive design, or design for values, has been developed in recent years; originally focused on information technologies and human–computer interaction, it is now more generally utilized within the ethics of technology (e.g., Friedman and Hendry 2019; Friedman and Kahn 2002; Friedman, Kahn, and Borning 2013; van den Hoven 2007; van den Hoven, Vermaas, and van de Poel 2015). This approach is centered on the acknowledgment that technologies, and our interactions with or through them, are not neutral but inherently value-laden. It therefore requires an understanding of the moral values at stake early in the development process and asserts that we should proactively strive to incorporate them as “supra-functional” design requirements (van den Hoven 2017). Doing so will, in theory, align future innovations with societal goals and values, which is what is referred to in European contexts as Responsible Research and Innovation (e.g., Guston et al. 2014; Stilgoe, Owen, and Macnaghten 2013; van den Hoven 2013; von Schomberg 2013).

Toward the identification and operationalization of values in design processes, procedural pathways exist for translating values into design requirements, such as the tripartite methodology of conceptual, empirical, and technical analyses (Friedman and Hendry 2019), or the value hierarchy approach of translating values into norms and then design specifications (van de Poel 2013). However, what is currently missing is a design principle to diagnose how specific values surface during the stages of interpretation and communication outlined above (see Figure 1). A key (moral) condition is the responsibility for interpretation and communication entailed by the act of translating data into usable information. This is a moment where values are made concrete, or operationalized. A guiding design principle can assist with this process by helping to concretize these value-laden questions and to navigate the moral-epistemic terrain of the design process.

Mapping the three technical activities of sensing technologies.

Legibility as a Design Principle

Broadly speaking, legibility is concerned with the conscientious processes of mediation and exposition necessary to render technologies intelligible and constructive to the end user. This is an inherently value-laden act that should be carefully navigated. A useful way to position legibility is in relation to existing concepts with similar aims: usability, from the fields of industrial design and human–computer interaction, and transparency, from information (and business) ethics.

Usability is concerned with how technologies are made accessible to the broad spectrum of people who will ultimately engage with them, typically referred to as “users” (Norman 2013; Nielsen 1994). As technologies have become increasingly complex, usability conventions have developed that favor designs that minimize barriers for the nonexpert user, promote ease of use and efficiency, and increase user satisfaction. Generally speaking, this has been achieved with interfaces and encasements that mask or obfuscate the complexity of the technology to make it more palatable for the general user. These are often engineered to cater to preexisting conceptual models or metaphors that users likely hold, to help bridge the knowledge gap between the user and the computer: for example, the icon of a trash can on a desktop helps users understand how they can delete content (Norman 2013). Yet, in pursuing this technique, the user becomes further removed from what the model actually represents. The complexity behind technologies, and the curatorial choices behind how they deliver content, are made opaque to the user. With such an impetus to make these technologies and the way they work invisible, the general user is not in a position to evaluate whether the behavior of the technology or its content is appropriate, and so operates with limited agency and autonomy. For example, in the case of a Google search, the user’s query is combined with other data, such as the user’s location, search history, and demographic profile. With these various data points, the search engine returns the results that it assumes are most suitable for that individual user. That user generally has very limited insight into the specifics of the tailoring the search engine performs; however, Google’s results are conveniently specific to the user’s (assumed) needs.

Another concept that could guide such processes is transparency. It most often refers to the actions of individuals or organizations and is closely associated with accountability as a means to enforce responsibility (Menéndez-Viso 2009). It can also be built into a system to foster trust and reliability; an example is online wikis, where the edit history of a page is available to anyone (Hulstijn and Burgemeestre 2015). Transparency is thus often sought in order to account for the legal or moral responsibility of an actor’s procedures and choices. There is clear value in transparency within many digital and information-related contexts, as a means to enable oversight of, for example, governments and public funds, and of companies with substantial access to personal data. However, transparency can become unwieldy with respect to the design of technologies. Taken literally, clear encasements do not help users understand what is going on inside their technologies. Similarly, the terms of service agreements that users must accept when downloading new software or an update often offer little actual insight. These agreements are fully transparent, explicitly detailing the various terms and liabilities associated with the technology or service. Yet they are often written in dense legalese that is impenetrable to the average user. Here, transparency is pursued at the expense of accessibility or usability.

If we put the concepts of usability and transparency in relation to one another, they can be envisioned as opposite ends of a spectrum. On one end are devices and services designed for efficient and intuitive use, at the expense of “black boxing” key decisions and processes (Robbins 2018). On the other end is full openness of data and processes, at the expense of user experience and intelligibility. Legibility is a design principle that captures the key underlying goals of both usability and transparency, addressing the shortcomings of black boxing while still appreciating the responsibility for interpretation.

Legibility was introduced and developed as a constructive design principle to acknowledge the moral significance of the layers of mediation required to make complex technologies accessible to a lay user (Robbins 2018). More crucially, as a design principle, legibility seeks ways to reveal and contextualize the significance of those layers of mediation. In this paper, we reframe legibility as a means to surface and prescribe the values that become operationalized in a design process. Legibility embraces the principle that some level of mediation and curation is necessary in interpreting and communicating the data that technologies produce. But it also acknowledges that the moral significance of this act should be handled deliberately. Thus, by surfacing and contextualizing these layers of mediation, legibility seeks to offer avenues for users to maintain their agency and autonomy while still utilizing (novel) technologies (Friedman and Nissenbaum 1997).

Designing for Legibility of Sensing Technologies

The value-laden questions surrounding sensing technologies revolve around a central concern: these technologies measure and interpret that which is beyond our perception, which challenges our ability to make the processes of mediation and exposition behind them intelligible. Recalling the moral significance of sensing technologies laid out above (navigating the interpretation and communication of information, and making it meaningful to all stakeholders), transparency is an insufficient design goal. The very nature of these technologies requires some interpretation and translation of (often complex) data. Some amount of curation is unavoidable and necessary. “Full transparency” for end users is an unrealistic target and likely would not lead to better comprehension, use, or trust of the device or service. Likewise, operating purely under the guideline of “full usability” is unlikely to lead to truly value sensitive designs. We propose that legibility be used as a design principle to deconstruct the interpretation and communication stages of sensing technologies laid out above, providing a pathway to make the moral values in these devices and services apparent and, ultimately, navigable in conceptualization, development, and design processes. When legibility is used to surface moral values in sensing technologies, two important questions arise: legibility of what, and for whom?

First, legibility of what? What data are being translated into usable information, and to what purpose? The sensor’s data are made legible by the algorithms that designers and developers create to render the raw sensor data into information that experts can work with. But there is another layer to this process: designers or developers may exert editorial discretion to give the data context and purpose. This can, for example, take the form of selecting the range of raw values that determines what is and is not “healthy behavior.” In these processes, designers and developers make editorial and curatorial decisions that can have a significant moral impact. Should a threshold selected by a particular industry supersede an international standard, for example?

These questions point to the layers of mediation required to make such services available to a general audience of users, where decisions are made that shape not only the functionality of the technology but also its moral impact. This leads to the second question: legibility for whom? Information is presumably interpreted and communicated toward some end goal, with particular users in mind. Who are the expected or possible user groups or stakeholders? What distinguishes various end users from one another, what knowledge gaps may exist between these different parties, and what different vested interests might they have in data from these technologies? Further, who are the indirect stakeholders, such as bystanders, who may be affected by the method of data collection and the means of communication? With these questions in mind, the central issue becomes: how is information from these technologies communicated to direct and indirect stakeholders?

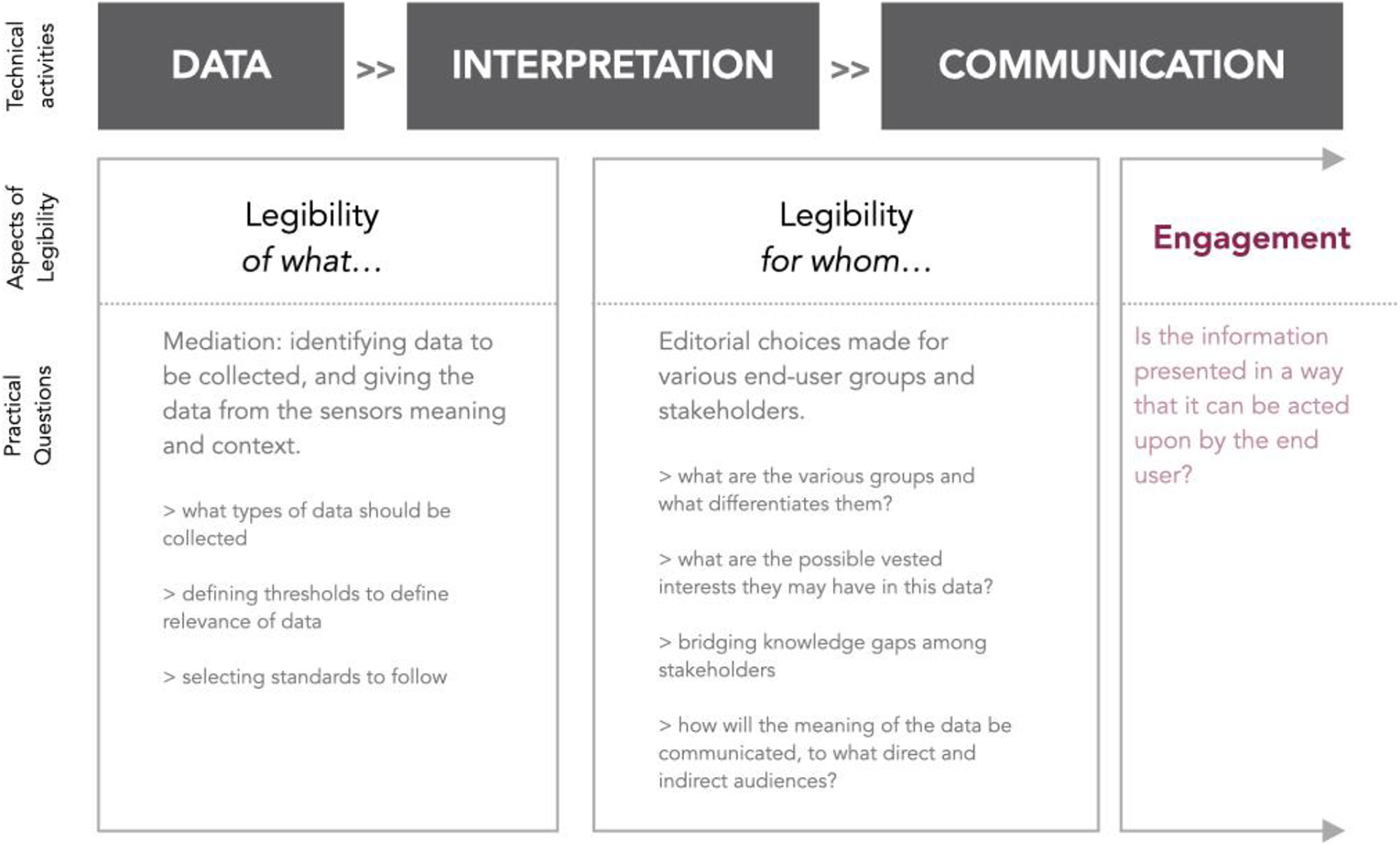

The ultimate objective of legibility is to create opportunities for engagement. Engagement arises via the communication of (contextualized) data to its intended audience. A sensing technology should not only make intelligible what is being measured and communicate it in a way that its relevant audience(s) can understand, but should also allow for meaningful interaction. This can become particularly fraught if the end user does not share the expertise of the people designing the interface, or of the scientific domain pertinent to the measurement at hand. The designers and developers have to determine how, when, and in what form meaningful information is conveyed. In doing so, this layer of mediation shapes how users can engage with the device or service and the content it produces. Each aspect of legibility maps onto a distinct technical activity of sensing technologies, pointing to practical questions for the design and development phases (Figure 2).

A visualization of the legibility process as it maps onto the technical activities of sensing technologies, pointing to the practical, yet morally laden, questions pertinent to the design and development processes.

A Prudential Account of Engagement

Importantly, legibility is not conceptualized as a rigid prescriptive framework. Rather, it is a principle to guide design processes related to information communication, as well as the evaluation of existing devices and systems. There is space for interpretation and negotiation depending on the context to which it applies, which will cause different needs to emerge or be prioritized and different concerns to take precedence. Given the contextual, nonprescriptive nature of legibility, it may be difficult to delineate what a positive type of engagement entails. Instead, as a general framework for analysis, we can ask: when does the communication stage of sensing technologies fail to live up to the standard of engagement? 3 With the definition above, it is possible to identify instances where legibility fails to meet moral and epistemic expectations or requirements; such failures can lead to moral overload (van den Hoven, Lokhorst, and van de Poel 2012) or negatively affect moral values.

More concretely, we can ask whether sensing technologies fail to present meaningful and engage-able information. However, it is important to appreciate that any decision regarding the communication of information will contribute to what is often referred to as the choice architecture of systems or services. In other words, the design of the interface will have some effect on how people make decisions. This is a burden and responsibility shared by the developers of the technology and the designers of the interfaces. A popular (and much debated) idea in recent scholarship for navigating this responsibility is nudging, defined as “…any aspect of the choice architecture that alters people’s behavior in a predictable way without forbidding any options or significantly changing their economic incentives” (Thaler and Sunstein 2009, 6). By setting up a choice architecture that aims to be both liberty-preserving and paternalistic, nudges are meant to leave people better off “by default,” without hindering their ability to choose an alternative path.

With respect to epistemic autonomy, legibility must walk an admittedly fine line between communicating necessary knowledge and unjust paternalism. At the core of legibility are understanding and meaningfulness, not full transparency. It thus leaves open the possibility that, in some conditions, it is reasonable to intervene in the acquisition and interpretation of knowledge, so long as doing so serves the purpose of promoting positive engagement. There are therefore arguably instances where some level of paternalism is justified. 4 But clear instances of manipulation, coercion, misinformation, or misrepresentation of critical data do constitute a failure and must be avoided. In sum, paternalism is not a priori a failure of legibility, which leaves a considerable gray area for future research. 5

Alongside questions of infringements on autonomy, the fairness of how information is made engage-able, and for whom, is a key consideration. One way to understand failures in fairness is to identify when epistemic injustices have occurred (or may occur). Drawing on the work of Miranda Fricker (2007), McKinnon (2016) explains that when we attribute too much or too little credibility to a speaker (or “knower”), they suffer an injustice. Epistemic injustice can be further divided into testimonial and hermeneutical injustice. Testimonial injustice concerns individual cases and knowers and occurs when the credibility of a knower is dismissed or disregarded due to an identity prejudice. Hermeneutical injustice is systemic, where “…a certain socially disadvantaged group is blocked—whether intentionally or unintentionally—from access to knowledge or access to communicating knowledge (to those in more socially privileged locations) due to a gap in hermeneutical resources, especially when these resources would help people understand the very existence and nature of the marginalization” (McKinnon 2016, 441). Making new forms of knowledge readily available, and legible, can be a tool for social good. It can be utilized to confront biases and to give greater recognition to the knowledge and credibility of disenfranchised segments of the population. But it can also exacerbate these same problems, and we must be aware of this potential failure.

As a first step toward seeing where and how positive engagement may fail, we must therefore ask: Is access to—and control over—information unjustifiably hindered through the interpretation of data? Is freedom of action hindered or unjustly affected by the communication of information?

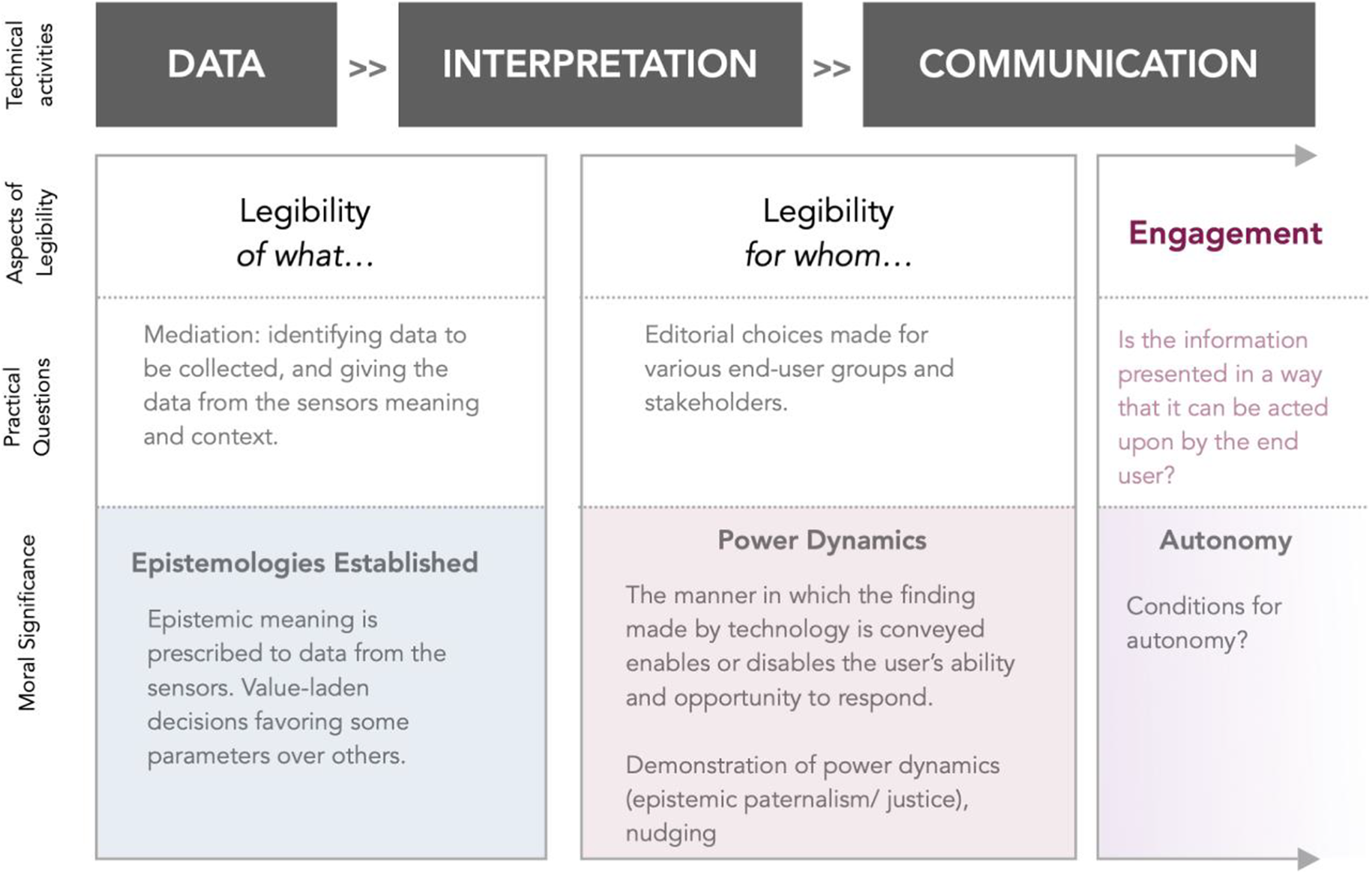

To open up these questions, we can explore sensing technologies via issues such as editorial discretion (of the designers), choice of content, and intended audience (Figure 3).

A representation of the key moral touchstones in the legibility process.

Legibility in Practice: COPD Vests Case Study

To concretize the notion of legibility, and further refine it as both an evaluative and anticipatory tool, we will explore a case study in detail: a vest embedded with sensors and an accompanying mobile app aimed at promoting the well-being of patients with COPD. COPD is a condition characterized by an irreversible airway obstruction, which is generally progressive and is caused by an abnormal inflammatory response of the lungs to the inhalation of harmful particles or gases. It is exacerbated by exposure to environmental factors and physical strain. As COPD is a progressive lung disease, it is critical that lung health and exposure to risks be carefully monitored and managed. Often, these particular risks or triggers are outside individual perception until, of course, a dangerous threshold has been passed and symptoms are experienced. It is also necessary to monitor activities, especially the effect of exercise and body posture on the development of the disease. Even patients’ perception of their own health has a major influence on the progression of their disease. Underestimating, ignoring, or not responding promptly to acute symptoms can lead to exacerbations, hospitalization, and accelerated progression of the disease. In contrast, overly cautious behavior can lead to an inactive lifestyle and a deterioration of overall fitness. In addition to these physical problems, patients with COPD are exceptionally susceptible to anxiety (Willgoss et al. 2011). This presents an opportunity for sensing technologies to assist in the individualized monitoring and management of the disease. Through conscientious design, including the communication of symptoms and triggers, there is a possibility of contributing to the health and well-being of those with COPD. More idealistically, new innovations can strive to contribute to the autonomy and dignity of people living with COPD.

While different types of sensing technologies bring unique challenges, and thus cause different values to take priority, the COPD vest is a particularly rich case. The technology has been developed and is ready to be deployed, while the user interface is still being designed. Functionally, it includes both a wearable device and an app that provides feedback to patients and caregivers (e.g., physiotherapists, doctors); it measures or monitors individual biometrics, local environments, and larger weather trends; and its information will be utilized by both the wearers of the vest and their healthcare workers. Further, as the development of the vest and app is at an intermediary stage, legibility can be utilized to reflect on the development process to date, as well as to look toward potential downstream issues in the use phase. It thus serves as a relevant case study that encapsulates many variables affecting the legibility of sensing technologies, helping to define and refine the concept.

Concept Development and Framing of Legibility

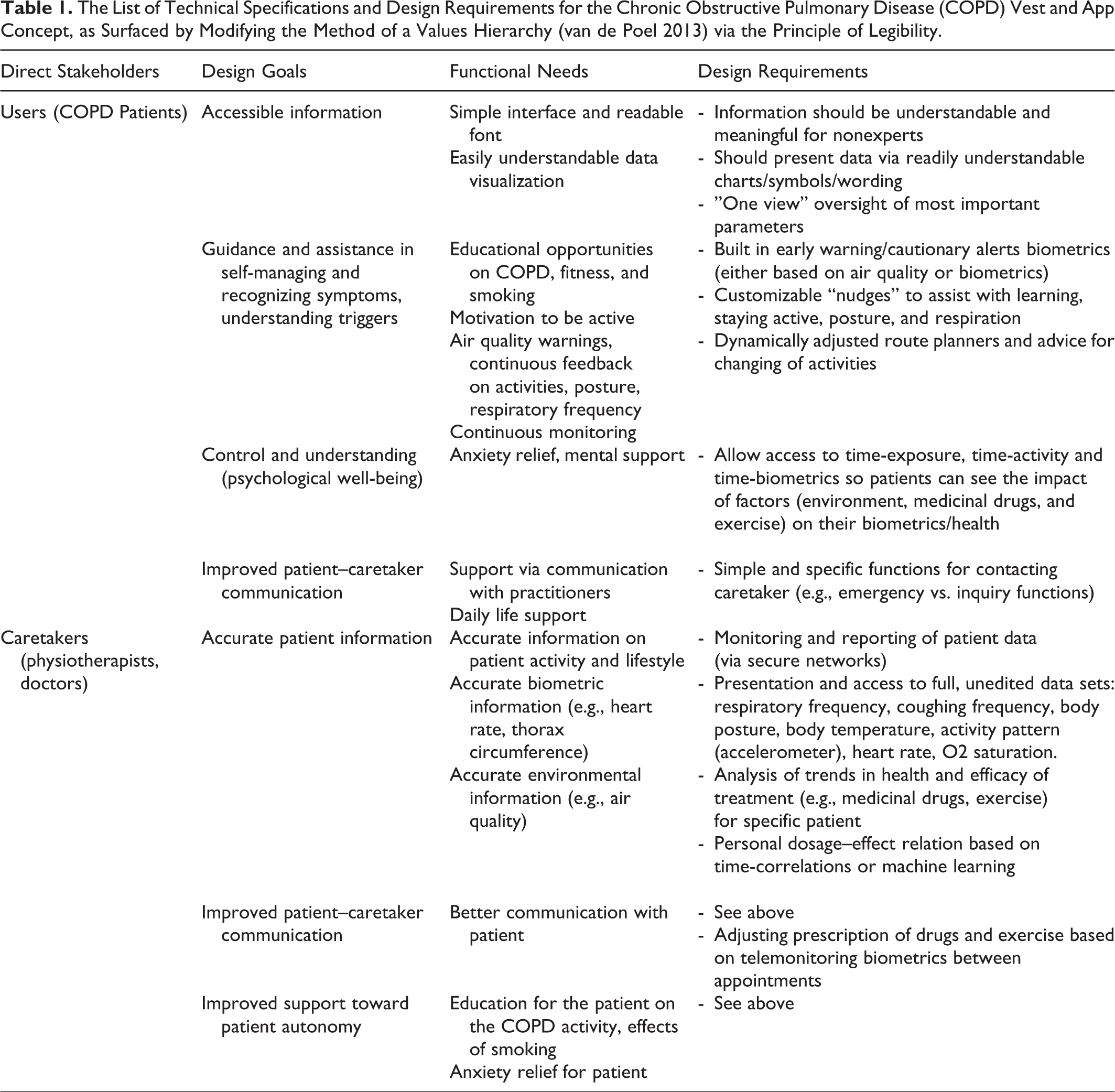

Researchers at the Smart Sensor Systems group of The Hague University of Applied Sciences are developing a wearable sensing technology to assist in the management of COPD. An extensive list of design requirements for the vest was developed based on existing COPD care practices, as well as qualitative interviews with medical professionals and patients (Table 1). 6 Additionally, customer journeys with touch points for different personas were developed to understand what data should be communicated, in what way, and at what time.

Table 1. The List of Technical Specifications and Design Requirements for the Chronic Obstructive Pulmonary Disease (COPD) Vest and App Concept, as Surfaced by Modifying the Method of a Values Hierarchy (van de Poel 2013) via the Principle of Legibility.

Qualitative research indicated that COPD patients expressed a wish for more self-reliance and would welcome a personalization of the treatment tools (Grijsen et al. 2018). One bottleneck identified was the lack of information on either side (the patient and the healthcare provider) when the disease status has to be communicated and decisions have to be made based on this information. Patients also mentioned missing support from doctors, the absence of a holistic approach to treatment, and a focus only on medication, resulting in a limited flow of information to patients. This is a central challenge for designers and developers: to mediate the data at hand so that it can be interpreted for specific treatment applications, as well as communicated to multiple audiences.

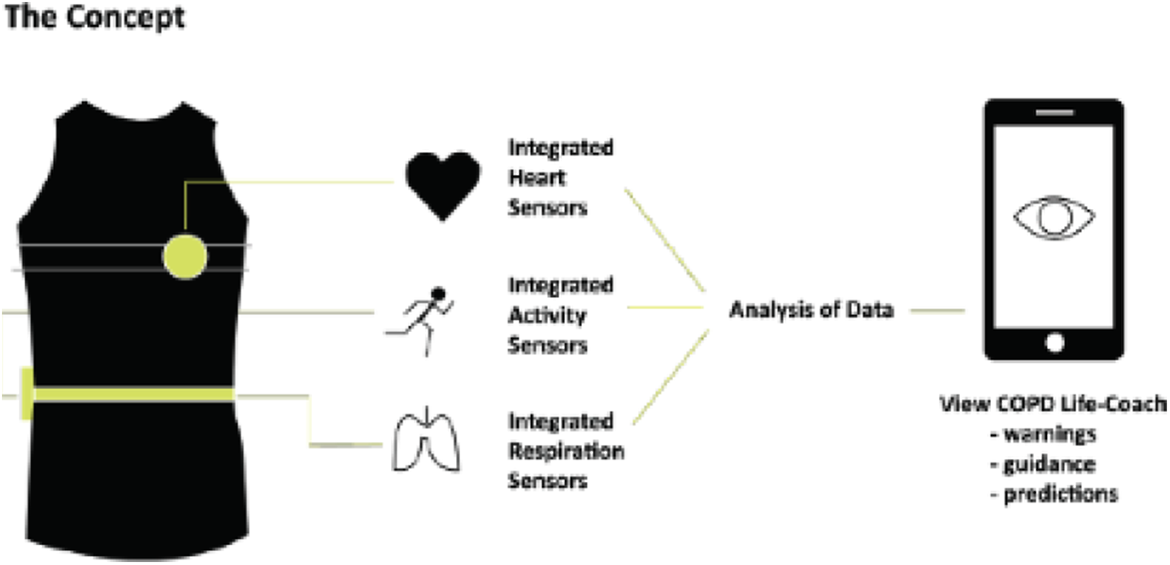

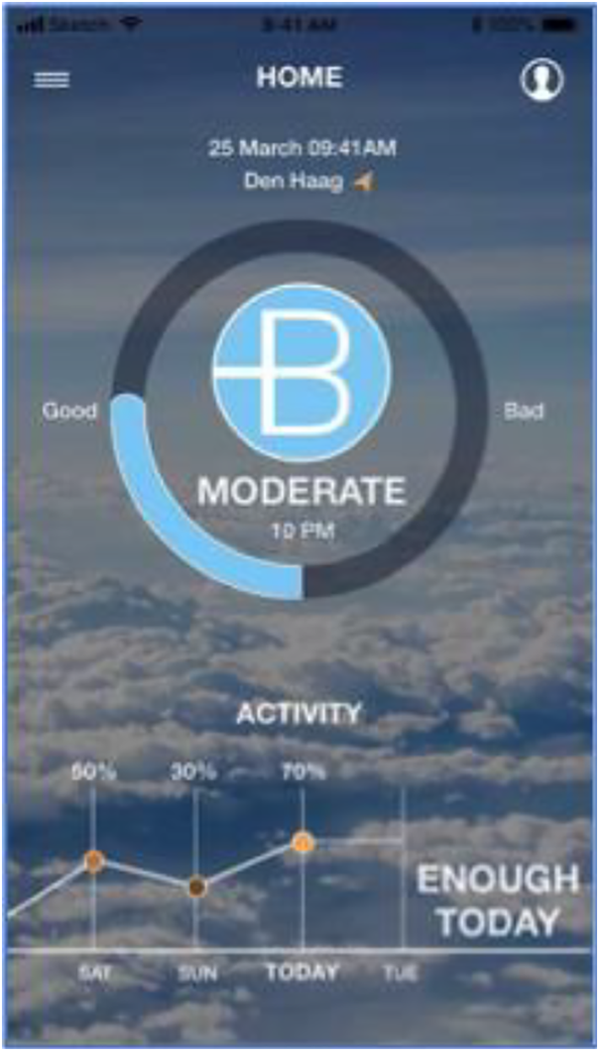

With this in mind, this project involves not only a vest with embedded sensors, but also an app to communicate the findings (Figure 4). The vest is worn directly on the patient's body, measuring physiological responses and movement, and includes an additional layer with sensors measuring local air quality and location. The app gathers regional weather data to forecast changes in environmental conditions over time or when the patient moves. Further, the app allows patients to register medicine intake, healthcare appointments, and treatment plan exercises, and to set diary alarms accordingly. These data points are aggregated to identify correlations between particular environmental conditions and an individual's inflammatory responses, in order to provide insight into the particular boundaries of that patient's disease. Data are sent (securely) to a cloud-based service for analysis, and the results are then communicated back to the patient via an application on their mobile phone (Figure 5). The app relies on clear visuals (graphs colored green, orange, or red for levels below, at, or above the set threshold values) that attempt to contextualize how the data from the vest relate to the patient's individual health status, behavior, and environment.
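To make the traffic-light coding concrete, the mapping from a single reading to a color can be sketched as below. This is a minimal illustration under stated assumptions, not the project's implementation: the names `Level`, `classify_reading`, and `band`, and the rule that a reading within a fixed tolerance band counts as "at" the threshold, are all hypothetical.

```python
from enum import Enum


class Level(Enum):
    """Traffic-light levels used to color the app's graphs."""
    GREEN = "below threshold"
    ORANGE = "at threshold"
    RED = "above threshold"


def classify_reading(value: float, threshold: float, band: float = 0.1) -> Level:
    """Map one sensor reading to a traffic-light level.

    Hypothetical rule: a reading within +/- `band` (a fraction of the
    threshold) counts as being "at" the threshold and is shown in orange.
    """
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    lower = threshold * (1 - band)
    upper = threshold * (1 + band)
    if value < lower:
        return Level.GREEN
    if value <= upper:
        return Level.ORANGE
    return Level.RED


# Hypothetical readings against a hypothetical threshold of 25 units.
for reading in (20.0, 24.0, 30.0):
    print(reading, classify_reading(reading, threshold=25.0).name)
```

A fixed band is only one possible choice; as discussed in this section, the threshold itself might instead be set by the patient, a caretaker, or a trained model, and how it is chosen is part of what legibility asks designers to make visible.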

Figure 4. A rough schematic of the chronic obstructive pulmonary disease vest and the app. Source: Baeckert (2018a).

Figure 5. A mock-up of the user interface for the chronic obstructive pulmonary disease patient's app. Source: Bergstroem et al. (2019).

The goal of the COPD vest project is to improve the quality of life of COPD patients by helping them make more informed decisions about their behavior in relation to their disease. This is done in two ways: directly, by giving patients real-time insight into air quality and physiological data via individualized alarms based on thresholds set by patients, caretakers, or a trained machine learning model; and indirectly, by giving this insight to caretakers so that future treatment can be tailored to individualized data. From this, we can frame legibility for the COPD vest and app as follows:

Legibility of what: biometric data and exposures to environmental pollutants;

Legibility for whom: both the patient and the healthcare provider/caretaker, as well as relevant indirect stakeholders (e.g., those proximal to the patient such as family members or bystanders);

Positive engagement: success in achieving meaningful and positive engagement would entail the understanding and treatment of individualized symptoms and triggers, toward an improved quality of life for COPD patients (including greater autonomy), as well as improved doctor-patient relations.

If the COPD vests were designed purely to pursue transparency, graphs illustrating data points and projected calculations could easily overwhelm a user, leaving them with little context as to how to make sense of the information collected. Conversely, a vest purely guided by the principle of usability could perhaps only direct the wearer to behave according to the ideals of the designer or caretaker, without allowing users any control or determination regarding how (and why) to pursue their own lifestyle choices. The design of the COPD vests and apps creates opportunities to improve the patient’s physiological and psychological well-being and will ideally allow for greater autonomy, self-reliance, and dignity in managing the disease.

Challenges in Pursuing Legibility

By taking inspiration from the values hierarchy methodology (van de Poel 2013), and adding the insights provided by the principle of legibility outlined above, the challenges and opportunities surrounding the creation of engage-able communication across key direct stakeholders emerge (see Table 1, Design Requirements). This approach allows us to critically explore the vest and app, asking: what potential issues arise in the pursuit of legibility, how should engagement be conceptualized in this context, and where are the key moments where engagement may fail?

Engagement is multifaceted and, in the case of the COPD vest, highly dependent on the patient's ownership and control of information. Should medical caretakers have unfiltered access to all the data collected? What are the appropriate nudges and forms of guidance that should be offered by medical caretakers, and to what degree should patients co-create these nudges? What communication strategies should be deployed to respond to COPD patients' susceptibility to anxiety (Willgoss et al. 2011)? What amount of information sharing is appropriate, what contextual factors are helpful, and should the patient only be given an interpretation of the data and instructions for how to respond? Sensing technologies make it possible to individualize data, but should they also be used to tailor communication strategies? How should this profiling be made apparent to the patient-user? Further, how ought we to prevent the disease from being foregrounded at all times by continuous monitoring and feedback, which could reinforce a negative self-image or increase feelings of being sick? How visible are the vest's and the app's forms of feedback? Are they something that a bystander may become aware of, thereby violating the patient's sense of privacy? Ensuring monitoring by caretakers without impeding autonomy, as well as promoting the self-reliance of COPD patients, becomes a central design requirement. Continued reflection on these questions will allow values to surface, and responding to these concerns (and opportunities) will move the device toward the goal of legibility.

A central anticipatory question is how to make critical data meaningful on an individualized scale. Sensors produce objective data, but evaluating and giving meaning to that data is subjective. While regulatory bodies set (legal) limits for evaluating air quality, those levels do not necessarily account for the sensitivities of vulnerable populations. Conversely, a threshold determined by the patient may be rather subjective, based on recalled experience of their response to certain contexts or environments, and thus may not cover all possible adverse events in all circumstances. A level determined by a medical professional based on individual exposure and responses would likely provide a generally accepted threshold, but it might not take in and weigh all the variables and interactions at hand. A more nuanced and comprehensive approach could involve studying the historical data of a particular patient over time, correlating time series of individual exposure, biometrics, and complaint patterns (Bogers et al. 2018), or applying machine learning methods (as used in predictive maintenance and machine health monitoring). Such predictive methods are already commonly used in sports and quantified-self applications (e.g., Stetter et al. 2019), but they risk becoming black-box relations lacking a clear physiological explanation. In light of the challenge of determining appropriate thresholds (and the associated cautionary warnings to the patient or their healthcare worker), it is important that patients be actively shown that these varied measurements and predictive techniques are being used, and why. Otherwise, instead of engagement, we risk unjust (epistemic) paternalism.
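As an illustration of what "correlating time series of individual exposure and complaint patterns" might involve, the sketch below computes a lagged Pearson correlation and picks the lag with the strongest positive association. It is a hypothetical example, not the method of Bogers et al. (2018) or of the vest project; the function names, the use of equally spaced (e.g., daily) series, and the non-negative lag convention are assumptions. Note that such a result expresses a statistical association only, which is precisely why it can read as a black-box relation to the patient.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("series must not be constant")
    return cov / (sd_x * sd_y)


def lagged_correlation(exposure: Sequence[float],
                       complaints: Sequence[float], lag: int) -> float:
    """Correlate exposure at step t with complaints at step t + lag.

    Hypothetical convention: both series are sampled at the same regular
    interval and are aligned so that index i refers to the same time step.
    """
    if lag < 0:
        raise ValueError("lag must be non-negative")
    if lag == 0:
        return pearson(exposure, complaints)
    return pearson(exposure[:-lag], complaints[lag:])


def best_lag(exposure: Sequence[float],
             complaints: Sequence[float], max_lag: int) -> int:
    """Return the lag (0..max_lag) with the highest correlation."""
    return max(range(max_lag + 1),
               key=lambda k: lagged_correlation(exposure, complaints, k))


# Hypothetical data: complaints echo exposure one step later.
exposure = [1.0, 5.0, 2.0, 8.0, 3.0, 9.0, 4.0]
complaints = [0.0, 1.0, 5.0, 2.0, 8.0, 3.0, 9.0]
print(best_lag(exposure, complaints, max_lag=2))
```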

To foster engagement, the vest and app design includes vibrating and visual alarms (i.e., traffic-light color coding of graphs). These alarms can be triggered when thresholds are exceeded, or are projected to be exceeded in the near future. The thresholds are based on settings provided by patients, caretakers, or a machine learning model once enough data on an individual patient have been gathered to create a personalized model of their response to external stressors. Although patients asked for these alarm features in interviews (so that they would feel less anxious), they should be given the opportunity to switch off the alarms and thus maintain control. Moreover, they should be able to see graphs of historical data and triggers, so they can learn which events led to the alarms, understand the risks, and find opportunities for behavior change.
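The alarm logic described above (exceedance or projected exceedance, a patient-controlled off switch, and a retained history of triggering events) can be sketched as follows. This is a minimal sketch under stated assumptions: the class and field names are hypothetical, and the projection is a naive linear extrapolation from the last two readings, chosen only for illustration in place of whatever forecasting the actual system uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AlarmSettings:
    threshold: float      # set by patient, caretaker, or a trained model
    horizon: int = 3      # hypothetical: steps ahead to project
    enabled: bool = True  # the patient can switch alarms off


@dataclass
class AlarmMonitor:
    settings: AlarmSettings
    history: List[float] = field(default_factory=list)
    # Triggering events are logged even when alarms are switched off,
    # so the patient can later review what led to them.
    events: List[str] = field(default_factory=list)

    def projected(self) -> Optional[float]:
        """Naive linear extrapolation from the last two readings."""
        if len(self.history) < 2:
            return None
        slope = self.history[-1] - self.history[-2]
        return self.history[-1] + slope * self.settings.horizon

    def add_reading(self, value: float) -> Optional[str]:
        """Record a reading; return the alarm reason to signal, if any."""
        self.history.append(value)
        reason = None
        if value > self.settings.threshold:
            reason = "exceeded"
        else:
            forecast = self.projected()
            if forecast is not None and forecast > self.settings.threshold:
                reason = "projected"
        if reason is None:
            return None
        self.events.append(
            f"{reason}: reading {value} vs threshold {self.settings.threshold}"
        )
        # Only signal (vibrate or color) if the patient has alarms enabled.
        return reason if self.settings.enabled else None


monitor = AlarmMonitor(AlarmSettings(threshold=10.0))
for reading in (2.0, 4.0, 5.0, 8.0, 12.0):
    print(reading, monitor.add_reading(reading))
```

Separating the event log from the alarm signal is one way to honor both requirements in the text: switching alarms off keeps the patient in control, while the logged events still support learning from historical data.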

Conclusion: Designing the Canary

This paper introduces the design principle of legibility, which is concerned with surfacing the layers of mediation and exposition behind complex technologies (such as sensing technologies) in a form that is intelligible and constructive for the general end user. Here, legibility was applied in detail to the case of the COPD vest and its accompanying app, to flesh out its possibilities and usefulness in the design process. Like the canary in the coal mine, these sensing technologies have the potential to identify and communicate when environmental factors may have negative impacts, before humans are capable of sensing them themselves. However, these sensing technologies introduce new challenges. While the canary could detect a single threshold that applied to all the humans within earshot, the COPD vest can individualize its sensing capabilities to particular patients and even adapt as an individual's condition progresses. Similarly, canaries performed their sensing function in a relatively isolated context (mines) with an isolated user group (miners), whereas today's sensing technologies are designed to integrate with diverse daily contexts. The emerging complexity and range of these sensing technologies necessitate new modes and approaches to account for the expanded epistemic and moral issues they introduce.

As a guiding principle, legibility can assist with enacting a proactive, anticipatory approach to sensing technologies, helping to elucidate the moral issues at stake in the communication of information to users. If successful, a legible device or service can promote positive engagement, fostering values such as autonomy (Friedman and Nissenbaum 1997). This serves a much-needed practical purpose: a means to operationalize a value sensitive design approach within the emerging field of sensing technologies. As such, legibility is intended to be incorporated into existing value sensitive design frameworks (e.g., Friedman and Hendry 2019; van den Hoven, Vermaas, and van de Poel 2015). However, as a preliminary concept, it is not necessarily limited to value sensitive design, or to health-related sensing technologies. Legibility could be adapted as a tool for other design-oriented approaches within the ethics of technology, such as technological mediation (e.g., Kudina and Verbeek 2018; Verbeek 2011).

As sensing technologies become more ubiquitous, so too could the applicability of legibility. Take, for example, artificial intelligence (AI), and specifically the rising importance of "explainable AI." This refers to research that attempts to explain to the user, and make understandable, how deep learning neural networks produce their output (e.g., Arrieta et al. 2020). A range of methods and techniques are used to represent and visualize the inner workings of neural networks that often epistemically outperform human experts (e.g., in medical diagnosis). This leads to a situation where the user has very good reasons to believe that the output of the system is accurate, even though the user is not able to justify the output in any way other than on the basis of the system's epistemic authority. In feature mapping, for example, colored heat maps of images are produced that indicate which features of an image the neural network focused on to arrive at a certain object classification. The quest to open the black boxes of AI is sometimes framed in terms of explainability, interpretability, understandability, or transparency, which are all epistemic ideals meant to counter the inherent opacity of machine learning technologies. By offering a pragmatic and user-focused outlook, the framework of legibility could be refined to offer insights for the responsible development of AI interfaces.

As another example, take the growing trend of "smart cities." In Building and Dwelling, Richard Sennett (2019) critiques dominant approaches to smart city development. Such cities, Sennett claims, are too easy to live in, paradoxically stupefying us. In particular, the focus on usability (or what he calls "friction-free and user-friendly") creates a rigid and controlled environment, which fails (in part) because it does not engage citizens. Alternatively, new technologies can be used to coordinate participation, allowing for innovations such as new governance models (e.g., participatory budgeting). With some refinement, it seems plausible that legibility could be utilized as a principle to promote engagement and guide the deployment of sensors in urban spaces.

Explorations of the broader applicability of legibility can be coupled with theoretical explorations into conceptual critiques of value sensitive design, such as the vague definition of values (Jacobs and Huldtgren 2018; Manders-Huits 2011). If utilized and applied through extensive user testing, it can also be explored whether, or to what extent, legibility better aligns the design and use contexts of technologies, a tension referred to as both the “positivist problem” and the “designer fallacy” (Albrechtslund 2007; Ihde 2008). Taken together, future practical and theoretical work across different domains can serve to refine the ideas proposed here. This paper is thus a starting point, not a final statement, on legibility as a design principle. Through a critical and iterative development of the concept of legibility, we can strive to ensure that our future canaries are responsive, engage-able, and ultimately better suited to help us navigate their respective coal mines.


Authors’ Note

Holly Robbins and Taylor Stone shared first authorship.

Acknowledgments

Earlier versions of this paper were presented at the Society for Philosophy and Technology biennial conference, held at Texas A&M University in May 2019, and the New HoRRizon Workshop on Responsible Research and Innovation, held at Delft University of Technology in May 2019. We would like to thank both of these audiences for their feedback. The authors further thank thesis advisors Chris Heydra, Fidelis Theinert, and Anjoeka Pronk of the Smart Sensor Systems research group of The Hague University of Applied Sciences, Maarten Gijssel of Kinetic Analysis, and the student groups working on the development of the requirements of the interface and vest. Finally, we also thank the Dutch Research Council (NWO) for funding this research project, as part of the National Science Agenda Startimpuls "Measuring and Detecting for Healthy Behaviour" (dossier 400.17.604).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.