Abstract

This paper explores how calculative cultures shape perceptions of models and practices of model use in the financial industry. A calculative culture comprises a specific set of practices and norms concerning data and model use in an organizational setting. Drawing on interviews with model users (data scientists, software developers, traders, and portfolio managers) working in algorithmic securities trading, I argue that the introduction of complex machine-learning models changes the dynamics of calculative cultures and leads to a displacement of human judgment in quantitative finance. I distinguish between three calculative cultures: (1) an idealistic culture of undivided trust in models, (2) a pragmatic culture of skepticism toward model accuracy, and (3) a pragmatic idealist culture that combines skepticism in the early stages of model development with idealism in the implementation and production phases. Based on the empirical material, the analysis engages with examples of each of the three calculative cultures. The study contributes to the social studies of finance, and to science and technology studies more broadly, by showing how perceptions of models shape and are shaped through model work in data-intensive, computerized finance.

Introduction

We can’t speak for all quants, but by and large much of quantitative investing is about common sense and discipline, rather than about esoteric math and computer algorithms.

Computer algorithms and models are ubiquitous in today’s financial markets. They function as intermediaries, connecting traders to markets, and as extensions of human capabilities, enabling investors and fund managers to find an edge in unfathomable amounts of data. Over the past couple of decades, algorithms have accelerated trading and expanded the realm of opportunity in data-driven investing (Arjaliès et al. 2017; Pardo-Guerra 2019). As markets’ technical infrastructures become more advanced, algorithms also become more sophisticated. Moreover, as the sophistication and complexity of algorithms increase, questions about transparency, use, assessment, and control of these technical intermediaries of decision-making and extensions of human capability seem as relevant as ever.

The availability of vast amounts of data and the hardware needed to store and process them have paved the way for the introduction of increasingly sophisticated algorithms and models in the fields of investment management and securities trading (Kirilenko and Lo 2013). Machine-learning algorithms have gained ground in recent years and are expected to drive a profound transformation in the way people trade and invest in financial markets (Guida 2019; Lopez de Prado 2018). As these technical innovations fuse into the investment apparatuses of top-tier quantitative hedge funds and high-speed algorithmic trading firms and trickle down to lower-echelon market participants, demand soars for people with distinct skill sets. Data scientists, engineers, and software developers are now coveted human resources, while traders and financial analysts have increasingly become casualties of automation (Buchanan 2019). That the financial industry is eager to attract human resources capable of actualizing the potential of emerging technologies is neither surprising nor a new development. 1 However, as science, technology, engineering, and mathematics PhDs gradually replace the old guard, it is increasingly the former group, the enablers of automation and facilitators of machine intelligence, that shapes finance’s present and future.

This study investigates how calculative cultures in quantitative investment management and algorithmic trading change as the available technological aids become more advanced. Calculative cultures, or cultures of model use, constitute “specific practices of integrating models into financial decision-making and combining them with emotions, views and stories of their users” (Svetlova 2018, 4). I speak of cultures rather than practices of model use because it is the emotions, views, attitudes, and disciplinary backgrounds of people, combined with organizational and scientific procedures, rules, and norms, that shape the development and use of models in decision-making processes (i.e., the material practices of model use). Although culture includes practice, equating it with practice ignores crucial nonmaterial elements of culture such as the formation of frames of meaning within which people make sense of and act in the world (Knorr Cetina 2007; Martin 1998). The concept of cultures of model use subscribes to the idea that models and algorithms are not isolated entities decoupled from developers and users but entities that exist in a relationship with market actors (Bader and Kaiser 2019; Grosman and Reigeluth 2019; Lange, Lenglet, and Seyfert 2019; Seaver 2017). Influence is mutual in these socio-material practices: human influence feeds into and shapes models, while models in turn affect perceptions and practices (Rahwan et al. 2019, 483). Data scientists, software developers, computer engineers, and other quants all have views about research and model use, and if they do not have an opinion about markets when entering the world of finance, they quickly acquire one. Quants’ attitudes toward models shape and are shaped within cultures of model use, and studying them may help shed light on the ways emotions, experience, and judgment remain crucial elements in contemporary finance, despite its being an increasingly quantified and automated world.

Drawing on interviews with market participants working mainly in the fields of algorithmic trading and quantitative investment management, I examine how different views on the use of algorithms, emerging technologies, and data affect both model development and how trades are conducted. Although there are manifold calculative cultures in the financial industry (Svetlova 2018, 4), I distinguish between two: idealistic and pragmatic calculative cultures (Mikes 2009, 2011; Power 2005). Calculative idealists have great faith in their models and no worries about basing decisions on model output. Calculative pragmatists, on the other hand, do not expect formal models to produce accurate representations of reality (Svetlova 2018, 69-70; Svetlova and Dirksen 2014; Wansleben 2014, 608). Although not opposed to model use, pragmatists or skeptics “treat model output as the starting point for…the exercise of judgment,” and thus not as the primary basis for decision-making (Mikes 2011, 240). I use the distinction between idealists and pragmatists to categorize and examine two distinct attitudes toward models and the effects they have on practices of model use.

Studies of calculative cultures in finance have yet to account for the introduction of complex adaptive machine-learning algorithms. This study attempts to fill this gap in the literature by reflecting on the use of machine learning for trading and investment management purposes. I argue that employing machine-learning models changes model use, leading to a dynamic intertwinement of models and users, and I suggest that this reflects a novel calculative culture, which I term pragmatic idealism. The combination of complexity, opacity, and the ability to learn that characterizes machine-learning models shapes how quants approach model use, making them pragmatic in the preparatory stages of model development but idealistic in the implementation phase. In other words, the introduction of machine-learning models seems to elicit a displacement of the role of human judgment in model-driven financial decision-making processes. The study contributes to the social studies of finance and science and technology studies more broadly by showing how perceptions of model use shape and are shaped in a highly data-intensive, computer-driven industry.

Cultures of Model Use

In May 2019, I gave a talk on calculative cultures in systematic investing at BlackRock’s UK headquarters in London. 2 The talk came about through a classic case of snowball sampling that led somewhere other than intended. I had reached out to a BlackRock employee working on mitigating decision bias in investing and asked if she would be willing to do an interview. She declined, citing firm policies, but instead invited me to London to present at one of the firm’s biweekly seminars, which featured guest speakers from industry or academia. In the talk, I explained the distinction between idealistic and pragmatic calculative cultures and used it as a point of departure to address different practices of model use in the quantitative hedge funds and algorithmic trading firms I have researched. A person in the audience, who I later learned had been with BlackRock for several years, found the distinction interesting, although somewhat crude. He pointed to an area outside the conference room and said that a group of pragmatic portfolio managers sat out there. Then he pointed to the floor below, where another group of pragmatic investors were apparently located. Finally, he pointed to the ceiling and said that there used to be a group of calculative idealists sitting upstairs, but, he added laughing, they were no longer with the firm.

He seemed to imply that pragmatists were all around, while model purists were few and far between. This was no real surprise. Financiers, especially the more seasoned ones, generally like to think that experience, common sense, discipline, and intuitive judgment still matter—in other words, there are aspects of savvy investing that models cannot learn. To them, investing is both an art and a science. This sentiment is captured in the slogan “where art meets (data)science,” which adorns the website of BlackRock’s systematic fixed income team. Although models and algorithms are ubiquitous in finance, most practitioners seem to acknowledge that quantitative investment and algorithmic trading require more than simply feeding a model with data and then waiting for it to produce an estimate or value that then determines action (Svetlova and Dirksen 2014, 564). Financiers deploy models in specific social and epistemic contexts in which modeling practices intertwine with decision-making processes. Judgment calls, norms, and narratives complement and sometimes contest the use of sophisticated trading algorithms, complex models, and comprehensive data sets in these cultures of model use (Svetlova 2018, 4). 3 Whether done manually or with the aid of cutting-edge technology, investing and trading involve pragmatic elements. However, to acknowledge that pragmatic considerations and adjustments happen in cultures of model use does not mean that no one in the financial industry idealizes models and puts a tremendous amount of faith in numbers. As the comment made at my BlackRock talk suggests, it certainly is possible to find both pragmatists and people with more idealistic views of model use in the world of finance.

The distinction between idealistic and pragmatic calculative cultures is borrowed from studies of risk management in banks (Mikes 2009, 2011; Power 2005, 2010). These are ideal-typical logics of practice and frames of meaning that delineate practitioners’ attitudes toward numbers and models. Power (2005) describes adherents of calculative pragmatism as those who “regard numbers as attention-directing devices with no intrinsic claims to represent reality” (p. 592). Calculative idealists, on the other hand, see “numbers as aiming to represent the costs of true economic capital based on high quality frequency data, and inducing correct economic behavior in the light of these risk measures” (p. 593). The idealist is, in other words, someone who believes that model outputs generate economically sound decision-making. In Mikes’s adaptation of Power’s two ideal types, the names change to quantitative enthusiasm and quantitative skepticism, but the meaning of the distinction remains more or less the same. According to Mikes (2009, 20), quantitative enthusiasts assume that risk numbers “reflect the underlying risk profiles.” To the quantitative enthusiast, the output from a model is an accurate representation of real-world conditions. In contrast, Mikes (2011, 241) argues that risk officers with a skeptical attitude toward the quantification of risk do not shy away from calculative practices but combine them with a focus on “envisioning risk”—a risk-anticipation practice based on “mental models, prior experience, and intuition.” Hence, the distinction is not between quant-centrism and numbers-aversion. Rather, it is a matter of differing attitudes toward numbers and models: idealists trust models and let their output guide decision-making, while pragmatists treat model output as merely one input to decision-making. Furthermore, idealists believe models provide accurate representations of reality, while pragmatists think models need to be tweaked and complemented by human judgment, intuition, and experience to fit the context of application.

“Pragmatic adjustments” is the term Svetlova and Dirksen (2014, 564) use for work performed “outside” or around models, which “enable the explicit connection of models to the world.” In her study of the use of discounted cash flow (DCF) valuation models in banks and asset management firms, Svetlova (2013, 323; see also Svetlova 2012, 2018) describes this work as a process of “adjusting valuation models to market realities.” A DCF model calculates the present (discounted) value of an asset from estimates of its future cash flows, that is, expected inflows (revenue) and outflows (costs). The value produced by the model indicates whether investing in the asset is a financially sensible thing to do (Law 2015). Calculative pragmatists thus assume that some sort of mediation process is required to eliminate incoherencies between model output and the current market environment. This is especially the case with generic models such as DCFs, whose usability and efficacy depend on how analysts and traders exercise their experience and judgment when interpreting model output in light of the current market situation. Abolafia (2010, 253) has described the practice of aligning models to their context of application as one of “comparing the ideal relationship in the model to the real one represented by current events.” According to Abolafia (2010, 356), any cognitive dissonance caused by incoherencies between a model and the current market situation is remedied by constructing a narrative in which model output makes sense in the current economic environment. Sensemaking thus appears to be an important tool in pragmatic cultures of model use.
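To make the DCF logic concrete, here is a minimal Python sketch, assuming end-of-year cash flows and a single constant discount rate (real DCF practice usually adds a terminal value and may vary the rate over time). The function names and figures are hypothetical. The example also hints at why the judgment calls discussed above matter: the same cash-flow estimates yield a positive net present value at a 5 percent discount rate and a negative one at 10 percent.

```python
def present_value(cash_flows, discount_rate):
    """Discount expected cash flows back to today.

    cash_flows[t] is the net flow (inflows minus outflows) expected at the
    end of year t + 1; discount_rate is an assumed constant annual rate.
    """
    return sum(cf / (1 + discount_rate) ** (t + 1) for t, cf in enumerate(cash_flows))


def npv(initial_outlay, cash_flows, discount_rate):
    """Net present value: discounted future flows minus the price paid today."""
    return present_value(cash_flows, discount_rate) - initial_outlay


# Hypothetical asset: costs 250 today and is expected to return 100 a year for three years.
flows = [100.0, 100.0, 100.0]
print(round(present_value(flows, 0.05), 2))  # 272.32
print(round(npv(250.0, flows, 0.05), 2))     # 22.32  -> invest
print(round(npv(250.0, flows, 0.10), 2))     # -1.31  -> do not invest
```

The model itself is trivial arithmetic; the contested part is choosing the inputs (the cash-flow estimates and the discount rate), which is where analysts’ experience and judgment enter.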

The idea that model output forms a starting point for the exercise of judgment hinges on the common-sense assumption that humans, not models, are the main source of decision-making. This assumption certainly fits cases where models are equations in a spreadsheet that spit out an estimate of an asset’s present value or a portfolio’s value at risk. However, the picture blurs when, for example, an automated trading system is capable of pricing, assessing risk, and executing trades. Such automation of decision-making does not make the exercise of human judgment superfluous. It does, however, seem to turn the dynamic between model use and nonmechanized decision-making on its head, not by eradicating human judgment but by displacing the moment at which it is crucial. When dealing with automated algorithmic systems, pragmatic adjustments are important during the initial phases of problem formulation, model selection, and parameter setting. Yet, once an automated algorithm is in production, the role of the human largely changes from active decision maker to passive controller. This change is evident in cases of machine-learning model deployment for investment management and algorithmic trading purposes (Hansen 2020).

A rule of thumb in machine-learning model use in finance is to refrain from tinkering with a model while it is running (Arnott, Harvey, and Markowitz 2018; Dixon and Halperin 2019). Although it might be tempting to tweak a model that is not doing as well as expected, it is important to resist the natural inclination to intervene in its operations (Arnott, Harvey, and Markowitz 2018, 13). Arnott, Harvey, and Markowitz (2018, 12) even imply that model tweaking could have counter-performative effects: “the mere act of studying and refining a model serves to increase the mismatch between our expectations of a model’s efficacy and the true underlying efficacy of the model.” 4 That being said, pragmatic considerations are nevertheless central in the initial stages of machine-learning model development, because they address important concerns in modeling such as “benefits (accuracy, understandability), cost (of measuring features, of making mistakes), and resource usage” (Provost and Buchanan 1995, 57).
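The mismatch Arnott, Harvey, and Markowitz describe can be illustrated with a small simulation. The Python sketch below is a toy under stated assumptions, not their analysis: it treats “refining” a model as backtesting many variants and keeping the best one, and it draws each variant’s true edge and its daily noise from normal distributions with made-up parameters. Because selection rewards lucky noise, the chosen variant’s backtested return tends to overstate its true edge, and the gap tends to widen as more variants are tried.

```python
import random
import statistics


def backtest_selection(n_variants=200, n_days=250, seed=0):
    """Backtest many tweaked variants of a strategy and keep the best one.

    Returns (backtested mean daily return of the chosen variant, its true mean).
    """
    rng = random.Random(seed)
    # Hypothetical true edges: tiny and centred on zero (an assumption).
    true_edges = [rng.gauss(0.0, 0.0002) for _ in range(n_variants)]

    def backtest(edge):
        # Observed daily return = true edge + noise with 1% daily volatility (assumed).
        return statistics.fmean(edge + rng.gauss(0.0, 0.01) for _ in range(n_days))

    results = [backtest(edge) for edge in true_edges]
    best = max(range(n_variants), key=lambda i: results[i])  # keep the best backtest
    return results[best], true_edges[best]


for n in (1, 10, 200):
    seen, actual = backtest_selection(n_variants=n)
    print(f"{n:>3} variants tried: backtest {seen:+.5f}, true edge {actual:+.5f}")
```

The more variants a researcher tries, the more the winning backtest reflects noise rather than edge, which is one reading of why tweaking a running model can widen the gap between expected and true efficacy.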

When it comes to the study of machine-learning model use in finance, I argue for the existence of a third type of calculative culture, pragmatic idealism, which synthesizes the pragmatic and idealistic attitudes toward models. The term pragmatic idealism suggests that in cultures of machine-learning use, pragmatic considerations are central in the process of selecting and devising complex adaptive models, while model users are unwavering idealists when models are running. That pragmatic idealists put a lot of trust in carefully devised and rigorously tested models does not mean that they are “model dopes,” unthinkingly accepting a model’s actions (MacKenzie and Spears 2014b, 108). Rather, it suggests that they are aware of their limited comprehension of the big data processing actually undertaken by their models, and that the worth of their judgment and domain knowledge is measured by their ability to select and parameterize a model suitable for the problem at hand (Hansen 2020).

Researching Attitudes toward Models

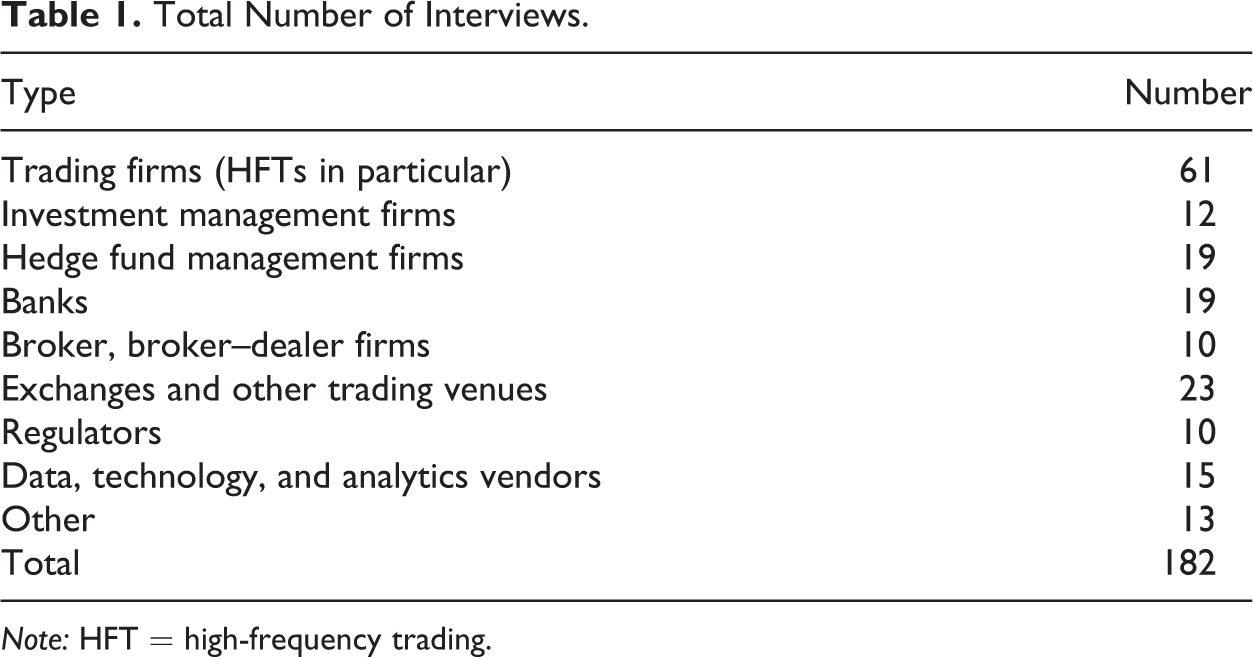

To understand how attitudes shape and are shaped by practices of model use, it is necessary to consider the roles of model users as well as the processes of judgment, justification, and negotiation in calculative cultures (Svetlova and Dirksen 2014, 562-63). It requires being attentive to the way meaning structures forge practices and thus influence decision-making processes (Knorr Cetina 2007, 364; Martin 1998, 28). The ambition of this study is less to investigate the technical specificities of individual models than to think of models as entangled in socio-material practices or simply as culture, that is, as part of the patterns of meaning and practice in specific contexts (Seaver 2017). The empirical material is drawn from a body of 182 semi-structured interviews conducted over a two-year period (2017–2019) by me and three other members of the interdisciplinary research project “Algorithmic Finance: Inquiring into the Reshaping of Financial Markets.” We conducted most of our interviews on-site with market participants working in hedge funds, investment management firms, proprietary algorithmic trading firms, banks, brokers, regulators, exchanges, and technology vendors in London, New York, and Chicago (see Table 1). 5

Table 1. Total Number of Interviews.

Note: HFT = high-frequency trading.

The majority of our interviewees worked in the field of quantitative finance. Many were quants and those who were not relied—in one way or another—on input from the models that quants construct and operate. The term “quant” has mostly been used as an abbreviation for quantitative analyst, yet today it covers a wide range of roles in finance and thus no longer exclusively refers to investment bank analysts doing financial engineering. In a very basic way, a quant can be defined as someone who uses “tools and insights from economics, finance, statistics, math, computer science, and engineering” to analyze and solve financial and risk management problems (Pedersen 2009, 6-7). Quant is thus a rather broad signifier.

When analyzing the data, I focused on instances of what I call “model talk.” Model talk reports the inner workings of cultures of model use. It comprises reflections on and narrations of modeling practices and speaks to the use of specific models as well as to the challenges of adopting them in existing practices. Moreover, model talk includes normative reflections on what model use ought to be and on the opportunities and challenges that new developments in the field might bring. The intertwinement of models with decision-making practices came up frequently in our interviews, for instance in reflections on how model output is communicated and interpreted between, say, developers and traders. The following quote, from an interview with a former hedge fund quant, suggests how modeling and decision-making practices are interwoven:

Whether something is good enough or not is largely a subjective question. Often people think that because mathematics is involved, you can always measure things. This is only partly true. There is a huge subjective element when it comes to evaluating whether something that you obtain in the results is or is not good for a particular kind of application. At the end of the day, this is a matter of whether the metric that you calculated is within certain thresholds. But the thresholds have to be imposed by the project itself and they are not always very objective. Often you end up in a situation where you have a particular procedure that gives you the result x. Then, you have another procedure that gives you the result y. You believe that y is better than x, but everyone else might think we should stick to x because it is more convenient and easier.

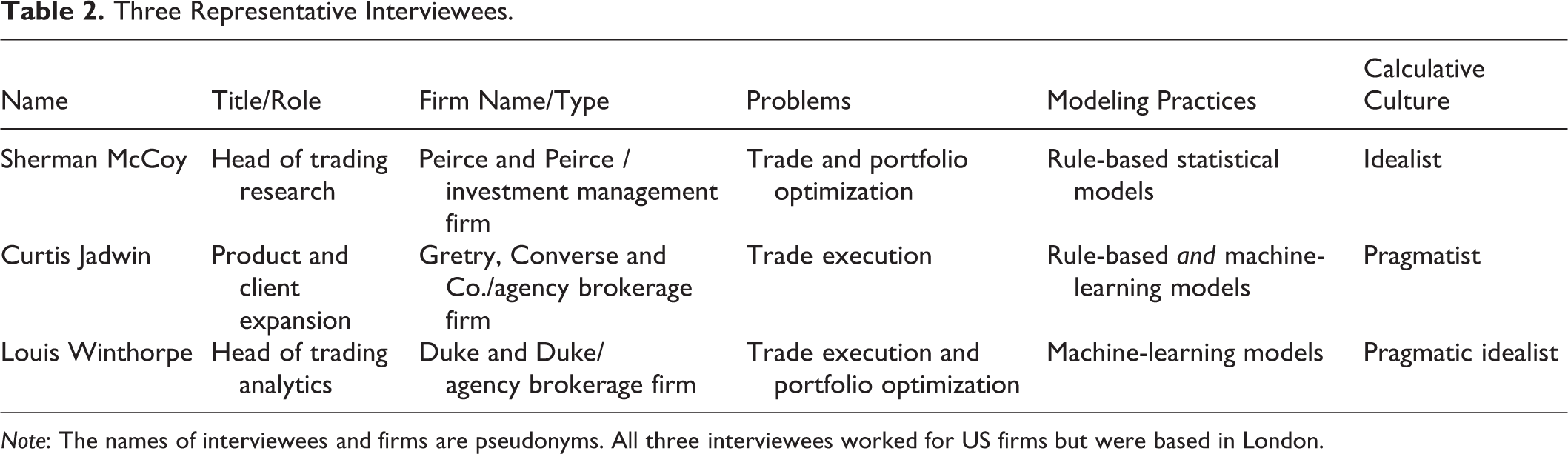

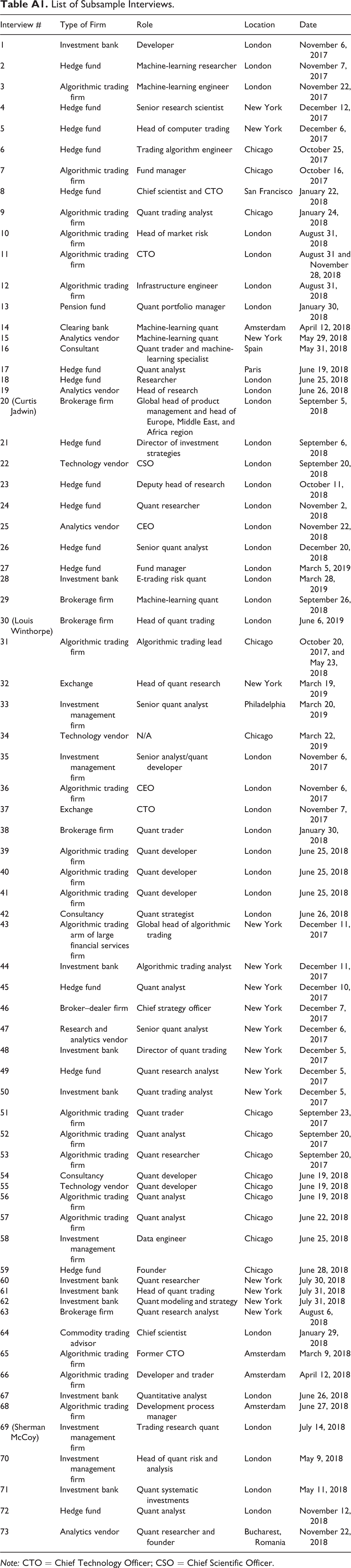

From the 182 interviews, I created a subsample of 73 interviews with quants working mainly in quantitative investment management and algorithmic trading (see Appendix Table A1). In 31 of these cases, interviewees had applied or were applying machine-learning techniques to financial and risk management problems. The 73 subsample interviews were analyzed using an open coding approach to capture instances of model talk. Codes included judgment, decision-making, bias, human element, quantification, intuition, automation, market knowledge, complexity, and explainability. Where possible, instances of model talk were categorized as machine learning or nonmachine learning and as idealistic/enthusiastic or pragmatic/skeptic. Passages in which interviewees expressed both idealistic and pragmatic views on model development and use were given their own category, which became the pragmatic idealistic calculative culture category. Based on the analysis of the subsample interviews, I discuss the idealistic, pragmatic, and pragmatic idealistic calculative cultures by focusing on three interviews, each of which represents its particular type of culture in emblematic form (see Table 2). Besides fit with one of the three calculative cultures, the main criteria for selecting the three interviewees were similarities in the types of problems they dealt with and in their roles in their organizations. Moreover, the three interviewees adhere to different modeling practices (one does not use machine-learning techniques, one uses a suite of both adaptive machine-learning and deterministic or rule-based models, and one focuses mainly on machine-learning models), which suggests a connection between the types of models being leveraged and the quants’ views on how to apply them. 6

Table 2. Three Representative Interviewees.

Note: The names of interviewees and firms are pseudonyms. All three interviewees worked for US firms but were based in London.

Although the three interviews constitute the main source of data in the analysis, the study draws on all interviews from the subsample. In addition to the interview data, I have attended industry conferences on quantitative finance and machine learning in finance. To substantiate and expand upon interviewees’ reflections on particular quantitative techniques such as specific branches of machine learning, I carefully read financial economics literature and blog posts by market professionals as well as financial newspaper articles on technology and model use in quantitative finance.

Quantification and Domain Knowledge

As suggested above, practices of modeling and model use involve negotiation, deliberation, and collaboration. For example, quants who deal with problems associated with executing trades and managing portfolios necessarily have to engage with traders and portfolio managers. However, different views exist among quants about the relevance of traders’ domain expertise. Some consider traders’ market savvy a relic from a time before the computerization of finance and a bias-bug potentially hindering the smooth course of an otherwise thoroughly quantified trading machinery. Other quants see traders’ domain knowledge as crucial in model use, even in cases where human traders are being replaced by automated trading systems. In the following section, I contrast the idealistic and pragmatic attitudes toward model use and thus the corresponding calculative cultures by comparing two quants’ views on how trading problems ought to be solved.

Sherman McCoy worked as head of trading research for the Europe, Middle East, and Africa region at the global investment management firm Peirce and Peirce. The role of the three-person trading research team was to provide support and technical solutions to traders working in different markets such as bonds, stocks, and foreign exchange. As Sherman put it, they solve trading problems and bring “visibility” to the problems traders encounter. They achieve visibility and efficiency in problem-solving, he argued, through a process of quantification:

We take the problem, we translate it into data, and quantify it—because you cannot understand a problem if you do not quantify it. You need to put it in numbers, and when you put it in numbers, you can focus on improving the numbers; that is exactly what we do.

The trading problems Sherman and his team deal with are mainly associated with trade execution. For them, it is not a matter of devising investment strategies but of ensuring that strategies are executed as efficiently as possible in the market. Sherman gave the following examples of problems that traders bring to him:

Traders can come to you and say, “I have traded this, I have traded that, I have no idea whether my trade went well or not. Can we meet and do some analysis?” Or, it could be: “I have a portfolio of trades and I want to optimize it. I am looking to trade efficiently and I do not want to just go to the market and blindly bid it and make an impact.”

Handling these types of trading-related problems requires interactions with traders. While traders are the ones who detect problems, quants make sense of them and come up with solutions. Sherman explained that the trading research team meets with the traders once a week. Daily interactions, he said, might result in traders “telling you what to do,” which is another way of saying that he does not want traders to impose their bias on him and his team. According to Sherman, most traders at Peirce and Peirce do not know a lot about data, software, and models. Quants’ views on the domain knowledge of traders and portfolio managers, and on the extent to which it plays a role in practices of model use, are indicative of the type of calculative culture of which they are a part. While the quantitative idealist Sherman does not think that the traders’ market expertise is of much use to quants, other interviewees had somewhat different views of the utility of traders’ market knowledge and judgment.

Unlike Sherman, Curtis Jadwin from the US agency broker Gretry, Converse, and Co. believes that it is difficult to separate the work of the quant occupied with trading problems from that of the trader. He described the current situation in the financial industry as a period of transition toward more automation. For this transition to be smooth, Curtis argues that it is necessary to retain the valuable experience and domain knowledge of traders and somehow incorporate it into practices of model use in trading. Curtis had learned the importance of practical market knowledge from experience. At his previous employer, a London-based hedge fund giant, Curtis had worked on a “virtual trader,” that is, an automated trading system. Before getting the green light to start building the system, the head of trading told Curtis, “I do not want you to do anything before you have traded for a year!”

Curtis traded for an entire year. However, his performance left a lot to be desired. He described the experience as “terrifying” and his performance as “rubbish.” It was humbling and taught him to value the knowledge and judgment of those who had a better feel for the quirks of the market than he did. Experiencing the brutal reality of trading affected Curtis’s approach to and views on model use, and his appreciation for domain expertise in trading was reflected in his views on automation, machine learning, and human–machine interactions. Curtis argues that a crucial distinction to be aware of is that, unlike machines, humans possess self-awareness and intuition, capacities that bear on accountability, creativity, and reflexivity. Not only are humans capable of detecting whether something is a good idea or not; they are, as Curtis pointed out, also inclined to reflect on whether the idea is right or not:

The message I always try to convey is that we are not looking to replace human beings. We are looking to improve our performance and it is a case of what role each component has. If you are making a decision that could be materially catastrophic for your firm, it is probably sensible to have a human review that decision.

The Displacement of Judgment in Complex Model Use

The pragmatic attitude toward model use and automation, as reflected in Curtis’s views, assumes that human judgment is a safeguard against potential machine misbehavior as well as an important element in aligning models and market realities. It became clear to me from talking to interviewees who utilize machine-learning techniques for trading and investment purposes that automation is not replacing human judgment but is forcing its displacement. It is not so much a case of model output guiding decision-making as it is one of human judgment and experience guiding the model. In other words, judgment, experience, and reflexivity are valuable capabilities in the process of assigning the right quantitative tool, including the right data, to the problem at hand. This is, of course, always the case when using models for problem-solving, but the point is that in machine learning, the pragmatic adjustments take place in the modeling phase and not in the model use phase. What follows is a discussion of the displacement of human judgment in light of automation.

Louis Winthorpe from the brokerage firm Duke and Duke was convinced that machine learning could assist in automating large parts of the practices of modeling and model use. “Let us take humans out of this and completely automate the whole thing,” he said, with reference to firsthand experiences he had in a major US investment bank. He imagined building an assemblage of connected models—“a black box system”—into which additional models could simply be plugged, thereby expanding the scope of the system. A central argument for removing humans from the equation is to eliminate human bias (emotions, unfounded expectations, idiosyncrasies, etc.) from the decision-making process. It is, however, worth noting that human biases create a sort of double bind in financial markets. Although automation might mitigate them in the trading practices of a specific market participant, biases constantly affect the course of markets. This is at least the case if one assumes markets are social systems of continuously modified expectations, which means that most variables are neither stable nor independent (Svetlova 2013, 323). Therefore, removing human quirks from a model does not remove them from the market. Another argument for reducing human interference in the operations of machine-learning models concerns the logic of such models. Whereas ordinary rule-based algorithms follow explicit rules established by the developer, machine-learning models develop rules as they learn from processing data (Bank of England and Financial Conduct Authority 2019, 6; Hansen 2020, 2). The purpose of these models is not only to be efficient, accurate, and robust but also to discover things (problem solutions, patterns, correlations, anomalies) that were neither specified nor necessarily possible to contemplate in advance (Mullainathan and Spiess 2017, 88). Besides eliminating alleged human follies, such models extend human capabilities, meaning that they expand the realm of data-processing possibilities beyond human comprehension.
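The contrast between a rule written by a developer and a rule derived from data can be made concrete with a deliberately small sketch. Everything in it is hypothetical and not drawn from any interviewee's system: the "spread" values, the True/False outcomes (think of them as whether acting would have paid off), the fixed 0.5 threshold, and the function names `rule_based_signal` and `learn_threshold`. The learned rule is a one-feature decision stump, about the simplest model that extracts a rule from examples.

```python
# Hypothetical illustration: one rule fixed in advance, one rule learned from data.


def rule_based_signal(spread: float) -> bool:
    """Explicit rule chosen by a developer: act whenever the spread exceeds 0.5."""
    return spread > 0.5


def learn_threshold(spreads, outcomes):
    """Derive the cut-off from historical examples instead of writing it by hand.

    Each observed spread is tried as a threshold; the one that reproduces the
    most historical outcomes is kept (a one-feature "decision stump").
    """
    def hits(cut):
        # Count the examples where "spread > cut" agrees with the recorded outcome.
        return sum((s > cut) == outcome for s, outcome in zip(spreads, outcomes))

    return max(sorted(set(spreads)), key=hits)


history_spreads = [0.1, 0.2, 0.3, 0.8, 0.9, 1.0]        # made-up data
history_outcomes = [False, False, False, True, True, True]
learned_cut = learn_threshold(history_spreads, history_outcomes)
print(learned_cut)  # 0.3
```

With these made-up numbers the learned cut-off is 0.3 rather than the hand-written 0.5, so a spread of 0.4 triggers the learned rule but not the developer's rule. The point is only that the rule's content comes out of the data; real machine-learning models learn far richer rules from far more variables.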

Like Sherman and Curtis, Louis was working on trading problems. More specifically, he had spent about a decade developing sophisticated machine-learning algorithms for trade execution. Louis found reinforcement learning the most suitable and promising machine-learning technique for dealing with the optimal execution problem. A reinforcement-learning algorithm uses trial and error to arrive at optimal solutions to problems. The algorithmic agent’s actions lead to rewards or penalties, and it thereby learns how to maximize its total reward (Kearns and Nevmyvaka 2013). In the case of trade execution, the algorithmic agent learns optimal actions under certain conditions; optimal action in this case means reducing market impact or slippage as much as possible. Recently, Louis had begun to look at other areas where reinforcement learning could be of use. Portfolio management seemed an obvious yet challenging area in which to leverage his technical expertise. However, the potential upside of an automated reinforcement-learning solution seemed to make the challenges worth taking on:

Reinforcement learning really helps us combine all the things we know and put them into a single place and try to optimize a solution for trading. It also brings us skill. We can customize or write the book’s [the portfolio’s] strategies so that they are fine-tuned to the idiosyncrasies of individual securities. That is another benefit. At the same time, it brings new challenges—huge challenges. Scalability is one of them. You know, we have to be able to trade on a large number of securities over a long period. Are we entirely certain that [market] regimes are constant over time? What happens when there is insufficient data regarding a particular security? What happens if we want to combine networks of securities? What happens when it is dominated by securities that have a lot of data and you [the reinforcement-learning algorithm] learn to trade those and not the illiquid ones?
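The trial-and-error logic of reinforcement learning in trade execution can be illustrated with a toy sketch. It is not Louis's system, nor the method of Kearns and Nevmyvaka; it uses tabular Q-learning, one standard reinforcement-learning technique, under loudly hypothetical assumptions: an order of 8 shares must be sold over 4 steps, selling q shares in one step costs q² (a stylized market-impact penalty), prices and market states are ignored, and whatever is left at the last step must be sold then. The function name `learn_execution_schedule` is likewise illustrative.

```python
def learn_execution_schedule(total_shares=8, n_steps=4, max_episodes=100_000):
    """Toy Q-learning agent that learns how to split an order across steps.

    Hypothetical assumptions: selling q shares in one step costs q**2,
    the environment is deterministic, and leftovers are sold at the last step.
    """
    # Q[t][inv][q]: estimated total reward (minus total cost) of selling q shares
    # at step t while `inv` shares remain, then acting greedily afterwards.
    # Zero is an optimistic start because every true value is <= 0, so untried
    # actions look attractive and get tested: learning by trial and error.
    Q = [[[0] * (inv + 1) for inv in range(total_shares + 1)]
         for _ in range(n_steps)]

    def best_action(t, inv):
        if t == n_steps - 1:
            return inv  # last step: the order must be completed
        row = Q[t][inv]
        return max(range(len(row)), key=row.__getitem__)

    for _ in range(max_episodes):
        changed = False
        inv = total_shares
        for t in range(n_steps):
            q = best_action(t, inv)
            reward = -q * q  # penalty: stylized impact grows with trade size squared
            nxt = inv - q
            # Q-learning target: reward now plus the best estimated value afterwards.
            future = Q[t + 1][nxt][best_action(t + 1, nxt)] if t + 1 < n_steps else 0
            target = reward + future  # deterministic toy market, so learning rate 1
            if Q[t][inv][q] != target:
                Q[t][inv][q] = target
                changed = True
            inv = nxt
        if not changed:  # a whole episode taught the agent nothing new
            break

    # Read off the learned (greedy) execution schedule.
    schedule, inv = [], total_shares
    for t in range(n_steps):
        q = best_action(t, inv)
        schedule.append(q)
        inv -= q
    return schedule


print(learn_execution_schedule())  # [2, 2, 2, 2]
```

Because the made-up cost rises with the square of each trade, the penalties steer the agent away from dumping the order at once and toward splitting it evenly, a stylized version of "reducing market impact or slippage as much as possible." Real execution agents face stochastic prices and changing market regimes, which this sketch deliberately leaves out.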

The challenges of using reinforcement learning for portfolio management are associated with the complexity of the quantitative technique itself, the many factors involved in managing large portfolios of securities, and the dynamic nature of financial markets. In portfolio management, actions change the composition and configuration of the portfolio, which means that actions lead to new situations to which the model needs to adapt. As Louis points out, “you cannot wait a week and train a solution for a portfolio. You need a solution in two minutes or less. This is the reality.” Making sure that the model is able to learn at a sufficiently high pace is therefore a huge challenge. Changes in market regimes—which can occur if some market-internal or market-external factor, for example, causes a spike in volatility (Ang and Timmermann 2012)—further complicate the model’s learning process. Essentially, the concerns Louis raises relate to the problem of insufficient information, but not in a straightforward way. It is not merely a matter of acting when sufficient data are available and refraining from acting when they are not. The question also concerns how data disparity might affect the algorithmic agent’s learning process, that is, the foundation of its decision-making. Will data disparity skew the automated optimization of the portfolio in aggregate? Will it affect dynamics in networks of securities if baskets of securities are included in the portfolio? The point is that Louis cannot know for sure.
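A simplified statistical analogy may help show why data disparity is worrying. This is not a reinforcement-learning agent and not Louis's model; the security names, the cost figures, and the function `pooled_and_per_security_costs` are all hypothetical. It compares one estimate learned from pooled data with separate estimates per security when one security contributes 1,000 observations and the other only 10.

```python
def pooled_and_per_security_costs(observations):
    """Compare a single pooled cost estimate with per-security estimates.

    `observations` maps a (hypothetical) security name to observed execution costs.
    """
    all_costs = [c for costs in observations.values() for c in costs]
    pooled = sum(all_costs) / len(all_costs)  # every observation weighted equally
    per_security = {name: sum(costs) / len(costs) for name, costs in observations.items()}
    return pooled, per_security


# Made-up data: a data-rich liquid security and a data-poor illiquid one.
data = {"LIQUID": [1.0] * 1000, "ILLIQUID": [5.0] * 10}
pooled, per_security = pooled_and_per_security_costs(data)
print(round(pooled, 2), per_security)  # 1.04 {'LIQUID': 1.0, 'ILLIQUID': 5.0}
```

The pooled figure sits almost exactly on the liquid security's cost, so anything optimized on it would be tuned to the securities "that have a lot of data" and badly misjudge the illiquid one. Keeping separate estimates avoids that bias but leaves the illiquid estimate resting on only ten observations, which is one way of seeing why the issue is not simply one of having or lacking data.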

Because of the many issues that need consideration, Louis’s approach is, in his own words, practical and “very pragmatic.” Feasibility, simplicity, and explainability are virtues in the ideation and modeling phases, and these parts of model work hinge heavily on the judgment of the model developers. Louis’s attitude toward machine-learning model development and use thus reflects a pragmatic idealist calculative culture: pragmatic considerations and adjustments are of utmost importance up until the point of deployment in the market. After that, the role of the quant shifts from inventor and builder to maintainer and controller.

Conclusion

As automated algorithms and adaptive, big-data-processing machine-learning models become increasingly prevalent in securities trading and investment management, new opportunities and challenges emerge for the people who deploy these quantitative tools. With the proliferation of machine-learning techniques in finance, model users do not necessarily know in advance what patterns and anomalies their models will find when combing through a data set. In fact, that is part of the appeal of these types of models (Mullainathan and Spiess 2017). Such models are not simply producers of estimates or values that help guide decision-making. Unlike “static” valuation models with fixed parameters (e.g., discounted cash flow [DCF] and value-at-risk models), machine-learning models adapt to and learn from the data they process. The dynamic nature of these models, combined with their ability to process unfathomable amounts of data, changes the way modelers perceive the possibilities of model use. As this paper has emphasized, the emergence of these novel models also shapes cultures of model use. Several empirical studies have explored expert cultures, valuation cultures, calculative cultures, modeling cultures, and cultures of model use in financial services and beyond. Building on that body of work, this study arguably represents a first attempt to explore the impact of the proliferation of machine-learning techniques on trading and investment practices in the financial industry.

The main objective of this study was to examine different ideal-typical calculative cultures and to see to what extent attitudes toward models, quantification, and automation shape processes of model development and use. As the literature on cultures of model use suggests, and as this study confirms, influence in such cultures is not unidirectional but reciprocal. Attitudes toward models shape the interplay between models and model users, while, in return, the applied quantitative tools affect the perceptions and practices of users. This study has demonstrated that model use in financial markets is an intricate social practice that involves a mélange of firm-internal and firm-external actors, quantitative tools (models, algorithms, pieces of software, etc.), judgment, domain knowledge, and norms. Moreover, it has shown how idealistic and pragmatic attitudes toward models are expressed in processes of model-driven financial decision-making. Whereas calculative idealists believe that numbers and models induce rational conduct, calculative pragmatists use model output as one input to a decision-making process in which they also rely on judgment, experience, and intuition.

Although the financial industry is becoming increasingly mechanized, pragmatic elements such as judgment, intuition, and domain-specific market knowledge remain crucial in modeling practices and practices of model use. What changes with quantification and automation is when—not whether—pragmatic elements show their worth in the process of model development and use. I have introduced the concept of pragmatic idealism to account for the crucial ways in which practices of model use change with the introduction of machine-learning techniques in investment management and algorithmic trading. The excavation of pragmatic idealism in processes of machine-learning model use points to concerns about comprehension, transparency, explainability, and accountability in a world in which models are increasingly complex and automation is more and more prominent. Some of these concerns are already being addressed in the burgeoning literature on ethics of algorithms (see, e.g., Ananny 2016; Mittelstadt et al. 2016), but more studies of these issues in the financial realm are needed.

Appendix

List of Subsample Interviews.

| Interview # | Type of Firm | Role | Location | Date |
|---|---|---|---|---|
| 1 | Investment bank | Developer | London | November 6, 2017 |
| 2 | Hedge fund | Machine-learning researcher | London | November 7, 2017 |
| 3 | Algorithmic trading firm | Machine-learning engineer | London | November 22, 2017 |
| 4 | Hedge fund | Senior research scientist | New York | December 12, 2017 |
| 5 | Hedge fund | Head of computer trading | New York | December 6, 2017 |
| 6 | Hedge fund | Trading algorithm engineer | Chicago | October 25, 2017 |
| 7 | Algorithmic trading firm | Fund manager | Chicago | October 16, 2017 |
| 8 | Hedge fund | Chief scientist and CTO | San Francisco | January 22, 2018 |
| 9 | Algorithmic trading firm | Quant trading analyst | Chicago | January 24, 2018 |
| 10 | Algorithmic trading firm | Head of market risk | London | August 31, 2018 |
| 11 | Algorithmic trading firm | CTO | London | August 31 and November 28, 2018 |
| 12 | Algorithmic trading firm | Infrastructure engineer | London | August 31, 2018 |
| 13 | Pension fund | Quant portfolio manager | London | January 30, 2018 |
| 14 | Clearing bank | Machine-learning quant | Amsterdam | April 12, 2018 |
| 15 | Analytics vendor | Machine-learning quant | New York | May 29, 2018 |
| 16 | Consultant | Quant trader and machine-learning specialist | Spain | May 31, 2018 |
| 17 | Hedge fund | Quant analyst | Paris | June 19, 2018 |
| 18 | Hedge fund | Researcher | London | June 25, 2018 |
| 19 | Analytics vendor | Head of research | London | June 26, 2018 |
| 20 (Curtis Jadwin) | Brokerage firm | Global head of product management and head of Europe, Middle East, and Africa region | London | September 5, 2018 |
| 21 | Hedge fund | Director of investment strategies | London | September 6, 2018 |
| 22 | Technology vendor | CSO | London | September 20, 2018 |
| 23 | Hedge fund | Deputy head of research | London | October 11, 2018 |
| 24 | Hedge fund | Quant researcher | London | November 2, 2018 |
| 25 | Analytics vendor | CEO | London | November 22, 2018 |
| 26 | Hedge fund | Senior quant analyst | London | December 20, 2018 |
| 27 | Hedge fund | Fund manager | London | March 5, 2019 |
| 28 | Investment bank | E-trading risk quant | London | March 28, 2019 |
| 29 | Brokerage firm | Machine-learning quant | London | September 26, 2018 |
| 30 (Louis Winthorpe) | Brokerage firm | Head of quant trading | London | June 6, 2019 |
| 31 | Algorithmic trading firm | Algorithmic trading lead | Chicago | October 20, 2017, and May 23, 2018 |
| 32 | Exchange | Head of quant research | New York | March 19, 2019 |
| 33 | Investment management firm | Senior quant analyst | Philadelphia | March 20, 2019 |
| 34 | Technology vendor | N/A | Chicago | March 22, 2019 |
| 35 | Investment management firm | Senior analyst/quant developer | London | November 6, 2017 |
| 36 | Algorithmic trading firm | CEO | London | November 6, 2017 |
| 37 | Exchange | CTO | London | November 7, 2017 |
| 38 | Brokerage firm | Quant trader | London | January 30, 2018 |
| 39 | Algorithmic trading firm | Quant developer | London | June 25, 2018 |
| 40 | Algorithmic trading firm | Quant developer | London | June 25, 2018 |
| 41 | Algorithmic trading firm | Quant developer | London | June 25, 2018 |
| 42 | Consultancy | Quant strategist | London | June 26, 2018 |
| 43 | Algorithmic trading arm of large financial services firm | Global head of algorithmic trading | New York | December 11, 2017 |
| 44 | Investment bank | Algorithmic trading analyst | New York | December 11, 2017 |
| 45 | Hedge fund | Quant analyst | New York | December 10, 2017 |
| 46 | Broker–dealer firm | Chief strategy officer | New York | December 7, 2017 |
| 47 | Research and analytics vendor | Senior quant analyst | New York | December 6, 2017 |
| 48 | Investment bank | Director of quant trading | New York | December 5, 2017 |
| 49 | Hedge fund | Quant research analyst | New York | December 5, 2017 |
| 50 | Investment bank | Quant trading analyst | New York | December 5, 2017 |
| 51 | Algorithmic trading firm | Quant trader | Chicago | September 23, 2017 |
| 52 | Algorithmic trading firm | Quant analyst | Chicago | September 20, 2017 |
| 53 | Algorithmic trading firm | Quant researcher | Chicago | September 20, 2017 |
| 54 | Consultancy | Quant developer | Chicago | June 19, 2018 |
| 55 | Technology vendor | Quant developer | Chicago | June 19, 2018 |
| 56 | Algorithmic trading firm | Quant analyst | Chicago | June 19, 2018 |
| 57 | Algorithmic trading firm | Quant analyst | Chicago | June 22, 2018 |
| 58 | Investment management firm | Data engineer | Chicago | June 25, 2018 |
| 59 | Hedge fund | Founder | Chicago | June 28, 2018 |
| 60 | Investment bank | Quant researcher | New York | July 30, 2018 |
| 61 | Investment bank | Head of quant trading | New York | July 31, 2018 |
| 62 | Investment bank | Quant modeling and strategy | New York | July 31, 2018 |
| 63 | Brokerage firm | Quant research analyst | New York | August 6, 2018 |
| 64 | Commodity trading advisor | Chief scientist | London | January 29, 2018 |
| 65 | Algorithmic trading firm | Former CTO | Amsterdam | March 9, 2018 |
| 66 | Algorithmic trading firm | Developer and trader | Amsterdam | April 12, 2018 |
| 67 | Investment bank | Quantitative analyst | London | June 26, 2018 |
| 68 | Algorithmic trading firm | Development process manager | Amsterdam | June 27, 2018 |
| 69 (Sherman McCoy) | Investment management firm | Trading research quant | London | July 14, 2018 |
| 70 | Investment management firm | Head of quant risk and analysis | London | May 9, 2018 |
| 71 | Investment bank | Quant systematic investments | London | May 11, 2018 |
| 72 | Hedge fund | Quant analyst | London | November 12, 2018 |
| 73 | Analytics vendor | Quant researcher and founder | Bucharest, Romania | November 22, 2018 |

Note: CEO = Chief Executive Officer; CSO = Chief Scientific Officer; CTO = Chief Technology Officer.

Acknowledgments

I want to thank Maria Jose Schmidt-Kessen, Per H. Hansen, and Thomas Presskorn-Thygesen for their comments on an early draft of this paper and fellow “AlgoFinance” team members Bo Hee Min, Daniel Souleles, and Christian Borch for engaging in ongoing discussions of model use in finance. I am also grateful to the Science, Technology, & Human Values editors and the two anonymous reviewers for their constructive reading of this paper and insightful comments.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 Research and Innovation Program (grant agreement no. 725706).