Abstract

I was interested to read the paper by Miller and colleagues published in the April 2020 issue of Ear, Nose & Throat Journal. 1 Telemedicine is an increasingly prevalent component of medical practice. In otolaryngology, telemedicine services can potentially be performed in conjunction with a device such as a nasolaryngoscope. The authors aimed to evaluate the reliability of remote examinations of the upper airway based on an iPhone recording of a nasopharyngolaryngoscopy (NPL). The NPL was performed with a coupling device attached to a smartphone to record the examination. A second, remote otolaryngologist then evaluated the recorded examination. Both otolaryngologists documented their findings in a survey covering the following anatomic sites: nasopharynx, oropharynx, base of tongue, and larynx (including the subsites of epiglottis, arytenoids, aryepiglottic folds, false vocal cords, and true vocal cords), together with patency of the airway and diagnostic impression. The survey results were compared for inter-rater agreement using the κ statistic. The authors reported that 45 patients underwent an NPL. The inter-rater κ for overall diagnosis was 0.74 with 80% agreement, rated as “good.” Other anatomic subsites with “good” or better inter-rater agreement were nasopharynx (0.75), oropharynx (0.75), and true vocal cords (0.71), with strong percentage agreement of 89%, 91%, and 87%, respectively.

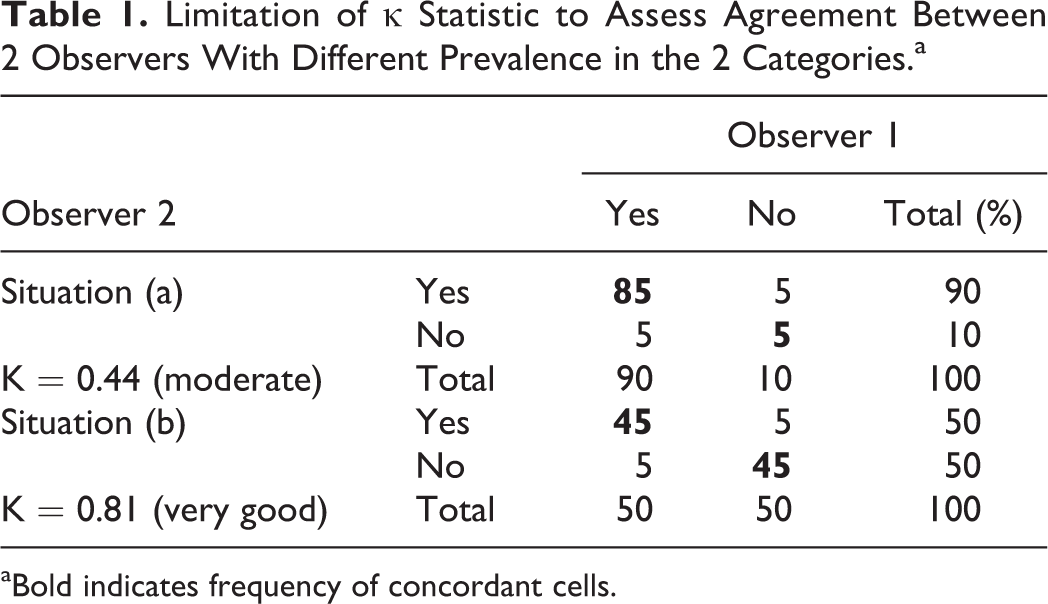

I want to congratulate the authors on this successful article and offer some comments. The main purpose of my letter is to point out methodological limitations of the κ statistic for assessing reliability (agreement). First, the κ statistic depends on the prevalence in each category. In 2 tables, the concordant cells can account for 90% of observations and the discordant cells for 10%, and yet the κ coefficients differ (0.44, interpreted as moderate, vs 0.81, interpreted as very good; Table 1). Second, the κ statistic depends on the number of categories. 2-8 I should mention that weighted kappa would be a good choice to assess intra-rater agreement, whereas Fleiss’ kappa is suggested to assess inter-rater agreement. Briefly, for quantitative variables, the intraclass correlation coefficient should be used, and for qualitative variables, weighted kappa should be used. 2-8 The authors concluded that a telemedicine device for NPL use demonstrates strong diagnostic accuracy across providers and good overall evaluation. It is crucial to recognize that accuracy (validity) and reliability (agreement) are 2 completely different methodological issues. In brief, any conclusion on accuracy or reliability should be based on the correct statistical approach. Otherwise, misinterpretation may occur.
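The prevalence effect described above can be illustrated with a minimal Python sketch. It computes Cohen’s κ as (observed agreement − chance agreement) / (1 − chance agreement) for 2 hypothetical 2 × 2 tables of 100 patients each. The counts are assumptions chosen only for illustration and are not the data of Table 1; both tables have 90% concordant and 10% discordant observations, but the 2 categories are very unequal in one table and balanced in the other.

```python
def cohen_kappa(table):
    """Cohen's kappa for a square agreement table.

    Rows are rater 1's categories, columns are rater 2's categories.
    """
    n = sum(sum(row) for row in table)
    k = len(table)
    # Observed agreement: share of observations on the diagonal.
    p_observed = sum(table[i][i] for i in range(k)) / n
    # Chance agreement: product of the two raters' marginal proportions.
    row_totals = [sum(row) for row in table]
    col_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]
    p_expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / n**2
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical counts (assumed for illustration, not taken from Table 1).
# Both tables: 90 concordant and 10 discordant observations out of 100.
skewed = [[85, 5], [5, 5]]      # one category dominates (90% vs 10%)
balanced = [[45, 5], [5, 45]]   # categories equally prevalent (50% vs 50%)

kappa_skewed = cohen_kappa(skewed)
kappa_balanced = cohen_kappa(balanced)

print(f"skewed prevalence:   kappa = {kappa_skewed:.2f}")
print(f"balanced prevalence: kappa = {kappa_balanced:.2f}")
```

With these assumed counts, the skewed table gives κ of about 0.44 and the balanced table about 0.80, although the percentage agreement is 90% in both. The difference arises entirely from the chance-agreement term, which is 0.82 in the skewed table and 0.50 in the balanced one.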

Table 1. Limitation of the κ Statistic to Assess Agreement Between 2 Observers With Different Prevalence in the 2 Categories.a

a Bold indicates frequency of concordant cells.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.