Abstract

Conducting a functional analysis (FA) is considered the gold standard for assessing the function of disruptive behavior and informing function-based treatment plans for individuals with autism and developmental disabilities. However, data collected during FAs are subject to human error. Accelerometers are wearable sensors that capture an individual’s movement and can be used to identify behavioral events. The purpose of this study was to pilot the use of accelerometers to identify self-injurious behavior events during an FA. Three participants with autism who engaged in self-hitting participated in this study. Researchers conducted an FA with each participant while the participant wore small accelerometer devices. Data were collected (1) through live observation (“clinical-grade”), (2) through frame-by-frame video analysis (“research-grade”), and (3) via accelerometers. Researchers calculated interobserver agreement across data sets. Discussion of results and recommendations for practice and future research are included.

Introduction

Autism spectrum disorder (ASD) is a neurodevelopmental condition characterized by impaired social interaction, communication challenges, and repetitive behaviors (American Psychiatric Association, 2013). Up to 42% of individuals with ASD also engage in destructive behavior, such as self-injurious behavior, aggression, or property destruction (Steenfeldt-Kristensen et al., 2020). These behaviors can lead to physical injury, social isolation, limited educational or vocational opportunities, and overall diminished quality of life (Leader et al., 2021). Additionally, these behaviors often highlight the limitations and inadequacies of current educational, healthcare, and social service systems in effectively identifying, managing, and supporting individuals who engage in self-injurious and other destructive behaviors (Crowe & Drew, 2021; Rava et al., 2017; Righi et al., 2018; Wink et al., 2018).

Therapy based on the science of behavior analysis uses evidence-based assessment and intervention procedures to treat destructive behavior, based on the underlying theory that individuals engage in these behaviors to signal a want or need (Carr & Durand, 1985; Iwata et al., 1994). To identify this need, behavior analysts conduct a functional analysis (FA) to determine the function of the behavior (Beavers et al., 2013; Carr & Durand, 1985; Ghaemmaghami et al., 2021; Iwata et al., 1994). Within an FA, a behavior analyst systematically simulates conditions that are hypothesized to occasion the behavior (e.g., losing access to attention, losing access to a tangible item, or being asked to complete a task). The analyst then collects data on the occurrence of the target behavior in each condition. The condition in which the behavior occurs at the highest frequency, longest duration, or shortest latency indicates a potential function (Beavers et al., 2013; Carr & Durand, 1985; Iwata et al., 1994). The results of this analysis are then used to inform individualized interventions that target the specific needs and behavioral patterns of the individual (Chezan et al., 2018; Ghaemmaghami et al., 2021; Hagopian et al., 1998).

Because the data collected in an FA inform treatment decisions, it is important that the data are objective and accurate (Baer et al., 1968). Current best practices rely on observational measurements from one or two human raters using continuous (e.g., frequency, duration, latency) or discontinuous (e.g., partial-interval recording) measures (Cooper et al., 2021). However, live data collection is prone to errors, which can in turn lead to errors in treatment planning (Morris et al., 2022; Vollmer et al., 2008). For example, one such error, “rater drift,” occurs when a human rater’s recording changes over the course of an observation or across multiple observations (Cooper et al., 2021; Kazdin, 2021). To address this source of error, raters may be retrained or systematically calibrated with other raters to ensure reliability of the data (Kazdin, 2021). Calibration involves recruiting a second rater to collect data simultaneously on the same behavior; the second rater’s data are then compared with those of the primary rater. Highly reliable data (e.g., above 80% agreement) convey confidence in the data. While this method can reduce errors, it is not a feasible option for many under-resourced settings (Angell et al., 2018). More importantly, highly

Many researchers have noted specific situations in which human rater data can pose limitations. For example, when an individual engages in the behavior at high frequencies, it is difficult for the human rater to capture every response (Delgado et al., 2017; Neely et al., 2022). In such cases, clinicians often employ discontinuous measures, such as partial-interval or whole-interval recording (Cooper et al., 2021; Kazdin, 2021). However, these measures are also subject to error, overestimating or underestimating the occurrence of the behavior (Fiske & Delmolino, 2012). For individuals with severe and potentially dangerous behaviors, it may be important to capture every behavioral occurrence to ensure treatment decisions are individualized and effective.

One possible solution to address these limitations is to leverage wearable technologies to supplement data collection (Goodwin et al., 2019; Lory et al., 2023; Neely et al., 2022; Scheithauer et al., 2022). For example, wearable technologies such as an accelerometer may be affixed to the body to automatically collect linear acceleration data along three orthogonal axes, expressed in units of g (1 g = 9.81 m/s²). Based on Newton’s second law (i.e., net force = mass × acceleration), a spike in acceleration signals a potentially “forceful” behavioral event. Recent research by Neely et al. (2025) investigated the reliability of wearable devices containing an accelerometer to identify behavioral events during an FA. In their study, the wearable device captured every response, including several that the human rater missed because the responses occurred in rapid succession. Additionally, the sensors identified behavior without delay, whereas the human rater’s recordings were delayed by up to 9 s. These results indicate that wearable sensors may be an accurate tool to supplement measurement of behavior during an FA.

While promising, the study by Neely et al. (2025) enrolled only one participant, and the researchers noted several limitations in their methodology for identifying behavioral events. First, because the acceleration range of the device was limited (<6 g), the researchers used angular velocity measured by a gyroscope as a proxy for acceleration. Second, the researchers evaluated only the potential for the devices to capture behavioral events (true positives). They did not evaluate the potential for false positives, false negatives, or true negatives; that is, the researchers did not fully investigate the “accuracy” of the devices. Therefore, the purpose of this study was to extend the previous work and investigate the use of wearable accelerometer technology to identify behavior events during FAs. Additionally, we sought to determine whether decisions regarding the function of behavior during an FA would be affected by the use of accelerometer data. Our research questions were:

Can wearable accelerometer technology reliably capture frequency of behavior during an FA?

What is the statistical accuracy of the accelerometer ratings?

Would data collected via an accelerometer change decision making regarding function of behavior during an FA?

Methods

Participants

Participants were included in this study based on the following criteria: they (1) had a diagnosis of ASD, (2) engaged in self-hitting behaviors, (3) were able to wear the accelerometer devices for the duration of the session, (4) had no reported allergies to the adhesive used to secure the accelerometer to the body, (5) had a caregiver who consented to the research procedures, and (6) assented to the research. To assess assent and the ability to wear the devices, the researchers conducted 5 min wearable probes with each participant. The researchers defined assent as the participant leaving the device on, and dissent as moving, removing, or playing with the device during the probe. If the participant moved, removed, or played with the device, the researcher re-presented the devices two more times. If the participant still moved, removed, or played with them, the researcher treated the response as dissent. Researchers recruited a total of 12 participants for this study, and their caregivers consented to the procedures. Of the 12 participants, eight assented to wearing the devices and four were excluded because they did not assent. Of the eight participants who wore the devices, three were excluded because they did not engage in the behavior during sessions, two were excluded because they did not engage in the target topography of behavior (i.e., self-hitting), and three completed this study. The researchers also monitored for signs of dissent (e.g., attempts to remove the sensors) following these initial probes; the participants did not engage in any dissent behaviors for the remainder of the study.

All participants were diagnosed with ASD and engaged in self-hitting behaviors. Kayla, a 9-year-old Hispanic female, and Ryder, an 8-year-old Hispanic male, engaged in self-hitting behavior consisting of using an open or closed fist to contact their head or body. John, a 14-year-old Hispanic male, engaged in self-hitting behavior consisting of using an open or closed fist to contact his head. All participants were able to wear the accelerometer devices for the duration of the session and had no reported allergies to the adhesive used to secure the accelerometer to the body. All participants were nonvocal and used a combination of gestures and augmentative communication devices to express their needs and wants. All participants were able to follow one-step instructions.

Settings and Materials

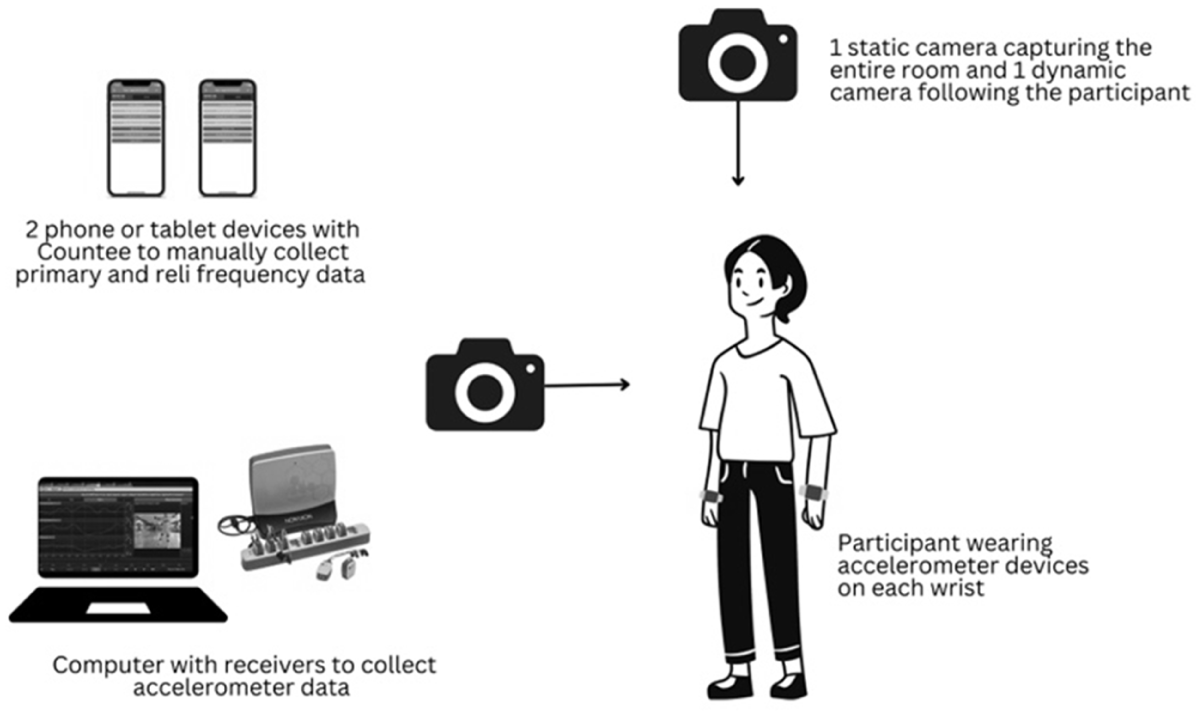

Researchers conducted sessions at a university-run lab located in an outpatient primary healthcare clinic. The treatment rooms were equipped with worktables, lounging furniture, and age-appropriate stimuli tailored to each participant. The session materials included video recording devices, live data collectors, and Noraxon accelerometers. The accelerometers had a signal range of 0 to 400 g and sampled data at 500 Hz. The upper limit of this range (400 g) is more than twice the peak acceleration recorded in Neely et al. (2025) and was therefore chosen to capture all impacts. Figure 1 illustrates the setup of the cameras, live data collectors, and accelerometer data receivers in the treatment room. Researchers positioned two cameras facing the participant. Participants wore accelerometers on their wrists, and the receiver and computer were also located in the room. Additionally, two human raters used iPhone devices to collect live data during each session. Videos were recorded at 30 frames per second.

Technology set-up.

Response Measurement and Interobserver Agreement

A trained researcher collected frequency data in vivo during the FA sessions, or post-session from video recordings viewed in real time, using the Countee Data Application (Hernandez, 2020). Countee is a free mobile application with customizable buttons that allows for measurement of behavioral events using frequency or duration recording methods. Countee can capture data at a rate of one data point per second. During the FA, raters collected data on

The raw data from Countee were divided into 10 s intervals, and interobserver agreement (IOA) was calculated using the interval-by-interval method (Kazdin, 2021). This method involves counting the number of intervals in which both observers agreed on the presence or absence of the behavior, dividing this number by the total number of observation intervals, and multiplying by 100 to obtain a percentage. An interval was scored as an agreement if both raters scored the occurrence of the behavior within that 10 s interval or both scored its nonoccurrence. The clinical-grade data set met the minimum quality criterion, with agreement between the two raters above 80%.
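The interval-by-interval calculation above can be sketched as follows. This is a minimal illustration, not the authors’ code: it assumes each rater’s Countee record has already been exported as a list of event times in seconds from session start (the actual export format is not described here), and the function names are hypothetical.

```python
import math

# Illustrative sketch; function names and the input format are hypothetical.


def occurrence_by_interval(event_times_s, session_s, interval_s=10.0):
    """Return one boolean per interval: True if any event fell inside it."""
    n_intervals = math.ceil(session_s / interval_s)
    occurred = [False] * n_intervals
    for t in event_times_s:
        # Events stamped at or after the session end are clamped into the last interval.
        idx = min(int(t // interval_s), n_intervals - 1)
        occurred[idx] = True
    return occurred


def interval_by_interval_ioa(times_a, times_b, session_s, interval_s=10.0):
    """Percent of intervals in which both raters agree on occurrence or nonoccurrence."""
    a = occurrence_by_interval(times_a, session_s, interval_s)
    b = occurrence_by_interval(times_b, session_s, interval_s)
    agreements = sum(1 for x, y in zip(a, b) if x == y)
    return 100.0 * agreements / len(a)


# Hypothetical 60 s session: event times (s) from two raters.
rater_1 = [3, 12, 47]
rater_2 = [4, 25, 48]
print(round(interval_by_interval_ioa(rater_1, rater_2, session_s=60), 1))  # 66.7
```

Here the raters agree in four of six intervals (both scored intervals 1 and 5; neither scored intervals 4 and 6), giving 66.7%, which would fall below the 80% criterion.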

Procedural Fidelity

A procedural task list was created, and two raters collected procedural fidelity data for 100% of the FA sessions. The task list included evaluations of the experimenters’ adherence to study procedures. Raters coded a “+” if the implementer performed a step and a “−” if the step was absent. Procedural fidelity was calculated by dividing the number of steps completed correctly in a session by the total number of opportunities and multiplying the result by 100.
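As a worked example of the fidelity formula, the sketch below scores one session’s task list. It assumes the “+”/“−” codes are stored as a list of strings; the function name is hypothetical, and accepting both an ASCII hyphen and the Unicode minus sign is a convenience assumption, not part of the study procedures.

```python
# Illustrative sketch; the function name and list-of-codes input are hypothetical.
CORRECT = "+"
INCORRECT = {"-", "\u2212"}  # accept an ASCII hyphen or the Unicode minus sign


def procedural_fidelity(codes):
    """Percent of task-list opportunities scored '+' in one session."""
    if not codes:
        raise ValueError("no scored opportunities")
    unknown = [c for c in codes if c != CORRECT and c not in INCORRECT]
    if unknown:
        raise ValueError(f"unrecognized codes: {unknown}")
    return 100.0 * codes.count(CORRECT) / len(codes)


# Hypothetical session with one missed step out of four.
print(procedural_fidelity(["+", "+", "\u2212", "+"]))  # 75.0
```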

General Procedures

For all participants, the researchers first conducted an FA with live clinical coding (“clinical-grade”), which involved real-time data collection either during the FA or from video recordings post-session. After completing the FA, researchers conducted advanced data analysis to generate a “research-grade” data set based on frame-by-frame analysis of video recordings and an accelerometer data set based on the continuously collected acceleration data. The details of how each data set was generated are described in the response measurement section. Finally, researchers calculated IOA for the clinical-grade and accelerometer data sets against the research-grade data set.

Functional Analysis

Before the FA sessions with the participant, the researcher administered the

Kayla’s mother completed the QABF and FAI for her. Kayla’s mother reported that her behavior was socially maintained by access to attention. She also indicated potential escape from demand and escape from attention functions. All of these functions except escape from attention were confirmed during the observation. Therefore, researchers conducted an FA adapted from the Iwata et al. (1994) model, with the addition of a tangible condition and 5 min sessions, and individualized for the participant. The FA included five to eight sessions of each condition, and the sequence of conditions was randomized to control for order effects.
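One simple way to randomize the session sequence is to list the planned sessions for each condition and shuffle them. The study does not describe its randomization method, so this is only a sketch under that assumption: the condition names and session counts are illustrative, and block randomization (each condition once per block) would be a reasonable alternative.

```python
import random

# Illustrative sketch; condition labels and counts are hypothetical.


def randomized_sequence(sessions_per_condition, seed=None):
    """Build a session list with the requested count per condition, then shuffle it."""
    rng = random.Random(seed)  # seeded generator so a schedule can be reproduced
    sequence = [
        condition
        for condition, n_sessions in sessions_per_condition.items()
        for _ in range(n_sessions)
    ]
    rng.shuffle(sequence)
    return sequence


# Hypothetical plan: counts per condition are illustrative, not the study's.
plan = {"attention": 5, "escape": 6, "tangible": 5, "play": 6}
schedule = randomized_sequence(plan, seed=42)
print(len(schedule), schedule[:4])
```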

To initiate the attention condition, the researcher directed the participant to play with the stimuli in the room and stated that they “had to go do work.” The researcher then looked down at a clipboard, signaling that their attention was unavailable. The researcher did not provide attention for the participant’s nontarget behaviors (e.g., requests for attention). Contingent on the target problem behavior, the researcher provided brief attention (e.g., 15 s) and asked, “What do you need?” Every instance of the target behavior was reinforced.

In the escape from demand condition, the researcher initiated instructional trials using a three-step least-to-most prompting procedure (i.e., verbal, model, and physical prompts) with an inter-prompt interval of 3 s (Rispoli et al., 2011). Target problem behavior resulted in the termination of the instructional sequence for 15 s. Every instance of the target behavior was reinforced. Upon completion of a task, the researcher provided a neutral statement and presented the next task.

In the tangible condition, the researcher stayed in the room and kept close to the participant. Before starting the session, the researcher provided the participant with 30 s of access to the preferred tangible item. After 30 s, the researcher initiated the session by saying “my turn” and placing the item in sight but out of reach. The researcher ignored any non-target behaviors and did not provide programmed consequences for appropriate behavior (e.g., requesting). If the participant engaged in the target behavior, the researcher immediately presented the item for 15 s, saying “okay, your turn.” Every instance of the target behavior was reinforced.

During the play condition, the participant had access to preferred stimuli, and the researcher stated, “We can do whatever you want, play or hang out.” The researcher provided attention on a fixed time schedule (e.g., every 30 s). The researcher also responded to all participant bids for attention. The researcher did not provide any consequences for target behavior.

John’s mother and brother completed the QABF and FAI for him. John’s family reported that his behavior was automatically maintained, with potential social functions. John’s family also reported a potential diverted attention function. For John, researchers conducted an FA adapted from the Iwata et al. (1994) model, with the addition of tangible and diverted attention conditions and 10 min sessions, and individualized for the participant. Each condition was repeated several times, and the sequence of conditions was randomized to control for order effects.

For John, researchers conducted the social conditions (e.g., access to tangibles) using the same procedures as outlined for Kayla above. For the diverted attention condition, the researcher placed highly preferred items in the session room because John’s mother reported that she provided John with preferred items when she knew her attention would be diverted; the condition was therefore designed to simulate this natural situation. The researcher informed John that the researcher’s attention would be unavailable, stating, “You can hang out John, I have to go work/talk.” The researcher moved away from the participant and engaged in continuous conversation with the assistant. The researcher did not respond to any bids for attention and did not provide any programmed consequences for appropriate behavior. When target problem behavior occurred, the researcher paused the conversation, delivered 30 s of attention, and then resumed the conversation with the assistant. In the alone condition, the researcher stayed in the room with the participant, and John had access to moderately preferred items. During the session, the researcher ignored all appropriate responses and did not provide any programmed consequences for target problem behavior. A second researcher was also present to block self-hitting when it persisted for more than 10 s, based on a predetermined safety criterion established in consultation with John’s family. This threshold was selected because observations indicated that the force of John’s self-hitting occasionally escalated with continued engagement. Blocking was implemented as a brief, protective measure to prevent escalation and ensure safety. Importantly, blocking was limited to momentary physical interruption (1 s) and did not involve removal from the environment, redirection, or delivery of any form of reinforcement.
Beyond this safety procedure, the researcher did not interact with John to minimize potential interference with the functional analysis.

Ryder’s mother completed the QABF and FAI for him. She reported that his behavior was maintained by escape from demands and access to tangibles, that once the behavior began it was difficult to de-escalate, and that the behavior occurred quickly when a preferred item was restricted or a task was presented. Based on this report, the researchers conducted a latency-based FA (Falcomata et al., 2014; Thomason-Sassi et al., 2011) that included escape from demand, access to tangible, and play (control) conditions. During each session, the researcher reinforced the first instance of the target behavior and terminated the session immediately after it occurred. If the target behavior did not occur, the session was terminated after 2 min.
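The latency measure for each session can be sketched as below, assuming the time of the first target response is available in seconds from session start (or `None` if no response occurred). The function names are hypothetical. Sessions that reach the 2 min cap are censored: their true latency is only known to be at least 120 s, so averages that include them understate the latency for that condition.

```python
# Illustrative sketch; function names and the input format are hypothetical.
SESSION_CAP_S = 120.0  # sessions ended after 2 min if the target behavior did not occur


def session_latency(first_response_s, cap_s=SESSION_CAP_S):
    """Return (latency in s, whether the behavior occurred) for one session.

    first_response_s is the time of the first target response from session
    start, or None if no response occurred before the cap.
    """
    if first_response_s is None or first_response_s > cap_s:
        # Censored session: the true latency is only known to be at least cap_s.
        return cap_s, False
    return float(first_response_s), True


def latencies_by_condition(sessions):
    """Group (condition, first_response_s) pairs into per-condition latency lists."""
    grouped = {}
    for condition, first_response_s in sessions:
        latency, _ = session_latency(first_response_s)
        grouped.setdefault(condition, []).append(latency)
    return grouped


# Hypothetical sessions, not the study's data.
sessions = [("demand", 8.0), ("tangible", 15.5), ("play", None), ("demand", 4.0)]
print(latencies_by_condition(sessions))
```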

Clinical-Grade Data Set

During the FA sessions, a trained researcher collected live data on the target behaviors using the Countee application (as described in the

Research-Grade Data Set

To prepare the research-grade data set, trained researchers used Adobe Premiere Pro (Adobe Inc., San Jose, CA) to conduct a manual frame-by-frame analysis of the FA videos (using both the static and moving camera angles) and marked behavioral events according to the predefined behavioral definitions. Both sound and visual cues were used to identify the exact frame number (1 frame = 1/30 s) at which the impact of each behavioral event occurred. Raters could review the videos frame by frame as many times as necessary to ensure precise data collection. Raters discussed any discrepancies until they reached 100% interrater agreement for this data set. These data served as the “ground truth” data set for data analysis; in statistics and machine learning, “ground truth” refers to the correct or “true” answer to a given problem or question (Krig, 2014). Events that occurred outside the cameras’ view were not marked.
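To compare data sets interval by interval, frame-stamped video events (30 fps) and sample-stamped accelerometer events (500 Hz) both need to be converted to seconds and binned into 10 s intervals. The sketch below shows one way to do this. It assumes that index 0 of each stream corresponds to session start (the synchronization procedure is not described here), and the function name is hypothetical.

```python
import math

# Illustrative sketch; assumes all streams share a common session start at index 0.
VIDEO_FPS = 30   # frames per second reported for the video recordings
ACCEL_HZ = 500   # samples per second reported for the accelerometers


def counts_per_interval(event_indices, rate_hz, session_s, interval_s=10.0):
    """Count events per interval from frame (or sample) indices."""
    n_intervals = math.ceil(session_s / interval_s)
    counts = [0] * n_intervals
    for index in event_indices:
        t = index / rate_hz  # e.g., frame 300 at 30 fps -> 10.0 s
        bin_index = min(int(t // interval_s), n_intervals - 1)
        counts[bin_index] += 1
    return counts


# Hypothetical impact frames marked in a 60 s video.
print(counts_per_interval([30, 299, 300, 1500], VIDEO_FPS, session_s=60))
# [2, 1, 0, 0, 0, 1]
```

Frame 299 (about 9.97 s) falls in the first interval, while frame 300 (exactly 10.0 s) starts the second; the same function handles accelerometer sample indices by passing `ACCEL_HZ`.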

Accelerometer Data Set

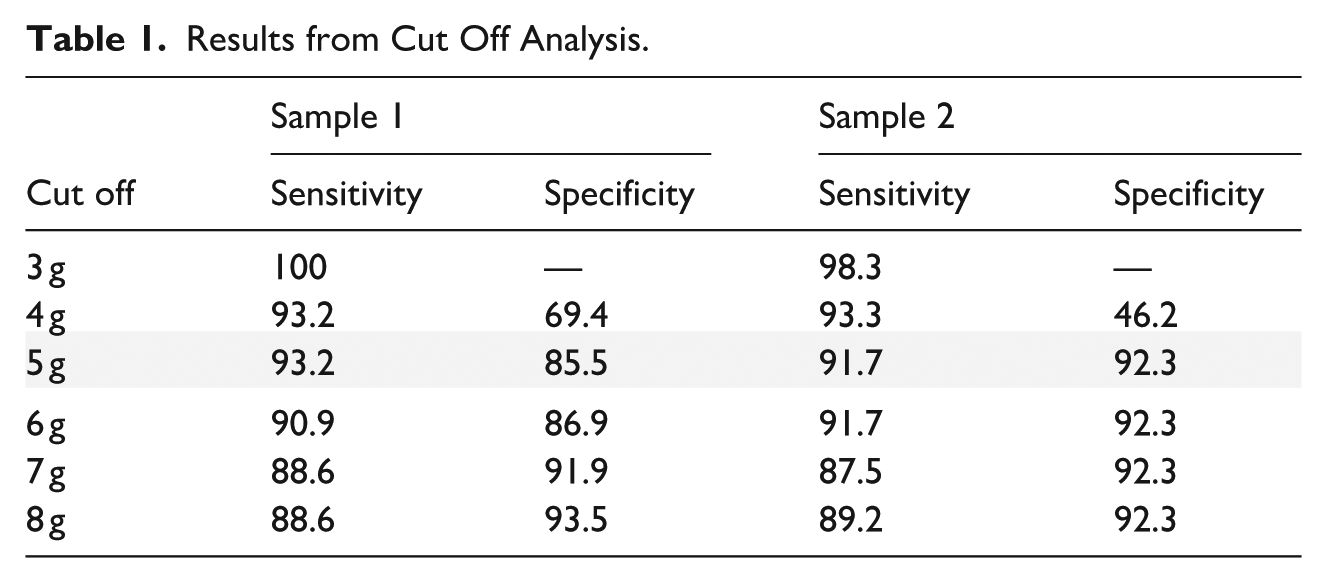

To establish the optimal threshold to identify target behavioral events, researchers analyzed two random samples from Kayla and John that included all impacts exceeding 3 g. Sensitivity and specificity of event detection were evaluated across six threshold values ranging from 3 g to 8 g. Results from this analysis are presented in Table 1. As seen in Table 1, using 3 g and 4 g as a cutoff resulted in higher sensitivity but lower specificity. Using 7 g and 8 g as a cutoff resulted in higher specificity but lower sensitivity. Prioritizing sensitivity, researchers established 5 g as the optimal threshold to identify behavioral events from the continuous stream of acceleration data for hitting.

Results from Cutoff Analysis.
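The cutoff analysis described above can be sketched as a threshold sweep over candidate impacts. This sketch assumes each candidate is summarized by its peak resultant acceleration (in g) and labeled as a true behavioral event or not using the video record; whether the cutoff is inclusive (`>=`) is also an assumption. The function name and the toy data are hypothetical and do not reproduce Table 1.

```python
# Illustrative sketch; the function name and toy data are hypothetical.


def sweep_thresholds(peaks_g, is_behavior, thresholds_g):
    """Sensitivity and specificity of 'peak >= threshold' for each candidate cutoff."""
    results = []
    for threshold in thresholds_g:
        tp = fn = fp = tn = 0
        for peak, behavior in zip(peaks_g, is_behavior):
            detected = peak >= threshold
            if behavior and detected:
                tp += 1
            elif behavior:
                fn += 1
            elif detected:
                fp += 1
            else:
                tn += 1
        sensitivity = tp / (tp + fn) if tp + fn else float("nan")
        specificity = tn / (tn + fp) if tn + fp else float("nan")
        results.append((threshold, sensitivity, specificity))
    return results


# Toy candidate impacts (peak resultant acceleration in g) labeled against video.
peaks = [3.5, 4.5, 6.0, 9.0, 12.0, 3.2, 4.1, 5.5, 7.5]
labels = [True, True, True, True, True, False, False, False, False]
for threshold, sens, spec in sweep_thresholds(peaks, labels, range(3, 9)):
    print(f"{threshold} g: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

As in the reported analysis, lower cutoffs trade specificity for sensitivity and higher cutoffs do the reverse; choosing the final cutoff remains a judgment about which error type matters more.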

The accelerometer data set was produced by scanning the data for instances when the resultant acceleration ($a_r = \sqrt{a_x^2 + a_y^2 + a_z^2}$)
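A minimal sketch of event detection from the resultant acceleration is shown below, assuming the three axis signals are already in units of g and sampled at 500 Hz, and using the 5 g cutoff identified in the cutoff analysis. The refractory period (100 ms), which stops ringing around a single impact from being counted twice, is a hypothetical choice, not a reported study parameter. Gravity contributes about 1 g to the resultant at rest; whether it was removed before thresholding is not stated here.

```python
import math

# Illustrative sketch; function names and the refractory period are hypothetical.


def resultant_g(ax, ay, az):
    """Resultant acceleration sqrt(ax^2 + ay^2 + az^2) for each sample (inputs in g)."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(ax, ay, az)]


def detect_impacts(ax, ay, az, fs_hz=500, threshold_g=5.0, refractory_s=0.1):
    """Return times (s) at which the resultant rises above threshold_g.

    A new event is counted only on an upward crossing that occurs more than
    refractory_s after the previously counted event.
    """
    a_r = resultant_g(ax, ay, az)
    refractory_samples = int(round(refractory_s * fs_hz))
    events = []
    last_event = -refractory_samples - 1  # lets the very first crossing count
    was_above = False
    for i, value in enumerate(a_r):
        is_above = value > threshold_g
        if is_above and not was_above and i - last_event > refractory_samples:
            events.append(i / fs_hz)
            last_event = i
        was_above = is_above
    return events


# Synthetic 2 s recording at 500 Hz: one impact with ringing, then a second impact.
n = 1000
ax, ay, az = [0.0] * n, [0.0] * n, [0.0] * n
ax[100], ax[101], ax[102] = 6.0, 2.0, 7.0  # ringing 4 ms later is ignored
ax[600] = -8.0                             # sign does not matter for the resultant
print(detect_impacts(ax, ay, az))  # [0.2, 1.2]
```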

Data Analysis

IOA Between Data Sets

Researchers computed IOA between each of the clinical-grade and accelerometer data sets and the research-grade data set to assess the reliability of each data collection method. The data sets were split into 10 s intervals and compared interval by interval: an interval was scored as an agreement when the recorded frequency of behavior matched across the two data sets and as a disagreement otherwise. IOA was calculated by dividing the number of intervals with agreement by the total number of intervals and multiplying by 100 to obtain a percentage (Kazdin, 2021). Additionally, each disagreement with the research-grade data set was classified as either a false positive (behavior recorded but absent in the research-grade data set) or a false negative (behavior not recorded but present in the research-grade data set).
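The comparison against the research-grade data set can be sketched as follows, assuming both data sets have already been reduced to per-interval counts. IOA here uses exact frequency matches, while the tally uses occurrence versus nonoccurrence. An interval in which both data sets record behavior but with different counts is therefore a frequency disagreement yet a true positive in the tally; how such intervals were handled in the study is not specified, so this is an assumption. The function name is hypothetical.

```python
# Illustrative sketch; the function name and per-interval count inputs are hypothetical.


def compare_to_ground_truth(test_counts, truth_counts):
    """Compare per-interval counts from one data set against the research-grade counts.

    Returns frequency-based IOA (%) and an occurrence-based tally of
    true/false positives and negatives.
    """
    if len(test_counts) != len(truth_counts):
        raise ValueError("data sets must cover the same intervals")
    tally = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    exact_matches = 0
    for test, truth in zip(test_counts, truth_counts):
        if test == truth:
            exact_matches += 1  # same frequency recorded in this interval
        if test > 0 and truth > 0:
            tally["TP"] += 1
        elif test > 0:
            tally["FP"] += 1  # behavior recorded but absent in ground truth
        elif truth > 0:
            tally["FN"] += 1  # behavior present in ground truth but not recorded
        else:
            tally["TN"] += 1
    ioa = 100.0 * exact_matches / len(truth_counts)
    return ioa, tally


# Hypothetical five-interval comparison.
print(compare_to_ground_truth([0, 2, 1, 0, 3], [0, 2, 0, 1, 2]))
```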

Researchers then calculated sensitivity (the ability of the tool to detect true positives) and specificity (the ability of the tool to detect true negatives). Sensitivity was calculated as the number of true positive intervals divided by the number of intervals that were either true positives or false negatives. Specificity was calculated as the number of true negative intervals divided by the number of intervals that were either true negatives or false positives. Finally, the researchers calculated Cohen’s kappa statistic to assess the level of agreement while accounting for both observed and chance-expected agreement (Sun, 2011). Researchers classified the results according to Landis and Koch (1977), with a kappa of 0.41 to 0.60 indicating “moderate” agreement, 0.61 to 0.80 indicating “substantial” agreement, and 0.81 to 1.00 indicating “almost perfect” agreement.
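The three statistics can be computed directly from the interval counts. The sketch below uses the standard two-category Cohen’s kappa, where expected agreement is the sum of the products of the marginal “occurred” and “did not occur” proportions; the function names are hypothetical. Applied to Kayla’s accelerometer counts reported in the Results (125 true positives, 689 true negatives, 16 false positives, 4 false negatives), it reproduces the reported values.

```python
# Illustrative sketch; function names are hypothetical.


def agreement_stats(tp, tn, fp, fn):
    """Sensitivity, specificity, and Cohen's kappa from interval counts."""
    n = tp + tn + fp + fn
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    p_observed = (tp + tn) / n
    # Chance agreement: both say "occurred" by chance plus both say "did not occur" by chance.
    p_expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / n ** 2
    kappa = (p_observed - p_expected) / (1 - p_expected)
    return sensitivity, specificity, kappa


def landis_koch_label(kappa):
    """Benchmark labels from Landis and Koch (1977)."""
    if kappa > 0.80:
        return "almost perfect"
    if kappa > 0.60:
        return "substantial"
    if kappa > 0.40:
        return "moderate"
    if kappa > 0.20:
        return "fair"
    if kappa >= 0.0:
        return "slight"
    return "poor"


# Kayla, accelerometer vs. research-grade (counts reported in the Results).
sens, spec, kappa = agreement_stats(tp=125, tn=689, fp=16, fn=4)
print(f"{sens:.2f} {spec:.2f} {kappa:.2f} {landis_koch_label(kappa)}")
# 0.97 0.98 0.91 almost perfect
```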

Function of Behavior

Researchers evaluated the function of the behavior using visual analysis (Cooper et al., 2021) and the modified visual inspection criteria (Roane et al., 2013) for Kayla and John, and the adapted structured visual inspection (SVI) criteria for latency-based FAs for Ryder (Sunde et al., 2022). Researchers prepared the FA data by first plotting the data as line graphs. The researchers also prepared an Excel spreadsheet with the raw data to facilitate calculation of the modified visual inspection criteria.

Visual Analysis

The research team evaluated the line graphs using visual analysis (Cooper et al., 2021; Kazdin, 2021). First, the lead researcher prepared descriptive data regarding the level, trend, and variability of data in each condition. The research team then evaluated the graph and descriptive data to determine the function of the target behavior.
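Visual analysis is a judgment-based process, but the descriptive data it draws on can be summarized numerically. The sketch below is one possible way, not the study’s procedure: level is the condition mean, trend is the least-squares slope across that condition’s sessions in order, and variability is reported as the SD and range. The function name and data are hypothetical.

```python
import statistics

# Illustrative sketch; the function name and data are hypothetical.


def describe_condition(values):
    """Level (mean), trend (least-squares slope per session), and variability."""
    n = len(values)
    level = statistics.mean(values)
    sessions = range(1, n + 1)  # session order within the condition
    x_bar = statistics.mean(sessions)
    sxx = sum((x - x_bar) ** 2 for x in sessions)
    sxy = sum((x - x_bar) * (y - level) for x, y in zip(sessions, values))
    trend = sxy / sxx if sxx else 0.0  # a single session has no trend
    sd = statistics.stdev(values) if n > 1 else 0.0
    return {"level": level, "trend": trend, "sd": sd, "range": (min(values), max(values))}


# Hypothetical per-session frequencies for two conditions.
fa_data = {"play": [3, 5, 4], "attention": [2, 4, 6]}
for condition, values in fa_data.items():
    print(condition, describe_condition(values))
```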

Modified Visual Inspection Criteria

To identify the function of behavior, the research team also applied the modified visual inspection criteria presented in Roane et al. (2013) for Kayla and John. Following the outlined procedures, the lead researcher first calculated the mean and standard deviation (SD) of the control condition data. The lead researcher then calculated the upper criterion line (UCL) by adding one SD to the control condition mean and the lower criterion line (LCL) by subtracting one SD from that mean. Finally, the lead researcher counted the number of data points in each condition that fell above the UCL and below the LCL, subtracted the number of points below the LCL from the number above the UCL, and divided the difference by the total number of points in the condition. Any result of 0.5 or above was coded as positive for the identified function. For Ryder, researchers conducted a latency-based FA and therefore applied the adapted SVI criteria for latency-based FAs (Sunde et al., 2022).
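The calculation described above can be sketched as follows. This is an illustration under two assumptions: the SD is the sample SD (the population SD would give slightly narrower criterion lines), and points exactly on a criterion line count as neither above nor below. The function names and data are hypothetical.

```python
import statistics

# Illustrative sketch; function names and data are hypothetical.


def criterion_lines(control):
    """UCL and LCL: control-condition mean plus and minus one SD."""
    m = statistics.mean(control)
    sd = statistics.stdev(control)  # sample SD; population SD is an alternative
    return m + sd, m - sd


def differentiation_score(condition, control):
    """(points above UCL - points below LCL) / total points in the condition."""
    ucl, lcl = criterion_lines(control)
    above = sum(1 for y in condition if y > ucl)
    below = sum(1 for y in condition if y < lcl)
    return (above - below) / len(condition)


# Hypothetical data: control (play) and one test condition.
control = [2, 4, 3, 3]
attention = [6, 7, 5, 8, 2, 4]
score = differentiation_score(attention, control)
print(round(score, 2), "differentiated" if score >= 0.5 else "not differentiated")
```

With a control mean of 3 and an SD of about 0.82, five attention points lie above the UCL and one below the LCL, giving (5 − 1)/6 ≈ 0.67, which meets the 0.5 criterion.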

Results

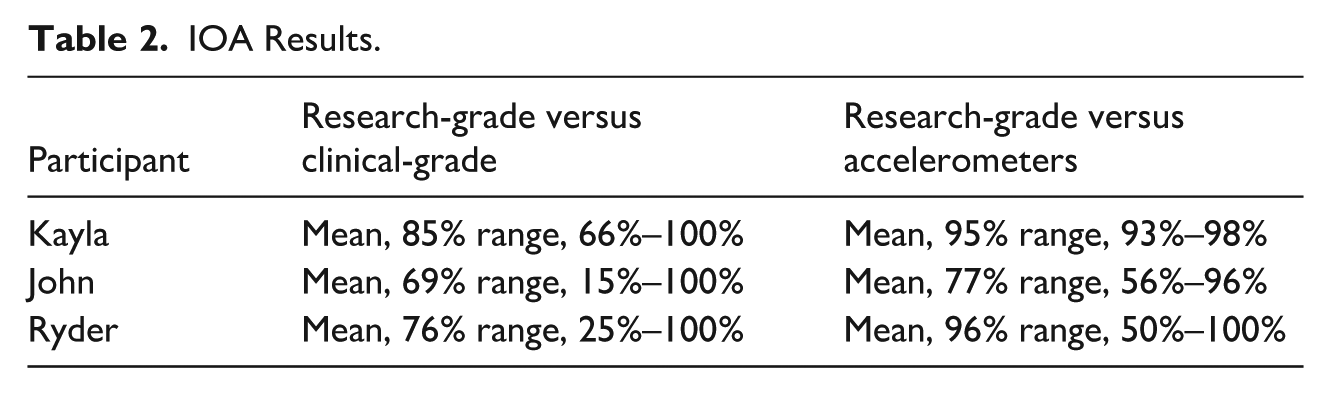

Q1. Can Wearable Technology Reliably Capture Frequency of Behavior During an FA?

The first research question focused on the reliability of the data captured during the FA. Table 2 presents the interval-by-interval IOA between the clinical-grade and accelerometer data sets as compared to the research-grade data set for Kayla. The table presents the average reliability across all sessions of the FA and the range of IOA for all sessions. For Kayla, the average agreement between the research-grade and the clinical-grade data set was 85% (range, 66%–100%). The average agreement between the research-grade and the accelerometer data set was 95% (range, 93%–98%).

IOA Results.

Table 2 also presents the interval-by-interval IOA for John between the clinical-grade and accelerometer data sets as compared to the research-grade data set. The average agreement between the research-grade and clinical-grade data set was 69% (range, 15%–100%), and the average agreement between the research-grade and the accelerometer data set was 77% (range, 56%–95%). For Ryder, the average agreement between the research-grade and clinical-grade data set was 76% (range, 25%–100%), and the average agreement between the research-grade and the accelerometer data set was 96% (range, 50%–100%).

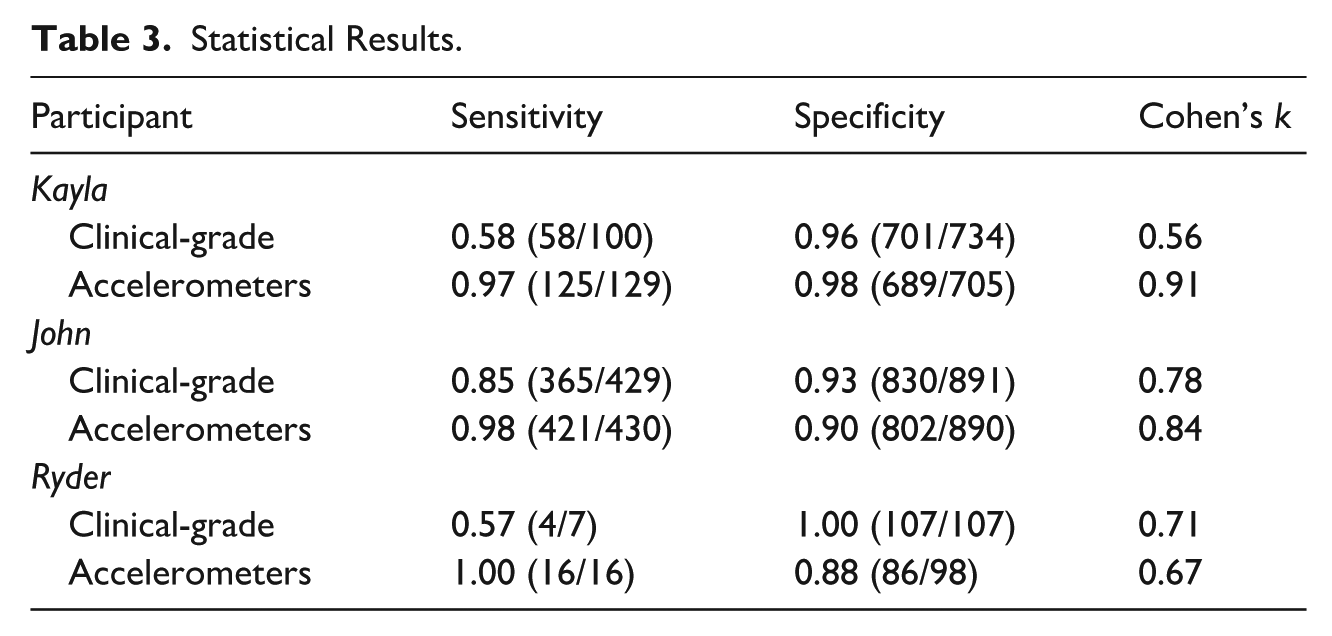

Q2. What Is the Statistical Accuracy of the Accelerometer Ratings?

Table 3 presents the statistical results for question two. For Kayla, the clinical-grade and the research-grade data sets agreed on behavioral occurrences in 58 of the intervals (true positive) and non-occurrence in 701 of the intervals (true negative). The clinical-grade data set recorded behavior in 33 intervals when behavior did not occur (false positive) and did not record behavior in 42 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 0.58 and specificity was 0.96. The resulting Cohen’s kappa statistic for the clinical-grade data set was 0.56, indicating moderate agreement with the research-grade data set.

Statistical Results.

The accelerometer and the research-grade data sets agreed on behavioral occurrences in 125 of the intervals (true positive) and non-occurrence in 689 of the intervals (true negative). The accelerometers recorded behavior in 16 intervals when behavior did not occur (false positive) and did not record behavior in 4 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 0.97 and specificity was 0.98. The resulting Cohen’s kappa statistic for the accelerometer data set was 0.91, indicating almost perfect agreement with the research-grade data set.

For John, the clinical-grade and the research-grade data sets agreed on behavioral occurrences in 365 of the intervals (true positive) and non-occurrence in 830 of the intervals (true negative). The clinical-grade data set recorded behavior in 61 intervals when behavior did not occur (false positive) and did not record behavior in 64 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 0.85 and specificity was 0.93. The resulting Cohen’s kappa statistic for the clinical-grade data set was 0.78, indicating substantial agreement with the research-grade data set.

The accelerometer and the research-grade data sets agreed on behavioral occurrences in 421 of the intervals (true positive) and non-occurrence in 802 of the intervals (true negative). The accelerometers recorded behavior in 88 intervals when behavior did not occur (false positive) and did not record behavior in 9 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 0.98 and specificity was 0.90. The resulting Cohen’s kappa statistic for the accelerometer data set was 0.84, indicating almost perfect agreement with the research-grade data set.

For Ryder, the clinical-grade and the research-grade data sets agreed on behavioral occurrences in 4 of the intervals (true positive) and non-occurrence in 107 of the intervals (true negative). The clinical-grade data set recorded behavior in 0 intervals when behavior did not occur (false positive) and did not record behavior in 3 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 0.57 and specificity was 1.00. The resulting Cohen’s kappa statistic for the clinical-grade data set was 0.71, indicating substantial agreement with the research-grade data set.

The accelerometer and the research-grade data sets agreed on behavioral occurrences in 16 of the intervals (true positive) and non-occurrence in 86 of the intervals (true negative). The accelerometers recorded behavior in 12 intervals when behavior did not occur (false positive) and did not record behavior in 0 of the intervals when the behavior did occur (false negative). The resulting sensitivity was 1.00 and specificity was 0.88. The resulting Cohen’s kappa statistic for the accelerometer data set was 0.67, indicating substantial agreement with the research-grade data set.

Q3. Would Data Collected Via Accelerometers Change Decision Making Regarding Function of Behavior During an FA?

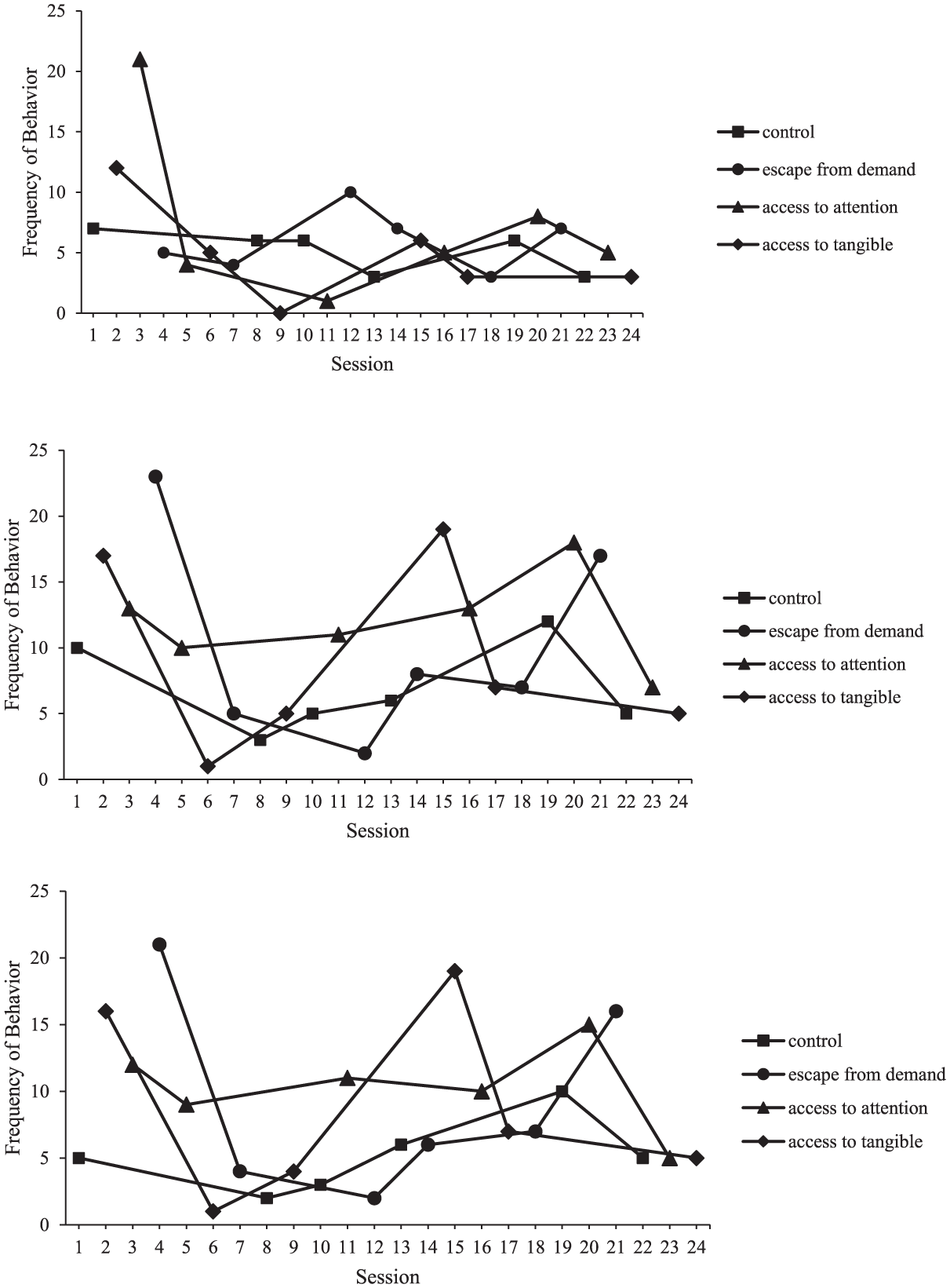

The results for Kayla’s FA using the clinical-grade data are presented in Figure 2 (top panel). Kayla engaged in self-hitting behaviors during the control condition at an average frequency of 5 behaviors per session (range, 3–7). She also engaged in self-hitting behaviors at an average frequency of 6 behaviors per session during the escape from demand condition (range, 3–10), a frequency of 7 behaviors per session in the access to attention condition (range, 1–21), and a frequency of 5 behaviors per session in the access to tangible condition (range, 0–12). Analysis using the modified visual inspection criteria resulted in 0.0 for the access to tangible condition, 0.33 for the escape from demand condition, and 0.17 in the access to attention condition. Kayla engaged in self-hitting behaviors in all conditions of the FA, including the control condition, indicating her behavior was automatically maintained.

FA results for Kayla from clinical grade data (top panel), accelerometers (middle panel), and research-grade (bottom panel).

The results for Kayla’s FA using the accelerometer data are presented in Figure 2 (middle panel). Similar to the data presented in the top panel of Figure 2, Kayla engaged in elevated levels of behavior across all conditions. During the control condition, Kayla engaged in self-hitting behaviors at an average frequency of 7 behaviors per session (range, 3–12). She also engaged in self-hitting behaviors at an average frequency of 10 behaviors per session during the escape from demand condition (range, 2–23), 12 behaviors per session in the access to attention condition (range, 7–18), and 9 behaviors per session in the access to tangible condition (range, 1–19). Analysis using the modified visual inspection criteria resulted in 0.16 for the access to tangible condition, 0.16 for the escape from demand condition, and 0.83 for the access to attention condition. These results indicate that the behavior was multiply maintained by access to attention and automatic reinforcement. These results differed from the clinical-grade data set outcomes by the addition of an attention function.

The results for Kayla’s FA using the research-grade data are presented in Figure 2 (bottom panel). Similar to the data presented in the top panel of Figure 2, Kayla engaged in elevated levels of behavior across all conditions. During the control condition, Kayla engaged in self-hitting behaviors at an average frequency of 5 behaviors per session (range, 2–10). She also engaged in self-hitting behaviors at an average frequency of 9 behaviors per session during the escape from demand condition (range, 2–21), 10 behaviors per session during the access to attention condition (range, 5–15), and 9 behaviors per session during the access to tangible condition (range, 1–19). Analysis using the modified visual inspection criteria resulted in values of 0.16 for the access to tangible condition, 0.16 for the escape from demand condition, and 0.83 for the access to attention condition. These results indicate the behavior was multiply maintained by access to attention and by automatic reinforcement. These results differed from the clinical-grade outcome in that they identified an additional attention function.
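The modified visual inspection values reported above summarize how far each test condition departs from the control condition. The computation is not restated in this section, so the following Python sketch assumes one common formulation: criterion lines are drawn at the control-condition mean plus and minus one standard deviation, and the index is the proportion of test-condition sessions above the upper line minus the proportion below the lower line. The function name `differentiation_index`, the use of the sample standard deviation, and the `lower_is_more` flag (for latency measures such as Ryder's, where shorter latencies indicate more behavior) are illustrative assumptions, and the session values are hypothetical rather than study data.

```python
from statistics import mean, stdev


def differentiation_index(control, test, lower_is_more=False):
    """Hypothetical sketch of a modified visual inspection index.

    Assumes criterion lines at the control-condition mean +/- 1 sample SD.
    Returns (sessions on the "more behavior" side - sessions on the other side)
    divided by the number of test-condition sessions.
    """
    if len(control) < 2 or not test:
        raise ValueError("need at least two control sessions and one test session")
    m, sd = mean(control), stdev(control)
    upper, lower = m + sd, m - sd
    above = sum(1 for x in test if x > upper)
    below = sum(1 for x in test if x < lower)
    if lower_is_more:
        # For latency, shorter latencies (below the lower line) indicate more behavior.
        above, below = below, above
    return (above - below) / len(test)


# Hypothetical frequency data: 5 of 6 test sessions exceed the upper line.
print(round(differentiation_index([4, 6, 5, 5], [10, 12, 5, 9, 11, 8]), 2))  # 0.83

# Hypothetical latency data (seconds): every test session falls below the lower line.
print(differentiation_index([180, 150, 170, 160], [24, 30, 10], lower_is_more=True))  # 1.0
```

Swapping the counts for latency keeps a single rule for both measurement types, so a value near 1.0 always means the test condition consistently produced more (or faster) behavior than control.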

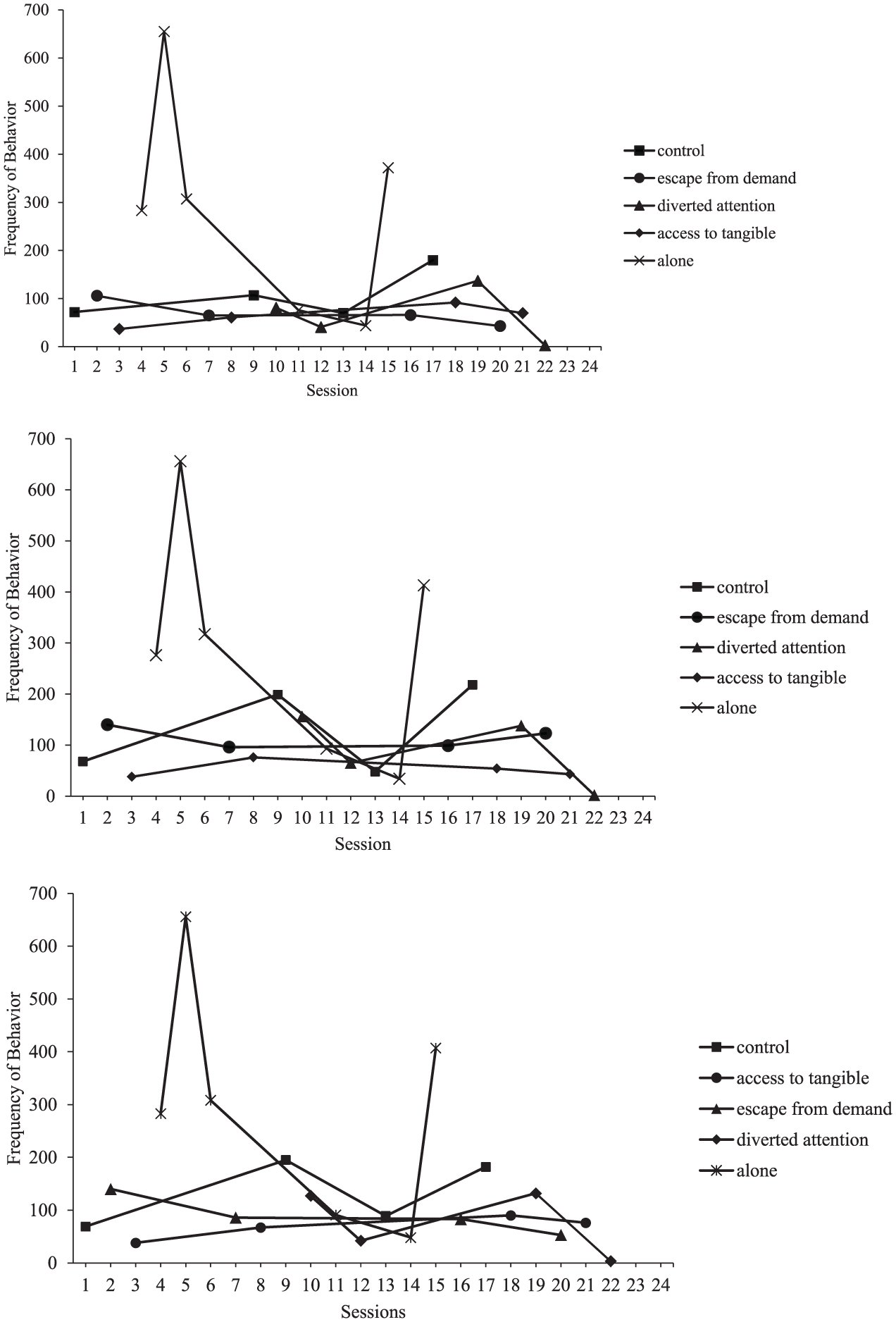

The results for John’s FA using the clinical-grade data are presented in Figure 3 (top panel). John engaged in self-hitting behaviors during the control condition at an average frequency of 107 behaviors per session (range, 70–180). He also engaged in self-hitting behaviors at an average frequency of 65 behaviors per session during the access to tangible condition (range, 37–92), 70 behaviors per session during the escape from demand condition (range, 43–106), 66 behaviors per session during the diverted attention condition (range, 3–137), and 290 behaviors per session during the alone condition (range, 44–655). Analysis using the modified visual inspection criteria resulted in values of 0.0 for the access to tangible, escape from demand, and diverted attention conditions and 0.50 for the alone condition. John engaged in high levels of self-hitting behaviors in all conditions of the FA, including the control and alone conditions, indicating his behavior was automatically maintained.

Figure 3. FA results for John from clinical-grade data (top panel), accelerometer data (middle panel), and research-grade data (bottom panel).

The results for John’s FA using the accelerometer data are presented in Figure 3 (middle panel). John engaged in self-hitting behaviors during the control condition at an average frequency of 133 behaviors per session (range, 48–218). He also engaged in self-hitting behaviors at an average frequency of 53 behaviors per session during the access to tangible condition (range, 38–76), 115 behaviors per session during the escape from demand condition (range, 96–140), 90 behaviors per session during the diverted attention condition (range, 2–156), and 298 behaviors per session during the alone condition (range, 34–656). Analysis using the modified visual inspection criteria resulted in values of 0.0 for the access to tangible, escape from demand, and diverted attention conditions and 0.50 for the alone condition. John engaged in high levels of self-hitting behaviors in all conditions of the FA, including the control and alone conditions, indicating his behavior was automatically maintained. This result matched the outcome from the clinical-grade data set.

The results for John’s FA using the research-grade data are presented in Figure 3 (bottom panel). John engaged in self-hitting behaviors during the control condition at an average frequency of 133 behaviors per session (range, 69–195). He also engaged in self-hitting behaviors at an average frequency of 68 behaviors per session during the access to tangible condition (range, 38–90), 91 behaviors per session during the escape from demand condition (range, 53–140), 76 behaviors per session during the diverted attention condition (range, 3–132), and 298 behaviors per session during the alone condition (range, 48–656). Analysis using the modified visual inspection criteria resulted in values of 0.0 for the access to tangible, escape from demand, and diverted attention conditions and 0.50 for the alone condition. John engaged in high levels of self-hitting behaviors in all conditions of the FA, including the control and alone conditions, indicating his behavior was automatically maintained. This result matched the outcome from the clinical-grade data set.

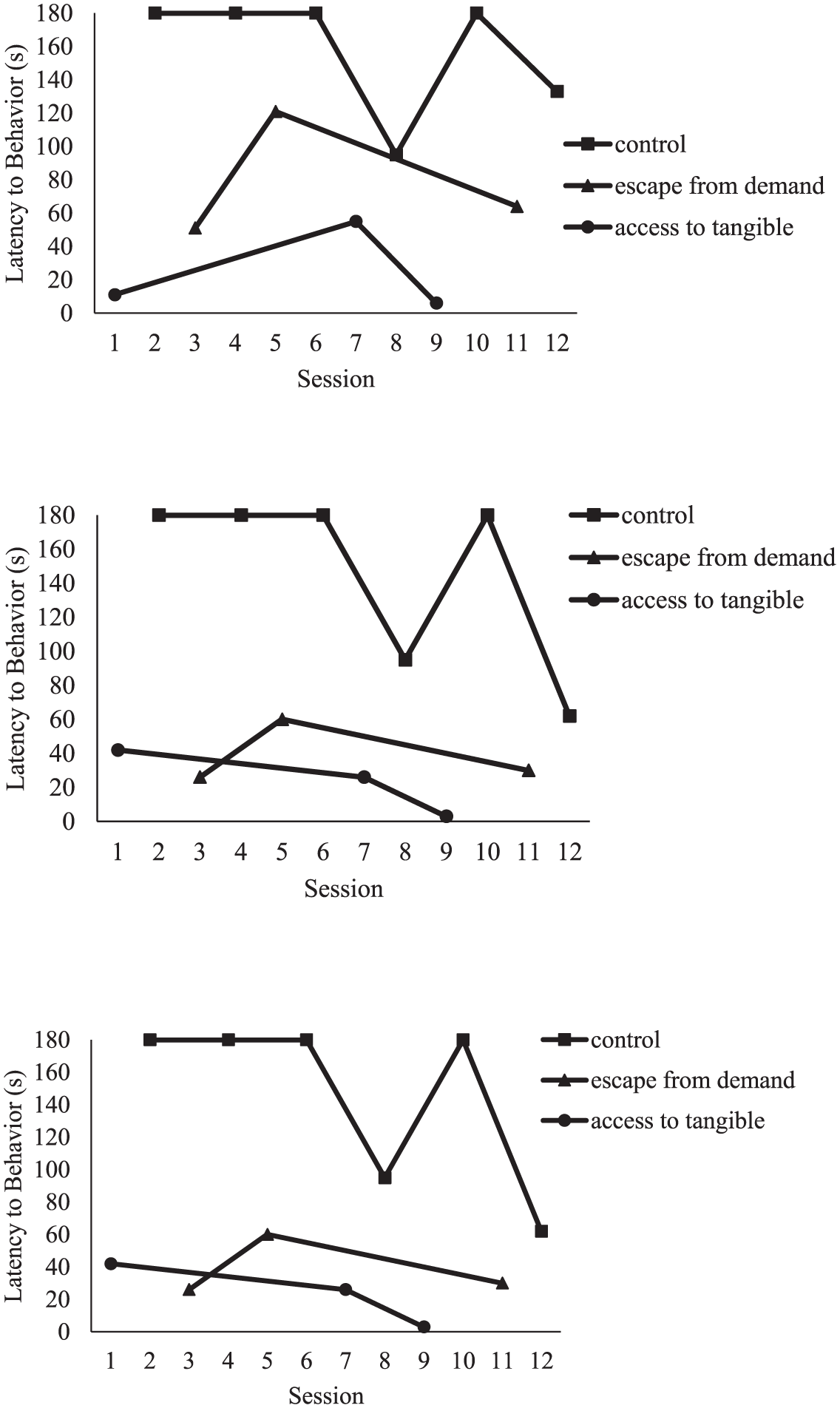

The results for Ryder’s FA using the clinical-grade data are presented in Figure 4 (top panel). Ryder engaged in self-hitting behaviors during the control condition with an average latency of 158 s (range, 95 s–180 s). He also engaged in self-hitting behaviors in the access to tangible condition with an average latency of 24 s (range, 6 s–55 s) and in the escape from demand condition with an average latency of 79 s (range, 51 s–121 s). Analysis using the modified visual inspection criteria resulted in values of 1.0 for both the access to tangible and escape from demand conditions. Ryder engaged in self-hitting behaviors more quickly in both test conditions than in the control condition, indicating his behavior was multiply maintained by access to tangibles and escape from demands.

Figure 4. FA results for Ryder from clinical-grade data (top panel), accelerometer data (middle panel), and research-grade data (bottom panel).

Figure 4 (middle panel) shows the latency to self-hitting behaviors for Ryder during each condition of the FA using the accelerometer data. Ryder engaged in self-hitting behaviors during the control condition with an average latency of 156 s (range, 62 s–180 s). He also engaged in self-hitting behaviors in the access to tangible condition with an average latency of 24 s (range, 3 s–42 s) and in the escape from demand condition with an average latency of 39 s (range, 26 s–60 s). Analysis using the modified visual inspection criteria resulted in values of 1.0 for both the access to tangible and escape from demand conditions. Ryder engaged in self-hitting behaviors more quickly in both test conditions than in the control condition, indicating his behavior was multiply maintained by access to tangibles and escape from demands. This result matched the outcome from the clinical-grade data set.

The results for Ryder’s FA using the research-grade data are presented in Figure 4 (bottom panel). Ryder engaged in self-hitting behaviors during the control condition with an average latency of 146 s (range, 62 s–180 s). He also engaged in self-hitting behaviors in the access to tangible condition with an average latency of 24 s (range, 3 s–42 s) and in the escape from demand condition with an average latency of 39 s (range, 26 s–60 s). Analysis using the modified visual inspection criteria resulted in values of 1.0 for both the access to tangible and escape from demand conditions. Ryder engaged in self-hitting behaviors more quickly in both test conditions than in the control condition, indicating his behavior was multiply maintained by access to tangibles and escape from demands. This result matched the outcome from the clinical-grade data set.

Discussion

The purpose of this study was to evaluate the potential use of wearable technology, specifically accelerometers, to measure the frequency of self-hitting behavior during an FA. Researchers conducted FAs with three participants who engaged in head-hitting behaviors. Data were collected on the behaviors using (1) live clinical coding procedures (“clinical-grade”), (2) research-grade procedures, and (3) accelerometers. The research-grade data set served as the ground truth. Results indicate high agreement between the accelerometer data and the research-grade (ground truth) data set. Function identification based on the accelerometer data matched the clinical-grade outcome for two of the three participants. For the third participant, the accelerometer data identified a second function, also identified in the research-grade data set, that was not identified in the clinical-grade data set. Overall, these results support the promise of wearable technologies to supplement, and potentially automate, measurement of behavior during FAs.

The first purpose of the study was to evaluate how reliably the accelerometers captured the frequency of self-hitting behavior during an FA. Overall, the accelerometer data agreed more closely with the research-grade data than with the clinical-grade data, with Kayla’s data set showing the highest agreement. The lower agreement for John and Ryder may be attributable to lower specificity (i.e., higher false positive rates) in their data sets compared with Kayla’s. Notably, John engaged in other behaviors that were topographically similar to the target behavior (e.g., stamping on paper), which the accelerometers falsely identified as the target behavior. Similarly, Ryder often moved his arms rapidly, which may have resulted in the accelerometers falsely detecting the behavior. In contrast, the sensitivity of the accelerometers was very high (0.97–1.00) for all three participants. These results add to the growing evidence supporting the potential use of wearable technology to supplement measurement during behavioral assessments and treatments. For example, recent research by Imbiriba et al. (2023) extended a pilot study by Goodwin et al. (2019) and demonstrated the viability of using a wearable biosensor to measure heart rate as a predictor of the onset of aggression in autistic youth. These results, combined with the results of this study, offer promise for improving the reliability of measurement during FAs and potentially extending FA measurement to other topographies of behavior (e.g., aggression), dimensions of behavior (e.g., force; Neely et al., 2025), or biobehavioral measures (e.g., physiological; Imbiriba et al., 2023). Additionally, wearable technologies offer the potential to measure behavior across settings, providing insight into the generalization of treatment effects (Scheithauer et al., 2022). This can be particularly beneficial for collecting data in settings with limited access to trained observers or where data must be collected in real time.
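Sensitivity and specificity for event data depend on how non-events are counted. One common approach, assumed here only for illustration, divides each session into fixed time bins and labels a bin positive if it contains at least one event; comparing sensor bins with ground-truth bins then yields true and false positives and negatives, and the false positive rate equals 1 minus specificity. The 1-s bin width, the function names, and the timestamps below are hypothetical and are not the study's analysis procedure.

```python
import math


def bin_labels(event_times, session_length_s, bin_s=1.0):
    """Mark each fixed-width time bin True if it contains at least one event."""
    n_bins = math.ceil(session_length_s / bin_s)
    labels = [False] * n_bins
    for t in event_times:
        labels[min(int(t // bin_s), n_bins - 1)] = True
    return labels


def sensitivity_specificity(reference_times, sensor_times, session_length_s, bin_s=1.0):
    """Compare sensor detections with a ground-truth record, bin by bin."""
    ref = bin_labels(reference_times, session_length_s, bin_s)
    det = bin_labels(sensor_times, session_length_s, bin_s)
    tp = sum(r and d for r, d in zip(ref, det))
    fn = sum(r and not d for r, d in zip(ref, det))
    fp = sum(d and not r for r, d in zip(ref, det))
    tn = sum(not r and not d for r, d in zip(ref, det))
    sensitivity = tp / (tp + fn) if tp + fn else math.nan
    specificity = tn / (tn + fp) if tn + fp else math.nan
    return sensitivity, specificity


# Hypothetical 10-s session: one missed event (bin 5) and one false detection (bin 7).
sens, spec = sensitivity_specificity([0.5, 2.2, 5.1], [0.7, 2.9, 7.3], 10)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}, FPR={1 - spec:.2f}")
# sensitivity=0.67, specificity=0.86, FPR=0.14
```

With this framing, a sensor that misses almost nothing but also fires on topographically similar movements shows the pattern described above: high sensitivity alongside reduced specificity.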

While the accelerometer data set was highly reliable, there were errors that warrant further discussion. Many of these errors can be attributed to the accelerometers capturing movements that did not meet the definition of the target behavior (e.g., high fives). This is an expected result, given that the accelerometers register any movement exceeding an acceleration threshold (e.g., 5 g) but do not discriminate between topographies or consider the context of the behavior. This source of error limits the generalizability of the results, as many individuals engage in behaviors that are topographically similar but functionally different. One potential solution is to fuse the accelerometer data with another source of data (e.g., video) to facilitate more precise measurement. For example, Deng et al. (2022) used language-assisted deep learning models to accurately identify behaviors such as head banging from video input alone. Similarly, Dufour et al. (2020) applied artificial intelligence technology to automatically measure vocal stereotypy. Although video input has its own limitations (e.g., limited portability), combining it with sensor data is a potential solution.
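The threshold-based detection described above can be made concrete with a short sketch. The Python function below flags an event each time the magnitude of a triaxial acceleration signal rises to or above a threshold (5 g, matching the example in the text). The magnitude calculation, the rising-edge rule, the 0.25-s refractory period, and the sample format are assumptions for illustration, not the processing pipeline used in this study. Because the rule sees only acceleration, a high five that crosses the threshold is counted exactly like a head hit, which is the source of error discussed above.

```python
import math


def detect_events(samples, threshold_g=5.0, refractory_s=0.25):
    """Return event onset times from (t_seconds, ax_g, ay_g, az_g) samples.

    An event is counted when the acceleration magnitude crosses from below to at
    or above `threshold_g`, provided at least `refractory_s` seconds have passed
    since the previous counted event. Nothing here considers topography or context.
    """
    events = []
    last_event = -math.inf
    was_above = False
    for t, ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        is_above = magnitude >= threshold_g
        if is_above and not was_above and t - last_event >= refractory_s:
            events.append(t)
            last_event = t
        was_above = is_above
    return events


# Hypothetical samples: a hit at 0.01 s, a rebound at 0.10 s (inside the
# refractory period, so ignored), and a second movement (e.g., a high five) at 0.50 s.
samples = [
    (0.00, 0, 0, 1), (0.01, 0, 0, 6), (0.02, 0, 0, 7), (0.03, 0, 0, 1),
    (0.10, 0, 0, 6), (0.11, 0, 0, 1), (0.50, 3, 4, 0),
]
print(detect_events(samples))  # [0.01, 0.5]
```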

The second purpose of the study was to evaluate whether data collected via accelerometers changed decision-making regarding the function of behavior during an FA. For two of the three participants, function identification did not differ between the clinical-grade and accelerometer data sets. However, for one participant, Kayla, the accelerometer data identified an additional attention function. Anecdotally, Kayla’s mother had reported that her behavior served an attention function, and the researchers did provide functional communication training targeting the attention function (results currently in preparation for publication). Of note, the lead rater for data collection during the FA was a Board Certified Behavior Analyst (BCBA; credentialed for 6 years) with 8 years of experience in conducting FAs. Also of note, given discussion with the caregiver, the FA results ultimately did not change the researchers’ treatment decisions.

Although not the primary focus of this study, one notable result was the low agreement between the clinical-grade data set and the research-grade data set. Importantly, the data collectors in this study were behavior analysts experienced in conducting FAs and collecting real-time data. Although no studies to date have examined the impact of potential inaccuracies in human data collection on treatment, this study highlights the potential for human error in live data collection to affect treatment decisions, particularly in fast-paced or high-intensity clinical environments (Morris et al., 2022; Vollmer et al., 2008). Given the central role of data in guiding behavioral interventions, this finding underscores the need for additional research into the reliability of clinician-collected data and strategies to improve data collection.
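Agreement between two records such as the clinical-grade and research-grade data sets is commonly summarized with interobserver agreement (IOA) indices. This section does not restate which index was used, so the Python sketch below shows two standard count-based formulas as an illustration: total count IOA (smaller session total divided by larger session total) and mean count-per-interval IOA (the ratio of smaller to larger count in each interval, averaged across intervals, with intervals where both records match exactly scored as 1). The function names and interval counts are hypothetical.

```python
def total_count_ioa(count_a, count_b):
    """Smaller session total divided by larger session total, as a percentage."""
    if count_a == count_b:
        return 100.0
    return min(count_a, count_b) / max(count_a, count_b) * 100


def mean_count_per_interval_ioa(counts_a, counts_b):
    """Average per-interval agreement (smaller / larger count), as a percentage."""
    if len(counts_a) != len(counts_b) or not counts_a:
        raise ValueError("both records must cover the same, non-empty set of intervals")
    ratios = [1.0 if a == b else min(a, b) / max(a, b) for a, b in zip(counts_a, counts_b)]
    return sum(ratios) / len(ratios) * 100


# Hypothetical counts per interval from a live observer and a video-based observer.
live = [2, 0, 1, 3]
video = [2, 1, 1, 4]
print(total_count_ioa(sum(live), sum(video)))    # 75.0
print(mean_count_per_interval_ioa(live, video))  # 68.75
```

The interval-based index is the stricter of the two: session totals can look similar even when the two records disagree about when individual responses occurred.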

Limitations and Considerations

One of the major limitations of the current research is the small sample size. While the research team recruited 12 participants, only eight assented to wearing the devices. Previous research by Scheithauer et al. (2022) demonstrated the effectiveness of a habituation process, and future research may consider this as an alternative to the assent procedure used in this study. Future research may also investigate a decision-making process for selecting between these procedures, as medical need might necessitate immediate habituation to the sensors. A second limitation is the exclusion of an alone condition for Kayla. Given the undifferentiated results of the FA, inclusion of an alone condition may have resulted in differentiation. Future researchers may consider adopting a fixed sequence approach (Hammond et al., 2013) to increase the likelihood of obtaining differentiated outcomes. Ultimately, there is a need to extend this research to a larger sample, with emphasis on including social-positive, social-negative, and automatically maintained behavior as well as additional topographies, to further assess the impact of data collection via accelerometers on identifying functional relations during an FA. A third limitation was the use of a blocking procedure for John during the FA; however, it was brief, safety-driven, and did not interfere with the study’s primary aim of evaluating sensor-based detection of behavior. In addition, the inclusion of moderately preferred items in John’s alone condition may have functioned as an abolishing operation for automatically reinforced behavior and should be considered a potential limitation. The research team also only included participants who engaged in head-hitting behavior; future research should replicate these procedures across different topographies of behavior.

Another major limitation was the use of behavior analysts with research experience as lead raters for the data sets, as such raters are not necessarily available in practice. Future research might consider replicating these procedures with practicing clinicians to promote generalizability of results. Finally, the researchers only investigated the use of accelerometers during an FA and within a clinical setting. Future research is necessary to evaluate the use of wearable technologies throughout intervention and treatment and in less controlled settings (e.g., school, home).

Future research may also consider the practical aspects of implementing wearable technologies in natural settings, such as calibration, cost, and the training required for practitioners. Some of these challenges were outlined in a recently published study by Neely et al. (2025), highlighting the need to explore them further. In addition, future research may explore the ethical considerations related to using wearable technologies, such as privacy and data ownership. Artificial intelligence could also be used to analyze the large volumes of data generated by wearable technologies, allowing for advanced pattern analysis and prediction of behavioral events. Such prediction could support safety and improve treatment for affected persons. However, it may also occasion discriminatory practices in which persons with disruptive behavior are excluded because of a predicted behavioral event. Ethical considerations for this vulnerable population should be at the forefront of this line of research (Jennings & Cox, 2024).

Finally, future research might consider additional error analyses. For example, some of the recorded errors may reflect when responses were scored rather than whether they were scored. That is, some false positives or false negatives may actually be due to delayed responding by the human observer. The outcomes of the FA for both John and Ryder did not differ among the clinical-grade, research-grade, and accelerometer data sets, which is consistent with this hypothesis. However, the differing FA outcomes for Kayla suggest that the live observer may have missed events. Of note, the human raters in this study were highly trained or had the opportunity to rate via video, which is not typical of clinical practice. Further replication and error analysis are warranted.
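The hypothesis that some errors reflect when rather than whether a response was scored could be examined by matching event timestamps within a tolerance window and observing how agreement changes as the window widens. The Python sketch below uses a simple greedy nearest-neighbor match, an assumption chosen for brevity (an optimal assignment could differ when events are closely spaced); the function name, timestamps, and window widths are hypothetical. If an observer consistently records responses slightly late, a narrow window turns each response into one false positive and one false negative, whereas a wider window recovers them as agreements.

```python
def match_events(observer_times, sensor_times, tolerance_s):
    """Greedily pair each observer event with the nearest unused sensor event.

    Returns (matches, sensor_only, observer_only), i.e., true positives,
    false positives, and false negatives relative to the observer record.
    """
    sensor = sorted(sensor_times)
    used = [False] * len(sensor)
    matches = 0
    for r in sorted(observer_times):
        best = None
        for j, d in enumerate(sensor):
            if used[j] or abs(d - r) > tolerance_s:
                continue
            if best is None or abs(d - r) < abs(sensor[best] - r):
                best = j
        if best is not None:
            used[best] = True
            matches += 1
    return matches, len(sensor) - matches, len(observer_times) - matches


# Hypothetical observer who scores each response 0.7 to 0.9 s after the sensor does.
observer = [1.8, 5.9, 9.7]
sensor = [1.0, 5.0, 9.0]
print(match_events(observer, sensor, tolerance_s=0.5))  # (0, 3, 3)
print(match_events(observer, sensor, tolerance_s=1.0))  # (3, 0, 0)
```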

Conclusion

In conclusion, this study highlights the promise of wearable technology, specifically accelerometers, in supplementing and potentially automating the measurement of self-hitting behavior during functional analyses. The high agreement between the accelerometer data and the research-grade data set underscores the potential utility of accelerometers in FAs. Despite some errors, the accelerometer data yielded the same function identification as the research-grade data for all three participants. These findings support the integration of wearable technologies with traditional behavior analytic methods to enhance the precision and generalizability of measurement. However, further research is necessary to address practical challenges and ethical considerations and to replicate these findings across diverse behaviors and settings. By advancing the use of wearable technology, this research opens new avenues for enhancing data-driven decision-making and improving treatment outcomes in behavior analysis.

Footnotes

Ethical Considerations

This article complies with all ethical requirements and was approved by the University of Texas at San Antonio Institutional Review Board #18-029. Consent was obtained from participant caregivers and assent or dissent was obtained from the participants using the procedure outlined in the study.

Author Contributions

Leslie Neely conceptualized the study, designed the study, designed the data analysis procedures, and led the writing of the manuscript. Katherine Holloway wrote the methods and results. Samantha Miller and Karen Cantero led data collection and data analysis. Sakiko Oyama led the data analysis of the accelerometer data. Adel Alaeddini contributed to the data analysis and writing of the discussion.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Leslie Neely and Adel Alaeddini disclose ownership of Intelligent Behavior Analytics, LLC. This company has no conflict of interest with the current work. Katherine Holloway, Samantha Miller, Karen Cantero, and Sakiko Oyama disclose no conflict of interest.

Data Availability Statement

All data from this publication are available upon request from the lead author, Leslie Neely.