Abstract

Previous research found a beneficial effect of augmentative signs (signs from a sign language used alongside speech) on spoken word learning by signing deaf and hard-of-hearing (DHH) children. The present study compared oral DHH children with hearing children in a condition with babble noise to investigate whether prolonged experience with limited auditory access is required for a sign effect to occur. Nine- to 11-year-old children participated in a word learning task in which half of the words were presented with an augmentative sign. Non-signing DHH children (N = 19) were trained in normal sound, whereas a control group of hearing peers (N = 38) was trained in multi-speaker babble noise. Verbal short-term memory (STM) was also measured. For the DHH children, there was a sign effect on speed of spoken word recognition, but not on accuracy, and no interaction between the sign effect in reaction times and verbal STM. The hearing children showed no sign effect for either speed or accuracy. These results suggest that it is not necessarily sign language knowledge, but rather prolonged experience with limited auditory access, that is required for children to benefit from signs for spoken word learning, regardless of children’s verbal STM.

Introduction

Word learning is at the centre of language development (e.g. E. Clark, 1993). For children to acquire the words of a spoken language, however, auditory access is vital. Children who are deaf/hard-of-hearing (DHH) have limited access to spoken language even when they use hearing aids (HAs) and/or cochlear implants (CIs), and this limited access may impede their spoken vocabulary development (Blamey, 2003; Marshall et al., 2018). Indeed, upon entering primary school, many DHH children have smaller vocabularies in their spoken language than their hearing peers (Coppens et al., 2012; Hayes et al., 2009), and some never catch up (Convertino et al., 2014; Sarchet et al., 2014).

Speech can, however, be complemented by visual input such as augmentative signs to aid comprehension. These signs originate from a sign language and are produced alongside speech, following the spoken language’s grammatical structure. Signing DHH children who were taught words with and without an augmentative sign correctly remembered more words they had been taught with a sign than words presented to them without one (Mollink et al., 2008; van Berkel-van Hoof et al., 2016). However, it is unclear if the observed positive effects of signs on spoken word learning can also be found in non-signing, oral DHH children, instead of only in DHH children with active knowledge of sign language. Additionally, our previous study (van Berkel-van Hoof et al., 2016) found no effect of signs on word learning for hearing children. It is therefore also unclear whether only DHH children (who have prolonged experience with limited auditory access) benefit from signs for word learning, or whether hearing children may also benefit if they attempt to learn spoken words in adverse listening conditions (i.e., a temporary condition of limited auditory access). Furthermore, the influence of cognitive skills on a sign effect for word learning has not yet been studied. The present study investigated spoken word learning with and without signs in two groups of children without sign language knowledge: one group of DHH children, for whom limited access to spoken language is the default condition; and one group of hearing children, who performed the task in an experimentally manipulated condition of babble noise. We also included a task tapping into verbal short-term memory (STM).

A comparison of spoken word learning by DHH and hearing children

Hearing children produce their first words between 8 and 15 months of age (e.g. Bloom, 2000). They acquire the words of the ambient language via child-directed speech, as well as via overhearing conversations between others. We consider connectionist models of the mental lexicon (e.g. Fang et al., 2016; Plunkett et al., 1997), in which ‘lexical and semantic information (i.e., word label and its meaning, respectively) are stored and linked within a distributed neural network that processes both simultaneously’ (Capone & McGregor, 2005, p. 1469). Thus, it is assumed that the phonological features of a specific word (i.e. the label, e.g. cat) are connected to semantic features of that label (e.g. visualisation of an exemplar, sounds a cat produces, things a cat can do), as well as to other semantic and conceptual features (Vigliocco & Vinson, 2011), and to lexical labels in other language(s) (Fang et al., 2016; Grainger et al., 2010). Importantly, these semantic representations play a major role in word retrieval (Capone & McGregor, 2005), which suggests that richer semantic representations of a concept (e.g. with information from more than one modality) result in faster and/or more accurate word retrieval.

Cognitive skills, like working memory (WM), also play an important role in vocabulary learning and development (e.g. Archibald, 2017; Gathercole & Baddeley, 1993). Because cognitive load may be reduced by the use of manual stimuli for spoken word learning (see below), we include a forward digit span task in our design. Here, we consider the WM model of Baddeley and colleagues (e.g. Baddeley, 2012; Gathercole & Baddeley, 1989), which distinguishes a phonological loop that processes verbal input, a visuo-spatial sketchpad that processes visual input, an episodic buffer that combines and processes input from the other systems, and a central executive that controls attention of the three slave systems. Verbal short-term memory (STM; the phonological loop) has been found to correlate with the early stages of word learning (Archibald, 2017; Gathercole, 2006). Archibald (2017) argues that when verbal STM is limited, the linguistic detail that is encoded by a learner is diminished, suggesting slower learning of new words and lower vocabulary size for those with poorer verbal STM skills. Lower STM skills hamper speech comprehension (and by extension, word learning) even more when the acoustic signal is disrupted, for example, by background noise (e.g. McMurray et al., 2017; Osman & Sullivan, 2014). This suggests that WM skills may be more important to spoken word learning, and may remain so until a later age, for DHH children than for hearing peers.

The question is how spoken word learning occurs when access to auditory input is limited permanently. DHH children do not have the same access to spoken language as do hearing children, even when they use HAs and/or CIs (e.g. Lesica, 2018). Several studies found that DHH children’s spoken vocabulary size lags behind that of their hearing peers, even after multiple years of HA or CI use (see Lederberg et al., 2013 for a review; and Lund, 2016 for a meta-analysis). Although some studies find that the rate of spoken vocabulary growth of school-age DHH children can be faster than that of hearing peers (Coppens et al., 2012; Hayes et al., 2009), other research suggests that many DHH individuals never achieve the same vocabulary size in their spoken language as hearing peers (e.g. Convertino et al., 2014; Sarchet et al., 2014).

According to Gathercole (2006), children with smaller vocabularies may rely more on verbal WM and STM for learning new words than do peers with larger vocabularies, while their smaller vocabularies may be related to a lower WM capacity, increasing the difficulty for learning new words in their spoken language. DHH children frequently have limited WM skills compared to their hearing peers (Hansson et al., 2004), as well as smaller vocabularies (Lederberg et al., 2013; Lund, 2016). This may impede their spoken vocabulary development more than limited auditory access alone accounts for, and it may cause verbal STM to play a role in word learning even at later ages.

Alternatively, early language deprivation may cause poorer WM skills. For example, Pierce et al. (2017) argue that children whose spoken language development has a later onset due to, for example, congenital hearing loss have a less well-developed phonological WM. This in turn leads to difficulties in language acquisition. In their paper, they review extensive literature suggesting that the window of opportunity for the full development of phonological WM for a given language closes at around 1 year of age. This means that infants whose input is impoverished during this period (and who thus have lower language skills), have poorer phonological WM skills as a result. Indeed, Marshall et al. (2015) found that DHH native signers performed similarly to age-matched hearing peers on non-verbal WM tasks, whereas non-native DHH signers performed below both signers and hearing controls. This suggests that early language deprivation influences the development of WM in general. However, whether initial poorer language skills cause poorer WM skills or vice versa, there is no doubt that ‘[phonological WM] development is closely linked to language acquisition’ (Pierce et al., 2017, p. 1290), and is thus likely to correlate with word learning performance.

Phonological STM may also correlate with the effect of manual stimuli on spoken word learning. The use of multimodal input during a (word) learning task has been suggested to reduce cognitive load (e.g. J. Clark & Paivio, 1991; Paivio, 2010; Paivio et al., 1988; see below). Thus, children with lower WM skills may benefit (more) from manual stimuli than children with better WM, and WM skills may be a marker to identify children for whom augmentative signs (still) aid spoken word learning. Indeed, various studies with adults have found a facilitative effect of manual stimuli on speech comprehension by reducing load on WM (Chu et al., 2014; Ping & Goldin-Meadow, 2010), particularly for those with lower WM skills (Marstaller & Burianová, 2013). This reduced load may also apply to spoken word learning paradigms, and the relation to WM skills may also apply to children.

Effects of signs on word learning in DHH children

It makes intuitive sense that DHH children should benefit from visual input through augmentative signs in spoken language learning in either accuracy or response time, or both. After all, the visual modality is more accessible to them than the auditory one, and ‘deaf individuals are highly reliant on visual information for perception and communication’ (Rudner, 2018, p. 5). Indeed, as Pierce et al. (2017) suggest, when phonological WM is underdeveloped, visual (non-verbal) areas of the brain may be used for speech processing, compensating for the lagging phonological skills. As we noted above, phonological STM (as often measured via digit span tasks) has been shown to be related to word learning for hearing children and may also be related to effects of manual stimuli on spoken word learning. Since DHH children rely more on visual information, while their phonological STM is often lower than that of hearing peers, this relation seems likely to be present with DHH children.

Furthermore, the Dual Coding Theory by Paivio and colleagues (e.g. J. Clark & Paivio, 1991; Paivio, 2010; Paivio et al., 1988) hypothesises that information that is perceived through more than one modality is better retained in memory than stimuli perceived through a single modality, because the input from each modality creates its own memory trace. Memories with multiple memory traces can later be accessed through multiple paths and can thus be more easily retrieved (i.e. faster and/or with higher accuracy). Concepts that are learned via multimodal input will have more semantic features stored in the mental lexicon, which means more nodes are activated during retrieval, easing this process. On the other hand, adding manual stimuli to a spoken word learning task may increase complexity. The extra stimulus implies learners need to divide their attention between three elements (picture, spoken word and sign). Furthermore, the sign adds semantic and phonological information, which needs to be processed and stored on top of the semantic information provided by the picture and the phonological information present in the spoken word. This may in fact increase cognitive load. Whether augmentative signs decrease or increase cognitive load for DHH children, in either case a sign effect on spoken word learning can be expected to be related to WM skills.

Little research has focused on investigating the effect of signs on word learning for DHH children, however, and the results are inconclusive. Two word learning studies found a positive effect of signs on the number of retained words by DHH children aged 4–8 (N = 14) (Mollink et al., 2008) and 9–11 (N = 16) (van Berkel-van Hoof et al., 2016). Both studies compared words learned with a sign and those without one as a within-subject factor. In contrast, Giezen et al. (2014) found no significant effect of signs on word learning. They investigated eight 6- to 8-year-old DHH children, who were taught minimal and non-minimal novel word pairs (Study 2 in their paper). The children learned as many words with as without a sign. This led the authors to conclude that there was no negative impact of sign-supported speech on learning spoken words. It is possible that the lack of a clear positive influence in this study was due to the small numbers. The studies by Mollink et al. (2008) and van Berkel-van Hoof et al. (2016) did not have that disadvantage.

It remains unclear, however, whether DHH children without sign language knowledge equally benefit from signs for spoken word learning. Bimodal bilinguals are more experienced in processing auditory and visual linguistic input than those who use (mainly) a single modality for communication. Furthermore, code-blends (i.e. simultaneous signs and spoken words produced by a bimodal bilingual in a context other than sign-supported speech) have been found to facilitate comprehension in both modalities (Emmorey et al., 2012). This skill may have been the reason for the positive results found by Mollink et al. (2008) and van Berkel-van Hoof et al. (2016), who investigated children in special education for the deaf, who all used Sign Language of the Netherlands (SLN) and sign-supported speech on a daily basis.

It is similarly unclear whether hearing individuals can make use of information from the manual modality for spoken word learning when the acoustic signal is not ideal. Van Berkel-van Hoof et al. (2016) found a positive effect of signs on spoken word learning for DHH children, but there was no such effect for the hearing control group. However, hearing adults who perceive spoken language in a condition with babble noise have been found to benefit from manual gestures for speech comprehension in their native language (Obermeier et al., 2012). Gestures are meaningful movements, usually of the hands, that accompany speech and add to the verbal message. Unlike signs, gestures are not governed by principles regarding, for example, form or grammar, and their meaning can often only be interpreted in relation to speech. In the study by Obermeier et al. (2012), participants listened to sentences containing a homonym with a dominant and subordinate meaning. The homonym was preceded by a disambiguating gesture, and a target word that verbally disambiguated the homonym appeared later in the sentence. In a condition with noise, there was no N400 effect for the subordinate meaning if the homonym had been preceded by a gesture, while this effect was present in the noise-free condition. This indicates the gesture had been interpreted to display the meaning of the homonym in the noise condition only. This suggests that (adult) listeners process the meaning of a gesture as it relates to speech, and that they can use that information to disambiguate vague spoken language, particularly when the acoustic signal is disrupted. It is therefore possible that a condition of noise heightens hearing individuals’ attentiveness to visual input to aid in understanding the message.

In contrast, Ting et al. (2012) investigated 8.5-month-old infants’ ability to learn spoken words in babble noise (with a speech/noise ratio of –10 dB). Infants who had been familiarised with the words in a condition with a sign were unable to differentiate between familiar and novel words in the test phase, whereas infants trained with videos containing only the words displayed longer looking times at the familiar words. Signs thus seemed to negatively impact spoken word learning within this experiment. However, the vast age difference between these groups, as well as the different methodologies, may have driven these contrasting results. Furthermore, the speech/noise ratio may influence the results significantly, with lower performance on spoken word learning in conditions with a lower speech/noise ratio (McMillan & Saffran, 2016). Thus, it is unclear whether older hearing children in a condition with babble noise should be expected to benefit from manual stimuli for spoken word learning.

Iconicity

Iconicity may influence the effect of manual stimuli on spoken word learning. An iconic sign or gesture bears a visible resemblance to its referent, for example, when a signer/speaker forms an orb shape with the hands to refer to a ball. To our knowledge, only one study investigated the impact of sign iconicity on spoken word learning by DHH children. Mollink et al. (2008) compared words taught to hard-of-hearing children with strongly iconic signs to those accompanied by weakly iconic signs. They found that 5 weeks after training the words learned with a strongly iconic sign were better retained than those learned with a weakly iconic sign. Similarly, studies have shown that iconic gestures are also more effective for spoken word learning than arbitrary ones for both hearing adults (Kelly et al., 2009; Macedonia et al., 2011) and hearing children (Lüke & Ritterfeld, 2014). These combined results suggest that iconic manual stimuli have a higher, positive, impact on spoken word learning, which is why we included them in our study.

Summary

In short, DHH children often lag behind their hearing peers in spoken language development. Augmentative signs have been found to aid signing DHH children in learning spoken words. Research on this topic, however, is still scarce and it is particularly unclear whether the positive effects that have been found for signing DHH children also hold for non-signing DHH children. Furthermore, to our knowledge, no studies have investigated the relation between cognitive skills and the effect of manual stimuli on spoken word learning. While multimodal stimuli are argued to reduce cognitive load, it is as yet unclear whether WM skills are of influence within this particular context of multimodal input for spoken word learning. Also, results of previous studies on manual stimuli and their effect on speech comprehension or word learning in a condition of limited auditory access for hearing participants were contradictory and applied to very different groups of participants than those in the current study. It is therefore unclear if older hearing children can benefit from manual stimuli when learning spoken words in non-ideal listening conditions.

Present study

The present study compared oral DHH children with hearing children in a condition with babble noise to investigate whether prolonged experience with limited auditory access is required for a sign effect to occur. We focused particularly on non-signing DHH children, because DHH children are likely more attuned to relying on visual cues (e.g. speech reading or a speaker’s gestures) than hearing children because their access to the speech signal is limited (Rudner, 2018). The question here is whether experience with this limited access is required to focus on visual input, or whether children who normally do not need such extra cues for speech comprehension can rely on them when auditory input is distorted by babble noise. Thus, if our results show a positive effect of augmentative signs on spoken word learning for the DHH children, but not the hearing children, this means that experience with limited auditory access is required for children to benefit from iconic signs for spoken word learning. However, if we find an effect of signs for both groups of children, this means that iconic signs aid spoken word learning whenever the speech signal is degraded. A third possibility is that we find no sign effect for either group of children (i.e. the children learn as many words with a sign as without one). This would suggest that experience with sign language is what is required to benefit from augmentative signs for spoken word learning.

By investigating DHH children who do not use sign language, we can separate the influence of limited auditory access and knowledge of sign language. It is possible that the results from previous studies (Mollink et al., 2008; van Berkel-van Hoof et al., 2016) were influenced by children’s existing and active knowledge of Sign Language of the Netherlands (SLN). Indeed, Thompson et al. (2012) and Ormel et al. (2009) found that iconic signs facilitate children’s comprehension and production of signs in British Sign Language and SLN, respectively. Therefore, it is possible that the signs in the studies by Mollink et al. (2008) and van Berkel-van Hoof et al. (2016) were easier to learn than the spoken words for these signing children, and could then serve as scaffolds for spoken word learning, as Leonard et al. (2013) suggest is part of the process of second language learning.

We conducted a word learning experiment, in which the presence of signs in training was a within-subject factor, with two groups of children: one group of oral DHH children, for whom limited access to spoken language is the default condition, and one group of hearing children, who performed the same task in babble noise. Verbal STM skills were measured to investigate the influence of these skills on a possible sign effect. Verbal STM has been found in many studies to correlate with vocabulary size and/or spoken word learning skills (see Archibald, 2017) and multimodal input has been suggested to decrease cognitive load (e.g. Paivio, 2010; Paivio et al., 1988). Children with lower verbal STM may thus benefit from this reduction effect and learn more spoken words when they are presented to them with a sign, showing a larger sign effect than children with better WM skills.

If sign language knowledge is related to the previously observed positive sign effect for signing DHH children, this means we should find the same performance in the Sign condition as in the No Sign condition for the oral DHH and hearing children in the present study. However, if experience with limited auditory access is key, only the DHH children should perform better on words with a sign; whereas if experience is not required, both the DHH and hearing children should benefit from signs for spoken word learning. Finally, children with lower verbal STM may benefit (more) from augmentative signs for spoken word learning than children with higher cognitive skills, due to the reduction of cognitive load by the multimodal input and the additional semantic information it provides. We therefore included verbal STM as a covariate to test for a relation between a sign effect and verbal STM.

Method

Participants

Sixty-two 9- to 11-year-old children participated in this study (21 DHH, 41 hearing). The children participated voluntarily and were rewarded for their participation with a sticker after each session. All the parents of the children gave informed consent. Furthermore, this study was approved by the Scientific Committee of Radboud University’s Behavioural Science Institute. Three hearing children were excluded from analysis because they did not participate in all four sessions. Two DHH children were excluded because they used a sign language interpreter in the classroom and were thus current users of SLN (more details on the inclusion criteria of DHH children are provided below). The mean age of the remaining 57 children was 10;9 (years;months) (SD = 9.44 months). A one-way ANOVA showed that the two groups’ ages did not differ significantly (F(1, 55) = 2.46, p = .123). Also, there was no significant difference between the two groups’ scores on the forward digit span task (F(1, 55) = .03, p = .860). There were 27 girls and 30 boys. All children used spoken Dutch in school and were fluent in this language. The children’s parents were asked about language use at home. Most children spoke only Dutch at home (53%). Several children spoke Dutch combined with either a Dutch dialect (18%) or a foreign language (12%). Some children’s home language was a foreign language (7%) and one child’s was a Dutch dialect (2%). The questionnaire was not returned by the parents of two DHH children and two hearing children, so we have no information on language use in their homes.

DHH children

The DHH children were recruited via their remedial teachers and via a Facebook group for DHH individuals and their parents. It was indicated that the children needed to be DHH without exposure to any sign language. However, it was unfeasible to find a sufficient number of DHH children who had never been exposed to a sign language or to sign-supported speech. We therefore accepted as participants all DHH children who did not communicate via a sign language or a sign system themselves and who were currently not exposed to sign language or a sign system on a daily basis. Also, proficiency in SLN was reported as ‘none’ for all DHH children. Table 1 provides the background characteristics of the DHH participants, as provided by their parents and teachers.

Table 1. Background information on the DHH children (N = 19).

Notes: Age mnt. = age in months. Pp nr. = participant number. Freq. tech. use = Frequency of technology use. Age enrol. special (pre-)ed. = Age at enrolment in special (pre-) education. Age enrol. mains. = Age at enrolment in mainstream education. Freq. SSD use parents = Frequency of SSD (Sign-Supported Dutch) use by the parents. n/a = not applicable. Enrolment information was provided by the teachers; some children had moved to the school’s area after age 4 (when Dutch children start kindergarten), so the teachers did not know when enrolment in mainstream education commenced.

Parents reported this child used a HA in both ears from 3 months of age until 1 year after implantation. After this time, he only used a CI in his left ear and no support in the other ear.

Parents reported this child started using hearing aids from 2011, without specifying further.

Parents reported this child attended a preschool facility where Flemish Sign Language was used. Age at enrolment and duration are unknown to us.

As indicated on a five-point scale with options: always, often, sometimes, rarely, never.

The mean age of this group was 10;6 years (SD = 10.90 months). There were seven girls and 12 boys. All DHH children attended mainstream education in schools throughout the country. Most had been enrolled in mainstream schools since at least the beginning of grade 1 (6 years of age), but some children had attended special kindergarten and/or day care where sign language may have been used before starting mainstream education. One DHH child enrolled halfway into grade 2. All DHH children were native speakers of Dutch. None of the children used SLN or Sign-Supported Dutch (SSD), but the parents of one child reported that they had used SSD until he was 8 years old (participant 15) and the parents of another child (participant 12) had used SSD while their child was learning to speak. The other children’s parents reported they never (53%) or rarely (31%) used SSD. None of the parents reported consciously using gestures to support their speech when they communicated with their child. One child was reported to experience balance problems. Two children had both Autism Spectrum Disorder (ASD) and Attention Deficit Hyperactivity Disorder (ADHD), and one child was born 12 weeks premature. Two children’s parents did not return the questionnaire, so we have no information on additional diagnoses or on the use of signs at home.

Hearing children

The hearing children (mean age 10;10 years, SD = 8.45 months) were recruited via their schools. There were 20 girls and 18 boys. They all attended mainstream primary education and had no knowledge of SLN. They had typical hearing and had no known learning or language disorders. None had a diagnosis of either ASD or dyslexia. Dyslexic children were excluded because the pseudowords used in the present study were taken from a list of pseudowords that is used to test progress in the reading abilities of dyslexic children in some mainstream schools. The home language of 15 children (39%) was only Dutch. Ten children (26%) spoke a Dutch dialect (Limburg dialect) in addition to Dutch, and five children (13%) spoke Dutch and a foreign language at home. One child (3%) spoke only a Dutch dialect at home (Limburg dialect) and four children (11%) used only a foreign language at home. There are no data on home language for three children (8%).

Materials

We used the same materials as those used by van Berkel-van Hoof et al. (2016), creating highly controlled, narrow conditions for word learning: no context other than pictures, and unfamiliar words and objects to be learned. There were 20 pictures of aliens per child. Each picture was accompanied by a short video clip in which the pseudoword (and, for half of the words, a pseudosign) was produced. The words were embedded in a spoken carrier phrase (Kijk, een X! ‘Look, an X!’), in which the sign was produced at the same time as the target word. No signs other than the sign for the target word accompanied the spoken carrier phrase. The children were asked to repeat the words and signs during training, and they were tested for their receptive knowledge of the target words during testing (see below). E-Prime 2.0 was used to run the experiment on a laptop computer. The children’s responses, accuracy and reaction times (RTs) were recorded.

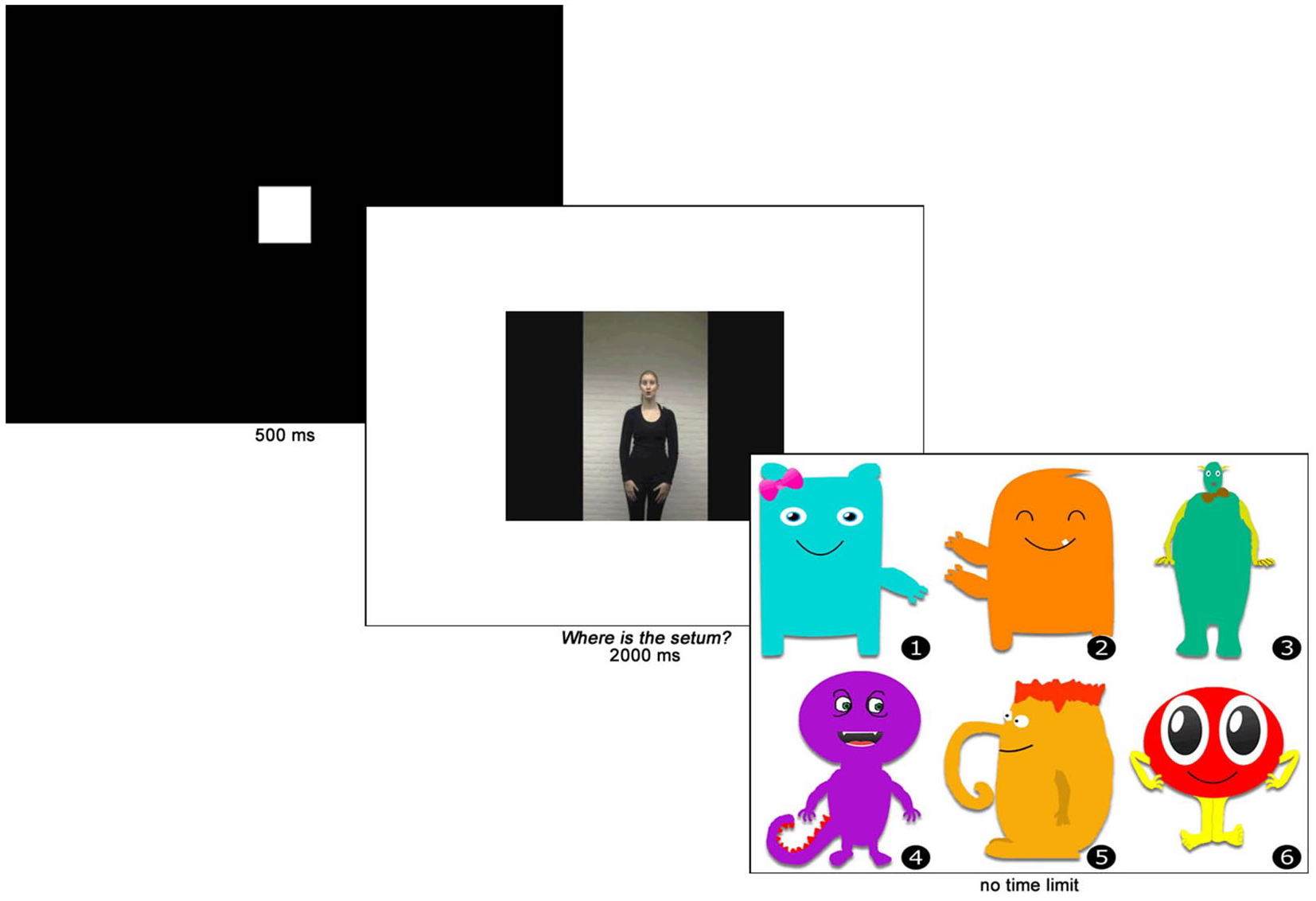

Pictures

The aliens in the pictures had friendly faces and bright colours (see Figure 1 for examples). They all had a characteristic that made them unique compared to the other aliens. The aliens were created in pairs such that the aliens in the Sign condition were comparable to those in the No Sign condition. For example, one alien pair consisted of an alien with only one arm, which was on its left side (a-picture; nr. 1 in Figure 1). The other alien in this pair had two arms, which were both on its right side (b-picture; nr. 2 in Figure 1). All other aliens had one arm on each side of their body. Pictures were counterbalanced across participants, such that half of the children saw the a-pictures with a sign and half saw the b-pictures in this condition.
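The counterbalancing described above can be sketched in a few lines of Python. This is an illustration only, not the script used in the study (the experiment was run in E-Prime 2.0). It assumes that the 20 pictures form 10 pairs, each with one a-picture and one b-picture, and that children are assigned alternately to the two counterbalancing lists; the function name, the picture labels such as `1a`, and the alternation by participant index are all hypothetical.

```python
def assign_conditions(participant_index, n_pairs=10):
    """Return a {picture_label: condition} mapping for one child.

    Assumption (not stated in the text): children with an even index see the
    a-pictures with a sign, children with an odd index see the b-pictures
    with a sign. Labels such as "1a" and "1b" are hypothetical.
    """
    sign_version = "a" if participant_index % 2 == 0 else "b"
    no_sign_version = "b" if sign_version == "a" else "a"

    assignment = {}
    for pair in range(1, n_pairs + 1):
        # Within each pair, one picture is trained with a sign, the other without.
        assignment[f"{pair}{sign_version}"] = "Sign"
        assignment[f"{pair}{no_sign_version}"] = "No Sign"
    return assignment


if __name__ == "__main__":
    for child in (0, 1):
        conditions = assign_conditions(child)
        print(child, conditions["1a"], conditions["1b"])
```

Under these assumptions every child sees 10 pictures in each condition, and each picture appears in the Sign condition for half of the children.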

Figure 1. Test trial of the word learning task.

Pseudowords

The pseudowords were based on pseudowords from a Dutch test of reading skills for 6- to 13-year-old children (van den Bos et al., 1994). All words were disyllabic and were stressed on the first syllable. The selected words had, or were changed into, a CVCV or CVCVC structure (10 words each). The words were assigned to the pictures randomly. However, the CVCV and the CVCVC words were evenly divided over the a- and b-pictures.

Pseudosigns

Iconic pseudosigns were created to match the pictures. Each sign depicted a unique aspect of the alien it referred to. For example, in the pair with an alien with a single arm and an alien with both arms on the same side, the signs referred to the single arm and the arms on one side, respectively. We used iconic signs, because previous research found that iconic manual gestures versus arbitrary gestures yielded higher accuracy in a word learning task (e.g. Macedonia et al., 2011) or larger activation of the semantic network as measured by blood-oxygen-level-dependent functional magnetic resonance imaging (Krönke et al., 2013). An experienced SLN teacher and interpreter aided in the creation of the signs. She ensured they adhered to the phonotactic parameters of SLN without being existing signs. The person who had co-created the signs produced the signs and words in the videos for the experiment. She spoke standard Dutch with a mild accent from the southern province Limburg and she had learned SLN as an adult.

Babble noise

Babble noise was added to the videos in the training phase for the hearing children. This condition was created to investigate whether temporarily limited access to the speech signal (i.e., the presence of babble noise for hearing children) would yield an effect of signs on spoken word learning, as compared to prolonged experience with limited auditory access (i.e., being DHH). The noise consisted of five male and five female voices from an audiobook for children (Radboud University & Rubinstein, 2011). The volume of the noise was adjusted so that the sound level of the target words was 2.5 dB higher than that of the noise. We chose this difference based on Obermeier et al. (2012), who found that it still allowed adult participants to correctly recognise speech in their native language, whereas no difference in level between speech and noise yielded significantly lower results for repeating target words (98% and 88%, respectively) and complete sentences (88% and 72%). Because the children in our experiment needed to learn new words, we wanted to use a noise level that would increase the difficulty of recognising words without disrupting listening comprehension too much. We therefore selected this speech/noise ratio of +2.5 dB.
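As an illustration of what a speech/noise ratio of +2.5 dB implies, the sketch below scales a noise signal relative to a speech signal. It assumes that level is measured as root-mean-square (RMS) amplitude, which the description above does not specify; the function names and the toy signals are hypothetical and are not the materials used in the experiment.

```python
import math

def rms(samples):
    """Root-mean-square amplitude of a sequence of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def scale_noise_to_snr(speech, noise, snr_db=2.5):
    """Scale `noise` so that the speech RMS lies `snr_db` dB above the noise RMS.

    Assumption: level is defined as RMS amplitude, so a difference of x dB
    corresponds to an amplitude ratio of 10 ** (x / 20).
    """
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / rms(noise)
    return [n * gain for n in noise]

def measure_snr_db(speech, noise):
    """Speech/noise ratio in dB, based on RMS amplitudes."""
    return 20 * math.log10(rms(speech) / rms(noise))

# Toy signals standing in for a target word and the babble track.
speech = [math.sin(2 * math.pi * 5 * t / 200) for t in range(200)]
noise = [0.3 * math.sin(2 * math.pi * 37 * t / 200 + 1.0) for t in range(200)]

scaled = scale_noise_to_snr(speech, noise)
print(round(measure_snr_db(speech, scaled), 2))
```

Under this assumption, +2.5 dB corresponds to a speech amplitude roughly 1.33 times that of the noise.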

Verbal short-term memory

Verbal STM was measured by an adaptation of a standardised forward digit span task (Wechsler, 2003). We used only those numbers that have one syllable in Dutch. We made this adaptation because the current study is part of a larger umbrella project, in which children with Developmental Language Disorder (DLD) also perform this task. Since the number of syllables in a word influences the ability of children with DLD to repeat it (Parigger & Rispens, 2010), we decided to exclude disyllabic numbers (i.e. numbers 7 and 9). This left eight numbers, meaning that a span of eight was the maximum score that could be obtained in this task. The experimenter read out random sequences of numbers at a calm speech rate (approximately 1 second per digit) from a sheet of paper and monitored the child’s oral response.

Procedure

The experiment consisted of four sessions in one week. Each session took approximately 20 minutes. In the first session, the children were trained on the words without a test. In the second and third sessions, the children were tested on their receptive word knowledge before receiving the same training as in the first session. The fourth session consisted of the test of the words followed by the cognitive tasks. Because we used non-existing objects and pseudowords, the children had no prior knowledge of either the words or the objects. The children sat in front of a laptop. The experimenter sat next to them and operated the computer. The procedures of the training and test phases of the word learning task and that of the cognitive task are explained separately below.

Training

Each training began with a display of all pictures divided over five screens. There was no time limit to this part of the training. After having viewed the pictures of the aliens, the children started with four practice trials before the experimental trials commenced. The items in these trials did not appear in the experimental blocks.

The training consisted of four repetitions of 20-trial blocks. Each block was subdivided into a block of 10 words with a sign and 10 without a sign. In case of the Sign Condition, a trial started with a black screen with a white plus, followed by the simultaneous display of a picture (on the left-hand side of the screen) and a video clip with the word and sign. The children then had approximately 4 seconds to repeat the word and sign. The trials in the No Sign Condition started with a white minus and then followed the same procedure as the trials in the Sign Condition, except for repeating a sign. The children were told that the signs were there to help them remember the words. Each sub-block of 10 trials was followed by a 4-second break.

To avoid an effect of order of either Signs first or No Signs first, we created two orders. In order 1, the children began with the Sign Condition and in order 2 the No Sign Condition was the first one in the training. Children with an odd participant number participated in order 1, children with an even participant number participated in order 2. In order 1 the a-items (e.g. nr. 1 in Figure 1) were accompanied by a sign and in order 2 the b-items (e.g. nr. 2 in Figure 1).
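The assignment rule described above can be summarised in a few lines. This is only a sketch of the counterbalancing logic; the function name and the returned field names are hypothetical.

```python
def training_order(participant_number):
    """Map a participant number onto the training order described in the text.

    Odd numbers: order 1 (Sign Condition first, a-items taught with a sign).
    Even numbers: order 2 (No Sign Condition first, b-items taught with a sign).
    """
    if participant_number % 2 == 1:
        return {"order": 1, "first_condition": "Sign", "signed_items": "a"}
    return {"order": 2, "first_condition": "No Sign", "signed_items": "b"}

for number in (1, 2, 3, 4):
    print(number, training_order(number))
```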

Test

The test phase measured the children’s receptive knowledge of the pseudowords (see Figure 1). The trials commenced with a white square on a black background. The same person from the training videos asked the children Waar is de X? (‘Where is the X?’) via a new set of video clips, in which no sign was present. This question was followed by a screen that displayed six pictures of aliens. The children chose their answer by pressing a number on the keyboard. There was no time limit on this task. After the children selected their answer, the next trial started automatically. There was a 4-second break after every five trials. RT in milliseconds was recorded by E-Prime.

Verbal short-term memory task

After the test on the fourth day, the children performed a digit span task to measure their short-term memory. The children were asked to repeat a sequence of numbers in the same order as they heard it. It was explained to the children that they would start with a sequence of two numbers and that the sequences would become longer as the task progressed. There was one practice sequence before the experimental trials commenced. In the first experimental trial, a sequence of two digits needed to be repeated. Each trial consisted of two (different) attempts. If at least one of the attempts was correct, the child moved on to the next sequence, which had three digits. This procedure continued until the children failed to repeat either of the two sequences of a given length correctly or had finished the sequences of eight digits, which were the final sequences. The score for this task was the maximally achieved span.
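The stopping rule and scoring can be sketched as follows. The data layout (a mapping from sequence length to the outcome of the two attempts) is hypothetical, and the score of 0 for a child who fails both two-digit sequences is an assumption, since the text does not describe that case.

```python
def digit_span_score(results, min_length=2, max_length=8):
    """Return the longest sequence length at which at least one attempt was correct.

    `results` maps a sequence length to a pair of booleans, one per attempt.
    Testing stops at the first length where both attempts failed.
    Assumption: a child who fails both two-digit attempts scores 0.
    """
    span = 0
    for length in range(min_length, max_length + 1):
        attempts = results.get(length)
        if attempts is None or not any(attempts):
            break  # both attempts failed (or the length was never administered)
        span = length
    return span

# One attempt correct at length 3, both attempts failed at length 4.
example = {2: (True, True), 3: (False, True), 4: (False, False)}
print(digit_span_score(example))  # 3
```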

Analyses

After a power analysis, we decided to analyse the data using SPSS 19 (IBM, 2010). The data distribution was normal in all variables and sphericity could be assumed in most analyses. If the assumption of sphericity was violated, the Greenhouse–Geisser ε was checked. If this was below .75, the Greenhouse–Geisser correction was applied to the values and if it was above .75, we used the Huynh–Feldt correction for the results. For our research question concerning the effect of signs on word learning, a Repeated Measures (RM) ANOVA was conducted with Group (DHH, hearing) as between-subjects factor and Condition (Sign, No Sign) and Measurement (M1–M3) as within-subject factors. To answer our research question on the influence of cognitive abilities on a sign effect, we conducted an RM ANCOVA with the performance on the cognitive task as a covariate to investigate a relation between this task and the factor Sign.
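The decision rule for the sphericity correction can be written out as a small function. This is a sketch of the rule only, not of the SPSS procedure; the function name is hypothetical, and treating an ε of exactly .75 as a Huynh-Feldt case is an assumption, because the text covers only values below and above .75.

```python
def choose_sphericity_correction(sphericity_violated, gg_epsilon, threshold=0.75):
    """Select the correction for a within-subject effect.

    No correction if sphericity holds; Greenhouse-Geisser if epsilon is below
    the threshold; Huynh-Feldt otherwise.
    Assumption: epsilon exactly equal to the threshold is treated as Huynh-Feldt.
    """
    if not sphericity_violated:
        return "none"
    if gg_epsilon < threshold:
        return "Greenhouse-Geisser"
    return "Huynh-Feldt"

print(choose_sphericity_correction(True, 0.68))   # Greenhouse-Geisser
print(choose_sphericity_correction(True, 0.83))   # Huynh-Feldt
print(choose_sphericity_correction(False, 0.95))  # none
```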

Results

Descriptive statistics

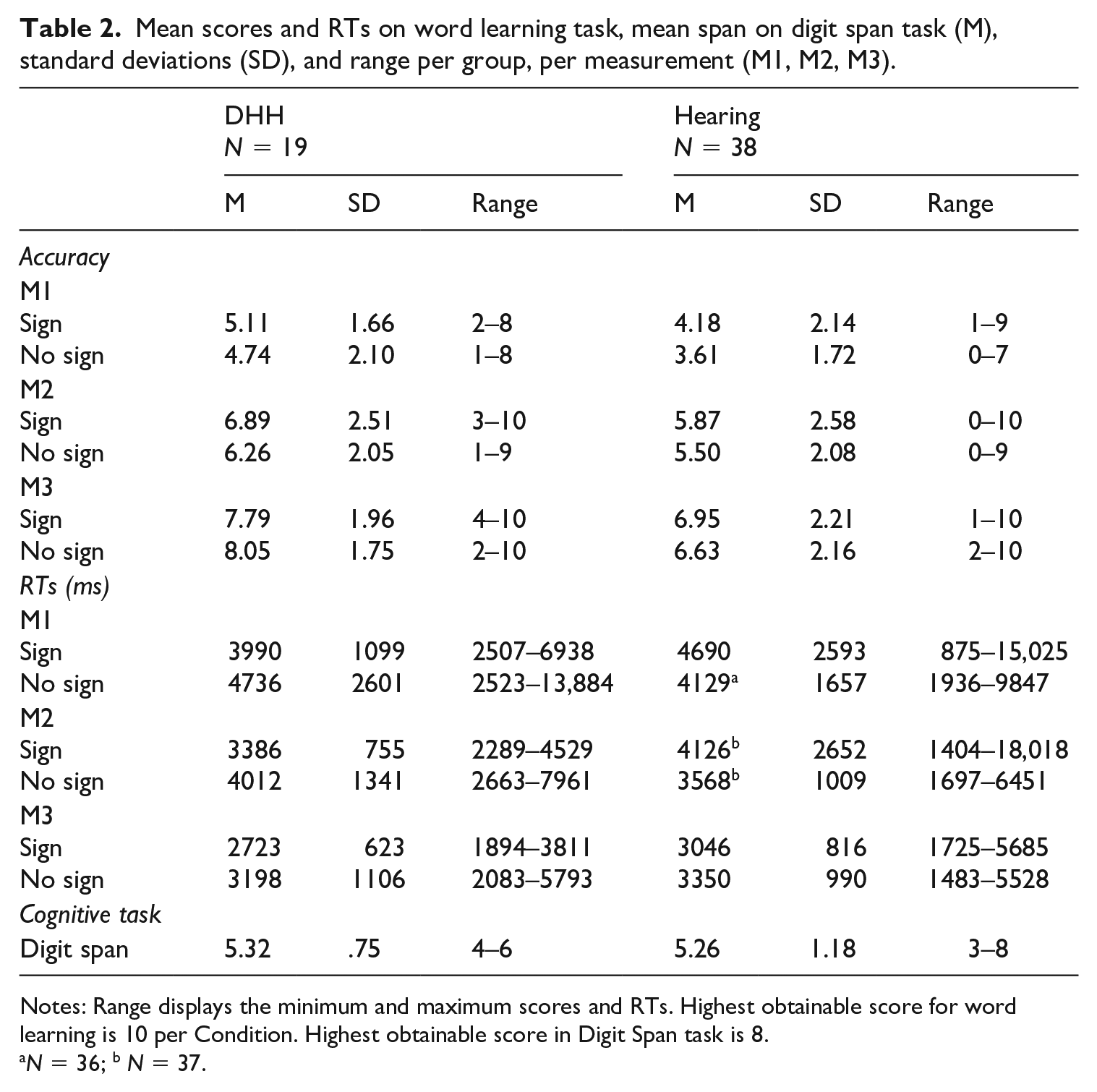

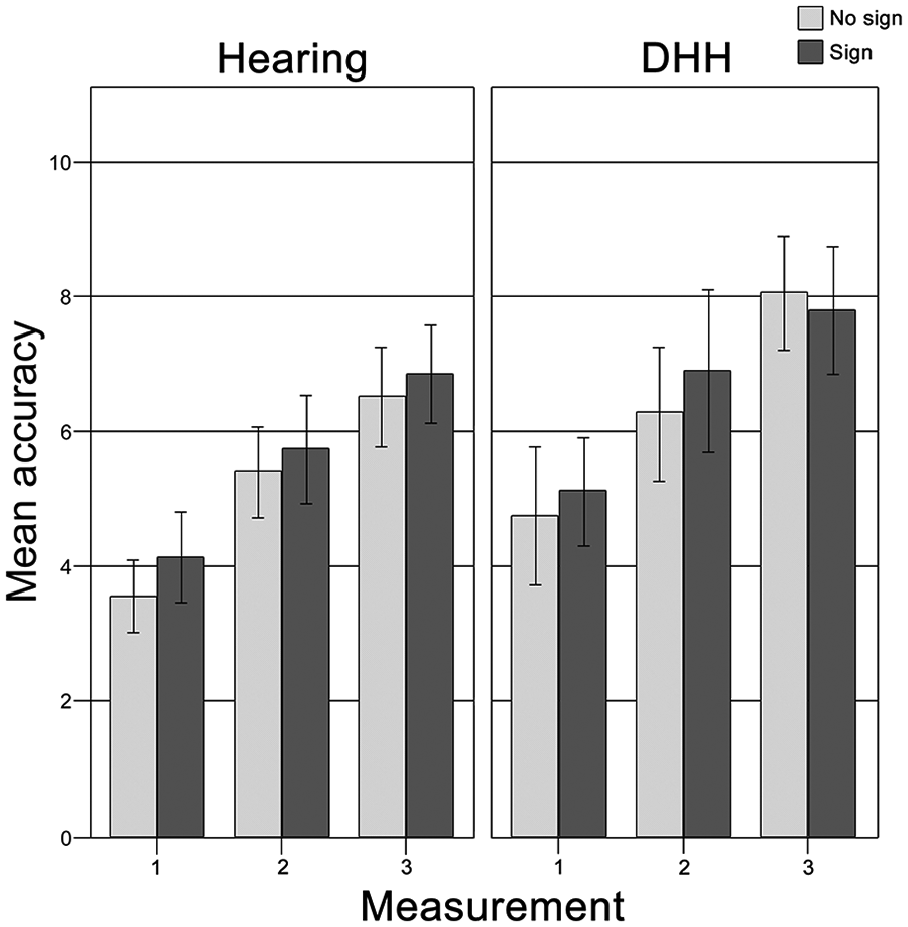

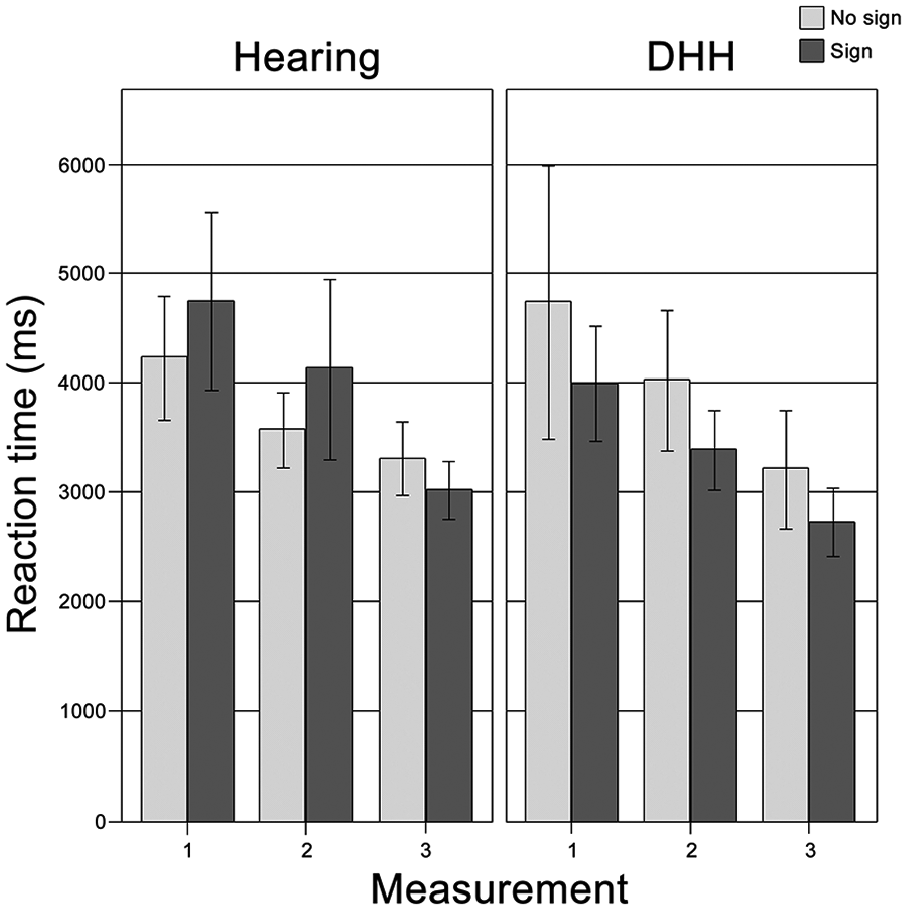

The mean accuracy and RTs obtained in the word learning task are displayed in the upper parts of Table 2 and in Figures 2 and 3. The numbers per measurement, per condition are shown for the groups separately. The mean RTs per child were calculated by selecting RTs of responses that were correct. Furthermore, RTs were discarded if they were either two standard deviations (SDs) below or above the participant’s mean RT or the item’s mean RT in a measurement. This led to the exclusion of four participants in the hearing group from the RM ANOVA (three participants had a score of 0 in a condition in one of the measurements; one had a score of 1 in one case, but the RT was two SDs above the mean RT on that item). The mean performance per group on the cognitive measure is displayed in the bottom part of Table 2.
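The RT selection described above can be sketched as follows. Several details are assumptions rather than statements from the text: that both the participant mean and the item mean are computed within a measurement and over correct responses only, that the sample standard deviation is used, and that cells with fewer than two RTs are left untrimmed. The trial fields are hypothetical names.

```python
from collections import defaultdict
from statistics import mean, stdev

def trim_rts(trials, n_sd=2.0):
    """Keep correct trials whose RT lies within n_sd SDs of both reference means.

    Each trial is a dict with keys: participant, item, measurement, rt, correct.
    Assumptions: means and SDs are computed per measurement over correct trials,
    and cells with fewer than two RTs are not trimmed.
    """
    correct = [t for t in trials if t["correct"]]
    by_participant = defaultdict(list)
    by_item = defaultdict(list)
    for t in correct:
        by_participant[(t["participant"], t["measurement"])].append(t["rt"])
        by_item[(t["item"], t["measurement"])].append(t["rt"])

    def is_outlier(rt, values):
        if len(values) < 2:
            return False  # no SD can be estimated from a single value
        return abs(rt - mean(values)) > n_sd * stdev(values)

    return [
        t for t in correct
        if not is_outlier(t["rt"], by_participant[(t["participant"], t["measurement"])])
        and not is_outlier(t["rt"], by_item[(t["item"], t["measurement"])])
    ]
```

A per-child mean RT for each condition would then be computed over the trials that remain; a child with no remaining trials in a condition has no mean RT for that cell.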

Mean scores and RTs on word learning task, mean span on digit span task (M), standard deviations (SD), and range per group, per measurement (M1, M2, M3).

Notes: Range displays the minimum and maximum scores and RTs. Highest obtainable score for word learning is 10 per Condition. Highest obtainable score in Digit Span task is 8.

a N = 36; b N = 37.

Mean accuracy on the word learning task per group, per measurement.

Mean reaction time on the word learning task per group, per measurement.

Effects of signs on word learning

An RM ANOVA on the accuracy of all children showed a main effect of Measurement (F(2, 110) = 109.92, p < .001, η² = .666), but not of Sign. The number of correctly recognised items increased significantly over time, but the presence of signs did not influence the accuracy on the word learning task (see top half of Table 2 and Figure 3). There were no interaction effects, but there was a significant main effect of Group (F(1, 55) = 4.61, p = .036, η² = .077). A pairwise comparison (Bonferroni adjusted for multiple comparisons) showed that the DHH children had learned significantly more words than the hearing children (means of 6.47 and 5.46, respectively; p = .036). The mean difference between the groups was 1.02 (SD = .47).

The RM ANOVA on the RTs displayed a significant main effect of Measurement (F (1.66, 84.55) = 16.44, p < .001, η² = .244). The children’s RTs were faster as time progressed. We found no significant main effect of either Group or Sign. This indicates that, overall, both groups reacted equally fast and that the RTs were similar in the condition with a sign and the one without one. We found a significant interaction effect of Sign × Group (F (1, 51) = 8.05, p = .007, η² = .136), indicating that the effect of signs on the RTs was different for the DHH children compared to the hearing children. We therefore conducted separate analyses of the sign effect per group.

The DHH children’s mean RT for the Sign condition (over Measurements) was 3366 ms and the mean RT for the No Sign condition was 3982 ms. The RM ANOVA showed a significant main effect of Sign (F (1, 18) = 5.91, p = .026, η² = .247). A pairwise comparison (Bonferroni adjusted for multiple comparisons) showed that the DHH children responded significantly more quickly to correct items with a sign than those without one. There were no interaction effects for this group.

The hearing children did not show a sign effect (F (1, 33) = 2.97, p = .094, η² = .083). The mean RT for the Sign condition (over Measurements) was 4000 ms and the mean RT for the No Sign condition was 3620 ms. Furthermore, there were no interaction effects.
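The effect sizes reported for the two groups can be checked against the F values and degrees of freedom. The sketch below assumes that the reported η² is partial eta squared, which is what SPSS reports for these analyses but which the text does not state explicitly; the function name is hypothetical.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# Sign effect on RTs for the DHH children: F(1, 18) = 5.91
print(round(partial_eta_squared(5.91, 1, 18), 3))  # 0.247
# Sign effect on RTs for the hearing children: F(1, 33) = 2.97
print(round(partial_eta_squared(2.97, 1, 33), 3))  # 0.083
```

Both values match the effect sizes reported above, which is consistent with the assumption that partial eta squared was used.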

Relation between cognition and sign effect

The bottom row of Table 2 displays the mean scores on the cognitive task that measured verbal STM (Digit Span). An RM ANCOVA was conducted for the DHH children to determine whether there was an interaction between the main effect of Sign and Digit Span, using Z-scores on the Digit Span task. We created Z-scores per age group of the entire group of children (9-year-olds, 10-year-olds and 11-year-olds) to control for age. We calculated Z-scores so as not to include age as a second covariate, which would have decreased the power of our analysis. The ANCOVA displayed no significant interaction between verbal STM and the sign effect in RTs (F (1, 17) = .47, p = .504, η² = .027).
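Standardising the Digit Span scores within age groups can be sketched as follows. The data layout and function name are hypothetical, the scores in the usage example are invented for illustration, and the use of the sample standard deviation is an assumption.

```python
from collections import defaultdict
from statistics import mean, stdev

def z_scores_by_age(children):
    """Standardise digit span within each age group.

    `children` is a list of (child_id, age_in_years, digit_span) tuples.
    Assumption: the sample SD is used; each age group needs at least two
    children with differing scores for the Z-score to be defined.
    """
    spans_by_age = defaultdict(list)
    for _, age, span in children:
        spans_by_age[age].append(span)

    z_scores = {}
    for child_id, age, span in children:
        group = spans_by_age[age]
        z_scores[child_id] = (span - mean(group)) / stdev(group)
    return z_scores

# Invented scores, for illustration only.
sample = [("a", 9, 4), ("b", 9, 6), ("c", 10, 5), ("d", 10, 7), ("e", 10, 6)]
print(z_scores_by_age(sample))
```

Because each child is compared only with children of the same age, the resulting covariate carries no between-age differences in mean span.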

We also checked whether Digit Span was related to word learning without a sign for all children, to compare this to previous literature in which a correlation of verbal STM and word learning has been reported. A partial Pearson correlation controlling for age with the accuracy on only the final measurement of the No Sign condition indicated a significant positive correlation (r2 = .390, p = .003) between word learning in our experiment and performance on the Digit Span task. This thus replicates previous findings that verbal STM is related to first language acquisition (e.g. Archibald, 2017), indicating that our word learning paradigm and adapted version of the Digit Span task (Wechsler, 2003) function as expected.

Discussion

The present study compared oral DHH children, and hearing children in a condition with babble noise in order to investigate whether prolonged experience with limited auditory access is required for a sign effect to occur. Furthermore, we investigated a relation between a sign effect and STM skills. Our experiment focused on highly controlled, narrow conditions for word learning: no context other than pictures was present and none of the children used sign language. Also, whereas DHH children’s limited auditory access is a constant factor for them, a condition of babble noise for hearing children was an experimentally manipulated adverse listening condition. We found a positive effect of signs on the DHH children’s reaction times. They responded faster to correctly recognised words they had been taught with a sign than those without one. Moreover, the DHH children had learned more words overall than the hearing children. However, there was no effect of signs on word learning accuracy for either group of children and the hearing children responded equally fast to items they had learned with and without a sign. Furthermore, we found no evidence for a relation between the sign effect for the DHH children and the cognitive task as a covariate.

Previous studies reported a positive effect of augmentative signs on word learning for signing DHH children (Mollink et al., 2008; van Berkel-van Hoof et al., 2016). We found that non-signing DHH children displayed a positive effect of signs on response times to newly learned words, indicating that current sign language knowledge is not required for a sign effect to occur. It is possible that this effect is caused by multimodal stimuli (be they signs or gestures) increasing the number of semantic features stored in the mental lexicon for a given concept (Paivio, 2010; Paivio et al., 1988). Indeed, Kelly (2017, pp. 247–248) suggests that gestures’ role in spoken language learning is that they ‘deepen sensorimotor traces and make long-term memories for newly learned linguistic items less likely to decay’. This probably also applies to augmentative signs. Furthermore, Vigliocco and Vinson (2011, p. 201) emphasised that word meaning is grounded in embodied experience as ‘concrete aspects of our interaction with the environment (sensory-motor features) are automatically retrieved as part of sentence comprehension’. This is also in line with connectionist models of word learning (Plunkett et al., 1997) and word retrieval (Capone & McGregor, 2005) and with the developmental Bilingual Interactive Activation model (Grainger et al., 2010). Thus, the iconic signs in our study may have aided storage of semantic features of the aliens for the DHH children, thereby creating more memory traces and a more elaborate representation of the concept and its connections to lexical labels in the mental lexicon. This may have caused a better consolidation (and thus faster recognition) of the words in the Sign condition for the DHH children. Furthermore, the sign effect seemed sufficiently robust to be unrelated to cognitive abilities, although more research with a larger participant group is required to confirm this.

Interestingly, we found no sign effect for accuracy with the DHH children. This contrasts with earlier studies on sign effects on first language word learning by DHH children (Mollink et al., 2008; van Berkel-van Hoof et al., 2016). It is possible that the null-effect in our study was the result of a ceiling effect (score of 9 or 10 on both conditions) for a relatively large proportion of the DHH children (3 out of 19 children [16%] in Measurement 2, and 6 out of 19 [32%] in Measurement 3), whereas, respectively, only 3% and 11% of the hearing children (1 and 4 out of 38) reached ceiling.

Regarding the hearing children, we replicated our previous finding that hearing 9- to 11-year-olds learned as many words with a sign as without one (van Berkel-van Hoof et al., 2016), even in a condition with babble noise. We suggest that DHH children may be more adept than hearing peers at using visual information as additional cues or scaffolds to vocabulary learning to compensate for less-than-optimal auditory input, even if they do not use sign language (anymore). Indeed, Pierce et al. (2017) stated that sequential bilingual children who have missed the window of opportunity for optimal development of verbal WM (age 0–1) for the ambient language have been found to activate additional brain areas compared to monolingual peers when performing an n-back task. These brain areas are associated with ‘nonverbal memory and cognitive control processes’ (p. 1274), thus suggesting that these parts of the brain support non-verbal language learning mechanisms such as using visual cues.

Alternatively, the level of noise may have been so high that the task became too challenging for a sign effect to occur for hearing children and that the children’s ability to use augmentative signs for word learning was impeded by the babble noise. We did not compare different speech/noise ratios or conduct a pilot with different levels of noise to test this hypothesis. However, we argue that while we cannot be certain that a lower level of noise would provide the same results, it seems unlikely that the hearing children were impeded in using signs for word learning. If that had been the case, their results in the Sign Condition should have been poorer than in the No Sign Condition, but we found no difference between the Conditions. Furthermore, a previous study with the same materials in normal sound conditions found no sign effect for hearing children (van Berkel-van Hoof et al., 2016). So, when there is no extra challenge from added babble noise, hearing children do not learn more or fewer words in a Sign condition compared to a No Sign Condition. Future research might investigate if there is a cut-off point of a speech/noise ratio in which hearing children do benefit from manual stimuli for spoken word learning, like hearing adults in a noise condition do for speech comprehension in their native language (Obermeier et al., 2012).

Additionally, we found that the DHH children in our study correctly recognised more words than the hearing children. This suggests that the negative impact of limited auditory access on spoken word learning may have been bigger for the hearing children. The hearing children seemed unable to adjust to the limited auditory access in the short period of three training sessions. This is in line with studies on school-aged children’s speech comprehension in noise. It has been found that background noise causes a drop in speech comprehension (Rudner et al., 2018) as well as in performance on school work or auditory WM tasks (Shield & Dockrell, 2003; Sullivan et al., 2015). Thus, hearing children’s speech comprehension, and by extension their ability to learn new words in a spoken language, is significantly impeded by background noise.

Furthermore, the fact that the hearing children in our study learned fewer words than the DHH children shows that the manipulation of the babble noise worked. That is, the babble noise proved to be significantly disruptive for word learning. We can therefore conclude that the lack of effect for the hearing children was not caused by too high a speech/noise ratio, making the task too easy. It is possible, however, that the babble noise created a larger limitation to the target words than did the DHH children’s limited sound perception. This may have caused the lower number of words learned by the hearing children. Future research could include tests of hearing level of stimuli similar to those used in the experiment for all participants to ensure comparable conditions of limited auditory access.

Of course, this study can only be seen as a first attempt to investigate a sign effect on spoken word learning for oral DHH children. We aimed to find children who had had as little exposure to sign language as possible. Nevertheless, our DHH group included participants who had been exposed to sign language or sign-supported speech earlier in life. Because the subgroups were relatively small, we did not conduct statistical analyses per subgroup to measure the effect of sign language exposure on a sign effect. Furthermore, while we measured verbal STM, we did not administer measures of spoken language skills, such as vocabulary size. As reported by Gershkoff-Stowe and Hahn (2007), children with smaller vocabularies perform more poorly on word learning tasks, and DHH children often have smaller vocabularies than their hearing peers (e.g. Blamey, 2003; Coppens et al., 2012; Marshall et al., 2018). Connecting such measurements to results of a spoken word learning task with manual stimuli would be interesting to include in future research. Additionally, we have no data on socio-economic status and parental education levels, or on the input received by the children (either DHH or hearing). It is possible that the input between the groups differs regarding, for example, quantity and quality of spoken language used by the parents of DHH vs hearing children, as was found by Ambrose et al. (2015) for 3-year-old hard-of-hearing children. There may also be differences in parents’ use of gestures, although, as we reported in the Method, parents of the DHH children did not report consciously using gestures when they were communicating with their children, suggesting they may not use more gestures than do the parents of the hearing children. However, it would be interesting to consider gestural input from a child’s environment in future research. Finally, we investigated only iconic signs. It is therefore not clear if the results can be generalised to different kinds of signs.

Conclusion

In conclusion, our results show that oral, non-signing DHH children benefit from augmentative signs for their response time in spoken word learning. This suggests that the signs created additional (semantic) memory traces compared to speech alone (Kelly, 2017; Paivio, 2010). Furthermore, our results indicate that the sign effect found by Mollink et al. (2008) and van Berkel-van Hoof et al. (2016) was not influenced by active knowledge of sign language, because the DHH children in our study did not use SLN or Sign-Supported Dutch, and their proficiency in SLN was described as ‘none’. Experience with limited auditory access to the speech signal may influence a sign effect for spoken word learning, because hearing children did not benefit from signs in a condition of babble noise. Finally, 9- to 11-year-old DHH children did not show a relation between performance on the cognitive task and a sign effect. This suggests that the effect of signs on word learning is not related to children’s verbal STM abilities, although more research with a larger participant group is required to confirm this.

Footnotes

Author contributions

Corresponding author:

Ms. Lian van Berkel-van Hoof: Conceptualisation, Data curation, Formal analysis, Funding acquisition, Investigation, Methodology, Project administration, Visualisation, Writing – original draft, Writing – review and editing

Co-authors:

Dr Daan Hermans: Conceptualisation, Funding acquisition, Methodology, Supervision, Writing – review and editing

Dr Harry Knoors: Conceptualisation, Funding acquisition, Methodology, Supervision, Writing – review and editing

Dr Ludo Verhoeven: Conceptualisation, Methodology, Supervision, Writing – review and editing

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Fonds Instituut voor Doven and Steunstichting HD Guyot (Joint Grant, assigned to H.K. and L.vB.-vH. on 4 December, 2012).