Abstract

Quantitative risk estimates from exposure to ionizing radiation are dominated by analysis of the one-time exposures received by the Japanese survivors at Hiroshima and Nagasaki. Three recent epidemiologic studies suggest that the risk from protracted exposure is no lower, and in fact may be higher, than from single exposures. There is near-universal acceptance that epidemiologic data demonstrate an excess risk of delayed cancer incidence above a dose of 0.1 sievert (Sv), which, for the average American, is equivalent to 40 years of unavoidable exposure from natural background radiation. Model fits, both parametric and nonparametric, to the atomic-bomb data support a linear no-threshold model that extends below 0.1 Sv. On the basis of biologic arguments, the scientific establishment in the United States and many other countries accepts this dose model down to zero dose, but there is spirited dissent. The dissent may be irrelevant for developed countries, given the increase in medical diagnostic radiation that has occurred in recent decades: a sizeable percentage of their populations will receive cumulative doses from the medical profession in excess of 0.1 Sv, making talk of a threshold or other sublinear response below that dose moot for future releases from nuclear facilities or a dirty bomb. The risks from both medical diagnostic doses and nuclear accident doses can be computed using the linear dose-response model, with uncertainties assigned below 0.1 Sv in a way that captures alternative scientific hypotheses. Then the important debates over low-level radiation exposures, namely planning for accident response and weighing the benefits and risks of technologies, can proceed with less distraction. One of the biggest paradoxes in the low-level radiation debate is that an individual risk can be a minor concern, while the societal risk (the total number of delayed cancers in an exposed population) can be of major concern.


To those outside the scientific community, it might come as a surprise that until 2005, essentially no large-scale epidemiologic studies directly demonstrated the existence of risks from protracted radiation exposures, the kind that would be expected from releases from nuclear reactors or dirty bombs. Historically, atomic-bomb data, which describe the one-time exposures of Hiroshima and Nagasaki survivors, have dominated quantitative risk estimates for any type of low-level exposure to ionizing radiation, including protracted exposure. The standard approach to estimating risk from long-term exposure has been to use biologic arguments (that is, concepts of cancer initiation, promotion, and repair, coupled with data from cellular experiments) to conclude that long-term exposure entails lower risk than sudden exposure, by a factor of two to ten (Healy, 1981); only in the last decade has the scientific community unofficially settled on a factor of two (Preston, 2011; UNSCEAR, 2006).

Presumably, a factor of two would have had a rather minor impact on policy, bringing a quick end to this portion of the story on low-dose radiation risks. No such luck. In fact, there has been, and continues to be, considerable debate among members of the scientific community, political and industry leaders, and the public over the claim that atomic-bomb data are relevant to estimating risks from protracted exposures. This debate has contributed to the delay in updating some US regulatory dose limits that are based on a pre-1990 understanding of radiation risks.

Three new studies could very well change the balance of the debate, as knowledge of them—one on off-site citizens and two on radiation workers—percolates into the wider discussion.

Deconstructing the debate

The debate over radiation risks has many tentacles that extend into the fields of biology, epidemiology, medicine, sociology, and political science. The biggest tentacle penetrates directly into the political sphere, wrapping itself around arguments on energy policy and the consequences of radioactive releases like those at Chernobyl and the Fukushima Daiichi Nuclear Power Station. Quantitative estimates of health risks affect public policy, although sometimes it takes many decades before scientific studies affect regulations (see Brock and Sherbini, 2012, in this issue of the Bulletin). Likewise, it may take years before these studies trickle down to the public and industry stakeholders.

To some in the public, the quantitative aspects determined by experts are irrelevant; they argue that experts are not to be trusted and that the existence of any imposed risk of cancer is unacceptable. Others believe that evolution has provided humans with repair mechanisms that protect against natural background radiation; they reason that the radiation risk at doses close to the background is, in fact, zero. But for researchers in the field, and committees of scientists trying to reach consensus and assemble what amounts to a scientific jigsaw puzzle, reaching quantitative conclusions about risk is not so simple. Researchers cannot solely rely on gut instincts; they must analyze data, scrutinize their findings, and rigorously defend these findings.

Though the debate takes on many shapes, it always revolves around one magical number: 0.1 sievert (Sv), the dividing line between what is considered high and low exposure today. It is equivalent to about 40 cumulative years of the average unavoidable background radiation and to about 40 years of average medical diagnostic radiation in the United States (Einstein, 2009; NCRP, 2009). And from this magical number, more disputes spring, specifically over the radiation risks below 0.1 Sv, as well as the risks from protracted radiation exposures above and below this number. The debates can be brutal, so much so that at times they make the spats between William Jennings Bryan and Clarence Darrow look tame.
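Running the 40-year equivalence in reverse shows the average annual dose it implies. A minimal sketch, assuming the equivalence is a straight division of the cumulative dose by the number of years (the constant and function names are illustrative):

```python
# Arithmetic behind the "about 40 years" equivalence for 0.1 Sv.
# Assumption: a constant average annual dose, so cumulative dose = annual rate * years.

DIVIDING_LINE_SV = 0.1  # cumulative dose separating "low" from "high" exposure
YEARS_EQUIVALENT = 40   # years of average background (or medical) exposure, per the text


def implied_annual_msv(cumulative_sv: float, years: float) -> float:
    """Average annual dose, in millisieverts, implied by a cumulative dose over `years`."""
    return cumulative_sv * 1000.0 / years


def years_to_reach(cumulative_sv: float, annual_msv: float) -> float:
    """Years of exposure at a constant `annual_msv` needed to accumulate `cumulative_sv`."""
    return cumulative_sv * 1000.0 / annual_msv


background = implied_annual_msv(DIVIDING_LINE_SV, YEARS_EQUIVALENT)
medical = implied_annual_msv(DIVIDING_LINE_SV, YEARS_EQUIVALENT)  # same 40-year figure
print(f"implied average rate: {background:.1f} mSv per year")  # 2.5
combined_years = years_to_reach(DIVIDING_LINE_SV, background + medical)
print(f"background plus medical: 0.1 Sv in about {combined_years:.0f} years")  # 20
```

Because background and medical doses each run at roughly this rate, together they reach the 0.1 Sv line in about half the time, which is the arithmetic behind the later argument about medical exposures.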

One-time exposure above the dividing line

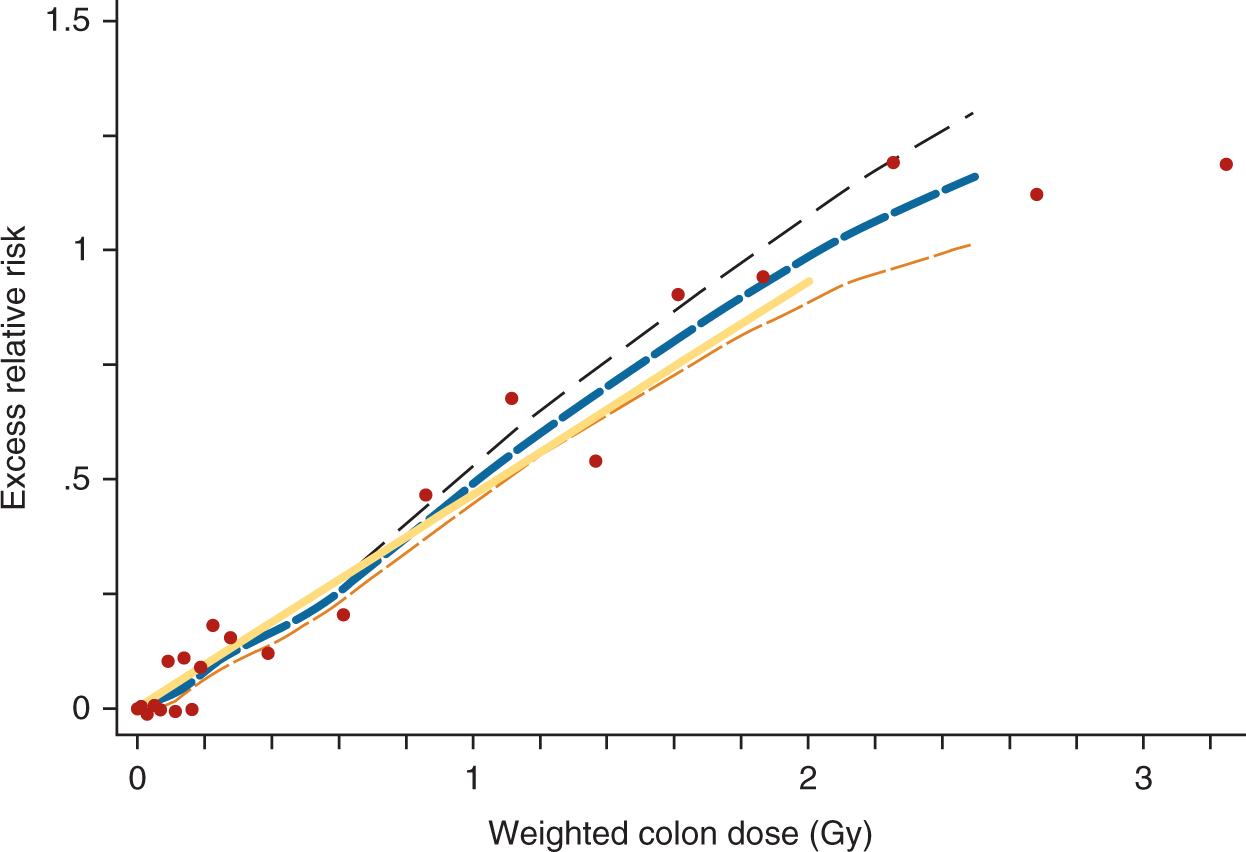

Since the United States dropped atomic bombs over Hiroshima and Nagasaki in 1945, it has worked with Japan to produce 14 periodic joint reports, known as Life Span Studies, on the fate of more than 80,000 bomb survivors. What makes these regular reports so formative—and why analysis of the atomic-bomb data continues to dominate quantitative risk estimates today—is that the vast data set, including regular follow-ups on the study population, has produced consistent epidemiologic results, providing little room for contention on the radiation risks related to acute exposures above 0.1 Sv. Despite some limitations (see Richardson, 2012, in this issue of the Bulletin), analysis of the atomic-bomb dose data presents a clear picture that a linear no-threshold (LNT) model—the theory that radiation risk declines in proportion to dose, but never goes to zero—holds for a large range of survivor doses (see Figure 1).
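In the excess-relative-risk (ERR) form commonly used for these data, the LNT model is a single proportionality, ERR = βD, where D is dose and β is the slope per sievert. The sketch below uses a hypothetical slope, not a fitted Life Span Study value, to show the two properties described above: risk falls in proportion to dose, and it reaches zero only at zero dose.

```python
# Linear no-threshold (LNT) model in excess-relative-risk form: ERR(D) = beta * D.
# BETA_PER_SV is a hypothetical placeholder, not a published estimate.

BETA_PER_SV = 0.5  # hypothetical ERR per sievert


def lnt_err(dose_sv: float, beta_per_sv: float = BETA_PER_SV) -> float:
    """Excess relative risk at `dose_sv` under the LNT model."""
    if dose_sv < 0:
        raise ValueError("dose must be non-negative")
    return beta_per_sv * dose_sv


def relative_risk(dose_sv: float, beta_per_sv: float = BETA_PER_SV) -> float:
    """Risk relative to an unexposed group: RR = 1 + ERR."""
    return 1.0 + lnt_err(dose_sv, beta_per_sv)


# Each tenfold cut in dose cuts the excess risk tenfold, but never to zero.
for dose in (1.0, 0.1, 0.01, 0.001):
    print(f"{dose:>6} Sv  ERR = {lnt_err(dose):.4f}  RR = {relative_risk(dose):.4f}")
```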

Figure 1. Solid cancer-incidence dose response for atomic-bomb survivors.

But atomic-bomb data, some argue, focus on high doses and are therefore irrelevant to the low-dose debate. However, as Figure 1 shows, the data set contains a wide range of doses, including those that are quite relevant to occupational exposure limits, as well as those that match accumulated background radiation and medical diagnostic doses, which are normally considered low. Bear in mind that these doses are in addition to the natural background radiation that the survivors had already received.

Over the decades, as new excess cancers have emerged in the atomic-bomb cohort at lower and lower doses, the number that defines “low dose” has shrunk fivefold to its current value of 0.1 Sv. At the same time, the estimated risk has risen tenfold since 1980, so it is little surprise that there is continuing concern about low-dose radiation. The natural question is: When will the estimated-risk increases stop? Fortunately, further dramatic changes are not expected in the atomic-bomb data, certainly not for adults at doses above 0.1 Sv, because a sufficient number of excess cancers has ensured tight confidence bounds.

Epidemiologic data can include many individual variations, including subgroups of people with different genetic susceptibilities (see Greenland, 2012, and Richardson, 2012, both in this issue of the Bulletin). These variations are too often lost in the debate over low-level radiation effects. For instance, some researchers argue that raw dose-category data specific to children indicate an LNT response down to 0.01 Sv. Further evidence of a strong difference in susceptibilities among groups, such as from DNA sequence variations, may lead to calls for tighter health and industry regulations (Locke, 2009).

The LNT theory predicts that background radiation exposure and medical diagnostic exposures slightly increase a population’s future cancer rates. The cancers don’t appear immediately but are diagnosed five years to more than 50 years after exposure. Delayed cancers are the usual focus of the low-level radiation debate, but delayed stroke and heart disease account for “about one third as many radiation-associated excess deaths as do cancers among atomic bomb survivors” (Shimizu et al., 2010).

Protracted exposures above the dividing line

For years, nuclear power advocates have argued that the atomic-bomb cancer data had little bearing on nuclear reactor regulations and were irrelevant to nuclear reactor risk assessments, not just because the atomic-bomb doses were supposedly too high, but because the bomb exposures occurred suddenly. These advocates, along with a number of researchers, argued that the body’s DNA repair mechanisms assist in recovery from the slow exposures caused by routine reactor releases. Even doses from accidental releases would not be as bad as atomic-bomb doses, these advocates claimed, because radioisotopes in the body or on the ground decay with predictable half-lives, protracting the radiation dose. But conventional wisdom was upset in 2005, when an international study of a large population of exposed nuclear workers presented results that shocked the radiation protection community and foreshadowed a sequence of research results over the following years.

15-country nuclear worker data

It all started when epidemiologist Elisabeth Cardis and 46 colleagues surveyed some 400,000 nuclear workers from 15 countries in North America, Europe, and Asia, workers who had experienced chronic exposures, with doses measured on radiation badges (Cardis et al., 2005). The prediction of total excess cancers for these nuclear workers was striking: Cancer deaths in this population increased by 1 to 2 percent, making past nuclear work a rather dangerous industrial occupation relative to others. (It should be noted that Cardis’s study looked at workers exposed many years ago, when efforts to reduce workers’ radiation exposure were less effective than they are today.)
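A figure like “1 to 2 percent” is an attributable fraction: the share of cancer deaths in the cohort that a fitted dose-response model assigns to radiation. A minimal sketch, assuming a linear ERR model and the same baseline cancer rate for every worker; the slope and the dose list are invented, chosen only to land in the range reported above, and are not the study’s values:

```python
# Attributable fraction of cancer deaths in a cohort under a linear ERR model.
# Assumptions (hypothetical): all workers share one baseline cancer rate, so expected
# deaths are proportional to the sum of (1 + beta * D_i) over workers.

from statistics import fmean


def attributable_fraction(doses_sv: list[float], beta_per_sv: float) -> float:
    """Share of expected cancer deaths attributable to radiation."""
    mean_err = beta_per_sv * fmean(doses_sv)  # average excess relative risk
    return mean_err / (1.0 + mean_err)


# Hypothetical badge-dose distribution: most workers low, a few higher (Sv).
doses = [0.005] * 60 + [0.02] * 30 + [0.1] * 10
beta = 1.0  # hypothetical ERR per Sv, not the study's estimate
print(f"attributable fraction: {attributable_fraction(doses, beta):.1%}")  # 1.9%
```

For small doses the fraction is close to β times the mean dose, so both the fitted slope and the dose estimates behind it drive the headline number.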

This study found a higher risk per unit dose for protracted exposure than the atomic-bomb data show, a dramatic contradiction of expectations based on expert opinion. It also challenged the relevance of cellular dose-rate experiments to human epidemiology. However, the dose response did not have error bounds as tight as those in the atomic-bomb data. Even though the results were statistically significant, some researchers, along with industry and medical radiation advocates, quickly attacked the study.

Seizing on the data from Canada, the country with the highest excess relative risk (ERR) per Sv, the critics contended that the study was flawed and demanded that the authors repudiate it, which they never did. Atomic Energy of Canada Limited’s industry consultants continue to focus on the Canadian segment of the data, particularly on the reliability of the dose estimates. Their relentless attacks have been effective in neutralizing the study, despite the authors’ defenses.

UK radiation workers

A second major occupational study appeared a few years later, delivering another blow to the theory that protracted doses were not so bad. This 2009 report looked at 175,000 radiation workers in the United Kingdom and was an update to earlier reports on the same data set. Enough additional cases had appeared since the previous assessment to make the cancer risk statistically significant and comparable to that in the atomic-bomb data. Again, protracted exposures did not turn out to be less dangerous than acute exposures.

12 worker studies combined

After the UK update was published, scientists combined results from 12 post-2002 occupational studies, including the two mentioned above, concluding that protracted radiation was 20 percent more effective in increasing cancer rates than acute exposures (Jacob et al., 2009). The study’s authors saw this result as a challenge to the cancer-risk values currently assumed for occupational radiation exposures. That is, they wrote that the radiation risk values used for workers should be increased over the atomic-bomb-derived values, not lowered by a factor of two or more.
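The gap between the two positions is larger than “20 percent” suggests, because it is measured against a reference that had been cut in half. Expressing both as multipliers on the acute (atomic-bomb-derived) risk per sievert makes the comparison explicit; the normalized coefficient of 1.0 is an assumption for illustration, not a real risk value:

```python
# Compare protracted-exposure risk coefficients under two assumptions,
# each expressed as a multiplier on the acute (atomic-bomb-derived) risk per Sv.

ACUTE_RISK_PER_SV = 1.0  # normalized reference value (illustrative, not a real coefficient)

REDUCTION_FACTOR = 2.0       # "lowered by a factor of two" for protracted exposure
PROTRACTED_MULTIPLIER = 1.2  # "20 percent more effective" (Jacob et al., 2009)


def protracted_risk(acute_per_sv: float, multiplier: float) -> float:
    """Risk per Sv for protracted exposure, given a multiplier on the acute value."""
    return acute_per_sv * multiplier


reduced = protracted_risk(ACUTE_RISK_PER_SV, 1.0 / REDUCTION_FACTOR)
pooled = protracted_risk(ACUTE_RISK_PER_SV, PROTRACTED_MULTIPLIER)
print(f"pooled-study value is {pooled / reduced:.1f} times the reduced value")  # 2.4
```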

If history is any guide, it is questionable whether this analysis and the results of other studies will lead to actual changes in worker-exposure limits. Industry pushback is very strong, as can be seen in the efforts of the California-based Electric Power Research Institute (EPRI), a nonprofit energy consortium. In 2009, the group issued a damning report that dismissed all of the new, high results in the 12 occupational studies, citing the work as either flawed or irrelevant to the exposures received by the most exposed nuclear workers (EPRI, 2009), which, EPRI says, are around 0.02 Sv per year. Its concerns about the irrelevance of protracted studies are puzzling, because an annual exposure of 0.02 Sv over a period of 10 years would be 0.2 Sv, an accumulated exposure well above the low-dose dividing line of 0.1 Sv.
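The arithmetic behind that objection is easy to check, and it also shows how quickly the dividing line is crossed. A small sketch, assuming a constant annual dose at the level EPRI cites (the names are illustrative):

```python
# Cumulative dose for a worker at a constant annual dose.
# Assumption: the dose rate EPRI cites holds every year.

DIVIDING_LINE_SV = 0.1  # low-dose dividing line used throughout the text
ANNUAL_DOSE_SV = 0.02   # annual dose EPRI cites for the most exposed workers


def cumulative_dose(annual_sv: float, years: float) -> float:
    """Total dose accumulated from a constant annual dose over `years`."""
    return annual_sv * years


def years_to_reach(line_sv: float, annual_sv: float) -> float:
    """Years at a constant annual dose needed to accumulate `line_sv`."""
    return line_sv / annual_sv


print(f"10-year total: {cumulative_dose(ANNUAL_DOSE_SV, 10):.2f} Sv")  # 0.20 Sv
print(f"reaches {DIVIDING_LINE_SV} Sv after "
      f"{years_to_reach(DIVIDING_LINE_SV, ANNUAL_DOSE_SV):.0f} years")  # 5
```

At that rate the most exposed workers pass 0.1 Sv in about five years, so studies of protracted exposure speak directly to their cumulative doses.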

Techa River data

So what about the risks to the general population? In 2007, one study—the first of its size—looked at low-dose radiation risk in a large, chronically exposed civilian population; among the epidemiological community, this data set is known as the “Techa River cohort.” From 1949 to 1956 in the Soviet Union, while the Mayak weapons complex dumped some 76 million cubic meters of radioactive waste water into the river, approximately 30,000 of the off-site population—from some 40 villages along the river—were exposed to chronic releases of radiation; residual contamination on riverbanks still produced doses for years after 1956.

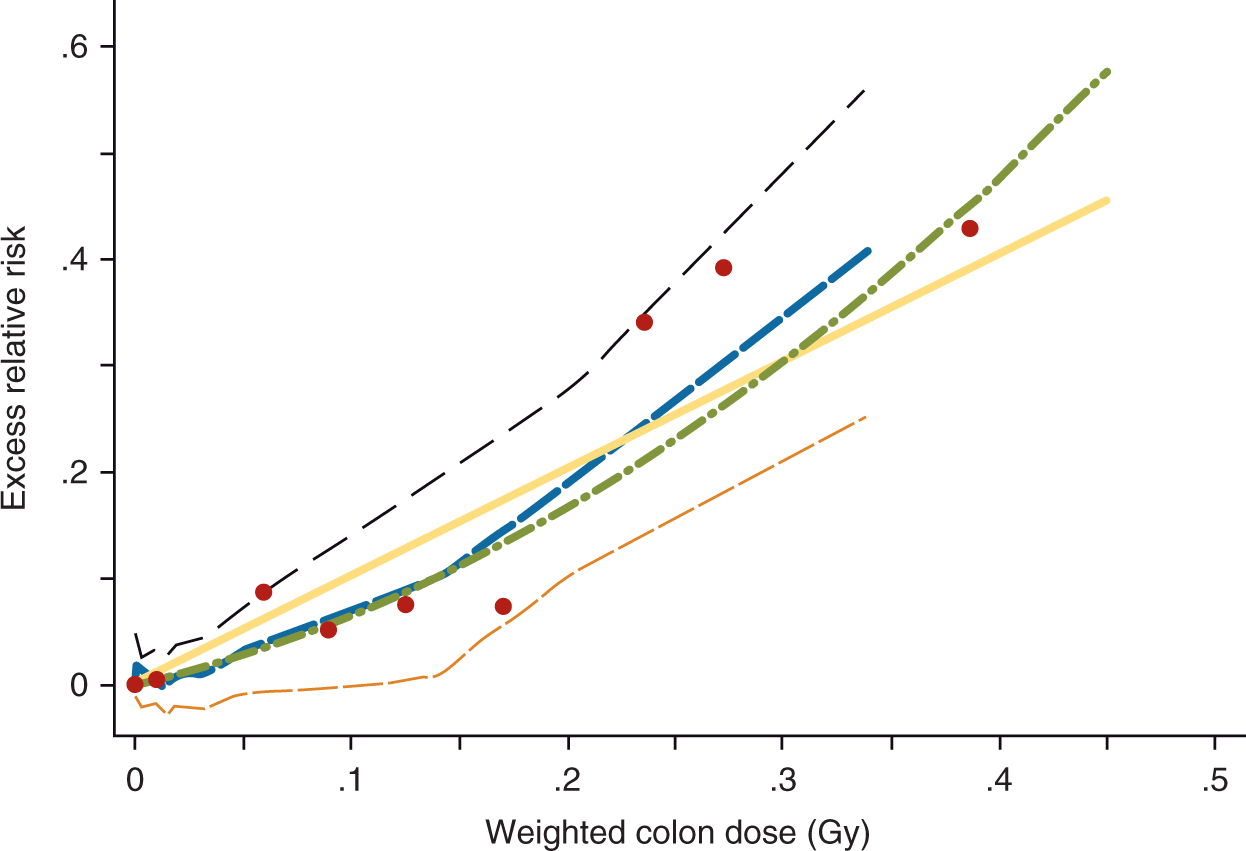

Here was a study of citizens exposed to radiation much like that which would be experienced following a reactor accident. About 17,000 members of the cohort have been studied in an international effort (Krestinina et al., 2007), largely funded by the US Energy Department; and to many in the department, this study was meant to definitively prove that protracted exposures were low in risk. The results were unexpected. The slope of the LNT fit turned out to be higher than predicted by the atomic-bomb data, providing additional evidence that protracted exposure does not reduce risk.
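In its simplest form, fitting an LNT slope to grouped data is a weighted regression through the origin. The published analyses use Poisson regression on case counts and person-years; the sketch below swaps in an inverse-variance weighted least-squares estimate on hypothetical dose-category ERR values, only to show what “the slope of the LNT fit” means and how it can be compared with a reference slope from another cohort (the reference value is also hypothetical):

```python
# Inverse-variance weighted least-squares slope through the origin for ERR(D) = beta * D.
# Simplification: published analyses fit Poisson models to case counts and person-years;
# here each dose category supplies an ERR estimate and its standard error (all hypothetical).

from dataclasses import dataclass


@dataclass
class DoseCategory:
    mean_dose_sv: float  # mean dose in the category
    err: float           # estimated excess relative risk
    se: float            # standard error of that estimate


def lnt_slope(categories: list[DoseCategory]) -> tuple[float, float]:
    """Return (beta_hat, standard error of beta_hat) for a no-intercept linear fit."""
    weights = [1.0 / c.se ** 2 for c in categories]
    sxx = sum(w * c.mean_dose_sv ** 2 for w, c in zip(weights, categories))
    sxy = sum(w * c.mean_dose_sv * c.err for w, c in zip(weights, categories))
    return sxy / sxx, (1.0 / sxx) ** 0.5


# Hypothetical grouped data.
data = [
    DoseCategory(0.02, 0.02, 0.03),
    DoseCategory(0.10, 0.09, 0.05),
    DoseCategory(0.30, 0.33, 0.10),
    DoseCategory(0.60, 0.60, 0.20),
]
REFERENCE_SLOPE = 0.5  # hypothetical ERR per Sv from a comparison cohort

beta, se = lnt_slope(data)
z = (beta - REFERENCE_SLOPE) / se  # rough standardized difference (ignores reference uncertainty)
print(f"fitted slope {beta:.2f} per Sv (SE {se:.2f}); reference {REFERENCE_SLOPE}; z = {z:.1f}")
```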


Furthermore, as seen in Figure 2, the raw data showed an excess of cancers around 0.1 Sv of protracted exposure, before any linear fit is made. The distinction between acute and chronic exposures no longer shows up in the epidemiologic data. But as with the 15-country study, the Techa River study was attacked, particularly on the reliability of doses. EPRI discounted the study, claiming it contradicted other studies of high-background-radiation areas in India and China. However, EPRI’s 2009 report did not mention the limitations of studies in high-background-radiation regions; moreover, the confidence bounds around those studies’ risk estimates are consistent with the risks from the atomic-bomb study (Tao et al., 2012).

Figure 2. Solid cancer-incidence dose response for the Techa River cohort.

Too much is at stake in terms of the cost of worker and public protection to expect the nuclear industry to be anything but skeptical of studies that undermine past practices and positions. The industry will want unequivocal evidence. Thus, the debate over protracted exposures will continue, probably even after the next Biological Effects of Ionizing Radiations (BEIR) committee of the US National Academy of Sciences and the Institute of Medicine issues a consensus judgment five to ten years from now.

Protracted exposures below the dividing line

Though they inspire and instigate forward-thinking research, the above studies do not put the debate over low-dose radiation to rest. In fact, the debate extends to an even thornier issue: the dose response below the dividing line at 0.1 Sv. The debate over low doses is as intense as the debate over protracted-versus-acute exposure. Why? Because doses in this range are those of most concern to a public skeptical about nuclear power’s side effects. Without low-dose risk estimates, it is not possible to predict the full consequences of events like Fukushima and Chernobyl, to estimate the consequences of relying on nuclear power, or to understand the consequences of relying on a high-level waste-storage site like Yucca Mountain.

Medical-diagnostic doses also often fall in this range, although the public is less concerned about them than doses from nuclear power or nuclear waste (see Slovic, 2012, in this issue of the Bulletin). Still, doctors, public health officials, emergency planners, and responders need to understand the risks at these doses.

Some public officials and nuclear energy supporters say doses below 0.1 Sv, particularly below 0.01 Sv, are nothing to worry about, while nuclear activists contend they are very dangerous. Personal interests can play a role in fanning the flames of the debate. In short, there are scientific, medical, governmental, and political reasons for knowing the risks below 0.1 Sv: for example, creating an informed public, choosing energy sources, advancing scientific knowledge, and striking an appropriate balance of risks from medical diagnostics. But there are obstacles as well, such as the prospect of sabotaging lucrative business ventures and of amplifying risk perceptions.

Setting the record

Experts, governments, industry, and the public aren’t left to their own devices to settle this debate. Due to the complex nature of the issue—and the many opinions that it elicits—two major scientific committees oversee this matter, periodically reporting on new evidence related to quantitative risk estimates. They are the BEIR committee and the UN Scientific Committee on the Effects of Atomic Radiation (UNSCEAR).

UNSCEAR’s reports are compiled by staff and a small group of knowledgeable consultants (UNSCEAR, 2006). The BEIR reports, on the other hand, follow a more elaborate and more transparent procedure (National Research Council, 2006). The reports’ conclusions usually agree, but the UNSCEAR report, being a product of the United Nations, must be cognizant of national politics in UN member countries; this means that the report never states that some risk continues down to zero dose. Such careful phrasing ensures that the LNT, and the risk numbers associated with it, can be used around the world for cost–benefit analyses and other regulatory purposes. Agencies can justify the use of the LNT solely on the basis of adopting a conservative or precautionary approach, thereby escaping strong objections from industry leaders and dissenting researchers.

In the UNSCEAR reports, for example, the dose models extend risks toward zero dose, but the authors do not write that risks definitely continue all the way to zero dose; rather, they offer an oblique explanation that “the inability to detect increases in risks at very low doses using epidemiological methods does not mean that the cancer risks are not elevated.” No doubt, there is concern at the United Nations that too much talk about cancer risk at low doses will amplify risk perception (see Kasperson, 2012, in this issue of the Bulletin).

The BEIR committee, which does not answer to any government, is more direct in its pronouncements. Based on its extensive review of the biologic evidence, including epidemiology, animal data, cellular data, and cancer theory, the committee concluded that the risk decreases with dose but never goes to zero. In its own words: “A comprehensive review of the biology data led the committee to conclude that the risk would continue in a linear fashion at lower doses without a threshold and that the smallest dose has the potential to cause a small increase in risk to humans” (National Research Council, 2006).

Still, science is a contested territory, so scientific dissent from these reports is to be expected. For the sake of understanding how layered the arguments are, it is worth exploring these theoretical dissents.

Supralinear response

One hypothesis that draws on biologic evidence predicts a supralinear response, that is, a response greater than the LNT at low doses. Some of this evidence comes from studies of communication from radiation-damaged cells: chemical signals transfer damage to undamaged cells, multiplying the deleterious effect of the original radiation (the bystander effect). Other evidence comes from studies of genomic instability, a phenomenon in which radiation damage doesn’t show up until several cell generations have passed (Morgan and Sowa, 2009; see also Hill, 2012, in this issue of the Bulletin).

In a 2012 study of atomic-bomb survivor mortality data (Ozasa et al., 2012), a low-dose analysis revealed unexpectedly strong evidence for the applicability of the supralinear theory. Data on more than 80,000 people followed from 1950 to 2003 showed high risks per unit dose in the low-dose range, from 0.01 to 0.1 Sv. The study’s authors themselves did not go so far as to suggest a supralinear response, saying only that the results were difficult to interpret. However, advocates of a supralinear response are likely to see a simple explanation, namely supralinearity. Note that such effects do not show up in the atomic-bomb cancer-incidence studies.

Zero response

In contrast to theories of supralinear responses, some industry stakeholders argue that the response might be zero for doses up to a threshold below 0.1 Sv. A completely zero response is unlikely, given the heterogeneity of human populations, including differing immune and repair systems among people. However, a quasi-threshold, with a small but nonzero response below 0.1 Sv, is a standard theoretical possibility and a hypothesis that some researchers strongly believe will be vindicated one day, thereby confounding the conventional view of an LNT dose response.

In 2007, the latest cancer-incidence results for atomic-bomb survivors, covering 1950 to 1998, were released; in this update, the data could be fit mathematically with a threshold at 0.04 Sv, as well as with the LNT model. However, this finding was not repeated in the 2012 update on cancer mortality, which reported a response that appeared to rise above the LNT prediction at low doses.
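The two fits mentioned here differ only near and below the threshold. A minimal sketch comparing them, using the 0.04 Sv threshold quoted above and a hypothetical common slope (a real analysis would fit each model’s slope separately):

```python
# LNT versus a linear-threshold dose response, both in excess-relative-risk form.
# THRESHOLD_SV comes from the text; BETA_PER_SV is a hypothetical shared slope.

BETA_PER_SV = 0.5
THRESHOLD_SV = 0.04


def lnt(dose_sv: float) -> float:
    """ERR rises from zero dose with no threshold."""
    return BETA_PER_SV * dose_sv


def linear_threshold(dose_sv: float) -> float:
    """ERR is zero up to the threshold, then rises linearly above it."""
    return BETA_PER_SV * max(0.0, dose_sv - THRESHOLD_SV)


for dose in (0.01, 0.04, 0.1, 1.0):
    print(f"{dose:5.2f} Sv  LNT {lnt(dose):.3f}  threshold {linear_threshold(dose):.3f}")
```

With a shared slope, the two curves differ above the threshold by a constant β·T, which becomes a small fraction of the risk at high doses; the disagreement that matters is concentrated in the low-dose range, where the data are sparsest.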

The French Academy of Sciences and National Academy of Medicine put out their own short report advocating a quasi-threshold based largely on cellular data (Aurengo et al., 2005) and criticizing those who think otherwise. In turn, the French report has been criticized, not just on the merits, but also for lack of objectivity. For LNT dissenters, the French report has been the counterpoint whenever the authority of the US BEIR report is invoked. Thus, any layperson wishing to engage in the discussion must decide which of these reports is likely to contain the most credible scientific judgments.

Hormesis theory

Demonstration of a quasi-threshold would be unlikely to assuage those who abhor radiation-producing technology on existential grounds, but it might eventually affect regulations and overall opinion. The radiation hormesis theory—that some radiation is beneficial—would provide more comfort, if it could be demonstrated. The best evidence for this concept in humans can be found in national data on home radon measurements and lung cancer rates at the county level. However, the reliance on cancer data aggregated to the county level has been roundly criticized by epidemiologists (Lubin, 2002). Results from more sophisticated epidemiologic studies of the same association do show the expected dose response when individual cancers are matched to dose (Darby et al., 2005; Krewski et al., 2006).
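One reason county-level correlations can mislead is ecological confounding: if places with more radon also happen to have fewer smokers, county lung-cancer rates can fall as radon rises even when radon raises risk for every individual. The sketch below is a deliberately artificial three-county example with invented numbers (smoking as the confounder and the multiplicative risk model are both assumptions); it is not a reanalysis of the radon data:

```python
# Ecological (county-level) bias with a confounder.
# Hypothetical individual-level model:
#   rate = BASE * (1 + SMOKING_EXCESS * smoker) * (1 + B * radon), with B > 0.
# All numbers are invented for illustration.

BASE = 1.0            # baseline lung-cancer rate (arbitrary units)
SMOKING_EXCESS = 9.0  # smokers have 10x the baseline rate in this toy model
B = 0.1               # positive excess risk per unit radon, for every individual

# (radon level, fraction of smokers) per county: radon and smoking are anti-correlated.
COUNTIES = [(1.0, 0.40), (2.0, 0.20), (3.0, 0.10)]


def county_rate(radon: float, smoker_fraction: float) -> float:
    """Average rate in a county where everyone has the same radon level."""
    return BASE * (1.0 + SMOKING_EXCESS * smoker_fraction) * (1.0 + B * radon)


def ols_slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys on xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


radon = [r for r, _ in COUNTIES]
rates = [county_rate(r, s) for r, s in COUNTIES]
print(f"county-level slope: {ols_slope(radon, rates):+.3f}")  # negative
print(f"individual radon coefficient: {B:+.3f}")              # positive
```

Studies that match individual cancers to measured dose and account for smoking compare like with like, so this particular aggregation artifact does not arise in the same way.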

Though it is still a pet topic of enterprising journalists, the radiation hormesis theory is no longer of much interest to researchers. The BEIR VII report, published in 2006, discounted the concept; the French Academy of Sciences took it more seriously, while discounting other evidence suggesting that the response might be supralinear at low doses.

Given the increase in radiation from medical diagnostics and the interest in protracted exposure, the possible existence of a threshold or hormetic effect appears to be a moot issue for public policy on future exposures in developed countries. Even if the level of medical diagnostic exposures does not increase in the future, over the course of 40 years the average person in a developed country will receive about 0.1 Sv from medical procedures alone. With this as a dose starting point for millions of people, it is fair to say that any exposure to radioactive elements from a nuclear accident or a dirty bomb would definitely contribute to their delayed cancer risk.
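The “moot” argument can be made concrete. Suppose, hypothetically, that the true response had a threshold somewhere below 0.1 Sv. For someone whose medical doses have already carried them past that threshold, the extra risk from an additional accident dose is the same as the LNT predicts. A sketch, with the threshold, slope, and doses all hypothetical:

```python
# Added excess risk from an extra dose, for a person who already has a prior cumulative dose.
# Hypothetical values: the slope, the threshold, and the doses are for illustration only.

from typing import Callable

BETA_PER_SV = 0.5    # hypothetical ERR per Sv
THRESHOLD_SV = 0.05  # hypothetical threshold somewhere below 0.1 Sv


def err_lnt(dose_sv: float) -> float:
    """LNT: excess relative risk proportional to cumulative dose."""
    return BETA_PER_SV * dose_sv


def err_threshold(dose_sv: float) -> float:
    """Linear-threshold: no excess below THRESHOLD_SV, linear above it."""
    return BETA_PER_SV * max(0.0, dose_sv - THRESHOLD_SV)


def added_err(model: Callable[[float], float], prior_sv: float, extra_sv: float) -> float:
    """Extra ERR from `extra_sv` on top of an existing cumulative dose `prior_sv`."""
    return model(prior_sv + extra_sv) - model(prior_sv)


EXTRA_SV = 0.01  # hypothetical accident dose
for prior in (0.0, 0.1):  # no prior dose vs. roughly 40 years of medical diagnostics
    print(f"prior {prior:.1f} Sv: LNT adds {added_err(err_lnt, prior, EXTRA_SV):.4f}, "
          f"threshold model adds {added_err(err_threshold, prior, EXTRA_SV):.4f}")
```

The equivalence holds whenever the prior dose already exceeds the hypothetical threshold; for someone with no prior dose the two models still disagree, which is why the argument rests on cumulative medical exposure.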

Adaptive response

Of more current interest than hormesis is the concept of adaptive response, where low doses of radiation can prime cells to withstand later, higher doses of radiation (see Hill, 2012, in this issue of the Bulletin). The idea is that very low doses, like a vaccination, teach the body how to recognize and repair or remove radiation-damaged cells. Thus, subsequent chronic doses would be less dangerous. Under some theories, this effect can lead to a sublinear response at doses below 0.1 Sv (Morgan and Sowa, 2009). In other words, the excess risk at low doses could be less than predicted by the LNT. The range of doses for which this effect might be applicable is not known; nor is it known whether it might compensate for deleterious bystander effects and genomic instability.

LNT as a matter of convenience

It should be noted that all of these cellular effects—including bystander effect, genomic instability, and adaptive response, some of which are thought to have effects working in opposite directions—could already be incorporated into the linear human dose-response curve (Morgan and Sowa, 2009), making the debate much ado about nothing.

Many professional risk analysts, especially those with calm temperaments, do not fret much about the debate over dose response below 0.1 Sv. They simply handle it as a standard problem in uncertainty management, much as they handle many other parts of risk assessment calculations: They take the LNT as the mid-range, add uncertainty bounds around it, and possibly use subjective-likelihood distributions to accommodate alternative scientific hypotheses. This is essentially the approach that has been followed in calculations supporting compensation of weapons-test veterans and workers in the weapons complex (Kocher et al., 2008), although the possibility of a threshold or hormesis has not been included in this work. If nonlinear dose-response models, such as threshold or hormesis models, are included, then for consistency dose terms would need to be added for medical exposures and, possibly, background radiation.
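The uncertainty-management approach can be sketched as a Monte Carlo calculation: draw a dose-response hypothesis according to subjective weights, draw a slope, and collect the resulting risk at a given dose. Everything specific here is an assumption for illustration: the weights, the lognormal slope distribution with a median of 0.5 per Sv, and the square-root shape used for the supralinear case; only the 0.04 Sv threshold comes from the text. It is not the procedure of Kocher et al. (2008), which did not include threshold models.

```python
# Monte Carlo propagation of model and slope uncertainty for low-dose ERR.
# All weights, distributions, and curve shapes are hypothetical.

import math
import random
import statistics

# Hypothetical subjective probabilities for alternative dose-response hypotheses.
HYPOTHESES = {"lnt": 0.70, "threshold": 0.15, "supralinear": 0.15}
THRESHOLD_SV = 0.04  # threshold value quoted in the text for one fit
PIVOT_SV = 0.1       # the supralinear curve is assumed to meet the LNT line here


def err(model: str, dose_sv: float, beta: float) -> float:
    """Excess relative risk at `dose_sv` under one hypothesis."""
    if model == "lnt":
        return beta * dose_sv
    if model == "threshold":
        return beta * max(0.0, dose_sv - THRESHOLD_SV)
    if model == "supralinear":
        # Assumed square-root shape: steeper than LNT below PIVOT_SV.
        return beta * PIVOT_SV * math.sqrt(dose_sv / PIVOT_SV)
    raise ValueError(f"unknown model: {model}")


def simulate(dose_sv: float, n: int = 20_000, seed: int = 1) -> tuple[float, float, float]:
    """Return (mean, 5th percentile, 95th percentile) of ERR across hypotheses."""
    rng = random.Random(seed)
    names, weights = list(HYPOTHESES), list(HYPOTHESES.values())
    draws = []
    for _ in range(n):
        model = rng.choices(names, weights=weights)[0]
        # Slope uncertainty: lognormal around a hypothetical median of 0.5 per Sv.
        beta = rng.lognormvariate(math.log(0.5), 0.3)
        draws.append(err(model, dose_sv, beta))
    draws.sort()
    return statistics.fmean(draws), draws[int(0.05 * n)], draws[int(0.95 * n)]


mean, lo, hi = simulate(0.01)
print(f"ERR at 0.01 Sv: mean {mean:.4f}, 90% interval [{lo:.4f}, {hi:.4f}]")
print(f"LNT alone at the median slope: {err('lnt', 0.01, 0.5):.4f}")
```

With these made-up weights the interval runs from zero (the threshold draws) to several times the LNT value (the supralinear draws), while the mean stays close to the LNT estimate: the bounds accommodate the dissenting views, but the central value barely moves, which is the critics’ complaint described next.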

Dealing with the uncertainty components from gaps in knowledge about low-level radiation effects is essential (Hoffman et al., 2011). According to these risk analysts, by quantifying uncertainty, the most important debates over low-level radiation exposures can proceed with less distraction (e.g., planning for accident response and weighing benefits and risks of technologies). However, this approach does not satisfy critics of the LNT, because the average risks usually come quite close to the LNT predictions, even though the uncertainty bounds on the resulting predictions may include their views.

Conclusion

The public, legislators, and journalists are often at a loss to deal with the charges and counter charges that surface in the debate over low-level radiation exposures. It does not help to listen to industry leaders, nuclear activists, or individual researchers, who, one after another, propound their competing images of the underlying truth. Given the complexities, the only alternative for most people is to rely on scientific committees, like the BEIR committee and UNSCEAR, recognizing that the scientific jigsaw puzzle is incomplete. Not all pieces fit correctly, but a reasonable idea of the true situation emerges from the recognizable image visible from the pieces already assembled.

It is now reasonably clear that protracted exposure does not protect against radiation-induced cancer. Rather, it is the cumulative radiation exposure from all sources that must be examined. In developed countries, the average accumulated dose from medical procedures is now so high that a significant percentage of the population in these countries will be above 0.1 Sv. Therefore, this population will be primed for radiation-induced, delayed cancers from releases from nuclear reactors or dirty bombs, even using the hypothetical dose-response models of the LNT dissenters. There is no longer a convenient excuse to avoid using the LNT to estimate consequences from real or projected releases of radioactive materials, even when the dose of concern is below 0.1 Sv.
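Using the LNT for a release amounts to multiplying the collective dose (population times average dose, in person-sieverts) by a risk coefficient. The sketch illustrates the paradox from the abstract: a tiny individual risk can still add up to a sizable number of expected cancers. The population, dose, and coefficient below are hypothetical, not values from any assessment:

```python
# Expected excess cancers from a release under the LNT model.
# All inputs are hypothetical placeholders, not values from any actual assessment.

POPULATION = 1_000_000  # people exposed
MEAN_DOSE_SV = 0.001    # average additional dose per person (1 mSv)
RISK_PER_SV = 0.05      # hypothetical lifetime excess cancer risk per Sv


def collective_dose(population: int, mean_dose_sv: float) -> float:
    """Collective dose in person-sieverts."""
    return population * mean_dose_sv


def expected_excess_cancers(population: int, mean_dose_sv: float, risk_per_sv: float) -> float:
    """Expected number of delayed cancers: collective dose times risk per Sv (LNT)."""
    return collective_dose(population, mean_dose_sv) * risk_per_sv


individual = MEAN_DOSE_SV * RISK_PER_SV
societal = expected_excess_cancers(POPULATION, MEAN_DOSE_SV, RISK_PER_SV)
print(f"individual risk: about 1 in {round(1 / individual):,}")
print(f"expected excess cancers: about {societal:.0f}")
```

With these inputs each person faces roughly a 1-in-20,000 added risk, yet the population total is about 50 cancers. Under the LNT only the collective dose matters, so spreading the same person-sieverts over more people changes the individual risk but not the expected total; a threshold model is what would break that property.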

Particularly when it comes to cost–benefit decisions on retrofitting reactors or planning for spent-fuel pools, regulations that depend on estimates of cancer risks are using LNT slope coefficients that are decades out of date (see Brock and Sherbini, 2012, in this issue of the Bulletin). Thus, pressure to update regulations may build, as awareness grows of the five-to-tenfold disparity between the risk estimates per unit dose recommended by scientists today and the older values still used by regulators in cost–benefit calculations for determining allowable doses.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.


Author biography

Guest editor of the Bulletin’s special issue on low-dose radiation risks,