Abstract

Society’s resistance to even minor changes in our nuclear posture demonstrates that it sees little or no risk in the nuclear status quo. This article proposes using an engineering discipline known as risk analysis for determining whether society’s nuclear optimism is justified. If requested by Congress and performed by the National Academies, a risk analysis of nuclear deterrence could bring greater objectivity to the debate over our nuclear posture. The National Academies have frequently been called on by the government to provide objective, impartial advice on similar matters, as exemplified by a current study of the Deepwater Horizon oil spill. The major difference is that, in the case of nuclear deterrence, it is essential to mitigate the risk before disaster strikes, not afterward.

In September 2009, Newsweek carried a cover story, “Why Obama should learn to love the bomb,” that quoted Columbia University Professor Kenneth Waltz: “We now have 64 years of experience since Hiroshima. It’s striking and against all historical precedent that for that substantial period, there has not been any war among nuclear states” (Tepperman, 2009: 45). Waltz is a leading advocate of nuclear optimism and argues that fears of nuclear war are exaggerated: “The probability of major war among states having nuclear weapons approaches zero” (Waltz, 1990: 740). Waltz is not alone. In a July 2009 interview, former Secretary of Defense and Director of Central Intelligence James Schlesinger claimed, “We will need a strong deterrent … that is measured at least in decades—in my judgment, in fact, more or less in perpetuity.” In September 2009, after President Barack Obama was awarded the Nobel Peace Prize for his efforts to rekindle the vision of a world free of nuclear weapons, Time magazine had an online essay arguing that the Nobel Committee should have awarded the prize to the atomic bomb instead. The headline read, “Want peace? Give a nuke the Nobel” (von Drehle, 2010).

Last year’s BP oil spill demonstrates why nuclear optimism would require much more evidence than the absence of world war in the last 65 years. In November 2009, BP’s vice president for exploration in the Gulf of Mexico, David Rainey, touted offshore drilling’s safety record in these words: “I think we also need to remember that OCS [Outer Continental Shelf] development has been going on for the last 50 years, and it has been going on in a way that is both safe and protective of the environment” (Rainey, 2009). Five months later, BP’s Deepwater Horizon drilling rig exploded, killing 11 workers, creating an environmental catastrophe, and proving that 50 years of success was inadequate evidence for complacency.

Similar misguided thinking was responsible for the loss of the Space Shuttle Challenger when gaskets (called O-rings) on a booster rocket burned through, directing a blowtorch-like flame against a fuel tank and causing it to explode. Engineers who had designed the booster rocket tried to halt the launch because of partial O-ring failures on previous launches in cold weather. Lawrence Mulloy, manager of NASA’s booster rocket program, cited past successes to justify ignoring those concerns: “What you are proposing to do is to generate a new Launch Commit Criteria on the eve of launch, after we have successfully flown with the existing Launch Commit Criteria 24 previous times.” Here too, we learned the hard way that a long string of successes is inadequate evidence for assuming continued favorable outcomes (Rogers, 1986: ch. 5).

The Gulf of Mexico will eventually recover from the BP oil spill, and the Challenger disaster was not the end of the world. The same cannot be said for mistakenly extrapolating 65 years without a nuclear exchange into the indefinite future. Where nuclear weapons are concerned, we cannot afford to wait for disaster to strike before realizing that complacency was unwarranted.

A teetering nuclear coin

Fortunately, quantitative risk analysis can illuminate the danger by gleaning more information from the available data than might first appear possible. Think of each year since 1945 as a coin toss with a heavily weighted coin, so that tails shows much more frequently than heads. Tails means that a nuclear war did not occur that year, while heads corresponds to a nuclear catastrophe, so the last 65 years correspond to 65 tails in a row. Risk analysis reclaims valuable information by looking not only at the gross outcome of each toss (whether it showed heads or tails), but also at the nuances of how the coin behaved during the toss. If all 65 tosses immediately landed tails without any hesitation, that would be evidence that the coin was more strongly weighted in favor of tails and provide additional evidence in favor of nuclear optimism. Conversely, if any of the tosses teetered on edge, leaning first one way and then the other, before finally showing tails, nuclear optimism would be on shaky ground.
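How little the gross outcome alone reveals can be made concrete with a short calculation. The sketch below assumes, purely for illustration, that every year is an independent toss with the same fixed probability of heads. That is a simplification which the teetering-coin argument itself calls into question. The function names and the sample probabilities are hypothetical and are not taken from any published analysis.

```python
def prob_all_tails(p_heads, tosses):
    """Probability that `tosses` independent tosses all land tails."""
    if not 0.0 <= p_heads <= 1.0:
        raise ValueError("p_heads must lie between 0 and 1")
    return (1.0 - p_heads) ** tosses


def largest_p_not_excluded(tosses, confidence=0.95):
    """Largest per-toss probability of heads for which an unbroken run of
    `tosses` tails still has probability of at least 1 - confidence."""
    return 1.0 - (1.0 - confidence) ** (1.0 / tosses)


# Illustrative annual probabilities of catastrophe (assumed, not estimated).
for p in (0.001, 0.01, 0.02):
    print(f"p = {p:.3f}: chance of 65 tails in a row = {prob_all_tails(p, 65):.2f}")

print(f"95% upper bound from the record alone: {largest_p_not_excluded(65):.3f}")
```

Under these assumptions, a coin with a 1 percent chance of heads each year would still show 65 tails in a row about half the time, and the record by itself cannot exclude annual risks below roughly 4.5 percent at the 95 percent level. That is why the behavior of the coin during each toss carries information that the bare string of tails does not.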

In 1962, the nuclear coin clearly teetered on edge, with President John F. Kennedy later estimating the odds of war during the Cuban Missile Crisis at somewhere between “one-in-three and even” (Sorenson, 1965: 705). Other nuclear near misses are less well known and had smaller chances of ending in a nuclear disaster. But, when the survival of civilization is at stake, even a partial hesitation before the nuclear coin lands tails should be of grave concern:

During the 1961 Berlin crisis, Soviet and US tanks faced off at Checkpoint Charlie in a contest of wills so serious that President John F. Kennedy briefly considered a nuclear first strike option against the Soviet Union (Burr, 2001).

In 1973, when Israel encircled the Egyptian Third Army, the Soviets threatened to intervene, leading to implied nuclear threats (Ury, 1985).

The 1983 Able Archer incident was, in the words of Secretary of Defense Robert Gates, “one of the potentially most dangerous episodes of the Cold War” (Gates, 2006: 270). This incident occurred at an extremely tense time, just two months after a Korean airliner had been shot down after it strayed into Soviet airspace, and less than eight months after President Ronald Reagan’s “Star Wars” speech. With talk of fighting and winning a nuclear war emanating from Washington, Gates noted that Soviet leader Yuri Andropov developed a “seeming fixation on the possibility that the United States was planning a nuclear strike against the Soviet Union” (Gates, 2006: 270). The Soviets reasoned that the West would mask preparations for such an attack as a military exercise. Able Archer was just such an exercise, simulating the coordinated release of all NATO nuclear weapons.

Certain events during the 1993 Russian coup attempt that were not recognized by the general public led a number of US intelligence officers at the North American Aerospace Defense Command (NORAD) headquarters to call their families and tell them to leave Washington out of fear that the Russians might launch a nuclear attack (Pry, 1999).

In 1995, Russian air defense mistook a meteorological rocket launched from Norway for a US submarine-launched ballistic missile, causing the Russian “nuclear football” (a device which contains the codes for authorizing a nuclear attack) to be opened in front of President Boris Yeltsin. This was the first time such an event had occurred, and fortunately Yeltsin was sober enough to make the right decision (Pry, 1999).

Confusion and panic during the 9/11 attacks led an airborne F-16 pilot to mistakenly believe that the USA was under attack by Russians instead of terrorists. In a dangerous coincidence, the Russian Air Force had scheduled an exercise that day, in which strategic bombers were to be flown toward the United States. Fortunately, the Russians learned of the terrorist attack in time to ground their bombers (Podvig, 2006).

The August 2008 Russian invasion of Georgia would have produced a major crisis if President George W. Bush had followed through on his earlier promises to Georgia: “The path of freedom you have chosen is not easy but you will not travel it alone. Americans respect your courageous choice for liberty. And as you build a free and democratic Georgia, the American people will stand with you” (Bush, 2005). The danger was compounded because most Americans are unaware that Georgia fired the first shots and Russia was not solely to blame (Tagliavini, 2009). Ongoing tensions could well produce a rematch, and Sarah Palin, reflecting the mood of many Americans, has said that the United States should be ready to go to war with Russia should that occur (Meckler, 2008).

The majority of the above incidents occurred post-Cold War, challenging the widespread belief that the nuclear threat ended with the fall of the Berlin Wall. Further, nuclear proliferation and terrorism have added dangerous new dimensions to the threat:

India and Pakistan combined have approximately 150 nuclear weapons. These nations fought wars in 1947, 1965, 1971, and 1999. India suffered a major attack by Pakistani-based terrorists as recently as November 2008.

Pakistan is subject to chaos and corruption. In October 2009, internal terrorists attacked Pakistan’s Army General Headquarters, killing nine soldiers and two civilians. A. Q. Khan, sometimes called “the father of the Islamic bomb,” ran a virtual nuclear supermarket and is believed to have sold Pakistani nuclear know-how to North Korea, Iran, and Libya.

If terrorists were to obtain 50 kg of highly enriched uranium (HEU), it would be a small step from there to a usable nuclear weapon.¹ The worldwide civilian inventory of HEU is estimated at 50,000 kg. HEU is used in over 100 research reactors worldwide, many of which are not adequately guarded. South Africa stores the HEU from its dismantled nuclear arsenal at its Pelindaba facility. In November 2007, two armed teams, probably with internal collusion, circumvented a 10,000 volt fence and other security measures. They were inside the supposedly secure facility for almost an hour but, fortunately, were scared off before obtaining any HEU (Bunn, 2009).

In the recent film, Nuclear Tipping Point, former secretary of state Henry Kissinger said that “if the existing nuclear countries cannot develop some restraints among themselves, in other words, if nothing fundamental changes, then I would expect the use of nuclear weapons in some 10-year period is very possible” (Nuclear Security Project, 2010).

Richard Garwin, a former member of the President’s Science Advisory Committee (1962–65 and 1969–72), holds an even more pessimistic view. In Congressional hearings he testified: “We need to organize ourselves so that if we lose a couple hundred thousand people, which is less than a tenth percent of our population, it doesn’t destroy the country politically or economically … We need to have a way to survive such an attack, which I think is quite likely, maybe 20 percent per year probability, with American cities and European cities included” (Energy and Water Subcommittee, 2007: 31).

These incidents show that the nuclear coin has teetered on edge far too often, yet society’s lack of concern and resultant inaction demonstrate that nuclear optimism is a widespread illusion. A prerequisite for defusing the nuclear threat is to make society aware of the risk that it bears before catastrophe strikes.

Illuminating the risk

By fostering a culture of risk awareness, quantitative risk analysis has improved safety and illuminated previously unforeseen failure mechanisms in areas as diverse as nuclear power reactors, space systems, and chemical munitions disposal (Apostolakis, 2004). Quantitative risk analysis has also been applied to the risk of nuclear proliferation (Caswell, 2010) and nuclear terrorism (Bunn, 2009), and both Los Alamos and Lawrence Livermore National Laboratories have performed such analysis for various aspects of the country’s nuclear programs. It is therefore surprising that the applicability of quantitative risk analysis to estimating and reducing the failure rate of nuclear deterrence has only recently been recognized (Hellman, 2008), and that it has yet to be seriously applied.

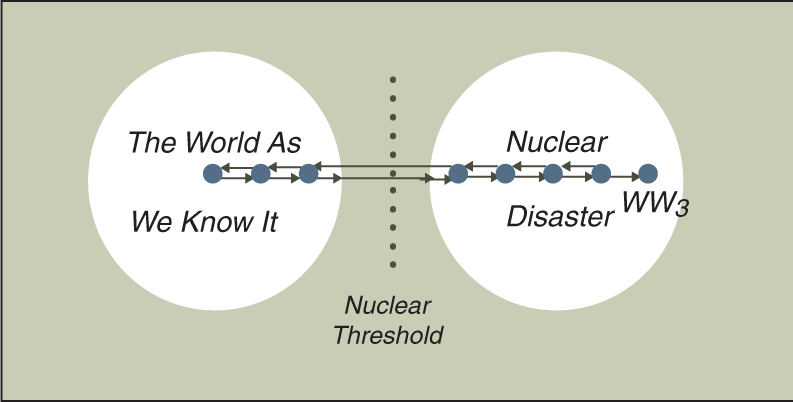

As depicted in the state diagram in Figure 1, quantitative risk analysis decomposes a catastrophic failure of nuclear deterrence into a sequence of smaller, partial failures. The large circle labeled The World As We Know It is a super-state that includes all possible states (conditions) of the world prior to a nuclear weapon being used in anger. Each such state is depicted by a small circle or dot, and the arrows indicate possible moves from one state to another as international tensions rise and fall. In reality, there are many more states than could be depicted in the figure.

Figure 1. Quantitative risk analysis decomposes a catastrophic failure into a sequence of partial failures.

During the Cuban Missile Crisis of 1962 the world was in a state that had high potential for crossing the Nuclear Threshold, while today we are in one of the much safer states, near the middle of the super-state. The other super-state, labeled Nuclear Disaster, includes all possible states after the nuclear threshold has been crossed. That occurs the first time a nuclear weapon is used in anger, for example in a terrorist attack, in a regional nuclear war (e.g., India–Pakistan), or by an accidental missile launch. As devastating as the use of a single nuclear weapon would be, in time the world would recover, as indicated by the arrow re-crossing the Nuclear Threshold in the positive direction. The state labeled WW3 represents a full-scale nuclear exchange and is assumed to be a state of no return, as indicated by the lack of a return arrow to any other state in the diagram.

This state diagram helps explain why people have difficulty envisioning the possibility of a nuclear disaster: There is no direct path across the nuclear threshold from the usual states that we occupy. Nuclear optimists would be right if we never made enough mistakes to come close to the nuclear threshold. But, just as a sequence of miscalculations resulted in the Cuban Missile Crisis of 1962, our continued reliance on nuclear bluffs and Cold War-era nuclear strategies could again take us to the brink, and possibly beyond.
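The structure of the state diagram can be sketched as a small Markov chain. The version below is only an illustration: it collapses the many states of the figure into four hypothetical ones, treats each step as one year, and uses transition probabilities that are placeholders chosen to mirror the shape of the diagram, not estimates of any real-world risk.

```python
# Hypothetical yearly transition probabilities (placeholders, not estimates).
# "calm" has no direct transition across the nuclear threshold, "disaster"
# has a return path, and "ww3" is a state of no return.
TRANSITIONS = {
    "calm":     {"calm": 0.95, "crisis": 0.05},
    "crisis":   {"calm": 0.60, "crisis": 0.39, "disaster": 0.01},
    "disaster": {"calm": 0.50, "disaster": 0.40, "ww3": 0.10},
    "ww3":      {"ww3": 1.0},
}


def step(distribution):
    """Advance a probability distribution over states by one year."""
    nxt = {state: 0.0 for state in TRANSITIONS}
    for state, mass in distribution.items():
        for target, p in TRANSITIONS[state].items():
            nxt[target] += mass * p
    return nxt


def prob_ww3_within(years, start="calm"):
    """Probability of having reached the absorbing 'ww3' state within `years`."""
    distribution = {state: 0.0 for state in TRANSITIONS}
    distribution[start] = 1.0
    for _ in range(years):
        distribution = step(distribution)
    return distribution["ww3"]


for horizon in (10, 50, 100):
    print(horizon, "years:", round(prob_ww3_within(horizon), 4))
```

Because the calm state has no direct transition across the threshold, a catastrophe in this toy model requires at least three consecutive partial failures. Each is individually unlikely, but the cumulative probability can only grow as the time horizon lengthens.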

Quantitative risk analysis allows improved estimates of the catastrophic failure rate because existing data on partial failures can be used in the analysis. For example, in nuclear power plant design, reliable data exist for the failure rates and repair times of many components. Utilizing this information allows better estimates of how frequently the plant will be in a vulnerable state. Applying the same approach to a failure of nuclear deterrence, we have significant information on the frequency of nuclear threats, international crises, and other events that put the world at greater risk. Utilizing those data allows better estimates of the risk of nuclear deterrence failing.
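One simple way to see how data on partial failures feed into an overall estimate is an event-tree style product: the yearly frequency of an initiating event multiplied by the conditional probability of each later escalation. The sketch below is a generic illustration of that idea, not necessarily the procedure used in any cited study. The class name, the stages, and every number are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EventChain:
    """A chain of partial failures leading to a catastrophic failure."""
    initiating_events_per_year: float  # how often the chain starts
    conditional_probs: tuple           # chance each stage escalates, given the one before

    def annual_failure_rate(self):
        """Expected catastrophic failures per year along this chain."""
        rate = self.initiating_events_per_year
        for p in self.conditional_probs:
            if not 0.0 <= p <= 1.0:
                raise ValueError("conditional probabilities must lie in [0, 1]")
            rate *= p
        return rate


# Hypothetical inputs: one serious crisis per decade, a 20 percent chance that
# such a crisis involves nuclear threats, and a 5 percent chance that those
# threats escalate to use of a weapon.
chain = EventChain(0.1, (0.2, 0.05))
print(f"{chain.annual_failure_rate():.4f} failures per year")
```

The design point is that the first factors in the product can be informed by the historical record of crises and threats, so only the last links in the chain depend on judgment about events that have never occurred.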

While other definitions for a failure of nuclear deterrence are possible, this article uses a full-scale nuclear exchange, depicted as WW3 in Figure 1. At the other extreme, deterrence could be defined to fail the first time the nuclear threshold is crossed (e.g., in a nuclear terrorist incident). The definition used here has the advantage of providing a system-level perspective and incorporating all lesser failure modes. By definition, a full-scale nuclear war can occur only after a first nuclear weapon has been used in anger. Thus, estimating the catastrophic failure rate also requires estimating the failure rates of nuclear terrorism and other events that could cross the nuclear threshold. If, instead, and as much current work suggests, we were to focus solely on the risks of nuclear terrorism and nuclear proliferation, then our analysis could overlook an unacceptable threat.

While estimates of the risk inherent in nuclear deterrence can be only approximate, they still can be extremely useful. For example, even if they were to indicate that civilization can be expected to survive another 1,000 years, a child born today with a 78-year life expectancy would have almost a 10 percent chance of not living out his or her natural life due to our reliance on nuclear weapons, a highly unacceptable level of risk.²
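The arithmetic behind this kind of statement is short enough to write out. The sketch below assumes that an expected survival time of N years corresponds to a constant, independent chance of 1 in N of catastrophe each year. The function name and the alternative horizons are illustrative only.

```python
def lifetime_risk(expected_survival_years, lifespan_years):
    """Chance that a catastrophe occurs at least once within `lifespan_years`,
    assuming a constant annual probability of 1 / expected_survival_years."""
    annual_p = 1.0 / expected_survival_years
    return 1.0 - (1.0 - annual_p) ** lifespan_years


# Compare several assumed survival horizons for a 78-year lifespan.
for horizon in (100, 1_000, 10_000):
    print(f"{horizon:>6} years: {lifetime_risk(horizon, 78):.1%}")
```

For a 1,000-year horizon the result is about 7.5 percent, which is of the same order of magnitude as the rounded figure of 10 percent.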

To simplify the analysis to the point that a lone researcher could undertake the task, I lower-bounded the risk (that is, I purposely underestimated it) when I performed the only currently existing quantitative risk analysis of nuclear deterrence (Hellman, 2008). Even so, I found the risk to be at least 200 times greater than living near a nuclear power plant. My preliminary risk analysis produced a statement, endorsed by a number of prominent individuals,³ that urgently called on the international scientific community to undertake in-depth risk analyses of nuclear deterrence and, if the results so indicate, to raise an alarm alerting society to the unacceptable risk it faces, as well as initiating a second-phase effort to identify potential solutions (NuclearRisk.org, 2008). Efforts to initiate such in-depth analyses have not yet borne fruit, and it is hoped that this article will help create support for such studies.

Illuminating the hope

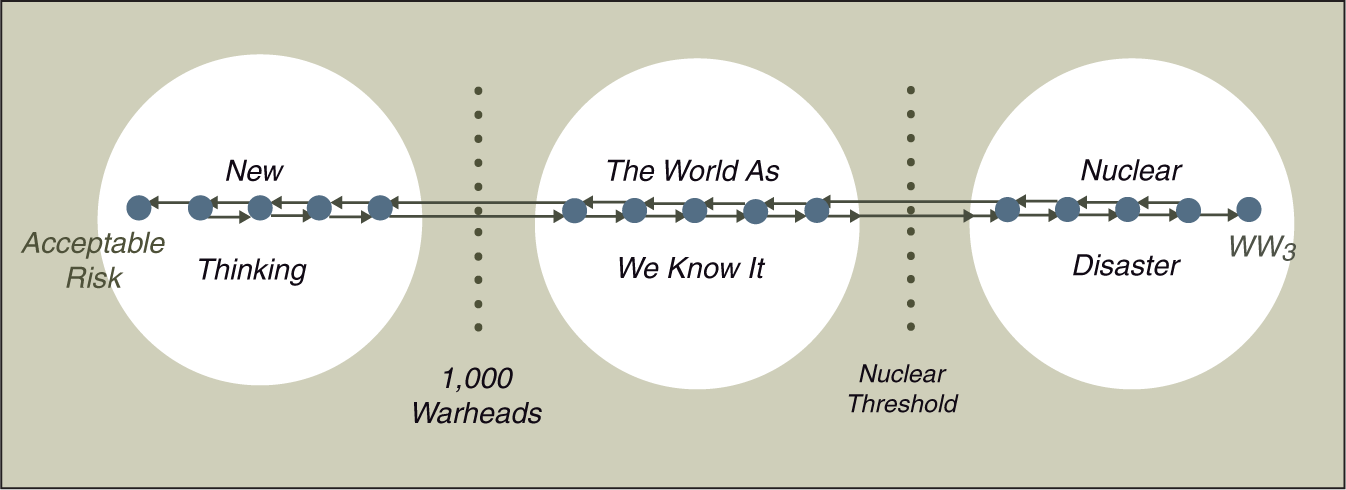

Just as quantitative risk analysis illuminated the risk by decomposing a nuclear disaster into a sequence of smaller mistakes, Figure 2 illuminates the hope by breaking down the solution into a sequence of smaller, more credible steps. The World As We Know It encompasses not only states of greater risk, but also lower risk states with greater hope. Today, we are in a state near the center of that super-state. As we reduce the risk, step-by-step, it becomes possible to cross a positive threshold, defined here as a worldwide arsenal of 1,000 nuclear weapons.⁴

Reaching that intermediate goal will require an immense, 95 percent reduction from current levels. Yet, for better or worse, such a reduced arsenal still would support nuclear deterrence, obviating the many arguments that have been made against nuclear abolition. (If 300 nuclear weapons were allocated to the United States, 300 to Russia, and 400 to the other nuclear states, no rational leader would dare attack another nuclear power. During the Cuban Missile Crisis, Kennedy was deterred from attacking the Soviet missiles out of fear that even a few might survive and destroy a US city.)

Figure 2. The mirror image of quantitative risk analysis highlights the solution.

While a world with 1,000 weapons is still very dangerous and far from the ultimate goal, reducing the worldwide arsenal to that level will require a fundamental shift in human thinking. Albert Einstein recognized that need when, soon after Hiroshima and Nagasaki, he warned: “The unleashed power of the atom has changed everything, save our modes of thinking, and we thus drift toward unparalleled catastrophe” (Nathan and Norden, 1981: 376). Drawing on that quote, the new super-state in Figure 2 is named New Thinking.

Figure 2 illustrates another advantage of the risk-based approach to deterrence. The ultimate goal is to reduce the risk of a nuclear disaster to an acceptable level, indicated by the end state named Acceptable Risk. This approach has advantages over explicitly describing that goal. Some have described it as world peace, others as world government, and yet others as nuclear abolition. Calling it a state of acceptable risk avoids arguments about its exact nature, as well as whether it can be achieved. Reducing the risk to an acceptable level may well require something akin to world peace or world government or nuclear abolition. But discovering the exact nature of the goal is better deferred until we are closer to it and better able to discern its outlines. From our current vantage point, it is too easy for opponents to deride explicit goals, such as nuclear abolition, as fantasies. In contrast, it is hard to argue that we cannot reach a state of acceptable risk, or that we should not strive to do so.

The lack of a direct path from our current state to the desired end state explains why many people dismiss solutions to the nuclear dilemma as impossible dreams. Completely solving the problem is impossible in our current environment. But, once we move to lower risk states, the environment changes and new possibilities come into clearer view. The positive half of Figure 2 is a graphic way of expressing a metaphor used by former secretaries of state Henry Kissinger and George Shultz, former defense secretary William Perry, and former US senator Sam Nunn (Democrat of Georgia) in their pioneering sequence of opinion editorials: “In some respects, the goal of a world free of nuclear weapons is like the top of a very tall mountain. From the vantage point of our troubled world today, we can’t even see the top of the mountain, and it is tempting and easy to say we can’t get there from here. But the risks from continuing to go down the mountain or standing pat are too real to ignore. We must chart a course to higher ground where the mountaintop becomes more visible” (Shultz et al., 2008).

The first critical step is for society to recognize the unacceptable level of risk that it currently faces. Until that is accomplished, there will be inadequate interest in alternatives to the nuclear status quo and, in Einstein’s words, we will continue to “drift toward unparalleled catastrophe” (Nathan and Norden, 1981: 376). The National Academies (the National Academy of Sciences, the National Academy of Engineering, and the Institute of Medicine) are ideal institutions for helping society take that first step. The Academies and their research arm, the National Research Council (NRC), have frequently provided input to the government on urgent matters involving science and technology. Their reputation for objectivity and impartiality makes them ideal for applying risk analysis to nuclear deterrence.

As an example of their ability to reach consensus on controversial issues, during the 1990s I served on an NRC committee that, at Congressional request, made far-reaching recommendations on securing our nation’s cyber-structure (National Research Council, 1996). Although competing desires of the intelligence community, law enforcement, and privacy advocates had previously produced a standoff, and our committee included representation from all three groups, we were able to reach unanimous recommendations, most of which were implemented fairly rapidly and made major improvements to the nation’s information security. The Academies have also made important contributions on risk-related issues, including the ground-breaking study, Risk Assessment in the Federal Government, commonly known as the Red Book (National Research Council, 1983). A more recent example is an ongoing study of the Deepwater Horizon oil spill (National Research Council, 2010).

The Academies and the National Research Council stand ready to help answer the difficult questions surrounding how best to reduce the risk of a nuclear disaster. But first they need to be asked. Such a request can come from either Congress or an agency within the executive branch. A Congressional request would be particularly helpful for raising awareness if it resulted in hearings after the study was completed. As Henry Kissinger remarked in Nuclear Tipping Point: “Once nuclear weapons are used, we will be driven to take global measures to prevent it [from happening again]. So some of us have said, let’s ask ourselves: ‘If we have to do it afterwards, why don’t we do it now?’” (Nuclear Security Project, 2010).

Acknowledgements

The author wishes to thank Dr. Richard Duda, Dr. Pavel Podvig, and Mr. Bruce Roth for helpful comments on earlier drafts of this article.

Footnotes

1. Unlike more complex plutonium-based implosion weapons, those using HEU’s simple gun assembly are unlikely to require testing prior to use. The HEU bomb used on Hiroshima had never been tested.

2. If an event has 1 chance in 1,000 of occurring each year, then the probability of it not happening in 78 years is $0.999^{78}$, i.e., 92.5 percent, and the probability of it occurring within that time frame is 7.5 percent. In order not to imply unwarranted accuracy, this is rounded to 10 percent.

3. Prof. Kenneth Arrow, Stanford University, 1972 Nobel Laureate in Economics; Mr. D. James Bidzos, Chairman of the Board, VeriSign Inc.; Dr. Richard Garwin, IBM Fellow Emeritus, former member President’s Science Advisory Committee and Defense Science Board; Adm. Bobby R. Inman, USN (Ret.), University of Texas at Austin, former Director NSA and Deputy Director CIA; Prof. William Kays, former Dean of Engineering, Stanford University; Prof. Donald Kennedy, President Emeritus of Stanford University, former head of FDA; Prof. Martin Perl, Stanford University, 1995 Nobel Laureate in Physics.

4. Clearly, this positive threshold is more subjective than the negative nuclear threshold, and other definitions are also possible. In keeping with its order of magnitude approach, this paper uses 1,000 weapons worldwide, of all types, whether deployed or in storage. Not everyone agrees that reducing the worldwide nuclear arsenal to that level would result in less risk, and resolving that question would be one task of the proposed in-depth quantitative risk analysis.

Author biography