Abstract

Modern societies are characterized by unprecedentedly broad and fast diffusion of various forms of false and harmful information. Military personnel’s motivation to defend their country may be harmed by their exposure to disinformation. Therefore, specific education and training programs should be devised for the military to systematically improve (social) media literacy and build resilience against information influence activities. In this article, we put forward a methodological approach to designing such programs based on a case study: the process of developing a media literacy learning platform tailored to the needs of the Estonian defense forces in 2021. The approach is grounded in data on (a) the current needs and skills of the learners, (b) the kinds of influence activities that the learners may encounter, and (c) the learning design principles that would enhance their learning experience, such as learning through play and dialogue through feedback.

Keywords

The uses of disinformation in international influence activities, such as those propagated by the Russian Federation as a part of its hybrid warfare against its neighbors and NATO allies, pose major challenges to Western democracies (Doroshenko & Lukito, 2021; EU Commission, 2019; Hellman & Wagnsson, 2017; Lanoszka, 2016, 2019; Ramsay & Robertshaw, 2019; Wagnsson, 2022). In this article, we focus on how Western countries could respond to this challenge by providing new media literacy training to their military personnel so that they would better recognize and resist disinformation that they may be exposed to online. We demonstrate a novel course development methodology that is specially suited to the needs and culture of armed forces and helps service members learn to deal with disinformation.

Modern societies, and the armed forces therein, are affected by the unprecedentedly broad and fast diffusion of various forms of false and harmful information due to complex sociopolitical, cultural, and technological changes (Freelon & Wells, 2020; Humprecht et al., 2021; Jungherr & Schroeder, 2021; Kapantai et al., 2021; Miller & Vaccari, 2020; Tenove, 2020; Ventsel et al., 2021; Wardle & Derakhshan, 2017). While the combination of critically thinking citizens and free media is crucial for the proper operation of democracy (Habermas, 2006), contemporary social media amplifies misleading information and is dominated by affective reactions to current events rather than rational debate, a tendency enabled by the prevalence of emotionally and visually oriented communication (Chadwick & Howard, 2009; Chadwick et al., 2022). Social media disinformation is increasingly used during military conflicts (see, for example, Doroshenko & Lukito, 2021), and the rise in false information has been conceptualized as a major concern for military commanders (Verrall & Mason, 2018). As modern military cultures in Western countries have a tendency toward self-steering practices and toward uniformed organizations becoming less insulated from the rest of society (Soeters et al., 2006), it is necessary to improve the media literacy of military personnel as social media users.

As part of our research, we developed a new web-based media literacy learning platform, Learn to Recognise Manipulation (Õpi ära tundma manipulatsiooni in Estonian, available at https://mojutustehnikad.ee), that, unlike the previously existing learning tools,¹ was designed specifically for the defense forces of the Republic of Estonia, a NATO country that borders Russia and has been a frequent target of its influence operations. Military personnel are among the targets of such operations, launched to curb their will to defend their country and erode trust in their political system (Nissen, 2015; Schreckinger, 2017). Malicious foreign influence via (social) media is often based on appeals to narratives and events that bear specific relevance to the identity and cultural memory of the target of manipulation and affect them emotionally (e.g., Hansson et al., 2022; Ventsel et al., 2021b). Therefore, the development of the learning platform involved interdisciplinary and multi-method preliminary research, using surveys among the potential users of the learning platform, discourse analysis of media texts in Russia’s state-controlled channels that contain manipulative messages, and a review of literature on learning design that would be most suitable for the users. By being grounded in data about the service members and the larger context, this evidence-based approach to designing information literacy learning platforms could be applied to develop platforms tailored to the needs of other countries and help address the disinformation-related challenges faced by militaries in different parts of the globe.

This work is rooted in the action research paradigm (Stringer & Aragon, 2021) where researchers themselves are involved in solving a real-world problem and bringing about positive change rather than remaining observers. The main steps in action research include gathering and generating data, implementing practical solutions, and reflecting upon the knowledge generated in the process. These steps are reflected in the structure of the article. The first section summarizes the preliminary research that underpins the development of the new learning platform and helps to understand the learners (in our case, the conscripts and active servicemen in Estonian defense forces), the influence activities that the learners may have to deal with (in our case, the main strategies and means of foreign influence deployed by Russian state-controlled channels), and the learning design principles that facilitate web-based learning. The second section describes the design of the learning platform, outlining its topics and types of exercises presented to the learners. The third section provides the researchers’ reflection on the main insights gained in action and suggestions on how these could be applied for the future development of online learning platforms in military training to build resilience against hostile information influence activities in other countries.

Methodological Steps for Developing a Learning Platform

Understanding the Learners

The process of developing a learning platform should begin by gaining an overview of the level of awareness of hostile information influence activities and potential problems regarding this issue among the learners. In the case of the Estonian defense forces, we first carried out a quantitative survey to map out the (social) media consumption habits of active servicemen and conscripts, evaluated their media literacy, and inquired about their experiences with hostile influence activities on social media. Research into modern information warfare has shown that influence activities against democracies and their citizens take place primarily through social media as it enables strategic actors to maintain their loyal supporters and shape their perception of the world, win the support of an impartial audience, and spread narratives that undermine their opponents (see Nissen, 2015, pp. 84–85; Singer & Brooking, 2018).

We asked the learners to complete a questionnaire that covered four themes: media consumption (what channels were used, level of trust toward these channels), detecting and responding to fraudulent messages (what kind of false or deceptive information they have encountered), competences and training needs of conscripts (how conscripts assess their ability to identify misinformation), and the perception of threats affecting Estonia’s security, including the spread of false information. At the end of the questionnaire, we included a module where we provided the respondents with various examples of disinformation (memes, news clips, etc.) and asked them to describe what kind of goals could be achieved by disseminating these.

The survey was carried out in October 2020 and included responses from 1,035 conscripts and 200 servicemen.² The respondents ranked the television channels of Estonian Public Broadcasting as the most trustworthy sources of information while the national daily newspapers ranked second, followed by family/friends and social media (YouTube and Facebook). The results indicated that the spread of dis- and misinformation was seen by both conscripts (60%) and active army servicemen (65%) as one of the main threats to Estonia’s national security. In all, 72% of conscripts and 95% of active army members agreed that the reliability of information is most enhanced when it is transmitted via a reliable channel. Russian television channels were generally seen as providing unreliable information (49% of conscripts and 77% of active servicemen) and the same applied to social media (57% and 75%, respectively) despite the fact that social media is used most often for finding news. Respondents pointed out that, in their view, the perceived reliability of news is not affected by whether these are shared by friends/acquaintances (38% of conscripts and 29% of active servicemen) and whether these are illustrated by photographs (30% of conscripts and 23% of active servicemen), drawings, or graphs (42% of conscripts and 33% of active servicemen). However, the results of a survey test among conscripts suggested that a photo attached to the news actually might increase the audience’s trust in the news presented to them. In all, 16% of respondents claimed to have been exposed to false information almost every day and 29% at least once a week. For those respondents who consumed news most actively, these figures were even higher: 25% and 35%, respectively. Last but not least, more than 90% of training staff and 60% of conscripts agreed that conscripts should be taught how to better recognize disinformation and information influence activities.

Importantly, attitudes toward military education reflect the norms and values underlying the country’s broader military culture (Wilson, 2008). As Estonia has adopted an integrated approach to national defense, staff members know that the scope of ensuring the country’s security extends beyond military defense and involves international activities, civil sector support to armed forces, and psychological defense. The latter could be understood as “the achievement and maintenance of information resilience against propaganda within own population or armed forces” (Nissen, 2015, p. 29). The central defense policy document, the National Security Concept of Estonia (2017), states that “psychological defense is about informing society and raising awareness about information-related activities aimed at harming Estonia’s constitutional order, society’s values and virtues.” The learning platform could help to consolidate this mindset within armed forces and provide guidelines for applying these principles in practice.

Understanding the Influence Activities

The second step in the development process of a media literacy platform involves a careful study of the types of malicious influencing that the learners are most likely to encounter or be susceptible to. This is often complicated because influence activities organized according to the principle of hybrid warfare may not necessarily be part of conventional military operations. Instead, hostile influencing may occur in the news media, social media, ordinary civilian conversations, and other non-military environments. Information warfare is often waged with the help of civilians and bystanders, thereby not appearing to the public as a foreign state activity (Jantunen, 2018, p. 37; Nissen, 2015). Influencers may stay hidden by not publicizing their own messages but rather staging certain events, such as protest meetings, to bring about a desired effect.³ The potential consequences of hostile information influence activities are an increase in instability and polarization and a weakening of social cohesion.

To better understand the specific influence activities that the defense staff in Estonia may be exposed to, we carried out a series of qualitative discourse analyses, focusing on the identification of the key messages appearing in the Russian disinformation texts and the ways in which they were constructed. Our dataset included stories from Estonian, Russian, and English language (social) media between 2019 and 2020, such as the Kremlin-funded information channels RT, Sputnik and ITAR-TASS, NTV, and Pervij Kanal (on these channels, see Bradshaw et al., 2022; Mölder et al., 2021; Wagnsson, 2022). We mapped out the topics of the messages that contained information manipulation and examined the strategic construction of these stories. Specifically, we explored how the stories were worded so as to appear more credible and how the hostile goals were hidden in the text (e.g., which “experts” or institutions were used to legitimize the claims), what means were used to attract the audience’s attention (e.g., references to culturally significant or conflictual topics), and what rhetorical/narrative/semiotic means were used to damage the reputation of NATO and the European Union (e.g., naming, antithesis, metaphors, metonyms, and attribution of agency). The results of this work have been published in various academic journals and books (Hansson et al., 2022; Madisson & Ventsel, 2021, 2022; Ventsel, in press; Ventsel et al., 2021a, 2021b).

The results of preliminary research can be used as a basis for specifying the topics and tasks presented on a learning platform. The analysis of media texts indicates which manipulation strategies and techniques the learners at hand are more likely to encounter. In our case, the analyses referred to above suggested that conscripts in Estonia would benefit from learning how to identify various forms of disinformation, attempts at polarizing groups and discrediting opponents, and the uses of false identity. Therefore, as will be demonstrated subsequently, we designed the tasks of the learning platform to cover these four topics. The surveys, in turn, provide insights into the possible media literacy-related weaknesses and biases of the learners that could be addressed in the tasks. For instance, as the results of our survey suggested that conscripts tend to regard as more trustworthy the news items that include photographs and refer to expert sources, we specifically included tasks in our learning platform that made use of photographs and manipulated sources.

Understanding the Learning Design Principles

Drawing on the research done in the previous phases as well as on the wide range of various studies that address the topics of media literacy, countering hostile information influence activities in education, and digital learning practices, we arrived at three principles that we saw as foundational for designing the learning experience on the platform: (a) enhancing media literacy, (b) learning through play, and (c) establishing a dialogue through feedback. Below, we will briefly explain what we mean by these principles, and why we consider them important for building resilience against hostile information influence activities.

Enhancing Media Literacy

Media literacy has been conceptualized in various ways, and there is no unified definition. The task of defining media literacy has become even more challenging due to the wide range of closely related and overlapping literacy concepts that have flooded the education field with the emergence of new media (see Chen et al., 2011; Hartley, 2017; Koltay, 2011; Scolari et al., 2018). One of the early definitions of media literacy comes from Aufderheide (1993), who explained media literacy as the ability to access, analyze, evaluate, and communicate messages in a variety of forms. These four aspects, due to their comprehensiveness and adaptability to various contexts, have often been taken as the basis for describing the skillset a person must possess to be considered literate in media (see Koltay, 2011; Livingstone, 2004).

Developing media literacy skills has been discussed in various research as a crucial preventive measure to enhance students’ ability to deal with misinformation (Bjola & Pamment, 2016; Sanderson & Ecker, 2020; Wineburg & Reisman, 2015). There are also preliminary studies that show the effectiveness of media literacy interventions in education for reducing the potential harm of media messages resulting from various information manipulation strategies (see Craft et al., 2017; Jeong et al., 2012; Walton & Hepworth, 2011). However, the skill-based view of literacy has its shortcomings as well. David Buckingham (2019, p. 219) has argued that the ability to evaluate media messages “must depend to some extent on knowing about content as well as form—understanding the topics at hand, rather than just how they are presented.” He suggests that a much broader notion of media literacy is necessary; one that does not approach fake news or other types of misinformation as an isolated phenomenon but develops critical thinking about the economic, ideological, and cultural dimensions of media (Buckingham, 2019, p. 213).

That is why, in regard to our platform, we conceptualized enhancing media literacy to involve two complementary dimensions: on the one hand, it entails developing the universal skills that allow students to navigate the contemporary information field successfully; on the other hand, it includes broadening students’ understanding of the nature of the modern media sphere and informing them about the various ways this sphere can be maliciously manipulated in the concrete context of national defense. This way, students are enabled to work with concrete media messages as well as comprehend the broader processes these individual messages are a part of. The development of both of these dimensions is supported throughout the learning process on the platform.

Learning through Play

One approach to media literacy interventions against misinformation that has gained a lot of attention in recent years is game-based learning (Roozenbeek & van der Linden, 2019a, 2020; Literat et al., 2020; van der Linden et al., 2020). Many such games follow a similar design logic: the game introduces some techniques of misinformation through experiential learning where the learners have to actively participate in information manipulation activities. The importance of experiential learning has been increasingly acknowledged in relation to online education, where engaging students through personal experience empowers them to take greater responsibility for their own progress in learning (see, for example, Alexander & Boud, 2018).

The games are usually short and entertaining and do not require too much intellectual effort or previous knowledge about the topic, as the activity lies mainly in choosing between various pregiven options. The games are based on learning scenarios that immerse players in a story. For example, in a fake news game called Harmony Square (harmonysquare.game), the player is hired as a Chief Disinformation Officer whose job is to disturb the idyllic neighborhood obsessed with democracy. The player is introduced to various manipulation techniques that can be used for fomenting internal divisions and pitting its residents against each other. There are preliminary studies that have shown the effectiveness of such games in building resistance against information influence activities (Basol et al., 2020; Maertens et al., 2021; Roozenbeek & van der Linden, 2019b). The effectiveness of this approach has been explained through the inoculation theory (Cook et al., 2017; Roozenbeek & van der Linden, 2019a, 2019b, 2020; van der Linden et al., 2017) which was developed by psychologist William J. McGuire in the 1960s (see McGuire, 1964). As explained by Roozenbeek and van der Linden (2019a), the theory of inoculation is based on a medical analogy:

Injections that contain a weakened dose of a virus can confer resistance against future infection by activating the production of antibodies. Inoculation theory postulates that the same can be achieved with “mental antibodies” and information. In other words, by pre-emptively exposing people to a weakened version of a (counter)-argument, and by subsequently refuting that argument, attitudinal resistance can be conferred against future persuasion attempts. (p. 571)

Hence, the misinformation games vaccinate the players against manipulation techniques by engaging them through perspective-taking tasks. One problematic aspect of this approach is whether or not it has any long-term effect. Some initial experiments show that the inoculation effect was no longer significant just 2 months after the intervention. It has been argued that the effectiveness could be connected to the level of active involvement, meaning that the more the participants are cognitively engaged in the process, the greater both the level of learning and the longevity of the effect (see Literat et al., 2020; Maertens et al., 2021; McGuire & Papageorgis, 1961; Rogers & Thistlethwaite, 1969).

That is why, on our platform, while following the idea of inoculation through active participation in information-influence activities, we decided to shift our focus from game to play. In conceptualizing play, we followed the ideas of Henry Jenkins, an American media scholar, who sees play as one of the central new media skills. In his interpretation, play indicates learners’ ability to experiment independently with their surroundings or the material they acquire, to invent new ways of solving problems, and to develop their readiness to experience different points of view through the embodiment of different roles (Jenkins, 2009). Incorporating play into the process of learning on our platform enabled us to move away from clear-cut definitions and create a space of open experimentation where students have a wide range of possibilities to approach the problems of information manipulation.

Establishing a Dialogue through Feedback

While the wide range of possibilities and the freedom to choose your path in the process of learning can be seen as the basis for a meaningful learning experience (see English, 2013; Semetsky, 2015), it can also be a cause for experiencing anxiety, doubt, and uncertainty that at heightened levels could start to inhibit learning (see Tauritz, 2012). One possible way to manage the indeterminacy of a learning process is to provide feedback to the learners. Especially in online learning platforms, where students have to be much more independent than in a regular classroom, meaningful and in-depth feedback is crucial for helping students to manage their learning more effectively (Wang & Wu, 2008) and avoid excessive confusion or anxiety in the process of learning (Arguel et al., 2019).

However, providing meaningful feedback can be problematic in the case of automated feedback, particularly if high-tech solutions are not accessible. In such cases, the creators of digital learning materials can be tempted to opt only for test-type solutions that allow evaluating students’ answers in terms of correct/incorrect, which can bring about the oversimplification of the study object. To avoid this, we searched for ways to establish a dialogue with the students through automated feedback. As has been brought out by Steen-Utheim and Wittek (2017, p. 20), dialogic feedback is a process in which individuals’ learning can be activated because others’ experiences, thoughts, and utterances are made visible and available in concrete contexts. From this perspective, a dialogic process can also be initiated by presenting students with an answer representing one exemplary solution to the problem at hand.

A comparison of students’ answers with the desired solution predefined by the instructors is quite a broadly adopted method for automatic feedback in online learning environments (Birch et al., 2016; Cavalcanti et al., 2021; Dutchuk et al., 2009). As shown by Erhel and Jamet (2013, p. 164) in the context of digital game-based learning, the type of feedback that presents a correct answer to students leads the participants to devote more cognitive resources to deep cognitive processing, thereby triggering the central processes required for learning. When the platform provides longer explanations and maps out possible ways of reasoning, the students can compare their own answers with the ones provided by the platform. Such feedback creates a dialogue between the learner and the learning environment and stimulates students to think about these topics independently.

In the following section, we will introduce the structure and content of the online platform and explicate how the learning design principles discussed in this part are manifested through the educational materials.

Design of the Learning Platform

Media literacy learning platforms should ideally be structured and designed in a way that helps the learners to recognize the most prevalent types of disinformation, critically analyze various kinds of media content, and act appropriately when they encounter potential disinformation. In our case, we chose to focus on topics and practical exercises that would best support building resilience against the main verbal and visual influence techniques that foreign influencers might use in (social) media to create tensions between different groups in society and discredit the Estonian constitution, Estonian defense forces, and NATO. We also wanted to improve the learners’ understanding of how bots may be used in information influence activities, how to identify harmful bots in social media, and what to do when they encounter a malicious bot. Therefore, based on the insights gathered during the preliminary research, we built a prototype of the web-based learning platform around four thematic blocks and respective sets of tasks: disinformation, polarization, discrediting opponents, and false identity. Admittedly, these four topics are not meant to provide a comprehensive overview of all possible techniques of influence, but they allow us to introduce the phenomena of hostile information influence activities from different angles.

We designed the learning process so that each thematic block followed a similar four-level structure, starting with a brief introduction of the study topic followed by three types of exercises (individual, in pairs, and in groups). The exercises were inspired by the real-life influence techniques identified in media texts during the preliminary research phase (see the analyses in Hansson et al., 2022; Koppel & Hansson, 2022; Madisson & Ventsel, 2022; Ventsel, in press; Ventsel et al., 2021a, 2021b) but presented in a slightly modified and shortened form together with relevant contextual information to make these suitable for the learning platform. We also made sure not to breach anyone’s privacy, so we used fictional characters and simulated social media threads. Importantly, some learning tasks involve the participation of an instructor who could support learning and also provide feedback. We will explain and exemplify the four levels of the learning process below.

Level 1: Introduction

The introductory level begins with an affective trigger that is meant to intrigue the learners and to make them pay attention to the following topic. We selected potentially interesting news, memes, and videos for this initial attention-grabbing function.

For example, the introduction to disinformation begins with a news piece taken from the Russian TV channel Rossija 1 (2015, see https://mojutustehnikad.ee/vaarinfo/) that misrepresents an episode of Estonian TV comedy show “Tujurikkuja” as Nazi propaganda. In the original episode, aired years ago, the well-known TV comedians created a parody of the reality game show “Estonia is Searching for a Superstar” (a local version of “Pop Idol,” see https://en.wikipedia.org/wiki/Eesti_otsib_superstaari) that depicted it as a competition to “find the best Neo-Nazi.” However, the Russian news channel turned this parody into disinformation by shifting the context and presenting it as a timely and topical reflection of reality. The aim of selecting this particular example was to draw attention to the complexity of disinformation and illustrate how it may exploit fragments of information that are removed from their original context.

The introductory example is followed by a theoretical section where we give a more detailed overview of the topic and explain what is meant by disinformation, what its main types are, and which societal risks it may cause. We offer concrete examples of the most common manipulation techniques. At the end of the section, there are hyperlinks to additional materials on the topic (videos, short articles, etc.).

Level 2: Individual Work

The second level starts with exercises that are meant for individual processing. The aim of those exercises is to test and deepen the knowledge gained from reading the theoretical introduction. The learner has to conduct a short analysis of an example of hostile influencing.

For instance, the exercise about polarization involves analyzing a piece of news that illustrates a narrative of the moral decline of the Western world, one of the main polarizing narratives spread by Russian media. The news story talks of incest education in the Western education system (https://mojutustehnikad.ee/polariseerimine/ulesanne-1/), and the polarizing tonality is manifested by the usage of identity markers (ethnicity, sexual orientation, parental responsibility) as well as emotional expressions and appeals. The learner is asked to explain what techniques are used to induce polarization in that example and how the Us vs Them confrontation is created, and to discuss which goals the influencer may want to achieve with this story. The learner is asked to provide written answers to those questions, and the platform sends the answers to the supervisor, who can give each student individual feedback.

Individual work ends with a multiple-choice test. After selecting an answer, a learner sees a detailed answer that explains the proposed problem. The aim of this test is to give learners an instant opportunity to assess their knowledge and to reiterate the lessons learned in the theoretical part.
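
The logic of such a test can be illustrated with a minimal sketch in Python. It is not the platform's actual implementation: the class and function names, the question, and the explanatory texts are hypothetical and serve only to show how every option, correct or not, can carry its own explanation so that the feedback goes beyond a bare correct/incorrect verdict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    text: str
    correct: bool
    explanation: str  # shown to the learner once this option is selected


@dataclass(frozen=True)
class Question:
    prompt: str
    options: tuple[Option, ...]


def give_feedback(question: Question, selected: int) -> str:
    """Return a verdict plus the explanation attached to the selected option."""
    if not 0 <= selected < len(question.options):
        raise ValueError("selected option index is out of range")
    option = question.options[selected]
    verdict = "Correct." if option.correct else "Not quite."
    return f"{verdict} {option.explanation}"


# Hypothetical question; the wording is illustrative only.
QUESTION = Question(
    prompt="A comedy sketch is rebroadcast as if it were a news report. "
           "Which technique does this illustrate?",
    options=(
        Option(
            text="Removing content from its original context",
            correct=True,
            explanation="The footage is genuine, but presenting parody as "
                        "reporting changes what it appears to show.",
        ),
        Option(
            text="Creating a fake social media account",
            correct=False,
            explanation="No false identity is involved here; the deception "
                        "lies in how genuine footage is reframed.",
        ),
    ),
)

if __name__ == "__main__":
    print(give_feedback(QUESTION, 1))
```

Attaching an explanation to each option, rather than only to the correct one, is one way of keeping an automated test close to the dialogic feedback principle discussed earlier.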

Level 3: Pair Work

The third level involves an in-depth analysis of either one particular case or a number of different examples of influence activities. Such analysis tasks improve the learner’s capacity to recognize similar techniques of manipulation in real-life situations.

For example, at the beginning of a task concerning “discrediting opponents” (https://mojutustehnikad.ee/vastase-halvustamine/ulesanne-2/), the learner is shown a battery of sentences from the stories of Russia’s state-funded propaganda channel RT.com. Those stories mediate the Kremlin’s responses to the criticism NATO has expressed with regard to Russia’s cyber attacks on other countries. The learner has to write an explanation of why the usage of particular words and phrases in these sentences indicates conscious attempts at discrediting and/or ridiculing NATO or its members.

Feedback is an important component of the third level. The platform enables the learners to send their analysis to a fellow learner or a supervisor. In the first case, the learners can read and check each other’s work. A possible weakness of this approach is that a learner may sometimes receive unhelpful or less detailed feedback if fellow learners have limited knowledge and expression skills. In such cases, the feedback should preferably be given by a supervisor who is well-informed about the topic and would assess all works based on the same assessment criteria. In addition to identifying certain techniques of manipulation, such an interactive approach enables establishing a dialogue through feedback. It also allows learners to deepen the knowledge gained from the introductory part and to develop the skills of analysis and argumentation.

Level 4: Group Work

The fourth level involves a do-it-yourself type of task that aims to advance learners’ understanding of how manipulation techniques work. It also relies on the synergy created by the shared experience of learning games and the group work based on them.

For example, to learn about deceptive identities (https://mojutustehnikad.ee/identiteedipettus/ulesanne-3/), a group of three to four learners has to create a fake social media account (e.g., fake person, Hollywood star, Fembot) that seems as authentic as possible. The platform guides the learners to the classtools.net or zeoob.com website, which enables them to create fake profiles. In the second phase of the task, the groups send their accounts to other groups for analysis and feedback. The learners are asked to explain the main characteristics that indicate the inauthentic nature of such profiles. Presumably, a learner who has tried to create a fake account in a classroom situation will recognize similar manipulation techniques in real life. A similar teaching logic is applied in all fourth-level assignments of other thematic blocks (see the appendix).

Reflections on the Development Process of the Learning Platform

The learning platform described above is a prototype that could be developed further to include new themes and additional exercises. Based on our experience, there are at least three challenges that one may encounter in that process.

First, the selection of textual/visual examples to be used in exercises requires careful consideration: these should exhibit manipulation techniques that are (a) realistic, that is, based on real-life cases of influence activity, and (b) reasonably difficult for the learners to identify. The use of examples of a completely hypothetical or theoretical kind, or those that seem obviously propagandistic, might cause the learners to lose interest. In general, people tend to overestimate their capacity to detect manipulation (Martínez-Costa et al., 2022). But the resourcefulness of malicious influencers should not be underestimated. Detailed studies of manipulation techniques used in Russian media channels suggest that these may not always take the form of straightforward lies or fake news (e.g., Miskimmon & O’Loughlin, 2017; Ramsay & Robertshaw, 2019).

The second challenge follows from the first one. Much of the influencing is more complex than a mere presentation of particular pieces of inaccurate information as “facts.” Manipulation most commonly rests on contextual knowledge: the manipulator accomplishes its goals via incomplete or biased representations of circumstances, events, relationships, actions, and actors. This means that, to understand and complete the exercises, the learners must be provided with relevant contextual knowledge beforehand and then guided by an instructor who would oversee their analysis and provide expert feedback on it. With that in mind, our platform includes a built-in facility for collecting students’ answers to exercise questions (provided that they give explicit permission to do so on the platform). This allows the instructors to analyze learners’ interaction with the learning environment and, based on that, possibly make adjustments to the tasks on the platform. However, it seems necessary to provide specialized training to the instructors so that they can support learning and evaluate learning outcomes in the best way possible.

The third challenge concerns tailoring the exercises to the interests of a particular audience and keeping their learning motivation high. As the target users of our platform included conscripts and military staff, we felt inclined to present them primarily with scenarios where Russian military authorities and NATO were the main actors, considering these as most familiar and relevant to them. However, the empirical analysis of Russia’s strategic communication suggested that the stories constructed and disseminated for hostile purposes may often be (at least seemingly) unrelated to military and security issues. For instance, malicious attempts at eroding trans-Atlantic relations and inflaming conflict among NATO allies could be made via narratives about economic/technological competition between the United States and China that appear to have nothing to do with Russia (see Hansson et al., 2022). Therefore, it seems necessary to (a) include in the learning platform at least some examples of such more clandestine forms of influence that may (at least initially) be perceived by the learners as somewhat distant or irrelevant and (b) provide more detailed explanations of the particular historical and political background that would help learners understand the possible harmful effects of such influence techniques. One way to motivate learners to engage with these more challenging analytic tasks is to present them as part of group work so that group members can support each other in interpreting the case and possibly also compete with other groups in coming up with the most insightful analysis.

Concluding Remarks

The importance of new media literacy programs needs to be viewed against the wider impacts of (social) media on public communication that affect citizens from all walks of life, not only in uniformed organizations. Social media environments tend to amplify false information and provoke affective reactions, thereby providing a fertile ground for malicious attempts at deceiving or confusing people and sowing division in society. The provision of media literacy learning materials specifically to the defense forces is increasingly necessary for highly mediatized societies where military personnel face the challenges posed by hostile influence activities. In this article, we have proposed a novel course development methodology that helps service members to deal with disinformation. The methodology is adaptable to different contexts of military education beyond Estonia. Based on our experience, we recommend that the development of the learning platforms should be grounded in a solid evidence-based understanding of (a) the current needs and skills of the learners, (b) the kinds of influence activities that the learners may encounter, and (c) the learning design principles that would enhance their learning experience. Media literacy could best be improved if the platform facilitates learning through play and establishes a dialogue through feedback.

We hope the insights presented earlier will initiate further discussions about media literacy in the armed forces and promote additional empirical studies as well as research and development projects. While our learning platform currently focuses on four types of influence activities, the topics addressed could be modified and/or expanded in the future based on the evolving needs of the learners and possible sociopolitical, cultural, and technological changes in their security environment. For instance, additional topics could include malicious uses of information leaks, threats, historical narratives, and various forms of social hacking. Our platform could be used as a prototype for building similar learning environments for other users beyond military personnel who could be affected by malicious foreign influence. For example, the platform could be redesigned specifically for the purpose of improving the situational awareness of citizens who are exposed to disinformation in the context of military aggression that is accompanied by hostile foreign influence, such as Russia’s war against Ukraine (Doroshenko & Lukito, 2021; Erlich & Garner, 2023; Golovchenko et al., 2018; Kuzio, 2019; Mölder & Sazonov, 2018). However, as suggested previously, this should in each case involve both thorough preliminary research that would allow the topics and exercises to be tailored to the needs of the new users and appropriate training of instructors who could support the building of resilience against hostile influence activities among other groups in society.

Appendix

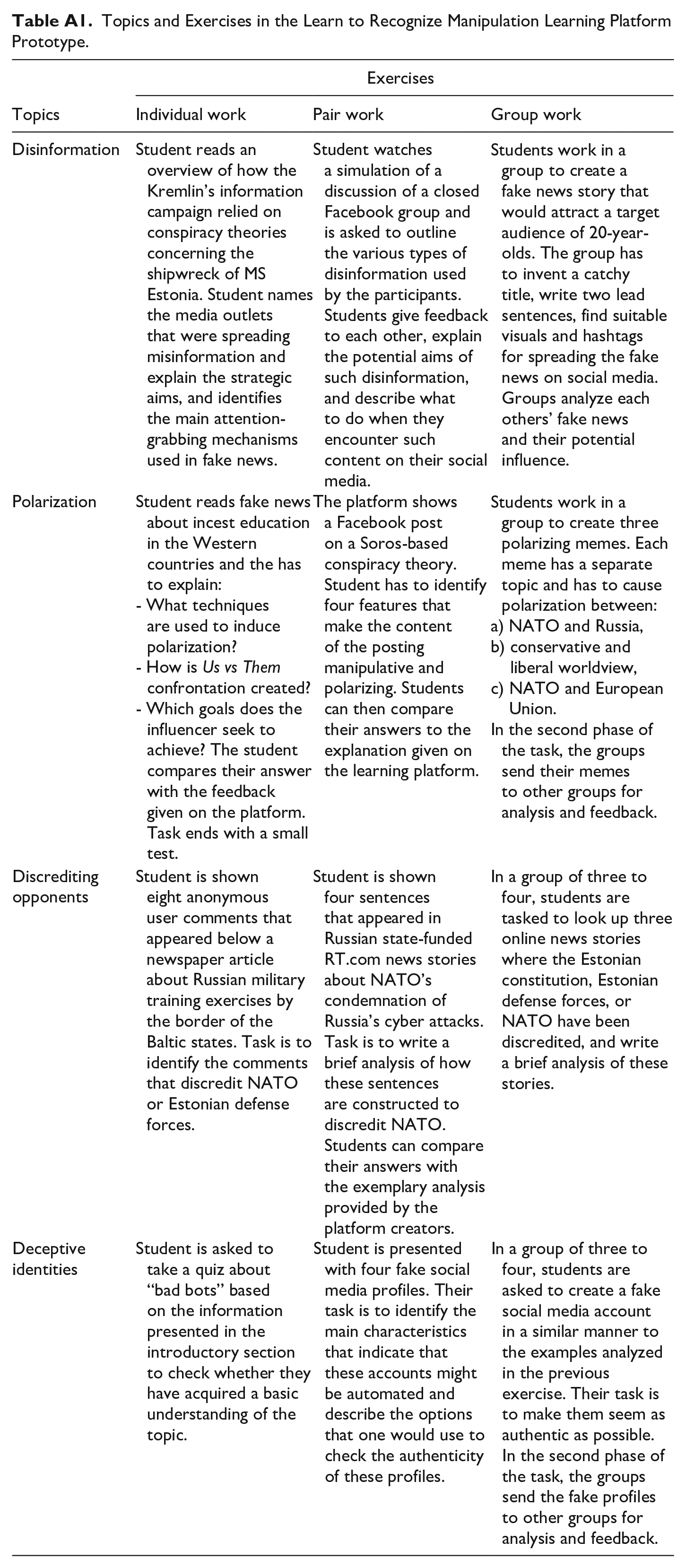

Topics and Exercises in the Learn to Recognize Manipulation Learning Platform Prototype.

| Topics | Exercises: individual work | Exercises: pair work | Exercises: group work |
|---|---|---|---|
| Disinformation | Student reads an overview of how the Kremlin’s information campaign relied on conspiracy theories concerning the shipwreck of MS Estonia. Student names the media outlets that were spreading misinformation, explains their strategic aims, and identifies the main attention-grabbing mechanisms used in fake news. | Student watches a simulation of a discussion in a closed Facebook group and is asked to outline the various types of disinformation used by the participants. Students give feedback to each other, explain the potential aims of such disinformation, and describe what to do when they encounter such content on their social media. | Students work in a group to create a fake news story that would attract a target audience of 20-year-olds. The group has to invent a catchy title, write two lead sentences, and find suitable visuals and hashtags for spreading the fake news on social media. Groups analyze each other’s fake news and their potential influence. |
| Polarization | Student reads fake news about incest education in the Western countries and then has to explain: (1) What techniques are used to induce polarization? (2) How is the Us vs Them confrontation created? (3) Which goals does the influencer seek to achieve? The student compares their answer with the feedback given on the platform. Task ends with a small test. | The platform shows a Facebook post on a Soros-based conspiracy theory. Student has to identify four features that make the content of the post manipulative and polarizing. Students can then compare their answers to the explanation given on the learning platform. | Students work in a group to create three polarizing memes. Each meme has a separate topic and has to cause polarization between: a) NATO and Russia, b) conservative and liberal worldview, c) NATO and European Union. In the second phase of the task, the groups send their memes to other groups for analysis and feedback. |
| Discrediting opponents | Student is shown eight anonymous user comments that appeared below a newspaper article about Russian military training exercises by the border of the Baltic states. Task is to identify the comments that discredit NATO or Estonian defense forces. | Student is shown four sentences that appeared in Russian state-funded RT.com news stories about NATO’s condemnation of Russia’s cyber attacks. Task is to write a brief analysis of how these sentences are constructed to discredit NATO. Students can compare their answers with the exemplary analysis provided by the platform creators. | In a group of three to four, students are tasked to look up three online news stories where the Estonian constitution, Estonian defense forces, or NATO have been discredited, and write a brief analysis of these stories. |
| Deceptive identities | Student is asked to take a quiz about “bad bots” based on the information presented in the introductory section to check whether they have acquired a basic understanding of the topic. | Student is presented with four fake social media profiles. Their task is to identify the main characteristics that indicate that these accounts might be automated and describe the options that one would use to check the authenticity of these profiles. | In a group of three to four, students are asked to create a fake social media account in a similar manner to the examples analyzed in the previous exercise. Their task is to make it seem as authentic as possible. In the second phase of the task, the groups send the fake profiles to other groups for analysis and feedback. |

Acknowledgements

The authors wish to thank the web and software studio Redwall Digital for helping to design the web-based learning platform, and colleagues from the Institute of Social Studies at the University of Tartu who conducted the quantitative survey among conscripts and active servicemen in Estonian defense forces.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Estonian Research Council grants 1716 “Relational Approach of Strategic History Narratives”, MOBTP1009 “A Semiotic approach to e-threat discourse: Analysis of Estonian media”, and SHVFI23109 “Strategic communication in the context of the war in Ukraine: lessons learned for Estonia”.