Abstract

The study addresses the effects of piloting methods on the cross-cultural comparability and reliability of the measurement of gender and age stereotypes. We conducted a summative evaluation of expert reviews, cognitive pretests, and web probing. We first piloted a gender role, an ageism, and a children stereotypes instrument in German and American English. We then randomly assigned the original and piloted versions to respondents in Germany and the United States using an online survey experiment and quota samples. The original instruments did not show configural invariance, and the reliability of the gender role instrument was insufficient. The results show that piloting methods increased reliability and improved measurement invariance, although the effects varied by topic. Cross-cultural expert reviews and web probing provided more consistent results than other methods. A combination of web probing and cross-cultural expert reviews can maximize both reliability and measurement invariance.

Introduction

Cross-cultural (CC) research is concerned with comparisons across countries and regions. For statistical comparisons, data should not be biased by group membership and should also be reliable: without sufficient reliability, little confidence can be placed in any differences found (e.g., Kline 2016). Potential bias due to group membership can be analyzed by means of measurement invariance (MI) analysis (Mellenbergh 1989; Meredith 1993). If MI is not shown in the data, group membership bias would confound the results (e.g., Benítez et al. 2022; Leitgöb et al. 2023). Bias may arise because respondents conceive of the concept under investigation in different ways (concept bias), because substantial differences in data collection methodology are confounded with group membership (method bias), or because respondents understand or treat the instrument's indicators differently (item bias) (cf. van de Vijver 2018). Examples of comparability bias include different conceptions of time spans when thinking about the future (Scheuch 1993) and CC differences in the conception of “household” and “family” (Hoffmeyer-Zlotnik and Warner 2014).

MI analyses have become increasingly popular in CC research, but invariance has often been found to be violated, suggesting that more attention should be paid to the appropriate piloting of measurement instruments to ensure invariance in CC comparisons (cf. Leitgöb et al. 2023). Piloting may include cognitive pretests or expert reviews. Cognitive pretests are qualitative studies of the burden on cognitive response processes (Willis 2015; Willis and Miller 2011) in terms of understanding questions, retrieving relevant information, forming judgments, and providing responses (Tourangeau et al. 2000). Web probing has recently been introduced as a method for conducting cognitive pretests online (Behr et al. 2020). Expert reviews, in which questionnaire design experts review a questionnaire for potential problems, do not rely on qualitative studies and are less expensive and time-consuming (Forsyth and Lessler 2004; Rammstedt et al. 2015). While it has been shown that problems identified in cognitive pretests are associated with results that do not support MI (Benítez et al. 2022; Meitinger 2017), no studies have demonstrated that conducting CC cognitive pretests, web probing, or expert reviews to pilot questionnaires actually improves MI results.

Research on piloting methods has mainly focused on their effectiveness in finding cognitive problems. Comparisons of cognitive pretests with expert reviews have yielded mixed results (DeMaio and Landreth 2004; Presser and Blair 1994; Rothgeb, Willis, and Forsyth 2001; Willis, Schechter, and Whitaker 1999), whereas cognitive pretests and web probing have been found to be comparably effective (e.g., Lenzner and Neuert 2017). A few studies have compared different piloting methods with respect to the potential improvement in measurement quality in terms of reliability and validity, with cognitive pretests outperforming other methods (Maitland and Presser 2016, 2018; Yan et al. 2012).

We therefore address the research question of whether the comparability of data evaluated using exact MI (Meredith 1993), as well as the reliability of measurement in CC projects, can be improved by using a specific piloting method. We compared cognitive pretests, web probing, and expert reviews. We used Germany and the United States as examples for CC comparison and addressed the measurement of gender and age stereotypes. Contextual differences in labor force participation, child care, and population aging between the countries (Eurostat 2019; World Economic Forum 2022) may be related to the differences in residents’ understandings of concepts, which can lead to biased comparisons (André, Gesthuizen, and Scheepers 2013; Constantin and Voicu 2015; Lomazzi 2017).

To evaluate piloting methods, measurement instruments were first piloted and modified, and then evaluated in a CC survey experiment. We conducted a summative evaluation of piloting methods, which evaluates a method as an entity, as opposed to formative evaluations, which allow the effects of the elements of a method to be evaluated as a subsequent step (Taras 2005; Scriven 1967). The findings can help researchers to improve their decisions when conducting CC comparative research.

Methods

Data Analysis

MI analysis is conducted by Multigroup Confirmatory Factor Analysis (MGCFA; Mellenbergh 1989; Meredith 1993). The score of a manifest variable Y in each group j and for each individual i is described as a linear function (Equation 1) of the latent variable η. Configural invariance is given if a manifest variable loads on the same latent factor in each group. Establishing configural invariance means that using the indicators for the given concept would be appropriate for the groups under investigation, but does not yet mean that there is no bias in statistical comparisons of latent variables or simple sum scores. Metric or weak invariance is supported when the loadings are comparable across groups. To evaluate metric invariance, a restriction on the equality of the corresponding factor loadings between the groups is introduced into the configural model. Equality of factor loadings is supported if the introduced restrictions do not significantly decrease model fit. If metric invariance is supported, measurement bias as an explanation for the results when comparing correlations (of latent variables or sum scores) can be ruled out. Finally, scalar or strong invariance is achieved when the intercepts of the manifest variables are also comparable across groups, so that latent means are expressed on a comparable metric. Scalar invariance is evaluated by restricting the respective intercepts to be equal between groups. Again, this restriction should not significantly decrease model fit. Support for scalar invariance allows the exclusion of measurement bias as an alternative explanation for the results when comparing latent or summarized mean scores.
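
Equation 1 is not reproduced in this text. A standard way of writing the MGCFA measurement model that matches the description above is sketched here; the item index k and the symbols τ (intercept), λ (loading), and ε (residual) are notation assumed for illustration and may differ from the authors' own.

```latex
% Measurement model for item k, individual i, group j
Y_{ijk} = \tau_{jk} + \lambda_{jk}\,\eta_{ij} + \varepsilon_{ijk}

% Metric (weak) invariance: equal loadings across groups
\lambda_{jk} = \lambda_{k} \quad \text{for all } j

% Scalar (strong) invariance: equal loadings and equal intercepts
\lambda_{jk} = \lambda_{k}, \qquad \tau_{jk} = \tau_{k} \quad \text{for all } j
```

In this notation, the configural model leaves all loadings and intercepts free in each group and requires only that the same items load on the same factors.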

MGCFAs to evaluate MI were conducted with the software Mplus 8.2 (Muthén and Muthén 2014). The latent factor variances and means were fixed to 1 and 0 respectively (cf. Byrne 2011). We also evaluated the scalar model when freeing factor means (scalar_a; Tables 2–4). The model fit of MGCFAs was evaluated using the chi-square test (CMIN), the root mean square error of approximation (RMSEA), and the comparative fit index (CFI) (Beauducel and Wittmann 2005). The CFI should be 0.95 or higher, while an RMSEA of 0.08 or less indicates an acceptable fit (Hu and Bentler 1999). Due to the ordinal nature and non-normality of the data, the robust maximum likelihood estimator was used, which is also an appropriate method for small samples (Li 2016; Muthén and Muthén 2014). A significant change of CMIN (Meredith 1993) or a change of ΔCFI ≥ 0.005 and ΔRMSEA ≥ 0.010 indicates significant differences in model fit (Chen 2007 for n < 300), and thus a lack of MI. Configural models with poor model fit were improved through modification search (e.g., Kline 2016).
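
The cutoffs named above can be collected in a small helper. The following is a minimal sketch, not the authors' code: the function names are hypothetical, the p-value of the MLR-corrected ΔCMIN is assumed to be computed elsewhere (for example by the SEM software), and each index is flagged separately because the results discuss them separately.

```python
# Cutoffs taken from the text: Hu and Bentler (1999) for global fit,
# Chen (2007, n < 300) for changes between nested invariance models.
CFI_MIN = 0.95
RMSEA_MAX = 0.08
DELTA_CFI_CUTOFF = 0.005
DELTA_RMSEA_CUTOFF = 0.010


def acceptable_fit(cfi: float, rmsea: float) -> bool:
    """Global fit check for a single model."""
    return cfi >= CFI_MIN and rmsea <= RMSEA_MAX


def invariance_flags(p_delta_cmin: float, cfi_drop: float,
                     rmsea_rise: float, alpha: float = 0.05) -> dict:
    """Flag each index that signals worse fit of the more restricted model.

    cfi_drop   = CFI(less restricted) - CFI(more restricted)
    rmsea_rise = RMSEA(more restricted) - RMSEA(less restricted)
    """
    return {
        "cmin": p_delta_cmin < alpha,
        "cfi": cfi_drop >= DELTA_CFI_CUTOFF,
        "rmsea": rmsea_rise >= DELTA_RMSEA_CUTOFF,
    }


# Configural model reported in the text for the EVS 2008 data
# (CFI = 0.76, RMSEA = 0.13).
print(acceptable_fit(cfi=0.76, rmsea=0.13))

# Scalar step of the AS model reported for the ISSP 2012 data
# (p < .001, entered here as its upper bound; dCFI = 0.10; dRMSEA = 0.017).
print(invariance_flags(p_delta_cmin=0.001, cfi_drop=0.10, rmsea_rise=0.017))
```

Keeping the three flags apart makes it possible to describe a violation as slight when only one index changes and as strong when all of them do.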

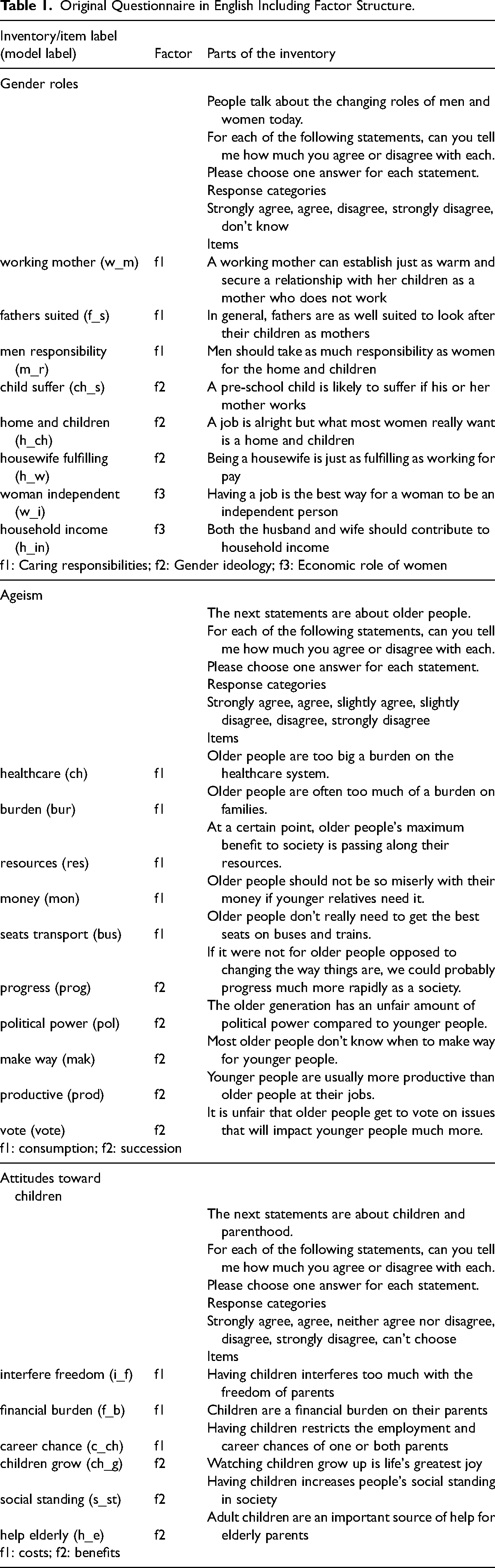

Table 1. Original Questionnaire in English Including Factor Structure.

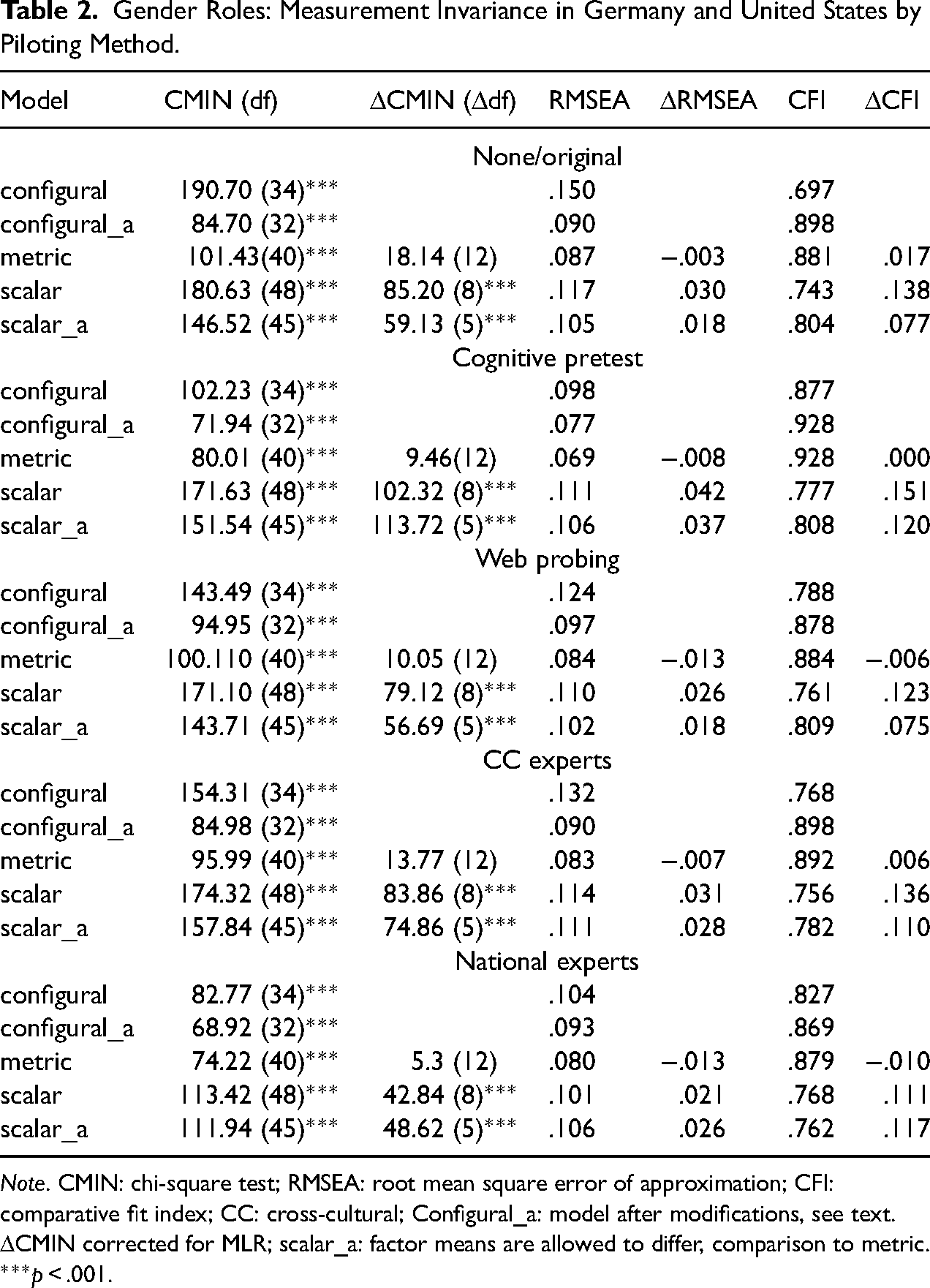

Table 2. Gender Roles: Measurement Invariance in Germany and United States by Piloting Method.

Note. CMIN: chi-square test; RMSEA: root mean square error of approximation; CFI: comparative fit index; CC: cross-cultural; Configural_a: model after modifications, see text. ΔCMIN corrected for MLR; scalar_a: factor means are allowed to differ, comparison to metric. ***p < .001.

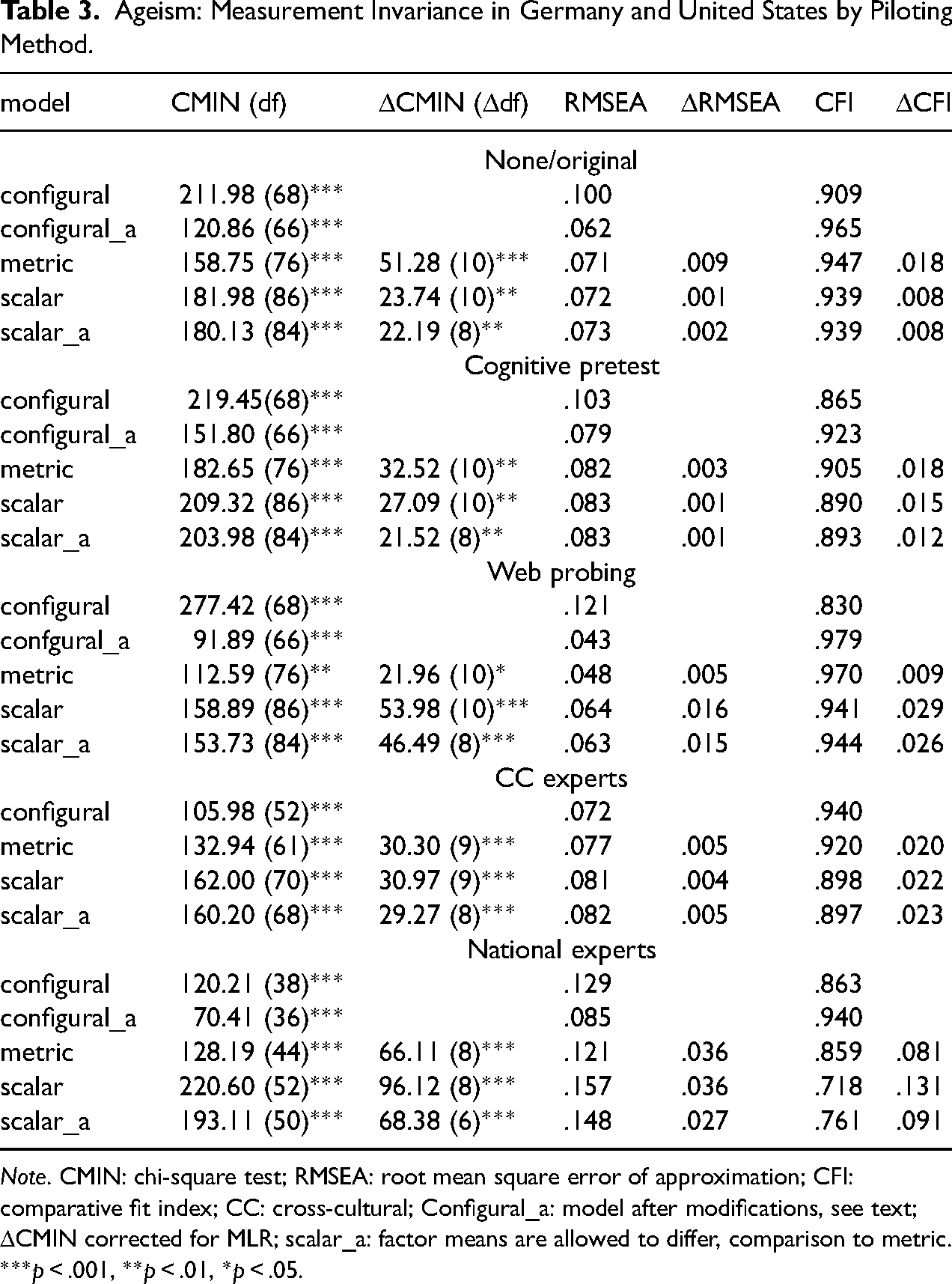

Table 3. Ageism: Measurement Invariance in Germany and United States by Piloting Method.

Note. CMIN: chi-square test; RMSEA: root mean square error of approximation; CFI: comparative fit index; CC: cross-cultural; Configural_a: model after modifications, see text; ΔCMIN corrected for MLR; scalar_a: factor means are allowed to differ, comparison to metric. ***p < .001, **p < .01, *p < .05.

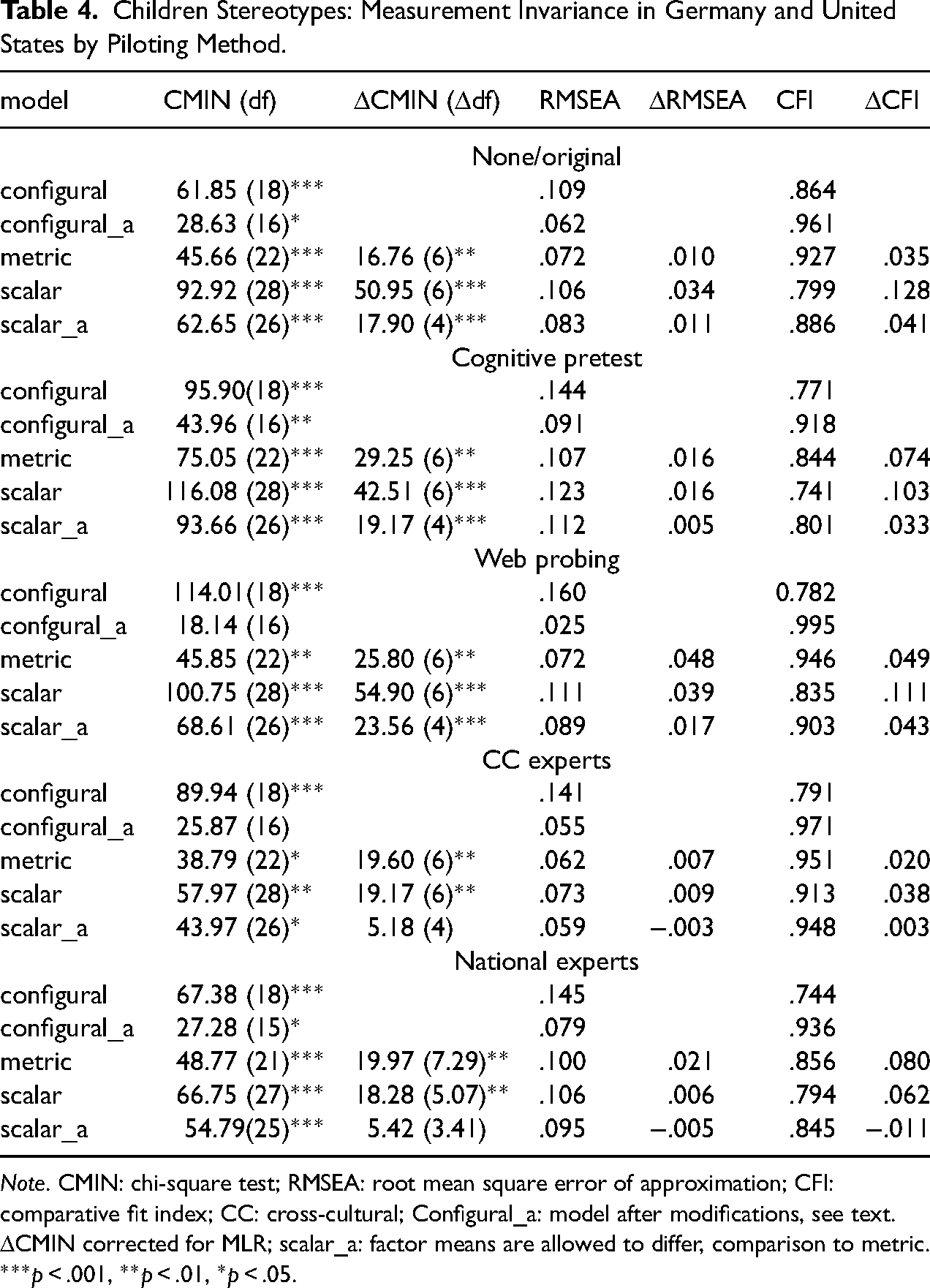

Table 4. Children Stereotypes: Measurement Invariance in Germany and United States by Piloting Method.

Note. CMIN: chi-square test; RMSEA: root mean square error of approximation; CFI: comparative fit index; CC: cross-cultural; Configural_a: model after modifications, see text. ΔCMIN corrected for MLR; scalar_a: factor means are allowed to differ, comparison to metric. ***p < .001, **p < .01, *p < .05.

For our own reliability analyses, we used factor analysis-based estimation of latent composite reliability (CR; Raykov and Marcoulides 2011: 161), which was compared between two languages (as two groups) for each method separately by means of the MGCFA (Menold and Raykov 2016). CR is based on the so-called congeneric measurement model and does not presume equal factor loadings or uncorrelated error term variances. It is also possible to consider correlated error terms as a part of the error variance. We used the estimation of CR (ρ) for the general structure (Raykov 2012) to obtain one score for each group while considering multifactorial structure, for example, for two factors as shown in Equation 2.
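
Equation 2 is not reproduced in this text. As a minimal sketch under stated assumptions, composite reliability of the unit-weighted sum score can be written as the ratio of true-score variance to total variance, ρ = 1'ΛΦΛ'1 / (1'ΛΦΛ'1 + 1'Θ1), where Λ holds the loadings, Φ the factor covariances, and Θ the error covariances (including any correlated errors). This is one common formulation and may differ in detail from the authors' equation; the function name and the numbers in the example are hypothetical.

```python
def composite_reliability(loadings, factor_cov, error_cov):
    """Composite reliability of a unit-weighted sum score.

    loadings   : list of rows, one per item, one column per factor (Lambda)
    factor_cov : square list of lists, factor covariance matrix (Phi)
    error_cov  : square list of lists, error covariance matrix (Theta);
                 off-diagonal entries carry correlated error terms
    """
    n_factors = len(factor_cov)
    # Column sums of Lambda: total loading of the sum score on each factor.
    col_sums = [sum(row[k] for row in loadings) for k in range(n_factors)]
    # True-score variance of the sum score: 1' Lambda Phi Lambda' 1.
    true_var = sum(
        col_sums[k] * factor_cov[k][m] * col_sums[m]
        for k in range(n_factors)
        for m in range(n_factors)
    )
    # Error variance of the sum score: sum of all entries of Theta.
    error_var = sum(sum(row) for row in error_cov)
    return true_var / (true_var + error_var)


# Hypothetical two-factor example: two items per factor, no cross-loadings.
loadings = [[0.7, 0.0], [0.7, 0.0], [0.0, 0.7], [0.0, 0.7]]
factor_cov = [[1.0, 0.5], [0.5, 1.0]]
error_cov = [
    [0.51, 0.0, 0.0, 0.0],
    [0.0, 0.51, 0.0, 0.0],
    [0.0, 0.0, 0.51, 0.0],
    [0.0, 0.0, 0.0, 0.51],
]
rho = composite_reliability(loadings, factor_cov, error_cov)
print(round(rho, 3))
```

Because the loadings enter item by item, the calculation does not require equal loadings, which is the property of the congeneric model noted above.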

Measurement Instruments Piloted

Table 1 provides an overview of the instruments in U.S. English and their underlying factor structure (see Online Supplement A for the German wording).

The first inventory was the gender role attitudes scale with eight items as employed in the European Values Study (EVS; GESIS 2011). The items make up the factors of “caring responsibilities,” “gender ideology,” and “economic role of women” (Lomazzi 2017). Lomazzi (2017) reported that the Cronbach's alpha for the scale was sufficiently high (.78); however, values were very low in some countries. Constantin and Voicu (2015) did not support the scalar invariance of the International Social Survey Program (ISSP) instrument that partly used the same indicators as EVS. Our own analyses with the EVS 2008 data for Germany and Great Britain did not support configural MI (goodness of fit [GOF]: CMIN = 1073.79, df = 34, p < .001; CFI = 0.76; RMSEA = 0.13).

The second instrument was the Succession, Identity, and Consumption ageism inventory by North and Fiske (2013a). It was developed and tested in the United States but has not yet been translated or used in the German language. The U.S. instrument provided high reliability (Cronbach's α ∼ .90 for different factors). We excluded the Identity factor and omitted items with low loadings and redundancy (North and Fiske 2013a, 2013b), resulting in a ten-item scale with five items on each of the two remaining factors (Table 1). The inventory was translated into German by a bilingual expert in questionnaire design.

The six-item inventory on attitudes toward children from the ISSP 2012 (GESIS 2016) was chosen as a third measurement instrument. The factor “costs” relies on the economic theory of fertility (Becker 1960) and describes the possible costs of having children. The factor “benefits” is related to the Value-of-Children approach (Hoffman 1972), which describes children as beneficial to parents. Our own analyses of the two-factor model with the ISSP 2012 data from Germany and the United States were associated with estimation problems and rejected configural invariance (CMIN = 119.06, df = 16, p < .001; CFI = 0.891; RMSEA = 0.069). As the items of the two factors are also keyed differently, it is plausible to assume the existence of an artificial method factor associated with either the acquiescence or the keying of the items (Schriesheim et al. 1991; Swain et al. 2008). We therefore modeled an attitude factor with the acquiescent response style (AS model) or the keying effect (KE model) as additional factors. The specification is illustrated in Online Supplement F. Both models showed acceptable model fit and elimination of estimation problems (e.g., for the AS model: CMIN = 81.88, df = 16, p < .001; CFI = 0.93; RMSEA = 0.055). Metric and scalar invariance could not be supported in either model (e.g., results for the AS model metric: ΔCMIN = 19.69, Δdf = 6; p < .01; ΔCFI = .014; scalar: ΔCMIN = 115.28, Δdf = 5; p < .001; ΔCFI = 0.10; ΔRMSEA = 0.017).

Piloting Studies

The instruments were piloted with two different expert reviews, a cognitive pretest and a web probing between July and September 2018. For all methods, a standard procedure was implemented (see Online Supplement C for details). Participants for the cognitive pretests and web probing were recruited to differ by gender, age, education, and German vs. United States residential status or citizenship. Of the cognitive pretest participants, 13 were German and eight were U.S. citizens. The web survey for online probing used a commercial online access panel. A total of 333 respondents (Germany: n = 167; United States: n = 166) participated. The expert review consisted of two steps: (a) the instruments were analyzed separately in German and U.S. English by two respective questionnaire design experts (national experts); (b) a team of two CC experts used the suggestions of the national experts with the task of maximizing comparability. The staff was different for each method but matched in terms of expertise and experience.

Revisions were made to all parts of the questionnaire, including the question stem, items, and response alternatives (Online Supplement B and D). A general strategy in all piloting methods (except national experts) was to unify wording either within an instrument or for all three instruments. All groups removed double-barreled stimuli in the item “career chances” of the children stereotypes, sometimes using different methods. The cognitive pretesting team skipped one stimulus, but the web probing team and experts preferred to split the barrels into two items, leaving the selection of the appropriate item to the subsequent MI analysis.

Unique to the web probing was the specification of the question context by adding aspects or examples. For the ageism inventory, the web probing team replaced the item “resources” with two items to avoid using an unclear term. Cognitive pretests and the German national expert consistently avoided negations. In the ageism scale, the CC experts deleted the item “seats transport,” and their version contained nine items. The national expert for German deleted this item and the item “vote,” which resulted in eight items. The questionnaire design expert in German implemented the highest number of revisions and deleted items more extensively than the CC experts.

A large discrepancy between the teams was observed in the revisions of the rating scales. The rating scales of the three instruments were unified by the cognitive pretesting team, the CC experts, and the English national expert, while the web probing team and the German national expert decided to use five- or seven-category rating scales instead of the original four or six categories, thus staying closer to the original versions. There was disagreement on the use of the middle category, which was not used in any of the experts’ versions. In addition, the German expert rejected the use of the “Do Not Know” (DK) category, while other teams consistently used it. Due to disagreement between the two national experts, the English version used a DK category and an agreement dimension, while the German expert did not use the DK category and preferred the “applies” versus “does not apply” dimension. The web probing team addressed the different rating scale polarity in German and English and implemented rating scales consistently as bipolar in both languages.

Survey Experiment to Evaluate Piloting Methods

We conducted an online survey experiment in Germany and the United States. Respondents in each country were randomly assigned to one of the following five versions: (a) original questionnaire, (b) revision after cognitive pretests, (c) revision after web probing, (d) revision after national expert review, and (e) revision after CC expert review. Quota specifications were the same as in the web probing study, with randomizations considered for an equal quota filling for each of the versions in each country. The experiment took place in March 2019. The same online access panel was used as for the web probing study, but respondents who had participated in the previous web probing study were excluded from participation in the experimental study. A total of 1,977 individuals (Germany: n = 994; United States: n = 983) participated in the survey. The sample composition is shown in Online Supplement E. It did not differ significantly between Germany and the United States or between the experimental groups with respect to gender, age, and education (tested with CMIN, p > .10).
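
The assignment procedure is not described in algorithmic detail here. As a hedged illustration only, one common way to keep the five versions equally filled within a country or quota cell is block randomization, in which each block of five consecutive respondents receives the five versions in random order. The function name, version labels, and seed below are hypothetical and do not represent the panel provider's implementation.

```python
import random

# Hypothetical labels for the five questionnaire versions.
VERSIONS = [
    "original",
    "cognitive_pretest",
    "web_probing",
    "national_expert",
    "cc_expert",
]


def assign_versions(n_respondents: int, rng: random.Random) -> list:
    """Block-randomize respondents of one country (or quota cell).

    Each block contains every version exactly once in shuffled order,
    so version counts never differ by more than one.
    """
    assignments = []
    while len(assignments) < n_respondents:
        block = VERSIONS[:]   # copy so the constant stays untouched
        rng.shuffle(block)    # random order within the block
        assignments.extend(block)
    return assignments[:n_respondents]


# Sample sizes per country as reported in the text; the seed is arbitrary.
rng = random.Random(2019)
germany = assign_versions(994, rng)
united_states = assign_versions(983, rng)
for version in VERSIONS:
    print(version, germany.count(version), united_states.count(version))
```

Running the function once per quota cell rather than once per country would additionally balance the versions within each combination of quota characteristics.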

Results

MI

Global model fit and model differences are shown in Tables 2–4, and the local fit and local differences are shown in Online Supplement G.

Gender Roles

The configural model for the original version was of poor local and global fit (Table 2). Factor loadings were all significant in Germany, but in the United States, none of the loadings on the “gender ideology” factor were significant, with one of these items, “home and children,” exhibiting negative residual variance. The model fit was significantly improved by introducing a cross-loading (via the correlated error term) between the items “working mother” and “child suffer” (configural_a model, Table 2; Online Supplement F). This confirms the findings of Constantin and Voicu (2015), who also introduced this term. Although the global and local fit of the modified model was still poor, we kept this model in order to compare the results with other versions. Introducing equality of factor loadings significantly decreased CFI, although there was no significant decrease in other fit indices. We conclude that metric invariance was only slightly violated. Scalar invariance was strongly violated, as constraining the intercepts to be equal noticeably and significantly decreased model fit.

The configural model was not sufficient for all piloting methods either. As in the original version, we could improve the model fit by introducing the same cross-loading in all piloting groups except the national expert version, in which there was cross-loading between the items “working mother” and “housewife fulfilling” (Table 1). An acceptable model fit was achieved in the case of the cognitive pretests, and the loadings of the second factor were significant in all versions except for web probing, where the loading of one item “housewife fulfilling” was only significant at the 10% level. Therefore, all methods improved configural invariance, with the cognitive pretests performing best. Metric invariance was given for cognitive pretests and CC experts due to the nonsignificant change in all GOF statistics, implying a positive and similar effect of these methods. Scalar invariance was not improved by any of the piloting methods.

Ageism

In the original version, the configural model provided insufficient model fit (Table 3). The introduction of the correlated error terms between the “healthcare” and “burden” items significantly improved the GOF of the configural model (configural_a, Table 3). All loadings were significant and standardized loadings ranged from .40 to .85. The metric model was associated with no significant change in RMSEA, but with a significant increase of CMIN and a significant decrease of CFI; metric invariance was therefore slightly violated. Restricting the intercepts to be equal across countries was associated with a significant change in CMIN and CFI, so there was also a small violation of scalar invariance.

The version obtained by the CC experts exhibited configural MI, whereas configural MI did not improve after the other piloting methods (Table 3). The version after cognitive pretests suffered from a nonsignificant factor loading of one item (“seats transport”) in Germany and of two items (“resources” and “seats transport”) in the United States (Online Supplement G). Configural invariance was therefore positively affected by CC experts but negatively affected by cognitive pretests. To improve the fit of the configural model, we proceeded as in the original version and introduced a correlated error term in one version when implementing the highest modification indexes (see Online Supplement G for introduced terms). Metric invariance was improved in the case of web probing. Scalar invariance could not be improved by piloting. After the revisions by national experts, the violation of scalar invariance was stronger than in the original version and the other groups.

Children Stereotypes

The two-factor model for children stereotypes did not converge in any of the groups. We implemented the AS model (Online Supplement F) in all groups except the national experts’ group, where the KE model (Online Supplement F) was implemented due to the convergence problems of the AS model. The resulting model fit was acceptable for all versions except the cognitive pretest version (Table 4). Local fit was poor due to very low and nonsignificant loadings of the benefit factor in all versions with exception of web probing, where it was improved. Cognitive pretests therefore had a negative effect on configural invariance, while web probing had a positive effect.

Metric invariance was violated in all versions, and scalar invariance was rejected in the original and both pretest groups. With the modeled mean differences of the attitude factor (scalar_a in Table 4), scalar invariance held in both expert groups and was therefore improved.

Reliability

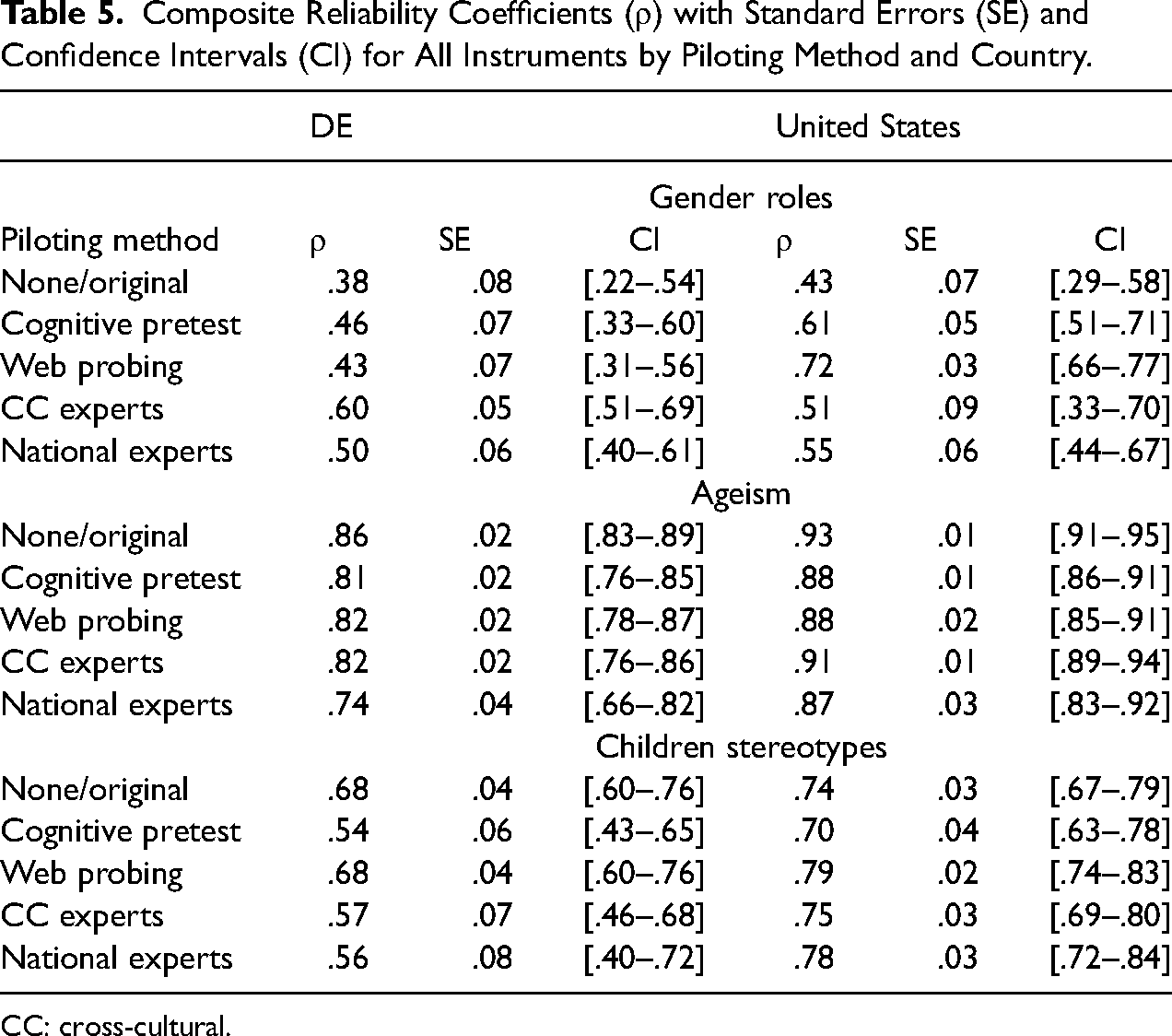

CR coefficients are provided in Table 5. For the original version of gender roles, CR was insufficient, at .38 in Germany and .43 in the United States. Every revision increased reliability in both countries. Significant increases (indicated by small or no overlap of the 95% confidence intervals) were found for the CC experts version for Germany and for the cognitive pretests and web probing versions for the United States.

Table 5. Composite Reliability Coefficients (ρ) with Standard Errors (SE) and Confidence Intervals (CI) for All Instruments by Piloting Method and Country.

CC: cross-cultural.
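
The comparison of confidence intervals used to judge differences in CR can be sketched as follows. This is an illustration under assumptions: the intervals are simple Wald intervals (ρ ± 1.96 × SE), whereas the authors' intervals may have been obtained differently, and the standard errors and the revised coefficient in the example are hypothetical placeholders, not values from Table 5.

```python
def wald_ci(estimate: float, se: float, z: float = 1.96) -> tuple:
    """Approximate 95% confidence interval: estimate +/- z * SE."""
    return (estimate - z * se, estimate + z * se)


def intervals_overlap(a: tuple, b: tuple) -> bool:
    """True if the closed intervals a and b share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# .38 is the CR reported for the original German gender role version;
# the SE of 0.05 and the revised values (0.70, SE 0.04) are hypothetical.
original = wald_ci(0.38, 0.05)
revised = wald_ci(0.70, 0.04)
print(intervals_overlap(original, revised))
```

Non-overlapping intervals are a conservative indication of a difference; intervals that overlap slightly can still correspond to a significant difference, which is why the text speaks of "small or no overlap".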

For ageism, the original version exhibited high CR of .86 in Germany and .93 in the United States. After cognitive pretests, web probing, and in the CC expert group, the reliability of the German instrument decreased slightly, but nonsignificantly (the confidence intervals overlapped). Significantly lower CR than in the original inventory was obtained for the revision by national experts in Germany. In the United States, all revisions led to a slight but significant decrease in CR, except the revision by the CC experts.

In the case of children stereotypes, the original version obtained low but acceptable reliability in both countries. For Germany, web probing did not change reliability, but it was greatly reduced and no longer acceptable in cognitive pretests and both expert revisions. For the United States, web probing and the national expert review increased reliability.

To conclude, all piloting methods improved the poor reliability for gender roles. An increase for the U.S. version of children stereotypes was obtained for web probing and national experts. In the case of high or acceptable reliability (ageism and children stereotypes in Germany), web probing did not lead to a decrease in reliability, whereas other methods did. When comparing the two pretesting methods, web probing outperformed cognitive pretests.

Discussion

With respect to the research question on whether piloting methods allow for improvements in CC comparability, we found that web probing and CC expert reviews showed positive and no negative effects on the otherwise sufficient results for the original versions. In addition to the positive effect on configural invariance for gender roles, web probing positively affected configural invariance for children stereotypes and metric invariance for ageism. CC expert review improved metric invariance for gender roles, configural invariance for ageism, and scalar invariance for children stereotypes. Cognitive pretests and national expert reviews had not only positive but also strong negative effects on MI. Cognitive pretests helped to improve configural and metric invariance in the case of gender roles but were less helpful for other inventories, negatively affecting configural invariance. National experts had a positive effect on scalar MI for children stereotypes. However, there was a strong negative impact on metric and scalar invariance for ageism.

With respect to reliability, the reliability of the gender roles instrument increased after each piloting method, comparable to the improvement in configural MI. Web probing consistently increased reliability where it was insufficient and did not negatively affect acceptable or high reliability. In the process, web probing outperformed cognitive pretests in increasing reliability. Other piloting methods tended to worsen reliability when it was high.

Taking all effects together, web probing and CC expert reviews had consistently positive effects on MI, with web probing having the best effect on reliability. Other methods appear to be insufficient if both CC comparability and reliability are to be maximized.

In light of the positive effects observed for web probing and cognitive pretests, it can be concluded that piloting methods based on qualitative data have the potential to improve reliability and CC comparability. The impact of the cognitive pretests and web probing on comparability may be limited as compared to the CC expert review due to the need to select a limited number of items for probing. It would be advantageous to consider the measurement quality and MI of the original instrument for the selection of indicators for piloting.

The similar effect of all piloting methods on the gender role instrument can be explained by the high level of agreement on the changes made to the items. For the other two inventories, the results of piloting differed and the effects were therefore also different. In the case of the ageism scale, the versions revised by national experts performed poorly compared to the other methods, while in the case of gender and children stereotypes, national expert reviews were able to provide improvements similar to those of the CC experts. This is surprising, as the national experts’ versions differed in many instances, even employing different kinds of rating scales. However, a strong negative impact on MI and reliability would be explained by the many differences between the national experts’ versions. The primary objective of the revisions implemented by national experts was to optimize instruments in the respective language. Our findings suggest that CC surveys must strike a careful balance between the importance of optimal questionnaire design in one language, on the one hand, and survey instruments that are comparable across languages, on the other. As the CC experts’ revisions are also based on the input of the national experts, we expect expert reviews involving both national experts and CC experts to be optimal, in line with the complementary methods hypothesis (Maitland and Presser 2016, 2018).

To evaluate different piloting methods, we implemented each method once and compared the results. Although we involved two to eight different individuals with matched expertise in the implementation of different methods, we cannot exclude the possibility that individual researchers may have impacted the results. Nevertheless, the results regarding the effect of expert reviews and web probing on reliability are comparable to those of previous studies (Maitland and Presser 2016, 2018; Yan et al. 2012).

It should also be noted that we conducted a summative evaluation (Scriven 1967), which shows the overall effects of a method on CC comparability and reliability. Our study should be followed by a formative evaluation to evaluate the effect of different components of a method. Due to the difficulty of achieving configural invariance, some results need to be validated with the data that were invariant at the upper level.

Due to the use of a commercial nonprobability online access panel, results may differ from probability sample studies. However, particularly for piloting of questionnaires, such preliminary studies with nonprobability samples are helpful in evaluating measurement quality and the comparability of measurement instruments at reduced costs.

As a by-product, our study provides an improved measurement instrument for gender roles that has higher factorial validity, reliability, and MI than the original German and English EVS versions. The ageism instrument, piloted in our study, can also be used due to its sufficient MI and reliability. The ISSP instrument on children stereotypes provided a very poor factorial structure, which could be sufficiently improved after web probing, making this version preferable.

The findings suggest that it is worthwhile to use piloting methods if the aim is to improve CC comparability and reliability and that combining methods such as CC expert review and web probing would produce the best results with respect to both CC comparability and reliability. However, testing this assumption is the task of further research.

Supplemental Material

sj-docx-1-smr-10.1177_00491241241307600 - Supplemental material for Improving Cross-Cultural Comparability of Measures on Gender and Age Stereotypes by Means of Piloting Methods

Supplemental material, sj-docx-1-smr-10.1177_00491241241307600 for Improving Cross-Cultural Comparability of Measures on Gender and Age Stereotypes by Means of Piloting Methods by Natalja Menold, Patricia Hadler and Cornelia Neuert in Sociological Methods & Research

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (Grant FOR 2928/GZ: ME 3538/10-1).

Data Availability Statement

The survey experiment data and software code are available from Menold, Hadler, and Neuert (2024). All remaining data are available from the GESIS pretesting laboratory on request: pretesting@gesis.org.

Supplemental Material

Supplemental material for this article is available online.
