Abstract

Probes are follow-ups to survey questions used to gain insights on respondents’ understanding of and responses to these questions. They are usually administered as open-ended questions, primarily in the context of questionnaire pretesting. Due to the decreased cost of data collection for open-ended questions in web surveys, researchers have argued for embedding more open-ended probes in large-scale web surveys. However, there are concerns that this may cause reactivity and impact survey data. The study presents a randomized experiment in which identical survey questions were run with and without open-ended probes. Embedding open-ended probes resulted in higher levels of survey break off, as well as increased backtracking and answer changes to previous questions. In most cases, there was no impact of open-ended probes on the cognitive processing of and response to survey questions. Implications for embedding open-ended probes into web surveys are discussed.

Introduction

In recent years, open-ended questions have experienced a renaissance (Neuert et al. 2021), particularly in the context of web surveys (Smyth et al. 2009), as the cost and effort of data collection (Gavras and Höhne 2020; Revilla and Couper 2019) and coding of responses (Schonlau and Couper 2016) have decreased considerably owing to technological developments. Open-ended narrative questions are considered the “classic open-ended question […], in which respondents are invited to articulate their response using their own words” (Couper et al. 2011). Probes are a specific type of open-ended narrative question that directly relates to a foregoing closed survey question (Behr et al. 2012b; Schuman 1966) and can be used to assess the validity and even cross-cultural comparability of survey questions (Meitinger 2018). They are frequently used at the stage of question development and cognitive pretesting for the purpose of question evaluation, for instance, to examine whether a term in the survey question is understood in the way intended by the researcher or to gain insights on why respondents chose a response option (Collins 2015; Miller et al. 2014). The implementation of open-ended probes in large-scale surveys is less common, though researchers have repeatedly argued for this to clarify reasons for a response (Schuman 1966), gain insights on reasons for lack of measurement invariance (Meitinger 2017), or even to encourage more truthful answers (Couper 2013; Singer and Couper 2017).

Next to these described benefits, there are concerns that open-ended probes impact surrounding survey questions. Recent studies that embedded open-ended probes in web surveys indicated an increase in survey break offs and item nonresponse (Luebker 2021) as well as slight shifts in response behavior (Couper 2013; Fowler and Willis 2020). If embedding open-ended probes affects the response behavior to web survey questions, the comparability of survey questions asked with and without open-ended probes may be compromised. This would affect settings such as the one proposed by Schuman (1966), in which probes are asked to a random subsample within a survey, or longitudinal analysis of panel data, if probes are implemented in some, but not all waves. Moreover, the validity of insights gained from web probing responses depends on respondents understanding and answering the survey questions in the same way regardless of whether or not they receive probing questions.

The present article sets out to examine the effects of open-ended probes on web survey questions. The background section begins with an overview of previous studies that examined the effect of open-ended probes on closed survey questions and points to current research gaps. Next, the differences between open-ended and closed questions in terms of burden, cognitive processing and response behavior are summarized, and the notion of measurement reactivity in surveys is introduced. From this, the research questions and hypotheses are derived. A between-subject experiment is reported which assessed the effects of embedding open-ended probes on the processing of and response to closed web survey questions. The experiment examined survey break off, backtracking, and answer changes to previous survey questions, as well as response times, nonresponse, and response behavior to successive survey questions. Finally, the benefits and potential adverse effects of embedding open-ended probes into web surveys are discussed.

Background

Previous Research on the Impact of Open-Ended Probes on Survey Response

In the realm of web surveys, three studies have examined the impact of open-ended probes on closed survey questions. Luebker (2021) examined the effect of embedding an open-ended probe on survey break off and item nonresponse to a closed opinion question. He found that a probe displayed on the same survey page as the question it pertained to increased survey break off by 0.6 percentage points and item nonresponse by more than 25 percentage points. When using a paging design (that is, displaying the probe on a separate survey page), there was a stronger impact on survey break off of 1.4 percentage points, but no effect on item nonresponse as compared to inserting no probe. It must be noted that the probe in this experiment more closely resembled an open-ended text field at the end of a closed survey question than a typical open-ended narrative probe and was worded in a strongly nonmandatory manner (“If you like, you can add some bullet points to your response.”).

Couper (2013) reported the results of two experiments that inserted open-ended probes into a ten-item scale on attitudes towards immigrants in a probability panel in the Netherlands. In the first experiment, respondents were presented with one mandatory open-ended probe after each item using a paging design. In the second experiment, respondents were presented with an optional open-ended probe on the same screen as the respective closed survey item. In both experiments, there was a small but significant difference in the overall means of the item battery between the experimental condition with probes and the control group, with respondents reporting lower levels of prejudice in the condition with probes. In the experiment using the paging design, this effect occurred as of the second-shown survey item.

Finally, a study by Fowler and Willis (2020) compared responses to survey questions depending on probe placement. Respondents answered nine closed items on perceptions of neighborhood walkability, such as the presence of sidewalks, trails, or paths. In one condition, they received four open-ended probes on the survey page directly after the item battery. In the other condition, respondents were presented with the probes retrospectively at the end of the survey, that is, with several unrelated survey questions in between. Results showed a small but significant effect of probe placement on the mean walkability score, with respondents who received the probes directly after the survey questions reporting slightly enhanced perceptions of walkability. It must be noted that the study was not a strictly randomized experiment, as the condition that included probes directly after the survey items was fielded three weeks before the condition with retrospective probes, and the sample was not representative of the U.S. population in terms of demographics.

In sum, previous studies lend support for the notion that open-ended probes impact whether a respondent continues the survey, answers survey questions, and how they answer them. However, the studies also raise many questions. Regarding survey break off and item nonresponse, the effect sizes found by Luebker (2021) merit further examination. The study only examined one survey question, and the probe was rather atypical. Effects may vary across both probe and question types. Regarding response behavior to the survey questions, Couper (2013) found that using a paging design influenced response behavior to subsequent items (i.e., as of the second-shown item), whereas Fowler and Willis (2020) found an effect on their item battery despite presenting their probes on a separate page after the survey questions. A possible explanation for this effect could be that respondents in Fowler and Willis’ study backtracked to the previous survey page and changed their answers; however, the study provides no details on this. Most importantly, the reasons for the shift in the overall means found in both studies remain unclear. Couper (2013) had assumed that open-ended text fields in which respondents could justify their responses would reduce the threat of sensitive questions and lead to an increase in socially undesirable answers; however, the shift in response behavior indicated the opposite effect. Moreover, in both studies, the effects on the overall means were rather small. Possibly, effects on response behavior can be better examined using other measures, such as indicators of response quality or response styles. However, there is currently no framework to predict such effects.

Cognitive Processing of Open-Ended and Closed Questions

The following section draws on literature on open-ended probes in the context of cognitive interviewing and web probing, as well as open-ended narrative questions in general. Other types of open-ended (such as numeric) questions or probes with closed response options, also known as targeted embedded probes (Scanlon 2019, 2020), are not considered.

The process of survey response optimally consists of several cognitive steps (Tourangeau, Rips, and Rasinski 2000). Respondents must interpret the pragmatic meaning of a survey question. They embark on an information retrieval process, which is truncated when respondents have gathered enough information to form a judgment of sufficient certainty. The relevance of the accessible information is assessed, and an internal judgment is formed. This is then adjusted to the response format of the survey question.

For closed questions, the available response options may contribute to construing the meaning of a question (Schwarz et al. 1988) and impact the perceived relevance of the retrieved information. For open-ended questions, neither question interpretation nor the assessment of which accessible information is relevant to form a judgment is guided—and potentially limited—by predefined response options. However, open response formats also bear the risk that respondents deem aspects irrelevant if they consider them self-evident, or that information retrieval is truncated before relevant information is retrieved (Tourangeau et al. 2014).

The differences in these processes impact whether and how respondents answer open-ended and closed questions. In general, the respondent tasks associated with open-ended questions are considered more demanding and burdensome (Krosnick 1999; Tourangeau and Rasinski 1988). In line with this, a higher number of open-ended questions in a survey is associated with an increased likelihood of survey break off (Galesic 2006), and inserting multiple open-ended questions on one page has a particularly strong effect (Peytchev 2009). The study by Luebker (2021) confirmed the negative impact on survey break off for open-ended probes, in particular when the probe is presented on a separate survey page. Moreover, open-ended questions result in higher levels of item nonresponse than corresponding closed questions (Reja et al. 2003; Zuell, Menold, and Körber 2015), particularly among lower educated respondents (Andrews 2005; Miller and Lambert 2014; Schmidt, Gummer, and Roßmann 2020; Scholz and Zuell 2012; Zuell and Scholz 2015). These findings have been confirmed for open-ended as compared to closed probes (Neuert, Meitinger, and Behr 2021).

Differences can also be found in response distributions between open-ended and closed survey questions (Reja et al. 2003) and probes (Neuert, Meitinger, and Behr 2021). Responses not included in a closed format are unlikely to be named by respondents, even when an open-ended “other” field is included. On the other hand, any given opinion, theme, or topic is less likely to be volunteered in an open response format than when it must simply be “recognized” in a closed question (Bradburn 1983).

Open-ended probes are more directed than other open-ended questions as they directly pertain to the preceding closed survey question (Foddy 1998; Neuert, Meitinger, and Behr 2021; Silber, Zuell, and Kuehnel 2020). In the context of web probing, three types of probes are mainly employed.

Measurement Reactivity in Surveys

The notion that examining a phenomenon can alter the phenomenon itself is discussed in many areas of research, from physics to behavioral psychology. In survey research, the notion of measurement reactivity was examined in a series of experiments using personality measures. Knowles et al. (1992) argued that thinking about questions has consequences for question construal and that increased reflection on a topic makes a certain interpretation more salient, leading to a polarization of judgment. They postulated that later items within a measure (or items in a repeated measurement) show more extreme, but also more reliable and consistent responses. To examine this, the order of multi-item measures was randomized (Knowles 1988), with later items showing higher reliability and more extreme answers. Importantly, there was generally no visible effect on the mean value of these items. The studies demonstrated that increased reflection about survey questions influences both cognitive processing and response to survey items and that these effects need not (necessarily) be visible in a simple comparison of means.

Whether respondents’ verbalized reflection on survey questions causes reactivity has been subject to debate since the dawn of cognitive testing. The early standard of verbal protocols required respondents to think aloud while answering a survey question (Ericsson and Simon 1980, 1993). This was criticized by researchers as potentially increasing the effort required to create a response (Willis 1994, 2005), especially after an experimental study demonstrated that think-aloud protocols impact task accuracy and response times for some tasks (Russo, Johnson, and Stephens 1989). The debate gave rise to the use of probing questions, which are administered after the respondent has completed the survey question. Beatty and Willis (2007) argued that using probes in cognitive interviews may be less likely to cause reactivity than employing the think-aloud technique. However, other researchers argued that probes may likewise lead to invalid or reactive reports (Conrad, Blair, and Tracy 1999) by interfering with the natural flow of the survey interview (Beatty 2004), and a recent meta-analysis supported the notion that directive probing can impact task accuracy (Fox, Ericsson, and Best 2011). A further study showed some indication of increased respondent motivation through verbal probing, but remained inconclusive (Sudman, Bradburn, and Schwarz 1996).

Research Questions and Hypotheses

This study aims to enhance our understanding of the impact of open-ended probes on survey responses. I differentiate between effects on the survey in terms of survey completion, effects on the questions being probed, and effects on subsequent questions.

Based on the findings from previous studies and literature on open-ended questions and measurement reactivity, I put forward several hypotheses. The strongest possible adverse effect of an open-ended question occurs if a respondent chooses to discontinue the survey. The sum of past research indicates that adding open-ended probes to a web survey results in higher levels of survey break off (Galesic 2006; Luebker 2021; Peytchev 2009).

Embedding open-ended probes may impact the survey questions they relate to, either if probes are presented alongside the survey question on the same page (as in some of the experimental conditions in Couper 2013; Luebker 2021), or if respondents have the possibility to return to previous questions in a paging design (which would explain the effects found by Fowler and Willis 2020). A probe may cause respondents to reconsider their interpretation of a survey question, access other information, or include other information in their judgment. Previous research has indicated that reverse question order effects may arise when respondents have the possibility to return to previous questions (Sudman, Bradburn, and Schwarz 1996), meaning that subsequent questions can influence responses to previous ones (Bishop et al. 1988; Schwarz and Hippler 1995; Schwarz et al. 1991). Therefore, I hypothesize that embedding open-ended probes leads to an increase in backtracking and changing one's answer to previous survey questions:

Next to the effects on the survey questions they relate to, open-ended probes may impact how respondents process and answer subsequent questions. The following hypotheses rest on the assumption that probes cause respondents to reflect on their previous survey responses, and that respondents process survey questions more deeply when they are expecting these questions to be followed by probes. Knowles (1988) demonstrated that increased thinking about questions leads to judgment polarization and more consistent responses.

Regarding cognitive effort, response times are considered “the most important means for investigating hypotheses about mental processing” (Yan and Tourangeau 2008). Findings from think-aloud and verbal probing (Fox, Ericsson, and Best 2011; Russo, Johnson, and Stephens 1989) indicate that response times increase in interviewer-administered settings for some questions. Therefore, I hypothesize that response times to closed survey questions increase when open-ended probes are embedded:

Unfortunately, previous studies did not report on nonresponse (Couper 2013; Fowler and Willis 2020) or only examined one question (Luebker 2021). However, if embedding open-ended probes causes respondents to reflect on survey questions more deeply and this leads to judgment polarization, it can be assumed that nonresponse decreases for subsequent items:

Previous research has found effects of embedding open-ended probes on the mean sum score of multi-item measures (Couper 2013; Fowler and Willis 2020), but the effect size was small and the direction could not be predicted. Knowles et al. (1992) argued that increased thinking about answers impacts response behavior but that this need not necessarily be visible in the form of a mean shift. Rather, increased thinking about questions leads to judgment polarization and more consistent responses. Therefore, I hypothesize that embedding open-ended probes does not (consistently) impact means. Instead, differences become visible in the form of increased extreme responding and nondifferentiation:

Method

An experiment was designed with the aim of comparing closed survey questions that were either accompanied by open-ended probes or not. Respondents received six survey pages with closed attitude questions. A between-subject design was used, in which respondents were randomly assigned to experimental condition (A) which embedded open-ended probes between the survey pages with closed questions, or condition (B) which contained only the closed questions.

Survey and Probing Questions

The closed survey questions presented a mix of single- and multi-item measures using common constructs in social science research, such as political attitudes, personality, and well-being. Measures that have been accompanied by open-ended probes in other studies were chosen when possible. Multi-item measures were presented in a grid format on one survey page. The exact wording of the closed survey questions and the open-ended probes from condition (A) can be found in Online Appendix Table A1.

The first closed survey question was a single-item measure of

In experimental condition (A), each closed question was followed by one open-ended probe. For the multi-item inventories (Q2, Q3, and Q6), respondents were randomly presented with a comprehension or category selection probe. The single-item measures on life and relationship satisfaction (Q4 and Q5) were each followed by a specific probe. The question on left–right orientation (Q1) was followed by two probes on the understanding of the terms “left” and “right” as in previous studies (Zuell and Scholz 2015). To keep the survey setting identical across conditions and to be able to attribute survey break offs to the probing situation, the probes were not announced on the welcome page of the survey. Instead, the first probe was introduced with the words “We would like to receive more information on the previous question.” Probes were presented using a paging design, with the survey question being repeated on the page with the probe. In addition, the selected survey response was repeated for category selection and specific probes.

Web Survey

An online survey was carried out with a nonprobability sample between November 20 and December 2, 2020, with the panel provider Respondi AG. The sample included quotas to depict the German online population in terms of gender (male, female), age (18–29, 30–39, 40–49, 50–59, and 60 or more years), education (low, medium, and high) and region (former East and West Germany). Respondents were randomly assigned to experimental condition (A) or (B). There were no significant differences regarding demographics or devices used between experimental groups (see Online Appendix Table A2 for the sample composition). The web survey included several experiments, which were randomized independently of each other. The reported study was placed towards the beginning of the survey after the screening and quota questions and three short scales. No open-ended questions were implemented before the experiment.

The Universal Client-Side Paradata script by Kaczmirek and Neubarth (2007) was implemented to ensure a more exact measure of response latency (Yan and Tourangeau 2008) and collect questionnaire navigation data (Callegaro, Manfreda, and Vehovar 2015). The script records response behavior sequentially so that the resulting string variables enable coding backtracking to previous survey pages and answer changes to items. In accordance with both legal and ethical research standards (ADM et al. 2021; Kunz et al. 2020), respondents were informed about the collection and use of client-side paradata on the welcome page of the survey.

Of the 9,731 panelists invited to participate in the survey, 2,441 started the survey and of those, 241 broke off before completing it (before, during, or after the reported experiment), resulting in 2,200 completed questionnaires. This leads to a participation rate of 22.6 percent (American Association for Public Opinion Research 2016) and a break off rate of 9.9 percent (Callegaro and DiSogra 2008). Respondents received €1.50 for survey completion. About a quarter (27.1%;

Probe Response Quality and Content

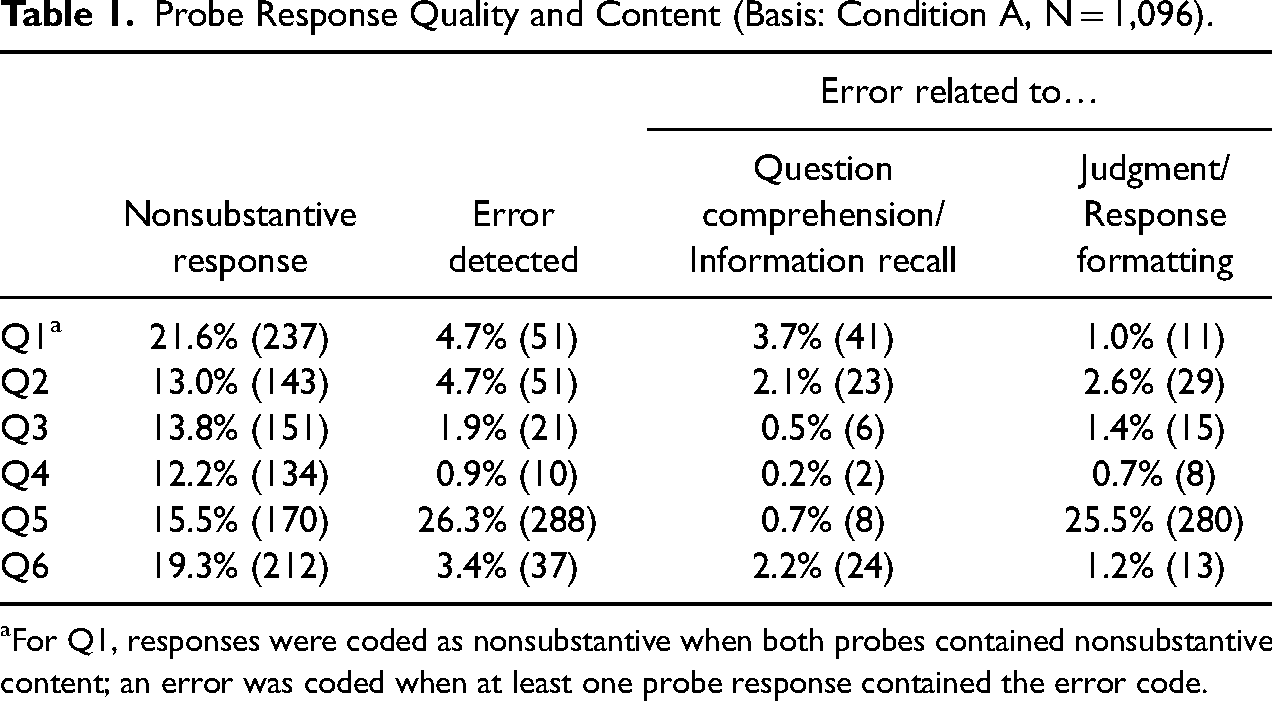

Prior to examining the survey responses, probe response quality and content were analyzed. Low probe response quality may point to poorly designed probing questions. Probe response content was examined to gain insights into the respondents’ cognitive process of survey response and the quality of the survey questions. To ascertain probe response quality, the share of nonsubstantive responses was determined. Responses were coded as nonsubstantive when respondents left the probe empty, entered random characters, typed a “don’t know” answer or an explicit refusal, or provided other nonintelligible or noncodable content (Behr et al. 2012a). Between 12.2 percent and 21.6 percent of respondents in condition (A) gave nonsubstantive responses to the probing questions (see Table 1), which is in line with previous web probing studies (Behr et al. 2017; Meitinger and Behr 2016). To examine probe response content, the substantive probe responses were subjected to cognitive coding (Willis 2015) to determine whether the probe responses hinted at issues in respondents’ cognitive process of survey response. This approach is also known as an error perspective as it gives insights into possible reasons behind measurement errors (Meitinger and Behr 2016). Errors or problems may occur at the stages of question comprehension, information retrieval, judgment, or response formatting (Willis, Schechter, and Whitaker 1999). For all but one question, error codes were detected for under 5 percent of respondents (see Table 1). Reported problems included misinterpreting words central to the question (e.g., the terms “left” or “right” in Q1) or mismatches between survey and probe responses (e.g., a low score of political cynicism in Q2, but probe responses indicating very low trust in politicians). Question Q5 on relationship satisfaction showed an unusually high share of error coding, with 25.5 percent of responses pointing to difficulties with judgment and/or response formatting. Almost all of these respondents reported that they were missing an answer to indicate that they were currently not in a relationship. A complete list of reported problems is available from the author on request.

Probe Response Quality and Content (Basis: Condition A, N = 1,096).

For Q1, responses were coded as nonsubstantive when both probes contained nonsubstantive content; an error was coded when at least one probe response contained the error code.

Dependent Measures and Data Analysis

All dependent measures were compared across the two experimental conditions (1 = condition (A) open-ended probes; 0 = condition (B) without probes).

Survey break off, backtracking, and answer changes

Break offs that occurred during the reported experiment, that is, on the pages containing the closed survey questions (Q1–Q6) or the open-ended probes (P1a/b–P6), were included in the analysis. Backtracking was recorded for respondents when the client-side paradata string recorded more than one page visit. Multiple page visits were coded into a binary variable for each respondent on page level (1 = backtracking to survey page; 0 = no backtracking to survey page) and aggregated across all six closed survey questions (1 = backtracking to at least one survey page; 0 = no backtracking to any survey pages). Likewise, the prevalence of answer changes after backtracking was coded on page level (Heerwegh 2003, 2011) (1 = answer change after backtracking; 0 = no answer change/no backtracking) and aggregated across all six closed survey questions (1 = answer change after backtracking on at least one survey page; 0 = no answer change after backtracking for any closed survey questions).
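The page-level and respondent-level coding described above can be illustrated with a minimal sketch. It is not the processing script used in the study: the paradata representation (an ordered list of page visits, each with the answer recorded when the page was left), the function name, and the example values are all hypothetical.

```python
from collections import defaultdict

def code_backtracking(visits, question_pages):
    """Code backtracking and answer changes for one respondent.

    visits: ordered list of (page_id, answer_on_leaving) tuples (hypothetical format).
    question_pages: ids of the closed-question pages to be coded.
    """
    answers_by_page = defaultdict(list)
    for page, answer in visits:
        if page in question_pages:
            answers_by_page[page].append(answer)

    backtracked, changed = {}, {}
    for page in question_pages:
        answers = answers_by_page.get(page, [])
        # More than one recorded visit means the respondent returned to the page.
        backtracked[page] = int(len(answers) > 1)
        # An answer change is only counted when it follows backtracking.
        changed[page] = int(len(answers) > 1 and len(set(answers)) > 1)

    return {
        "backtracked": backtracked,
        "changed": changed,
        # Aggregation: 1 if the event occurred on at least one question page.
        "any_backtracking": int(any(backtracked.values())),
        "any_change": int(any(changed.values())),
    }

# Hypothetical respondent: answers Q1, sees probe P1, returns to Q1 and changes the answer.
example_visits = [("Q1", 5), ("P1", None), ("Q1", 4), ("P1", None), ("Q2", 3)]
result = code_backtracking(example_visits, question_pages=["Q1", "Q2"])
```

In this sketch, probe pages are ignored when counting visits, so that only returns to closed survey questions are coded as backtracking.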

Chi-square tests of independence were used to examine whether the share of survey break offs, backtracking, and answer changes after backtracking differed between conditions. For backtracking and subsequent answer changes, analyses were additionally carried out on page level, with Bonferroni adjusted alpha levels for multiple comparisons.
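As an illustration of this testing logic, the following sketch computes a Pearson chi-square test for a 2 × 2 table (condition by outcome) and a Bonferroni-adjusted alpha level. The analyses in this study were run in SPSS; the function names and the counts below are hypothetical and serve only to show the calculation. No continuity correction is applied, which is an assumption of the sketch.

```python
import math

def chi_square_2x2(a, b, c, d):
    """Pearson chi-square test of independence for the table [[a, b], [c, d]].

    Returns (statistic, p_value); no continuity correction is applied.
    """
    n = a + b + c + d
    margins = (a + b) * (c + d) * (a + c) * (b + d)
    if margins == 0:
        raise ValueError("every row and column needs at least one observation")
    chi2 = n * (a * d - b * c) ** 2 / margins
    # With one degree of freedom, the upper tail probability is erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value

def bonferroni_alpha(alpha, n_tests):
    """Bonferroni-adjusted significance level for n_tests comparisons."""
    return alpha / n_tests

# Hypothetical counts: rows = conditions, columns = event occurred / did not occur.
chi2, p_value = chi_square_2x2(10, 20, 20, 10)
alpha_page_level = bonferroni_alpha(0.05, 6)  # e.g., six page-level comparisons
significant = p_value < alpha_page_level
```

With these made-up counts, the test would be significant at the conventional 5 percent level but not at the adjusted level, which shows why the adjustment matters for page-level comparisons.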

Response times

Client-side response times were captured for each survey page. Response time data is positively skewed and subject to outliers, which makes decisions about outlier definition, handling, and potential transformation of response times prior to analysis of utmost importance (Kunz and Hadler 2020). A variety of response time outlier definitions exist (Matjašič, Vehovar, and Manfreda 2018). Detecting outliers based on the mean and standard deviation remains a common procedure (Yan and Olson 2013), but has been criticized, as the mean value is in turn influenced by outliers. Researchers have increasingly recommended using median-based outlier definitions (Höhne and Schlosser 2018). Employing 2.5 times the median absolute deviation is considered the most robust outlier threshold (Leys et al. 2013), and was applied in the present study. This method led to between 9 percent and 12 percent of response times being identified as outliers. Response time outliers were omitted from response time analysis, as were all instances in which a survey page was visited more than once (backtracking).
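A median-based outlier rule of this kind can be sketched as follows. The sketch assumes that the median absolute deviation is multiplied by the consistency constant 1.4826, as is common for approximately normal data; whether this constant was used in the present analysis is not stated here, so it is exposed as a parameter. The function name and the example response times are hypothetical.

```python
import statistics

def mad_outlier_flags(times, threshold=2.5, consistency=1.4826):
    """Flag values lying more than `threshold` MADs away from the median.

    consistency: scaling constant for the MAD (assumed; set to 1.0 for the raw MAD).
    Note: if the MAD is zero, every value that differs from the median is flagged.
    """
    med = statistics.median(times)
    mad = consistency * statistics.median([abs(t - med) for t in times])
    return [abs(t - med) > threshold * mad for t in times]

# Hypothetical page-level response times in seconds.
page_times = [4.2, 5.1, 4.8, 5.5, 6.0, 4.9, 60.0]
flags = mad_outlier_flags(page_times)
cleaned = [t for t, is_outlier in zip(page_times, flags) if not is_outlier]
```

Because both the center and the spread are based on medians, the single very long response time in the example does not inflate the threshold, which is the main argument for this rule over one based on the mean and standard deviation.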

To test the third hypothesis, a multivariate analysis of covariance (MANCOVA) was applied to the adjusted response times as of the second survey question (Q2–Q6), with the experimental condition as the main predictor. The model included age, education, and device used (PC/laptop or mobile) as covariates, as it was not possible to include baseline reading speed, which otherwise accounts for much of the variance within response times (Couper and Kreuter 2013; Lenzner, Kaczmirek, and Lenzner 2010; Yan and Tourangeau 2008). Because outlier exclusion as described above leads to a sizeable decrease in available cases for a MANCOVA, separate analyses of covariance (ANCOVAs) were run for each survey page (thus, only excluding outliers page-wise).

The robustness of response time analyses should be tested by applying different outlier detection methods (Revilla and Couper 2018). The alternative approach excluded observations below the 1st and above the 99th percentile from analysis (Yan and Tourangeau 2008). A MANCOVA and separate ANCOVAs were run using the same predictors. All analyses led to the same results.
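The percentile-based robustness check can be sketched in the same way. The interpolation method used to locate the percentiles is an assumption of this sketch (statistical packages differ in their defaults), and the function name and data are hypothetical.

```python
import statistics

def percentile_trim(times, lower_pct=1, upper_pct=99):
    """Keep only values between the lower and upper percentile cut points."""
    # quantiles(..., n=100) returns the 99 cut points for percentiles 1 to 99.
    cuts = statistics.quantiles(times, n=100, method="inclusive")
    low, high = cuts[lower_pct - 1], cuts[upper_pct - 1]
    return [t for t in times if low <= t <= high]

# Hypothetical response times: the integers 1 to 1000.
kept = percentile_trim(list(range(1, 1001)))
```

Unlike the median-based rule, this approach always removes a fixed share of observations at each tail, regardless of how extreme they are.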

Response behavior

Survey response

T-tests were used to test for differences between conditions. For the single-item measures (Q4 and Q5), the scale values coincide with the items’ raw values. For the multi-item measures, scoring was carried out according to the instruments’ documentation. For the two-item measure on political cynicism (Q2), the second item was recoded so that the scale direction was the same across items, with higher values indicating higher cynicism. The score is the sum of both items divided by the number of items (Aichholzer and Kritzinger 2016). For the social desirability responding measure (Q3), the two factors exaggerating positive qualities (PQ+) and minimizing negative qualities (NQ−) were calculated separately using the sum score, again dividing by the number of items (Kemper et al. 2014). For the intergenerational social support inventory (Q6), the fourth item was recoded so that the scale direction remained the same across items, and principal components factor analysis was conducted on the six items with oblique rotation (Direct Oblimin). The Kaiser-Meyer-Olkin measure of sampling adequacy was 0.65, and Bartlett's test of sphericity reached statistical significance (χ2(15) = 1,947.06,

Item nonresponse

Item nonresponse can occur in the form of (1)

Chi-square tests of independence were used to examine whether the frequency of skipping items or choosing nonsubstantive response options differed between conditions. Analyses were additionally carried out on page level (for Q2–Q6), with Bonferroni-adjusted alpha levels for multiple comparisons. For multi-item inventories (Q2, Q3, and Q6), nonresponse was aggregated across all items. The item battery on social desirability responding (Q3) did not include a nonsubstantive response option and is therefore not included in the analysis of nonsubstantive responses.

Nondifferentiation

Nondifferentiation, also referred to as straightlining, was examined for the two multi-item batteries Q3 and Q6. A dichotomous and a metric measure of straightlining were calculated. The dichotomous measure indicated whether a respondent chose the identical response option for all items within one battery. The second measure “mean root of pairs” was chosen to “capture variations in the choice of answers in a battery” (Kim et al. 2019). It is calculated by producing a temporary index by computing the mean of the root of the absolute differences between all pairs of items in a battery and then rescaling the temporary index to range from 0, indicating least straightlining, to 1, indicating most straightlining (Chang and Krosnick 2009). To examine whether nondifferentiation differed between conditions, chi-square tests of independence were used for the absolute and

Extreme responding

Extreme responding was defined as choosing either endpoint of a response scale (apart from non-substantive response options). Chi-square tests of independence were carried out on item level with Bonferroni-adjusted alpha levels for multiple comparisons.
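As an illustration of this procedure, the following Python sketch flags extreme responses and runs a chi-square test of independence on a 2×2 table against a Bonferroni-adjusted alpha level. It is a sketch under assumptions rather than the analysis actually run in SPSS: the function names and the cell counts are hypothetical, nonsubstantive responses are assumed to be coded as `None`, and the Pearson statistic is computed without continuity correction.

```python
from math import erfc, sqrt


def is_extreme(response, scale_min, scale_max):
    """True if the response is either endpoint of the scale.
    Assumption: nonsubstantive responses are coded as None and never count."""
    return response is not None and response in (scale_min, scale_max)


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square test (no continuity correction) for the table
    [[a, b], [c, d]]. Returns the test statistic and the p-value (df = 1)."""
    n = a + b + c + d
    stat = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    p_value = erfc(sqrt(stat / 2))  # upper tail of chi-square with 1 df
    return stat, p_value


ALPHA = 0.05
N_TESTS = 8  # one test per item; the number of items is an assumption here
adjusted_alpha = ALPHA / N_TESTS

# Hypothetical counts, not the study's data:
# rows = condition (with probes, without probes),
# columns = (extreme response, non-extreme response).
stat, p_value = chi_square_2x2(30, 70, 10, 90)
print(f"chi2 = {stat:.2f}, p = {p_value:.5f}, "
      f"significant at adjusted alpha: {p_value < adjusted_alpha}")
```

Dividing the alpha level by the number of tests keeps the familywise error rate at or below the nominal level, at the cost of reduced power for each individual item.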

All analyses were carried out using IBM SPSS Statistics Version 24.0.

Results

Effect on Survey Break Off

The first hypothesis postulated that embedding open-ended probes into a web survey increases survey break off. Seventy-nine respondents dropped out of the survey during the reported experiment. Most of these break offs (82.3%;

More than half of the break offs in condition (A) (

Effect on Preceding Survey Questions

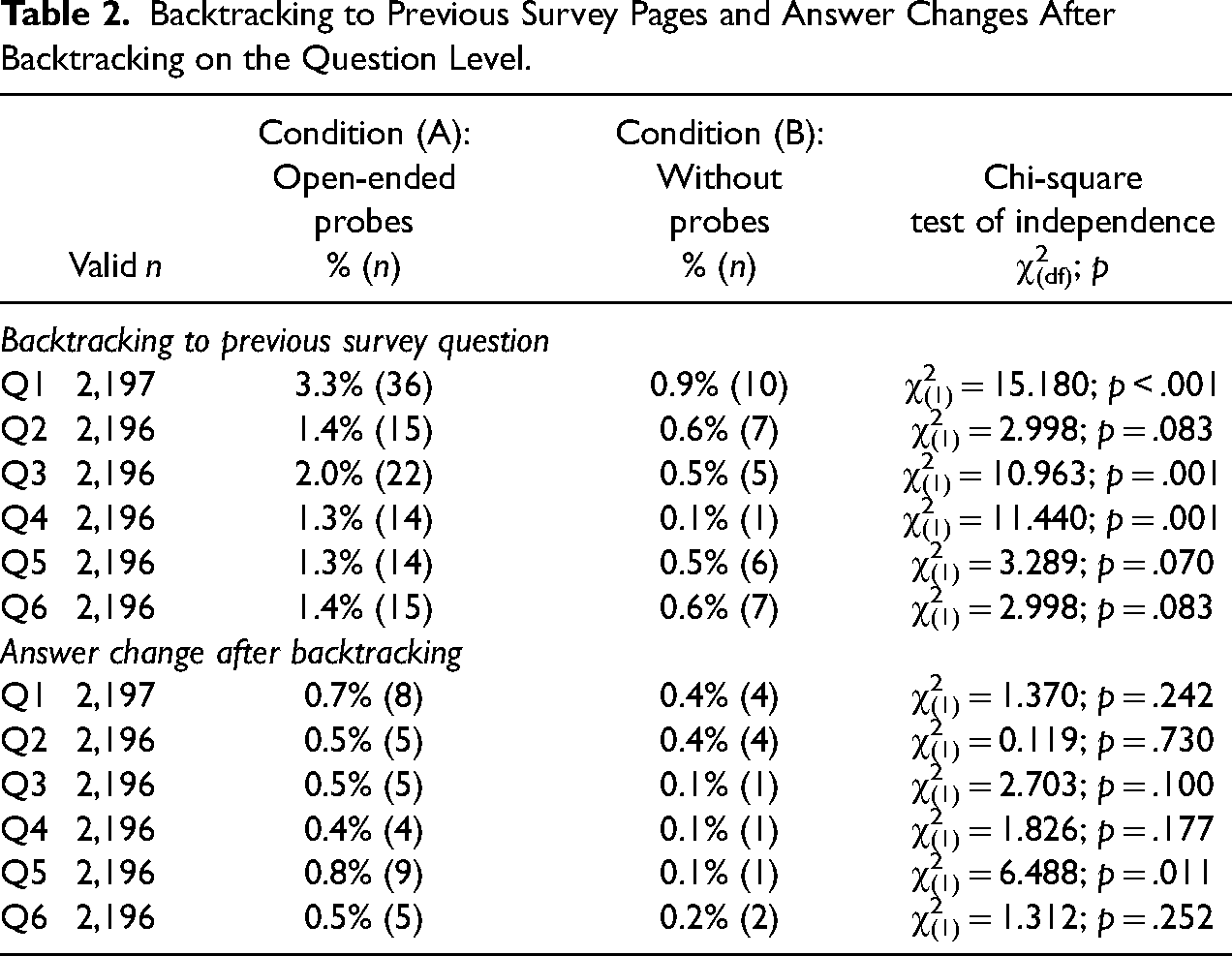

The second hypothesis stated that embedding open-ended probes into a web survey increases backtracking (H2a) and subsequent answer changes (H2b) to previous survey questions. In total, 6.1% (

Table 2. Backtracking to Previous Survey Pages and Answer Changes After Backtracking on the Question Level.

Answer changes after backtracking were carried out by 2.0% of respondents (

Effects on Subsequent Survey Questions

Response times

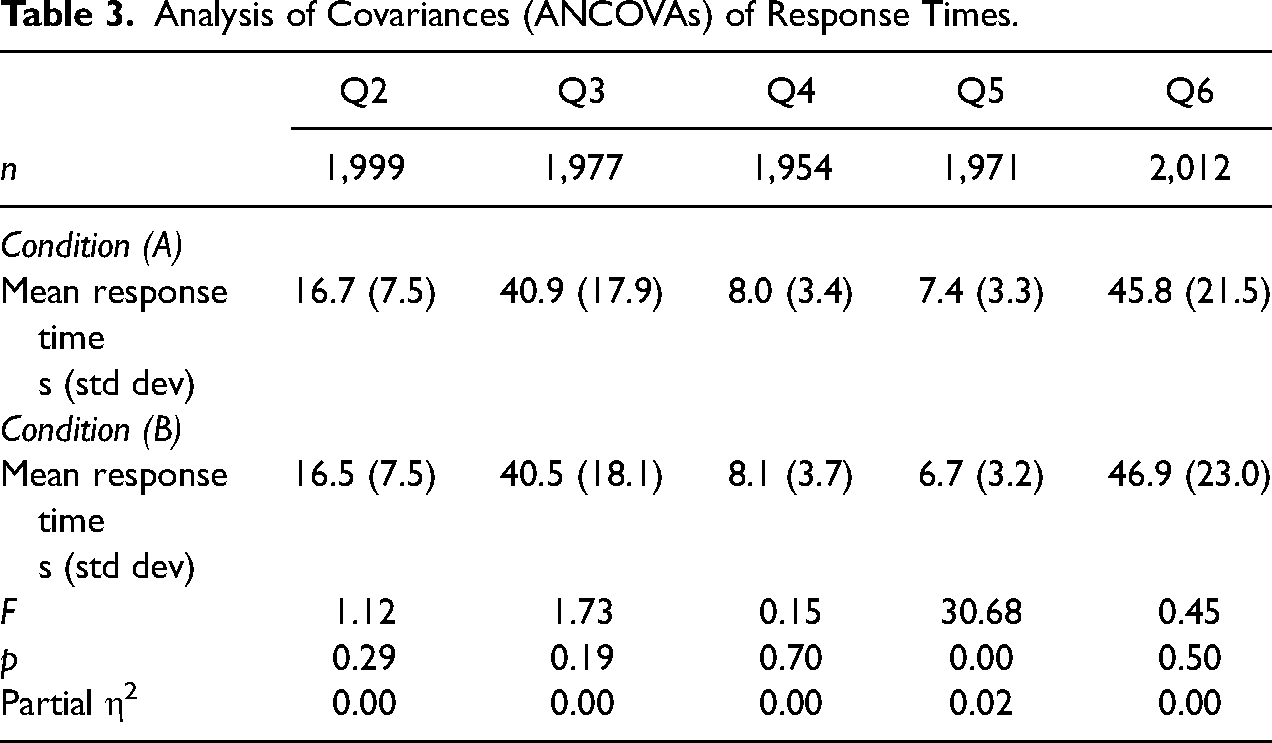

The third hypothesis stated that embedding open-ended probes into a web survey increases cognitive effort and thus response time invested in subsequent questions. To examine this, the response times to Q2–Q6 between conditions were examined using MANCOVA. After outlier exclusion, 1,509 cases remained for analysis (condition A:

Table 3. Analyses of Covariance (ANCOVAs) of Response Times.

Nonresponse

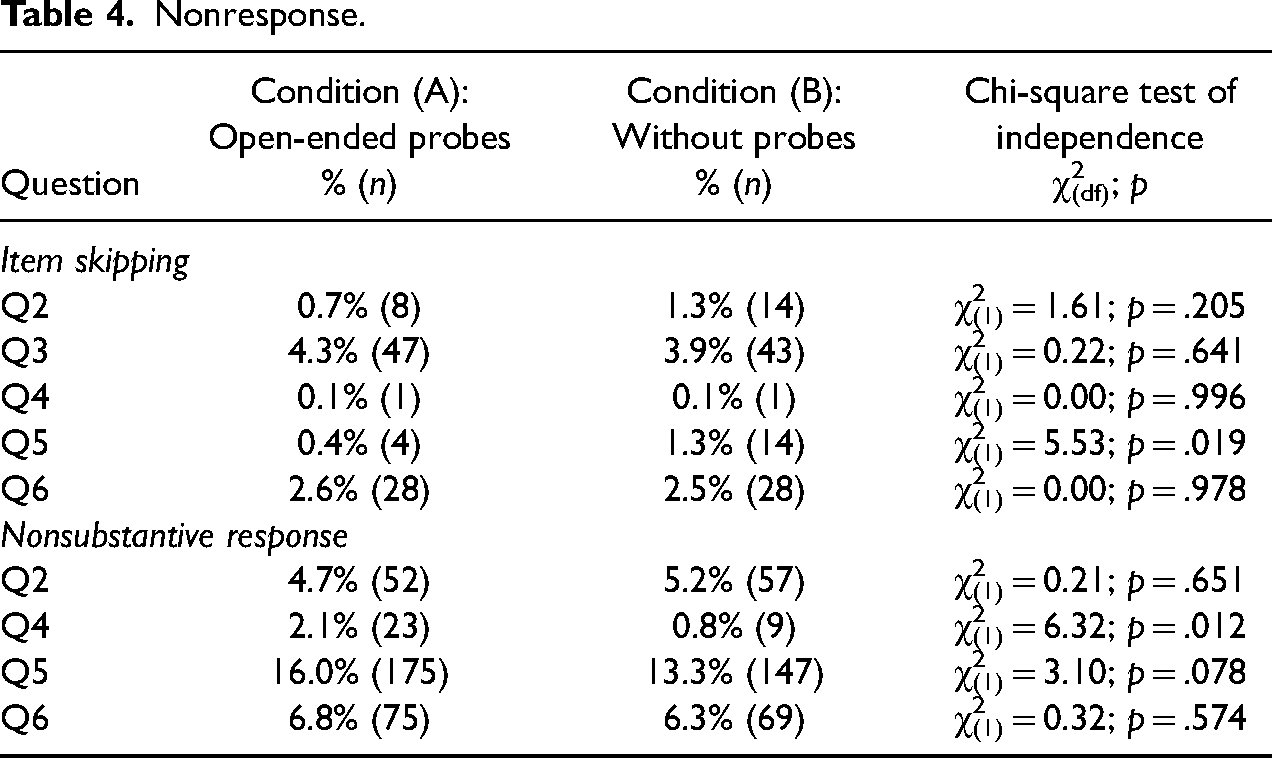

The fourth hypothesis postulated that nonresponse is lower in the condition with open-ended probes. Table 4 shows the occurrence of skipping items and choosing nonsubstantive response options on question level. Across Q2–Q6, 8% (

Table 4. Nonresponse.

Respondents had the option to choose a nonsubstantive answer (“I don’t want to answer”) for all questions except Q3. In total, 22.7% (

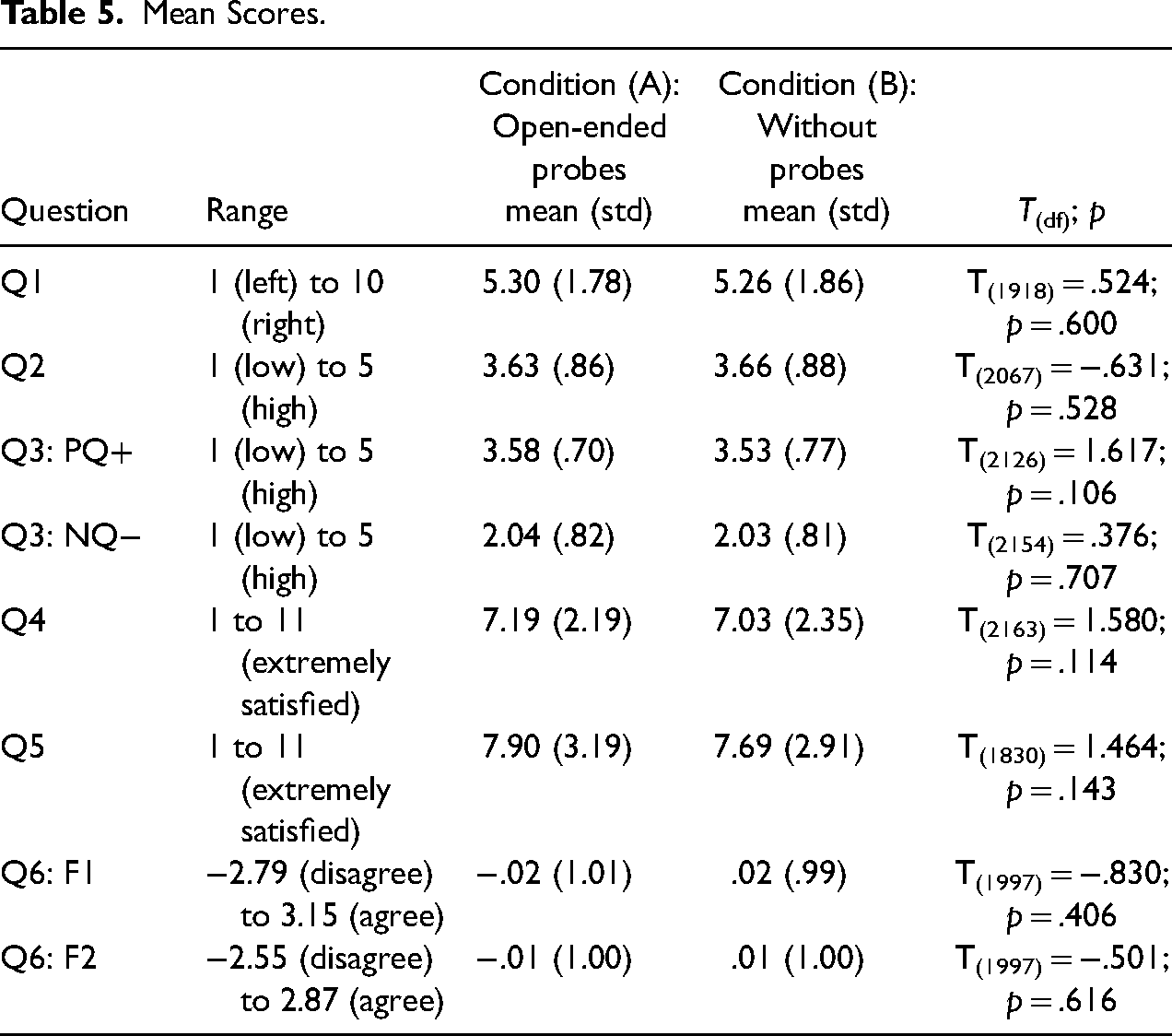

Mean scores

In line with hypothesis 5a, there were no significant differences between means for any of the single-item or multi-item measures (see Table 5).

Table 5. Mean Scores.

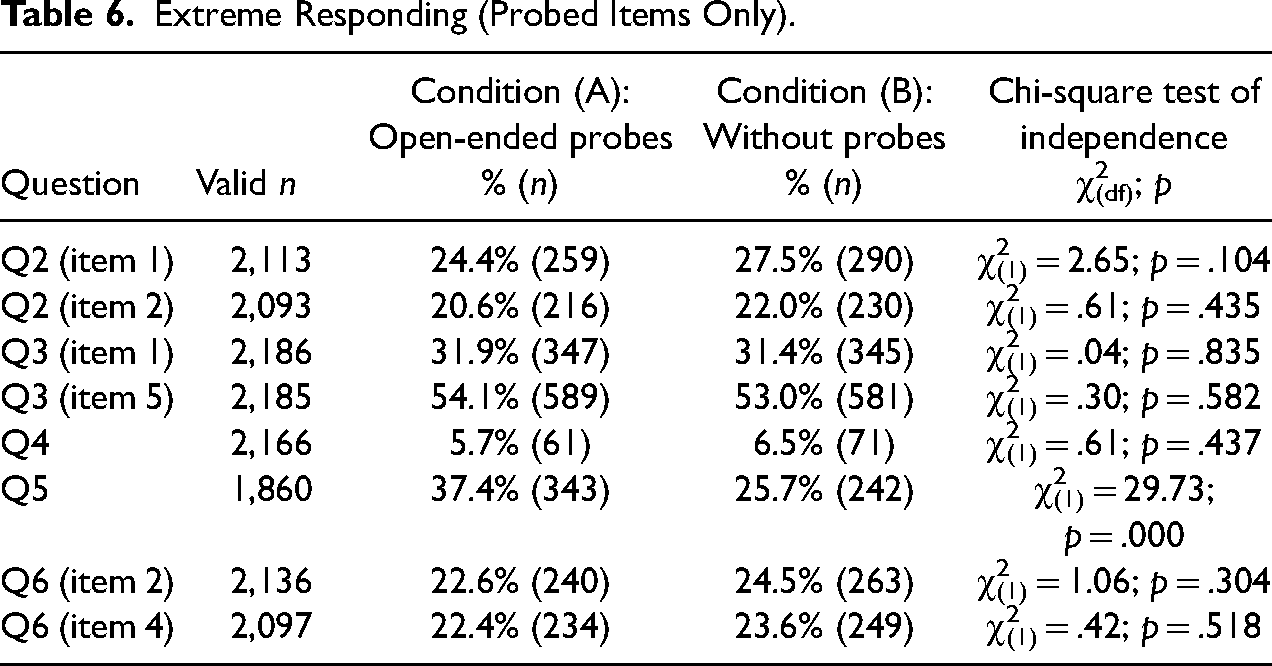

Extreme responding

According to hypothesis 5b, more extreme responding should occur in condition (A) with open-ended probes. Chi-square tests were conducted for each of the eight probed items of Q2–Q6, using Bonferroni-adjusted alpha levels of .00625 (.05/8). Table 6 shows the share of extreme responding across conditions on item level. Extreme responding was more likely for the single-item measure of relationship satisfaction (Q5). In line with the hypothesis, significantly more respondents reported that they were extremely satisfied or extremely unsatisfied with their current relationship in condition (A) that included open-ended probes (37.4%,

Table 6. Extreme Responding (Probed Items Only).

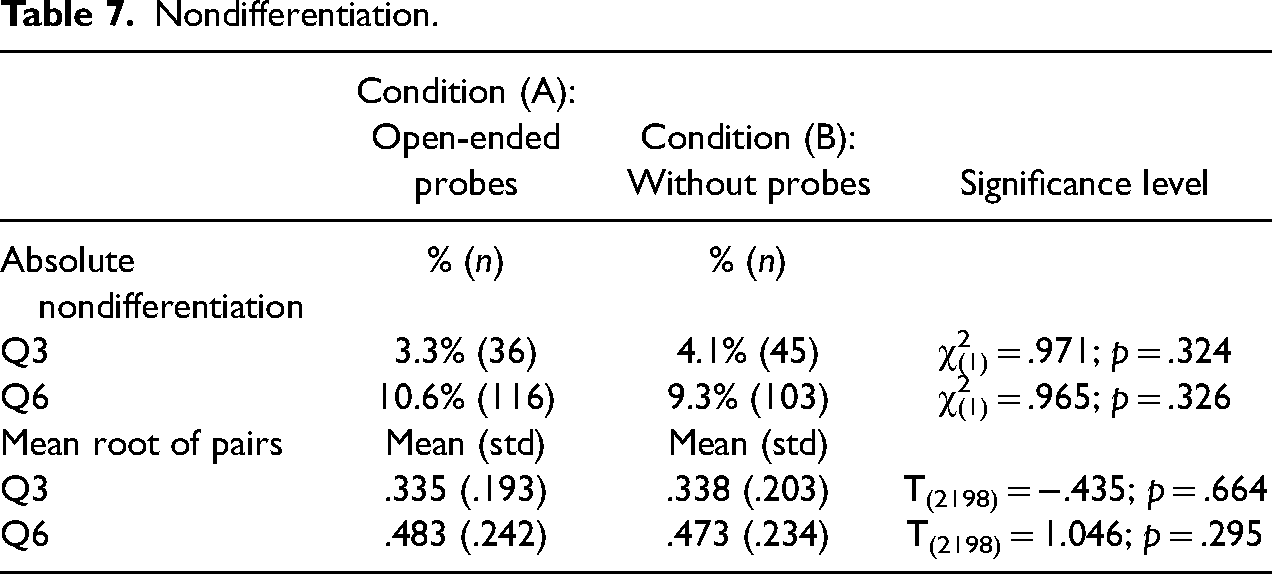

Nondifferentiation

Hypothesis 5c assumed higher levels of nondifferentiation among respondents in condition (A) with open-ended probes. This was examined for the two multi-item batteries Q3 and Q6. However, neither the absolute nor the metric measure using the mean root of pairs showed significant differences between conditions (see Table 7). Therefore, an impact on nondifferentiation cannot be confirmed.

Table 7. Nondifferentiation.

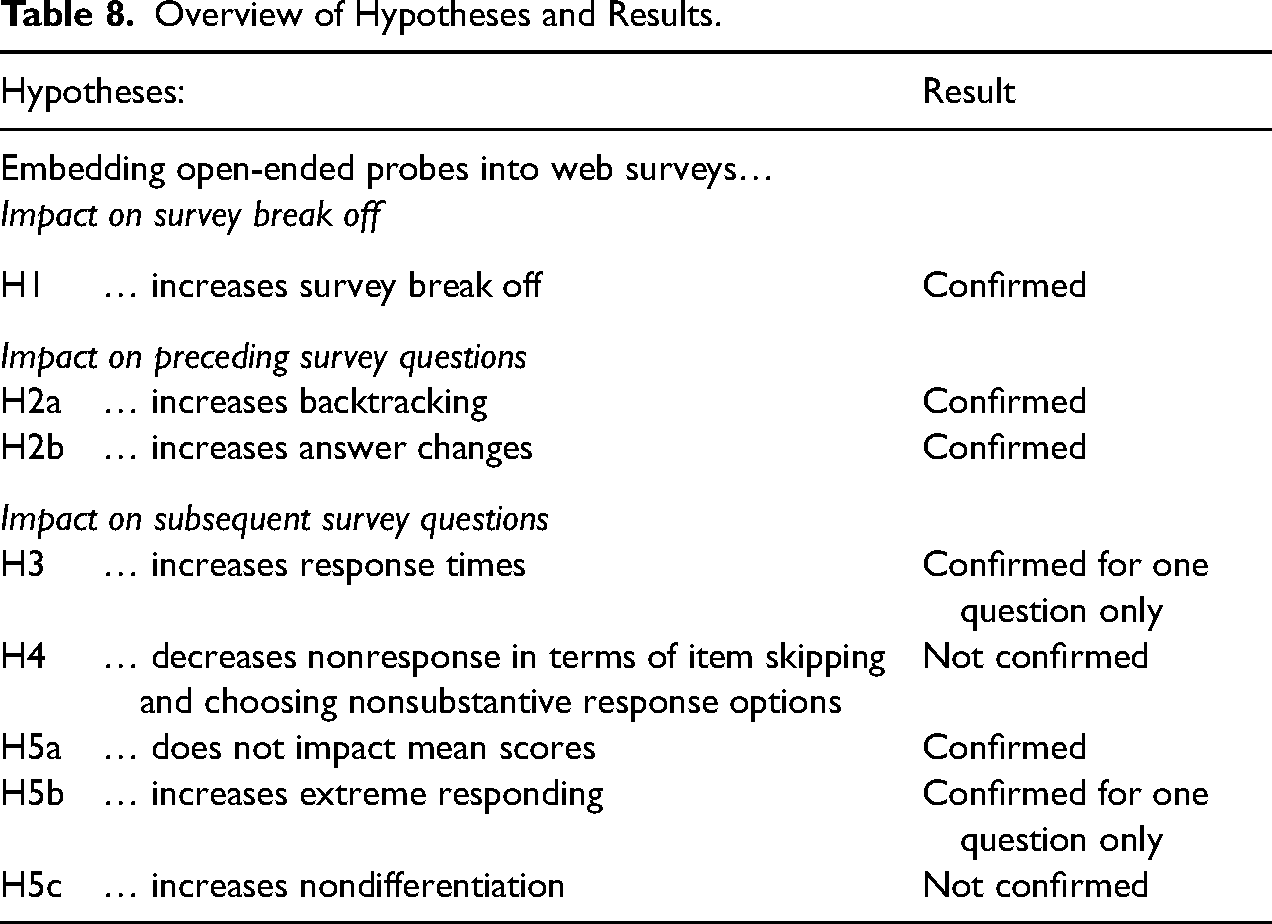

Discussion and Conclusion

The purpose of the presented research was to determine whether and in which ways embedding open-ended probes into web surveys impacts the process of responding to closed survey questions. In doing so, it took a different perspective than many current studies in the area of open-ended questions, which examine contextual effects on the response quality to open-ended questions and probes. The study differentiated between the effects of open-ended probes on survey completion, on the survey questions the probes pertain to, and on subsequent survey questions. To this end, a randomized web survey experiment was carried out, in which closed survey questions were fielded with and without open-ended probes using a paging design. Inserting open-ended probes increased survey break off and impacted the survey questions the probes pertained to in the form of increased backtracking and answer changes. Effects on subsequent questions occurred in single cases (see Table 8 for an overview of the hypotheses and results).

Table 8. Overview of Hypotheses and Results.

The majority of break offs occurred in the condition with open-ended probes, particularly after the first- and second-shown probes. The open-ended probes were not announced at the beginning of the survey, which may have contributed to the increase in break offs at the first-shown probes (rather than at an announcement that probes would be asked). Importantly, respondents with lower education and women were more likely to break off the survey. Although embedding open-ended probes did not lead to an unusually high level of survey break off, survey researchers should consider this potential nonresponse bias when implementing open-ended probes in web surveys.

Embedding open-ended probes significantly increased backtracking and answer changes to previous survey questions. In the present study, 9 percent of respondents who received open-ended probes returned to a previous question, while only 3 percent of respondents did this in the condition without probes. Across both conditions, about one in three respondents who backtracked changed their response to the preceding survey question. Like survey break off, backtracking and answer changes to previous questions occurred most often in response to the first open-ended probes.

For the most part, asking open-ended probes did not impact subsequent questions. There were no significant effects on mean scores, item skipping, or nondifferentiation for any of the examined questions. For four of the five examined questions, there were no significant effects on response time, choosing a nonsubstantive response option, or extreme responding. This indicates that, in most cases, the cognitive processing of survey questions and subsequent web survey data are not impacted by inserting open-ended probes. However, there are notable exceptions.

Respondents were significantly more likely to choose a nonsubstantive response option to the single-item measure of life satisfaction (Q4) in the condition with open-ended probes. Moreover, respondents took significantly longer to answer and were more likely to give an extreme response to the question on relationship satisfaction (Q5) in the condition with open-ended probes.

There are several possible explanations for these findings. First, the responses to the open-ended probes indicated that the question on relationship satisfaction was flawed because it lacked a response category to indicate that one was currently not in a relationship. By the time respondents in condition (A) reached the survey question on relationship satisfaction, they were certainly expecting an open-ended probe to follow. Possibly, respondents lacking a suitable response option dealt with this irritation differently when they were expecting to be able to explain their response in an open-ended text field than when they were not expecting this. The majority of respondents who indicated that they were not in a relationship in the probe either chose the available non-substantive response option (“I do not want to answer this question”) or an extreme response option (i.e., “very unsatisfied”), while only one respondent in condition (A) chose to skip the question.

However, alternative explanations should be considered. Perhaps the effects of embedding open-ended probes on the response to subsequent closed survey questions are more likely to occur when there is a close connection to the preceding survey and probing questions. In the present study, the question on relationship satisfaction was directly preceded by a question on the closely related construct of life satisfaction (Schwarz, Strack, and Mai 1991).

Probing techniques and probe design vary strongly, as do the survey questions they pertain to, and generalizing the results of any given study to all settings is not possible. In the present study, open-ended probing affected the response time and response behavior of only one of the examined questions. This highlights that future research should establish which question, probe, and respondent characteristics determine when open-ended probes impact surrounding survey questions.

Thus, the present study has several limitations which point the way to future research. For instance, it could be that certain probe types, such as category selection probing, increase the likelihood of respondents backtracking and changing their answers more than other probes that do not prompt respondents to reconsider their survey response. Different spacing designs should be examined to understand whether asking probes after (almost) each survey question leads to other effects than spacing probes throughout the survey or only inserting one (random) probe. Future research should include other question types, such as behavior and factual questions. Finally, future studies should employ further measures of data quality, such as test–retest reliability (Knowles et al. 1992).

In summary, embedding open-ended probes can increase survey break off, backtracking, and answer changes to previous questions, though fortunately, none of these outcomes occurred very often in the present study. In practice, survey researchers may omit a back button to prevent effects on previous questions. Of course, effects on response behavior to subsequent survey questions cannot be prevented technically; however, in this study such effects were rare, and there is no reason to assume a worrisome impact on data collection. More than ever, Schuman's (1966) suggestion to ask single open-ended probes of a subsample of survey respondents seems a timely and pragmatic compromise in order to control for rare effects of open-ended probes on response behavior while gaining insights into respondents’ thought processes and thereby validating survey responses.

Supplemental Material


Supplemental material, sj-docx-1-smr-10.1177_00491241231176846 for The Effects of Open-Ended Probes on Closed Survey Questions in Web Surveys by Patricia Hadler in Sociological Methods & Research

Footnotes

Author's Note

The quantitative data set of this study and the analysis file are available under the following reference: Hadler, Patricia (2023): Hadler 2023 SMR_Effect of openended probes_Analysis.sps. figshare. Dataset. The answers to the open-ended questions are not publicly available because they contain information that could compromise participant privacy.


Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

