Abstract

Scholars study representative international surveys to understand cross-cultural differences in mentality patterns, which are measured via complex multi-item constructs. Methodologists in this field insist with increasing vigor that detecting “non-invariance” in how a construct’s items associate with each other in different national samples is an infallible sign of encultured in-equivalences in how respondents understand the items. Questioning this claim, we demonstrate that a main source of non-invariance is the arithmetic of closed-ended scales in the presence of sample mean disparity. Since arithmetic principles are culture-unspecific, the non-invariance that these principles enforce in statistical terms is inconclusive of encultured in-equivalences in semantic terms. Because of this inconclusiveness, our evidence reveals furthermore that non-invariance is inconsequential for the cross-cultural functioning of multi-item constructs as concerns their nomological linkages to other variables of interest. We discuss the implications of these insights for measurement validation in cross-cultural settings with large sample mean disparity.

Introduction

When similar scores on a multi-item index are similar in their consequences, they are equivalent.

More and more, social scientists rely on multinational surveys to study cross-cultural differences in psychological orientations, like collectivism/individualism, nationalism/cosmopolitanism, authoritarianism/liberalism, religiosity/secularity, in-group/out-group trust or patriarchal/emancipative values, among others (cf. Hofstede 2001; Inglehart and Welzel 2005; Minkov and Hofstede 2014; Schwartz 2004). The inspiration motivating this work presumes that cross-cultural variation in people’s psychological orientations reflects historically evolved differences in prevalent mentalities, which in turn determine how individuals behave and how societies as a whole develop (cf. Inglehart 2019).

Technically speaking, scholars measure the respective psychological orientations on multi-item scales. These scales average numerically coded answers to a set of thematically related questions in a single index score for each respondent of a sample. Analysts then average the individual-level index scores to yield group-level means for the relevant units of comparison, which in most cross-cultural studies are countries. Researchers typically consider the group-/country-means in the respective psychological orientations as representations of the surveyed populations’ prevalent mentalities, which they examine for their association with other group-/country-level differences to understand the sources or consequences of encultured mentality differences. Also, scholars feed group-/country-means in psychological variables into the level 2 component of multilevel models to illuminate how contextual variation in encultured mentalities influences individual-level differences in how people behave and what they think (cf. Welzel 2013: chap. 2).
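The two averaging steps described above can be sketched in a few lines. The snippet is only an illustration: the respondents, country labels, and function names are hypothetical, and the answers are assumed to be coded onto a 0-to-1 scale already.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical respondents: (country, answers to three items coded 0-to-1).
respondents = [
    ("A", [0.0, 0.25, 0.5]),
    ("A", [0.5, 0.5, 0.5]),
    ("B", [1.0, 0.75, 1.0]),
    ("B", [0.75, 1.0, 0.5]),
]

def index_score(answers):
    """Step 1: average a respondent's item answers into one index score."""
    return fmean(answers)

def country_means(rows):
    """Step 2: average the individual index scores within each country."""
    scores_by_country = defaultdict(list)
    for country, answers in rows:
        scores_by_country[country].append(index_score(answers))
    return {c: fmean(scores) for c, scores in scores_by_country.items()}

means = country_means(respondents)
print(means)
```

The resulting country means are the quantities that would enter country-level correlations or the level 2 component of a multilevel model.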

Obviously, cross-cultural survey data of this kind yield valid results if—and only if—it is certain that numerically similar scores on the related multi-item scales are sufficiently equivalent in meaning across the groups of comparison (Adcock and Collier 2001; Brady 1985; Chen 2008; King et al. 2003; Przeworski and Teune 1966).

Growing concern about this issue motivates a newly spreading type of study that uses the methodology of multi-group confirmatory factor analysis as a tool of measurement validation (cf. Davidov et al. 2014; Muthén and Muthén 2012). This increasingly fashionable methodology (henceforth: MGCFA) tests psychological constructs for group-to-group invariance in the respective items’ interconnections. If these tests fail, the diagnosis is “non-invariance”—a lethal verdict that supposedly invalidates the respective construct for further use in cross-cultural comparison (Stegmueller 2011; van de Vijver and Poortinga 2002).

Informed by item response theory and the related worry about “differential item functioning,” 1 the whole MGCFA methodology rests on an axiomatic presumption about group-wise differences in inter-item connections: Indications of such differences are an infallible sign that respondents understand the respective items differently, due to their group’s specific culture. This premise equates statistical non-invariance in item connections with semantic in-equivalence in item understandings, which then informs incomparability judgements. 2 Advocates of this logic insist with increasing vigor that MGCFA invariance tests provide the ultimately authoritative tool of construct validation in cross-cultural research. 3

Questioning this authority, our contribution highlights some inherent limitations of the concept of non-invariance. These limitations corroborate the conclusion that group-to-group non-invariance in statistical item connections provides no infallible sign of culture-biased in-equivalence in item understandings. Anticipating this article’s punchline, the following paragraphs briefly substantiate this conclusion.

Let us begin with the central axiom of MGCFA methodology, namely, the presumption that group-to-group variability in a construct’s item connections 4 invalidates the construct’s comparability, especially as concerns differences between group means. Upon closer scrutiny, the underlying logic of inference turns out to be lopsided because, in fact, comparability suffers in the exact opposite direction of inference. Indeed, it is differences in group means that undermine the group-to-group comparability of item connections. The reason is a three-step sequence of logical consequences, starting from group mean disparity: The more disparate group means are, the more of a mixture between moderate and extreme group means exists. Under the arithmetic of closed-ended scales, extreme group means only exist when overwhelming majorities of individuals in a group are culturally unified in the strong rejection or approval of the respective items. This implies small within-group variation, which decreases the likelihood of finding strong inter-item connections (whether by means of covariances or correlations). Consequently, the likelihood of finding strong inter-item connections decreases steeply with the extremity of group means, which renders inter-item connections incomparable across groups with a mixture of moderate and extreme means. When the probabilities for strong inter-item connections vary on a group-by-group basis, a mixture of weak and strong connections becomes more likely, which inevitably causes markers of “non-invariance” to turn on, yet not because of culture bias in item comprehension but because of the math of closed-ended scales.

Against this backdrop, the takeaway of our contribution boils down to three limitations regarding non-invariance diagnoses. First, invariance tests follow a particular logic of cross-cultural comparison that is—against strong claims to the contrary—not generalizable for all kinds of multi-item constructs. Instead, the invariance testing methodology operates within a clearly restricted sphere of applicability that solely works for “reflective” constructs tailored to the “similarity” criterion of index formation. By contrast, “formative” constructs tailored to the “complementarity” criterion are out of reach of MGCFA’s judgmental authority and should therefore not be subjected to MGCFA test standards—which is, however, a continued malpractice (e.g., Alemán and Woods 2015).

Second, even within its restricted realm of applicability, the MGCFA methodology suffers from further limitations, two of which are particularly noteworthy. To begin with, disparity in group means deprives non-invariance markers of their conclusiveness as an indicator of culture bias in how respondents understand survey items. This loss of conclusiveness actually progresses in direct proportion to the magnitude of group mean disparity. The reason is that group mean disparity enforces non-invariance due to the mathematical implications of closed-ended scales. Since mathematical principles are culture-unspecific, the non-invariance that they enforce entails no information whatsoever on encultured biases in the respondents’ item understanding.

The next limitation follows suit. Precisely because of its inconclusiveness in cross-cultural settings with disparate group means, non-invariance is inconsequential for a construct’s cross-cultural performance in nomological terms. This inconsequentiality results from the simple (albeit largely overlooked) fact that the functioning of a multi-item index across groups depends in no way on the functioning of its single items within groups. This non-dependence relates to “compositional substitutability” as a principle in construct functioning. The principle applies when numerically similar multi-item scores coexist with variable item connections that are compositional substitutes to each other. That is, similar multi-item scores map onto other variables of interest in corresponding fashion across groups, irrespective of non-invariance in item connections within groups (Welzel and Inglehart 2016).

The remainder of this contribution substantiates these points in greater detail, following a sequence of logical steps. In the final section, we close with the conclusion that—in settings with pronounced group mean disparity—“compositional substitutability” tests of nomological linkages offer an alternative guide for establishing measurement equivalence.

The Online Appendix (henceforth: OA, which can be found at http://smr.sagepub.com/supplemental/) to this article expands the discussion of a couple of selected points (Section 1), followed by a mathematical proof (Section 2) and an empirical demonstration with observational cross-cultural data (Section 3).

As a preliminary remark, we wish to stress that our logic regarding the role of group means applies in principle to all sorts of comparison groups. Yet, in practical terms, the most important grouping units in cross-cultural comparison are nations, for which reason country means represent the type of group mean we have primarily in mind.

The Index Performance Paradox

Recently, scholars have begun to publish articles that do nothing but scrutinize well-established constructs under the invariance testing arsenal of MGCFA. Upon test failure, scholars diagnose “non-invariance,” which (supposedly) disqualifies the construct in question as a case of culture-biased “measurement mis-specification.” This is a fatal verdict that delegitimizes the further use of the respective construct in cross-cultural comparison. The fact that even top-ranking journals now publish articles solely concerned with testing measurement models confirms MGCFA’s rapidly grown reputation as the ultimate validation tool in cross-cultural research. But this reputation comes with a price tag, which we illustrate by referring to a prominent example of in-equivalence judgements: the emancipative values index (henceforth: EVI).

Two studies using MGCFA disqualify the EVI as a mis-measure that lacks cross-cultural equivalence based on failed invariance tests across countries (Alemán and Woods 2015; Sokolov 2018). 5 The problem we wish to draw attention to is that these invariance tests fail in spite of the fact that the EVI performs impressively in explanatory terms using nomological validity criteria.

Based on data from the World Values Survey (henceforth: WVS), the EVI summarizes a dozen items, grouped into four larger themes—voice, choice, equality, and autonomy—to measure people’s support of universal freedoms. 6 Across more than a hundred countries, including the biggest national populations in each global region, the EVI correlates in theoretically expectable ways with other, well-established markers of cultural difference, like collectivism/individualism, 7 or embeddedness/autonomy, and with scores of objective indicators of societal development, including various measures of economic modernization, state functioning, and political liberalization. Many of these correlations are strikingly strong, often reaching an r of .80 and higher (Welzel 2013: chap. 2; Welzel and Inglehart 2016). 8 These correlations are not limited to the country level. At the individual level, too, at which the WVS covers more than 400,000 respondents from all over the world and from different time periods, the EVI correlates in theoretically anticipated ways and stronger than any other measure from the WVS with orientations and behaviors that form the civic building blocks of healthy democracies, like secular orientations, out-group trust, social movement activity, informational connectedness, and a liberal understanding of democracy (for evidence, see OA Section 3, which can be found at http://smr.sagepub.com/supplemental/).

In a nutshell, the EVI picks up quite an impressive chunk of social reality and, thus, provides a first-rate cultural marker of inherently meaningful and profound societal differences. In other words, the EVI performs outstandingly under nomological validity criteria. 9 Nevertheless, this index fails all MGCFA-style equivalence tests clearly, no matter how much one relaxes the strictness of MGCFA’s invariance requirements, as Sokolov (2018) points out.

This is not the only example in which invariance test requirements seem to conflict with nomological validity criteria. Another example from the WVS comprises two three-item constructs measuring liberal and illiberal notions of democracy: Across countries, the illiberal notions index fails invariance tests clearly, while the liberal notions index passes them neatly. But the illiberal notions index outperforms the liberal notions index by far in terms of nomological validity, showing much stronger explanatory/predictive qualities at both the individual level and country level (for proof, see OA Section 3, last subsection, which can be found at http://smr.sagepub.com/supplemental/). In the face of this apparent tension between invariance requirements and nomological criteria, it seems legitimate to ask whether there is something wrong with an equivalence logic that debunks constructs with such ease when these constructs are so strongly reality-linked.

Obviously, there is an “index performance paradox” in the sense that strength in a construct’s connections to other variables, on the one hand, and invariance in this construct’s item connections, on the other, operate somehow against each other. That would mean a (partial) trade-off between inward and outward construct connectivity. If so, tailoring measures one-sidedly to invariance requirements comes at a cost that needs deeper reflection.

The point is that invariance requirements focus solely on a construct’s item connections. Therefore, designing multi-item constructs in such fashion that they meet invariance requirements is to maximize inward construct connectivity. But if there is indeed a trade-off with outward construct connectivity, shaping multi-item scales toward accordance with invariance criteria is to sacrifice outward for inward construct connectivity. This is not a trivial sacrifice because outward construct connectivity touches directly upon a construct’s explanatory performance in nomological terms. For this reason, trading off outward connectivity in favor of inward connectivity might increase the risk of a type-II error: failing to detect theoretically important relationships that actually exist.

Tentatively, these considerations suggest that designing constructs to make them pass invariance tests is trading off these constructs’ explanatory performance in nomological terms. Further evidence in favor of this conclusion relates to the two typical recommendations that MGCFA practitioners propose to cure a construct of diagnosed non-invariance. One recommendation is to search in a construct’s item set for a smaller subset of items that might pass the invariance tests and to limit the further use of the construct to this particular subset only (cf. Janssen 2011).

In this vein, Sokolov (2018) recommends reducing the full-blown 12-item EVI to the three items of the index’s “choice” component (which addresses reproductive freedoms in matters of homosexuality, abortion, and divorce). The reason for this recommendation is that the EVI choice component passes MGCFA invariance tests, at least under somewhat relaxed requirements using Bayesian statistics. Yet, the reduced-version EVI’s nomological connections to theoretically expected covariates are consistently weaker than those of the complete EVI (for proof, see OA Section 3, which can be found at http://smr.sagepub.com/supplemental/).

We suppose that this pattern is not a coincidence but follows a systematic logic: Reducing a construct’s item set is to narrow the bandwidth of its measurement, which in turn delimits explanatory outreach. As a consequence, nomological connections suffer.

The second solution that MGCFA proponents recommend to cure constructs of non-invariance is to eliminate from further examination those groups of comparison (e.g., countries) in which the measurement model performs badly (in terms of factor loadings, goodness of fit measures and the like; cf. Alemán and Woods 2015). This recommendation leads to the exclusion of groups with extreme means on the respective construct’s multi-item scale. The reason is, as we will demonstrate, that groups with extreme means are likely to exhibit underwhelming measurement models. Removing groups with more extreme means, of course, empties a construct’s multi-item scale at its lower and upper ends. And eliminating the lower and upper ranges of variance also reduces the covariance with other variables of foremost interest. 10 So again, curing the supposed “disease” of non-invariance means sacrificing nomological connections and increasing the risk of a type-II error.
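The attenuation that follows from dropping groups with extreme means can be illustrated with a small simulation. This is a minimal sketch under invented assumptions, not an analysis of WVS data: the simulated group means, the noise level, the cut-offs of 0.3 and 0.7, and all names are hypothetical.

```python
import random
from statistics import fmean

def pearson_r(x, y):
    """Plain Pearson correlation, written out to keep the sketch dependency-free."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

rng = random.Random(1)

# Simulated group means on a 0-to-1 index and an outcome that depends on them.
index_means = [rng.uniform(0.0, 1.0) for _ in range(2000)]
outcome = [m + rng.gauss(0.0, 0.15) for m in index_means]

# Keep only groups with moderate means, mimicking the exclusion of extreme groups.
kept = [(m, o) for m, o in zip(index_means, outcome) if 0.3 <= m <= 0.7]
kept_means = [m for m, _ in kept]
kept_outcome = [o for _, o in kept]

r_full = pearson_r(index_means, outcome)
r_restricted = pearson_r(kept_means, kept_outcome)
print(f"all groups: r = {r_full:.2f}; moderate groups only: r = {r_restricted:.2f}")
```

The underlying relationship is identical in both subsets; only the range of the index is narrower after the exclusion, which is what lowers the correlation.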

The partial trade-off between a construct’s performance in invariance tests versus nomological tests is real. 11 It relates to another phenomenon known as the “bandwidth–fidelity dilemma” (Cronbach and Gleser 1957; Ones and Viswesvaran 1996; Salgado 2017). This dilemma denotes the fact that wider item sets cover a broader “bandwidth” of reality and, hence, explain a larger spectrum of externally located phenomena (i.e., stronger outward connectivity). At the same time, a wider spectrum of items reduces “fidelity” in the sense of the items’ connectivity among each other (i.e., weaker inward connectivity). Since MGCFA invariance criteria aim solely at inward connectivity, creating constructs in accordance with these criteria involves a tendency to diminish outward connectivity. Expressing the same trade-off in terms of reliability and validity, we can say that MGCFA invariance criteria aim at maximizing measurement reliability, which incurs the risk of sacrificing measurement validity.

As Clifton (2020) explains, the risk of sacrificing validity for the sake of reliability is particularly acute in the presence of systematic measurement error. The reason is that a consistently deflationary or inflationary bias in the measurement makes numerical scores indeed more reliable, yet at the same time also less valid. 12 If one considers both measurement qualities as equally important, a methodology that resolves their partial trade-off one-sidedly in favor of just one of them is inherently unbalanced. We question whether one should leave the ultimate authority in judging measurement quality to a methodology whose criteria resolve basic trade-offs in such a partisan fashion.

Methodological Absolutism

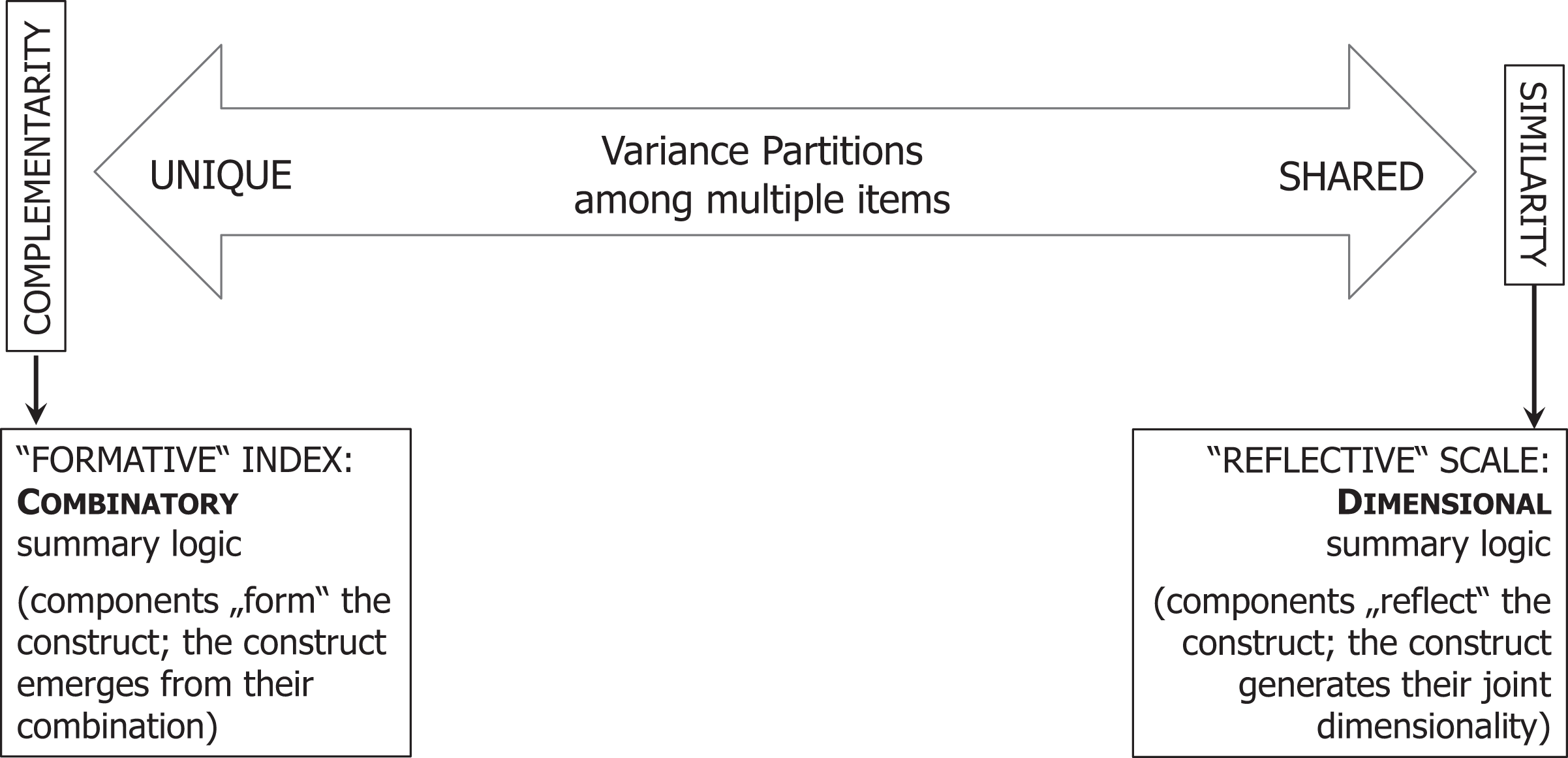

At times, MGCFA’s inbuilt imbalance in resolving measurement trade-offs magnifies into a form of methodological absolutism. This becomes obvious when one recognizes MGCFA’s stance toward the two alternative logics of how to create multi-item constructs. Figure 1 places these two logics at the opposite ends of a continuum ranging from perfect item “similarity,” at one polar end, to pure item “complementarity,” at the opposite end. At the similarity end, all variation among a construct’s items is shared. At the complementarity end, by contrast, each item contributes a perfectly unique variance partition to the overall construct. 13

Figure 1. The complementarity–similarity continuum.

It is appropriate to tailor multi-item constructs to the similarity criterion when the respective measurement theory supposes the constituent items to represent a joint underlying dimension. But it is just as appropriate to tailor multi-item constructs to the complementarity criterion when the measurement theory supposes the overall construct to emerge from the combination of the constituent items. All of this is known under the terms “reflective” and “formative” logics of construct design (Coltman et al. 2008).

MGCFA invariance tests are shaped in such fashion that only constructs tailored to the similarity criterion can pass them. Constructs tailored to the complementarity criterion cannot, since complementarity defies the mono-dimensionality criterion toward which any type of confirmatory factor analysis, including MGCFA, is oriented (cf. Brown 2015; Joreskog 1971; Kline 2016). This partisan position of MGCFA on the similarity–complementarity continuum of construct logic causes no problem as long as MGCFA practitioners stay on their home turf and restrict the application of their tests to constructs explicitly tailored to the similarity criterion.

This self-restraint is lost when scholars insist that only those multi-item constructs that accord with MGCFA invariance criteria are cross-culturally comparable. Authors repeatedly use this dictum as their justification to subject even explicitly complementarity-tailored constructs to their similarity-seeking test logic, not even mentioning the difference (e.g., Alemán and Woods 2015). This malpractice exhibits a form of methodological dogmatism that, we think, should be abandoned sooner rather than later.

Misconceptions of Culture

To pursue meaningful cross-cultural comparisons, it is essential to understand the role of culture in shaping prevalent mentality patterns. A central point of departure in this context is to realize that humans’ social nature embodies a need for group identification (Abele and Wojciszke 2019). This need makes humans extend their egos into a “collective self” that incorporates we-feelings among those who recognize each other as being of the same kind, which is part of our tribal psychology. Thus, the human mind searches for identity in “imagined communities,” of which the nation has evolved as the most powerful one, beyond the family (Gat 2013). By creating an extended form of kinship, nations shape collective identities more strongly than other community-generating forces (Inglehart and Welzel 2005, 2010; Minkov and Hofstede 2014). Nations are manifest in countries as their territorial space and in states as their organizational frame. But as imagined communities, nations are after all a psychological category.

Against this backdrop, the role of culture is to shape distinct patterns of thinking and behavior that make imagined communities recognizable to their members. Accordingly, culture evolves through the tendency to make people’s mentalities and habits more similar within imagined communities and more dissimilar between them. Technically speaking, culture shapes prevalent mentalities by enlarging the between-group variation in psychological orientations and limiting their within-group variation. 14 Therefore, focusing on the between-group partition in cross-cultural variation is key to capture culture where it leaves its biggest mark. MGCFA, however, does the exact opposite in that it targets its tests almost exclusively at the within-group variance partition by conducting factor analyses in a group-centered manner (cf. Marsh et al. 2018).

MGCFA infers the comparability of aggregate-level group means from individual-level item connections. Thus, the direction of inference points from the individual level to the aggregate level. This direction of inference implies an ontological primacy of individual-level orientations over their aggregate-level prevalence. This premise reveals another incomprehension of how culture works. In its essence, culture is a phenomenon of inheritance. For this reason, every society already has an inherited aggregate pattern of how people think and behave before any newly born individual begins to acculturate and to develop her own personality. In that sense, the aggregate pattern of orientations is ontologically prior to the individual manifestations from which we calculate it (Welzel and Inglehart 2016). 15

While collective action theorists like Diekmann (1985) and Coleman (1990) acknowledge the ontological primacy of the aggregate over the individual, 16 survey analysts seem oblivious to this priority. The reason is that survey-based measurement procedures reverse the sequence and, hence, leave us with the false impression that the aggregate is a derivative of individuals, when in fact the aggregate shapes the individuals in its realm. This happens in that a group’s central tendency in moral norms exerts pressure on its members to internalize the group norms. In so doing, the collective culture shapes individual personalities, much more so than the other way around. 17 Indeed, by the manner in which we measure psychological orientations, we reverse the true direction of genesis because we measure orientations first at the individual level before we calculate these orientations’ distributional features in the aggregate. But at the moment we measure individual-level orientations in interviews, these orientations have already been shaped by the normative pressures of their surrounding culture. 18

Summing up, MGCFA operates under assumptions that turn the ontological order of priority between aggregate- and individual-level variance components upside down. Epistemologically, these assumptions distort the configurational importance of the between- and the within-group variance partitions in the making of culture. Indeed, in the creation of culture, aggregate-level properties are more configurative than individual-level ones and the between-group variance is more constitutive than the within-group one. The MGCFA methodology reverses this order of priority in that it judges the validity of aggregate-level properties and between-group variances depending entirely on individual-level item connections within groups.

If one agrees that this reverse-order prioritization of variance partitions and levels of analysis reflects a misconception of culture, the unchallenged authority of MGCFA as the mandatory measurement validation tool in cross-cultural research is in question.

Dimensional Cohesions and Group Means

The whole idea of cross-cultural comparison is inspired by the intuition that culture is a meaningful source of variation in how humans think and behave. Consequently, it is almost certain that group means in psychological and behavioral constructs differ greatly as soon as the sampled groups pass a certain level of cross-cultural diversity. Since psychological and behavioral constructs are measured on closed-ended scales, large differences in group means imply that these means scatter over wide ranges of the respective scales, with some group means coming close to the lower and others to the upper scale end. If this is the case, group-by-group variability in item connections is almost inevitable. The reason is a brute mathematical principle that forces item connections into a probabilistic dependency on group means, given the nature of closed-ended scales.

Indeed, when group means vary over most of the scale range of a given index, variability in within-group item connections is doomed to be sizable. Why? Because the location of the group mean on a closed-ended scale sets strict limits to the possible magnitude of item connections and their between-group similarity. To be specific, consistently strong item connections are likely only for groups whose means are located in the middle ranges of a closed-ended scale. By the same token, consistently strong item connections become increasingly unlikely—and ultimately impossible—as group means come closer to the floor and ceiling of the respective scale.

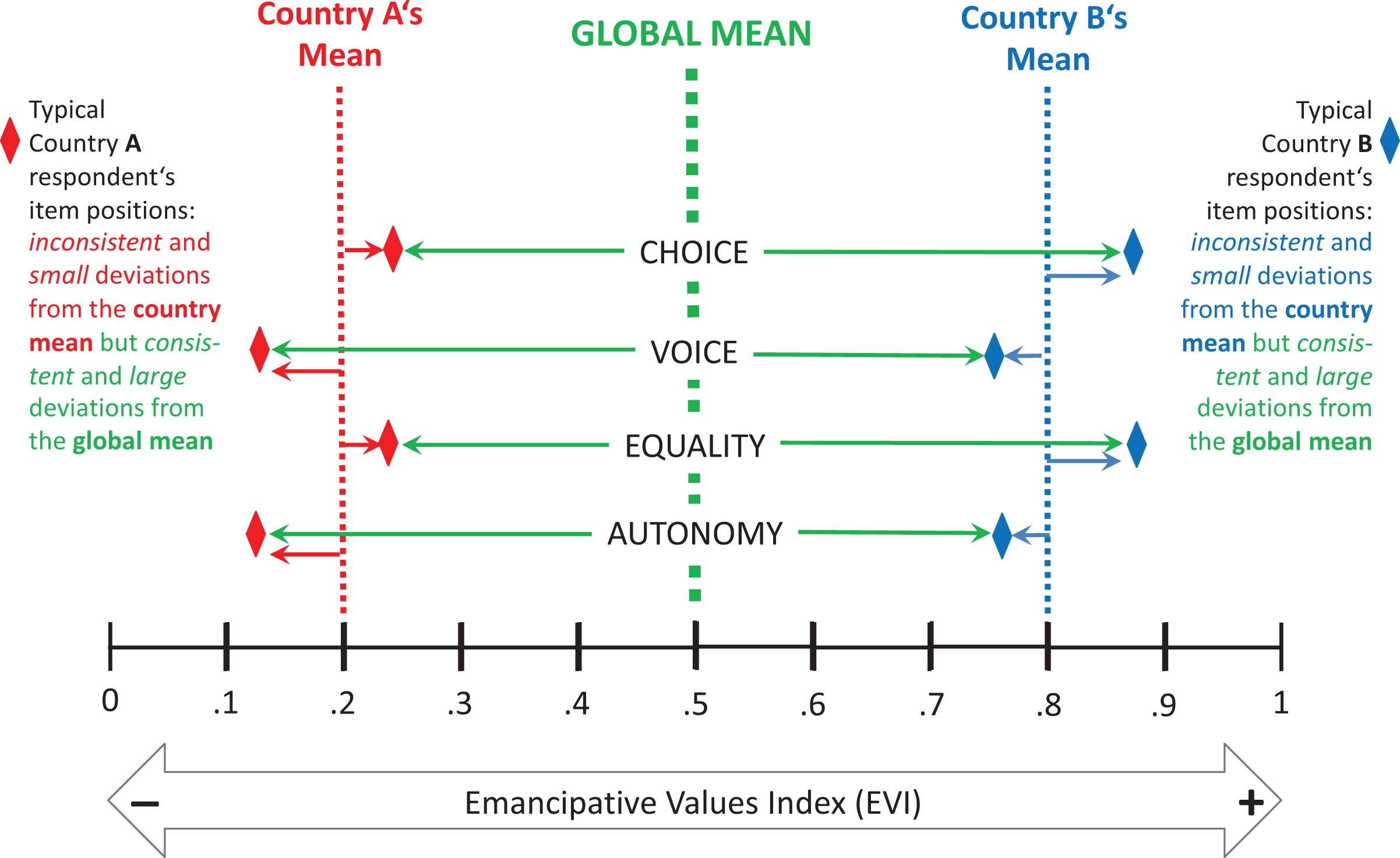

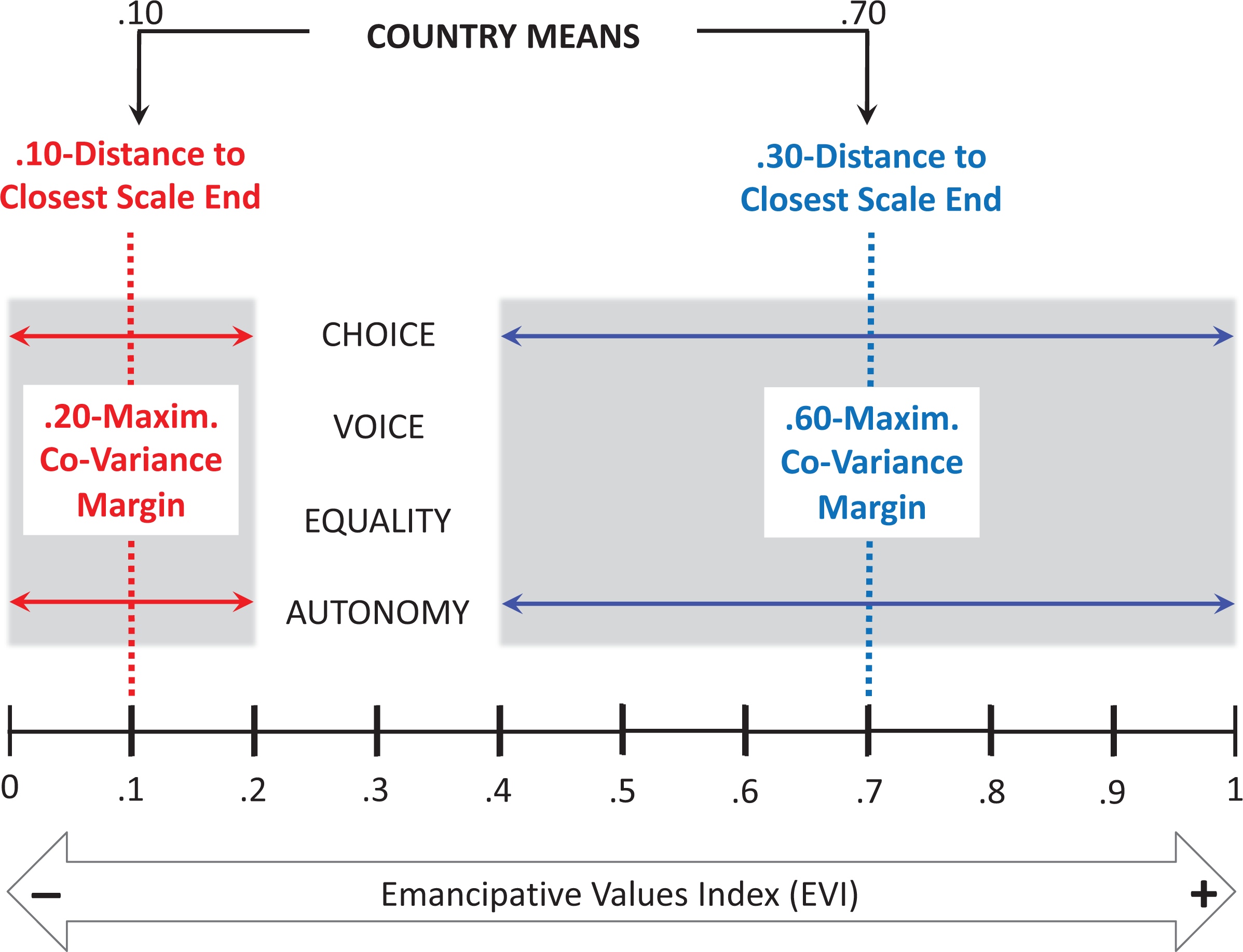

For illustration purposes, Figure 2 provides a stylized depiction of the EVI’s continuous 0-to-1 scale. On a closed-ended scale like this, a group’s mean score can only approximate the floor or ceiling when overwhelming majorities of the respective population are culturally unified in either the strong rejection or approval of emancipative values. Therefore, the presence of low and high group means implies correspondingly small deviations from these means due to the nature of closed-ended scales.
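The claim about overwhelming majorities can be stated as a simple bound. As a sketch in our own notation (X denotes a respondent’s score on the 0-to-1 scale, m the group mean, and t an arbitrary threshold, none of which appear in the original text), Markov’s inequality applied to X and to 1 − X gives:

```latex
\Pr(X \ge t) \;\le\; \frac{m}{t},
\qquad
\Pr(X \le t) \;\le\; \frac{1 - m}{1 - t},
\qquad 0 < t < 1 .
```

For example, with a group mean of m = 0.10, at most 20 percent of respondents can score at or above the scale midpoint t = 0.50, since 0.10 / 0.50 = 0.20. The second inequality gives the mirror-image limit for group means near the ceiling.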

Figure 2. The between/within model of cross-cultural variation.

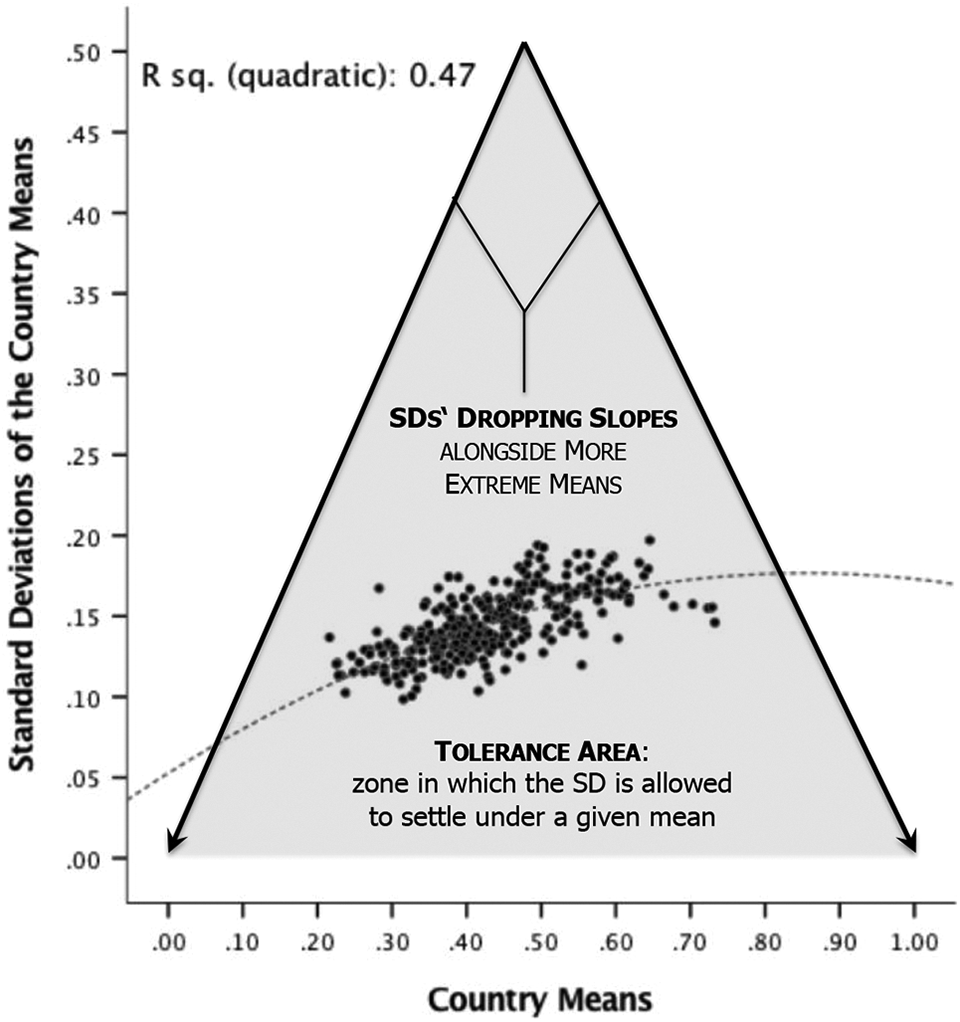

This principle forces the relationship between group means and mean deviations within groups into the triangular area shown in Figure 3 (for detailed descriptions of the respective measurements, see OA Section 3, which can be found at http://smr.sagepub.com/supplemental/). This mathematically imposed distribution area covers exactly 50 percent of the entire plotting area in Figure 3. Its shape makes it likely that mean deviations within groups relate to their group means in a curvilinear (inverted U-shape) manner whenever a sizable number of group means approximate the floor and ceiling of the scale.

Figure 3. The link between EVI country means and their standard deviations.

Figure 3 illustrates this principle using standard deviations. As a matter of fact, standard deviations can reach their theoretical maximum only when the group mean coincides with the scale midpoint. On a 0-to-1 scale, the largest possible standard deviation is 0.50, which represents the strongest possible polarization, with 50 percent of the sample scoring at 0 and the other 50 percent at 1—a situation that necessitates a group mean of exactly 0.50. Group means below or above the scale midpoint will unavoidably have standard deviations below 0.50. Thus, standard deviations approach zero as the group mean approaches either the lower or upper scale end, with the tails of this approximation dropping steeply toward the scale ends.
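The ceiling on the standard deviation can be made explicit. For any score X on a 0-to-1 scale, X² ≤ X, so the variance E[X²] − m² cannot exceed m(1 − m), where m is the group mean. The bound is reached when a share m of respondents scores 1 and the rest score 0. The following sketch evaluates this bound and checks it against such a fully polarized sample; the function names and the sample size are illustrative choices of ours.

```python
from statistics import fmean, pstdev

def max_sd(mean):
    """Largest possible standard deviation on a 0-to-1 scale for a given mean."""
    return (mean * (1.0 - mean)) ** 0.5

def polarized_sample(mean, n=1000):
    """Hypothetical sample in which a share `mean` scores 1 and the rest score 0."""
    ones = round(mean * n)
    return [1.0] * ones + [0.0] * (n - ones)

for m in (0.05, 0.10, 0.25, 0.50, 0.75, 0.90, 0.95):
    sample = polarized_sample(m)
    print(f"mean={fmean(sample):.2f}  bound={max_sd(m):.3f}  "
          f"polarized SD={pstdev(sample):.3f}")
```

The bound equals 0.50 only at the scale midpoint and shrinks symmetrically toward both scale ends, in line with the description above.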

The relationship between group means and mean deviations within groups is known (Beugelsdijk and Klasing 2016). 19 But scholars neglect the series of consequences that follows from the mathematical enforcement of low mean deviations by extreme group means. These consequences resolve the whole issue of variability in within-group item connections, albeit in ways that invalidate MGCFA’s take on this theme: Extreme group means on closed-ended item scales always involve narrow mean deviations within groups. Since inter-item covariances are calculated from group mean deviations on the respective item scales, small group mean deviations produce low covariances. 20 Low covariances accordingly characterize the matrices on which the calculation of factor loadings ultimately relies. Low factor loadings seemingly indicating weak cross-item consistency are unavoidable for groups with more extreme means. 21 In highly diverse cross-cultural settings in which group means scatter widely over the scale range of a given index, substantial differences between group-centered factor models are certain. Consequently, invariance tests are doomed to indicate “non-invariance,” no matter how much the researcher relaxes the invariance requirements.
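The chain from extreme group means to low covariances can be illustrated with a stylized simulation. This is a sketch under assumptions of our own, not the procedure of the Online Appendix: answers are generated as group mean plus a shared factor plus item-specific noise, and clipping to the interval from 0 to 1 stands in for the closed scale ends. The spreads, sample size, number of items, and all function names are hypothetical. The shared factor is identical in both groups, so any difference in covariances stems from the location of the mean alone.

```python
import random
from statistics import fmean, pstdev

def simulate_items(group_mean, n=20000, n_items=3, seed=0):
    """Simulate n respondents answering n_items items on a closed 0-to-1 scale."""
    rng = random.Random(seed)
    items = [[] for _ in range(n_items)]
    for _ in range(n):
        factor = rng.gauss(0.0, 0.15)  # shared component, same in every group
        for k in range(n_items):
            raw = group_mean + factor + rng.gauss(0.0, 0.15)
            items[k].append(min(1.0, max(0.0, raw)))  # enforce floor and ceiling
    return items

def covariance(x, y):
    """Population covariance of two equally long lists."""
    mx, my = fmean(x), fmean(y)
    return fmean([(a - mx) * (b - my) for a, b in zip(x, y)])

def mean_pairwise_covariance(items):
    """Average covariance over all distinct item pairs."""
    pairs = [(i, j) for i in range(len(items)) for j in range(i + 1, len(items))]
    return fmean([covariance(items[i], items[j]) for i, j in pairs])

moderate = simulate_items(group_mean=0.50)  # group mean near the scale midpoint
extreme = simulate_items(group_mean=0.05)   # group mean near the floor

for label, items in (("moderate", moderate), ("extreme", extreme)):
    print(label,
          "mean SD:", round(fmean([pstdev(x) for x in items]), 3),
          "mean covariance:", round(mean_pairwise_covariance(items), 4))
```

In this setup, the group near the floor shows both smaller standard deviations and smaller inter-item covariances than the group near the midpoint, although respondents in both groups respond to the items in the same way by construction.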

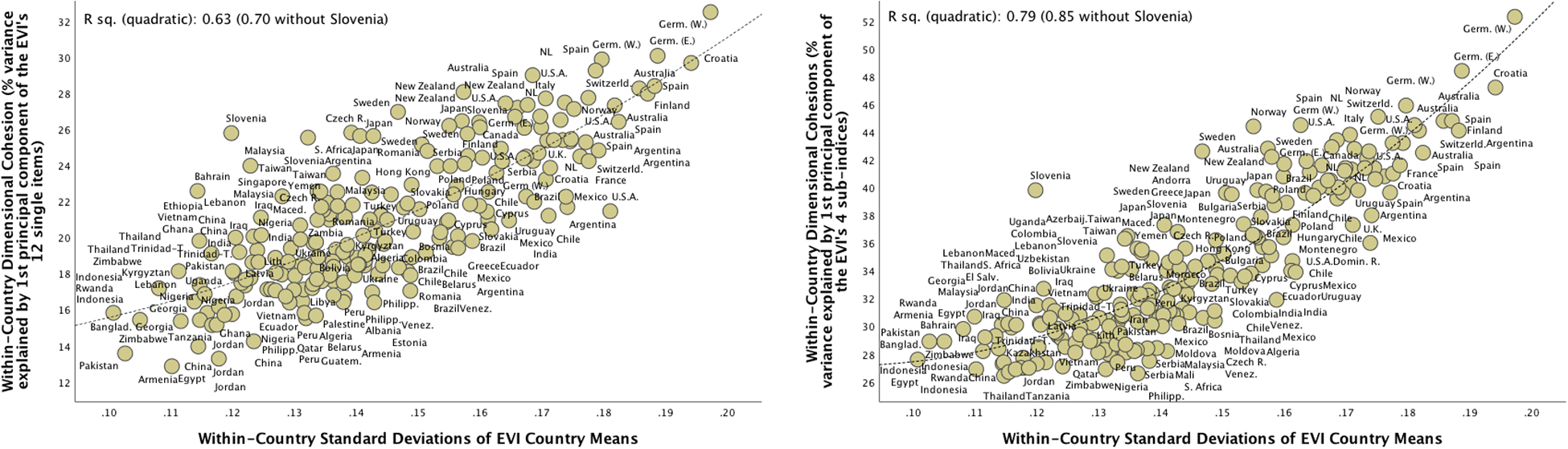

Figure 4 illustrates that small mean deviations in countries with more extreme means indeed enforce low factor loadings. As mentioned, this enforcement follows from the math of closed scales. And since mathematical principles do not vary by culture, the variability in within-group factor loadings that derives from these principles embodies no information whatsoever on encultured differences in the respondents’ semantic understanding of the involved items. Arguing otherwise is to confuse matrix equivalence with semantic equivalence. The only thing we learn from non-invariance across within-country factor loadings is that the sample means differ widely across countries. Such group mean disparity embodies nothing suspicious; it is actually a testimony of culture’s power to diversify human mentalities.

Figure 4. The link between the standard deviations of EVI country means and their dimensional cohesions within countries.

Self-confirming Circularity

One way to liberate invariance tests from the influence of group mean disparity is to reduce the very disparity in group means, which is obviously a matter of scale development. The challenge then is to design psychological constructs in such a fashion that they exhibit largely similar group means, preferably centering on the scale midpoint. Interestingly, the idea that psychological measures should vary widely among individuals within groups but not so much (or not at all) between groups was one of the drivers of MGCFA’s rise to prominence. The discussion of culture bias in IQ tests is exemplary. Indeed, scholars argued that, unless one believes in a racial theory of intelligence, ethnic or national group means in IQ test scores must be largely similar. The fact that they are not, accordingly, disqualifies the group mean differences as an artifact of culture bias in the measurement (van de Vijver 2004; van de Vijver and Poortinga 2004). Clearly, this position openly idealizes psychological measures that exhibit sizable and roughly the same variation within each group of comparison but little variation between groups.

The MGCFA test methodology is implicitly tailored to this ideal and therefore doomed to fail under strongly disparate group means. From such failure, scholars then infer the invalidity of the group mean disparity, which seemingly vindicates the initially implicit discomfort with group mean differences. In other words, the MGCFA methodology is designed in such a way that its results always verify the premise that the method pretends to test in an open-ended manner. The inbuilt circularity of this logic makes the initial premise invulnerable to the methodology that is supposed to test it, which is as good as stipulating from the outset that group mean disparity is erroneous by definition.

Against this unspoken axiom, we hold that whenever psychological variables sensibly capture responses in prevalent mentalities to other group-level realities, such as prevailing existential conditions, disparity in group means is to be expected rather than suspected. When these disparities indeed map in corresponding fashion onto differences in other group-level characteristics, this does not prove bias in the measurement but testifies to nomological validity. For this reason, meaningful group-level correlations provide an indispensable tool of measurement validation.

As mentioned, to keep MGCFA invariance statistics conclusive, one needs to design psychological constructs in such a manner that they show only modest group-level differences. From the viewpoint of psychology, with its primary interest in individual-level variance, such psychological constructs might be desirable. From the viewpoint of sociology, this is profoundly different. As a science of society, sociology’s interest is oriented toward culturally inherited group-level differences that exhibit lasting variation in the aggregate. This includes variation in the aggregate distribution of individuals’ psychological orientations, which is a manifestation of differences in prevalent mentalities. Therefore, sociologists are interested in culture mostly insofar as it diversifies prevalence patterns in collective mentalities. In other words, the psychological premium on constructs with modest group-level differences conflicts with sociology’s epistemological orientation. Sociologists would, hence, be ill-advised to calibrate their measurement instruments in such fashion that the resulting between-/within-group variance partitions meet the requirements under which MGCFA tests indicate “sufficient” invariance.

Mistaking Non-invariance for In-equivalence

Some advocates of MGCFA have dropped the premise that invariance in item connections is sufficient to infer equivalence in meaning, that is, in how people understand survey items (Boehnke et al. 2014; Meitinger 2017). Yet, the consensus remains that invariance is at least necessary to infer equivalence in understandings. In line with this axiom, scholars continue to treat statistical non-invariance as an infallible sign of semantic in-equivalence. Due to this premise, non-invariance disqualifies differences in group means as an artifact of culturally induced biases in people’s item comprehension.

The premise is questionable, however. The reason is that group mean disparity enforces, as mentioned, non-invariance due to the mathematical rules of closed-ended scales. Since mathematical rules are culture-unspecific, the non-invariance that these rules enforce has nothing to do with culture-biased differences in people’s understanding of survey items. Therefore, non-invariance does not say anything about how truthful the group mean differences are from which this very non-invariance itself derives. In short, non-invariance in statistical terms does not imply in-equivalence in semantic terms.

Regardless of non-invariance, group mean differences may or may not reflect “true” differences in item approval related to groups. Validity in this sense can be inferred conclusively only from qualitative in-depth interviews that explore the semantic structure of respondents’ item understanding. For cost reasons, this is often no practical option in mass surveys. The best alternative is a nomological network of theoretically expected relationships that reveals how tightly and meaningfully differences in group means map on other group-level differences of significance.

In OA Section 3 (which can be found at http://smr.sagepub.com/supplemental/), we present evidence showing that multi-item constructs can pass nomological tests formidably, despite well-documented non-invariance. In such cases, non-invariance is obviously inconsequential for a construct’s cross-cultural functioning. The reason for this inconsequentiality is the inconclusiveness of non-invariance as a sign of measurement in-equivalence under disparate group means.

Incomparability Reformulated

MGCFA relies on group-wise inter-item covariance matrices. Extreme group means on the related item scales enforce small item variances, which in turn impose small inter-item covariances. This is inevitable due to the covariance formula (see Endnote 10). With the concept of correlation, whose formula includes the standard deviation, this might be different, at first glance. Although extreme group means also enforce small standard deviations, inter-item correlations can still be high, if the group mean deviations of the related items are just sufficiently consistent with each other in direction and scope. This raises the question of whether using correlation instead of covariance matrices offers a solution to MGCFA’s problem with group mean disparity.
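For reference, the two statistics contrasted here can be written in standard textbook notation, which may differ from the notation of the endnote referred to above. The symbols $x_i$ and $y_i$ stand for the responses of respondent $i$ to two items within one group of $n$ respondents.

```latex
% x_i, y_i: responses of respondent i to two items within one group (n respondents)
\begin{align}
s_{xy} &= \frac{1}{n-1}\sum_{i=1}^{n}(x_i-\bar{x})(y_i-\bar{y}), \\
r_{xy} &= \frac{s_{xy}}{s_x\,s_y}, \qquad
s_x = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}(x_i-\bar{x})^2}.
\end{align}
```

The covariance $s_{xy}$ is built directly from the group mean deviations and therefore shrinks with them. The correlation $r_{xy}$ divides by the standard deviations, which shrink as well, so it is not small by construction; it depends on how consistently the remaining small deviations line up.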

Unfortunately, group-wise inter-item correlations are just as sensitive to group mean disparity. For ease of understanding, assume that an overall construct is measured on a continuous but closed-ended multi-item scale, ranging from minimum 0 to maximum 1.0 (as in Figure 5), with decimal fractions indicating intermediate positions. The closer a group mean is to either end of this scale, the narrower is the margin within which the individuals’ deviations from the mean vary. This is inevitable because extreme group means can only exist when most individuals are unified in their joint rejection or approval of the statements phrased in the related set of items. Consequently, in order to generate high correlations and high (correlation-based) factor loadings among given items, the mean deviations of the item responses must be mutually consistent within a narrower margin when the group mean is more extreme. In fact, as Figure 5 illustrates, the width of the margin within which mean deviations must be consistent to produce high correlations and factor loadings is precisely two times the group mean’s distance to its closest scale end (presuming a non-skewed distribution). Thus, the consistency margin is 0 for a hypothetical group mean of 0 or 1.0, 0.20 for a group mean of 0.10 or 0.90, but 1.0 (i.e., the entire scale range) for a group mean of 0.50. Since mean deviations within a narrower margin are more likely the result of measurement imprecision, it is harder to find strong consistency within narrower margins. Consequently, the possibility that correlations can be high even under extreme group means is largely hypothetical and unlikely in practice.
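The margin rule stated above is simple enough to write down as a small function. The sketch below is an illustration only; the function name `consistency_margin` and its parameters are hypothetical, and the symmetric band presumes a non-skewed distribution, as in the text.

```python
def consistency_margin(group_mean, scale_min=0.0, scale_max=1.0):
    """Width of the symmetric band around the group mean that fits on the scale.

    Equals twice the distance from the group mean to its closest scale end,
    presuming a non-skewed distribution.
    """
    if not scale_min <= group_mean <= scale_max:
        raise ValueError("group mean must lie on the scale")
    return 2.0 * min(group_mean - scale_min, scale_max - group_mean)


# Reproduce the group means discussed in the text on the 0-to-1 scale.
for m in (0.0, 0.10, 0.50, 0.90, 1.0):
    print(f"group mean {m:.2f} -> margin {consistency_margin(m):.2f}")
```

The same function accepts other scale bounds, for instance `consistency_margin(2, 1, 10)` for a group mean of 2 on a scale from 1 to 10.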

Figure 5. Margins within which item-wise mean deviations are allowed to co-vary depending on country means.

It follows that the requirements to find high correlations and factor loadings increase steeply in strictness with the group means’ distance from the scale midpoint. Whether we use covariance or correlation matrices, group-centered factor analyses turn in either case into an entirely different game depending on the scale position of the group mean. This insight unmasks a lopsidedness in MGCFA’s comparability logic: While MGCFA presumes that differences between group-centered factor solutions render group means incomparable, the truth is that differences in group means render group-centered factor solutions incomparable.

This whole issue reveals a deeper misunderstanding of the concept of correlation itself. The standard interpretation of low inter-item correlations in a group is that the item positions of the typical respondent in this group are inconsistent. But when the group mean is extreme, the inconsistency verdict derives from an extreme standard. Measured against an extreme standard, the respondents’ item positions easily appear inconsistent, when in fact they reveal themselves as pretty consistent, if one just holds them against the only standard that is the same for all groups—the global mean, that is.

Another look at Figure 2 illuminates the issue. As is obvious, item positions that exhibit small and inconsistent deviations from an extreme group mean show large and consistent deviations from the global mean, assuming the global mean is somewhere close to the scale midpoint.
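The contrast between the two reference points can be made concrete with a toy calculation. The sketch below uses synthetic responses, not survey data; the global mean of 0.5 and the response band from 0.0 to 0.2 are assumptions, and the two items are drawn independently of each other so that any within-group consistency is absent by construction.

```python
import random


def mean(xs):
    return sum(xs) / len(xs)


def correlation(xs, ys):
    """Pearson correlation computed from deviations around the group means."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(7)
GLOBAL_MEAN = 0.5  # assumption: global mean close to the scale midpoint

# Hypothetical consensual group: every answer lies between 0.0 and 0.2.
item_a = [rng.uniform(0.0, 0.2) for _ in range(2000)]
item_b = [rng.uniform(0.0, 0.2) for _ in range(2000)]

within_group_r = correlation(item_a, item_b)

group_mean_a, group_mean_b = mean(item_a), mean(item_b)
mean_abs_dev_group = mean([abs(a - group_mean_a) for a in item_a])
mean_abs_dev_global = mean([abs(a - GLOBAL_MEAN) for a in item_a])

# Share of respondents whose two items deviate in the same direction,
# first from the group means, then from the global mean.
same_sign_group = mean(
    [(a - group_mean_a) * (b - group_mean_b) > 0 for a, b in zip(item_a, item_b)]
)
same_sign_global = mean(
    [(a - GLOBAL_MEAN) * (b - GLOBAL_MEAN) > 0 for a, b in zip(item_a, item_b)]
)

print(within_group_r, mean_abs_dev_group, mean_abs_dev_global)
print(same_sign_group, same_sign_global)
```

Measured against the group mean, the deviations are tiny and agree in direction only about half of the time. Measured against the assumed global mean, the same responses deviate strongly and in the same direction for every respondent.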

Extremity as Consent

One can see extreme group means as a problem of scale development in the sense that items are not sufficiently sharp to exhibit a group’s true diversity. Alternatively, extreme group means might reveal something profoundly real: that some groups share a widespread cultural consent on important moral questions (Blaydes and Grimmer 2020).

A good case in point relates to WVS questions included in the “choice” component of the EVI. These questions address the acceptability of homosexuality, abortion and divorce. The WVS asks respondents to indicate their position on each of these reproductive freedoms on a scale from 1 (“never justifiable”) to 10 (“always justifiable”). Strikingly, in several Muslim-majority countries, up to 90 percent of the respondents chose positions 1, 2, or 3 on each of the item scales (Norris and Inglehart 2003). In so doing, these people express a strong and consistent rejection of reproductive freedoms. The sample means on the three items and on the overall reproductive freedom index center, accordingly, on a score of 2 (which on a scale from 1 to 10 is an extreme group position). Sample mean deviations in individual item responses vary mostly within the narrow range from −1 (for respondents choosing scale position 1) through 0 (for scale position 2) to +1 (for scale position 3), with most respondents clustering on a deviation score of 0, showing actually no deviation from the group mean.

Sample mean deviations of such a tiny scope fall into the margin of measurement imprecision, which infuses a sizable amount of random error into the within-group variation. Small and mostly random sample mean deviations inevitably yield low covariances, correlations, and factor loadings, which scholars routinely interpret as an indication of inconsistency in item response and lack of understanding of the items’ thematic connectedness.

Yet, this group-centered perspective is deceptive because it ignores the mathematical implications of extreme group means, which actually result from unusually consistent (i.e., consistently dismissive or appreciative) item responses. Indeed, the calculus of group-centered covariances, correlations, and factor loadings obscures the unusual consistency in individual-level item responses. At least this holds true in all cases of culturally consensual samples that lack a large enough contrast group with consistent item responses in the opposite direction (which, if present, would move the group mean toward a more moderate scale position).

Consequently, small covariances, correlations, and factor loadings indicate inconsistency in item response only among samples with moderate group means. In samples with extreme means, by contrast, the exact same statistics indicate something else: a high degree of cultural consent among people who mostly respond in a similarly extreme manner to certain moral questions, which is the exact opposite of inconsistency. When covariances, correlations, and factor loadings indicate different things, depending on the scale position of group means, these statistics are strictly speaking not comparable across groups with different means.

Besides, among 81 countries covered by the WVS, cross-national differences in poverty versus prosperity, autocracy versus democracy, and religiosity versus secularism explain fully 82 percent of the cross-national variation in people’s rejection versus approval of reproductive freedoms (Alexander et al. 2015:23). In our eyes, this suggests that extreme group means in the rejection versus approval of reproductive freedoms reflect a true response in prevalent mentalities to correspondingly extreme societal environments, which vary on a continuum of restrictive versus permissive economic, political, and cultural conditions. Therefore, extreme group means express a reality that we wish to see as it is, rather than making it disappear through the development of measurement schemes that “construct” the same variance pattern in each group—only for the sake of invariance.

At any rate, given the incomparability of group-wise covariances, correlations, and factor loadings, group-to-group non-invariance in these statistics is an inconclusive statistical marker under large group mean disparity. Because of this inconclusiveness, non-invariance is inconsequential for the cross-cultural functioning of multi-item constructs in nomological terms.

The analyses in OA Section 3 (which can be found at http://smr.sagepub.com/supplemental/) demonstrate this point empirically. To do so, we use the EVI as an example, testing its explanatory performance in a nomological framework of theoretically expected relationships. As the findings show, well-documented non-invariance in the EVI’s within-group item connections provides no source of disturbance, either in a suppressor or in a moderator fashion, that would compromise the index’s explanatory power over other variables of foremost interest.

To repeat, indications of statistical non-invariance in a construct’s item connections are inconclusive of semantic in-equivalence in item comprehension and inconsequential for a construct’s cross-cultural functioning in nomological terms. These insights place a question mark behind the high inferential value that MGCFA advocates attribute to non-invariance markers.

Conclusion

The takeaway is that MGCFA invariance tests are tailored to a particular between-/within-group variance partition. Indeed, these tests are designed to exhibit sufficient “measurement invariance” only in configurations in which between-group differences in means are limited to the middle ranges of closed-ended scales. By contrast, great disparity in group means dooms MGCFA invariance tests to fail because of the brute mathematical principle by which group mean disparity enforces between-group variability in within-group item connectivity. For this reason, the conclusiveness of MGCFA invariance tests as a weapon of measurement invalidation declines in direct proportion to the disparity in group means. Hence, the methodological absolutism that surfaces at times when MGCFA proponents treat incidences of statistical non-invariance as an infallible sign of culture-biased measurement in-equivalence is to be rejected.

To what extent group mean disparities in psychological constructs are real cannot be inferred from markers of non-invariance when this non-invariance is itself enforced by the very group mean disparities it is supposed to invalidate. The truthfulness of group mean disparity in psychological constructs hinges, instead, on a substantive question, not a mathematical one: To what extent do these disparities reflect a positional response in prevalent mentalities to other group-level differences of existential quality, such as differences in living standards, cultural norms, and political orders? The answer to this question resides in meaningful nomological correlations at the group level. To examine the micro-foundations of these macro-correlations, multilevel models are useful as a next step. These models can illuminate the role of group means in psychological variables as a source of normative group pressures on other individual-level orientations and behaviors.

In light of our findings, we advise scholars who wish to stick to a latent variable approach to give up comparing group-wise dimensional statistics in cases in which group means are so disparate that a substantial number of them reach into the floor and ceiling sections of a closed-ended scale. Instead, to establish that the constituent items of a construct are mono-dimensional, we advise running a single holistic CFA over the pooled data, using a two-level design to examine correspondence in dimensionality simultaneously at the group and individual levels, as demonstrated by Duelmer, Inglehart, and Welzel (2015:77). Such a design models the between-/within-group variance partitions in the data. To further validate the measures in a nomological sense, one can expand the measurement model by a structural model that includes supposed predictors of the latent construct at both the individual and group levels (Duelmer et al. 2015:84).
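The variance partition that a two-level design models can be illustrated with a few lines of arithmetic. The sketch below is not a two-level CFA and does not reproduce the procedure of Duelmer et al.; it only splits the pooled covariance of two items into its within-group and between-group parts, using a hypothetical helper `partition_covariance` and made-up toy responses on a scale from 1 to 10.

```python
def mean(xs):
    return sum(xs) / len(xs)


def cov(xs, ys):
    """Population covariance (division by N)."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)


def partition_covariance(groups):
    """Split the pooled covariance of two items into within and between parts.

    `groups` maps a group label to a list of (item_a, item_b) pairs.
    With population formulas the identity total = within + between is exact.
    """
    pooled = [pair for pairs in groups.values() for pair in pairs]
    n = len(pooled)
    all_a = [a for a, _ in pooled]
    all_b = [b for _, b in pooled]
    total = cov(all_a, all_b)

    grand_a, grand_b = mean(all_a), mean(all_b)
    within = between = 0.0
    for pairs in groups.values():
        a = [p[0] for p in pairs]
        b = [p[1] for p in pairs]
        weight = len(pairs) / n
        within += weight * cov(a, b)
        between += weight * (mean(a) - grand_a) * (mean(b) - grand_b)
    return total, within, between


# Hypothetical toy data: one group near the floor, one near the ceiling,
# with no item consistency inside either group.
toy = {
    "floor": [(1, 2), (2, 1), (1, 1), (2, 2)],
    "ceiling": [(9, 10), (10, 9), (9, 9), (10, 10)],
}
total, within, between = partition_covariance(toy)
print(total, within, between)
```

In this toy case the within-group covariance is zero in both groups, yet the pooled covariance is large because all of it sits in the between-group component. A pooled analysis sees the consistency that group-wise analyses cannot see.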

However, there is no necessity to stick slavishly to a mono-dimensional approach in index construction. Constructs do not have to be mono-dimensional to be meaningful theoretically; nor do they have to be mono-dimensional to be consequential empirically. Instead, conceptually thoughtful work can create constructs whose constituents represent more than one dimension, yet exactly because of that complement each other in forming a meaningful higher-ordered construct with important consequences.

Formative constructs of this sort follow a combinatory logic that escapes the reflective logic of MGCFA. It is, hence, a gross malpractice to use MGCFA as a “weapon of destruction” against formative constructs. With formative constructs, empirical tests of index quality should consider the following three issues: (a) examining the degree to which similar scores on the overall construct map in similar fashion on the construct’s expected correlates, which is basically a nomological network analysis; (b) examining whether the overall construct performs better in this respect than each of its components; and (c) examining to what extent variability in within-group inter-item connections operates as a source of disturbance that either suppresses or moderates the construct’s linkages to its expected correlates.

If (a) and (b) are tested positively and (c) is tested negatively, the respective construct meets the Welzel–Inglehart “compositional substitutability” criterion and is valid in this sense. If so, differing inward connections of a construct by group represent compositional substitutes that sustain equivalence in the construct’s outward functioning.
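As a rough illustration of the second of these tests, the comparison of an overall construct with its components, consider the following sketch. Everything in it is hypothetical: the number of groups, the two components, and the correlate are synthetic, and the first and third tests would require further analyses that are not shown.

```python
import random


def mean(xs):
    return sum(xs) / len(xs)


def correlation(xs, ys):
    """Pearson correlation."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(11)
n_groups = 60  # hypothetical number of countries

# Two components drawn independently of each other, so the construct
# is deliberately not mono-dimensional ...
comp_1 = [rng.uniform(0, 1) for _ in range(n_groups)]
comp_2 = [rng.uniform(0, 1) for _ in range(n_groups)]
# ... but they jointly shape a hypothetical group-level correlate.
correlate = [c1 + c2 + rng.gauss(0, 0.1) for c1, c2 in zip(comp_1, comp_2)]

# Formative index: the average of the two components.
index = [(c1 + c2) / 2 for c1, c2 in zip(comp_1, comp_2)]

r_index = correlation(index, correlate)
r_components = [correlation(comp_1, correlate), correlation(comp_2, correlate)]
index_outperforms_components = r_index > max(r_components)
print(r_index, r_components, index_outperforms_components)
```

In this synthetic setting the index relates more closely to the correlate than either component does, even though the components are unrelated to each other, which is the combinatory logic that a formative construct relies on.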

To conclude, we classify non-invariance as an overstated problem with misconceived causes. It is overstated because the presence of non-invariance might be inconsequential for a construct’s cross-cultural performance in nomological terms. And the causes are misconceived since non-invariance does not necessarily result from cross-cultural differences in item understanding when group mean disparity enforces its presence by the mathematical rules of closed-ended scales.

Supplemental Material


Supplemental Material, sj-pdf-1-smr-10.1177_0049124121995521 for Non-invariance? An Overstated Problem With Misconceived Causes by Christian Welzel, Lennart Brunkert, Stefan Kruse and Ronald F. Inglehart in Sociological Methods & Research

Footnotes

Acknowledgments

Because the substance of our contribution is fundamentally critical of the methodological mainstream in the field of measurement equivalence, we are especially grateful to the editor of SMR and the anonymous reviewers for their instructive comments and for letting us pass to publication. We also thank the following colleagues for their invaluable comments on earlier versions of this paper and their encouragement: Plamen Akalyiski, Sjoerd Beugelsdijk, Klaus Boehnke, Michael H. Bond, Hermann Duelmer, Vera Lomazzi, Bert Meulemann, Michael (Misho) Minkov, Eduard (Ed) Ponarin, and Boris Sokolov. Any remaining shortcomings fall exclusively into our own responsibility.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Significant parts of this study have been funded by the Russian Academic Excellence Project ‘5-100’.

Notes

References
