Abstract

Generative artificial intelligence (AI) is reshaping technical communication, necessitating strategies to assess its impact. This article introduces a framework combining human-in-the-loop automation with a task-based approach for communication roles. Effective AI integration requires identifying and organizing key writing tasks to fit into automated workflows. The framework underscores the value of writing expertise and offers practical guidance for practitioners, scholars, and educators. By aligning AI tools with technical communication tasks, professionals can produce accurate and complex communication products. This approach highlights the essential role of human expertise in effective, AI-assisted writing.

Keywords

Introduction

Generative artificial intelligence (GenAI) is poised to have a dramatic impact on the field of technical communication. Technical communication, both as a field of scholarship and as a field of practice, is experiencing significant shifts due to the proliferation of GenAI tools. Carradini (2024) calls this “an era in which the world of work is rapidly transforming,” an era in which we are dealing with both opportunities and threats that need careful consideration (p. 7). As has been the case with the advent of any new communication technology, disruption is inevitable. To give just two brief examples: many universities are in crisis as they seek to understand what plagiarism means when any student can ask a generative pretrained transformer (GPT) to assist or even write their assignments based on a prompt. At the same time, many technical writers are struggling to understand their role in organizations that are deploying AI as a means to complete mundane or repetitive tasks in order to cut costs and improve productivity, without fully understanding the inherent risks.

In the middle of this crisis, we (two researchers and teachers of technical communication and one technical communication practitioner) seek to provide a framework for understanding the possibilities at the intersections of GenAI, technical communication, and writing. As a central activity within both academia and industry, writing remains one of the most labor-intensive processes that technical communication practitioners, researchers, and teachers engage in. The genres of writing we produce as a field vary widely, but include syllabi, pages within learning management systems, academic articles, technical documentation, grants, company web pages, structured content repositories, and many more. What has unified all these genres in the past is that one or more human beings had to brainstorm, draft, revise, edit, and deliver all the text within them.

The growing body of research at the intersection of AI and technical writing shows that there is deep interest in how AI has led to a wide range of challenges and questions that need to be addressed. While much of the existing research has explored the theoretical and ethical landscape, there are few examples of technical communication scholarship that focuses explicitly on actionable, practitioner-oriented advice. We seek here to present an actionable guide in the form of heuristics that technical communication professionals can rely on when using AI to engage in a writing task. Specifically, these heuristics are guides for deciding what tasks are appropriate for an AI tool to produce, how to effectively prompt the AI tool, how to evaluate AI outputs, and how to automate and scale tasks. We want to emphasize that GenAI is simply a very complex apparatus that still needs a human pilot. Below we begin our article by exploring what we currently know about this new technology and how it can assist with writing within the field of technical communication. From there, we introduce our framework, explore how it can be used at each stage of the writing process, and then discuss the limitations of the framework before concluding with our predictions on the future of writing with GenAI.

While existing scholarship has significantly discussed theoretical implications of AI, few studies provide a structured, actionable framework that practitioners can immediately apply in real-world technical communication workflows. Our human-in-the-loop (HITL) model differs from general AI literacy frameworks (e.g., Cardon et al., 2023) and prompt engineering strategies (e.g., Ranade et al., 2024) by emphasizing a structured, decision-making approach to integrating AI into writing processes, rather than focusing solely on prompting techniques or literacy concerns. By grounding our heuristics in real-world writing tasks, we offer a practical guide for technical communicators navigating AI adoption in both industry and academia.

Literature Review: What We Know About GenAI and Writing in Technical Communication

As scholarship on AI emerges in our field, one main question arises: what do we currently know about GenAI and how it can be used for writing? Although literature on this topic is still developing—there simply hasn’t been enough time to generate a large amount of rigorous, peer-reviewed research—we already have a variety of articles that have investigated the relevance, credibility, and pitfalls of GenAI for writing. Johnson-Eilola et al. (2024) analyzed the ability of ChatGPT to generate effective instructions, concluding that though they “remain skeptical that current and near-future generative AI will replace technical and professional communicators in tasks such as instruction sets,” they “do see value in their use as part of a skilled practice—in the same way that we now rely on a host of production technologies to improve our workflows” (p. 11). Echoing this claim, Verhulsdonck et al. (2021) argue “AI creates new TPC practices due to the use of AI-driven tools to develop content and new ways of addressing users through chatbots. AI toolkits now can help us augment our thinking… to learn what users are doing or saying online–and also let us develop content for chatbots” (p. 486). These sentiments have been echoed by several other scholars (Cardon et al., 2023; Darwin et al., 2023; Morrison, 2023; Tang, 2021). The consensus thus far is that AI is introducing new occasions for writing, new workflows for writing, and new methods of replicating existing tasks.

Concerning the relationship between the workplace and education, we are also seeing innovation as technical communication researchers and teachers explore new territory. Carradini (2024), in his editor's introduction to his special issue on the Effects of Artificial Intelligence Tools in Technical Communication Pedagogy, Practice, and Research argues that “[a]s workplaces take up these new tools, TPC educators inside and outside university settings may need to respond to and train students on these tools in order to prepare them for a changing workplace” (p. 6). Perhaps most pertinent to our current endeavor, Gallagher and Wagner (2024) systematically assessed different writing tasks and whether or not GenAI is seen as honest in the context of academic dishonesty. They found that “[w]hen compared to human collaborators, AI agents of collaboration are perceived as more academically dishonest for both writing instructors and students when the AI

From the private sector, a variety of companies have produced ebooks, blog posts, and white papers regarding the impact of AI on their particular workflows. In a representative piece, the content governance software company Acrolinx has developed an ebook called Robots Are Terrible Writers: Strategies to Elevate Content and Eliminate Risk in the Age of AI that “offer[s] insights into the dynamic interplay between human expertise and AI efficiency” (Acrolinx, 2024, p. 2). Such efficiency, they claim, “is particularly crucial in the technology sector where complex subject matter is the norm, and regulated industries like financial services, where precision and compliance are paramount” (Acrolinx, 2024, p. 2). This concern not only echoes the findings of Gallagher and Wagner (2024) on the trustworthiness of AI-enabled writing but is also mirrored by deliverables from a variety of other organizations and individuals on the practitioner side (Iroegbu, 2024; Jones, 2023; Snowflake, 2023; Tuner, 2024). Within the private sector, many companies are assessing the trustworthiness of AI and its appropriateness and efficacy in a variety of writing tasks.

In addition, AI will likely have specific impacts on the subfields that technical communication is connected to, including technical writing, technical editing, content strategy, instructional design, and user experience (Chng, 2023; Lew & Schumacher, 2020; Soe, 2021; Stige et al., 2023; Tang, 2021; Verhulsdonck et al., 2021; Zhu, 2022). These impacts are beyond the scope of the current article but bear exploration in future work. More than likely, the very role of technical communicator is being challenged in substantive ways by AI. At a deeper level, AI will impact the ways members of our field solve complex communication problems, such as how to govern online platforms (Katzenbach, 2021), how to analyze and visualize data (DeJeu, 2024), and even how we experience daily life, including engaging in such common activities as exploring our immediate environment (Duin & Pedersen, 2023). At a larger scale, world governments are just beginning to consider policies for how we will regulate AI's impact on the technical systems and infrastructure that enable our societies to function (European Commission, n.d.).

Perhaps most pertinent to our current argument, in their introduction to the recent special issue of [t]hough the idea of an automaton dates back at least as far as ancient Greece… The tropes of runaway monsters, helper robots and killer robots are ways to conceptualize our initial reactions to GAI tools, such as ChatGPT. Yet GAI tools are not robots, do not follow any prime directives, nor are they intelligent. (at least not yet, despite their name)… Particular capabilities of GAI, put in place by that “beautiful math,” such as its ability to “chat” with us in a friendly and helpful tone, to summarize texts and teach us concepts, or to perform tedious tasks for us, call to mind the helpful and protective robot, Robbie, and align with a posthuman impulse to move beyond the traditional divisions between human and machine. (p. 360)

It is this figure of the helpful robot, junior writer (Kelly, 2024), or coauthor (Ossiannilsson, 2024) that underlies our argument. Our goal is to reflect on the ways GenAI can be helpful to writers in the field of technical communication and the ways that it falls short. We do this below by critically examining the ways that GenAI writes and the ways that human writers write, and then by providing heuristics for uniting these two workflows. Like Knowles (2024), who has used the rhetorical canons to discuss the ways GenAI can complement human rhetoric, we seek to provide a framework that begins with the practical challenges faced by technical communicators as they write.

How GenAI Writes: How We Got Here

One thing missing from conversations in technical communication regarding the impact of AI is a deep dive into the ways in which it produces text. Although such a deep dive is beyond the scope of this article, we explain below some key facets of GenAI that will help clarify why it is an effective tool for automating certain writing tasks. GenAI is a tool unlike any we have had before and this is because, following Al-Amin et al. (2024), it is actually a combination of decades of previous technologies, including AI, natural language processing (NLP), and machine learning (ML) (p. 2).

Early chatbots were limited by their inability to understand context and nuance and relied almost exclusively on the Markov chain, a statistical model for predicting random sequences (Al-Amin et al., 2024, p. 5). This was a problem of memory. The introduction of transformers significantly improved the contextual awareness of AI (Vaswani et al., 2017), laying the groundwork for modern language models. Without the ability to understand context, probability alone was insufficient to enable chatbots to create complex text responses that were nuanced and responsive to human prompts. This was the case until advances in ML enabled chatbots to digest enormous amounts of text, a process we now refer to as “training.” Training “involves exposing the model to an extensive corpus of textual data, enabling it to recognize language patterns and correlations” (Al-Amin et al., 2024, p. 3). Today's GPT models contain more than 200 billion parameters and are trained on far more text than any human could ingest in a lifetime. The training process comprises two parts: unsupervised training on “unlabeled text using language modeling to acquire linguistic capabilities” and “supervised fine-tuning on labeled data,” which helps limit hallucination (misleading or inaccurate responses) and reduce bias (Al-Amin et al., 2024, p. 16). Chatbots, at first simple probability technologies, can now be trained on large data corpora, fueling their ability to recognize and respond to context and thus heralding the era of the large language model (LLM), a program trained in pattern recognition via massive amounts of data.

Finally, NLP, the process by which chatbots use pattern-matching capabilities to better understand and respond to human writing patterns, has enabled chatbots to develop robust language skills. Using AI, ML, and NLP, modern chatbots such as ChatGPT and Bard can interpret questions from human users, generate several potential responses, gather evidence for those responses, parse patterns in potential answers, and apply probability to the output, all within a matter of seconds.

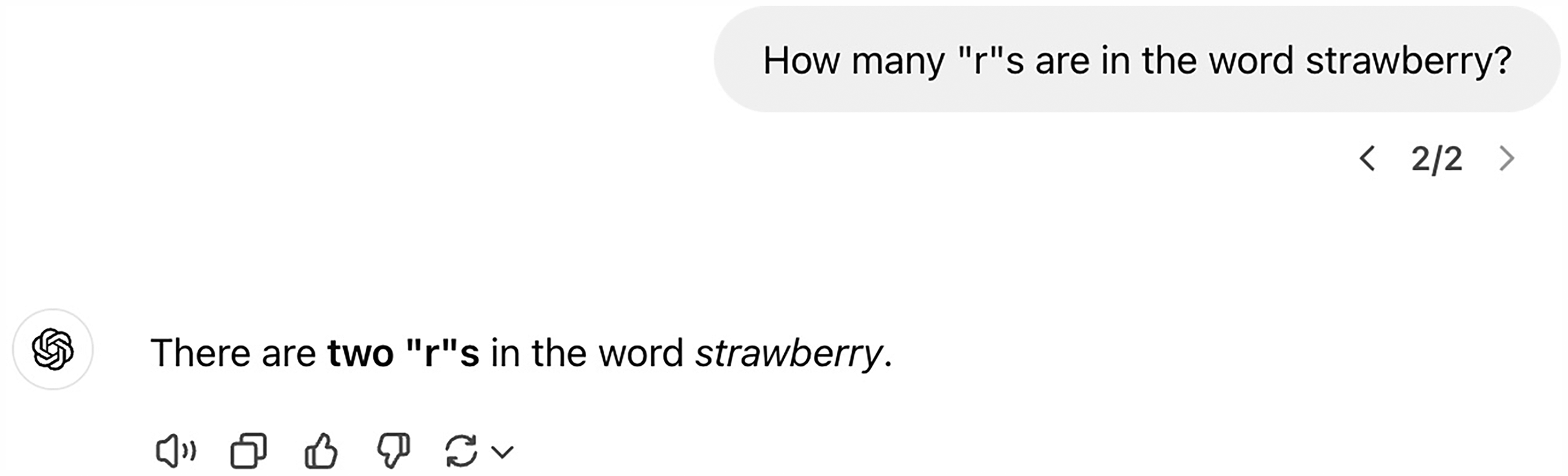

To summarize, the chatbots available to both teachers and practitioners of technical communication today are not so much generating wholly original writing as producing the most probable text based on a prompt. They are getting better and better at doing this all the time, however, meaning that they can now generate text that is very similar to what a human would generate if given the same prompt. They are also not without flaws. To date, ChatGPT, despite its vast capabilities, can’t correctly count how many “r”s are in the word “strawberry” due to the way it parses language tokens (see Figure 1).

Figure 1. An Example of a Hallucination.

These are hallucinations, defined as “a phenomenon wherein a Large Language Model (LLM)—often a generative AI chatbot or computer vision tool—perceives patterns or objects that are nonexistent or imperceptible to human observers, creating outputs that are nonsensical or altogether inaccurate” (IBM, 2023). Due to hallucinations, in addition to other pitfalls, we explain below that GenAI is best considered as a writing assistant that empowers technical communicators to be more efficient and to ease points of frustration within their writing process.
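The “strawberry” case can be made concrete with a short sketch. It contrasts ordinary code, which inspects every character, with a token-level view of the same word. The token split used here is hypothetical and chosen only for illustration; real tokenizers may divide the word differently.

```python
# Ordinary code sees every character, so counting letters is trivial.
word = "strawberry"
letter_count = word.count("r")

# Hypothetical token split, for illustration only; real tokenizers differ.
hypothetical_tokens = ["str", "aw", "berry"]

# A language model works with token identifiers rather than letters, so the
# per-token letter counts below are not directly visible to it.
per_token_counts = [token.count("r") for token in hypothetical_tokens]

print(letter_count)
print(per_token_counts)
```

Simple, checkable facts of this kind are exactly what a human reviewer can verify quickly before accepting an AI output.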

As Hicks et al. (2024) point out, it is better to consider GenAI as less concerned with truth and more with the production of language that imitates human speech, and thus writing. This means it is good at imitation and replication, which further means it can be a powerful ally in the difficulties of the writing process, but also that it still needs human supervision and validation. With these thoughts in mind, below we present the HITL model of automation, which is a key background theory for our framework for using AI as a writing assistant within technical communication.

The HITL Model of Automation

HITL has emerged as a promising framework in technical communication for approaching the use of GenAI. Conceiving of GenAI as a tool of automation suggests that the lessons of automation can be applied to the ways we think about writing. Full automation may call to mind a view of writing without human intervention, and in some specialized places automated reporting mechanisms may be useful. However, tasks are not simply categorized as automated or not. The HITL framework suggests a model in which GenAI assists writers. In an HITL approach to writing, human authors take responsibility for the final product. Knowles (2024) frames HITL writing as a baseline ethical standard for AI use when working with writing. He argues that authoring with GenAI tools can be laid out on a spectrum from purely human to fully synthetic. We contend that developing skills related to GenAI in writing tasks requires careful consideration in order to balance the kinds of work that are being automated with the human work that guides, shapes, evaluates, and implements the final products.

Knowles (2024) has argued that “HITL is a process by which the performance of an automated system is improved, or quality controlled through some intervention of a human agent, often at the beginning and end of an automated process” (p. 7). Knowles has used HITL as an example workflow for describing what he calls rhetorical load sharing, or “the process by which the rhetorical labor expenditure of writers is shared amongst collaborators” (p. 4). For Knowles, this description of the writing process is meant to “complicate what may otherwise appear to be a simple classificat[ion] of texts as either human-authored or synthetic” (p. 4). The key benefit of the HITL model is that it provides useful language for considering which aspects of the writing process are being automated and which aspects are the domain of the human. In technical writing, there are many facets of the writing process that are independent of textual production or textual refinement, and recognizing these facets helps explain effective and ethical uses of GenAI in the writing process.

As GenAI is adopted as part of writing workflows, it is essential to consider how existing models of writing accommodate the change or the ways they need to be adapted. Positioning writing as a product, process, or means of discovery leads to different implications when GenAI is introduced. The proliferation of tools that effectively generate, reorganize, refine, reword, and translate text challenges existing models, and scholars have begun to account for this change. For example, Jiang et al. (2024) consider the rhetorical canons (invention, arrangement, style, memory, and delivery), and they refer to the use of GenAI as “human-machine” or “human-AI” collaboration. Collaboration is a well-studied and intuitive way to describe writing, but its application to AI raises questions of agency. In human collaboration, coauthors contribute meaningfully and autonomously. By contrast, AI operates through statistical generation rather than independent decision-making. While “collaboration” is often used to describe AI-assisted writing, this framing risks overstating AI's role in authorship. Writers who work with GenAI should consider their own sense of ownership and whether collaboration is the right way to describe their work with AI tools.

Framing automation as collaboration implies that authors are relinquishing control. Collaboration requires autonomy, but GenAI operates entirely within the constraints of human input. The acceptance and use of synthetic output is an active decision made by human users. Lyons et al. (2021) theorized a distinction between automation and autonomy, a boundary that depends on the idea that the machine is either completing a routine task or “capable of making decisions independent of human control” (p. 3). They go on to define agency for a machine as “the capability and authority to act” (p. 5), a high level of participation in an activity. Collaboration suggests autonomy: that the AI is making substantial decisions independent of a user and without review. A true collaborator must be capable of making independent decisions, and for authors who give up control, collaboration may be an appropriate framing. Ultimately, though, the responsibility, ownership, and authority of the writing process in professional writing never rest with the software or tools. In technical writing, we would suggest that autonomy and control are in the hands of the human writers who initiate, evaluate, refine, and implement their writing products.

The HITL model is more consistent with the way information is developed, owned, and circulated. Following Kumar et al. (2024), such models constitute “collaborative approaches that involve both humans and machines working together to enhance the performance of ML algorithms” (p. 75737). HITL models attempt to provide AI with the guardrails it requires to accomplish tasks accurately and effectively while substituting human intelligence for tasks it struggles with.

As Wang (2023) has asked: What if, instead of thinking of automation as the

HITL models require skilled professionals to align their knowledge and practices within a complex network of activity. Rather than abdicating all tasks to AI, human professionals closely manage the AI as it accomplishes tasks in their service at levels of efficiency impossible for a human being.

In other words, it doesn’t matter who accomplishes which tasks, human or AI, as long as tasks are accomplished to human satisfaction. This breaks down a lot of the binaries human beings have created regarding AI. The HITL model frames AI as an augmentation tool, enhancing rather than replacing human capabilities. Just as writers recognize their own limitations, HITL accounts for AI's constraints while leveraging its strengths. The following section outlines a framework for effectively integrating GenAI into technical communication workflows.

How to Write With GenAI in Technical Communication: A Framework

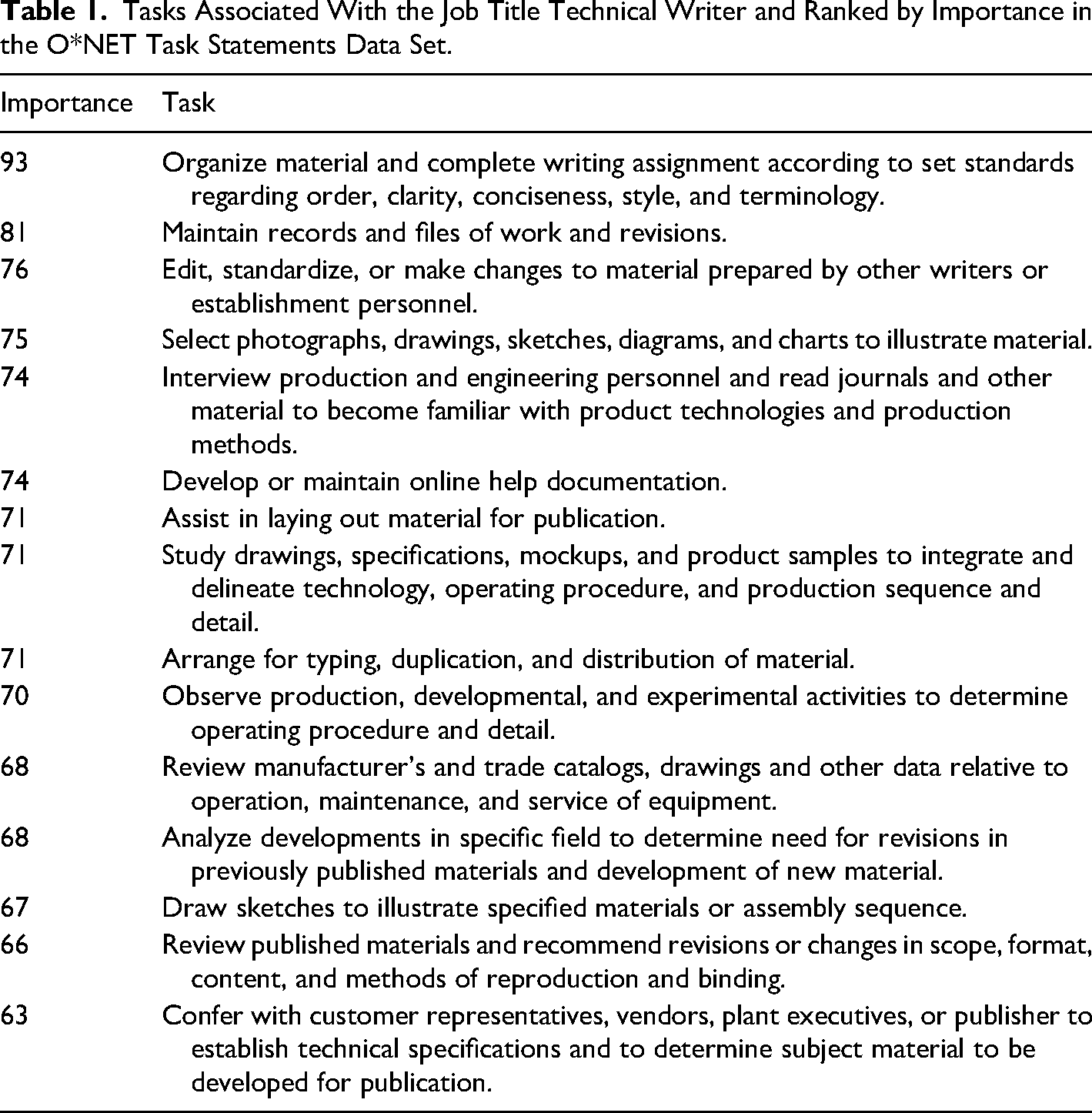

Writing tasks are notoriously difficult to nail down. Each written genre requires its own conventions, workflows, and forms of collaboration. However, since the tasks can be listed and described, they can also be organized and categorized. As one example of the various tasks technical communication practitioners engage in, consider O*NET, an online source sponsored by the U.S. Department of Labor/Employment and Training Administration. O*NET contains hundreds of standardized job descriptions across economic sectors, including task breakdowns for the job title Technical Writer (O*NET OnLine, n.d.; Table 1).

Table 1. Tasks Associated With the Job Title Technical Writer and Ranked by Importance in the O*NET Task Statements Data Set.

Similarly, the Bureau of Labor Statistics (BLS) Occupational Outlook Handbook has an entry on technical writers that lists duties instead of tasks, but the duties can also provide a reasonable framework for considering some of the tasks central to technical writing. The duties include:

- Determine the needs of users of technical documentation.
- Study product samples and talk with product designers and developers.
- Work with technical staff to make products and instructions easier to use.
- Write or revise supporting content for products.
- Edit material prepared by other writers or staff.
- Incorporate animation, graphs, illustrations, or photographs to increase users’ understanding of the material.
- Select appropriate medium, such as manuals or videos, for message or audience.
- Standardize content across platforms and media.
- Collect user feedback to update and improve content (Bureau of Labor Statistics, 2024).

These lists are, of course, in no way exhaustive and represent a high-level overview of only one specific manifestation of the field of technical communication, technical writing.

As mentioned earlier, and supported by a host of research articles too numerous to cite completely, the modern field of technical communication arguably contains a variety of subfields beyond technical writing, subfields such as technical editing, content strategy, instructional design, and user experience (Chng, 2023; Lew & Schumacher, 2020; Soe, 2021; Stige et al., 2023; Tang, 2021; Verhulsdonck et al., 2021; Zhu, 2022). Then there is the work of academic researchers and teachers of technical communication, who engage in their own variety of tasks. Suffice it to say that no single article can break down the tasks associated with each of these fields and explain how AI can be used to assist with them.

Thus, in our framework we narrow the scope of discussion significantly to those tasks which can be

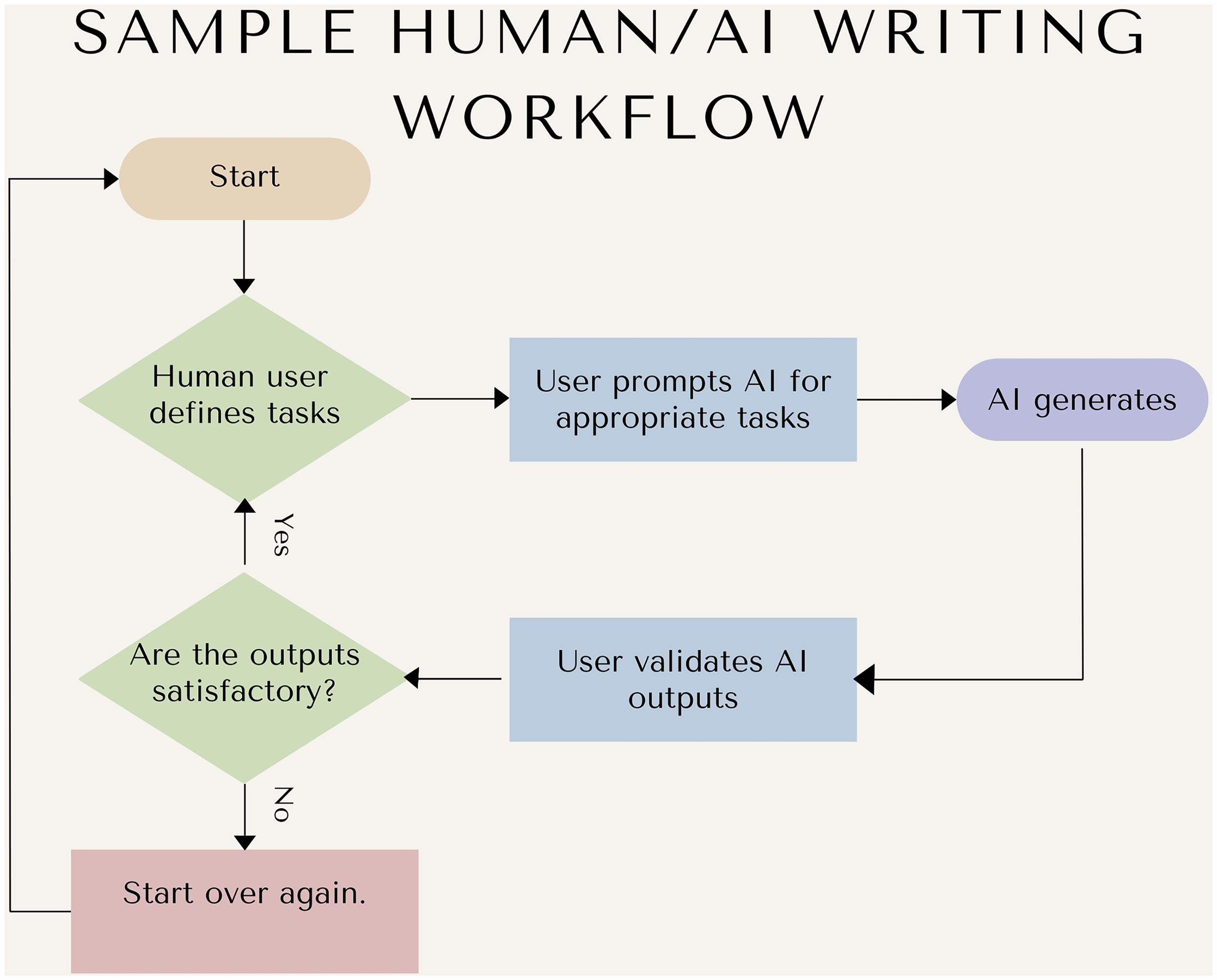

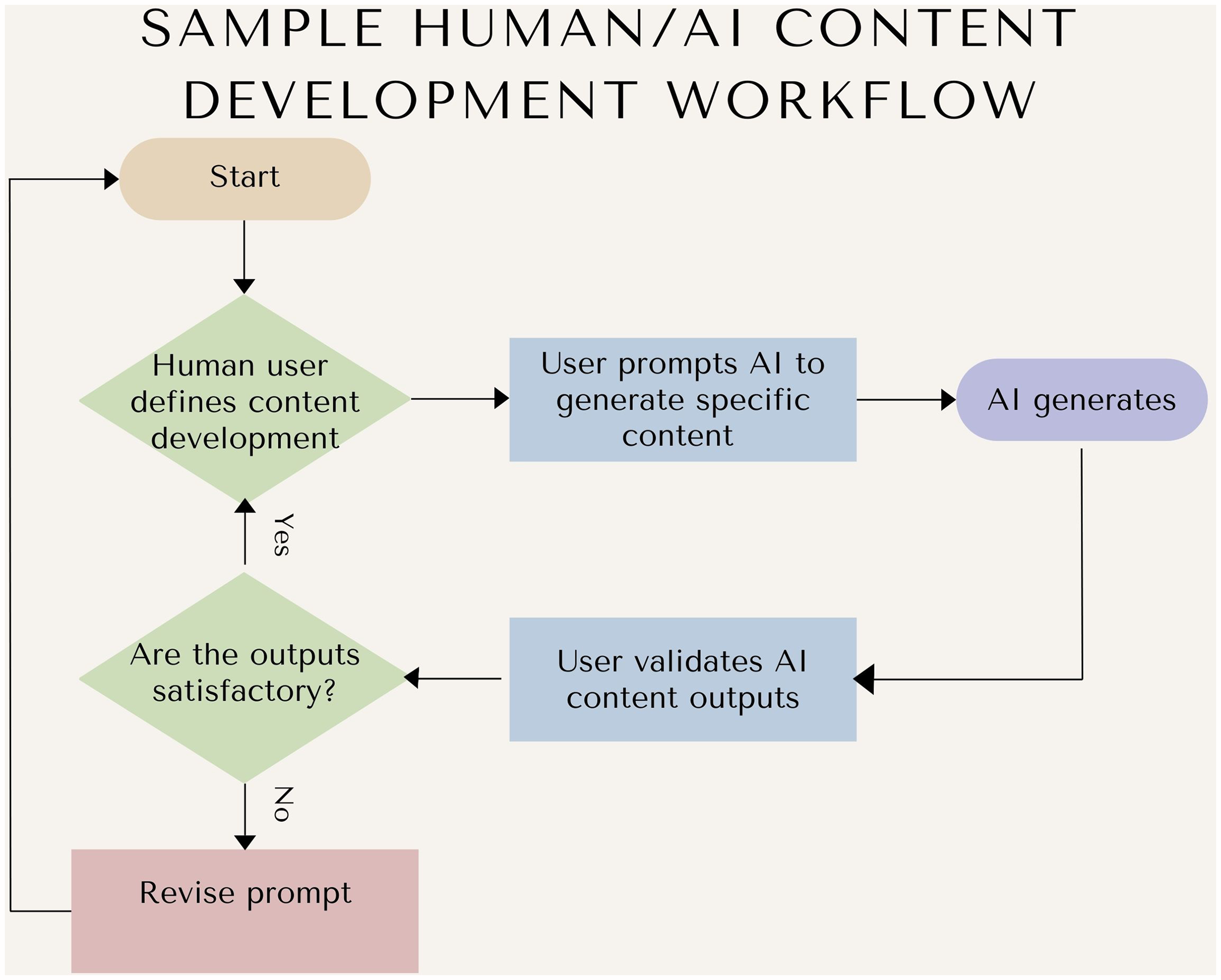

Figure 2. A Sample Writing Workflow that Incorporates AI.

This process, simplified in Figure 2 for the purposes of explanation, shows how HITL can be used to automate tasks that a human writer finds burdensome, because they are time-consuming, because the human doesn’t have sufficient expertise to complete them, or for any other reason. In this model, the AI tool generates outputs at the behest of the human user, who then incorporates these outputs into their existing workflow after first validating that they are appropriate.
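The generate, validate, and incorporate sequence described above can be sketched in a few lines of code. This is a minimal illustration rather than a definitive implementation: `generate_stub`, `stand_in_reviewer`, `hitl_step`, and `incorporate` are hypothetical names, the generator is a placeholder for a real GenAI tool, and the reviewer function merely stands in for human judgment.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    task: str
    text: str
    approved: bool = False


def generate_stub(task: str) -> str:
    """Placeholder for a GenAI tool; a real workflow would call a model here."""
    return f"[AI draft for: {task}]"


def stand_in_reviewer(text: str) -> bool:
    """Stand-in for human validation; in practice a person makes this call."""
    return bool(text.strip())


def hitl_step(task: str,
              generate: Callable[[str], str],
              human_review: Callable[[str], bool]) -> Draft:
    """The AI generates at the human's request; the human then validates."""
    text = generate(task)
    return Draft(task=task, text=text, approved=human_review(text))


def incorporate(workflow: list[str], draft: Draft) -> list[str]:
    """Only validated outputs enter the writer's existing workflow."""
    if draft.approved:
        return workflow + [draft.text]
    return workflow


draft = hitl_step("Develop or maintain online help documentation",
                  generate_stub, stand_in_reviewer)
document = incorporate([], draft)
print(document)
```

The design point is that nothing reaches the document without passing through the review function, which keeps the human writer in the managing role.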

Refining and adjusting prompts over multiple attempts often leads to more precise responses. A single prompt may not yield the desired results on the first attempt, so users of AI typically refine prompts, varying the level of detail and structure, to guide the model more effectively. By making small adjustments such as specifying tone or personality, adding context, or simplifying complex queries, users can guide the model toward producing a higher-quality response. This process, called “prompt tuning” or iterative refinement, enhances the overall effectiveness of AI-assisted tasks (Martineau, 2023). While detailed visual examples of prompt tuning are beyond the scope of this article, we acknowledge that prompt iteration is a critical skill for technical communicators and recommend further exploration in works such as Ranade et al. (2024).
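As a rough sketch of iterative refinement, the loop below adds one adjustment (tone, then context) after each rejected output and stops when the reviewer accepts a response or the attempts run out. All names here (`build_prompt`, `refine_until_accepted`, `echo_model`, `reviewer_accepts`) are hypothetical, the model is a stub that echoes its prompt, and the acceptance check is a stand-in for a human decision.

```python
def build_prompt(task, tone=None, context=None):
    """Assemble a prompt from the task plus any optional refinements."""
    parts = [task]
    if tone:
        parts.append(f"Tone: {tone}")
    if context:
        parts.append(f"Context: {context}")
    return "\n".join(parts)


def refine_until_accepted(task, adjustments, generate, accept, max_attempts=3):
    """Reprompt with one additional adjustment after each rejected output."""
    settings = {}
    history = []
    for attempt in range(max_attempts):
        prompt = build_prompt(task, **settings)
        history.append(prompt)
        output = generate(prompt)
        if accept(output):
            return output, history
        if attempt < len(adjustments):
            settings.update(adjustments[attempt])
    return None, history


def echo_model(prompt):
    """Stub model that echoes the prompt; a real tool would generate text."""
    return f"DRAFT based on:\n{prompt}"


def reviewer_accepts(output):
    """Stand-in for human review: accept only drafts that reflect the audience."""
    return "Context:" in output


adjustments = [
    {"tone": "plain, friendly"},
    {"context": "Audience: first-time users"},
]
output, history = refine_until_accepted(
    "Write a help topic on resetting a password.",
    adjustments, echo_model, reviewer_accepts,
)
print(len(history))
```

Keeping the prompt history makes each round of refinement visible, which is useful when a team wants to reuse the prompts that eventually worked.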

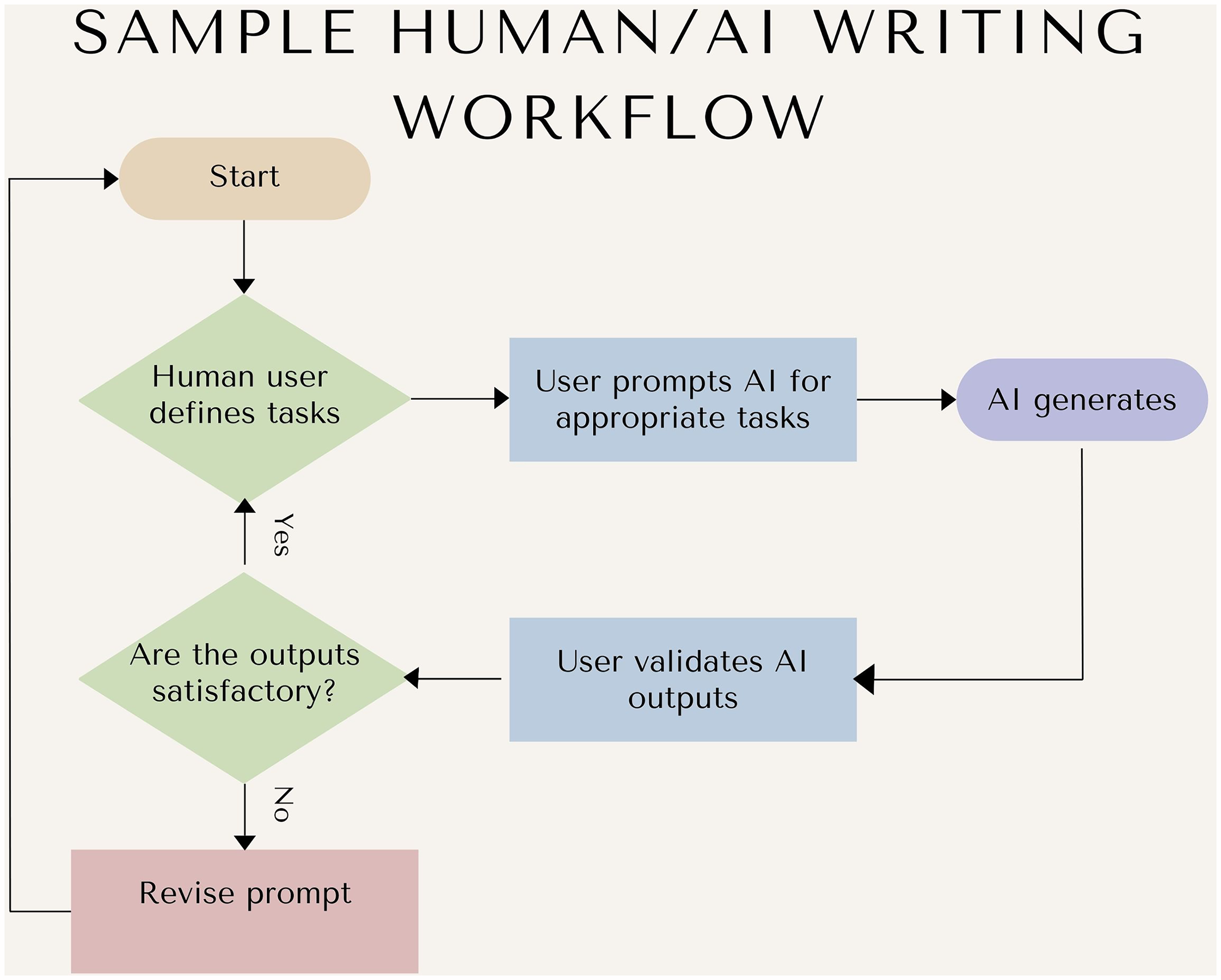

If we consider common, broadly defined writing tasks that many technical communication professionals of different stripes engage in (that is, invention, content development, research, analysis, revision, and editing), we can plug one or more of them into this process to see what an AI-enhanced writing process would look like. Using our sample task from a technical writer, “Develop or maintain online help documentation,” such a user might engage with a process shown in Figure 3.

Figure 3. A Sample Writing Workflow that Incorporates AI and Reprompting.

The technical writer can replicate any tasks they need assistance with in a similar manner. They might prompt the AI to suggest revisions for existing documentation, for example, or to edit a document for Plain Language.

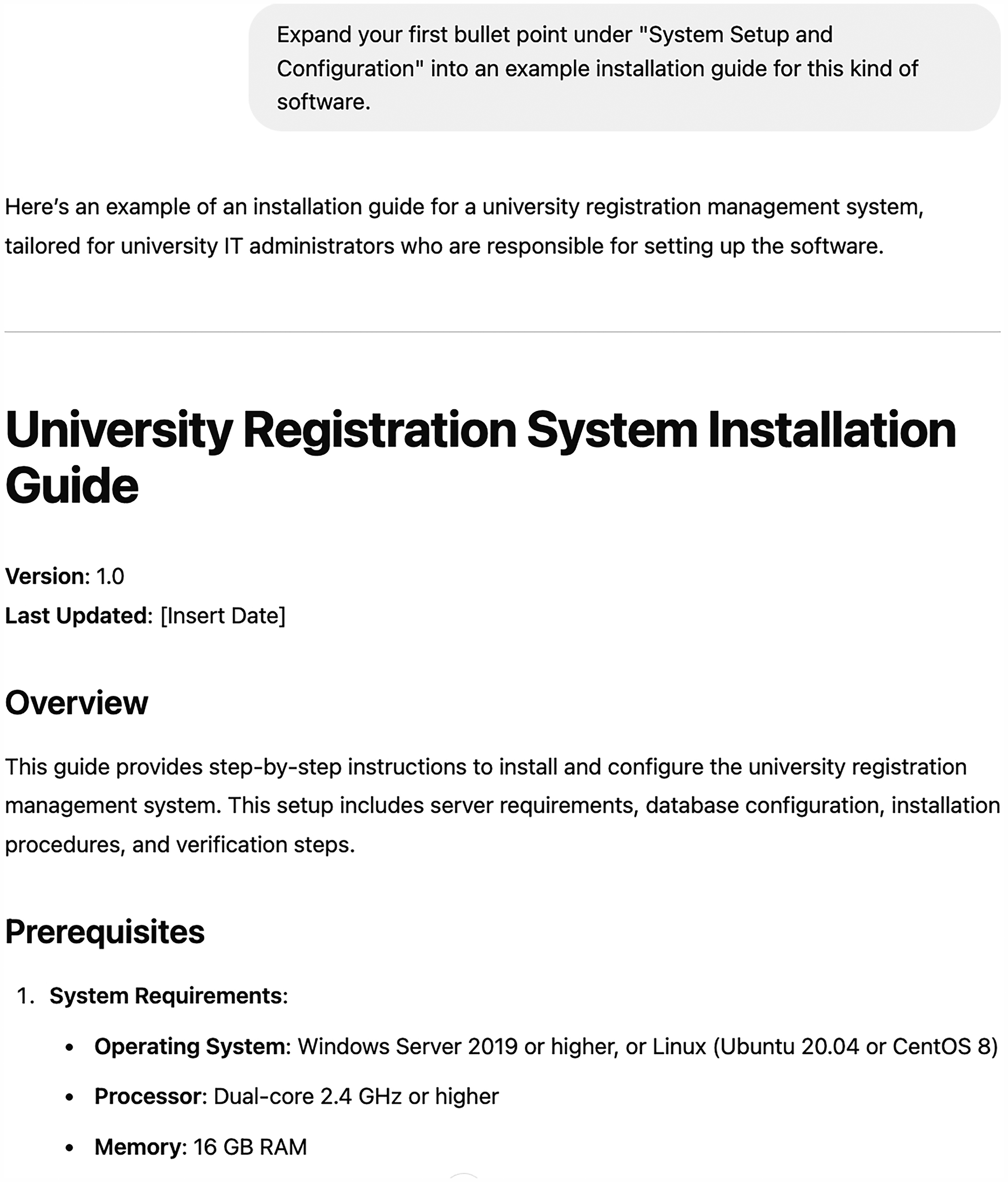

When used in an actual tool, such as ChatGPT, the resulting process is shown in Figure 4.

Figure 4. A Sample Content Development Workflow that Incorporates AI.

Once the technical writer has reviewed and validated that the response from the AI is indeed helpful, they can ask for additional outputs, such as those shown in Figure 5.

Figure 5. An Example of a Reprompt.

Each iteration of the HITL process gets closer to the mark, with the human user serving as the ultimate arbiter of the outcomes of the process and the AI model working more as a writing assistant.

Rather than replacing the human writer as the center of the workflow, in HITL the human writer becomes the manager of the process, validating any outputs the tool generates for whatever criteria they are aiming for. If the model fails to generate an appropriate response, the user can try redefining the task or simply ask for the same task from a different angle, as we demonstrated in Figures 4 and 5 when we asked for a follow-up task to zero in on a specific piece of content. In this way, AI becomes an augmentation of human abilities rather than a replacement for them.

HITL thus provides a clear-minded and effective approach to AI-enabled writing. The HITL process should begin by establishing the expectations and values that the human participants in the loop (i.e., writers, designers, managers, and audiences) provide. Understanding the desired end goals of writing efforts (i.e., communicating accurately, efficiently, effectively, etc.) and the objectives of target deliverables ensures that skilled writing practice remains the cornerstone of AI-infused writing. In this way, the quality and usefulness of communication will continue to be a human question, not a technological one.

Like any technology-augmented workflow, this process has pitfalls, however, which is why we expand the HITL process into a framework for writing with GenAI in technical communication. We break down this framework into the following heuristics:

- Defining tasks
- Prompting strategies
- Quality control
- Scaling and automation strategies

For each heuristic, we provide considerations for technical communication professionals interested in using AI to improve their workflow without ceding creative control, including potential stumbling blocks and how to recover from them.

Defining Tasks

As we have argued, AI requires oversight due to its flaws and limitations (Bender et al., 2021). It also needs skilled writers to serve as guides that provide not only questions and problems for it to respond to, but also additional context and monitoring so that it can provide the most useful outputs. Writing with AI starts with understanding that AI is not like other technologies, and we cannot simply input commands and get a predictable result. The power of AI is that it is crafting a response for users with each interaction. Just as a human writer may answer the same question differently if asked at different times or by different people, the AI's response will vary based on a number of factors.

When working with AI, the experience goes beyond using a simple text-generation tool like a word processor. The techniques needed to get the most value out of an AI may be better understood as structured roles in a conversation (Thominet et al., 2024). Advocates of this view note that giving instructions and context to an LLM will result in more relevant and useful content. Just as is the case when working with human conversational participants, we need to articulate what role our collaborator is taking (e.g., author, reviewer, editor, subject matter expert [SME], etc.) when working with an AI. An AI needs to understand its role in the conversation to provide the best support.
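One way to picture structured roles in a conversation is as a small list of messages in which the AI's role is stated before the task is given. The sketch below assumes a “role”/“content” message shape similar to what several chat interfaces use; it is not tied to any specific product or API, and `build_conversation` is a hypothetical helper.

```python
def build_conversation(ai_role, task, context):
    """Return a role-structured message list: first the AI's role, then the task."""
    return [
        {
            "role": "system",
            "content": f"You are acting as a {ai_role} for a technical writing team.",
        },
        {
            "role": "user",
            "content": f"{task}\n\nContext:\n{context}",
        },
    ]


messages = build_conversation(
    ai_role="technical editor",
    task="Review this help topic for concision.",
    context="Audience: new users of a scheduling application.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Changing only the `ai_role` argument (for example, from editor to reviewer) lets a writer request a different kind of support for the same content.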

Because of this, carefully defining what tasks an AI is best suited to within a particular workflow is essential. Assembling a short guide for a new task from existing documentation requires a different mindset and skill set than gathering and verifying new technical details to be used in developer documentation. A skilled writer would not ask their writing assistant to immediately take on all of their work with no context for how to do so. Similarly, when defining tasks that may be automated by AI, it's important to prioritize ones that it can accomplish successfully.

We recommend brainstorming all the tasks associated with a given project and then assessing which tasks are most appropriate for automation. If we look at our running example, the development of help documentation, we can think of a variety of tasks associated with this broader workflow, tasks such as:

- Gathering requirements for the new documentation, such as information from style guides, SME requirements, and audience requirements.
- Reviewing existing documentation for currency, relevancy, and authoritativeness.
- Gathering legacy content into a single place for reuse.
- Identifying which pieces of legacy content can be reused and which need to be retired.
- Developing new content.
- Sending out new content for review by test users, SMEs, and peers.
- Revising content to incorporate changes.
- Editing content.

Such tasks result in several pain points for writers, even those using AI, which include:

- Wasting time on repetitive, routine tasks that limit creative or strategic engagement.
- Difficulty recognizing which tasks genuinely benefit from automation versus those requiring nuanced human judgment.
- Misjudging AI's capabilities, leading to frustration or wasted effort on inappropriate tasks.

From this initial list of tasks and pain points, we can then consider which tasks are the easiest to automate. Our goal in providing a series of heuristics for automating writing is to provide writers with help performing the above kinds of tasks and avoiding the above pain points. Our heuristic accomplishes this by:

- Providing clear criteria for quickly identifying tasks suitable for automation.
- Helping writers save time by clearly separating tasks that GenAI can reliably complete from those better handled by humans.
- Reducing trial-and-error inefficiencies by setting practical expectations for AI performance.

Given that chatbots are programs that generate text in response to a specific prompt using a probabilistic algorithm, we want to avoid attempting to automate tasks that require other human beings, such as SMEs, to provide expertise. We could ask an AI model to play the role of a highly skilled SME such as a mechanical engineer or software developer in reviewing a document, but we are unlikely to get a high-fidelity response. Because AI programs, at the time of writing, don’t necessarily have real-time access to the most current state of knowledge within a given field, they are not reliable stand-ins for SMEs.

On the other end of the spectrum, however, AI tools excel at rule-based tasks such as checking grammar and style (Liu et al., 2023; Neha et al., 2024). Most AI tools are highly capable of applying a set of standards to a document, so asking an AI tool to compare a document to a style guide or to review a document against a particular rhetorical strategy, such as concision or Plain Language, is a plausible use. AI tools are also decent at generating content if the specifications for the content are clearly laid out in advance and if the user corrects any deviations from these specifications that appear in the output.

So, when defining writing tasks that can be automated by AI, consider the following questions:

- Is the task a measurable, achievable one?
- Does the task require cutting-edge or real-world knowledge of a specific knowledge domain?
- Does the task require the AI to play the role of a human being with high fidelity?
- Can the user provide the AI with specific standards that can guide the AI in completing the task?
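The four questions above can be read as a simple triage rule. The Python sketch below is one hypothetical encoding of that rule; the `TaskProfile` fields, the `suggest_ai_role` function, and the three returned labels are illustrative assumptions rather than part of the framework itself, and the output is a starting point for human judgment, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Answers to the four screening questions for one writing task."""
    name: str
    measurable: bool                      # Is the task measurable and achievable?
    needs_current_domain_knowledge: bool  # Cutting-edge or real-world domain knowledge?
    needs_human_role_fidelity: bool       # Must the AI stand in for a human expert?
    has_explicit_standards: bool          # Can the user supply standards to follow?


def suggest_ai_role(task: TaskProfile) -> str:
    """Return a rough recommendation; a human still makes the final call."""
    if task.needs_current_domain_knowledge or task.needs_human_role_fidelity:
        return "keep with humans"
    if task.measurable and task.has_explicit_standards:
        return "good candidate for automation"
    return "automate with close review"


style_check = TaskProfile("Compare draft to style guide", True, False, False, True)
sme_review = TaskProfile("Review draft as a mechanical engineer", True, True, True, False)

print(suggest_ai_role(style_check))  # good candidate for automation
print(suggest_ai_role(sme_review))   # keep with humans
```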

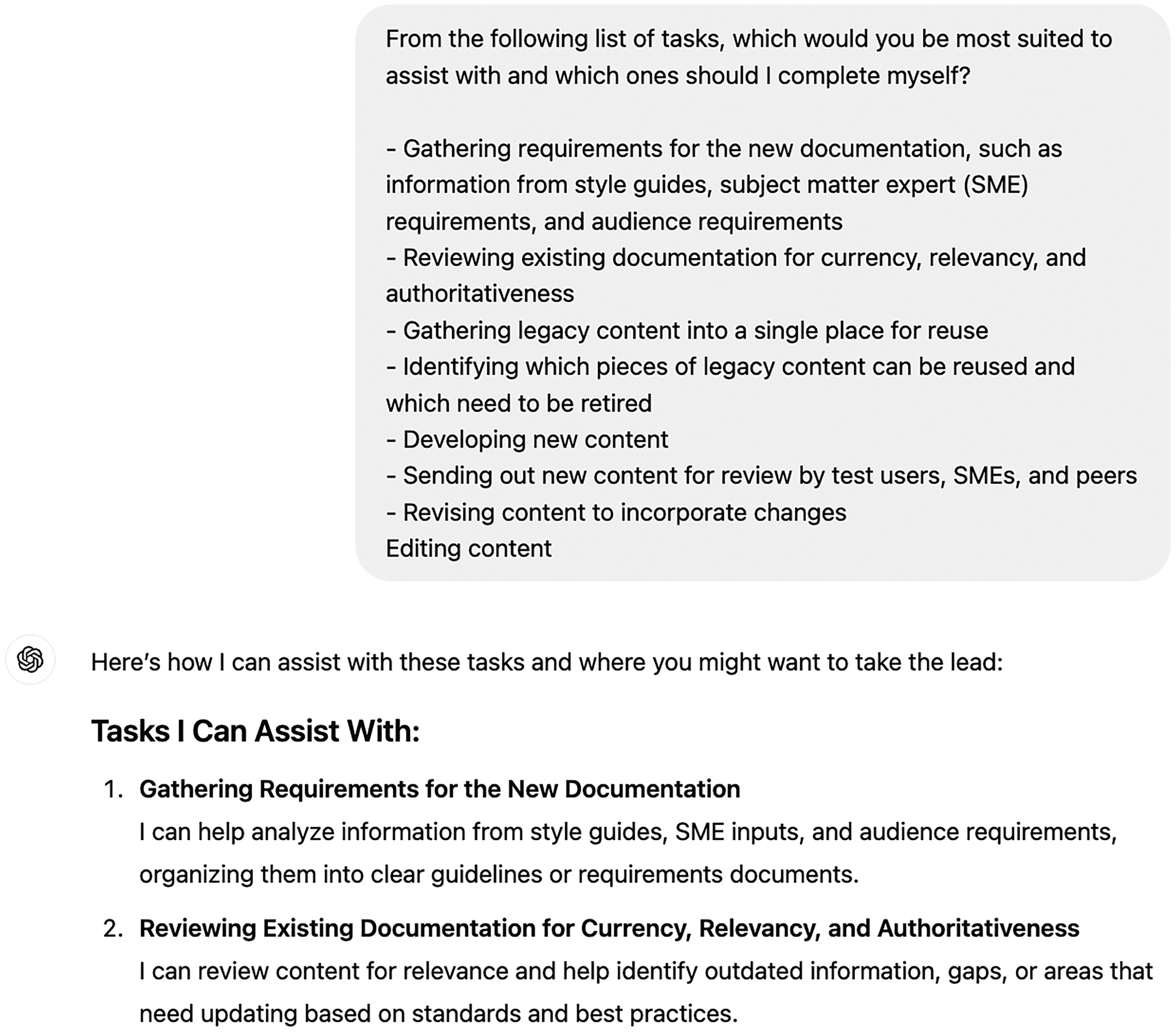

The answers to these questions help guide the user as to what role the AI can play in a given project. It is also appropriate to ask the AI what role it might play in the project. AI tools readily provide lists of tasks they are good at as well as ones they might struggle with (Figure 6).

Figure 6. An Example of Prompting an AI to Define Tasks.

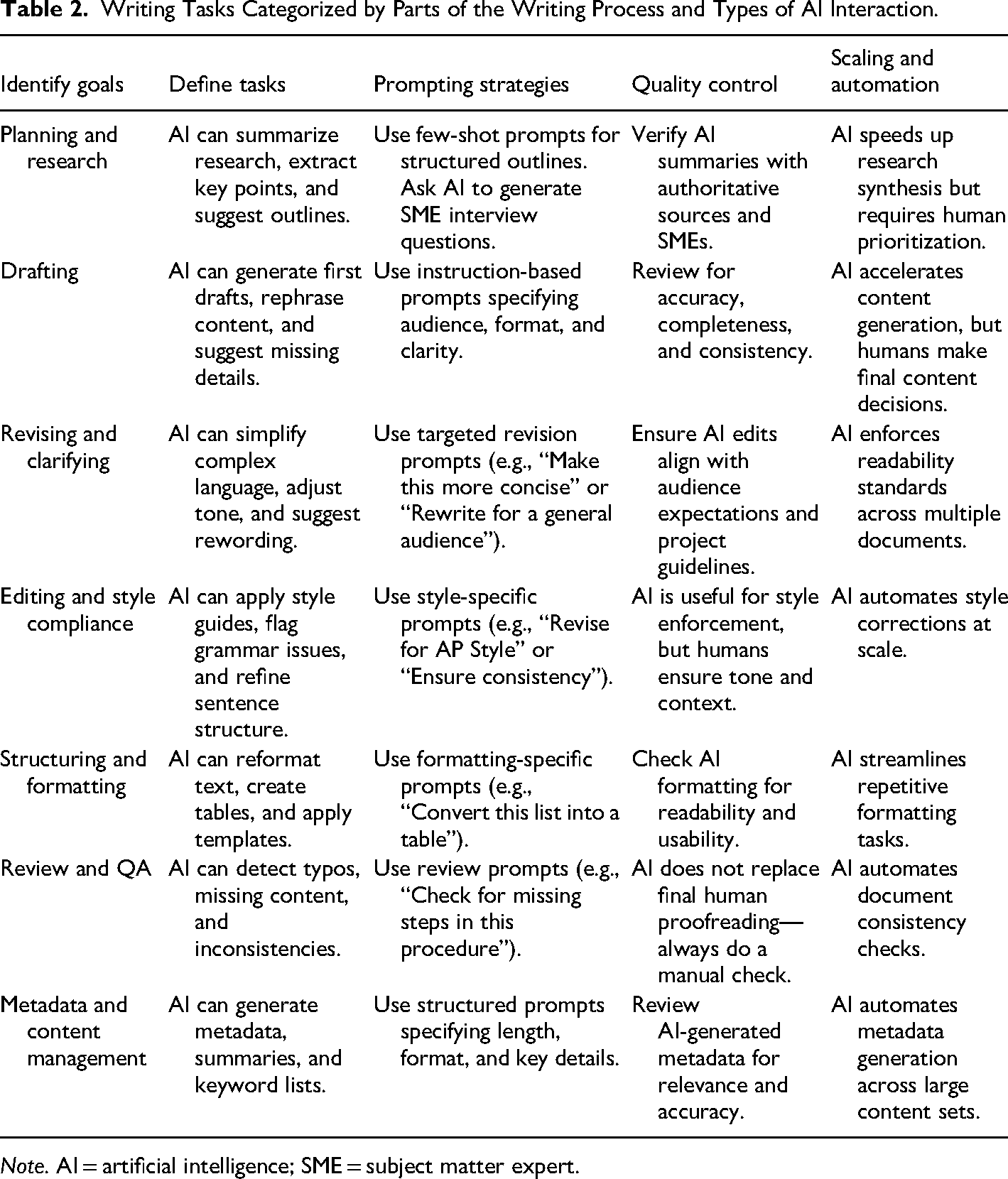

For ways to frame the types of tasks that AI can best automate, see Table 2, which not only lists tasks but also aligns them with our overall heuristic in a grid. The table categorizes tasks by part of the writing process as well as by type of AI interaction (i.e., planning and research vs. prompting).

Table 2. Writing Tasks Categorized by Parts of the Writing Process and Types of AI Interaction.

Once the user has successfully defined which tasks they want to automate, it's time to consider prompting strategies for automating those tasks.

Prompting Strategies

It should also be noted that these heuristics do not represent a linear process, but rather considerations that may occur at any step in the HITL process. In the above example, we used a prompt to ask the AI model which tasks we should automate, which means we were already making use of a prompting strategy. The most basic use of GenAI is to ask it for something. Ask it a question and you will get an answer. Ask it for a specific kind of document and you will get that kind of document. Direct asks like this are called “zero-shot” prompts. Users may default to simple, direct questions when working with AI because of their comfort with search engines. That habit suits traditional information systems, which match a query to content that already exists, but it rarely yields the best results from a generative model. Zero-shot prompts, without any contextual guidance, rely entirely on the model's pretrained knowledge. This is why prompting without examples or context frequently leads to vague, inconsistent, generic, or even hallucinated responses. Providing examples and instructions (few-shot prompting) helps the model narrow its response, which in turn improves relevance and accuracy.
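To make the contrast concrete, the Python sketch below builds a zero-shot prompt and a few-shot prompt for the same Plain Language task. The helper names, the "Original/Rewrite" labels, and the sample sentences are all assumptions made for illustration; the point is only that the few-shot version carries instructions and worked examples along with the new input.

```python
def zero_shot_prompt(task: str) -> str:
    """A direct ask with no context or examples."""
    return task


def few_shot_prompt(
    instructions: str, examples: list[tuple[str, str]], new_input: str
) -> str:
    """Prepend instructions and worked input/output pairs to the new input."""
    parts = [instructions, ""]
    for source, rewritten in examples:
        parts.append(f"Original: {source}")
        parts.append(f"Rewrite: {rewritten}")
        parts.append("")
    parts.append(f"Original: {new_input}")
    parts.append("Rewrite:")
    return "\n".join(parts)


examples = [
    ("Utilize the dropdown in order to effectuate a selection.",
     "Use the dropdown to choose an option."),
    ("It is recommended that the device be restarted by the user.",
     "Restart the device."),
]

prompt = few_shot_prompt(
    "Rewrite each sentence in Plain Language for a help article.",
    examples,
    "Prior to commencing installation, ensure termination of all applications.",
)
print(prompt)
```

The prompt ends with an open "Rewrite:" line so that the model's most likely continuation is a rewrite in the same pattern as the examples.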

Zero-shot prompting can result in a variety of pain points, including:

- Inconsistent or irrelevant outputs from initial AI interactions.
- Frustration due to unclear understanding of how to effectively prompt AI tools.
- Unnecessary effort spent repeatedly revising AI-generated content.

Our goals in this section are thus to offer the following solutions to these pain points:

- Introducing clear and tested prompting methods (e.g., few-shot, chain-of-thought) with technical writing-specific examples.
- Providing explicit guidance for structuring prompts to yield consistently accurate, relevant, and usable outputs.
- Offering immediately actionable prompting strategies for a variety of technical writing tasks (drafting, revision, Plain Language editing, etc.).

In doing so, we would also direct readers to Ranade et al. (2024), who provide the following general-purpose definition of an effective prompt: “Prompt = (audience + genre + purpose + subject + context + exigence + writer).”
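One way to put this definition to work is to treat each element as a required field, so that a prompt cannot be assembled with an element missing. The Python sketch below is a minimal illustration under that assumption; the `RhetoricalPrompt` class, its `render` method, and the sample values are hypothetical and are not drawn from Ranade et al. (2024).

```python
from dataclasses import dataclass, fields


@dataclass
class RhetoricalPrompt:
    """One required field per element of the prompt definition."""
    audience: str
    genre: str
    purpose: str
    subject: str
    context: str
    exigence: str
    writer: str

    def render(self) -> str:
        """Emit one labeled line per element, in the order of the definition."""
        return "\n".join(
            f"{f.name.capitalize()}: {getattr(self, f.name)}" for f in fields(self)
        )


prompt = RhetoricalPrompt(
    audience="First-time users of a home router",
    genre="Step-by-step help topic",
    purpose="Help the reader restore factory settings",
    subject="Resetting the router",
    context="Published in an online help center; follows the team style guide",
    exigence="Support tickets show users cannot find the reset procedure",
    writer="A technical writer on the documentation team",
)
print(prompt.render())
```

Because every field is required, omitting any element raises a `TypeError` when the object is created, which serves as a lightweight reminder to supply the full rhetorical situation.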

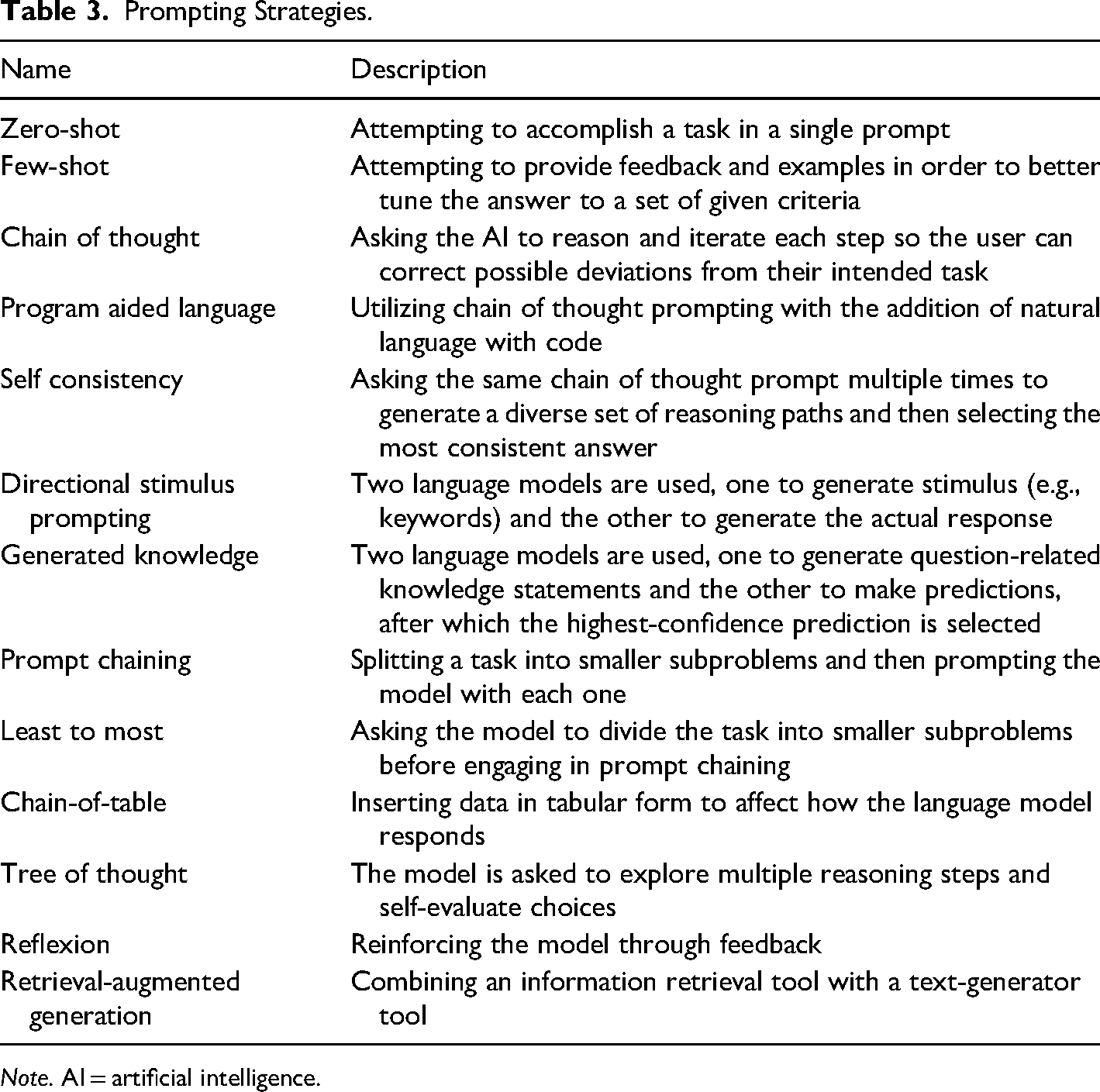

Interactions with AI require much more creativity than a single-line prompt with no human-led guidance. An effective prompt can generate exactly the information required for a given task, whereas an ineffective one may result in the wrong information, in a hallucination, or in having to continuously re-prompt the model, thus wasting valuable time. The practice of developing prompts that will result in the best outputs is called prompt engineering (Boettger, 2024). Beyond the zero-shot technique, a large variety of prompting strategies have been discussed by both scholars and practitioners. Following Ruksha (2024), they are given in Table 3.

Table 3. Prompting Strategies.

These are just some of the prompting strategies human AI users can use. Many AI tools now come prepackaged with particular strategies in mind and can often recommend strategies based on the desired task. There are also a variety of prompt libraries, or prewritten prompts broken down by task, knowledge domain, and use cases that users can consult (Oswalt, 2024).

The main takeaway from all these strategies is that skilled writers must experiment with their AI writing assistant in order to generate the best outputs. Many users attempt a prompt, are unsatisfied with the output, and take from this experience that AI isn’t capable of providing that kind of output. However, just like other technology-augmented tasks, such as using a search engine, trying out different parameters is often the key to success. Below we describe another key facet of HITL writing with AI: validating the information that AI tools generate.

Quality Control Strategies

An HITL approach to AI-assisted writing positions the human as the ultimate authority over the writing task. Language models alone lack awareness of—or commitment to—the purpose, context, and outcomes of the content they generate. While this makes them highly flexible, it also makes them prone to misrepresenting information or misunderstanding critical nuances. Humans, therefore, remain essential for determining writing goals (context and exigence), structuring the workflow, managing each step, and ensuring the overall quality and effectiveness of the final product. As AI tools rapidly advance, these oversight and quality-control responsibilities become increasingly complex and vital. For technical communicators, rigorous validation is especially critical, as the accuracy, credibility, and usability of information are paramount.

Such an approach is, in turn, a response to common pain points writers are experiencing with AI tools, including:

- Concern over accuracy and reliability of AI-generated content, leading to trust issues.
- Increased cognitive load and uncertainty when verifying AI outputs.
- Difficulty in managing and correcting subtle inaccuracies or biases introduced by AI tools.

Our heuristic helps deal with these pain points by providing:

- Step-by-step validation methods that integrate smoothly into existing workflows (e.g., self-consistency checks, cross-validation, source verification).
- Clarification on precisely how to detect common AI errors (hallucinations, biases, factual inaccuracies).
- Assurance that writers can confidently maintain rigorous professional standards while using AI, preserving credibility and trustworthiness.

A further challenge in AI-assisted writing is ensuring accuracy when users lack deep subject matter expertise in a given area. While AI can assist in summarizing complex topics, it may also fabricate details, misinterpret nuanced technical information, or generate misleading responses. To mitigate this, technical communicators can use several validation strategies, even without domain expertise:

- Cross-check AI-generated content against multiple reputable sources (e.g., industry reports, government guidelines, or scholarly articles).
- Leverage regulatory or style guides (e.g., Microsoft Style Guide, ISO documentation standards) to assess AI-generated outputs for alignment with best practices.
- Use specialized AI fact-checking tools (e.g., Perplexity AI, Elicit) that aggregate and verify information from multiple data sources.
- Consult SMEs selectively, not for full document review, but for targeted fact-checking of critical sections.

These techniques allow writers to use AI effectively without blindly trusting its outputs, ensuring that AI remains an assistive tool rather than an unquestioned authority.

Effective quality control begins with understanding why and how AI tools commonly fall short. Despite their growing sophistication, language models frequently encounter several specific limitations: they may introduce biases, inadvertently promoting stereotypes or unfair perspectives; generate entirely fabricated information; misinterpret specialized terminology or context-specific nuances; or struggle with logical reasoning, numerical accuracy, and basic commonsense tasks. Each of these issues poses a substantial risk to the reliability and usability of technical content. Technical communicators must remain vigilant, proactively identifying potential inaccuracies, and applying targeted validation strategies to ensure the integrity of their work.

Managing the quality of outputs requires an informed and capable user who can critically evaluate the entire situation, from goals to end product. Even before work on a project begins, the quality of GenAI output is determined, at least in part, by the particular tools that are selected. Not all autonomous systems are equal, and understanding the strengths and limitations of a particular model or application can be a significant step. AI tools undergo extensive training, refinement, and testing. Reviewing model cards and benchmark data can provide insights into the kinds of tasks models are well suited for, and the limitations or dangers that have been identified. For a deep dive into the ways AI systems are validated, see Myllyaho et al. (2021).

Again following Ruksha (2024), many AI tools show clear bias and fail when citing sources, judging the accuracy of claims, solving various math and commonsense reasoning problems, or resisting manipulation by users. From the start, human users should consider how effectively a GenAI tool is responding to prompts. Ranade et al. (2024) examined how rhetorically informed prompt design is an essential skill for ensuring that AI outputs align accurately with human intentions. Developing an effective prompting strategy is one loop that can be highly valuable. Determining what information to include in a prompt, and how much information to include, are essential steps for successful AI writing. Technical communicators should establish clearly structured, well-defined input parameters and context-specific guidelines for AI systems, enabling more accurate and effective generation of content. For instance, when engaging AI in generating metadata or standardized documentation, initial datasets or exemplary formats provided in advance can substantially improve consistency and reliability in outputs.

Technical communicators can employ several validation strategies to address these common issues. Prompt iteration strategies, such as self-consistency checks or multiangle prompts, can enable communicators to identify and rectify embedded biases or subtle inaccuracies within AI responses. Responses may be more accurate with higher-performing models and with models capable of reviewing source material. However, it is essential to confirm the accuracy of all information through additional validation, especially for specialized technical information. Independent verification of references and resources can help ensure quality, as can consulting an SME. Factual accuracy and relevancy checks for vital information should be done methodically and should be conducted with independent sources (external to the AI). For tasks involving complex reasoning or detailed analysis, communicators can utilize prompting methods that require the AI to explicitly articulate reasoning processes, thus revealing potential logical errors or misunderstandings. Ultimately, triangulation of AI-generated content through collaborative review provides an essential safeguard against subtle inaccuracies or overlooked biases.
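A self-consistency check of the kind mentioned above can be sketched in a few lines of Python: ask the same question several times and flag the answer for human review when the responses disagree. Everything named here is an assumption made for illustration, including the `ask_model` callable (a stand-in for whatever AI tool is in use), the default of five runs, and the 0.8 agreement threshold; the `fake_model` stub exists only so the sketch runs without a real model.

```python
from collections import Counter
from typing import Callable


def self_consistency_check(
    ask_model: Callable[[str], str],
    prompt: str,
    runs: int = 5,
    threshold: float = 0.8,
) -> tuple[str, float, bool]:
    """Ask the same question several times and measure agreement.

    Returns (most_common_answer, agreement_ratio, needs_human_review).
    """
    answers = [ask_model(prompt).strip().lower() for _ in range(runs)]
    top_answer, count = Counter(answers).most_common(1)[0]
    agreement = count / runs
    return top_answer, agreement, agreement < threshold


# Stub standing in for a real model so the sketch is runnable as written.
canned = iter(["30 seconds", "30 seconds", "60 seconds", "30 seconds", "10 seconds"])


def fake_model(prompt: str) -> str:
    return next(canned)


answer, agreement, needs_review = self_consistency_check(
    fake_model, "How long should the reset button be held?"
)
print(answer, agreement, needs_review)  # 30 seconds 0.6 True
```

High agreement only shows that the model is consistent, not that it is correct, so answers that pass this check still need verification against sources external to the AI.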

Fundamentally, quality control in AI-assisted writing within technical communication demands that human communicators consistently remain at the core of the evaluative and decision-making process. Rather than passively accepting outputs generated by AI tools, technical communicators must actively manage and refine AI-generated content, maintaining oversight to ensure it aligns with professional standards and audience expectations. By thoughtfully applying these validation strategies, practitioners can integrate AI into their workflows effectively and responsibly, enhancing their productivity while ensuring the quality and credibility of their communications.

Writing is a fundamentally creative process, and as of right now the human needs to be in charge of its creative elements, such as choosing topics, choosing tasks for AI to assist with, and critically evaluating all outputs. The parts of writing that can be most easily validated are the ones that are rule-driven. As we discuss below, however, the real power of HITL writing is scaling the GenAI tool's participation in the writing process to fully leverage its ability to generate large amounts of writing work quickly and efficiently.

Scaling and Automation Strategies

The main driving force behind skilled writers implementing AI within technical communication is the possibility of automating our work in order to gain speed and scale advantages. Like other kinds of automation, writing with AI requires careful attention to the goals, qualities, and steps of the entire process. While automation leads to speed increases, it comes with questions about quality and consistency. If writers working with GenAI can maintain a high level of control and oversight, they can rely more heavily on the technology during the process.

Like every other element of our heuristic, this section responds to several pain points, including:

- Limited capacity to manage large volumes of repetitive writing or documentation updates.
- Difficulty maintaining consistency and uniformity across large document sets.
- Missed opportunities to automate routine yet essential tasks, causing slower project turnaround and reduced efficiency.

We respond to these pain points by providing:

- Clear guidelines for leveraging AI's strengths in rapid, high-volume content generation.
- Strategies for automating tasks such as metadata generation, standardizing document formats, or updating instructional materials.
- Practices that enable writers to reclaim significant time and attention, allowing more focus on creative, high-value tasks.

Although automation should be a concern at every level of our framework, such as defining tasks, prompting, and validation, perhaps the most important consideration comes at the defining task level. Again, the best tasks to automate are ones that are rule-driven and require less creativity. The more room for interpretation in a writing task, the more noise may creep into the outputs. As examples, consider the following tasks. Our combined experiences with AI have led us to believe that at the time of writing, the majority of commercially available AI models are not well equipped to do the following:

If we examine these examples, we can see that they follow our warnings throughout this article that AI will struggle with anything that is niche, nuanced, requires strong domain expertise, or is largely open to interpretation. Although AI is developing at a rapid pace, at the time of writing, available models tend to struggle with these kinds of tasks because of the way they are designed, that is, as probability machines that require a high degree of context to be successful.

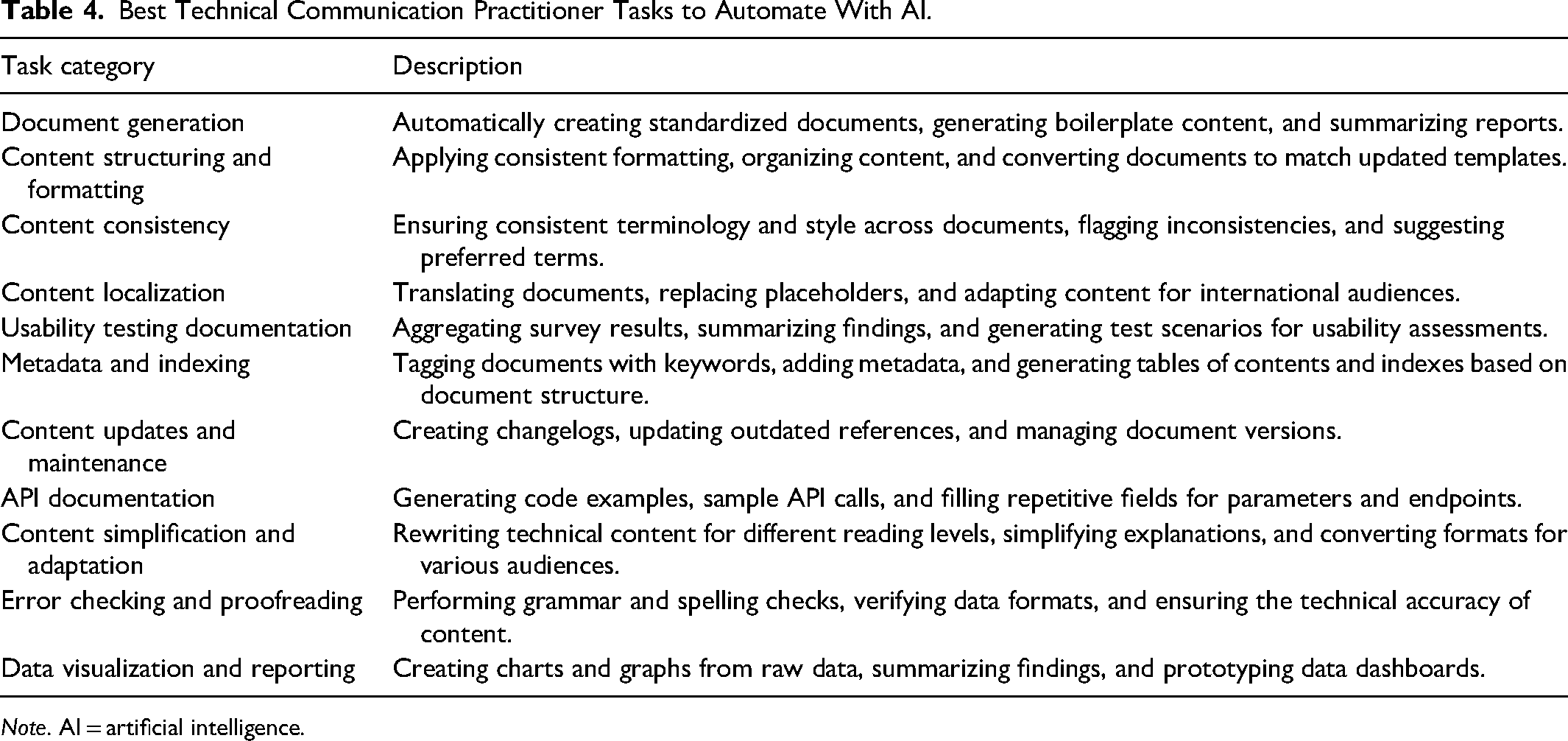

However, there are many other tasks that the field of technical communication

Table 4. Best Technical Communication Practitioner Tasks to Automate With AI.

These examples were chosen because they follow our dictate that AI is best at automating rote, structured tasks with clear parameters and outputs. Anyone who has worked as a technical communication practitioner probably also recognizes one or more pain points within the list. To zero in on just one example: creating metadata for a large corpus of documents is often incredibly time-consuming and onerous for a human being to complete, whereas AI tools can complete this task in seconds.
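To show how the metadata example might scale while keeping a human in the loop, the Python sketch below processes a batch of documents and routes any malformed response to a review queue instead of accepting it. The `ask_model` callable, the `REQUIRED_FIELDS` schema, the prompt wording, and the `fake_model` stub are all assumptions made for illustration; a real workflow would substitute its own tool and metadata standard.

```python
import json
from typing import Callable

REQUIRED_FIELDS = {"title", "summary", "keywords"}  # hypothetical metadata schema


def generate_metadata_batch(
    documents: dict[str, str], ask_model: Callable[[str], str]
) -> tuple[dict[str, dict], list[str]]:
    """Request metadata per document; route anything malformed to a human."""
    accepted: dict[str, dict] = {}
    review_queue: list[str] = []
    for doc_id, text in documents.items():
        raw = ask_model(f"Return JSON with keys title, summary, keywords for:\n{text}")
        try:
            record = json.loads(raw)
        except json.JSONDecodeError:
            review_queue.append(doc_id)  # not valid JSON at all
            continue
        if isinstance(record, dict) and REQUIRED_FIELDS.issubset(record):
            accepted[doc_id] = record
        else:
            review_queue.append(doc_id)  # valid JSON, but missing required keys
    return accepted, review_queue


# Stub standing in for a real model so the sketch is runnable as written.
def fake_model(prompt: str) -> str:
    if "reset" in prompt:
        return json.dumps({
            "title": "Reset the router",
            "summary": "How to restore factory settings.",
            "keywords": ["reset", "router"],
        })
    return "Sorry, I could not parse that document."


docs = {
    "doc-1": "To reset the router, hold the button on the back panel.",
    "doc-2": "Legacy content with no clear topic.",
}
accepted, review_queue = generate_metadata_batch(docs, fake_model)
print(sorted(accepted), review_queue)  # ['doc-1'] ['doc-2']
```

The check here is structural only: it confirms that the expected fields are present, not that their contents are accurate, so a human spot-check of accepted records remains part of the workflow.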

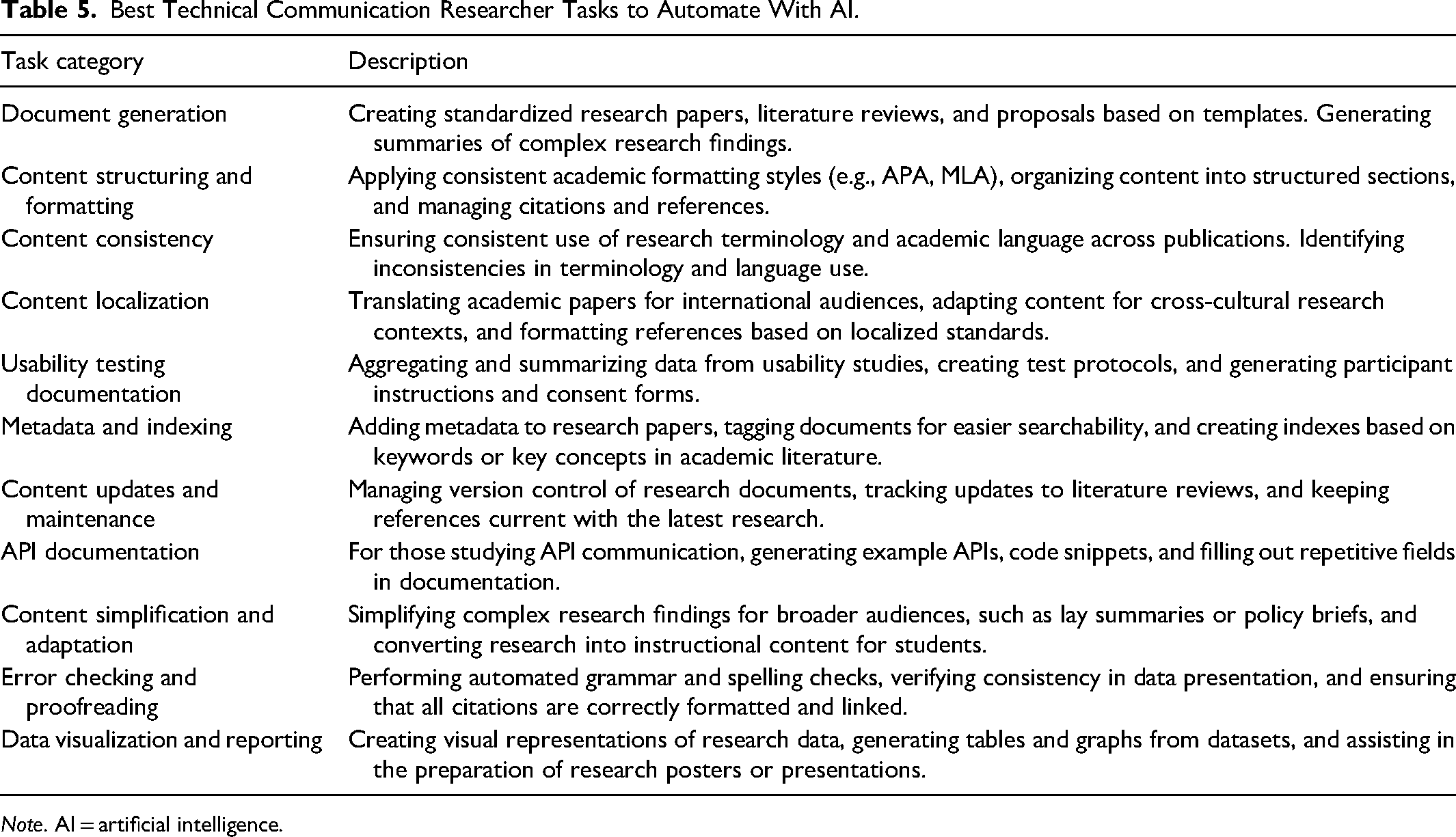

Turning to the academic side of the field, researchers of technical communication can also use AI to automate tasks involved in academic research, though we would caution these writers to pay close attention to all guidelines from specific venues regarding the use of AI. The two authors of this article who are primarily academic researchers have found that guidelines for the use of AI in scholarly publishing vary widely from venue to venue. Subject to the guidelines of a specific venue, the tasks presented in Table 5 are some of the best research tasks to automate with AI. We have kept the categories the same for comparison purposes.

Table 5. Best Technical Communication Researcher Tasks to Automate With AI.

Similar to the practitioner tasks, these examples were chosen because they are aspects of scholarly research that are less creative and more rote. As academic researchers, we have personally found that AI particularly shines in the areas of document consistency, structure, and formatting. Within the field of technical communication, genres vary widely across venues (e.g., journals, professional organizations, conference proceedings), and it can be difficult to switch between all these genres consistently. AI can assist with this by pointing out places where academic writing has fallen short of the specific venue's formatting expectations.

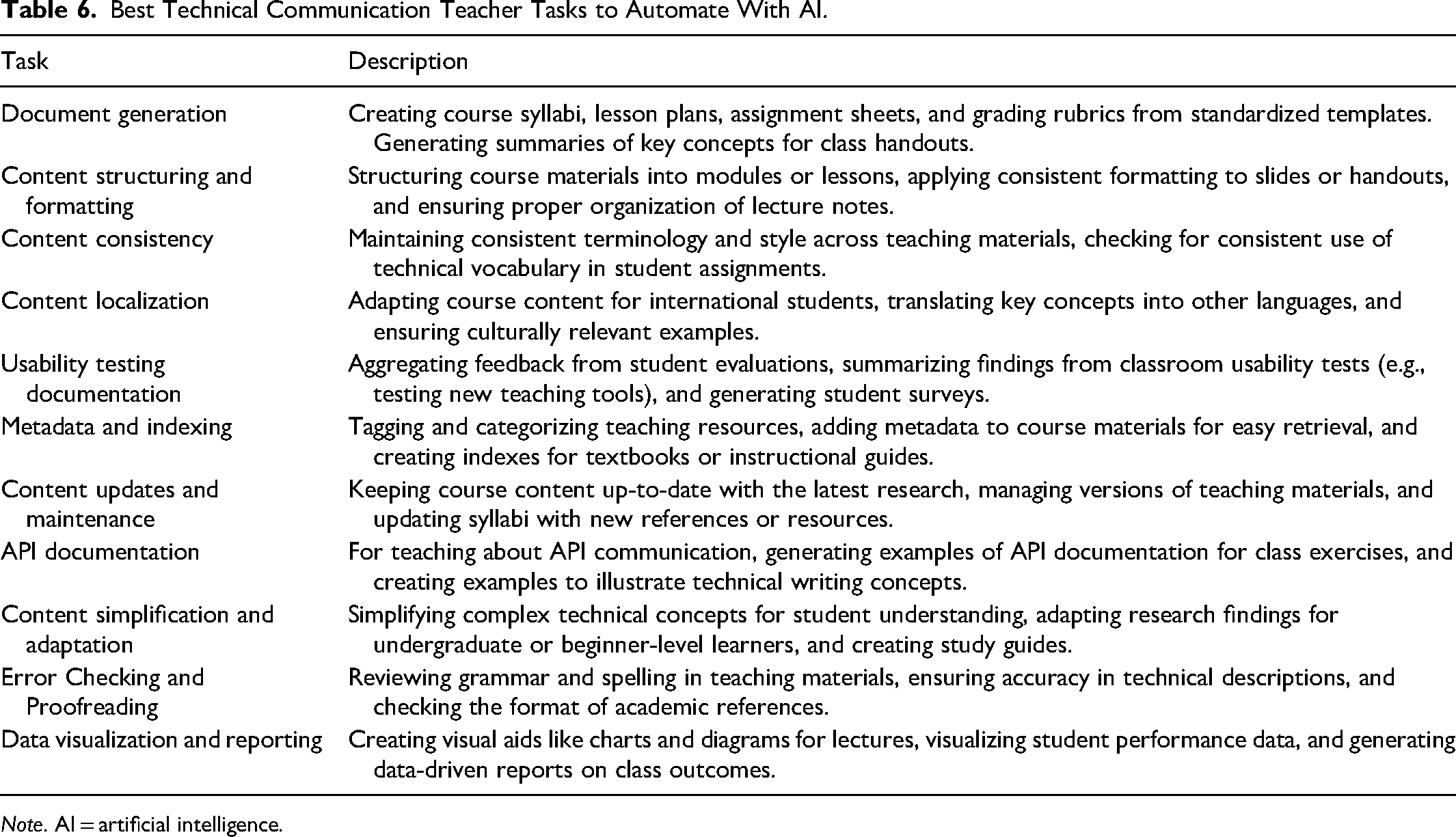

Finally, we also want to present some of the tasks that AI can help automate within the teaching domain. Similar to our other tables, Table 6 presents tasks that AI can help automate for teachers of technical communication.

Table 6. Best Technical Communication Teacher Tasks to Automate With AI.

Like all the tasks we’ve recommended for automation, these focus on some of the most structured, rote aspects of creating teaching materials. AI is particularly adept at generating the kinds of day-to-day but time-intensive documents required for teaching a sound class: syllabi, activities, handouts, assessment rubrics, and so on. While it is essential that teachers of technical communication continue to do the hard work of innovating their courses as our field develops, it is now possible to automate some of the most time-consuming tasks involved with this innovation.

Tables 4 to 6 present only a small portion of the writing tasks within the field of technical communication that can be automated with AI, but we hope presenting these examples has furthered our argument that AI can be a powerful writing assistant for any skilled writer. Following Johnson-Eilola et al. (2024), we do not envision a future in which AI replaces the difficult, necessary work of communicating specialized and technical topics to a wide variety of audiences, work that all technical communicators arguably contribute to our society. Rather, we envision a very near future in which AI helps turn pain points in technical communication work into easily accomplished tasks. Below we close our article by discussing some of the limitations of our framework before concluding with future directions that scholarship on AI in technical communication might take.

Limitations and Caveats

Perhaps the largest limitation of our framework is that AI, like any tool, has a learning curve. Skilled writers who explore AI to help automate tasks may find that they initially struggle to define tasks, prompt, validate, scale, and automate when doing their writing work. We have developed this framework not only by reviewing academic and practitioner research on this topic but also through much trial and error. Just as major innovations in writing technology such as the word processor and the automated spell checker required adaptation, AI will also require writers to deeply consider how their workflows can be improved through AI. And this reflection will necessarily involve many writers rejecting one or more features of AI due to personal preference or ethical concerns.

This brings us to our second limitation, which is that AI is also not a neutral tool. No technology is. AI models are trained by human beings with particular biases, values, and power relationships with other human beings. As a product of human labor, AI necessarily inherits these predispositions. It contains not only particular affordances but also features that were decided by its creators before the user ever encountered it. To this limitation, we respond that the best way for technical communicators to ensure the ethical use of AI within our field is not to refuse to use it, but to get more involved with its production. As a reminder, many AI models are released in an open source format, meaning that anyone with sufficient technical expertise can use these models as frameworks for developing their own AI tools. If individual technical communicators lack this expertise, they can collaborate with developers. We would challenge technical communicators who are hesitant about AI for one or more reasons to

Our third and final limitation is that AI is constantly developing and is still in its infancy. We have tried to create a framework for technical communication that is agnostic of any one tool, context, or user. In this way, our framework is less a series of prescriptions than it is a series of heuristics for researchers, teachers, and practitioners of technical communication who are looking for ways to automate rote writing tasks. It is very difficult to predict which tasks will remain under the rubric of “rote” for GenAI. Some of the tasks we’ve recommended against attempting to automate, those that require a high degree of specialist knowledge and/or creativity, may very well soon be achievable with AI. It is difficult to say. Regardless, our framework attempts to provide readers with some longevity by providing a series of critical decision points that anyone utilizing AI as a writing assistant within technical communication should consider.

Conclusion

The field of technical communication is just now beginning to feel the impacts of AI technologies on the ways our members research, write, and teach. Some members are excited by this impact, others are anxious, and still others probably find AI deeply threatening to our identity as a field. Regardless of one's personal feelings toward AI, we would argue that the impact of this technology on our field is real and profound. Technical communication has always prided itself on being adaptive to new technologies and workflows. This is one of the main values we offer to society. Although it is impossible to predict what the future holds for the place of AI in technical communication, one thing is certain. If we do not embrace AI, others within the technology sector will be the ones to shape it. Thus, we invite readers of this article to try our framework out and see for themselves what affordances AI holds for our field. It is only through interrogating and reshaping this technology that our field will ensure that it is utilized in the most equitable and effective manner for users from all walks of life.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

Institutional Review Board approval was not required.

Informed Consent

Informed Consent was not required for this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
